Abstract

Background

Attention-Deficit/Hyperactivity Disorder (ADHD) is a complex neurodevelopmental disorder requiring professional diagnosis. Recently, short-video platforms such as TikTok and Bilibili have seen a surge in ADHD-related content, driving a trend of self-diagnosis among the public, particularly young adults. The scientific quality and potential risks of this content have not been systematically evaluated. This study aimed to systematically evaluate the quality and reliability of ADHD content on TikTok and Bilibili, analyze its content characteristics, and specifically investigate the prevalence of content encouraging self-diagnosis and its association with user engagement.

Methods

The top 100 videos from each platform were retrieved using the keywords “ADHD” and “多动症.” After a screening process, a total of 164 videos were included for analysis. Two senior clinical psychologists independently assessed the videos using the modified DISCERN (mDISCERN) tool and the Global Quality Score (GQS). Videos were classified by uploader type (e.g., healthcare professionals, patients/influencers) and content theme (e.g., symptom education, self-tests). A novel Self-Diagnosis Risk Scale (SDRS) was also applied. Nonparametric statistical methods were used for data analysis.

Results

A total of 164 videos were analyzed (88 from TikTok, 76 from Bilibili). Significant platform differences emerged, with Bilibili videos demonstrating superior quality scores (GQS: 3.05 ± 0.91 vs. 2.45 ± 0.88; mDISCERN: 2.62 ± 0.85 vs. 1.88 ± 0.72; both p < 0.001) but TikTok videos showing higher self-diagnosis risk (SDRS: 1.71 ± 0.51 vs. 1.30 ± 0.69; p < 0.001). Healthcare professionals produced the highest-quality content (GQS: 3.65 ± 0.68; mDISCERN: 3.15 ± 0.81) with the lowest diagnostic risk (SDRS: 0.75 ± 0.49), while patients/influencers created content with the lowest quality and highest risk scores. Critically, a “quality-engagement paradox” was identified: videos with higher self-diagnosis risk received significantly more user engagement (likes: r = 0.45, p < 0.001; shares: r = 0.42, p < 0.001), while quality metrics showed no significant correlation with user engagement measures.

Conclusions

This study reveals concerning patterns in ADHD-related content on major Chinese short-video platforms, where potentially harmful content encouraging self-diagnosis attracts more user engagement than scientifically rigorous material. The decoupling of content quality from user engagement suggests that current platform mechanisms may inadvertently amplify misleading health information while marginalizing evidence-based content. These findings underscore the urgent need for collaborative interventions involving platform operators, healthcare professionals, and public health educators to develop content guidelines, improve algorithmic curation of health information, and support healthcare professionals in creating engaging, evidence-based content. As social media platforms continue to serve as primary health information sources, ensuring the quality and safety of mental health content must become a priority for platform governance and public health policy.

Introduction

Attention-Deficit/Hyperactivity Disorder (ADHD) is a complex neurodevelopmental disorder. An accurate formal diagnosis requires comprehensive clinical assessment by qualified professionals to ensure effective intervention.1–3 In the digital era, the landscape of health information seeking has fundamentally shifted. Short-video platforms have revolutionized how the public accesses health data. TikTok and its Chinese counterpart, Douyin, are dominant short-video applications characterized by their algorithmic recommendation systems and massive user bases. Douyin alone boasts over 700 million daily active users, serving as a primary information hub. Bilibili, often likened to YouTube, adds a unique dimension; it is a leading video-sharing platform in China popular among Generation Z, known for its interactive “bullet chat” (danmu) feature and longer-form educational content. On these platforms, mental health topics have garnered billions of views. 4 These platforms’ algorithm-driven nature, combined with highly engaging video formats, makes them powerful tools for disseminating information. This is particularly true for mental health, where platforms offer spaces to find relatable experiences, build community, and reduce the stigma often associated with psychological conditions. 5 Consequently, an online ecosystem of mental health content, created by a diverse range of uploaders from licensed clinicians to individuals sharing their personal stories, has flourished.

This trend has given rise to a significant and concerning phenomenon: the self-diagnosis of ADHD. These platforms are saturated with content presenting ADHD symptoms through relatable skits and checklists (“If you do these 5 things, you might have ADHD”). This facilitates self-diagnosis, defined as the process where individuals identify themselves as having a medical condition based on their own interpretation of symptoms, often without professional consultation. Several factors contribute to this phenomenon. At the individual level, barriers to professional care and a desire for validation drive users online. Content-wise, short videos often decontextualize complex psychiatric symptoms into generalized behaviors, leading viewers to falsely pathologize normal experiences. 6 While this content can be empowering (raising awareness, destigmatizing the disorder, and prompting some individuals to seek professional help), it also carries substantial risks of fostering anxiety and inaccurate self-identification. Furthermore, the lack of rigorous content moderation raises concerns about the spread of misinformation regarding treatments and coping strategies. Recent studies have begun to scrutinize the validity of ADHD content on social media, primarily focusing on Western platforms. For instance, Schiros et al. demonstrated that exposure to ADHD misinformation on TikTok not only decreases accurate knowledge but also paradoxically increases treatment-seeking intentions. 7 Similarly, Karasavva found that fewer than 50% of symptom claims in popular videos aligned with clinical criteria, yet frequent consumption was linked to inflated estimates of ADHD prevalence in the general population. 8 While previous studies have analyzed mental health discourse on text-based platforms like X (formerly Twitter),9–11 the landscape of short-video platforms remains understudied. 
Unlike text-based interactions, short-video platforms such as TikTok and Bilibili rely heavily on algorithmic recommendation systems and high-engagement visual formats, potentially amplifying misinformation in unique ways. Therefore, investigating these major Chinese platforms offers a critical opportunity to understand how neurodevelopmental disorders are portrayed in a non-English speaking, algorithm-driven digital environment. The primary objectives of this study are: (1) to systematically evaluate the quality and reliability of the information presented; (2) to characterize the videos based on uploader source and content theme; and (3) to specifically investigate the prevalence of content that encourages self-diagnosis and analyze its relationship with user engagement metrics. We hypothesize that videos uploaded by nonprofessionals (e.g., patients, influencers) will be more popular but of lower scientific quality and will pose a higher risk for self-diagnosis compared to content from healthcare professionals. We further hypothesize that user engagement metrics will not be a reliable indicator of video quality but will positively correlate with the risk of self-diagnosis.

Methods

Search strategy and data collection

This study employed a cross-sectional content analysis design. On 15 March 2025, we conducted a systematic search on the Chinese versions of TikTok (Douyin) and Bilibili. To mitigate algorithmic bias from personalized recommendations, the searches were performed using a newly registered account with no prior viewing history. The search keywords used were “ADHD” and “多动症” (duō dòng zhèng, the Chinese term for ADHD). The top 100 videos from each platform were retrieved using the default “comprehensive ranking” mode, which sorts content based on a weighted algorithm of relevance, views, and engagement metrics. This number was chosen based on previous studies indicating that user viewing rarely extends beyond the first 100–200 results, making this sample representative of the most frequently encountered content. 12

Two researchers (initials) independently screened the 200 retrieved videos (100 from each platform). Data extraction for each video included: the video URL, uploader name, uploader verification status, video duration, and user engagement metrics (number of likes, collections/favorites, shares, and comments). All data were recorded on a standardized Microsoft Excel spreadsheet.

Inclusion and exclusion criteria

Videos were included if they were in Mandarin Chinese and primarily focused on ADHD-related topics with educational or experiential content. Exclusion criteria were as follows: (1) videos irrelevant to ADHD; (2) duplicate videos; (3) videos that were purely advertisements for products or services; (4) videos shorter than 15 s, as these typically consist of entertainment-focused “memes” insufficient for conveying substantive health information; (5) videos without audio or comprehensible subtitles; and (6) user-generated live stream recordings. The detailed screening process is illustrated in the PRISMA flowchart (Figure 1).

Figure 1. Search strategy and video screening process.

Video classification

Following the screening process, all included videos were systematically categorized by two independent coders based on their uploader source and primary content theme. The uploader source was determined by examining the creator's profile page, self-description, and any official verification badges. Uploaders were classified into one of four distinct groups: Healthcare Professionals (verified doctors or psychologists), ADHD Coaches (individuals identifying as coaches without formal medical licensure), Patients/Influencers (individuals sharing personal experiences or general content creators), and Professional Institutions (official accounts of health organizations). Similarly, each video was assigned to a primary content theme that best described its dominant focus. These themes included Clinical Education, Symptom Checklist/Self-Test, Lived Experience/Coping Skills, Medication Information, and Stigma/Awareness.

Quality assessment tools

The quality and reliability of the videos were assessed using a multi-instrument approach. The Global Quality Score (GQS), a validated 5-point Likert scale, was employed to evaluate the overall quality, flow, and educational usefulness of the content. 13 To assess the reliability of the health information, we used the modified DISCERN (mDISCERN) tool, a 5-item instrument adapted for short-form media that evaluates clarity, balance, and transparency. 14 To specifically address the primary concern of this study, we developed the Self-Diagnosis Risk Scale (SDRS) based on the diagnostic criteria for ADHD in the DSM-5 and emerging literature characterizing social media health misinformation. The scale was refined through a pilot calibration process on 20 videos to ensure construct validity. The SDRS operationalizes “diagnostic risk” not by the creator's motivation, but by the determinism of the language used and the lack of clinical context, thereby quantifying the extent to which content facilitates or promotes premature self-identification.

The scale comprises three distinct risk levels. A score of 0 (Low Risk) is assigned to content that includes clear disclaimers recommending professional consultation, such as explicitly stating “This is not a diagnosis,” where symptoms are described as general experiences or possibilities rather than definitive signs. A score of 1 (Moderate Risk) applies to content that presents symptoms ambiguously without disclaimers, discussing ADHD traits but lacking the definitive language that explicitly confirms a diagnosis for the viewer. Finally, a score of 2 (High Risk) characterizes content that utilizes definitive, deterministic language, such as “If you do these 5 things, you have ADHD.” This high-risk category includes videos that present universal behaviors—exploiting the Barnum effect—as pathognomonic signs, explicitly suggesting viewers align themselves with the diagnosis without professional input.
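The three SDRS levels described above can be written as a small decision rule. The following is a minimal sketch only: in the study the scores were assigned by human raters, and the field names `has_disclaimer` and `deterministic_language` are hypothetical labels introduced here for illustration. It also assumes that deterministic language takes precedence when a video contains both a disclaimer and a definitive claim, a case the scale description does not address.

```python
from dataclasses import dataclass


@dataclass
class VideoCoding:
    """Hypothetical rater judgments for one video (field names are illustrative)."""
    has_disclaimer: bool          # e.g. the video states "This is not a diagnosis"
    deterministic_language: bool  # e.g. "If you do these 5 things, you have ADHD"


def sdrs_score(coding: VideoCoding) -> int:
    """Map rater judgments to the three SDRS levels (0, 1, 2)."""
    if coding.deterministic_language:
        # High risk: definitive claims that the viewer has ADHD.
        # Assumption: this overrides any disclaimer in the same video.
        return 2
    if coding.has_disclaimer:
        # Low risk: symptoms framed as possibilities, consultation recommended.
        return 0
    # Moderate risk: ambiguous symptom discussion without a disclaimer.
    return 1
```

A video coded with a disclaimer and no deterministic language would score 0 under this rule, while a checklist video with definitive wording would score 2.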

Evaluation process

The evaluation was conducted by two board-certified clinical psychologists, each with over ten years of experience in the diagnosis and treatment of ADHD. To ensure a high degree of consistency, the raters first completed a calibration exercise on a pilot sample of 20 videos, discussing scoring criteria to establish a shared understanding. Subsequently, all included videos were scored independently by both raters. While blinding to platform identity was not possible due to distinct interface markers, raters were blinded to the specific study hypotheses to minimize bias. Any discrepancies in scores were resolved through a consensus discussion. Inter-rater reliability was calculated using Cohen's kappa coefficient (κ) to ensure the robustness of the scoring process. The analysis demonstrated high agreement across all measures: GQS (κ = 0.80), mDISCERN (κ = 0.81), and the SDRS (κ = 0.81). Content validity was ensured by the expertise of the raters, who are board-certified clinical psychologists.
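For readers unfamiliar with the agreement statistic, the sketch below shows how an unweighted Cohen's kappa can be computed for two raters. It is an illustration under stated assumptions, not the authors' procedure: the text does not say whether a weighted variant was used, and the function name `cohens_kappa` and the example ratings are hypothetical.

```python
from collections import Counter


def cohens_kappa(rater_a, rater_b):
    """Unweighted Cohen's kappa for two raters scoring the same items."""
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("Both raters must score the same non-empty set of items.")
    n = len(rater_a)
    # Observed agreement: share of items given identical scores.
    observed = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    # Chance agreement: product of the raters' marginal proportions per category.
    counts_a = Counter(rater_a)
    counts_b = Counter(rater_b)
    expected = sum(counts_a[c] * counts_b[c] for c in counts_a) / (n * n)
    if expected == 1.0:
        raise ValueError("Kappa is undefined when chance agreement equals 1.")
    return (observed - expected) / (1 - expected)


# Hypothetical SDRS ratings for ten videos (not study data).
example_a = [0, 1, 2, 2, 1, 0, 2, 1, 2, 2]
example_b = [0, 1, 2, 2, 1, 1, 2, 1, 2, 1]
example_kappa = cohens_kappa(example_a, example_b)
```

Because GQS, mDISCERN, and SDRS are ordinal, a weighted kappa would give partial credit for near-misses; the unweighted form shown here treats every disagreement equally.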

Statistical analysis

All statistical analyses were conducted using SPSS software (Version 28.0). Descriptive statistics were used to summarize the general characteristics of the videos. Due to the non-normal distribution of the data, as confirmed by the Shapiro–Wilk test, nonparametric statistical methods were employed throughout the analysis. The Mann–Whitney U test was used for comparisons between two independent groups (e.g., TikTok vs. Bilibili), while the Kruskal–Wallis H test, followed by Dunn's post hoc test for pairwise comparisons, was used for analyses involving more than two groups (e.g., uploader types). To explore the relationships between video quality scores, SDRS, and user engagement metrics, Spearman's rank correlation coefficient (ρ) was calculated. For all statistical tests, a p-value of less than 0.05 was considered to indicate statistical significance.
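The study ran these tests in SPSS. As a conceptual aid only, the sketch below computes the Mann–Whitney U statistic by direct pairwise comparison, which makes clear that the test depends on the ordering of values rather than on their magnitudes. The function name `mann_whitney_u` and the sample values are hypothetical, and the sketch does not compute a p-value.

```python
def mann_whitney_u(group_x, group_y):
    """Return (U_x, U_y) for two independent samples.

    U_x counts the pairs in which a value from group_x exceeds a value from
    group_y; tied pairs contribute one half to each group's statistic.
    """
    u_x = 0.0
    for x in group_x:
        for y in group_y:
            if x > y:
                u_x += 1.0
            elif x == y:
                u_x += 0.5
    # The two statistics always sum to the number of cross-group pairs.
    u_y = len(group_x) * len(group_y) - u_x
    return u_x, u_y


# Hypothetical toy samples (not study data).
u_first, u_second = mann_whitney_u([5, 7, 9, 12], [3, 7, 8])
```

In practice the smaller of the two U values is compared against its null distribution, or a normal approximation with tie correction is used for larger samples.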

Results

Video screening and general characteristics

A total of 200 videos were initially retrieved, with 100 from TikTok and 100 from Bilibili. Following the screening process detailed in the PRISMA flowchart (Figure 1), 36 videos were excluded: 12 were irrelevant to the topic, 10 were duplicates, 8 were pure advertisements, and 6 were shorter than 15 s. The final analytic sample comprised 164 videos, 88 (53.7%) from TikTok and 76 (46.3%) from Bilibili. Table 1 summarizes the general characteristics of the included videos. Significant platform differences emerged. TikTok videos achieved markedly higher user engagement, with a median of 369 likes (interquartile range [IQR] 144–1741), compared with 73 likes (IQR 17–408) on Bilibili (p < 0.001). Conversely, Bilibili videos were substantially longer, with a median duration of 304 s (IQR 225–949), whereas TikTok videos had a median duration of only 74 s (IQR 52–96) (p < 0.001). No statistically significant differences were observed between platforms in the median numbers of collections or shares.
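The screening counts reported above can be checked with simple arithmetic. The sketch below uses only figures stated in the text; the variable names are illustrative.

```python
# Counts taken from the screening description in the text.
retrieved = {"TikTok": 100, "Bilibili": 100}
excluded = {
    "irrelevant": 12,
    "duplicate": 10,
    "advertisement": 8,
    "shorter_than_15s": 6,
}
included = {"TikTok": 88, "Bilibili": 76}

total_retrieved = sum(retrieved.values())
total_excluded = sum(excluded.values())
total_included = sum(included.values())

# Each platform's share of the final analytic sample, in percent.
platform_share = {
    platform: round(100 * count / total_included, 1)
    for platform, count in included.items()
}
```

The retrieved total minus the excluded total equals the included total, and the platform shares round to the reported 53.7% and 46.3%.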

Table 1. General characteristics of included videos on TikTok and Bilibili.

IQR: interquartile range.

Data are presented as median [25th percentile, 75th percentile]. P-values were calculated using the Mann–Whitney U test.

Uploader and content theme distribution

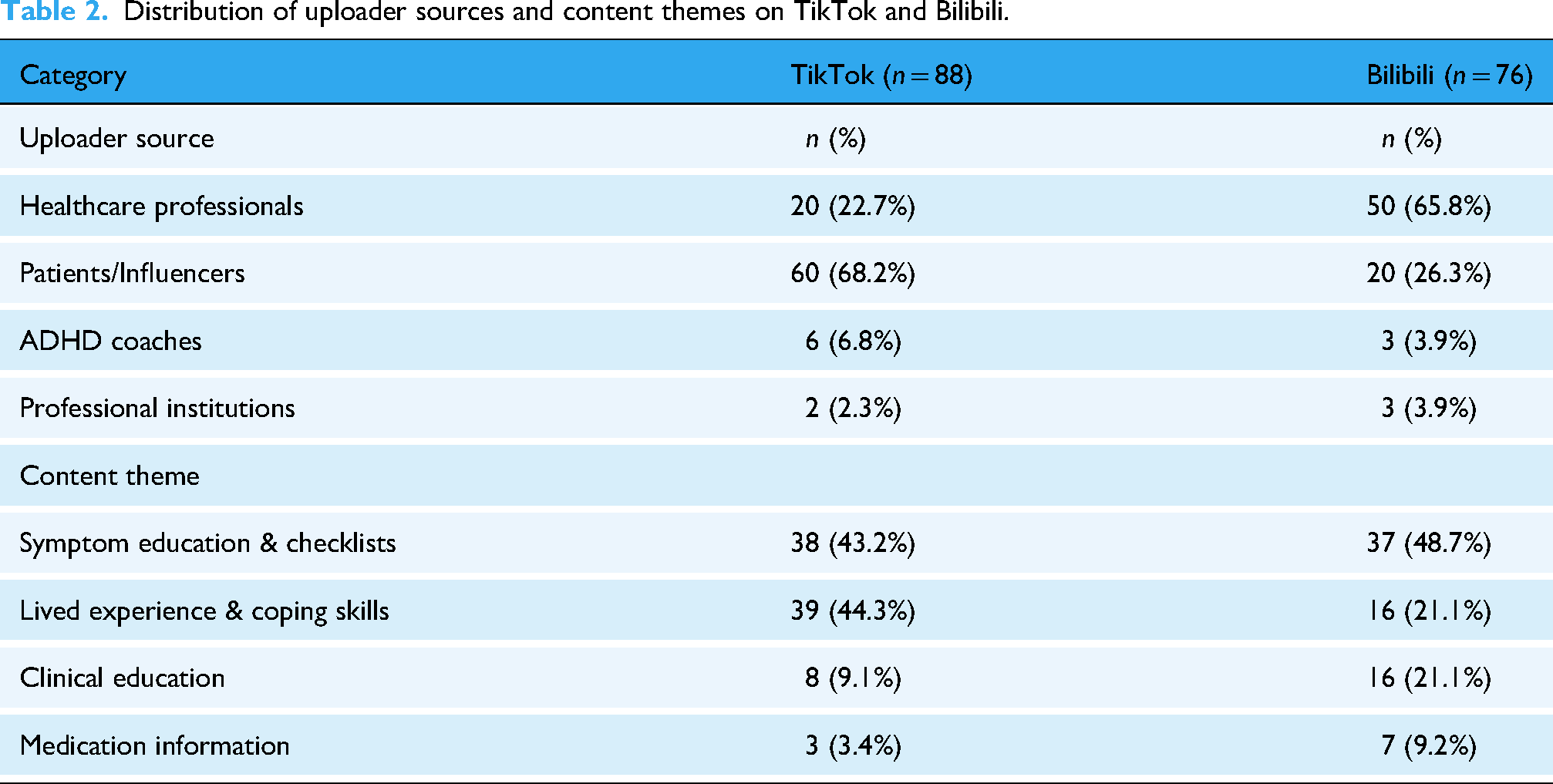

As detailed in Table 2, a platform-specific analysis revealed distinct uploader source distributions. On TikTok (n = 88), content was predominantly created by Patients/Influencers, who accounted for 68.2% of the videos. Healthcare Professionals contributed 22.7% of the content, followed by ADHD Coaches at 6.8% and Professional Institutions at 2.3%. In contrast, the creator ecosystem on Bilibili (n = 76) was led by Healthcare Professionals, who were responsible for 65.8% of the platform's videos. Patients/Influencers accounted for 26.3% of Bilibili's content, while both ADHD Coaches and Professional Institutions each contributed 3.9%.

Table 2. Distribution of uploader sources and content themes on TikTok and Bilibili.

Regarding content themes (Table 2), “Symptom Education & Checklists” was the most prevalent category, accounting for 45.7% (n = 75) of all analyzed videos. This was followed by “Lived Experience & Coping Skills” (33.5%; n = 55) and “Clinical Education” (14.6%; n = 24). Content specifically about medication was the least common theme, appearing in only 6.1% (n = 10) of the videos.

When analyzing the platforms separately, distinct content priorities emerged, reflecting their different user bases and formats. On TikTok (n = 88), the content heavily favored personal and easily digestible formats. “Lived Experience & Coping Skills” was a dominant theme, making up 44.3% (n = 39) of its videos, alongside “Symptom Education & Checklists” at 43.2% (n = 38). In contrast, themes requiring more depth were less common, with “Clinical Education” accounting for 9.1% (n = 8) and “Medication Information” for just 3.4% (n = 3) of the videos.

On Bilibili (n = 76), the content distribution leaned more towards educational material. While “Symptom Education & Checklists” remained the most common theme at 48.7% (n = 37), “Clinical Education” represented a notably larger share of content at 21.1% (n = 16) than on TikTok. Similarly, “Medication Information” was more prevalent, accounting for 9.2% (n = 7) of Bilibili's videos. The “Lived Experience & Coping Skills” theme, while still present, constituted a smaller portion of the content at 21.1% (n = 16).

Video quality and self-diagnosis risk assessment

Prior to examining platform differences, an analysis of the overall sample (N = 164) revealed that the general quality of ADHD content was suboptimal. The mean GQS score for all videos was 2.73 ± 0.94 (out of 5), indicating that the average video offered only “poor” to “fair” educational value. Similarly, the mean mDISCERN score was 2.22 ± 0.88 (out of 5), suggesting widespread issues with reliability and source transparency. The results of the quality and risk assessments are presented in Table 3. In this study, “quality” (measured by GQS) reflects the educational value, flow, and comprehensiveness of the video, while “reliability” (measured by mDISCERN) reflects the clarity of sources, balance of content, and transparency. There was a significant disparity in quality between the two platforms. Videos on Bilibili had significantly higher scores than videos on TikTok on both the GQS (3.05 ± 0.91 vs. 2.45 ± 0.88; p < 0.001) and the mDISCERN (2.62 ± 0.85 vs. 1.88 ± 0.72; p < 0.001), indicating superior overall quality and reliability. Conversely, TikTok videos had a significantly higher mean SDRS (1.71 ± 0.51 vs. 1.30 ± 0.69; p < 0.001), suggesting that they are more likely to encourage risky self-assessment behaviors.
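The overall means follow from the platform-level means weighted by sample size. The sketch below is a consistency check using only values reported in the text; the helper name `pooled_mean` is illustrative.

```python
# Sample sizes and platform means as reported in the text.
sample_sizes = {"TikTok": 88, "Bilibili": 76}
platform_means = {
    "GQS": {"TikTok": 2.45, "Bilibili": 3.05},
    "mDISCERN": {"TikTok": 1.88, "Bilibili": 2.62},
    "SDRS": {"TikTok": 1.71, "Bilibili": 1.30},
}


def pooled_mean(means, sizes):
    """Sample-size-weighted mean across groups."""
    total = sum(sizes.values())
    return sum(means[group] * sizes[group] for group in sizes) / total


overall_means = {
    scale: round(pooled_mean(means, sample_sizes), 2)
    for scale, means in platform_means.items()
}
```

The weighted means reproduce the overall GQS of 2.73 and mDISCERN of 2.22 given above, as well as the overall SDRS of 1.52 cited in the Discussion.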

Table 3. Video quality and self-diagnosis risk scores by platform and uploader source.

Statistically significant differences were also found across all assessment scores based on the uploader source (p < 0.001 for all). Content produced by Healthcare Professionals consistently achieved the highest quality and lowest risk, with the highest mean GQS (3.65 ± 0.68) and mDISCERN scores (3.15 ± 0.81), and the lowest mean SDRS (0.75 ± 0.49). Videos from Professional Institutions demonstrated similarly high quality (GQS: 3.55 ± 0.73; mDISCERN: 3.10 ± 0.79). In stark contrast, videos from Patients/Influencers were of the lowest quality (GQS: 2.05 ± 0.71; mDISCERN: 1.51 ± 0.53) and posed the highest risk for self-diagnosis (SDRS: 1.95 ± 0.38). The content from ADHD Coaches mirrored this trend of low quality and high diagnostic risk (GQS: 2.15 ± 0.62; SDRS: 1.85 ± 0.42).

Correlation analysis

Spearman's rank correlation analysis was performed (see Supplementary Table S1). No significant correlations were found between video quality metrics (GQS, mDISCERN) and user engagement metrics (likes, shares, comments) (all p > 0.05). However, SDRS scores showed significant positive correlations with likes (r = 0.45, p < 0.001), shares (r = 0.42, p < 0.001), and comments (r = 0.36, p < 0.001). Additionally, a negative correlation was observed between GQS and SDRS scores (r = −0.52, p < 0.001).
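To make the correlation measure concrete, the sketch below computes Spearman's coefficient as the Pearson correlation of rank-transformed data, with tied values receiving their average rank. This matters for the SDRS, which takes only three values and therefore produces many ties. The function names and example data are hypothetical, and the study itself used SPSS.

```python
from math import sqrt


def average_ranks(values):
    """Rank values from 1 to n, giving tied values the mean of their positions."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        # Extend j to cover the whole run of values tied with position i.
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        mean_rank = (i + j) / 2 + 1  # positions are 0-based, ranks are 1-based
        for k in range(i, j + 1):
            ranks[order[k]] = mean_rank
        i = j + 1
    return ranks


def spearman_rho(x, y):
    """Spearman's rank correlation: Pearson correlation of the ranks."""
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("Inputs must have equal length of at least 2.")
    rank_x, rank_y = average_ranks(x), average_ranks(y)
    mean_x = sum(rank_x) / len(rank_x)
    mean_y = sum(rank_y) / len(rank_y)
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(rank_x, rank_y))
    var_x = sum((a - mean_x) ** 2 for a in rank_x)
    var_y = sum((b - mean_y) ** 2 for b in rank_y)
    # Note: a constant input has zero rank variance and raises ZeroDivisionError.
    return cov / sqrt(var_x * var_y)


# Hypothetical SDRS scores and like counts for six videos (not study data).
toy_sdrs = [0, 1, 1, 2, 2, 2]
toy_likes = [10, 50, 40, 300, 200, 900]
toy_rho = spearman_rho(toy_sdrs, toy_likes)
```

Because only ranks enter the calculation, a few videos with extremely high like counts do not dominate the coefficient, which suits the heavily skewed engagement data described earlier.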

Discussion

Principal findings

To our knowledge, this study represents the first systematic evaluation of ADHD-related content quality and self-diagnosis risk on major Chinese short-video platforms. Our analysis of 164 videos revealed several concerning patterns that have significant implications for public health and digital health literacy. The findings demonstrate a troubling “quality-engagement paradox” in which content quality is unrelated to user interaction, while potentially misleading material that encourages premature self-diagnosis attracts more of it.

The most striking finding was the decoupling of content quality from user engagement. Videos with higher SDRS scores consistently attracted more likes, shares, and comments, whereas quality scores (GQS, mDISCERN) showed no significant association with any engagement metric. This pattern suggests that social media algorithms may inadvertently amplify potentially harmful health information while marginalizing educational content that could genuinely benefit users seeking ADHD-related information.

Platform differences

Bilibili videos demonstrated higher quality scores than TikTok videos. This aligns with Bilibili's community norm favoring longer, educational content, whereas TikTok's format favors brevity and rapid consumption. 13 These findings are consistent with previous research indicating that platform characteristics significantly influence health information quality.6,15 Bilibili's longer video format (median 304 s vs. 74 s on TikTok) allows for more comprehensive information presentation, which is consistent with the finding that video duration was positively associated with quality scores. 15 The platform differences also extend to creator composition. TikTok's content was dominated by patients and influencers (68.2%), whereas healthcare professionals comprised 65.8% of content creators on Bilibili. This disparity may explain why TikTok videos demonstrated higher self-diagnosis risk scores, as nonprofessional creators are more likely to present anecdotal experiences without appropriate clinical disclaimers. 16 These findings align with previous research on health content quality across different social media platforms. Studies examining knee osteoarthritis 6 and myopia prevention 17 content have similarly found that platform characteristics significantly influence information quality, with longer-form platforms generally supporting more comprehensive educational content.

The self-diagnosis risk phenomenon

The prevalence of content encouraging self-diagnosis represents a particularly concerning aspect of our findings. The average SDRS score of 1.52 indicates a moderate to high risk across the analyzed videos, with patient and influencer content showing the highest risk scores (1.95 ± 0.38). This trend reflects broader concerns about the “TikTok diagnosis” phenomenon. Our findings resonate with recent work by Karasavva et al., 8 who found a similar disconnect between popular TikTok content and clinical criteria. 7 Furthermore, the high engagement with risk-promoting content in our study supports the experimental findings of Schiros et al., 7 which demonstrated that exposure to misinformation increases treatment-seeking intentions based on inaccurate knowledge. The positive correlation between SDRS scores and engagement suggests that high-risk content attracts significantly more user interaction. While we cannot infer a direct causal algorithmic promotion from this cross-sectional data, the results indicate that such content thrives within the current ecosystem, achieving greater visibility than lower-risk educational material. 18 The appeal of self-diagnostic content may stem from several factors: the accessibility of simplified symptom presentations, the validation offered by relatable personal experiences, and the perceived empowerment of self-identification. However, our findings demonstrate that such content typically lacks the nuanced clinical context necessary for accurate ADHD assessment, potentially leading to misunderstanding of the disorder's complexity. 1

Professional versus nonprofessional content creation

Healthcare professionals consistently produced higher-quality content with lower diagnostic risk, achieving the highest mean GQS (3.65 ± 0.68) and mDISCERN scores (3.15 ± 0.81) while maintaining the lowest SDRS scores (0.75 ± 0.49). However, this superior quality did not translate to higher user engagement, highlighting a fundamental disconnect between clinical expertise and social media effectiveness.

This pattern mirrors findings from studies of other health conditions on short-video platforms. Research on osteoporosis content found that while 93% of videos were created by doctors, the overall quality remained low, and engagement metrics did not correlate with content quality. 19 Similarly, studies of myopia prevention content revealed that ophthalmologists produced higher-quality videos but achieved lower engagement than nonprofessional creators. 13

The engagement disparity may reflect differences in communication style, with healthcare professionals often using technical language and formal presentation formats that may not resonate with social media audiences seeking accessible, relatable content. This suggests a need for improved science communication training for healthcare professionals engaging with social media platforms. 20

Clinical and public health implications

The proliferation of low-quality, high-risk ADHD content on popular social media platforms poses several clinical challenges. Healthcare providers may encounter patients who have formed preconceptions about ADHD based on misleading social media content, potentially complicating the diagnostic process. More critically, reliance on invalid self-diagnoses can lead to dual harms: some individuals may bypass professional help entirely, attempting ineffective self-management strategies; others may burden an already taxed healthcare system by seeking validation for inaccurate self-assessments, thereby lengthening wait times for those with genuine clinical needs. 2 The study's findings also highlight the need for proactive engagement by mental health professionals in digital health communication. Rather than avoiding social media platforms, clinicians should consider strategic participation to ensure evidence-based information reaches audiences currently exposed to potentially harmful content. 21 This approach requires developing platform-appropriate communication strategies that maintain scientific rigor while achieving user engagement. Specifically, healthcare professionals should adopt “edutainment” strategies: utilizing narrative storytelling (“hooks”) in the first few seconds of a video, translating complex medical jargon into accessible language, and leveraging trending video formats or hashtags to increase visibility without compromising accuracy. From a public health perspective, the results suggest that current content moderation approaches are insufficient to address health misinformation on short-video platforms. The positive correlation between diagnostic risk and user engagement indicates that market-driven algorithmic promotion may systematically favor content that undermines public health objectives.

Limitations

Several limitations should be acknowledged when interpreting these findings. First, the cross-sectional design provides only a snapshot of content at a specific time point, and the rapidly evolving nature of social media content means that quality trends may shift over time. Second, the study focused exclusively on Chinese-language content, which may limit generalizability to other linguistic and cultural contexts. Third, while the GQS and mDISCERN are established tools, the SDRS is a novel instrument developed for this study. Although it showed high inter-rater reliability, it has not yet undergone broad psychometric validation, and results should be interpreted with this constraint in mind. Its application to short-video formats constitutes a preliminary attempt to quantify diagnostic risk. However, its significant negative correlation with validated quality metrics (GQS and mDISCERN) provides preliminary evidence of its convergent validity. Fourth, the subjective nature of content evaluation, despite the use of multiple trained raters, introduces potential for assessment bias. Finally, the study did not examine user behavior or outcomes following exposure to different types of content, limiting our understanding of the actual impact of quality variations on health-seeking behavior and self-diagnosis practices.

Future research directions

Future studies should employ longitudinal designs to track content quality trends over time and examine the relationship between content exposure and subsequent health-seeking behaviors. Research investigating user decision-making processes when encountering ADHD-related content could provide insights into why low-quality material achieves higher engagement. Additionally, intervention studies examining the effectiveness of different approaches to improving health content quality on social media platforms would be valuable. Such research could inform evidence-based strategies for healthcare professionals and platform operators seeking to promote responsible health information sharing. Cross-cultural studies comparing ADHD content across different linguistic and cultural contexts could enhance our understanding of how cultural factors influence health information quality and self-diagnosis risk on social media platforms.

Conclusions

This study identifies a “quality-engagement paradox” on Chinese short-video platforms, where ADHD content encouraging self-diagnosis is associated with higher user engagement than evidence-based material. Platform differences were significant, with Bilibili offering higher quality content than TikTok. These findings suggest that current engagement-driven mechanisms may inadvertently amplify misleading health information. Collaborative efforts between professionals and platforms are needed to improve the visibility of scientifically accurate content.

Supplemental Material

Supplemental material, sj-docx-1-dhj-10.1177_20552076261434141 for Evaluating the quality, reliability, and diagnostic risk of ADHD content on TikTok and Bilibili: A cross-sectional content analysis by Wei-xia Yu, Zhi-Bin Li, Xiao-yu Shao, Wen-Jun Cai, Ming Wang, Cheng-hao Yang and Wei Lu in DIGITAL HEALTH

Supplemental material, sj-docx-2-dhj-10.1177_20552076261434141 for Evaluating the quality, reliability, and diagnostic risk of ADHD content on TikTok and Bilibili: A cross-sectional content analysis by Wei-xia Yu, Zhi-Bin Li, Xiao-yu Shao, Wen-Jun Cai, Ming Wang, Cheng-hao Yang and Wei Lu in DIGITAL HEALTH

Footnotes

Ethical approval

This study constitutes an analysis of publicly available data and did not involve human participants. The study protocol was reviewed by the Ethics Committee of Shanghai Putuo People's Hospital, School of Medicine, Tongji University, which granted a waiver for formal ethical approval as the research exclusively utilized publicly accessible data with no direct interaction with or identification of individuals. Consequently, the requirement for written informed consent was deemed not applicable and waived by the ethics committee. The data collection and analysis were conducted in full compliance with the terms of service for both the TikTok (Douyin) and Bilibili platforms and adhered to the ethical principles outlined in the Declaration of Helsinki.

Contributorship

Wei-xia Yu and Zhi-Bin Li contributed equally to this work. Wei-xia Yu and Zhi-Bin Li were responsible for the study design, data collection, analysis, and the drafting of the manuscript. Xiao-yu Shao, Cheng-hao Yang, Wen-Jun Cai, and Wei Lu were involved in the conception of the study, supervision, and critical revision of the manuscript. All authors read and approved the final manuscript.

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.

Declaration of conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Data availability

The original data used in this study were obtained from the TikTok (Douyin) and Bilibili platforms, where they are publicly available. The analyzed datasets generated during the current study are available from the corresponding author upon reasonable request.

Supplemental material

Supplemental material for this article is available online.