Abstract

Background

Artificial intelligence (AI) is transforming telemedicine within the broader digital-health ecosystem, yet systematic evidence on its technological hotspots and long-run trajectories remains limited.

Objective

To map the technological evolution of AI in telemedicine and identify innovation clusters and pathways that can inform policy, standards, and implementation planning.

Methods

We analysed 1451 AI-telemedicine patents (1992–2024) from the PatSnap database using a text-mining pipeline that combines classification-based social network analysis (SNA) with latent Dirichlet allocation (LDA) topic modelling. Topics were organised by life-cycle phases to trace semantic evolution. Model robustness was assessed using topic coherence and perplexity scores. Network centrality metrics (degree, betweenness, closeness) were used to identify structurally influential technologies.

Results

Four dominant trends emerged: (1) surgical robotics evolving from hardware optimisation to intelligent control (e.g. ‘surgical’ 0.045; ‘robot’ 0.042; ‘control’ 0.016); (2) multimodal data fusion supplanting transmission-only designs (‘patient’ 0.188; ‘site’ 0.117; ‘data’ 0.056); (3) convergence of AI and advanced connectivity (e.g. 5G) enabling personalised, patient-centred telemedicine; and (4) deep-learning image analysis extending from diagnostic support to early disease prediction (‘image’ 0.034; ‘diagnosis’ 0.022; ‘early’ 0.010). Centrality results position surgical robotics and data-fusion infrastructures as persistent long-run technological hubs.

Conclusions

By integrating semantic topic evolution with structural network dynamics, this study provides an empirical overview of the technological evolution of AI-telemedicine. The findings highlight several priority domains, including data-fusion infrastructure, explainable imaging AI, and surgical and remote-care applications, offering insights relevant to digital-health policy and governance.

Keywords

Introduction

The global healthcare sector is undergoing profound transformations. 1 The uneven distribution of medical resources, an aging population and emerging public health crises, such as the COVID-19 pandemic, have accelerated the adoption of telemedicine.2,3 By leveraging information and communication technology (ICT), telemedicine overcomes geographical barriers, reduces costs, and enhances accessibility for underserved populations, thereby promoting equity in health-care delivery.4,5

The integration of artificial intelligence (AI) into telemedicine has increasingly introduced new opportunities to enhance healthcare delivery. In this study, ‘AI-telemedicine’ refers to telemedical platforms or infrastructures that incorporate AI techniques to enable functions such as remote diagnosis, patient monitoring and clinical decision support. These technologies have significantly improved diagnostic accuracy, operational efficiency and clinical decision-making.6,7 For instance, studies have shown that AI-driven breast cancer screening systems achieve diagnostic performance comparable to, or even surpassing, that of radiologists.6,8 These advancements have the potential to optimise healthcare quality and improve resource allocation. 9 Recent evidence also indicates the transformative potential of AI in the health-care industry. AI has played an important role in global crisis management through predictive efficacy observation, 10 while AI-driven conversational agents have been increasingly used for promoting healthy lifestyles and clinical screening. 11 Furthermore, generative models like ChatGPT offer promise in bridging medical education gaps 12 and automating clinical documentation in specialised fields such as plastic surgery. 13

Despite this momentum, a critical knowledge gap persists regarding the structural and semantic evolution of the technological ecosystems driving this integration. Existing research on AI-telemedicine has predominantly focused on application-level outcomes, such as chronic disease management, mental health support, and home-based care delivery.14–19 While these studies yield valuable clinical insights, they are largely outcome-oriented and rarely explore the underlying technological ecosystems driving AI integration. Traditional systematic reviews and meta-analyses,20–24 although comprehensive in evaluating effectiveness, are retrospective in nature and limited in their ability to capture emerging innovation trends. Consequently, a longitudinal and technology-driven perspective remains missing from the current discourse. In contrast, patent data provide a forward-looking and objective source of technical knowledge, offering timeliness, novelty and rich detail. 25 Patent text mining, therefore, holds considerable potential for identifying emerging technologies and mapping their developmental trajectories, 26 yet remains underutilised in this domain.

To address this gap, this study uses patent-based evidence to examine how AI-telemedicine technologies have evolved structurally and semantically. It poses two research questions: (1) What are the core technologies in AI-telemedicine, as indicated by patent classification structures? (2) How have these technologies evolved semantically across distinct phases of development between 1992 and 2024? To answer them, we propose an integrated patent text-mining framework that combines social network analysis (SNA) for structural mapping with latent Dirichlet allocation (LDA) for semantic topic modelling. This combination enables both relational and thematic exploration of the AI-telemedicine domain over a period of more than 30 years. Accordingly, the objectives of this research are to:

(1) Identify key innovation hotspots in AI-telemedicine using network analysis of patent classification codes;
(2) Reveal the structural relationships and clusters among core technologies via co-occurrence analysis;
(3) Trace the semantic trajectories of these technologies across three developmental phases (1992–2009, 2010–2021, 2022–2024) using topic modelling; and
(4) Provide actionable implications for future policy planning and industrial innovation.

The originality of this study lies in its integrated dual-perspective framework, providing the first longitudinal, system-level foresight into the AI-telemedicine landscape. By merging SNA-based structural analysis with LDA-driven semantic modelling, this research distinguishes itself from traditional clinical studies, offering a unique lens through which to understand how core technologies evolve, converge and diversify over more than three decades (1992–2024). The findings are expected to inform both academic discourse and strategic planning in digital health innovation.

Methods

This study adopts an analytical framework combining SNA and LDA topic modelling to examine the technological evolution of AI-telemedicine. SNA is applied to patent classification co-occurrence networks to capture structural relationships among technologies and to identify influential technological domains using centrality measures, a common approach in patent-based innovation studies.27,28 LDA is then employed to analyse patent texts and uncover latent semantic topics, enabling the identification of dominant technological themes and their evolution over time. 29 Together, these methods enable a complementary analysis of technological structure and thematic content across different stages of development.

Patent data acquisition

This study employed PatSnap as the primary source of patent data, owing to its extensive international coverage, which includes over 190 million records across 172 jurisdictions, as well as its structured bibliographic fields and daily updates. 30 These characteristics make it suitable for large-scale and text-based analyses such as topic modelling and network analysis.

Building on this data source, we developed a search strategy focused on the core themes of ‘telemedicine’ and ‘artificial intelligence’, expanding the scope by incorporating synonyms identified from relevant literature.3,31 To reduce noise, the search was refined through iterative testing of preliminary search terms and exclusion criteria. The search period ran from 1992 (the year of the earliest identified patent application) to 31 December 2024, and only invention patents, including applications and granted patents, were considered. The search was conducted within the title and abstract fields. The keyword groups and the final Boolean search formula are presented in Table 1.

Search formula using Boolean logic.

To further ensure data relevance, a screening process was employed to systematically eliminate irrelevant patents. As a result, a final data sample of 1451 patents was obtained. This sample size was not pre-determined but defined by the boundaries of the AI-telemedicine domain over the period 1992–2024, representing the comprehensive dataset obtained after rigorous filtering. Analysing the complete identified population provides sufficient volume to support robust network and topic modelling analyses (e.g. centrality measures and topic coherence), while ensuring a representative and statistically reliable mapping of the global innovation landscape in this field.

SNA: hotspot technology identification

Hotspot technologies reflect the research focus and trends in a specific period. SNA is commonly used to identify them by mining hidden relationships and revealing technological correlations and core nodes.27,32 In SNA, a network model consists of ‘nodes’ and ‘edges’. The subgroup level of the International Patent Classification (IPC) is used as nodes due to its detailed reflection of patent features. 33 For example, ‘A61B5/097’ refers to devices for facilitating breath collection or directing breath through measuring instruments. Edges represent co-occurrence relationships between IPC codes within the same patent, with edge weight determined by co-occurrence frequency – the higher the frequency, the stronger the technological association.

IPC data were extracted from the 1451 patents using Python (pandas, numpy) to generate a co-occurrence matrix quantifying pairwise edge weights. The matrix was imported into Gephi to construct the network and compute node- and edge-level metrics. Three centrality measures were calculated using Gephi's built-in statistics module: (1) Degree centrality, measuring the number of direct links connected to a node, and indicating the prominence of a technology within the network; (2) Closeness centrality, capturing how efficiently a node can reach all others, thus reflecting its potential for system-level integration; and (3) Betweenness centrality, quantifying how often a node lies on the shortest paths between other nodes, highlighting its role in bridging distinct technological domains. These indicators jointly reveal the structural positioning of key technologies within the AI-telemedicine landscape.
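The matrix construction and two of the centrality measures described above can be sketched in a few lines. This is a minimal illustration using only the Python standard library rather than the pandas, numpy and Gephi toolchain used in the study; the function names and the three toy patents are hypothetical, and Gephi's own normalisation of closeness may differ from the simple definition used here. Betweenness centrality is omitted for brevity.

```python
from collections import Counter, defaultdict, deque
from itertools import combinations


def cooccurrence_weights(patents):
    """Count how often each unordered pair of IPC codes shares a patent."""
    weights = Counter()
    for codes in patents:
        # Deduplicate and sort so (a, b) and (b, a) map to the same key.
        for pair in combinations(sorted(set(codes)), 2):
            weights[pair] += 1
    return weights


def adjacency(weights):
    """Build unweighted neighbour sets from the weighted edge list."""
    neighbours = defaultdict(set)
    for a, b in weights:
        neighbours[a].add(b)
        neighbours[b].add(a)
    return neighbours


def degree(neighbours):
    """Number of distinct codes that each code co-occurs with."""
    return {node: len(adj) for node, adj in neighbours.items()}


def closeness(neighbours, source):
    """Reciprocal of the mean shortest-path length to reachable nodes."""
    dist = {source: 0}
    queue = deque([source])
    while queue:
        node = queue.popleft()
        for nxt in neighbours[node]:
            if nxt not in dist:
                dist[nxt] = dist[node] + 1
                queue.append(nxt)
    total = sum(dist.values())
    return (len(dist) - 1) / total if total else 0.0


# Hypothetical toy input: one list of IPC subgroup codes per patent.
patents = [
    ["A61B5/00", "G16H40/67", "G16H50/20"],
    ["A61B5/00", "G16H40/67"],
    ["G16H50/20", "G16H10/60"],
]
weights = cooccurrence_weights(patents)
neighbours = adjacency(weights)
degrees = degree(neighbours)
```

Sorting `weights.items()` by count would give a ranking of code pairs by edge weight, and filtering `degrees` by a threshold would give a list of high-centrality codes.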

In summary, SNA enables the identification of core technologies and their interconnections within AI-telemedicine. Using Python to build the co-occurrence matrix and Gephi for network analysis and visualisation supports rigorous hotspot identification, with visual outputs that include node size, edge thickness and modularity-based community colouring.

LDA topic modelling: technology topic evolution

Technology evolution traces the development trajectory of technological systems over time. While IPC classifications offer a structured taxonomy, they may not fully capture the intricate details within patent texts. Patent titles and abstracts, serving as high-level content summaries, are suitable for uncovering technological themes and evolutionary pathways through text mining. However, patent data consists of unstructured text with complex semantics, posing challenges for traditional analytical methods. Advances in topic modelling techniques provide effective tools for detecting hidden patterns and trends in such data. 34

Building on this, this paper applied the LDA model to extract technological themes and analyse their evolution. LDA is a probabilistic topic modelling algorithm developed by Blei et al., 29 which assumes that each document comprises a mixture of latent topics, each characterised by a distinct distribution over words. During training, the model estimates the topic distribution for each document and the word distribution for each topic, with the number of topics (K) as the main input parameter. 35 LDA was selected for its compatibility with the semantic and structural characteristics of patent texts. Patent titles and abstracts typically contain semi-structured and interdisciplinary content, where multiple technical domains often coexist within a single document. LDA's ability to model documents as mixtures of topics aligns with this compositional nature, making it suitable for capturing overlapping and evolving technological themes. 36

Compared to alternative unsupervised methods, LDA offers methodological advantages for the longitudinal analysis of domain-specific patent corpora. Unlike clustering-based approaches such as BERTopic, which primarily assign documents to a single dominant cluster, 37 LDA supports multi-topic assignment, enabling finer-grained semantic representation. Hierarchical Dirichlet Process (HDP), while capable of automatically inferring the number of topics from data, may introduce higher computational complexity and yield variable topic structures across time segments, which can complicate consistent longitudinal comparison. 38 These models may be preferable in different contexts. While BERTopic is often favoured for short, dynamic social media texts and HDP is suitable for exploratory studies in domains with highly uncertain topic structures, LDA remains one of the most commonly used approaches in patent informatics due to its high interpretability and controllable topic granularity, making it suitable for longitudinal topic modelling in the patent domain.39–41

To operationalise the LDA model, K needed to be specified. In this study, K was constrained to a range of 5–15, 42 based on prior research that emphasises topic interpretability and seeks to avoid overfitting, particularly given the limited sample size per phase.43,44 Within this range, K was selected using the LdaModel and CoherenceModel modules in the Gensim library for Python, based on topic coherence (semantic consistency) and log-perplexity (predictive ability). Higher coherence reflects more interpretable and semantically consistent topics, while lower perplexity indicates better model generalisation. As these two metrics do not necessarily reach their optimal values at the same topic number, coherence was not treated as the sole decision criterion. Instead, the final K for each phase was determined through joint consideration of coherence and log-perplexity, prioritising semantic interpretability while maintaining stable model performance.

For Stage 1 (1992–2009), although K = 5 achieved a higher coherence score, K = 8 yielded the lowest log-perplexity value, indicating better model fit and generalisation. In addition, smaller K values tended to merge conceptually distinct technological domains into a few broad topics, limiting the representation of early-stage technological differentiation. Therefore, K = 8 was selected as it provided a more appropriate balance between semantic interpretability and model performance. For Stage 2 (2010–2021) and Stage 3 (2022–2024), the selected topic numbers were primarily guided by coherence scores, as log-perplexity values across different K settings remained relatively stable with only minor variation. Under these conditions, coherence served as the main distinguishing criterion, leading to the selection of K = 11 for Stage 2 and K = 9 for Stage 3, respectively. Full evaluation results are presented in Supplemental Table S1.
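The study describes the trade-off between coherence and perplexity qualitatively. The sketch below shows one hypothetical way to make such a trade-off explicit, by rescaling both metrics and combining them with a weight. It is not the authors' procedure: the function names, the weighting scheme and the example scores are all assumptions introduced for illustration, and perplexity is treated here as a quantity where lower is better.

```python
def minmax(values):
    """Rescale a list of numbers to the [0, 1] interval."""
    lo, hi = min(values), max(values)
    if hi == lo:
        return [0.5 for _ in values]
    return [(v - lo) / (hi - lo) for v in values]


def rank_topic_numbers(scores, coherence_weight=0.5):
    """Order candidate K values by a weighted mix of the two metrics.

    `scores` maps K -> (coherence, perplexity). Higher coherence and
    lower perplexity are both treated as better.
    """
    ks = sorted(scores)
    coh = minmax([scores[k][0] for k in ks])
    # Invert perplexity so that 1.0 marks the best (lowest) value.
    ppl = [1.0 - p for p in minmax([scores[k][1] for k in ks])]
    combined = {
        k: coherence_weight * c + (1.0 - coherence_weight) * p
        for k, c, p in zip(ks, coh, ppl)
    }
    return sorted(combined, key=combined.get, reverse=True)


# Hypothetical placeholder scores, NOT values from the study.
candidate_scores = {5: (0.48, 310.0), 8: (0.45, 250.0), 11: (0.40, 265.0)}
ranking = rank_topic_numbers(candidate_scores, coherence_weight=0.5)
coherence_only = rank_topic_numbers(candidate_scores, coherence_weight=1.0)
```

With these placeholder numbers, an equal weighting favours a different K than coherence alone. A numeric rule of this kind would not capture the interpretability judgement described for Stage 1, so it is best read as a supporting check rather than a replacement for manual inspection of the topics.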

Overall, this paper followed four steps to analyse technological evolution. Firstly, patent application trends over time were used to segment the technology life cycle (TLC) into distinct phases: introduction, growth, maturity and decline. Secondly, the Gensim-based LDA model was applied to each phase's patent texts to identify key technical terms and their relative prominence. Thirdly, the Python library Plotly was used to connect semantically similar topics across stages, generating a visual map of technology evolution. Finally, a forward-looking analysis was conducted using time-series patterns and simple regression modelling to assess the trajectory and reliability of emerging technologies. By integrating insights from SNA-derived dominant topics, this multi-step approach enabled both interpretive and predictive analysis of thematic shifts across the TLC.

Results and discussion

Hotspot technology analysis

To identify hotspot technologies in AI-telemedicine, an IPC co-occurrence network was constructed using SNA (Figure 1). Node size reflects degree centrality (Table 2), while edge thickness corresponds to co-occurrence frequency (edge weight, Table 3). The meanings of individual IPC codes are listed in the ‘Meaning’ column of Table 2. Four colour-coded communities were automatically generated via Gephi's modularity algorithm to enhance visual clarity only, as the analysis focuses on high-centrality IPC codes rather than community structures. Based on this network structure, we identified key technologies with the highest degree centrality, reflecting their prominence in AI-telemedicine innovation.

IPC co-occurrence network diagram constructed using degree centrality. Each node represents an IPC subgroup classification. Node size reflects degree centrality; edge thickness represents the strength of IPC co-classification. Each colour corresponds to a separate community in the network.

High-centrality IPC codes and functional descriptions.

Top IPC code pairs ranked by co-occurrence edge weight.

As shown in Figure 1, the 1992–2024 AI-telemedicine SNA network identifies 44 key technologies (degree centrality >60). A61B5/00 (monitoring physiological or physical conditions of patients) exhibits the highest centrality, followed by G16H40/67 (remote operation), G16H50/20 (AI-assisted diagnosis), G16H80/00 (doctor-patient communication) and G16H10/60 (patient data processing), which highlights patient monitoring, remote diagnosis and data management as technological hotspots.

Several ICT-related technologies demonstrated high closeness centrality, indicating their critical role in efficient information flow and system integration. These include: AI-assisted diagnosis (G16H50/20), doctor–patient communication (G16H80/00), patient data management (G16H10/60), health risk assessment (G16H50/30) and medical image processing (G16H30/20). Their prominence suggests that seamless data exchange, real-time interaction and predictive analytics are key drivers of AI-telemedicine. The high betweenness centrality of G06N3/08 (medical image processing) highlights its role as a cross-domain bridge, linking AI-driven image analysis with other diagnostic and treatment technologies.

In combination with the network edges in Figure 1, Table 3 reveals the strongest IPC co-occurrence relationships, reinforcing the central role of G16H40/67 (remote operation) in AI-telemedicine. It frequently co-occurs with A61B5/00 (physiological monitoring, weight = 189) and G16H50/20 (AI-assisted diagnosis, weight = 182) – the two highest edge weights in the network. These connections reflect the frequent integration of sensing, control and diagnostic functions. Additional high-weight links, such as A61B5/00-G16H50/20 (171) and G16H10/60-G16H50/20 (133), further suggest that core technologies are typically developed as interoperable systems rather than in isolation.

Technology evolution analysis

Division of TLC

The TLC describes the development trajectory of a technology, from its conceptualisation and R&D investment to eventual maturity, decline or substitution. 45 It serves as a framework for understanding technological evolution, innovation dynamics and industrial transformation. Based on technological maturity and market performance, TLC is typically segmented into four stages: (1) Introduction, (2) Growth, (3) Maturity and (4) Decline. Each stage exhibits distinct technical characteristics, market challenges and strategic implications.46,47 As shown in Table 4, these insights offer valuable references for firms in technology strategy formulation and innovation management.

Characteristics, challenges and coping strategies of TLC stages.

Note: ‘↑’ denotes increasing or upward trends; ‘↓’ denotes decreasing or downward trends.

Figure 2 illustrates the trend of patent filings and cumulative patent applications in AI-telemedicine from 1992 to 2024. The Introduction Stage (1992–2009) is characterised by a low-volume period where annual applications consistently remained below 10 items (mean = 2.94), marking the nascent phase of the technology. A structural change occurred in 2010, marking the beginning of the Growth Stage (2010–2021). During this period, the Compound Annual Growth Rate (CAGR) was 25.6%, as the annual volume rose from 15 items in 2010 to 186 items in 2021. The transition to the Maturity Stage (2022–2024) is identified by the stabilisation of annual volume at a higher plateau (mean = 281.7). Although the cumulative total continues to increase, the CAGR declined to 6.1% during this final phase. The observed deceleration in the growth slope suggests that the field has entered an early stage of maturity.
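The growth rate quoted above can be checked from the two endpoint values. The sketch below assumes the standard CAGR definition, (end / start)^(1 / periods) - 1, with the number of periods taken as the difference between the end and start years; the function name is hypothetical, and the exact convention used in the study is not stated.

```python
def cagr(start_value, end_value, periods):
    """Compound annual growth rate over the given number of yearly steps."""
    if start_value <= 0 or periods <= 0:
        raise ValueError("start_value and periods must be positive")
    return (end_value / start_value) ** (1.0 / periods) - 1.0


# Growth Stage endpoints quoted in the text: 15 filings (2010), 186 (2021).
growth_stage_cagr = cagr(15, 186, 2021 - 2010)
```

Under these assumptions the result is close to the reported 25.6%; a small difference could arise from rounding or from a different period convention.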

Life cycle stage chart of the number and cumulative number of patent applications for AI-telemedicine.

Overall, the TLC of AI-telemedicine exhibits three distinct stages: the Introduction Stage (1992–2009), the Growth Stage (2010–2021) and the Maturity Stage (2022–2024). As the technology has not yet entered the Decline Stage, this paper adopts this division to analyse its technological evolution.

Formation of technical topics

The analytical workflow involved merging patent titles and abstracts, performing word segmentation, removing stop words, constructing a bag-of-words representation, and calculating coherence and perplexity scores. After the optimal K had been selected, an LDA model was trained separately for each time period. This process identified 8 topics for Stage 1 (1992–2009), 11 for Stage 2 (2010–2021) and 9 for Stage 3 (2022–2024). Topics were derived from word-topic and document-topic probabilities, with the ten highest-probability words in each topic selected as central terms. Tables 5 to 7 present the topics and their term strengths for each phase. Term strength denotes the probability of each keyword appearing within a given topic, as generated by the LDA model; higher values indicate greater importance of the term in defining the topic.
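The preprocessing steps listed above (merging, segmentation, stop-word removal and bag-of-words construction) can be sketched with the standard library alone. The stop-word list, the tokenisation pattern and the function names are illustrative assumptions, not the settings used in the study; the `(token_id, count)` output format is chosen to resemble the bag-of-words structure that Gensim models consume.

```python
import re
from collections import Counter

# Hypothetical stop-word list; a real patent corpus would need a longer,
# domain-specific list chosen by inspecting the data.
STOP_WORDS = {"a", "an", "and", "the", "of", "for", "to", "in", "is", "with"}


def tokenize(title, abstract):
    """Merge title and abstract, lowercase, split and drop stop words."""
    text = f"{title} {abstract}".lower()
    tokens = re.findall(r"[a-z0-9]+(?:-[a-z0-9]+)*", text)
    return [t for t in tokens if t not in STOP_WORDS]


def build_vocabulary(docs):
    """Assign an integer id to each distinct token, in order of appearance."""
    vocab = {}
    for tokens in docs:
        for token in tokens:
            vocab.setdefault(token, len(vocab))
    return vocab


def bag_of_words(tokens, vocab):
    """Return sorted (token_id, count) pairs for one document."""
    counts = Counter(vocab[t] for t in tokens if t in vocab)
    return sorted(counts.items())


# Hypothetical example document.
tokens = tokenize(
    "Remote surgical robot",
    "A robot for the remote control of surgical tools",
)
vocab = build_vocabulary([tokens])
bow = bag_of_words(tokens, vocab)
```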

Topics of Stage 1 (1992–2009).

Note: ‘Strength’ refers to the LDA-generated probability of each term within its topic, indicating the term's relative importance in defining the topic.

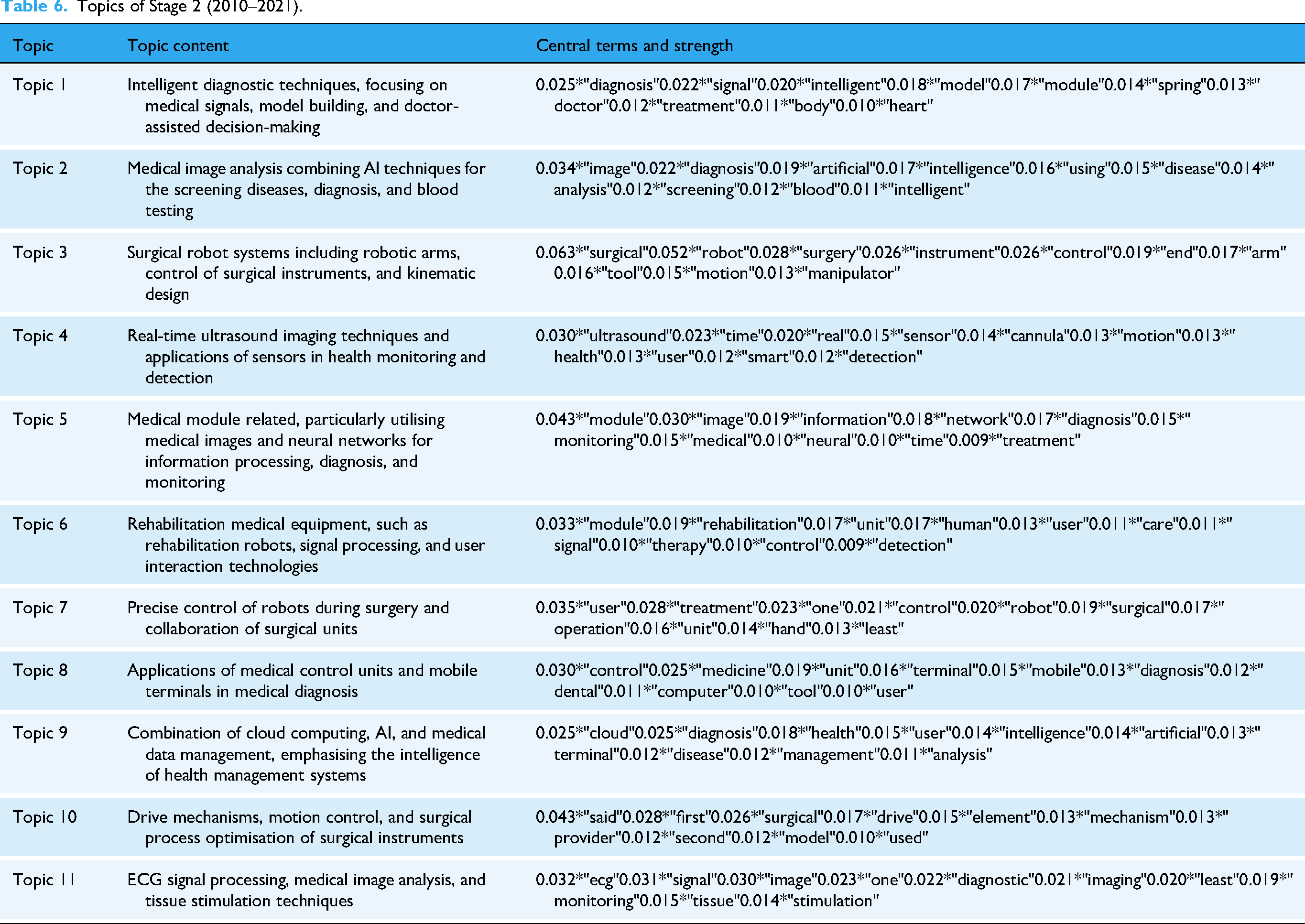

Topics of Stage 2 (2010–2021).

Topics of Stage 3 (2022–2024).

Construction of the technology evolution diagram

To analyse technological evolution, cosine similarity was used to measure thematic continuity between topics in consecutive stages. A higher similarity score corresponds to a thicker connecting line, indicating stronger topic correlation. 48 Based on the calculated topic relevance and strength, a Sankey diagram (Figure 3) was constructed to visualise the technological trajectory of AI-telemedicine (1992–2024). The resulting trajectories were also used to cross-check the hotspots identified through SNA.

Sankey diagram of topic evolution across three TLC stages. Each node represents an LDA-derived topic, with node size proportional to its prevalence. Colours distinguish topics; flow thickness and transparency indicate cosine similarity across phases. For the full list of topic descriptions, see Tables 5 to 7.
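The similarity-based linking of topics can be illustrated as follows. This sketch represents each topic as a sparse mapping from term to probability and keeps only the leading terms quoted in this paper, so the values it produces are not those underlying Figure 3. The function names, the topic labels and the 0.1 threshold are assumptions made for illustration; the study does not state how topic vectors were built or which cut-off was applied.

```python
import math


def cosine_similarity(topic_a, topic_b):
    """Cosine similarity between two sparse term-probability vectors."""
    shared = set(topic_a) & set(topic_b)
    dot = sum(topic_a[t] * topic_b[t] for t in shared)
    norm_a = math.sqrt(sum(v * v for v in topic_a.values()))
    norm_b = math.sqrt(sum(v * v for v in topic_b.values()))
    if norm_a == 0.0 or norm_b == 0.0:
        return 0.0
    return dot / (norm_a * norm_b)


def sankey_links(earlier, later, threshold=0.1):
    """Return (source, target, value) triples for sufficiently similar topics."""
    links = []
    for src, terms_a in earlier.items():
        for dst, terms_b in later.items():
            sim = cosine_similarity(terms_a, terms_b)
            if sim >= threshold:
                links.append((src, dst, sim))
    return links


# Leading terms as quoted in the paper; all remaining terms are omitted.
stage1 = {"S1-T1": {"patient": 0.188, "site": 0.117, "data": 0.056, "network": 0.038}}
stage2 = {"S2-T3": {"surgical": 0.063, "robot": 0.052, "control": 0.026}}
stage3 = {"S3-T1": {"surgical": 0.045, "robot": 0.042, "control": 0.016, "module": 0.014}}

links_2_to_3 = sankey_links(stage2, stage3)
links_1_to_3 = sankey_links(stage1, stage3)
```

On these truncated vectors, the two surgical-robot topics are linked while the early patient-data topic shares no listed terms with Stage 3-Topic 1 and so produces no link. Triples of this form could then be mapped to node indices and passed to a Sankey plotting routine such as Plotly's `go.Sankey`; that step is left out here.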

This section examines the overall technological evolution of AI-telemedicine, as illustrated in Figure 3 and Tables 5 to 7.

Core technology of long-term evolution

Remote surgical robots integrating precise manipulation and intelligent data analysis are recognised as a core technology in the long-term evolution of AI-telemedicine. This technology has exhibited strong connectivity and clear technological continuity in the evolutionary diagram (Figure 3). Stage 1-Topic 2 (‘link’ 0.128, ‘device’ 0.082, ‘robotic’ 0.070…) focused on surgical robot hardware components and connectivity at the physical layer. Stage 2-Topic 3 (‘surgical’ 0.063, ‘robot’ 0.052, ‘control’ 0.026…) emphasised the implementation and precise control of surgical robots, while Topic 7 (‘user’ 0.035, ‘treatment’ 0.028, ‘control’ 0.021…) highlighted human–machine collaboration, optimising user interaction and treatment control strategies. Topic 10 (‘said’ 0.043, ‘surgical’ 0.026, ‘drive’ 0.017…) addressed driving mechanisms and intelligence in surgical procedures. Ultimately, these technological elements converged in the mature phase into a systemic framework centred on intelligent control of surgical robots (Stage 3-Topic 1: ‘surgical’ 0.045, ‘robot’ 0.042, ‘control’ 0.016, ‘module’ 0.014…).

These longitudinal patterns derived from topic modelling are echoed in recent clinical and technical research. Tian et al. 49 reported successful application of 5G-based telerobotic spinal surgery in 12 patients, achieving precise pedicle screw placement with a mean deviation of just 0.76 mm. Similarly, Li et al. 50 presented robust evidence for the feasibility of 5G-assisted and robot-assisted laparoscopic nephrectomy, with 29 remote procedures conducted at a median delay of 26 ms and no major complications. Furthermore, Marwaha et al. 51 emphasised that the digital transformation of surgery increasingly depends on machine vision, remote monitoring and wearable feedback loops to support intraoperative decision-making. This trend reflects the systemic integration of intelligent control, sensing and procedural coordination identified in Stage 3-Topic 1 of our analysis.

Technical competition and substitution

The evolutionary diagram (Figure 3) illustrates that AI-driven multi-modal data fusion has gradually supplanted conventional remote data transmission models. Stage 1-Topic 1 (‘patient’ 0.188, ‘site’ 0.117, ‘data’ 0.056, ‘network’ 0.038…) and Topic 7 (‘network’ 0.027, ‘medical’ 0.027, ‘site’ 0.027, ‘image’ 0.026…) exhibit weak correlations with subsequent stages and did not persist, suggesting that foundational technologies such as patient monitoring, basic data processing and early image transmission were eventually marginalised and replaced by more advanced integration strategies.

This pattern is mirrored in real-world patent innovations. For instance, Fan et al. 52 developed an intraoperative data optimisation system that leveraged local AI processing to address the bandwidth constraints and latency risks of real-time surgical environments. Another patent 53 introduced an AI-driven diagnostic workstation that integrated clinical metrics, genomic data and imaging outputs for cancer risk assessment, illustrating the shift from segmented to fused-data systems in clinical contexts. Further reinforcing this trend, a recent US patent 54 described a cognitive medical collaboration platform that enabled synchronous and asynchronous multimodal communication between clinicians, AI agents and robotic systems. While these developments are in line with the shift towards data-rich environments, certain studies highlight a contradictory reality in clinical practice.15,17 Despite patent-level maturity, the persistence of data silos and a lack of unified interoperability standards often hinder the transition from transmission-only models to fused-data systems, particularly in multi-institutional settings. This suggests that while multimodal fusion is the established technological goal, its practical substitution of conventional models remains constrained by the current state of digital health infrastructure.

Technology differentiation and integration

The evolution of telemedicine technology shows both differentiation and integration: technologies diverge into specialised branches that address diverse needs, then converge into new forms at key stages. Figure 3 illustrates that Stage 1-Topic 2 branches into Stage 2 Topics 3, 7 and 10, which later integrate into Stage 3-Topic 1, reflecting complex yet patterned development. The analysis of AI surgical robots in ‘Core technology of long-term evolution’ supports this trend. Additionally, Stage 1-Topic 6 (‘operation’ 0.309, ‘control’ 0.209, ‘medical’ 0.108…), which focuses on surgical manipulation, links strongly to Stage 2-Topic 9 (‘cloud’ 0.025, ‘diagnosis’ 0.025, ‘intelligence’ 0.014…) and evolves into multi-branch technologies in Stage 3, enhancing personalised care.

As shown in Table 6, Stage 2-Topic 9 establishes a computational foundation via cloud computing and intelligent diagnosis, Topic 8 (‘control’ 0.030, ‘medicine’ 0.025, ‘mobile’ 0.015…) improves equipment flexibility and mobile care, and Topic 6 (‘module’ 0.033, ‘rehabilitation’ 0.019, ‘care’ 0.011…) targets modular rehabilitation. These converge into Stage 3-Topic 7 (‘user’ 0.046, ‘information’ 0.023, ‘plan’ 0.015…), forming a user-centric medical planning system. Enabled by AI and 5G, cross-domain integration boosts diagnostic and therapeutic precision, shifting telemedicine from universal to individualised interventions, reflecting a transition from specialisation to data fusion for precision medicine.

For instance, Beijing Tiantan Hospital developed a robotic vascular intervention system that adjusted control parameters in real time based on vascular condition indicators, enabling personalised endovascular procedures. 55 Moreover, an AI-based surgical path planning algorithm was proposed to generate individualised trajectories by analysing patient-specific bone and joint structures, thereby improving intraoperative accuracy. 56 In rehabilitation, multifunctional AI-powered modules were designed to predict patient movement intent and dynamically tailor therapy programs, optimising personalised recovery strategies. 57 Extending into home care, a patented system applied risk stratification based on elderly individuals’ medical histories and environmental parameters, enabling adaptive monitoring and early warning functions. 58 These advancements provide evidence of the technical feasibility of precision telemedicine. 57 Nonetheless, studies on clinical implementation highlight that the increasing complexity of integrated systems presents nuanced challenges, particularly regarding user-end accessibility and the potential for a digital divide in resource-limited settings. 19 This suggests that while the technological framework for personalised care is maturing, achieving its full potential requires addressing the practical barriers to equitable deployment identified in clinical practice.

Breakthroughs in new technologies

From 2010 to 2021, AI-telemedicine entered the period of accelerated growth, with rapid advancements in medical image analysis and disease prediction. As illustrated in Figure 3, Stage 2-Topic 2 (‘AI-based medical image disease screening and diagnosis’) lacks clear links to Stage 1, suggesting a novel breakthrough. It exhibits strong connections to Stage 3-Topic 8, evolving into ‘deep learning-based medical image analysis and early disease prediction’, underscoring enhanced precision and intelligence.

Deep learning and multimodal data fusion have progressively transformed medical image analysis from basic transmission systems into intelligent diagnostic frameworks.59,60 Representative advances include 3D reconstruction systems from limited imaging data, 61 AI-based models for pulmonary nodule analysis 62 and teleconsultation platforms integrating AI-enhanced visual annotation for remote surgical collaboration. 63 In parallel, AI applications have expanded into disease prediction, with machine learning models used for risk assessment in conditions such as dengue fever, tuberculosis, malnutrition and foetal complications. 64 Patented systems in postoperative care further demonstrate the integration of patient records and predictive modules to evaluate recovery trajectories and complications. 65 Nevertheless, challenges persist regarding the explainability of deep learning models and the security of cloud-based data fusion. 15 Collectively, these developments illustrate a strategic shift from image-based diagnostics to predictive analytics. While this transition improves the preventative capabilities of telemedicine, its clinical success remains contingent on addressing the technical and ethical complexities identified in practical application.

Trend validation and forward projection

To strengthen the empirical robustness of the topic modelling results, we conducted a quantitative validation of temporal trends for four hotspot technologies based on annual patent application data.

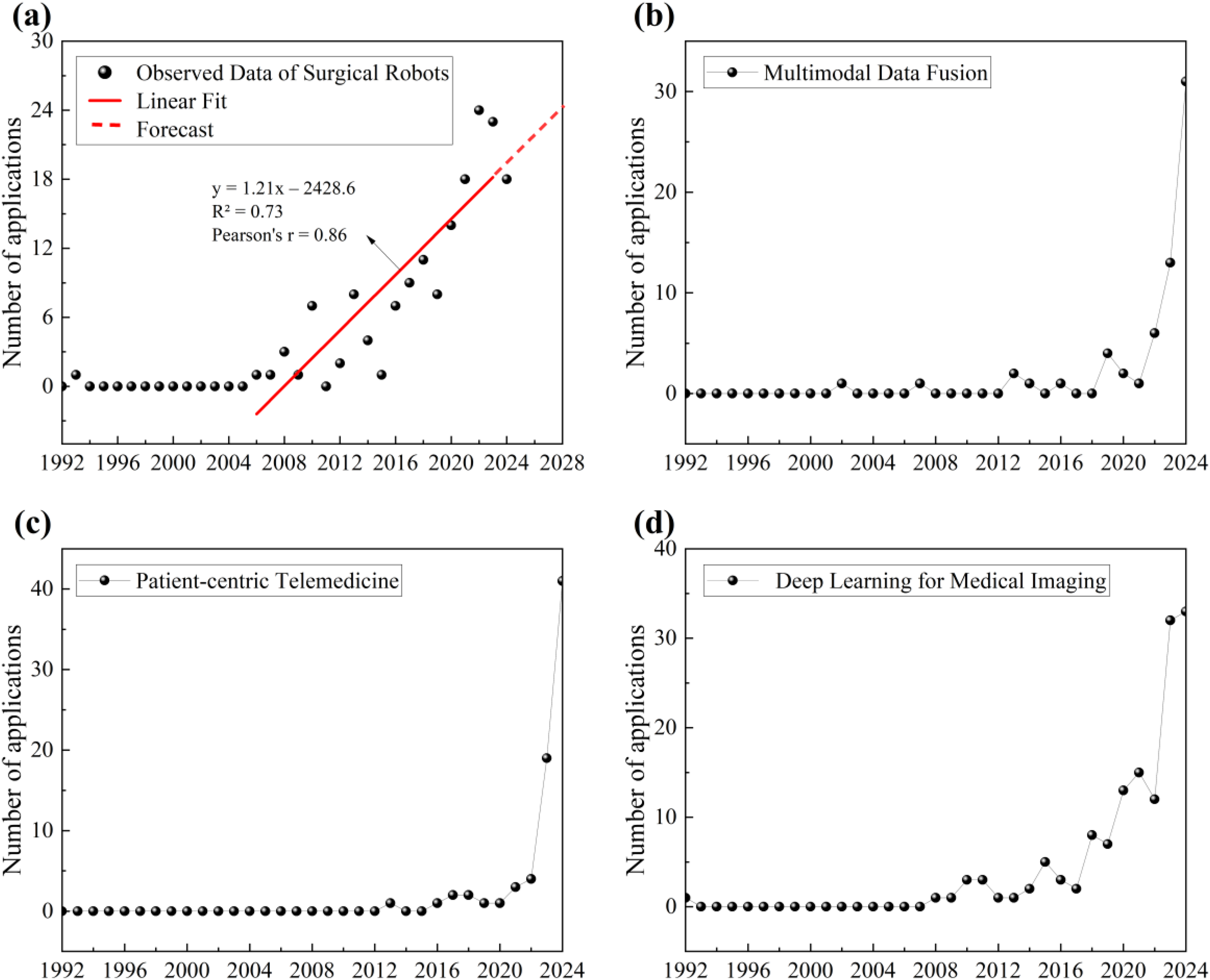

As shown in Figure 4(a), surgical robots exhibit a steady upward trajectory over the past two decades. A linear regression was fitted to the period from 2006 to 2023 to capture the emergence of sustained filing activity. The year 2024 was excluded from the regression because of the typical 0–18-month delay between application and publication: as the data were retrieved in mid-2025, filings from 2024 are only partially disclosed, and the figure underestimates the actual volume. For completeness, the 2024 value is shown for reference. The regression results (R² = 0.73, r = 0.86) indicate a statistically significant linear trend. A simple forward projection was added to illustrate the likely continuation of this growth.

Figure 4. Annual patent application trends of four hotspot technologies (1992–2024). (a) Surgical robots: a linear regression confirms an upward trend (R² = 0.73, r = 0.86), with the forecast shown as a dashed line. (b) Multimodal data fusion. (c) Patient-centric telemedicine. (d) Deep learning for medical imaging.
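The regression described for panel (a) can be sketched as an ordinary least squares fit of annual filing counts on year, restricted to 2006–2023, followed by a straight-line projection. This is a minimal sketch under stated assumptions: the counts below are hypothetical placeholders rather than the study's data, and the helper names `fit_linear_trend` and `t_statistic` are introduced here for illustration.

```python
from math import sqrt


def fit_linear_trend(years, counts):
    """Ordinary least squares fit of counts on years.

    Returns (slope, intercept, r, r_squared).
    """
    n = len(years)
    mean_x = sum(years) / n
    mean_y = sum(counts) / n
    sxx = sum((x - mean_x) ** 2 for x in years)
    syy = sum((y - mean_y) ** 2 for y in counts)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(years, counts))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    r = sxy / sqrt(sxx * syy)
    return slope, intercept, r, r * r


def t_statistic(r, n):
    """t statistic for H0: slope = 0 in simple linear regression (n - 2 d.f.)."""
    return r * sqrt((n - 2) / (1 - r * r))


# Hypothetical annual filing counts for illustration only; NOT the study's data.
annual_counts = {
    2006: 1, 2007: 2, 2008: 2, 2009: 4, 2010: 3, 2011: 5, 2012: 6,
    2013: 5, 2014: 8, 2015: 9, 2016: 8, 2017: 11, 2018: 12, 2019: 14,
    2020: 13, 2021: 16, 2022: 18, 2023: 17,
    2024: 9,  # partially disclosed year, shown for reference but not fitted
}

FIT_START, FIT_END = 2006, 2023  # 2024 is left out because of publication lag
fit_years = [y for y in sorted(annual_counts) if FIT_START <= y <= FIT_END]
fit_counts = [annual_counts[y] for y in fit_years]

slope, intercept, r, r_squared = fit_linear_trend(fit_years, fit_counts)
projection = {year: intercept + slope * year for year in (2024, 2025, 2026)}

print(f"slope = {slope:.2f} filings/year, r = {r:.2f}, R^2 = {r_squared:.2f}")
print(f"t = {t_statistic(r, len(fit_years)):.2f} on {len(fit_years) - 2} d.f.")
print({year: round(value, 1) for year, value in projection.items()})
```

In simple linear regression R² is the square of r, which is consistent with the reported pair (0.73, 0.86) up to rounding. Assuming one observation per year for 2006–2023 (n = 18), the reported r = 0.86 corresponds to t ≈ 6.7 on 16 degrees of freedom, well above the two-sided 5% critical value of about 2.12.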

For the other three technologies, namely multimodal data fusion (Figure 4(b)), patient-centric telemedicine (Figure 4(c)) and deep learning for medical imaging (Figure 4(d)), no regression was fitted due to recent data surges or limited historical stability. Although 2024 data may be incomplete due to publication lag, the consistent rise across all three technologies supports their classification as emerging hotspots. These findings, along with the overall growth observed in AI-telemedicine patents (Figure 2), substantiate the topic modelling outcomes and indicate varying levels of technological maturity.

Practical implications

This study positions AI-telemedicine in the early maturity stage (Figure 2), marked by sustained cumulative growth, a stabilising annual filing rate and rising innovation pressure. According to TLC theory (Table 4), this stage requires both continuous innovation and strategic consolidation, making it a critical window for forward-looking policy and investment actions.

Informed by the technology evolution analysis and supported by the trend patterns illustrated in Figure 4, three priority domains are identified for strategic attention: surgical robotics, multimodal data fusion infrastructure and explainable AI in medical imaging. These domains reflect a shift towards more integrated AI-telemedicine systems, as evidenced by the increasing co-occurrence of data, algorithmic and system-level themes, and a growing emphasis on interoperability, model transparency and coordinated system design, rather than isolated technological development. From a system perspective, the progression towards more personalised and data-intensive telemedicine also raises challenges related to access, inclusiveness and institutional capacity, especially across heterogeneous healthcare settings. Addressing these issues at the early maturity stage may influence how technological consolidation affects the long-term structure and accessibility of AI-telemedicine systems.

Limitations

While this study offers an integrated perspective on the technological evolution of AI-telemedicine, several limitations merit critical attention. First, patent data are inherently biased towards commercialisable innovations, as patenting behaviour is influenced by national policies and institutional environments. Variations in intellectual property regulations and government subsidies across jurisdictions may result in a dataset that reflects policy-driven incentives rather than purely technical activity. 27 Second, the dataset is constrained by the strategic choices of innovators. Foundational AI algorithms are often retained as trade secrets or released through open-source channels to avoid public disclosure, which may lead to the underrepresentation of certain core technologies.25,66 Third, the statutory disclosure lag of up to 18 months between patent application and publication means that data for the most recent period of analysis are inherently incomplete, providing a conservative estimate of recent technological activity. 67

Moreover, the LDA model employed in this study relies on probabilistic word co-occurrence patterns. While this approach is suitable for large-scale semantic extraction, it may oversimplify complex technical meanings, particularly in interdisciplinary contexts. Although coherence and perplexity scores were used to optimise the number of topics, some thematic overlap and a residual risk of underfitting or overfitting cannot be fully excluded.35,36 Finally, the reliance on patent titles and abstracts, rather than full texts, introduces variability in terminological richness and technical detail, which may affect the semantic resolution of the extracted topics.
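For readers unfamiliar with the perplexity criterion mentioned above, the sketch below applies its standard definition: the exponential of the negative average per-token log-likelihood, where each token's probability is the document's topic mixture applied to the topic-word distributions. Lower values indicate a better fit, and a model that spreads probability uniformly over V terms scores exactly V. The tooling used in the study is not specified in this section, so the function name, the toy corpus and the probability floor are assumptions made for illustration.

```python
from math import exp, log


def perplexity(docs, doc_topic, topic_word, floor=1e-12):
    """Perplexity of a topic model on tokenised documents.

    docs       : list of token lists
    doc_topic  : doc_topic[d][k] = weight of topic k in document d
    topic_word : topic_word[k] = {term: probability under topic k}
    floor      : assumed lower bound that avoids log(0) for unseen terms
    """
    total_log_likelihood = 0.0
    total_tokens = 0
    for d, tokens in enumerate(docs):
        for term in tokens:
            # p(term | document) = sum over topics of theta_dk * phi_k(term)
            p = sum(
                theta * topic_word[k].get(term, 0.0)
                for k, theta in enumerate(doc_topic[d])
            )
            total_log_likelihood += log(max(p, floor))
            total_tokens += 1
    return exp(-total_log_likelihood / total_tokens)


# Toy corpus and hypothetical model parameters, for illustration only.
docs = [["robot", "control", "robot"], ["image", "diagnosis", "image"]]
topic_word = [
    {"robot": 0.6, "control": 0.3, "image": 0.05, "diagnosis": 0.05},
    {"robot": 0.05, "control": 0.05, "image": 0.6, "diagnosis": 0.3},
]
doc_topic = [[0.9, 0.1], [0.1, 0.9]]

print(round(perplexity(docs, doc_topic, topic_word), 3))
```

In practice the score is computed on held-out documents for each candidate number of topics and read alongside coherence, since perplexity alone tends to favour larger models that are harder to interpret.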

Conclusions and policy recommendations

This study examined the technological evolution of AI-telemedicine and identified key innovation hotspots and evolutionary trajectories using an integrated approach combining knowledge network analysis and topic modelling. Four major trends were observed. First, remote surgical robotics has evolved from a focus on hardware optimisation towards more intelligent control and system integration, forming an important component of high-risk AI-telemedicine applications. Second, multimodal data fusion has moved beyond basic data transmission to support more coordinated and responsive remote care, highlighting its infrastructural role. Third, AI-telemedicine exhibits concurrent differentiation and integration, with AI and 5G technologies contributing to the transition from generalised services towards more personalised, patient-centric healthcare models. Fourth, deep learning and predictive analytics have expanded the role of medical imaging, extending it from diagnostic support to broader applications in prediction and clinical decision-making.

Building on these findings, several policy recommendations can be outlined. Regulators and standard-setting bodies should prioritise the development of interoperability and data-governance standards to support secure and scalable multimodal data exchange across telemedicine systems. In parallel, health authorities and payers may facilitate adoption through clearer clinical validation and procurement pathways for higher-risk AI-telemedicine applications, such as telesurgery and remote monitoring, accompanied by appropriate post-market surveillance mechanisms. In addition, medical AI evaluation protocols and controlled regulatory sandbox approaches may help manage model updates and transparency requirements while allowing for iterative development. Finally, reimbursement frameworks and implementation strategies should take equity considerations into account, in order to reduce the risk that underserved populations are excluded as AI-telemedicine systems expand.

To support evidence-based policy design and implementation, future research can extend this work in two directions. One involves integrating patent-based topic models with scientific and funding metadata, such as citation networks, co-authorship patterns or grant distributions, to better contextualise the relationship between technological invention and academic knowledge production. The other calls for empirical investigations of AI-telemedicine systems in clinical practice, aiming to validate how closely disclosed innovation trajectories align with real-world effectiveness and societal impact.

Supplemental Material

Supplemental material, sj-pdf-1-dhj-10.1177_20552076261434054 for Artificial intelligence in telemedicine: Topic modelling and network analysis of patents (1992–2024) by Ruyi Xiao, Shiya Ma, An Lu, Weihong Shi and Jin Zhang in DIGITAL HEALTH

Footnotes

Author contributions

R.X.: conceptualisation, methodology, formal analysis, writing – original draft, visualisation. S.M.: writing – review & editing. A.L.: writing – review & editing. W.S.: writing – review & editing. J.Z.: conceptualisation, methodology, writing – review & editing, supervision, project administration.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the 2024 Innovative Talents International Cooperation Training Program (Project No. 202407070131) of the China Scholarship Council, the National Intellectual Property Administration (NIPA) research project ‘Analysis of Several Legal Issues on Patent Data Ownership’ (Project No. ZX202306) and the Key Laboratory of Fluid and Power Machinery, Ministry of Education, Xihua University (Project No. LTDL-2024004).

Declaration of conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Data availability statement

Data generated during the current study are available from the corresponding author on reasonable request.

Supplemental material

Supplemental material for this article is available online.

References
