Abstract

Objective

Ambient listening with subsequent artificial intelligence enabled medical record documentation is changing how physicians interact with the electronic health record (EHR). We studied primary care physician use, documentation time, and changes in note metrics associated with ambient listening technology.

Methods

We calculated the percentage of physicians adopting the ambient listening tool, Abridge. Note input contributed by Abridge was determined by the percentage of total characters in generated notes. Using the Epic EHR Signal metrics, we analyzed the change in note documentation time and note length for Abridge users before and after they implemented Abridge in their note generation process.

Results

The percentage of physicians using Abridge increased from 15% to 50% in 8 weeks. Adoption was also reflected in the percentage of note content attributable to ambient listening technology: note content generated by Abridge tripled, from 5% to 15%, over the same 8 weeks. In a paired comparison of 332 primary care physicians, mean time in notes per note was 4.16 minutes [confidence interval (CI) 95%; 3.86, 4.46] after Abridge adoption compared to 5.11 minutes [CI 95%; 4.75, 5.47] before Abridge. Mean time saved per note after Abridge was 0.95 minutes [CI 95%; 0.48, 1.42], p < 0.0001, an 18.6% reduction in time. Mean note length for Abridge adopters increased by 238 characters [CI 95%; 89, 387], p < 0.0001, from a pre-implementation mean of 4404 characters per note (+5.4%).

Conclusion

Ambient listening had rapid uptake and widespread use by physicians in primary care. It was also associated with a significant decrease in time in notes.

Introduction

The demands of medical documentation pose a significant challenge to modern healthcare. Studies have shown that physicians spend a substantial portion of their time on electronic health record (EHR) tasks, such as documenting patient encounters, which has led to reduced time for direct patient interaction and decreased job satisfaction.1–3 In response to these challenges, various strategies have been implemented to streamline the medical record documentation process.

Human scribes, trained documentation staff who accompany physicians and other clinicians during patient encounters, have been used for this purpose for many years.4–11 Scribes have been demonstrated to increase provider efficiency by decreasing provider time spent on documentation.4,6,7 By using scribes, providers are able to spend more face time with patients, improve provider and patient satisfaction, and increase revenue.7,9,12 This has been thought to represent a win–win situation for a medical practice. The practice saves money through increased provider efficiency, and providers avoid the documentation burden of writing notes.6,7,11

Artificial intelligence (AI) software has only recently begun to compete with human scribes. A systematic review in 2023 found only eight published articles that studied how automatic speech recognition tools affected physician–patient interactions and documentation of patient encounters.13

Ambient AI scribes combine ambient listening with AI documentation and are effectively replacing human scribes. Ambient listening during a patient encounter occurs through a cell phone or tablet that the provider places in a location to achieve adequate sound quality. The AI software then constructs a medical note based on content summarized from ambient listening.

There are a number of ambient listening AI tools now available, including Abridge, Nuance DAX (Dragon Ambient eXperience), and others.14–16 Several features make ambient listening AI tools attractive to medical practices and providers. A qualitative study of providers’ views of Nuance DAX found that physicians had positive experiences with temporal demand and engagement with patients.17 The same study also found that providers reported positive experiences concerning ease of use of the software, cognitive demand, workload, and work–life balance.17 Quantitative studies have also shown a significant decrease in note documentation time with ambient listening.18,19

In this study we examined the Mayo Clinic implementation of Abridge software, an AI ambient listening and documentation tool. We examined primary care physicians’ adoption and use of Abridge and the percentage of note content attributable to Abridge, and we quantified physicians’ time in note documentation before and after use of Abridge.

Methods

Setting

This retrospective study took place at primary care practices within Mayo Clinic from 28 April 2024 through 19 April 2025.

Mayo Clinic is a multispecialty, multisite healthcare institution with locations both in the United States and internationally and has over 1.3 million outpatient visits annually. Our study was limited to physicians (MD/DO/MBBS/MBBCh) in the Mayo Clinic primary care practice.

The Abridge ambient listening and documentation process

Abridge is the specific ambient listening tool that has been integrated into the Mayo Clinic Epic® EHR documentation workflow process. Abridge was available on iPhones or iPads within the Epic Haiku® or Canto® app. No Abridge EHR integration other than through Epic Haiku or Canto was used by physicians during this study.

In the Epic Haiku app (iPhones) or Epic Canto app (iPads), physicians selected their appointment schedule and selected the patient's name to access Abridge ambient listening. With patient verbal consent, the physician selected the Abridge icon in the patient appointment screen to initiate ambient listening. The ambient listening setup and consent usually took less than 30 seconds. To complete the listening process, the physician pressed “Create Note” on the app and the documentation summary process began. Within a few minutes the note populated in the EHR, ready for editing and signing.

Abridge integration into primary care

Our study included four primary care physicians who participated in a 4-week Abridge proof-of-concept study, ending in November 2023, before Abridge was fully integrated in the Mayo Clinic Epic EHR in 2024. Other than those four physicians, no primary care physicians in our study had experience with Abridge use in the Mayo EHR prior to our study start on 28 April 2024.

Our study spanned four phases of Abridge use in primary care starting on 28 April 2024, as noted above. From initiation through week 12 (phase 1) there were no uses of Abridge in primary care note documentation. This was followed by a pre-pilot phase (phase 2) during weeks 13 through 15 in which 7 family medicine physicians tried Abridge. A larger pilot Abridge integration (phase 3) during study weeks 16 through 37 consisted of 71 physicians from pediatrics, family medicine, and internal medicine who used Abridge. At study week 38 (phase 4) Abridge was made available to all physicians in the Mayo ambulatory practice. This final Abridge rollout included all primary care physicians. The pre-pilot and pilot phases of integration were by invitation from practice leadership. Thus, pre-pilot and pilot Abridge users were not random subsets of physicians. Adoption and use of Abridge was entirely voluntary whether obtained by invite (in pre-pilot and pilot phases) or at study week 38 when all Mayo Clinic physicians had access to Abridge.

Data collection

We used weekly data for each unique physician in the community practices of family medicine, internal medicine, and pediatrics, summarized by Epic Systems from the Mayo Clinic EHR and stored in the Epic Signal data repository.

Epic Signal data includes weekly summaries of data concerning several different measures (Epic Signal metrics) such as counts of characters in notes attributed to ambient listening technology, and provider time in minutes spent in notes.

For this study we used Epic Signal metrics 1022, 317, and 1252. Epic Signal metric 1022 provides the number of characters in notes attributable to mutually exclusive sources of note content input. For Mayo Clinic, during the study, metric 1022 gave the character counts used by each of seven different character inputs for notes. Each week, every physician would have a total character count in their notes summed with each total separated into counts attributed to the following Epic defined character input processes: (1) Ambient listening/Abridge, (2) Copy/paste, (3) Manual (keyboard), (4) NoteWriter, (5) SmartTool, (6) Transcription, and (7) Voice recognition. It should be noted that the Epic character input process labeled “(7) Voice recognition” is provider voice input to verbatim text.

Ambient listening tools go well beyond verbatim text transcription from voice input. Abridge captures the patient–provider conversation, and, after selecting “Create Note,” starts a back-end AI process. AI enables the organizing, summarizing, and creation of paragraphs and headers that are placed in appropriate locations within the EHR note structure. The text output of Abridge captures relevant medical information from the patient–provider interaction and can appropriately leave out visit content that may not pertain to the reason for the visit.

Transcription (#6 above) is dictated voice to text. NoteWriter and SmartTool inputs (#4 and #5) noted above are customizable, highly automated text inputs implemented in Epic.

The summed total of all seven separate weekly character counts attributed to the seven input processes by each physician equaled the total character count in notes for each physician during that week. Thus, an increase in the ambient listening percentage would cause the sum of the six other input percentages to drop by the same number of percentage points.
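The share calculation described above can be illustrated with a minimal sketch. The character counts are invented for illustration and the function name `input_shares` is hypothetical; only the seven input labels come from the text.

```python
# Hypothetical weekly character counts for one physician-week, keyed by the
# seven Epic-defined input processes. The numbers are invented.
AMBIENT = "Ambient listening/Abridge"

week_counts = {
    AMBIENT: 50_000,
    "Copy/paste": 40_000,
    "Manual (keyboard)": 30_000,
    "NoteWriter": 20_000,
    "SmartTool": 210_000,
    "Transcription": 10_000,
    "Voice recognition": 140_000,
}


def input_shares(counts):
    """Return each source's percentage of the week's total note characters."""
    total = sum(counts.values())
    if total == 0:
        return {source: 0.0 for source in counts}
    return {source: 100.0 * n / total for source, n in counts.items()}


shares = input_shares(week_counts)
ambient_share = shares[AMBIENT]
# Because the seven sources are mutually exclusive and exhaustive, the other
# six shares always sum to 100 minus the ambient share.
other_share = sum(v for k, v in shares.items() if k != AMBIENT)
print(f"ambient: {ambient_share:.1f}%, other six sources: {other_share:.1f}%")
```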

The Epic Signal dataset was configured so that note character counts across the entire population of primary care physicians could be summed by week and by each physician. As such, we could obtain the percentage of the note text that was due to Abridge across all primary care by each week and the percentage use of Abridge for note content for each physician ambient user by each week.

Epic Signal metric 317 was a “time in notes” measure, meaning specifically that it calculated the average time spent writing notes per note. Epic Signal supplied each physician's “total time in notes” in minutes for each week, as the numerator, as well as “total notes” (the total number of notes written that week) as the denominator. The calculated time in notes divided by total notes was the Epic metric time in notes per note for each physician for that given week. Since this was measured for each provider for each week there were generally multiple physician-weeks of time in notes per note pre-implementation of Abridge ambient listening. For those who used Abridge, we also had physician-weeks of postimplementation time in notes per note from the same physicians. This pre- and post-Abridge time in notes per note for each physician was the source of data for our matched pairs analysis as described below.
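As a minimal sketch of the ratio described above, the weekly metric is total minutes in notes divided by total notes. The function name and the example values are hypothetical, and returning `None` for a week without notes is an assumption, since the source does not say how such weeks were handled.

```python
def time_in_notes_per_note(total_minutes, total_notes):
    """Weekly minutes in notes divided by weekly note count.

    Returns None when no notes were written that week (an assumed convention).
    """
    if total_notes == 0:
        return None
    return total_minutes / total_notes


# Hypothetical physician-weeks: (week, total minutes in notes, total notes).
weeks = [(1, 410.0, 80), (2, 385.0, 77), (3, 0.0, 0)]
per_note = [time_in_notes_per_note(minutes, notes) for _, minutes, notes in weeks]
print(per_note)
```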

Epic Signal metric 1252 was the average characters per note, also collected for each physician. It was calculated by the total number of characters in the notes for the collection week divided by the total number of notes within the collection week.

As explained above for Epic Signal metric 1022, the note character counts from the 7 input sources for each physician-week were summed separately and then divided by the total note character count for that week. For a hypothetical physician with 100 notes during a week with 50,000 total characters attributed to Abridge from a total week character count of 500,000, the Abridge input would be 10%. That 10% could have been from 10 physician notes of 5000 characters each, all with 100% Abridge input to make the 50,000 characters of Abridge input that week. The 50,000 Abridge characters that week could also have been from 20 physician notes with 2500 characters each from 5000-character notes (50% Abridge input), and so on. Of course, these examples leave many of those hypothetical notes without any Abridge input at all. As suggested by this example, there was likely variability among physicians in how they used Abridge to construct their notes, but we were unable to capture that with the aggregated weekly Epic Signal data. It should also be noted that patients could refuse ambient listening, which would mean the physician note for that encounter would have 0% Abridge input. Physicians could also have a patient encounter unsuitable for ambient listening and voluntarily decide not to use Abridge for that note documentation. Our data could only give us a weekly average of Abridge-associated content by physician-week. Histograms of an individual physician's frequency of note counts by percent Abridge content could not be generated from the weekly aggregated Epic Signal data.
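The hypothetical physician-week above can be reproduced in a short sketch showing that two different note-level patterns yield the same weekly aggregate. The note-level tuples are illustrative only; as stated, the Epic Signal data did not expose note-level values.

```python
def weekly_abridge_percent(notes):
    """notes: list of (abridge_chars, total_chars) pairs for one physician-week."""
    abridge = sum(a for a, _ in notes)
    total = sum(t for _, t in notes)
    return 100.0 * abridge / total


# Scenario 1: 10 notes entirely from Abridge, 90 notes with none
# (all notes 5000 characters long).
scenario_1 = [(5000, 5000)] * 10 + [(0, 5000)] * 90
# Scenario 2: 20 notes half from Abridge, 80 notes with none.
scenario_2 = [(2500, 5000)] * 20 + [(0, 5000)] * 80

pct_1 = weekly_abridge_percent(scenario_1)
pct_2 = weekly_abridge_percent(scenario_2)
# Both scenarios have 100 notes, 500,000 characters, and 50,000 Abridge
# characters, so the weekly aggregate cannot tell them apart.
print(pct_1, pct_2)
```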

Measures

Table 1 summarizes the measures used in this study. Our primary measures were Abridge ambient listening adoption by primary care physicians, percentage of total note text created by Abridge, and pre- to post-Abridge change in physician time in notes.

Table 1. Description of measures, calculations of measures, and data required.

Data analysis and statistics

Descriptive statistics were used in graphs showing the percentages of physicians who used Abridge and percentage of note content that was attributed to Abridge. If there were any characters in notes attributable to Abridge during a physician-week then the physician-week was categorized as Abridge use. Only those physician-weeks with zero characters in notes attributed to Abridge were Abridge nonuse weeks.
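The use/nonuse rule above can be sketched as follows. The physician identifiers and counts are invented, and taking the denominator to be all physicians with data in the given week is an assumption that the source does not spell out.

```python
def is_abridge_use_week(abridge_chars):
    """A physician-week counts as Abridge use if any characters came from Abridge."""
    return abridge_chars > 0


def percent_using(abridge_chars_by_physician):
    """Percentage of physicians with data this week who had any Abridge characters."""
    n = len(abridge_chars_by_physician)
    if n == 0:
        return 0.0
    users = sum(
        1 for chars in abridge_chars_by_physician.values()
        if is_abridge_use_week(chars)
    )
    return 100.0 * users / n


# Hypothetical week: Abridge character counts for four physicians.
example_week = {"A": 0, "B": 12_400, "C": 1, "D": 0}
adoption_pct = percent_using(example_week)
print(f"{adoption_pct:.1f}% of physicians used Abridge this week")
```

Note that under this rule a single Abridge character (physician "C") is enough to classify the week as a use week.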

For physicians with time in notes data both pre- and post-Abridge implementation, we used a matched pairs t-test to compare each physician's mean time in notes with and without Abridge use. Progress note length differences were analyzed by t-test mean comparisons. Linear regression was used to test for the association of percent Abridge content in notes (the continuous dependent variable) with physician's weeks of experience with Abridge (the continuous independent variable).
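A matched pairs comparison of this kind can be sketched with the Python standard library. This is an illustration under stated assumptions, not the authors' analysis code: the pre/post values are invented, and the confidence interval uses a normal quantile as an approximation to the t quantile, which is reasonable only for large samples such as the 332 pairs in this study.

```python
import math
import statistics


def paired_summary(pre, post):
    """Paired differences (pre minus post), their mean, t statistic, and ~95% CI."""
    diffs = [a - b for a, b in zip(pre, post)]
    n = len(diffs)
    mean_diff = statistics.fmean(diffs)
    se = statistics.stdev(diffs) / math.sqrt(n)  # standard error of the mean difference
    t_stat = mean_diff / se
    # Assumption: normal quantile (about 1.96) stands in for the t quantile.
    z = statistics.NormalDist().inv_cdf(0.975)
    return {
        "n": n,
        "mean_diff": mean_diff,
        "t": t_stat,
        "ci95": (mean_diff - z * se, mean_diff + z * se),
    }


# Hypothetical per-physician mean minutes per note, pre- and post-Abridge.
pre = [5.0, 6.0, 4.5, 7.0, 5.5]
post = [4.0, 5.5, 4.5, 5.0, 5.0]
summary = paired_summary(pre, post)
print(summary)
```

A positive mean difference here means more time in notes before Abridge than after. For small samples, a t-distribution quantile from a statistics package would replace the normal quantile.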

Results

We had data on 582 primary care physicians, 62.5% (364/582) in family medicine, 26% (151/582) in community internal medicine, and 11.5% (67/582) in community pediatrics. Figure 1 shows that 84% (503/582) of physicians had at least 30 weeks of data available for use to analyze.

Figure 1. Histogram counts of physicians by number of weeks of data available to use for analysis.

Physician adoption of Abridge ambient listening technology

Figure 2 shows the time course of primary care physician use of Abridge over the course of the study. This graphically recapitulates the four phases of the study described in the Methods section. There were no Abridge users from weeks 0 through 12 (phase 1). There was a pre-pilot phase of seven users from weeks 13 to 15 (phase 2). The pilot phase occurred from weeks 16 to 37 (phase 3) with as many as 71 Abridge adopters. From week 38 through week 50, Abridge was available to all primary care physicians (phase 4). Physician use of Abridge increased from 15.3%, at the conclusion of the pilot phase (week 37), to 49.8% in eight weeks. At the end of data collection, 13 weeks after Abridge had become widely available, physician usage was 58.4%.

Figure 2. Percentage of physicians over time using Abridge, the ambient listening tool. Pediatric is Community Pediatrics physicians, Family Med is Family Medicine physicians, and Internal Med is Community Internal Medicine physicians.

Note content contributed by Abridge ambient listening technology

Figure 3 shows the percentage of total text that was generated by Abridge week over week for the note content of the entire practice (black line), and just for Abridge users (red dots). After Abridge became available across the practice at week 38, the percentage of text in notes attributable to Abridge rose rapidly for the entire primary care practice. Some decreases in Abridge-created text by users were observed during the more general rollout of Abridge. These drops in Abridge-created note content were associated with some selective pauses in use based on practice requirements to standardize note templates used with Abridge. When the standardized note templates were disseminated, the content contributed by Abridge increased rapidly again after the pauses, as shown in Figure 3.

Figure 3. Percentage of total note text created in primary care with Abridge ambient listening week by week over the duration of the study. All primary care physicians (black connected line) and just those physicians using Abridge (red dots) are shown during those weeks.

Figure 3 also shows some evidence that for the Abridge adopters, the percentage of content attributable to Abridge continued to increase. For the seven users in the 3 weeks of pre-pilot phase, Figure 3 shows that up to 34% of their combined weekly note content was from Abridge. The average percentage of Abridge-generated content decreased with additional pilot users during the pilot phase. When the pilot phase ended and more general access was available at week 38, the combined user note content from Abridge increased to 28.0% by study end.

Wide variability was observed in the maximum weekly percentage of Abridge content in each physician's notes. Figure 4 shows that the 333 physicians who used Abridge had maximum weekly contributions to their notes from just above 0% to 79.6%. The regression line fitted to these data showed a significant slope increase of 0.5% per week of Abridge use [confidence interval (CI) 95%; 0.37 to 0.69] with a constant of 28.0 [CI 95%; 25 to 31, p < 0.0001]. To account for a possible carryover of Abridge experience from the four physicians involved in the proof-of-concept study, we carried out a sensitivity analysis to determine whether the linear regression findings were sensitive to the removal of the four physicians. Using only the 329 physicians without any prior Abridge use, the results were very similar to those including all 333 Abridge users in the study. The linear regression of the remaining 329 showed a slope increase of 0.5% per week of Abridge use [CI 95%; 0.35 to 0.68] with a constant of 28.1 [CI 95%; 26 to 31, p < 0.0001].
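For readers unfamiliar with how a slope and constant of this kind are obtained, the following is a minimal ordinary least squares sketch. The five data points are invented and deliberately placed on a straight line, so they illustrate the calculation only and are not study data; the function name `fit_line` is hypothetical.

```python
def fit_line(x, y):
    """Ordinary least squares fit of y = intercept + slope * x."""
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return slope, intercept


# Invented points: weeks of Abridge use vs. maximum weekly percent Abridge content.
weeks_used = [1, 5, 10, 20, 30]
max_pct = [28.5, 30.5, 33.0, 38.0, 43.0]

slope, intercept = fit_line(weeks_used, max_pct)
print(f"slope = {slope:.2f} percentage points per week, constant = {intercept:.1f}")
```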

Figure 4. Maximum weekly percentage of Abridge content in notes for each of 333 primary care physicians who had at least 1 week of Abridge use. Spikes (red) in graph are the 95% confidence intervals (CI 95%) of a fitted linear regression line showing a significant increase in physicians’ weekly percent Abridge note content associated with increasing number of weeks that a physician had used Abridge.

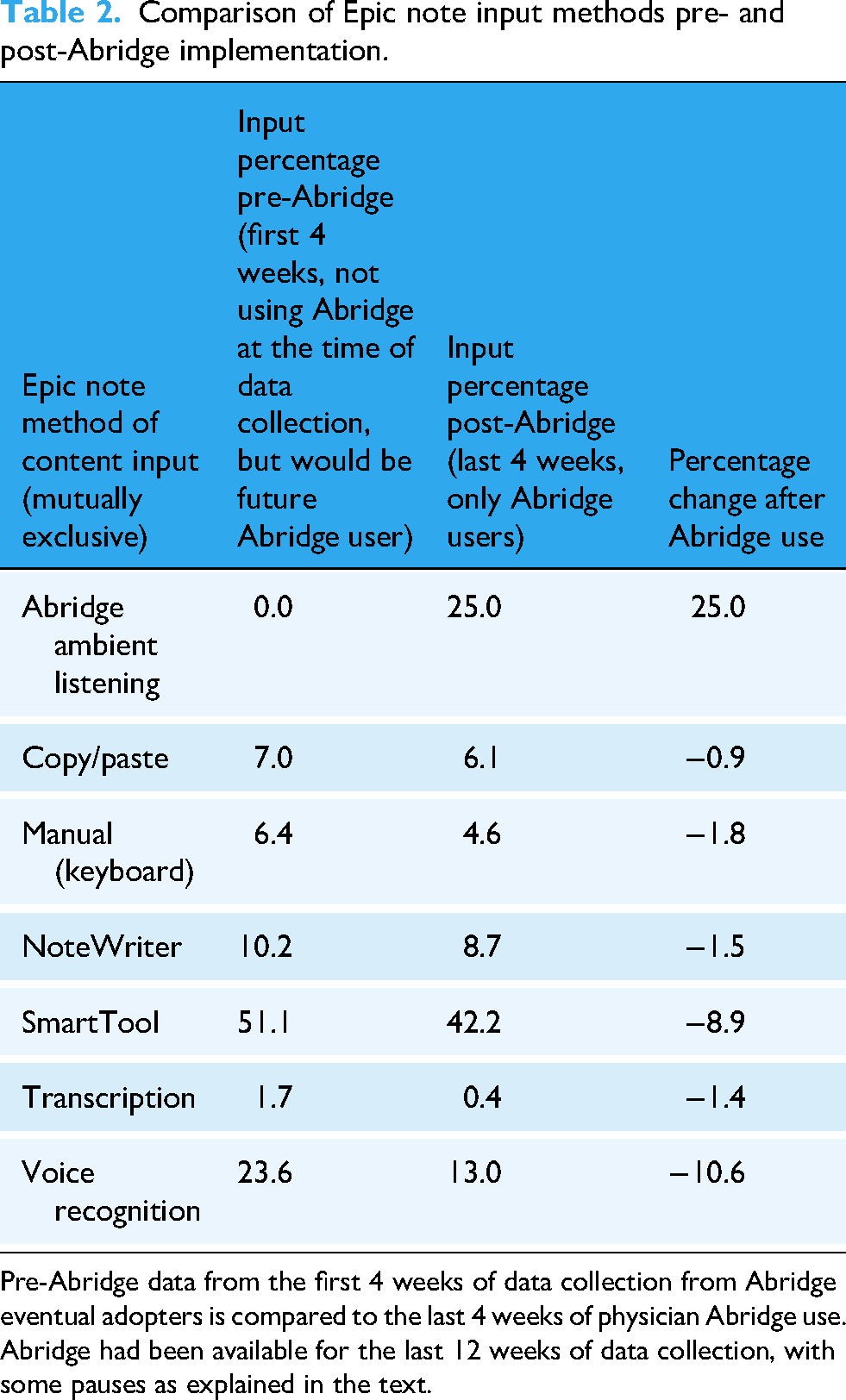

Change in note method of input before and after Abridge

Note input changed dramatically over time for those who used Abridge. For Abridge adopters, we compared the last 4 weeks of note input methods to the first 4 weeks (before Abridge was available). The first 4 weeks of data for this comparison were only from physicians who eventually adopted Abridge. Table 2 shows these results, which reveal a major shift in how note content was created for these Abridge adopters from before Abridge to after. Of note is the minimal reliance on transcription for these eventual Abridge users even before Abridge use (only 1.7% transcription content pre-Abridge). Also, there was an overall decrease in physician voice contributions in note content from 25.3% (1.7% transcription + 23.6% voice recognition) to 13.4% (0.4% transcription + 13% voice recognition). This was an 11.9 percentage point drop, a relative reduction of roughly 47% in nonambient physician voice input from pre- to post-Abridge implementation.

Table 2. Comparison of Epic note input methods pre- and post-Abridge implementation.

Pre-Abridge data from the first 4 weeks of data collection from eventual Abridge adopters are compared to the last 4 weeks of physician Abridge use. Abridge had been available for the last 12 weeks of data collection, with some pauses as explained in the text.

Table 3 shows a “control” group of Abridge nonadopters during the year-long study. We compared note input for the Abridge nonadopters in the first 4 weeks with their note input in the last 4 weeks of the study.

Table 3. Comparison of Epic note input methods over time for Abridge nonadopters during the study.

First 4 weeks of nonadopters are compared with the last 4 weeks of nonadopters.

Table 3 shows that there were minimal changes in input methods from the first 4 weeks to the last 4 weeks for Abridge nonadopters. We observed that the nonadopters of Abridge had 3.7 (6.3% / 1.7%) times the use of transcription at the start of the study compared to Abridge adopters. We also observed that the total voice input (the sum of transcription and voice recognition) was similar in adopters and nonadopters at the start of the study, despite the difference in transcription use. Abridge adopters at study start had voice input percentage of 25.3% (1.7% transcription + 23.6% voice recognition), while nonadopters at study start had voice input percentage of 26.1% (6.3% transcription + 19.8% voice recognition).

Change in note length after Abridge implementation

To determine the association of Abridge with note length, we compared the weekly note length averages of Abridge users for the last 4 weeks of the study with those of physicians identified as eventual Abridge adopters during the first 4 weeks. The mean note length of the Abridge users was 4643 characters [CI 95%; 4548, 4737] compared to a pre-Abridge mean note length of 4404 characters [CI 95%; 4288, 4521], showing a significant increase in note length of 238 characters [CI 95%; 89, 387], p < 0.0001 (+5.4%). For Abridge nonadopters, the mean note length for the first 4 weeks was 4147 characters [CI 95%; 3973, 4321], compared to 3829 characters [CI 95%; 3633, 4025] for the last 4 weeks, a significant decrease in note length of 318 characters [CI 95%; 56, 580], p = 0.017 (−7.7%).
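The percentage changes quoted above follow directly from the reported means and differences. The short check below uses only figures stated in the text; the helper name `percent_change` is hypothetical.

```python
def percent_change(baseline, change):
    """Change expressed as a percentage of the baseline value."""
    return 100.0 * change / baseline


# Abridge adopters: +238 characters on a pre-Abridge mean of 4404 characters.
adopter_pct = percent_change(4404, 238)
# Nonadopters: -318 characters on a first-4-weeks mean of 4147 characters.
nonadopter_pct = percent_change(4147, -318)

print(f"adopters: {adopter_pct:+.1f}%, nonadopters: {nonadopter_pct:+.1f}%")
```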

Physician time in notes pre- and post-Abridge implementation

Figure 5 shows the data flow for the pre-/post-Abridge analysis. We had data on 22,495 physician-weeks from 582 unique physicians. The physician Abridge use data (Epic Signal metric 1022) was merged with the physician time in notes per note (Epic Signal metric 317). This created a dataset containing both data on Abridge use and time in notes for each of the 582 physicians. Figure 5 shows how we obtained a final dataset of 332 physicians who each had data of time in notes for weeks both pre- and post-Abridge.

Figure 5. Data flow for obtaining matched pairs of pre- and post-Abridge physician time in notes.

We excluded 0.5% (117/22,495) of the physician-weeks of data because of outliers of time in notes per note greater than 30 minutes for those weeks. Exclusions of these physician-weeks did not exclude any physician from analysis; these outliers were mostly associated with note counts less than 10 for those excluded weeks. Thus, a large time in one or two notes had an oversize effect for the entire week, resulting in the exclusion. Only one physician who used Abridge was excluded from this analysis, because that physician had 1 week of Abridge use but no pre-Abridge weeks of data.
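The exclusion rule above can be sketched as a simple filter on physician-weeks. The rows are invented, and the field names and the function `split_outliers` are hypothetical; only the 30-minute threshold comes from the text.

```python
MAX_MINUTES_PER_NOTE = 30.0  # threshold stated in the text


def split_outliers(physician_weeks):
    """Separate physician-weeks into kept and excluded lists by minutes per note.

    Assumes every row has at least one note.
    """
    kept, excluded = [], []
    for row in physician_weeks:
        minutes_per_note = row["minutes"] / row["notes"]
        if minutes_per_note > MAX_MINUTES_PER_NOTE:
            excluded.append(row)
        else:
            kept.append(row)
    return kept, excluded


# Hypothetical rows: physician B has one low-volume week with two long notes.
rows = [
    {"physician": "A", "week": 3, "minutes": 400.0, "notes": 80},  # 5 min per note
    {"physician": "B", "week": 3, "minutes": 70.0, "notes": 2},    # 35 min per note
    {"physician": "B", "week": 4, "minutes": 300.0, "notes": 60},  # 5 min per note
]
kept, excluded = split_outliers(rows)
physicians_kept = {row["physician"] for row in kept}
print(len(kept), len(excluded), sorted(physicians_kept))
```

In this example the excluded week removes no physician from the dataset, because physician B still contributes another week.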

For the 332 physicians, each having pre–post data for analysis, the mean pre-implementation time in notes was 5.11 minutes [CI 95%; 4.75, 5.47]. Postimplementation, the time was 4.16 minutes [CI 95%; 3.86, 4.46] with a mean difference of 0.95 minutes [CI 95%; 0.48, 1.42], p < 0.0001. Figure 6 shows the histogram of the 332 individual physicians’ differences in time in notes from pre-Abridge to post-Abridge implementation. The median change in time for those using Abridge was a decrease of 0.65 minutes.

Figure 6. Histogram of differences in time in notes using 332 physicians, matching each physician's time in notes pre-Abridge to their time in notes post-Abridge. Positive difference means more time in notes pre-Abridge compared to post-Abridge.

Discussion

Principal findings

When given the opportunity, physicians rapidly started using Abridge. For those using Abridge, the overall note content contributed by Abridge approached 30% over a few months of use.

Physicians using Abridge had a statistically significant decrease in time in notes overall. The mean time in notes went from 5.11 to 4.16 minutes per note after use of Abridge, for a mean saving of 0.95 minutes (an 18.6% decrease in time in notes).

Practice implications

The average Abridge content in notes rose to 17.9% for the entire practice (Figure 3) and was close to 30% for the subset of physician Abridge users in the final week of data collection. Figure 4 shows that several physicians had above 60% Abridge content in their notes for at least 1 week of data collection. Of the physicians using Abridge, 39% (130/333) had at least 1 week with 40% or more content attributable to Abridge in their notes. We also found a statistically significant association between physician Abridge content in notes and the number of weeks that a physician used Abridge.

The practice impact of many physicians transitioning from no AI note content to over 40% of note content from an AI source was a dramatic change in the documentation process for these physicians. Shifting documentation processes away from voice recognition and other sources to AI has not been well-studied.13 However, one study of 46 ambient listening users found improvements in documentation burden, mental overload from documentation, and ability to interact face-to-face with patients.18 In the same study, one of the largest changes from pre- to postambient listening implementation was a decrease in positive responses to the following statement: “My documentation burden prevents me from achieving a better work-life balance.”18 In addition, Table 2 showed an absolute drop of 1.8 percentage points in manual input (keyboard). This may seem trivial. However, the 6.4% (11.23 million keystroked characters/176 million characters) pre-Abridge keyboard input compared with the post-Abridge 4.6% (9.0 million keystrokes/196 million characters) keyboard input is worth considering in more detail. There were over 2.2 million fewer characters typed in those 4 weeks by those who used Abridge, even though overall documentation was greater (176 million to 196 million characters, a 20-million-character increase). Typing 2 million fewer characters perhaps contributes to the perceived reduction in documentation burden and burnout and the improved work–life balance seen in other studies.20–23
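The keyboard input arithmetic in the paragraph above can be checked in a few lines. All inputs are the character totals quoted in the text, in millions; the variable names are illustrative.

```python
# Character totals in millions, as quoted in the text for the two 4-week windows.
pre_keyboard, pre_total = 11.23, 176.0
post_keyboard, post_total = 9.0, 196.0

pre_share = 100.0 * pre_keyboard / pre_total     # keyboard share before Abridge
post_share = 100.0 * post_keyboard / post_total  # keyboard share after Abridge
fewer_typed = pre_keyboard - post_keyboard       # millions of characters not typed
more_total = post_total - pre_total              # growth in overall documentation

print(f"keyboard share: {pre_share:.1f}% -> {post_share:.1f}%")
print(f"typed characters: {fewer_typed:.2f} million fewer; "
      f"total characters: {more_total:.0f} million more")
```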

Cost-effectiveness of ambient listening technology has also not been well-studied. Table 2 was notable for showing a relative decrease in labor-intensive transcription for those using Abridge. Perhaps more importantly, Table 3 points to a significant opportunity for decreasing the 7% transcription use of the physicians who have not yet adopted Abridge. Comparison of costs of transcriptionists to ambient listening software should help evaluate the cost-effectiveness of this technology.

Patient implications

We observed that much of the increase in note input coming from Abridge was associated with a decrease in the physician voice input processes of front-end speech recognition and transcription. Service industries often use the term “voice of the customer” when looking for ways to improve quality of services.24,25 In medicine, it is increasingly important to have the “voice of the patient” in the EHR.26–28 Ambient listening tools that can summarize patient concerns at the point of care may be an important way to incorporate the voice of the patient into the medical record.

Time in notes comparison with other studies

Other studies have also examined changes in time in notes associated with ambient intelligence tools. Ma et al.19 noted median time per note was reduced by 0.57 minutes for 45 users of the ambient intelligence tool Nuance DAX. Guo et al.29 in a study of 31 physicians using Abridge found a pre- to post-Abridge decrease of 1.01 minutes time in notes (6.46 minutes baseline, 5.45 minutes at 3 months post-Abridge). Stults et al.30 found a decrease of 0.9 minutes time in notes for 91 Abridge users (6.2 minutes pre-Abridge to 5.3 minutes post-Abridge). Our findings (median reduction of 0.65 minutes; mean reduction of 0.95 minutes) using a larger sample size (332 physicians) are notably similar to the findings of the three studies cited above.

Limitations

Although this retrospective study demonstrated a rapid uptake of Abridge as well as an increase in Abridge-generated note content, it is necessary to acknowledge limitations, one being the lack of randomization of Abridge use. The lack of randomization is particularly apparent when looking at the difference in use of transcription at study initiation, which for nonadopters was 3.7 times that of eventual adopters (6.3% compared to 1.7%). Also, the study's focus on a single institution, using a specific ambient listening tool (Abridge), integrated with a single EHR (Epic), limits the generalizability of the findings. Other institutions may have different implementation processes and EHR systems, potentially influencing results.

The weekly aggregated data collection that we described in Methods limited our ability to examine how individual physicians used Abridge to construct their notes. We could not identify individual notes that were 100% Abridge input, 0% Abridge input, or a hybrid. Our matched pairs analysis was across unique physicians who had weeks pre-Abridge paired with weeks post-Abridge. Because of the aggregated data we could not exclude notes without Abridge input. This resulted in an intention-to-treat-type analysis that included non-Abridge notes due to patient refusal of ambient listening or physicians electing not to use Abridge in some encounters. Because notes without Abridge content could not be excluded, estimates of time savings or changes in documentation process could be diluted.

Future research

Our data shows just the beginning phases of implementation of Abridge ambient listening in a large primary care practice. We do not yet know where the physician-use percentage will level off and what the ceiling will be for the percent note content contributed by Abridge. We also do not know the percentage of physicians who may try Abridge, find it counterproductive, and stop using it.

There are voice input and workflow differences among pediatric, internal medicine, and family medicine practices. Ambient listening in a pediatric visit can involve one or more responsible adults along with the child and healthcare provider. An internal medicine visit may involve the patient with spouse or partner and one or more adult children or other adult healthcare advocates. Future research will be needed to examine how the challenges of multiple individuals in the visit, potentially with different healthcare views, affect the AI-generated documentation output and the choice of documentation input.

The association of increased use of Abridge with increasing exposure suggests that physicians are learning new ways to use Abridge as they get more experience. Future research should identify the variety of ways that physicians have learned to use Abridge in different clinical scenarios and use this to help nonadopters and new physicians understand ways that ambient listening can help them with documentation tasks.

Although our study and others have shown decreased time in notes associated with ambient listening, future research will be needed to examine how that translates into improved workflow, access, or reduced after-hours work. Changes in documentation cognitive load from ambient listening tools also need further examination.

An increase in length of notes following ambient listening has also been reported at the University of California, Irvine.29 However, further study will be needed to determine if the extra content is a value add. The term “note bloat” has been given to the increase in note length over time, even before ambient listening was in use.31,32 Ambient listening quite literally captures the voice of the patient. Future research should examine how ambient listening can capture and summarize patient goals and service needs. If documentation of important patient goals and service needs results in some increase in note length, it may not be “bloat.”

The quality of notes generated with the help of ambient listening needs further attention. The EHR is being considered an input source for identification of patient safety concerns, including error reporting and patients at risk.33 It will be important for physicians using ambient listening to review the AI-generated note text. Current literature is full of terminology that raises concern about the accuracy of AI-generated text.34 Whether termed “AI hallucinations,” “fabrication,” “stochastic parroting,” or “hasty generalization,”34 we will need to examine the increasing amount of AI-generated text being placed into physicians’ notes.

Our data represents only a snapshot in time for a software product that is still in active development. Additional longitudinal studies will be needed as ambient listening tools evolve.

Conclusion

There was rapid and widespread adoption of Abridge among primary care physicians at Mayo Clinic, and its use was associated with a significant reduction in documentation time. Within 8 weeks, Abridge use increased from 15% to 50%. The physicians using Abridge saw their time in documentation decrease by a mean of 0.95 minutes per note, and the amount of their note content attributed to Abridge rose to 28% by study end.

Footnotes

Acknowledgements

The authors thank Michael Wright for help with data collection and Epic metrics, Emily J. North for manuscript review and constructive comments, and Megna Kuverji for project timelines and Abridge implementation details.

Ethics approval

This study was approved by the Mayo Clinic Institutional Review Board (IRB 25-005894). The approval includes a waiver of the requirement to obtain informed consent in accordance with 45 CFR 46.116 as justified by the Investigator, and waiver of HIPAA authorization in accordance with applicable HIPAA regulations.

Contributorships

FN was involved in study conception, data acquisition, and data analysis and statistics; FN, MM, AI, JP, and JE in study design, data interpretation, and final manuscript critical review and revisions; FN, MM, AI, and JP in manuscript draft. All authors agree to the publication of this work.

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.

Declaration of conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Guarantor

Frederick North

Peer review

Review completed with anonymized reviewers chosen without author input.