Abstract

Objectives

Socially assistive robots (SARs) have emerged as promising solutions for elderly care, offering companionship, cognitive stimulation, and therapeutic interventions. However, their evaluation presents unique challenges due to the multidisciplinary nature of these systems and the difficulty of assessing them in real-world situations. This study presents SHARA-WoZ, a multistakeholder framework for evaluating SARs through Wizard of Oz (WoZ) methods.

Methods

The framework implements a modular web-based infrastructure comprising three components (a robot simulation interface, a central server, and a desktop wizard control application) and supports autonomous, semi-autonomous, and full-manual operational modes. Evaluation employed a between-subjects study with healthcare professionals and technical experts (

Results

The framework achieved above-average ratings across all UEQ dimensions. Healthcare professionals gave consistently higher ratings than technical experts, with the largest gaps in Efficiency (

Conclusion

The findings demonstrate that modular, web-based WoZ infrastructures effectively bridge the gap between research prototypes and real-world deployment requirements. The study confirms the critical importance of multistakeholder evaluation approaches, where healthcare professionals provide clinical perspectives while technical experts contribute optimization insights, ensuring both technical excellence and clinical utility in assistive robotics development.

Introduction

The global demographic transition toward an aging population presents unprecedented challenges for healthcare systems worldwide. Projections indicate that the proportion of individuals aged 65 and over will increase from 9.7% in 2022 to 16.4% by 2050, while the number of elderly individuals unable to engage in physical activity is anticipated to surpass 440 million. This demographic shift coincides with shortages in healthcare personnel, where nursing staff dedicated to elderly care represents only 9% of the professional nursing workforce. 1

These challenges have found a potential technological answer in socially assistive robots (SARs), which offer companionship, cognitive stimulation, and therapeutic interventions for older adults. 2 Recent advances have demonstrated the potential of social robots to improve psychosocial well-being, reduce isolation, and support independent living among elderly populations.3,4 However, the transition from prototypes to real-world deployments faces significant obstacles, particularly in the development of effective evaluation methodologies.

The evaluation of social robots for elderly care presents unique challenges that extend beyond traditional usability testing. Current approaches often lack the scalability, accessibility, and methodological rigor necessary for assessment across diverse environments and user populations. 5 Moreover, effective validation requires input from diverse participants (end-users, clinicians, family members, and caregivers) rather than from technical experts alone, which increases coordination challenges. 6 The complexity of measuring social, emotional, and therapeutic outcomes requires sophisticated evaluation frameworks that can accommodate multiple stakeholders while maintaining scientific validity.

Despite growing interest in hybrid evaluation approaches that combine automated assessment with human expert oversight, existing platforms often lack the modular, web-based infrastructures necessary to support large-scale studies. 7 The integration of modern web technologies with established Wizard of Oz (WoZ) methodologies 8 presents an opportunity to address these limitations while enabling more accessible and scalable evaluation processes.

The main contributions of this work are: (a) a modular web-based infrastructure for virtualizing SAR control without requiring specialized software installation; (b) a three-component integrated architecture comprising central server infrastructure, web-based robot simulation interface, and desktop wizard control application; (c) integration with external AI services matching real robot capabilities enabling realistic remote operation; and (d) support for three operational modes (autonomous, semi-autonomous, and full-manual control) that facilitate flexible management in diverse scenarios.

The evaluation of the usability and utility of the proposed framework is based on a between-subjects study with two expert groups (healthcare professionals and technical experts,

The remainder of this paper is organized as follows: the “Related work” section reviews studies on SARs and robot evaluation methodologies for elderly care, including WoZ systems; the “Materials and methods” section details the framework architecture, including the three-component design and communication protocols, and describes the evaluation methodology with its three-phase protocol and dual expert group approach; the “Results” section presents the results of the analysis; the “Discussions” section interprets these results; and the “Conclusions” section concludes with implications and lessons learned for WoZ-based evaluation platforms focused on SAR systems.

Related work

The development and evaluation of SARs represents a rapidly evolving field that intersects multiple research domains including human–robot interaction (HRI), assistive technologies, and simulation-based evaluation methodologies. This section provides a comprehensive review of current approaches focused on elderly care and identifies the key challenges that our proposed system addresses.

Socially assistive robots for elderly care

SARs have emerged as a distinct paradigm in which robots provide assistance through social rather than physical interaction. 2 This approach has gained significant traction in addressing the growing needs of an ageing global population, with applications ranging from medication reminders to social companionship and cognitive stimulation. 10 The diversity of SAR applications in elderly care reflects both the versatility of the technology and the complex, multifaceted nature of ageing-related care needs.

Reviews of SAR implementations have revealed both significant potential benefits and current limitations in elderly care settings. 11 Key factors for successful robot acceptance include personalized interactions, cultural sensitivity, and the gradual introduction of robotic technologies. Successful SAR deployment requires special attention to user comfort, gradual technology introduction, and sensitivity to the cognitive and physical limitations specific to elderly populations. 12

Developing effective social robots requires a deep understanding of user experience principles and human-centered design methodologies. Comprehensive frameworks for user experience in social HRI identify key factors that influence user acceptance and engagement, emphasizing that technical functionality alone is insufficient. 13 Successful social robots must therefore provide positive emotional experiences and maintain user trust over extended interaction periods, and their evaluation requires particular attention to age-related factors that influence technology acceptance and usability. 14 For example, the specialized assessment tool Robot-Era Inventory addresses unique considerations such as cognitive accessibility, social presence perception, and integration with existing routines. 14

The acceptance of healthcare technologies by clinical professionals is a critical factor in successful implementation. Research on ICT-based healthcare systems has demonstrated the utility of integrated theoretical frameworks combining the technology acceptance model (TAM) with task-technology fit (TTF) to understand adoption patterns among healthcare workers. 15 These frameworks emphasize that perceived usefulness and ease of use, when aligned with specific task requirements, significantly influence technology acceptance in clinical settings, principles that directly inform our multistakeholder evaluation approach for the SHARA-WoZ framework.

In general, research has emphasized the importance of cultural and contextual factors in assessing assistive robots. 7 Consequently, evaluation methodologies must account for diverse user backgrounds, care environments, and social contexts to ensure that the assessment results are generalizable across different implementation scenarios.

Evaluation methodologies for social robotics

The evaluation of social robots for elderly care presents unique challenges that extend beyond traditional usability testing. Current approaches often lack the scalability, accessibility, and methodological rigor necessary for assessment across diverse environments and user populations. 5 The complexity of measuring social, emotional, and therapeutic outcomes requires sophisticated evaluation frameworks that can accommodate multiple stakeholders while maintaining scientific validity.

Contemporary social robotics evaluation encompasses diverse methodological approaches that address different aspects of HRI assessment. Mixed-methods evaluation frameworks have emerged as particularly valuable for capturing the multifaceted nature of social robot effectiveness. 7 These approaches combine quantitative measures with qualitative insights, enabling researchers to assess both measurable outcomes and the subjective user experiences that are crucial for understanding robot acceptance in elderly care environments. The integration of structured quantitative assessments with ethnographic observation techniques provides an understanding of how social robots work within complex care contexts.

Building upon these mixed-methods approaches, longitudinal evaluation studies represent another critical methodology for assessing social robots in elderly care settings. 16 Unlike short-term laboratory studies, longitudinal approaches track user acceptance, behavioral changes, and therapeutic outcomes over extended periods, typically spanning weeks to months. This methodology is particularly essential for elderly populations, where initial novelty effects may diminish and true patterns of robot acceptance and therapeutic efficacy emerge over time. Research has demonstrated that longitudinal studies reveal variations in user engagement patterns that are not visible in shorter evaluation periods, highlighting the importance of sustained interaction assessment for social robotics applications. 17

To put these longitudinal and mixed-methods approaches into practice effectively, scenario-based evaluation methodologies provide structured approaches for testing social robots across diverse interaction contexts relevant to elderly care. 5 These methodologies involve designing realistic care scenarios that reflect common challenges and interactions in elderly care environments.

While scenario-based approaches offer controlled testing environments, field studies and naturalistic evaluation approaches have gained prominence as researchers recognize the limitations of laboratory-based assessments. 4 These methodologies involve deploying social robots in real care environments, such as assisted living facilities or private homes, where real interactions can be observed and assessed. Field studies provide invaluable insights into how environmental factors, daily routines, and social dynamics influence robot acceptance and effectiveness. However, these approaches require careful consideration of ethical implications and privacy concerns when conducting research in sensitive care environments. 18

Expanding on the naturalistic evaluation paradigm, participatory evaluation methodologies actively involve elderly users, caregivers, and healthcare professionals in the assessment process, ensuring the evaluation criteria reflect stakeholder needs and preferences. 19 These approaches incorporate user-defined success criteria and culturally relevant assessment frameworks. While participatory evaluation methodologies emphasize stakeholder involvement and real-world contexts, controlled experimental approaches offer complementary insights through standardized testing conditions. These controlled methods allow researchers to systematically evaluate specific aspects of HRI while maintaining experimental rigor and reproducibility across different studies.

Among the controlled experimental methodologies, the WoZ technique 8 represents a fundamental research methodology in human–computer interaction that has been extensively adapted for HRI studies. In WoZ experiments, participants interact with what they believe to be an autonomous robotic system, while in reality, a human operator (the “wizard,” also called

Research has demonstrated that WoZ studies can effectively evaluate social interaction strategies for robots operating under perceptual constraints. 20 Even when robots have limited sensory capabilities, carefully designed WoZ studies reveal successful interaction patterns that can be later incorporated into autonomous systems. This highlights the value of WoZ methodologies not just for evaluation, but for active discovery of effective robot behaviors.

WoZ has established itself as a cornerstone methodology in HRI research for several compelling reasons. First, it enables rapid prototyping and hypothesis testing of robotic behaviors without the substantial time and resource investment required to develop fully autonomous systems. 21 As noted above, carefully designed WoZ studies can uncover successful interaction strategies for robots operating under perceptual constraints, which can later be incorporated into autonomous systems. 20 Second, the methodology provides precise experimental control over interaction variables while maintaining focus on natural human responses, which is particularly valuable when studying complex social, emotional, and therapeutic outcomes that require sophisticated evaluation frameworks. 7 Third, WoZ facilitates human-centered design approaches by enabling researchers to test and refine robot behaviors based on authentic user feedback in realistic settings before committing to full autonomous implementation. 22

Hybrid WoZ systems, a more advanced approach that combines autonomous robot functions with wizard control when needed, represent a significant advancement over traditional methodologies. 21 These systems enable more realistic evaluation of semi-autonomous social robots and facilitate gradual transitions from wizard control to full autonomy. The hybrid approach proves particularly valuable for developing and testing adaptive robot behaviors that must respond to unpredictable social situations.

In summary, the complexity of measuring therapeutic and social outcomes in assistive robotics requires sophisticated evaluation frameworks that extend beyond traditional usability metrics. 5 Factors such as emotional engagement, therapeutic efficacy, and long-term behavioral changes must be systematically assessed using validated instruments and longitudinal study designs. Emerging research emphasizes the importance of wizard training and error measurement in WoZ studies. 23 Systematic approaches to wizard preparation, including standardized training protocols and performance monitoring, are essential for ensuring reliable and replicable results.

From a Health Informatics perspective, the successful integration of AI and robotic systems into clinical workflows requires addressing multifaceted adoption challenges that extend beyond technical functionality. Recent surveys of US health systems have identified immature AI tools, financial constraints, and regulatory uncertainty as primary barriers to AI deployment in healthcare settings, 24 while comprehensive reviews emphasize that AI-powered decision support systems must streamline clinical workflows and enable personalized treatment within robust governance frameworks that address ethical, legal, and regulatory considerations. 25 These challenges are particularly acute for robotics applications, where integration within intensive care units demands careful consideration of safety protocols, patient privacy protections, and responsibility delineation. 26

Contemporary WoZ platforms and tools

Despite significant advances in social robotics and evaluation methodologies, several important gaps remain in current WoZ systems. These often lack standardized, modular infrastructures that would enable non-technical stakeholders to participate effectively in robot development and evaluation processes. 22 Additionally, most existing platforms focus on either fully automated or fully wizard-controlled systems, with limited support for hybrid approaches that may be most effective for complex social robotics applications.

The WoZ4U (https://github.com/frietz58/WoZ4U. Last accessed: 07/11/2025) platform represents a significant advancement in open-source WoZ interface development. 21 This system provides keyboard shortcuts, automated behavior chains, and streamlined wizard operations designed to reduce cognitive load and improve interaction consistency. WoZ4U employs a browser-based front-end with a backend server architecture, specifically implemented for SoftBank’s Pepper robot, though its architecture can be adapted to other robotic platforms supporting ROS. The platform utilizes a YAML configuration file that enables researchers to customize the interface for different experiments without requiring programming expertise. However, WoZ4U primarily supports manual wizard control without built-in autonomous or semi-autonomous operational modes, and does not provide specialized features for multistakeholder evaluation involving both technical and non-technical experts.

Hoffman’s OpenWoZ framework 21 shares similar ambitions to create a runtime-configurable WoZ system. However, the extent of its implemented functionality and continued development remain unclear in the literature, limiting its practical adoption for large-scale HRI studies.

Specialized WoZ implementations have emerged for specific robot platforms and application domains. Thunberg et al. developed a WoZ-based tool for the Furhat robot specifically designed for psychotherapy sessions with older adults suffering from depression in combination with dementia. 27 This system provides a graphical user interface that allows therapists to control the robot’s speech and facial expressions through configurable clickable buttons and free-form text input. Although this platform demonstrates the value of customized WoZ interfaces for particular user populations and therapeutic contexts, it remains domain-specific and does not provide generalized evaluation capabilities across diverse stakeholder groups or operational modes.

Building upon these specialized implementations, commercial platforms have begun incorporating WoZ capabilities into their robotic development ecosystems. The Cognicam Cloud Platform (https://www.cogniteam.com/. Last accessed: 27/07/2025) provides integrated telepresence and monitoring features specifically designed to carry out realistic WoZ experiments. Such platforms facilitate rapid switching between autonomous and controlled modes, enabling more sophisticated hybrid evaluation scenarios. However, these commercial solutions often lack the accessibility and customization that open-source alternatives provide and typically do not incorporate multistakeholder evaluation frameworks necessary for a complete assessment of SARs in healthcare contexts.

Table 1 presents a comparative analysis of contemporary WoZ platforms across key dimensions relevant to SAR evaluation. While existing platforms excel in specific areas (WoZ4U in open-source accessibility, Furhat WoZ in healthcare specificity, and Cognicam in cloud-based deployment), none comprehensively addresses the combination of requirements essential for rigorous SAR evaluation in elderly care: (1) web-based accessibility without specialized software requirements, (2) support for multiple operational modes facilitating gradual transitions from wizard control to autonomy, (3) systematic multistakeholder evaluation frameworks, and (4) integration with modern AI services matching real robot capabilities.

Table 1. Comparative analysis of contemporary WoZ platforms for HRI.

SHARA-WoZ addresses these gaps through its modular three-component architecture (web-based robot simulation, central server, desktop wizard application) supporting three distinct operational modes (autonomous, semi-autonomous, full-manual). Unlike existing platforms, SHARA-WoZ explicitly incorporates multistakeholder evaluation methodology, enabling systematic assessment by both healthcare professionals and technical experts. This approach recognizes that effective SAR development requires complementary perspectives: clinical professionals provide insights into therapeutic appropriateness and workflow integration, while technical experts contribute optimization and implementation expertise. Furthermore, the framework’s integration with external AI services (OpenAI GPT-4o-mini) enables realistic evaluation of conversational capabilities without requiring specialized AI development, while maintaining the flexibility for instant wizard control takeover when needed.

Materials and methods

This section presents the technical implementation and methodological framework for evaluating SAR-based systems proposed in this study. The system architecture (SHARA-WoZ) (https://github.com/GuillermoCuberoCharco/ViSHARA) is described as a three-component modular infrastructure comprising the web-based robot simulation interface, the central server coordinating communications, and the desktop wizard control application. The experimental procedure outlines the three-phase evaluation protocol that systematically assesses different automation levels (autonomous observation, semi-autonomous control, and full manual control) and the dual expert group structure (healthcare professionals and technical experts). The analysis methods encompass quantitative analysis of UEQ responses, analysis of a custom usability questionnaire covering individual system components, and systematic qualitative analysis of post-session interviews to extract actionable improvement suggestions. This methodology enables systematic evaluation of the system from both clinical and technical perspectives, facilitating identification of strengths, limitations, and refinement opportunities for WoZ platform development in socially assistive robotics.
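To make the per-dimension UEQ comparison between expert groups concrete, the following is a minimal sketch of how scale means and between-group gaps could be computed. It assumes each participant's responses are already recoded to the -3 to +3 range with negatively worded items reversed; the item-to-scale mapping in `SCALES` is a hypothetical placeholder, not the official UEQ item assignment, and only two of the six UEQ scales are shown.

```python
from statistics import mean

# Hypothetical item-to-scale mapping (column indices into each response row).
# The official UEQ assigns its 26 items to six scales; these indices are placeholders.
SCALES = {
    "Attractiveness": [0, 1],
    "Efficiency": [2, 3],
}


def scale_means(responses: list[list[int]]) -> dict[str, float]:
    """Average each participant's items per scale, then average across participants.

    Assumes every value is already recoded to -3..+3 with reversed items corrected.
    """
    result = {}
    for scale, items in SCALES.items():
        per_participant = [mean(row[i] for i in items) for row in responses]
        result[scale] = mean(per_participant)
    return result


def group_gaps(group_a: list[list[int]], group_b: list[list[int]]) -> dict[str, float]:
    """Difference of scale means (group_a minus group_b) for every scale."""
    a, b = scale_means(group_a), scale_means(group_b)
    return {scale: a[scale] - b[scale] for scale in SCALES}
```

Averaging per participant first keeps each participant's weight equal within a scale, which matches the usual practice of reporting scale means rather than pooled item means.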

Framework architecture

The SHARA-WoZ framework implements a hybrid WoZ architecture that enables experts with diverse professional backgrounds to evaluate the performance of SARs by supervising and controlling robot interactions with remote users. This service-oriented architecture comprises three main components: (1) a web-based robot simulation interface, (2) a central server infrastructure, and (3) a desktop wizard control application, as shown in Figure 1.

Representation of the system architecture. It incorporates two-way communication between the three components.

The framework operates through a coordinated message flow between components. When a participant interacts with the web interface, synchronization messages are sent to the central server. The server processes these messages according to the current operational mode (autonomous, semi-autonomous, or full-manual), routing them appropriately between the participant interface and the wizard control application.
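The mode-dependent routing described above can be illustrated with a minimal sketch. The class and method names (`Router`, `on_participant_message`, `on_wizard_response`) are hypothetical, the plain lists stand in for the real-time channels to the participant interface and the wizard application, and the per-mode behavior is inferred from the mode descriptions in this section rather than taken from the SHARA-WoZ code.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import Optional


class Mode(Enum):
    AUTONOMOUS = "autonomous"
    SEMI_AUTONOMOUS = "semi_autonomous"
    FULL_MANUAL = "full_manual"


@dataclass
class Router:
    """Hypothetical mode-aware router; the lists stand in for real socket channels."""
    mode: Mode = Mode.AUTONOMOUS
    to_participant: list = field(default_factory=list)
    to_wizard: list = field(default_factory=list)
    pending_approval: list = field(default_factory=list)

    def on_participant_message(self, text: str, ai_reply: Optional[str]) -> None:
        # The wizard always receives the participant's message for monitoring.
        self.to_wizard.append({"type": "user_message", "text": text})
        if self.mode is Mode.AUTONOMOUS:
            # The AI reply goes straight to the participant; the wizard gets a copy.
            self.to_participant.append(ai_reply)
            self.to_wizard.append({"type": "robot_message", "text": ai_reply})
        elif self.mode is Mode.SEMI_AUTONOMOUS:
            # The AI reply is held until the wizard approves or edits it.
            self.pending_approval.append(ai_reply)
            self.to_wizard.append({"type": "approval_request", "text": ai_reply})
        elif ai_reply is not None:
            # Full manual: the AI reply is only offered to the wizard as a suggestion.
            self.to_wizard.append({"type": "suggestion", "text": ai_reply})

    def on_wizard_response(self, text: str) -> None:
        # An approved, edited, or hand-written reply is delivered to the participant.
        self.pending_approval.clear()
        self.to_participant.append(text)
```

Keeping the mode check inside a single routing function means switching modes only changes one field, which fits the single-button mode switch in the wizard application.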

Web-based robot simulation interface

The web interface presents a 3D simulation of a particular SAR, in this case the SHARA robot 28 (Figure 2). This is the application used by the end-user.

Web interface with 3D simulation of SHARA robot.

SHARA is a specialized SAR designed to address the companionship and cognitive stimulation needs of elderly populations through conversational interaction. This robot system embodies the principles of social robotics for eldercare by providing empathetic, personalized communication designed to reduce social isolation and support emotional well-being among older adults. The physical version of SHARA, which interacts through verbal conversation, has already been evaluated for these purposes. 28–30

The Robot Simulation Interface renders the virtual version of SHARA robot in standard web browsers without requiring specialized software installation. The interface includes a chat component that displays the conversation history and the current system status, as shown in Figure 3. End-users can hide this chat component when they prefer a more natural interaction experience.

Chat component with conversation history and system state for user feedback.

The virtual version of SHARA replicates the physical robot’s facial animations and also extends its capabilities. It implements additional nonverbal communication features, including head movements and arm gestures, which provide visual feedback during conversations and make it a more “animated” version of SHARA. These movements are synchronized with the conversational flow to enhance social presence and support natural interaction patterns. The 3D simulation enables consistent delivery of these nonverbal behaviors across evaluation sessions, which would be more difficult to standardize with physical robot deployments.

The interface maintains real-time communication with the central server, enabling immediate message delivery and preserving natural conversation flow. The simulation interface integrates camera and voice input capabilities, supporting the natural interaction functionalities of the SHARA robot that align with older adults’ primary reliance on verbal communication for technology interaction. 31

Central server infrastructure

The server component works as the communication hub for the entire system. It manages communication coordination, artificial intelligence integration, state management, and data persistence across evaluation sessions. The server routes messages bidirectionally between the participant interface and wizard control system while maintaining conversation context and applying appropriate filtering based on current operational mode.

The server integrates with external artificial intelligence (AI) services through OpenAI’s API (See https://openai.com/api/. Last accessed: 27/07/2025), using the same configuration and prompts as the physical SHARA robot for building conversations. The system prompt (Figure 4) configures the AI to respond as a SAR for elderly care, maintaining appropriate tone, empathy, and therapeutic communication patterns. When end-users talk to the robot, the server:

Prompt structure elements used as input for the conversational AI system. The four core components include user input message, temporal context (timestamp), user identification (username), and proactive conversation starters (proactive_question) with corresponding examples for each element.

Response processing framework illustrating Shara’s personality core traits, conversation memory integration system, and proactive question logic. The personality core defines key characteristics for elderly care interactions, while the memory system enables personalized responses based on previous conversations and health-related context.

Output generation architecture showing the three main components of Shara’s response system: conversation state management (continue), emotional expression selection (robot_mood), and natural language response generation (response).
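The input and output elements named in the captions above (`message`, `timestamp`, `username`, `proactive_question` in; `continue`, `robot_mood`, `response` out) suggest a structured JSON exchange with the language model. The following is a minimal sketch under that assumption: the field names come from the figures, but the JSON layout, the field types (for example, `continue` as a boolean), and the helper names are assumptions for illustration only.

```python
import json
from datetime import datetime, timezone
from typing import Optional


def build_prompt_input(message: str, username: str,
                       proactive_question: Optional[str] = None,
                       now: Optional[datetime] = None) -> str:
    """Assemble the four input elements; the JSON layout is an assumption."""
    now = now or datetime.now(timezone.utc)
    return json.dumps({
        "message": message,
        "timestamp": now.isoformat(),
        "username": username,
        "proactive_question": proactive_question,
    })


# Assumed types for the three output elements.
REQUIRED_OUTPUT_FIELDS = {"continue": bool, "robot_mood": str, "response": str}


def parse_model_output(raw: str) -> dict:
    """Validate the model's JSON reply; raises ValueError if it is malformed."""
    data = json.loads(raw)  # json.JSONDecodeError is a subclass of ValueError
    if not isinstance(data, dict):
        raise ValueError("model output must be a JSON object")
    for name, expected_type in REQUIRED_OUTPUT_FIELDS.items():
        if not isinstance(data.get(name), expected_type):
            raise ValueError(f"missing or invalid field: {name}")
    return data
```

Validating the reply before it reaches the avatar lets the server fall back to wizard control when the model returns malformed output, instead of passing a broken state to the simulation.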

The server uses the Google Cloud Speech-to-Text service (See https://cloud.google.com/speech-to-text?hl=es-419. Last accessed: 27/07/2025) for automatic transcription of user audio, with an adaptive configuration that handles multiple input formats. This approach aims to address the difficulties that local dialects, prevalent among elderly populations, create for speech recognition systems based on standardized models. 32

For the robot’s voice synthesis, the server uses Google Cloud Text-to-Speech (See https://cloud.google.com/text-to-speech?hl=es_419. Last accessed: 27/07/2025) with a configuration specifically optimized for elderly user interactions. The system employs the

The server incorporates a facial recognition system using the

It also implements a conversation management service that maintains individualized conversation histories for each user, facilitating personalized interactions. Each user can maintain multiple conversations, with limits of 1000 messages per conversation and 50 conversations per user to optimize performance. The conversation management system includes persistent context storage with structured messages and temporal metadata, which is automatically managed by the OpenAI service infrastructure to maintain continuity and contextual awareness across interaction sessions. The service maintains conversation logs including message content, timestamps, user roles (assistant, wizard, user), and metadata such as emotional states and system events.
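A minimal in-memory sketch of such a per-user conversation store is shown below, using the stated limits (1000 messages per conversation, 50 conversations per user) and the three roles. The text does not say what happens when a limit is reached, so the eviction policy here (drop the oldest message, drop the oldest-created conversation) is an assumption, as are the class and method names; persistence and the OpenAI-managed context are not modeled.

```python
from collections import OrderedDict, deque
from dataclasses import dataclass, field
from datetime import datetime, timezone

MAX_MESSAGES_PER_CONVERSATION = 1000
MAX_CONVERSATIONS_PER_USER = 50
ROLES = {"assistant", "wizard", "user"}


@dataclass
class Message:
    role: str
    content: str
    timestamp: datetime
    metadata: dict = field(default_factory=dict)  # e.g. emotional state, system events


class ConversationStore:
    """Hypothetical store: user_id -> OrderedDict[conversation_id -> deque[Message]]."""

    def __init__(self) -> None:
        self._users: dict = {}

    def add_message(self, user_id: str, conversation_id: str,
                    role: str, content: str, **metadata) -> None:
        if role not in ROLES:
            raise ValueError(f"unknown role: {role}")
        convs = self._users.setdefault(user_id, OrderedDict())
        if conversation_id not in convs:
            if len(convs) >= MAX_CONVERSATIONS_PER_USER:
                convs.popitem(last=False)  # assumption: evict the oldest-created conversation
            convs[conversation_id] = deque(maxlen=MAX_MESSAGES_PER_CONVERSATION)
        # Assumption: a full deque silently drops its oldest message on append.
        convs[conversation_id].append(
            Message(role, content, datetime.now(timezone.utc), metadata))

    def history(self, user_id: str, conversation_id: str) -> list:
        return list(self._users.get(user_id, {}).get(conversation_id, []))
```

A bounded `deque` keeps the per-conversation cap O(1) on every append, which is one plausible way to meet the performance goal the limits are meant to serve.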

The server also manages video streaming capabilities, processing real-time video feeds from the participant environment and distributing them to the wizard operator. This real-time video visualization of the end-user camera simulates what the robot is seeing.

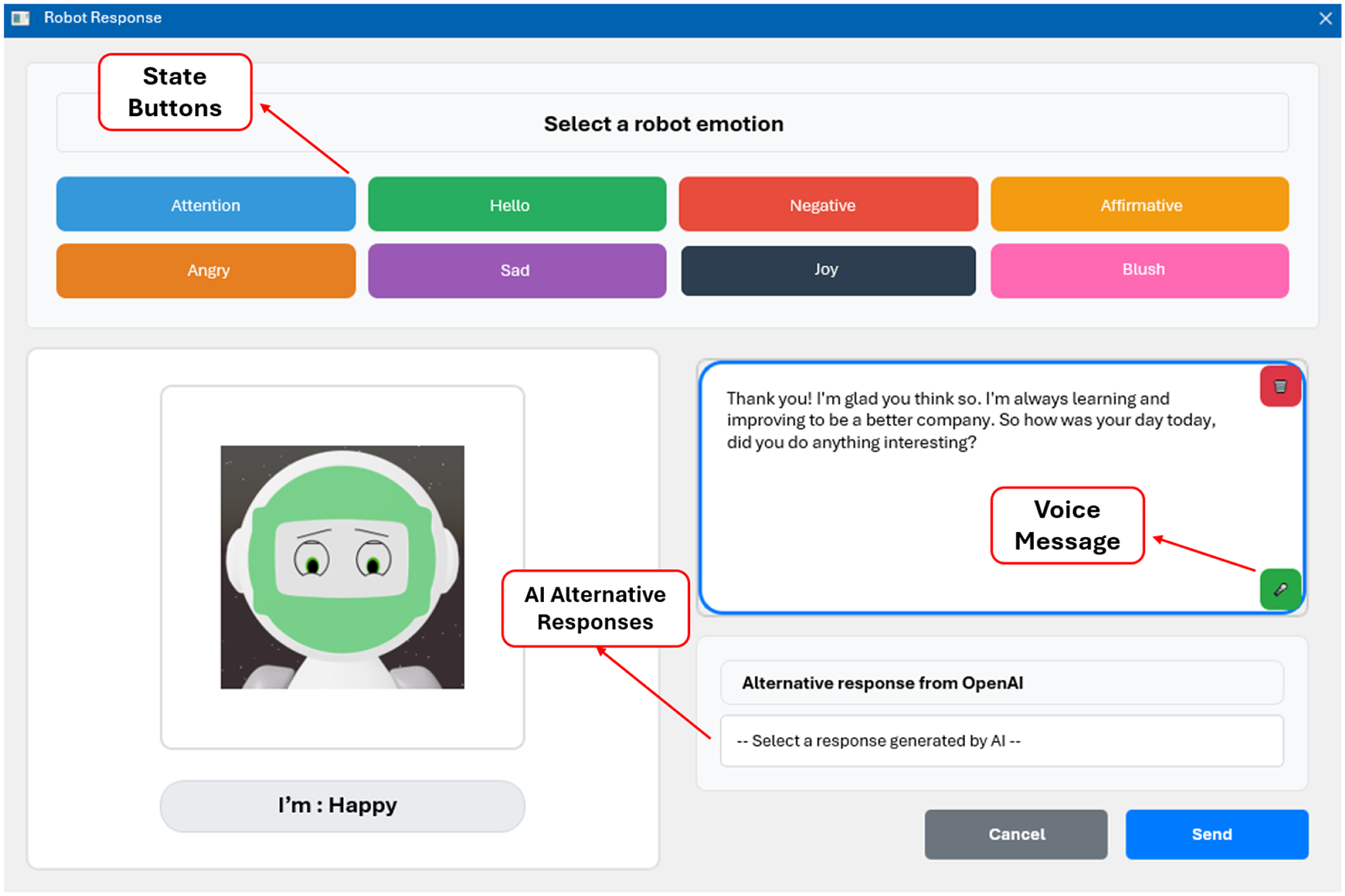

Desktop wizard control application

The wizard application provides operators with control and monitoring capabilities for managing robot interactions. This desktop application implements a multipanel interface that accommodates simultaneous monitoring of conversation context, visual environments, system status, and control options (Figure 7). The operator can switch between autonomous, semi-autonomous and full manual modes using a button.

In autonomous mode, the system enables evaluation of the robot simulation’s independent behavior without operator intervention. In semi-autonomous mode, the operator retains validation control over each response generated by the system, allowing real-time oversight and intervention when necessary. In full manual mode, the operator controls all aspects of the interaction, editing responses and simulation states at every level, and can even send robot messages without any input from the end-user.

Operator interface shows the complete interaction environment with four main components: chat interface displaying real-time conversation between user and operator/AI system (left panel), user video feedback stream (upper right), 3D avatar web interface with animated character representation (lower right), and emotion control buttons for manual mood selection.

The conversation management panel displays the real-time conversation and enables response composition and editing. The video monitoring panel presents live feeds from the end-user environment with connection status indicators and automatic reconnection capabilities. This panel supports operator decision-making, which is particularly important in eldercare applications where social cues and environmental awareness affect the appropriateness of an interaction.
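The text does not specify how automatic reconnection of the video feed is implemented. One common technique is capped exponential backoff, sketched below under that assumption: `connect` is a hypothetical coroutine that raises `ConnectionError` on failure, `on_status` is a hypothetical callback that updates the connection status indicator, and the delay parameters are illustrative defaults.

```python
import asyncio


def backoff_delays(base: float = 0.5, factor: float = 2.0,
                   cap: float = 8.0, attempts: int = 6) -> list:
    """Capped exponential backoff schedule; all parameter values are assumptions."""
    return [min(cap, base * factor ** i) for i in range(attempts)]


async def reconnect(connect, on_status, delays=None, sleep=asyncio.sleep) -> bool:
    """Retry `connect` using the backoff schedule, reporting status after each try."""
    for delay in (delays if delays is not None else backoff_delays()):
        try:
            await connect()
            on_status("connected")
            return True
        except ConnectionError:
            on_status(f"reconnecting in {delay:.1f}s")
            await sleep(delay)
    on_status("disconnected")
    return False
```

Capping the delay keeps the wizard's view from staying blank for long once the participant's connection recovers, while the growing interval avoids flooding the server during an outage.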

When operating in semi-autonomous or full-manual modes, operators can directly edit any generated response through an integrated text editor that supports real-time modification and formatting. This panel, shown in Figure 8, provides access to AI-generated response options that operators can select, modify, or replace. The system generates multiple contextual alternatives through the integrated OpenAI service, which processes the conversation history and participant context to produce appropriate suggestions alongside emotional state variants. These alternative responses are generated in parallel to maintain system responsiveness, allowing operators to choose the most contextually appropriate option for each interaction scenario.
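Generating alternatives in parallel can be sketched with `asyncio.gather`, which issues all requests concurrently so that the total wait is roughly that of the slowest single request. In this sketch, `generate_alternative` is a hypothetical stand-in that simulates latency instead of calling the OpenAI API, and the mood labels in `MOODS` are illustrative placeholders, not SHARA's actual emotional states.

```python
import asyncio

MOODS = ("neutral", "happy", "sad")  # hypothetical emotional-state variants


async def generate_alternative(history: list, mood: str) -> dict:
    """Hypothetical stand-in for one model request; replace with a real API call."""
    await asyncio.sleep(0.1)  # simulated network latency
    return {"robot_mood": mood, "response": f"[{mood}] reply to: {history[-1]}"}


async def generate_alternatives(history: list, moods=MOODS) -> list:
    # All requests run concurrently; gather returns results in the order of `moods`.
    return await asyncio.gather(*(generate_alternative(history, m) for m in moods))
```

Because `gather` preserves argument order, the wizard interface can display the options in a stable order regardless of which request finishes first.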

Response evaluation window, in which the operator can manipulate the state and response of the robot.

Response editing capabilities extend beyond text modification to include audio input functionality. Operators can record voice responses directly through the interface using integrated microphone access, with recorded audio automatically transcribed through Google Speech-to-Text service. Voice recordings are processed in real-time, enabling operators to provide natural spoken responses that are converted to text and delivered to participants seamlessly.

Each operational mode provides operators with distinct capabilities designed to support different evaluation and interaction scenarios. In autonomous mode, operators are passive monitors with logging and analysis tools. In semi-autonomous mode, operators serve as supervisory editors with access to AI suggestions, alternative response options, and editing capabilities that support rapid intervention when clinical judgment requires response modification. In full manual mode, operators can assume complete creative and therapeutic control, utilizing the full range of interface tools including editing features, emotional state management, and response customization options.
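One way to picture the three modes is as a rule that decides which text the central server forwards to the robot simulation. The sketch below is hypothetical and not taken from the SHARA-WoZ code base: `Mode`, `route_response`, and its parameters are illustrative names. It also assumes that an operator edit in semi-autonomous mode counts as validation.

```python
from enum import Enum
from typing import Optional


class Mode(Enum):
    AUTONOMOUS = "autonomous"
    SEMI_AUTONOMOUS = "semi-autonomous"
    FULL_MANUAL = "full-manual"


def route_response(
    mode: Mode,
    ai_response: Optional[str],
    operator_approved: bool = False,
    operator_text: Optional[str] = None,
) -> Optional[str]:
    """Return the text to send to the robot simulation, or None to hold it back."""
    if mode is Mode.AUTONOMOUS:
        # The operator only monitors: the AI response is delivered directly.
        return ai_response
    if mode is Mode.SEMI_AUTONOMOUS:
        # An operator edit replaces the AI response; otherwise explicit approval is required.
        if operator_text is not None:
            return operator_text
        return ai_response if operator_approved else None
    # FULL_MANUAL: only operator-authored text is sent, possibly without any user input.
    return operator_text


print(route_response(Mode.SEMI_AUTONOMOUS, "Good morning!"))  # None (awaiting validation)
```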

Experimental procedure

As mentioned above, the evaluation of WoZ-based interfaces for social robotics presents challenges that extend beyond traditional usability tests. 8 Previous research has shown the effectiveness of WoZ methodologies in developing and improving HRI; 21 this study addresses these evaluation challenges by proposing a framework specifically designed to assess the usability and utility of the SHARA-WoZ interface from both healthcare and technical perspectives. The following subsections describe the experimental procedure, distinguishing between participant selection and the evaluation protocol.

Participants criteria

The evaluation employs two distinct expert groups, each providing specialized perspectives on the system’s usability and utility.

Recruiting both healthcare and technical experts is essential because each group evaluates different aspects critical to system success: healthcare experts evaluate clinical utility and workflow integration, while technical experts evaluate technical implementation and usability design principles.

Before participation, all experts (

The sample size of 10 participants per expert group (

Healthcare professionals were recruited through collaboration with elderly care facilities and healthcare institutions in Spain. Recruitment employed purposive sampling, targeting professionals with direct elderly care responsibilities. Initial contact was established through institutional coordination with nursing departments, psychology services, and geriatric care units. In addition, technical experts were recruited from the human–computer interaction and robotics research communities at the University of Castilla-La Mancha and collaborating institutions through direct email invitations and professional networks. All potential participants received detailed information sheets describing the study objectives, time commitment, and the voluntary nature of participation. No financial compensation was provided; participation was voluntary and based on professional interest in assistive robotics evaluation. From the initial recruitment pool, 22 individuals expressed interest, of which 20 met the inclusion criteria and completed the full evaluation protocol (10 healthcare professionals, 10 technical experts).

For the healthcare expert group, the inclusion criteria required: (1) professional experience in elderly care (minimum 2 years), (2) a background as a nurse, psychologist, occupational therapist or geriatric specialist, and (3) familiarity with technological interventions in healthcare settings. For the technical expert group, the inclusion criteria required: (1) expertise in human–computer interaction, robotics, or interface design, (2) experience with usability evaluation methodologies, and (3) understanding of assistive technology systems. The exclusion criteria for both groups were: (1) prior involvement in the development of the SHARA project, (2) lack of proficiency in Spanish (the evaluation language), and (3) inability to complete the full evaluation session. All participants provided their informed consent and met the ethical requirements established by the institutional review board.

Table 2 presents the professional characteristics of both expert groups.

Professional characteristics of study participants.

This study represents a usability evaluation of a WoZ framework rather than a traditional WoZ experiment investigating HRI. Participants were evaluators assessing the interface’s capacity to support wizard operations, not wizards operating within an actual HRI study. Consequently, variability in how participants used interface features was an expected outcome reflecting diverse user preferences, which is precisely the insight this multistakeholder evaluation aimed to capture. The UEQ instrument, custom component questionnaires, and structured interviews provided systematic usability measures for both expert groups.

Protocol of the evaluation

Each evaluation session was conducted individually in a controlled laboratory environment. The physical setup consisted of two separate rooms connected via network infrastructure: (1) the participant room, where the wizard operator (study participant) used the desktop wizard control application on a workstation and (2) the simulation room, where an actor portraying an elderly user interacted with the web-based robot simulation interface displayed on a laptop with integrated webcam and microphone.

To ensure methodological rigor, all participants followed a standardized protocol controlling for variability across evaluation sessions. Each 45-minute session included a 5-minute training period providing: (1) a live demonstration of the three-component architecture, (2) guided exploration of the wizard interface features (chat panel, AI alternatives, voice recording, emotional controls, video monitoring), (3) explicit instructions for each evaluation phase, and (4) an opportunity for clarification questions. The interface follows a simple, intuitive design that can be operated with only basic computer knowledge.

An actor portraying an elderly care recipient followed a standardized interaction script across all sessions, maintaining consistent greeting sequences, conversational topics (health concerns, daily activities, social interaction), timing, and emotional tone. This eliminated end-user behavior variability as a confounding factor.

The evaluation follows a structured three-phase protocol designed to systematically assess the different operational modes:

Each evaluation session lasted approximately 45 minutes, including initial briefing and consent procedures (5 minutes), system demonstration and training (5 minutes), the three-phase evaluation sequence (15 minutes), questionnaire completion (5 minutes), and the post-session interview (15 minutes).
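The durations listed above add up to the stated 45 minutes. The small sketch below derives each block's start and end offset, which could serve as a facilitator's timing sheet. The activity labels are paraphrased from the text, and the `timeline` helper is an illustrative name.

```python
from itertools import accumulate

# (activity, duration in minutes), in session order, as listed in the protocol.
SCHEDULE = [
    ("Briefing and consent", 5),
    ("System demonstration and training", 5),
    ("Three-phase evaluation", 15),
    ("Questionnaire completion", 5),
    ("Post-session interview", 15),
]


def timeline(schedule):
    """Return (activity, start_minute, end_minute) tuples for a session schedule."""
    ends = list(accumulate(duration for _, duration in schedule))
    starts = [0] + ends[:-1]
    return [(name, start, end) for (name, _), start, end in zip(schedule, starts, ends)]


for name, start, end in timeline(SCHEDULE):
    print(f"{start:>2}-{end:>2} min  {name}")
```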

The 45-minute single-session evaluation protocol was designed following established practices in WoZ interface usability studies, 34 where initial usability issues and user experience impressions can be reliably captured within focused interaction periods. This approach aligns with Nielsen’s research on usability testing, which demonstrates that the majority of usability problems are identifiable within first-use sessions. 35 The three-phase evaluation protocol (autonomous observation, semi-autonomous control, and full manual control) provided systematic exposure to all operational modes, allowing participants to form impressions of system capabilities within a constrained timeframe.

Following the three experimental phases, participants complete the UEQ, 33 which serves as the primary quantitative evaluation instrument. The UEQ provides reliable measurement across six key dimensions of user experience: attractiveness (overall impression and general acceptance), perspicuity (ease of understanding and clarity), efficiency (task completion speed and interaction smoothness), dependability (system reliability and predictable behavior), stimulation (user engagement and motivation), and novelty (interface innovation and creative interaction design). Additionally, the UEQ enables calculation of an overall system score that provides an assessment of the general user experience quality.

Furthermore, participants take part in a post-session interview that is recorded and transcribed to capture qualitative insights about their experience with the interface, usability issues encountered, and suggestions for improvement.

For the evaluation objectives presented here (assessing interface usability, identifying design flaws, and gathering expert feedback on system components), a single structured session provides sufficient data. Initial system acceptance and usability barriers are typically most pronounced during first encounters. In addition, the participating experts have the professional analytical skills needed to provide informed evaluations based on limited exposure. This work acknowledges that longitudinal effects (learning curves, changes in satisfaction over repeated use, and long-term reliability perceptions) cannot be captured in single-session designs. However, such longitudinal evaluations represent a distinct research phase, appropriate once the usability refinements identified through our methodology have been implemented.

Analysis methods

The analysis employs a descriptive statistical approach with between-group comparisons, following established protocols for user experience evaluation in HRI research.33,36 This approach is particularly suited to WoZ interface evaluation because it enables systematic examination of user experience differences between expert groups while keeping results interpretable for both technical and clinical stakeholders. 7 The analysis also allows direct comparison with established UEQ benchmarks and provides clear, actionable insights for interface refinement, which is essential for the iterative development of assistive robotics platforms. 4

User experience questionnaire analysis

The UEQ is a validated, standardized instrument for measuring the user experience of interactive products. 9 The UEQ has demonstrated reliable psychometric properties across multiple studies, with Cronbach’s alpha values indicating acceptable to good internal consistency for all scales. 37 The instrument has been extensively validated in various contexts and languages, including its application to evaluate HRI systems and assistive technologies. 33 The 26-item questionnaire employs a 7-point semantic differential scale and measures six dimensions: Attractiveness, Perspicuity, Efficiency, Dependability, Stimulation, and Novelty.

UEQ responses will be processed following the standardized calculation protocol established by Schrepp, 37 adapted to the 5-point Likert scale used in this study for all 26 items. Raw item scores are first transformed to the standard UEQ scale ranging from

Each UEQ dimension score is computed as the arithmetic mean of its constituent items:
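With notation introduced here for illustration ($I_d$ is the set of items belonging to dimension $d$, $x_{p,i}$ is participant $p$'s transformed score on item $i$, and $G$ is an expert group), the per-participant dimension score, its group mean, and the group standard deviation can be written as follows. The use of the sample ($|G|-1$) standard deviation is an assumption.

```latex
D_{p,d} = \frac{1}{|I_d|} \sum_{i \in I_d} x_{p,i}, \qquad
\bar{D}_{G,d} = \frac{1}{|G|} \sum_{p \in G} D_{p,d}, \qquad
s_{G,d} = \sqrt{\frac{1}{|G|-1} \sum_{p \in G} \left( D_{p,d} - \bar{D}_{G,d} \right)^{2}}
```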

The six UEQ dimensions evaluate complementary aspects of user experience in interactive systems. Attractiveness measures the overall impression and aesthetic appeal of the system, reflecting whether users perceive the product as pleasant and desirable. Perspicuity assesses the clarity and ease of understanding of the interface, determining whether users can navigate and comprehend the system without excessive cognitive effort. Efficiency focuses on the speed and effectiveness with which users can complete their tasks, measuring whether the system facilitates smooth and productive workflow. Dependability examines the sense of control and predictability that users experience, evaluating whether the system responds consistently and reliably to user actions. Stimulation measures the level of motivation and engagement that the system generates, determining whether the interaction feels exciting and interesting to the user. Finally, Novelty evaluates the innovation and creativity of the design, measuring whether the system presents original and creative features that capture user attention. 9
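The two processing steps described in this subsection (rescaling each raw answer, then averaging the items of each dimension) can be sketched as follows. This is an illustrative sketch only. The target range is passed as a parameter because it is not stated here. The usage example assumes a linear mapping of 1 to 5 answers onto the standard UEQ range of -3 to +3. The item-to-dimension mapping is a toy example rather than the official UEQ assignment, and items with reversed polarity are not handled.

```python
from statistics import mean


def rescale(raw: int, src_min: int, src_max: int, dst_min: float, dst_max: float) -> float:
    """Linearly map a raw answer from [src_min, src_max] onto [dst_min, dst_max]."""
    if not src_min <= raw <= src_max:
        raise ValueError(f"answer {raw} outside [{src_min}, {src_max}]")
    fraction = (raw - src_min) / (src_max - src_min)
    return dst_min + fraction * (dst_max - dst_min)


def dimension_scores(
    answers: dict[int, int],
    mapping: dict[str, list[int]],
    src_range: tuple[int, int],
    dst_range: tuple[float, float],
) -> dict[str, float]:
    """Mean transformed score per dimension for one participant.

    `answers` maps item number -> raw answer; `mapping` maps dimension -> item numbers.
    """
    return {
        dimension: mean(rescale(answers[item], *src_range, *dst_range) for item in items)
        for dimension, items in mapping.items()
    }


# Toy example: hypothetical answers and a hypothetical two-dimension mapping.
answers = {1: 5, 2: 4, 3: 3}
mapping = {"dimension_a": [1, 2], "dimension_b": [3]}
print(dimension_scores(answers, mapping, src_range=(1, 5), dst_range=(-3.0, 3.0)))
# {'dimension_a': 2.25, 'dimension_b': 0.0}
```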

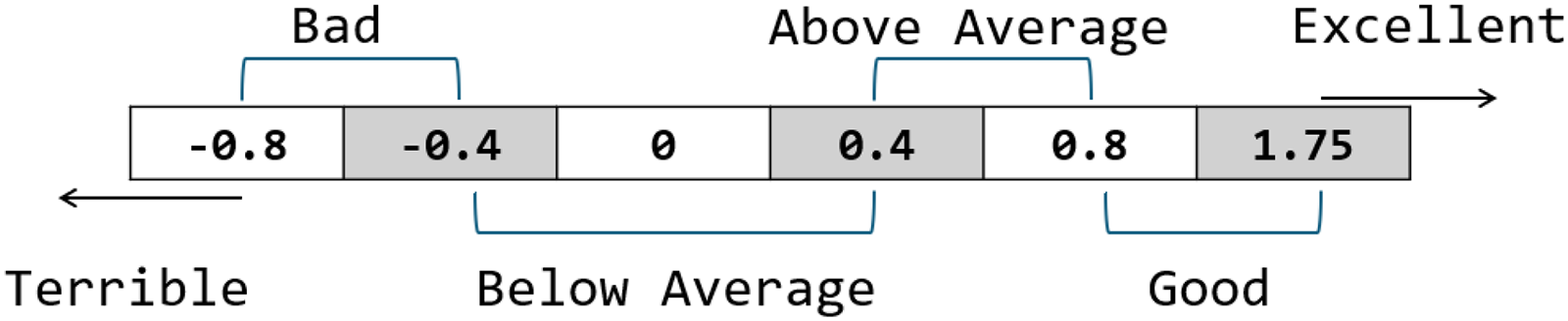

For each dimension and expert group, Mean(

Results will be interpreted using established UEQ benchmarks, 37 where dimension scores are classified as shown in Figure 9. UEQ results will be presented separately for healthcare experts, technical experts, and the combined sample using charts to visualize the six-dimensional user experience profile.

UEQ Benchmark Comparison, from “Terrible” to “Excellent” evaluation.
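Placing a dimension mean into the benchmark categories of Figure 9 amounts to comparing it against ordered lower bounds. In the sketch below, the cut-off values are hypothetical placeholders, not the published UEQ benchmark thresholds. The published thresholds differ per dimension and should be taken from the UEQ benchmark documentation. The category labels are likewise illustrative.

```python
def classify(score: float, cutoffs: list[tuple[float, str]], lowest_label: str) -> str:
    """Return the label of the best category whose lower bound the score reaches.

    `cutoffs` lists (lower_bound, label) pairs from the best to the worst category.
    """
    if any(upper < lower for (upper, _a), (lower, _b) in zip(cutoffs, cutoffs[1:])):
        raise ValueError("cutoffs must be sorted from highest to lowest lower bound")
    for lower_bound, label in cutoffs:
        if score >= lower_bound:
            return label
    return lowest_label


# Hypothetical placeholder cut-offs for ONE dimension (not the published values).
EXAMPLE_CUTOFFS = [
    (1.8, "Excellent"),
    (1.5, "Good"),
    (1.0, "Above Average"),
    (0.7, "Below Average"),
]

print(classify(1.2, EXAMPLE_CUTOFFS, lowest_label="Terrible"))  # Above Average
```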

Specific system component analysis

To evaluate individual system components, a custom assessment instrument was developed specifically for this study. This ad-hoc questionnaire measures participants’ perceptions of individual system components using a 5-point Likert scale (1

The system components evaluated include:

By analyzing utility and ease-of-use separately, we can identify components that may be highly useful but difficult to use, or conversely, easy to use but of limited value. This granular analysis enables targeted improvements to specific aspects of component design and functionality.
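Separating the two ratings makes it possible to sort components into four groups. The sketch below is a hypothetical helper, not part of the study's analysis. It assumes per-component mean ratings on the 1 to 5 scale and uses the scale midpoint (3.0) as an illustrative cut-off. The component name in the usage line is a placeholder.

```python
from dataclasses import dataclass


@dataclass
class ComponentRating:
    name: str
    utility: float       # mean utility rating on the 1-5 scale
    ease_of_use: float   # mean ease-of-use rating on the 1-5 scale


def quadrant(rating: ComponentRating, cutoff: float = 3.0) -> str:
    """Place a component in one of four utility/ease-of-use quadrants.

    The default cut-off (midpoint of a 1-5 scale) is an illustrative assumption.
    """
    useful = rating.utility > cutoff
    easy = rating.ease_of_use > cutoff
    if useful and easy:
        return "keep"
    if useful:
        return "useful but hard to use: improve usability"
    if easy:
        return "easy but of limited value: reconsider"
    return "low utility and hard to use: redesign or drop"


print(quadrant(ComponentRating("component_x", utility=4.4, ease_of_use=2.8)))
# useful but hard to use: improve usability
```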

Descriptive statistics will be calculated separately for healthcare experts (

Qualitative post-interview analysis

The interview transcripts will undergo systematic analysis using an adaptation of the Framework Method, 38 focused on extracting improvement suggestions. The analysis will proceed through the following stages:

Identified comments and improvements will be systematically categorized, based on themes emerging from the data, into the following broad categories:

Results will be presented using pie charts showing suggestion frequency, together with a descriptive analysis highlighting suggestions unique to each expert group versus shared suggestions. This approach enables identification of priority improvement areas based on user consensus while maintaining visibility of group-specific needs and preferences.
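The frequency charts and the unique-versus-shared comparison reduce to counting coded suggestions per category. The minimal sketch below assumes that each suggestion has already been assigned exactly one category label during the Framework Method coding. The lists in the usage example are toy data with placeholder category names, not the study's results.

```python
from collections import Counter


def category_shares(codes: list[str]) -> dict[str, float]:
    """Percentage of coded suggestions per category (the values a pie chart shows)."""
    counts = Counter(codes)
    total = sum(counts.values())
    if total == 0:
        return {}
    return {category: 100 * n / total for category, n in counts.most_common()}


def unique_and_shared(group_a: list[str], group_b: list[str]) -> dict[str, set[str]]:
    """Categories raised only by group A, only by group B, or by both."""
    a, b = set(group_a), set(group_b)
    return {"only_a": a - b, "only_b": b - a, "shared": a & b}


# Toy data: one category label per coded suggestion.
group_1 = ["category_1", "category_2", "category_3"]
group_2 = ["category_1", "category_1", "category_4"]
print(category_shares(group_2))
print(unique_and_shared(group_1, group_2))
```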

Results

This section reports the evaluation findings for the hybrid WoZ framework, examining usability and utility through assessments with two expert groups. The analysis encompasses quantitative user experience evaluation through the standardized UEQ, custom usability assessment of individual system components, and qualitative analysis of post-session interviews with actionable improvement suggestions.

User experience questionnaire

The combined sample analysis reveals consistently positive user experience ratings across all six UEQ dimensions, as illustrated in Figure 10, which also displays the benchmark comparison. The mean score for each dimension is given in Table 3. The overall user experience profile demonstrates that the hybrid WoZ framework interface achieved “

UEQ dimension scores with benchmark comparison for combined sample (n=20). All six dimensions achieved “Above Average” classification according to established UEQ benchmarks.

Usability dimensions evaluation: scores, classification, and results interpretation.

All scores are in the “above average” range.

The six-dimensional user experience profile, visualized in Figure 10, reveals a balanced performance across pragmatic and hedonic quality aspects. Pragmatic qualities (Perspicuity, Efficiency, and Dependability) relate to the system’s usability and task-oriented functionality, while hedonic qualities (Attractiveness, Stimulation and Novelty) concern the emotional and experiential aspects of user interaction. Perspicuity achieved the highest score (1.67), indicating that participants found the interface particularly clear and understandable.

Efficiency recorded the lowest score (1.28) among the dimensions, though still maintaining above-average classification. This suggests potential opportunities for interface optimization to enhance interaction speed and task completion efficiency during WoZ operations.

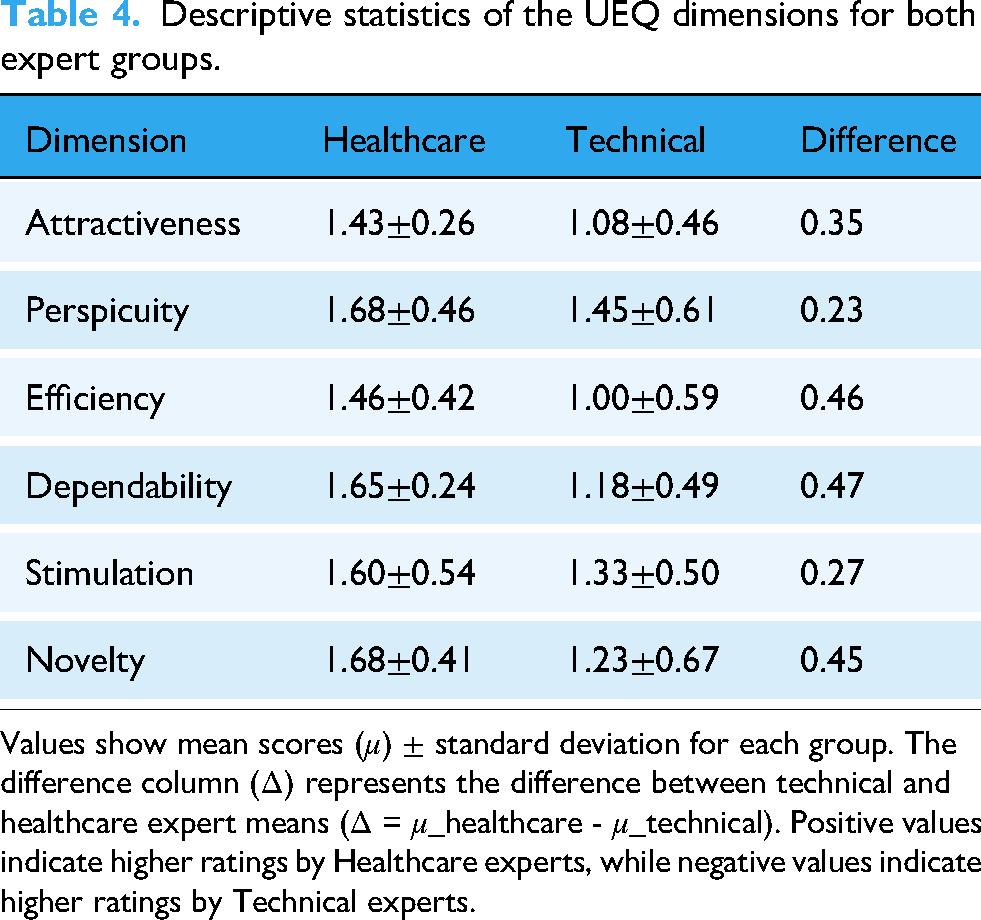

The dimension comparison between healthcare professionals and technical experts is shown in Figure 11. Healthcare experts consistently provided higher ratings across all six UEQ dimensions, suggesting that the SHARA-WoZ interface aligns more closely with the expectations and requirements of clinical professionals than with those of technical specialists. Detailed statistical comparisons are presented in Table 4.
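Because the comparison is descriptive, it reduces to per-group means, standard deviations, and differences between group means. The minimal sketch below assumes one dimension score per participant and the sample standard deviation. The exact estimator behind Table 4 is not stated here, and the usage values are toy numbers.

```python
from statistics import mean, stdev


def group_summary(scores: dict[str, list[float]]) -> dict[str, tuple[float, float]]:
    """Mean and sample standard deviation per dimension for one expert group.

    `scores` maps dimension -> one score per participant (at least two participants).
    """
    return {dimension: (mean(values), stdev(values)) for dimension, values in scores.items()}


def rank_gaps(
    group_a: dict[str, tuple[float, float]],
    group_b: dict[str, tuple[float, float]],
) -> list[tuple[str, float]]:
    """Dimensions sorted by absolute difference of group means, largest first."""
    gaps = {d: group_a[d][0] - group_b[d][0] for d in group_a.keys() & group_b.keys()}
    return sorted(gaps.items(), key=lambda item: (-abs(item[1]), item[0]))


# Toy numbers only.
group_1 = group_summary({"Efficiency": [2.0, 2.0, 2.0], "Novelty": [1.0, 2.0, 3.0]})
group_2 = group_summary({"Efficiency": [1.0, 1.0, 1.0], "Novelty": [1.5, 1.5, 1.5]})
print(rank_gaps(group_1, group_2))  # [('Efficiency', 1.0), ('Novelty', 0.5)]
```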

UEQ dimensions comparison between healthcare experts (

Descriptive statistics of the UEQ dimensions for both expert groups.

Values show mean scores (

Table 4 shows the mean (

Healthcare experts demonstrated consistently positive evaluations with all dimensions achieving “Good” to “Excellent” classifications according to UEQ benchmarks. Perspicuity (

Technical experts provided more conservative evaluations, with most dimensions falling in the “Above Average” range. Efficiency (

Specific system component analysis

The results of utility and ease-of-use dimensions reveal high overall system scores in both expert groups (Figures 12 and 13).

Custom utility and ease-of-use assessment showing component scores comparison between healthcare and technical experts.

Overall system utility and ease-of-use comparison across the combined sample of experts.

The evaluation of user perceptions revealed a consistent performance disparity between the Healthcare and Technical sectors. Overall, the Healthcare group reported uniformly higher mean scores for both perceived utility (4.72

The analysis shows the nature of this disparity, as Figure 13 illustrates. The

The

These results suggest that perceptions of the system’s performance (specifically its utility and ease of use) are highly context-dependent and shaped by sector-specific workflows and needs.

Qualitative analysis of post-interview

The systematic qualitative analysis of post-session interviews yielded improvement suggestions categorized into five primary domains. The analysis processed transcripts from both expert groups (healthcare experts,

The analysis of the combined sample (

The combined analysis reveals the complementary nature of both expert groups’ perspectives. Technical Performance received the highest total number of suggestions, and Interface Design ranked second among technical experts, as shown in Figure 14. Healthcare professionals, in contrast, distributed their attention remarkably evenly across improvement categories, as illustrated in Figure 15, with three categories receiving equal priority and New Functionalities emerging as the primary area of concern.

Qualitative analysis results for technical experts (

Qualitative analysis results for healthcare experts (

This distribution pattern demonstrates how different professional backgrounds lead to overlapping concerns in critical system areas while maintaining distinct evaluation focuses. The analysis of healthcare expert interviews (

New Functionalities received the highest absolute number of suggestions, such as medication reminder alerts, emergency detection, and contacting family members or medical centers when an emergency is detected.

Robot Behavior emerged as one of the most significant concerns for healthcare experts, as it was the category they expanded on and explored most deeply during the interviews. Healthcare experts focused on the importance of empathy and care in elderly care, and on ensuring that the robot’s expressions (animations) were appropriate for the emotion they were intended to convey.

Interface Design and Technical Performance each received fewer suggestions, while Integration Features received the fewest. Interface suggestions included replacing descriptive text with emoticons to facilitate visualization and using a more standard color scheme to make the interface easier to understand. Among performance improvements, the most notable suggestion was reducing transcription errors.

The analysis of technical expert interviews revealed suggestions distributed across four categories, with Technical Performance receiving the most attention, reflecting a focus on performance optimization and technical implementation details. No participant in this group made a suggestion in the Integration Features category.

Technical Performance emerged as the primary concern for technical experts, reflecting systematic evaluation of system reliability, response times, and technical robustness.

Interface Design received significant attention, indicating substantial focus on user experience optimization, visual design elements, and interaction workflow efficiency.

Suggestions in the New Functionalities category reflect a comprehensive evaluation of system capabilities and identify specific areas for feature expansion and operational enhancement.

Robot Behavior suggestions reflected technical experts’ appreciation for natural interaction patterns, while maintaining a focus on implementation feasibility. Their suggestions in this category likely addressed technical aspects of behavioral rendering and response generation mechanisms.

Notably, technical experts did not provide any suggestions for Integration Features. This absence highlights the complementary nature of multistakeholder evaluation approaches, where healthcare professionals identify clinical integration requirements that technical experts might not consider essential for system functionality assessment.

Discussion

This study presents a WoZ-based evaluation framework for SARs (based on the developed SHARA-WoZ system), examining usability and utility through multiple analytical perspectives that encompass quantitative user experience assessment, component-level interface evaluation, and qualitative improvement analysis. This discussion synthesizes the findings from both expert groups to provide insights into the system’s strengths, limitations, and future development directions for WoZ-based platforms in socially assistive robotics for elderly care.

The UEQ analysis reveals a consistently positive user experience with the hybrid WoZ framework, which achieved above-average benchmark ratings across all six UEQ dimensions. This indicates a successful design implementation for social robotics applications. However, a marked and consistent disparity emerges between healthcare and technical experts, with healthcare professionals providing higher ratings across all dimensions.

The largest gaps appear in pragmatic quality dimensions, Efficiency (

Notably, while technical experts provided more conservative ratings, their positive scores in stimulation and perspicuity indicate the interface maintains engagement and clarity across both groups. The higher standard deviations in technical ratings, particularly for novelty (0.67) and efficiency (0.59), suggest diverse needs or expectations within technical roles that the current design may not address.

These findings emphasize that while the interface successfully serves both domains, its optimization appears more aligned with healthcare workflows. The technical group’s ratings suggest opportunities for improving system responsiveness, predictability, and customization to better support technical operations and varied use cases within this sector.

The observed divergence between the Healthcare and Technical groups in their ratings of system components highlights how domain-specific needs shape technology perception. The markedly lower ease-of-use ratings from Technical users, particularly for

The post-interview analysis reveals differences in evaluation focus and suggestion distribution patterns between expert groups. Technical experts provided nearly three times as many suggestions as healthcare experts, indicating a more detailed technical evaluation and the identification of specific optimization opportunities. However, healthcare experts demonstrated broader categorical coverage by addressing all five improvement domains, including Integration Features, which received limited attention but represents distinctly clinical concerns. The feedback from both expert groups showed different but complementary perspectives that highlight key areas for improvement and considerations for future development.

Technical experts focused primarily on system functionality and interface design. They emphasized that while the system

Audio functionality emerged as a critical weakness across both expert groups. Technical experts found

Healthcare experts concentrated on behavioral authenticity and clinical relevance, raising concerns that extend beyond technical functionality. They emphasized the need for more natural interaction, stating the system should provide

Both expert groups identified response length as problematic, with technical experts noting that

The healthcare experts provided unique insights into clinical integration requirements that technical experts did not consider. They suggested

Technical experts identified specific interface improvements, including the need for

The combination of these perspectives is crucial. Technical experts identified critical issues for system reliability and operation, while healthcare experts ensured the system’s design and functionality would meet real-world clinical needs and integrate into care workflows. This multistakeholder approach ensures the development of a system that is both technically sound and therapeutically relevant.

Limitations

While this study demonstrates the application of the SHARA-WoZ framework for multistakeholder evaluation of SARs, several limitations should be acknowledged.

First, the custom questionnaire developed to evaluate specific system components (utility and ease-of-use dimensions) has not undergone formal pilot testing. Although this limits the generalizability and comparability of these specific findings, this ad-hoc instrument was designed to capture component-level usability aspects specific to the SHARA-WoZ framework that are not addressed by standardized questionnaires.

Second, the evaluation was conducted in controlled laboratory settings with simulated elderly users (actors) and in relatively short evaluation sessions (45 minutes), which may not fully capture the complexity and unpredictability of real-world interactions with actual elderly populations.

Third, all participants were recruited from Spain and identified as native Spanish speakers with cultural backgrounds representative of Mediterranean European societies. This cultural homogeneity, while limiting generalizability, provided consistency in evaluating the Spanish language conversational capabilities of the SHARA robot simulation.

Finally, although healthcare professionals did not spontaneously raise concerns regarding data privacy, security, or the ethical implications of the SHARA-WoZ system during post-session interviews, we recognize the importance of these aspects, which were not the focus of this evaluation. Future work should incorporate them as components of the evaluation framework to ensure the proper validation of healthcare technologies that handle sensitive personal information.

Conclusions

This research demonstrates the feasibility and effectiveness of web-based WoZ platforms for evaluating SARs in elderly care contexts. The SHARA-WoZ system successfully achieved above-average user experience ratings across all evaluated dimensions, with healthcare professionals showing particularly strong acceptance levels that indicate successful alignment with clinical workflows and therapeutic requirements. The multistakeholder evaluation approach revealed complementary perspectives essential for framework development, where technical experts provided detailed optimization insights while healthcare professionals ensured clinical relevance and therapeutic appropriateness.

The evaluation process revealed that successful WoZ platform development requires integrated expertise from both technical and healthcare domains. While technical experts provide necessary system optimization insights, healthcare professionals contribute essential perspectives on appropriate behavior and clinical needs. The complementary nature of these perspectives suggests that single-domain evaluation approaches may miss requirements, particularly those emerging from real-world care applications.

These findings contribute significantly to the field of social robotics evaluation methodologies by demonstrating that modular, web-based WoZ infrastructures can effectively bridge the gap between research prototypes and real-world deployment requirements. The support of multiple operational modes, from autonomous observation to full manual control, provides researchers and clinicians with flexible evaluation tools that can accommodate diverse study designs and clinical scenarios. Furthermore, the integration of modern AI services with traditional WoZ methodologies presents a scalable approach for conducting sophisticated HRI studies without requiring extensive technical infrastructure or specialized software installations.

The most significant lesson is that WoZ systems for socially assistive robotics must balance technical functionality with behavioral authenticity while remaining clinically relevant. The feedback indicates that such systems should prioritize emotional sophistication, streamlined interfaces, reliable voice interaction, and integration with healthcare workflows to create platforms that can effectively support elderly care in real-world environments.

The multistakeholder evaluation framework established in this study serves as a model for future research in socially assistive robotics, emphasizing the critical importance of incorporating diverse professional perspectives to ensure both technical excellence and clinical utility in assistive technology development.

Looking forward, the identified improvement areas provide a clear roadmap for enhancing WoZ platforms in assistive robotics. These developments should prioritize interface design and more natural robotic interactions, and should adopt differentiated design strategies: simplifying interaction patterns for technical users and exploring customizable feature sets to better align with differing operational priorities across sectors.

Footnotes

Ethics approval

The study involving human participants was conducted in accordance with the Declaration of Helsinki as well as ICH-GCP, and was reviewed and approved by the Social Research Ethics Committee of the University of Castilla-La Mancha, under reference number CEIS-2025-80271. Written informed consent was obtained from all participants prior to study initiation. All participants were provided with comprehensive information regarding study objectives, procedures, potential risks and benefits, voluntary participation, and data confidentiality. Participants were informed of their right to withdraw from the study at any time without penalty. All data were anonymized and stored securely in accordance with institutional data protection policies and applicable regulations.

Contributorship

Conceptualization was done by G.C. and R.H.; methodology was done by G.C., L.V., T.M. and R.H.; software was provided by G.C.; validation was done by G.C., L.V. and T.M.; formal analysis, investigation, data curation and writing (original draft preparation) were done by G.C.; resources were provided by R.H.; writing (review and editing) was provided by G.C., L.V., T.M. and R.H.; supervision, project administration, and funding acquisition were done by R.H. All authors have read and agreed to the published version of the manuscript.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This research was funded by JUNTA DE COMUNIDADES DE CASTILLA-LA MANCHA grant numbers SBPLY/21/180501/000160 (SHARA3) and SBPLY/24/180225/000176 (AKAI-SHARA); and by MINISTERIO DE CIENCIA, INNOVACIÓN Y UNIVERSIDADES grant number FPU22/00839 (predoctoral contract).

Declaration of conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article, and have no known competing financial interests or personal relationships that could have appeared to influence the work reported in this paper.

AI tools disclosure

Regarding the use of AI tools, AI-assisted technology was employed solely for language editing purposes, including grammar correction, spelling verification, and stylistic improvements to enhance the clarity and readability of the manuscript. No AI tools were used in the conceptualization, design, data collection, analysis, interpretation of results, or generation of substantive content of this research. All intellectual contributions, including the research methodology, experimental design, data analysis, and scientific conclusions, are entirely the original work of the authors.

Copyright and permissions

Regarding the tools and questionnaires used in this study, the user experience questionnaire (UEQ) is freely available for research and professional use without copyright restrictions, as stated on the official UEQ website, where all materials are provided free of charge for academic purposes. All other questionnaires and assessment tools employed in this research were specifically designed and developed by the authors for this study, and therefore no copyright permissions were required. Similarly, all figures, diagrams, and visual materials presented in this manuscript were originally designed and created by the authors specifically for this publication. As such, no figures are subject to third-party copyright, and no permissions from external copyright holders were necessary.