Abstract

Objective

This study aims to develop a measurement model for health technology acceptability using a theoretical framework and a range of validated instruments to measure user experience, acceptance, usability, health and digital health literacy.

Methods

A cross-sectional evaluation study using a mixed-methods approach was conducted. An online survey was administered to patients who used a pulse oximeter in a virtual hospital setting during COVID-19. The model development was conducted in three steps: (1) exploratory factor analysis for conceptual model development, (2) measurement model confirmation through confirmatory factor analysis followed by structural equation modelling and (3) test of model external validity on four outcome measures. Finally, the different constructs of the developed model were used to compare two types of pulse oximeters by measuring the standardised scores.

Results

Two hundred and two participants were included in the analysis, 37.6% were female and the average age was 53 years (SD:15.38). A four-construct model comprising Task Load, Affective Attitude, Self-Efficacy and Value of Use (0.636–0.857 factor loadings) with 12 items resulted from the exploratory factor analysis and yielded a good fit (RMSEA = .026). Health and digital health literacy did not affect the overall reliability of the model. Frustration, performance, trust and satisfaction were identified as outcomes of the model. No significant differences were observed in the acceptability constructs when comparing the two pulse oximeter devices.

Conclusions

This article proposes a model for the measurement of the acceptability of health technologies used by patients in a remote care setting based on the use of a pulse oximeter in COVID-19 remote monitoring.

Introduction

Adoption of virtual healthcare models, models of health service delivery that include virtual consultations and remote monitoring (e.g. home monitoring), has risen rapidly since the COVID-19 pandemic commenced,1,2 facilitated by the development and implementation of enabling technologies (e.g. virtual hospital). These models of care required solutions to assist with at-home care for large numbers of patients with COVID-19, reducing the risk of infection exposure for patients and healthcare workers and hospital bed demand. 3

An array of wearable technologies was trialled in virtual care to monitor the vital signs of remotely monitored patients.4,5 One important technology is the pulse oximeter, a wearable device that measures pulse and oxygen saturation levels (SpO2), a critical indicator for detecting deterioration in patients with COVID-19. 6

Remote monitoring can enhance patients’ self-management of their health condition while receiving support from healthcare professionals,7 but patients need to become confident with the technology. The shift from tasks being completed in person by clinicians to tasks being completed by patients using remote monitoring technologies may require new ways of measuring acceptance and evaluating initial user experience (UX) and patient perceptions about using devices for remote monitoring tasks. There is limited research on the assessment of user experience in virtual care settings (e.g. the use of the pulse oximeter), and the measurement model for ‘acceptability’ (of the pulse oximeter) needs to be explored and potentially expanded in this context to incorporate additional dimensions and scales that are relevant to the evaluation of technology in a remote virtual care context. A ‘re-modelling’ approach to assess existing validated instruments as a basis for expansion will involve:

- Reviewing and assessing the validity and suitability of existing and commonly used validated instruments (including NASA Task Load Index, System Usability Scale and various psychometric measures developed for capturing users’ summative behavioural responses in technology acceptance and adoption studies).
- Reconstructing a new measurement model for ‘acceptability’ by synthesising existing validated instruments of a consumer-grade Pulse Oximeter used in supporting remote monitoring.
- Operationalizing the measurement model for assessing important aspects of ‘acceptability’ based on patient experiences from using the pulse oximeter (used for remote monitoring) device to evaluate the effects of user-technology interactions and assessing the acceptability of the technology used as a virtual hospital (virtual healthcare model) intervention.

The measurement model can be used to benchmark similar technologies from user experience survey instruments.

Although these devices are designed to be user-friendly, several studies have shown that usability issues with the devices still exist, and these may impact the accuracy of the readings.8,9 More research is needed to understand how the attitude towards the pulse oximeter impacts the acceptance of the device. 10

Moreover, there is limited research on patients’ experiences of health devices in remote monitoring models during COVID-19. A recent study evaluating the experiences of patients with COVID-19 with remote home monitoring services in England reported that although patients had overall positive experiences using remote monitoring, some participants who used the pulse oximeter at home hesitated to interpret readings and thresholds on the device, and this impacted their engagement with the service. 11

Health and digital health knowledge are also two factors impacting the usability of health technologies. Health knowledge, which is the perceived ability to collect and evaluate health information, 12 has been associated with the user's perceived value of a device, defined as the user's perception of the benefits or value towards using the pulse oximeter (particularly the effectiveness and satisfaction aspects). 13 Similarly, digital health knowledge, which is the knowledge to interact with technology-based health tools, has been found to influence the perceived usability of health technologies. 14

Amongst commonly used acceptance-based survey instruments for explaining adoption decisions, 15 an expanded Theoretical Framework of Acceptability (TFA) 16 specific to healthcare interventions incorporates seven dimensions to assess user perceptions and acceptability for the clinical task (i.e. Affective Attitude, Burden, Ethicality, Intervention coherence, Opportunity costs, Perceived Effectiveness, and Self-Efficacy). These dimensions are conceptually related to other measurement models in the literature developed for technology or system adoption, such as the Technology Acceptance Model, which highlights two important perspectives, Ease of Use (EoU) and Usefulness, and the Task-Technology-Fit (TTF) model, intended to address the user-perceived ‘fitness’ of the technology for the task.17,18 In our case, this means assessing the fitness of a pulse oximeter used in remote monitoring tasks performed by patients (assisted by clinicians remotely). The TFA defines acceptability as the extent to which people delivering or receiving a healthcare intervention consider it appropriate, based on anticipated or experiential cognitive and emotional responses to the intervention.16,19

Different validated scales may also address the different aspects of acceptability. The 10-questionnaire items from the System Usability Scale (SUS) were developed to capture expressions of the attitude of the user when using a system, 20 which is related to the ‘affective attitude’ dimension of the TFA, defined as how the individual feels about a health intervention. 16

The 6-question NASA-Task Load Index (NASA-TLX)21,22 was developed to address the overall workload or effort users perceive it takes to perform a task, in this case, to use the pulse oximeter in remote monitoring, which is related to the construct of burden defined in the TFA. Tubbs-Cooley et al. suggested that the items of Performance and Frustration could be considered as outcomes of workload instead of characteristics of workload. 23 Therefore, Overall Workload or Burden may be measured by four items (i.e., Mental Demand, Physical Demand, Temporal Demand, and Effort) of the NASA-TLX.

Using psychological theories like Theory of Planned Behaviour, items from the Unified Theory of Acceptance and Use of Technology (UTAUT) 24 framework of technology acceptance aim to predict the behavioural intention to use the technology. Similarly, an integrative framework combining technology acceptance and resistance theories considers the perceived value and self-efficacy as key factors for alleviating user resistance in information systems implementation, such as the integration of remote technologies for patient care. 25

Another key factor in understanding technology acceptance in healthcare is trust, which is related to the decision to use a new technology.26,27 Finally, satisfaction is a common dimension to address UX, and has also been related to user acceptance of technology.24,28 These concepts should be considered when evaluating the user experience of health devices.

There are additional challenges for developing survey instruments that reflect the behavioural responses towards the use of remote monitoring technologies. The measurement model should cover a broad range of usability aspects in the context of a consumer-grade pulse oximeter (health technology) used by patients who are not necessarily health-literate, to perform a set of remote monitoring clinical procedures in a virtual care model. The development of this acceptance model will adopt well-characterised methodologies to explore and then confirm empirically if each dimension would be of practical value to be included in future patient experience surveys where remote monitoring technology is part of the intervention.

Aim

The aim of this study was to re-develop, synthesise and validate a measurement model for assessing the acceptability of health technologies used in remote monitoring, using a conceptually appropriate framework with a range of validated instruments to measure UX, usability and acceptance, Health Literacy (HL) and Digital Health Literacy (DHL) to ensure the measured behaviour is related to user experience. In the confirmation process, the measurement model was first built on the conceptual basis of TFA, considering the health technology as an intervention, 16 and the underlying constructs were then translated into instruments synthesised from a variety of validated relevant survey tools and then tested for reliability and validity.

Secondary aims included:

- To evaluate the effect of the different model constructs on user experience outcomes.
- To evaluate the acceptability constructs and, using the model, to compare two brands of pulse oximeter employed for remote monitoring during COVID-19.

Methodology

This study was part of a cross-sectional evaluation study using a mixed-methods approach including an online survey administered to patients who used the pulse oximeter in a virtual hospital during COVID-19. Qualitative data from interviews and usability testing were also collected as part of the study, but these are reported elsewhere. 29 For this study, a series of steps were conducted to develop and validate a model for the acceptability of the pulse oximeter.

Stage A: Theoretical consideration and literature review

Acceptability and usability are the most common dimensions examined when evaluating UX of the pulse oximeter. 15

The TFA16,19 comprises seven dimensions to address acceptability shown in Table 1.

Theoretical Framework of Acceptability (TFA) dimensions.

A synthesised conceptual model combined constructs that had overlapping item-scale measures into four constructs: affective attitude (subdimensions: ethicality, perceived effectiveness), burden (subdimensions: opportunity costs), intervention coherence and self-efficacy. This refined model resulted from a preliminary study using Delphi testing and a closed sorting process. 15 Nevertheless, further validating procedures such as Confirmatory Factor Analysis (CFA) are necessary for the validation of the model to assess if the constructs reflect the different aspects impacting acceptability.

Moreover, patients’ attitudes and self-efficacy, the burden of using the device, and value/benefits of the intervention should be considered when evaluating the acceptability of the device. For example, the affective attitude could lead to identifying any concerns or attitudes the patient has towards using the device. The burden may allow the measurement of cognitive effort or time to participate in the intervention. Self-efficacy could address the patient's confidence in the capability of using the device for remote monitoring. The ‘Value’ of use (perceived) could be addressed by the degree to which the health technology is ethically suitable (safe and fit for its purpose), defined by the TFA as ethicality (i.e. the intervention's good fit in the individual's value system), the benefits that have to be given up to engage in the intervention (i.e. opportunity costs) and the perceived effectiveness. Although conceptually easy, having all seven distinct constructs would be operationally difficult. Therefore, a refined model combining constructs could be easier to apply in practice.

Stage B: Instrument considerations and synthesis – user experience survey

A condensed four-construct conceptual model was developed from a preliminary study using Delphi and sorting methodologies attempting to explore the relations between selected item measures (used in TLX, SUS and UTAUT) and latent constructs described in TFA. 15 Thirty-one items for patients were considered for the assessment of pulse oximeter acceptability. Questions from validated scales such as NASA-Task Load Index (NASA-TLX)21,22 (6 questions scored from 0-lower task load to 100-higher task load), System Usability Scale (SUS)20,30,31 (10 questions scored from 1-Strongly disagree to 5-Strongly agree), and Unified Theory of Acceptance and Use of Technology (UTAUT) 32 (15 questions scored from 1-Strongly disagree to 5-Strongly agree) were adapted to the final version of the survey. The final version of the survey can be found in Supplemental Appendix 1. A broad range of items was included to ensure the user experience evaluation could cover the dimensions described in the TFA.

Demographic variables (e.g. age, gender, and education level) and items to assess digital health literacy (DHL) 33 and health literacy (HL) 34 were also included in the user experience survey. Including HL and DHL in the analysis was to adjust for bias of users with prior relevant knowledge and literacy and to check how sensitive users’ perceptions of acceptability of the device were to HL and DHL.

For this study, the intervention was defined as the use of the pulse oximeter in a virtual hospital during COVID-19.

Stage C: Development and validation of the acceptability model for remote monitoring technology (pulse oximeter)

The development and validation of the final scale comprised three steps:

1. Conceptual measurement model development and item selection: Using factor analysis for exploratory analysis, based on statistical convergence and discriminant validity. The Statistical Package for the Social Sciences (IBM® SPSS®) statistics software platform (Version 28.0.0) was used to conduct Exploratory Factor Analysis (EFA) and Principal Components Analysis (PCA). Item scores from the TLX scale were inverted and converted to a 5-point scale for the analysis.
2. Measurement Model Confirmation Testing: Confirmatory Factor Analysis (CFA) followed by Structural Equation Modelling (SEM) to confirm the reliability and fitness of the measurement model reflective of TFA dimensions based on a set of statistically qualified item instruments. CFA using SEM was conducted in Analysis of Moment Structures (AMOS) (IBM® SPSS®) Version 28.0.0. 35 Multigroup path analysis to compare patients with high versus low levels of DHL and HL was also performed as part of the evaluation of the model's overall goodness of fit.
3. Measurement Model Validation: To test the validity (external validity) of the measurement model by evaluating the association between the measurement model and outcome variables like performance and frustration, 23 trust and satisfaction, 26 and to assess the moderating effect of DHL and HL scores.
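The inversion and rescaling of TLX item scores mentioned in step 1 could be sketched as follows. The study does not report the exact conversion rule, so the linear mapping and the function name `tlx_to_five_point` are assumptions made purely for illustration.

```python
def tlx_to_five_point(score: float) -> float:
    """Invert a 0-100 NASA-TLX item score and map it linearly onto 1-5.

    Assumption: a linear mapping is used; the study does not state the rule.
    After conversion, a higher value means a lower perceived task load.
    """
    if not 0 <= score <= 100:
        raise ValueError("TLX item scores must lie between 0 and 100")
    inverted = 100 - score          # reverse the direction of the scale
    return 1 + 4 * inverted / 100   # stretch 0-100 onto the 1-5 range


if __name__ == "__main__":
    for raw in (0, 25, 50, 100):
        print(raw, tlx_to_five_point(raw))
```

With this mapping, the converted TLX items share a direction and range with the 5-point SUS and UTAUT items, which is the property the factor analysis needs.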

Data

RPA virtual hospital (rpavirtual)

From March 2020, patients with COVID-19 in home or hotel isolation were enrolled in the RPA Virtual Hospital (rpavirtual), a virtual health facility established by the Sydney Local Health District (SLHD) and based at Royal Prince Alfred Hospital (RPAH), Sydney, New South Wales (NSW), Australia.36,37 After a patient was referred to rpavirtual by public health units in SLHD and confirmed eligible, a registered nurse contacted the patient by video consultation, monitored the patient's symptoms and could escalate care if deterioration was detected. A pulse oximeter approved by the Therapeutic Goods Administration in Australia 38 was also delivered to the patient to monitor oxygen saturation levels while recovering from COVID-19, and the patient had 24/7 access to the rpavirtual service.36,37

Study participants

Participants were eligible for inclusion if they were adult patients (i.e. ≥ 18 years old) who had been monitored by rpavirtual and used the pulse oximeter.36,37 Data only from participants who fully completed the survey between October 2021 and March 2022 were included.

For factor analysis, an estimated sample size of 200 patients, based on a ratio of approximately 5 to 10 subjects per item, was considered to be sufficient for the statistical analysis. 35
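As a quick arithmetic check of the subjects-per-item rule, the sketch below assumes the ratio is applied to the 31 candidate survey items described in Stage B; the source does not state which item count was used, and the function name is hypothetical.

```python
def sample_size_range(n_items: int, low_ratio: int = 5, high_ratio: int = 10) -> tuple[int, int]:
    """Return the (minimum, maximum) sample size implied by a subjects-per-item rule."""
    return n_items * low_ratio, n_items * high_ratio


# Assumption: the rule is applied to the 31 candidate items.
low, high = sample_size_range(31)
print(low, high)
```

Under that assumption the rule gives a range of 155 to 310 respondents, so a target of 200 falls inside it.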

Survey administration

Potential participants who met the inclusion criteria were identified by staff at rpavirtual and contacted by the research coordinator. An SMS message or email invitation along with a user survey link was sent to patients inviting them to participate in a user experience survey via REDCap (Research Electronic Data Capture) database. Survey invitations were sent between October 2021 and June 2022. Participants were made aware that by taking the user survey they provided consent for researchers to use their data (implied consent). This information was included in the participant information sheet provided to potential participants prior to commencing the survey. This approach was approved as part of the ethics application to the SLHD Research Ethics Committee. Those who did not complete the survey were reminded a maximum of 2 more times by the research coordinator by text or email.

Consent form information and survey data were stored in a password-protected REDCap dedicated project. Survey invitations were sent to a total of 5915 patients during the study period. Attrition rates across the survey sections for incomplete responses were: first section – Survey introduction section (18.87%), second section – General evaluation of the intervention questions (38.02%), third section – Pulse oximeter questions (13.62%), fourth section – Self-reported general health questions (8.92%), fifth section – Health literacy questions (9.39%), sixth section – Internet use questions (10.80%) and seventh section – Digital health literacy questions (0.47%).

Analysis

This section describes the analysis for the development and validation of the acceptability model for remote monitoring technology (pulse oximeter) – Stage C of the methodology.

Conceptual measurement model development and item selection (exploratory factor analysis)

The validity and reliability of the model were examined through EFA and Cronbach's alpha. An alpha greater than or equal to 0.70 was considered acceptable. 39 Convergent and discriminant validity were assessed within factors. Factor loadings ≥0.6 were considered necessary for items to belong to a common construct, whereas loadings ≤0.3 were taken to support discriminant validity. 40 Cross-loadings were also examined to assess discriminant validity. 41
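For readers unfamiliar with the reliability statistic, a minimal standard-library sketch of Cronbach's alpha is given below. It is not the SPSS procedure used in the study, and the response data and function name are invented for illustration.

```python
from statistics import variance


def cronbach_alpha(items: list[list[float]]) -> float:
    """Cronbach's alpha, where items[i][j] is respondent j's score on item i."""
    k = len(items)
    if k < 2:
        raise ValueError("at least two items are required")
    item_variances = [variance(scores) for scores in items]
    # Total score per respondent across all items of the construct.
    totals = [sum(per_respondent) for per_respondent in zip(*items)]
    return k / (k - 1) * (1 - sum(item_variances) / variance(totals))


# Hypothetical 5-point responses from five respondents on a three-item construct.
demo_items = [
    [4, 5, 3, 4, 2],
    [4, 4, 3, 5, 2],
    [5, 5, 2, 4, 1],
]
alpha = cronbach_alpha(demo_items)
print(f"alpha = {alpha:.3f}, acceptable = {alpha >= 0.70}")
```

The final comparison applies the 0.70 acceptability threshold stated above.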

Measurement model confirmation testing (confirmatory factor analysis)

Model fit of latent variables of the SEM model was evaluated by CFA, Composite Reliability (CR) and Average Variance Extracted (AVE). CR values greater than or equal to 0.70 were considered acceptable. 42 An AVE value greater than 0.50 generally indicates that a substantial amount of variance in the indicators is accounted for by the constructs of the model. 43
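The two statistics can be computed from standardised factor loadings with the commonly used Fornell and Larcker formulas, as in the sketch below. The loadings are hypothetical, and the authors' AMOS-based calculation may differ in detail.

```python
def average_variance_extracted(loadings: list[float]) -> float:
    """AVE: mean of the squared standardised loadings of a construct."""
    return sum(l * l for l in loadings) / len(loadings)


def composite_reliability(loadings: list[float]) -> float:
    """CR: (sum of loadings)^2 / ((sum of loadings)^2 + sum of error variances).

    Assumes standardised loadings, so each error variance is 1 - loading^2.
    """
    squared_sum = sum(loadings) ** 2
    error_variance = sum(1 - l * l for l in loadings)
    return squared_sum / (squared_sum + error_variance)


# Hypothetical standardised loadings for a four-item construct.
demo_loadings = [0.8, 0.8, 0.8, 0.8]
ave = average_variance_extracted(demo_loadings)
cr = composite_reliability(demo_loadings)
print(f"AVE = {ave:.2f} (> 0.50: {ave > 0.50}), CR = {cr:.2f} (>= 0.70: {cr >= 0.70})")
```

Because CR depends on the sum of loadings while AVE depends on their squares, a construct can meet the CR threshold while falling slightly below the AVE threshold.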

The fit of the model was examined using fit indices suggested by Hu and Bentler. 44 Model fit was considered good if the Root Mean Square Error of Approximation (RMSEA) was <0.05, the Comparative Fit Index (CFI) and Tucker-Lewis Index (TLI) were >0.95, and the Standardised Root Mean Square Residual (SRMR) was <0.08. Literacy scores were categorised as high (i.e., > mean value) and low (i.e., ≤ mean value). Model comparisons between low versus high DHL and low versus high HL were also performed.
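The cut-offs and the mean split described above can be expressed compactly, as in the following sketch. The function names are hypothetical, and apart from the RMSEA of .026 reported in the abstract, the index values in the example are invented.

```python
def is_good_fit(rmsea: float, cfi: float, tli: float, srmr: float) -> bool:
    """Apply the cut-offs stated in the text: RMSEA < 0.05, CFI and TLI > 0.95, SRMR < 0.08."""
    return rmsea < 0.05 and cfi > 0.95 and tli > 0.95 and srmr < 0.08


def mean_split(scores: list[float]) -> list[str]:
    """Label each literacy score 'high' (> mean) or 'low' (<= mean)."""
    mean_score = sum(scores) / len(scores)
    return ["high" if s > mean_score else "low" for s in scores]


# RMSEA is the value reported in the abstract; CFI, TLI and SRMR are made up.
print(is_good_fit(rmsea=0.026, cfi=0.99, tli=0.98, srmr=0.04))
# Hypothetical digital health literacy scores on the 1-4 scale.
print(mean_split([2.5, 3.0, 3.5, 4.0]))
```

Note that a score exactly equal to the mean is assigned to the low group, matching the definition in the text.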

Evaluation of the utility of the measurement model on four outcome measures

Standardized factor loading estimates and standard error (SE) between the constructs and the outcomes were explored through SEM. Factor loading estimates for the moderating effect of DHL and HL were also analyzed for external validation.

Case study

Standardised scores (with standard deviation, SD) across the constructs of the developed model were compared between the two types of pulse oximeter used by rpavirtual (the USB-charged, Bluetooth-operated iHealth® and the battery-operated Suresense®). Because the scores in the two groups were not normally distributed, a Mann-Whitney U test was used to compare them.
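A standard-library sketch of this comparison is shown below. It assumes that "standardised scores" means z-scores, and it uses a plain normal approximation without tie or continuity correction, so its p-values will not exactly match those produced by SPSS. All names and data are hypothetical.

```python
from math import erfc, sqrt
from statistics import mean, stdev


def standardise(values: list[float]) -> list[float]:
    """Return z-scores based on the sample mean and standard deviation."""
    m, s = mean(values), stdev(values)
    return [(v - m) / s for v in values]


def average_ranks(values: list[float]) -> list[float]:
    """Rank values from 1 to n, giving tied values the mean of their ranks."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        shared_rank = (i + j) / 2 + 1  # mean of the 1-based positions i+1 .. j+1
        for k in range(i, j + 1):
            ranks[order[k]] = shared_rank
        i = j + 1
    return ranks


def mann_whitney_u(group_a: list[float], group_b: list[float]) -> tuple[float, float]:
    """Return (U for group_a, two-sided p) using a normal approximation.

    Simplification: no tie or continuity correction is applied.
    """
    n1, n2 = len(group_a), len(group_b)
    ranks = average_ranks(list(group_a) + list(group_b))
    u1 = sum(ranks[:n1]) - n1 * (n1 + 1) / 2
    mu = n1 * n2 / 2
    sigma = sqrt(n1 * n2 * (n1 + n2 + 1) / 12)
    z = (u1 - mu) / sigma
    return u1, erfc(abs(z) / sqrt(2))


# Hypothetical construct scores for users of two devices.
device_a = [3.0, 3.5, 4.0, 4.5, 5.0]
device_b = [2.0, 2.5, 3.0, 3.5, 4.0]
z_scores = standardise(device_a + device_b)
u, p = mann_whitney_u(z_scores[:5], z_scores[5:])
print(f"U = {u}, p = {p:.3f}")
```

Because the test is rank-based, standardising the pooled scores beforehand does not change U; the z-scores only make construct scores comparable across scales when reporting them.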

The IBM® SPSS® statistics software platform (Version 28.0.0) was used to analyze the data.

Results

Participants’ characteristics

A total of 202 patients were included in the analysis. The average age of patients was 53 years (SD:15.38); 123 (60.9%) were female, 76 (37.6%) were male and the remainder selected the ‘other’ option. Forty-seven patients (23.3%) had postgraduate education, 51 (25.2%) had a graduate degree, 32 (15.8%) had a diploma, 31 (15.3%) had a certificate, 36 (17.8%) had completed high school, 3 (1.5%) primary school, and 2 (1.0%) reported no education. The average digital health literacy score (ranging from 1 = low to 4 = high) was 3.09 (SD = 0.59) and the health literacy score (ranging from 0 = low to 2 = high) was 1.81 (SD = 0.29).

Conceptual measurement model development (four-construct model)

Twelve of 31 items of the original survey were included after the EFA. Factor loadings of the included items ranged between 0.636 and 0.857.

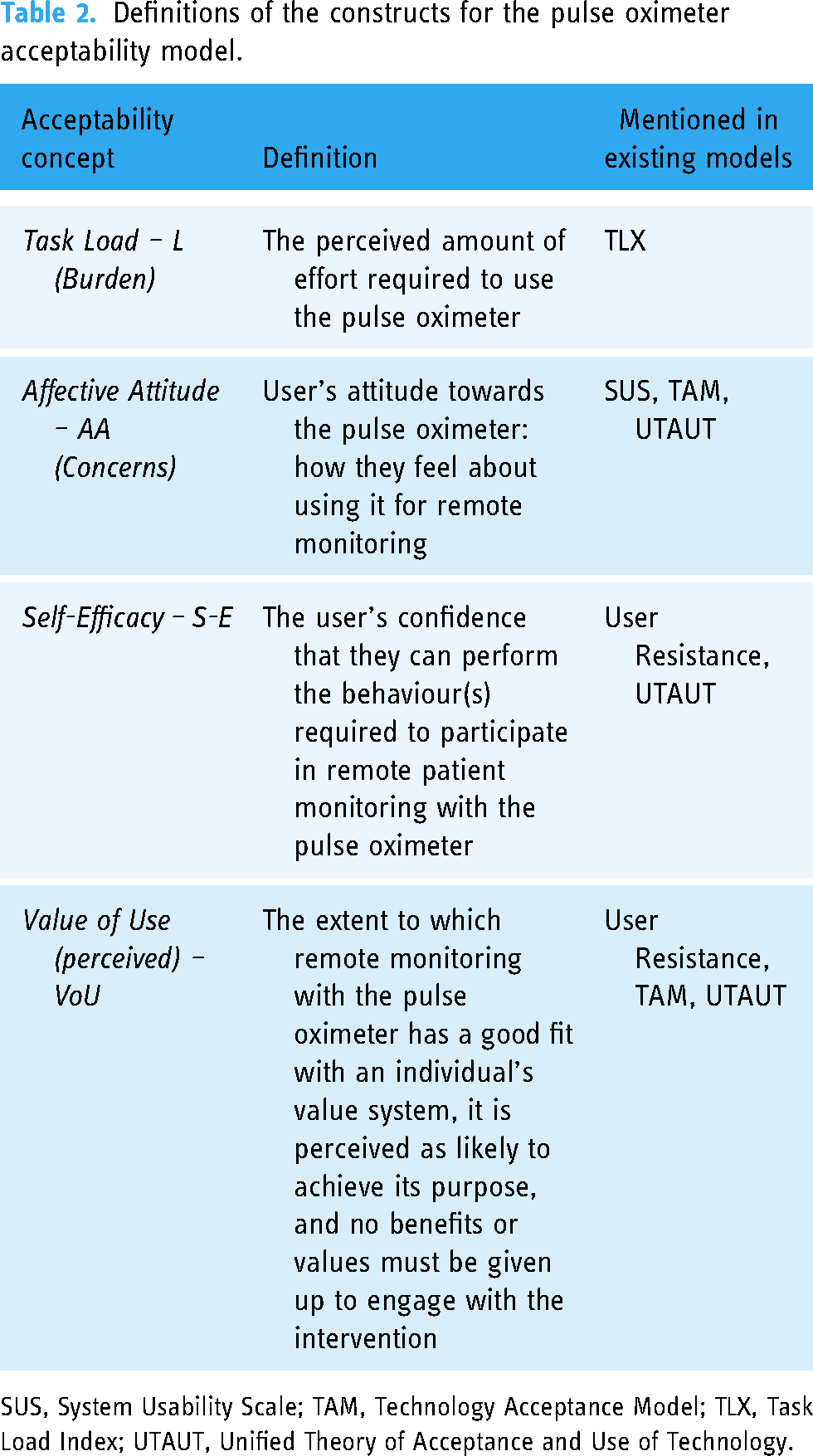

Four components of the PCA had a smaller number of cross-loadings and were the ones selected as part of the proposed model. Three clear components (i.e. Burden, Affective Attitude (AA), Self-Efficacy (S-E)) emerged from the model. A fourth concept was based on multiple components and defined as Value of Use (VoU) based on the contained items. The definition of the constructs is described in Table 2. The Burden/Load (4 items; α = .872), AA (4 items; α = .770), S-E (2 items; α = .811) and VoU (2 items; α = .814) constructs were found to be highly reliable.

Definitions of the constructs for the pulse oximeter acceptability model.

SUS, System Usability Scale; TAM, Technology Acceptance Model; TLX, Task Load Index; UTAUT, Unified Theory of Acceptance and Use of Technology.

A final four-construct conceptual measurement model was found to have convergent and discriminant validity. The next step was to test the fitness of the model.

Measurement model confirmation (CFA)

Composite reliability (CR) ranged from .77 to .88 (Burden/Load = .88, Concerns/AA = .77, S-E = .83, Value = .81), which met the acceptable level.

The AVE ranged from .47 to .71 (Burden/Load = .66, Concerns/AA = .47, S-E = .71, VoU = .69), with all constructs except AA meeting the acceptable level of 0.5. According to Fornell and Larcker, 43 the convergent validity of the construct AA is still adequate as its CR is above the acceptable level. The square root of AVE for each latent variable (diagonal element) was greater than the correlations between latent variables squared, confirming the validity of the model. The final model is described in Figure 1.

Model 1: acceptability of healthcare interventions measured by 4 constructs and 12 items.

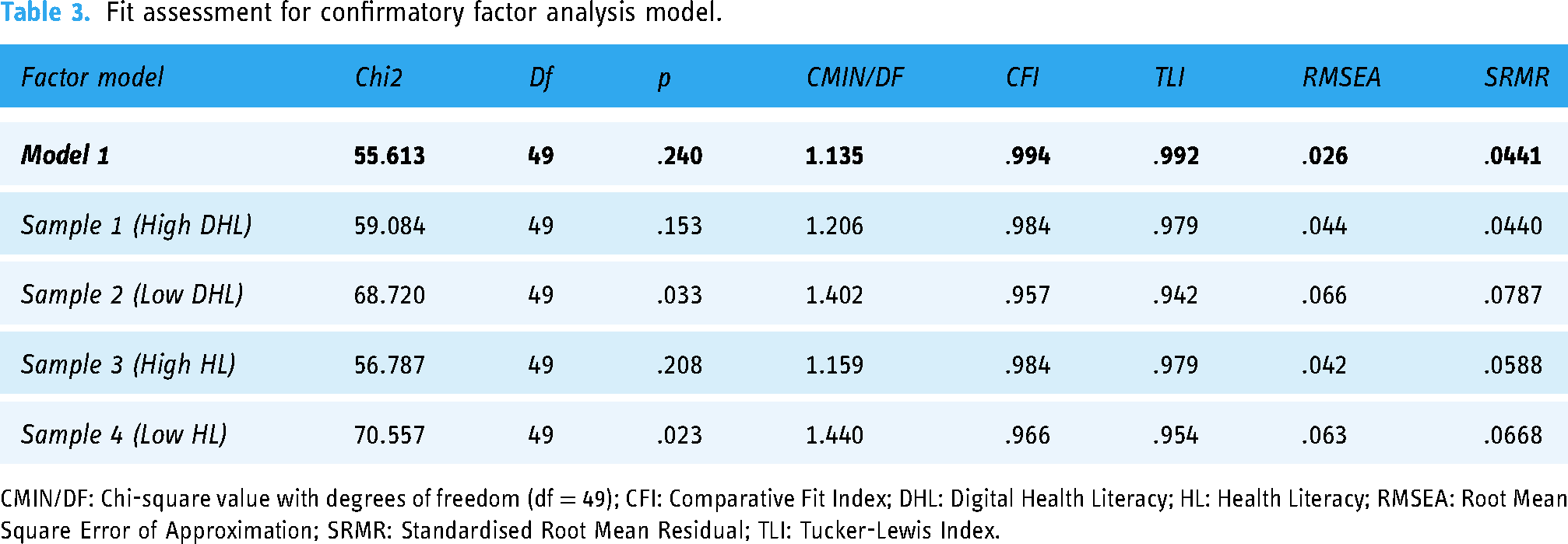

The model-fit measures were used to assess the model's overall goodness of fit. The four-factor model (L, AA, S-E and VoU) yielded good fit (Table 3) for the data.

Fit assessment for confirmatory factor analysis model.

CMIN/DF: Chi-square value divided by degrees of freedom (df = 49); CFI: Comparative Fit Index; DHL: Digital Health Literacy; HL: Health Literacy; RMSEA: Root Mean Square Error of Approximation; SRMR: Standardised Root Mean Square Residual; TLI: Tucker-Lewis Index.

Based on the CFA results from AMOS, the four-construct TFA measurement model was statistically reliable for all the samples. Although variation in responses from users with different DHL and HL was observed, this did not affect the overall reliability for either of the literacy groups.

Evaluation of the utility of the measurement model on four outcome measures (external validity) – association of the measurement model and outcome variables

Figure 2 describes the acceptability model and the outcome variables. Frustration and Performance from the NASA-TLX scale and Trust and Satisfaction were defined as outcomes representing the users’ behavioural response.

Model 2: acceptability of healthcare interventions constructs and user experience outcomes.

A statistically significant effect of the construct of Burden on Frustration and Performance outcomes was observed. There was no statistically significant effect of the construct AA on the four outcomes.

S-E had a statistically significant effect on all four outcomes of interest. The magnitudes of its effects on Trust and Satisfaction (very strong significance, p < .001) were 2 to 3 times higher than those on Frustration and Performance (weaker significance).

VoU had an effect on the outcomes Trust, Frustration, and Satisfaction and these estimates were statistically significant. Statistical significances were very strong on Trust and strong on Frustration and Satisfaction.

The standardised factor loading estimates for all the constructs and outcomes are described in Table 4.

Model 2: standardized estimates between constructs and outcomes, and moderating effects of DHL and HL.

* p-value <.10 (weak significance).

**p-value <.05 (significance).

***p-value <.01 (strong significance).

****p-value <.001 (very strong significance).

AA: Affective Attitude; DHL: Digital Health Literacy; HL: Health Literacy; S-E: Self-Efficacy; SE: Standard Error; VoU: Value of Use.

The moderation effect of DHL and HL on Model 2 was also analysed. Based on the SEM Regression results, DHL was found to be a positive moderating factor of S-E on both Trust (Estimate = .175, Standard Error = .144, p-value = .044) and Satisfaction (Estimate = .341, Standard Error = .229, p-value < .001), and a negative moderating factor of VoU on both Satisfaction (Estimate = −.134, Standard Error = .123, p-value = .033) and Trust (Estimate = −.112, SE = −.125, p-value = .092). HL had no significant direct impact on outcome variables.

Case study: Applying measurement model to benchmark 2 comparable technologies

After developing our CFA-tested TFA measurement model, we used it as a benchmarking tool to compare and contrast usability (four acceptability constructs) and outcome expectations between two brands of pulse oximeter devices used by rpavirtual.

No differences in user behaviours (based on the acceptability constructs) were observed between the two devices. Statistically significant differences were found between the pulse oximeters for the outcomes of satisfaction and frustration (Table 5).

Standardised scores and standard deviation (SD) of the four constructs, DHL and HL for the different types of pulse oximeter (PO).

Mann-Whitney Test.

* p-value <.10 (weak significance).

**p-value <.05 (significance).

***p-value <.01 (strong significance).

****p-value <.001 (very strong significance).

CI: Confidence Interval of the Difference; DHL: Digital Health Literacy; HL: Health Literacy; SD: Standard Deviation; SE: Standard Error.

Discussion

This article describes the development of a measurement model for evaluating user acceptability of remote monitoring technology, using consumer pulse oximetry as an important practical example. This model was synthesised from existing validated tools commonly adopted for health technology acceptance studies and mapped onto a framework considering appropriate constructs for evaluating the technology as a health intervention.

The results of this study suggest that a four-construct model comprising Load (Burden), AA (Concerns), S-E and VoU is a reliable and valid instrument to reflect users’ acceptability and usability of the pulse oximeter for remote monitoring. Existing validated survey items have been found suitable (after modification) to address the acceptability of healthcare interventions based on Sekhon et al. definitions. 16 Although the validity of the four-factor measurement model was confirmed, AA remains an issue conceptually and may indicate that item content based on the questions could be mixed as users are patients and their attitude towards the use of the pulse oximeter could vary significantly.

Sekhon et al. have previously defined these usability constructs theoretically to address the acceptability of healthcare interventions that aim to achieve clinical outcomes. 16 This highlights the importance of considering the use of remote monitoring technology as a healthcare intervention when aiming to achieve clinical outcomes.

For the construct Burden, items are related to the amount of workload (e.g. mental demand, temporal demand, physical demand, effort) that a user perceives when using the remote monitoring technology. These items are based on the NASA-TLX subscales, 21 which have been used to measure the workload in similar settings, such as the use of home medical devices, 45 highlighting the importance of including them in the final model. For the construct AA, items involve concepts adapted from the SUS and UTAUT framework that allow the identification of the user's potential concerns about trying the technology (e.g. perception of unnecessary complexity, previous knowledge and technical support required to manage the device, and privacy risk from using the device), that is, reflecting the user's attitude towards using the device. Due to the ambiguity of the construct of AA (based on the low AVE value), it may be better to use this to help interpret and explain user behaviour. For the construct Self Efficacy, items reflect aspects of the users’ confidence in their capabilities to use the remote monitoring technology (e.g. knowledge of how the device works or understanding the readings), which have been integrated into health models to address how health beliefs (e.g. health knowledge) might impact on peoples’ self-management behaviours. 46 While the previous three constructs are all focused on the perceptions of the remote monitoring technology, AA and Self Efficacy also consider the interaction between patients and healthcare professionals while using the remote monitoring technology (e.g. support provided by healthcare providers, communication of readings to the healthcare professionals delivering the intervention), with this being related to the reasons to engage with the intervention (i.e. perceived ‘value of use’ of remote monitoring with the pulse oximeter). 
This construct encompasses the intervention fit and opportunity costs defined by the TFA, 16 which are also consistent with the perceived benefits and costs associated with user resistance to information system implementation. 25

Consistent with previous research, the construct of Load (Burden) influenced the outcomes of Frustration and Performance, 23 indicating that frustration and performance are not innate characteristics of burden. As a result, a high perceived effort to use the remote monitoring technology may lead patients to think that they are not successful and so discourage them from engaging in the health intervention. Therefore, when evaluating the acceptability and usability of remote monitoring technologies, it is essential to address patients’ burden in order to achieve high levels of performance and low frustration.

The construct of Self Efficacy (i.e. self-intrinsic ability) had a positive impact on the four outcomes, including Trust and Satisfaction, which are considered indicators of the acceptability of health technologies. 26 A less significant impact was observed on Performance, which may be related to users not seeing themselves as performing well when asked to perform the task. Increasing patients’ confidence in using the remote monitoring technology may help them feel they can trust the device. Patients may also feel they are in control of the situation (e.g. using the technology for remote monitoring), which may in turn increase their satisfaction with and acceptance of the technology. Therefore, improving patients’ self-efficacy (e.g. providing better support, clearer instructions, or training on the use of the device) may be necessary to ensure the acceptability and long-term integration of remote technology in healthcare.

VoU had a significant effect on all the outcomes except for Performance. Items included in this construct refer to the interaction between patients and the healthcare providers delivering the intervention (e.g. satisfaction with the support provided by rpavirtual staff) and do not describe patients’ performance (e.g. how they use the device). This could explain why this construct had a significant impact on Trust, Satisfaction and Frustration but not on Performance. For example, patients’ satisfaction with the support received from healthcare providers would be unlikely to affect their perception of success at using the device (i.e. performance).

There was insufficient evidence to establish the effects of the construct of AA on the four outcome variables. It may be that the effects of the other constructs on the outcomes were stronger when all constructs were analysed together, diminishing the apparent effect of the affective attitude construct. Also, attitude towards the use of the technology might be less meaningful when the use of the technology is ‘mandatory’ as part of the intervention. In our study, patients may have already felt they had to use the technology (i.e. pulse oximeter) so they could be remotely monitored by clinicians. Nevertheless, there is some evidence that this construct could affect the defined outcomes. According to the Technology Acceptance Model (TAM), Attitude is affected by Ease-of-Use and Usefulness as mediating variables to satisfaction. 47

Technology acceptance and resistance theories include concepts such as Self Efficacy and Perceived use as supporting factors that reduce resistance to information system implementation. 25 Including these concepts in our proposed model could allow us to compare the ‘acceptability of the technology’ more objectively across different devices used for the same clinical task (e.g. different pulse oximeter types) and to identify usability dimensions that could be targeted to improve acceptability or reduce resistance to the implementation of these interventions.

Digital health literacy was found to affect trust in the device used for remote monitoring. Patients with low knowledge of the health condition or of the use of the medical device might find the pulse oximeter more challenging to use, or require more instructions and training to use it correctly, thus affecting the device's acceptability and usability. Health knowledge aspects such as understanding the purpose of the device or the meaning of the oxygen levels have been reported to be correlated with the usability of the pulse oximeter in previous research. 13 Therefore, improving digital health literacy would be important for the inclusion of digital health tools such as remote monitoring technology. 48

Digital health literacy was also found to be a moderating factor for the effect of Self Efficacy on Trust and Satisfaction. Self-efficacy and digital health literacy had positive interactions, indicating they are complementary. An association between digital health literacy levels and self-efficacy has been reported before.49,50 Therefore, targeting digital health literacy needs may influence a patient's capacity to use the technology to complete the task and, as a consequence, their acceptance of the technology.

The effect of the VoU construct on Satisfaction and Trust was also negatively moderated by digital health literacy. Digital health literacy is a baseline measure of general knowledge that enables users to evaluate the technology against some perceived reference standard, which is extremely difficult to establish in usability studies. Based on the items included in the VoU construct, it may be that patients with high digital health literacy perceive that they need less support from healthcare providers to use the pulse oximeter remotely.
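To make the idea of negative moderation concrete, the sketch below shows how the effect of a predictor changes with the level of a moderator when an interaction term is present. It assumes an ordinary regression-style formulation with a product term, which is a simplification and may differ from how moderation was specified in the structural model. The coefficients are hypothetical and are not estimates from this study.

```python
def simple_slope(b_predictor, b_interaction, moderator_value):
    """Effect of the predictor on the outcome at a given moderator level.

    For outcome = b0 + b1*X + b2*M + b3*X*M, the effect of X is b1 + b3*M.
    """
    return b_predictor + b_interaction * moderator_value


# Hypothetical standardised coefficients (not estimates from this study).
B_VOU = 0.40         # main effect of Value of Use on Trust
B_VOU_X_DHL = -0.15  # negative interaction with digital health literacy

# Evaluate the effect at low, average and high literacy (z-scores).
slopes = {z: simple_slope(B_VOU, B_VOU_X_DHL, z) for z in (-1.0, 0.0, 1.0)}

for dhl_z, slope in slopes.items():
    print(f"DHL z = {dhl_z:+.1f}: effect of VoU on Trust = {slope:.2f}")
```

Under these assumed values the effect of VoU on Trust shrinks as digital health literacy rises, which matches the interpretation that more literate patients rely less on provider support.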

This analysis allowed us not only to compare the users’ experience (via the 4 dimensions of acceptability), but also to benchmark users’ satisfaction (via the 4 outcome measures). In our case study, there were no significant differences between patients using the two types of pulse oximeters in HL and DHL, or in their interactions with the technology (i.e. acceptability constructs). We can conclude that there are differences in each of the outcome measures based on the proposed model (i.e. users rated SureSense higher in trust, satisfaction and performance, and lower in frustration). These four metrics assess different dimensions, but they can be affected by the same functionality or aspect of the device. These results showed that, after adjusting for literacy, patients rated SureSense (as a device) high in trust, satisfaction and performance, and lower in frustration.
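As an illustration of benchmarking devices on standardised scores, the sketch below pools the raw ratings for one outcome, converts them to z-scores and compares the device means. The ratings, the label "Device B" and the use of a pooled sample standard deviation are all assumptions made for this example. It does not reproduce the study data or the literacy adjustment.

```python
from statistics import mean, stdev


def standardise(scores):
    """Convert raw scores to z-scores using the pooled mean and sample SD."""
    m, s = mean(scores), stdev(scores)
    return [(x - m) / s for x in scores]


# Hypothetical raw Trust ratings (illustrative only, not study data).
ratings = {
    "SureSense": [6, 7, 5, 6],
    "Device B": [4, 5, 5, 4],
}

pooled = [x for group in ratings.values() for x in group]
z_scores = standardise(pooled)

# Split the pooled z-scores back into their device groups.
group_means = {}
start = 0
for device, group in ratings.items():
    group_means[device] = mean(z_scores[start:start + len(group)])
    start += len(group)

for device, value in group_means.items():
    print(f"{device}: mean standardised Trust = {value:+.2f}")
```

Because the scores are standardised on the pooled sample, the same procedure can be repeated for each outcome and the resulting group means compared on a common scale.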

Limitations

This study had some limitations. Only one type of technology (i.e. pulse oximeter) was tested, and it is not clear how transferable the model would be to other remote monitoring devices. Other established scores, such as the Net Promoter Score, could also be considered for these acceptability models. As the survey was voluntary, there may be self-selection bias, as participants who completed the survey may have had stronger opinions on the usability of the pulse oximeter. The low response rate also highlights the potential magnitude of this bias. Nevertheless, the surveys were distributed to all eligible participants to reflect their different experiences, and data were collected until the target sample, sufficient for statistical analysis, was reached. Because of the time between when the intervention (rpavirtual) was first provided and the survey invitation, there may be recall bias. Nonetheless, data were collected progressively to reduce this bias. There may also be some bias from excluding incomplete surveys. Incomplete responses may be due to the length of the survey and the inclusion of different measurements (e.g. health and digital health literacy questions). Incomplete responses were examined and compared to complete surveys, but only completed responses were included in the analysis in this manuscript. Finally, our findings are limited to the use of the pulse oximeter in the rpavirtual intervention during COVID-19 and may not reflect the patient experience in other settings.

Conclusion

The use of remote monitoring devices in virtual care shifts the task of collecting vital signs from clinicians in a healthcare setting to patients in a home environment, who have limited support and use a device such as the pulse oximeter.

A measurement model for evaluating the acceptability of a health technology device used in a remote virtual care setting was developed. Survey-based instruments were tested for validity and reliability, and were suitable for patients, as users of the health technology device, to evaluate its acceptability. Constructs and items presented in the measurement model can be augmented for future evaluation studies, as they can be used as diagnostic instruments to identify related usability issues and as a benchmarking tool to compare similar technologies in the market.

Future work should examine the association between patient clinical outcomes (e.g. oxygen saturation levels) and the acceptability constructs and user experience outcomes. Also, future work should integrate data collected from clinician user experience surveys to evaluate these technologies beyond technical performance, to also assess workflow and health impacts.

Supplemental Material

Supplemental material, sj-docx-1-dhj-10.1177_20552076241269513 for Assessment of health technology acceptability for remote monitoring of patients with COVID-19: A measurement model for user perceptions of pulse oximeters by Andrea Torres-Robles, Melissa Baysari, Karen Allison, Miranda Shaw, Owen Hutchings, Warwick J Britton, Andrew Wilson and Simon K Poon in DIGITAL HEALTH

Footnotes

Acknowledgements

The authors would like to acknowledge and thank all the patients who participated in this study, and staff at RPA Virtual Hospital for their cooperation.

Contributorship

AW, WB, OH, MB and SKP conceptualized the study. ATR and KA collected the data. SKP and ATR conducted the analyses. ATR drafted the manuscript. All authors contributed to the revision, editing and approval of the final version of the manuscript.

Declaration of conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Ethics approval

Ethical approval (Protocol no. X21–0182) was granted by the Human Research Ethics Committee of the Sydney Local Health District.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: Medical Research Future Fund: MRFF–Coronavirus Research Response–2020 Rapid Response Digital, the Health Infrastructure Grant (RRDHI000011) and the Office of Health and Medical Research–Funding enhancement for research in Sydney Local Health District.

Guarantor

SKP

Supplemental material

Supplemental material for this article is available online.
