Abstract

Background

Glaucoma is a leading cause of irreversible blindness, with a rising global prevalence, driving an increased public search for health information online. Online video platforms, such as Douyin and Bilibili, have become key channels for health communication. However, the quality and reliability of glaucoma-related content on these platforms remain unclear, raising concerns regarding potential misinformation.

Methods

On 22 October 2025, the top 100 Chinese-language glaucoma videos on each of Douyin and Bilibili were systematically collected. Video metadata and engagement metrics were recorded. Quality and reliability were assessed using the Global Quality Score (GQS), modified Decision-making Information Support Criteria for Evaluating the Reliability of Nonrandomized Studies (mDISCERN), JAMA (Journal of the American Medical Association) benchmark criteria, and Patient Education Materials Assessment Tool (PEMAT) for Audio Visual Content. Statistical analyses, including Spearman correlation, were used to examine the relationships between video variables and quality scores.

Results

Douyin videos exhibited significantly higher user interaction (likes, comments, shares, and saves) than Bilibili videos. However, the Bilibili videos demonstrated significantly higher median scores for GQS and PEMAT actionability. Videos from professional sources, particularly institutions, and those focusing on disease prevention or using expert monologue/visual aids consistently showed superior quality and reliability across all the assessment tools. Spearman correlation revealed that video duration was positively correlated with GQS, mDISCERN, and PEMAT understandability scores, whereas the number of comments was negatively correlated with these scores.

Conclusions

The overall quality and reliability of glaucoma-related online videos from Douyin and Bilibili were suboptimal. Content from nonprofessional sources was problematic. These findings highlight the need for public vigilance when consuming health information on such platforms, and underscore the importance of encouraging greater involvement from healthcare professionals in creating accurate, high-quality educational content.

Introduction

Glaucoma is the leading cause of irreversible blindness worldwide and poses a major public health challenge. Its age-standardized prevalence is 3% to 5% among people aged 40 years and above, 1 and the number of affected individuals is projected to rise to 112 million by 2040 owing to population aging. 2 The disease involves progressive optic neuropathy, often linked to elevated intraocular pressure (IOP), and can cause severe vision loss if left untreated. Key risk factors include nonmodifiable factors, such as family history (increasing risk by 2.85-fold), 3 advanced age, and male sex, 4 as well as modifiable conditions, such as elevated IOP, 4 systemic hypertension, 5 high myopia, 6 diabetes mellitus, 7 and prolonged corticosteroid use. 8

In the digital age, the public is increasingly turning to the Internet and social media for health information. Among these sources, online video platforms such as Douyin (a short-video social platform similar to TikTok) and Bilibili (a comprehensive video-sharing platform often compared to YouTube) have gained immense popularity and have emerged as powerful channels for health communication, especially among younger and middle-aged people.9,10 Online videos, with their engaging audiovisual format and concise presentation, are often more attractive and easier to understand than traditional text-based information, potentially enhancing knowledge acquisition and retention for diverse audiences.11,12 This has led to significant growth in health-related content on these platforms, making them a primary source of medical information for millions of users. 13

However, the lack of peer reviews and stringent regulatory mechanisms on these platforms raises significant concerns about the quality and reliability of disseminated health information. The open nature of content creation allows anyone to generate and share medical advice, leading to highly variable content quality and the potential for misinformation. 14 For instance, studies analyzing health videos on Douyin and Bilibili concerning other ophthalmic conditions, such as myopia and cataracts, have reported issues of scientific inaccuracy, incompleteness, and poor overall quality.15,16 The presence of misleading or false information poses a tangible risk to public health as it may influence patients’ understanding of diseases and their healthcare decisions.

Although previous research has begun to evaluate the quality of health information on online video platforms for various diseases, systematic analyses focused specifically on glaucoma-related content remain limited. Furthermore, glaucoma differs from many other diseases evaluated in prior online video content analyses in that it is often “silent” until advanced stages and leads to irreversible vision loss; therefore, patient education must prioritize early detection behaviors (regular eye examinations for at-risk individuals), recognition of urgent warning signs (e.g. symptoms suggestive of acute angle closure), and sustained long-term self-management such as adherence to topical therapy and follow-up. This disease-specific need makes the evaluation of understandability and actionability on online video platforms uniquely important for glaucoma.17–19 Therefore, this study aims to fill this gap by systematically assessing the quality and reliability of glaucoma-related online videos on two major Chinese platforms: Douyin and Bilibili. Using validated assessment tools, including the Global Quality Score (GQS), modified Decision-making Information Support Criteria for Evaluating the Reliability of Nonrandomized Studies (mDISCERN), Journal of the American Medical Association (JAMA) benchmark criteria, and the Patient Education Materials Assessment Tool (PEMAT-A/V), we seek to characterize the current landscape of glaucoma information, identify factors associated with higher quality, and provide a scientific basis for improving health communication strategies for this sight-threatening condition.

Materials and methods

Ethical considerations

This investigation did not use any clinical data, human specimens, or laboratory animals. All data were sourced from publicly accessible videos on Douyin and Bilibili, and no private information was collected. Furthermore, because the research design included no direct interactions with platform users, it was exempt from requiring a formal ethics committee review.

Data acquisition

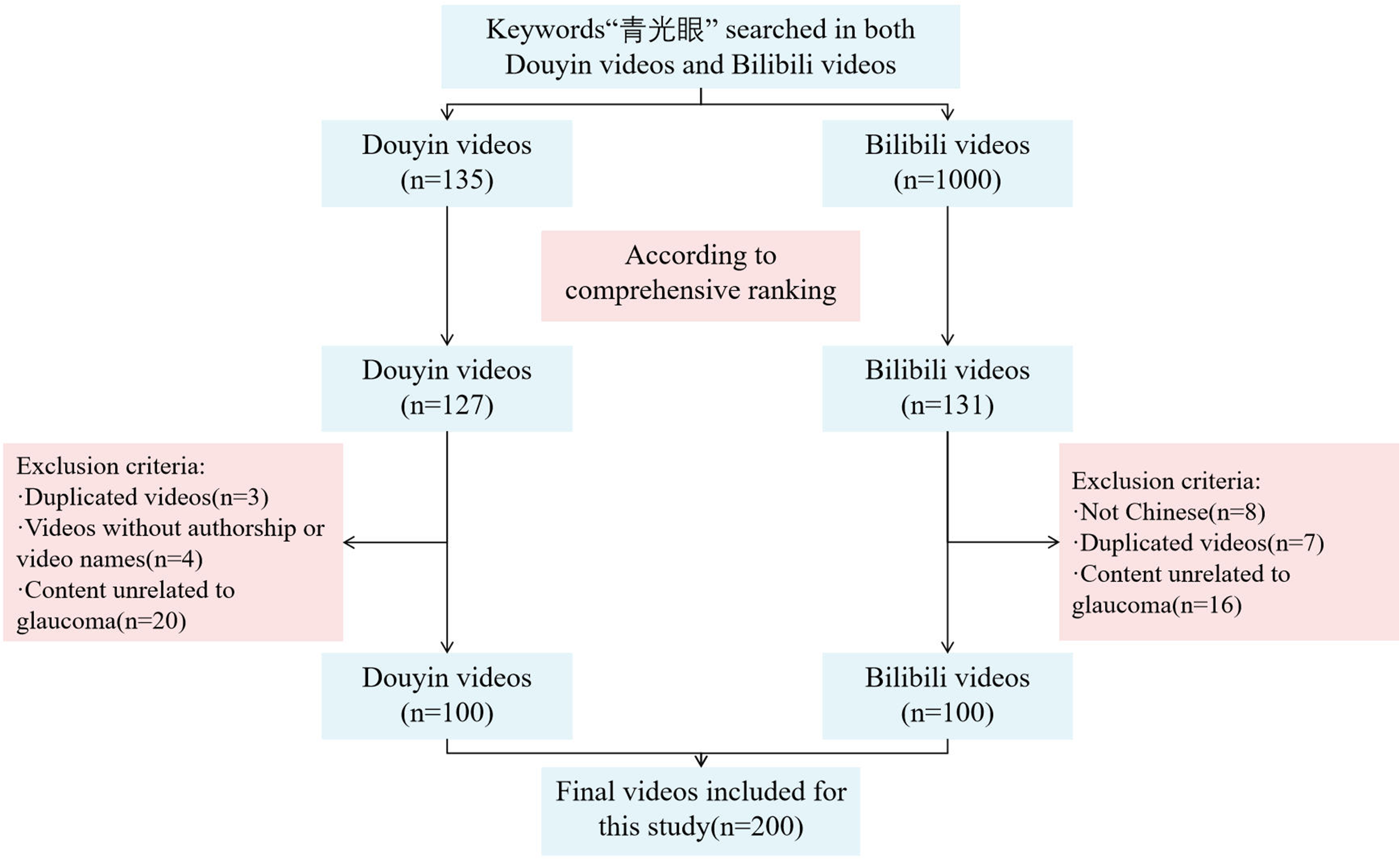

This cross-sectional investigation retrieved the top 100 videos by searching for the keyword “青光眼” (glaucoma) on the Chinese platforms Douyin and Bilibili on 22 October 2025 (Figure 1). To reduce the potential bias from personalized recommendation algorithms, new user accounts were created and used to access each platform. The platform's comprehensive ranking algorithm, which factors in video completion rate (viewers watching over 5 s), like rate, comment rate, follow rate, and upload time, promotes both recently posted and highly popular content. The exclusion criteria were non-Chinese videos, duplicates (identical content from different uploaders), content unrelated to glaucoma, videos lacking identifiable authors or titles, and advertisements. This filtering was continued until a final set of 100 videos was compiled for each platform. The decision to limit the analysis to the top 100 entries was based on prior studies indicating that videos beyond this rank exert minimal influence on analytical outcomes.20–22 For each included video, we documented fundamental information, such as title, uploader details (name and identity), the uploader's number of followers, publication year, video duration, video content, presentation format, and engagement metrics (likes, comments, shares, and saves), as well as the number of days since publication. All the collected data were systematically entered into a Microsoft Excel spreadsheet for management.

Search strategy for online videos on glaucoma.

Classification of videos

We divided the videos into four groups according to the source, six groups according to the presentation form, and four groups according to the content. Video sources were categorized as follows: (1) professional individuals, (2) nonprofessional individuals, (3) professional institutions, and (4) nonprofessional institutions. The video presentation form was categorized as follows: (1) expert monologue, (2) dialogue, (3) visual pictures and literature, (4) animation, (5) vlogs of patients, and (6) others. Video content was categorized as follows: (1) disease knowledge, (2) treatment, (3) prevention, and (4) news and reports. Regarding videos from doctors and physicians, further classification was performed as follows: (1) chief physicians, (2) associate chief physicians, and (3) attending physicians. The classification criteria are listed in Supplemental Table S1.

Video quality assessment

In this investigation, one researcher handled video retrieval and data-recording tasks. To ensure consistency in the video quality assessment, two physicians independently performed the evaluations and reached a consensus on the scores through deliberation. 23 In instances where the initial evaluators disagreed, a senior physician was consulted to make the final determination of video quality.
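Agreement between two independent raters of this kind is commonly summarized with Cohen's κ, the statistic named in the statistical analysis of this study. The following is a minimal sketch of the unweighted coefficient using only the Python standard library. The ratings are invented for illustration and are not study data, the function names are hypothetical, and the handling of values falling exactly on a band boundary (0.4, 0.6, 0.8) is an assumption.

```python
from collections import Counter


def cohens_kappa(rater_a, rater_b):
    """Unweighted Cohen's kappa for two raters scoring the same items."""
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("Both raters must score the same non-empty set of items")
    n = len(rater_a)
    # Observed agreement: share of items given the same score by both raters.
    p_o = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    # Chance agreement from each rater's marginal score frequencies.
    freq_a, freq_b = Counter(rater_a), Counter(rater_b)
    p_e = sum(freq_a[k] * freq_b[k] for k in freq_a) / (n * n)
    if p_e == 1.0:
        return 1.0  # both raters used one identical category throughout
    return (p_o - p_e) / (1 - p_e)


def interpret_kappa(kappa):
    """Agreement bands as described in the paper (boundary handling assumed)."""
    if kappa > 0.8:
        return "excellent"
    if kappa > 0.6:
        return "substantial"
    if kappa > 0.4:
        return "moderate"
    return "poor"


# Hypothetical GQS ratings (1-5) from two raters for ten videos.
rater_1 = [2, 3, 4, 2, 5, 3, 2, 1, 4, 3]
rater_2 = [2, 3, 4, 3, 5, 3, 2, 1, 4, 2]

kappa = cohens_kappa(rater_1, rater_2)
print(f"kappa = {kappa:.3f} ({interpret_kappa(kappa)} agreement)")
```

For ordinal scales such as the GQS, a weighted κ that penalizes large disagreements more than small ones is a common alternative; the source does not state which variant was used.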

The assessment employed several established tools: the JAMA benchmark criteria (Supplemental Table S2), GQS scale (Supplemental Table S3), a modified version of the DISCERN (Supplemental Table S4), and the Patient Education Materials Assessment Tool for Audio Visual Content (PEMAT-A/V) (Supplemental Table S5).23–27 The JAMA benchmark criteria focus on assessing video credibility across four key domains: (a) authorship, (b) sourcing and copyright, (c) the timeliness of information, and (d) the disclosure of conflicts of interest. Each domain contributes one point to the total score. For the overall quality, the GQS utilizes a five-level scale, where a higher level corresponds to a superior rating. Adapted from the original DISCERN instrument, the modified DISCERN tool evaluates video reliability based on five aspects: (a) achievement of the stated purpose, (b) use of credible sources, (c) objectivity of content, (d) inclusion of supplementary information, and (e) acknowledgment of areas with uncertainty. A single point was awarded for each criterion. Finally, the PEMAT-A/V instrument measures quality from the standpoint of understandability and actionability, comprising 17 items (13 for understandability and 4 for actionability). The results for these two domains were presented as percentages of the total possible scores.
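The scoring rules described above can be sketched as follows. This is an illustrative sketch, not the authors' scoring procedure: the function names and example ratings are hypothetical, and the treatment of "not applicable" PEMAT items (excluded from the denominator) is an assumption about how "total possible score" was computed.

```python
def checklist_score(items):
    """Sum of binary criteria (1 = met, 0 = not met).

    Applies to the JAMA benchmark (4 criteria, range 0-4) and to
    mDISCERN (5 criteria, range 0-5) as described in the text.
    """
    if any(v not in (0, 1) for v in items):
        raise ValueError("Each criterion must be scored 0 or 1")
    return sum(items)


def pemat_percent(ratings):
    """PEMAT domain score as a percentage of the total possible score.

    Each item is 1 (agree), 0 (disagree), or None (not applicable).
    Assumption: not-applicable items are dropped from the denominator.
    """
    applicable = [r for r in ratings if r is not None]
    if not applicable:
        raise ValueError("At least one applicable item is required")
    return 100.0 * sum(applicable) / len(applicable)


# Hypothetical ratings for a single video.
jama_items = [1, 1, 0, 0]            # authorship, attribution, currency, disclosure
mdiscern_items = [1, 1, 1, 0, 0]     # five reliability criteria
understandability = [1, 1, 0, 1, None, 1, 1, 0, 1, 1, None, 1, 0]  # 13 items
actionability = [1, 0, 0, 1]         # 4 items

print("JAMA:", checklist_score(jama_items))
print("mDISCERN:", checklist_score(mdiscern_items))
print(f"PEMAT understandability: {pemat_percent(understandability):.1f}%")
print(f"PEMAT actionability: {pemat_percent(actionability):.1f}%")
```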

Statistical analysis

Continuous data were summarized as mean with standard deviation (SD) or median with interquartile range (IQR). Categorical data were described as frequencies and percentages. Cohen's κ was used to evaluate the concordance between the two raters. The interpretation of the κ coefficients was as follows: a value exceeding 0.8 denoted excellent agreement; 0.6 to 0.8 represented substantial agreement; 0.4 to 0.6 was considered moderate; and any value at or below 0.4 reflected poor agreement. Continuous variables were analyzed using the Wilcoxon rank-sum test to compare the video parameters from Bilibili and Douyin. Group comparisons for the GQS, JAMA, mDISCERN, and PEMAT-A/V scores were conducted using the Wilcoxon rank-sum test (two-tailed). The performance of the predictive model was evaluated by analyzing the receiver operating characteristic (ROC) curve. Ordinal quality metrics were additionally dichotomized into high and low categories to support ROC-based prediction and logistic regression with clinically interpretable endpoints. Dichotomization was adopted because the primary clinical question, whether a video meets an acceptable quality threshold, is inherently binary and because the unequal conceptual intervals of the five-point GQS and mDISCERN scales limit the validity of treating these scores as continuous variables in linear models. 28 We acknowledge that dichotomization entails some loss of statistical information; 28 however, this approach enhances clinical applicability and permits direct comparison with the substantial body of prior video quality research that employed identical thresholds.9,29,30 Consistent with prior video-based health information evaluations, GQS and mDISCERN were dichotomized at 4 on the 5-point scales (≥4 vs <4), with scores ≥4 reflecting good overall quality/reliability.9,29,30 For PEMAT-A/V understandability (0–100%), materials scoring ≥67% were considered sufficiently understandable, a threshold close to the commonly applied 70% benchmark in PEMAT-based assessments. 31 These literature-derived cutoffs were subsequently corroborated by ROC analyses, which confirmed that the selected thresholds maximized discrimination (sensitivity and specificity) for identifying higher-quality videos based on video characteristics (e.g. duration and comment count), thereby providing dual a priori and empirical validation of the dichotomization boundaries, in line with prior ROC applications in video quality research and methodological guidance.32,33 The relationships between variables were investigated using Spearman correlation analysis. To identify associations between video scores and key variables, we constructed both univariate and multivariate logistic regression models and reported odds ratios (ORs) and their corresponding 95% confidence intervals (CIs). Any variable showing a univariate association with a

Results

Landscape of the glaucoma-related videos on Douyin and Bilibili

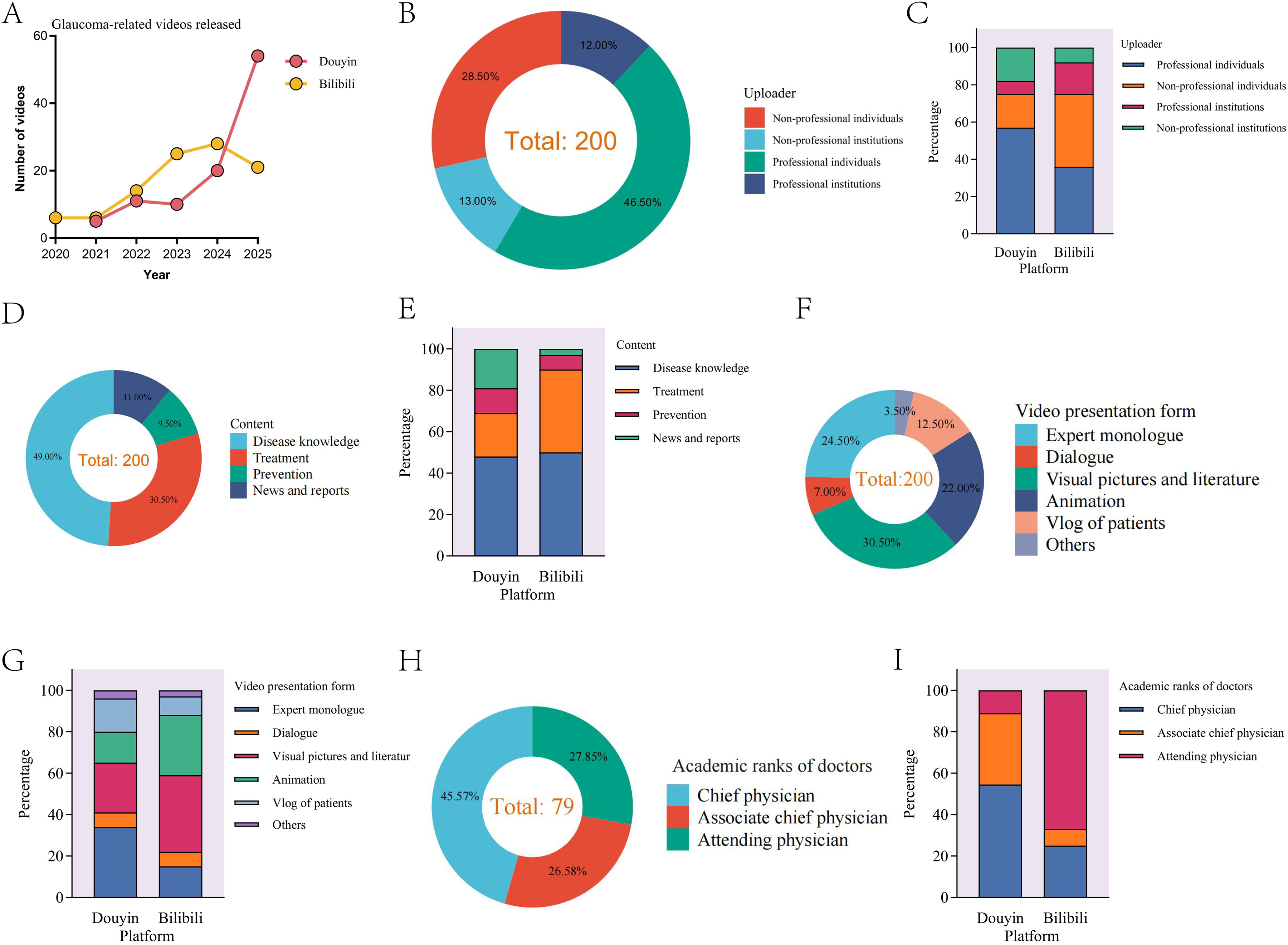

A comprehensive search revealed no glaucoma-related videos on either platform prior to 2020, which was likely attributable to their relatively recent rise in prominence. A total of 100 videos from each platform uploaded between 2020 and 2025 were analyzed. On Bilibili, the annual distribution was as follows: 6 videos (6%) in 2020, 6 (6%) in 2021, 14 (14%) in 2022, 25 (25%) in 2023, 28 (28%) in 2024, and 21 (21%) in 2025 (Figure 2(a)). Video counts on Douyin began in 2021, with 5 (5%) that year, followed by 11 (11%) in 2022, 10 (10%) in 2023, 20 (20%) in 2024, and 54 (54%) in 2025 (Figure 2(a)). Although the total number of glaucoma-related online videos was similar across both platforms, Douyin has exhibited a sharp increase in recent years.

General information on glaucoma-related videos from Douyin and Bilibili. (a) A line chart shows eligible glaucoma-related videos released between 2020 and 2025. (b) Doughnut chart shows the sources of all included videos. (c) Bar chart shows the sources of videos from Douyin and Bilibili. (d) Doughnut chart shows the content of all included videos. (e) Bar chart shows the content of videos from Douyin and Bilibili. (f) Doughnut chart shows the presentation form of all included videos. (g) Bar chart shows the presentation form of videos from Douyin and Bilibili. (h) Doughnut chart shows the academic ranks of doctors. (i) Bar chart shows the academic ranks of doctors from Douyin and Bilibili.

Given the unrestricted nature of video uploads, the content was contributed by a diverse range of users. Uploaders were categorized into four groups based on their professionalism and operational structure (individual or institution): professional individuals, nonprofessional individuals, professional institutions, and nonprofessional institutions. Statistical analysis indicated that the largest share of videos (93/200, 46.5%) was uploaded by professional individuals. The remaining videos were distributed among nonprofessional individuals (57/200, 28.5%), nonprofessional institutions (26/200, 13.0%), and professional institutions (24/200, 12.0%; Figure 2(b)). Overall, the quantity of videos from professional sources slightly surpassed that from nonprofessional sources on both platforms (Figure 2(c)). In terms of content, disease knowledge was the predominant category, accounting for 49% (98/200) of the videos (Figure 2(d)). This trend was consistent for both Douyin and Bilibili, with professional sources contributing the largest proportion of the disease knowledge content (Figure 2(e)). Regarding the presentation format, videos employing visual pictures and literature were the most common overall, comprising 30.5% (61/200) of the sample (Figure 2(f)). However, this trend was platform-specific: while visual pictures and literature predominated on Bilibili, expert monologues were the most frequent format on Douyin (Figure 2(g)). In terms of the academic rank of doctors, chief physicians were the most common, accounting for 45.57% of doctors (36/79; Figure 2(h)). This pattern held only for Douyin; on Bilibili, attending physicians were the most common (Figure 2(i)).

General information and index of videos

The number of views, likes, comments, shares, and saves for videos on Douyin and Bilibili served as indicators of user attention, making these metrics appropriate for evaluating video influence. First, we compared the engagement indices of the two platforms. On average, glaucoma-related videos on Bilibili accumulated more views (approximately 1956 per video) but demonstrated lower levels of other engagement metrics (likes, comments, shares, and saves) as well as fewer followers for their uploaders (Table 1). In contrast, videos on Douyin garnered significantly higher levels of interaction, surpassing Bilibili in all metrics except view count (Table 1). Furthermore, videos on Bilibili were typically longer and published earlier. In summary, the Bilibili dataset was characterized by earlier-published, longer videos, whereas the Douyin dataset was characterized by higher user interactivity.

Basic index of videos about glaucoma on Bilibili and Douyin.

Note: Continuous variables that do not follow a normal distribution are expressed as M(P25,P75); binary categorical variables are presented as percentages. aThe result of the Wilcoxon rank-sum test. bViews are not available on Douyin.

Subsequently, we compared view counts, likes, comments, shares, and saves across different video sources, content types, and presentation formats. On Bilibili, videos from professional sources garnered more attention, while those from nonprofessional sources and institutions were less popular. Conversely, on Douyin, videos from nonprofessional institutions attracted the most attention, whereas those from individual and professional institutional sources received less attention (Table 2). Regarding content type, disease-knowledge videos received less attention on both platforms, whereas news- and report-related videos were more popular. A key difference emerged in that prevention-related videos were popular on Douyin, while treatment-related videos were more popular on Bilibili (Table 2). In terms of presentation format, animated videos received the least attention on both platforms, whereas live-action videos were favored. Another platform-specific difference was observed: patient vlogs were widely popular on Bilibili, whereas dialogue-based formats were more popular on Douyin (Table 2).

Comparison of source, content and presentation form of videos about glaucoma on two platforms.

Quality analysis of web-based videos

To objectively evaluate the video quality, we conducted a comprehensive analysis using internationally recognized scoring systems: the GQS, mDISCERN, JAMA benchmark criteria, and the Patient Education Materials Assessment Tool (PEMAT) for understandability and actionability. GQS scores were predominantly low. The most common score was 2 (68/200, 34.0%), followed by scores of 3 (58/200, 29.0%), 4 (44/200, 22.0%), 1 (19/200, 9.5%), and 5 (11/200, 5.5%; Figure 3(a)). A similar distribution was observed for mDISCERN scores, where a score of 3 was the most common (80/200, 40.0%), followed by 2 (67/200, 33.5%), 4 (19/200, 9.5%), 0 (18/200, 9.0%), 1 (15/200, 7.5%), and 5 (1/200, 0.5%; Figure 3(b)). For the JAMA benchmark scores, a rating of 2 was most frequent (113/200, 56.5%), with the remaining scores being 3 (44/200, 22.0%), 1 (24/200, 12.0%), 0 (15/200, 7.5%), and 4 (4/200, 2.0%; Figure 3(c)). Analysis of the PEMAT scores revealed that for understandability, the highest proportion of videos scored in the 67% to 100% range (97/200, 48.5%), followed by 34% to 66% (71/200, 35.5%), and 0% to 33% (32/200, 16.0%; Figure 3(d)). In contrast, for actionability, most videos scored poorly, falling in the 0% to 33% range (112/200, 56.0%), followed by 34% to 66% (51/200, 25.5%), and 67% to 100% (37/200, 18.5%; Figure 3(e)). Collectively, these findings indicated that the overall quality of the videos was moderate, with a small proportion of videos being of either excellent or poor quality.

Quality analysis of videos. (a) A pie chart shows the GQS scores of all included videos. (b) A pie chart shows the mDISCERN scores of all included videos. (c) A pie chart shows the JAMA scores of all included videos. (d) A pie chart shows the PEMAT understandability scores of all included videos. (e) A pie chart shows the PEMAT actionability scores of all included videos. (f) A line chart shows the mean GQS, mDISCERN, JAMA, PEMAT understandability, and PEMAT actionability scores of eligible videos released between 2020 and 2025 on Bilibili. (g) A line chart shows the mean GQS, mDISCERN, JAMA, PEMAT understandability, and PEMAT actionability scores of eligible videos released between 2020 and 2025 on Douyin.

We subsequently analyzed the annual quality scores of the videos from 2020 to 2025 to determine whether a gradual improvement has occurred in recent years. The results indicated that scores for GQS, mDISCERN, PEMAT understandability, and PEMAT actionability peaked on Bilibili in 2023 (Figure 3(f)), and scores for all five assessment tools peaked on Douyin in 2024 (Figure 3(g)). Contrary to the expected trend of improvement, the quality of glaucoma-related videos generally remained stable or displayed a slight decline across all metrics in the latter years of the study period.

Quality comparison across platforms, types of uploaders, content and formats

Video quality was assessed using the GQS, PEMAT for understandability, and PEMAT for actionability, whereas reliability was evaluated using the mDISCERN and JAMA instruments. Bilibili videos demonstrated moderate quality and medium reliability, with median (IQR) scores as follows: GQS, 3 (2–4); PEMAT understandability, 69% (38%–85%); PEMAT actionability, 50% (25%–75%); mDISCERN, 3 (2–3); and JAMA, 2 (1–2). In contrast, Douyin videos had median (IQR) scores as follows: GQS, 2 (2–3); PEMAT understandability, 62% (50%–73%); PEMAT actionability, 25% (12.5%–50%); mDISCERN, 2 (2–3); and JAMA, 2 (1–2), indicating generally poor quality and low reliability. Statistical analysis indicated that Bilibili videos scored higher than Douyin videos, with significant differences observed in GQS and PEMAT actionability scores (Figure 4).
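A between-platform comparison of this kind can be sketched with a Wilcoxon rank-sum (Mann-Whitney) test. The version below uses only the standard library and relies on the normal approximation with a tie correction and no continuity correction; this is an assumption, since the source does not report which software or variant was used, and statistical packages may return slightly different p-values. The scores are invented for illustration.

```python
import math
from collections import Counter


def average_ranks(values):
    """1-based ranks; tied values share the mean of their ranks."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        mean_rank = (i + j) / 2 + 1
        for k in range(i, j + 1):
            ranks[order[k]] = mean_rank
        i = j + 1
    return ranks


def rank_sum_test(x, y):
    """Two-sided rank-sum test; returns (U for x, z, p)."""
    n1, n2 = len(x), len(y)
    n = n1 + n2
    pooled = list(x) + list(y)
    ranks = average_ranks(pooled)
    u = sum(ranks[:n1]) - n1 * (n1 + 1) / 2   # Mann-Whitney U of the first sample
    mean_u = n1 * n2 / 2
    # Tie correction: subtract sum(t^3 - t) over groups of tied values.
    tie_term = sum(t ** 3 - t for t in Counter(pooled).values())
    var_u = n1 * n2 / 12 * ((n + 1) - tie_term / (n * (n - 1)))
    if var_u == 0:
        raise ValueError("All observations are identical; the test is undefined")
    z = (u - mean_u) / math.sqrt(var_u)
    p = math.erfc(abs(z) / math.sqrt(2))       # two-sided normal tail probability
    return u, z, p


# Hypothetical GQS scores for two platforms (not study data).
platform_a = [3, 4, 2, 3, 5, 4, 3, 2, 4, 3]
platform_b = [2, 2, 3, 1, 2, 3, 2, 4, 2, 3]

u, z, p = rank_sum_test(platform_a, platform_b)
print(f"U = {u:.1f}, z = {z:.2f}, two-sided p = {p:.4f}")
```

With small samples or many ties, an exact or permutation-based p-value is generally preferable to the normal approximation used here.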

The video quality score of videos related to glaucoma on Douyin and Bilibili. (a) Comparison of GQS between Douyin and Bilibili videos; (b) comparison of mDISCERN score between Douyin and Bilibili videos; (c) comparison of JAMA score between Douyin and Bilibili videos; (d) comparison of PEMAT understandability score between Douyin and Bilibili videos; (e) comparison of PEMAT actionability score between Douyin and Bilibili videos; (f) Ridge plot showing the overall distribution of GQS; (g) ridge plot showing the overall distribution of mDISCERN score; (h) ridge plot showing the overall distribution of JAMA score; (i) ridge plot showing the overall distribution of PEMAT understandability score; (j) ridge plot showing the overall distribution of PEMAT actionability score. “**” means

Furthermore, we conducted a statistical analysis of the GQS, mDISCERN, JAMA, and PEMAT scores based on the video source, content, and presentation format; the specific values are presented in Table 3. Regarding video sources (Figure 5(a1)–(j1)), on Bilibili, videos from professional sources showed no significant difference from nonprofessional sources in GQS, PEMAT understandability, or PEMAT actionability. On Douyin, however, videos from professional sources had significantly higher PEMAT understandability and actionability scores than those from nonprofessional sources. In terms of reliability, professional sources achieved significantly higher mDISCERN and JAMA scores than nonprofessional sources on both platforms. In summary, videos from professional institutions were of superior quality and reliability. Regarding video content (Figure 5(a2)–(j2)), on Douyin, videos focusing on disease prevention obtained higher GQS, mDISCERN, and PEMAT actionability scores than those featuring case reports or news. In contrast, no significant differences were observed among the content types on Bilibili. Overall, disease-prevention-related content exhibited the highest quality and reliability, while case reports scored the lowest. Regarding presentation formats (Figure 5(a3)–(j3)), on Bilibili, formats using visual aids with text and animation showed higher GQS and PEMAT understandability scores than patient vlogs. On Douyin, expert monologues scored significantly higher than patient vlogs in GQS, PEMAT understandability, PEMAT actionability, mDISCERN, and JAMA. In terms of reliability on Bilibili, visual aids with text and animation also had higher mDISCERN and JAMA scores than patient vlogs.

The video sources scores, video content scores, and video presentation form scores related to glaucoma on Bilibili and Douyin. (a1)–(a3) The GQS score on Bilibili. (b1)–(b3) The mDISCERN score on Bilibili. (c1)–(c3) The JAMA score on Bilibili. (d1)–(d3) The PEMAT understandability score on Bilibili. (e1)–(e3) The PEMAT actionability score on Bilibili. (f1)–(f3) The GQS score on Douyin. (g1)–(g3) The mDISCERN score on Douyin. (h1)–(h3) The JAMA score on Douyin. (i1)–(i3) The PEMAT understandability score on Douyin. (j1)–(j3) The PEMAT actionability score on Douyin. “*” means

The GQS, mDISCERN, JAMA, PEMAT understandability, and PEMAT actionability score based on different video sources, contents, and formats about glaucoma on Douyin and Bilibili.

GQS: Global Quality Score; JAMA: Journal of the American Medical Association; mDISCERN: modified Decision-making Information Support Criteria for Evaluating the Reliability of Nonrandomized Studies; PEMAT: Patient Education Materials Assessment Tool.

An investigation into determinants of video quality

An initial correlation analysis confirmed a positive relationship among the GQS, mDISCERN, and PEMAT understandability scores, supporting the robustness of our scoring outcomes (Figure 6(a)). Subsequently, Spearman correlation analysis was employed to identify the relationships between video variables and quality scores. The results indicated that GQS, mDISCERN, and PEMAT understandability scores were correlated only with video duration and number of comments. No significant correlation was found with other metrics such as likes, uploader fans, saves, shares, or days since publication (Figure 6(a)). Furthermore, receiver operating characteristic (ROC) curve analysis revealed that videos with higher quality scores (a GQS or mDISCERN score of at least 4, or a PEMAT understandability score of at least 67%) were typically longer than 81.0 seconds and had fewer than 13.5 comments (Figure 6(b)–(d)).
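One common way to obtain cutoffs such as these from an ROC analysis is to choose the threshold that maximizes the Youden index (sensitivity + specificity − 1). The source states only that the thresholds maximized discrimination, so the use of the Youden index here is an assumption, as are the function name and the invented data. Candidate cutoffs are taken as midpoints between consecutive distinct observed values, which is one way a non-integer cutoff for a count variable can arise.

```python
def youden_cutoff(values, labels, higher_is_positive=True):
    """Return (cutoff, sensitivity, specificity) maximizing the Youden index.

    values: predictor (e.g. duration in seconds or comment count).
    labels: 1 for a high-quality video (e.g. GQS >= 4), 0 otherwise.
    higher_is_positive: True if larger values should predict label 1.
    """
    pos = [v for v, l in zip(values, labels) if l == 1]
    neg = [v for v, l in zip(values, labels) if l == 0]
    if not pos or not neg:
        raise ValueError("Both classes must be present")
    distinct = sorted(set(values))
    if len(distinct) < 2:
        raise ValueError("At least two distinct predictor values are required")
    best = None
    for low, high in zip(distinct, distinct[1:]):
        cutoff = (low + high) / 2
        if higher_is_positive:
            sens = sum(v > cutoff for v in pos) / len(pos)
            spec = sum(v < cutoff for v in neg) / len(neg)
        else:
            sens = sum(v < cutoff for v in pos) / len(pos)
            spec = sum(v > cutoff for v in neg) / len(neg)
        j = sens + spec - 1
        if best is None or j > best[0]:   # ties keep the smallest cutoff
            best = (j, cutoff, sens, spec)
    return best[1], best[2], best[3]


# Hypothetical data (not study data): longer videos tend to be high quality...
durations = [20, 35, 50, 65, 80, 95, 110, 140, 180, 240]
high_quality_by_duration = [0, 0, 0, 1, 0, 1, 1, 1, 1, 1]
# ...and videos with fewer comments tend to be high quality.
comments = [3, 5, 8, 12, 14, 20, 25, 40, 60, 90]
high_quality_by_comments = [1, 1, 1, 0, 1, 1, 0, 0, 0, 0]

print(youden_cutoff(durations, high_quality_by_duration))
print(youden_cutoff(comments, high_quality_by_comments, higher_is_positive=False))
```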

An investigation into determinants of video quality from Douyin and Bilibili. (a) Correlation analysis between video scores and video variables. The depth of blue represents the strength of the negative correlation between them; the depth of red represents the strength of the positive correlation between them. (b) Receiver operating characteristic curve of the video durations and comments for GQS score prediction. The cutoff values (specificity and sensitivity) are shown in the figure. (c) Receiver operating characteristic curve of the video durations and comments for mDISCERN score prediction. The cutoff values (specificity and sensitivity) are shown in the figure. (d) Receiver operating characteristic curve of the video durations and comments for PEMAT understandability score prediction. The cutoff values (specificity and sensitivity) are shown in the figure. (e) Univariate and multivariate logistic analysis of potential determinants with GQS scores of ≥4. “Reference” indicates the baseline category used for comparison for each multinomial variable.

Finally, we performed a comprehensive analysis of all the potential factors influencing video quality. Univariate analysis identified video format, content category, platform, and uploader type as significant determinants of quality (Figure 6(e)). However, subsequent multivariate analysis revealed that only presentation format remained an independent factor affecting video quality (Figure 6(e)). Put succinctly, professional-related videos tended to exhibit superior quality.
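For a single binary predictor, the odds ratio from a univariate logistic regression equals the cross-product ratio of the corresponding 2×2 table, and its Wald 95% confidence interval reduces to exp(ln OR ± 1.96 × SE). The sketch below illustrates only that special case with invented counts; it is not the authors' model, the function name is hypothetical, and the multivariate analysis would require an iteratively fitted logistic model that is not shown here.

```python
import math


def odds_ratio_ci(a, b, c, d, z=1.959964):
    """Unadjusted odds ratio with a Wald 95% CI from a 2x2 table.

    a: group of interest, high quality    b: group of interest, low quality
    c: reference group, high quality      d: reference group, low quality
    """
    if min(a, b, c, d) <= 0:
        raise ValueError("All four cells must be positive for this simple formula")
    odds_ratio = (a * d) / (b * c)
    # Standard error of ln(OR).
    se = math.sqrt(1 / a + 1 / b + 1 / c + 1 / d)
    lower = math.exp(math.log(odds_ratio) - z * se)
    upper = math.exp(math.log(odds_ratio) + z * se)
    return odds_ratio, lower, upper


# Hypothetical counts (not study data): videos with GQS >= 4 versus < 4
# for one presentation format compared with a reference format.
or_, lo, hi = odds_ratio_ci(a=20, b=30, c=10, d=40)
print(f"OR = {or_:.2f} (95% CI {lo:.2f} to {hi:.2f})")
```

A confidence interval that excludes 1 corresponds to a statistically significant association at the 5% level in the univariate setting.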

Discussion

Glaucoma remains the leading cause of irreversible blindness worldwide and presents a substantial public health challenge.34–36 As the public increasingly relies on digital platforms for health information, social media has become a pivotal channel for disseminating medical knowledge. 37 Online video platforms such as Douyin and Bilibili, which are immensely popular among younger and middle-aged people, have emerged as particularly influential sources of health-related content.9,10 However, the quality and reliability of this information are highly variable, raising concerns about misinformation and its potential impact on public health decisions.14,37,38 This study systematically assessed the quality and reliability of glaucoma-related videos on these two major Chinese platforms, thereby addressing a critical gap in the literature on social media-based health communication.

Using established instruments, such as GQS, mDISCERN, JAMA, and PEMAT, we found that the overall quality of glaucoma-related videos was moderate, with only a small proportion achieving high scores. Videos from professional institutions consistently outperformed those from nonprofessional sources in terms of reliability and actionability. Notably, formats such as expert monologues and visual aids with text were associated with higher comprehensibility and trustworthiness. These results underscore the influence of uploader identity and presentation style on video quality, echoing previous research that highlights the risks of misleading health information when content is generated by nonexperts.

Our correlation analysis indicated that video duration and comment count were associated with the GQS, mDISCERN, and PEMAT understandability scores. This finding aligns with the structural differences between the two platforms. Bilibili, acting as a traditional video repository with virtually no stringent time constraints (supporting videos up to several hours), naturally facilitates more in-depth medical explanations compared to the more ephemeral and concise nature of Douyin, which is optimized for rapid engagement. However, no significant correlations were observed with other engagement metrics such as likes, shares, or saves. This suggests that longer videos may facilitate more comprehensive content coverage, whereas superficial engagement metrics are unreliable indicators of educational quality. These findings contrast with prior studies that reported no significant associations between popularity and quality,39,40 highlighting the need for careful interpretation of user metrics in health communication research. Furthermore, our ROC analysis, which identified specific thresholds for duration and comment count, provided concrete criteria for predicting higher-quality content.

A key question is why higher-quality glaucoma videos on Bilibili received less interaction than Douyin videos. Although Bilibili videos achieved significantly higher educational quality (GQS) and actionability (PEMAT-A/V) than Douyin videos, user interaction (likes, comments, shares, and saves) was significantly higher on Douyin. This divergence may reflect platform ecology rather than content value. Bilibili is often characterized by longer-form, more "knowledge-oriented" viewing, whereas Douyin emphasizes short, attention-efficient consumption, which is more likely to trigger rapid engagement behaviors. Therefore, interaction metrics alone should not be interpreted as proxies for information quality, particularly on highly entertainment-oriented short video platforms. This interpretation is supported by cross-platform health video research reporting higher quality on Bilibili but higher engagement on TikTok/Douyin-like platforms.41,42

Based on these findings, we propose the following actionable recommendations.

Platform-specific content optimization: Douyin creators should adopt "micro-learning" designs that combine brief hooks with 2–3 evidence-based points and should prioritize expert monologues, while Bilibili creators should use structured chapters and "clinician–patient co-creation" to bridge the gap between engagement and clinical reliability.

Prioritizing professionalism and completeness: Clinicians and institutions are encouraged to include explicit "next-step" guidance (e.g. screening indications and medication adherence) and to use series-based formats to preserve information depth within short video constraints.

Strengthening platform governance: Platforms should enhance the visibility of verified medical accounts, mandate the disclosure of authorship and sources, and refine recommendation algorithms to preferentially promote structured educational content over low-quality, sensationalized videos.

This study provides novel glaucoma-specific insights beyond prior online video analyses of other diseases.9,10,15,16 Glaucoma is frequently asymptomatic until late stages and causes irreversible visual field loss; thus, effective public-facing communication must emphasize early detection and sustained lifelong management behaviors (including adherence to topical therapy and follow-up) rather than symptom-driven care. In this context, our finding that PEMAT actionability is generally low, particularly on the high-reach platform Douyin, highlights a glaucoma-relevant gap: many videos do not translate information into concrete actions that can prevent irreversible progression. In addition, we show that glaucoma prevention-focused content and professional/institutional sources achieve higher quality and reliability, and that expert monologue/visual-aid formats and longer durations are associated with better understandability and overall quality. Together, these results offer actionable guidance for glaucoma health communication strategies on online video platforms by prioritizing professional sourcing, prevention-oriented messaging, and structured, visually supported explanations that explicitly prompt screening, follow-up, and adherence behaviors.

This study has several limitations. First, the dynamic nature of social media content means that our data reflect a specific snapshot in time, and the landscape may have evolved since data collection. Second, the study was limited to Chinese-language platforms, which may restrict the generalizability of the findings. Third, the use of multiple scoring tools, although comprehensive, may introduce subjectivity despite efforts to ensure rater consistency. Fourth, the recommendation algorithms of online video platforms such as Douyin and Bilibili evolve rapidly, and frequent updates can significantly alter content visibility and user engagement patterns over short periods. Furthermore, potential sampling bias should be considered. Although we used neutral search terms and created new user accounts, the inherent algorithmic logic of these platforms may still have introduced biases in the videos retrieved. Future research should consider longitudinal designs, incorporate AI-assisted content analysis, and expand cross-linguistic and cross-cultural comparisons to further elucidate global trends in health-related social media content.

Conclusion

In conclusion, this systematic assessment revealed that glaucoma-related online videos on Bilibili and Douyin generally exhibited suboptimal quality and reliability. Videos from professional sources, particularly institutions, scored higher than those from nonprofessional uploaders. These findings highlight the need for greater involvement of healthcare professionals in creating accurate content, improved platform moderation, and more critical public engagement with health information on such platforms.

Supplemental Material

Supplemental material for Quality and reliability of glaucoma-related online Chinese videos on Douyin and Bilibili: Cross-sectional content analysis study by Dangdang Wang, Yanyu Pu, Yunfei Li, Yang Peng, Yuyan He, Xi Gao, Huiyu Zhu, Wenyi Wang, Shaowen Du and Hong Li in DIGITAL HEALTH:

sj-docx-1-dhj-10.1177_20552076261431461

sj-docx-2-dhj-10.1177_20552076261431461

sj-docx-3-dhj-10.1177_20552076261431461

sj-docx-4-dhj-10.1177_20552076261431461

sj-docx-5-dhj-10.1177_20552076261431461

sj-xls-6-dhj-10.1177_20552076261431461

Footnotes

Acknowledgements

The authors would like to express their gratitude to the participants of this study.

Author contributions

The first draft of this manuscript was written by DW, YP, YL, and YP. Data collection and analysis were performed by HZ, YH, XG, WW, and SD. HL revised the manuscript and helped with the project administration and funding acquisition. All authors contributed to the study design and have read and approved the final manuscript.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This study was supported by grants from the National Natural Science Foundation of China (grant nos. 81974131 and 82471064), the Natural Science Foundation of Chongqing Municipality (CSTB2024NSCQ-KJFZMSX0073), and the Science and Technology Innovation Key R&D Program of Chongqing (CSTB2025TIAD-STX0008).

Declaration of conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Data availability statement

The datasets generated and/or analyzed during the current study are available from the corresponding author upon reasonable request.

Supplemental material

Supplemental material for this article is available online.

References
