Abstract

Background

The incidence and mortality of renal cell carcinoma (RCC) have risen significantly in recent years, attracting considerable public attention. Short-video platforms such as TikTok and Bilibili have become important sources of health information, yet the quality and reliability of RCC-related content on these platforms remain unclear.

Methods

On August 31, 2025, we systematically retrieved the top 110 RCC-related videos from each of TikTok and Bilibili using the Chinese keyword “肾癌” (RCC). Basic video characteristics were extracted, and two validated instruments—the Global Quality Scale (GQS) and the modified DISCERN (mDISCERN)—were employed to evaluate video quality and reliability, respectively. Spearman correlation analysis was used to examine relationships between engagement metrics and quality scores.

Results

Of 196 videos included, TikTok content was predominantly from medical professionals (86.0%), while Bilibili had more non-professional uploads (53.13%). TikTok videos demonstrated significantly higher median scores than Bilibili in both GQS (3 [IQR: 2, 4] vs. 2 [IQR: 2, 3],

Conclusion

The overall quality and reliability of RCC-related short videos on TikTok and Bilibili are suboptimal. Content from medical professionals is more trustworthy, highlighting their essential role in public health education. These findings underscore the need for enhanced content oversight on platforms and critical discernment among viewers when accessing health information online.

Keywords

Introduction

Renal cell carcinoma (RCC) is the most common malignant tumor of the kidney worldwide, accounting for more than 90% of all renal malignancies. 1 In recent years, the global incidence and mortality of RCC have continued to rise. According to the 2020 global cancer statistics, there are approximately 431,000 new cases and 179,000 deaths annually due to RCC. 2 The situation is equally concerning in China, where recent data indicate an incidence rate of 3.5 per 100,000 people, with a year-by-year upward trend, making it a significant public health threat. 3

The clinical significance of RCC lies in its insidious onset and low early diagnosis rate. Approximately 30% of patients already have distant metastases at the time of initial diagnosis. 4 Furthermore, treatment strategies for RCC are complex, including partial nephrectomy, radical nephrectomy, targeted therapy, and immunotherapy, all of which impose substantial physiological and psychological burdens on patients. 5,6 Studies have shown that RCC patients not only face threats from the disease itself but also frequently experience psychological issues such as anxiety and depression, significantly impairing their quality of life. 7

With the rapid development of internet technology, the ways in which the public accesses health information have undergone fundamental changes. In recent years, short-video platforms such as Bilibili and TikTok have become important channels for the public to obtain medical and health information. 8 Through vivid and intuitive visual presentations, these platforms significantly lower the barriers to understanding health-related content and enhance the accessibility and dissemination efficiency of information. 9 Studies indicate that over 60% of internet users have sought health-related information through short-video platforms, with content related to cancer being among the most frequently searched topics. 10 Another study found that among patients with inflammatory bowel disease, those who acquired health information via short videos demonstrated better treatment adherence and disease awareness compared to those relying on traditional text-based information. 9 These findings underscore the growing importance of short-video platforms as a major source of health information, particularly among younger populations and digital natives.

However, the accuracy and reliability of content on these platforms remain concerning. Previous studies have revealed inconsistent information quality across multiple disease areas on short-video platforms. 11–14 This concern extends to RCC-specific information. A recent study by Shakhnazaryan et al. evaluating websites on metastatic RCC treatment revealed that the majority provided incomplete and, in some cases, inaccurate information, with highly variable quality, highlighting a significant gap in the quality of online textual resources for RCC patients. 15 Given the specialized knowledge and complex treatment options associated with RCC, there is a particularly urgent need for high-quality information among patients and their families.

While the study by Shakhnazaryan et al. sheds light on the quality of traditional web-based text information, to date, no study has systematically evaluated the quality and reliability of RCC-related content on short-video platforms. Therefore, this cross-sectional study aims to assess the informational quality and reliability of short videos pertaining to RCC on Bilibili and TikTok, in order to guide patients toward accurate information and support the standardized dissemination of health-related content.

Materials and methods

Search strategy and data extraction

The primary objective of this study was to collect short video data related to “RCC” from the Bilibili (https://www.bilibili.com) and TikTok (https://www.douyin.com) platforms. Bilibili is a major Chinese video-sharing platform renowned for its active user communities and diverse content, often featuring longer, in-depth videos. TikTok is a globally popular short-video platform characterized by its algorithm-driven, rapid-content-consumption model, typically featuring brief and engaging videos. On August 31, 2025, we performed systematic data retrieval separately on both platforms using the Chinese search term “肾癌” (RCC) as the keyword. To minimize bias introduced by personalized recommendations, all searches were conducted using newly created accounts in incognito mode, thereby preventing the influence of historical data or algorithmic personalization.

Initially, the top 110 videos from each platform, ranked by comprehensive default sorting, were collected. Videos published within the last 7 days, duplicate videos (same content uploaded by different users), and content irrelevant to RCC were excluded. Key information from each included video was recorded, including the video title, uploader's name and identity, video duration, content type, and metrics such as the number of likes, comments, shares, and collections, as well as the number of days since publication. The detailed selection process is illustrated in Figure 1. All extracted data were systematically recorded in a Microsoft Excel spreadsheet (Microsoft Corporation).

The flow chart of this study.

Classification of videos

Videos were classified based on both their source and content. The four source categories consisted of professional individuals, nonprofessional individuals, professional institutions, and nonprofessional institutions. Content was divided into five types: disease knowledge, treatment, prevention, news and reports, as well as advertisement and others. Furthermore, videos originating from professional individuals were subdivided into doctors specializing in renal cancer–related modern medicine, those focusing on other areas of modern medicine, practitioners of traditional medicine, and other health care professionals. Detailed criteria for these classifications are provided in Supplementary Material 1.

Video quality and reliability assessments

The quality of information in the videos was evaluated using the Global Quality Scale (GQS), while reliability was assessed with the modified DISCERN instrument (mDISCERN). The GQS consists of five criteria to evaluate overall video quality, with total scores ranging from 1 (very poor) to 5 (very good). 16 Using the mDISCERN tool, each video was evaluated regarding the following criteria: clarity, relevance, traceability, robustness, and fairness. 17 Each criterion was rated as “yes” (1 point) or “no” (0 points), and a cumulative score was calculated, ranging from 0 (extremely unreliable) to 5 (very reliable). 18 Detailed scoring criteria for both GQS and mDISCERN are provided in Supplementary Material 2.
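The cumulative mDISCERN score described above can be sketched in a few lines of Python. This is only an illustration of the arithmetic (one point per criterion rated "yes"); the class name `MDiscernRating` and its field names are hypothetical labels for the five criteria listed in the text, not part of the instrument or of the authors' workflow.

```python
from dataclasses import dataclass, fields


@dataclass(frozen=True)
class MDiscernRating:
    """Hypothetical container for one rater's yes/no answers on the five criteria."""
    clarity: bool
    relevance: bool
    traceability: bool
    robustness: bool
    fairness: bool

    def score(self) -> int:
        # Each "yes" contributes 1 point and each "no" contributes 0 points,
        # so the total ranges from 0 to 5.
        return sum(bool(getattr(self, f.name)) for f in fields(self))


# Illustrative rating (not taken from the study data).
example = MDiscernRating(
    clarity=True,
    relevance=True,
    traceability=False,
    robustness=False,
    fairness=False,
)
print(example.score())  # 2
```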

Two qualified physicians (SJ and XH) with extensive experience in urology independently evaluated and scored the videos. Both evaluators are attending urologists with over eight years of clinical experience, specializing in urologic oncology, and are thus highly familiar with the standard diagnosis, treatment, and management of RCC. Prior to assessment, both reviewers familiarized themselves with the scoring guidelines for the mDISCERN and GQS instruments and discussed the criteria in detail to minimize cognitive bias. In cases of scoring discrepancies, a third arbitrator (SC) made the final decision. Full consensus was ultimately reached among all authors regarding all assigned scores. Inter-rater reliability was quantified using Cohen's κ coefficient. According to the criteria proposed by Landis and Koch, a κ value greater than 0.80 indicates excellent agreement, 0.61–0.80 substantial agreement, 0.41–0.60 moderate agreement, and below 0.40 poor agreement.
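For readers unfamiliar with the agreement statistic, the following is a minimal sketch of an unweighted Cohen's κ together with the interpretation bands quoted above. It assumes the unweighted form; the text does not state whether a weighted variant was used for the ordinal GQS scores. The function names and the example ratings are hypothetical.

```python
from collections import Counter


def cohen_kappa(rater_a, rater_b):
    """Unweighted Cohen's kappa for two equal-length lists of category labels."""
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("both raters must score the same non-empty set of items")
    n = len(rater_a)
    # Observed agreement: share of items given the same label by both raters.
    observed = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    # Chance agreement: product of the raters' marginal proportions per label.
    count_a, count_b = Counter(rater_a), Counter(rater_b)
    expected = sum(count_a[c] * count_b[c] for c in count_a) / (n * n)
    if expected == 1.0:
        raise ValueError("kappa is undefined when both raters use one identical label")
    return (observed - expected) / (1 - expected)


def agreement_label(kappa):
    """Map kappa to the bands quoted in the text; values under 0.41 are treated as poor."""
    if kappa > 0.80:
        return "excellent"
    if kappa >= 0.61:
        return "substantial"
    if kappa >= 0.41:
        return "moderate"
    return "poor"


# Illustrative GQS ratings for six videos (not study data).
scores_a = [3, 2, 4, 3, 1, 2]
scores_b = [3, 2, 4, 2, 1, 2]
kappa = cohen_kappa(scores_a, scores_b)
print(round(kappa, 3), agreement_label(kappa))  # 0.769 substantial
```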

Statistical analysis

Descriptive statistics and nonparametric tests were employed to analyze video characteristics and quality metrics. Categorical variables are presented as frequencies and percentages, while continuous variables are summarized as medians with interquartile ranges (IQRs). The chi-square test or Fisher's exact test was applied as appropriate to assess differences in platform distribution and, similarly, for item-level comparisons of mDISCERN criteria between platforms. The Mann–Whitney U test was used to compare engagement metrics and quality scores between TikTok and Bilibili. For comparisons among multiple groups—such as video source, uploader identity, and content type—the Kruskal–Wallis test was applied, followed by Dunn's post hoc test for pairwise comparisons. Spearman's correlation analysis was conducted to examine relationships between video metrics and GQS/mDISCERN scores. Inter-rater reliability for GQS and mDISCERN scores was evaluated using Cohen's kappa coefficient. A significance threshold of

Results

Video characteristics

Based on Table 1, a total of 196 short videos on renal cancer were analyzed, with 100 (51.02%) from TikTok and the remaining 96 (48.98%) from Bilibili. The majority of videos (65.31%) came from professional individuals, followed by nonprofessional individuals (18.37%). Only 6 (3.06%) videos were sourced from professional institutions. Among professional individuals, the most common sources were doctors specializing in renal cancer (61.72%), followed by those specializing in other areas of modern medicine (32.03%).

Video characteristics.

Regarding video content, disease knowledge was the most prevalent (58.67%), followed by treatment-related videos (23.47%) and advertisements (15.82%). The median number of likes was 278 (IQR: 25.75, 676.75), with comments, collections, and shares also showing considerable variation. Videos had a median duration of 121.5 s (IQR: 62.75, 341.75). In terms of quality, the median GQS score was 3 (IQR: 2, 3), indicating moderate quality, while the mDISCERN score was 2 (IQR: 1, 2), suggesting a relatively low level of reliability. Excellent inter-observer agreement was observed, with Cohen's κ values reaching 0.913 for the GQS and 0.935 for the mDISCERN score.
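As a small illustration of how a median and interquartile range of this kind can be computed, the sketch below uses Python's standard library. The quartile method is an assumption: the text does not say which interpolation rule was used, and the `inclusive` method shown here (linear interpolation between order statistics) is only one common choice. The helper name `median_iqr` and the sample values are hypothetical.

```python
import statistics


def median_iqr(values):
    """Return (median, Q1, Q3) using linear interpolation between order statistics."""
    q1, q2, q3 = statistics.quantiles(values, n=4, method="inclusive")
    return q2, q1, q3


# Illustrative like counts for five videos (not study data).
likes = [12, 30, 278, 650, 900]
median, q1, q3 = median_iqr(likes)
print(f"{median} (IQR: {q1}, {q3})")  # 278.0 (IQR: 30.0, 650.0)
```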

An item-level mDISCERN analysis revealed notably low fulfillment rates for listing additional sources (Q4: 3.06%) and mentioning uncertainties (Q5: 2.55%). Valid source citation (Q2) was achieved in 53.57% of videos, and balanced/unbiased presentation (Q3) in 38.27%. In contrast, most videos (87.76%) met the clarity criterion (Q1). TikTok significantly outperformed Bilibili across multiple items, including clarity (95.00% vs. 80.21%,

Item-level analysis of the modified DISCERN (mDISCERN) tool.

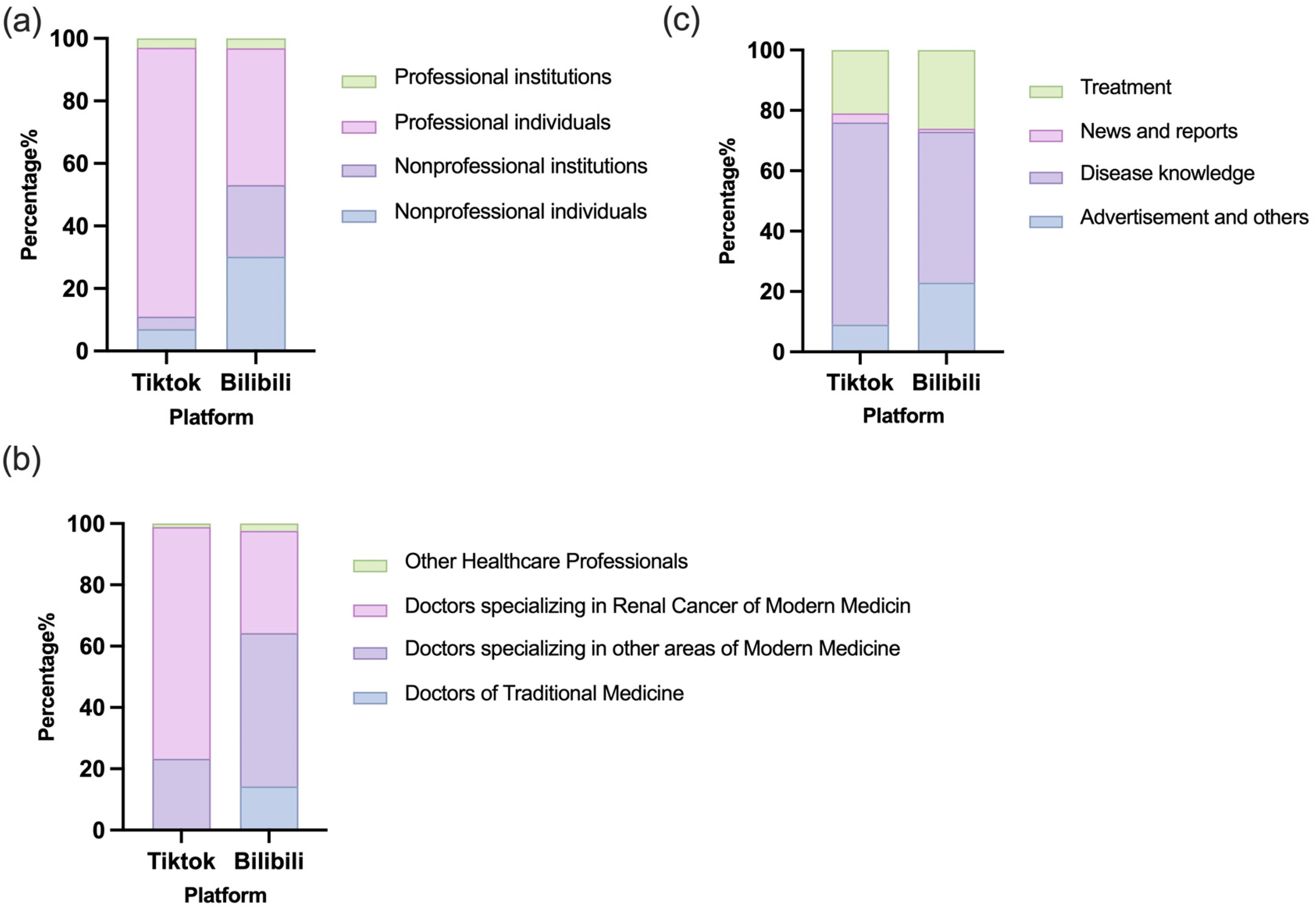

Comparison between different platforms

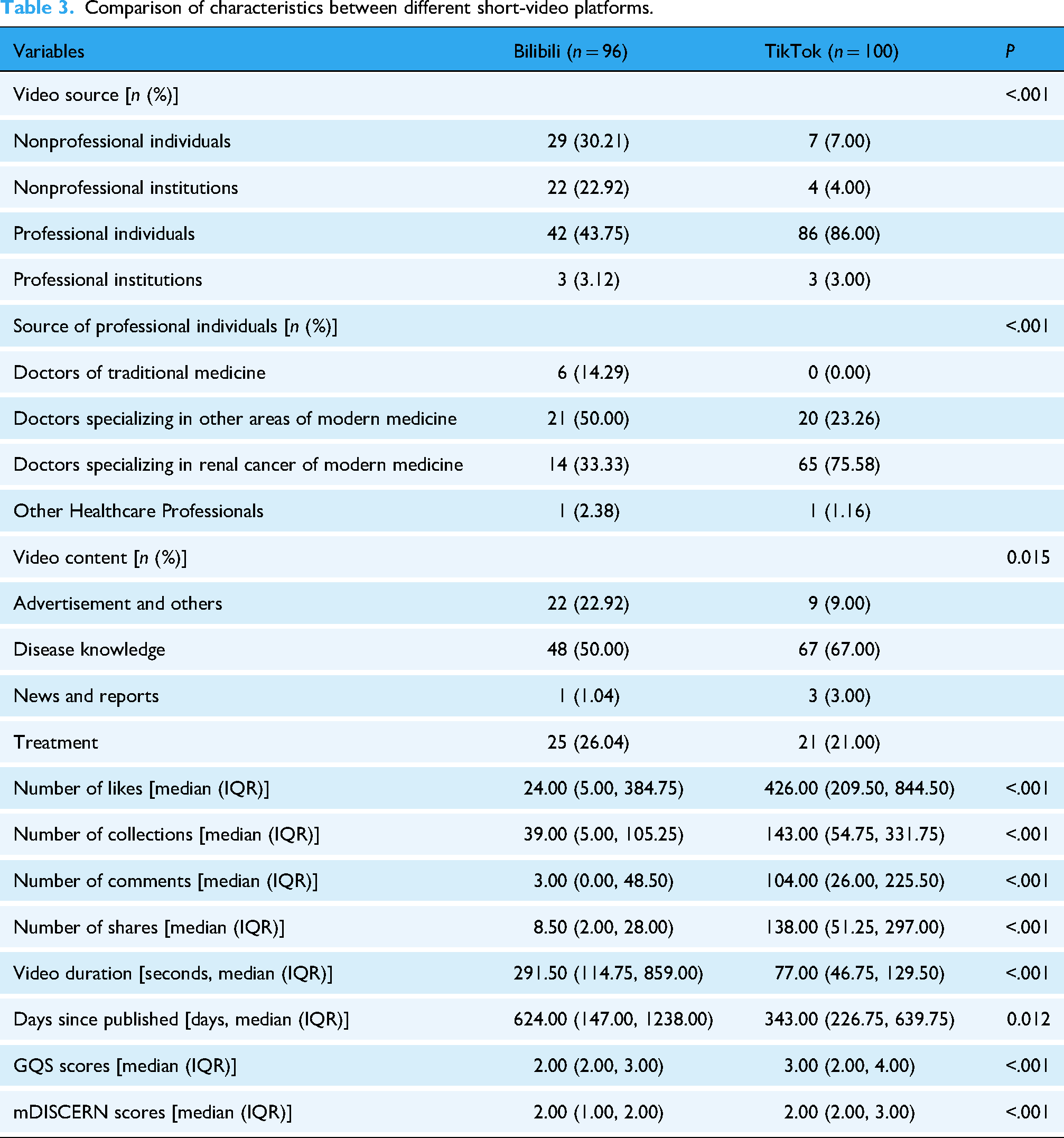

Table 3 and Figure 2 compare the characteristics of short videos about renal cancer on Bilibili and TikTok. Significant differences were observed in several areas. TikTok had a higher proportion of professional individual sources (86.00%) compared to Bilibili (43.75%), while Bilibili had more videos from nonprofessional individuals (30.21%) and institutions (22.92%). Videos from TikTok were predominantly created by doctors specializing in renal cancer (75.58%), while Bilibili had a more diverse professional base, including doctors specializing in other areas of modern medicine (50.00%) and traditional medicine (14.29%).

Distribution of video sources, professional backgrounds of individual creators, and content types across Bilibili and TikTok platforms. (a) Distribution of videos by source type. (b) Distribution of videos by content category. (c) Distribution of professional backgrounds among individual medical uploaders.

Comparison of characteristics between different short-video platforms.

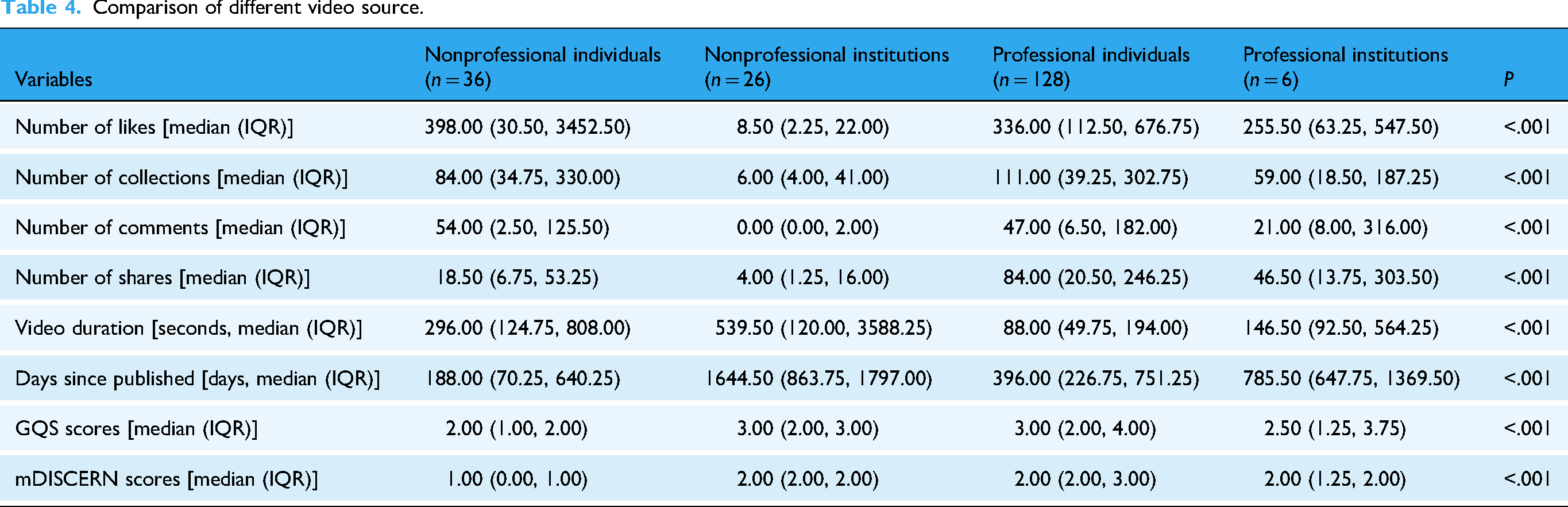

Regarding video content, TikTok focused more on disease knowledge (67.00%) and treatment (21.00%), while Bilibili featured a greater percentage of videos on advertisement and other topics (22.92%) and treatment (26.04%). TikTok videos had significantly higher engagement metrics, including likes, collections, comments, and shares. TikTok also had shorter video durations (77.00 s vs. 291.50 s for Bilibili). Furthermore, videos on TikTok were published more recently (343 days median vs. 624 days for Bilibili). As shown in Figure 3, TikTok videos also scored higher than Bilibili videos on both the GQS and mDISCERN.

Comparison of video quality and reliability between TikTok and Bilibili platforms. (a) Comparison of GQS between Bilibili and TikTok. (b) Proportion of different levels of video quality. (c) Comparison of mDISCERN between Bilibili and TikTok. (d) Proportion of different levels of video reliability.

Comparison of video characteristics by source type

As shown in Table 4, significant differences were observed across videos from different source types in terms of engagement, duration, recency, and quality scores (all

Comparison of different video sources.

Videos from nonprofessional individuals received the highest median number of likes (398.00), whereas those from nonprofessional institutions had the lowest (8.50). Professional individuals’ videos showed relatively high engagement across all metrics. Videos from nonprofessional institutions were the longest in duration and had been published the longest time ago, whereas those from professional individuals were the shortest and most recent.

Regarding video quality and reliability, videos uploaded by professional individuals achieved the highest median GQS score (3.00) and mDISCERN score (2.00), whereas those uploaded by nonprofessional individuals received the lowest median GQS score (2.00) and mDISCERN score (1.00).

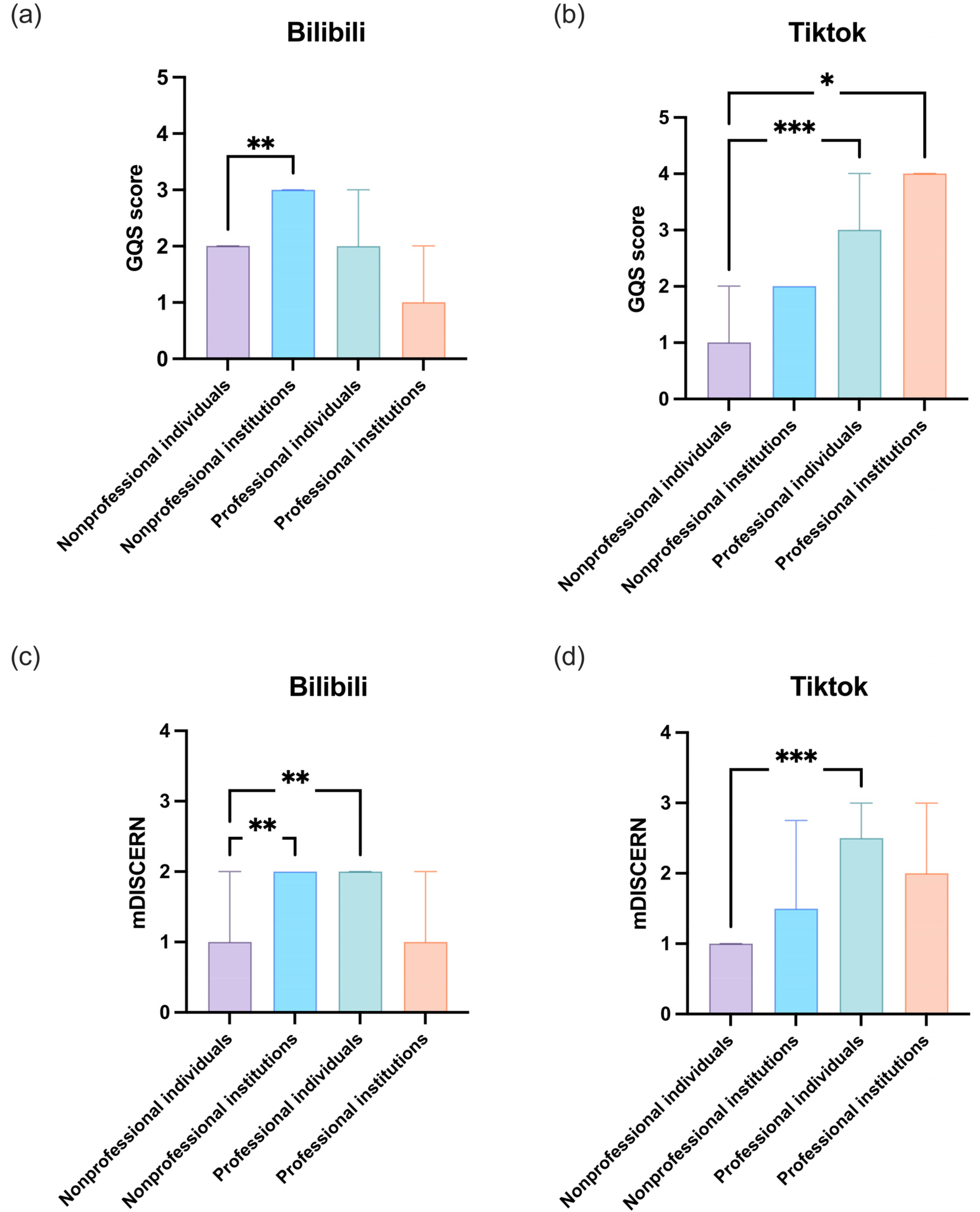

Additionally, as shown in Figure 4, on Bilibili, videos from nonprofessional institutions scored significantly higher in GQS and mDISCERN than those from nonprofessional individuals (

GQS and mDISCERN scores of videos on RCC from different sources on Bilibili and TikTok. (a) GQS of Bilibili videos from different sources. (b) GQS of TikTok videos from different sources. (c) mDISCERN scores of Bilibili videos from different sources. (d) mDISCERN scores of TikTok videos from different sources. RCC: Renal Cell Carcinoma; GQS: Global Quality Scale; mDISCERN: modified DISCERN. *

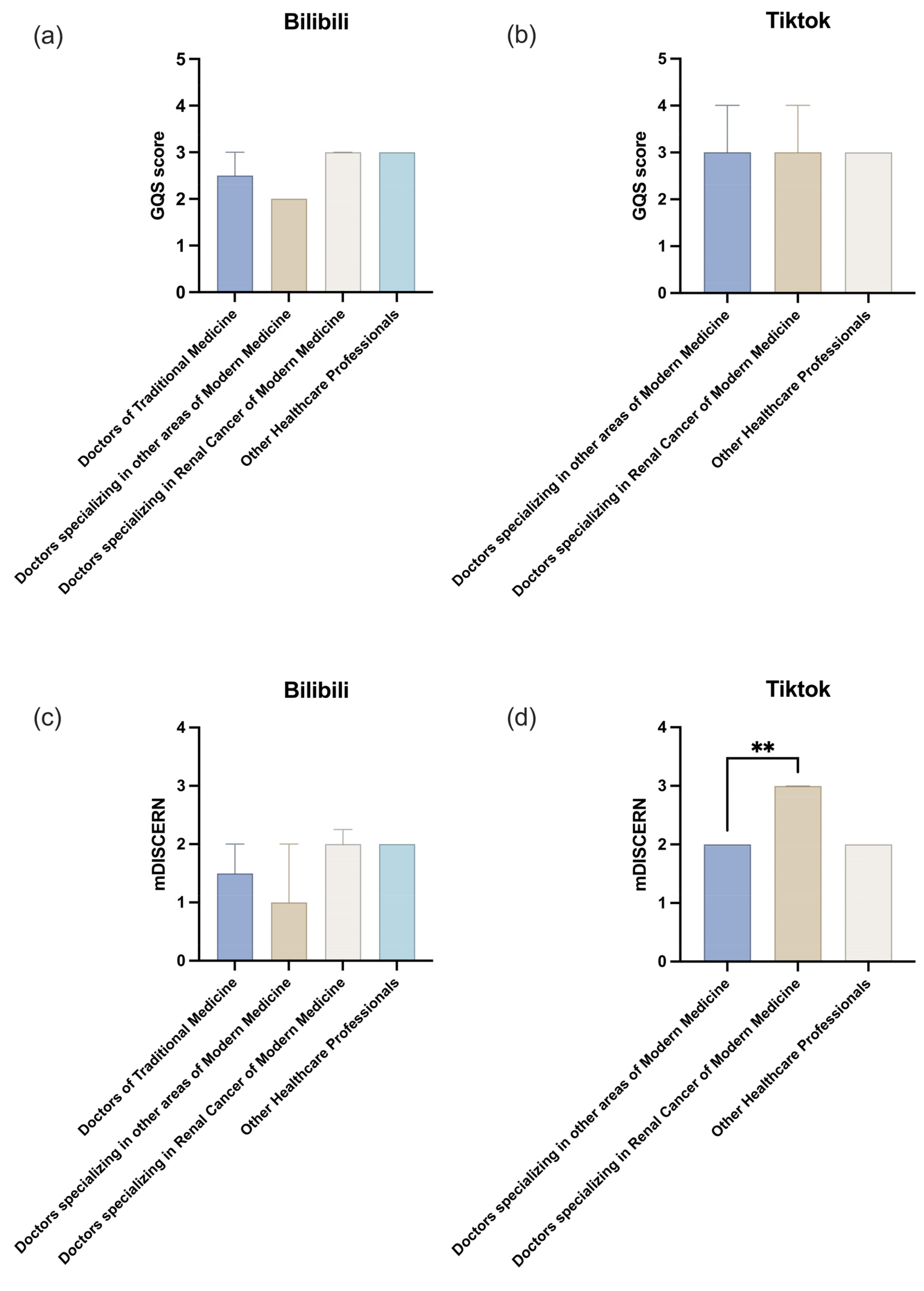

Comparison of video characteristics by professional individuals

As shown in Table 5, significant differences were observed in engagement, duration, and quality/reliability scores among videos created by different types of professional individuals, with the exception of days since publication.

Comparison of different professional individuals.

Videos created by doctors specializing in other areas of modern medicine received the highest median number of likes (403.00) and comments (100.00), while those by doctors of traditional medicine had significantly lower engagement across all metrics.

In terms of quality, videos produced by doctors specializing in renal cancer achieved the highest median GQS score (3.00) and mDISCERN score (3.00), indicating superior quality and reliability. In contrast, videos from doctors of traditional medicine had the lowest median mDISCERN score (1.50). Videos from renal cancer specialists were also the shortest in duration (70.00 s).

As illustrated in Figure 5, on TikTok, videos by renal cancer specialists achieved significantly higher mDISCERN scores than those by doctors of traditional medicine (

GQS and mDISCERN scores of videos on RCC from different professional individual types on Bilibili and TikTok. (a) GQS of Bilibili videos from different professional individual types. (b) GQS of TikTok videos from different professional individual types. (c) mDISCERN scores of Bilibili videos from different professional individual types. (d) mDISCERN scores of TikTok videos from different professional individual types. RCC: renal cell carcinoma; GQS: Global Quality Scale; mDISCERN: modified DISCERN. *

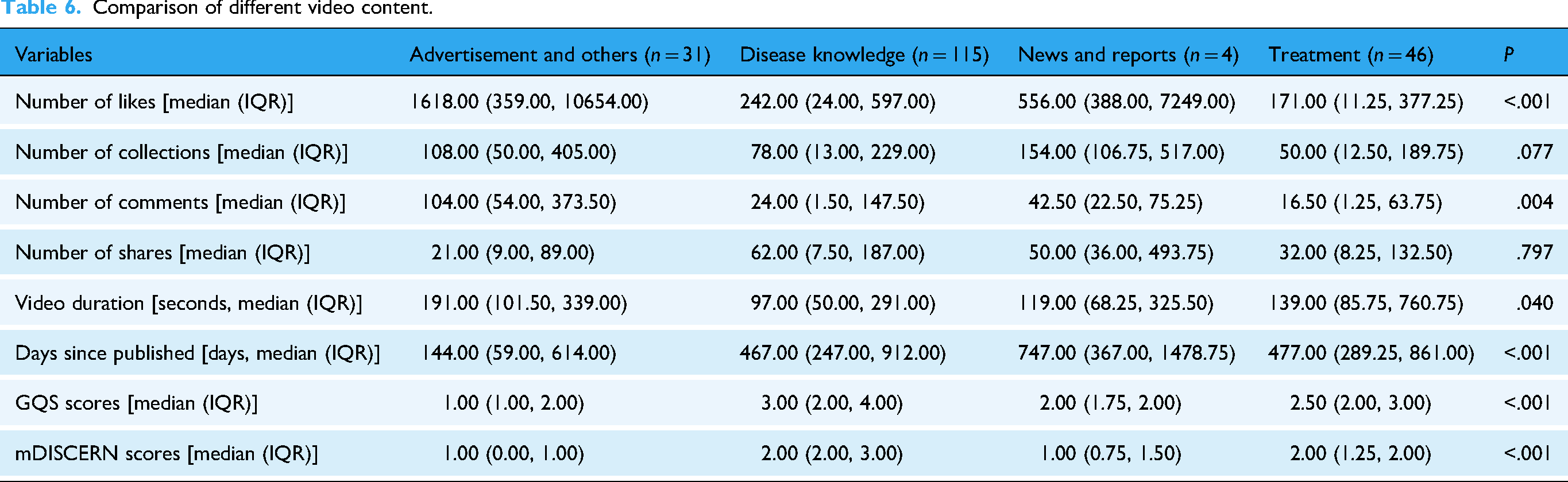

Comparison of video characteristics by content type

Based on Table 6, significant differences were observed across video content types in user engagement, recency, and quality/reliability scores. Videos with advertisement content received the highest median number of likes (1618.00), significantly outperforming other categories. In contrast, treatment-related videos had the lowest median likes (171.00). Disease knowledge videos achieved the highest median GQS and mDISCERN scores (3.00 and 2.00, respectively), indicating superior quality and reliability, while advertisement videos scored the lowest on both scales.

Comparison of different video content.

Additionally, advertisement videos were the most recently published (median: 144 days), whereas news and reports videos had been online the longest (median: 747 days). No significant differences were found in the number of collections and shares among content types.

As illustrated in Figure 6, disease knowledge videos consistently outperformed other categories in both GQS and mDISCERN scores on Bilibili and TikTok, underscoring their superior informational quality and reliability. Advertisement and other content types demonstrated the lowest scores across both platforms, while treatment-related videos scored moderately but significantly higher than advertisements on Bilibili and TikTok. News and reports videos also showed relatively low reliability, particularly on Bilibili.

GQS and mDISCERN scores of videos on RCC from different content types on Bilibili and TikTok. (a) GQS of Bilibili videos from different content types. (b) GQS of TikTok videos from different content types. (c) mDISCERN scores of Bilibili videos from different content types. (d) mDISCERN scores of TikTok videos from different content types. RCC: renal cell carcinoma; GQS: Global Quality Scale; mDISCERN: modified DISCERN. *

Correlation analysis of video metrics

Figure 7 reveals correlations between video metrics and GQS/mDISCERN scores. On TikTok, strong positive correlations were observed among engagement metrics (likes, collections, comments, and shares), while these metrics showed weak negative correlations with quality and reliability scores. In contrast, although engagement metrics were also positively correlated with each other on Bilibili, no significant correlation was found between these metrics and GQS or mDISCERN scores. Video duration showed a weak positive correlation with GQS on TikTok (

Correlation heatmap of video metrics on TikTok and Bilibili.
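The rank-based correlation behind such a heatmap can be sketched as follows: each variable is converted to ranks (tied values share their average rank) and the Pearson correlation of the ranks is taken. This is a minimal standard-library illustration of Spearman's coefficient, not the authors' analysis code; the function names and the sample data are hypothetical, and no p-value is computed.

```python
from math import sqrt


def average_ranks(values):
    """Assign 1-based ranks, giving tied values the mean of their rank positions."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        # Extend j to cover the run of values tied with position i.
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        mean_rank = (i + j) / 2 + 1
        for k in range(i, j + 1):
            ranks[order[k]] = mean_rank
        i = j + 1
    return ranks


def spearman_rho(x, y):
    """Spearman's rho: Pearson correlation computed on the average ranks."""
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("x and y must have the same length of at least 2")
    rx, ry = average_ranks(x), average_ranks(y)
    n = len(rx)
    mean_x, mean_y = sum(rx) / n, sum(ry) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(rx, ry))
    var_x = sum((a - mean_x) ** 2 for a in rx)
    var_y = sum((b - mean_y) ** 2 for b in ry)
    if var_x == 0 or var_y == 0:
        raise ValueError("rho is undefined when one variable is constant")
    return cov / sqrt(var_x * var_y)


# Illustrative like counts and GQS scores for six videos (not study data).
likes = [1500, 40, 320, 980, 15, 610]
gqs = [2, 3, 3, 2, 4, 3]
print(round(spearman_rho(likes, gqs), 3))
```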

Discussion

Research background

With the rise of social media, particularly the emergence of short-video platforms, significant changes have occurred in how the public accesses health information. 19 Platforms such as TikTok and Bilibili have become major channels for disseminating health-related content, attracting a large number of users. Although scientific research on social media is continually expanding, studies focusing on urological diseases remain limited. Existing literature reports findings consistent with ours. A study by Xue et al. revealed that the overall quality and reliability of videos related to genitourinary cancers on TikTok were generally poor, with over one-third containing misleading information, and most failing to adequately explain professional terminology. 20 Research by H. P. N. Wong et al. indicated that videos released by healthcare professionals on benign prostatic hyperplasia (BPH) demonstrated higher completeness and reliability compared to those by non-healthcare providers. However, the vast majority of BPH-related content on TikTok was of low quality and incomplete, highlighting an urgent need to improve professional accuracy and comprehensiveness to mitigate the risk of misleading large audiences. 21 Liang et al. found that Bilibili had significantly higher-quality prostate cancer videos than TikTok, with urologist-generated and knowledge-focused content being more reliable than patient-shared or experience-based videos. 22 Similarly, Augustyn et al. found that more than half of the kidney stone-related content on YouTube and TikTok was of low quality, with a significantly higher misinformation rate on TikTok than on YouTube (34% vs. 2%), and no correlation between content quality and engagement metrics such as likes or views on either platform (

Despite the exponential growth in empirical studies evaluating the quality of medical information on social media in recent years, there has been no reported assessment of short-video content related to RCC, which accounts for approximately 3% of adult malignancies. 24 This cross-sectional study is the first to systematically evaluate the quality and reliability of short videos pertaining to RCC on two of China's most influential social media platforms, Bilibili and TikTok. It aims to address the evidence gap regarding social media content quality for this specific cancer type and provide a quantitative baseline for future targeted interventions and platform governance.

Key findings and drivers of quality

Our analysis revealed several key patterns. This study observed that short videos related to RCC on TikTok scored significantly higher than those on Bilibili in both GQS (3 [IQR: 2, 4] vs. 2 [IQR: 2, 3],

From a dissemination perspective, videos on TikTok showed significantly higher user engagement across all metrics, shorter duration, and more recent publication dates. This environment attracts more professional doctors aiming to expand their influence, among whom up to 75.58% are specialists in renal oncology. In contrast, Bilibili emphasizes community interaction and supports longer-form content, attracting a broader range of creators, including patients, enthusiasts, and marketing accounts. For users seeking health information on RCC, TikTok offers quicker access to brief, highly engaging videos created by experts—though their potential lack of depth requires critical evaluation. Conversely, Bilibili provides more diverse and in-depth content but demands stronger discernment to filter out non-professional or promotional information.

Correlation analysis of video metrics

All user engagement metrics (likes, comments, favorites, shares) were strongly positively correlated with each other. However, their correlation with content quality and reliability was either weakly negative (on TikTok) or not statistically significant (on Bilibili).

The weak negative correlation observed on TikTok suggests a potential algorithmic paradox: content that garners high engagement tends to be less reliable. This aligns with TikTok's algorithm, which promotes videos that maximize watch time and user interaction—often favoring emotionally charged or sensational content over scientifically accurate material. This poses a systemic risk within the platform's health information ecosystem.

Conversely, the lack of correlation on Bilibili may stem from its community-driven nature. Bilibili users are more likely to actively search for content, and interactions such as “coins” and “favorites” may reflect genuine appreciation for informative or in-depth videos rather than impulsive reactions. Thus, quality and engagement are more decoupled on this platform.

The weak correlation between video length and GQS on TikTok suggests that the platform's algorithm or user preference for shorter content may constrain the depth of information presented. In contrast, no significant relationship was observed on Bilibili, indicating that video length is not a primary determinant of quality and reliability. Instead, the expertise of content creators and their ability to deliver well-structured, evidence-based information appear to be more critical factors, regardless of video duration. This highlights the need for viewers to look beyond superficial popularity metrics and prioritize the credentials of the content creator and the informational value of the content.

Advantages and significance

This study offers several methodological and practical contributions to the field of digital health communication:

First, it is among the first to systematically compare the quality of medical short videos related to renal cancer across two major short-video platforms—TikTok and Bilibili. This cross-platform design provides new insights into structural differences in content ecosystems, creator demographics, and engagement patterns, offering a transferable framework for future research in other disease areas. Second, by integrating both the GQS and the mDISCERN tool, this study balances assessments of content comprehensiveness and scientific rigor, thereby enhancing the robustness, repeatability, and interpretability of findings. 28 Third, the study offers a nuanced classification of video creators—not only distinguishing between professional and non-professional sources but further subdividing professionals into renal specialists, general medical practitioners, traditional medicine doctors, and other healthcare providers. This granularity allows for a more precise understanding of how source characteristics influence content quality.

In terms of practical implications, these findings provide empirical evidence for policymakers to support regulatory initiatives, such as implementing health content auditing systems, verifying professional credentials, and flagging misinformation. Moreover, for platform developers, the results highlight the need for algorithmic adjustments that prioritize recommending content from verified healthcare professionals based on information quality rather than relying solely on engagement metrics, thereby balancing commercial viability with social responsibility. Furthermore, for public health educators, this study underscores the importance of promoting digital health literacy, as user engagement metrics do not positively correlate with information quality. Therefore, users should be trained to critically assess content based on source credibility and informational structure. 29 These insights can guide the development of educational tools and programs aimed at enhancing users’ ability to identify credible health information in the online environment.

Limitations

Despite its methodological rigor, this study has several inherent limitations. First, its cross-sectional design captures only a snapshot in time and cannot reflect the dynamic evolution of short-video content. Second, the exclusive focus on Chinese-language platforms limits the generalizability of findings to other cultural and linguistic contexts. Health information-seeking behaviors, content creation norms, and the regulatory environment can vary significantly across regions. 30 Therefore, our results are most directly applicable to the Chinese digital ecosystem. Third, while the GQS and mDISCERN tools evaluate structural and reliability aspects of information, they do not directly measure factual accuracy against current clinical guidelines. 31 Additionally, the scoring remains subjective despite high inter-rater reliability (Cohen's κ > .9). Fourth, the analysis lacked stratification of user demographics, which may influence how content is perceived and engaged with. Fifth, the sampling strategy—relying on the platforms’ default “comprehensive” ranking and a single keyword (“肾癌”)—may have underrepresented less popular content and videos using alternative terms, thus limiting the diversity of perspectives captured.

Future research

Building on these limitations, several directions warrant further investigation: (1) longitudinal or time-series designs to track changes in content quality and engagement patterns; (2) cross-platform and cross-lingual comparisons (e.g., including YouTube or Instagram Reels) to enhance external validity; (3) integration of AI-assisted tools (e.g., natural language processing, automated video analysis) to improve objectivity and scalability in content evaluation; (4) multidimensional user studies incorporating demographic and behavioral data to better understand audience reception and comprehension; and (5) more comprehensive search strategies that employ multiple keywords and account for platform-specific algorithmic biases.

Conclusion

This study evaluated RCC-related short videos on TikTok and Bilibili using GQS and mDISCERN tools, revealing generally low quality and reliability across both platforms. Videos from healthcare professionals, particularly specialists, were significantly more reliable than those from non-professional sources. These findings highlight the need for greater involvement from medical experts in creating evidence-based content and improved oversight by platforms to limit misinformation. Viewers should critically assess health-related videos and prioritize content from certified professionals.

Supplemental Material

sj-docx-1-dhj-10.1177_20552076261417853 - Supplemental material for A cross-sectional study of the quality and reliability of renal cell carcinoma-related short videos on Bilibili and TikTok

Supplemental material, sj-docx-1-dhj-10.1177_20552076261417853 for A cross-sectional study of the quality and reliability of renal cell carcinoma-related short videos on Bilibili and TikTok by Jianzhi Su, Helong Xiao, Qinghui Xiao, Ren Xu, Yanan Ren, Luyang Su and Changjin Shi in DIGITAL HEALTH

Supplemental Material

sj-docx-2-dhj-10.1177_20552076261417853 - Supplemental material for A cross-sectional study of the quality and reliability of renal cell carcinoma-related short videos on Bilibili and TikTok

Supplemental material, sj-docx-2-dhj-10.1177_20552076261417853 for A cross-sectional study of the quality and reliability of renal cell carcinoma-related short videos on Bilibili and TikTok by Jianzhi Su, Helong Xiao, Qinghui Xiao, Ren Xu, Yanan Ren, Luyang Su and Changjin Shi in DIGITAL HEALTH

Footnotes

Acknowledgements

The authors would like to express their gratitude to the participants who participated in the study.

Ethical statement

The data used in this study were sourced from publicly available video content published on platforms such as Bilibili and TikTok. These videos are publicly accessible, and no personal privacy information was involved during the data collection process. All analyzed content was publicly available, and the study did not involve the collection or processing of users’ private information. In accordance with relevant ethical review guidelines, ethical approval for this study was not required.

Contributorship

Changjin Shi was responsible for conceptualization and writing the original draft. Ren Xu was responsible for conceptualization, formal analysis, and validation. Yanan Ren performed data curation and formal analysis. Helong Xiao and Jianzhi Su performed data curation. Qinghui Xiao and Luyang Su were responsible for the software and visualization. All authors contributed to the article and approved the submitted version.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the Medical Science Research Project of Hebei (grant number 20230953).

Declaration of conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Supplemental material

Supplemental material for this article is available online.

References
