Abstract

Background

Artificial intelligence (AI) is increasingly embedded in clinical decision-making, making trust in AI-generated recommendations essential for safe and effective healthcare delivery. Nursing students, as future practitioners, are critical stakeholders in this transition; however, their trust in AI within rapidly digitizing educational and clinical contexts in Saudi Arabia remains underexplored, despite national digital health initiatives aligned with Vision 2030.

Objective

This study examined nursing students’ trust in AI-driven clinical recommendations across Saudi universities and investigated how perceived risks, perceived benefits, AI education, and academic progression influence trust development.

Methods

A multicenter cross-sectional survey was conducted between September and November 2025 across five Saudi nursing institutions (three all-male, one all-female, and one mixed-gender university). A total of 622 undergraduate nursing students completed validated instruments measuring functional and ethical trust, perceived benefits, perceived risks, applicability, and barriers to AI learning. Multiple linear regression and moderation analyses were performed using robust standard errors.

Results

Although students reported highly positive attitudes toward AI (mean = 3.94/5.00) and strong perceived benefits (mean = 3.98/5.00), overall trust in AI-generated clinical recommendations was low (mean = 2.28/5.00), indicating a trust–optimism paradox. Perceived risks positively predicted trust (β = 0.136,

Conclusions

Nursing students’ trust in AI is dynamic and influenced by educational exposure and clinical experience. Transparent, structured AI education integrating technical and ethical dimensions is associated with calibrated trust and support for responsible AI adoption in clinical practice. These findings have direct implications for nursing education policy, suggesting that integrating transparent, evidence-based AI curricula into digital health education frameworks can support workforce readiness and responsible technology adoption aligned with national healthcare transformation initiatives.

Keywords

Introduction

Artificial intelligence (AI) is rapidly transforming clinical practice, with systems now supporting diagnosis, treatment planning, and patient monitoring across healthcare settings.1,2 In Saudi Arabia, this transformation is particularly pronounced, driven by Vision 2030's strategic emphasis on digital health innovation. 3 Despite this technological advancement, a critical question remains underexplored: how much do future healthcare professionals trust AI-generated clinical recommendations? While physicians’ adoption of AI tools has received scholarly attention, 4 empirical evidence regarding nursing perspectives remains limited despite nurses’ central role in care coordination and their position as the largest healthcare workforce globally.

Trust in clinical AI represents a complex psychological construct that balances anticipated benefits against perceived risks. 5 On the one hand, AI promises improved diagnostic accuracy, error reduction, and workflow optimization. 6 On the other hand, legitimate concerns exist regarding algorithmic bias, liability assignment, and potential professional deskilling. 7 High-profile cases of algorithmic bias in healthcare resource allocation 8 and diagnostic imaging 9 have heightened awareness of AI's limitations, even as growing evidence demonstrates its clinical utility in sepsis prediction, pressure injury prevention, and medication safety. 10 This duality creates a challenging landscape for nursing students who lack the experiential grounding that experienced clinicians rely upon to benchmark AI outputs against their professional judgment. Without this practical validation framework, nursing students’ trust formation likely depends more heavily on educational exposure and theoretical understanding than on clinical experience.

Contemporary nursing education faces significant challenges in preparing students for AI-integrated practice, with fewer than one-third of programs offering dedicated AI coursework. 11 This curricular inconsistency creates variability in educational exposure that may shape trust formation differently than clinical experience. In the Saudi Arabian context, nursing education operates within a gender-segregated higher education system where most universities maintain separate campuses, potentially influencing technology exposure patterns. Digital-native students demonstrate comfort with consumer AI technologies yet lack professional frameworks for evaluating AI in high-stakes healthcare contexts. This paradox of technological familiarity paired with clinical inexperience creates uncertainty about how they will calibrate trust in AI clinical recommendations.

Theoretical perspectives on trust in automation suggest that appropriate trust calibration is essential for safe technology use. 12 Both insufficient trust (leading to beneficial system underutilization) and excessive trust (resulting in automation bias where clinicians overlook system errors) pose significant patient safety risks. 13 This challenge becomes particularly acute during professional identity formation when foundational attitudes toward technology become established. Understanding how nursing students develop trust in AI systems has profound implications for curriculum design, workforce preparation, and ultimately patient outcomes in increasingly automated care environments.

To address these critical gaps, we conducted a multicenter cross-sectional study with three specific objectives: (1) to characterize nursing students’ trust in AI-generated clinical recommendations across functional and ethical dimensions; (2) to examine associations between trust and theoretically relevant predictors including perceived benefits, perceived risks, applicability, and learning barriers; and (3) to assess whether educational exposure and academic progression moderate these relationships. We hypothesized that perceived benefits would positively predict trust, while the relationship between risk awareness and trust would depend on educational preparation. Specifically, we expected that informed understanding of risks paired with mitigation strategies would be associated with calibrated trust rather than diminished confidence.

By examining trust formation during professional development, this research provides insights for nursing education leaders, healthcare administrators, and AI developers working to prepare the nursing workforce for technology-intensive care environments. Our findings contribute evidence for designing curricula that foster appropriate trust calibration, neither uncritical acceptance nor reflexive rejection of AI systems, ultimately supporting safer integration of these technologies into nursing practice and patient care.

Methods

Study design

We conducted a multicenter, descriptive cross-sectional survey to examine associations between nursing students’ perceived benefits, perceived risks, and trust in AI-driven clinical recommendations. This design was selected to capture current attitudes toward emerging technologies across diverse educational settings, providing a comprehensive snapshot of trust formation during professional identity development. The study adhered to STROBE guidelines for observational research 14 and was implemented between 12 September and 11 November 2025.

Study setting and context

Saudi Arabian higher education and clinical context

Data were collected from five Saudi Arabian nursing institutions: Northern Border University (NBU), King Abdulaziz University (KAU), Bisha University (BU), Qassim University (QU), and Saudi Electronic University (SEU). Saudi Arabia's higher education system operates predominantly through gender-segregated campuses. Our study setting reflects this institutional structure: NBU, BU, and QU operate all-male nursing programs; KAU maintains separate male and female colleges; and SEU employs a mixed-gender online education model with gender-segregated clinical placements. This institutional diversity encompasses variations in gender composition, curriculum models, technological integration, and student demographics, thereby enhancing external validity.

Clinical training sites associated with these institutions vary substantially in AI adoption. Teaching hospitals affiliated with KAU and SEU actively integrate AI-enhanced electronic health records (EHRs), predictive analytics for sepsis detection, and AI-assisted diagnostic imaging interpretation. In contrast, regional hospitals serving NBU, BU, and QU students have limited AI implementation, primarily restricted to automated vital sign monitoring and basic documentation systems. This variation in clinical AI exposure is critical for interpreting our findings, particularly regarding the moderating effects of academic year on trust development.

Sampling strategy and participant recruitment

A stratified random sampling technique was employed, with universities stratified by enrollment size: large (>1000 nursing students: NBU,

Within each stratum, proportional allocation determined target sample sizes reflecting institutional enrollment. Specifically, we invited 200 students from NBU (16.0% of enrollment), 200 from QU (18.0%), 150 from KAU (14.7%), 150 from BU (21.8%), and 100 from SEU (16.3%), totaling 800 invitations across 4677 eligible students. Students were randomly selected from registrar-provided enrollment lists using computer-generated random numbers in SPSS (RAND function). Selection was stratified by academic year within each institution to ensure adequate representation of second-, third-, and fourth-year students, as clinical exposure increases substantially in advanced years and may independently influence AI attitudes. First-year students were excluded because they had not yet commenced clinical rotations and lacked clinical context for evaluating AI applications.
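The selection step described above can be sketched in a few lines. This is a minimal illustration, not the authors' SPSS procedure: the roster structure, the `draw_invitations` helper, and the even split of invitations across academic years are all assumptions made for the example. Only the per-institution invitation counts come from the text.

```python
import random

# Invitation targets per institution, as reported in the text.
INVITATIONS = {"NBU": 200, "QU": 200, "KAU": 150, "BU": 150, "SEU": 100}


def draw_invitations(roster, n_invite, seed=0):
    """Randomly select n_invite students, stratified by academic year.

    roster: dict mapping academic year -> list of student IDs
            (hypothetical structure standing in for a registrar list).
    The even split across years is an assumption; the source only says
    selection was stratified by year to ensure adequate representation.
    """
    rng = random.Random(seed)
    years = sorted(roster)
    base, extra = divmod(n_invite, len(years))
    selected = []
    for i, year in enumerate(years):
        # Spread any remainder over the first few strata.
        quota = base + (1 if i < extra else 0)
        quota = min(quota, len(roster[year]))
        # Sample without replacement within the year stratum.
        selected.extend(rng.sample(roster[year], quota))
    return selected


# Example with a made-up roster of second- to fourth-year students.
example_roster = {
    year: [f"Y{year}-{i:04d}" for i in range(400)] for year in (2, 3, 4)
}
invited = draw_invitations(example_roster, INVITATIONS["NBU"])
```

Summing the values of `INVITATIONS` reproduces the 800 invitations stated in the text.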

Sample size determination

Sample size was calculated using two complementary approaches. First, Cochran's formula for finite populations (total nursing students across five institutions:

Eligibility criteria

Inclusion criteria: Undergraduate nursing students enrolled full-time in accredited Bachelor of Science in Nursing programs, aged ≥18 years, in second year or above (having commenced clinical rotations), and capable of providing informed consent in Arabic or English.

Exclusion criteria: Graduate students, first-year students (pre-clinical), part-time enrollees, students on academic leave during data collection, and those enrolled in non-accredited programs.

Ethical considerations

Ethical approval was obtained from the Nursing Research Ethical Committee at KAU (Reference No. 1M.176), with additional institutional approvals secured from participating universities. All procedures complied with the Declaration of Helsinki. Students received detailed electronic information sheets explaining the study's purpose, voluntary nature, data confidentiality procedures, and their right to withdraw without penalty. Electronic informed consent was obtained before survey access. Participation was entirely voluntary with no academic incentives or penalties; students were explicitly assured that responses would not affect their academic standing, clinical evaluations, or relationships with faculty. To minimize perceived coercion, faculty members were not involved in recruitment or data collection; non-faculty research coordinators conducted all recruitment activities. Data confidentiality was maintained through complete anonymization at submission; no personally identifiable information, email addresses, or internet protocol (IP) addresses were collected. Each response received an automated numeric identifier, and data were stored on encrypted, password-protected servers accessible only to the research team.

Survey instrument

Instrument structure and content

The survey comprised six sections: (1) demographic characteristics (age, sex, university, academic year, English proficiency, and computer skills), (2) attitudes toward AI, (3) perceived applicability of AI in nursing, (4) perceived risks of AI, (5) prior AI educational exposure, and (6) barriers to AI learning. The complete questionnaire contained 47 items and required approximately 15–20 min for completion as determined during pilot testing. All attitudinal and perceptual items employed 5-point Likert scales (1 = strongly disagree, 2 = disagree, 3 = neutral, 4 = agree, 5 = strongly agree).

Trust assessment

Trust in AI was assessed using the negative subscale of the General Attitudes toward AI Scale (GAAIS), 14 conceptually divided into two dimensions: Functional Trust (confidence in technical reliability, diagnostic accuracy, and system performance) and Ethical Trust (confidence in fairness, transparency, value alignment with nursing ethics, and absence of bias). Items were reverse-coded such that higher scores indicated greater trust. A composite Total Trust Score was calculated by averaging all trust items, providing an overall index of confidence in AI clinical recommendations. The GAAIS has demonstrated strong psychometric properties across international samples (original validation: Cronbach's α = 0.89). 14
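As an illustration of the scoring described above, the sketch below reverse-codes 5-point items and averages them into a composite. The function names and the example responses are hypothetical; the actual item set and its split into functional and ethical dimensions are not reproduced here.

```python
def reverse_code(score, low=1, high=5):
    """Reverse a Likert response so that 1 <-> 5, 2 <-> 4, and 3 stays 3."""
    return low + high - score


def total_trust(raw_items):
    """Average the reverse-coded items; higher values indicate greater trust."""
    reversed_items = [reverse_code(x) for x in raw_items]
    return sum(reversed_items) / len(reversed_items)


# Hypothetical respondent who mostly agrees with negatively worded items.
example_score = total_trust([5, 4, 3, 4])  # reverse-coded: 1, 2, 3, 2
```

A respondent who agrees strongly with negatively worded statements therefore receives a low trust score, which is the direction of scoring the text describes.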

Additional scales

Perceived benefits were measured using items adapted from validated technology acceptance instruments, 14 assessing beliefs about AI's capacity to enhance diagnostic accuracy, reduce errors, improve workflow efficiency, and support clinical decision-making. Perceived risks assessed concerns about algorithmic bias, diagnostic errors, patient privacy breaches, professional deskilling, and liability ambiguities. Perceived applicability measured students’ judgments about AI's practical relevance to nursing-specific tasks such as care planning, patient assessment, and clinical documentation. Barriers to learning AI captured perceived obstacles, including insufficient curriculum integration, lack of training resources, technical complexity, and limited access to AI systems during clinical rotations. These scales were adapted from established frameworks in technology acceptance research.15–17

Prior AI education was assessed dichotomously (yes/no) with the question: “Have you received formal education or training about artificial intelligence in healthcare through university coursework, workshops, or structured programs?” Formal education was defined as structured instructional content delivered through dedicated courses, guest lectures, or official workshops, explicitly excluding self-directed learning, casual media consumption, or general computer literacy training. Students responding affirmatively were also asked to specify the format (e.g. dedicated AI course, integrated module within another course, or one-time workshop).

Instrument validation and translation

Content validity was established through expert review by three nursing educators with curriculum development expertise and two AI ethics specialists with healthcare informatics backgrounds (content validity index = 0.89). Items were evaluated for clarity, relevance, and alignment with study constructs. Construct validity was confirmed using confirmatory factor analysis in a pilot sample of 30 students, which supported scale dimensionality (root mean square error of approximation = 0.06, comparative fit index = 0.93, indicating acceptable model fit). 18

Translation followed rigorous forward–backward protocols established in cross-cultural survey research. 20 Two independent bilingual translators (native Arabic speakers fluent in English) forward-translated the English instrument into Arabic. A reconciliation panel of three bilingual nursing faculty members reviewed both translations, resolved discrepancies, and produced a consensus Arabic version. Two different bilingual translators (native English speakers fluent in Arabic) then back-translated the Arabic version into English without access to the original. The research team compared back-translations with the original English instrument, confirming semantic and conceptual equivalence. Pilot testing with 30 nursing students (15 completing the Arabic version, 15 completing the English version) identified no comprehension difficulties or cultural inappropriateness.

Publicly available scales were used in accordance with their open-access licensing terms. The GAAIS is freely available for research use with appropriate citation. 14

Data collection procedures

Survey administration

Data collection occurred via Google Forms between 12 September and 11 November 2025. Students were presented with both Arabic and English versions simultaneously in a bilingual format within the same questionnaire. At the survey outset, students indicated their preferred primary language, which determined the default display order (preferred language shown first in left-to-right or right-to-left text orientation as appropriate), but both versions remained accessible throughout via toggle buttons to accommodate varying language comfort levels across technical terminology. This dual-language approach ensured comprehension while allowing students to reference either version as needed.

Unique anonymous survey links were generated for each invited student using Google Forms by embedding an individual, non-identifiable study code directly into the survey URL. Each participant received a personalized link that allowed the survey to be completed only once and was automatically deactivated after submission to prevent duplicate responses. To ensure complete anonymity, Google Forms’ email collection feature was disabled, and participants were not required to sign in to a Google account. Links were distributed through departmental academic coordinators rather than directly from the research team, and no IP address tracking or identifiable metadata were collected. Google Forms’ built-in timestamp logging was retained only to assess response patterns for quality control (early vs. late responders) but could not be linked to individual participants.

Recruitment utilized three channels: (1) institutional email lists managed by registrars (invitation emails sent from departmental addresses, not individual researchers), (2) learning management system announcements, and (3) in-class announcements conducted by non-faculty research coordinators to minimize coercion. Participants could pause and resume survey completion within a 72-h window to accommodate busy academic schedules. While this feature introduced a theoretical risk of inter-student consultation, the window was deliberately limited to 72 h (rather than longer durations) to minimize contamination risk. Post-hoc analysis revealed that 89.3% of respondents completed the survey in a single session (median completion time: 18 min), and only 4.2% used the full 72-h window, suggesting minimal opportunity for collaborative responses. Additionally, variability in response patterns and the absence of suspicious clustering in completion timestamps further supported data integrity. Automated email reminders were sent at one week and two weeks post-invitation to non-responders. No financial or academic incentives were offered.

Data quality assurance

Multiple quality control measures were implemented. Mandatory response fields minimized missing data (<0.5% missing on any item). Three embedded attention-check items (e.g. “To demonstrate attention, please select ‘Strongly Agree’ for this item”) were distributed throughout the survey. 21 Inattentive responding was operationally defined as: (1) failing any attention check, (2) completing the survey in <7 min (indicating insufficient engagement relative to the 47-item length), or (3) exhibiting invariant response patterns (selecting the same response option for >90% of items, suggesting non-reflective responding). 19 These criteria were applied programmatically during data cleaning using SPSS syntax. Six cases (0.96% of respondents) met exclusion criteria and were removed, yielding a final analytical sample of 622.
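The three exclusion criteria can be expressed as a single screening rule. The sketch below is an illustrative Python equivalent, not the SPSS syntax used in the study; the function name and argument layout are assumptions.

```python
def is_inattentive(attention_passed, minutes, responses):
    """Flag a response that meets any of the three exclusion criteria.

    attention_passed: list of booleans, one per attention-check item
    minutes: total completion time in minutes
    responses: list of Likert responses to the substantive items
    """
    # (1) Failing any attention check.
    failed_check = not all(attention_passed)
    # (2) Completing the survey in under 7 minutes.
    too_fast = minutes < 7
    # (3) Same response option chosen for more than 90% of items.
    most_common = max(responses.count(v) for v in set(responses))
    invariant = most_common / len(responses) > 0.90
    return failed_check or too_fast or invariant


# Share of submissions removed, assuming 622 retained plus 6 excluded.
excluded_pct = round(6 / (622 + 6) * 100, 2)
```

Under the assumption that the six exclusions came out of 628 submitted responses, the computed share matches the 0.96% reported in the text.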

Non-response bias was assessed by comparing early responders (those completing within the first two weeks,

Instrument reliability

Internal consistency was assessed using Cronbach's alpha coefficients for each scale in the final sample (

Statistical analysis

All analyses were performed using IBM SPSS Statistics version 28.0 (IBM Corp., Armonk, NY, USA). Descriptive statistics summarized demographic characteristics and study variables, including means, standard deviations, medians, and interquartile ranges for continuous variables, and frequencies and percentages for categorical variables. Normality was assessed using Shapiro–Wilk tests and visual inspection of histograms and Q–Q plots. All continuous variables exhibited significant departures from normality (

Primary analysis: Predictors of trust

Multiple linear regression identified predictors of the total trust score. Six predictor variables were entered simultaneously: perceived benefits, perceived risks, perceived applicability, barriers to learning AI, general attitudes toward AI, and attitudes toward AI in healthcare. Model fit was evaluated using

Moderation analyses

Hierarchical multiple regression tested whether prior AI education and academic year moderated the relationship between perceived risks and total trust score. 24
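For reference, a moderation model of this kind is conventionally written as below. The symbols are illustrative assumptions rather than the authors' notation: $\text{Risk}^{c}_i$ is the mean-centered perceived-risk score, $M_i$ is the moderator (prior AI education or academic year, coded and centered as the analysis requires), and $b_3$ is the interaction coefficient tested in the second step.

```latex
\begin{aligned}
\text{Step 1:}\quad \text{Trust}_i &= b_0 + b_1\,\text{Risk}^{c}_i + b_2\,M_i + \varepsilon_i \\
\text{Step 2:}\quad \text{Trust}_i &= b_0 + b_1\,\text{Risk}^{c}_i + b_2\,M_i
  + b_3\,\bigl(\text{Risk}^{c}_i \times M_i\bigr) + \varepsilon_i \\
\text{Simple slope of risk at } M = m:\quad
  \frac{\partial\,\text{Trust}}{\partial\,\text{Risk}^{c}} &= b_1 + b_3\,m
\end{aligned}
```

A nonzero $b_3$ means the slope of trust on perceived risk differs across levels of the moderator, which is what a moderation effect means in this framework.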

For each moderation analysis, Step 1 entered main effects (perceived risks and the moderator variable, mean-centered to reduce multicollinearity), and Step 2 added the interaction term (perceived risks × moderator). Significant interactions (

Statistical assumptions and sensitivity analyses

Regression assumptions were rigorously verified. Linearity was assessed through scatterplots of predicted values versus residuals (no curvilinear patterns observed). Independence of residuals was evaluated using the Durbin–Watson statistic (observed value: 1.98, within acceptable range 1.5–2.5). 26 Multicollinearity was assessed via variance inflation factors (VIFs); all VIFs were <5 (range: 1.23–3.87), indicating acceptable collinearity. 27 Homoscedasticity was examined through residual plots; evidence of heteroskedasticity prompted the use of heteroskedasticity-consistent (HC3) robust standard errors for all regression models. 28
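For readers unfamiliar with the Durbin–Watson statistic, it is the ratio of the sum of squared successive residual differences to the sum of squared residuals. The sketch below is a minimal illustration of that standard formula on made-up residuals, not a reproduction of the SPSS output.

```python
def durbin_watson(residuals):
    """Durbin-Watson statistic: values near 2 suggest no first-order autocorrelation."""
    numerator = sum(
        (residuals[t] - residuals[t - 1]) ** 2 for t in range(1, len(residuals))
    )
    denominator = sum(e * e for e in residuals)
    return numerator / denominator


# Made-up residuals that alternate in sign (negative autocorrelation).
dw_alternating = durbin_watson([1.0, -1.0, 1.0, -1.0])
# Made-up residuals that are identical (strong positive autocorrelation).
dw_constant = durbin_watson([1.0, 1.0, 1.0, 1.0])
```

The statistic ranges from 0 to 4, so the reported value of 1.98 sits very close to the no-autocorrelation midpoint.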

To address potential clustering effects due to students being nested within five institutions, we conducted sensitivity analyses. 29 First, we calculated the intraclass correlation coefficient (ICC) for the total trust score, which quantifies the proportion of variance attributable to institutional membership (ICC = 0.04, indicating minimal clustering). Second, we fitted separate regression models for each university to assess whether predictor–outcome relationships varied substantially across sites; results showed consistent directions and magnitudes of effects. Third, we re-ran the primary regression models with institution included as a dummy-coded fixed-effect covariate; this did not substantively alter the findings (all β coefficients changed by < 0.05). These analyses justified pooled analyses with robust standard errors rather than multilevel modeling.
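One common way to obtain an ICC of this kind is from a one-way random-effects ANOVA with institution as the grouping factor. The source does not state which estimator was used, so the sketch below is an assumption, shown on made-up data.

```python
def icc_oneway(groups):
    """ICC(1) from a one-way random-effects ANOVA.

    groups: list of lists, one list of scores per cluster (e.g. institution).
    """
    k = len(groups)
    n_total = sum(len(g) for g in groups)
    grand_mean = sum(sum(g) for g in groups) / n_total
    means = [sum(g) / len(g) for g in groups]

    ss_between = sum(len(g) * (m - grand_mean) ** 2 for g, m in zip(groups, means))
    ss_within = sum((x - m) ** 2 for g, m in zip(groups, means) for x in g)

    ms_between = ss_between / (k - 1)
    ms_within = ss_within / (n_total - k)

    # Adjusted average cluster size, which handles unequal group sizes.
    n0 = (n_total - sum(len(g) ** 2 for g in groups) / n_total) / (k - 1)
    return (ms_between - ms_within) / (ms_between + (n0 - 1) * ms_within)


# Made-up data: all variance lies between clusters, so the ICC is 1.
icc_max = icc_oneway([[1.0, 1.0], [3.0, 3.0]])
# Made-up data: identical cluster means, so the estimate is negative.
icc_none = icc_oneway([[1.0, 2.0, 3.0], [1.0, 2.0, 3.0]])
```

An estimate near zero, such as the reported 0.04, indicates that very little of the variance in trust scores lies between institutions.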

Due to the self-reported nature of all measures collected within a single survey administration, common-method bias was a potential concern. 30 To assess this risk, we conducted Harman's single-factor test using exploratory factor analysis with unrotated principal axis factoring. 31 All items were entered into a single factor analysis; if a single factor accounts for >50% of variance, common-method bias may be problematic. In our data, the largest unrotated factor accounted for 28.3% of total variance, well below the 50% threshold, suggesting that common-method bias did not substantially distort findings.

Additional sensitivity analyses included: (1) bootstrapping with 5000 resamples to generate bias-corrected 95% confidence intervals, which confirmed the stability of regression coefficients 32; (2) subgroup analyses stratified by sex, university, and computer proficiency, all demonstrating consistent patterns; and (3) screening for potential outliers using standardized residuals (absolute value > 3.0), with no cases meeting this criterion.

All tests were two-tailed with α = 0.05. Effect sizes (standardized β coefficients,

Results

Participant characteristics and descriptive statistics

A total of 622 nursing students participated in this multicenter cross-sectional study from five Saudi Arabian universities. The demographic profile of participants is presented in Table 1. The sample was predominantly female (85.9%,

Demographic characteristics of participants (

Participants were distributed across NBU (29.1%,

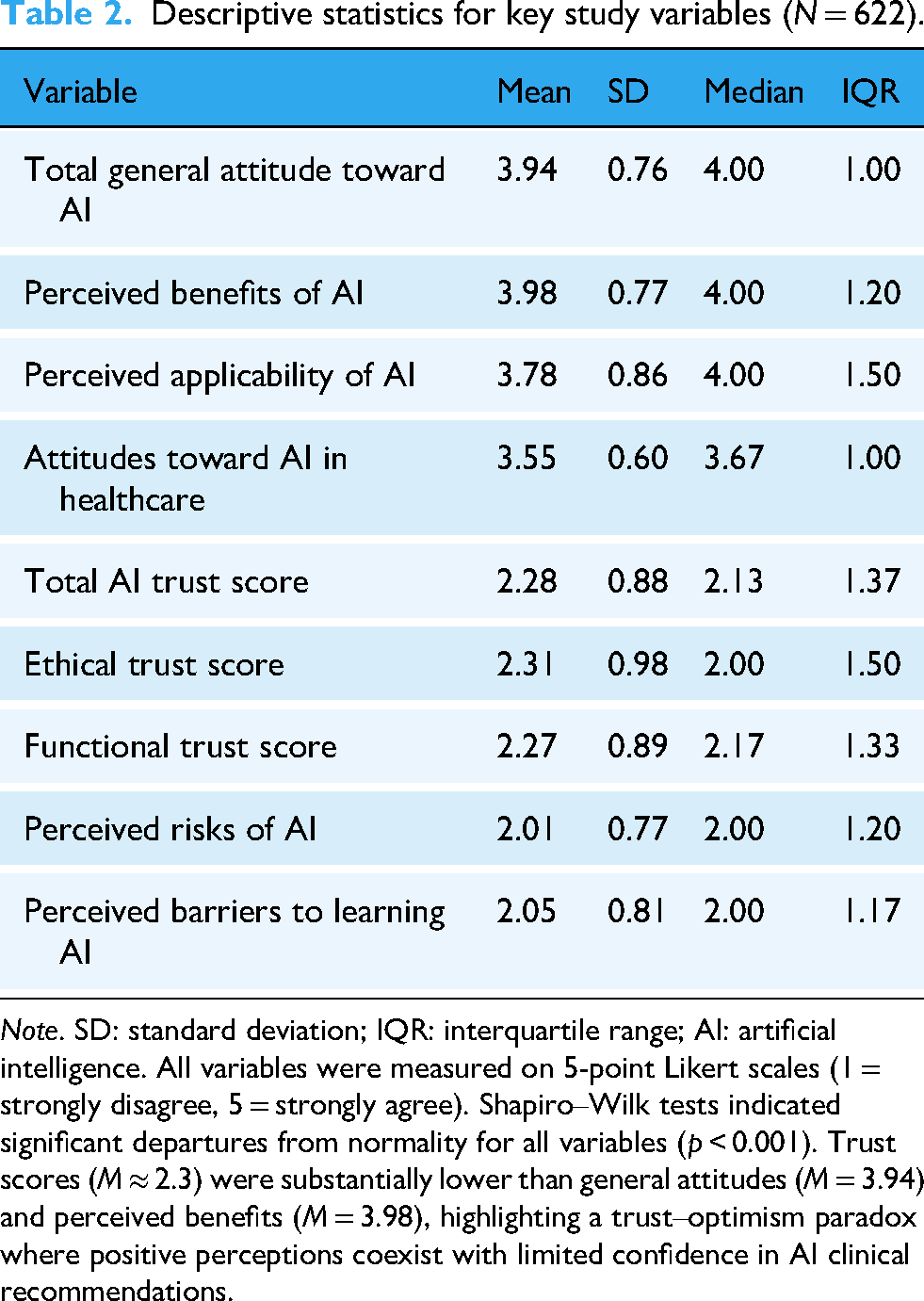

Table 2 displays descriptive statistics for all key study variables. Nursing students reported moderately high perceptions of AI benefits (

Descriptive statistics for key study variables (

A notable divergence emerged between these affirmative attitudes and students’ trust in AI systems, revealing a trust–optimism paradox. The total AI trust score was comparatively low (

Predictors of trust in AI

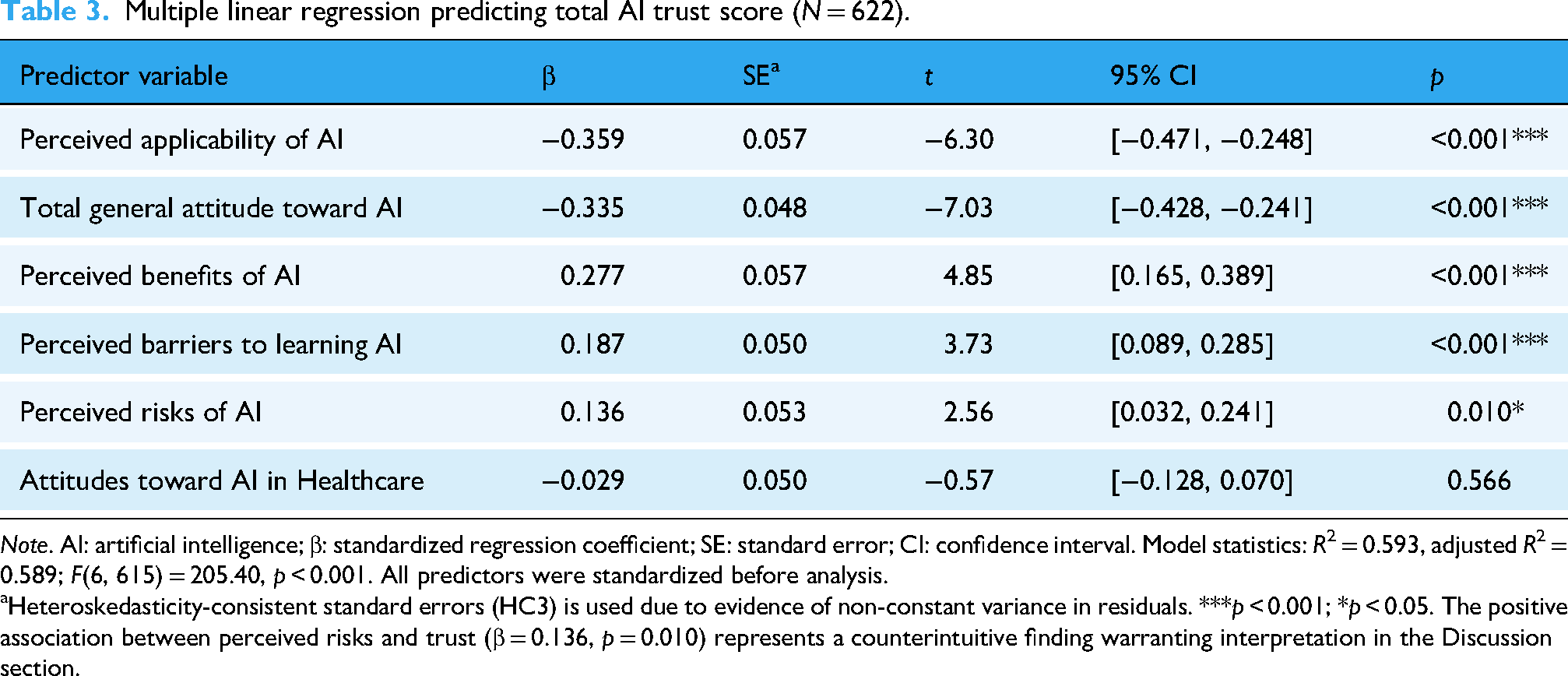

Multiple linear regression analysis identified significant predictors of nursing students’ trust in AI (Table 3). The model explained a substantial proportion of variance in AI trust (

Multiple linear regression predicting total AI trust score (

Heteroskedasticity-consistent (HC3) standard errors were used because of evidence of non-constant variance in residuals. ***

Three variables demonstrated positive associations with AI trust. Perceived benefits of AI emerged as the strongest positive predictor (β = 0.277, 95% CI [0.165, 0.389],

Two variables exhibited negative associations with trust. Perceived applicability of AI showed a strong inverse relationship with trust (β = −0.359, 95% CI [−0.471, −0.248],

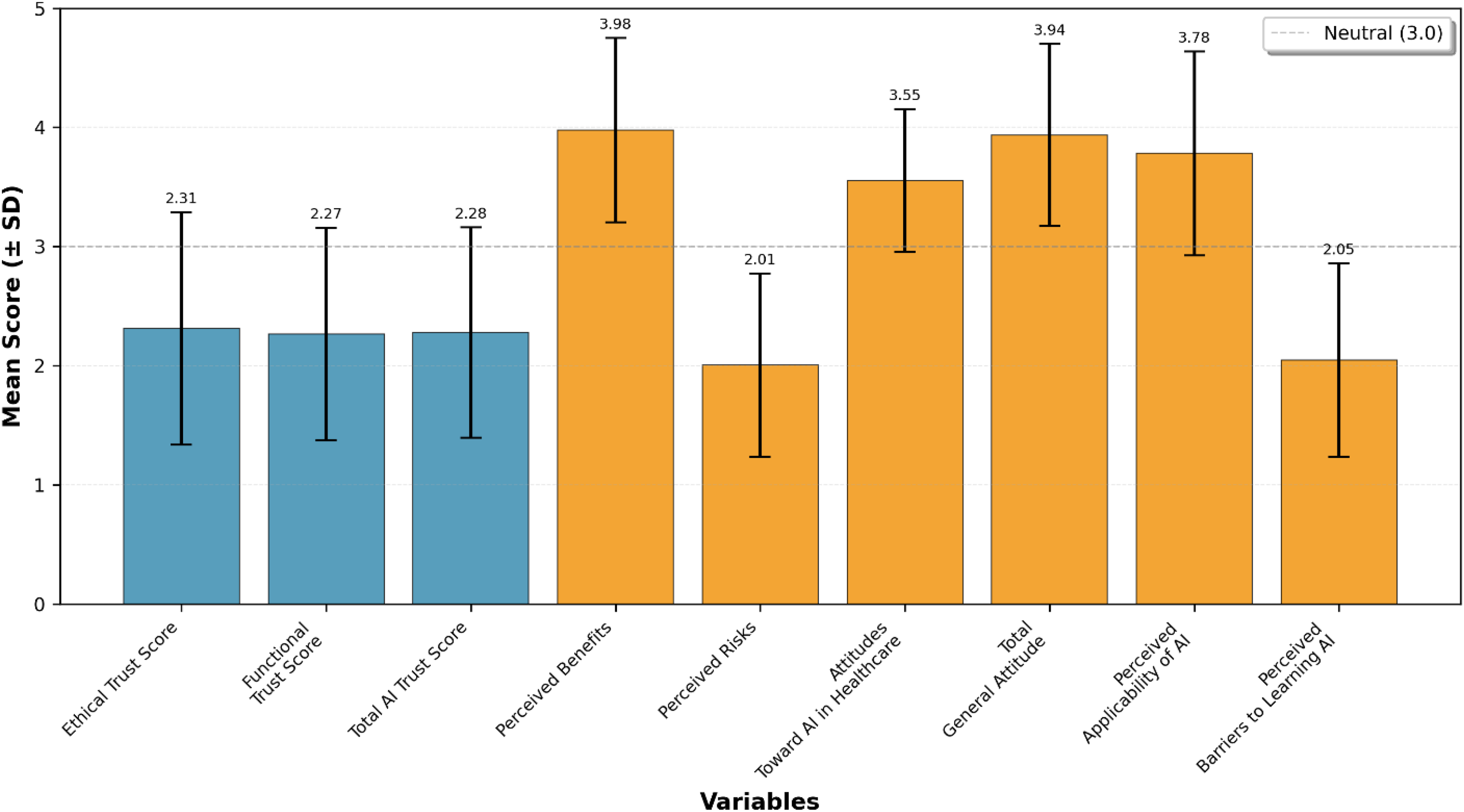

Figure 1 illustrates the disparity between students’ high ratings of AI benefits and attitudes versus their comparatively low trust scores. The pronounced gap between general attitudes (

Comparative mean scores of artificial intelligence (AI) trust, attitudes, perceived risks, and benefits (

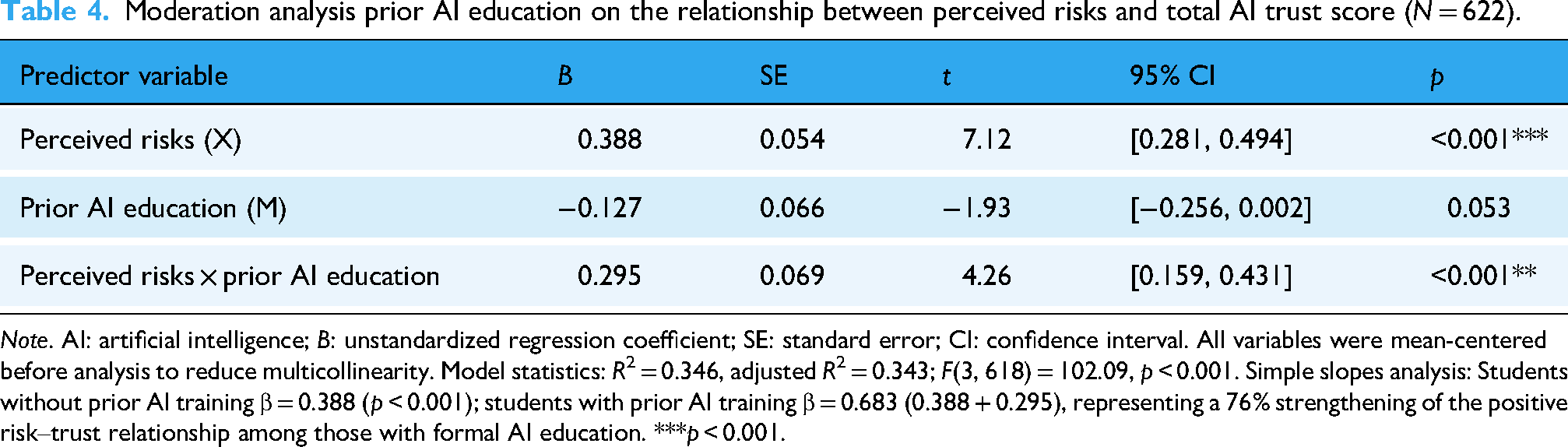

Moderation by prior AI education

Prior AI education significantly moderated the relationship between perceived risks and AI trust (β = 0.295, 95% CI [0.159, 0.431],

Moderation analysis of prior AI education on the relationship between perceived risks and total AI trust score (

Moderation by academic year

Student academic year demonstrated a significant moderating effect on the risks–trust relationship, with the interaction term for fourth-year students reaching statistical significance (β = −0.274, 95% CI [−0.481, −0.068],

(a) Visually presents both moderation effects, illustrating how educational and developmental contexts shape the relationship between risk perception and trust. (b) Moderating effects of academic year and prior artificial intelligence (AI) education on the relationship between perceived risks and AI trust.

Moderation analysis of academic year on the relationship between perceived risks and total AI trust score (

Discussion

This multicenter investigation reveals a profound incongruity between nursing students’ favorable attitudes toward AI (

The moderate-to-high ratings for perceived benefits (

The counterintuitive positive risk–trust association

Perhaps the study's most theoretically significant finding is the positive association between perceived risks and trust in AI (β = 0.136,

Drawing on Lee and See's 12 trust calibration framework, we interpret this finding as evidence that explicit risk awareness is associated with appropriately calibrated reliance rather than overtrust (automation bias) or undertrust (system disuse). This is consistent with nursing's professional emphasis on critical thinking and error prevention, 36 suggesting students who actively evaluate AI limitations develop more realistic mental models of system capabilities. Empirical support comes from Gaube et al., 19 who demonstrated greater AI acceptance when failure modes were transparently communicated, and from Pine and Mazmanian, 20 who documented how candid discussions of limitations enhance credibility in clinical technology adoption.

This positive risk–trust association may reflect a crucial distinction between naïve trust (unconditional confidence without critical evaluation) and informed trust (confidence grounded in a comprehensive understanding of both benefits and limitations). Students exhibiting high scores on both constructs may demonstrate mature professional judgment, recognizing that all clinical tools carry inherent risks requiring mitigation rather than avoidance. 21 Contemporary extensions of Mayer et al.'s 23 organizational trust framework support this interpretation, emphasizing that integrity (transparency about limitations) and benevolence (active implementation of safeguards) alongside technical ability constitute critical dimensions of healthcare AI trust.7,37

When relevance undermines confidence: The applicability–trust paradox

The inverse relationship between perceived applicability and trust (β = −0.359,

We advance the implementation realism hypothesis to explain this phenomenon: as students develop a concrete understanding of AI applications in medication management, vital sign monitoring, and documentation, they simultaneously identify technical, organizational, and ethical challenges hindering effective integration. These may include interoperability limitations, workflow disruptions, and liability ambiguities, factors that temper enthusiasm generated by abstract portrayals of AI capabilities. This interpretation is consistent with Sendak et al., 24 who documented multiple “implementation challenges” undermining otherwise robust predictive models due to poor EHR integration, alert fatigue, and misalignment between algorithmic outputs and clinical decision processes.

Students maintaining globally positive attitudes may have engaged less critically with implementation scenarios, sustaining optimistic but abstract perspectives, whereas those interrogating concrete applications adopt more skeptical assessments of present trustworthiness. This shift, described by Shaw et al. 25 as moving from “technological solutionism” to “pragmatic evaluation” in healthcare professional development, is observed as a cross-sectional association rather than a causal progression in our data.

The education multiplier effect: How training amplifies risk awareness

The substantial moderating effect of prior AI education (β = 0.295,

AI education likely equips students with conceptual frameworks for organizing risk-related information. Without such scaffolding, risk awareness may manifest as diffuse anxiety; educational structure reframes the same awareness as organized knowledge about specific, addressable challenges. 26 For example, understanding algorithmic bias as a well-characterized issue with established detection and mitigation strategies may convert vague concern into manageable technical knowledge, paradoxically enhancing trust through comprehensive understanding.22,25

Nursing curricula emphasize systematic approaches to risk identification, assessment, and mitigation, competencies directly transferable to AI evaluation. 38 Educational strategies connecting AI-related risks to familiar clinical risk management frameworks may help students conceptualize AI systems as manageable tools requiring professional oversight. Empirical support comes from Pinto-Coelho, 28 who found nursing informatics curricula integrating AI within established quality improvement frameworks produced significantly higher trust scores than standalone technology-focused instruction.

Contemporary approaches to AI education increasingly prioritize critical digital health literacy: the ability to appraise technology's appropriateness, interrogate underlying assumptions, and advocate for patient-centered implementation.29,30 This empowerment-oriented perspective may explain why students with higher digital health literacy demonstrate greater trust despite heightened risk awareness: they perceive themselves as active agents in AI governance rather than passive recipients of imposed systems. 37 Building on Norman and Skinner's foundational eHealth literacy model, 31 recent updates such as eHealth Literacy 3.0 32 emphasize that competence encompasses both functional skills and critical evaluation capabilities. When students develop these dual dimensions, they may feel equipped to identify and mitigate AI limitations, reflecting confidence in their own ability to use AI responsibly rather than blind faith in technological infallibility. However, our cross-sectional design precludes determining whether education causes this effect or reflects self-selection among more technologically inclined students. 39

Clinical exposure without educational support: The professional skepticism effect

Our findings reveal a marked attenuation of the risk–trust relationship among senior nursing students (β = −0.274,

This developmental trajectory suggests that intensive clinical exposure accentuates discrepancies between AI's theoretical promise and practical performance, without equipping learners with frameworks to contextualize limitations. 40 Fourth-year students typically undertake capstone rotations assuming near-complete patient care responsibilities, exposing them to scenarios where AI tools are absent, poorly integrated, or underperforming due to data quality issues or workflow misalignment, conditions that may be associated with disillusionment in the absence of structured educational strategies.18,41

Nursing scholarship has long documented the “theory–practice gap,” wherein classroom instruction fails to align with clinical realities.27,42 Our results indicate this gap may be particularly pronounced for emerging technologies such as AI, where curricular content often portrays idealized capabilities that clash with underdeveloped clinical implementations. Without ongoing education that reconciles these discrepancies, clinical exposure may be associated with cynicism rather than sophisticated evaluative skills. 3

It is important to recognize that students’ clinical training occurs across diverse hospital settings with varying levels of AI integration. Teaching hospitals affiliated with KAU and SEU actively utilize AI-enhanced EHRs, predictive analytics for sepsis detection, and AI-assisted diagnostic imaging. In contrast, regional hospitals serving NBU, BU, and QU students have limited AI implementation, primarily restricted to automated vital sign monitoring and basic documentation systems. This variability in AI exposure may partially explain the professional skepticism effect: students assigned to AI-limited clinical sites may develop skepticism based on the absence of technology rather than experience with poorly implemented systems, potentially confounding the relationship between clinical seniority and trust attenuation. Future longitudinal research should explicitly measure students’ direct exposure to functional AI systems in clinical settings to establish temporal precedence and clarify these mechanisms.

Functional and ethical trust: Equivalent concerns

Although both functional trust (confidence in technical performance, mean = 2.27) and ethical trust (confidence in fairness and value alignment, mean = 2.31) were low, their near-equivalence warrants attention. Prior scholarship often frames ethical concerns as the dominant barrier to AI adoption,16,21 yet our findings suggest nursing students exhibit comparable skepticism toward technical reliability.

This parity likely reflects factors intrinsic to nursing practice and current AI maturity. Nursing operates under stringent accountability standards where errors can result in severe patient harm. 5 Consequently, reliability concerns are amplified: students may recognize that even a system with 95% sensitivity still misses 5% of patients who truly have the condition (false negatives), a margin that can be unacceptable in high-stakes clinical contexts despite being considered excellent by machine learning benchmarks. 43
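The arithmetic behind this concern can be made concrete. The following is a minimal sketch for illustration only: the 95% sensitivity figure comes from the text above, whereas the caseload of 1,000 true cases and the function name are hypothetical and are not drawn from the study data.

```python
def expected_missed_cases(sensitivity: float, true_cases: int) -> float:
    """Expected false negatives among patients who truly have the condition."""
    if not 0.0 <= sensitivity <= 1.0:
        raise ValueError("sensitivity must be between 0 and 1")
    false_negative_rate = 1.0 - sensitivity
    return false_negative_rate * true_cases


# Hypothetical caseload chosen for illustration (assumption, not study data).
missed = expected_missed_cases(sensitivity=0.95, true_cases=1000)
print(f"Expected missed cases per 1,000 true cases: {missed:.0f}")
```

Sensitivity is defined relative to patients who have the condition, so the 5% miss rate applies to true cases rather than to all patients screened.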

Moreover, many high-performing AI models rely on deep learning architectures that lack interpretability, generating output without transparent reasoning. 44 This opacity conflicts with nursing's emphasis on critical thinking and clinical reasoning, core competencies requiring practitioners to justify interventions. 45 Students may therefore question their ability to exercise professional judgment when delegating decisions to systems they cannot interrogate, creating functional trust deficits independent of ethical concerns. 22

Low ethical trust scores likely reflect heightened awareness of widely publicized issues such as algorithmic bias, privacy breaches, and inequitable outcomes, coupled with limited confidence that current systems adequately mitigate these risks.9,21 Nursing curricula increasingly integrate social justice and health equity principles; applying these lenses, students may perceive AI as vulnerable to perpetuating disparities through biased training data and opaque decision-making. 3

Disciplinary contrasts: Nursing versus medical students

Placing these findings within broader healthcare education literature highlights notable disciplinary contrasts. Research on medical students’ perceptions of AI46,47 consistently reports higher trust levels and stronger enthusiasm for adoption compared to nursing students. Three interrelated factors may explain this divergence.

First, physicians’ diagnostic and treatment-oriented roles align closely with current AI strengths, particularly in pattern recognition and predictive analytics central to medical decision-making.2,38 In contrast, nursing's holistic, relationship-centered orientation, encompassing psychosocial support, advocacy, and care coordination, may feel less compatible with AI's technical focus, fostering ambivalence about relevance and value.3,47

Second, medical education emphasizes clinical autonomy and decision-making authority, positioning AI as an empowering augmentation of professional judgment. Nursing's traditionally hierarchical structure, where many decisions require physician orders, may frame AI as an additional external constraint rather than an enabler, potentially explaining lower trust.48,49

Third, gender dynamics may play a role in the specific context of this study. Nursing is a predominantly female profession globally, with women comprising approximately 87% of registered nurses in the United States, 50 and our sample similarly exhibited a strong female predominance (85.9%), reflecting both global nursing workforce patterns and enrollment distributions within the participating institutions. While this distribution is broadly representative of nursing demographics internationally, it may not capture the full range of gender compositions across all Saudi nursing programs. Therefore, interpretations of gender-related differences in technology attitudes should be made with caution, recognizing that our cross-sectional data cannot establish causal relationships between gender and trust patterns. Importantly, the gender-stereotype hypothesis, suggesting lower technical aptitude among women, lacks empirical support and is not an appropriate explanatory framework for the present findings. Instead, structural features of Saudi nursing education, particularly institutional gender segregation, may be associated with distinct professional identities, socialization processes, and pedagogical climates that influence technology attitudes through mechanisms independent of individual technical capability.

Saudi higher education has historically operated within a gender-segregated framework, including nursing programs delivered in male-only and female-only institutions, which may be associated with distinct professional identities, socialization processes, and pedagogical climates.51,52 Prior research on Saudi nursing education indicates that male and female nursing students experience different role expectations, clinical exposures, and professional socialization pathways, 53 factors that may influence perceptions of technological competence and institutional trust independent of biological sex.

Accordingly, future research should explicitly examine whether trust patterns differ between male-only, female-only, and mixed-gender nursing programs within the Saudi context, and whether the trust–optimism paradox observed in this study generalizes across diverse nursing populations internationally. These disciplinary and contextual differences underscore the importance of profession-specific and education-system-sensitive research, rather than extrapolating gender effects across healthcare domains or assuming universal patterns of technology acceptance.

The role of AI education content and clinical AI exposure

An important consideration is the nature and focus of AI education received by the 56.1% of students reporting prior training. Our study assessed AI education dichotomously without capturing the specific content, duration, pedagogical approach, or whether curricula emphasized AI's role in clinical practice versus didactic learning support. This distinction is critical: if AI education primarily focuses on how AI tools (e.g. ChatGPT and literature search engines) can enhance academic studying and assignment completion, students may develop enthusiasm for AI's educational utility while remaining uninformed about its clinical applications in diagnostic support, risk prediction, or treatment planning. This compartmentalization between “AI for learning” and “AI for clinical practice” may partially explain the trust–optimism paradox: students recognize AI's benefits in familiar academic contexts (contributing to high perceived benefits scores) while remaining skeptical about its reliability in unfamiliar, high-stakes clinical decision-making contexts (resulting in low trust scores).

Furthermore, the extent to which students have directly encountered AI systems during clinical rotations remains unmeasured in our study. Students may have theoretical knowledge about AI from coursework, but lack hands-on experience with AI-enabled clinical decision support systems, predictive analytics dashboards, or AI-assisted diagnostic tools in practice settings. The theory–practice gap documented in nursing education42,43 suggests that abstract classroom presentations of AI's capabilities may not translate into confidence when students observe (or fail to observe) AI implementation in actual care delivery, though our cross-sectional design prevents determining temporal ordering of educational exposure, clinical experience, and trust development. The variation in clinical site AI adoption, with some teaching hospitals actively using AI-enhanced systems while regional hospitals have minimal integration, creates differential exposure that our sampling strategy captured institutionally but did not measure individually. Future longitudinal research should assess: (1) the specific content and clinical focus of AI education programs, (2) students’ direct hands-on experience with AI tools during clinical placements, and (3) whether the quality and context of AI exposure (didactic-only vs. clinically integrated education and observation-only vs. hands-on use) differentially predict trust formation over time.

Limitations and methodological considerations

Several limitations warrant acknowledgment. First, the cross-sectional design restricts causal inference; although prior AI education moderated the risk–trust relationship, we cannot determine whether education caused this effect or reflected self-selection among more technologically inclined students. The temporal ordering of educational exposure, clinical experience, and trust development remains unclear. Longitudinal studies tracking cohorts through structured AI curricula would provide stronger evidence of causal relationships and establish whether observed associations represent developmental trajectories or cohort differences.

Second, reliance on self-reported perceptions may not accurately predict behavior when students encounter AI systems in real clinical contexts. The well-documented attitude–behavior gap in technology adoption suggests that expressed trust may shift substantially under conditions of actual decision-making. Future research incorporating behavioral measures or simulation-based assessments would offer valuable complementary insights.

Third, the “prior AI education” variable was measured dichotomously, without capturing duration, content, pedagogical approach, or instructional quality. Given our finding that education significantly moderates trust formation, though causality cannot be established from cross-sectional data, a more granular characterization of educational interventions is essential. Specifically, future studies should document: (1) whether AI education focuses on clinical applications versus academic study support, (2) the extent of hands-on experience with AI clinical tools, (3) integration of AI content throughout curricula versus standalone courses, and (4) pedagogical approaches (e.g. critical evaluation versus uncritical promotion). These dimensions may differentially influence trust calibration and should be measured explicitly.

Fourth, the study did not assess students’ direct experience with AI systems in either clinical or educational settings, relying instead on self-reported prior training and application use. Students may have encountered AI passively (observing clinicians use AI tools) or actively (personally operating AI systems), experiences that may be associated with different trust trajectories. Additionally, we did not measure the specific types of AI applications used (e.g. consumer tools such as ChatGPT for studying vs. clinical decision support systems in hospitals), limiting our ability to determine whether familiarity with consumer AI translates into trust in clinical AI. Future research should distinguish between different modalities and contexts of AI exposure to clarify which experiences are most strongly associated with appropriate trust calibration.

Fifth, common-method bias arising from self-report within a single survey administration is a potential concern. Although Harman's single-factor test suggested that this bias did not substantially distort findings (the largest factor accounted for 28.3% of variance), single-source data collection may inflate associations between perceptually measured constructs. Future research employing multi-method approaches (e.g. combining self-report surveys with objective measures of AI knowledge, behavioral simulations of trust-dependent decisions, or triangulation with faculty assessments) would strengthen confidence in observed relationships.
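For readers unfamiliar with Harman's single-factor test, the sketch below shows one common way the "largest factor" share can be approximated: as the largest eigenvalue of the item correlation matrix divided by the number of items (an unrotated principal-component approximation). This is an assumption about the procedure, since the extraction method is not described here, and the 3 × 3 correlation matrix is a toy example, not study data. A share below the conventional 50% cutoff is usually read as little evidence of a single dominant method factor.

```python
import math


def largest_eigenvalue(matrix, iterations=500):
    """Dominant eigenvalue of a symmetric positive semi-definite matrix (power iteration)."""
    n = len(matrix)
    # Slightly uneven start vector so it is not orthogonal to the dominant direction.
    vector = [1.0 + 0.01 * i for i in range(n)]
    norm = math.sqrt(sum(x * x for x in vector))
    vector = [x / norm for x in vector]
    eigenvalue = 0.0
    for _ in range(iterations):
        product = [sum(matrix[i][j] * vector[j] for j in range(n)) for i in range(n)]
        norm = math.sqrt(sum(x * x for x in product))
        if norm == 0.0:
            return 0.0
        vector = [x / norm for x in product]
        eigenvalue = norm  # for a unit vector, ||A v|| converges to the top eigenvalue
    return eigenvalue


def first_factor_share(correlation_matrix):
    """Share of total variance captured by the first unrotated component."""
    total_variance = len(correlation_matrix)  # trace of a correlation matrix
    return largest_eigenvalue(correlation_matrix) / total_variance


# Toy correlation matrix (hypothetical): three items, all pairwise r = 0.5.
toy_corr = [
    [1.0, 0.5, 0.5],
    [0.5, 1.0, 0.5],
    [0.5, 0.5, 1.0],
]
share = first_factor_share(toy_corr)
print(f"First-factor share of variance: {share:.1%}")
```

In practice a statistics package would be used instead of hand-written power iteration; the pure-Python version is shown only to keep the example self-contained.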

Sixth, while we addressed potential clustering effects by calculating ICCs (ICC = 0.04) and conducting site-specific sensitivity analyses, students nested within institutions and clinical sites may share unmeasured contextual influences (e.g. institutional culture toward technology, faculty attitudes, and specific AI systems encountered). Although our analyses suggested minimal clustering, the use of fixed-effects adjustments rather than multilevel modeling may have underestimated institution-level variance. Future studies employing hierarchical linear modeling could better partition variance attributable to individual, institutional, and clinical site factors.
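To illustrate why even a small ICC can matter when clusters are large, the sketch below applies the standard Kish design-effect approximation, DEFF = 1 + (m − 1) × ICC. It is a rough sensitivity illustration, not a reanalysis: the sample size, number of institutions, and ICC are taken from this article, but the equal cluster size is a simplifying assumption (the institutions contributed unequal numbers of participants), and the approximation is most directly applicable to estimates such as means rather than to regression coefficients for individual-level predictors.

```python
def design_effect(icc: float, mean_cluster_size: float) -> float:
    """Kish approximation: variance inflation due to clustering."""
    return 1.0 + (mean_cluster_size - 1.0) * icc


n_students = 622        # reported sample size
n_institutions = 5      # reported number of institutions
icc = 0.04              # reported intraclass correlation

# Assumption: equal cluster sizes, used only to keep the illustration simple.
mean_cluster_size = n_students / n_institutions

deff = design_effect(icc, mean_cluster_size)
effective_n = n_students / deff

print(f"Mean cluster size: {mean_cluster_size:.1f}")
print(f"Design effect: {deff:.2f}")
print(f"Approximate effective sample size: {effective_n:.0f}")
```

Because the design effect grows with cluster size, a low ICC does not by itself rule out meaningful clustering, which is one reason hierarchical modeling is suggested for future studies.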

Seventh, the predominantly female sample (85.9%) reflects global nursing demographics but may limit generalizability to male-majority nursing programs and to educational systems with different gender structures. Although three single-gender universities (Northern Border, Qassim, and Bisha) collectively contributed 71.4% of participants and mixed-gender institutions contributed 28.6%, this distribution is representative of the sampled institutions rather than all nursing education contexts.

Whether the trust–optimism paradox and education multiplier effect observed in this study generalize to male-majority nursing populations or to healthcare education systems outside Saudi Arabia requires empirical verification. Additionally, gender segregation within Saudi nursing education may be associated with distinct professional socialization and learning environments that influence technology attitudes through institutional and pedagogical mechanisms beyond individual gender identity. Future research should therefore examine trust patterns across diverse gender compositions, institutional models, and cultural contexts internationally.

Eighth, the Saudi Arabian context, characterized by rapid digital health expansion aligned with Vision 2030 initiatives and gender-segregated nursing education, may limit generalizability to other cultural and healthcare contexts. Trust formation mechanisms observed in this study may differ in settings with different regulatory environments, patient expectations, or professional norms, though our cross-sectional design cannot establish whether contextual factors cause these differences.

Implications for nursing education and practice

These findings suggest important implications for nursing curriculum development and workforce preparation, though the cross-sectional design precludes definitive causal recommendations. The education multiplier effect, wherein formal AI training strengthens the positive association between risk awareness and trust by 76%, provides empirical justification for integrating structured AI education throughout nursing programs. However, the content and pedagogical approach of such education warrant careful consideration. Based on the observed associations in our data, curricula should:

Integrate clinical and ethical dimensions: AI education must extend beyond technical functionality to encompass critical appraisal of algorithmic bias, transparency limitations, and clinical applicability. The positive risk–trust association suggests that transparent discussion of limitations is associated with enhanced confidence when coupled with frameworks for risk mitigation.
Bridge theory and practice: The professional skepticism effect observed among fourth-year students suggests that clinical exposure without concurrent educational support may be associated with disillusionment. AI education should be distributed throughout clinical rotations, not confined to preclinical didactic coursework, with explicit connections between theoretical capabilities and practical implementations that students observe (or notably fail to observe) in clinical settings.
Emphasize hands-on experience: Students should have opportunities to directly interact with AI clinical decision support systems, predictive analytics tools, and AI-assisted diagnostic platforms in supervised settings, moving beyond passive observation to active engagement that may support competence and appropriate trust calibration.
Distinguish educational versus clinical AI applications: Curricula should explicitly differentiate between AI tools that support academic learning (e.g. literature search engines and study assistants) and those designed for clinical decision-making (e.g. sepsis prediction algorithms and medication safety alerts), ensuring students develop informed trust in clinically relevant systems rather than generalized enthusiasm based on educational technology familiarity.
Foster critical digital health literacy: Education should cultivate students’ capacity to evaluate AI appropriateness for specific clinical contexts, interrogate underlying assumptions and training data, identify potential biases, and advocate for patient-centered implementation rather than promoting uncritical technology adoption.

For healthcare organizations implementing AI systems in clinical training environments, findings suggest that transparent communication about AI limitations, structured opportunities for supervised AI use, and integration of AI tools into clinical workflows visible to students may help limit the development of professional skepticism. The theory–practice gap identified here suggests that discrepancies between classroom portrayals of AI's capabilities and students’ observations of absent or poorly integrated clinical AI systems may undermine trust; organizations should therefore ensure that educational partnerships involve exposure to high-quality AI implementations rather than exclusively AI-limited settings.

Future research directions

Several research directions emerge from these findings:

Longitudinal studies tracking trust trajectories: Following nursing student cohorts from program entry through graduation and into early professional practice would clarify whether the trust–optimism paradox resolves, persists, or intensifies with professional experience, and whether educational interventions produce enduring effects on trust calibration or reflect pre-existing individual differences.
Intervention studies evaluating educational approaches: Experimental or quasi-experimental designs comparing different AI education modalities (e.g. critical evaluation-focused vs. technology-promotion-focused curricula; integrated vs. standalone courses; and hands-on vs. lecture-only instruction) would provide causal evidence identifying the most effective pedagogical strategies for fostering appropriate trust.
Behavioral measures of trust: Research incorporating behavioral simulations, clinical decision-making scenarios, or actual AI system usage patterns would complement self-reported trust measures and illuminate the attitude–behavior relationship in high-stakes clinical contexts.
Comparative studies across healthcare disciplines: Parallel investigations of medical, pharmacy, and allied health students would clarify whether trust formation mechanisms differ by professional role, clinical responsibilities, or disciplinary values, informing profession-specific educational strategies.
Cross-cultural validation: Replication in diverse international contexts would assess generalizability beyond the Saudi Arabian setting and identify culturally specific trust determinants requiring tailored educational approaches.
Granular measurement of AI exposure: Studies should measure specific dimensions of AI education (content focus, duration, pedagogy, and clinical integration) and direct AI system experience (passive observation vs. active use; consumer vs. clinical applications) to identify which exposure types are most strongly associated with informed trust.
Gender-comparative studies: Within the Saudi context, research comparing male-only, female-only, and mixed-gender nursing programs would clarify whether gender composition or institutional structures influence technology attitudes, while international studies would assess whether findings generalize across nursing's predominantly female global workforce.

Conclusions

This multicenter study of 622 nursing students across five Saudi Arabian universities reveals a significant trust–optimism paradox: students recognize AI's theoretical benefits yet demonstrate substantially lower trust in AI clinical recommendations, representing a 42% perception–trust gap.
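The derivation of the 42% figure is not stated here. One plausible reading, offered only as an assumption, is a relative gap: the difference between a perception mean and the trust mean, divided by the perception mean. The sketch below applies that hypothetical formula to the means reported in the abstract (attitudes 3.94, perceived benefits 3.98, trust 2.28, all on a five-point scale).

```python
def relative_gap(perception_mean: float, trust_mean: float) -> float:
    """Relative shortfall of trust compared with a perception score (assumed formula)."""
    if perception_mean <= 0:
        raise ValueError("perception_mean must be positive")
    return (perception_mean - trust_mean) / perception_mean


trust_mean = 2.28  # overall trust, as reported in the abstract
perception_means = {
    "attitudes toward AI": 3.94,
    "perceived benefits": 3.98,
}

gaps = {label: relative_gap(mean, trust_mean) for label, mean in perception_means.items()}
for label, gap in gaps.items():
    print(f"{label}: relative gap = {gap:.1%}")
```

Under this assumed formula, both comparisons give a gap between 42% and 43%, consistent with the reported figure, but the authors may have used a different calculation.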

Contrary to conventional assumptions, perceived risks positively predicted trust (β = 0.136,

These findings carry important implications for nursing education, though the cross-sectional design precludes causal conclusions. The education multiplier effect provides empirical justification for integrating structured AI curricula that transparently address both capabilities and limitations. The professional skepticism effect observed among senior students suggests that clinical exposure alone, without educational scaffolding, may be associated with disillusionment rather than sophisticated evaluative skills, highlighting the need for AI education distributed throughout clinical rotations rather than confined to preclinical coursework.

The near-equivalent concerns about functional reliability and ethical dimensions indicate that nursing students’ trust deficits encompass both technical performance and value alignment, requiring curricula that integrate computational competencies with critical appraisal of algorithmic bias, transparency limitations, and clinical applicability. Educational approaches should foster critical digital health literacy, empowering students to evaluate AI appropriateness for specific contexts, interrogate underlying assumptions, and advocate for patient-centered implementation rather than promoting uncritical adoption.

Future longitudinal research is essential to establish temporal precedence and causal mechanisms. Studies should track trust trajectories from program entry through early professional practice, measure granular dimensions of AI educational exposure (content focus, pedagogical approach, and clinical integration), assess direct hands-on experience with clinical AI systems, and employ experimental designs to evaluate which educational modalities most effectively cultivate appropriate trust calibration. Cross-cultural replication would clarify generalizability beyond the Saudi Arabian context and identify culturally specific trust determinants.

In conclusion, nursing students’ trust in AI is dynamic and shaped by educational exposure and clinical experience in complex, context-dependent ways. Transparent, evidence-based AI education integrating technical and ethical dimensions is associated with calibrated trust that balances benefit recognition with limitation awareness. As healthcare systems worldwide accelerate AI integration, preparing the nursing workforce through thoughtfully designed curricula becomes essential for safe, effective, and equitable technology adoption in patient care. 54

Footnotes

Acknowledgements

The authors extend their appreciation to the Deanship of Scientific Research at NBU, Arar, Saudi Arabia, for funding this research work through the project number (NBU-FFR-2026-3326-04).

Ethical approval

This study was approved by the Nursing Research Ethical Committee at KAU (Reference No. 1M.176) and conducted in accordance with the Declaration of Helsinki principles.

Author contributions

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the Deanship of Scientific Research at NBU (grant number NBU-FFR-2026-3326-04).

Declaration of conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Data availability statement

Due to privacy restrictions, the dataset is not publicly available. However, de-identified data may be provided by the corresponding author upon reasonable request.

AI contribution

The authors used AI-assisted tools (Microsoft Copilot and ChatGPT) for improving clarity and grammar. No AI tool was used for data analysis, interpretation, or generating original scientific content. All intellectual contributions and final decisions were made by the authors.