Abstract

Objective

Artificial intelligence (AI)-based clinical decision support systems (AI-CDSS) have the potential to improve many facets of care, whether by unlocking new analysis methods, improving efficiency, or increasing patient safety. As AI-CDSS begin to see further real-world use, there is an urgent need to understand how user experiences develop over time, all the more so given these systems’ dynamic, iterative nature. To explore this gap, we conducted a scoping review aiming to map user experiences with AI-CDSS, along with barriers and facilitators, and to synthesize an overview of how these experiences develop over time.

Method

Following the scoping review methodology of Arksey and O’Malley, we searched three databases and retrieved 257 records, 16 of which met the inclusion criteria. After identifying reported experiences, we carried out a reflexive thematic analysis and a “best-fit” synthesis to explore reported user experiences over time.

Results

Nine user experience themes, spanning two domains and comprising 23 sub-themes, emerged from our analysis. Themes include clinical context, clinical users, learnability, usability, trust, and usefulness. Temporal mapping of experiences highlights their dynamic, interconnected nature and shows how they develop over time. These findings enrich the current understanding of AI-CDSS user experience and carry implications for design. We additionally discuss gaps in current knowledge and opportunities for future work.

Conclusions

Long-term experiences, particularly those arising from changes in model performance or from iterative updates to AI-CDSS, are sparsely described. Future work in this area should explore in more detail how experiences unfold over time, alongside AI-CDSS as they evolve.

Keywords

Introduction

Recent years have seen a large and accelerating interest in the use of artificial intelligence (AI) technology, not least in healthcare, where these technologies may be key to improving many facets of care. Recent work has, for instance, explored AI-supported cancer detection, 1 AI-enhanced patient–doctor consultations, 2 AI-powered systems for managing conditions such as diabetes3,4 and, more broadly, mental health. 5

Clinical decision support systems (CDSS) are systems that support clinicians in their decision-making. 6 These systems have traditionally been rule- or heuristic-based, relying on human experts to manually craft knowledge bases.6,7 More contemporary systems, however, use emerging AI technology to support decision-making and are referred to as AI-based clinical decision support systems (AI-CDSS).8,9 In this context, AI can be described as enabling machines to carry out operations such as learning, reasoning, and problem solving similar to human intelligence. 9 A recent systematic review, for example, highlights that AI-CDSS may improve the accuracy, timeliness, and efficiency of diagnosis and treatment decisions. 9 Successful real-world use, however, has proven highly challenging.9–13

While there is significant focus on the design,14–16 implementation4,17,18 and evaluation4,7–9 of AI-CDSS, more emphasis should be placed on exploring and understanding the user experience of these systems,7,19–23 particularly their use over time.

A unique aspect of AI-CDSS is their dynamic nature. The experience of engaging with AI-CDSS differs over time because the performance and output of these systems can change.24,25 This has several direct implications for user experiences. For instance, the usefulness of outputs can change for better or worse, affecting trust and raising ethical concerns. Prior work on AI-CDSS has, for example, noted how contextual use changes with medical expertise 15 and how trust in a technology remains a dynamic factor shaped by experiences with the system over time.15,26,27 Trust in a system can vary with positive and negative experiences over time. 27 It can also develop as the technology matures and receives functional updates, an expected but often overlooked aspect of AI technology. 24 This system evolution, in line with the fast-paced nature of AI development, requires careful alignment between AI, humans, and society. 24 Broader work in human–computer interaction has additionally shown that experiences of adopting technology also tend to be dynamic over time.28,29 Users, for instance, initially need to invest time and effort in learning to operate these technologies or in developing expertise in their use, which changes how they use them. 30 Taken together, these insights suggest that users’ experiences with AI-CDSS change significantly over time. This happens in the short term as users adopt the technology, and also later in response to naturally occurring performance changes and updates to the technology.

User experience has been described as covering all aspects of end-user interaction with a product or technology, and as arising from the user’s state, context, predispositions, expectations, needs, and mood.31,32 A key aspect of user experience is that it is affected by prior experiences and, in turn, affects future experiences. 32 It is therefore important to consider the context of user experience and the time span of user experience interactions. 31

Many user experience (UX) methods are nevertheless limited in scope, relying on limited experience sampling, that is, surveys before, during, or after use. This persists despite the need to explore how users’ experiences of, and relationships with, a technology evolve over time. 31 For new technologies such as AI, for instance, acceptance is a topic of particular interest. It can be assessed through the technology acceptance model, 33 its various derivatives34,35 or the different versions of the Unified Theory of Acceptance and Use of Technology.36,37 However, as has been noted, these models lack a temporal dimension. 38

Karapanos et al. previously conceptualized the “Temporality of experience” framework for product adoption. It provides a means of examining user experiences over time through four phases: Anticipation, Orientation, Incorporation, and Identification. 28 In building this framework, Karapanos et al. also demonstrated the complex nature of motivating sustained use. 28 The nonadoption, abandonment, scale-up, spread, and sustainability (NASSS) framework by Greenhalgh et al. similarly considers temporality. It describes various domains that influence technology adoption in a healthcare context through “continuous embedding and adaptation over time”. 39

Research on AI-CDSS has already identified a number of factors to consider in their design. These include socio-technical context, 15 workflow integration, 40 trust 27 and integration with existing systems so that they are useful. 9 Socio-technical factors include the type of clinic, types of patients, work culture, liability, and legal requirements. 15 Considering established, intended, or improved workflows is similarly important to ensure the usefulness of new designs.7,40 Several studies have also explored design guidelines and recommendations for creating AI systems,41–43 with many more providing early recommendations for the design of these systems.14,15,40,44 The decision support framework by Herrmanny and Torkamaan, for example, presents three core aims for such systems, each with related elements: empower, encourage, and engage. 42 He et al., meanwhile, propose a comprehensive 25-item design guideline for creating AI-CDSS. Its points include seamless workflow integration, design for explainability, simplified design to reduce workload, and the need for early-stage evaluations, iterations, and pilots. 40 The systematic review by Wang et al. similarly noted design implications related to:
- transparency of AI-CDSS
- alignment with clinical workflow
- consideration of contextual factors (social, organizational, or environmental)
- respect for professional autonomy
- the need for human-centered design. 8
These works nevertheless do not adequately consider or explain the temporality of experiences and the dynamic nature of adopting new technology. This leaves a critical gap in knowledge for designing better AI-CDSS, addressing user needs, and increasing user acceptance and uptake.

Objective

The aim of this scoping review is to analyze how user experience with AI-CDSS evolves over time by mapping reported experiences and identifying associated barriers and facilitators to use.

This review addresses the following research questions: RQ1: What is the user experience of engaging with AI-CDSS? RQ2: What barriers and facilitators shape user experiences with AI-CDSS? RQ3: How is user experience over time reflected in users’ current reported AI-CDSS experiences?

Methods

A scoping review was conducted to answer RQ1 to RQ3 following the five-step framework of Arksey & O’Malley for conducting a scoping review 45 and the methodological advancement by Levac et al. 46 We follow the PRISMA-ScR (Preferred Reporting Items for Systematic Reviews and Meta-analyses Extension for Scoping Reviews) guidelines in carrying out and reporting this work. 47

Search strategy

A systematic search for articles was carried out using the ACM, PubMed, and Scopus databases, all of which have previously been found suitable for evidence synthesis. 48 The search fields for ACM and PubMed were “all fields” (“anywhere” on ACM); for Scopus the search was limited to “Article Title, Abstract, Keywords.” No filters were applied to any database. In line with the aim of RQ1 to RQ3, namely to broadly explore reported user experiences with AI-CDSS in a healthcare setting, we used the search terms: “AI” AND “decision support system” AND “healthcare” AND “usability.”

Eligibility criteria

Inclusion criteria were: (i) a peer-reviewed journal or conference article, (ii) written in English, (iii) which includes a qualitative assessment of the user experience of interacting with an AI-CDSS, (iv) in a healthcare setting, (v) relating to either human mental or physical health.

Exclusion criteria were (i) other article types (e.g. protocols, extended abstracts or reviews), (ii) articles written in languages other than English, (iii) lacking a qualitative assessment of the user experience of interacting with an AI-CDSS, (iv) usage in settings outside healthcare, and (v) a focus on topics other than human mental or physical health.

Our emphasis on requiring a qualitative assessment is motivated by the aim of this work, which requires us to collect experiences. Given the implicit aim of trying to understand how and why a technology works or does not work, 49 we include early-stage human–computer interaction research, so long as it includes a qualitative assessment of a prototype or of key aspects of AI-CDSS experiences. This allows exploration of the “anticipation” phase of user experience over time. As we aim to map the breadth of

Study selection

The search was conducted on the 22nd of March 2025. Duplicates were removed by the first author, and title/abstract screening was conducted independently by both authors. Full-text screening against the inclusion/exclusion criteria was conducted by the first author, with the second author cross-checking the final literature selection and excluded records. Disagreements between authors were resolved through an adjudication process involving discussion and an additional review by both authors.

Data extraction and charting

Two types of data extraction were employed for this review. The first was an extraction of overall study characteristics using a Microsoft Excel template. The template captured, for example, author, year, paper type, study target, study participants, number of participants, and fidelity (for instance, functional prototype or “real world” deployed technology).

The second type of data extraction focused on identifying and extracting important paragraphs and insights on users’ experiences with AI-CDSS. Rather than mapping individual user quotes, which are not always available in the literature, we summarized experiences descriptively as cards, merging similar ones. To reduce potential interpretation bias and to ensure internal traceability and validity, all cards retained references to their original sources, with annotations on the extracted paragraphs and insights.
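The traceability requirement can be made concrete with a short sketch of merging descriptive cards without losing provenance. This is an illustration under stated assumptions, not the tooling used in this review. The `ExperienceCard` class and `merge` function are hypothetical. The reference numbers in the usage lines reuse the citations this review attaches to one reported experience (traditional methods being faster and more flexible). They do not describe how the cards were actually split or merged.

```python
from dataclasses import dataclass, field


@dataclass
class ExperienceCard:
    """Hypothetical representation of one descriptive experience card."""
    summary: str
    sources: set[int] = field(default_factory=set)        # citation numbers of originating papers
    annotations: list[str] = field(default_factory=list)  # notes on the extracted paragraphs


def merge(a: ExperienceCard, b: ExperienceCard, summary: str) -> ExperienceCard:
    """Merge two similar cards under a new summary, keeping every source reference."""
    return ExperienceCard(
        summary=summary,
        sources=a.sources | b.sources,              # set union: no source is dropped
        annotations=a.annotations + b.annotations,  # annotations are concatenated, not overwritten
    )


# Illustrative cards only; the split into two cards is an assumption.
a = ExperienceCard("Non-AI methods felt faster", {7}, ["illustrative annotation A"])
b = ExperienceCard("Non-AI methods felt more flexible", {58}, ["illustrative annotation B"])
merged = merge(a, b, "Traditional methods without AI support are faster and more flexible")
print(sorted(merged.sources))  # [7, 58]
```

The merged card takes the union of the source sets rather than one card's references. This guarantees that it still points back to every paper it summarizes.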

Synthesis of results

Our analysis of results included two distinct forms of thematic analysis, together addressing RQ1 to RQ3.

To address RQ1 and RQ2, we employed a reflexive thematic analysis approach.53,54 Initial familiarization and coding were carried out by the first author. Reflective discussions between both authors then produced iteratively refined thematic coding. These discussions drew on the authors’ experience in human–computer interaction, ubiquitous computing, digital health, and human-centered AI. This iterative process included revisiting and discussing the original paragraphs and quotes to improve accuracy and reduce potential interpretive bias.

To address RQ3, we created a synthesized overview of experiences over time. It is based on Karapanos et al.’s UX-over-time framework, which consists of the phases Anticipation, Orientation, Incorporation, and Identification. 28 A limitation of this framework, however, is that it does not focus on healthcare or the AI domain. For example, AI systems are expected to evolve, 24 an aspect not covered by the original framework. To extend the framework to the present AI-CDSS health context and experiences, we used a “best fit” framework synthesis approach. 55 We chose to base our “best fit” synthesis on Karapanos et al.’s temporality of experience framework rather than other UX models. Those models lack a focus on temporal elements (e.g. the Unified Theory of Acceptance and Use of Technology).33,36,38 We also considered models situated in the healthcare or AI domain that include temporality, such as those by Greenhalgh et al. and Shen et al. We chose not to base our synthesis on these, as they place less direct emphasis on users’ individual experiences.24,39

Constituent experiences over time were mapped against the

Results

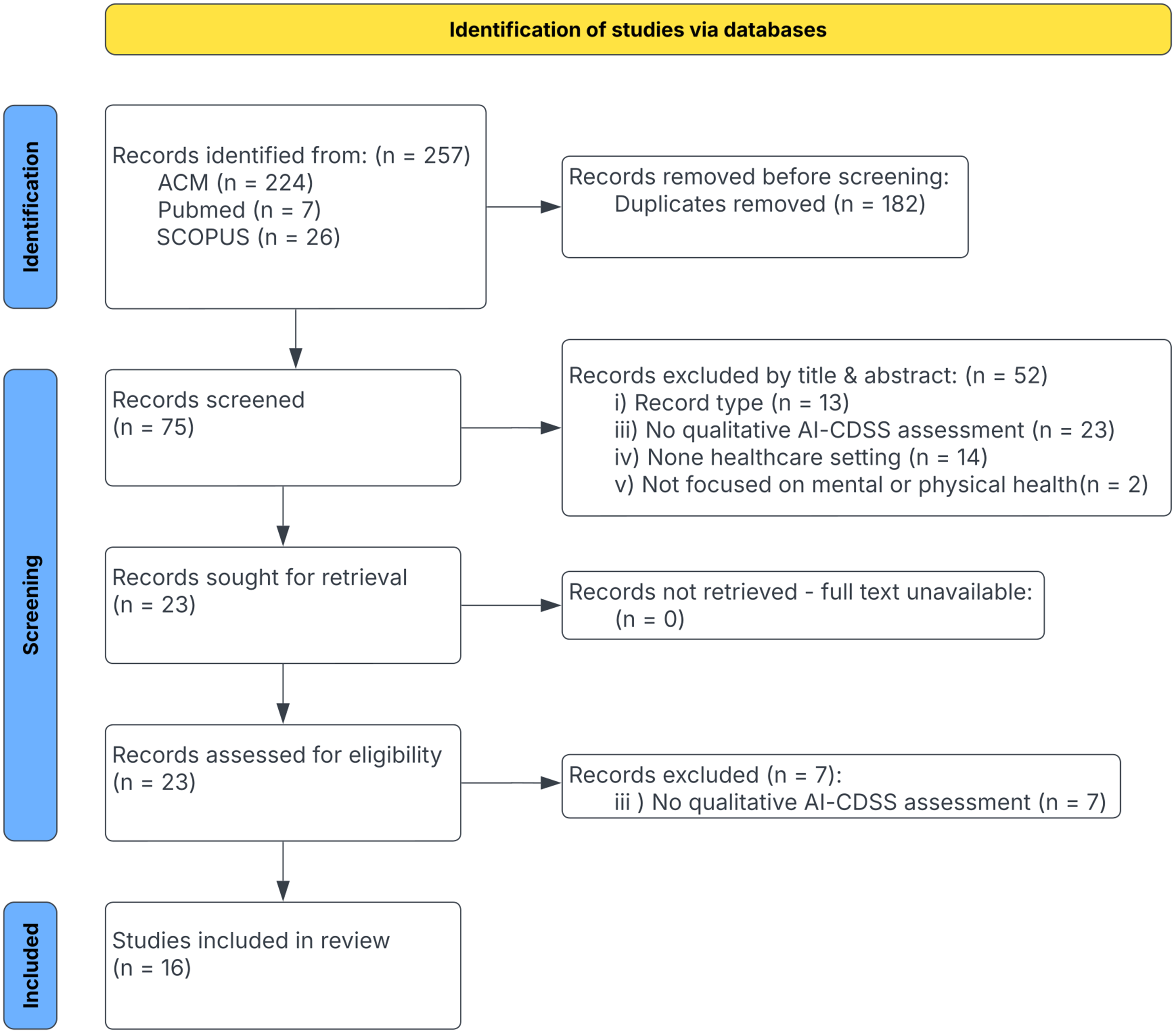

The flow diagram 57 of the literature search and selection process is shown in Figure 1. Our search retrieved 257 records, of which 182 were removed as duplicates. The remaining 75 records were screened by title and abstract, and 23 records were sought for retrieval and full-text review. Of these, 7 were removed for lacking a qualitative analysis or for not focusing on AI-CDSS, leaving 16 included studies.
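The counts reported for the selection process can be checked with a few lines of arithmetic. The Python sketch below uses a hypothetical helper, `prisma_flow`, which is not part of any PRISMA tooling. The number of records excluded at title/abstract screening (75 minus 23, i.e. 52) is derived here rather than stated in the text.

```python
def prisma_flow(retrieved: int, duplicates: int, full_text: int, excluded_full_text: int) -> dict[str, int]:
    """Hypothetical helper: derive each PRISMA stage count from the reported numbers."""
    screened = retrieved - duplicates            # records left after de-duplication
    excluded_screening = screened - full_text    # removed at title/abstract screening (derived)
    included = full_text - excluded_full_text    # studies in the final review
    # Sanity check: no stage may end up with a negative count.
    for name, value in (("screened", screened),
                        ("excluded_screening", excluded_screening),
                        ("included", included)):
        if value < 0:
            raise ValueError(f"inconsistent counts: {name} = {value}")
    return {
        "retrieved": retrieved,
        "screened": screened,
        "excluded_screening": excluded_screening,
        "full_text": full_text,
        "included": included,
    }


flow = prisma_flow(retrieved=257, duplicates=182, full_text=23, excluded_full_text=7)
print(flow["included"])  # 16
```

The result agrees with the 16 included studies stated in the abstract.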

PRISMA flow diagram showing the study’s inclusion process.

All included articles were published after 2020. Table 1 provides an overview of the characteristics of the included literature. Design and evaluation studies were the most common, employing methods such as observations, interviews, and surveys. These studies describe a variety of AI-CDSS targeting medical imaging (n=6/16, 37.5%),22,23,58–61 general care (n=4/16, 25%)2,7,25,62 and other purposes (n=6/16, 37.5%). Examples of other purposes include insulin titration, 63 diagnosing cognitive impairment, 26 stroke rehabilitation 30 and assisting the treatment of diabetic retinopathy and age-related macular degeneration. 64 Two papers had several different targets.23,59 About half of the included literature (n=9/16, 56%) tested functional or pre-production prototypes2,19,22,23,25,58,62–64 with various levels of features (here, features refer to features of the AI-CDSS rather than data features). Four papers (n=4/16, 25%) included real-world systems,7,21,30,61 and the remaining three studies (n=3/16, 19%) reflected interactive prototypes and various simulations.26,59,60 Most studies7,21,25,26,30,58,60,62–64 include between 10 and 31 participants (

Overview of articles included in the review including key characteristics.

* The study does not report the number of participants but provides a qualitative analysis of clinical accountability. †Parenthesized participant numbers indicate the number of participants across different stages/conditions of a study.
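The proportions reported for the included literature follow directly from counts out of the 16 included studies. The Python sketch below recomputes them. Its category labels are shorthand introduced here, not the authors’ coding scheme. It shows that 9/16 = 56.25% and 3/16 = 18.75%, which the text rounds to 56% and 19%.

```python
TOTAL = 16  # number of included studies

# Counts as reported for study targets and prototype fidelity (labels are shorthand).
categories = {
    "target: medical imaging": 6,
    "target: general care": 4,
    "target: other purposes": 6,
    "fidelity: functional/pre-production prototype": 9,
    "fidelity: real-world system": 4,
    "fidelity: interactive prototype/simulation": 3,
}


def share(count: int, total: int = TOTAL) -> float:
    """Percentage of included studies, rounded to two decimals."""
    return round(100 * count / total, 2)


for label, n in categories.items():
    print(f"{label}: {n}/{TOTAL} = {share(n)}%")

# As reported, the counts within each grouping sum to the 16 included studies.
targets = [n for k, n in categories.items() if k.startswith("target")]
fidelity = [n for k, n in categories.items() if k.startswith("fidelity")]
assert sum(targets) == TOTAL and sum(fidelity) == TOTAL
```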

Themes

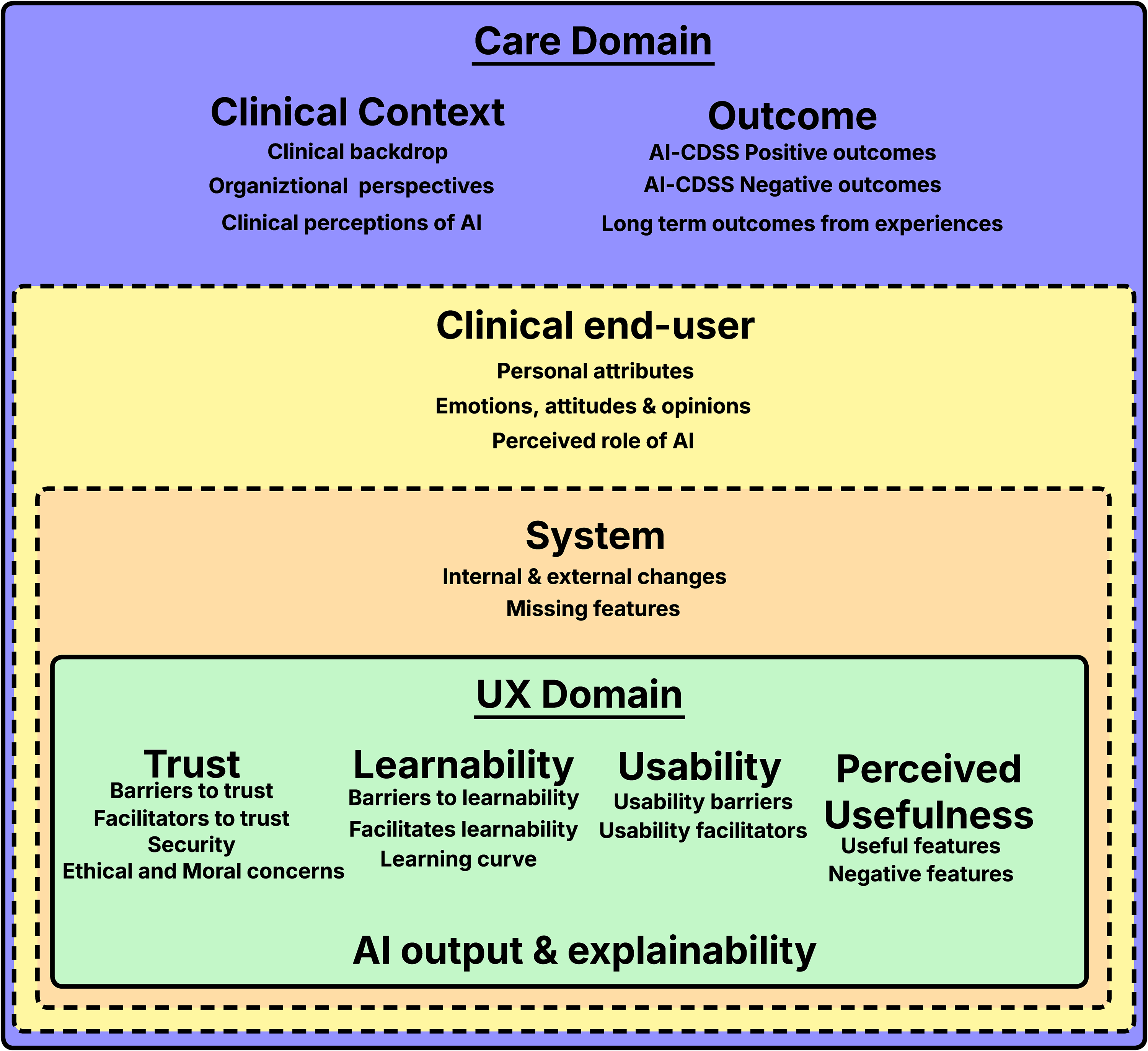

The reported user experiences are organized into two overall domains, nine themes, and 23 sub-themes; Figure 2 shows an overview. The first domain is the care domain in which the AI-CDSS is deployed, comprising the themes (1) clinical context, (2) outcomes, (3) the clinical end-user, and (4) the AI-CDSS. The second is the UX domain, which covers qualities of AI-CDSS experiences through the themes (5) trust, (6) learnability, (7) usability, (8) perceived usefulness, and (9) AI output and explainability. For instance, opinions about AI can be formed on the basis of a user’s clinical backdrop or organizational perspectives, or can relate to the user’s personal emotions, attitudes, and opinions. Such opinions do not necessarily result from use of the system. They are nevertheless important aspects of the user experience because they affect future experiences. Tables 2 and 3 provide an overview of the relationships between sub-themes. In the following subsections, we cover the experiences of engaging with AI-CDSS, including experiences related to barriers and facilitators. In the “Synthesis results” section, we cover our over-time synthesis and analysis.

Overview of identified themes and sub-themes representing reported experiences.

Interconnectivity of sub-themes for the clinical domain.

Letters in brackets indicate a relation between sub-themes; for instance, clinical end-user opinions (H) are related to and affect trust (L, M).

Interconnectivity of sub-themes for the UX domain.

Clinical context

The first theme covers user experiences related to the clinical context and comprises the sub-themes (a) clinical backdrop, (b) organizational perspectives, and (c) clinical perceptions of AI.

Clinical backdrop

Clinical backdrop refers to experiences that form the basis of the setting in which an AI-CDSS is deployed. This includes experiences related to the clinic type, 7 patient context, 62 time availability, 2 workload, 58 workflows (e.g. actual vs. textbook), 7 the process of making a diagnosis, 58 factors affecting this process 25 and legal or organizational requirements, including liability. 7

Organizational perspectives

“Organizational perspectives” covers experiences closely tied to the organization. End-users may, for example, benefit from trust in AI-CDSS being built prior to deployment, 26 but organizational infrastructure and resources can challenge this process. 25 The experiences of adopting AI-CDSS are thus also affected by organizational culture, leadership, and resources 25 and include a need to adapt workflows. 58 AI use also depends on team dynamics, with some users perceiving use as more likely if recommended by senior team members. 21 However, AI-CDSS use can be challenged by negative experiences. Examples include the system being unable to meet necessary background expectations and output not supporting organizational standards. 61

Clinical perceptions of AI

Various clinical perceptions of AI have been reported. These include the perception that AI is unable to see the whole picture, that using AI-CDSS takes you away from the patient, 21 that there is little time to use these systems 7 and that the value of AI-CDSS predictions and benefits is unclear.58,62 Experiences also reflect how different users engage with AI. Reported examples include using AI-CDSS in different phases of analysis/diagnosis,2,30 AI predictions aligning poorly with users’ subjective understanding 30 and various needs to personalize or configure decision thresholds. 58

Outcomes

Our second theme covers the outcomes of using AI-CDSS and includes the sub-themes (a) positive outcomes, (b) negative outcomes, and (c) outcomes from long-term use. Note that “outcomes” here refers to the results of using AI-CDSS rather than outcomes in a clinical sense.

AI-CDSS positive outcomes

Reported positive outcomes include efficiency gains in clinical workflows,2,25,63 reduced effort, reduced workload, 58 unexpected insights, quick overviews, 2 reduced frustration7,30 and support for personalized treatment plans. 63

AI-CDSS negative outcomes

The use of AI-CDSS can, however, also result in negative outcomes such as prolonged workflows,7,58 exacerbated time/patient-volume pressure7,21 and worsened clinician stress. 25 Some users perceive that AI suggestions are not useful or correct, 60 that the system does not always perform well,7,30 and that it is not needed in many cases. 7 In some cases, users noted that traditional methods without AI support are faster and more flexible7,58 or that AI lacks the decision accountability needed for reporting. 61 Other negative outcomes include accountability concerns from automating aspects of patient care. Users also worried that AI-flagged areas of interest add disproportionately to task duration and take focus away from more important areas, particularly when system predictions fluctuate. 23

Long-term outcomes from experiences

One long-term outcome from AI usage is

The clinical end-user

Our third theme relates to the clinical end-users and comprises the sub-themes (a) personal attributes, (b) the perceived role of AI, and (c) emotions, attitudes, and opinions toward AI-CDSS.

Personal attributes

Personal attributes cover experiences and factors that affect users’ individual experiences. Reported examples include stress level, burnout, depression, anxiety, 25 subjectivity, an individual’s learning rate 58 and their overall medical seniority. 21 Stress, burnout, and anxiety have previously been noted to affect users’ learning rate. 66 Potential long-term outcomes include compromised patient outcomes 25 and over-reliance on AI. 59 However, AI-CDSS have also been reported to have the potential to alleviate some stress, by managing routine burdens or by providing means of reducing stress. 25

The perceived role of AI

The perceived role of AI can vary widely between users: some see it merely as a tool, 26 others as a partner or consultant 21 or even a specialist. 63 For example, AI-CDSS annotations can be perceived as similar to those made by trainees for use by senior clinicians. 58 Clinicians might also feel that they are being replaced by an AI-CDSS, 62 but this feeling seems to dissipate with use of AI-CDSS. 7

Emotions, attitudes, and opinions

Emotions, attitudes, and opinions toward AI-CDSS are wide-ranging, some reflecting user expectations and others prior experiences of using AI-CDSS. Emotional experiences with AI-CDSS include:
- fear that AI will challenge professional autonomy7,62
- dislike of workplace digitization 58
- general distrust of machine learning26,64
- feeling uncomfortable following AI suggestions, 7 particularly in acute situations 21
- a preference for relying on one’s own judgment.7,22
Attitudes toward AI also vary: algorithms may be perceived as more precise, 26 AI advice may carry “more weight” than similar human advice or vice versa, 59 and assertive recommendations may feel like orders. 19 Views on explainability also differ. Some hold that understanding the “blackbox” is unnecessary, while others hold that a comprehensive introduction and understanding is crucial. 26 Skeptical views on AI have also been noted to challenge the use of AI-CDSS. 25

AI-CDSS

The fourth theme focuses on user experiences relating to the deployed AI-CDSS and comprises the sub-themes (a) internal and external changes and (b) missing features.

Internal and external changes

Over longer periods of time, experiences with AI-CDSS can change as performance varies, 61 leading to positive or negative experiences depending on the changes. These include experiences related to real-time feedback loops that maintain system integrity or drive iterative improvements and updates to the technology. 25 External changes can also occur as technical or usability improvements are added over time. 25

Missing features

User experiences with AI-CDSS can also reflect missing features, such as lack of interconnectivity between systems,7,21 lack of topic coverage, 7 lack of sources/citations/links 21 and the perception that output should have a different format.21,26

Trust

The fifth theme covers experiences related to trust with four sub-themes: (a) barriers to trust, (b) facilitating trust, (c) security, and (d) ethical and moral concerns.

Barriers to trust

Barriers to trust include blackbox decision making, 60 output uncertainty, unclear results, 58 too much information 2 and a need for further explanation of AI decisions. 60 Reported experiences additionally suggest various concerns about biases that affect trust. These include dataset biases and quality, 26 particularly generalizability to patient subgroups,22,23 and the introduction of cognitive biases through AI-CDSS use. 60 Other barriers include information not always being accurate or up to date, 7 the experience that system behavior cannot be taken at face value, 61 fears or concerns about AI hallucinations 21 and previous negative experiences. Such experiences include poor performance30,58,60,61 or being misled by AI-CDSS. 59 Experiencing significant computational mistakes by AI-CDSS may also damage trust in the long term, as clinicians might believe such errors to be omnipresent. This may hold for flawed algorithms, but not necessarily for non-deterministic AI, where single errors are not indicative of erroneous models. 64 Poor usability can also act as a barrier to trust. For instance, alarms, while useful, can cause frustration when they are too frequent, nontransparent, and non-actionable. 7

Facilitators to trust

As noted in the “Clinical context” section, trust is affected by the organization, team dynamics, and the way trust develops before a system is deployed. However, trust also involves understanding AI predictions,26,60 how algorithms work,59,64 and the ability to review the AI output to see whether the system or the clinician made a mistake. 30 For example, AI can be used to provide a second opinion on a possible diagnosis. 7 AI-CDSS decisions that are similar to, or perceived as aligning well with, clinicians’ judgment have also been noted to increase trust in a system. 64 Up-to-date guidelines and transparent iterative model improvements also support trust building. 25 Trust is highly interconnected with perceived usefulness: positive experiences with AI-CDSS capability increase trust, while negative experiences reduce it. For example, gaining unexpected insights 2 or being alerted to common mistakes or oversights 7 may facilitate building trust in a system. Conversely, poor performance, poor usability, and frequent false positives and false negatives lead to mistrust. 61

Security

System security has also been noted as a positive factor in building trust; for example, strong privacy and security can reduce data-sharing concerns. 25

Ethical and moral concerns

Ethical and moral concerns have been reported in the literature, for instance, doubts about what model performance means in practice, 26 ethical concerns related to AI-CDSS and the high-stakes nature of diagnoses,58,60 in addition to liability, responsibility, and other legal and moral concerns. 21

Learnability

The sixth theme, learnability, consists of three sub-themes: (a) barriers to learnability, (b) facilitating learnability, and (c) learning curves.

Barriers to learnability

Barriers to learnability include being unaware of “hidden” system features, 7 initial confusion when using new software (i.e. AI-CDSS) 62 and needing assistance to understand the full functionality. 63 Overly complex visuals impact learnability, and it generally takes time to become familiar with new visualizations and image formats.2,58,60,63 Technical or usability problems can additionally impact learnability.

Facilitators to learnability

Facilitators of learnability include ease of use, good interface design, 26 simple explanations, 58 consistent and organized structuring, 2 quick, intuitive understanding of predicted assessments, 30 use of familiar visualizations 2 and previous positive experiences with similar technology. 25

Learning curve

Some experiences reflect a learning curve; for example, chat prompts become more direct over time, 21 and understanding new forms of AI-supported assessment takes time. 26 Chen et al., for instance, describe how clinicians initially test a new assessment themselves. This helps them understand how it works and how medical information connects to the new AI-CDSS assessment/tools. 26 Wang et al. similarly describe a learning curve effect related to whether a system is useful or not. 7 Lee et al. describe this process as creating a mental model of system capabilities, leading to adjusted use and validation. 30

Usability

The seventh theme, usability, consists of two sub-themes: (a) usability barriers and (b) usability facilitators.

Usability barriers

User experiences related to usability barriers include cumbersome interactions, 63 lack of support for different measuring units 62 and incompatibility with workflows. 58 Moreover, AI-CDSS taking up screen space when not needed results in a cumbersome and inflexible system workflow. 7 Authors also note issues with interfaces that contain too much information2,26,58 or lack structure, as well as the need to filter information. 2 Other reported usability issues include accessibility issues for colorblind users, 60 color overlaps causing confusion in certain figures, and subtle mismatches between system and medical terminology.22,23

Usability facilitators

Design aspects noted as facilitators of usability include quick adaptation and task navigation, 63 interfaces that support quick, easy, and intuitive understanding of predicted assessments 30 and colors that support information gathering. 26 Chen et al. additionally note that clinicians prefer to receive key diagnostic information in an easily digestible output format. 26 Another study notes contextual needs/preferences for seeing raw data rather than a graph. 22 Clinicians also note that turning AI on/off should be quick, easy, reliable, and intuitive, but that accidental changes should not occur. 23 Lastly, many positive features covered in the “Perceived usefulness” section also relate to positive usability.

AI output and explainability

The AI output and explainability theme includes users’ experiences where AI output is difficult to interpret, 58 contains too much information or lacks structure, 2 or lacks clear explanations for AI decision making. 60 It also covers system alerts that are difficult to interpret. 7 In general, blackbox decision making negatively impacts the transparency of decisions. 7 AI scoring may also be difficult to interpret.22,26 Chen et al. note that counterfactuals are hard to grasp and not actionable, and that it is difficult to know what prediction accuracy means in practice. 26 Moreover, it can take too long for clinicians to input the information needed to generate worthwhile output for each patient. 7 Explainable AI (XAI) is perceived positively across studies,26,59,60 yet it is reported to present various challenges of its own. These include risking the introduction of bias, 59 balancing ease of use with information load, 26 and clinicians needing education on XAI to increase awareness. 60

Perceived usefulness

Our final theme covers the perceived usefulness of features, with the sub-themes (a) useful features and (b) negative features.

Useful features

A number of experiences have been associated with useful features, such as the system supporting the review of trends, 30 comparison of disease progression, 26 and being able to do everything in one session.63,64 Gu et al., for instance, describe situations where the AI can mark points of interest, allowing users to easily classify certain conditions, 58 while Leist et al. note that AI-CDSS may be particularly useful in borderline cases. 64 Other useful features include being able to verify an initial diagnosis, being alerted to common mistakes or oversights,7,23 inclusion of novel, comparable performance data,26,30 and support for cross-referencing. 58

Negative features

However, features can also be perceived negatively, for instance, when low confidence scores create mistrust of recommendations or when confidence intervals are not useful. 7 Moreover, AI-CDSS can neither empathize with patients nor capture the subtle cues that clinicians deal with all the time,7,21 and they do not consider factors such as patient income and insurance coverage. 7 Several features are also poorly received because of the usability challenges described above, including challenges with AI output.

Synthesis results

Temporality of experiences

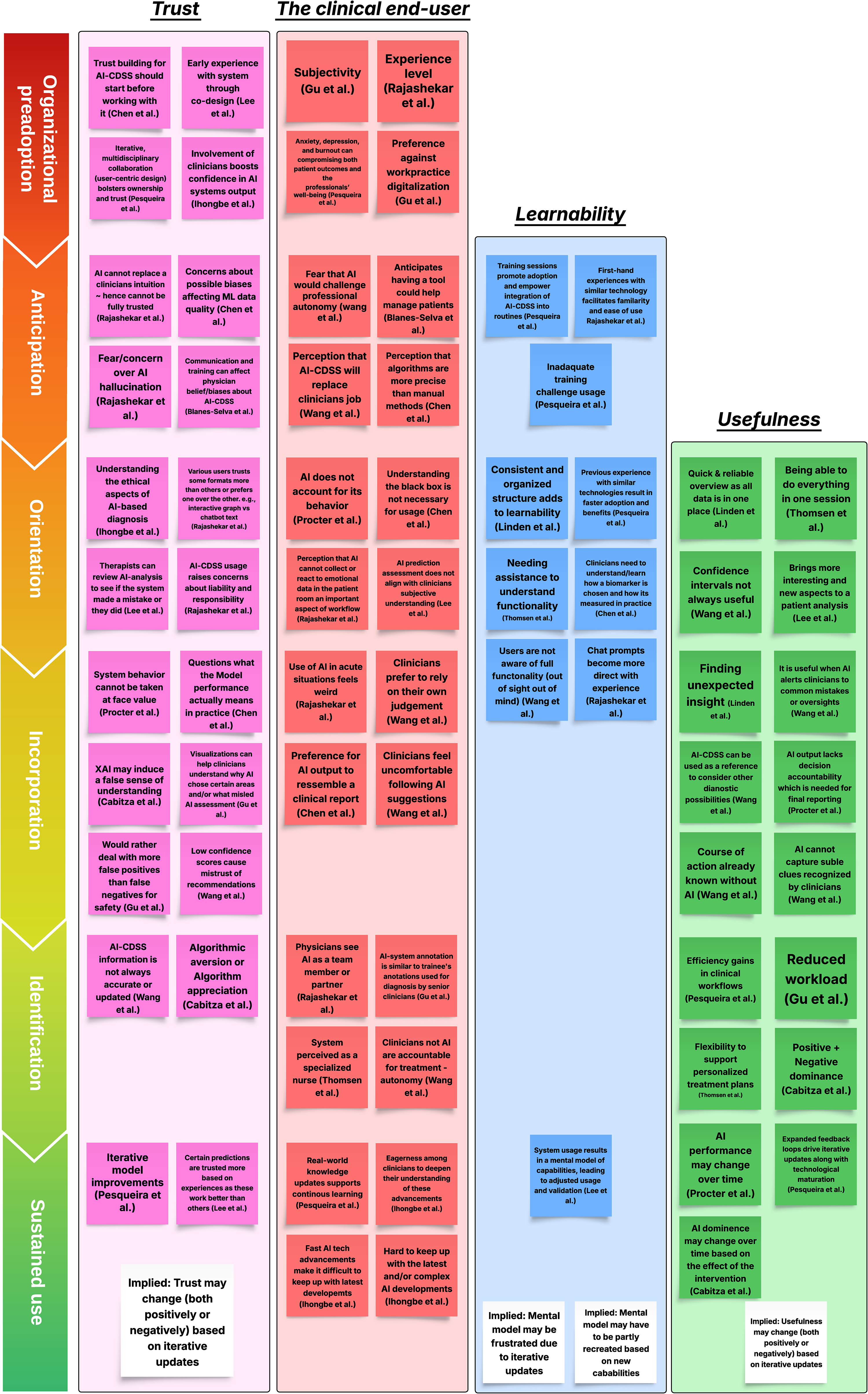

Applying the “best fit” synthesis approach results in two additions to Karapanos et al.’s original framework. 28 Figure 3 presents a subset of mapped experiences following this synthesis, and Figure 4 provides a visual representation of the extended synthesized framework. Firstly, prior to the traditional anticipation phase, we include an organizational preadoption phase; secondly, following the identification phase, we include a sustained use phase.

Figure 3. Subset of experiences from the trust (pink), clinical end-user (red), learnability (blue), and usefulness (green) themes, mapped from a temporal perspective according to the synthesis. White cards do not indicate specific experiences, but implications from the analysis.

Figure 4. The resulting “best fit” synthesis framework, covering the original four phases (anticipation to identification) and the two additional AI-CDSS phases (organizational preadoption and sustained use).

Before we move on to exploring experiences temporally, it is worth briefly expanding upon the rationale of Figure 4. Organizational preadoption represents various background experiences that help stage and shape anticipation, for instance, through organizational and legal requirements or work processes, and through the development or testing of prototypes or related technologies. From there, anticipation shapes expectations of future experiences, which are in turn followed by orientation, incorporation, and identification. 28 We also make some notable additions to the “judgment” factors of Karapanos et al.’s framework: in the AI-CDSS domain, there is less focus on emotional attachment and more on professional attachment and the value provided, which may itself lead to various patterns of positive and negative reliance. We also note that the identification phase places less emphasis on social factors than in the original framework, while still emphasizing that users view the AI’s role, and hence their relationship with AI, in different ways, and that identification generally results in the formation of a mental model of system capabilities. However, as we note in the upcoming sections, this model likely begins to form in the orientation and incorporation phases before becoming more fully realized in identification. Through sustained use, users’ established mental model may be challenged by internal and external model changes, which cause shifting experiences and perceptions of the system. We explore these phases further in the following sections.

Organizational preadoption

In the organizational preadoption phase, several factors and experiences set the backdrop for an AI-CDSS before it is actually adopted by an organization and before an anticipation phase for its use occurs in practice. The type of clinic, patient context, legal requirements, and liability, in addition to everyday experiences in this context, such as time availability 7 and organizational structure and culture, 25 are likely to affect whether a technology is adopted in the first place. An organization and its members can be involved in various activities prior to expected adoption, as exemplified in the included literature, including concept validation and simulation,59,60 early validation, 63 various forms of development processes, 25 or even co-design of the technology. 30 For instance, one of the themes identified by Chen et al. notes that “trust building starts before using the tool”. 26 The study by Chen et al. itself demonstrates this point: participants (clinical end-users) are left with initial perceptions of a potential solution that affect anticipation, for better or worse. The work by Pesqueira et al. additionally suggests that previous experience with similar technology results in faster adoption and, hence, in benefits. 25

Anticipation

In the anticipation phase, experiences related to the care domain (that is, the clinical context, the clinical end-user, and the adopted system) occur, while experiences related to the UX domain (that is, trust and learnability) begin to emerge. Features of the anticipation phase include foundational experiences with technology, various concerns (e.g. autonomy, 7 hallucination, 21 biased data, 26 introduction of cognitive bias 60), personal factors (e.g. stress, burnout, 25 preferences for or against digitization, 58 eagerness to understand technology 60), and perceptions of the technology ranging from mistrust of AI 21 to positivity toward new tools and their accuracy. 26 Users’ opinions on AI, including its perceived trustworthiness, may remain static or be reinforced or reconsidered in subsequent phases. The anticipation phase could also include learning about an AI-CDSS through training sessions aimed at promoting adoption and integration. 25

Orientation

In the orientation phase, when users first begin using the system, experiences either diverge from or conform to anticipations; users might begin to experience challenges in terms of usability and learnability, and start forming a judgment of the system’s usefulness. In this phase, the learnability themes feature prominently. Like any other technology, AI-CDSS require an initial time investment from users to adopt. Users appreciate easily usable interfaces, 26 quick overviews of information, 2 and simple visual explanations, 58 or may be frustrated by technical and usability issues. For instance, a usability issue such as the AI-CDSS always taking up screen space even when not needed 7 is first encountered in this phase, but continues to frustrate users later on. The orientation phase also includes experiences related to external guidance on using the system, or more comprehensive guides to its use.22,26 The paper by Lee et al. also suggests the presence of a learning curve where, through use, users develop a “mental model of system capability” that informs and adjusts their usage and validation of results. 30 Van Berkel et al. unpack this model as users’ understanding of the inner workings of a system, including clinicians’ internal representation of and reasoning about actions in their clinical world, which enables them to understand expected and actual AI behavior. 23 Users may additionally integrate the system into analysis or diagnosis in subtly different ways based on experiences in this phase. 30

Incorporation

In the incorporation phase, experiences related to usefulness and long-term usability gradually become more present than those related to learnability. Here, perceived usefulness, or the lack thereof, plays a large role. Users’ experiences in this phase vary depending on the specific purpose of the AI-CDSS, but can be characterized by a mix of positive and negative experiences across the UX domain. Such experiences include cases where the AI-CDSS provides value, 2 frustration from interpreting predictions, 26 or discovering new capabilities. 30 Adjustment of AI thresholds, settings, and other personalization is also likely to occur in this phase as users aim to ensure, for example, that the system’s output matches their needs. 58 Both positive and negative outcomes of AI-CDSS are also likely to become more apparent in this phase.

Identification

In the identification phase, as users become more familiar with the AI-CDSS and its abilities, they start to develop a relationship 28 with it as it becomes integrated into actual workflows. Reported experiences indicate that this can take the form of viewing the AI-CDSS as, or comparing it to, a consultant or team member. 21 Based on their developed mental model of system capability, users may start to understand when predictions are more trustworthy 30 and to experience benefits from using the system.2,25,59 Experiences in this phase also indicate that users will avoid interactions with the AI-CDSS, based on their mental model of its capabilities, particularly when it is not expected to provide useful or trustworthy results.

Sustained use

In the sustained use phase, the mental model that began to develop in the orientation phase and continued to develop in subsequent phases may be disrupted by internal or external changes, causing various experiences. Users may find functionality lacking in some cases,7,60 experience frustration from degraded performance, question changes in output, or, through improved performance, find cases where the AI-CDSS is particularly useful or gain unexpected value from interactions with the system. The mental model thus begins to develop in the orientation and incorporation phases, but remains dynamic over time, even in the sustained use phase, as users derive positive or negative experiences from using the AI-CDSS. Eventually, through sustained use, the mental model can be challenged by internal and external system changes: model performance may deteriorate, 61 knowledge bases can become outdated, 7 or models can be iteratively improved along with technical improvements and usability problem mitigation. 25 The dynamic nature of AI-CDSS capabilities can challenge clinical end-users’ mental model, resulting in users creating new mental models of the system. Some users welcome this as an opportunity to grow, 25 while others may be frustrated by having to keep up with changes in the system. 60 Other experiences in this phase include those related to reliance or non-reliance 59 and to feedback mechanisms aimed at maintaining or improving performance. 25

Experiences over time insights

The analysis and synthesis also provide insights into four areas of experience with AI-CDSS, namely, learnability over time, trust over time, user perceptions and emerging relationships with AI-CDSS, and the dynamic nature of AI-CDSS.

Learnability over time

The synthesis and analysis highlight the need for a broader view of learnability in AI-CDSS, one that considers initial learning about the system prior to use, learning how to use the system, and longer-term learning, that is, learning when to use the system. Learning how to use the system is foundational to many AI-CDSS experiences, but nevertheless differs from learning when to use it, for example, recognizing when results are useful.

The user experiences reported in the literature indicate that users prefer to learn about the system prior to use, in the anticipation phase, where introduction of the system, training, and communication can counteract various individual perceptions and beliefs about AI. 62 Reported experiences suggest that this reduces barriers to adoption of AI-CDSS, 25 promotes trust, and addresses users’ various needs for knowledge about the system. 26 For instance, before use, users may wish to know how a system was made and how its model was developed, or to be reassured about its quality, including that of its datasets. 26


Trust over time

The second insight from the synthesis highlights the dynamic nature of establishing and maintaining trust over time. User experiences from organizational preadoption show that perceptions of trust in AI-CDSS can form through, for example, experiences related to development and prototypes, which occur before the anticipation phase.25,26,30 These early experiences can affect, positively or negatively, the anticipation phase, which occurs when a system is actually chosen for adoption by an organization. Positive experiences were found in relation to transparency during the AI-CDSS development process, with such processes and prototypes exemplifying opportunities for early trust building.26,30 Similarly, Ihongbe et al. highlight the importance of fundamentally involving clinical end-users in design, noting how this involvement can boost end-users’ trust in AI-CDSS output. 60

In the anticipation phase, prospective users have initial perceptions of, or concerns about, trust, relating to the aforementioned exposure to and preconceptions of the technology, for example, concerns related to AI biases. 26 Nevertheless, the anticipation phase also presents opportunities for affecting these preconceptions and for building trust through education and communication. 62

In the orientation phase, experiences related to learnability, usability, usefulness, and previous experience with technology may facilitate or undermine trust. For instance, trust can be significantly challenged by issues with AI output and explainability.7,21,26,58,60 Users develop their trust through learning how to use the system,26,62 interpreting its results,26,30 navigating potential ethical aspects of a diagnosis,26,60 and establishing routines for reviewing results to see whether they or the system made a mistake.30,58

As the orientation phase gradually transitions into the incorporation phase, experiences related to long-term usability and usefulness increasingly affect trust. A useful system providing various advantages2,30,58,63 and positive experiences2,7,30 is more likely to be trusted than a system with poorer technical performance, that is, in terms of error rates and suspicious system behavior.58,61 Gu et al. also report that, for safety reasons, clinical end-users would rather deal with more false positives than false negatives, 58 highlighting the complex balance between system usefulness, trust, and patient safety. The incorporation phase also brings subtler problems of AI trust to the forefront, through potential cognitive biases59,60 and through the ethical aspects of everyday usage. For instance, it can be unclear what model performance actually means in practice, 26 raising not only ethical concerns but also moral and legal ones. 21

As users continue to use the system, trust gradually gives rise to experiences of algorithmic aversion or appreciation, reflecting the opposing dimensions of reliance and non-reliance. 59 That is, users may actively use or avoid advice, leading to a number of possible experiences, for example, over-reliance leading to acceptance of incorrect advice, or rejection of correct advice because users wish to avoid or do not trust predictions. 59 Trust remains dynamic, affected by the balance of positive and negative experiences with the system. This balance can also be affected by the dynamic nature of AI systems, as performance changes and iterative updates occur. Iterative updates can potentially increase trust through improved functionality, but can also undermine trust if users cannot make sense of the changes occurring, for example, significant differences in output. Lee et al. also note that users are likely to lose trust in a system if it repeatedly makes mistakes and does not improve over time. 30

Users’ perceptions of AI and emerging relationship

Perceptions of AI vary widely between users, as do users’ possible concerns, whether related to trust or to other emotions or perceptions. Users in the anticipation phase can fear being replaced by AI or perceive AI as a challenge to their autonomy.7,62 Anticipation of these systems can also be more positive, as users see them as helping to manage patients 62 or hold positive views of computers’ accuracy. 26 However, initial perceptions evolve with use in the orientation and incorporation phases, as users experience, for example, that AI cannot account for its behavior 61 or cannot perceive important patient cues. 21 Such initial perceptions nevertheless also present opportune targets for intervention, where the system or its design helps users realize that AI cannot replace them and that they remain autonomous and responsible for treatment, 7 or that predictions should be carefully reviewed to avoid automation bias. However, positive initial perceptions can also sour through repeated incorrect task disruptions over time, leading to frustration and abandonment of the AI-CDSS. 23

Findings also suggest that a personal relationship 28 forms relatively quickly with AI-CDSS, with indications that this can occur in the orientation phase.21,58 Clinical end-users can see AI as an assistant, 7 a team member, a consultant or attending physician in the case of medical students, 21 or a specialized expert. 63 While this early formation of a relationship may seem odd, it can be explained by many AI-CDSS emulating existing roles in clinical contexts, such as a medical student receiving advice from a senior colleague, 21 or AI annotations resembling those made by junior radiologists and used by senior radiologists for diagnosis. 58 Some users, however, may continue to perceive AI/ML as merely a tool, or as better suited to other settings or to more menial tasks. 7

Dynamic nature of AI-CDSS

A number of implications can also be derived for the sustained use phase of AI-CDSS. Firstly, the performance of, and experiences with, an AI-CDSS vary over time,23,61 with this variation affected by, for example, “drift”, 61 information not being up to date, and mechanisms such as expanded feedback loops enabling iterative updates to the AI-CDSS. 25 This not only highlights how sustained experiences with AI-CDSS vary in the long term, but also shows that experiences related to tagging poor outputs and providing feedback are crucial for AI-CDSS, as they help maintain system integrity and can help improve models iteratively. Secondly, this variation in long-term experiences can affect various aspects of the user experience, for example, the usefulness of output and trust. Trust remains a dynamic factor, affected by the evolving nature of AI technology, requiring users to reevaluate their mental model of capabilities over time. Thirdly, the nature of interactions between user and AI-CDSS will evolve based on experiences with the system, through previously described phenomena such as automation bias, positive dominance, negative dominance, or the white box paradox. 59 These concepts denote, respectively, experiences where users become less vigilant to errors, where the AI helps users avoid errors, where the AI misleads the user, and where XAI explanations help rationalize a wrong decision.

These implications nevertheless only scratch the surface of the dynamic nature of AI-CDSS. Other experiences, such as those related to AI-CDSS supporting learning over time or to alarm fatigue, as well as specific experiences relating to, for example, model updates, suggest the need for further exploration of AI-CDSS usage in the long term.

Implications for design

Finally, from the synthesis and the individual works included in this scoping review, we summarize implications for future AI-CDSS design work; Table 4 provides an overview. We base these implications and recommendations on the results of our scoping review, as well as on the insights and conclusions provided by the individual works, as referenced in the table.

Table 4. Design implications and preliminary recommendations.

Discussion

This scoping review has covered the user experiences of engaging with various AI-CDSS (RQ1) and the barriers and facilitators that shape use (RQ2), and has reflected on and mapped experiences from a temporal perspective (RQ3). Through our exploration, we unpack the highly interconnected nature of AI-CDSS experiences and which of these facilitate or present barriers to, for example, trust, learnability, usability, perceived usefulness, and AI output and explainability. Moreover, we highlight how various user experiences lead to the development of a mental model of system capabilities and how this might be disrupted over time by the natural life cycle of AI technologies.

Regarding building trust over time, it has previously been established that trust is an important factor in user acceptance and adoption of AI technology.67,68 Our findings align with those of Matthiesen et al. regarding trust emerging from real-world usage; 27 however, we add that mistrust can also arise as a result of use.7,30 Similarly to previous work, we find that trust in technology is a dynamic factor15,26,27 that can be affected by both internal and external changes to the technology over time. 25 Our findings also support those of Burgess et al., which suggest that the introduction of an AI tool, that is, the orientation phase, is an opportunity for building trust. 14 Nevertheless, we also find that there are opportunities for building trust prior to adoption, during organizational preadoption and anticipation. 26

The overall experience themes uncovered in this scoping review also align well with those reported in the recent, broader review by Wang et al. 8 These findings align particularly well with regard to perceived benefits (AI-CDSS positive outcomes and useful features sub-themes), technical limitations (missing features and negative features sub-themes), usability issues, and mismatches between clinical end-user information needs and AI-CDSS output. 8 We also find significant overlap between the clinical end-user sub-themes, trust, and the clinician perspectives reported by Ouanes et al. 9 The findings related to mismatches with AI output tie into the broader challenges of aligning AI with humans. 24 This also suggests that long-term design should be built around the AI life cycle, as sensibly measuring complex value systems is paramount to improving human-AI alignment 24 and to human-in-the-loop configuration in both the design and usage phases. 69

In terms of our design implications, prior work has already noted the importance of considering the clinical context and adoption process in which a system is deployed.15,39 For instance, the previously mentioned NASSS framework considers factors such as the condition, technology, value proposition, adopters (i.e. users/patients), organization, and legal framework. 39 The implication that AI output should be carefully built around user needs, as noted in many included works,7,19,21,26 reflects previously reported challenges of designing AI interactions,70–72 that is, prototyping fuzzy and at times open-ended interactions, as well as technical challenges. Design implications related to trust also align well with existing literature, for example, the preferability of learning about an AI-CDSS prior to usage 73 and trust being dynamic based on experiences. 27 Designing AI to automatically address certain user needs, or potential user concerns and biases, has significant potential for improving user experiences: for example, an AI-CDSS may be designed so that concerns related to dataset quality can be easily addressed.

As long-term use and value are goals of AI-CDSS, design should include considerations for long-term usability and transparency, for example, in response to the dynamic performance of AI, iterative updates, and the fast-moving nature of AI development. This notion has also previously been touched upon in the literature; as Shen et al. put it, “Existing literature often treats AI alignment as static, ignoring its dynamic nature. A long term perspective must consider the co-evolution of AI, humans, and society.” 24 We not only share this view, but also observe through this scoping review that there is a lack of long-term perspectives on experiences and how these develop, and consequently also a lack of consideration for these in design. For instance, while we have a reasonable grasp of early experiences related to learnability, we know little about long-term learning and how users adapt to performance changes over time. Similarly, while user perceptions of AI-CDSS suggest that the technology can support continuous learning, it is unclear how this occurs in practice and how it might be supported by design. Understanding how user experiences develop temporally, and particularly in response to the dynamic, evolving nature of AI, is key to addressing barriers and leveraging facilitators through design; this work provides a first step in this direction.

Implications for future works

As discussed, experiences and perceptions, for example, of usefulness and trust, are dynamic, changing over time and continuously shaped by previous experiences of engaging with present and past systems. While we have synthesized an overview of how user experience develops over time, more work is necessary to understand how time alters the way clinical end-users experience AI-CDSS technology. We see three immediate avenues for this exploration. Future work could employ forms of longitudinal experience sampling, such as the Day Reconstruction Method 28 or the Experience Sampling Method, 74 to map how users’ relationships with AI-CDSS develop over time. Alternatively, this exploration could occur through more limited sampling or through interviews exploring the effects of iterative updates to AI-CDSS, for example, how such changes affect users’ perceived experiences, trust, mental model of capabilities, and perceived usefulness.

Previous work has noted the importance of stakeholder engagement in the design and integration process of AI-CDSS.9,25,40,75,76 This engagement is important as it ensures compatibility with work practices, user needs, and organizational requirements. However, based on the experiences observed in this review, we, similarly to prior work,70–72 observe significant challenges in adapting design to clinical context and output needs, which can be associated with technical challenges, 24 the difficulty of designing AI,70,71 and challenges relating to classical user-centered design, 70 that is, the “user as a subject”. 77 The latter suggests there are advantages in design that, to a greater extent, is carried out from the end-user’s perspective,23,73,78 that is, the “user as a partner”, 77 which could be achieved through participatory design, co-design, or co-creation.23,77,79,80 As previously noted, the construction of trust between AI technology and clinical end-users is rather complex and can easily change over time. Trust may be built with such technology through user-centered design, 62 carefully planned, rigorous development processes, 25 or co-design. 30 Considering the potential for building trust through transparency in design,9,60 and the reported experience mismatches in AI output, there seem to be significant opportunities in a more co-creation-oriented 77 approach to AI-CDSS design. Van Berkel et al. also note that a participatory design approach may help challenge design norms and explore subtle design decisions with profound impacts on contextual integration and satisfaction. 23 Co-creation and participatory design approaches nevertheless face the same challenges as user-centered design in actually creating AI-CDSS technology,70,71 that is, those associated with the availability of experts, the construction of these types of systems, including the complexity of training accurate, explainable models, 81 and data availability. 24 Accordingly, prudent care is required in facilitation,71,82 group creativity,83,84 negotiations between stakeholders,85,86 and the choice of which stakeholders to include. However, we leave the user-centered versus participatory/co-creation discussion here, leaving it to future work to explore which approach works best in practice while balancing the particulars of building complex technology.

Limitations

The aim of the work presented here was never to collect and map every possible user experience of engaging with AI-CDSS, but rather to provide an overview of experiences and synthesize how these unfold over time. With this in mind, there are three noteworthy limitations to this work, which may impact the generalizability of insights. Firstly, in terms of our review, we used somewhat stringent search terms and included a limited number of studies in our analysis. Although 16 articles met the inclusion criteria, the limited search terms may have resulted in relevant literature using adjacent terminology being left out, which may limit generalizability. Secondly, some works included in our analysis are rather formative in nature, focusing on initial perceptions, including early-stage testing; they are hence likely to miss various experiences occurring over time and as a result of sustained use, and may over-represent learnability and usability experiences. Thirdly, our inclusion of AI-CDSS from different medical domains, for example, general medicine and cancer screening, limits the relevance of certain experiences detailed in this work when applied to other domains. However, as more AI-CDSS are deployed and evaluated in real-world or near-real-world conditions, there may be future opportunities for carrying out a broader systematic review of AI-CDSS experiences over time.

Another important limitation of this work is that reviews, by their nature, provide an aggregate overview of what is reported in the literature. That is, individual works included may have reported only the most relevant experiences found, rather than all of those observed. Accordingly, future work is needed to verify the generalizability of these findings. Despite these limitations, we believe that this work is an important step in exploring the design of AI-CDSS with an emphasis on how experiences unfold and change over time as AI technology matures. Our results also suggest the need for greater emphasis on design that, to a larger extent, considers and supports the dynamic nature of AI-CDSS experiences over time.

Conclusion

In this scoping review, we have explored the reported experiences of engaging with AI-CDSS, looking at the emerging themes, barriers, and facilitators and how experiences develop over time. Based on these findings, we present a number of preliminary design implications, including the need for better alignment of output with needs and for designs to more fundamentally consider the dynamic nature of AI systems. Currently, there is a need to improve our understanding of how users integrate AI-CDSS into their work practices and how experiences develop over time. As more AI-CDSS are deployed and evaluated “in the wild”, future work should assess more fundamentally how experiences develop and how iterative updates to the technology affect users’ mental model of capabilities, trust, and perceived usefulness.

Footnotes

Contributorship

Conceptualization, data curation, and formal analysis were done by DRP (lead), TOA. Investigation was done by DRP (lead), TOA (equal). Methodology was done by DRP, TOA. Supervision was done by TOA. Validation and visualization were done by DRP, TOA. Writing, original draft was done by DRP (lead), TOA. Writing, review, and editing were done by DRP, TOA.

Ethical considerations

Ethics approval and consent to participate were not applicable to this study given the nature of scoping reviews, that is, evidence synthesis of existing literature.

Consent to participate

Not applicable.

Consent for publication

Not applicable.

Declaration of conflicting interest

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.

Data availability

Information about review data is available by contacting the corresponding author.

Guarantor

DRP