Abstract

Background

Post-stroke aphasia impairs language abilities. Although digital therapy apps offer accessible self-administered alternatives, the effectiveness of effortful lexical retrieval in such therapies remains unclear.

Objective

This study aimed to evaluate the feasibility, usability, and preliminary effectiveness of a tablet-based lexical retrieval therapy app designed to support effortful lexical retrieval in individuals with post-stroke aphasia.

Methods

Patients with post-stroke aphasia were randomly assigned to receive either a lexical retrieval therapy app or workbook-based cognitive exercises for two weeks. Feasibility was assessed through adherence and engagement, and usability through the System Usability Scale (SUS). Preliminary effectiveness of the proposed method was measured by improvements in lexical retrieval performance and Boston Naming Test (BNT) scores.

Results

The study included 17 participants, with the intervention group (9/17) showing an 88.9% retention rate. They showed significantly higher post-intervention lexical retrieval scores than the control group (F(1,14) = 10.82, p = .005). BNT scores significantly improved in the intervention group compared to the control group (F(1,14) = 12.94, p = .003). Intrinsic motivation scores were significantly higher in the intervention group (p = .002), and usability was rated as excellent (mean = 84.56). Training accuracy improved over time, and response time data indicated increased retrieval effort in some participants.

Conclusions

This study provides preliminary evidence that a self-administered app using effortful therapy can improve lexical retrieval in post-stroke aphasia, highlighting its potential as an accessible alternative to clinician-led interventions. Future research should explore long-term adherence, adaptive strategies, and their lasting therapeutic effects.

Introduction

Aphasia is a prevalent consequence of stroke, affecting more than one-third of stroke survivors. 1 It disrupts core language abilities, including comprehension, speaking, reading, and writing. 2 Among its symptoms, word retrieval difficulties are particularly common and debilitating. 3 These challenges stem from disruptions in the brain's lexical networks, making it hard to find and use the correct words in spontaneous speech and structured tasks. 4 Symptoms often manifest as hesitations, paraphrasing, and phonological or semantic errors, particularly during naming tasks that require precise lexical access. 5 Impaired naming ability reduces fluency and confidence, disrupting everyday conversations and increasing the risk of social isolation. 4

Speech and language therapy (SLT) has been shown to effectively improve these symptoms through repetitive, practice-based interventions.6–8 Evidence indicates that repeated practice of retrieving lexical items from long-term memory is particularly beneficial for improving naming accuracy in individuals with post-stroke aphasia.4,9–11 One technique that leverages this principle is effortful lexical retrieval, a cognitively demanding process that promotes neuroplasticity, enhances learning, and supports long-term naming improvement.

Maximizing therapy outcomes requires active engagement from individuals in the learning process. Errorful therapy, based on effortful learning principles, encourages errors during lexical retrieval to enhance learning efficiency. However, these errors should occur within a structured intervention, allowing individuals with post-stroke aphasia to identify and correct them through mechanisms that repair errors. This process reinforces accurate responses, leading to better learning outcomes.12,13 Research suggests that the effectiveness of retrieval-based practice hinges on successful retrievals.10,14 Errors alone are insufficient; they must be followed by successful attempts to enhance learning effectiveness. When retrieval attempts fail despite feedback, learning becomes less effective, making improvements in naming accuracy challenging.10,15 Therefore, effective therapy should allow for errors while incorporating mechanisms for detection and correction, maximizing the benefits of retrieval-based learning.

Despite the benefits of SLT, many individuals with post-stroke aphasia encounter barriers to accessing adequate therapy. 16 Limited mobility and long travel distances complicate outpatient clinic visits.17,18 Financial constraints and insufficient clinical resources further limit access to SLT, creating a significant unmet demand for treatment. 19 Digital therapy has emerged as a promising solution to these barriers and provides an accessible and cost-effective platform for ongoing therapy. Digital therapy apps allow therapy to be conducted anytime and anywhere, significantly increasing practice opportunities.20,21

Studies have shown that digital therapy apps can effectively deliver therapy as either an alternative or a supplement to face-to-face sessions.22–25 Some digital therapy apps offer automated feedback on error patterns to enhance language skills without clinician supervision. These apps also track progress and adjust task difficulty based on individual performance, ensuring appropriately challenging and structured practice. Digital therapy apps can be administered either with the therapist present or through a self-administered approach, allowing patients to practice independently.

While several studies have evaluated the outcomes of self-administered digital therapy apps for individuals with post-stroke aphasia and naming impairments,26–29 significant gaps remain because it is unclear how individuals engage with and learn from these platforms in the absence of clinician guidance. For patients with aphasia, self-administered digital therapy apps pose challenges because their performance may not align with clinical guidelines, making it difficult to assess treatment fidelity. 30

Furthermore, negative feedback from unsuccessful attempts can lead to frustration and adversely affect adherence to therapy. Given that digital therapy apps have demonstrated variable outcomes influenced by behavioral and environmental factors,31–33 further research is necessary.

Specifically, it is unclear whether individuals engage effectively with self-administered lexical retrieval apps that are based on errorful learning. Without clinician monitoring, it is difficult to determine whether patients are making sufficient effort to retrieve lexical information. It is also unclear whether error correction, a vital mechanism of errorful learning, can be performed adequately. Therefore, when a lexical retrieval therapy app based on errorful learning is administered in a self-directed setting, it is important to ensure that the benefits of effortful learning are actually delivered.

To address this gap, this study aimed to develop and evaluate a self-administered lexical retrieval app that facilitates effortful lexical retrieval for individuals with post-stroke aphasia.

This pilot study aimed to assess the feasibility, preliminary effectiveness, and usability of an app-based lexical retrieval therapy for individuals with post-stroke aphasia, in preparation for a future randomized controlled trial. The intervention group received the lexical retrieval therapy app, while the control group completed nonverbal cognitive exercises using a structured workbook. Over the two-week intervention period, we evaluated participant adherence, engagement, and usability through quantitative indicators. In addition, we explored how individuals interacted with automated feedback during effortful learning to inform future improvements in self-administered digital therapies.

Methods

Recruitment

Participants were recruited from a rehabilitation center in Seoul, South Korea. Recruitment was conducted by a stroke specialist. Written informed consent was obtained from all participants. This study follows the CONSORT 2010 guidelines for randomized pilot and feasibility trials and is registered with the Clinical Research Information Service (CRIS ID: KCT0010563).

Participants

The inclusion criteria were as follows: (1) age 19 years or older; (2) diagnosis of stroke at least 6 months before enrollment; (3) assessment for aphasia following a stroke based on the National Institutes of Health Stroke Scale (NIHSS) criteria 34; (4) cognitive abilities deemed sufficient by clinicians for using the lexical retrieval therapy app on a tablet device; and (5) no impairments in vision, hearing, or motor function that would affect tablet use.

The exclusion criteria were as follows: (1) major co-existing neurological disorders such as epilepsy, multiple sclerosis, or Parkinson's disease; (2) severe mental health conditions such as major depression, schizophrenia, or alcohol dependence; (3) insufficient cognitive abilities for independent device use (Mini-Mental State Examination score ≤ 26) 35 ; and (4) illiteracy.

Participants were randomly assigned to one of two groups: an intervention group that used the lexical retrieval therapy app and a control group that received a workbook-based intervention. Randomization was conducted using a pre-determined list to ensure balanced allocation between the two groups in a 1:1 ratio. The randomization procedure was conducted by an independent researcher.

Blinding was not feasible because participants had to actively use either the app or the workbook, requiring direct interaction. Additionally, the researchers could not be blinded because they provided instructions and assisted the participants in navigating the app or using the workbook correctly. Consequently, it was not possible to conceal group assignments.

Lexical retrieval therapy app

The lexical retrieval therapy app was designed to allow individuals with post-stroke aphasia to use it independently, without assistance from clinicians or caregivers. The app includes features that promote effort and support accurate responses that facilitate successful lexical retrieval.

For the intervention, we used Anki, a free flashcard software that is effective for delivering naming therapies.28,36 Anki allows for multimedia flashcards, incorporating images, text, sound, and video. Our app featured 100 lexical items comprising verbs, adjectives, nouns, and pronouns. 37 These items included both highly imageable words (e.g., “refrigerator”) and abstract terms representing relatively intangible concepts (e.g., “poverty”).

The therapy involved two lexical decision training tasks: (1) antonym selection and (2) non-antonymous, non-synonymous associate (NANSA) selection. Antonyms are words with opposing meanings and require training because they often result in a high error rate during spontaneous speech. Antonym training is particularly beneficial for learning abstract concepts (e.g., abundance-poverty). Moreover, individuals with post-stroke aphasia typically distinguish antonyms more effectively than synonyms or related words, which enhances their learning experience. As shown in Figure 1, a single lexical item was displayed on the screen, and participants needed to identify and select the correct opposite lexical item from a list of options.

Antonym selection training in the lexical retrieval therapy app. (A) Multiple-choice task for selecting antonyms. (B) Image cues related to the target word were displayed after incorrect responses. (C) The feedback screen showed the correct answer, an explanation of the target word, and an audio replay button for reinforcement.

NANSA pairs consisted of contextually related words that were neither opposites nor synonyms. They focused on learning the relationship between commonly associated terms (e.g., neck-giraffe, nose-elephant). This approach enables individuals with post-stroke aphasia to understand how words function in specific contexts. 38 As shown in Figure 2, lexical items were presented on the screen. Participants were required to analyze the relationship between the pair of lexical items and select the word from the options most closely related to the single item.

NANSA selection training in the lexical retrieval therapy app. (A) Multiple-choice task for selecting NANSAs. (B) Image cues corresponding to the options were displayed after incorrect responses. (C) The feedback screen showed the correct answer, an explanation of the selected word, and an audio replay button for reinforcement.

If a user selected an incorrect answer for any question, they completed a learning session before proceeding to the next item. This session included feedback that allowed users to compare their incorrect responses with the correct ones. The session also provided supplementary visual aids and semantic information for the target word. This method provided repeated learning opportunities for individuals with post-stroke aphasia.

Participants completed a total of 10 sessions over two weeks, with one session per day, totaling five sessions each week. Each session lasted approximately 30 min, consistent with recent studies utilizing Anki for lexical retrieval therapy. 28 Each training session included 50 target words, which ensured a balanced representation of overall frequency and familiarity across tasks. The app tracked user performance data, including accuracy rates and response times, allowing researchers to analyze learning progress effectively. The session duration was designed to enhance participant engagement and align with established evidence for effective intervention delivery.

Multiple-choice questions

Both training tasks were delivered in a multiple-choice format, encouraging users to actively evaluate the correct answer among competitive options. These options included at least one phonologically and semantically similar word. Presenting multiple choices with the correct answer among distractors helped mitigate frustration from unsuccessful retrieval attempts.39,40

A prompt was initially displayed with four options, one of which was correct. Users responded by touching their choice on the screen. No cues about the correct answer were provided until users made a selection. Figures 1 and 2 show screenshots of the multiple-choice question interface.

System-guided corrective cues

Cues were designed to encourage sustained retrieval attempts. The system employed a three-step hierarchy, providing progressively specific prompts to help participants arrive at the correct answer through effortful retrieval. Cues, such as phonemic and semantic prompts, have been widely used in aphasia therapy to aid lexical retrieval and improve naming accuracy. 33 Each target word was paired with these two system-guided corrective cues, automatically provided based on users’ responses,41,42 as shown in Figure 3. This approach was based on the increasing cueing strategy, which aligns with the effortful learning framework by correcting errors as part of the learning process.

Stepwise cueing process in the lexical retrieval therapy app. Following incorrect responses, users received progressively specific cues. The process begins with an image cue, followed by a phonemic cue if necessary, and concludes with a full cue before proceeding to the next word.

When a user selected an incorrect answer, the first cue was displayed. On the first incorrect attempt, an image cue appeared, helping users infer the context or characteristics of the target word. An audio cue containing phonemic information was provided on the second incorrect attempt. If the user failed on the final attempt, the correct answer was fully revealed, and the item was marked as completed. Each item allowed for two retries with increasingly specific cues. This stepwise cueing process ensured that users had multiple chances to attempt retrieval before being shown the correct answer.
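The stepwise cueing described above can be sketched as a small loop. This is an illustrative sketch only, not the app's actual Anki card logic: the function name `run_item`, the cue labels, and the `answer` callback are hypothetical names introduced for the example.

```python
from typing import Callable, List

# Cues shown after the 1st and 2nd incorrect attempts (labels are illustrative).
CUES = ["image_cue", "phonemic_audio_cue"]


def run_item(correct: str, answer: Callable[[List[str]], str]) -> dict:
    """Present one item with one initial attempt plus two cued retries."""
    cues_shown: List[str] = []
    max_attempts = len(CUES) + 1  # 3 attempts in total
    for attempt in range(1, max_attempts + 1):
        # The callback receives a copy of the cues shown so far and returns a choice.
        if answer(list(cues_shown)) == correct:
            return {"correct": True, "attempts": attempt,
                    "cues": cues_shown, "revealed": False}
        if attempt <= len(CUES):
            # Escalate to the next, more specific cue before the retry.
            cues_shown.append(CUES[attempt - 1])
    # Final attempt failed: reveal the answer and mark the item as completed.
    return {"correct": False, "attempts": max_attempts,
            "cues": cues_shown, "revealed": True}


# Example: wrong on the first try, correct after the image cue.
responses = iter(["wealth", "poverty"])
result = run_item("poverty", lambda cues: next(responses))
print(result["attempts"], result["cues"])
```

Keeping the cue order in a single list makes the hierarchy explicit, so a different cue sequence could be tried without touching the loop.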

User-friendly interface

The interface of our lexical retrieval therapy app was designed to accommodate the digital skills of stroke patients, 43 with modifications aimed at enhancing usability, particularly for older adults. In the Anki program, detailed card configuration settings were utilized to enlarge font sizes and button dimensions.

When pressed, buttons were animated to differentiate them from other elements. The spacing between buttons was increased to prevent accidental selections of incorrect options. Buttons were also color-coded to provide immediate feedback: green for correct answers and red for incorrect ones. This visual feedback enabled users to easily recognize whether their responses were correct.

Procedure

Participants underwent a baseline assessment before group assignment to assess their initial language ability. To confirm their aphasia diagnosis and assess its severity and type, they completed the Korean version of the Western Aphasia Battery-Aphasia Quotient (K-WAB-AQ). 44 If they met the eligibility criteria, demographic details were collected. Following the assessment, participants were randomly assigned to one of the two groups. To assess baseline word retrieval ability and create a personalized training list, researchers prepared 100 color images that represented lexical items, which were reviewed by a speech-language pathologist to ensure content validity. To evaluate the interrater reliability of the 100-item lexical retrieval test, six healthy older adults in their 60s independently rated each item. Fleiss' kappa was calculated to assess agreement across raters. The result indicated a substantial level of agreement (κ = 0.667, p < .001). 45
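For readers unfamiliar with the agreement statistic, a minimal pure-Python sketch of Fleiss' kappa follows. It assumes the ratings have been tallied into a table in which each row is an item and each column a rating category; the function name and the toy counts are illustrative and are not the study's data.

```python
from typing import List


def fleiss_kappa(counts: List[List[int]]) -> float:
    """counts[i][j] = number of raters who assigned item i to category j.

    Every row must sum to the same number of raters.
    """
    n_items = len(counts)
    n_raters = sum(counts[0])
    n_categories = len(counts[0])

    # Mean observed agreement across items.
    p_bar = sum(
        (sum(c * c for c in row) - n_raters) / (n_raters * (n_raters - 1))
        for row in counts
    ) / n_items

    # Chance agreement from the overall category proportions.
    p_j = [
        sum(row[j] for row in counts) / (n_items * n_raters)
        for j in range(n_categories)
    ]
    p_e = sum(p * p for p in p_j)

    return (p_bar - p_e) / (1 - p_e)


# Toy example: three items, six raters, two categories (acceptable / not acceptable).
toy = [[6, 0], [0, 6], [4, 2]]
print(round(fleiss_kappa(toy), 3))
```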

Each participant was seated in a quiet room for assessment by a researcher. The researcher presented images one by one and asked the participant to name the corresponding word aloud. The participant's responses were recorded for later analysis. Another researcher reviewed the recordings to mark any incorrect responses.

From the list of incorrectly named words, 30 words were randomly selected for each participant to create a personalized training list. If a participant had fewer than 30 incorrect responses, additional words were chosen from correctly named items to reach the total. These 30 words were then divided into three sets of 10. Two sets were used for training, while the remaining set was left untrained to assess generalization effects.
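The list-building procedure above can be sketched as follows. This is a hedged illustration: the function name, the dictionary keys, and the fixed random seed are assumptions made for the example, and the study does not state how the random selection was implemented.

```python
import random
from typing import Dict, List


def build_training_sets(incorrect: List[str], correct: List[str],
                        seed: int = 0) -> Dict[str, List[str]]:
    """Pick 30 words (incorrect first, padded with correct) and split into 3 sets of 10."""
    rng = random.Random(seed)  # seeded only to make the example reproducible
    chosen = rng.sample(incorrect, min(30, len(incorrect)))
    if len(chosen) < 30:
        # Fewer than 30 errors: top up with correctly named items.
        chosen += rng.sample(correct, 30 - len(chosen))
    rng.shuffle(chosen)
    return {
        "trained_set_1": chosen[:10],
        "trained_set_2": chosen[10:20],
        "untrained_set": chosen[20:],  # held out to probe generalization
    }


# Example: a participant who misnamed only 12 of the 100 items.
incorrect_words = [f"missed_{i}" for i in range(12)]
correct_words = [f"named_{i}" for i in range(88)]
sets = build_training_sets(incorrect_words, correct_words)
print({name: len(words) for name, words in sets.items()})
```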

Participants in the intervention group trained with the lexical retrieval therapy app for at least 30 min daily, five days a week, over two weeks. The app guided participants through antonym selection and NANSA selection tasks, reinforcing retrieval with automated feedback. Before the intervention began, researchers logged into each participant's Anki account, uploaded the personalized word sets, and provided aphasia-friendly written instructions 46 with app screenshots to ensure independent use.

In contrast, participants in the control group engaged in nonverbal cognitive exercises using a structured workbook during the same intervention period. As this was a pilot study involving an app-based intervention, the control condition was designed to account for the effects of intervention elements unrelated to lexical retrieval therapy. 47 The workbook was refined using standard cognitive rehabilitation materials for stroke rehabilitation. It included cognitive exercises such as visual pattern recognition, problem-solving tasks, and symbol-matching activities. 25 Participants identified corresponding patterns in visual tasks, solved logical reasoning problems, and matched symbols to given reference figures. The workbook was intended to control for the effects of additional activity and attention present in the lexical retrieval therapy app group. In addition, unlike participants in the intervention group, those in the control group did not receive personalized word lists, to avoid unintended lexical retrieval training. This design was intended to ensure that any observed effects could be attributed to lexical retrieval training rather than general cognitive engagement.

Training adherence was tracked for both groups. The intervention group's training data were automatically recorded in the Anki app, capturing task completion rates, response accuracy, and response times. The control group self-reported their practice sessions using a checklist.

Participants in both groups were contacted by phone every other day during the two-week intervention to monitor adherence. In the intervention group, these calls also served to address any technical issues related to tablet use.

At the end of the intervention, participants returned the tablets or checklists. Control group participants were given the option to use the lexical retrieval therapy app for an additional two weeks after the study.

Outcomes measurement

Evaluations

Evaluations were conducted at baseline and two weeks after the intervention. To maintain objectivity, post-treatment assessments were conducted by a researcher who had not participated in the initial assessment. After completing the post-intervention assessment, participants completed a survey to assess their intrinsic motivation. Additionally, participants in the intervention group completed an extra survey to evaluate the usability of the lexical retrieval therapy app.

Primary Outcomes: Lexical Retrieval Test

The lexical retrieval test evaluated participants’ ability to retrieve words accurately. During the baseline assessment, a researcher presented 100 lexical items, asking participants to name them aloud while another researcher recorded responses and noted correct and incorrect answers. No cues or feedback were provided during the test, and only accurately named words were marked as correct. The test was adapted from previous research to suit our study. 48

The total number of correctly retrieved words was recorded to ensure objectivity. A different set of images was used for the post-intervention assessment to prevent participants from memorizing associations.

Secondary Outcomes: Boston Naming Test

The Korean Boston Naming Test (K-BNT) was used to evaluate naming ability and word retrieval skills. 49 The K-BNT is commonly utilized to evaluate language function and detect aphasia. This study employed a shorter version designed for patients with limited attention spans and difficulty with lengthy assessments.

The original 60-item K-BNT is divided into four parallel sets of 15 items, each designed to maintain comparable difficulty levels. Each assessment used one of these short-form sets to accommodate participants’ attention spans, with different sets assigned for pre- and post-intervention evaluations to minimize learning effects.

Scores were calculated according to K-BNT criteria, with the highest possible score being 60 points. 50 A correct response without assistance earned 4 points. Receiving a semantic cue reduced the score to 3 points, a first syllable cue to 1 point, and a second syllable cue to 0.5 points. No points were awarded for an incorrect response.
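The point scheme above can be written out as a small scoring helper. This is a sketch based only on the point values stated in this section; the outcome labels and function name are hypothetical, and the official K-BNT manual remains the authority on scoring.

```python
from typing import Iterable

# Points per item outcome, as described in the text (labels are illustrative).
POINTS = {
    "unaided": 4.0,
    "semantic_cue": 3.0,
    "first_syllable_cue": 1.0,
    "second_syllable_cue": 0.5,
    "incorrect": 0.0,
}
ITEMS_PER_SHORT_FORM = 15


def kbnt_short_form_score(outcomes: Iterable[str]) -> float:
    """Sum the points for one 15-item short-form set (maximum 60)."""
    outcomes = list(outcomes)
    if len(outcomes) != ITEMS_PER_SHORT_FORM:
        raise ValueError("a short-form set has exactly 15 items")
    return sum(POINTS[o] for o in outcomes)


# Example: 10 unaided, 2 after a semantic cue, 1 after a first-syllable cue, 2 incorrect.
example = (["unaided"] * 10 + ["semantic_cue"] * 2
           + ["first_syllable_cue"] + ["incorrect"] * 2)
print(kbnt_short_form_score(example))  # 47.0
```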

Feasibility & Usability

Feasibility was determined based on adherence and engagement. Adherence was assessed by measuring daily training completion rates throughout the intended therapy period to evaluate how well participants followed the prescribed intervention protocol. As the intervention was grounded in the principles of effortful therapy, successful and effortful lexical retrieval was a central goal. Accordingly, engagement was evaluated based on motivation levels, training accuracy, and response times. Training accuracy and response time were measured throughout the intervention period in the intervention group as indicators of successful effortful retrieval. Participants’ motivation was evaluated using the Intrinsic Motivation Inventory (IMI), a validated tool in stroke rehabilitation research.

The IMI measures various dimensions, including interest/enjoyment, competence, effort/importance, value/usefulness, pressure/tension, autonomy, and relatedness. Twenty items from the validated Korean version were selected and rated on a 7-point Likert scale. The Korean version of the IMI, validated with high reliability (Cronbach α = .96), 51 was used in this study.

We assessed daily training accuracy to evaluate lexical retrieval performance based on the number of correctly identified words, both with and without self-correction. To analyze retrieval effort, response times were recorded from when an item appeared on the screen until a response was selected. Longer response times suggested greater retrieval effort, while shorter times indicated minimal engagement. All app data were collected using Anki's statistical features and add-ons.

Usability was evaluated to determine whether the lexical retrieval therapy app was suitable for use by individuals with aphasia. To assess this, we administered the System Usability Scale (SUS), a validated questionnaire that measures perceived usability. 52 Participants rated the app's usability on a 5-point scale ranging from “Strongly Disagree” to “Strongly Agree,” focusing on whether individuals with post-stroke aphasia could use the app independently without major usability issues. SUS scores were calculated following the standard scoring method, which involves rescaling each item to 0–4 (reverse-coding the even-numbered items), summing the rescaled responses, and multiplying the total by 2.5 to obtain a final score on a scale of 0–100. 53
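As a worked illustration of the standard SUS scoring rule, the sketch below converts ten 1–5 responses into a 0–100 score: odd-numbered items contribute (response − 1), even-numbered items contribute (5 − response), and the sum is multiplied by 2.5. The function name and the example responses are illustrative, not participant data.

```python
from typing import Sequence


def sus_score(responses: Sequence[int]) -> float:
    """Standard SUS score from ten responses coded 1 (strongly disagree) to 5 (strongly agree)."""
    if len(responses) != 10 or any(r not in (1, 2, 3, 4, 5) for r in responses):
        raise ValueError("SUS needs ten responses, each between 1 and 5")
    total = 0
    for item_number, response in enumerate(responses, start=1):
        if item_number % 2 == 1:
            total += response - 1   # positively worded (odd) items
        else:
            total += 5 - response   # negatively worded (even) items, reverse-coded
    return total * 2.5


# Example: a fairly positive respondent.
print(sus_score([5, 2, 4, 1, 5, 2, 4, 2, 5, 1]))  # 87.5
```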

Statistical Analysis

Power analysis indicated that 18 participants were needed to achieve 80% power at a significance level of 0.05, assuming an effect size of 0.62.54,55 To account for a 10% dropout rate, the target sample size was set at 20 participants, with 10 per group. A post-hoc power analysis was conducted following the analysis of the primary outcome.
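The arithmetic behind the target sample size can be checked with a short sketch. It assumes the dropout allowance was applied by multiplying the required sample by (1 + dropout rate) and rounding up; the paper does not state which inflation convention was used, and the function names are illustrative.

```python
import math


def inflate_for_dropout(n_required: int, dropout_rate: float) -> int:
    """Round up n_required * (1 + dropout_rate); the epsilon guards against float noise."""
    return math.ceil(n_required * (1 + dropout_rate) - 1e-9)


def per_group(total: int, n_groups: int = 2) -> int:
    """Participants per arm under equal allocation."""
    return math.ceil(total / n_groups)


total = inflate_for_dropout(18, 0.10)
print(total, per_group(total))  # 20 10
```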

Before the intervention, group homogeneity was assessed for comparability. The Mann-Whitney U test was used for continuous variables, and the Chi-square test was used for categorical variables.

Changes in lexical retrieval test and BNT scores were analyzed using analysis of covariance (ANCOVA). The dependent variables were post-intervention scores on the lexical retrieval test and the BNT, with the group as the independent variable. Baseline scores were included as covariates to control for initial group differences and to address baseline heterogeneity.

To further analyze primary outcomes, the Wilcoxon signed-rank test was used to compare the improvement rates of trained and untrained words within the intervention group.

To assess the engagement component of feasibility, training accuracy and response times were analyzed using the Friedman test for repeated measures. In addition, IMI scores were compared between the intervention and control groups using the Mann-Whitney U test to evaluate differences in motivation levels.

All statistical analyses were performed using SPSS (version 30.0; IBM Corp), with statistical significance set at P < .05.

Results

Participants

Participants were recruited between December 2024 and January 2025, and the intervention period lasted 2 weeks for each participant. As shown in Figure 4, 20 participants were screened for eligibility. Two participants were excluded because they had difficulties using a tablet device, and one declined to participate. Seventeen participants were deemed eligible and were randomly assigned to either the intervention (n = 9) or control (n = 8) group. No participants withdrew during the study, and all 17 completed the intervention, which allowed their inclusion in the final analysis.

Flowchart of the participants in the study.

Table 1 presents a summary of the participants’ baseline demographics and clinical characteristics. The mean age of the participants was 67.36 years (SD = 11.24, range = 52–86 years), and 11 participants (65%) were male. The mean time since their stroke was 6.12 years (SD = 3.24 years).

NIHSS: National Institutes of Health Stroke Scale.

WAB-AQ: Western Aphasia Battery-Aphasia Quotient.

MMSE: Mini-Mental State Examination.

Aphasia severity was evaluated using the NIHSS. As detailed in Table 1, 82% of participants exhibited mild to moderate post-stroke aphasia, while 18% had severe post-stroke aphasia. The subtypes of aphasia varied, with anomic aphasia (29%) being the most common, followed by global aphasia (18%), motor aphasia (18%), and Broca's aphasia (18%). Educational attainment among participants varied, with 29.4% having completed primary school or lower, while 35% had a college-level education or higher.

The baseline assessment indicated that most characteristics of the intervention and control groups were comparable. However, significant differences were observed in the lexical retrieval test (P = .02) and BNT (P = .02), with the intervention group achieving higher scores in both tests. The mean score on the lexical retrieval test was 37.44 (SD = 14.07) for the intervention group and 23.88 (SD = 14.75) for the control group. The mean BNT score was 44.38 (SD = 16.49) for the intervention group and 25.06 (SD = 23.53) for the control group.

Furthermore, the WAB-AQ scores ranged from 21 to 86.9, with a mean score of 73.2 (SD = 7.3) for the intervention group and 84.3 (SD = 4.1) for the control group.

MMSE scores ranged from 26 to 30, with comparable cognitive status between the intervention (M = 28.17, SD = 2.04) and control groups (M = 28.75, SD = 1.16).

Primary outcomes

ANCOVA was conducted to compare lexical retrieval test scores between the intervention and control groups. After adjusting for baseline scores, the post-intervention mean score was significantly higher in the intervention group (M = 57.22, SD = 16.21) than in the control group (M = 28.25, SD = 17.38). The adjusted mean difference was 9.26 (SD = 2.81), with a 95% confidence interval (CI) of 3.23–15.31. The ANCOVA indicated a significant group effect (F(1,14) = 10.82, P = .005), suggesting that the intervention positively affected lexical retrieval ability. A post hoc power analysis was conducted using G*Power to evaluate the adequacy of the sample size. Based on the observed effect size (η² = 0.44, f = 0.886), a total sample of 17 participants, and an α level of .05, the statistical power was calculated to be 0.92. This indicates sufficient power to detect between-group differences in the ANCOVA model.

A Wilcoxon signed-rank test was used to compare the improvement rates of trained and untrained words within the intervention group. The results showed that trained words improved by 71.25% (SD = 13.82), whereas untrained words improved by 61.25% (SD = 8.34). This difference was statistically significant (Z = −1.98, P = .04) with a large effect size (r = 0.70; Table 2).
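The two effect sizes reported in this section can be reproduced from the accompanying statistics using standard conversions: Cohen's f = √(η² / (1 − η²)) for the ANCOVA, and r = |Z| / √N for the Wilcoxon test. The sketch below is a consistency check only. Using N = 8 for the Wilcoxon test is an assumption (the eight participants who completed training), chosen because it reproduces the reported r; the function names are illustrative.

```python
import math


def cohens_f_from_eta_squared(eta_sq: float) -> float:
    """Convert (partial) eta squared to Cohen's f."""
    return math.sqrt(eta_sq / (1 - eta_sq))


def r_from_z(z: float, n: int) -> float:
    """Effect size r for a Wilcoxon signed-rank test: |Z| / sqrt(N)."""
    return abs(z) / math.sqrt(n)


print(round(cohens_f_from_eta_squared(0.44), 3))  # 0.886
print(round(r_from_z(-1.98, 8), 2))               # 0.7 (N = 8 is an assumption)
```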

Results of ANCOVA for lexical retrieval test and Boston naming test.

Secondary outcomes

The standardized BNT scores improved in the intervention group, with a mean score increase of 7.67 points (SD = 11.14). In contrast, the control group experienced a mean decrease of 1.75 points (SD = 5.44). After adjusting for baseline scores, the mean difference was 11.04 (SD = 6.04), with a 95% CI of 11.50–45.48. According to the ANCOVA, the intervention group showed greater improvement than the control group in BNT scores, as evidenced by a significant group effect (F(1,14) = 12.94, P = .003).

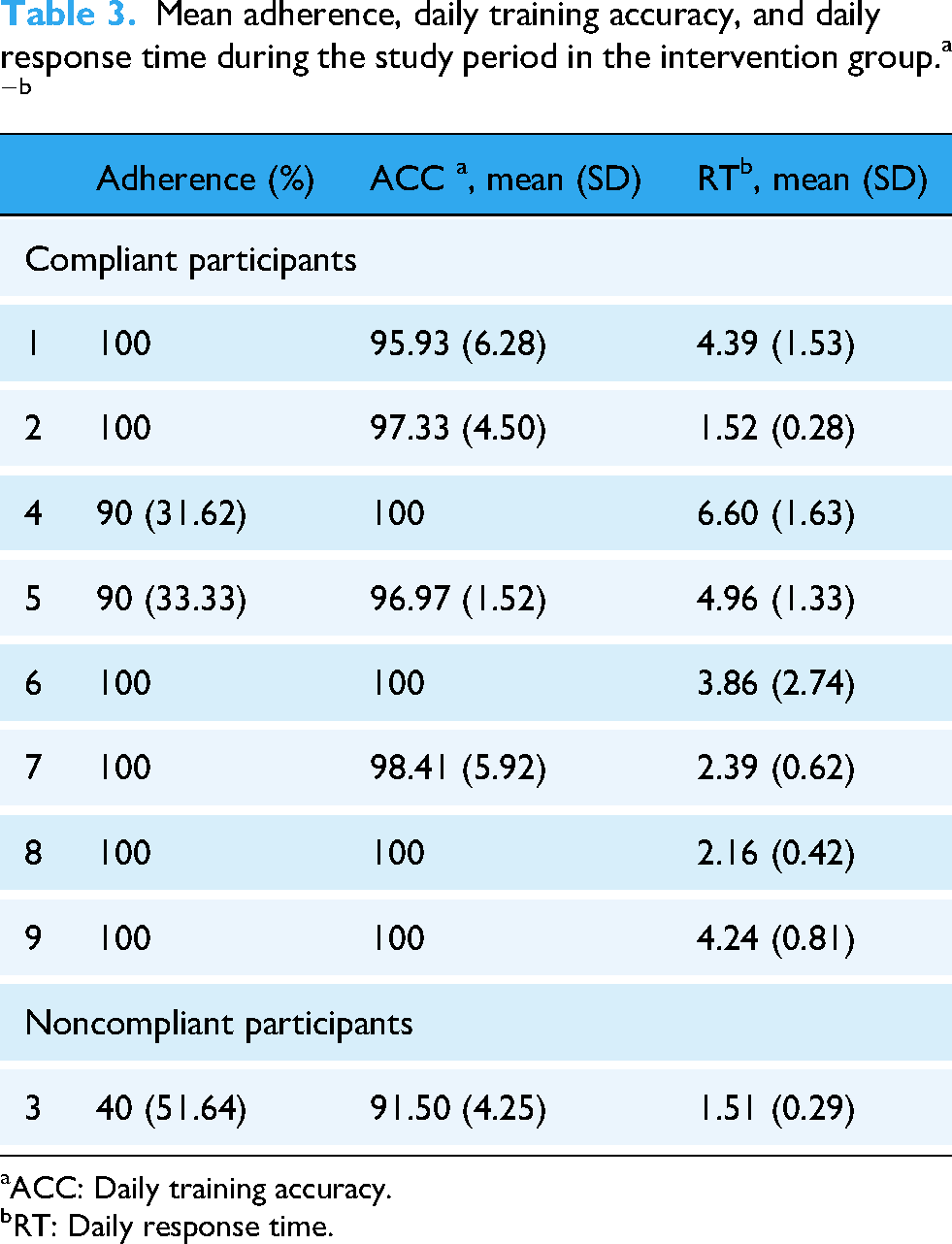

Feasibility

Table 3 presents adherence and engagement-related measures, including daily training accuracy and response times, for all participants in the intervention group. Of the nine participants, eight completed the two-week intervention, meeting the recommended training duration. Frequent contact with researchers likely supported adherence through consistent monitoring.

ACC: Daily training accuracy.

RT: Daily response time.

Figure 5 illustrates the daily training accuracy and response time for compliant participants. The Friedman test revealed a significant increase in daily accuracy over the study period (χ²(13) = 32.94, P = .002). However, response times showed high variability and did not change significantly over time (χ²(13) = 19.06, P = .12). Although overall response times did not increase significantly, three participants showed a positive correlation between response time and training duration, indicating increasing response times throughout the intervention: participant 1 (ρ = .93, P < .001), participant 6 (ρ = .67, P = .008), and participant 7 (ρ = .53, P = .05).
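The per-participant correlations above are Spearman rank correlations. A minimal pure-Python sketch is given below, assuming one mean response time per training day for a single participant; the helper names and the toy values are hypothetical and are not the study's data.

```python
from typing import List, Sequence


def average_ranks(values: Sequence[float]) -> List[float]:
    """1-based ranks, with tied values sharing the average of their ranks."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        shared = (i + j) / 2 + 1  # mean of the 1-based positions i+1 .. j+1
        for k in range(i, j + 1):
            ranks[order[k]] = shared
        i = j + 1
    return ranks


def spearman_rho(x: Sequence[float], y: Sequence[float]) -> float:
    """Spearman's rho computed as the Pearson correlation of the ranks."""
    rx, ry = average_ranks(x), average_ranks(y)
    n = len(rx)
    mx, my = sum(rx) / n, sum(ry) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(rx, ry))
    sx = sum((a - mx) ** 2 for a in rx) ** 0.5
    sy = sum((b - my) ** 2 for b in ry) ** 0.5
    return cov / (sx * sy)


# Toy data: training day index vs. mean response time in seconds (hypothetical).
days = [1, 2, 3, 4, 5, 6, 7]
response_times = [4.1, 4.6, 4.4, 5.2, 5.0, 5.9, 6.3]
print(round(spearman_rho(days, response_times), 2))
```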

(A) Daily response accuracy and (B) daily response time over the study period among compliant participants in the intervention group.
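The per-participant correlations between training day and response time are Spearman rank correlations. As a minimal sketch of how such a coefficient is computed, the code below ranks both series (averaging tied ranks) and takes the Pearson correlation of the ranks. The response times are hypothetical values chosen for illustration, not study data, and the function names are assumptions.

```python
import math

def average_ranks(values):
    """Rank values from 1 to n, giving tied values the mean of their ranks."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        # Extend j over the run of values tied with position i.
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        avg = (i + j) / 2 + 1  # mean of the 1-based positions i+1 .. j+1
        for k in range(i, j + 1):
            ranks[order[k]] = avg
        i = j + 1
    return ranks

def spearman_rho(x, y):
    """Spearman's rho: the Pearson correlation of the rank-transformed data."""
    rx, ry = average_ranks(x), average_ranks(y)
    mx, my = sum(rx) / len(rx), sum(ry) / len(ry)
    cov = sum((a - mx) * (b - my) for a, b in zip(rx, ry))
    var_x = sum((a - mx) ** 2 for a in rx)
    var_y = sum((b - my) ** 2 for b in ry)
    if var_x == 0 or var_y == 0:
        raise ValueError("rho is undefined when one series is constant")
    return cov / math.sqrt(var_x * var_y)

# Hypothetical data: training day vs. mean daily response time (seconds).
days = [1, 2, 3, 4, 5]
response_times = [3.1, 2.9, 3.8, 3.5, 4.2]
print(round(spearman_rho(days, response_times), 2))  # 0.8
```

A positive ρ here means that later training days tend to have longer response times, which is the pattern described for participants 1, 6, and 7.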

Table 4 presents the IMI scores for both groups following the intervention. The intervention group demonstrated significantly higher intrinsic motivation (M = 89.75, SD = 12.60) compared to the control group (M = 59.20, SD = 6.05; U = 36.5, Z = 2.42, P = .02). Within the subdomains, the intervention group scored significantly higher in effort (M = 23.62, SD = 4.20) than the control group (M = 12.80, SD = 2.16; U = 40, Z = 2.93, P = 0.002). A similar pattern was noted for interest, with the intervention group scoring 19.62 (SD = 5.60), compared to the control group at 9.40 (SD = 2.70; U = 36.5, Z = 2.42, P = 0.01). Additionally, the intervention group reported higher perceived choice (M = 10.37, SD = 3.02; P = 0.003) and relatedness (M = 7.75, SD = 2.25; P = 0.02). There were no significant differences between groups regarding perceived competence, pressure/tension, or value/usefulness.

IMI scores for the intervention and control groups, analyzed using the Mann-Whitney U test.

IMI: Intrinsic Motivation Inventory.

Usability

The mean SUS score was 83.88 (SD = 5.17), with the lowest score of 77.5 and the highest score of 92.5. Results showed that most users found the lexical retrieval therapy app easy to use (66% strongly agreed), felt confident while using it (66% strongly agreed), and believed that most people would be able to learn it quickly (77% strongly agreed).

The average SUS score corresponded to Grade A on the grade scale, indicating excellent usability. 56 A SUS score above 71 is generally considered acceptable, 57 with scores ranging from 74.1 to 77.1 indicating a good level of usability based on established benchmarks. 58

However, when the SUS average is recalculated excluding the non-compliant participant, the average rating is 84.68 (SD = 4.89), which reflects an A+ grade, indicating excellent or best-imaginable usability. This suggests that lower usability perception may have influenced adherence. The average of the answers for the different items is shown in Table 5.

SUS scores for the intervention group.
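For readers unfamiliar with SUS scoring, the following sketch shows the standard procedure for converting ten 1-to-5 item responses into a 0-to-100 score: odd-numbered items contribute (response − 1), even-numbered items contribute (5 − response), and the sum is multiplied by 2.5. The function name and the example responses are hypothetical; no participant data are reproduced here.

```python
def sus_score(responses):
    """Convert one completed 10-item SUS questionnaire to a 0-100 score."""
    if len(responses) != 10 or any(r not in range(1, 6) for r in responses):
        raise ValueError("expected ten responses, each between 1 and 5")
    total = 0
    for item, response in enumerate(responses, start=1):
        if item % 2 == 1:
            total += response - 1   # odd items are positively worded
        else:
            total += 5 - response   # even items are negatively worded
    return total * 2.5

# Hypothetical respondent who answers 3 (neutral) on every item.
print(sus_score([3] * 10))  # 50.0
```

Group-level values such as the mean and SD reported above are then computed over the per-participant scores.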

Discussion

This study evaluated the feasibility and preliminary effectiveness of a self-administered lexical retrieval therapy app designed for individuals with post-stroke aphasia. The intervention group demonstrated significant improvements in naming ability compared to the control group, suggesting that a self-administered lexical retrieval therapy app can effectively support lexical retrieval. Additionally, participants in the intervention group reported higher levels of intrinsic motivation, particularly in terms of effort and interest, indicating that self-administered training helped maintain engagement.

Previous studies have explored digital therapy apps in aphasia, highlighting their potential benefits in speech-language therapy.31,33 However, most focused on clinician-assisted interventions, providing limited insight into how individuals with aphasia engage in self-administered therapy.33,43 This study addressed this gap by evaluating usability and feasibility in an independent learning context, offering valuable insights into engagement patterns and training behaviors.

The central theoretical basis of our app is effortful learning, which distinguishes it from previous studies that predominantly employed errorless learning in digital therapy for aphasia. For example, the Big CACTUS trial adopted an errorless therapy-based app, which has been shown to produce positive learning outcomes. 25 However, this approach has been criticized for its passive nature and the risk of inducing boredom due to its repetitive and constrained structure.59,60 In contrast, errorful learning fosters active engagement and deeper processing through effortful retrieval. 41 Empirical evidence supports that effortful lexical retrieval therapy enhances naming performance in individuals with post-stroke aphasia.4,10,13

Effortful lexical retrieval therapy in self-administered settings presents unique challenges. Negative feedback from the system may adversely affect patient recovery.9,10 In particular, misclassification of speech attempts can lead to frustration and may increase the risk of users disengaging from the intervention. 61 To address these challenges, we developed a self-administered lexical retrieval therapy app grounded in the principles of effortful learning.

Traditional SLT cueing strategies rely on static, therapist-provided cues. In contrast, this app utilized a dynamic, system-guided cueing hierarchy that automatically adjusted the specificity of cues based on user responses, aligning with the principles of increasing cueing. This structured cueing hierarchy likely enhanced user engagement and improved task completion rates, consistent with previous findings on adaptive cueing strategies. 62 Additionally, the inclusion of multiple-choice questions allowed participants to self-correct errors, which is a vital aspect of effortful learning and differentiates this app from conventional lexical retrieval therapy apps.
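To make the idea of an increasing cueing hierarchy concrete, the sketch below steps through progressively more specific cues until the response matches the target word. It is an illustrative assumption rather than the app's implementation: the cue level names, their order, and the exact-match comparison are all hypothetical.

```python
# Hypothetical cue levels, ordered from least to most specific.
CUE_LEVELS = ["no cue", "semantic cue", "phonemic cue", "multiple choice"]

def run_trial(target, responses):
    """Step through the cue levels until a response matches the target.

    responses holds the user's attempt at each successive cue level.
    Returns (solved, level), where level is the last cue level presented,
    or None if no attempt was made.
    """
    last_level = None
    for level, response in zip(CUE_LEVELS, responses):
        last_level = level
        if response.strip().lower() == target.strip().lower():
            return True, level
    return False, last_level

# The user fails without a cue, then succeeds after the semantic cue.
print(run_trial("apple", ["pear", "Apple"]))  # (True, 'semantic cue')
```

Recording the level at which each word is retrieved gives a simple per-item measure of how much support was needed, which could in turn inform item scheduling.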

By adhering to the principles of effortful learning, this app integrated feedback mechanisms to help users distinguish correct answers from mistakes and reinforce correct responses. Previous research indicates that feedback after retrieval failures improves learning outcomes. 63 Unlike conventional SLT approaches, which often provide praise only for correct answers, this app employed motivational feedback (e.g., “It's okay to make mistakes” or “Failure teaches success”) based on growth mindset principles.64,65 This feedback strategy may have helped mitigate frustration and sustain motivation, particularly for individuals with post-stroke aphasia who often face retrieval challenges.

Based on this approach, we evaluated the app's feasibility, usability, and preliminary effectiveness. Participants in the intervention group completed two weeks of training with the lexical retrieval therapy app. They exhibited significant improvements on the lexical retrieval test compared to the control group. These findings align with previous research that supports the benefits of structured retrieval-based interventions in aphasia therapy.2–4 Notably, the effects of the lexical retrieval therapy app were more pronounced for trained words. Improvements were also observed in untrained words, suggesting the potential for generalization of naming ability. 66 This finding is supported by the significant improvement in the intervention group's performance on the BNT. Therefore, our lexical retrieval therapy app demonstrated the potential for generalized naming improvement. However, prior findings suggest that improvements in trained words may not necessarily generalize to everyday communication in individuals with aphasia. 27 Thus, future research is needed to investigate whether these effects extend to functional, real-life conversational contexts.

This study also explored the relationship between retrieval effort and training outcomes. Most participants in the intervention group maintained high accuracy throughout the study period (over 90%), although response times varied. Some participants exhibited an upward trend in response time, which may indicate greater cognitive effort in lexical retrieval. These participants also showed above-average improvements in lexical retrieval tests and BNT scores, supporting the hypothesis that sustained effortful retrieval enhances therapy outcomes.11,13,61 Longer response times in self-administered settings might signify greater cognitive effort in retrieval without relying on cues. 67 Conversely, excessively short response times may reflect superficial engagement. 68 These findings align with prior research suggesting that increased effort enhances focus and performance. 69 The variability in response times underscores the concept of “optimal response time,” which emphasizes the balance between accuracy and retrieval speed as a critical factor in learning efficiency. 68 We extend these concepts by suggesting that the balance between accuracy and response time may also influence perceived usability. The participant who reported the lowest SUS score also showed the lowest accuracy and extremely short response times within the intervention group. This finding implies that optimizing learning efficiency may be critical to enhancing usability for individuals with aphasia.

In terms of adherence, a total of 89% of participants in the intervention group completed the two-week intervention as intended. The only non-compliant participant was the one who reported the lowest usability score. Meanwhile, participants expressed differing perceptions of the training intensity after intervention. One participant found the training challenging, while another considered it insufficiently difficult. This discrepancy may stem from adaptive item scheduling, as the app prioritized harder, less accurately recalled words using Anki's spaced retrieval algorithm.4,70,71 While spaced retrieval has effectively reinforced word learning in prior research, its impact on training engagement in digital aphasia therapy warrants further investigation. These findings suggest that digital therapy should incorporate flexible adjustments in training intensity to accommodate diverse patient needs and optimize adherence.
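The scheduling behavior described above, in which poorly recalled words return sooner, can be illustrated with a simplified SM-2-style update, the family of algorithms from which Anki's scheduler is derived. This is a sketch under stated assumptions and is neither Anki's actual implementation nor the app's code: the `Card` fields, the 0-to-5 quality scale, and the default ease of 2.5 follow the classic SM-2 description.

```python
from dataclasses import dataclass

@dataclass
class Card:
    word: str
    interval_days: int = 0   # days until the next review
    repetitions: int = 0     # consecutive successful reviews
    ease: float = 2.5        # multiplier applied to later intervals

def review(card: Card, quality: int) -> Card:
    """Update a card after one review; quality runs from 0 (fail) to 5 (easy)."""
    if not 0 <= quality <= 5:
        raise ValueError("quality must be between 0 and 5")
    if quality < 3:
        # Failed recall: restart the sequence so the word comes back soon.
        card.repetitions = 0
        card.interval_days = 1
        return card
    if card.repetitions == 0:
        card.interval_days = 1
    elif card.repetitions == 1:
        card.interval_days = 6
    else:
        card.interval_days = round(card.interval_days * card.ease)
    card.repetitions += 1
    # Harder successful recalls lower the ease; it never drops below 1.3.
    delta = 0.1 - (5 - quality) * (0.08 + (5 - quality) * 0.02)
    card.ease = max(1.3, card.ease + delta)
    return card

card = Card("apple")
for q in (5, 5, 5, 1):
    review(card, q)
    print(card.interval_days)
```

Because failed items reset to a one-day interval, a participant with many retrieval failures would see a denser, harder session than one with few, which is one plausible source of the differing perceptions of training intensity.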

Limitations

This study has some limitations. First, as a pilot study, the small sample size limits the generalizability of the findings. Despite efforts to maintain demographic homogeneity, baseline differences in lexical retrieval and BNT scores were observed between the intervention and control groups. This imbalance suggests that randomization did not fully balance the groups, likely due to the small sample size. These baseline differences may have influenced the observed improvements, particularly in primary outcome measures, and should be considered when interpreting the results. Although post-hoc power analysis indicated sufficient power to detect large effects, the small sample size may still increase the risk of effect size overestimation.

In addition, the potential for self-selection bias should be acknowledged, as participants more comfortable with technology may have been more likely to enroll. Future studies should address these issues by including larger sample sizes and broader recruitment strategies to improve group comparability and reduce potential bias.

Second, the two-week intervention period was relatively short, limiting the ability to assess the long-term effects of the digital therapy app intervention. A longer intervention period and follow-up assessments are necessary to determine whether improvements in naming ability are sustained over time. Future studies should explore the optimal duration of digital therapy and its long-term impact on lexical retrieval.

Third, this study focused on improving naming ability but did not evaluate whether these improvements resulted in functional communication gains in daily life. Naming deficits are a fundamental symptom of aphasia; however, successful naming in therapy does not necessarily reflect enhanced communication in real-world situations. Future research should investigate whether gains in lexical retrieval lead to significant improvements in conversational skills and everyday communication tasks.

Fourth, while this study evaluated the feasibility and usability of the lexical retrieval therapy app, it did not assess patient satisfaction beyond motivation metrics. Although intrinsic motivation scores indicated high engagement, further qualitative research is needed to better understand user experiences, perceived challenges, and preferences in self-administered digital therapy. Moreover, while we observed potential relationships between accuracy, response time, usability, and adherence, it was difficult to determine whether these associations stemmed from specific system features or individual participant characteristics. Therefore, future studies should adopt an explanatory sequential mixed methods approach, incorporating qualitative interviews to help contextualize and explain patterns observed in the quantitative data.

By addressing these limitations, future research can refine the implementation of digital interventions and demonstrate their effectiveness in treating aphasia.

Conclusions

This study provides preliminary evidence supporting the feasibility and effectiveness of a self-administered lexical retrieval therapy app for individuals with post-stroke aphasia. Although digital interventions have shown promise in aphasia treatment, research on self-administered, tablet-based lexical retrieval therapy remains limited. This study addresses that gap by designing a therapy based on effortful learning principles, building on previous research on digital lexical retrieval treatments, and evaluating the effects of the app on naming ability for both trained and untrained lexical items.

The results revealed significant improvements in naming accuracy for trained words, with generalization to untrained words. This finding suggests that structured effortful retrieval can enhance lexical retrieval performance in self-administered settings. The app facilitated structured engagement by incorporating real-time corrective cues and multiple-choice tasks, while maintaining an optimal balance between effort and success. These features enabled consistent participation without overwhelming users, which contributed to sustained engagement and motivation. Furthermore, the app's self-administered format addresses accessibility challenges for individuals with limited access to clinical support, providing an alternative to traditional therapist-led interventions.

Future research should investigate the long-term retention of naming improvements and adherence patterns among a larger participant group with extended follow-up periods. Additionally, exploring adaptive intervention strategies, such as real-time difficulty adjustments and personalized feedback mechanisms, may further enhance engagement and optimize therapeutic outcomes. Large-scale controlled studies are essential to validate these findings and establish tablet-based lexical retrieval therapy as a scalable approach for post-stroke aphasia treatment.

Footnotes

Acknowledgments

This research was supported by the Institute of Information & Communications Technology Planning & Evaluation grant funded by the Korean government (H0906-24-1018) to support the digital transformation of AI-based healthcare systems. This research was supported by a grant from the Korea Health Technology R&D Project through the Korea Health Industry Development Institute (KHIDI), funded by the Ministry of Health & Welfare, Republic of Korea (grant number: RS-2023-00262087).

Ethics approval and consent

This study was approved by the Yonsei University institutional review board (approval no. 7001988-202412-HR-2555-03) and conducted in accordance with the Declaration of Helsinki. No adverse events or unintended effects were reported during the study.

Consent for publication

None.

Author contributions

All authors contributed significantly to this study. KSB and YK conceived and designed the study. KSB, MK, and SC conducted the investigation and collected data. KSB, MK, and SC analyzed and interpreted the data. KSB and JWK developed the study methodology. KSB drafted and revised the paper. MK and YK critically reviewed the paper. YK and JK secured funding for this study. All authors have reviewed and approved the final version of the paper for submission.

Funding

National IT Industry Promotion Agency, Korea Health Industry Development Institute, (grant number H0906-24-1018, RS-2023-00262087).

Declaration of conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Availability of data and materials

The original contributions presented in this study are included in the article. Further inquiries can be directed to the corresponding author(s).