Abstract

Background

Self-care mobile health (mHealth) apps empower users to manage their health independently by supporting symptom tracking, medication adherence, and lifestyle changes. Given the limitations of mobile platforms, such as small screens and limited input capabilities, usability is a critical factor influencing the successful adoption of these apps.

Methods

This article presents a systematic review of user-based usability testing practices in self-care mHealth applications. A total of 68 primary studies were retrieved and analyzed from the Scopus database, published between 2014 and 2024, following established systematic review protocols.

Results

The review revealed a growing research interest in usability testing for self-care mHealth apps. Usability testing commonly employs mixed-method approaches, combining standardized questionnaires, such as the System Usability Scale, with qualitative methods (think-aloud protocols and interviews). A diverse group of participants, including patients and healthcare professionals, was used for testing. Testing was conducted in both controlled (labs and hospitals) and uncontrolled (homes) environments. The core usability attributes, ease of use, engagement, and satisfaction, were widely used.

Conclusion

The review offers actionable insights for researchers seeking to improve the usability of self-care applications. Further research is needed in several directions, including justifying participant sample sizes, expanding the scope of usability testing to include underexplored attributes, and conducting longitudinal usability studies.

Introduction

The rapid adoption of mobile health (mHealth) technologies has revolutionized the delivery of healthcare services by enabling patients to take charge of their health through self-care apps.1,2 Self-care apps help facilitate health-related activities such as managing chronic conditions and promoting a healthy lifestyle.3,4 These apps also provide easy access to reliable health information, helping patients better understand symptoms, medications, and lifestyle changes needed to improve their quality of life.5–7 This reduces the need for frequent visits to clinics, saving time and cost. 8 Furthermore, these apps can enhance communication by enabling data sharing with healthcare providers, allowing doctors to track patient progress remotely and deliver personalized care.

With the increasing reliance on mHealth apps, ensuring their usability has become important for their success and acceptance.3,9 Usability refers to how effectively, efficiently, and satisfactorily users can achieve their goals. 10 Usability testing ensures the application is simple enough for diverse user groups, including people with limited digital literacy.11,12 It provides valuable feedback from users, which can be used to improve the app. 11 Poor usability can lead to user frustration and even abandonment. In the case of self-care apps, usability is important not only to retain users but also to ensure that these apps positively impact patients’ health outcomes.9,13,14 These apps cater to diverse populations, including older adults with varying levels of digital literacy, and usability challenges can disproportionately affect those who need them most. 14 Self-care mHealth apps differ from clinical mHealth applications in their objectives. While clinical apps are typically designed for use under medical supervision, self-care apps are often used independently by individuals seeking to manage their own health and well-being. This calls for usability testing approaches tailored to self-care apps.

Motivated by this, a systematic review was conducted to establish a body of knowledge on the usability testing practices of self-care apps. Usability testing can be classified into three broad categories: expert-based (heuristic evaluation and cognitive walkthrough), user-based (testing with potential users), and automated (click stream analysis). In this research, our focus is on user-based usability testing practices. User-based usability testing is crucial because it evaluates a product with real users, ensuring it meets their needs and expectations. The review consolidates information on the state of research, participants, data collection instruments, test environments, and usability attributes used. Systematic reviews are important because they can provide evidence-based discussion on the topic of interest.15,16 By consolidating findings from multiple studies, systematic reviews can help identify best practices in the domain. 17 This systematic review will make a significant contribution to the domain of self-care mHealth applications. By synthesizing existing usability testing practices, the study aims to inform the development of a framework specifically for testing self-care apps. This framework can offer developers practical guidelines for conducting usability testing.

The rest of the article is organized as follows: the next section discusses usability and its importance in the field of mHealth apps. The Methodology section describes how the research was designed and executed. The Results section analyses the extracted data. The Discussion section presents the findings and implications of the study. Finally, the article concludes by suggesting directions for further investigation in the field.

Usability

Usability refers to the ease with which users can interact with a system to achieve their goals. 10 Poor usability can result in user frustration and abandonment of the application.10,11,18,19 In mHealth apps, usability ensures that diverse user populations, including those with limited technical skills, older adults, and individuals with disabilities, can use the app easily.20,21 Lewis 11 defined usability testing methods as formative and summative. Formative methods, such as heuristic evaluations, cognitive walkthroughs, and user testing, are conducted during the development phase to identify and correct usability issues, while summative methods include analytics-based studies to measure the overall user experience and satisfaction.

mHealth apps encounter unique usability challenges due to their diverse user base and complex functionality.22–24 These challenges include cognitive overload, inconsistent interfaces, accessibility barriers, and data privacy concerns.25,26 Cognitive overload is widespread, as many apps present excessive information that overwhelms users and hinders their ability to concentrate on critical tasks.27,28 Inconsistent interfaces involve variations in design elements across screens that confuse users and decrease efficiency. 29 Accessibility barriers affect older adults, visually impaired users, and individuals with low literacy levels.21,30–32 Data privacy concerns persist, with users often reluctant to provide sensitive health information due to poorly designed privacy features.33,34

Several strategies are in place to address these challenges. User-centered design involves end users throughout the development process, ensuring that apps meet their needs and preferences.35,36 Simplified interfaces with clear, minimalistic designs reduce cognitive load and improve navigation. 29 Accessibility features, such as text resizing, voice commands, and multilingual support, make apps more inclusive.32,37,38

Methodology

The systematic review was divided into three stages: planning, conducting, and reporting. The planning stage included developing the review protocol. The conducting stage included selecting and reviewing the studies. The reporting stage involved writing up the review and sharing the findings with the research community. Figure 1 illustrates the systematic review process adopted in this article.

Systematic review process.

Planning

Data sources

The search was conducted using the Scopus database. The Scopus database is recognized as the largest abstract and citation repository of peer-reviewed literature. It is widely regarded for its comprehensive coverage of high-quality research across a broad range of disciplines. The use of the Scopus database for the search ensured access to a credible collection of indexed publications. Journal articles and conference papers were included as data sources to ensure a balanced representation of research on usability testing for self-care apps.

Research questions

The goal of the review is to explore usability testing practices for self-care apps. Based on the goal, research questions were generated. Table 1 lists the research questions. Addressing these questions will provide a comprehensive understanding of usability testing practices for self-care apps.

Research questions.

Search strategy

This systematic review adopted a comprehensive search strategy to identify relevant studies. Keywords included terms such as “usability testing,” “usability evaluation,” “self-care,” “mHealth apps,” and “mobile health apps.” Boolean operators (e.g. AND, OR) were utilized to refine search results, ensuring the inclusion of a wide range of studies. Searches were limited to articles published between 2014 and 2024 to capture recent results from the past 10 years. The search was executed between 10 December 2024 and 20 December 2024. Additionally, reference lists of included articles were manually screened to identify any potentially relevant studies that might have been overlooked.
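The exact search string is not reproduced in the article, so the following is only a minimal sketch of how the listed keywords and the publication window could be combined with Boolean operators. The grouping of terms (usability terms in one OR group, self-care and mHealth terms in another) and the helper name `build_query` are assumptions made for illustration; `TITLE-ABS-KEY` and `PUBYEAR` are Scopus advanced-search field codes.

```python
def build_query(usability_terms, domain_terms, start_year, end_year):
    """Join terms with OR inside each group and AND between the groups."""
    def any_of(terms):
        # Quote each phrase so it is matched as a phrase, then OR them together.
        return "(" + " OR ".join(f'"{t}"' for t in terms) + ")"

    keywords = f"TITLE-ABS-KEY({any_of(usability_terms)} AND {any_of(domain_terms)})"
    # Scopus year filters are exclusive bounds, so widen the window by one year each side.
    years = f"PUBYEAR > {start_year - 1} AND PUBYEAR < {end_year + 1}"
    return f"{keywords} AND {years}"


# Keywords taken from the search strategy; the grouping is an assumption.
USABILITY_TERMS = ["usability testing", "usability evaluation"]
DOMAIN_TERMS = ["self-care", "mHealth apps", "mobile health apps"]

query = build_query(USABILITY_TERMS, DOMAIN_TERMS, 2014, 2024)
print(query)
```

Keeping the term lists separate from the query builder makes it easy to rerun the same search against a wider year range or an extended keyword list in a future update of the review.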

Inclusion and exclusion criteria

The inclusion criteria for this review were as follows:

IC1: Studies focusing on mHealth apps designed for self-care.
IC2: User-based usability testing of self-care apps has been reported in the article.
IC3: Studies provide empirical data on participants, data collection instruments, usability attributes, and so on.

The exclusion criteria for this review were as follows:

EC1: Studies focusing on mHealth apps but unrelated to self-care.
EC2: Reviews, opinion pieces, or editorials without empirical data.
EC3: Studies not published in English.

IC1 was defined to include only work pertinent to the research and centered on self-care apps. IC2 ensured that only papers on usability testing of self-care apps were included. We manually examined the title and abstract of every retrieved paper to apply IC1 and IC2. To apply IC3, the complete text of each paper was reviewed to make sure all requirements were met. Papers that do not contribute to usability testing of self-care apps were excluded by EC1 and EC2. Finally, EC3 restricted the review to English-language papers.

Conducting

Study selection

This review followed the Preferred Reporting Items for Systematic Reviews and Meta-Analyses (PRISMA) guidelines to ensure transparency and reproducibility. A PRISMA flowchart was included to illustrate the study selection process, from initial search results to the final inclusion of studies. The flowchart in Figure 2 details the number of records identified through database searches, records screened, full-text articles assessed for eligibility, and studies included in the review.

PRISMA flowchart.

Quality assessment

The quality of the included studies was assessed manually using a checklist tailored to the goals of this systematic review. Each study was expected to use a suitable design for usability testing (e.g. task-based testing, surveys, and interviews), provide sufficient information about participant demographics and sample size, describe the data collection instruments and procedures in detail, and clearly report and interpret the usability findings.

Data extraction

Data from the selected studies were systematically extracted using a predefined template to ensure consistency and accuracy throughout the review process. Extracted information included publication trends (year of publication and sources), and contextual details about participants, such as their age and other relevant characteristics (e.g. familiarity with self-care technologies), as these factors can significantly influence usability outcomes. In addition, data collection instruments, such as surveys, interviews, think-aloud protocols, or automated tracking tools, were documented to understand the methods used to gather user feedback. Information about the test environment was also recorded, including whether testing occurred in a controlled lab setting, a real-world environment, or remotely, as the context can impact user behavior and the generalizability of findings. Finally, usability attributes were extracted to identify the key dimensions of usability assessed in the studies. These attributes included metrics such as effectiveness, efficiency, user satisfaction, and so on.
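The predefined template itself is not shown in the article. As a rough illustration of what such a template might look like, the sketch below models one extraction record with the fields named above. All field names, the allowed environment labels, and the example study are hypothetical and are not taken from the reviewed studies.

```python
from dataclasses import dataclass, field
from typing import List, Optional

# Hypothetical labels mirroring the three environment types discussed in the review.
ALLOWED_ENVIRONMENTS = {"controlled", "uncontrolled", "both"}


@dataclass
class ExtractionRecord:
    study_id: str
    year: int                             # publication year
    source_type: str                      # "journal" or "conference"
    participant_groups: List[str] = field(default_factory=list)
    sample_size: Optional[int] = None     # None when the study does not report it
    instruments: List[str] = field(default_factory=list)
    environment: str = "controlled"
    attributes: List[str] = field(default_factory=list)

    def validate(self) -> List[str]:
        """Return a list of problems; an empty list means the record is usable."""
        problems = []
        if not 2014 <= self.year <= 2024:
            problems.append("year outside the 2014-2024 review window")
        if self.source_type not in {"journal", "conference"}:
            problems.append("unknown source type")
        if self.environment not in ALLOWED_ENVIRONMENTS:
            problems.append("unknown test environment")
        if self.sample_size is not None and self.sample_size < 1:
            problems.append("sample size must be positive")
        return problems


# A made-up record, used only to show how the template would be filled in.
example = ExtractionRecord(
    study_id="S01",
    year=2021,
    source_type="journal",
    participant_groups=["patients"],
    sample_size=20,
    instruments=["SUS", "interview"],
    environment="both",
    attributes=["ease of use", "satisfaction"],
)
print(example.validate())
```

Validating each record at entry time is one way to keep the extracted data consistent across reviewers before any counts are tallied.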

Results

RQ1. What is the current publication trend?

In recent years, there has been a surge in usability research emphasizing the importance of usability in self-care apps. As shown in Figure 3, the number increased from just one or two publications per year in the early years to a high of 10 publications in 2021 and 2022, illustrating a significant upward shift. This growth aligns with the broader adoption of mHealth technologies, particularly accelerated by the COVID-19 pandemic, which emphasized the need for accessible and effective self-care tools. The studies were also published in high-impact journals such as the Journal of Medical Internet Research (JMIR), which frequently publishes research on this topic. A comparison of publication venues shows that journal papers (n = 63) significantly outnumber conference papers (n = 5). This suggests that usability research on self-care apps is predominantly published in journals, likely due to the need for comprehensive studies with rigorous methodologies, detailed analyses, and broader discussions. Journal publications typically undergo a more extensive peer-review process, allowing for higher-quality contributions to the field. In contrast, the limited number of conference papers indicates that shorter or more experimental findings are less commonly presented at conferences, and there may be fewer conferences on this topic.

Year-wise distribution of studies on self-care apps.

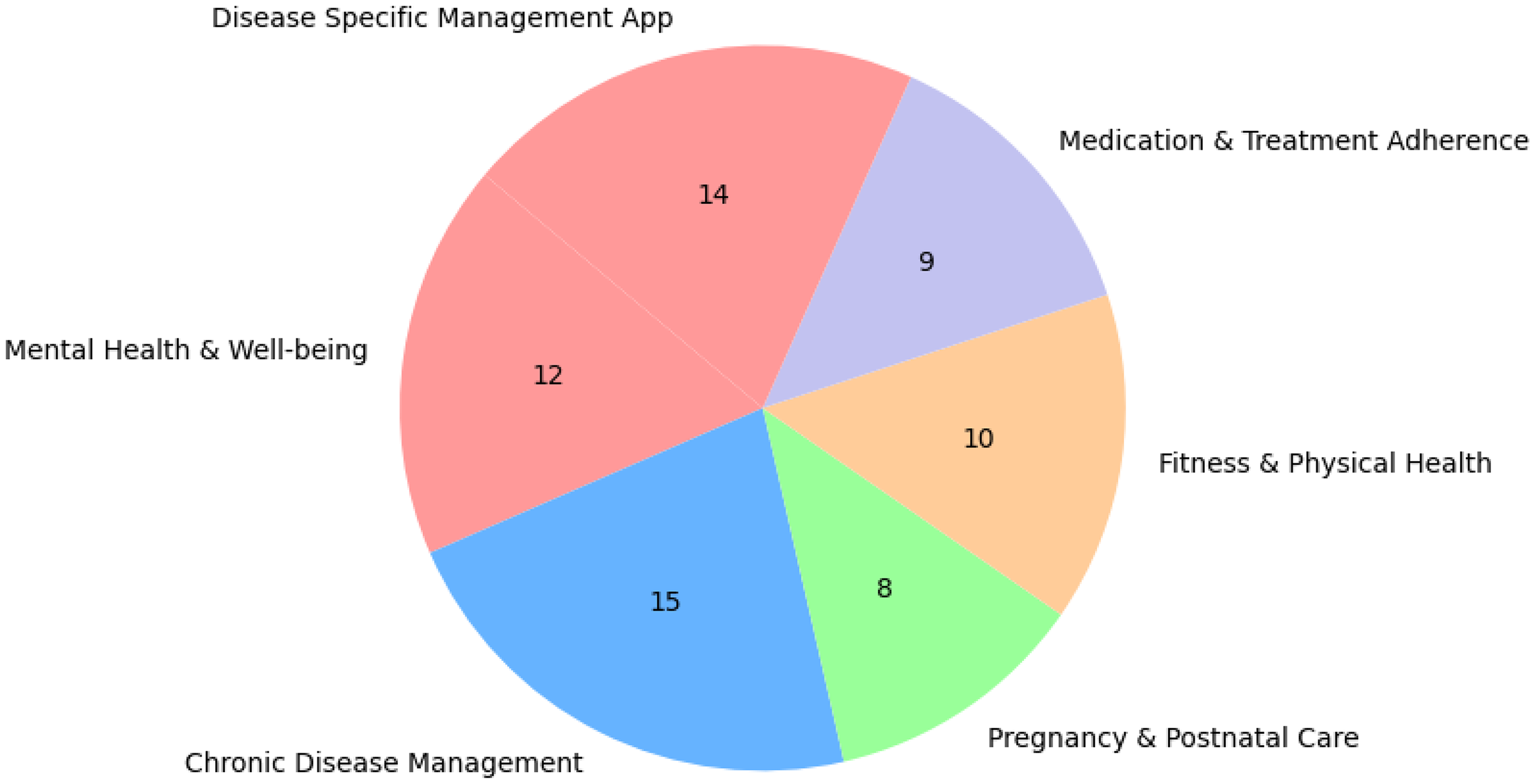

The types of self-care apps studied in usability include mental health and well-being apps (n = 12), which receive significant attention. Fitness and physical health apps (n = 10), which include exercise tracking and nutrition management, are also widely studied. Additionally, chronic disease management apps (n = 15), particularly for conditions such as diabetes and cardiovascular diseases, have been a focal point of usability testing due to their critical role in patient self-care. Pregnancy and postnatal care apps (n = 8) are also growing, addressing maternal health and early childhood care. Furthermore, medication and treatment adherence apps (n = 9), such as those supporting chemotherapy patients and cardiac rehabilitation, highlight the role of mobile health solutions in improving long-term health outcomes. Disease-specific management apps (n = 14), including those focused on Parkinson's disease, asthma, and liver cirrhosis, further demonstrate the breadth of self-care applications under investigation. Figure 4 shows the different types of self-care apps used for analysis in this article.
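As a quick consistency check on the counts reported above, the short sketch below confirms that the six categories add up to the 68 reviewed studies and derives each category's share. The dictionary keys are shortened labels chosen here for readability; the counts are those given in the text.

```python
TOTAL_STUDIES = 68

# Category counts as reported in the text.
app_categories = {
    "chronic disease management": 15,
    "disease-specific management": 14,
    "mental health and well-being": 12,
    "fitness and physical health": 10,
    "medication and treatment adherence": 9,
    "pregnancy and postnatal care": 8,
}

# Every study is assigned to exactly one category, so the counts must sum to the total.
category_sum = sum(app_categories.values())
assert category_sum == TOTAL_STUDIES

# Share of each category among all reviewed studies.
shares = {name: n / TOTAL_STUDIES for name, n in app_categories.items()}
for name, n in sorted(app_categories.items(), key=lambda kv: kv[1], reverse=True):
    print(f"{name}: {n} ({shares[name]:.1%})")
```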

Categorical distribution of studies on self-care apps.

RQ2. Who are the typical participants in usability testing?

Diverse groups of participants, including patients and healthcare professionals, took part in usability testing. Patients (n = 56) were often aged between 18 and 65 years, with sample sizes ranging from three to 421 participants. Participants were recruited via convenience or purposive sampling, with inclusion criteria such as smartphone ownership, language proficiency, and willingness to engage in usability testing. Gender distribution varied, with many studies reporting a higher proportion of female participants, and education levels ranged from primary to tertiary. Healthcare professionals (n = 25) included dietitians and nurses, with sample sizes ranging from two to 24; they contributed based on their expertise, often with years of experience in their respective fields. Recruitment of these experts typically occurred through hospitals or professional networks. Age diversity was often underreported or narrowly focused. Most studies limited participant recruitment to the working-age population (18–65 years), excluding older adults, who are a key demographic for many self-care apps, particularly those targeting chronic disease management, medication adherence, or rehabilitation. Only a minority of studies explicitly included participants aged 65 and above, and even fewer conducted usability analysis tailored to age-related factors such as reduced dexterity, visual impairments, or digital literacy. A few studies adopted a mixed approach by combining patients and healthcare professionals (n = 12), with sample sizes ranging from eight to 303 participants. This approach facilitated a more comprehensive evaluation.

In summary, sample sizes ranged from two participants to large evaluations involving hundreds of participants. While ethical approvals and informed consent were consistently addressed, many studies lacked justification for their sample sizes and omitted diversity metrics like ethnicity or socioeconomic background. In terms of age and gender diversity, many studies focused on adult populations between 18 and 65 years, with limited inclusion of older adults (65+), despite their importance in self-care. Gender representation varied; few studies provided usability findings disaggregated by gender, and non-binary or gender-diverse participants were rarely reported. Figure 5 presents a Venn diagram of participant selection.

Venn diagram on participant distribution.

RQ3. What methods are used to collect data during usability testing?

The studies employed various data collection techniques, including questionnaires, observation, focus groups, and think-aloud protocols. Questionnaires were among the most frequently employed methods for usability evaluation (n = 46). These questionnaires were often implemented as pre-test and post-test tools. Pre-test questionnaires generally gathered user demographics, prior experiences, and familiarity with digital tools, while post-test questionnaires focused on assessing usability. A wide range of standardized scales was incorporated into these questionnaires, including the System Usability Scale (SUS), Mobile Application Rating Scale (MARS), and mHealth App Usability Questionnaire (MAUQ). Other frameworks used to develop questionnaire items included ISO/IEC 25010 and Nielsen's usability heuristics.
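Because the SUS is the most widely cited of these scales, a short sketch of its conventional scoring may help readers interpret reported scores. The function below follows the standard SUS procedure (ten items rated 1 to 5, odd-numbered items scored as the response minus 1, even-numbered items as 5 minus the response, and the sum multiplied by 2.5). The function name `sus_score` is an illustrative choice, and the reviewed studies may have reported or adapted the scale differently.

```python
def sus_score(responses):
    """Compute a 0-100 SUS score from ten item responses on a 1-5 scale."""
    if len(responses) != 10:
        raise ValueError("SUS requires exactly ten item responses")
    if any(r not in range(1, 6) for r in responses):
        raise ValueError("each response must be an integer from 1 to 5")

    total = 0
    for item_number, response in enumerate(responses, start=1):
        if item_number % 2 == 1:
            # Odd-numbered items are positively worded: higher agreement is better.
            total += response - 1
        else:
            # Even-numbered items are negatively worded: lower agreement is better.
            total += 5 - response
    # Each item contributes 0-4 points, so the raw sum (0-40) is scaled to 0-100.
    return total * 2.5


# A participant answering the neutral midpoint on every item scores 50.
print(sus_score([3] * 10))
```

The alternating item polarity is the reason raw item means cannot simply be averaged; studies that report a single SUS value are normally reporting this transformed score.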

Observation was frequently used alongside other methods (n = 28). Observations were conducted by researchers, clinicians, and usability experts, capturing real-time interactions. Tools like think-aloud protocols, video recordings, and task performance metrics were used to collect comprehensive observational data. This approach facilitated the collection of detailed observation data across both user-based and expert-based evaluations. The Delphi method was also employed in specific instances to observe expert interactions.

Interviews were conducted as supplementary methods following primary data collection activities such as surveys or usability testing (n = 16). Several studies utilized interviews to gather deeper insights into user experiences, with eight (8) papers highlighting interviews as a secondary method and two (2) employing interviews as a primary technique. The interview formats varied from semi-structured to fully structured sessions, often incorporating open-ended questions. Most interviews were conducted in informal or user-friendly environments to facilitate honest feedback, although expert interviews were occasionally more formal and focused. Interviews were typically audio-recorded or documented with observation notes and analyzed using thematic or content analysis to identify patterns and actionable feedback. One (1) study also applied the MoSCoW analysis framework to its interview data. Group interviews were commonly used for general usability evaluations, while individual interviews with medical practitioners and patients offered detailed insights.

Focus groups and think-aloud protocols were also among the data collection techniques employed in the studies (n = 11). In eight (8) studies, focus groups were supplemented with quantitative methods such as usability scales or task performance metrics. Three (3) studies utilized focus groups as a standalone method, particularly during the early design or refinement phases of app development. Six (6) studies employed think-aloud protocols to capture participants’ thought processes while interacting with the applications. This method was mostly conducted with general users (n = 5), highlighting usability challenges such as interface inconsistencies. One (1) study combined think-aloud protocols with multi-criteria decision-making approaches to rank the identified usability challenges. In one (1) study, patients verbalized their experiences, identifying barriers like unclear labels, while another combined think-aloud protocols with video recordings to analyze user behavior during task completion. Only one (1) study used think-aloud protocols with experts, gathering domain-specific feedback on clinical usability. Figure 6 highlights the frequency of data collection techniques across the studies reviewed.

Frequency of data collection techniques.

The choice of data collection method can significantly influence the quality and type of usability insights obtained. Questionnaires offered standardized, scalable data ideal for benchmarking but lacked contextual depth. Observations provided rich, real-time behavioral data, revealing issues users may not articulate, though interpretation could be subjective. Interviews generated in-depth, user-centered insights, particularly when exploring complex experiences, but were time-consuming and prone to bias. Focus groups enabled the exploration of shared perspectives but risked groupthink, while think-aloud protocols offered access to users’ thought processes during interaction, helping identify interface-level issues, although verbalizing while working could affect task performance. Overall, combining multiple methods can improve data validity and provide a more holistic view of usability.

RQ4. Where are usability tests conducted?

The usability tests were conducted in both controlled and uncontrolled environments. In controlled settings (n = 14), studies typically involved structured, supervised sessions in laboratories, hospitals, or online platforms. Participants were evaluated in a computer lab or via video demonstrations, where they completed guided tasks such as registration, data input, and interpreting predictive results. Sessions lasted between 20 and 45 minutes and were supervised by facilitators, ensuring participants received the necessary instructions and feedback, followed by usability surveys. Controlled tests generally focused on short, intensive sessions to capture immediate usability feedback through tools like the SUS and structured interviews. These tests ensured consistent data collection but may have limited the observation of natural interaction patterns.

Conversely, uncontrolled environments (n = 2) allowed participants to engage with the mobile apps independently in natural settings, such as their homes or daily routines. Participants interacted with the app without supervision, providing insights into real-world usability and functional challenges, including connectivity issues. They could explore the app features at their own pace, reflecting authentic usage scenarios. Uncontrolled environments provided authentic user experiences and valuable insights into app performance under diverse real-world conditions, contributing to more comprehensive usability evaluations.

Most studies combined controlled and uncontrolled environments (n = 52) to achieve comprehensive usability evaluations. Participants first engaged in guided sessions within a computer lab, performing structured tasks under facilitator supervision, allowing immediate feedback through observations and questionnaires. Subsequently, participants used the app independently, providing insights into authentic user engagement, feature usage patterns, and user satisfaction. This approach balanced detailed usability assessments with real-world interaction data. Figure 7 highlights the frequency of usability test environments.

Frequency of test environments.

Finally, the testing environment plays a critical role in shaping usability outcomes, depending on the app type and target user population. Controlled settings are ideal for clinically oriented apps and users requiring guidance, such as older adults or patients, offering structured, high-quality feedback on task performance and interface clarity. Uncontrolled environments, by contrast, provide insights into daily use, making them especially valuable for lifestyle or wellness apps and for diverse user populations in real-world settings, including low-literacy groups. Hybrid approaches combined the strengths of both, enabling detailed task analysis alongside long-term engagement insights.

RQ5. What key usability attributes are evaluated?

The majority of usability attributes have been addressed in the studies examined. User engagement, ease of use, satisfaction, and learnability were the most commonly evaluated attributes. Learnability was noted as critical, especially for diverse user groups, as certain populations faced challenges. Despite its importance, efforts to enhance learnability were limited, often focusing on improving user instructions rather than mobile app redesign. Ease of use was one of the most studied attributes. Satisfaction, evaluated in nearly all studies, highlighted users’ emotional and practical responses to the apps. Customization and feedback were crucial for improving user experience, with studies frequently incorporating personalized content, adaptive feedback, and tailored health-based goal-setting features. Accessibility was another prominent attribute, with many apps adopting cross-platform compatibility and simplified language. The attribute of adaptivity is less frequently studied in health-tracking applications. Only a handful of studies explored attributes such as efficiency and memorability, reflecting a gap in usability evaluations. Although evaluated in many studies, error prevention often centered on minimizing navigational errors rather than addressing systemic design flaws. Other attributes like inclusiveness and cognitive load were underrepresented, suggesting the need for broader research. Figure 8 illustrates the various usability attributes utilized in the different studies analyzed.

Frequency of usability attributes.

Discussion

Findings

The systematic review provides a consolidated body of knowledge on usability testing practices for self-care apps. The publication trend indicates a growing interest in this field, and more studies are anticipated in the coming years. The participants comprised both patients and health professionals, enhancing the depth of usability testing. However, there was insufficient justification for the selection of participants and sample sizes in the published studies. Very small samples (n < 3) can compromise the reliability of findings by increasing the risk of sampling bias and reducing statistical power. As a result, the data may fail to reflect population variability, limiting generalizability and increasing the chance of misleading conclusions. This limitation highlights the need for further research in this area to ensure that findings can be generalized across diverse populations.

The data collection methods included both quantitative and qualitative approaches. In the quantitative approach, standardized questionnaires were primarily used for data collection, which included the SUS, MARS, and MAUQ. In the qualitative approach, think-aloud protocols, focus groups, and interviews were used. The choice of data collection can significantly influence the quality and type of usability insights obtained. Combining multiple methods can improve data validity and provide a more holistic view of usability. Usability tests were conducted in both controlled and uncontrolled settings. Controlled settings included labs and hospitals, while uncontrolled settings usually included participants’ homes. A mixed-method approach that combined both environments was used in the reported studies. The key usability attributes assessed included ease of use, user engagement, and satisfaction, while attributes such as memorability, adaptivity, and cognitive load received limited attention. The lack of focus on these attributes indicates an important research gap that should be addressed.

The findings of this systematic review align with prior research on the usability of mHealth applications while offering an enhanced understanding of the unique demands of self-care apps. Echoing trends observed by Zapata et al. 9 and Ansaar et al., 20 the growing volume of publications on usability testing underscores increasing awareness of the role of usability in successful mHealth adoption. However, self-care apps differ from clinical mHealth apps, which are often evaluated within professional healthcare settings and emphasize clinical accuracy and compliance. Clinical app studies often assess functionality and accuracy from a healthcare provider's standpoint, whereas self-care app evaluations tend to focus on intuitiveness and accessibility. In terms of participant demographics, our review confirms findings by Wang et al. 14 that adult general users are the predominant test group in self-care usability studies, with minimal representation of older adults and marginalized populations. This gap is significant, as these groups may have unique usability needs, such as larger text sizes, simplified navigation, and lower cognitive load, that are often overlooked. Usability testing often involved both patients and health professionals, but many studies lacked justification for sample sizes, highlighting a gap in inclusive usability research. The review also highlights the widespread use of mixed-method approaches in usability testing, with questionnaires (e.g. SUS, MARS, and MAUQ) being the most frequently employed instruments, in line with previous findings. Finally, while usability attributes such as engagement, ease of use, and satisfaction were extensively evaluated, attributes like adaptivity, memorability, and cognitive load were underrepresented.

Implications

The findings of this systematic review have several implications.

Many studies lacked a rationale for sample sizes and participant selection. Further study is needed to establish guidelines on sample size and participant selection so that results can be generalized.

Many studies engaged participants from narrow age ranges and backgrounds. This lack of diversity may result in usability findings that fail to generalize across broader user populations. Researchers should include participants who vary in age, gender, digital literacy, disability, and background.

The frequently used usability attributes included ease of use, user engagement, and satisfaction. The lack of attention to adaptivity, memorability, and cognitive load highlights a major research gap.

The reliance on standardized questionnaires (e.g. SUS, MARS, and MAUQ) indicates a preference for quantitative evaluation, whereas qualitative methods like think-aloud protocols and focus groups offer deeper user insights. Data collection should therefore follow a deliberately designed mixed-methods approach.

Most usability studies reviewed assessed usability at a single point in time. However, self-care mHealth applications are designed for continuous and long-term use; thus, their evaluation should incorporate longitudinal usability studies that track user experience over time.

There was considerable variation in how usability testing was designed and implemented across studies, especially in participant selection, data collection, testing environments, and the usability attributes assessed. The lack of standardized protocols may compromise the comparability of findings across studies. Establishing and adopting standardized usability testing guidelines would improve methodological consistency.

The review reveals that usability testing practices often underreport or overlook cultural and linguistic considerations. Many studies employ interfaces and instructions that may not be fully accessible to non-native speakers or users from different cultural backgrounds, thereby introducing cultural and language bias into the testing process. Future research should address these limitations by incorporating multilingual testing materials and culturally adapted interface content.
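To make the point about sample size rationale concrete, the sketch below shows one way a study could document its reasoning, using the binomial problem-discovery model that is often cited in the usability literature. It is offered as an illustration only, not as the method used by the reviewed studies or a guideline proposed by this review. The function names are hypothetical, and the example values (a problem that affects 31% of users, an 85% discovery target) are assumptions chosen for demonstration, not figures drawn from the primary studies.

```python
import math


def discovery_probability(p: float, n: int) -> float:
    """Chance that a usability problem affecting a fraction p of users
    is observed at least once among n independent participants."""
    if not 0.0 < p <= 1.0:
        raise ValueError("p must be in (0, 1]")
    if n < 0:
        raise ValueError("n must be non-negative")
    return 1.0 - (1.0 - p) ** n


def participants_needed(p: float, target: float) -> int:
    """Smallest n for which discovery_probability(p, n) >= target."""
    if not 0.0 < p < 1.0:
        raise ValueError("p must be in (0, 1)")
    if not 0.0 < target < 1.0:
        raise ValueError("target must be in (0, 1)")
    # Solve 1 - (1 - p)^n >= target for n and round up.
    return math.ceil(math.log(1.0 - target) / math.log(1.0 - p))


if __name__ == "__main__":
    # Illustrative assumptions only: p = 0.31, target = 0.85.
    p, target = 0.31, 0.85
    n = participants_needed(p, target)
    print(f"Participants needed: {n}")
    print(f"Discovery probability with {n} participants: "
          f"{discovery_probability(p, n):.3f}")
```

The model assumes that every participant has the same, independent chance of encountering a given problem. That assumption is unlikely to hold for heterogeneous self-care populations, for example when older adults and younger adults encounter different problems, so such a calculation is best applied separately to each user group a study intends to represent.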

Limitations

A few limitations should be considered when interpreting the findings. First, selection bias may arise because studies were retrieved solely from the Scopus database, which could exclude relevant research that is published elsewhere or not indexed in Scopus. To reduce selection bias, future updates to this review should include additional databases to capture a broader range of studies. Second, the application of strict inclusion criteria may have inadvertently excluded some relevant studies. In future iterations, the criteria may be refined to allow broader inclusion while maintaining relevance and rigor. Third, there is the possibility of publication bias: studies reporting positive usability results may be more likely to be published, leading to an overrepresentation of favorable outcomes regarding the usability of self-care apps. Efforts were made to include all relevant studies regardless of outcome.

Conclusion

This systematic review consolidates knowledge on usability testing for self-care apps, emphasizing the importance of usability in digital health solutions. The main contributions of the study are (i) an evidence-based discussion of usability testing practices for self-care apps and (ii) directions for further research to strengthen the field. While substantial progress has been made in evaluating usability, gaps remain in how usability testing is approached. Future research should focus on developing clear guidelines for sample size and participant selection while promoting greater diversity across age, gender, digital literacy, and cultural backgrounds to enhance generalizability. Attention should be given to underexplored usability attributes like adaptivity, memorability, and cognitive load. Mixed-methods approaches combining quantitative and qualitative techniques should be adopted for deeper user insights. Longitudinal studies are needed to assess usability over time, reflecting real-world use patterns. Additionally, establishing standardized testing protocols would improve consistency and comparability across studies. Future research should also address cultural and linguistic biases by incorporating multilingual materials and culturally adapted content to ensure inclusivity in usability evaluations.

Footnotes

Ethical approval

Ethical approval was not required because no human participants were involved.

Authors’ contributions

SS collected and analyzed data, interpreted results, and drafted the manuscript. BAK supervised data collection and revised the manuscript.

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.

Declaration of conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Data availability statement

The datasets used and/or analyzed during the current study are available from the corresponding author on reasonable request.