Abstract

Objective

Mobile health applications hold immense potential for enhancing health outcomes. Usability is one of the main factors for the adoption and use of mobile health applications. However, despite the growing importance of mHealth applications, clear standards for their evaluation remain elusive. The present study aimed to determine heuristics for the usability evaluation of health-related applications.

Methods

We systematically searched multiple databases for relevant papers published between January 2008 and April 2021. Articles were reviewed, and data were extracted and categorized from those meeting inclusion criteria by two authors independently. Heuristics were identified based on statements, words, and concepts expressed in the studies. These heuristics were first mapped to Nielsen's heuristics based on their differences or similarities. The remaining heuristics that were very important for mobile applications were categorized into new heuristics.

Results

Seventeen studies met the eligibility criteria. Seventy-nine heuristics were extracted from the papers. After combining the items with the same concepts and removing irrelevant items based on the exclusion criteria, 20 heuristics remained. Common heuristics such as “Visibility of system status” and “Flexibility and efficiency of use” were categorized into 10 previously established heuristics, whereas heuristics such as “Navigation” and “User engagement” were recognized as new ones.

Conclusions

In our study, we have meticulously identified 20 heuristics that hold promise for evaluating and designing mHealth applications. These heuristics can be used by the researchers for the development of robust tools for heuristic evaluation. These tools, when adapted or tailored for health domain applications, have the potential to significantly enhance the quality of mHealth applications. Ultimately, this improvement in quality translates to enhanced patient safety.

Protocol Registration

(10.17605/OSF.IO/PZJ7H)

Introduction

Smartphones, now immensely popular, have transformed interpersonal communication and information exchange.1,2 An important reason for the popularity of these devices is their use on the go.3,4 The proliferation of mobile devices has spawned impactful applications, including mobile health (mHealth) applications.5,6 The World Health Organization defines mHealth as “the use of mobile wireless technologies for health”. 7 Health professionals commonly employ mHealth applications at the point of care.8–14 These applications assist with clinical decisions, provide health record access, and enhance communication.15–19 They’re also valuable for teaching and learning clinical skills.20,21 Patients benefit from these applications to manage their health status through diet, exercise, and smoking cessation. Additionally, these applications are used to manage chronic diseases,22,23 reduce mortality, 24 and improve caregiver interactions. 8 However, errors in their design can endanger patient safety.25–28

One way to prevent these errors is to check the quality of these programs using usability evaluation. 29 Moreover, some specific features of mobile applications have resulted in new usability challenges. If users cannot easily use even an esthetically attractive application, they may refuse to use it.30,31 The International Organization for Standardization defines usability as follows: 32 “the extent to which a product can be used by specified users to achieve specified goals with effectiveness, efficiency and satisfaction in a specified context of use.”

Usability evaluation encompasses various methods, including expert-based and user-based approaches.33,34 One of the expert-based methods is heuristic evaluation (HE). Nielsen and Molich defined HE as “a usability evaluation method where a number of evaluators are presented with an interface design and asked to comment on it.” 35 In HE, evaluators assess interface designs using predefined principles called heuristics. 36 This method is common, popular, fast, cost-effective, and adept at detecting practical usability issues.37–42 Relevant studies in the healthcare domain have demonstrated that adapting evidence-based heuristics for health information systems can efficiently evaluate patient safety features.43,44 While this method is typically used for desktop interfaces, it can be tailored for mobile applications despite differences in screen size, touch input, and data entry.45–48 Because of these differences, however, the method needs to be adapted.49–52

Some studies have developed general heuristics for mobile applications without considering a specific domain.53,54 The existing literature has either adapted the pre-existing heuristics (such as Nielsen 37 ) for the evaluation of mobile applications or has developed new ones. These studies developed heuristics in different domains such as e-commerce, 55 education, 56 and gaming. 57 Customizing heuristics for specific domains and thoroughly validating them enhances usability.55,56 For mHealth applications, domain-specific heuristics need to be carefully developed, modified, and validated.

Based on our search in the literature, no systematic review has been conducted to explore the HE methods used in health-related mobile applications. Previous systematic literature reviews on the HE of mobile applications have been domain-independent.57,58 Another systematic literature review (SLR) has been conducted on developing heuristics to evaluate the quality of smartphone applications. 59 None of these studies has focused on the heuristics developed for applications in the healthcare domain. The only SLR that addressed this issue in mobile health applications is a technical report by Reolon et al. 29 Yet, it has not been published in any scientific journal. Some reviews also identified frameworks, dimensions, criteria, and scales for evaluating health applications,60–63 but none considered heuristics in this domain. Other systematic reviews have only included studies that addressed the usability evaluation of mobile applications. The studies that developed specific heuristics for applications were not included in these systematic reviews.31,64–67

There is a wide range of available research concerning HE, but few studies are domain specific and tied to mHealth applications. Existing heuristics face limitations when applied to mHealth because they either focus on general heuristics for mobile applications or span diverse domains. Additionally, the precise validation process for these heuristics remains incomplete. Another limitation of these heuristics is the significant variety of their definitions, which in some cases leads to confusion during evaluation. The present research aimed to address these limitations through systematic review, quality assessment, and an attempt to establish clearer definitions of suggested heuristics for use in mHealth applications.

Methodology

Protocol registration and amendment

We followed the PRISMA (Preferred Reporting Items for Systematic Reviews and Meta-Analyses) guidelines to report the results. 68 A study protocol was registered on Open Science Framework 69 (Registration ID: 10.17605/OSF.IO/PZJ7H). We could not use the level of evidence method, despite what was proposed in the protocol. Levels of evidence are applicable when the included studies are of the types found in the evidence pyramid, such as cohort, trial, and case-control studies. In the preliminary search, our intention was to use levels of evidence to assess quality. However, the studies that were finally included in our review were not of any of these study types, so we could not use this tool. Cochrane's risk of bias 70 and other critical appraisal tools71,72 were not applicable either. Therefore, we created a checklist for assessing the quality.

Data sources and search strategy

We searched databases including PubMed/MEDLINE, Scopus, Embase (Embase.com), WoS (Clarivate Analytics), ACM digital library, and IEEE Xplore (Institute of Electrical and Electronics Engineers) for primary studies from 1 January 2008 to 30 April 2021 without any language limitation (see A.1 for search syntax in Supplemental material). We performed a manual search of the Mobile HCI Conference Series website 73 to retrieve the articles that were not indexed in these databases. We set the beginning of the search at 2008, as smartphones emerged around this time. 74 The reference lists of the included studies and related systematic reviews were also examined. Keywords were found by reviewing thesaurus systems such as MeSH (Medical Subject Headings) and Emtree (Embase subject headings), the free text method, expert opinions, and the review of relevant primary studies and reviews. We used two categories of keywords: those related to mobile and mHealth applications (e.g. “Mobile Application”, “Mobile App”, “Smartphones Application”, “mHealth application”, “Mobile Health application”) and those related to HE (e.g. “Heuristic evaluation,” “Usability heuristic,” “Heuristic checklist”). The latest search was done on 16 July 2021.

Eligibility criteria

We included studies that met the following conditions: (1) having developed, adapted, or proposed a heuristic for evaluating the usability of mHealth applications; (2) having proposed general heuristics to evaluate mobile applications; (3) having developed an instrument such as a checklist, a questionnaire, a method, or an approach to evaluate the usability of mobile applications based on heuristics. The exclusion criteria were: (1) conducting a usability evaluation of mobile applications without developing or proposing usability heuristics; (2) doing a web-based or desktop-based evaluation; (3) proposing domain-specific heuristics other than health, for example, for e-commerce, e-learning, and games; (4) proposing usability metrics, criteria, and attributes; (5) letters to the editor and protocol studies; (6) having an updated version of the heuristics published by the same authors; and (7) no full text available.

The focus of this study is on the usability of mHealth applications. Therefore, we included all studies of health applications regardless of the user type (provider or patient) and disease type that met the above criteria.

Study selection

The results of all database and other searches were transferred to Mendeley Desktop, a reference manager. The duplicates were then removed. The remaining papers were imported to the Rayyan site for review. 75 In the screening stage, the titles and abstracts of all papers were reviewed by two authors (ZG and SN) independently based on the inclusion and exclusion criteria. In the next stage, the full texts of the papers were independently reviewed by the same two authors to find the relevant studies. In the third step, based on reading the full texts, we included primary studies that met the eligibility criteria. Throughout the study selection process, the reviewers took a conservative approach to excluding studies based on the eligibility criteria. Disagreements were resolved through discussion and consensus between the two researchers. In case of dispute, the opinion of an expert (RK) in the research team was obtained to resolve the issue.

Methodological quality assessment

A quality assessment checklist was created (Table B.1 in Supplemental material) using critical appraisal skills program quality assessment tools, 72 studies on consensus methods,76–78 and the review of studies conducted by Dissanayake et al. 79 and Raeesi et al. 80 This tool was developed in a focus group session using the opinions of two epidemiologists, and three medical informatics experts familiar with the principles of HCI and systematic review.

Two important dimensions in developing heuristics are the method used to (1) create and (2) validate them. Therefore, we considered these two dimensions in the design of our instrument, based on which we evaluated the quality of the included studies. The first dimension refers to the categorization of the sources used to create heuristics, for which we used the level of evidence pyramid modified by Raeesi et al. 80 This checklist consists of two parts. The first part is related to creating or selecting heuristics including: (1) the clarity of research questions and (2) the source(s) used to extract and create them based on the modified levels of evidence approach. Studies were scored based on the source they used to create the heuristics. The levels of evidence used are as follows: “International guidelines,” “National guidelines,” “Institutional guidelines,” “Systematic review,” “Specialized methods under the supervision of specialists in the field,” and other types of studies, for example, “literature review.”

The other dimension of the instrument is related to the validation process of heuristics, as mentioned in the second part of the checklist. This part contains three actions:

(1) identifying the method used in the decision-making process to validate heuristics; (2) checking the process description and specifications of the decision-making process for validating heuristics; and (3) determining whether the paper reports the validation result.

After the heuristic development step, different methods are used for validation. In this study, the included studies were scored based on the method they used to validate the heuristics. These methods were taken from the study conducted by Quiñones et al., 81 which included: “Consensus method with subject experts,” “Evaluation with real users,” “Evaluation with subject expert,” “Consensus method without subject experts.” It is very important that studies report the validation process of heuristics with the necessary transparency. Therefore, we devoted the second and third questions of this part to the issue of whether experts performed this validation, whether the field of those who performed the validation process is clear, and whether the results were reported correctly. Some studies have only reported that the validation process was done, without any clear explanation of its results.

Scoring the studies based on the quality assessment checklist

First dimension: A study using international, national, and institutional guidelines or systematic review receives a score of 2. A study conducted based on specialized methods under the supervision of field specialists or a literature review gets a score of 1.

Second dimension: If a study uses one of the following methods, it receives a score of 2: “Consensus methods with field experts,” “Evaluation with real users,” and “Evaluation with subject experts.” If a study uses the “consensus method without field experts,” it receives a score of 1.

If the status of each question in any dimension is unclear, the score is 0. Based on the checklist (Supplemental Table B.1), each question is assigned a score of 0, 1, or 2 depending on meeting the desired conditions. Finally, a total score is calculated for each article by summing up the scores of the checklist questions. Total score less than 4 indicates low quality. Total score between 4 and 6 indicates medium quality. Total score of 7 indicates high quality.
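As an illustration only, the banding rule described above can be sketched in Python. The function name `quality_band` and the example item scores are hypothetical and are not part of the checklist. Because the text defines only the bands "less than 4," "between 4 and 6," and "7," the sketch rejects any other total rather than guessing how it would be classified.

```python
from typing import Sequence


def quality_band(item_scores: Sequence[int]) -> str:
    """Sum checklist item scores (each 0, 1, or 2) and map the total to a band."""
    if any(score not in (0, 1, 2) for score in item_scores):
        raise ValueError("each checklist item must be scored 0, 1, or 2")
    total = sum(item_scores)
    if total < 4:
        return "low"      # total score less than 4
    if total <= 6:
        return "medium"   # total score between 4 and 6
    if total == 7:
        return "high"     # total score of 7
    # Assumption: totals above 7 are not defined in the text, so they are rejected.
    raise ValueError("totals above 7 are not defined in the text")
```

For example, hypothetical item scores of 2, 1, 1, 1, and 0 sum to 5 and would fall in the medium band.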

All studies included were evaluated independently by two authors (ZG and SN). Any disagreement at this stage was resolved by the two authors’ consensus and consultation with a third expert (RK).

Data extraction

According to our protocol, we developed a data extraction form in Microsoft Word (2016). We extracted the following information from each study: first author's name, year of publication, country, objective(s), number and specifications of evaluators, set of heuristics used, those developed or adapted, and the purpose of the study. Based on a systematic review, 81 we decided to add other variables. Following a thorough review of this systematic review study, we acquired valuable insights into the essential dimensions employed in heuristic development, as well as the various associated methods (including the domain and method of developing and validating usability heuristics). Recognizing the critical role of these dimensions in ensuring a comprehensive study, we added them in close consultation with our research team.

This systematic review 81 identified the following heuristic development methods: (1) existing heuristics, which indicates using existing sets of usability heuristics to develop new heuristics; (2) methodology, which is a formal and systematic process to create usability heuristics; (3) literature reviews, which indicates reviewing the literature to identify concepts, specific characteristics of the domains, existing heuristics, and other relevant elements to develop new usability heuristics; (4) creating heuristics based on existing guidelines, principles, or design recommendations; (5) identifying and analyzing usability problems using different methods of usability evaluation (e.g. HE, user test, questionnaire, and interview, among others); (6) collecting information by conducting interviews with users of an application; (7) developing heuristics by reviewing and analyzing theories related to a specific domain; and (8) a mixing process, which indicates creating heuristics based on two or more of the previous methods.

The same review 81 identified the following heuristic validation methods: (1) HE in comparison to the previous heuristics: the developed heuristics are compared with previous heuristics (such as Nielsen and Inostroza) and the problems identified by the two methods are compared; (2) use of heuristics in case studies: the developed heuristics are tested in case studies and modified using the identified usability problems; (3) HE with expert evaluators: conducting an HE on an application using usability and HCI experts; (4) online survey with HCI experts and researchers: conducting HE through an online survey using experts in the field of evaluation; (5) validation by expert researchers: conducting HE on an application using nonusability experts; and (6) validation through an inquiry test: participants are divided into two groups, (a) evaluators with no previous experience and (b) those with previous experience. Both groups use the developed heuristics, and the results are then analyzed by the evaluation experts.

The extracted heuristics were divided into the previously established and new categories. The previously established category refers to the well-known ones developed by pioneer researchers such as Nielsen 35 for assessing all user interfaces. The new category refers to the heuristics that were not in Nielsen's heuristics category. Heuristics used for mobile applications and also important in the health domain were mapped onto this category. The final analysis did not include heuristics that were not related to usability (e.g. Behavior change and Self-monitoring). Data extraction was done by two independent authors (ZG and SN). Disagreements were resolved through discussions between the authors.

Data analysis and synthesis

After the initial data extraction, we could not perform a meta-analysis because of methodological heterogeneity in the included studies. For data synthesis, we used inductive methods. At first, heuristics were extracted based on statements, words, descriptions, and concepts expressed in the included studies. Then heuristics with similar content were mapped to the same category. These heuristics were mapped onto Nielsen's heuristics based on their differences or similarities. Following comments from the research team, the heuristics that were very important for mobile applications were mapped onto the category of new heuristics. Although the inductive method was used to group the heuristics so as to achieve the most internal consistency and the least external inconsistency, a back-and-forth approach was also used. Heuristics were extracted independently by two reviewers (ZG and SN) who were trained in the field of usability evaluation. They were then categorized through discussion between the two reviewers. Finally, in focus group meetings with the third author, who is a subject expert in the field of HE, disagreements were examined and the classification of heuristics was finalized.

Results

We identified 2538 studies. After excluding the duplicates, 1680 remained and were screened for title and abstract. Among these, 66 studies were reviewed in full text. Seventeen studies met our inclusion criteria (Figure 1). Three studies2,82,83 were identified through the reference lists. As the updated or modified versions of these papers were already included, they were excluded in the final analysis.
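The screening counts reported above imply the number of records removed at each stage. A minimal arithmetic sketch follows, assuming the four reported counts form a simple linear funnel; the three studies found through reference lists are not modelled, and the variable names are illustrative only.

```python
# Counts reported in the text.
identified = 2538
after_duplicates = 1680
full_text_reviewed = 66
included = 17

# Records removed at each stage, derived by subtraction.
duplicates_removed = identified - after_duplicates
excluded_at_screening = after_duplicates - full_text_reviewed
excluded_at_full_text = full_text_reviewed - included

print(duplicates_removed, excluded_at_screening, excluded_at_full_text)
# prints: 858 1614 49
```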

Figure 1. PRISMA flow diagram.

Study characteristics

The characteristics of the included studies are summarized in Table 1. A new set of heuristics was proposed, validated, and refined in nine papers.3,45,46,59,84–88 Heuristics were presented in the format of a methodology, model, or guideline in three papers.49,89,90 Nielsen and Morville's Honeycomb models were adapted in two papers.91,92 Additionally, health-related instruments were developed in two papers, and a general instrument was introduced in another.93–95 Based on the domain, 12 studies proposed heuristics for the general domain, while 5 studies specifically addressed health-related contexts. Notably, the largest share of the 17 papers was published in 2019 (35.2% of the total), and 29.4% of the studies were conducted in Brazil.

Table 1. Characteristics of the included studies.

Usability heuristics extracted from the included studies

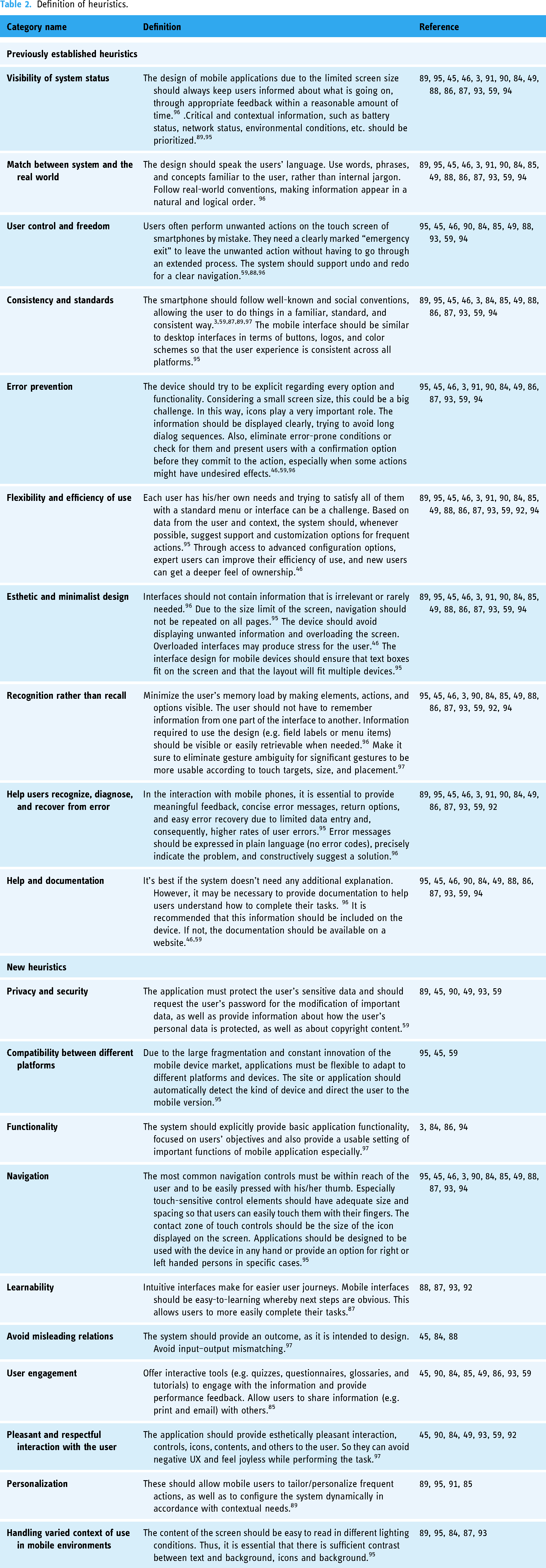

A total of 79 heuristics were extracted from the studies. We combined the items with the same concepts, after which 20 were retained. These were then divided into 10 previously established and 10 new heuristics. Table 2 shows the extracted heuristics and their definitions. Additional information about the categorization of heuristics is provided in Table B.3 in Supplemental material.

Table 2. Definition of heuristics.

Previously established heuristics

The heuristics presented in the papers were mapped to the 10 Nielsen heuristics. Fifteen studies reported “Visibility of system status.” Sixteen studies mentioned “Match between system and the real world.” Eleven studies reported “User control and freedom.” Fourteen studies considered “Consistency and standards” and “Error prevention.” All studies mentioned “Flexibility and efficiency of use,” and 16 studies mentioned “Aesthetic and minimalist design.” Twelve, 15, and 14 studies reported “Help users recognize, diagnose and recover from error,” “Recognition rather than recall,” and “Help and documentation,” respectively (Supplemental Table B.3).

New heuristics

Ten new heuristics were suggested in the papers reviewed. Twelve studies reported “Navigation” and eight mentioned “User engagement.” In more than six studies, “Pleasant and respectful interaction with the user” and “Privacy and Security” were identified. Five studies reported “Handling varied context of use in mobile environments.” Four mentioned “Functionality,” four reported “Learnability,” and four mentioned “Personalization.” Three studies reported “Avoid misleading relations,” and three others mentioned “Compatibility between different platforms” (Table B.3 in Supplemental material).

Development and validation of usability heuristics in included studies

Table 3 shows the development methods in the included studies. The review revealed that most studies (n = 7) used a literature review approach to develop them. The approaches used in other studies include applying existing heuristics (n = 1), a mixing process (n = 1), guidelines or design principles/recommendations (n = 2), and methodologies (n = 6). Among the six studies using a methodological approach, three proposed Rusu et al.'s methodology, 98 and three used various methodologies.2,49,99 In general, in different approaches, the most prevalent heuristics were developed based on Nielsen, 35 Shneiderman, 100 Inostroza, 46 and Bertini, 2 respectively. Figure 2 shows the validation methods in the included studies. The validation process of 40% of papers was unclear, 25% of the studies used the “HE method in comparison to previous heuristics,” 15% used the “use heuristics method in case studies with experts.” Some studies used more than one method for validation.46,87

Figure 2. Heuristics validation methods in the included studies.

Table 3. Characteristics of the heuristics in included studies.

HE: heuristic evaluation; N/A: not applicable.

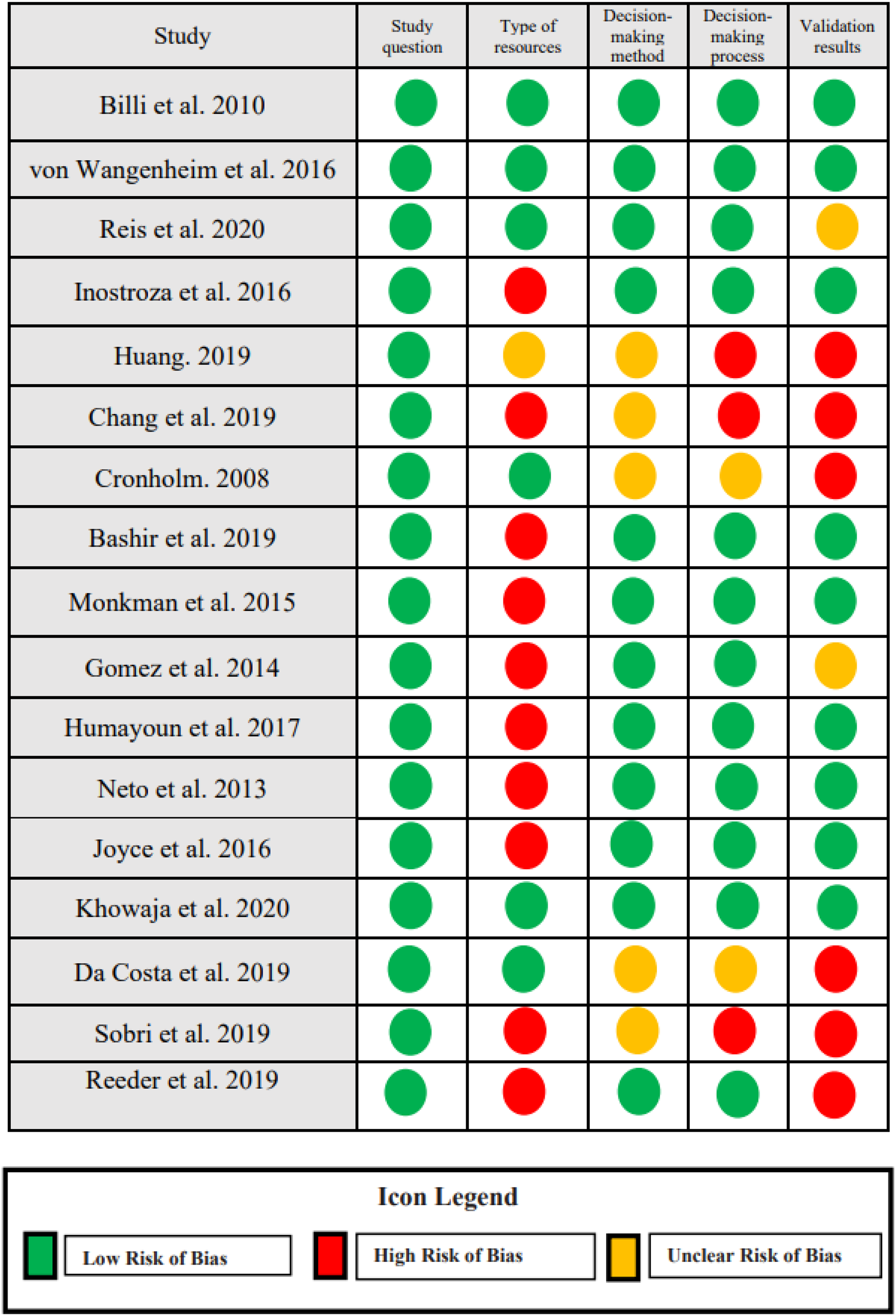

Methodological quality assessment

The quality assessment of the 17 studies based on the developed checklist (Tables B.1 and B.2 in Supplemental material) is presented in Figure 3. Three studies89,93,95 had a score of 7, and nine studies46,47,50,59,86–89,95 had a score between 4 and 6. The quality score of five studies3,59,90–92 was below 4. The evaluation of the sources used to extract the heuristics, based on the levels of evidence, 80 showed that one study was based on a guideline (health literacy online guideline 101 ), five on a systematic review, and 10 on a literature review or previous heuristics (e.g. Nielsen and Inostroza).

Figure 3. Quality assessment summary for all studies.

Studies used different decision-making methods to validate the heuristics. Twelve studies used one of the following: consensus method with subject experts, evaluation with real users, or evaluation with subject experts. Since five studies did not mention the decision-making method in the article, their status was unclear based on the checklist. Twelve studies described the decision-making process in detail, including the number of experts, their area of expertise, and the type of decision-making method. Eight studies accurately reported the heuristic validation process. Six did not report the validation process, and three studies mentioned that they performed a validation process but did not report any information about it. Therefore, the status of these studies was left unclear.

Discussion

In this systematic review, after combining items and eliminating duplicates across all the included studies, 20 heuristics were identified and divided into two categories: previously established and new heuristics. More than two-thirds of the studies suggested general heuristics for mobile applications. We identified 17 studies, of which only 5 proposed heuristics in the health domain,85,91–94 2 of which developed checklists in this area.93,94 Most studies modified their heuristics based on the existing heuristics.35,46,87

Classification of the heuristics into two categories, previously established and new, showed that in the new category, “Navigation,” “Security and privacy,” and “User engagement” heuristics were reported more than others in the included studies. The results of our study related to the navigation heuristic were in line with Agarwal et al. 102 Also, in a study by Azad-Khaneghah et al., 63 easy navigation was considered as one scale in usability assessment questionnaires. The navigation heuristic is important because improper navigation leads to suboptimal interactions, leaving applications unused and reducing their potential to improve care.103–105

Although smartphones’ increased hardware and software capabilities (e.g. increased memory capacity, the ability to encrypt using fingerprints) have encouraged people to use them more and more to store information, the lack of proper monitoring instructions on security and privacy is one factor that can affect how patients use health applications. Health experts mentioned privacy and security concerns as a limiting factor in patients’ use of health applications. 106 As perceived by Wager et al., privacy and security are essential factors in maintaining data safety. 107 Nouri et al. also introduced this heuristic as one of the seven main categories of quality evaluation criteria for mHealth applications. 61 In a study by Llorens-Vernet et al., 108 security and privacy are introduced as criteria related to the standards of mHealth applications.

User engagement is a relevant issue in application usability. Optimal usability can lead to higher levels of interaction as well as user satisfaction. 109 In a study by Liu et al., 110 user engagement was assessed along with usability to evaluate the effectiveness of designing mHealth applications for the elderly. In Hensher et al., 62 user engagement was one domain mentioned in the evaluation framework of mHealth applications. In the mobile app rating scale (MARS) 111 and the user version of the MARS (uMARS) 112 tools, which are used to evaluate the quality of mHealth applications, user engagement was also considered a significant category.

In the present study, in the previously established category, “Flexibility and efficiency of use” and “Aesthetic and Minimalist Design” heuristics were reported more than others, respectively. In a review by Zahra et al., “Efficiency” was also introduced as a significant dimension of using mobile applications for chronic diseases. 60 In a literature review conducted by Salazar et al., 57 the most frequently cited heuristics for mobile applications were “Aesthetic and Minimalist Design” followed by “Consistency and Standards.” “Aesthetic and Minimalist Design” is important because the improper design of interfaces can cause stress to users. Studies have shown that the acceptance and use of mHealth applications are often low due to their improper designs.110,113

In the included studies, the most commonly used approaches to developing a new collection of heuristics were literature review followed by methodology (e.g. Rusu and Quinones). Given that a literature review is usually one step of a methodology, it is suggested that a specific methodology be used to develop a new set of heuristics. The use of a formal process facilitates their design and development. 81 One of the important steps mentioned in the proposed methodologies is validation, which is a critical step in the process of heuristics development; various methodologies have dedicated one step to the validation process.98,99,114 The validation process examines the effectiveness of heuristics, that is, whether they identify more problems in a particular domain. 115 When a new set is developed for a particular domain, it is better to validate the heuristics that have been developed. According to Rusu, nondomain-specific heuristics cannot adequately identify a particular domain's usability problems. 116 Therefore, the more thoroughly validated the heuristics we use, the more usability problems we can detect.

One issue identified in the studies was that the heuristics validation process was either not done or not explicitly reported. Another issue with developing heuristics, as pointed out by Joyce, 117 was the use of titles similar to Nielsen's heuristics for heuristics that differed from them in definition and concept. This issue can confuse usability and HCI experts. In this study, to address this problem, we mapped heuristics with similar concepts to a single category, which could resolve the inconsistency.

There were three limitations in the present study. First, some studies included in our review considered hardware besides the software to suggest the target heuristics. Our study only focused on software. Future studies need to consider hardware too. Second, we did not include heuristics related to specific target groups (e.g. the elderly, the deaf) or domains other than healthcare (e.g. e-commerce, e-learning, and games). Although this could result in missing heuristics applicable to a specific group or domain, it helped us to identify the heuristics that are applicable to a broader range of health applications. Third, we did not exclude any studies based on quality assessment because the number of studies was small and our goal was not to miss any evidence at any level.

One strength of the present study was the methodological quality assessment of the studies. To check the quality level of the studies, we considered two main aspects of the heuristics development process: the methods used to create the heuristics and the methods used to validate them. In this study, five primary studies were of low quality, owing to lower levels of evidence or the lack of any validation process. Another strength was that the heuristics were categorized by experts after being extracted from the studies, and a comprehensive list of heuristics was reported. In contrast, a similar systematic review conducted by Reolon et al. reported only some heuristics and did not identify many heuristics in the health domain. Another advantage of the present study over that of Reolon et al. is that it attempted to follow the principles of systematic reviews (protocol registration, searching more databases and gray literature, covering a longer period of time, and acting on the principles of comprehensiveness and quality).

The variety of recent review studies61,62,108,118 on the evaluation of health applications has shown the need to evaluate health applications in the light of recent advancements. These studies show that, to date, there has been no agreed-on “gold standard” for evaluating the usability of mHealth applications. Study 25 also stated that most studies did not conduct the evaluation with heuristics appropriately customized to mobile phone features and healthcare features. To our knowledge, this study is the first systematic review that attempts to assemble a more comprehensive set of mHealth heuristics based on the evidence to date, selecting those that are important in the health domain and presenting them in a comprehensive and categorized manner with definitions. The present findings can be used as a guide for stakeholders in this field. Mobile application designers can use the present findings to achieve a better and more accurate design at the beginning of the system design process. These heuristics can be modified according to the type of application, the target group (e.g. the elderly and the disabled), the type of disease, or even the patients and healthcare providers involved. For example, if an application is designed for the elderly, navigation or learnability can be given more importance in view of their special conditions.119,120 In addition, evaluators can use our findings to identify usability problems in mobile applications at each stage of the evaluation process.

Conclusion

In this review, we presented the results of an SLR to identify the heuristics for the usability evaluation of mHealth applications. We identified 20 heuristics that were used to evaluate the usability of mHealth applications. The heuristics identified in this study can be used as a basis for developing HE tools and evaluating applications in the healthcare domain. Given the potential advancement of mHealth applications and the importance of their usability, further research is required on developing tools for HE. The categorized set of heuristics can be used as a guide for the HE of mobile applications in all phases of software design. The use of heuristics for evaluating mHealth applications can significantly improve their quality and, ultimately, patient safety.

First, we established a method of evaluating the currently wide range of studies on HE. Second, through our methodology, we were able to focus the definitions of some terms and similar concepts and map them more carefully in an attempt to standardize what is currently available, which may help further study by reducing confusion in the current literature. Finally, through a systematic review, we propose an expanded set of heuristics that may improve HE tied more specifically to the use of mHealth applications.

We failed to meet this aim and further study may be required to establish this aim more clearly.

Supplemental Material

Supplemental material for Heuristics used for evaluating the usability of mobile health applications: A systematic literature review by Zahra Galavi, Somaye Norouzi and Reza Khajouei in DIGITAL HEALTH:

sj-docx-1-dhj-10.1177_20552076241253539

sj-docx-2-dhj-10.1177_20552076241253539

sj-docx-3-dhj-10.1177_20552076241253539

sj-docx-4-dhj-10.1177_20552076241253539

sj-doc-5-dhj-10.1177_20552076241253539

sj-png-6-dhj-10.1177_20552076241253539

Footnotes

Acknowledgements

We would like to acknowledge the contribution of Dr Leila Ahmadian (Professor of Medical Informatics), Dr Abbasali Keshtkar (Assistant Professor of Epidemiology), and Dr Hamid Sharifi (Professor of Epidemiology) in designing the quality assessment checklist.

Contributorship

All authors contributed to the conception and design of this research. The search procedure (screening the papers, assessing the full texts, and extracting data) was carried out by ZG and SN, with arbitration and confirmation by RK. All authors contributed to the analytic strategy used to achieve the final classification of the heuristics. RK critically revised the manuscript. All authors provided final approval and agreed to be accountable for all aspects of this work.

Declaration of conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Ethical approval

The ethics committee of Kerman University of Medical Sciences confirmed this study (ethics code: IR.KMU.REC.1402.075).

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.

Guarantor

RK.

Supplemental material

Supplemental material for this article is available online.

References
