Abstract

Artificial intelligence (AI) technologies have the potential to improve healthcare and public health. Although there has been success in AI for research uses, little progress has been made in implementing health-related AI technologies in health systems. Responsible AI for health systems requires engagement and co-design with health system partners, policymakers, and the community. Deploying responsible AI requires engaging stakeholders, particularly those affected by the technology. This commentary presents the importance of participatory approaches for responsible AI implementation. We discuss the planned use of participatory approaches for responsibly deploying validated machine learning models, with a specific case example of diabetes prediction models that can address the challenge of preventing and managing diabetes in a health system. The participatory methods engage policy-, provider-, and community-level actors to deploy and implement the AI diabetes tools, inform how AI is implemented in health settings, and overcome common deployment barriers. The future of AI in health settings rests on fine-tuning these practices so that trust, acceptability, and oversight of these technologies become deeply established in health systems.

Background

Artificial intelligence (AI) technologies offer immense potential to enhance the quality and efficiency of healthcare and public health. Despite the wide variety of promising use cases, from accurately identifying those most at risk of worsening health to developing individually tailored approaches for precision medicine, 1 little progress has been made in implementing health-related AI technologies at scale. 2 In their recent editorial on AI-based diabetes care, Wang et al. 3 outlined a series of challenges related to identifying the best-performing model for predicting the risk of developing Type 2 diabetes. There is often a strong focus on challenges associated with model performance; however, there are equally important downstream challenges, particularly related to the social acceptability and trustworthiness of model deployment across diverse communities. We contend that the underlying difficulties in deploying AI technologies in priority health domains such as diabetes stem from a lack of social acceptability and of proven co-design strategies to support trustworthy implementation and scale.

The increasing calls for responsible AI demand practical strategies for incorporating ethical values into developing and deploying AI technologies. 4 Responsible AI requires a clear understanding of which actors are accountable for developing and deploying AI technologies and how they can do so effectively while meaningfully engaging communities and other relevant stakeholders. When applied to health systems, responsible AI demands a unique set of considerations. 5 Several frameworks have been developed to define the values and core considerations that should guide responsible AI, including the Montreal Declaration on Responsible AI, 6 Responsible Innovation in Health Framework, 7 and other frameworks that present universal guidelines for ethics and governance of AI for health.8–10 However, there are major gaps in understanding how to apply these core principles in the practice of responsible AI governance and implementation in health systems.11,12 Failure to address this gap presents challenges when employing participatory methods that engage diverse actors within health systems, including policymakers, healthcare providers, the community, and patients.

Responsible AI ensures that AI technologies are developed and used according to ethical, fair, transparent, and accountable principles. Core to achieving responsible AI is engaging stakeholders, particularly those affected by the technology, to ensure that ethical considerations are integrated into deployment. There has been limited evidence in the literature on how beneficiaries of healthcare (i.e. patients, families, and caregivers) are involved in AI implementation and deployment. Specifically, a recent scoping review on AI and ML applications in healthcare identified only 21 articles of 10,886 searched (about 0.2%) that mentioned community involvement during model development, validation, or implementation. 13 This commentary presents our planned approach to participatory and responsible AI implementation for diabetes prediction and prevention as a learning case.

Diabetes is an urgent global health problem requiring novel and innovative solutions to curb the growing burden. Worldwide, there were an estimated 463 million people diagnosed with Type 2 diabetes in 2019. 14 By 2030, almost 14 million Canadians will have either diabetes or pre-diabetes, which is estimated to incur ∼$5 billion in direct costs to the health system. 15 Most people with Type 2 diabetes have more than one chronic condition, known as multimorbidity, 16 which increases the complexity of care and resource coordination. 17 The challenges with scaling effective diabetes prevention and management strategies from the individual patient to the entire population stem from systems-level barriers, including socioeconomic disparities, poor access to care, high medication costs, lack of access to healthy and affordable foods, and the built environment.18–23 The deployment of AI algorithms to inform the prevention and treatment of diabetes offers immense promise to guide effective and impactful investments in population health and health systems. Responsible deployment of AI-based tools for diabetes needs to consider not only technical considerations (e.g. predictive accuracy and algorithmic biases), but also how to engage and co-design with communities so that we build AI tools that are relevant and trustworthy for informing the range of interventions and resource allocation needed.

AI for diabetes prediction and prevention initiative

The

Our AI4DPP Solution Network has adopted a participatory community-engaged approach 27 to engage policy-, provider-, and community-level actors to responsibly deploy and implement the AI diabetes tools in a local community setting. Our approach builds on established responsible AI and participatory design frameworks by explicitly centering implementation within the context of public health and chronic disease prevention. While models such as the Montreal Declaration 6 and the WHO's guidance on AI ethics 8 emphasize principles such as inclusiveness, transparency, and accountability, our approach operationalizes these principles through structured, iterative phases of engagement with stakeholders embedded in the healthcare system and affected communities. In doing so, we aim to extend existing frameworks by emphasizing long-term relational engagement, co-governance, and real-world deployment pathways tailored to population-level AI applications.

Participatory approach for responsible deployment of AI for health systems

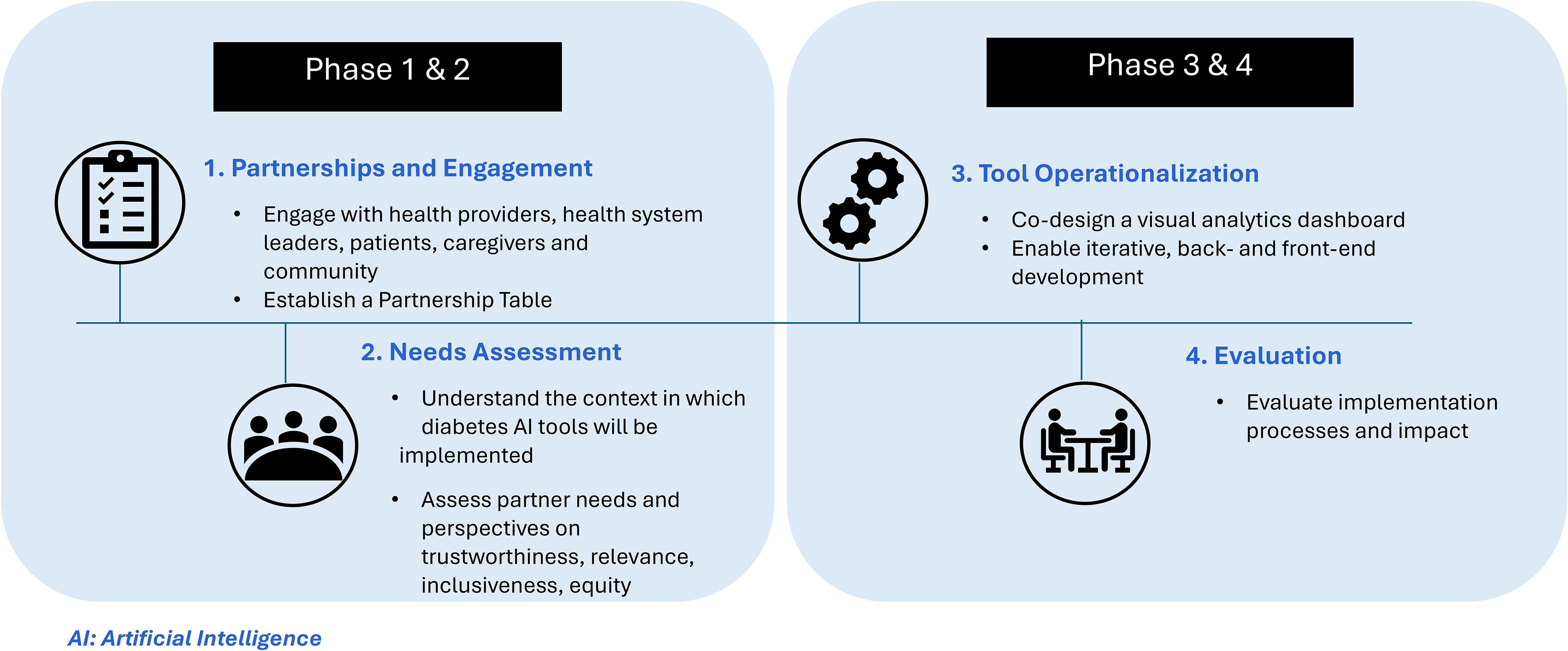

The participatory approach has been mapped out in sequential phases, as shown in Figure 1, each with a specific objective and anticipated outcome. Phase 1 focuses on engagement and forming partnerships. Phase 2 centers on needs assessment and establishing baseline trust and equity considerations. Phase 3 supports tailored implementation planning and tool operationalization. Phase 4 carries out the evaluation of the implementation. This phased structure allows for iterative refinement and ensures that stakeholder perspectives are embedded throughout the lifecycle of AI deployment. Our project is based on the central assertion that responsible deployment of AI in health systems demands participatory approaches to its implementation and scale and implies a commitment to oversight of model use and continual assessment of the need for revision of models in practice. Our participatory co-design approach engages partners across policy, provider, and community levels and sets the stage for responsible AI deployment. Including the perspectives of policymakers, healthcare providers, and other decision-makers is particularly important, as population-level applications of AI/ML models in health systems are often designed to support this stakeholder group in guiding resource allocation, service delivery, and public health decision-making. 28 At the same time, our approach ensures community engagement throughout the deployment process to incorporate the often-overlooked perspectives of patients, families, and caregivers, who are ultimately the intended beneficiaries of these technologies. 13 We intend to deploy validated diabetes ML models to meet a critical public health need and establish an applicable, locally informed implementation plan, while generating insights that will inform its scale-up to other settings and systems of care.
By demonstrating the responsible deployment of the diabetes ML models in the local setting of Peel region, we will establish the viability of responsible, equity-centred AI solutions in health systems, addressing some of the concerns related to the adoption and uptake of AI.12,29 Previous work on scale-up of innovation notes that successful demonstration is a prerequisite to effective scale-up. 30 To steer this process, Phase 1 involves establishing a partnership table that includes senior leaders from provincial government health care agencies; clinicians from local hospitals, primary care networks, and family health teams; community-based organizations and public health agencies; as well as patients and caregivers with lived experience of Type 2 diabetes and the healthcare system. Our engagement strategies include bi-annual network meetings for garnering collective input, identifying emergent needs, and conducting evaluations to identify areas of strength and improvement. Central among these strategies is the ongoing development of relationships with community leaders who either represent or directly work with diabetes prevention and care, and people with lived experiences of diabetes in the region. Such relationships require the investment of substantial time and resources on both ends and are viewed as essential to achieving the goals of the project. By adopting a participatory approach, we intend to directly identify and mitigate ethical issues that may arise in deployment.

Figure 1. Stages of the participatory approach for responsible deployment of AI for health systems.

Working collaboratively with our partnership table, in Phase 2, we complete a needs assessment to understand the context in which the diabetes AI tools will be implemented. This will involve assessing perspectives on factors related to

Potential challenges and limitations

We acknowledge potential limitations and challenges in our approach. Sustaining meaningful stakeholder engagement over time can be resource-intensive for all parties and may be impacted by turnover or shifting institutional priorities. To mitigate this, we have built in routine check-ins to support long-term collaboration. Another challenge involves identifying and addressing potential biases in the predictive algorithms themselves, particularly when working with historical or structurally biased data. We are addressing this through community-informed validation and explicit attention to fairness in model refinement. Power imbalances, particularly between institutional actors and community members, are also being monitored through reflexive practices and inclusive governance mechanisms.

Toward a scalable framework for responsible AI deployment

This commentary offers a reflection on the essential elements required for the responsible deployment of AI-based diabetes prediction tools within health systems, using a planned real-world deployment as a case example. By investing in both technical rigor and community-centered implementation, our goal is to contribute to a scalable and adaptable framework that can inform future efforts to deploy AI responsibly, ensuring these tools are trustworthy, ethical, and aligned with the needs of diverse stakeholders. As health systems increasingly explore the use of AI, our experience offers several practical recommendations: (1) embed co-design and evaluation as integral components of AI deployment, rather than add-on activities; (2) establish feedback mechanisms to monitor stakeholder trust and acceptability during implementation; and (3) invest in governance infrastructure that ensures accountability and responsiveness to ethical and social considerations throughout the AI lifecycle. We believe this approach has broad applicability and can be adapted to support the responsible implementation of ML technologies in health systems globally.

Footnotes

Ethical considerations

This study was approved by the University of Toronto Health Sciences Research Ethics Board (Protocol 46174).

Author contributions

LCR and JS: writing–original draft preparation; SG, IOA, JLG, LL, KK, RZ, VC, and IUI: writing–review and editing. All authors have read and agreed to the published version of the manuscript.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the Canadian Institute for Advanced Research.

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Guarantor

Laura Rosella.