Abstract

Objective

Implementation outcomes are important in intervention research as a necessary precursor to achieving desired health outcomes. Considering the critical role of implementation outcomes, this study involved a comprehensive review of implementation outcome measures used in digital health interventions specifically targeting young adults.

Methods

This scoping review was reported using the Preferred Reporting Items for Systematic Reviews and Meta-Analyses extension for scoping reviews, and the search incorporated the elements of population, concept, and content framework in three electronic databases (PubMed, Embase, and CINAHL). A matrix was used for data extraction and integrative thematic synthesis for analysis. Implementation outcomes were reported based on the indicators in each study, totaling eight outcomes: acceptability, adoption, appropriateness, cost, feasibility, fidelity, penetration, and sustainability.

Results

The search yielded 2441 articles, of which 17 were included. The digital delivery methods were telephone calls (n = 1); social media (n = 2); web-based programs (n = 4); short message service (n = 5); wearable devices (n = 1); mobile applications (n = 3); and a combination of phone calls, emails, and text messaging (n = 1). The most frequently assessed implementation outcomes across the studies were acceptability (n = 10), feasibility (n = 10), and fidelity (n = 8). Interventions delivered by short message service (n = 14), web-based programs (n = 11), and mobile applications (n = 7) reported the highest number of implementation outcomes.

Conclusions

Researchers have largely assessed acceptability and feasibility. The findings underscore the need to integrate the implementation outcomes framework into intervention research design.

Introduction

Implementation outcomes are a critical component that must be addressed by intervention science researchers. The translation of research into clinical or community practice has been slow because of the limited information regarding implementation outcomes. 1 Indeed, implementation outcomes narrow the research-practice gap by identifying barriers and facilitators in the context of designing interventions to achieve desired outcomes, termed effectiveness. 2 Implementation outcomes must be distinguished from intervention effectiveness during research development and implementation.3,4 The former refers to the effects of intentional actions taken to deliver a program and implement new treatments, practices, and services, and highlights the processes that lead to effective interventions. 4 Implementation outcomes are benchmarks for attaining service outcomes, which precede client-level outcomes (satisfaction, function, and symptomatology) used to measure treatment progress. 5 In addition, differentiating implementation outcomes from service outcomes (efficiency, safety, equity, patient-centeredness, and timeliness); client-centered outcomes; and intervention context indicators (scalability and usability) is imperative for effectiveness.3,4

Implementation outcomes are increasingly recognized as crucial in intervention research, as they provide insights into not only how interventions achieve their intended clinical outcomes but also the barriers and facilitators influencing their successful implementation. This is particularly relevant given the increasing complexity of the digital health intervention strategies used for health promotion among young adults.6,7 Diverse health promotion interventions, including smoking and alcohol cessation, physical activity, improvement in health literacy, and stress management, focus on young adults by leveraging digital health interventions. 7 Digital health interventions provide an avenue for health promotion among young adults because of their propensity to use healthcare technology and associated global trends. 6 In essence, a digital health intervention refers to the secure use of information and communication technologies in support of health and related fields and encompasses telehealth, telemedicine, mobile health, electronic medical or health records, big data, wearables, artificial intelligence, and short message service. 8 In terms of age, young adults between 20 and 24 years are at a stage when their technology use is at its peak.8,9 Therefore, assessing implementation outcomes is important among young adults, because health interventions for this group are likely to use digital health intervention strategies. 9

The changing trend in assessing healthcare research effectiveness with the increasing use of digital health interventions makes it important to adopt standardized evaluation criteria.4,10,11 This is critical for the quality evaluation of research to determine usability and scalability. 12 There has been an increasing trend in the use of implementation outcomes in the development of interventions, especially within the last decade. 11 This study adopted the implementation outcomes described by Proctor et al. 4 because they are comprehensive, simple, easy to use, and robust. 10 Other researchers, including Grimshaw et al. 13 and Michie et al., 14 have produced diverse taxonomies for implementation outcomes; however, Proctor et al. 4 provided a clear definition with critical indicators. The implementation outcomes encompass eight measures (Supplemental File 1): acceptability, adoption, appropriateness, cost, feasibility, fidelity, penetration, and sustainability.

Previous evaluations of implementation outcomes have been criticized for focusing on mental health and behavioral healthcare, 10 physical activity, 15 and determining the trends of use of implementation outcomes. 16 There is a lack of understanding regarding the use of implementation outcomes in digital health promotion interventions targeting young adults. To address this gap, the study aims to identify the inclusion of implementation outcomes in studies on digital health interventions among young adults and to analyze the tools used to assess these outcomes. This will contribute to our understanding of how these interventions can be effectively implemented and evaluated in this population, thereby facilitating the use of standardized and comprehensive evaluation frameworks.

Methods

Study design

This was a scoping review reported according to the Preferred Reporting Items for Systematic Reviews and Meta-Analyses extension for Scoping Reviews (PRISMA-ScR) Checklist. The study protocol was not published before the review was conducted.

Search strategy

This study used the elements of the population, content, concept, and design framework to search three electronic databases: PubMed, Embase, and CINAHL (Supplemental File 2). The study was limited to these three electronic databases because additional searches in other databases did not yield any additional papers after screening. The population was young adults (18–25 years), 17 the content was digital health interventions, the concept was implementation outcomes, and the design was limited to randomized controlled trials (RCTs) or intervention studies. The keywords were combined with appropriate Boolean operators and mapped to the controlled vocabulary of each database: MeSH in PubMed, CINAHL MeSH in CINAHL, and Emtree in Embase. Wildcards and truncation were also used. The search covered the period from January 2018 to May 2023; it was limited to approximately the last 5 years because of the influence of fast-evolving technology, especially in the health sciences. Within the same period, a grey literature search was done in Google Scholar, but no additional papers from this source were included after screening. The retrieved studies were exported to EndNote (version 24.3.1) for screening (by KDK and ZAI), and the screening was confirmed by the other researchers (JL and HL). In addition, one of the authors (HL) had several years of experience in developing search strategies for systematic reviews, and her experience guided the process. The Peer Review of Electronic Search Strategies (PRESS) checklist was also used to ensure the adequacy and comprehensiveness of the search terms.
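The way framework elements are combined into a database query can be illustrated with a minimal sketch. The term lists below are hypothetical placeholders, not the review's actual search terms (those are reported in Supplemental File 2), and real database syntax for subject headings, field tags, and truncation differs between PubMed, Embase, and CINAHL.

```python
# Hypothetical term lists for illustration only; the review's actual
# search terms are reported in Supplemental File 2.
POPULATION = ["young adult*", "emerging adult*", "college student*"]
CONTENT = ["digital health", "mHealth", "telehealth", "text messag*"]
CONCEPT = ["implementation outcome*", "acceptability", "feasibility", "fidelity"]


def or_block(terms):
    """Join the synonyms for one framework element with OR."""
    # Quote multi-word phrases so they are searched as phrases.
    quoted = [f'"{term}"' if " " in term else term for term in terms]
    return "(" + " OR ".join(quoted) + ")"


def build_query(*elements):
    """Combine the framework elements with AND."""
    return " AND ".join(or_block(terms) for terms in elements)


query = build_query(POPULATION, CONTENT, CONCEPT)
print(query)
```

Synonyms within an element widen the search (OR), while the elements themselves narrow it (AND); date and design limits would normally be applied through each database's own filters.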

Study selection and eligibility criteria

Two researchers independently evaluated the retrieved titles and abstracts to identify articles that met the inclusion criteria. During the screening phase, studies were considered for inclusion if they specifically targeted young adults aged 18–25 or included this age group within a broader study population. Additionally, only studies focused on digital health interventions, published in peer-reviewed journals, and written in English were included. Studies that assessed digital health interventions in chronic diseases such as diabetes, hypertension, cardiovascular disease, depression, and dementia, and studies that described any phase of testing a medication or disease-related technological product, were excluded. During the screening process, discrepancies were discussed in meetings between the two screening researchers or with the other research team members. All discrepancies were resolved through consensus.
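The eligibility criteria can be expressed as a simple screening predicate. This is a sketch under stated assumptions rather than the authors' screening tool: the `Record` fields are hypothetical, "includes young adults" is read as the sample age range overlapping 18–25, and either exclusion criterion alone is treated as sufficient to exclude a study.

```python
from dataclasses import dataclass
from typing import Optional

TARGET_AGE = (18, 25)  # young-adult range stated in the review
CHRONIC_CONDITIONS = {"diabetes", "hypertension", "cardiovascular disease",
                      "depression", "dementia"}


@dataclass
class Record:
    """Hypothetical screening record; the field names are illustrative."""
    age_min: int
    age_max: int
    is_digital_health: bool
    is_peer_reviewed: bool
    language: str
    condition: Optional[str] = None
    tests_medication_or_product: bool = False


def is_eligible(record: Record) -> bool:
    # Assumption: the sample age range only needs to overlap 18-25.
    low, high = TARGET_AGE
    includes_young_adults = record.age_min <= high and record.age_max >= low
    included = (includes_young_adults and record.is_digital_health
                and record.is_peer_reviewed and record.language == "English")
    # Assumption: either exclusion criterion alone excludes the study.
    excluded = (record.condition in CHRONIC_CONDITIONS
                or record.tests_medication_or_product)
    return included and not excluded
```

In practice the judgment was made by two human screeners with consensus resolution; a predicate like this would only help document or pre-sort decisions.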

Data charting

Three researchers extracted, compared, and integrated data from each selected study using a predetermined matrix. First, a matrix was developed, discussed, and agreed upon by the research team. The data extraction matrix contained the study characteristics, implementation outcome reports, the type of implementation outcomes used based on the type of digital health intervention, and implementation outcomes based on health promotion. The integrative thematic synthesis analysis method 18 was used. Data were transformed into descriptive qualitative statements and interventions were assessed for each implementation outcome. The implementation outcomes were mapped according to the type of digital health intervention and health promotion intervention. Consequently, the frequency of implementation outcomes was determined and recorded.
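The mapping and frequency step can be sketched as a small charting matrix that is tallied by digital method and by health promotion target. The three rows below are hypothetical examples, not extracted data, and the tuple layout is an assumption made for illustration.

```python
from collections import Counter, defaultdict

# The eight implementation outcomes used in the review.
OUTCOMES = ("acceptability", "adoption", "appropriateness", "cost",
            "feasibility", "fidelity", "penetration", "sustainability")

# Hypothetical charting rows:
# (study, digital method, health promotion target, outcomes assigned).
MATRIX = [
    ("S1", "short message service", "smoking cessation",
     {"acceptability", "cost"}),
    ("S2", "web-based program", "physical activity",
     {"acceptability", "feasibility", "fidelity"}),
    ("S3", "short message service", "alcohol cessation",
     {"feasibility"}),
]


def tally(rows, column):
    """Count each implementation outcome per value of the chosen column."""
    counts = defaultdict(Counter)
    for row in rows:
        for outcome in row[3]:
            if outcome not in OUTCOMES:
                raise ValueError(f"unknown implementation outcome: {outcome}")
            counts[row[column]][outcome] += 1
    return counts


by_method = tally(MATRIX, 1)   # outcomes per digital method
by_target = tally(MATRIX, 2)   # outcomes per health promotion target
overall = Counter(outcome for row in MATRIX for outcome in row[3])

print(dict(by_method["short message service"]))
print(overall.most_common(2))
```

Keeping one row per study makes it possible to derive the per-method, per-target, and overall frequencies from the same matrix.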

Results

The study results are presented in four parts: search results and study characteristics, reported intervention outcome measures, implementation outcomes by health promotion intervention, and implementation outcomes by digital health intervention method.

Search results and study characteristics

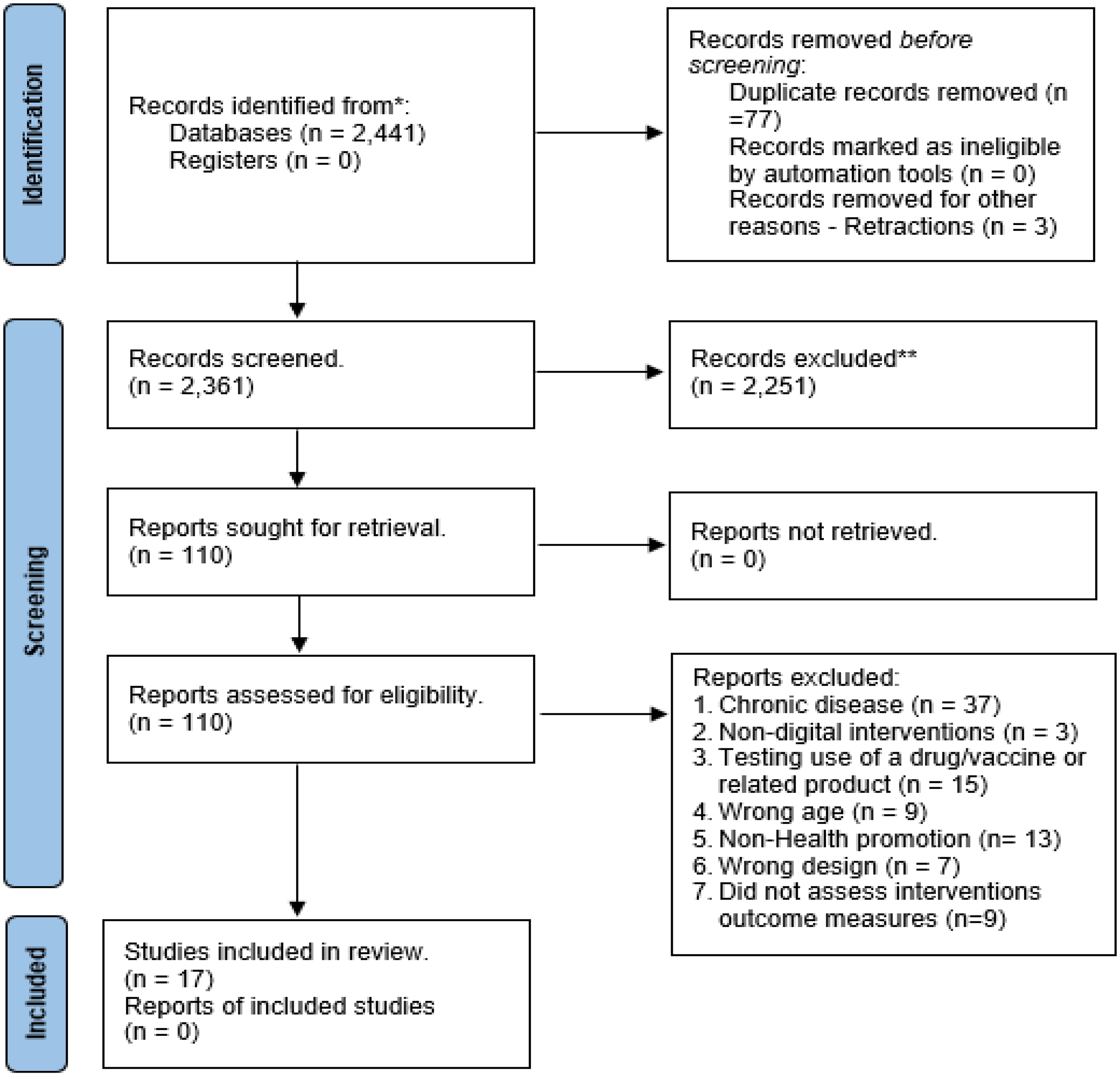

The search in PubMed (1358), Embase (377), and CINAHL (706) identified 2441 titles. The titles were transferred to EndNote software (version 24.3.1). Then, duplicates (77) and retractions (3) were identified electronically and manually and removed. Next, 2361 articles were screened and 2251 were excluded, resulting in 110 studies selected for full-text review. Eventually, 17 studies were selected as eligible (Figure 1). The studies were conducted in the United States of America (n = 6), the United Kingdom/England (n = 4), Australia (n = 2), Germany (n = 1), Taiwan (n = 1), the Netherlands (n = 1), Japan (n = 1), and Singapore (n = 1). The studies were conducted at a university or college (n = 12), at the workplace (n = 1), using online crowdsourcing (n = 1), in the community (n = 1), or in a hospital setting (n = 2). Table 1 provides details on the characteristics of the studies.

Figure 1. Preferred Reporting Items for Systematic Reviews and Meta-Analyses (PRISMA) flow diagram.

Table 1. Study characteristics and key findings.

RCT: randomized controlled trial.
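The counts in the study selection flow can be checked with a few lines of arithmetic. The sketch below uses only the figures reported in the text; the number of full-text articles excluded is not stated and is derived here as a difference.

```python
# Records identified per database, as reported in the text.
database_hits = {"PubMed": 1358, "Embase": 377, "CINAHL": 706}
identified = sum(database_hits.values())

duplicates, retractions = 77, 3
screened = identified - duplicates - retractions

excluded_at_screening = 2251
full_text_reviewed = screened - excluded_at_screening

included = 17
# Derived value: not stated explicitly in the article.
excluded_at_full_text = full_text_reviewed - included

# The country and setting breakdowns should each sum to the included studies.
countries = {"United States of America": 6, "United Kingdom/England": 4,
             "Australia": 2, "Germany": 1, "Taiwan": 1, "Netherlands": 1,
             "Japan": 1, "Singapore": 1}
settings = {"university or college": 12, "workplace": 1,
            "online crowdsourcing": 1, "community": 1, "hospital": 2}

print(identified, screened, full_text_reviewed, excluded_at_full_text)
print(sum(countries.values()), sum(settings.values()))
```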

Reported intervention outcome measures

Among all the studies reviewed, eight implementation outcomes were reported, and the minimum number of outcomes reported was one (Table 2). No study assessed more than four implementation outcomes. Specifically, the number of implementation outcomes assessed in the studies was four (n = 3), three (n = 7), two (n = 6), and one (n = 1).

Acceptability: Acceptability was the most assessed implementation outcome (n = 10) and was directly measured in some of the studies, whereas in other studies, acceptability was assigned based on measurement indicators. The indicators included app usage, patient motivation and satisfaction with the intervention, complexity, and error19–21; the Intrinsic Motivation Inventory Scale 22; and operability, complexity, and participant satisfaction. 21

Adoption/uptake: None of the studies specifically reported on adoption or uptake as an implementation outcome. However, based on the reported indicators, adoption was assigned in three studies as an implementation outcome.20,23,24 The studies where adoption was assigned reported on use preference based on participants’ preferred language, 23 applicability and social acceptance, 24 and intention to use. 20

Appropriateness: None of the studies reported appropriateness as an implementation outcome. However, based on the indicators that were reported, intervention appropriateness was assigned in seven studies. These studies assessed perceived fit24,25; safety and suitability 26; usefulness 27; relevance and usefulness 20; interest and usefulness 22; and usefulness, relevance, and usability. 28

Cost: As an implementation outcome, cost was assessed in four of the studies.29–32 Regarding assigned indicators of cost, the studies measured the total and recruitment costs. 29

Feasibility: Feasibility was assessed in ten of the studies. In four studies, it was assigned based on the indicators reported. These studies assessed recruitment and retention26,28,33,34 and interest and enjoyment. 22

Fidelity: Fidelity of the interventions was reported in eight of the studies; in four of these, fidelity was assigned based on the indicators reported. These studies were based on a predetermined protocol, 24 ensured the quality of implementation intention plans, 33 adhered to previous camera protocols, 25 adhered to weekly topics with example text messages, 23 and ensured accuracy owing to a predetermined protocol. 21

Penetration/saturation: None of the studies specifically reported that they assessed penetration/saturation as an implementation outcome. However, in assessing each study using the proposed indicators, only one study had penetration/saturation assigned as an implementation outcome. 35

Sustainability: None of the studies reported intervention sustainability as an implementation outcome. However, sustainability was assigned in three studies using the proposed indicators. These studies reported on the follow-up to evaluate the quit rate to show continuation into the study, 30 attitude based on a 7-point Likert-type motivation scale, 20 and willingness to recommend the intervention. 22

Table 2. Intervention outcome measures.

*Indicates cases where the implementation outcome was originally specified using a different measurement method but was reassigned by the authors based on the reported indicators. ## Indicates cases where the specific measurement method was not detailed, but the authors assessed that it had been measured.
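The per-outcome counts and the per-study distribution reported in this section can be cross-checked against each other. The sketch below uses only figures stated in the text; the grand total is not reported in the article and is derived here from both directions as a consistency check.

```python
# Number of studies in which each implementation outcome was assessed or assigned.
outcome_counts = {
    "acceptability": 10, "adoption": 3, "appropriateness": 7, "cost": 4,
    "feasibility": 10, "fidelity": 8, "penetration": 1, "sustainability": 3,
}

# Number of outcomes per study -> number of studies with that many outcomes.
outcomes_per_study = {4: 3, 3: 7, 2: 6, 1: 1}

studies = sum(outcomes_per_study.values())
total_from_distribution = sum(k * n for k, n in outcomes_per_study.items())
total_from_outcomes = sum(outcome_counts.values())

print(studies, total_from_distribution, total_from_outcomes)
```

Both totals agree, which suggests the distribution and the per-outcome counts describe the same set of study-outcome pairs.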

Implementation outcomes based on health promotion intervention

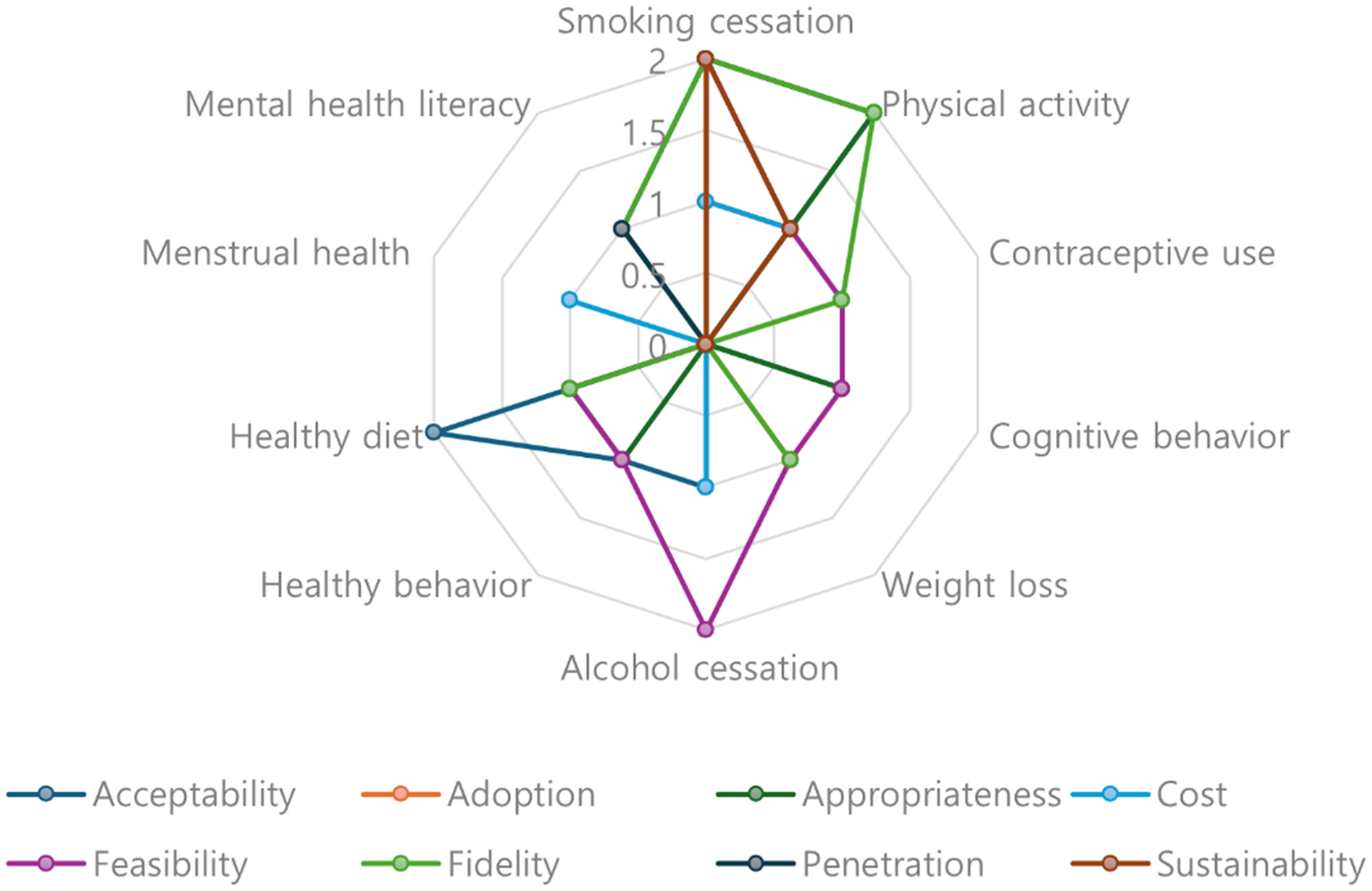

The health promotion outcomes targeted in the various interventions included smoking cessation (n = 4), physical activity (n = 2), contraceptive use (n = 1), cognitive behavioral models (n = 1), weight loss (n = 1), alcohol cessation (n = 2), positive health behavior (n = 1), menstrual health (n = 1), a healthy diet (n = 2), and mental health literacy (n = 1). When the implementation outcomes were counted by health promotion target, smoking cessation (n = 12) and physical activity (n = 10) had the most reported implementation outcomes, while menstrual health had the fewest. Smoking cessation and physical activity interventions reported all the implementation outcomes except for penetration. In addition, for smoking cessation, acceptability, appropriateness, feasibility, fidelity, and sustainability were the most assessed (n = 2), while cost was the least assessed (n = 1). For physical activity, acceptability, appropriateness, feasibility, and fidelity were the most assessed (n = 2), while adoption, cost, feasibility, and sustainability were the least assessed. Figure 2 visualizes these findings, with each axis representing a specific health promotion domain (e.g. physical activity, smoking cessation, and healthy diet). Values increase outward from the center, indicating a higher frequency of reported implementation outcomes.

Figure 2. Implementation outcomes based on health promotion interventions.

Implementation outcomes based on digital health intervention methods

The digital implementation techniques used included telephone calls (n = 1); social media (n = 2); web-based programs (n = 4); short message service (n = 5); wearable devices (n = 1); mobile applications (n = 3); and a combination of telephone calls, emails, and short message service (n = 1). Counting the implementation outcomes reported under each method, short message service (n = 14), web-based programs (n = 11), and mobile applications (n = 7) had the most, whereas telephone calls (n = 3); wearables (n = 2); and the combination of phone calls, emails, and short message service (n = 2) had the fewest. The short message service-based techniques assessed all implementation outcomes except for penetration. Web-based interventions reported all the implementation outcomes except for adoption, cost, and penetration, whereas mobile applications reported all except for adoption and penetration. Social media methods assessed all the implementation outcomes except for penetration and sustainability (Figure 3).

Figure 3. Implementation outcomes based on digital health intervention.
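The per-method figures can be tallied in the same way. The text does not state an outcome count for social media; the sketch below derives one purely as an inference, under the assumption that the per-method counts sum to the same total as the per-outcome counts reported in the Results (10, 3, 7, 4, 10, 8, 1, and 3). It should not be read as a reported value.

```python
# Studies per digital method, as reported in the text.
studies_per_method = {
    "telephone calls": 1, "social media": 2, "web-based programs": 4,
    "short message service": 5, "wearable devices": 1,
    "mobile applications": 3, "calls, emails, and short message service": 1,
}

# Implementation outcomes per method; social media is not stated in the text.
outcomes_per_method = {
    "short message service": 14, "web-based programs": 11,
    "mobile applications": 7, "telephone calls": 3, "wearable devices": 2,
    "calls, emails, and short message service": 2,
}

total_studies = sum(studies_per_method.values())
stated_outcomes = sum(outcomes_per_method.values())

# Per-outcome counts from the Results, in the order acceptability, adoption,
# appropriateness, cost, feasibility, fidelity, penetration, sustainability.
total_outcomes = sum([10, 3, 7, 4, 10, 8, 1, 3])

# Inference only: the remainder that social media would account for
# if both breakdowns describe the same study-outcome pairs.
implied_social_media = total_outcomes - stated_outcomes

print(total_studies, stated_outcomes, implied_social_media)
```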

Discussion

This review contributes to the scientific literature by examining the types of implementation outcomes in digital health interventions targeting young adults, focusing on a specific healthy population and intervention field. Considering that digital health interventions are becoming an increasingly important strategy among young people, the contribution of this study is even more meaningful. A key strength of the review lies in its systematic approach, which includes a focused research scope, stringent screening criteria, and the thorough mapping of implementation outcomes to specific health promotion and digital health interventions. Furthermore, unlike previous reviews that assigned indicators only when explicitly mentioned in studies, this review provides a more comprehensive synthesis by incorporating a diverse range of reported indicators.

Implementation outcomes are essential for the generation of scientific knowledge for the advancement of implementation science, 4 particularly in ensuring the successful translation of interventions from controlled, laboratory-type settings to real-world clinical environments. 11 Both implementation outcomes and intervention outcomes (effectiveness) are crucial for creating scientific knowledge that is beneficial for the entire population, 36 but implementation outcomes are critical for determining the effectiveness of an intervention in a target population.37,38 Implementation outcomes serve as prerequisites for achieving service-level outcomes, which in turn influence client outcomes.4,36,38–40 They play a fundamental role in facilitating the successful delivery of complex interventions owing to the mitigating influence they possess over the sociopolitical context. 41 Therefore, considering implementation outcomes during program development will support decision-making and help optimize service and client outcomes. 40

Nevertheless, this review found that although at least one implementation outcome, a crucial factor for ensuring scientific rigor, 15 was reported in each study, none of the studies assessed all implementation outcomes comprehensively. Additionally, there was an uneven distribution in the evaluation of implementation outcomes across studies, depending on intervention type, duration, participants, and resource availability. This review aligns with previous studies that also reported acceptability and feasibility as the most assessed implementation outcomes.15,42 However, unlike other fields where appropriateness was considered a key outcome,16,43 our review found that fidelity was more commonly prioritized in digital health interventions. Implementation outcomes are valuable in the decision-making process, as they are adaptable to research settings, easy to implement, and highly acceptable. 44 Therefore, it is crucial for researchers to select implementation outcomes that are appropriate for the target population and intervention context.

Furthermore, this review identified that the definition of implementation outcomes tends to be ambiguous and often overlaps with other indicators. In some cases, implementation outcomes were applied inconsistently or inappropriately.11,45 For instance, one study reported consistently assessing feasibility, 27 yet failed to specify concrete measurement criteria. Additionally, terms such as recruitment and retention, which are often classified under feasibility, and attitude, categorized under sustainability, were not originally included in the standard implementation outcome indicators. 15 These inconsistencies and overlaps complicate cross-study comparisons, underscoring the need for clearer definitions and standardized measurement criteria for implementation outcomes.10,43,46

Given that implementation outcomes often focus on specific aspects and do not comprehensively address all relevant indicators,15,43 there is a clear need for conceptual refinement and a systematic approach to their assessment. With researchers increasingly recognizing the importance of implementation outcomes and incorporating them in the early stages of intervention development, it is essential to reach a consensus on the most critical implementation outcomes to be evaluated. In conclusion, researchers should strive to establish clearer definitions of implementation outcomes and integrate them with theoretical frameworks to effectively analyze intervention effectiveness and service outcomes.15,16 Additionally, strategies should be developed to engage stakeholders in the decision-making process, facilitating policy development and practical application.

We mapped the implementation outcomes to digital health interventions and health promotion interventions among young adults. Understanding and evaluating implementation outcomes in digital health interventions, regardless of the intervention focus, target disease/problem, setting, and population, is central to attaining intervention effectiveness. Implementation outcomes were reported more often for web-based interventions than for telephone call interventions, suggesting that researchers assess implementation outcomes more often as intervention methods grow more complex. Regardless of the implementation method, implementation outcomes are important to consider, and implementation research should not focus only on effectiveness. 43 We showed that smoking cessation and physical activity were the most prevalent types of health promotion intervention and had the most implementation outcomes. Additionally, implementation outcomes are important measures for health promotion intervention suitability, scalability, usability, policy, and program outcomes.47–49 These are critical indices for translating research into real-world clinical practice. 48

One limitation of this study is the exclusion of non-English studies, which limits the generalizability of the findings. Notably, the majority of the interventions analyzed in this review were conducted in high-resource settings, highlighting the existing disparity in the advancement of implementation science within low-resource contexts.1,2 Researchers must address this gap by ensuring the efficient utilization of implementation outcomes to facilitate the development and application of digital health interventions in resource-limited settings.10,11,15 Such efforts are essential to promote equitable access to evidence-based interventions across diverse populations. Moreover, as implementation science is still evolving, studies that used different terminologies to refer to or describe the implementation outcomes might not have been indexed appropriately and may have been missed in the search or excluded during screening. In addition, this scoping review focused on implementation outcomes rather than on other outcome types, such as service and client outcomes. Hence, it is difficult to establish a direct relationship between the assessment of implementation outcomes and intervention effectiveness. Therefore, future systematic reviews should examine how assessing implementation outcomes influences intervention effectiveness and client-level outcome measures. Furthermore, due to the limited number of studies available in this field, this review conceptually included both implementation and intervention studies and expanded the age range to encompass studies of broader age groups that include young adults. Given that the objective of this scoping review is to assess the utilization of implementation outcomes rather than to evaluate intervention effectiveness, the inclusion of such studies was deemed appropriate. Nevertheless, future reviews should consider refining study designs to more precisely identify the utilization of implementation outcomes within specific research frameworks.

Conclusions

Researchers studying digital health interventions that target young adults in health promotion have largely assessed acceptability and feasibility, with comparatively little emphasis on other outcomes. Implementation outcomes deserve attention as a critical part of the process of generating knowledge and delivering interventions. This study found that diverse and multiple indicators were used to describe implementation outcomes. Therefore, researchers in implementation science must develop and use standardized concepts, terminology, and indicators for implementation outcomes, which is critical for advancing the field. In this review, none of the studies assessed all the implementation outcomes. For intervention scientists to achieve the desired impact, implementation planning is warranted, including identifying barriers in advance, using theoretical frameworks, tailoring interventions, engaging stakeholders, and co-designing processes by prioritizing implementation outcomes.

Supplemental Material

Supplemental material, sj-docx-1-dhj-10.1177_20552076251330194, sj-docx-2-dhj-10.1177_20552076251330194, and sj-docx-3-dhj-10.1177_20552076251330194, for The inclusion of implementation outcomes in digital health interventions for young adults: A scoping review by Kennedy Diema Konlan, Zainab Auwalu Ibrahim, Jisu Lee and Hyeonkyeong Lee in DIGITAL HEALTH

Footnotes

Acknowledgements

The authors ZAI and JL received a scholarship from Brain Korea 21 FOUR Project funded by NRF of Korea, Yonsei University College of Nursing.

Availability of data and materials

The datasets used and/or analyzed during the current study are available from the corresponding author upon reasonable request.

Consent to participate

Not applicable.

Consent for publication

Not applicable.

Contributorship

KDK and HKL: conceptualization; KDK, HKL, ZAI, and JL: methodology; KDK, ZAI, and JL: investigation; KDK, HKL, ZAI, and JL: data curation; KDK: writing–original draft preparation; KDK, HKL, ZAI, and JL: writing–review and editing; HKL: supervision; HKL: project administration.

Declaration of conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This research was supported by the National Research Foundation of Korea (NRF) grant, funded by the Ministry of Education under Grant [NRF-2020R11A2069894] and the Ministry of Science and ICT [RS-2023-00279916].

Ethical approval

Not applicable.

Supplemental material

Supplemental material for this article is available online.

References
