Abstract

Aim

This paper describes how we engaged with adolescents and health providers to integrate access to digital health interventions as part of a large-scale secondary school health and wellbeing survey in New Zealand.

Methods

We conducted nine participatory, iterative co-design sessions involving 29 adolescents, and two workshops with young people (n = 11), digital and health service providers (n = 11) and researchers (n = 9), to gain insights into end-user perspectives on the concept and on how best to integrate digital interventions into the survey.

Results

Students perceived integrating access to digital health interventions into a large-scale youth health survey as acceptable and highly beneficial. They did not want personalized/normative feedback, but thought that every student should be offered all the help options. Participants identified key principles: assurance of confidentiality, usability, participant choice and control, and language. They highlighted wording as important for ease and comfort, and emphasised the importance of user control. Participants indicated that it would be useful and acceptable for survey respondents to receive information about digital help options addressing a range of health and wellbeing topics.

Conclusion

The methodology of adolescent-practitioner-researcher collaboration and partnership was central to this research and provided useful insights for the development and delivery of adolescent health surveys integrated with digital help options. The results from the ongoing study will provide useful data on the impact of integrating digital health interventions into large-scale surveys, a novel methodology. Future research engaging with adolescents after interventions are delivered will be useful for exploring benefits over time.


Introduction

Many digital health interventions have been shown to be effective for improving health and wellbeing in adolescents1–8 and are of significant interest due to their potential for enormous scalability and cost-effectiveness. Digital health interventions and digital surveys often recruit from the same communities and share the goal of improving health outcomes. Access to digital interventions could be integrated into digital surveys, for example, so that survey respondents are automatically and confidentially offered opportunities to register for or receive health information or links to programs or apps. Such an approach could offer significant potential gains. First, digital technology can allow anonymized linking of survey and intervention uptake data, enabling nuanced analysis of intervention uptake. Second, as survey respondents will have just reflected on their health, they may be particularly receptive to direct and easy access to health information and interventions. Health survey respondents are typically not offered support for health concerns after completing surveys; therefore, developing a simple and acceptable way to do so could be an important ethical development.

We systematically reviewed the literature and found no large-scale population health surveys that incorporated access to digital interventions. Small-scale surveys and clinical screening tools that have provided access to tailored interventions or links to relevant web-based resources have had promising or positive results9–11 and have been seen as useful by school health nurses and youth. 12 The available data on uptake and appreciation of survey-integrated interventions for any age group are limited but show potential to encourage participants to seek help and change behavior.10–14

Overview of the Youth2000 surveys

The Youth2000 surveys are a series of cross-sectional studies carried out in 2001, 2007 and 2012, focusing on indicators of health and wellbeing among New Zealand secondary school students. Each survey was substantial, involving approximately 100 schools and between 8500 and 10000 students. 15 Nationally representative samples of secondary school students aged 12–18 years were recruited by randomly selecting schools within New Zealand and then randomly selecting students from the rolls of the included schools. The surveys featured hundreds of questions on a wide range of health topics, including substance abuse, sexual health, physical activity, emotional health and social connectedness. Anonymised, self-reported responses were collected through multimedia computer-assisted self-interviews (M-CASI). These were administered on laptops in the 2001 survey and on tablets in the 2007 and 2012 surveys. 15 Youth19 is the most recent survey of the Youth2000 series and is currently ongoing.

At a time when health surveys and brief health interventions each frequently use digital technology, often recruiting participants from the same population, we aimed to: (1) develop an adolescent health survey that included integrated ‘opt in’ digital interventions; (2) explore digital intervention uptake by, and impact on, subgroups of the surveyed population; and (3) improve the uptake of digital interventions among underserved groups.

In this study, we used participatory design principles to explore secondary school students’ perspectives on the concept of a health survey that includes integrated ‘opt in’ digital health interventions (the ‘

We used a co-design process that involved repeated evaluation of designs by users from the early stages of the study. This iterative qualitative research process identifies and incorporates the perspectives of the target users.19–21 As researchers, we acted as ‘facilitators’, providing survey questions, design templates and ideas to the participants. The users, that is, the student participants, were actively involved in the design process, serving as experts in their own experience. Students were therefore empowered to generate new ideas and concepts in accordance with their needs and in cooperation with the researchers. 22 Co-design principles have been used successfully with young people to develop web-based support tools to promote youth mental health 23 and sexual health. 24 , 25

This paper describes the process by which we engaged with young people and health practitioners, including digital service providers, to develop an intervention-integrated survey for adolescents. We aimed to explore secondary school students’ perspectives on the overall concept of intervention-integrated surveys and the particular interventions to be integrated. We also sought to develop processes for linking the survey and the digital help options in a way that was secure and appealing to young people. The survey (the

Methods

Participants

Co-design sessions

We chose secondary school students as our co-designers to gain insights into end-user perspectives. We purposefully selected and recruited three schools from ethnically diverse low-income areas and two schools from ethnically diverse high-income areas. The study was advertised in each school, and students over the age of 16 years volunteered to participate. The University Ethics Committee regards young people aged 16 or above as able to consent to their own participation in research. In total, 29 students (18 female; 9 male; 1 transmale; 1 nonbinary: 6 Māori, 1 Pacific Islander, 7 Asian, 16 European) were recruited for nine co-design sessions, each involving between two and four student participants.

Workshops

We invited secondary school students, young post-secondary school students, digital health service providers, and school and community health practitioners to participate in workshops. We held two workshops that included both adolescents and adults. In total, there were eight adolescents, three young adults, five digital health care service providers, six school or community stakeholders and nine researchers.

Study design

The study, conducted in 2018 and 2019, embodied adolescent-practitioner-researcher engagement through co-design sessions and workshops. The key steps of the study are summarised in Figure 1 and detailed below.

Figure 1. Summary of study steps.

Co-design sessions

Each session lasted up to one-and-a-half hours and involved a new set of students. Interview prompts are provided in Table 1. First, participants worked through parts of the survey to orientate themselves to the research questions. They were then prompted to comment on (1) their overall thoughts about the survey and (2) their perspectives on the concept of offering survey respondents the opportunity to have interventions sent to their phone or email. Each group of students evaluated the results of the previous session and generated new ideas to develop and improve the design.

Table 1. Participatory co-design questions.

Participants in the initial co-design sessions (Sessions 1–6) were shown screenshots demonstrating how the information on digital help options could be presented (Figure 2). Their perspectives on the following aspects of the overall concept were then explored: (1) where in the survey the information should be placed (e.g. at the end of the survey or after each set of relevant questions); (2) how the information should be sent to survey respondents; (3) comfort with providing email/phone contact details; (4) other potential contact methods; (5) how the process could be done effectively, including the point in the survey at which respondents would feel most comfortable providing their contact details; and (6) the wording and formatting of messages to be sent to respondents’ phones (via text) or email addresses.

Figure 2. Draft screenshot of information on health interventions.

Participants in the second tranche of co-design sessions (Sessions 7–9) were provided with screenshots of the proposed content at the end of the survey (Figure 3) and were asked for their perspectives on the overall concept, wording and method of presentation. The remainder of these sessions focused on how the information would be sent to survey respondents (e.g. wording and formatting of messages) and the different types of ‘help’ that could be provided (e.g. specific topics).

Figure 3. Example screenshots of end-of-survey information.

Workshops

We used workshops to explore perspectives on the consolidated findings of each tranche of co-design sessions. Workshops focused on selecting help options to be linked to the survey and developing processes to link the survey to the digital interventions in a way that was secure and appealing to young people. The workshops were designed to strengthen collaborative partnerships based on mutual awareness of the challenges and aspirations of the communities of concern. A key objective of these workshops was to support facilitated dialogue between secondary school students, researchers and digital and school health service providers.

Each workshop was conducted over 1–2 hours at a location convenient to participants. We convened semi-structured conversations in small groups, so that young people, digital health service providers and researchers could talk together about their mutual challenges, any constraints, and why these might exist. We were especially mindful of perceived power differentials and facilitated a process that gave voice to secondary school students.

Prototype development

The material and feedback from the co-design sessions and workshops were reviewed to develop the wording and look of the website, identify help options for potential inclusion and draft key topic areas, including the types of information that should be available under each topic. Researchers then searched for sources relating to each topic, keeping in mind the ideas of the student participants and of school and digital health service providers; these sources would become accessible to students through the website. Clinical expertise and peer review from members of the research team and external community clinicians also informed the selection of sources.

Data interpretation

The focus was on participatory analysis and interpretation of the data gathered, and on using these findings to inform the design and delivery of links to digital health information and interventions. In each participatory design session, we used the “think aloud” method to gather information. This method involves research participants speaking aloud while performing specific tasks and provides detailed information about the thought processes of users during task performance. 26 The approach is particularly useful for the design of computer systems. The process gave participants opportunities to raise issues that might have been overlooked in a more researcher-directed data collection strategy.

We used the affinity diagramming technique to interpret data generated from the co-design sessions. 27 The principles of this analytic technique have previously been adapted to suit prototype evaluations 28 and interview data, 29 making it well suited to this study. The co-design process involved repeated evaluation of designs. First, researchers read the interview transcripts and made notes based on these data. The notes were then reviewed, organised into themes, reviewed further and presented at the next co-design session.

Pilot testing the survey with integrated digital interventions

Following the participatory design sessions, the intervention-integrated Youth19 survey was developed and piloted at two schools: a semi-private co-educational school and a boys-only state school. These two schools provided the opportunity to pilot test the survey among ethnically and socioeconomically diverse students, similar both to those who participated in the co-design sessions and to the secondary school students likely to participate in the Youth19 survey.

Results

Intervention-integrated survey: Perceptions and process

There was a high level of consistency and enthusiasm for integrating access to help options in a large-scale youth health survey and this was universally viewed as highly beneficial and acceptable. As one student mentioned, “

There was strong and consistent agreement for providing all survey respondents links to all help options, rather than personalized links based on their survey responses. As noted by one student, “

Further, participants thought that it would be better to offer survey respondents the choice of interventions once at the end of the survey, regardless of whether the survey was fully completed, rather than at the end of specific sections. They thought that the latter approach could discourage survey responders from completing the survey or engaging with the information as it would become too repetitive. A few participants thought that it would be helpful to make the offer of further information available throughout the survey via a ‘help’ button in the corner, but the majority thought this was unnecessary as survey responders would most likely assume it was related to technical help for the survey and would not use it.

Providing cell phone numbers or email addresses to receive digital help options was considered acceptable if there was clear assurance that confidentiality and anonymity would be protected. Students highlighted that survey respondents would feel reassured if it was explained at the beginning of the survey how their confidentiality would be ensured. However, they felt that a written statement alone would not be sufficient, as they believed most respondents would not read it; instead, they suggested that a verbal explanation would be more effective and would enhance usability. Students also endorsed offering the interventions via both text and email, as this would enhance usability for those who may not have access to both a smartphone and a computer.

Participants preferred a simple format, with all the information sent in one email or text message. They also stated that information should be sent to survey respondents within a week of completing the survey, and that one or two follow-up emails would be acceptable as long as contact was not too frequent (e.g. one after a fortnight and one after a month). They indicated that these emails should be generic, to assure users that their activity was not being monitored. To enable user control, participants recommended a generic subject line such as ‘Youth19 Survey’, which would let survey respondents know the information had arrived and open it in their own time, while ensuring that others around them who happened to see the email would not be aware of its content.

Information on interventions to be integrated

Students, as well as school and community health service providers, indicated that offering access to digital health tools and information via a website would be a good way of integrating opt-in options into the digital health survey. Overall, participants found the suggested types of information acceptable and of potential benefit, and identified key principles around wording, such as inclusivity, friendliness and neutrality. Participants also identified broader principles to be taken into account, namely confidentiality, user choice and control, and usability. These principles extended to the way in which information would be presented to survey respondents. Students indicated that a short, sincere message from the Youth19 research team should be included in the email or text to remind survey respondents that there are people who care about their health and wellbeing.

Participants, including digital health providers, indicated that the wording of any information presented to survey responders should be carefully considered to ensure ease and comfort of uptake. Students preferred neutral language. For example,

Participants gave feedback on the specific wording of key headings and the types of information that should be made available. For example, students preferred the heading ‘

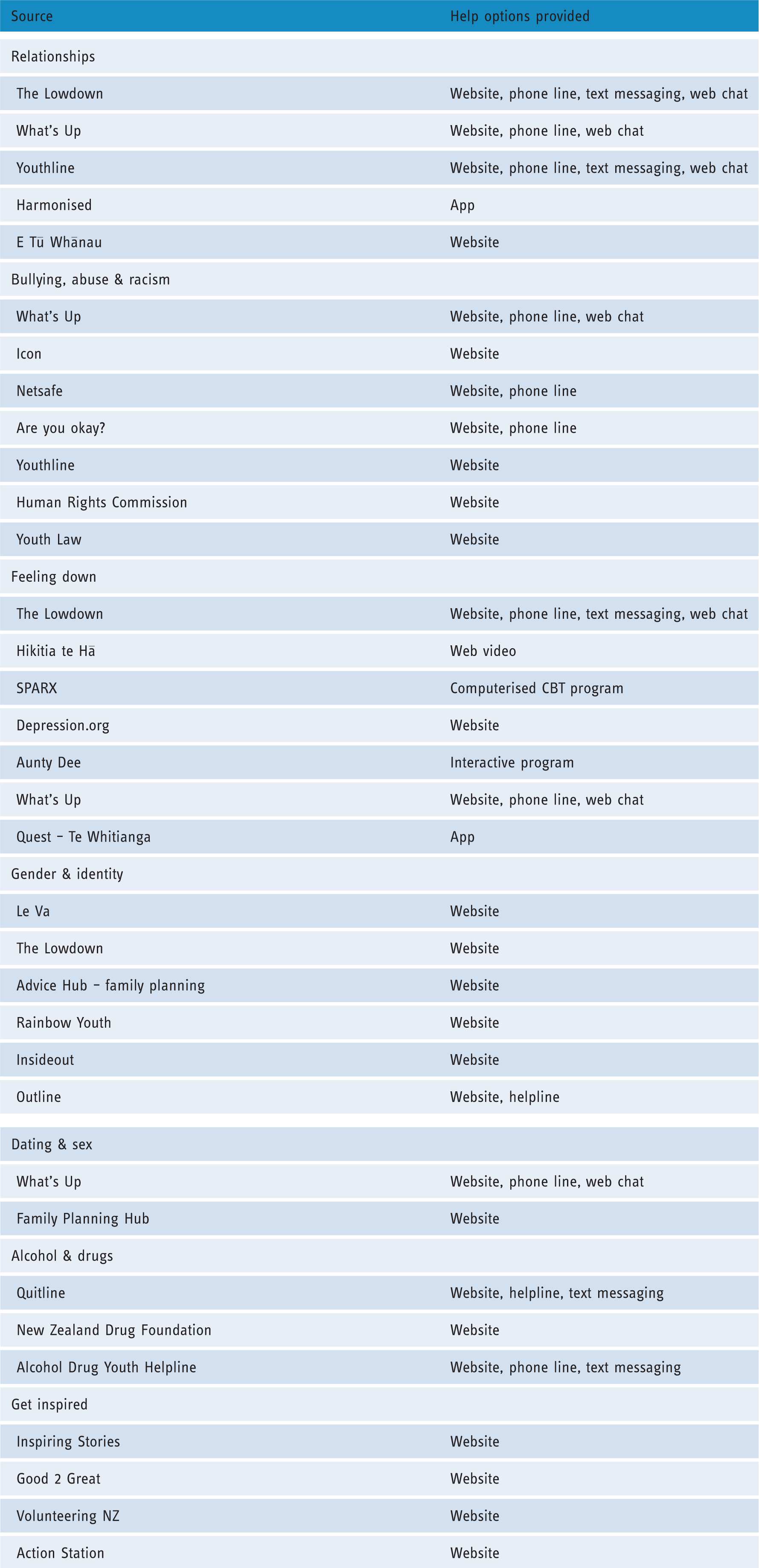

The health and wellbeing resources that were included in the intervention-integrated Youth19 survey are shown in Table 2.

Table 2. Integrated information on health and wellbeing interventions.

Discussion

In this innovative co-design process, we identified universal support from participating adolescents, digital health providers and community stakeholders for integrating access to health interventions into a large-scale youth health survey. In fact, adolescents considered this helpful and were occasionally surprised or disappointed that it was not already the norm. Participants provided clear and consistent feedback to guide this process, including: all students should be offered all help options, rather than specific options based on personalized/normative feedback; assurance of confidentiality; usability; participant choice and control; and neutral, yet sincere, language. The tone of messaging, including inclusivity, friendliness and a non-judgmental approach, was considered important to ensure ease and comfort of uptake. Identified interventions ranged from emotional health to internet safety, and the types of information available in the selected digital resources were viewed as acceptable and beneficial.

Collaboration and partnership between service users and service providers were central to this research, through the consultative process we embedded throughout the study. The participatory design methodology we used ensured that secondary school students, the end-users, were involved from the beginning of the study. The workshops provided further opportunities for the end-users to engage with and discuss how to optimise the delivery of the intervention-integrated survey so that it would work for them. Previous studies 24 , 25 , 30 , 31 that engaged with young people in developing digital interventions have likewise found that co-design processes highlight the value of user-centred design, allowing meaningful engagement and a focus on user needs. For example, Nakarada-Kordic et al. (2017) 31 conducted co-design sessions to develop an online resource for young people experiencing psychosis and found that participants discussed matters that clinicians had not anticipated. This information allowed for the development of a resource that was more effective for its users. The current research highlighted the importance of wording, which influences how easy and comfortable users feel about receiving information, and emphasised the importance of user control. Buus et al. (2019) 32 used similar methods to gain user perspectives on developing an app for people in suicidal crisis and found that participants perceived clinical language to be unhelpful to users.

Our study provides useful insights for digital health service providers about important factors, such as assuring confidentiality and user choice, that must be considered when offering the option to seek help through a digital health survey. Confidentiality concerns are commonly raised by adolescents in relation to digital health interventions and need to be managed to facilitate participation.33–35 This study also emphasizes that researchers seeking to integrate digital health interventions into surveys should meaningfully engage with service users in co-design and workshop settings, to ensure that what is provided is as beneficial as possible.

This paper has focused on the methods used to collaborate with young people and stakeholders about the acceptability of integrating access to help options into a large-scale adolescent health survey. The results of the actual uptake of the interventions and their ‘real life’ usage will provide greater clarity on whether adolescents will actually engage with these help options when given the opportunity. Future research could also consider how to engage with adolescents once the interventions have been delivered to ensure they continue to be acceptable and beneficial over time.

Limitations

First, because each group of students attended only one co-design session, it was time consuming to brief each new group on the objectives of the project. Second, because groups held differing views, the design choices of one group sometimes contrasted with those of other groups. However, we decided that this was the best approach, as it allowed us to build on the knowledge and experiences of a variety of students from diverse backgrounds; in the co-design approach, conflicts of opinion are regarded as a resource. Wadley et al. (2013) 36 used a similar method when developing an online therapy for youth mental health, although they conducted separate co-design workshops for distinct user groups (patients and clinicians). We also acknowledge that the views of the limited number of adolescents involved in the study may not reflect those of all adolescents and that participants’ expressed preferences may change over time.

A further limitation is that we engaged with students who had not previously used the digital help options tested and may not need to use them in future. Previous studies using similar methods have highlighted the need to engage with young people who have used the finalised intervention in order to receive their feedback.19,37 Hetrick et al. (2018) 37 used a similar method when developing an app to facilitate self-monitoring and management of mood symptoms, and argued that it would be beneficial to continue co-design sessions as the intervention developed, as co-design is an iterative process. Given that young people are a heterogeneous group with varied needs and preferences, the adolescents involved in our co-design sessions may not be representative of those who need access to the health interventions. This limitation has been previously noted by others using a similar approach. 19

Conclusion

In this innovative co-design study, adolescents and stakeholders were strongly supportive of the concept of integrating access to help options into a large-scale youth health survey and provided clear direction for its implementation.

Footnotes

Acknowledgements

None.

Contributorship

RPJ and TF researched literature, conceived the study and developed the protocol. RPJ was involved in gaining ethical approval. All authors were involved in participant recruitment, data collection and data analysis. RPJ and LD wrote the first draft of the manuscript. All authors reviewed and edited the manuscript and approved the final version of the manuscript.

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Ethical approval

The study was approved by the University of Auckland Human Participant Ethics Committee (reference number 021823).

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was funded by the Health Research Council of New Zealand (grant number 18/473).

Guarantor

RPJ.

Peer review

This manuscript was reviewed by reviewers who have chosen to remain anonymous.