Abstract

Background

Generative artificial intelligence (AI) programs such as Chat Generative Pre-trained Transformer (ChatGPT) are becoming more widespread in educational settings, with mounting ethical and reliability concerns regarding their use. This paper explores the experiences, perceptions, and usability of ChatGPT among undergraduate health sciences students.

Methods

Twenty-seven students at Carleton University (Canada) were enrolled in a crossover randomized controlled trial study from a Health Sciences course during the Fall 2023 academic term. The intervention condition involved the use of ChatGPT-3.5, whereas the control condition involved using conventional web-based tools. Technology usability was compared between ChatGPT-3.5 and the traditional tools using questionnaires. Focus group discussions were conducted with seven students to further elaborate on student perceptions and experiences. Reflexive thematic analysis was employed to identify themes from the focus group data.

Results

On the System Usability Scale, ease of learning for personal use and perceived speed of learning were rated significantly higher for ChatGPT-3.5 than for conventional online tools. Qualitative results highlighted strong benefits of ChatGPT-3.5, such as being a tool for increased overall productivity and brainstorming. However, students identified challenges associated with reliability and accuracy, and concerns about academic integrity.

Conclusions

Despite the benefits and positive usability of ChatGPT-3.5 identified by students, an explicit need for the development of policies, procedures and regulations remains. An established framework of best practices for the usage of AI within health science education is necessary. This will ensure accountability of users and lead to a more effective integration of AI technologies into academic settings.

Keywords

Introduction

Generative artificial intelligence (AI) is a branch of computer science that includes intelligent machines created from machine learning, deep learning, natural language processing, and so on. 1 Large language models (LLMs) can generate human-like responses based on natural language processing, using a text-based interface. 1 As the use of LLMs such as Chat Generative Pre-trained Transformer (ChatGPT) becomes more prominent, their integration into education remains hotly contested. To date, the educational use of such programs is mixed, with some institutions banning the use of ChatGPT and others embracing the technology and encouraging its use. 2 The educational use of LLMs can benefit students by assisting with writing proficiency and acting as a study tool to improve comprehension of course material.2,3 However, in addition to the benefits of models such as ChatGPT, challenges surrounding misinformation and academic integrity are also present.3,4

The concerns raised about the use of ChatGPT, including ethical concerns around inaccurate information, risk of bias, plagiarism and transparency issues, and data privacy and security, hinder its successful integration into educational practice.2–4 The evolving landscape requires further exploration and responsible adoption of technologies such as AI in healthcare education, as integration ranges from attempts to formally incorporate AI into the curriculum to students using programs like ChatGPT independently.5,6 Addressing the growing concerns from students about the overall impact on their educational experiences is imperative to ensure successful integration of AI in educational settings. 4 A Jordan-based survey evaluating health students’ attitudes and usage of ChatGPT emphasized the significance of factors such as perceived risks, usefulness, ease of use, and attitudes toward technology, along with behavioral considerations, when adopting new technologies in healthcare education. 4 A cross-sectional study examining Pharm-D students’ perceptions, concerns, and experiences regarding the integration of ChatGPT into clinical pharmacy education found similar results. Most students acknowledged the potential benefits of using ChatGPT for diverse clinical tasks but expressed concerns about over-reliance, accuracy, and ethical considerations. 7 Additionally, Rajabi et al. (2023) explored how students and faculty at a Canadian research university view the use of ChatGPT in post-secondary education. 8 Findings highlighted the need for clear guidelines, increased assessments, and mandatory reporting of ChatGPT use. 8 These studies contribute to the emerging research on ChatGPT in higher education, emphasizing the importance of addressing challenges and opportunities associated with its adoption.4,7,8

Recently, Sallam et al. (2023) found ‘perceived risks’ to be a crucial factor for influencing student attitudes and usage of ChatGPT. 4 Risk perception plays a crucial role in the decision to use these technologies, as well as the effectiveness with which they can be implemented. 4 The ongoing academic discourse surrounding ChatGPT is expanding rapidly; however, the current ethical discussion tends to be unbalanced, focusing primarily on specific issues without adequately considering both the positive and negative dimensions. 9 In addition, the emerging literature on the usability of AI is mostly cross-sectional and observational, with few randomized controlled trials (RCTs) and mixed methods studies. It is paramount to rigorously investigate the utility of ChatGPT itself in an educational setting, while also considering the experiences and perceptions of student users. Institutions utilizing such technologies require dependable guidance, and educators responsible for providing such guidance must base it on a comprehensive and academically sound foundation. 10 Considering recommendations from user experiences will inform guidelines and safeguards to allow educators and learners alike to benefit from AI in educational settings.

The current study aimed to: (a) assess the usability and effectiveness of ChatGPT when juxtaposed with conventional online tools, including supplementary assistant tools, in an undergraduate health sciences education course; and (b) explore the perceptions of student users regarding the advantages, challenges, and ethical considerations associated with the integration of ChatGPT within their education.

Methods

Ethical considerations

Ethical approval was obtained from Carleton University Research Ethics Board-B, under clearance CUREB-B #119784. Written informed consent was obtained from all participants and students who did not consent were not included in the study. Those who participated in the focus group also consented to having the focus group sessions recorded before engaging in the study. The methodologies employed in this study adhered to pertinent guidelines and ethical regulations outlined in the Ethical Declarations. The course instructor was not involved in participant recruitment, consent or data collection to address any ethical concerns regarding power dynamics. All recruitment and participant interactions were with the research assistant, as outlined in the protocol. 11

Participants and study design

Participants were recruited from a third-year health sciences undergraduate course on “Chronic Disease and Disability” at Carleton University in Fall 2023 (September–December). To be eligible to participate in the study, students had to be 18 years of age or older. Students enrolled in the course consented to completing two online surveys assessing learning experiences.

The study followed a crossover RCT design, employing a mixed-methods approach to assess health science students’ experiences, perceptions, and usage of ChatGPT-3.5. 11 Participants were randomly assigned to one of two streams: (a) ChatGPT-3.5 followed by conventional online tools (sequence AB); or (b) conventional online tools followed by ChatGPT-3.5 (sequence BA). 11 Students enrolled in the RCT were invited to a focus group, for an open discussion on their experience and perceptions following the use of ChatGPT-3.5. This mixed methods study design, including a combination of quantitative and qualitative methodologies, was specifically chosen to enable a comprehensive exploration of the integration and adoption of ChatGPT-3.5 in health sciences education. 12 Within the crossover RCT design, quantitative data collection with validated questionnaires provides objective results to indicate statistically significant trends and patterns in the implementation of the technologies. The incorporation of a qualitative approach provides a better understanding of participants’ perceptions and experiences of ChatGPT-3.5, leading to a more nuanced interpretation of the results. Data collection for the quantitative and qualitative studies occurred in parallel, as illustrated in the study protocol. 11

This study adhered to two specific reporting guidelines: the CONSORT (Consolidated Standards of Reporting Trials) and its extension, CONSORT-AI. Published in 2020, CONSORT-AI is an extension of the CONSORT 2010 statement and was specifically designed for the comprehensive reporting of randomized controlled trials involving AI.13,14

Data collection

Data were collected in October and November 2023. In this study, students were randomly assigned to either an AB or BA intervention condition sequence to complete their assignment. 11 Students were given a period of 6 days to complete the first intervention in their respective condition. In the experimental condition, students were to use ChatGPT-3.5 and were given two specific guides. The first guide included tips to interact with ChatGPT-3.5, such as providing context for prompts, rephrasing questions, providing follow-up questions, etc. 11 The second guide consisted of points for ethical and equitable use, outlining responsible use, when to use citations, privacy, etc. 11 The control intervention involved the use of conventional online tools (i.e. any web-based tool excluding AI), with instructions on how to complete the assignment. 11 After the first intervention period, participants were given 24 hours to complete a survey assessing usability of their respective technology. 11 A 21-day washout period was implemented as part of the crossover design between the two intervention periods to minimize any potential carryover effects and ensure a clearer distinction between the effects of the two interventions. 11 Following the washout period and crossover, students were also given a period of 6 days to complete their assignment in the second component of the study. 11 Similarly, participants were given 24 hours to complete a survey assessing usability of their respective technology after the second intervention period. 11

Participants who had taken part in this study were invited to partake in one of two structured focus groups (see Appendix A for interview guide). Focus group interviews were used as a method to foster interaction among participants, encouraging the expression of their perceptions on accountability, barriers, equity, and ethical concerns related to the use of AI. The interviews were conducted online using Zoom (Zoom Video Communications Inc.) to facilitate participation and enhance accessibility for all involved participants. Two focus groups were conducted on two separate occasions, one on October 10, 2023 and another on November 7, 2023. During the second focus group discussion, no new themes emerged, and consistent patterns of responses were observed across participants. It was concluded that sufficient data had been gathered to address the research question, confirming that data saturation had been reached.

Measures

Quantitative outcome measures consisted of the System Usability Scale (SUS) and the students’ perceptions and experiences questionnaires. The SUS consists of 10 items scored on a 5-point Likert scale, and is used to assess technology usability and effectiveness. 15 Higher scores signify better usability, with a score of 0 indicating very poor perceived usability and a score of 100 indicating excellent perceived usability. This scale was administered to measure the usability of both ChatGPT-3.5 and conventional tools. Participants were also provided with a series of questionnaires to assess perceptions and experiences surrounding AI literacy, benefits, challenges, satisfaction, and effectiveness. 11
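As an illustration of how ten 5-point responses map onto the 0 to 100 range described above, the following minimal sketch applies the standard SUS scoring rule (odd-numbered items contribute the rating minus 1, even-numbered items contribute 5 minus the rating, and the sum is multiplied by 2.5). The function name `sus_score` and the example ratings are hypothetical and are not taken from the study data.

```python
def sus_score(responses):
    """Return the 0-100 SUS score for ten item ratings, each from 1 to 5.

    Standard SUS scoring: odd-numbered (positively worded) items contribute
    rating - 1, even-numbered (negatively worded) items contribute 5 - rating,
    and the summed contributions are multiplied by 2.5.
    """
    if len(responses) != 10:
        raise ValueError("SUS requires exactly 10 item ratings")
    if any(rating not in (1, 2, 3, 4, 5) for rating in responses):
        raise ValueError("each rating must be an integer from 1 to 5")

    total = 0
    for item_number, rating in enumerate(responses, start=1):
        if item_number % 2 == 1:
            total += rating - 1   # positively worded item
        else:
            total += 5 - rating   # negatively worded item
    return total * 2.5


# Hypothetical respondent, for illustration only.
example_ratings = [4, 2, 4, 2, 4, 2, 4, 2, 4, 2]
example_score = sus_score(example_ratings)
```

A respondent who answers 3 (neutral) on every item scores 50, which is why the midpoint of the response scale coincides with the midpoint of the SUS range.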

Data analysis

Each stage of data analysis was performed consecutively and independently to prevent bias.

Quantitative analysis

Descriptive statistics were used to analyze demographic characteristics and usability scores. All analyses were conducted with SPSS (version 28.0; IBM Corp, Armonk, NY) for Mac. Qualtrics data management system (Qualtrics International Inc.) was used for data capture. The SUS scores for ChatGPT-3.5 and conventional tools were compared using a Wilcoxon test, with statistical significance set at p < 0.05.
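The analyses in this study were run in SPSS. Purely to illustrate the idea behind a Wilcoxon comparison of paired scores, the sketch below computes the signed-rank statistics for two sets of scores from the same participants. It assumes the paired (signed-rank) variant of the test, which the text does not specify; the function name `signed_rank_statistic` and the scores are hypothetical, and no p-value is computed.

```python
def signed_rank_statistic(first, second):
    """Return (W_plus, W_minus) for paired observations.

    Zero differences are dropped, absolute differences are ranked with
    tied values sharing their average rank, and the ranks are summed
    separately for positive and negative differences.
    """
    if len(first) != len(second):
        raise ValueError("paired samples must have the same length")

    # Keep only non-zero differences, ordered by absolute size.
    diffs = sorted((a - b for a, b in zip(first, second) if a != b), key=abs)

    ranks = [0.0] * len(diffs)
    i = 0
    while i < len(diffs):
        j = i
        # Extend j over a run of tied absolute differences.
        while j + 1 < len(diffs) and abs(diffs[j + 1]) == abs(diffs[i]):
            j += 1
        average_rank = (i + j) / 2 + 1  # ranks are 1-based
        for k in range(i, j + 1):
            ranks[k] = average_rank
        i = j + 1

    w_plus = sum(rank for rank, d in zip(ranks, diffs) if d > 0)
    w_minus = sum(rank for rank, d in zip(ranks, diffs) if d < 0)
    return w_plus, w_minus


# Hypothetical SUS scores for four participants under each condition.
chatgpt_scores = [70.0, 65.0, 80.0, 55.0]
conventional_scores = [60.0, 67.5, 80.0, 50.0]
w_plus, w_minus = signed_rank_statistic(chatgpt_scores, conventional_scores)
```

The smaller of the two sums is the usual test statistic; statistical packages then convert it to a p-value using exact tables or a normal approximation.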

Analysis of the conditions involved exploring participants’ responses to individual SUS items. Statistical tests, including Fisher's exact test or Pearson's Chi-squared test, were employed to assess significant differences between the students’ perception of ChatGPT-3.5 and conventional tools.
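To make the logic of Fisher's exact test concrete, the following sketch computes a two-sided p-value for a 2×2 table from the hypergeometric distribution. It is an illustrative assumption about how item responses might be dichotomized (for example, agree versus not agree, by condition), not the procedure used in SPSS for this study; the function name `fisher_exact_two_sided` and the counts are hypothetical.

```python
from math import comb


def fisher_exact_two_sided(a, b, c, d):
    """Two-sided Fisher's exact p-value for the 2x2 table [[a, b], [c, d]].

    With all margins fixed, the top-left cell follows a hypergeometric
    distribution. The p-value sums the probabilities of every table that
    is no more likely than the observed one.
    """
    row1, row2 = a + b, c + d
    col1 = a + c
    n = row1 + row2

    def table_probability(x):
        # Probability that the top-left cell equals x given the margins.
        return comb(row1, x) * comb(row2, col1 - x) / comb(n, col1)

    observed = table_probability(a)
    smallest = max(0, col1 - row2)
    largest = min(row1, col1)
    tolerance = 1 + 1e-9  # guards against floating-point ties
    return sum(
        p
        for p in (table_probability(x) for x in range(smallest, largest + 1))
        if p <= observed * tolerance
    )


# Hypothetical counts: rows are conditions, columns are agree / not agree.
p_value = fisher_exact_two_sided(3, 0, 0, 3)
```

Fisher's exact test is generally preferred over Pearson's Chi-squared test when expected cell counts are small, which is common with a sample of this size.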

Qualitative analysis

The focus group discussions were conducted, digitally recorded, transcribed, and anonymized by the research assistant (HS). The qualitative analysis followed a reflexive thematic analysis methodology as proposed by Braun and Clarke (2019). 16 Our approach followed a constructivist epistemology and an experiential orientation. First, three authors (MV, H. Shannon, BB) read all transcripts to become familiar with the full dataset. The authors also engaged in reflexive journaling to document their assumptions and biases to help aid future interpretation of the findings. Second, the authors independently generated initial codes through an approach largely driven by a latent-coding perspective and inductive analysis. Next, themes were generated and refined through discussion. The final step in the analysis was the integration of the quantitative and qualitative findings. Here, the authors (MV, H. Shannon, BB) actively sought areas of convergence and divergence between the findings of the quantitative and qualitative analyses. 17

All transcripts were uploaded to the qualitative software QSR NVivo 14. Our reporting adheres to the Standards for Reporting Qualitative Research (SRQR) guideline, as delineated by O’Brien et al. (2014). 18

Results

Of the 50 students enrolled in the course, 27 participants completed both interventions. Students were between 19 and 24 years of age and were current third- or fourth-year undergraduate students at Carleton University. Twenty-five of the 27 participants reported previously using ChatGPT-3.5. Additional demographic characteristics can be found in Table 1.

Demographic characteristics of participants.

*Quantitative group includes all students who participated in the study. Qualitative group includes exclusively students who participated in the focus group.

Usability of ChatGPT

The average total usability score for ChatGPT-3.5 in the intervention condition was 67.29 (SD = 9.67), while the score for conventional tools was 60.10 (SD = 12.19). These scores fall within the marginally acceptable range of 50 to 70, with ChatGPT-3.5 performing better than conventional tools. Generally, usability scores above 70 are considered acceptable, while those below 50 are deemed unacceptable. As shown in Table 2, the usability score, as indicated by the System Usability Scale (SUS), did not show a statistically significant difference between the conditions (p = 0.055). Among the individual questions assessing usability, Question 7 (“I would imagine that most people would learn to use this system very quickly”) showed a statistically significant difference, with the ChatGPT-3.5 condition rating it higher than the Conventional condition (4.33 ± 0.56 vs. 3.83 ± 0.87, p = 0.015). Question 10 (“I needed to learn a lot of things before I could get going with the system”) also demonstrated a significant difference, with the ChatGPT-3.5 condition reporting a lower perceived need for learning compared to Conventional tools (1.92 ± 0.78 vs. 2.46 ± 1.02, p = 0.047).
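The acceptability ranges described above can be written as a small helper. This is a minimal sketch that uses only the cut-offs stated in this section (below 50 unacceptable, 50 to 70 marginal, above 70 acceptable); the function name `sus_band` is hypothetical.

```python
def sus_band(score):
    """Classify a 0-100 SUS score using the cut-offs stated in the text."""
    if not 0 <= score <= 100:
        raise ValueError("SUS scores range from 0 to 100")
    if score < 50:
        return "unacceptable"
    if score <= 70:
        return "marginal"
    return "acceptable"


# Mean scores reported in this section.
chatgpt_band = sus_band(67.29)
conventional_band = sus_band(60.10)
```

Both reported means fall in the marginal band, consistent with the interpretation given in the text.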

Comparative usability assessment: ChatGPT vs. conventional tools

Mean ± SD scores for each question on the System Usability Scale pertaining to either ChatGPT or conventional tools used.

Students’ perception of ChatGPT

The findings of the students’ perceptions survey demonstrate that 75.0% of students reported using AI for brainstorming. Moreover, a majority of respondents (56.3%) emphasized the importance of addressing ethical considerations when employing AI systems in academic environments. The data also highlight the importance placed on responsible chatbot usage, as evidenced by 81.8% of respondents reporting the importance of rules or guidelines established by their university professor. Finally, 70.0% of respondents agreed that the chatbots they use contribute to their effectiveness as learners, indicating a positive perception of chatbots’ efficacy in augmenting learning outcomes.

Qualitative results

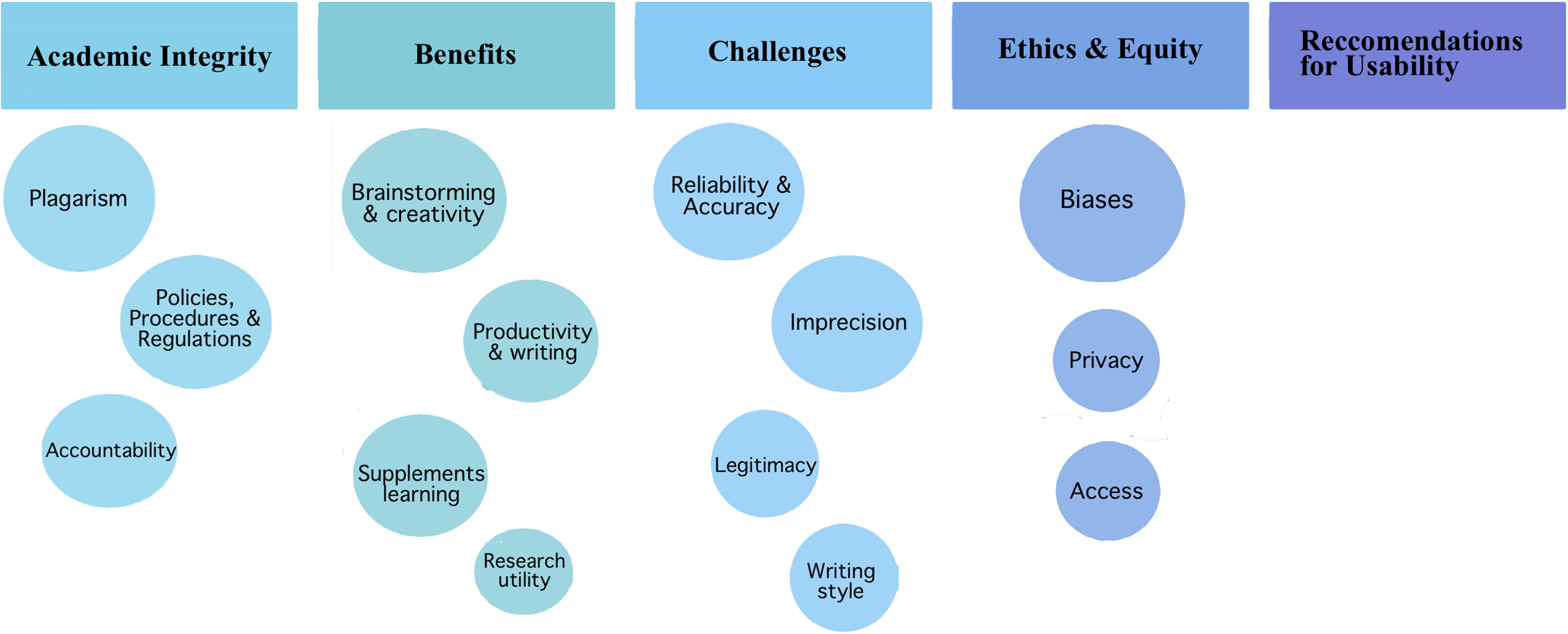

The qualitative analysis yielded five themes and 14 subthemes (see Figure 1).

Schematic summary of the findings from the qualitative analysis. Themes are depicted in boxes and subthemes in circles of corresponding color

Theme 1: boundaries of academic integrity

Participants discussed concerns about the boundaries of using AI programs for educational purposes. These concerns reflected a desire to have clear and consistent instructions about when and to what extent the use of AI programs was permitted for educational purposes. As one student noted, “This course is different from other courses I’m taking currently because, in the syllabus itself, it gives very defined boundaries of what you should be using it for [and] what you should not be using it for versus just saying, ‘don’t use it’ (P2).” The need for clear-cut boundaries was also discussed in relation to concerns about when the use of AI may infringe upon principles of academic integrity: “In this course, it's well defined in terms of how AI can be used for select assignments … so, I am going to make the assumption that there are not many people that are going to abuse it and use it to write an entire assignment (P2).”

Three subthemes emerged from this theme.

Theme 1.1: the need for clear policies, procedures, and regulations

A common solution endorsed by participants to the problem of unclear boundaries was the development of policies, procedures, and regulations concerning the use of AI. Participants described the importance of having clear policies on the use of AI at the institutional level: “I think what the university has done has been pretty interesting in their pivot on their stance on using ChatGPT, because initially you might think something like this is just automatically bad to use. But I think understanding the tools and understand[ing] [sic] the limitations changes a perspective (P6).” Such a position was an important starting point; however, participants also expressed the need for specific guidelines to help protect students against academic dishonesty. As one student put it, “In order to monitor and protect, you need to be able to identify what you’re protecting from. So, if you’re trying to avoid plagiarism or the use of AI for academic integrity, then we would need to develop policies in advance … [this] can only come after the use of regulations (P4).” The implementation of clear policies, procedures, and regulations will serve as a framework to guide academics and students on the proper use of AI for educational purposes.

Theme 1.2: accountability from users

Another solution raised by participants to the problem of unclear boundaries was personal accountability from both instructors and learners. Participants highlighted that learners who use AI programs for educational purposes cannot blindly accept the output at face value; instead, learners must “[go] further, take an extra mile and just do a little research and see if that information is reliable (P3).” Importantly, participants also identified a duty for instructors to provide clear expectations when allowing the use of AI in their courses. For instance, one participant noted, “normally, in [the] course syllabus, [instructors] just kind of say, ‘Well use ChatGPT at your own discretion’, and they don't really attempt to integrate it within the course syllabus (P2).” Taken together, participants highlighted distinct actions for both instructors and learners to perform in order to ensure that the boundaries of the use of AI for educational purposes are not overstepped.

Theme 1.3: concerns about plagiarism

One of the most predominant concerns that participants had about the influence of unclear boundaries on academic integrity was the increased risk of unintentional plagiarism. Many participants shared a similar sentiment on this topic: “I did struggle a good amount to not plagiarize essentially (P2).” This phenomenon transcended the traditional conception of plagiarism because, rather than reflecting a clear understanding of wrongdoing, these concerns actually represented a sense of confusion about what constituted academic dishonesty. As one participant put it, “[It is unclear] where exactly to draw that boundary of when you can use AI to utilize and help as a support tool for your learning versus where it becomes another tool used for plagiarism and is completely banned (P4).”

In effect, participants were not concerned about “getting caught” doing something wrong, but were instead concerned that they would unintentionally violate the unclear boundaries of when and how to appropriately use AI in their coursework, and that such a violation could affect their performance. Overall, these concerns could be allayed with clearly defined instructions and expectations from instructors.

Theme 2: benefits of using AI programs for education purposes

In this theme, participants identified advantages of integrating AI programs into educational settings, emphasizing their role in facilitating research, fostering creativity, enhancing productivity, and complementing traditional classroom learning. This theme includes four subthemes: (a) strong utility for research purposes; (b) inspiration for brainstorming and creativity; (c) improved productivity and writing skills; and (d) supplementation of classroom learning.

Theme 2.1: strong utility for research purposes

In this subtheme, participants emphasized the potential use of ChatGPT-3.5 to identify research gaps, seeing it as a convenient starting point for their research studies. They highlighted how the AI could quickly identify areas of interest within extensive literature, streamlining the process of identifying research gaps. Participant 2 mentioned, “I guess, or like that first place to start in terms of a research, right,” and another participant also added, “I know when we have research papers, we’re encouraged to look at finding research gaps and like if we’re like, trying to scroll through like, like a hundred different papers, like they can just like probably ask ChatGPT to find a research gap on a specific subject (P6).” Although participants identified using ChatGPT-3.5 to identify research gaps as a valuable benefit, we have concerns about its appropriateness. ChatGPT-3.5 may not have the same understanding of literature content as experienced researchers who can critically analyze articles and identify gaps that may not be explicitly stated. Hence, while it may provide a starting point, its ability to accurately identify research needs may be limited.

Theme 2.2: inspires brainstorming and creativity

In this subtheme, participants emphasized the role of ChatGPT-3.5 in fostering innovative thinking and creative problem-solving among students. Participants noted that AI tools helped them connect concepts and generate novel ideas, encouraging them to “think outside the box (P4).” In their view, ChatGPT-3.5 provided a starting point, helped with brainstorming, inspired creativity, and assisted students in developing innovative solutions to academic tasks.

Theme 2.3: improves productivity and writing

One of the benefits of ChatGPT-3.5 that participants identified was enhanced writing efficiency and quality. Participants commented that the AI can reorganize the writing process by providing feedback and suggestions for improvement. Participant 5 commented, “it can also just overall, like help streamline the process.” Similar to grammar-checking software like Grammarly, AI can offer advanced assistance by identifying specific errors and highlighting recurring patterns in writing, as was mentioned by participant 5: “It can give your work feedback and I know lots of people use things like Grammarly, but we could use AI as like a more advanced Grammarly almost and tell it to look out for specific errors that you might have or have it tell you what kind of errors you’re making often so that you can sort of be on the lookout for it in the future whenever you’re writing and just have better written words.”

Theme 2.4: supplements classroom learning

This subtheme emphasizes the use of ChatGPT-3.5 in complementing traditional classroom instruction and enhancing student learning experiences. Participants highlighted the utility of AI in generating study aids such as flashcards and practice questions, offering alternative explanations for challenging concepts, and providing additional resources for further understanding. For example, participant 4 commented, “you can just give it prompts to create things like flashcards or practice questions and things like that to help you study.” Participant 3 stated, “If there's a topic you don't understand, you could get it to explain and elaborate for you or provide you with resources on how it could help you.” Participant 2 also added that “This is how I have run into it. Essentially, steps will be pretty okay for the most part. Like it might be slightly different from how your teacher teaches you, but if you ask it to kind of, you know, explain it like a fifth grader, like that's how I do it. Teach it to me, like I'm a dummy. It does work.”

Theme 3: challenges of using AI programs for education purposes

Although the attitudes towards AI were generally positive, students identified many key challenges. These challenges, especially concerning content outputs, are critical to consider as various AI programs such as ChatGPT-3.5 are increasingly prevalent in educational settings. Within this theme of challenges, we identified four subthemes.

Theme 3.1: unclear legitimacy compared to other information sources

Students were concerned about the lack of transparency from AI programs such as ChatGPT-3.5. This lack of transparency calls into question the legitimacy of such technologies: “people look at Wikipedia as like the worst resource you can use… ChatGPT is a better resource. But Wikipedia actually has cited sources and links to different things when you scroll through the page. But chat.GPT [sic] doesn’t have that (P6).” Clearer information sourcing is needed to ensure the legitimacy of ChatGPT-3.5's output.

Theme 3.2: questionable reliability and accuracy of the output

In addition to the sourcing of information, students identified challenges surrounding the reliability and accuracy of outputs from ChatGPT-3.5 as a major concern: “if you … ask it about a particular topic or you tell it to delve into something and it's not aware of it, it will just give you an answer. Even if the answer is wrong, and you won’t be able to know that if you don’t have background information on the topic (P3).” Students highlighted the potential to perpetuate misinformation from inaccurate outputs and the need to check the reliability of ChatGPT-3.5.

Theme 3.3: imprecision in the complexity of the output

Another challenge identified was the level of complexity of the outputs ChatGPT-3.5 produced, whereby context may not be interpreted accurately: “With using chatGPT, like the context you may be using, it may not understand it. So it gives you something totally different from then what you are thinking (P1).” ChatGPT-3.5 output also cannot be customized to the academic level at which it is being used, which can be especially detrimental in educational contexts where students of all educational backgrounds and disciplines may be using it: “if they had different types of levels. So like if you typed in write me a paper or like help me formulate a topic on whatever topic it is and say it needs to be at an upper undergraduate level or something like that (P4).”

Theme 3.4: impersonal nature of writing style

Another challenge identified by students when using AI for educational purposes was the impersonal nature of the writing style. The style of writing in ChatGPT-3.5 outputs changed from the writing style that the user inputted: “It did well, but it was like almost too well because then I'd read it and it wouldn't sound like I wrote it (P2).” In addition, when stylistics of the outputs changed, students remarked that it was less personal and of a robotic nature: “you don't want something that necessarily sounds robotic or … to the point. You want to add some actual personal stylistics into your work, so it kind of feels a bit more authentic (P2).”

Theme 4: ethical and equitable considerations when using AI programs for education purposes

Concerns about access and biases frequently arose when discussing ethical and equitable considerations for ChatGPT-3.5. Access to generative AI can vary based not only on direct access to the technologies themselves but also on the amount of information provided. In addition, biases from AI development have a downstream effect on the outputs produced, creating an immense ethical dilemma for ChatGPT-3.5 use. Three subthemes related to ethical and equitable considerations were identified.

Theme 4.1: inequity in the access to AI technologies

Inequity was discussed in terms of a student's ability to directly access AI technologies: “Certain groups, or less fortunate groups may not be able to have access to AI. Whereas you would see more fortunate people [would] be able to have access to AI and use it (P1).” Factors such as socioeconomic status may play into one's access to using AI. In addition, educational setting was identified as a factor influencing equitable access to AI. One participant noted that “schools that don't rely on technology or schools in … third world … countries or still developing countries, they might not all have access to computers and access to what we have. That also just puts them kind of one step back in terms of learning (P4).” A number of equity considerations, such as socioeconomic status, can be at play when examining access to AI technologies.

Theme 4.2: concerns about user and data privacy

In addition, ethical and equitable concerns arise regarding user and data privacy when using AI such as ChatGPT-3.5. The primary concern involves sharing data with AI technologies: “there is a risk of you losing or spreading your data as a result of … doing your data analysis and if it's your research there is a chance that this information can be leaked (P3).” The possibility of unethical data sharing is a key concern, and it raises another equity issue in terms of who then accesses such technologies: “people that didn’t want to give select information in order to access the technology… what alternatives would they have (P2).”

Theme 4.3: potential biases in the development, processing algorithms, and output of the AI technology

Lastly, biases are not only seen in the development of AI, but all the way through to the outputs generated for the user. As participant 6 noted, “AI is simply algorithms that people feed and it's important to note who is developing these and whether there are biases.” Although AI programs are based on machine processing, human opinions and biases can be present in the development of such technologies. These biases can therefore be reflected in the algorithm processing and outputs: “opinions and biases can lead towards not so nice outcomes, an example of this could be like for Google translate. When it translates doctor to English, a lot of times it translates to masculine, but … then translates nurse to the feminine. But that's just because [of] the type of writing it was fed (P3).” Biases in AI programs were a significant concern to students.

Theme 5: recommendations for improved usability of AI technologies

Participants gave several suggestions to enhance usability of AI technologies. First, users suggested integrating the capability to open links to external resources, enhancing the functionality and expanding the interaction. Secondly, emphasizing thorough citation and referencing within AI-generated content would empower users to assess the credibility of its output. Moreover, implementing routine checks to ensure the alignment of AI outputs with user expectations is necessary for maintaining relevance and reliability. Additionally, integrating video and voice inputs could significantly enhance user-friendliness, facilitating tasks such as generating lecture notes from audio recordings.

Mixed methods: areas of convergence and divergence

A high level of convergence was observed between the quantitative and qualitative findings (see Table 3). The majority of students believed that ChatGPT-3.5 provided an overall benefit to their academic work. The student perceptions and experiences questionnaire revealed that the majority of students use ChatGPT-3.5 for brainstorming and generating ideas in academic work. This was reflected in the qualitative data, as brainstorming was frequently discussed as a benefit. Similarly, high convergence was seen within the concept of ethical considerations, with students highlighting the benefits of AI technologies in education in both data strands. Divergence between the findings arose when addressing the challenges related to AI in education. Although there was consistent agreement about concerns of bias, none of the students reported personally having negative experiences or challenges related to the usage of AI in the perceptions and experiences questionnaire. However, when barriers were discussed in the focus groups, challenges surrounding accuracy, such as the specificity of outputs generated by prompts, were communicated. Lastly, divergence also appeared in terms of the presence of guidelines for responsible use. Despite agreement in the quantitative data that academic institutions should play a role in developing guidelines, the two strands differed on how far such guidelines are integrated in academic settings. In the questionnaire, the majority of students reported that rules or guidelines set out by the university or teacher(s) were present, whereas in the focus groups students emphasized a need for guidelines and better integration of ChatGPT-3.5 into course syllabi.

Table 3. Areas of convergence and divergence between quantitative and qualitative results

*Quantitative questions are from the student perceptions and experiences questionnaire.

Discussion

This mixed-methods crossover RCT aimed to investigate the usability of ChatGPT-3.5 compared to conventional online tools, as well as to explore the perceptions and experiences of health sciences students regarding ChatGPT usage in the classroom. Ease of learning for personal use and perceived quick learnability were significantly higher for ChatGPT-3.5 than for conventional tools. Qualitative results of the study highlighted strong perceived benefits; however, ethical considerations surrounding the usage of ChatGPT in academia remained prominent, along with the importance of guidelines. Convergence was seen across quantitative and qualitative data, with agreement on the benefits and ethical considerations. However, divergence occurred when addressing challenges and accountability of ChatGPT usage in education. Taking into account the advantages, challenges, and ethical and equity considerations associated with ChatGPT within students’ educational journey is essential to ensure appropriate integration of AI technologies into academic settings.

Participants perceived ChatGPT-3.5 as more user-friendly and requiring less learning effort compared to conventional tools, suggesting certain usability aspects may be more beneficial. While the overall SUS score did not differ significantly between the ChatGPT-3.5 condition and conventional tools, specific aspects related to perceived learning needs and quick learnability favored ChatGPT-3.5. In response to Question 7 from the SUS, which measures the perceived ease of learning the system quickly, ChatGPT-3.5 received a statistically significantly higher rating compared to conventional tools. This suggests that participants in the ChatGPT-3.5 condition believed that most individuals would quickly grasp the usage of the system, reflecting a positive perception of its learnability. Similarly, Question 10 from the SUS, examining the perceived need for learning before getting started with the system, yielded a significant difference. Participants in the ChatGPT-3.5 condition reported a statistically significantly lower perceived need for learning the system than with conventional tools. This implies a perceived advantage in ease of use when beginning to use ChatGPT-3.5. Our results are in line with those of other studies demonstrating the ease of use of ChatGPT in diverse learning contexts.19,20 More specifically, ChatGPT-3.5 showed good usability with learners in the health sciences field. 21 Among the elements that could explain this usability, the strongest positive predictors of high SUS scores were participants’ belief in the benefits of the AI chatbot for medical research, self-assessed familiarity with ChatGPT and self-assessed mastery of computer skills. 21 ChatGPT's self-learning capability fosters intrinsic motivation among users, making it an effective educational tool. This intrinsic motivation is a key factor in its high usability. 22

The student experience and perception survey, along with the focus group discussions, highlighted both the benefits and challenges pertaining to the educational use of ChatGPT-3.5. Benefits of ChatGPT-3.5 identified in the context of education included its use as a tool for increasing overall productivity, brainstorming, increasing creativity, and supplementing classroom learning. Challenges encompassed issues surrounding reliability and accuracy, as well as the imprecision and impersonal nature of outputs. A balance of positive and negative themes was identified and, as reflected in recent medical education research, concern about academic integrity was a prominent theme.2,20,23 Subthemes identified within concerns of academic integrity included concerns of plagiarism, accountability from users, and the need for clear policies, procedures and regulations. Interestingly, participants discussed unclear boundaries of AI usage in education, whereby the onus should be on both the learners (i.e. students) and instructors. This further highlights the need for policies, procedures, and regulations to be developed in order to identify such boundaries. Without policies and regulations for artificial intelligence technologies such as ChatGPT, the boundaries of academic integrity in education will remain unclear. 24

Strengths and limitations

The current study has both important strengths and limitations. First, although the application of AI in medical education and physician decision making has been addressed in the literature, the current study addresses a gap concerning the use of ChatGPT-3.5 in undergraduate education. 25 Secondly, the crossover randomized controlled trial design reduces potential biases when using ChatGPT-3.5 as an intervention, while the qualitative aspect of the study provided additional insights into student perspectives and experiences. Limitations of the study include the relatively small sample size and potential biases associated with self-reported data. The small sample size of this study limits the generalizability of the findings to all university students. Furthermore, the small sample size means that the statistically non-significant differences between interventions obtained for some SUS questions could be due to a lack of statistical power. Despite this, the results show a tendency towards better perceived usability of ChatGPT compared with conventional tools. It is likely that these results are underestimated, and that a larger sample size would have revealed other significant differences in favor of ChatGPT in terms of usability. The generalizability is further constrained by the demographic makeup of our sample, as the study had a predominantly female population (>80%). Some evidence suggests that women may have less positive attitudes towards artificial intelligence compared to men.26,27 Although the present study included more women than men, the gender ratio of the sample likely had little influence on the results, since students generally had positive attitudes towards ChatGPT generative AI.
Other factors such as socioeconomic status and ethnicity could have an influence on students’ perceived usability of artificial intelligence.26,28 There were notable discrepancies in ethnicity between the qualitative and quantitative groups. For instance, 40% of participants in the quantitative group were white, whereas none in the qualitative focus group were. The impact of these factors is unlikely to be large enough to change the trend in the results, but it may limit the generalizability of our findings. Finally, the study focused on short-term usability, and long-term effects may not be fully captured. Future research could investigate long-term usability and how it may vary across disciplines and by assignment type within education.

Conclusions

In conclusion, the current landscape of AI incorporation into education remains inadequate, with the absence of clear boundaries representing a significant challenge. The perceived usability of the generative AI tool ChatGPT is an important asset in facilitating the implementation of this tool in education. This study provides insights into how students can use AI in carrying out an educational project in healthcare fields. The results show that students demonstrate creativity in using this tool, which is versatile in enabling different types of tasks to be carried out. Despite the benefits and positive usability and learnability of ChatGPT-3.5 identified by students in the current study, concerns about academic integrity persist. We identified an explicit need for the development of policies, procedures and regulations in order to begin conceptualizing a framework of best practice for the usage of such technologies. In addition, accountability must be present at every level, from institutions to students themselves, to effectively integrate AI into educational settings. It is important that students develop their autonomy in using these tools, their critical thinking skills with regard to the information generated, and their judgment with regard to the legal and ethical issues associated with the use of these tools. In this respect, students have clearly expressed the need for guidelines to support them through the issues associated with the use of these tools. Such guidelines, by providing a better framework for the use of generative AI in student training, would enable these learning tools to be used to their full potential.

New AI technologies are emerging at a rapid and daunting pace, and their longevity and relevance are unquestionable. Therefore, a clear understanding of the benefits and challenges of these technologies, and of when and how to use them in higher education, is necessary for academic administrators, instructors, and students alike.

Footnotes

Acknowledgments

The authors would like to express their sincere gratitude to Dr Martin Holcik, Professor and Chair, Department of Health Sciences, Carleton University, Ottawa, Canada, for his invaluable support throughout this research project. His commitment to supporting the integration of AI into education has been instrumental in successfully completing this study. The authors truly appreciate his visionary approach to advancing research in their field, and his support has significantly contributed to the project's completion.

The authors also extend their genuine thanks to Claire MacArthur, Health Sciences Department, Carleton University, for her invaluable assistance with the research administrative budget and her support to the research assistant as needed, which significantly contributed to the overall achievement of their goals.

Contributorship

MV, DK, and J-OD conceptualized this study. MV drafted the initial version of the manuscript, with H. Shannon and BB contributing to the mixed methods section. H. Shannon wrote the final version with critical inputs from MV, J-OD, BB, DK, MR, HS, PGBS, and DR. H. Shannon collected qualitative and quantitative data. BB contributed to the qualitative analysis and mixed methods analysis. MV, PGBS, H. Shannon, and BB analyzed the data with critical inputs from J-OD, DK, MR, HS and DR. All authors contributed to the study design, methods and manuscript drafting. All authors have read and approved the final manuscript.

Data availability

The data sets used and analyzed during this study are available from the corresponding author upon reasonable request.

Declaration of conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This project was funded by the Scholarship of Teaching and Learning Grant from Carleton University.