Abstract

Objective

Poor conditions in the intraoral environment often lead to low-quality photos and videos, hindering further clinical diagnosis. To restore these digital records, this study proposes a real-time interactive restoration system using the segment anything model.

Methods

Intraoral digital videos, obtained from the vident-lab dataset through an intraoral camera, serve as the input for the interactive restoration system. The initial phase employs an interactive segmentation module leveraging the segment anything model. Subsequently, a real-time intraframe restoration module and a video enhancement module were designed. A series of ablation studies was conducted to assess the design of the interactive restoration system. The quantitative evaluation criteria comprise restoration quality, segmentation accuracy, and processing speed. Furthermore, the clinical applicability of the processed videos was evaluated by experts.

Results

Extensive experiments demonstrated the system’s segmentation performance, with a mean intersection-over-union of 0.977. On video restoration, it achieved a peak signal-to-noise ratio of 37.09 and a structural similarity index measure of 0.961. More visualization results are shown on the project page (https://yogurtsam.github.io/iveproject).

Conclusion

The interactive restoration system demonstrates potential to serve patients and dentists with reliable and controllable intraoral video restoration.

Keywords

Introduction

In response to the escalating requisites for dental healthcare, the application of computer-aided design systems and digital dentistry is gaining significant traction.1–5 Intraoral digital photos and videos have been increasingly used in clinical practice as auxiliary and informative methods for documenting the progress of a patient’s treatment and recording their clinical condition.6,7 They can help assess various aspects, such as the extent of caries,8,9 tooth wear rates,10 restorations,6,11,12 staining,13 and demineralization.14 Compared with photos, intraoral videos offer manifold advantages. The videos provide real-time visualization, allowing dentists to observe and assess live images during dental examinations or procedures. These videos can also capture dynamic movements within the oral cavity, allowing dentists to assess occlusion (bite) and jaw movements during different functions like chewing or speaking. This information is essential for orthodontic and prosthodontic treatments. For patients, seeing live images of their oral health conditions can build trust and confidence in the dentist’s expertise, fostering a positive patient-dentist relationship. In addition, these videos enable remote dental examination and cross-checking with specialist diagnostic support, that is, “store and forward” telemedicine.

In contemporary clinical practice, dentists use various instruments to obtain intraoral digital photos and videos, including single-lens reflex (SLR) cameras,15 dental operating microscopes (DOM),16 intraoral cameras (IOC),17 and even smartphones.18 While both SLR and DOM are recognized as the most standardized methods in dental practice, their elevated costs, labor-intensive nature, and technical intricacies may constrain their application. For example, when employing an SLR to take a photo of the patient’s occlusal surface, the involvement of an assistant is essential. This collaboration entails the use of instruments such as mouth openers and reflectors, as the operator alone is unable to manage all aspects of the process.15 Blind use of DOM without professional training and adequate practice may hinder results and burden treatment.19 Meanwhile, both of these devices necessitate patient cooperation and a considerable degree of mouth opening. Under these constraints, integrating an IOC with an intraoral instrument, such as a dental handpiece, empowers a dental practitioner to maintain constant surveillance over the procedure. The IOC reduces the difficulty of recording in clinical consultations that lack adequate conditions.20 IOC functionalities have expanded to encompass a diverse array of features, including macro mode for magnification, a curing light for composites, light-emitting diode lights, capabilities for picture or video recording, and fluorescence for detecting various stages of caries, plaque, and gingival inflammation.21

However, in clinical dentistry, the intraoral environment introduces additional complexities, such as lip shadow, specular reflection, non-centered teeth, and tremulous images.18,22 These challenges hinder the wider use of photography in dental diagnosis. For instance, tremulous images may introduce ghosting artifacts. In addition, unpredictable variations in illumination, combined with the interference of fluids like water and saliva, present substantial challenges in preserving image fidelity.18,23 These unintended distortions influence the interpretation of the resulting images, potentially affecting diagnostic precision and therapeutic decision-making. Compared with static photos, intraoral videos capture a broader range of details and scenes throughout the treatment process. Nonetheless, video quality is more susceptible to the aforementioned factors than that of static photos. These challenges emphasize the necessity of restoring and enhancing the captured videos to ensure their reliability and clinical utility.

To address the above issues, the utilization of video restoration and enhancement techniques emerges as a pivotal strategy. Previous approaches employ deep neural networks to restore the video frames automatically.23–25 Though straightforward, they encounter challenges in accurately identifying and restoring significant tooth regions amidst complex backgrounds.26,27 These automatic methods28,29 have not exhibited sufficiently accurate and robust results for clinical use, primarily due to the inherent complexities in intraoral scenarios. Consequently, expert intervention is frequently required for post-hoc correction. In practical applications, the absence of human interaction makes it unfeasible to reliably control the results of video restoration. In addition, they need to solve multi-task optimization problems, which hampers their effectiveness in real-time applications within digital dental workflows.

The aim of this study is to investigate the reliable restoration and enhancement of intraoral digital videos through an interactive and real-time system. The proposed system integrates interactive segmentation as a preliminary step, leveraging the advancements of the recent segment anything model (SAM).30 SAM, as the pioneering foundational model for general image segmentation, demonstrates robust capabilities in generating accurate object masks through interactive prompts provided by users (e.g. bounding boxes and click points). The entire system comprises three modules: an interactive segmentation module utilizing SAM, an intraframe restoration module, and an interframe enhancement module. This system can achieve real-time restoration of specific regions through interactive prompts from users (i.e. doctor-in-the-loop31,32). Furthermore, to attain additional video enhancement, we delve into the potential of artificial intelligence generated content (AIGC)33–35 for clinical applications in the interframe enhancement module. A series of video super-resolution36,37 and video interpolation38,39 approaches are further employed.1 Finally, both video frame restoration quality and real-time performance are evaluated. The proposed system is flexible and can seamlessly integrate more advanced SAM and AIGC approaches in future iterations.2 Extensive experiments demonstrate that it achieves reliable performance on intraoral video restoration. The project page has been released to further support and promote the research efforts.40

Methods

Overview

The proposed system takes low-quality videos captured by an intraoral camera as input. The system then performs interactive video restoration guided by user prompts. This system is evaluated using the vident-lab dataset,41 which provides paired low-quality and high-quality videos depicting the same dental scene. To validate the design of our framework, ablation studies are conducted on each sub-module. We compared this system with previous approaches in various aspects, including quantitative analyses of restoration, segmentation, and processing speed, as well as a qualitative evaluation of clinical applicability. More details are given in the following sections.

Data acquisition

To obtain the paired low-quality and high-quality intraoral videos for training, a micro-camera and a high-definition camera with larger sensors and optics are tightly coupled through a

Framework of interactive restoration system (IARS)

The proposed framework contains an interactive segmentation module using SAM, an intraframe restoration module, and an interframe enhancement module. Figure 1 illustrates the whole system. The input comprises low-quality videos of the vident-lab dataset for training, while the corresponding high-quality videos are regarded as the ground truth. Figure 2 shows the system design and architecture.

Workflow of the proposed system. The system takes intraoral video frames as input and produces the enhanced videos. Following interactive segmentation and intraframe restoration, “Human Improvement” is executed iteratively on intraoral videos through prompts (doctor-in-the-loop) until “Expert Evaluation” attains high quality.

System design and architecture. (a) The architecture of the interactive segmentation module. (b) The architecture of intraframe restoration module. “Fusion” denotes the concatenation between region-level and entire frame features from intermediate layers. “Conv” means the convolutional layer. (c) The architecture of the interframe enhancement module. The input is restored video frames with low frame rate (

Interactive segmentation module

Firstly, the low-quality video is divided into multiple frame images with a resolution of

Intraframe restoration module

Secondly, the intraframe restoration module contains two branches of restoration networks. One focuses on restoring the region-level images, and the other is dedicated to restoring the entire frame. The two networks share the same architecture but possess independent model weights. 25 The region images and the entire frame are fed into the region-level restoration network and the entire frame restoration network, respectively. Smooth L1 loss is employed to optimize the minimization problems arising from the disparity between low-quality and high-quality frame images. 42 In addition, a fusion strategy using channel concatenation is also employed to combine the region features and entire frame features extracted from the intermediate layers. In this way, the framework is able to focus on the restoration details based on user prompts. With a focus on clinical usability, this interactive process facilitates continuous improvement through iterative input prompts, thereby enhancing the overall performance and reliability of the system.
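The two ingredients named above, the smooth L1 loss and fusion by channel concatenation, can be illustrated with a minimal NumPy sketch. It is not the authors' implementation: the function names, the `beta` threshold of 1.0, and the `(C, H, W)` feature layout are assumptions made for illustration only.

```python
import numpy as np


def smooth_l1(pred, target, beta=1.0):
    """Smooth L1 loss averaged over all elements.

    beta is the switch point between the quadratic and linear regimes;
    1.0 is an assumed default, not a value reported in the paper.
    """
    diff = np.abs(np.asarray(pred, dtype=np.float64) - np.asarray(target, dtype=np.float64))
    quadratic = 0.5 * diff ** 2 / beta   # used where the error is small
    linear = diff - 0.5 * beta           # used where the error is large
    return float(np.mean(np.where(diff < beta, quadratic, linear)))


def fuse_features(region_feat, frame_feat):
    """Concatenate region-level and entire-frame features along channels.

    Both inputs are assumed to be (C, H, W) arrays with matching H and W.
    """
    if region_feat.shape[1:] != frame_feat.shape[1:]:
        raise ValueError("spatial sizes must match before concatenation")
    return np.concatenate([region_feat, frame_feat], axis=0)
```

Concatenation keeps both feature sets intact and lets the following convolutional layer learn how to weight them, which is one plausible reason to prefer it over element-wise addition.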

Interframe enhancement module

Thirdly, given the above-restored video frames, the interframe enhancement module is used to enhance the videos, including video super-resolution36,37 and video interpolation38,39 operations. Both of these techniques serve as a significant part of AIGC for videos. Specifically, video super-resolution aims at recovering a high-resolution video from the corresponding low-resolution counterpart, while video interpolation increases the frame rate by generating intermediate frames between consecutive input frames. Here, this study investigates the potential of AIGC on intraoral videos using MMagic toolbox. 43 The MMagic toolbox is an open-source AIGC tool equipped with multiple powerful models for image/video processing, editing, and generation. The application of this tool is straightforward and flexible, as it does not require additional annotations for training.
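To make the idea of video interpolation concrete, the sketch below doubles the frame rate by inserting one blended frame between each pair of consecutive frames. Linear blending is a deliberately naive stand-in chosen for illustration; it is not one of the learned interpolation models in MMagic and not the method used in this study. The function name and the `(T, H, W, C)` array layout are assumptions.

```python
import numpy as np


def double_frame_rate(frames):
    """Insert one blended frame between each pair of consecutive frames.

    frames: array of shape (T, H, W, C). Returns shape (2*T - 1, H, W, C).
    Linear blending is a naive stand-in for learned interpolation models.
    """
    frames = np.asarray(frames, dtype=np.float32)
    if frames.shape[0] < 2:
        return frames.copy()  # nothing to interpolate
    mids = 0.5 * (frames[:-1] + frames[1:])  # midpoint of each neighbouring pair
    out = np.empty((2 * frames.shape[0] - 1,) + frames.shape[1:], dtype=np.float32)
    out[0::2] = frames  # original frames keep the even positions
    out[1::2] = mids    # blended frames fill the odd positions
    return out
```

Simple blending produces ghosting when the camera or teeth move between frames, which is the motivation for motion-aware learned models in practice.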

Finally, through the above process, the low-quality intraoral video is restored and enhanced, resulting in a clear reference for clinical purposes.

Ablation studies

Ablation studies are conducted on each sub-module to illustrate the superior design of our framework. Firstly, we compared the impact of different numbers of click points on the effect of segmentation. Then, the segmentation prior (“

Quantitative evaluation of restoration, segmentation, and processing speed

Following the experimental protocol of existing methods,23 peak signal-to-noise ratio (PSNR) and structural similarity index measure (SSIM) are adopted to evaluate the video frame restoration quality. PSNR is defined based on the mean squared error (MSE). Given an image

Comparison with methods on restoration, segmentation, and processing speed.

MU: multi-input multi-output U-net48; EN: efficient spatio-temporal recurrent neural network24; DLab: DeepLabv3+52; UN: UNet++47; MN: MOST-Net23. “Auto-Res.,” “Auto-Seg.,” and “Interact-Res.” denote automatic restoration, automatic segmentation, and interactive restoration, respectively. Three click points were used here. “±” denotes the range of results across the repeated experiments. PSNR: peak signal-to-noise ratio; SSIM: structural similarity index measure; mIoU: mean intersection-over-union; FPS: frames per second.
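For readers who want to reproduce two of the metrics named in the table notes, the following sketch computes PSNR from the MSE and a mean intersection-over-union over binary masks. It uses the standard textbook definitions; the peak value of 255 (8-bit frames), averaging IoU over frames rather than over classes, and the function names are assumptions, since the exact evaluation script is not given in the text.

```python
import numpy as np


def psnr(restored, reference, max_value=255.0):
    """PSNR computed from the mean squared error between two frames.

    max_value=255.0 assumes 8-bit images.
    """
    diff = restored.astype(np.float64) - reference.astype(np.float64)
    mse = np.mean(diff ** 2)
    if mse == 0:
        return float("inf")  # identical frames
    return float(10.0 * np.log10(max_value ** 2 / mse))


def mean_iou(pred_masks, true_masks):
    """Average intersection-over-union over pairs of binary masks."""
    ious = []
    for pred, true in zip(pred_masks, true_masks):
        pred, true = pred.astype(bool), true.astype(bool)
        union = np.logical_or(pred, true).sum()
        inter = np.logical_and(pred, true).sum()
        # Two empty masks agree perfectly, so count them as IoU = 1.
        ious.append(1.0 if union == 0 else inter / union)
    return float(np.mean(ious))
```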

Visualization and qualitative evaluation of clinical applicability

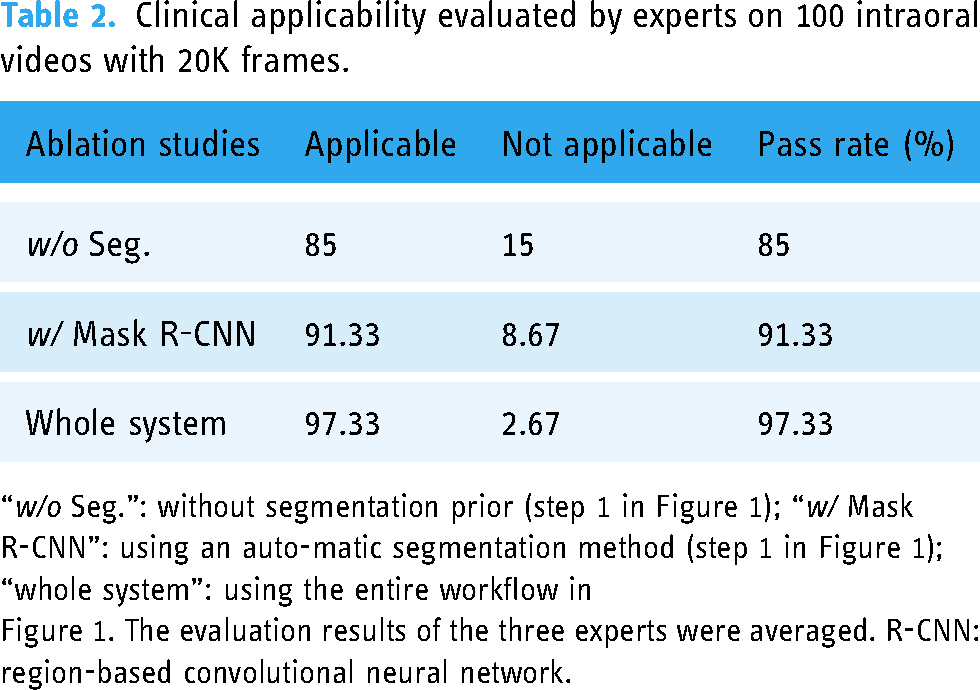

The qualitative visualization is presented in Figure 3. Three human experts (XC, XL, and YH) who have more than 10 years of clinical experience are blinded. They independently evaluate the clinical applicability of videos processed by the different systems, including the system without segmentation prior (“

Segmentation results from comparison among different prompts and ground truth. “3 or 5 points” denotes the number of the point prompt. Please zoom in for best view.

Clinical applicability evaluated by experts on 100 intraoral videos with 20K frames.

“

Figure 1. The evaluation results of the three experts were averaged. R-CNN: region-based convolutional neural network.

Results

Experimental setup

For the interactive segmentation module, the intraoral frame segmentation is evaluated with three settings:

Interactive segmentation module achieves promising performance on segmentation of intraoral digital videos

Figure 3 presents the segmentation results of the proposed framework for various scenarios. The visualization includes the segmentation results of two interactive prompts (click points and boxes) and a fully automatic method. It demonstrates that the interactive segmentation module based on SAM, whether employed in an interactive or automatic manner, achieves promising performance in segmentation tasks.

Segmentation prior improves the restoration effect

Figure 4 presents the restoration results and corresponding visual explanations using the class activation map from gradient-weighted class activation mapping.50,51 Thanks to the utilization of the segmentation prior, the proposed system is capable of focusing on the tooth region, resulting in improved restoration output. More randomly chosen visualizations are included on the project page. 40

Visualization of automatic restoration (“

Ablation studies verify the rationality of IARS design

Figure 5(a) demonstrates that the segmentation effect improves as the number of click points increases. In Figure 5(b) and (c), omitting the segmentation prior (“

Ablation studies on interactive segmentation module and intraframe restoration module. (a) Different number of click points. (b) and (c)

IARS shows significant advantages in restoration, segmentation, and processing speed

A comprehensive quantitative analysis is conducted to evaluate the video restoration quality, segmentation accuracy, and real-time processing speed. First, the proposed framework with different prompts is compared with the single-task approaches of restoration and segmentation, respectively. The framework outperforms the single-task baselines significantly in both aspects. In Table 1, the results revealed that IARS with the box prompt (#9) achieved the highest performance with a mIoU of

IARS shows certain clinical applicability

The clinical applicability evaluation is detailed in Table 2. For the system without segmentation prior (“

Discussion

In this study, we proposed a real-time IARS for intraoral videos, consisting of an interactive segmentation module, an intraframe restoration module, and an interframe enhancement module. Our study demonstrated that IARS outperforms previous methods in both segmentation and restoration while remaining efficient. Moreover, blind evaluation by experienced human experts indicated that the restored videos have a degree of clinical applicability. The key insights are two-fold. Firstly, we introduce interactive segmentation as the initial step, which enables the precise identification of tooth and background (oral mucosa, gingiva, etc.) regions. Secondly, the system employs a powerful foundation model, SAM,30 which needs only the user’s prompts (click points or bounding boxes) to correct the segmentation results without additional multi-task optimization, thereby ensuring real-time processing. The segmentation results are then passed to the restoration module, where they guide the accurate restoration of tooth regions.

Challenges such as low-light conditions and blur in the videos may complicate the photographic diagnosis process for dentists, thereby impeding its clinical application.6,22,23,53 To address the issue of low-quality intraoral videos, IARS incorporates interactive segmentation prior, which enables users to intervene in the process. Effective segmentation enables the extraction of both foreground (tooth-level) and background (oral mucosa, gingiva, etc.) regions with accuracy. Previous studies23–25 have mainly focused on automatic restoration techniques with the multi-task learning network and demonstrated their effectiveness. However, these studies have not sufficiently showcased accurate restoration for tooth regions due to the complex intraoral environment. There is a lack of explicit complementarity and guidance between these independent tasks. In contrast, the proposed system introduces interactive segmentation as the initial step, which enables the precise identification of tooth and background regions and instructs the following restoration.

To the best of our knowledge, this study represents the first attempt at interactive restoration of intraoral videos. Our results support that the segmentation prior improves video restoration, both when compared with single-task restoration approaches and when compared with the system with the segmentation module omitted. In addition, interactive segmentation performs better than the automatic methods. In this system, the user plays a crucial role in controlling the regions of video restoration and correcting any errors (i.e. doctor-in-the-loop31,32). In short, the segmentation provides valuable guidance for video restoration (Figure 4). Improved segmentation performance directly correlates with greater gains in video restoration.

Another contribution of this study is the exploration of SAM,34,36–39 a recent deep learning-based large model. Previous models44,46 are often tailored to specific domains and targets, and their generalization ability is limited. As the first promptable foundation model for image segmentation, SAM is trained using an extensive dataset that encompasses an unprecedented number of images and annotations. This results in a considerable potential for SAM to successfully perform object segmentation even for object types that it has not seen, that is, strong zero-shot generalization. Extensive demonstrations have showcased the successful segmentation capabilities of SAM across diverse scenarios. There have been some studies demonstrating the application of this model and its variants in the domain of medical images. Different from automatic segmentation models, SAM can generate accurate segmentation results conditioned by the user prompts. These prompts can be provided in the form of a set of click points or a bounding box, which simply specifies what to segment in an image. Specifically, SAM utilizes a vision transformer-based image encoder to compute an image embedding, while employing a prompt encoder to embed prompts and integrate user interactions. Subsequently, the extracted information from both encoders is fed into a lightweight mask decoder to generate segmentation results. As reported, the prompt encoder and mask decoder predict segmentation results from a prompt within

In this study, the potential of SAM in intraoral video segmentation and restoration is investigated through a series of experiments divided into three parts. Initially, SAM is evaluated in the auto-prompt mode, revealing that its zero-shot generalization falls short of competing with baseline models. Then, the box-prompt mode and point-prompt mode are examined to assess their performance on segmentation. The extensive experiments demonstrate that the box-prompt mode achieves a higher mIoU than other prompt modes (Table 1). For point-prompt mode, various settings involving different numbers of points are evaluated (Figure 5). As the number of prompt points increases, performance gradually improves relative to configurations with fewer points. We use SAM for interactive segmentation to guide the precise restoration of intraoral videos without additional multi-task optimization, ensuring the process is real-time.

Furthermore, distinct from previous approaches, this system is flexible to address the task by organizing cooperation among models, without being constrained by any elaborate module or rigid framework. More noteworthy, the design of this system (Figure 1) enables the pipeline to leverage a diverse range of powerful AIGC techniques (i.e. video super-resolution36,37 and video interpolation38,39). Our study has shown that the enhanced digital videos have certain clinical applicability.

In fact, IARS is not limited to intraoral videos captured by IOC; it is also suitable for images and videos shot with any tool that need to be restored due to factors such as lighting and jitter. In this investigation, we have evaluated segmentation, restoration, and processing speed for the proposed system and previous methods. This evaluation only assesses the effect of the first two modules (Figure 2(a) and (b)) on the frame images divided from videos. The results therefore indicate that the combination of the interactive segmentation module and the intraframe restoration module has strong restoration capabilities for images. Given the flexibility of the system, we may be able to build a more powerful restoration system with better performance for intraoral digital photography in the future.

IARS is mainly aimed at the restoration of intraoral digital videos, with a certain potential to restore images. One of our ideal clinical applications is to integrate it into an IOC. The first IOC emerged in the late 1980s. Subsequently, iterative refinement and enhancement by multiple manufacturers culminated in sophisticated high-end IOC.21 Presently, the IOC is a small handheld device that is ergonomic, lightweight, comfortable to use, and relatively inexpensive, and it captures images and videos that are readily available to the patient and the clinician and can be magnified and viewed. Some studies54 have highlighted the utility of oral endoscopy as a valuable adjunct, aiding physicians in recording treatment progress, archiving images, and facilitating post-treatment follow-up. While shooting, patients can engage in real-time visualization of their oral status on a screen, fostering effective doctor-patient communication. One of the advantages of IOC is that it can easily reach deep inside the mouth to shoot specific areas. But its shortcomings are also obvious. It is easily affected by various factors in the oral cavity, especially poor lighting and possible shaking when held by hand, which may reduce the quality of the pictures and videos it captures. If we can integrate IARS into the clinical application of IOC, it may be possible to improve the quality of the pictures and videos output by IOC under the doctor’s real-time interactive prompts, optimizing its clinical application effect and expanding its clinical practicability. Integrating it into a smartphone camera application would be another promising direction.

On the whole, this research has preliminarily shown the following: firstly, the large foundation model SAM can realize real-time interactive segmentation of intraoral digital videos; secondly, the interactive segmentation prior helps guide a more precise restoration process; thirdly, the proposed system is expected to be integrated into digital dental applications, contributing to the prevention, diagnosis, treatment, and even remote treatment of oral diseases.

This study nevertheless has some limitations. Firstly, the limitations of the dataset should be acknowledged. The dataset has a limited number of videos. The overall quality of the videos is poor, which may affect the segmentation and restoration of the system. Also, the videos are shot from a single angle, which may not fully simulate the real scenario or test the ability of the system to optimize videos from other angles. Secondly, several studies55 have shown that SAM may fail in some challenging scenarios, especially when the targets have weak boundaries. This is not surprising since SAM is mainly trained on natural image datasets where the objects usually have strong edge information.

In the future, the next step is to collect more representative datasets for training and testing the system to further optimize its performance. Moreover, considering that photographs are the main form of clinical monitoring for doctors, we will also do further optimization to enhance its function of image restoration. Meanwhile, given that IOC and smartphones are complementary to the use of SLR and DOM in certain conditions, the system may be integrated into an IOC program or smartphone camera application in the future for doctors and patients.

Conclusion

This study presents a real-time, interactive, and clinically applicable system named IARS for the restoration of intraoral digital videos. IARS significantly outperforms previous methods. It can be seamlessly integrated into real-world clinical intraoral cameras, benefiting both patients and dentists. In this investigation, we emphasize the necessity of the interactive segmentation prior in restoration. Furthermore, this study explores the potential of utilizing the deep learning-based large foundation model SAM in digital dentistry.

Supplemental Material

Supplemental material, sj-docx-1-dhj-10.1177_20552076241269536 for A real-time interactive restoration system for intraoral digital videos using segment anything model by Yongjia Wu, Li Zeng, Yaya Hong, Xiaojun Li and Xuepeng Chen in DIGITAL HEALTH

Footnotes

Acknowledgements

Not applicable.

Contributorship

The project was conceptualized by Yongjia Wu, Xiaojun Li, and Xuepeng Chen. Yongjia Wu, Li Zeng, and Yaya Hong designed the study. Yongjia Wu acquired and analyzed the data, and wrote the original manuscript. Yongjia Wu, Li Zeng, and Yaya Hong prepared figures and tables. Li Zeng, Yaya Hong, Xiaojun Li, and Xuepeng Chen revised the manuscript. All authors reviewed and edited the manuscript and approved the final version of the manuscript.

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Ethical approval

Not applicable.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This research was funded by National Natural Science Foundation of China, grant number 81400511; Key R&D Program of Zhejiang, grant numbers 2022C03088, 2023C03072; Zhejiang Provincial Natural Science Foundation of China, grant number LY18H140001; R&D Program of the Stomatology Hospital of Zhejiang University School of Medicine, grant numbers RD2022DLYB03, RD2022JCEL04.

Guarantor

XC and XL.

Supplemental material

Supplemental material for this article is available online.

Notes

References
