Abstract

Background

It has been estimated that more than one-third of university students suffer from insomnia. Few accessible eHealth sleep education programmes exist for university students, and of those that do, even fewer were developed using a user-centred approach, in which student input is systematically collected and used to produce a programme that students consider easy to use, easy to implement and effective.

Aims

The purpose of this study is to evaluate the usability of the

Methods

Canadian undergraduate students (

Results

Average quantitative ratings were positive across user experience dimensions, ranging from 3.43 to 4.46 (out of 5). Qualitative responses indicated overall positive experiences with the programme. The only constructive feedback that met the criteria for revising the programme was to include more interactive features in Session 4.

Conclusions

This study demonstrates that university students found

Introduction

Many university students suffer from poor sleep health. An American multi-university study of over 7000 students found that 64% met criteria for poor sleep. 1 The National Sleep Foundation recommends that young adults get 7 to 9 hours of sleep to function at their best 2 ; however, many university students are not meeting this recommendation. Specifically, among university students, prevalence rates for insomnia range from 9.4% to 38.2%. Insomnia is defined as chronic and specific difficulty falling asleep, staying asleep and/or early morning awakenings, despite adequate opportunity for sleep, that is associated with distress or impairment in functioning.3,4

Many factors contribute to insomnia (defined henceforth as meeting either the full symptom range or partial symptoms) in university students. During adolescence, there is a biological shift in circadian preference towards later sleep and wake times. Bedtimes and wake times become progressively later, reaching a maximum at around 20 years of age. 5 Furthermore, many psychosocial factors have been found to interfere with sleep, including waking up at night due to the noise of other students when living in shared accommodations, bedtimes and wake times that frequently differ by more than 1 to 2 hours between weekends and weekdays, and socializing late in the evening.6,7 In addition, many studies have demonstrated the negative impact of Internet and social media use at bedtime on sleep outcomes.8,9

Insomnia can negatively impact functioning across many aspects of daily living. Insomnia is associated with mental health consequences such as anxiety and depressive symptoms.10–12 Furthermore, chronic insomnia (insomnia lasting at least three months) has been demonstrated to be associated with increased stress, fatigue, lower quality of life and higher rates of substance use for sleep problems. 12 Given the wide-ranging negative consequences of insomnia, accessible interventions targeting sleep and healthy sleep practices are of paramount importance. Within the broad spectrum of sleep interventions, sleep education interventions (e.g. in-person lectures, educational sessions on healthy sleep practices) are effective in improving sleep outcomes.13,14 Despite there being several effective university sleep education interventions, the majority are not easily accessible and frequently require in-person sessions that can be difficult to accommodate in students’ schedules. One way to bridge the accessibility gap is to use eHealth (i.e. technology applied using the internet, in which healthcare services are provided to improve quality of life outcomes and facilitate healthcare delivery). 15 Some of the potential benefits of eHealth delivery methods include being able to reach a wider number of individuals who require services, scalability of the programme, affordability of services and flexibility for the individual. 16 To this end, there has been an increasing focus on developing digital interventions to address sleep problems, as this would enhance accessibility.

Several reviews have evaluated the use and usability of eHealth apps focused on sleep across the lifespan. A scoping review by Bullock et al. (2022) of mobile phone applications for shift workers found that only two papers met inclusion criteria, suggesting a dearth of studies on sleep management apps for this population. 17 A literature review by Rowan et al. (2024) evaluated the evidence for the efficacy and quality of CBT-I sleep apps for ages 12 and older. The authors found that the most commonly used components were sleep hygiene and relaxation/meditation, and that the strongest evidence for success was for stimulus control and sleep restriction. Efficacy was clearest in studies that used self-report measures of sleep, with more mixed results for sleep efficiency derived from objective measures of sleep and sleep diaries. 18 In a review of sleep apps by Nuo et al. (2023), the largest proportion of apps focused on sleep tracking, followed by apps that intervene with sleep and apps that diagnose sleep issues. The majority of apps focused on breathing-related sleep disorders and insomnia. The review also indicated that although many sleep apps have been developed, most of the newer apps are used in laboratory studies rather than in the real world. The authors suggest that this may be attributable to app usability, as apps with high usability are more likely to be used and recommended to others. 19

There have been several empirical studies evaluating eHealth sleep interventions for adolescents and young adults. Consistently, these studies have found the programmes to be highly acceptable20–23 and effective at improving sleep duration, sleep difficulties and sleep knowledge. 24 For example, a study on the feasibility and acceptability of the DOZE eHealth programme for improving sleep in youth ages 15–24 years found the programme to be highly acceptable in areas including ease of use, understandability, time commitment and overall satisfaction. Participants also rated the programme as credible. 23 An experimental study by Chu et al. (2018) evaluating a mobile sleep-management learning system for university students found that the programme was easy to use and useful for students, and that the number of students with insomnia decreased. They also found significant improvements in morning lingering in bed, total wake time, sleep efficiency, total sleep time and self-reported insomnia severity, as well as in anxiety, depression and energy. 25

Several eHealth sleep interventions for university students have been developed and studied.25–28 However, few studies have demonstrated improvements in sleep outcomes.25,28,29 Furthermore, of the limited literature that exists, very few studies reported incorporating end-users in programme development.30–32 User-centred designs incorporate user activities and user feedback throughout the development process as guidance toward the needs of end-users.33,34 Research has demonstrated that involving end-users can reduce development time and increase user acceptance.35,36 Understanding user acceptance is critical, as it has been demonstrated that a quarter of all downloaded online applications are used only once.37,38 This underscores the importance of creating programmes that maintain user engagement. It has also been demonstrated that an iterative process, in which usability is tested early in development, can identify requirements that are important to end-users and that elicit behaviour change, as well as undesirable aspects of a programme that hinder its adoption. 39 Furthermore, it has been recommended that eHealth or telehealth programmes involve end-users in development in order to produce programmes that are better tailored to user needs. 40 In addition, in a survey of practitioners who had used user-centred design methods for at least three years, the majority reported that these methods significantly improved the usefulness (79%) and usability (82%) of products developed in their organizations. 41 This improved usability and usefulness may ultimately lead to higher rates of programme retention.

Considering what is known about the benefits of user-centred designs, it is surprising that very few eHealth sleep education intervention studies for university students have reported on end-user inclusion in the development and evaluation process.30–32,42 One study that evaluated the effectiveness of an email intervention reported using feedback from users only to guide future iterations, rather than the current iteration of the programme. 32 Furthermore, the researchers only asked participants about their perceptions of the effectiveness of the strategies to use, and what could be changed to improve upon them. Another eHealth study reported using focus groups to inform the development of a text-message intervention, allowing for refinement before testing its effectiveness; however, no information was reported on the results of the discussion. 32 Additionally, no individual tailoring was reported in this intervention, with students only receiving standardized text messages with sleep-related educational content. Results of effectiveness testing demonstrated no significant differences in sleep quality, sleep hygiene behaviours or sleep knowledge.

A third eHealth sleep education intervention was an integrated intervention for heavy alcohol use and sleep difficulties. 30 The intervention was an online programme that delivered evidence-based sleep content, behavioural advice, relaxation training and cognitive strategies to target maladaptive beliefs about sleep. Focus groups were used to guide development of the programme, with 24 college students giving their perceptions of sleep and alcohol/sleep interactions and their behavioural health treatment preferences. 30 Results demonstrated an overall positive reaction to having an integrated alcohol use/sleep intervention, and the majority wanted the content to be personalized to their unique health profile. Participants also requested easy navigation, a way to monitor progress, daily added content and the option for peer or clinician support. A second set of focus groups was held with a beta model of the programme, presented as a PowerPoint, to gain feedback; however, no results were reported. The study incorporated feedback by delivering new health information modules each week and giving a personalized summary of health characteristics from students’ initial questionnaire data (e.g. sleep quality, alcohol use); however, it did not tailor the recommendations in the programme to their sleep data. Results of pilot testing demonstrated effectiveness in improving sleep quality and sleep-related impairment ratings. 31

Despite the limited use of user-centred design in the development and evaluation of eHealth sleep interventions for university students, end-user engagement has often been employed in other areas of paediatric behavioural health. For example, King et al. 43 engaged children, parents and educators in their evaluation of an eHealth intervention for chronic pain, McManama O’Brien et al. 44 engaged adolescents and their parents when developing a smartphone app for suicidal adolescents and Nitsch et al. 45 engaged youth with body image or disordered eating symptoms to evaluate an online intervention. A range of methodologies was used, including think-aloud activities (i.e. sharing thoughts as one uses the programme), demonstration and discussion, as well as semi-structured interviews and surveys/questionnaires in which participants are asked to reflect on their experiences with the programme, resulting in both quantitative and qualitative data. The results of these studies indicated needed changes in terms of delivery (e.g. making the programme easier to navigate) and content (e.g. reducing content to main points).

Given the limited research on sleep interventions to be used with university students, there is a clear need for more accessible eHealth sleep education interventions that were developed utilizing a user-centred design. Our team previously developed and evaluated the usability of eHealth sleep education programme,

The

Dr. Corkum and her colleagues have also developed and evaluated a separate, unique eHealth programme for parents of children 4–12 with neurodevelopmental disorders.51–53 In a study that examined barriers and facilitators of using the programme using semi-structured interviews with parents (

The goal of the current study is to gain feedback from older adolescents (i.e. university students ages 18–24 years) to determine if the original intervention (

As such, the primary aim of the present study is to evaluate, using the User Experience Honeycomb framework, 43 the usability of

Materials and methods

Intervention

In the original

Better nights, better days programme content.

Participants

Participants were recruited through word of mouth from the researchers, posters placed around the Dalhousie University campus, and online methods including Facebook, Kijiji and Twitter. The target audience was university students across Canada who experience insomnia or symptoms of insomnia. Individuals were eligible if they were 18 to 24 years old, experienced symptoms of insomnia in the subthreshold range, attended a university, resided in Canada, had access to the Internet and e-mail, had no hearing or cognitive difficulties that they thought would interfere with participation and could understand and speak English. Participants were eligible to receive up to $60.00 in Amazon.ca gift cards for their participation in the study. Study recruitment spanned March 2019 to May 2020.

Procedure

Interested participants were directed to the online advertisement. Those who viewed the online advertisement clicked a link that directed them to the online Eligibility Questionnaire via the secure survey software, Opinio. Participants who met inclusion/exclusion criteria were then e-mailed an online Information and Consent form. Upon providing consent, participants were e-mailed a questionnaire to collect data on demographic information and sleep behaviours and practices. After these questionnaires were completed, participants were given access to the

Measures

Eligibility questionnaire

The questionnaire was created by the study authors for a previous study, 49 and asked whether participants were 18–24 years of age, had no hearing or cognitive deficits that would interfere with participation, had access to the internet and an e-mail account and could understand and speak English.

Insomnia severity index (ISI)

The ISI questionnaire is a measure used to detect insomnia. The ISI includes 7 items rated on a 5-point Likert scale (0 = no problem; 4 = very severe problem). 55 Scores on the ISI range from 0 to 28, with 8 to 14 considered to be subthreshold insomnia symptoms, 15 to 21 moderate symptoms and 22 to 28 severe symptoms. The ISI demonstrates a high internal consistency in adults (

Demographic questionnaire

The questionnaire was created by the study authors and included questions regarding the participant's age, sex, university attended, whether an international student, location, living arrangement (i.e. living at home with family, in residence, alone in the community, with housemates in the community), ethnic/cultural heritage, highest level of educational attainment and employment status.

Sleep hygiene index (SHI)

The SHI is a 13-item questionnaire that asks about current sleep behaviours and practices. 57 The SHI requires participants to report how frequently they engage in certain poor sleep practices, such as taking naps, exercising before going to bed, worrying in bed and caffeine and alcohol intake, on a 5-point scale from ‘Never’ to ‘Always’. The scores are then summed to produce a global sleep hygiene score ranging from 13 to 65, in which higher scores indicate poorer sleep hygiene. The SHI has demonstrated moderate internal consistency (

Usability questionnaires

The Session Feedback Questionnaire contained 33 items used to assess usability for each of four

Data analysis

Descriptive statistics were used to analyse the data from the demographic questionnaire, including frequency counts, means, standard deviations and percentages. SHI and ISI item scores were summed for each participant and the resulting totals were then averaged across participants.

Closed-ended questions (i.e. quantitative data) were analysed using descriptive statistics, including means, standard deviations and ranges, for each of the Session Feedback Questionnaires and the Programme Feedback Questionnaire. Each usability dimension included two closed-ended questions, which were averaged for each participant to obtain a score for that dimension (see Supplementary Materials for the questions from which each dimension was derived).
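The scoring step described above can be sketched as follows. This is a minimal illustration only: the participant IDs, dimension keys and ratings are hypothetical and are not taken from the study data, and the use of the sample standard deviation is an assumption.

```python
from statistics import mean, stdev

# Hypothetical 1-5 Likert ratings: two closed-ended items per dimension.
# Participant IDs, dimension names and values are illustrative only.
responses = {
    "P01": {"useful": (4, 5), "usable": (5, 4)},
    "P02": {"useful": (3, 4), "usable": (4, 4)},
    "P03": {"useful": (5, 5), "usable": (3, 4)},
}


def dimension_scores(responses):
    """Average each participant's two items into one score per dimension."""
    return {
        pid: {dim: mean(items) for dim, items in dims.items()}
        for pid, dims in responses.items()
    }


def summarize(scores, dimension):
    """Mean, sample standard deviation and range across participants."""
    values = [per_dim[dimension] for per_dim in scores.values()]
    return {
        "mean": mean(values),
        "sd": stdev(values),  # assumption: sample (n - 1) standard deviation
        "range": (min(values), max(values)),
    }


scores = dimension_scores(responses)
summary = summarize(scores, "useful")
```

Averaging within participants first, and only then across participants, keeps each participant's contribution to a dimension equal regardless of how the two items were answered.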

Open-ended questions (i.e. qualitative information) were analysed and coded using directed content analysis, which allows coding to be carried out within an existing framework. Following this, a second round of coding was completed to identify themes within each of the user experience categories. Two coders independently coded the comments to the User Experience Honeycomb categories; one coder then identified themes within these categories, and the second coder independently coded the comments according to those themes. Inter-rater reliability was calculated using Cohen's kappa (κ = 0.93) and disagreements were discussed until consensus was obtained. Finally, within each dimension, all suggestions and constructive feedback were tallied based on the number of participants who endorsed the suggestion for each session questionnaire and the programme questionnaire. Comments within each questionnaire that countered these suggestions were also tallied, and a percentage in support of change was calculated. As in prior usability studies, a minimum criterion of 10% in support of change was required to consider making the change. 47 For example, if there were 100 participants and 20 stated they disliked the visuals and 5 endorsed enjoying the visuals, a percentage in support of change of 15% would be reported, meeting the criterion of 10% required to consider making a change to the programme.
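The percentage in support of change can be expressed as a short calculation. The sketch below is an illustration of the rule as described in the text, not the authors' analysis code; the function and parameter names are hypothetical.

```python
def percent_in_support_of_change(n_total, n_suggesting, n_countering):
    """Net percentage of the sample supporting a change.

    n_suggesting: participants who made the suggestion or criticism.
    n_countering: participants whose comments countered it.
    """
    if n_total <= 0:
        raise ValueError("n_total must be positive")
    return 100.0 * (n_suggesting - n_countering) / n_total


def meets_criterion(percent, threshold=10.0):
    """True when the net support reaches the minimum criterion (10% here)."""
    return percent >= threshold


# Worked example from the text: 100 participants, 20 disliked the visuals,
# 5 endorsed enjoying them.
example = percent_in_support_of_change(100, 20, 5)
change_considered = meets_criterion(example)
```

With these inputs the net support is 15%, which reaches the 10% criterion, so the change would be considered.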

Results

Participants

Of 85 participants who consented to the study and completed the demographic questionnaire, 53 participants gained access to the programme and completed at least one questionnaire. Of these 53 participants, 46 completed all questionnaires and were included in the analysis (see Figure 1). Given this was a usability study, it was important that the feedback used in the study was from those who had experienced the programme in its entirety, to allow consideration of all aspects of the programme.

Participant flow diagram depicting the number of participants at each stage of study progression.

The 46 participants who completed all questionnaires had a mean age of 20.6 years (

Usability ratings

The following results are reported separately for the overall programme ratings and the session ratings, with overall programme results first, followed by session ratings.

Overall programme ratings

Quantitative analyses

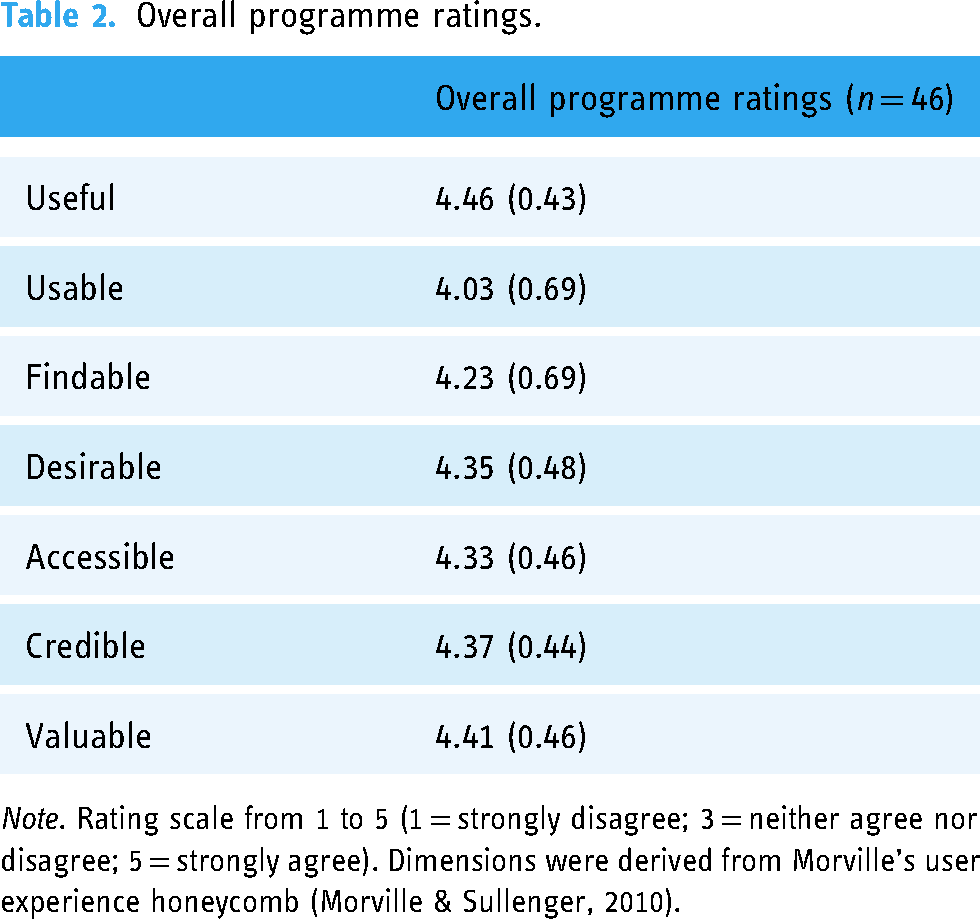

Mean usability ratings for the overall programme on Morville's user experience dimensions ranged from 4.03 to 4.46 on a scale of 1–5 (1 = strongly disagree, 3 = neither agree nor disagree, 5 = strongly agree). Of the seven mean ratings for the overall programme, none were identified as negative (score of 1 or 2) or neutral (score of 3). All mean ratings were identified as positive (4–5) (see Table 2).

Overall programme ratings.

Qualitative analyses

For the dimension of usefulness, participants provided only positive comments with 73.9% of participants (

For four dimensions, there were more positive comments than constructive comments. For findability, 65.2% (

Two dimensions had a balance of positive and constructive comments. For credibility, 47.8% of participants (

No dimension had more negative than positive feedback. The favourite components of the programme were techniques for improving their sleep (

Examples of qualitative feedback.

Session feedback ratings

Quantitative analyses

Of 28 mean usability ratings across four sessions and seven usability dimensions, no mean ratings were negative (score of 1 or 2). Neutral ratings (score of 3) were identified only for Session 4 on the user experience dimensions of useful (3.78) and desirable (3.43). The 26 remaining ratings were identified as positive (4–5), ranging from 4.0 to 4.46 (see Table 4).

Session feedback ratings.

Qualitative analyses

As quantitative ratings only differed for Session 4 on usefulness and desirability, qualitative feedback is described for just those dimensions. For the usefulness dimension of Session 4, participants endorsed both positive and constructive feedback. 41.3% of participants (

Percentage in support of change

In addition to analysing the data for themes, a percentage in support of change was calculated for suggestions or criticisms within each session questionnaire and for the programme questionnaire. Only one suggestion met the 10% criterion for considering making a change to the programme. In Session 4, 26 participants (56.5% of the total sample) reported that there were no appealing features such as videos, pictures, or interactive components in the session and only 9 participants (19.6% of the sample) countered with comments that suggested that they thought the session was visually appealing. This led to a percentage in support of change of 36.9%.
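As a worked check of the arithmetic, assuming the analysed sample of 46 participants, the reported figure corresponds to the difference between the two rounded percentages:

```latex
\[
56.5\% - 19.6\% = 36.9\%
\qquad\text{and, from the raw counts,}\qquad
\frac{26 - 9}{46} = \frac{17}{46} \approx 0.3696 \approx 37.0\%.
\]
```

Either way of rounding gives a value well above the 10% criterion.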

Additional qualitative feedback

Other qualitative feedback was provided on favourite and least favourite features of the sessions, with most responses indicating features as the interactive images, quizzes and videos (

Discussion

The current study presents evidence that the

The qualitative data collected to evaluate the programme overall consisted mostly of positive comments in the areas of usefulness, value, findability, accessibility and desirability. In terms of the usability of the programme, the majority suggested that the programme was user-friendly. There were mixed reviews on length, with some participants suggesting that the length was reasonable and others that the programme was too long; however, this constructive feedback did not meet the 10% criterion to consider changing the length of the programme. Similarly, the credibility of the programme had mixed reviews, with participants’ constructive comments suggesting that the programme could use more article citations, though this feedback also did not reach the criterion for considering change. Moving forward, it may be worthwhile to incorporate more citations to promote credibility and to remind the user of why the programme is the length it is (e.g. the importance of the information included in the programme).

Qualitative feedback for the programme sessions was mixed on the dimensions of usefulness and desirability for Session 4. While some participants provided positive feedback suggesting that the session was useful for managing sleep in special situations (e.g. sleeping when on vacation), other participants suggested that the session was just a summary of previous learnings. Although the bulk of Session 4 is a summary of previous sessions, the session also encourages participants to reflect on their goals and progress since starting the programme and provides new information on roadblocks that can arise when trying to obtain adequate sleep (e.g. what to do on vacation or during daylight saving time changes). Furthermore, Session 4 differed on desirability. As Session 4 was mainly a summary, it has no extra features (e.g. videos, interactive activities) and feedback reflected this, with most constructive feedback suggesting that there was not enough relevant information in the session for it to be desirable, as well as participants noting that they wanted more features. Of these two themes, only the comments on the lack of features in the session met the criterion of 10% to consider making a change to the session.

Overall, users experienced

The current results were based on the seven dimensions of the User Experience Honeycomb framework (usability, usefulness, value, accessibility, credibility, findability and desirability). Other studies have discussed additional theoretical underpinnings of the intention to use mobile health services. One widely used framework, the technology acceptance model (TAM), 63 suggests two key factors that influence users’ attitudes and behavioural intentions for using mobile health services: perceived usefulness and perceived ease of use. These factors are encompassed by the usefulness and usability dimensions of the User Experience Honeycomb. Building on this model, Mouloudj et al. (2023) provided evidence for an extended TAM that incorporates the concepts of perceived self-efficacy, attitudes towards digital applications and trust in the digital health system. 64 While the User Experience Honeycomb does take into account trust (credibility), perceived self-efficacy and attitudes towards digital applications are additional factors that are not considered within the framework used and may be important to consider in the future. Additionally, the unified theory of acceptance and use of technology (UTAUT-2) model has been used to investigate intentions of using mobile healthcare apps in China. 65 This model suggests seven factors that influence the acceptance and use of technology: performance expectancy (perception of how much the system will help an individual attain gains), effort expectancy (ease of use), social influence (the degree to which an individual feels it is important for others to believe he/she should use the system), facilitating conditions (the degree to which an individual believes that organizational and technical infrastructure exists to support use of the system), perceived risk, price value and perceived trust of the system. The User Experience Honeycomb takes into account many of these factors, including performance expectancy (desirability, value), effort expectancy (usability), facilitating conditions (accessibility, findability) and perceived trust (credibility). In the future it may be prudent to include social influence, perceived risk and price value, to bolster the likelihood of use of the programme.

The results of this study were similar to those of prior BNBD-Youth studies. The prior usability study with younger adolescents demonstrated mostly positive quantitative ratings, with the exception of the dimensions of useful and desirable for Sessions 1, 2 and 4 and value for Session 2, which were all in the neutral range, whereas these were positive for university students. In terms of meeting the 10% criterion to consider making changes to the programme, both studies found that the criterion was met for the dimension of desirability, specifically for the suggestion to add more interactive features. However, in the study with younger adolescents there were additional suggestions, including having fewer paragraphs and improved visual design and, in the dimension of accessibility, increasing the compatibility of features and videos with varying media devices. These results indicate that most usability issues were addressed after the study with younger adolescents, but some remain to be addressed further.

In comparing

Similar to Fucito et al.,30,31 the

Much like other eHealth sleep programmes, the

In summary, this study found that the

Strengths and limitations

A strength of this study was the relatively large sample of 46 students, with usability studies suggesting appropriate sample sizes ranging from 5 to 20 participants to catch 95% of usability problems. 67 Additionally, this study utilized a diverse sample of students from universities across Canada, including international students. Moreover, this study was conceptualized using a user-centred design, capitalizing on end-users’ suggestions for what they wanted to see in the programme, what they enjoyed about the programme once developed and what aspects require modifications. Furthermore, this programme used a formal usability framework to evaluate usability perceptions, unlike the other programmes that incorporated user feedback into their designs.

A limitation of the study was that individuals self-selected to participate. As such, there is the possibility of selection bias, in which the sample collected may not reflect the overall population of interest, but rather the portion of the population that was interested in participating in the research study. Another limitation is that honoraria were given for completing the questionnaires and as such, there was a potential external motivation to complete the programme and perhaps provide favourable ratings. Furthermore, as online questionnaires were used to collect qualitative data, some participants did not provide rich feedback (e.g. only ‘it was useful’ or ‘I liked the programme’). Another limitation of this study is that only self-report measures were used to gather feedback. As such, it is possible that students provided inaccurate feedback. An additional limitation of this study is the large percentage of attrition. After participants were enrolled in the programme, 37% of participants did not complete the study requirements. Although this percentage is common in online higher education,68–70 there is a possibility that a proportion of these participants disliked the programme and thus did not continue with it, or did not wish to fulfil the demands of the study itself. Another limitation of the study is that session questionnaires were sent to participants at the beginning of each session (to allow participants to complete the questionnaire while going through the session). As such, there is the possibility that participants completed the questionnaire before completing the session and thus their responses may not reflect their true experiences using the programme. Another limitation of the study is the disproportionate number of students who described themselves as international students (43%). In the 2019/2020 school year, Statistics Canada reported that international students made up approximately 20.6% of enrolments in Canadian universities. 71 This suggests that the findings may not generalize completely to the Canadian university student population. It should also be noted that this programme has been tested only with students in universities and so the findings do not generalize to the whole young adult student population (e.g. students who attend community colleges, vocational programmes and other non-traditional educational programmes). An additional limitation of the

Future directions

After incorporating the suggested changes to the

Conclusions

Supplemental Material

sj-docx-1-dhj-10.1177_20552076241260480 - Supplemental material for Usability of an eHealth sleep education intervention for university students by Lindsay Rosenberg, Gabrielle Rigney, Anastasija Jemcov, Derek van Voorst and Penny Corkum in DIGITAL HEALTH

sj-docx-2-dhj-10.1177_20552076241260480 - Supplemental material for Usability of an eHealth sleep education intervention for university students by Lindsay Rosenberg, Gabrielle Rigney, Anastasija Jemcov, Derek van Voorst and Penny Corkum in DIGITAL HEALTH

sj-docx-3-dhj-10.1177_20552076241260480 - Supplemental material for Usability of an eHealth sleep education intervention for university students by Lindsay Rosenberg, Gabrielle Rigney, Anastasija Jemcov, Derek van Voorst and Penny Corkum in DIGITAL HEALTH

Footnotes

Acknowledgements

The authors wish to acknowledge Michelle Tougas and Joshua Mugford for their contributions to developing and evaluating the adolescent version of the

Contributorship

LR participated in the statistical analysis, writing, review and editing; GR participated in conceptualization, methodology, review and editing; AR participated in statistical analysis and editing; DV participated in project administration, statistical analysis and editing; PC participated in conceptualization, methodology, review and editing and supervision.

Declaration of conflicting interests

In the future, there is a possibility that the

Ethical approval

The IWK Health Centre Research Ethics Board approved this study.

Funding

The authors disclosed receipt of the following financial support for the research, authorship and/or publication of this article: from the IWK Health Centre and the Nova Scotia Health Research Foundation for the development of the

Guarantor

PC.

Supplemental material

Supplemental material for this article is available online.

References
