Abstract

Purpose

Artificial intelligence (AI) that imitates human language, such as ChatGPT, has affected many fields. However, despite these innovations, it remains unclear how well such tools will assist patients in clinical situations. We evaluated changes in patient perceptions of AI before and after reading a ChatGPT-written explanatory note.

Materials and methods

In total, 24 South Korean patients receiving urolithiasis treatment were surveyed through questionnaires. A ChatGPT-written explanatory note detailing lifestyle modifications for preventing urolithiasis recurrence was provided between the first and second questionnaires. The study questionnaire was the Korean version of the General Attitudes toward Artificial Intelligence Scale, which includes positive and negative attitude items. Wilcoxon signed-rank tests were performed to compare questionnaire scores before and after patients received the explanatory note. A linear regression analysis with stepwise elimination was used to assess how well demographic variables predicted the outcomes.

Results

Total negative-attitude scores differed significantly between the surveys taken before and after the ChatGPT explanatory note, whereas positive-attitude scores did not. Among the demographic variables, only education level significantly influenced the mean score difference on the negative items.

Conclusions

A negative change in perception was observed among urolithiasis patients after they received the explanatory note written by the AI chatbot program, and patients with lower education levels showed a more negative response. An explanatory note written by an AI chatbot program could therefore provoke an adverse change in AI perception. Negative human responses must be considered when improving and adopting new technology in health care. Only by improving patient perceptions can upgraded AI technology be integrated into health care.

Introduction

Artificial intelligence (AI) is a multidisciplinary field combining computer science and linguistics to develop machines that can perform tasks that generally require human intelligence. 1 Large language models (LLMs) are an AI breakthrough that enables machines to produce human-like language. They are based on deep learning techniques, such as neural networks, and are trained on extensive data from books, news articles, scientific journals, and other diverse sources. By learning patterns and relationships in the training data, LLMs can predict the words or phrases likely to appear next in a given context, which allows them to generate high-quality text with striking coherence and realism. This ability to understand and generate language makes LLMs useful for various natural language processing tasks, such as text classification, chatbots, and sentiment analysis. 2

The generative pre-trained transformer (GPT) is an LLM designed by OpenAI (San Francisco, CA, USA). In November 2022, OpenAI launched ChatGPT using GPT-3.5, a model with 175 billion parameters. 3 Recently, GPT-4 was released with support for image inputs and a reported model size of 170 trillion parameters. 3 The GPT architecture uses a neural network to process natural language and generate responses based on the context of the input. ChatGPT's advantage over its GPT-based predecessors lies in its ability to generate refined and highly sophisticated responses in multiple languages. In a preprint manuscript, ChatGPT successfully completed all three sections of the United States Medical Licensing Examination. 4 Despite these impressive results, it is unclear how well ChatGPT will assist patients in clinical situations. Therefore, this study aims to evaluate changes in patient perceptions of AI before and after receiving a ChatGPT-written explanatory note.

Patients and methods

Study design

Our institute's ethics committee approved this observational study. Patients completed the questionnaires themselves; the first questionnaire was issued before the explanatory note on lifestyle modifications for preventing urolithiasis recurrence, and subsequent questionnaires were provided after patients received the note. ChatGPT wrote the explanatory note (Table 1). The first question, posed in English, was “Please explain lifestyle modification to prevent urolithiasis recurrence.” Each ChatGPT answer was followed up with more detailed questions. All answers were translated into Korean with Google Translate. Our input consisted of printing the answers and compiling them into the explanatory note.

Table 1. Questions and ChatGPT responses.

Study population

Participants were patients receiving treatment for urolithiasis that had been confirmed by computed tomography. All patients underwent ureterorenoscopy for ureteral or renal stones from April 2023 onward. Patients older than 18 and younger than 80 years were eligible. Patients unable to understand the explanatory note or complete the questionnaires were excluded.

Data collection

The study questionnaire was the Korean version of the General Attitudes toward Artificial Intelligence Scale (GAAIS-K), 5 a validated adaptation of the original GAAIS. 6 After providing informed consent, patients first completed the GAAIS-K. Once they submitted it, they received the explanatory note on lifestyle modifications for preventing urolithiasis recurrence. The second questionnaire, which surveyed satisfaction with the ChatGPT explanatory note, was then distributed.

Statistical analysis

Wilcoxon signed-rank tests were used to compare questionnaire scores before and after patients received the explanatory note. The primary outcome was the difference in negative-item scores before and after reading the explanatory note. The sample size calculation was based on the mean difference in negative-item scores using a paired-sample analysis. The mean difference was 1.29, and the standard deviation was 2.09, corresponding to an effect size of 0.617. We accepted a two-sided α-error of 5% and a β-error of 20% when identifying significant differences. Based on these values, the post hoc power of this study was 0.825. A linear regression analysis with stepwise elimination was used to assess the roles of predictor variables (demographic data, including income, education, age, sex, religion, and marital status) in predicting the outcome variables (mean differences in positive or negative questionnaire scores). All p values were two-tailed, with p < 0.05 considered statistically significant. All statistical analyses were performed using R version 4.2.2 (R Project for Statistical Computing; http://www.r-project.org/).
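The reported effect size and post hoc power can be checked with a short calculation. The sketch below is not the authors' R code. It assumes the power was computed for a two-sided paired t-test on the 24 score differences (a power calculation for the Wilcoxon test itself would need a different method), and the function names `noncentral_t_sf`, `t_critical`, and `paired_power` are illustrative. It uses only the Python standard library and evaluates the noncentral t distribution by numerical integration.

```python
import math
from statistics import NormalDist

STD_NORMAL = NormalDist()


def noncentral_t_sf(t, df, ncp, steps=4000):
    """Return P(T > t) for a noncentral t variable with df degrees of freedom.

    Uses T = (Z + ncp) / sqrt(V / df), with Z standard normal and V chi-square(df),
    and integrates over V with the midpoint rule.
    """
    upper = df + 12.0 * math.sqrt(2.0 * df)  # chi-square mass beyond this is negligible
    h = upper / steps
    log_norm = -(df / 2.0) * math.log(2.0) - math.lgamma(df / 2.0)
    total = 0.0
    for i in range(steps):
        v = (i + 0.5) * h
        log_pdf = (df / 2.0 - 1.0) * math.log(v) - v / 2.0 + log_norm
        tail = 1.0 - STD_NORMAL.cdf(t * math.sqrt(v / df) - ncp)
        total += math.exp(log_pdf) * tail * h
    return total


def t_critical(df, alpha):
    """Two-sided critical value of the central t distribution, found by bisection."""
    lo, hi = 0.0, 10.0
    for _ in range(40):
        mid = (lo + hi) / 2.0
        if noncentral_t_sf(mid, df, 0.0) > alpha / 2.0:
            lo = mid
        else:
            hi = mid
    return (lo + hi) / 2.0


def paired_power(mean_diff, sd_diff, n, alpha=0.05):
    """Effect size and post hoc power, assuming a two-sided paired t-test."""
    d = mean_diff / sd_diff      # standardized effect size
    df = n - 1
    ncp = d * math.sqrt(n)       # noncentrality parameter
    crit = t_critical(df, alpha)
    upper_tail = noncentral_t_sf(crit, df, ncp)
    lower_tail = max(0.0, 1.0 - noncentral_t_sf(-crit, df, ncp))
    return d, upper_tail + lower_tail


# Values taken from the text: mean difference 1.29, SD 2.09, 24 patients.
effect_size, power = paired_power(1.29, 2.09, 24)
print(f"effect size = {effect_size:.4f}, power = {power:.3f}")
```

Under these assumptions the effect size is 1.29 / 2.09 ≈ 0.617, and the computed power should land close to the reported value of 0.825.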

Results

Demographic data and AI questionnaire scores are summarized in Tables 2 and 3. The summed negative-item scores differed significantly between the surveys taken before and after the ChatGPT explanatory note, whereas the summed positive-item scores did not. The negative-item score increased by a mean of 1.3 ± 2.1, indicating that negative emotions toward AI, such as worry or wariness, intensified.
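The pre/post comparison described here can be illustrated with a minimal Wilcoxon signed-rank sketch. The paired scores below are made up for illustration and are not study data, and the function name `wilcoxon_signed_rank` is hypothetical. The sketch drops zero differences, assigns average ranks to ties, and uses the normal approximation without a continuity correction; R's `wilcox.test` may instead use an exact p-value or a continuity correction, so its output can differ slightly.

```python
import math
from statistics import NormalDist


def wilcoxon_signed_rank(before, after):
    """Two-sided Wilcoxon signed-rank test using the normal approximation."""
    # Paired differences; pairs with no change are dropped.
    diffs = [a - b for b, a in zip(before, after) if a != b]
    n = len(diffs)

    # Rank the absolute differences, giving tied values their average rank.
    order = sorted(range(n), key=lambda i: abs(diffs[i]))
    ranks = [0.0] * n
    tie_term = 0.0
    start = 0
    while start < n:
        end = start
        while end + 1 < n and abs(diffs[order[end + 1]]) == abs(diffs[order[start]]):
            end += 1
        avg_rank = (start + end) / 2.0 + 1.0
        for k in range(start, end + 1):
            ranks[order[k]] = avg_rank
        size = end - start + 1
        tie_term += size ** 3 - size
        start = end + 1

    # Sum of ranks belonging to positive differences (score went up).
    w_plus = sum(r for r, d in zip(ranks, diffs) if d > 0)
    mean_w = n * (n + 1) / 4.0
    var_w = n * (n + 1) * (2 * n + 1) / 24.0 - tie_term / 48.0  # tie-corrected variance
    z = (w_plus - mean_w) / math.sqrt(var_w)
    p_value = 2.0 * (1.0 - NormalDist().cdf(abs(z)))
    return w_plus, z, p_value


# Hypothetical negative-item totals for eight patients (not study data).
pre_scores = [20, 22, 19, 25, 21, 23, 18, 24]
post_scores = [22, 23, 19, 28, 20, 26, 21, 25]

w_plus, z, p_value = wilcoxon_signed_rank(pre_scores, post_scores)
print(f"W+ = {w_plus}, z = {z:.3f}, p = {p_value:.4f}")
```

With a sample as small as the one in this study, an exact test is generally preferable to the normal approximation shown here.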

Table 2. Patient demographics.

Table 3. Scores pre- and post-survey regarding the ChatGPT explanatory note.

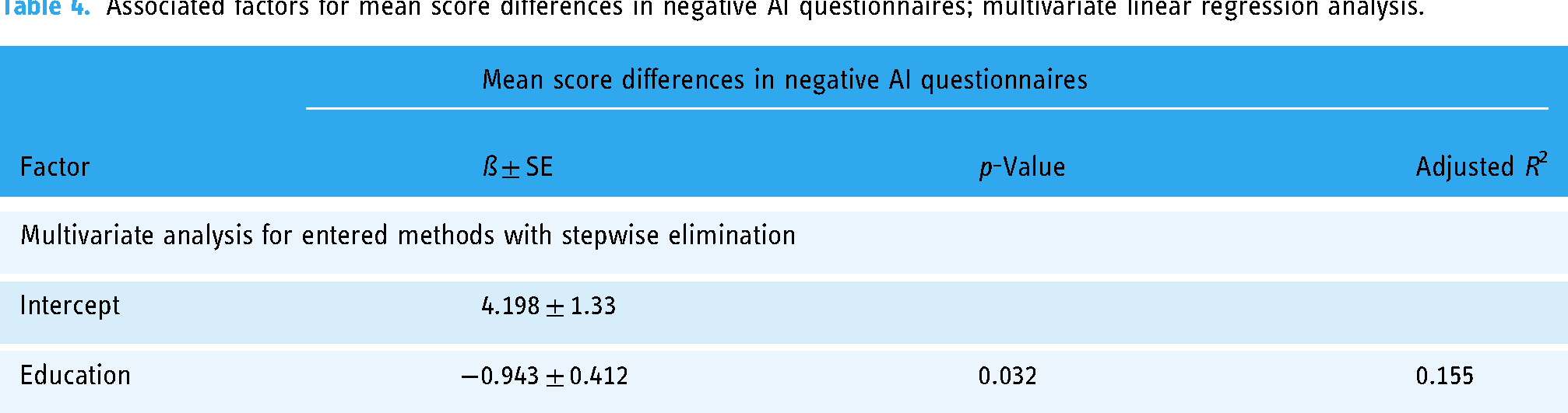

Linear regression was used to identify how demographic factors influenced the mean score difference on the negative items. Only education level was a significant predictor (Table 4). In this analysis, patients with lower education levels expressed increased negative sentiments after receiving ChatGPT's explanatory note.
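The direction of this association can be pictured with a single-predictor least-squares fit. The sketch below is a simplification: it does not perform the stepwise multivariable regression used in the study, the ordinal education coding (1 to 3) is an assumption, and the data points are invented for illustration only.

```python
def least_squares_line(x, y):
    """Ordinary least-squares slope and intercept for one predictor."""
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return slope, intercept


# Hypothetical coding: 1 = lowest education level, 3 = highest (not study data).
education = [1, 1, 2, 2, 3, 3]
# Hypothetical change in negative-item score (post minus pre) for the same patients.
score_change = [4, 3, 2, 1, 0, -1]

slope, intercept = least_squares_line(education, score_change)
print(f"slope = {slope:.2f}, intercept = {intercept:.2f}")
```

A negative slope in such a fit corresponds to the pattern described above: the lower the education level, the larger the increase in negative-item scores.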

Table 4. Associated factors for mean score differences in negative AI questionnaires; multivariate linear regression analysis.

In the satisfaction questionnaires (Table 5), approximately 80% of patients agreed or strongly agreed that the explanatory note helped them understand the disease, and 58.3% disagreed or strongly disagreed that the sentences were too awkward. However, confidence in the explanatory note (agree or strongly agree, 66.7%) was lower than its rated helpfulness. There were no correlations between demographic data and satisfaction questionnaire results (p > 0.05).

Table 5. Satisfaction questionnaires for the explanatory note by ChatGPT.

Discussion

After giving patients treated for urolithiasis an explanatory note about lifestyle modification, we surveyed shifts in their attitudes toward AI. ChatGPT provided detailed advice on lifestyle adjustments for preventing urolithiasis. However, negative attitude scores increased among patients, indicating a more negative perception of AI. This change was influenced by education level, suggesting that patients with lower education levels are more likely to express negative emotions after reading ChatGPT's explanatory note. Nonetheless, patients replied that the explanatory note improved their understanding of the disease, although they did not have full confidence in this type of explanatory note.

AI development has affected people's lives in transportation, health, science, finance, and the military. 7 AI programs employ human-like language to overcome the “uncanny valley” impression, which remains an unresolved challenge. 8,9 Regarding the medical field and their own health, some people oppose replacing human doctors with medical AI. 10 Lower education levels correlated with a lower perceived likelihood that AI would improve an individual's health, 11 potentially due to a lack of technological understanding and fears about job security. 12 Differences in perception between more and less educated people significantly influence AI program development and adoption. Therefore, AI programs assisting the medical field must consider individuals with lower education levels by implementing a more user-friendly interface and providing clear information. 13

Urolithiasis recurrence rates were 26% at 5 years and 35% at 10 years after initial diagnosis. 14 Recurrent urolithiasis can burden patients’ physical and mental health. 15 Dietary and behavioral risk factors can exacerbate stone formation, which proceeds through supersaturation, nucleation, crystal growth, and aggregation. 16 Therefore, lifestyle modifications to reduce risk factors may be the most effective strategy for preventing urolithiasis. Recent guidelines 17 recommend sufficient fluid intake to maintain a urine output of 2.0–2.5 L/day. Furthermore, excessive dietary oxalate may increase urolithiasis risk. 18 Although multicomponent dietary interventions were heterogeneous, diets low in protein and sodium with adequate (not low) calcium may reduce recurrence 19 ; obesity is also considered a urolithiasis risk factor. 20 Therefore, lifestyle adjustments to avert recurrence are vital for urolithiasis patients.

Unfortunately, daily lifestyle modifications can be challenging for patients, but new technology could offer new ways to sustain these efforts. Behavioral adjustment techniques delivered through smartphone, tablet, or computer applications could facilitate self-monitoring and goal setting. 21 Such mobile healthcare applications have already expanded into areas such as diabetes, obesity, smoking cessation, stress management, and depression. 22 In addition, platforms such as YouTube further influence patient lifestyles, as does the excessive disease information flooding the internet. 23 AI programs like ChatGPT can summarize such information into key points, sparing patients considerable time and effort.

However, whether these summaries can be trusted is another matter. Because the online data sources in circulation have not been verified, errors may occur during summarization. AI programs provide answers by collecting the web information that is statistically closest to the correct answer, but this answer may still be incorrect. In February 2023, Google's new chatbot Bard, which was based on the LaMDA LLM, delivered an incorrect answer, after which Google's stock price fell. Thus, future developments may require authoritative verification to improve AI chatbot reliability.

Nevertheless, AI chatbot programs could aid in low-complexity tasks and facilitate the flow of information. 24 In the clinical field, AI chatbots can assist with summarizing clinical information, medical documentation, insurance company letters, patient education, and scheduling, 24 improving physician efficiency by reducing simple and repetitive labor. In addition, AI chatbots resemble high-quality medical databases in that they can provide solid recommendations with high-level evidence. 25 These programs could also support article writing by producing a case report's outline, draft, and conclusions. 26 The AI chatbot renaissance could resolve current limitations in the near future.

However, modern AI chatbots cannot replace a real doctor. A primary concern is AI hallucination, in which an AI provides a confident response that does not seem to be justified by its training data. When references supplied by a chatbot were checked, some were invalid or could not be found, 27 which could adversely affect decision-making and lead to ethical and legal issues. In addition, AI output depends on training data; thus, accurate data are needed for accurate results. Data are still primarily produced manually by humans, although AI may someday fill in records automatically. Nonetheless, with appropriate risk management, advanced AI chatbot programs could alleviate physician burden and encourage scientific progress.

This study has some limitations. First, the sample was small and limited to a single disease, so a larger subject pool covering other diseases is needed to generalize the findings. However, we verified that the AI chatbot program could change impressions of AI among urinary stone patients, for whom lifestyle adjustments are critical. This study suggests that AI chatbots could be used to assist patients medically in the future. Second, this study used GPT-3.5, which is limited in that it only considers information up to 2021, whereas GPT-4 is trained on newer data. GPT-4 demonstrated an improved understanding of complex surgical information compared to GPT-3.5. 28 However, the GPT-3.5 results were reliable because recommendations for urinary stone prevention have largely stayed the same. Third, we did not validate the Korean translation produced by Google Translate. Most ChatGPT training data was in English, and translation into other languages is challenging. However, Google Translate has displayed acceptable performance in translating highly academic writing. 29 Lastly, we only confirmed short-term changes in AI perception, not long-term lifestyle changes.

Conclusions

Our study investigated perception changes in urolithiasis patients after receiving an explanatory note provided by an AI chatbot program. Patients with lower education levels were more likely to express negative impressions after reading the explanatory note. In addition, most patients expressed unmet needs for disease understanding. Negative human responses must be considered when improving and adopting new technology in healthcare. Improving patient perception will enable upgraded AI technology to be integrated into healthcare.

Footnotes

Acknowledgements

We thank Jung-Won Ahn of Gangneung-Wonju National University, who provided the Korean version of the questionnaire.

Contributorship

SYC and SHK were involved in initial conception of the study. SYC and JHT were involved in acquisition of data. SYC, SHK, JC, JHK, and JWK were involved in analysis and interpretation of data. SYC and SHK prepared the first draft. IHC, T-HK, SCM, TTN, and YSL were involved in critical revision of the manuscript. SYC obtained funding and supervised. All authors approved the final manuscript.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Ethical approval

The studies involving human participants were reviewed and approved by the Institutional Ethics Committee of Chung-Ang University Hospital (IRB No. 2302-010-542).

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This study was supported by the Basic Science Research Program through the National Research Foundation of Korea (NRF), funded by the Ministry of Science, ICT & Future Planning (NRF-2022R1A2C2008207 & NRF-2023R1A2C1003830).

Guarantor

Se Young Choi.

Informed Consent

Participants provided their written informed consent to take part in this study.