Abstract

By operationalizing how public policy is enacted, government algorithms play a key role of

Keywords

Introduction

In the United States, experts within administrative agencies play a critical role in overseeing complex social problems and responding quickly to emerging issues. Yet the authority of agency policymakers—who are appointed rather than elected—and the legitimacy of the administrative state more fundamentally have been the subject of debate for as long as these agencies have existed (Beermann, 2024; Bressman, 2003; Fisch, 2024). Early models of public administration located the legitimacy—and value-neutrality (Seidenfeld, 1994)—of agency decision-making in apolitical scientific expertise and fidelity to congressional statutes (Seifter, 2014). However, the interpretation and implementation of policy requires managing ambiguities inherent to both law and science (Kumar, 2025; Larkin, 2020). Whether agency officials have the authority to resolve such ambiguities, and how those ambiguities are resolved, has thus long been a locus of political tension.

The ambiguity of public administration invites quantification. Numbers promise impartial and rational adjudication of difficult policy questions. Historically, agencies have emphasized quantification to assert legitimacy in contexts where trust and consensus are difficult to come by (Porter, 1995). New forms of quantification, however, challenge this logic. Specifically, the adoption of algorithms within agency operations is reanimating many long-held tensions in the legitimacy of the administrative state (Abdu and Jacobs, 2024). Shifting authority from bureaucrats to algorithms (and the technologists who design them) raises questions as to whether and how this authority is legitimized (Hong, 2023). Despite substantively overlapping concerns and mechanisms, the literature on quantification in public administration has been largely disconnected from the literature on algorithmic legitimacy and public administration. In this article, we propose that the algorithmic turn in public administration is part of a longer legacy of quantification in government. In doing so, we echo earlier calls to situate the data-driven governance movement in government within the broader epistemology of quantification (Rieder and Simon, 2016).

So what then is at stake for the legitimacy of the administrative state? Administrative law has long emphasized the legitimacy and authority of agency experts by appealing to their efficiency, mitigating their capacity for arbitrary decision-making, and expanding their accountability (Bressman, 2003; DeCanio, 2021). We see echoes of such longstanding legitimacy practices in contemporary debates about agency uses of algorithms to administer policy.

Scholars have, for instance, emphasized algorithmic systems’ capacity to enhance legitimacy through efficient and fair administration (Engstrom et al., 2020) and to make policy decisions visible (Coglianese and Lehr, 2019; Levy et al., 2021). More commonly, the literature has highlighted the challenges that algorithms pose to administrative legitimacy: making decisions more opaque (Pasquale, 2015), displacing decision-making power (Cooper et al., 2022; Mulligan and Bamberger, 2019), threatening expert deliberation (Calo and Citron, 2020), and eroding public participation and oversight (Citron, 2007; Kroll et al., 2017).

That is, the literature on algorithms in public administration has primarily focused on

Roadmap

We empirically investigate how the adoption of algorithms in public administration is transforming administrative legitimacy and its underlying values. Through a systematic qualitative analysis of reports and testimonies published by the U.S. Government Accountability Office (GAO), we examine how GAO conceptualizes the risks and promises of agency use of algorithmic technology and examine what values they point to in order to establish the legitimacy of incorporating algorithmic systems into governance processes.

To contextualize our analysis, first, we characterize the role of legitimacy in the administrative state. Then, we contextualize algorithmic technology use in U.S. public administration within the legacy of nonalgorithmic forms of bureaucratic quantification and broader histories of administrative legitimacy. We then introduce the methods and empirical strategy, through which we examine GAO's legitimacy practices as they relate to the domains, promises, and challenges of algorithmic use, and the interventions that GAO proposes. We organize our findings of how legitimacy is enacted and renegotiated around algorithms along the dimensions of

Legitimacy practices

We focus on administrative

The meaning of political legitimacy is contested and ambiguous, appearing within the literature as both a moral and a sociological concept (Buchanan, 2002; Weisner and Harfst, 2022). When studied normatively, legitimacy refers to a moral justification of authority (e.g., Buchanan, 2002; Rawls, 1971; Stone and Mittelstadt, 2025); when studied descriptively, legitimacy typically refers to people's beliefs about authority and the conditions under which they are willing to submit to authority (e.g., Martin and Waldman, 2023; Tyler, 1990). Shared across these conceptualizations of legitimacy is that legitimacy is defined relationally: between actors, within or between organizations, to account for notions of responsibility, recourse, or permissibility (Tyler, 1997). However, missing from both these perspectives is an examination of how those with authority shape legitimacy.

Because legitimacy is fundamentally relational, it is not only defined by those who submit to authority but also by those who exercise it. Indeed, Rodney Barker notes that the state, rather than those it governs, “is the principal user of the language of legitimacy” (Barker, 1990: 159). We follow Laura Nader's call to “study up” (Nader, 1972), adopting a new perspective on a common question by devoting attention to powerful institutions. We argue that the state shapes what is a permissible exercise of authority through its own norms, policies, and rhetoric. Understanding how legitimacy is constructed therefore requires understanding the state's legitimacy practices: the tactics it uses to demonstrate and enact legitimacy. Legitimacy is not performed only, or even most often, for the general public: instead, these practices are largely enacted

We focus on the U.S. administrative state's pursuit of legitimacy as a crucial site where legitimacy is negotiated through everyday practices. While in the United States, the authority of legislators is legitimated by democratic elections, agency officials—who are appointed rather than elected—must look elsewhere. The history of U.S. agency policy-making points to the intricate strategies involved in establishing the legitimacy of the administrative state, centered around two key aspects of legitimacy: the suppression of arbitrariness and the expansion of accountability (Bressman, 2003). Attempts to minimize arbitrariness have relied on establishing objectivity through appeals to rules, procedure, and expertise, as well as through demonstrably consistent and fair decisions. Meanwhile, efforts to expand accountability have involved transparency, public participation, and susceptibility to consequences. At times, the legitimacy of the administrative state has been conceptualized as a trade-off with the efficiency achieved through agency experts’ flexibility and capacity to oversee complex issues (Bressman, 2003; Waldman, 2020). However, efficiency has alternatively been conceptualized as a unique affordance of the administrative state that justifies its existence and therefore plays a key role in its legitimacy (Calo and Citron, 2020; DeCanio, 2021). Examining the administrative state's legitimacy practices reveals the values for which legitimacy stands:

Administrative legitimacy and quantification

We propose that agency practices around algorithms belong to a class of legitimacy practices based in

Notably, quantification is not only used to pursue legitimacy but it also changes how legitimacy is enacted. The proliferation of quantification in government has redefined legitimacy practices within policy spaces by privileging quantitative demonstrations of legitimacy. In the following subsections, we outline existing work about how quantification has been used to demonstrate legitimacy in bureaucratic governments. We highlight how quantitative practices have been used to pursue efficiency, nonarbitrariness, and accountability. We also discuss how quantification has changed the way that these values are conceptualized and enacted.

Quantification and efficiency

Quantification has long been used toward the goal of more efficient government. Transforming policy decisions into numbers enables efficiency by simplifying complex policy landscapes, narrowing the language of dispute (Demortain, 2019), and enabling both the measurement of and optimization toward efficiency-based targets (Mennicken and Espeland, 2019).

Importantly, quantification allows policymakers to measure, compare, and improve policies on the basis of their efficiency. Cost–benefit analysis and risk analysis are two forms of quantification that have flourished within U.S. agencies to demonstrate both the efficiency and legitimacy of policy decisions (Sunstein, 2002).

By simplifying complex information into easily comparable numbers, these quantitative comparisons enable dispersed surveillance and distant governance (Espeland and Stevens, 2008). This distance has been key to the efficient functioning of large-scale bureaucracies because it allows national governments to operate at scale, allowing the state to abstract away differences between individual subjects (1998), excluding the complexities of local issues in service of efficiency (Demortain, 2019).

The close link between quantification and efficiency has transformed legitimacy practices to further emphasize efficiency over other justifications of authority. For example, the administrative state has increasingly privileged efficiency-based analysis to demonstrate nonarbitrariness (Porter, 1995; Sunstein, 2017). Similarly, Berman (2022) describes the U.S. government's turn toward an economic style of thinking that privileges readily quantifiable, efficiency-based grounds for legitimacy. This turn toward quantitative, efficiency-based legitimacy practices encourages economization, threatening to recast policy decisions as economic decisions and democratic goals as market goals. Even when quantification fails to live up to its goals, these transformations are durable (Berman, 2022; Hacking, 1982) and have long-term implications for justifications of authority not based in efficiency.

Quantification and nonarbitrariness

Quantification promises to minimize arbitrariness through its suggestion of objectivity and neutrality (Espeland and Stevens, 1998; Porter, 1995). Numbers derive authority from their perceived objectivity, resistance to manipulation, and clarity, making them particularly useful in the political domain (Deringer, 2018).

The concept of “mechanical objectivity” is central to the way that numbers are used to demonstrate nonarbitrariness: suppressing subjectivity through rigid rules and procedures (Daston and Galison, 2007; Porter, 1995). This approach to nonarbitrariness aligns with efforts to constrain agency officials’ discretion by legislating their behavior extensively. However, mechanical objectivity diverges from historical attempts to establish agency officials’ legitimacy by appealing to their scientific expertise (what Porter (1995) calls “disciplinary objectivity”). Consequently, quantification has reshaped the meaning of arbitrariness within U.S. agencies: mathematical modeling of risk has taken the place of expert judgment as the gold standard for evidence against arbitrary decision-making (Porter, 1995).

Throughout the quantification literature, the objectivity of numbers is demonstrated through consistency and fairness. Consistency has been particularly essential for establishing the legitimacy of social numbers, demonstrating that these numbers are impervious to distortion by the individual whims of those who create them (Didier, 2020; Espeland and Stevens, 2008). Beyond consistency, quantification also promises to achieve fairness by eliminating human biases. As a result, quantitative demonstrations of unbiasedness have proliferated, in turn reshaping the meaning of fairness (Espeland and Stevens, 2008).

Quantification and accountability

Contemporary statistical practices around open data and open science enact accountability first and foremost through transparency (Freese and Peterson, 2018). Under this paradigm, transparency enables verification, discourages incautious or dishonest practices through the threat of sanction, and allows claims to be compared and jointly assessed. Indeed, transparency has become closely associated with the production and communication of numbers that quantification enables, making visible decisions, people, and objects that may previously have been hidden (Espeland and Stevens, 2008).

The transparency of numbers also facilitates accountability by enabling public participation in political life (Espeland and Vannebo, 2007). This participation is facilitated through both political representation (via the national census, for example) and debate. Indeed, Deringer (2018) conceptualizes calculations as instruments of dispute: tools of argumentation and persuasion that have historically allowed not only those in power to exercise their authority but also outsiders to critique those in power.

Methods

Study setting

The U.S. GAO is an independent agency in the legislative branch that provides oversight over the federal government, including administrative agencies in the executive branch, on behalf of Congress. The GAO aims to preserve administrative legitimacy through its day-to-day operations, emphasizing nonpartisan information gathering and the accountability of the administrative state (GAO, 2024). As such, GAO offers a natural setting through which to explore everyday state legitimacy practices.

The GAO pursues this mission via the publication of reports, either initiated externally by Congress (most common) or by existing laws, or internally via the Comptroller General. Congress has other oversight tools available—for example, initiating committee hearings. Historically, Congress has leaned on GAO reports more heavily in times of unified government to advance shared policy goals, rather than to undermine the executive branch (Kennedy, 2023). These reports ultimately reflect areas of political attention, including specific ways that government functions become politicized (Figure 1).

Illustrative examples of GAO reports in our sample (Source: GAO). Political anxieties can direct attention to agency activities to target different concerns (e.g., fraud, unemployment, national security) and perform different values (e.g., efficiency, flexibility, consistency).

Through their reports on agency use of algorithms, GAO implicitly intervenes on where and how it is legitimate for algorithms to take on administrative roles. These reports meaningfully shape agency practices: GAO estimates that the recommendations issued in their reports are taken up by agencies about 75% of the time. We use a corpus of reports published by GAO to examine everyday legitimacy practices and how they are shaped by, and shape, the algorithmic turn in the administrative state.

Data

Our analysis draws from a corpus of reports published by the U.S. GAO since its inception. We selected all reports that included the term “algorithm” and/or the term “artificial intelligence.” We chose these keywords to trace the evolution of contemporary algorithmic technologies that have reanimated questions of legitimacy in public administration. A broader search might also consider predecessors to these terms such as “automation” or “intelligent machines,” but we limit our search for consistency in the types of technologies described. There were 953 reports that met our inclusion criteria, the earliest of which was published in 1968 (Figure 2). We randomly sampled 10 reports from each presidential administration in our corpus, except for administrations in which fewer than 10 reports were published, for which we included all reports (Table 1). This left us with a total of 90 reports, ranging in length from 11 to 888 pages. We performed qualitative coding on these 90 reports following the approach outlined in the following subsection.
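The selection and sampling procedure described above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the tooling used for the study: the record fields (`id`, `administration`), the case-insensitive substring match, and the fixed random seed are all hypothetical choices introduced here.

```python
import random
from collections import defaultdict

# Search terms named in the text. Case-insensitive substring matching is an
# assumption; it also matches variants such as "algorithms" and "algorithmic".
KEYWORDS = ("algorithm", "artificial intelligence")


def matches_keywords(text, keywords=KEYWORDS):
    """Return True if the report text contains at least one search term."""
    lowered = text.lower()
    return any(keyword in lowered for keyword in keywords)


def sample_by_administration(reports, per_group=10, seed=0):
    """Draw up to `per_group` reports at random from each administration.

    Administrations with `per_group` or fewer reports contribute all of them.
    `reports` is a list of dicts with a hypothetical "administration" key.
    """
    rng = random.Random(seed)  # fixed seed so the draw is reproducible
    groups = defaultdict(list)
    for report in reports:
        groups[report["administration"]].append(report)

    sample = []
    for administration in sorted(groups):
        members = groups[administration]
        if len(members) <= per_group:
            sample.extend(members)  # too few to sample: keep every report
        else:
            sample.extend(rng.sample(members, per_group))
    return sample
```

Sampling a fixed number per administration, rather than in proportion to output, keeps early administrations with few matching reports represented alongside later ones with many.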

Government Accountability Office (GAO) reports 1968–2024. Top: Histogram of the number of GAO reports including the terms “algorithm” and/or “artificial intelligence” per year. (Note: Our data collection stopped 18 March 2024, but this figure includes all reports through the end of 2024.) Bottom: Histogram of all GAO reports during the same period for comparison.

Number of reports included in final analysis by presidential administration.

We randomly sampled 10 reports per presidential administration in our original corpus, except for the Johnson, Nixon, and Ford administrations in which fewer than 10 reports were published. For these administrations, we included all available reports.

Empirical strategy

We treat the GAO reports in our sample as

We employed thematic line-by-line coding of each GAO report in our sample along the following dimensions:

DOMAIN: Where does the government turn to algorithms? Where does GAO conceptualize algorithmic intervention as legitimate or illegitimate?

PROMISES: How does GAO justify the turn to algorithms? How is this transforming conceptions of legitimacy?

CHALLENGES: Where does GAO conceptualize the risks and challenges of algorithms? How does this align with notions of legitimacy?

INTERVENTION: What steps does GAO propose to address the challenges outlined above? How are these interventions designed to ensure or demonstrate the legitimacy of algorithmic systems?

Applying this coding scheme, we might for instance find in a report “health care” as a domain, “speed” and “accuracy” as promises, “opacity” and “data privacy” as challenges, and “oversight” as a proposed intervention. Once we applied this coding scheme, we grouped related codes together and identified major themes. Specifically, we examine promises, challenges, and interventions through the lens of legitimacy, grouping codes under the major themes of accountability, efficiency, and nonarbitrariness—historical pillars of administrative legitimacy and quantification, which we find are also central to how GAO conceptualizes legitimate agency use of algorithms. Drawing on the history of administrative legitimacy, our analysis allows us to identify where algorithms extend but also transform agency legitimacy practices vis-à-vis these three central dimensions. By examining how GAO discusses the promises and challenges of algorithmic systems, we highlight the aspects of legitimacy that govern where and why algorithms are adopted. Attending to interventions designed to make algorithms more legitimate allows us to anticipate how conceptualizations of legitimacy are transformed by agency practices. We conceptualize these interventions as everyday legitimacy practices: the steps toward legitimacy that agencies take, not when legitimacy is in crisis, but rather when new modes of administration reconfigure the scope of legitimate administrative decision-making.
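To make the shape of the coded data concrete, the hypothetical report from the preceding paragraph can be represented as a small record and tallied by theme. This is only a sketch: the grouping of codes into themes was interpretive qualitative work rather than a lookup, and the `CODE_TO_THEME` mapping below is an illustrative assumption, with unmapped codes deliberately left as "unassigned".

```python
from collections import Counter

# The four coding dimensions named in the text.
DIMENSIONS = ("domain", "promises", "challenges", "intervention")

# Hypothetical mapping from individual codes to legitimacy themes.
# In the study this grouping was done by hand; these entries are assumptions.
CODE_TO_THEME = {
    "speed": "efficiency",
    "accuracy": "nonarbitrariness",
    "opacity": "accountability",
}


def tally_themes(coded_report, code_to_theme=CODE_TO_THEME):
    """Count (dimension, theme) pairs for one coded report.

    The "domain" dimension is skipped because themes are applied only to
    promises, challenges, and interventions.
    """
    counts = Counter()
    for dimension in ("promises", "challenges", "intervention"):
        for code in coded_report.get(dimension, []):
            theme = code_to_theme.get(code, "unassigned")
            counts[(dimension, theme)] += 1
    return counts


# The example codes given in the text for a single hypothetical report.
example_report = {
    "domain": ["health care"],
    "promises": ["speed", "accuracy"],
    "challenges": ["opacity", "data privacy"],
    "intervention": ["oversight"],
}

example_counts = tally_themes(example_report)
```

Keeping the dimension alongside the theme in each key preserves the distinction the analysis relies on, for instance between efficiency invoked as a promise and efficiency invoked as a challenge.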

Findings

We analyzed how GAO reports between 1968 and 2024 have discussed agency use of algorithmic technologies and artificial intelligence. Through our coding and analysis of application domains, we found that the majority of agencies’ use of these technologies was adopted for internal management purposes—such as cybersecurity, billing, or agency workforce management—rather than for public-facing policy implementation. These findings match a 2023 GAO report showing that, except for “science,” internal management was the most common agency use case of AI (U.S. Government Accountability Office, 2023).

Through our analysis of the promises and challenges of algorithmic technology, we find that efficiency, nonarbitrariness, and accountability are still key values used to demonstrate legitimacy. However, we find that efficiency and nonarbitrariness are more central than accountability in establishing the legitimacy of algorithms. Finally, we examine the interventions used to address potential legitimacy challenges posed by algorithms. We conceptualize these interventions as

In the following sections, we examine how the core values of administrative legitimacy are operationalized, emphasizing continuities and shifts with prior forms of quantification and the history of administrative legitimacy more broadly. To structure our findings, we draw on Bressman's (2003) account of legitimacy, which we divide into three dimensions: efficiency, nonarbitrariness, and accountability.

Findings: Efficiency in the algorithmic administrative state

In our sample of GAO reports, efficiency is frequently used to justify and legitimize the promises of algorithmic systems within agencies (Figure 3). Efficiency was the most commonly cited promise of algorithms, providing empirical evidence that efficiency narratives have been core to the uptake of automated systems. This finding aligns with legal and sociological scholarship that theorizes that algorithmic decision-making systems’ orientation toward agility, frictionlessness, and cost-reduction make them a natural ally of the U.S. government (Waldman, 2020)—that is, of a government that increasingly prioritizes efficiency over other social and political values (Berman, 2022). However, a closer look at this rhetoric in the GAO data reveals the

The reports primarily conceptualize efficiency in terms of

Despite its prevalence, economic cost is not the only conception of efficiency used to justify the promises of algorithmic technology. Beyond reduced costs and increased revenue, GAO reports also emphasize the promise of enhanced performance and needed functionalities, pointing to the effectiveness and accuracy of these new systems. However, this functionality remains secondary to economic efficiency improvements. The GAO sometimes presents the efficiency of algorithmic systems as necessary due to the size or the complexity of agency problems (Figure 3). In these circumstances, GAO relies on efficiency alone to establish the legitimacy of adopting algorithmic technologies. Where algorithms are framed as necessary to meet the demands of increased scale and speed, efficiency risks crowding out other legitimacy considerations, like functionality. According to prior work on the adoption of algorithmic systems in government, prioritizing economic efficiency over other values risks undermining the administrative state's pursuit of legitimacy, particularly where the adoption of algorithmic systems implicates human rights (Waldman, 2020). While this literature largely discusses how algorithms

Similar to other forms of quantification, enhanced efficiency is the central promise of algorithmic adoption (Engstrom et al., 2020). Here, we observe continuity in the way that efficiency is operationalized: algorithms promise to extend the legacy of quantification by automating the assessment of and optimization toward economic goals. Yet GAO also invokes efficiency when conceptualizing the challenges of algorithms, namely through their economic costs. We observe that the promise of greater efficiency is uncertain. When presented as a risk of algorithms, efficiency continues to be conceptualized in economic terms. Our findings indicate that, while efficiency is conceptualized in similar ways under the algorithmic regime and the prealgorithmic quantification regime, there is less certainty about the results of algorithmic technology. Although economic efficiency remains an essential tenet of legitimacy, algorithms promise to further efficiency just as they threaten to undermine it.

To intervene where efficiency is uncertain, we find that GAO relies on nonalgorithmic forms of quantification to establish the legitimacy of new forms of algorithmic administration. To mitigate the efficiency risks brought on by the uncertainty associated with algorithms, GAO turns to more established everyday quantification practices, like cost–benefit analysis or quantitative risk analysis, as demonstrated in Figure 3. We note the collision of long-time legitimacy mechanisms, like cost–benefit analysis and oversight, with new mechanisms like technical validation, pointing to a subtle shift to extend rather than disrupt prior logics of cost-based quantification. By combining the adoption of algorithms with more established forms of quantification, GAO attempts to reinforce algorithmic technology where uncertainty around its efficiency threatens to undermine administrative legitimacy. This reflects continuity in conceptions of efficiency and legitimacy between algorithmic and nonalgorithmic forms of quantification. However, algorithmic technologies are not associated with the same level of legitimacy as other forms of quantification like cost–benefit analysis, which has been institutionalized within administrative legitimacy practices.

Findings: Nonarbitrariness in the algorithmic administrative state

The GAO refers to the value of nonarbitrariness as a promise of algorithms (Figure 4). As with other forms of bureaucratic quantification, the value of nonarbitrariness is demonstrated through appeals to

The administrative state's efforts to establish its own legitimacy have long appealed to its own objectivity and nonarbitrariness, including quantification as an instrument for objectivity. However, the critical literature stresses that, just like any decision-making system, algorithms are never neutral. Rather, algorithms require policy choices around “what data sets to use to train the algorithm, to how to define what the algorithm's target output is, to how likely the algorithm is to produce false positives versus false negatives” (Kaminski, 2020: 122). That is, algorithmic technologies can never achieve objectivity, although their proposed benefits include reduced bias, increased accuracy, and increased consistency (e.g., Engstrom et al., 2020; Kleinberg et al., 2018). We see these values (fairness, accuracy, and consistency) standing in for evidence of nonarbitrariness in algorithmic decision-making.

Consider one example, where GAO takes issue with a system referred to as the “ground officer algorithm” for its misleading name. Unlike mathematical or computerized algorithms, which GAO claims are “systematic,” the ground officer algorithm relies primarily on human judgment, which they imply does not entail the same protections against arbitrary decisions (Figure 4). In addition, we see that quantitative demonstrations of nonarbitrariness are privileged over judgment; for instance, quantitative information takes the place of, and makes obsolete, other forms of justification. The adoption of algorithms extends quantification's turn toward mechanical objectivity as a way to enact nonarbitrariness (Daston and Galison, 2007), further marginalizing the role of agency expertise in demonstrations of administrative legitimacy.

While nonarbitrariness is sometimes framed as a promise of algorithms, it also appears as a challenge of algorithms in the administrative state, focused particularly on potential inaccuracies. We find that where the arbitrariness of algorithmic systems is in question, demonstrations of nonarbitrariness are based on appeals to metrics like accuracy rather than on reason-giving. Providing expert-based justification and reasoning for deviating from prior decisions has long been a cornerstone of administrative defense against the arbitrary and capricious legal standard to which agencies are held. However, in the context of algorithms, accuracy metrics stand in for more reason-based explanations for decisions. Under this vision of nonarbitrariness, it is not the unjustified exercise of discretion but the possibility of errors that threatens to undermine the legitimacy of algorithmic systems. In one example, we observe nonarbitrariness conceptualized as predictive validity, refocusing attention on prediction and accuracy metrics.

This is distinct from other quantitative demonstrations of nonarbitrariness like cost–benefit analysis: Cost–benefit analysis leaves room for agencies to engage in interpretation and judgment through deciding how to assign value, an inherently ambiguous task. Yet algorithms inherit the same ambiguities, particularly in deciding how to operationalize and encode policy goals. These acts of interpretation become detached from assessments of legitimacy when evaluation becomes about assessing accuracy, a task that assumes away ambiguities in favor of a simple binary between correct and incorrect.

Focusing on inaccuracy as the primary risk of nonarbitrariness represents a shift in how arbitrariness is understood within agencies. Even when algorithmic systems are highly accurate, the inner workings of systems may be opaque or unexplainable in ways that challenge traditional notions of nonarbitrariness. As Berman explains: “To lack arbitrariness, the government must have some justification for the exercise of its power, and that exercise of power must have some connection to the proffered explanation” (Berman, 2018). Linking nonarbitrariness with reason-giving reveals

This shift in nonarbitrariness is further exemplified in GAO's legitimacy practices. Across the GAO reports, we observe that interventions to identify algorithmic errors emphasize human assessment, but that the role of human judgment shifts over time. In our earliest report, written in 1968 and quoted in Figure 4, GAO privileges human judgment above algorithmic outputs. This orientation toward human judgment continued for decades. In this context, human expertise, that is, expert judgment, is positioned as a solution to the problem of inherent uncertainty, ambiguity, or general purposeness that potentially arbitrary machine decisions leave open.

However, in more recent reports, we observe technical improvements become a primary intervention while human judgment is reduced to the role of review

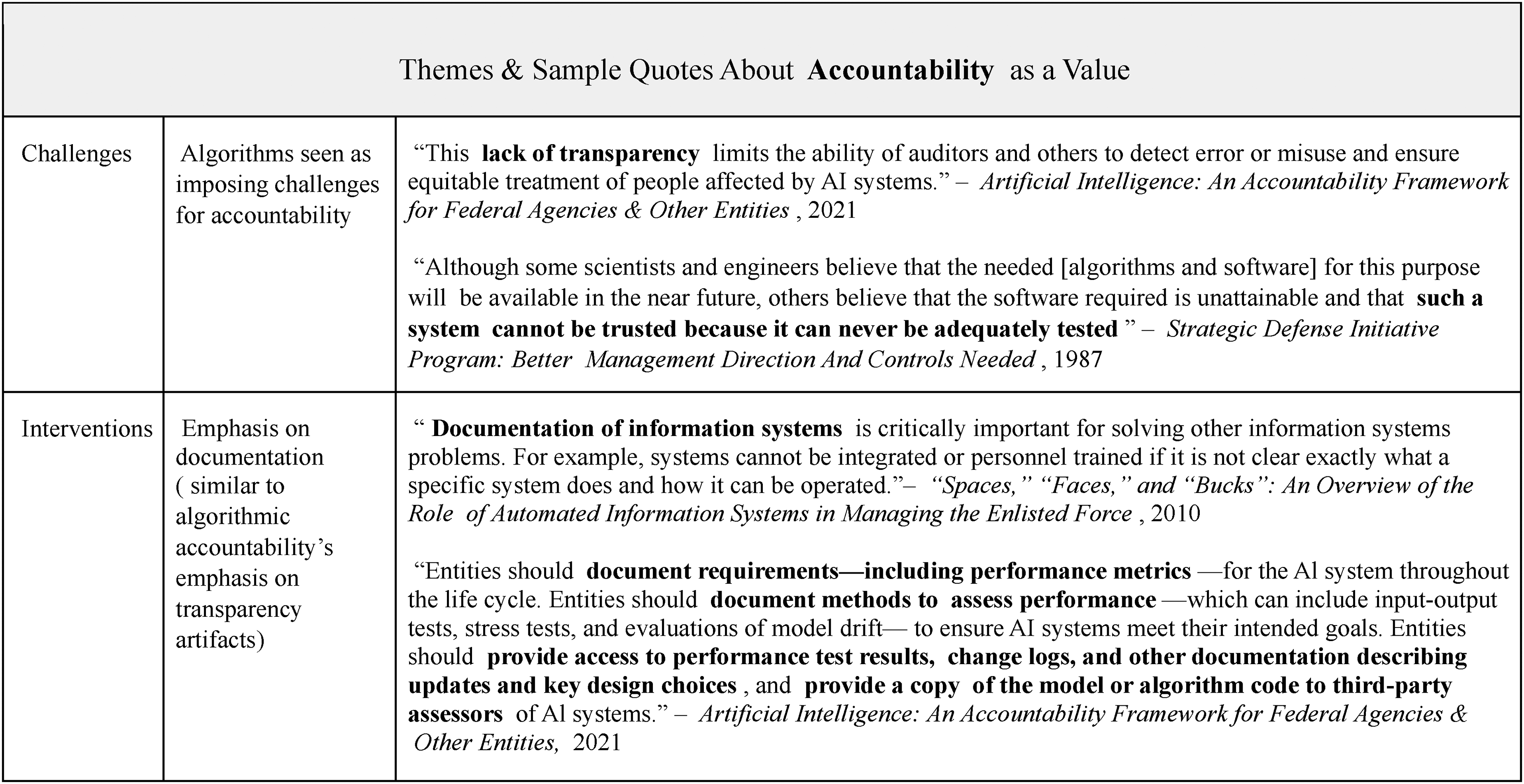

Findings: Accountability in the algorithmic administrative state

We find that accountability is not called upon to legitimate the adoption of algorithmic technology to the same extent as efficiency and nonarbitrariness. In the GAO reports, we do not observe accountability called upon to support the adoption of algorithmic technologies; instead, accountability appears primarily in the context of their challenges. The examples in Figure 5 demonstrate the challenges of oversight due to both opacity and inherent technical limitations.

Critiques of algorithmic systems have raised concerns that the inscrutability of complex technical systems poses a threat to transparency, explainability, and, as a result, to the ability of nontechnical stakeholders and experts to meaningfully participate in accountability processes. The lack of explicit attention to accountability from the GAO may be related to the fundamental barriers to accountability in algorithmic contexts. Building upon Nissenbaum's (1996) “Accountability in a Computerized Society,” Cooper and colleagues (2022) have theorized that the barriers to accountability are heightened in an increasingly algorithmic state. In response to accountability concerns, a significant portion of the literature on the use of algorithms in government has focused on how these systems can be made more accountable. These solutions tend to focus on either transparency and explainability or on expert and public participation (Ajunwa, 2023; Busuioc, 2021; Citron, 2007; Kaminski, 2020; Vaccaro et al., 2019).

Some research argues that algorithmic systems, especially those designed to be explainable, enable or even improve transparency in government decision-making (Coglianese and Lehr, 2019; Kleinberg et al., 2018; Levy et al., 2021) through explicit problem formulation and explanation of design choices. In contrast, we observe that accountability-related promises are seldom used to justify the adoption of algorithms; instead, accountability is frequently cited as a challenge of algorithmic adoption. And while opacity and transparency concerns are highlighted by GAO, it is worth noting the well-recognized risks of algorithmic technologies that were not highlighted in GAO reports. Work on data justice highlights the expansion of data-driven surveillance that undergirds algorithmic governance (Dencik et al., 2016; Kitchin, 2023). Prioritizing efficiency while neglecting accountability legitimizes algorithmic public administration without imposing a duty to justify the intensified data collection that these algorithms demand.

This focus on opacity as the primary accountability challenge is reflected in GAO's practices around algorithms. We observe that interventions and legitimacy practices based on accountability emphasize documentation. This emphasis aligns with the algorithmic accountability literature's embrace of transparency artifacts. Indeed, treating documentation as a substitute for accountability can be seen as part of a larger policy shift towards traceability as accountability (Kroll, 2021). This new conception of accountability, which appears to be an outcome of algorithmic adoption, departs from traditional administrative legitimacy practices that have centered presidential or judicial oversight and public participation as key means to establish accountability (Bressman, 2003). While documentation might in theory facilitate such oversight and participation, this link is not made explicit in the GAO reports that we analyze. Instead, “third-party assessors” are invoked (Figure 5), perhaps reflecting the perceived challenges of ensuring algorithmic accountability without technical expertise. Archival scholars have long noted the importance of open records for democratic accountability, but also highlight the complications brought about by questions of control, access, trustworthiness, and preservation (Cox and Wallace, 2002). These concerns are particularly salient when information is governed by private entities, such as algorithm developers, with different obligations to the public than traditional government actors (Baykurt, 2025; Kranich, 2006). While GAO has identified transparency as a first step toward accountability, it risks overlooking these challenges when documentation practices are not explicitly designed with goals like democratic participation in mind.

Discussion

Quantification has long been used as a strategy to produce legitimacy. In turn, quantification has shifted how legitimacy is enacted by the state. Our findings show that the uptake of algorithms is an extension of, not fully a deviation from, this history of quantification. Quantification's promises of efficiency, nonarbitrariness, and accountability are similarly used to legitimate agency adoption of algorithms and artificial intelligence. We observe that the promises of algorithms follow similar arguments as quantification, but are also tempered by accompanying concerns about how they might instead undermine efficiency, nonarbitrariness, and accountability.

Unlike prior forms of quantification that have been adopted to produce legitimacy in contentious, low-trust settings (Porter, 1995), our findings show that algorithms have instead been adopted for uses that are not subject to significant external scrutiny. We find that the majority of domains for which algorithmic adoption was discussed were related to internal agency management, in line with reported agency use of AI (U.S. Government Accountability Office, 2023).

This key difference (algorithms appear in internal use, whereas quantification historically intervened in contested, low-trust settings) points to a subtle deviation in the administrative use of algorithms. We propose that algorithms have not yet been institutionalized as legitimation tools in U.S. public administration. For the administrative state, legitimacy serves both as a reason to adopt algorithms and as a downstream impact of algorithms.

Through this dual function, administrative algorithms shift what makes bureaucratic authority legitimate: the adoption of algorithms encourages legitimacy practices that align with the affordances of these technologies. Like earlier forms of quantification, algorithms encourage number-based, easily measured demonstrations of legitimacy, such as financial cost. Consequently, algorithms are positioned as a solution to problems of efficiency (particularly of scale and cost), rendering increased efficiency the primary outcome upon which the success, and the legitimacy, of algorithms are assessed. Algorithms also bring about new assessments of legitimacy, such as the use of predictive accuracy, a traditional way of evaluating algorithmic technologies, to stand in for other demonstrations of nonarbitrariness. Simultaneously, algorithms have a downstream impact of making accountability more challenging. Where algorithmic technologies do not align with traditional legitimacy practices (notably, their opacity contrasts with traditional demands for transparency, participation, expert reasoning, and accountability), these concerns are often sidelined. This encourages algorithmic adoption in less visible arenas and ultimately sidelines accountability as a central tenet of legitimacy within the algorithmic administrative state.

Beyond shaping legitimacy based on what is actionable, these technologies also rearrange organizational function. Quantification not only shapes what practices stand in for legitimacy but also how they are negotiated. Algorithms do the same. Scholars and government agencies have called to attend to not just what algorithms do, but how they claim to do it. Specifically, the implicit measurement processes in quantified and algorithmic systems change where administrative decisions are made: What are these systems aiming to do? What concepts (benefits eligibility, fraud, predicted relevance, predicted needs) are operationalized? How, and by whom? (see, e.g., Jacobs and Mulligan, 2022). As Mulligan and Bamberger (2019) have argued, procurement of algorithmic technologies can swiftly displace and redefine administrative functions (and expertise, accountability, and oversight). This literature reveals that replacing one component of a sociotechnical system (e.g., whether an agency official or an algorithmic system performs the task of policy administration), even when ostensibly performing the same function, can have larger political implications for the system's values (Abdu et al., 2024; Goldenfein et al., 2020; Mulligan and Nissenbaum, 2020). Understanding how algorithmic technologies reshape governance processes remains an open empirical question (Farrell, 2025).

Both existing administrative practices and the technical affordances of algorithmic technologies come together to shape how algorithms are incorporated in government. The resulting collision has meaningfully shaped how agency legitimacy is understood; algorithmic technologies are merely one part of a growing push toward efficiency. Although efficiency comprises many meanings, a cost-based conception of efficiency has served as one of the strongest rhetorical strategies for both deregulatory activity and algorithmic adoption. Efficiency, including lack of so-called waste, is used to demonstrate the legitimacy of agencies. However, this perspective, which evaluates agencies not by the tasks they accomplish but by how little they cost, invites systematic underfunding of the administrative state (itself not a new activity) and its dissolution (words containing “efficient” or “efficiency” appear 154 times in Project 2025; Dans and Groves, 2023; cf. Chen, 2025). Scholars concerned with the adoption of algorithms and AI in public administration must reckon with the broader movement toward efficiency-based legitimacy practices that algorithms accelerate and entrench.

Limitations

We acknowledge some basic limitations to our empirical strategy. First, our analysis is necessarily limited by its United States-centric approach, and within that context, GAO is only one aspect of how legitimacy is operationalized. We could instead consider other empirical sites for understanding legitimacy in the administrative state, for example, through other oversight bodies, in judicial decisions, or across individual agencies and their on-the-ground deployment. Choices comprising our empirical strategy, including case studies, qualitative coding, limited sample (random subsample of identified corpus), and a nonrepresentative sample due to choice of corpus (reports with “algorithm” or “artificial intelligence”), all limit our potential findings. Although we limited our search to a narrow set of keywords, our findings cover a wide range of technologies. Early technologies include biometric identification, defense simulations, and cryptographic cybersecurity, while more recent reports cover risk prediction algorithms and automated compliance tools. The functionality and ethical implications of these technologies vary widely. Despite this variation, we observe broadly similar legitimacy language across different domains and types of technology. Additional text-based methods, including larger-scale computational analysis, could be used to expand this analysis.

Yet, GAO's central role in overseeing the entire administrative apparatus of the U.S. government provides an important case study in how everyday practices around technology shape what is seen as legitimate. Through their oversight and policy recommendations, GAO governs when it is permissible for agencies to turn to algorithms, on what grounds, and with what safeguards. More broadly, the institutional context of GAO, the historical turn to quantification in public administration, and the technical capabilities of algorithms all affect what is ultimately seen as a legitimate agency use of algorithms. This results in a co-constitutive relationship between administrative legitimacy and agency adoption of algorithms.

Conversely, existing legitimacy practices shape how algorithmic technologies are incorporated into agency workflows. The GAO's long history as an accounting institution, for example, has shaped the way that it performs its oversight duties. Even as its mission has shifted to emphasize accountability, GAO conceptualizes accountability's primary goal as ensuring the cost efficiency of agencies.

Already institutionalized everyday legitimacy practices like cost–benefit analysis reflect a broader push toward administrative efficiency and dictate where agency algorithms are seen as legitimate. When algorithms are financially costly, they are seen as risky, but when they help underresourced agencies increase productivity, they are seen as necessary despite other risks and limitations. This accelerates an ongoing collision of corporate and administrative legitimacy that evaluates agencies using the same metrics as private companies, overlooking the government's primary goal of serving the public interest (Short, 2023). We see the role of agency officials shifting from experts to professionals with technical expertise increasingly expected to be located

Conclusion

We contextualize the adoption of algorithms in U.S. public administration within the legacy of nonalgorithmic forms of quantification and broader histories of administrative legitimacy. Whereas the history of bureaucratic quantification points toward the deployment of numbers to confer legitimacy, our analysis shows that government algorithms have largely not been deployed to advance administrative legitimacy. Yet, the justifications used to deploy algorithms in agencies’ everyday activities demonstrate how legitimacy is reformulated to account for new practices. We show that while the traditional pillars of administrative legitimacy (accountability, nonarbitrariness, and efficiency) remain essential, their relative importance has shifted alongside the practices associated with them. Specifically, we note that algorithms are legitimized by diverting attention away from administrative accountability practices and refocusing legitimacy conversations to highlight the importance of efficiency. Changing notions of nonarbitrariness that center accuracy over explanation transform the role of experts from substantive, conceptual work to supervisory auditing.

While we have focused on the administrative state as a key site where legitimacy, authority, and power are negotiated, our findings have implications beyond the state. Platform studies scholarship has noted that private technology companies have increasingly taken on government functions, extending questions of legitimacy beyond the state and further complicating questions of accountability (Cohen, 2025; Iliadis and Acker, 2024). As authority is increasingly wielded by those outside of government, the legitimacy of their authority, and the practices used to establish their legitimacy, must be continually reassessed. In this article, we demonstrate the need to examine legitimacy not only as a theoretical construct, but as an empirical boundary defined and maintained through the everyday practices of those in power. This has important implications for who has the authority to enact policy, and on what grounds—both within the administrative state and beyond.

Footnotes

Acknowledgements

The authors presented an earlier version of this Article at the Privacy Law Scholars Conference, May 30–31, 2024, at the Georgetown University Law Center. The authors are thankful for comments from those participants and particularly for feedback from Kendra Albert, Lindsey Barrett, Julie Cohen, A. Feder Cooper, Jake Goldenfein, Karen Levy, Kirsten Martin, Jeremy Seeman, Alicia Solow-Niederman, Richmond Wong, and Elana Zeide. This work was supported in part by the Initiative for Democracy and Civic Empowerment at the University of Michigan.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: Dr. Jacobs has received funding from Microsoft and is a 2024 Microsoft Research AI & Society Fellow. Both authors received funding for this work from the University of Michigan Initiative for Democracy & Civic Empowerment.

Declaration of conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.