Abstract

Governments around the world are increasingly turning to algorithms to support or fulfil administrative tasks – from automating social benefit regulation to the automatic determination of the merit of court cases. This global trend has been accompanied by an increasing number of scandals that often resulted from a mismatch between the government's implementation of an algorithmic process and public expectations. These (violated) expectations concern several factors of the deployment, which are currently understudied. This article analyses how six of these factors – institution, aim, legal field, data provenance, trigger, and level of automation – influence social acceptance of automating administrative and legal processes in France. From our factorial vignette study with 6 variables across 540 vignettes and N=7,560 assessments, we draw three main conclusions. First, we find that the institution, aim, data provenance, trigger and level of automation are statistically significant in driving the acceptance of automatically processable regulations. Second, we find that acceptance across all conditions is only slightly positive on average, when expressed on a scale from −5 to +5. Third, and overall, our study confirms that the social acceptability of the implementation of algorithms in administrative and legal processes is highly dependent on specific implementation and contextual details; public discourse on the implementation of such algorithms hence requires information about the specifics of their deployment.

Introduction

Public administrations increasingly deploy algorithms to render administrative processes more accessible and efficient (Tamò-Larrieux et al., 2025). The academic literature as well as reports from civil society have documented these practices and raised awareness about the various social implications that automation in the public administration entails (Gerdon et al., 2022; Kolkman et al., 2024; Mittelstadt et al., 2016; Munch et al., 2024). Concern has grown in particular where the automated public administration processes prescribe a legal process, a practice which has been termed computational law, legal artificial intelligence (AI), or automatically processable regulation (APR, see Guitton et al., 2022a). Those terms have in common the intentional translation of a regulation into a computer programme so that it can be executed automatically (e.g. enabling a legally binding decision to be taken, determining if social benefits law allows for certain benefits, enabling a judge to render a decision about whether someone should be released on bail). We adopt the terminology of ‘APR’ in this article as we deem it more accurately and narrowly defined while at the same time encompassing certain trends in the automation of public administration, such as automatic decision-making. Namely, APR is defined as: ‘pieces of regulation that are possibly interconnected semantically and are expressed in a form that makes them accessible to be processed automatically, typically by a computer system that executes a specified algorithm, i.e., a finite series of unambiguous and uncontradictory computer-implementable instructions to solve a specific set of computable problems’ (Guitton et al., 2022a). Past implementations of APR include obtaining access to social benefits and issuing binding tax invoices (Gutknecht, 2025; Peeters and Widlak, 2023).
A concrete and well-documented example relates to a law for a tax rebate, the New Zealand Rates Rebate Act, which states that a taxpayer is entitled to a rebate calculated as such: ‘two-thirds of the amount by which those rates exceed $160, reduced by (ii) $1 for each $8 by which the ratepayer's income for the preceding tax year exceeded $26,510, that last-mentioned amount being increased by $500 in respect of each person who was a dependant of the ratepayer at the commencement of the rating year in respect of which the application is made; or (b) $665,- whichever amount is smaller’. This part of the law would then be transformed into computer code as presented in Figure 1.

One automatically processable regulation (APR) version of New Zealand's Rates Rebate Act (from Tamò-Larrieux et al., 2025).
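As a rough illustration of what such a translation involves, the quoted provision can be sketched in Python. This is one reading of the excerpt above, not the code shown in Figure 1: the function name `rates_rebate`, the counting of only complete $8 steps in the income reduction, and the floor of zero on the rebate are all assumptions made for the example.

```python
def rates_rebate(rates: float, income: float, dependants: int) -> float:
    """Hypothetical encoding of the quoted Rates Rebate Act excerpt."""
    # Income threshold: $26,510, increased by $500 per dependant.
    income_threshold = 26_510 + 500 * dependants
    # (i) Two-thirds of the amount by which the rates exceed $160.
    base = 2 * max(rates - 160, 0) / 3
    # (ii) $1 off for each $8 of income above the threshold.
    # Assumption: only complete $8 steps are counted.
    reduction = max(income - income_threshold, 0) // 8
    # Assumption: the rebate cannot become negative.
    option_a = max(base - reduction, 0)
    # The rebate is the smaller of option (a) and the $665 cap in (b).
    return min(option_a, 665)
```

Under these assumptions, a ratepayer with $1,000 in rates, an income of $27,310 and no dependants would obtain 560 − 100 = $460; changing any input and re-running the function shows how the entitlement would move.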

The implementation of the computer code therefore allows individuals to understand how the regulation applies to their specific case and, if their situation changes, what the impact would be. Similar implementations across many legal fields have already taken place, giving users a way to learn the law. APR is hence broader than automated decision-making systems in that it can be used outside of decision-making processes (notably to foster access to the law), and it can be used outside of state entities by private actors seeking to replicate some of its advantages.

But the automation of administrative and legal processes raises further implications, from the range of possible interpretations of the law, to the access to the implementation choices depending on the very specific context in which APR is deployed (Cobbe et al., 2020; Diver et al., 2022; Guitton et al., 2022b; Hildebrandt, 2020). Despite the benefits of automating administrative and legal processes, legal scholars have voiced concerns about formalising administrative/legal processes, warning that code might pose a threat to the nature of law, the democratic principles which underpin our society, and to power relations (Hildebrandt, 2020; Brownsword, 2019). Automated administrative and legal processes introduce social challenges, such as failures to uphold public values like fairness and meaningful human involvement, and technical ones, such as a lack of accuracy and reliability. Addressing these socio-technical challenges requires a multidimensional approach, addressing the design, validation, use, management and oversight of automation projects (Novelli et al., 2024; Wieringa, 2020). This also means ensuring legitimacy at every stage: from gathering data and decisions (input, see e.g. Kolkman et al., 2024), to how they are handled (process, see e.g. Janeček, 2023), to whether the outcomes are fair and acceptable (output, see e.g. Grimmelikhuijsen and Meijer, 2022).

Besides the challenges that these projects trigger, the bulk of them are conducted with little to no consultation of the concerned citizens, leading scholars to conclude that ‘the citizen's perspective is missing’ (in this case in Finland; Esko and Koulu, 2023). To remedy this missing perspective, scholars have started surveying the public's position towards automated decision-making in public administrations in the United States (see e.g. Waldman and Martin, 2022; Grimmelikhuijsen and Meijer, 2022; Lünich and Kieslich, 2024; Sidhu et al., 2024), notably looking at whether the decisions are perceived as being legitimate. It is hence warranted to expand such studies so that they address specifically the social acceptability of APR in public administration.

Social acceptability, though rarely defined (Wüstenhagen et al., 2007), generally refers to public support for a policy, either as passive behaviour or as attitudes before its implementation (Schuitema and Bergstad, 2018). Which public is required to bring support can be controversial (Yates and Lalande, 2021). Passive behaviour refers to the stance that acceptability is ‘the absence of social disapproval’ (Vandenbos, 2007), with disapproval expressed in the forms of reactions such as contestations ranging from starting a petition to running afoul of the policy. The passive behaviour definition is often to be found in the human–computer interaction literature (Alexandre et al., 2018; Koelle et al., 2020; Koelle et al., 2019), where certain distinctions exist, for instance between perspectives centred on the community a person belongs to, or their social structure (Koelle et al., 2020). The human–computer interaction's emphasis on users is most prominently evidenced with the seminal work of the ‘Technology Acceptance Model’ of Davis et al. (1989), and in the ‘Unified Theory of Acceptance and Use of Technology’ of Venkatesh et al. (2003). Factors at the level of the individual certainly do play a role, for instance when it comes to driving the two-pronged inequality gap between those possessing the technology and those who do not, as well as those who use it and those who do not (Granjon, 2022).

The findings from the literature on user acceptance contrast with those from the literature on social acceptance. Generally, beyond factors at the individual level, the characteristics of a system and its design, as well as the conditions of its implementation, significantly shape acceptance, including what is at stake and how individuals can navigate the process. For example, Schuitema and Bergstad (2018) highlighted how social acceptability is a precondition for (environmental) policy acceptance, including individual outcomes, collective outcomes and perceived fairness. Furthermore, Chan et al. (2025) showed that the exact design of e-government services influences citizens’ experiences based on the public value perspective. In the context of government automated decision-making, public acceptance is shaped by a few further factors: the broader policy context, accuracy of outcomes and procedural fairness, including opportunities for human communication and perceived control over the process (Haim and Yogev, 2025); variations depending on the type of decision, perceived fairness and efficiency (Starke et al., 2022), political ideology, and personal demographics (Sidhu et al., 2024; Granjon, 2022); and citizen involvement in the design and implementation process to support legitimacy, trust and social responsiveness (Gesk and Leyer, 2022). Understanding social acceptance might provide crucial insights for effectively deploying automation in the public sector (Gesk and Leyer, 2022; Parycek et al., 2023). Such knowledge can guide policymakers and administrators in deciding first whether automation of administrative and legal processes should be pursued at all. And if automation should be pursued, how should policymakers and administrators align the implementation with the public's expectations?

In this article, we investigate through an online experiment the effect of different contextual factors of automating administrative and legal processes on social acceptability. We acknowledge that cultural differences are central in social acceptability studies (Kothaneth, 2010; Lee et al., 2013), and that cultural differences are not bound to a specific country. However, for the experiment we needed to focus on a large enough population, which is why we targeted French residents. This choice is supported by the development of the proactive administration in France (Labo Société Numérique, 2023), where there is an increasing number of initiatives to simplify administrative procedures by reaching out to citizens, from pre-filling administrative forms based on data held by the administration (e.g. tax returns), to experiments that proactively inform citizens of their rights (Direction Interministérielle du Numérique, 2023, 2023), to the automatic payment of allowances (e.g. automatic payment of Chèque Énergie). As part of this drive to simplify administrative procedures, legal provisions have been passed, for example to facilitate data sharing between administrations (Loi n° 2022–217).

The online experiment (see Figure 2) specifically focused on realistic APR projects and tested how different factors (institution, aim, legal field, data provenance, trigger and level of automation) influence the social acceptability of APR in two different contexts: one general, with actors ranging from judges to MPs to public administration clerks, and one ‘narrow’, focused only on projects within public administration that would implement a version of proactive administration.

Overview of the experiment conducted on the social acceptability of automatically processable regulation (APR).

The rest of the article is structured as follows. We first proceed with a methodology section discussing each hypothesis and the experiment design. On this basis, we briefly present which hypotheses can be accepted (institution, aim, data provenance, trigger and level of automation are statistically significant in driving the acceptance of APRs). Finally, we discuss these results in the last section.

Methodology

Hypotheses

There are a great many possible factors influencing the social acceptability of APR; we decided to focus on eight, with the rationale for the choices as well as for their associated values presented here.

First, there are different ways of classifying automation of administrative/legal processes (Tamò-Larrieux et al., 2025), and we rely for the experiment design on the conceptualisation of ‘automatically processable regulation’ (APR) by Guitton et al. (2022a). The authors differentiate how APR can be used in different settings, ranging from judges using risk assessment instruments to gauge the probability of re-offence to a social benefits clerk verifying eligibility. There has already been much pushback against systems used by judges to derive a probability of re-offence, the most famous of which is probably COMPAS (Van Dijck, 2022). The system is not public and the assumptions behind the model can therefore only be guessed; defendants usually also do not know whether a judge has used the system or what the output was, leaving little possibility for recourse. In criminal court proceedings, the stakes are therefore high, with deprivation of liberty a possible outcome. Yet other possible cases include members of parliament using APR to summarise past amendments to the law (Gesnoin et al., 2024). Here the stakes are not on a personal level but could influence the debate and vote in plenum. Going further, prior research highlighted different forms of support within public institutions administering social benefits, be it administering family benefits, unemployment, health insurance or pensions (Wendt et al., 2011), as the problem they seek to solve and the bureaucratic challenges that they face when doing so differ. We hence propose, in line with past research (Grönlund and Setälä, 2012), that:

The intended public institution to use the APR impacts social acceptability.

Second, prior research on acceptance of automation has shown that the ‘type of task’ mattered (Castelo et al., 2019), notably whether the task involved emotions rather than cognition (Lee, 2018). APR has two main aims, focused on efficiency and on accessibility to the law, respectively. APR may be developed for individuals to query the law – for example how to handle one's rights as a tenant versus the landlord's rights (Westermann and Benyekhlef, 2023). The usage is therefore for them to better understand the law in force as applied to their specific legal quandary. It is reasonable to expect more social acceptability, as different stakes are in play, for systems geared towards fostering access to the law than for systems geared towards more ‘efficient’ decision-making by authorities. We hypothesise that:

The aim of the APR impacts social acceptability.

Third, as a consequence of different public institutions using APR for different aims, it follows that APRs are likely to concern different legal fields – a variable which has already been the subject of previous studies (Yalcin et al., 2023). The nuance we seek to introduce is that the same institution – say, a judge – might be perceived differently depending on whether they leverage APR in criminal proceedings, in ruling on a tax case (Spire, 2018), in an individual's naturalisation case, or in administrative decisions related to social benefits. All come with different levels of politicisation (also referred to as hierarchy in agenda-setting; Ullrichova, 2023), and as such, with potential differences in support. As the experiment considers the narrow case of the proactive administration focusing on social benefits, we can also distinguish within this topic a range of benefits with different levels of politicisation and associated support, namely family allowance, health insurance, unemployment, pension or housing benefits (see various polls ranking concerns, e.g. from YouGov 1 ), all representing different fields. We therefore hypothesise that:

The legal field of the APR impacts social acceptability.

Fourth, the data underpinning how administrative/legal processes are automated could matter (Diver et al., 2022). More specifically, the context through which the data was obtained (Nissenbaum, 2011), for instance whether a user provided this data to a specific institution for a use only by this very institution, or whether an institution obtained it via a different channel unknown to the user, could have an impact on social acceptability (past research in the context of sharing health data evidenced this, Varhol et al., 2023). In order to test the hypothesis that data provenance impacts social acceptability, three possible values are in scope. First, that the data used by an institution comes from the user providing this data to the institution specifically. Second, that an institution uses all the data that the user gave to the state (meaning other public institutions). And third, an institution uses all public data available, meaning including data published online. Drawing on the work of ‘contextual integrity’ (Nissenbaum, 2011), it is questionable whether individuals would have reasonable expectation of the state using their online published data this way and consequently, this would have an impact on their perception of the implementation, and on their support thereof.

The data provenance of the APR impacts social acceptability.

Fifth, we hypothesise that there could be differences depending on who triggers the process, whether it is a citizen or an institution, or even, whether there is a need for someone to trigger it at all. The core tenet of the proactive administration literature is that the state's role should be to provide a person with their right (e.g. to a social benefit) without the need for the person to start an administrative process (Marzolf, 2024).

Who triggers the APR impacts social acceptability.

Sixth, certain automated administrative/legal processes require input from the citizen, while others will seek to remove citizen input completely in an effort to maximise automation (Guitton et al., 2022b). Previous research has shown how humans react differently when presented with different levels of automation (Schaefer et al., 2016). When it comes to APR, it is similarly possible to imagine systems in which the affected individual has to manually validate all of the data, and systems in which this is all done in the background without their involvement. In the first case, control is retained; in the second, the individual gains the comfort of delegating and not having to sift through their own data. We therefore hypothesise that:

The level of automation for data entry impacts social acceptability.

Past research has also shown how age (Sidhu et al., 2024; Niehaves and Plattfaut, 2014; van Deursen et al., 2011; Guner and Acarturk, 2020; Granjon, 2022) plays a role in explaining support in technology, and by extension, could play a role in the context of automated decision-making.

Age impacts social acceptability of APR.

Lastly, we are interested in looking at the reasons behind the support. There is already wide research on the different reasons behind policy choices, notably in areas different from the one under focus here. We leverage a review on reasons to support climate policies from Drews and van den Bergh (2015) to shortlist eight possible reasons that are applicable to the case of APR. Of all these reasons, we hypothesise that one of them will be a much more frequently cited driver (see for instance Tamò-Larrieux et al., 2025), namely trust:

Trust/distrust of institutions is the most frequently cited explanation behind a person's social acceptability of APR.

We will consider all hypotheses at alpha=0.05, meaning that hypotheses whose statistical tests yield p-values below this threshold will be deemed accepted. The threshold is common in the social sciences for hypotheses which have reasonable odds of being accepted, as is the case here following the justification behind them (Lakens et al., 2018).

We also emphasise that we attempt to differentiate the support for the law from the assessment of it being turned into an APR. For instance, in the context of an assessment for social benefits, we do not seek to survey the social acceptability of an institution administering social benefits and are only interested in the perception of this institution using an APR to either grant a benefit to or remove it from an applicant. We do acknowledge, however, that this is a confounding variable (see the Discussion section).

Design: Measuring social acceptability

Our main method of study replicates what is common in many studies of social acceptability (Koelle et al., 2020): a survey with an eleven-point Likert scale (from −5 to +5). However, in light of the lack of validated questionnaires (Koelle et al., 2020) and in order to be able to study the effects of different variables, we opted for a factorial vignette study design. Vignette studies are well suited to study multiple causal, context-dependent factors affecting an outcome (Atzmüller and Steiner, 2010). In a factorial vignette design, several factors are tested simultaneously against the participants’ perception of the vignette, making it possible to measure the perception of factors in vignettes relative to one another (Waldman and Martin, 2022). Then, the participants are asked to answer one to two questions.

We used one experimental design that contained two phases to assess the social acceptability of APR projects: one phase looking at the broad range of APR applications, a second phase narrowing it down to a specific case, the one of proactive administration. Within proactive administration, only public administration clerks would use an APR to decide on matters of social benefits (housing, family, unemployment, etc). The scope is hence more restricted than in the general case where a range of actors (from MPs, to judges, to clerks) can use APR in a range of situations. The narrow case is notably much closer to an actual case study for which, at the time of the research, much discussion had occurred but without yet an actual implementation. The narrow case, more specific, is therefore intended as mechanism probing, and will allow us to compare whether we can reach the same conclusions as in the general case. Both phases were pre-registered with this aforementioned framing.

Basing the investigation on a vignette study allows us to include a wealth of factors that can impact social acceptance of APR, as evidenced in the aforementioned section on hypotheses. These factors need to be tested in combination with each other, and this cannot be done in a traditional 2×2 or even 3×3 vignette study, in which 2 variables with 2 possible values each (correspondingly 2 variables with 3 values each) are tested. With the factorial vignette design, we can modulate a much greater number of variables across a wider range of values, test the social acceptability of each resulting scenario, and ask participants about their rationale behind it (Table 1 below provides an example of what this meant when varying only across two values for ‘process trigger’).

Example of vignettes with all the varying factors highlighted in bold; only the variable ‘process trigger’ changes between the two examples; participants did not see the brackets with the name of the variables.
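To make the combinatorics concrete, a full factorial design crosses every value of every factor. The sketch below uses only three of the six factors, with value labels paraphrased from the hypotheses; the labels and the helper `build_vignettes` are illustrative assumptions, not the wording or the full set of values used in the study.

```python
from itertools import product

# Illustrative subset of factors; labels are assumptions paraphrased from the hypotheses.
factors = {
    "institution": ["a judge", "a member of parliament", "a public administration clerk"],
    "aim": ["decide more efficiently", "make the law more accessible"],
    "data_provenance": [
        "data the user gave to this institution",
        "data the user gave to any state institution",
        "all publicly available data",
    ],
}

def build_vignettes(factors):
    """Return one dict per combination of factor values (full factorial)."""
    names = list(factors)
    return [dict(zip(names, combo)) for combo in product(*factors.values())]

vignettes = build_vignettes(factors)
print(len(vignettes))  # 3 * 2 * 3 = 18 combinations
```

Each additional factor multiplies the number of combinations by its number of values, which is why a six-factor design quickly reaches several hundred vignettes.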

Once the participants have read the vignette, they are presented with two survey questions. The first survey question used is similar to those of previous surveys on social acceptability of different topics (de Groot and Schuitema, 2012; Flores, 2019; Ščasný et al., 2017; Atzmüller and Steiner, 2010; Soydan, 1995): ‘How much would you support a policy enabling the following situation to occur?’ Furthermore, we asked participants to provide a rationale for their answer with the question adapted from Drews and van den Bergh (2015) in the context of policy support for climate change, the reason being that we sought to tease out a wide range of factors which were not too narrowly defined by the literature on technology acceptance (Alexandre et al., 2018). We acknowledge that there are biases when asking a population for their preferences (Murphy et al., 2005), and more so when the topics are highly political and associated with a specific moral slant (e.g. political support for extreme parties, Breen, 2000). Such biases also transpire when surveying online political opinions, with a slant towards ‘age, gender, race, education and employment status’ (Hargittai and Karaoglu, 2018). We present below the demographics of the participants alongside these characteristics in order to be as transparent as possible about the possible slant (see the ‘Participants’ section).

The vignette allowed us to test the different hypotheses and their different modulations (see Supplemental Appendix A for the different variations possible on the vignettes). For each of the six hypotheses, there could notably be a lot of different variations in answers, and that is why we separated between a broad and a narrow phase. While the broad phase sought to capture nuances across the spectrum of possible APR, the narrow phase zeroed in on the case study of proactive administration to probe further the possible nuances that could emerge when restricting a few of the numerous variables. Also, the case study was selected because it is currently debated and experimented with in French society (Marzolf, 2024; Direction interministérielle de la transformation publique, 2023); it is therefore not a mere hypothetical question regarding its social acceptability but one set in a concrete, current debate. While the hypotheses and variables remained the same, we modulated the answers to the variables to reflect the different possibilities to implement ‘proactive administration’. Lastly, while the topic of proactive administration is currently debated in public fora, there were not yet actual cases to delve into and analyse at the time of the research, hence preventing the use of another methodology such as the case study methodology.

Variables

The variables follow the hypotheses as follows (Table 2), with a few combinations removed in the narrow phase as they are not possible:

Overview of possible values for each variable in the general and narrow case.

The analysis of the general phase runs independently from the one in the narrow phase. They both follow the same steps below, and we will look at whether they yield different results.

We conducted three ‘groups’ of statistical tests.

First, in order to decide on the eight hypotheses around both choices of APR implementation and reasons for policy support, we will conduct two linear regressions of the type (omitting covariate and error terms):
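A form of this regression consistent with the symbols defined in the following sentence, given here as an assumed reconstruction rather than the exact specification used, is:

```latex
Y = \sum_{i=1}^{7} b_i X_i = b_1 X_1 + b_2 X_2 + \dots + b_7 X_7
```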

Where, for a given participant, b_i is the impact factor for the clustered scores X_i corresponding to the hypothesis H_i. The p-value of b_i is informative for the statistical significance of hypotheses 1 to 7. Y will be the support given to the vignette (question 1), whereas X_7 will be demographic data provided by the participant.

Second, unrelated to the decision on the hypotheses, we are interested in knowing whether there are differences within each variable. For instance, by looking at the institution, it may be that members of parliament using APR are more socially accepted than judges doing so. We carry out an analysis of variance (ANOVA) within each variable and post hoc tests to determine if this is the case. ANOVA is a common statistical technique that makes it possible to identify whether there are statistically significant in-group differences (without stating which in-groups differ, and therefore further tests are then required). In the current study, that would mean, for instance, identifying that social acceptability differs across public institutions, with at least one of the in-group members (judges, MPs or public administrative clerks) receiving different scores. Third and last, in order to decide on H8, we looked at the most frequently cited reason across all vignettes.
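The ANOVA step described above can be sketched as follows. The scores are invented for illustration only (they are not data from the study), and the function `one_way_anova_f` is a hypothetical helper that computes just the F statistic; obtaining the p-value and running post hoc tests would normally be left to a statistics library.

```python
from statistics import mean

def one_way_anova_f(groups):
    """F statistic of a one-way ANOVA: between-group over within-group variance."""
    all_scores = [score for group in groups for score in group]
    grand_mean = mean(all_scores)
    k = len(groups)       # number of groups (e.g. institutions)
    n = len(all_scores)   # total number of assessments
    # Variation of the group means around the grand mean.
    ss_between = sum(len(g) * (mean(g) - grand_mean) ** 2 for g in groups)
    # Variation of individual scores around their own group mean.
    ss_within = sum((score - mean(g)) ** 2 for g in groups for score in g)
    return (ss_between / (k - 1)) / (ss_within / (n - k))

# Hypothetical acceptance scores on the -5 to +5 scale, one list per institution.
judges = [0, 1, 2]
mps = [-1, 0, 1]
clerks = [2, 3, 4]
f_stat = one_way_anova_f([judges, mps, clerks])
print(f_stat)
```

A large F value indicates that at least one group mean differs from the others, without saying which one, which is why post hoc tests follow.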

Participants

Within the vignette study, we focused on inter-subject variations as many other studies have done (Nivette et al., 2024; Schiff et al., 2022), with the dependent variable being the social acceptability score obtained from question 1. We focused on a between-subjects design because the large number of factors to test and of possible variations makes it too difficult to sample and realistically obtain viable results with a within-subject design: there are 432 combinations possible in the general case, and 108 in the narrow case. Per vignette, we sought to have more than 10 answers, and decided to give each participant 5 vignettes in the general case and 2 vignettes in the narrow case, necessitating, respectively, ca. 5 and 2 minutes to complete. The choice of five vignettes per participant was made to avoid survey fatigue during participation, and also to reduce the number of participants that would otherwise be needed with a lower ratio of vignettes per participant.

We conducted the survey online with participants recruited via Prolific during February–March 2025. Participants were French speakers based in France for both studies; we conducted the study in French. 2 We were able to recruit 1,067 individual participants in the general case, and 1,074 in the narrow case, meaning that we obtained a total of respectively NGeneral=5,370 and NNarrow=2,190 vignettes assessed (NTotal=7,560). The distribution of answers across the vignettes is presented in Table 3, with on average roughly 12 and 20 participants assessing each individual vignette in the general and narrow case respectively (with respective standard deviations of 3.3 and 3.5).
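The sampling arithmetic behind these figures can be checked with a short sketch. It assumes vignettes are allocated uniformly at random, and the helper names `min_participants` and `mean_answers_per_vignette` are hypothetical; the first function gives a lower bound for reaching the target number of answers per vignette on average.

```python
from math import ceil

def min_participants(n_vignettes, answers_per_vignette, vignettes_per_participant):
    """Fewest participants needed to reach the target answers per vignette on average."""
    return ceil(n_vignettes * answers_per_vignette / vignettes_per_participant)

def mean_answers_per_vignette(n_assessments, n_vignettes):
    """Average number of assessments each vignette receives."""
    return n_assessments / n_vignettes

print(min_participants(432, 10, 5))  # general case: 864
print(min_participants(108, 10, 2))  # narrow case: 540
print(round(mean_answers_per_vignette(5370, 432), 1))  # 12.4
print(round(mean_answers_per_vignette(2190, 108), 1))  # 20.3
```

The last two values match the reported averages of roughly 12 and 20 assessments per vignette.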

Participants’ random allocation of vignette led to a sufficient number of assessments per vignette.

In light of previous research on the impact of conducting surveys online with ‘age, gender, race, education and employment status’ (Hargittai and Karaoglu, 2018) playing a role, we provide a distribution of these factors. Respondents were on average 32.7 years old in the general case, and in the narrow case on average 32.6 years old (in comparison with an average age of 42.7 in France, Insee, 2025a). The gender distribution was 49% male and 46% female in the general case, and 48% male and 47% female in the narrow case, broadly in line with the gender distribution in France (Insee, 2025b). Self-reported ethnicity was 73% White, 4.94% Asian, 4.9% Black and 7.19% Other (and the rest preferred not to say); 50.95% had no higher education, correspondingly 49.05% had one; and 41% of respondents worked a full-time job, 9% a part-time job, 8% were unemployed, 4% were not in paid work (e.g. retired or disabled) and 38% did not provide data. For these last three factors, comparison with the French resident population is difficult as there is no official data collected on ethnicity, the level of education varies greatly as a function of age (Insee, 2025c), and the definition of being active used in official statistics (Insee, 2025d) differs from the one used by Prolific.

Results

Table 4 provides a summary of the results; the comprehensive quantitative results, including the ANOVA results, are available online (see the ‘Data Statement’ section).

Summary of the results showing which hypotheses are accepted; blank cells mean that there was no statistical significance.

Lastly, concerning H8, trust/distrust does emerge as the most frequently cited reason behind the acceptance level in both the general and narrow cases, thereby allowing us to accept the hypothesis (see Figure 3 for the distribution of the other reasons cited).

Trust/distrust of the institution is the reason most often cited by participants for the acceptance level they gave to the vignette.

Discussion

General vs. narrow case: Confirmatory evidence

Across the two phases, we find confirmatory evidence on four points. First, public administration enjoys the highest acceptance of the three institutions. This can also be seen clearly in graph form (see Figure 4; for the sake of space, we do not include equivalent figures for the other variables). As stated in the hypothesis, the stakes may play a role, with a judge as the ultimate authority possibly having a more severe impact. It is surprising that automation of administrative and legal processes by MPs ranks at the bottom of all three institutions, even though the public would not feel their use of APR directly in individual cases but arguably only via changes to the legislation. We therefore suspect that confounding effects are at play here, notably the public's often poor perception of MPs (a survey from the Parliament itself showed that only 40% of respondents trusted it while 40% found it ‘little useful’, Assemblée nationale, 2021). The fact that distrust of institutions is the most frequently mentioned reason (see Figure 3) would support this interpretation.

Public administration is the most accepted as an institution to implement automatically processable regulation (APR).

Second, regarding data provenance, there is a clear preference against using all publicly available data. Again, as hypothesised, this confirms the theory of contextual integrity: users did not publish data online with the expectation that a state institution could use it, and such use would therefore constitute a violation of their expectations.

Third, users clearly prefer to trigger the process themselves. While this is not so central to the general case, it is rather central to the concept of proactive administration, namely that the state would take over, if not the whole process, then at least its initiation (Chevallier, 2024). As the concept of proactive administration is still emerging, we argue that the trigger should constitute an important element for future discussions.

And fourth, the highest automation level (without any review) is associated with the lowest acceptance, while the lowest automation level – as well as the user triggering the process – corresponds to the highest acceptance. This last result, although specific to the context of APR, is yet another piece of evidence that policy makers need to be very careful when considering the broad implementation of algorithms in public institutions. Given how difficult it is to translate academic findings into the policy-making space, confirmatory evidence should hence be welcome to strengthen the case for managing public expectations and implementations in line with democratically expressed preferences.

Overall: Limited social acceptance of steering society through algorithms

Across all vignettes, the level of social acceptability remains remarkably modest on average (see Figures 5 and 6 for the distribution). Of all possible combinations of variables tested, the maximum acceptance obtained was 3·5 in the general case and 2·53 in the narrow case, while the average is 0·18 in the general case and 0·60 in the narrow case. This finding is in line with previous research: in a study carrying out an international comparison amongst 16 countries, France ranked the lowest in terms of acceptance of ‘algorithmic government’ (Sidhu et al., 2024; see as well Danaher et al., 2017 on the topic), a theme very close to the acceptance of APR. Furthermore, it is also remarkable that the highest support for the variables ‘automation level’ and ‘process trigger’ corresponds to the values with the least automation (and so with the most manual input and review); conversely, when there is no human review and hence the highest form of automation, acceptance is the lowest. Taken together, these points indicate that the French public has limited support for APR, and that authorities should therefore take this into account when deciding whether, and if so how, to pursue an APR project so that its implementation aligns with public expectations and support. This point is even more pertinent when considering that the main reason given by participants for their acceptance level is their trust/distrust of the institution implementing it.

The distribution of acceptance in the general phase is spread out (average=0·18, SD=2·70).

The distribution of acceptance in the narrow phase is also quite spread out (average=0·60, SD=2·50).

Among the legal fields, the highest support was for taxation. This contrasts with the lowest support, which was for criminal law, where judicial consequences can be even more extensive (with prison sentences). This low support for criminal law is in line with the push back against the use of controversial risk assessment instruments such as COMPAS in the United States or OxRec in the United Kingdom (Van Dijck, 2022).

It is noteworthy that the aim of the APR (whether it seeks efficiency gains or increased access to the law) does play a statistically significant role in public support for an implementation, but it is somewhat surprising that efficiency gains have more support than accessibility to the law. One explanation for this result might come from the vignette text, where we implemented ‘accessibility’ with the wording that the APR would ‘support the [institution]'s interpretation’ of the law. This amounts to accessibility of the law for legal professionals rather than for laypeople; a wording geared more towards making the APR support laypeople's understanding of the law (which was difficult to align with the scenario in the vignette text) could bring different results.

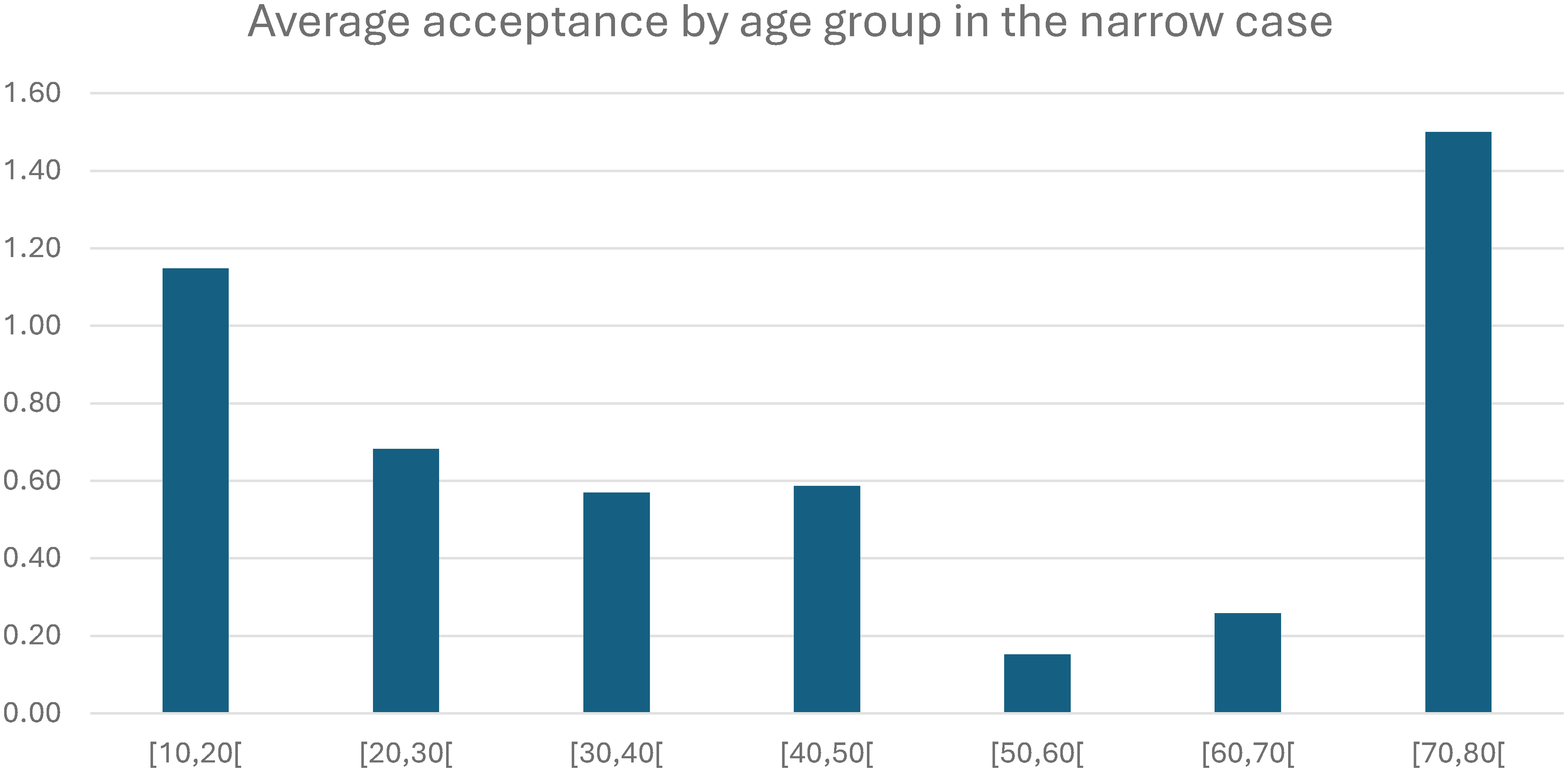

Also interesting is that hypothesis H7 on age is at the limit of acceptance in the narrow case. Looking at the direction of the relation in the narrow case, we can remark that younger participants give a higher acceptance for APR implementations across all vignettes combined (see Figure 7). One might think that this is because older people have, for instance, a higher disbelief in technology whereas younger people distrust institutions more, but there is no correlation between a participant's age and the reason they give for their answer (Pearson's correlation=3·39E-03). In the same vein, a linear regression of reason against age does not yield statistically significant results (p=8·74E-01), similarly to an ANOVA of age grouped by reason (p=1·75E-02). Lastly, the spike in acceptance across all vignettes for the age group of [70;80[ years old is also noteworthy; while unusual (Niehaves and Plattfaut, 2014; van Deursen et al., 2011), similar results have been noted in other studies (Guner and Acarturk, 2020).

The average acceptance per age group tends to show that younger participants give a higher acceptance than their older peers (the groups [60;70[ and [70;80[ are the exceptions).
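For readers less familiar with the checks mentioned above, the following minimal sketch shows how a Pearson correlation and the F statistic of a one-way ANOVA can be computed. All data in it are hypothetical toy values, not the study's data, and the function names `pearson_r` and `one_way_anova_f` are our own for illustration. The sketch stops at the F statistic: turning it into a p-value requires the F distribution, typically taken from a statistics library.

```python
import math
import statistics


def pearson_r(xs: list[float], ys: list[float]) -> float:
    """Pearson correlation coefficient between two equally long sequences."""
    mx, my = statistics.fmean(xs), statistics.fmean(ys)
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    var_x = sum((x - mx) ** 2 for x in xs)
    var_y = sum((y - my) ** 2 for y in ys)
    return cov / math.sqrt(var_x * var_y)


def one_way_anova_f(groups: list[list[float]]) -> float:
    """F statistic: between-group mean square over within-group mean square."""
    all_values = [x for g in groups for x in g]
    grand_mean = statistics.fmean(all_values)
    k, n = len(groups), len(all_values)
    ss_between = sum(len(g) * (statistics.fmean(g) - grand_mean) ** 2 for g in groups)
    ss_within = sum((x - statistics.fmean(g)) ** 2 for g in groups for x in g)
    return (ss_between / (k - 1)) / (ss_within / (n - k))


# Hypothetical toy data: age against acceptance on the -5 to +5 scale.
ages = [22, 29, 35, 41, 48, 56, 63]
acceptance = [2, 1, 1, 0, 0, -1, -1]
r = pearson_r(ages, acceptance)

# Hypothetical toy data: participant ages grouped by the reason they cited.
ages_by_reason = {
    "trust/distrust of the institution": [25, 34, 41, 29, 52],
    "belief/disbelief in technology": [27, 36, 45, 31, 48],
    "other": [23, 38, 40, 33, 55],
}
f_stat = one_way_anova_f(list(ages_by_reason.values()))

print(f"r={r:.3f}, F={f_stat:.3f}")
```

A negative `r` in the toy data corresponds to the direction described above (younger participants giving higher acceptance); a small F statistic would indicate that mean age differs little between the groups of reasons.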

Lastly, roughly 13% of respondents indicated not being able to understand the vignette or the question (the ratio is similar between the general and narrow case). It is, after all, a niche topic which may not be accessible to all. When re-running the statistical analyses with these participants excluded, the results remain unchanged. We therefore interpret this share of the surveyed population not understanding the vignette as reasonably low and as not calling into question the validity of the methodology, vignettes or results.
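The kind of robustness check described above can be sketched as follows: a summary statistic is computed once on all assessments and once after excluding those flagged as not understood, and the two are compared. The record layout (`Assessment`, with an `understood` flag) and the toy values are assumptions for illustration only; the study's actual re-analysis covered the full set of statistical tests rather than a single mean.

```python
import statistics
from dataclasses import dataclass


@dataclass
class Assessment:
    acceptance: int   # answer on the -5 to +5 scale
    understood: bool  # hypothetical flag: participant understood the vignette


def summarise(assessments: list[Assessment]) -> tuple[float, float]:
    """Return the mean and sample standard deviation of the acceptance scores."""
    scores = [a.acceptance for a in assessments]
    return statistics.fmean(scores), statistics.stdev(scores)


# Hypothetical toy sample, not the study's data.
sample = [
    Assessment(3, True), Assessment(-2, True), Assessment(0, False),
    Assessment(1, True), Assessment(-4, True), Assessment(5, False),
    Assessment(2, True), Assessment(-1, True),
]

mean_all, sd_all = summarise(sample)
understood_only = [a for a in sample if a.understood]
mean_understood, sd_understood = summarise(understood_only)

print(f"all: mean={mean_all:.2f}, sd={sd_all:.2f}")
print(f"understood only: mean={mean_understood:.2f}, sd={sd_understood:.2f}")
```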

Legal and design implications

The topic of automation of administrative and legal processes connects with the legal concept of automated decision-making that has legal effects or a significant impact on individuals, and which under the GDPR may only occur if explicitly consented to, needed to fulfil a contract, or authorised by Union or Member State law (Art. 22 GDPR; CNIL, 2018). With respect to the public entities studied within the context of this article, the GDPR constrains the examples used in vignettes. Depending on the process trigger and the automation level of APR, projects could be challenged by affected citizens. Aside from the legal implications that will impact the design of APR, the study reveals a strong need for control over APR deployed by public entities. Whereas it might be argued that some of the preferences expressed, such as manual data input, would be impractical, too burdensome for users, or result in a privacy-paradox-like syndrome (Kokolakis, 2017), they actually reveal a need for individuals to feel and be in control of APR in order to accept these systems.

Many control and oversight mechanisms have been proposed to increase public trust in automated systems deployed by public services: data processing transparency, decision transparency (Grimmelikhuijsen et al., 2021), explicability of the systems' decisions, providing a right to review and appeal an automated decision, or creating an independent oversight body (Ada Lovelace Institute, 2021). In fact, some of those mechanisms already exist in French law. For example, public bodies have transparency obligations on algorithms used to produce administrative decisions (Code des relations entre le public et l'administration, 2015), albeit those obligations are rarely implemented by public bodies (Défenseur des droits, 2024). Overall, the question of what factors increase trust and how to steer – through regulation or design – towards enhancing trust in automation (Tamò-Larrieux et al., 2025) has been an important research field in public administration science and policy studies (Six et al., 2025; Maman et al., 2025). Still lacking in the discourse, however, is a sound understanding of how different components, such as trust in the institutions, in the government overall and in technology, impact the social acceptability of APR in particular, and of what interventions, such as training public servants in how to use APR, could help remedy some of the concerns. Front-line public servants in particular are key actors in ensuring the inclusion of citizens, meaning that they could be important actors in promoting the acceptability of APR projects (Bernhard and Wihlborg, 2022). This seems a particularly important point in the French context, as the digital divide is the object of regular criticism when it comes to the digitalisation and automation of public services, in particular for distancing the most precarious and vulnerable populations from public services (Deville, 2018). Additionally, these populations are often subject to algorithmic discrimination and harms, as attested by the current legal action against the French national office for family benefits (CNAF) for its use of an automated system to target fraud checks (Amnesty International, 2024).

Finally, the very way an APR system is designed can increase its acceptability. The use of participatory design methods, where all stakeholders are included in decision-making processes based on their needs and lived experiences, makes it possible to build a solution that aligns with people's expectations – whether they are citizens or public officials. Participatory approaches can be used not only in the system's design itself (Robinson, 2022) but also in the development and implementation of oversight mechanisms, such as audits or monitoring processes (Hintz et al., 2022). Still, participation in APR, similarly to participation for machine learning, can fall into the trap of ‘participation-washing’ (Sloane et al., 2022). To avoid such a trap, designers of APR should commit to a genuine participatory approach, accepting a long-term engagement as well as adapting the means of participation to the specific context in which it is deployed.

Limitations and future research

The study, as any, has its limitations. There are a host of further factors which we could not test. A small portion of the surveyed population would have wished for more explanations on the topic of APR (the wording may also have been difficult to grasp at times, see Supplemental Appendix A). We only surveyed the French population, and the sample was rather young, with an average age of 33. To limit the costs of the study, we also did not ask for details about people's perception of possibly confounding variables, most prominently their perception of the law itself (regardless of the medium in which it is expressed) and of institutions (regardless of their staged role in the vignette). Another limitation is arguably that the list of reasons for acceptance for H8, which included trust/distrust of institutions, was not presented in a randomised order. On the one hand, all participants saw the same list of reasons, which allows for comparability; on the other, randomising the presentation of the reasons could have provided a certain limited additional strength to the result of H8.

We acknowledge these shortcomings and are of the opinion that follow-up studies involving focus groups aimed at understanding these nuances would constitute a viable future research project. Despite these shortcomings, it would be interesting to track the evolution of acceptance in France by replicating the study cross-sectionally every 5 years or so. Given the fast pace of innovation in both AI and AI in government, as well as the number of scandals which seem to be increasingly appearing (for a review, see Tamò-Larrieux et al., 2025), it is quite conceivable that acceptance will ebb and flow. By trying to understand the nuances in the form of support, we may be better prepared to manage expectations, and governments may better deliver services that their constituency values. As such, any replication could also try to capture such historical factors: whether the participants have been aware of any type of scandal, which type of scandal has influenced their acceptance (e.g. misuse of data, biased and damaging implementation, recourse and retort running for over a decade), or whether changes in the regulatory landscape (e.g. via GDPR, AI Act or others) have, in their own opinion, had an impact on their perception of the social acceptability of APR.

Supplemental Material

sj-pdf-1-bds-10.1177_20539517261431640 - Supplemental material for Steering society through algorithms: Testing the social acceptability for automating administrative and legal processes

Supplemental material, sj-pdf-1-bds-10.1177_20539517261431640 for Steering society through algorithms: Testing the social acceptability for automating administrative and legal processes by Clement Guitton, Vlada Druta, Aurelia Tamò-Larrieux, Estelle Hary and Simon Mayer in Big Data & Society

Supplemental Material

sj-xlsx-2-bds-10.1177_20539517261431640 - Supplemental material for Steering society through algorithms: Testing the social acceptability for automating administrative and legal processes

Supplemental material, sj-xlsx-2-bds-10.1177_20539517261431640 for Steering society through algorithms: Testing the social acceptability for automating administrative and legal processes by Clement Guitton, Vlada Druta, Aurelia Tamò-Larrieux, Estelle Hary and Simon Mayer in Big Data & Society

Footnotes

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.

Declaration of conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Data statement

The anonymised raw data, Supplemental Appendix A with all the vignettes, and Supplemental Appendix B with all the results are available online.

Supplemental material

Supplemental material for this article is available online.
