Abstract

The perceived importance and difficulty of accounting for algorithms in health systems continues to inform scholarship and practice across diverse fields. While accountability is often framed as a normative good, less clear is exactly what kind of normative work accountability is expected to do, and how it is expected to do it. Drawing on contributions from science and technology studies, and especially sociomaterial perspectives on governance, in this article I review how algorithmic accountability has been conceptualized in the academic and grey literature. I introduce five normative logics characterizing discussions of algorithmic accountability: (1) accountability as verification, (2) accountability as participation, (3) accountability as social licence, (4) accountability as fiduciary duty, and (5) accountability as compliance. I critically engage with the styles of valuation these are predicated upon, including how each configures the algorithm as an object of reference, and discuss the implications of this approach for understanding how health-related worlds are created and sustained, and how they might be otherwise.

Introduction

As applications of data and advanced digital technologies become more deeply embedded in public health and healthcare systems, the significance of algorithms within those systems has become clearer (Henwood and Marent, 2019; Kickbusch et al., 2021). Expanding digital infrastructures, intensifying global competition, and rising policy interest have reinforced expectations that artificial intelligence (AI) will make large volumes of data useful in new and powerful ways, contributing to the adoption of AI across clinical, public health, administration, finance, and consumer settings (EU STOA, 2022). While AI and other digital health technologies introduce opportunities for positive change, they can also result in paradoxical outcomes (Hoeyer, 2023; Ziebland et al., 2021). For example, AI systems are often proposed as a solution to health workforce challenges, but may introduce additional administrative tasks for clinicians and patients (Schwennesen, 2019). The creation of new health data ‘spaces’ and ‘platforms’ may seek to improve representativeness in AI model training, but simultaneously introduce opportunities for cybersecurity breaches and secondary uses without consent (Terzis, 2022). These broader changes associated with a shift to datafied health represent significant rearrangements of financial, infrastructural, and technological power, which are increasingly consequential for individual and collective health-related practices (Kickbusch et al., 2021).

In response, academic, policy, and public discourse has emphasized accountability as a desirable, if elusive, component of effective AI governance, with a World Health Organization (WHO) guidance document citing ‘responsibility and accountability’ both as a guiding principle and as a top ‘ethical challenge’ (World Health Organization, 2021). Accountability is appealing to different health system actors, from policymakers to patient groups, as it seems to equip practices of responsibility with teeth. At the same time, accountability has been described as a chameleon-like term, often standing in as a buzzword for higher public values or a proxy for good governance (Dubnick, 2014; Mulgan, 2000). These diverse understandings of and approaches to accountability make for a complex landscape of actors and interests competing for influence in the highly contested domain of health, each of which is associated with very different calculative practices, expectations of value generation, and notions of care (Jerak-Zuiderent, 2015).

Numerous conceptual frameworks have been put forward to address this complexity. One highly cited approach, for example, differentiates between accountability as a mechanism and accountability as a virtue (Bovens, 2010). As a mechanism, accountability is said to represent an institutional or organizational arrangement in which an actor can be held to account by a forum. This arrangement typically comprises: (1) the actor, (2) the forum, (3) the relationship between them, (4) the content and criteria of accounts provided, and (5) consequences which may result from those accounts. In contrast, accountability as a virtue describes a set of normative standards for the evaluation of public actors. These contributions are useful, offering an evaluative scheme that different actors can take up in assessing the suitability of different accountability arrangements, but they often say less about how those arrangements shape their objects of reference in practice, and how they are legitimized, contested, or negotiated.

Science and Technology Studies (STS) contributions, on the other hand, have emphasized the messiness of accountability, where the practical and the normative are not so easily separated (Jerak-Zuiderent, 2015; Neyland, 2016). Accountability arrangements, for example, are often justified through normative criteria regarding which particular actors, fora, accounts, or instruments of change are appropriate in a given situation (Annisette and Richardson, 2011). All accountabilities are ‘performed’ (Musiani, 2015), and made up of diverse and heterogeneous components including organized professions, technologies, standards, laws, regulations, and technical infrastructures. These perspectives on accountability offer an important counterpoint to those privileging human-centric, top-down, and mechanistic understandings at the expense of broader collections of actors, interests, and material devices that aim to produce particular outcomes. This is important, as it can avoid the trap of solutionism and orient attention to the kinds of health-related worlds we want, and to how algorithms and accountabilities themselves are implicated in those worlds.

‘Worlds’ in STS and other allied fields call attention to the practices that bring different realities into being, where multiple and contingent ontologies (e.g. algorithms, health, medicine, and accountability) are shaped by values and performed or enacted in efforts to impose order of some kind (Woolgar and Lezaun, 2013). Worlds are ethical and political precisely because they are often associated with efforts to impose a singular order through sanctioned values and practices, but also occasionally through coercion or force. In this sense, worlds and worlding have been taken up as a postcolonial orientation to alterity (McCann et al., 2013), or as an attempt to highlight modern metaphysics of singularity enacted in ‘centres of calculation’ often located in the Global North (Blaney and Tickner, 2017; Latour, 2012). At the same time, arrangements can be otherwise (Calvert, 2024; Escobar, 2018). To Calvert (2024), ‘otherwising’ is where the transformative potential of these perspectives lies. To consider how worlds can be otherwise is to refuse to take subjects/objects of reference for granted, and to consider what might be needed to advance alternative, more inclusive realities in the face of current and historical arrangements that make claims about who and what has value.

For example, health systems are characterized by complex sets of existing accountability arrangements that world their objects in different ways. For the last century these have especially involved regulatory bodies, professional associations, public and private payers, hospitals, governments, and more (Van Belle and Mayhew, 2016). Since the adoption of new public management reforms in the 1980s and 1990s, accountability arrangements have become highly diverse, ranging from individual-level responsibilities for adverse events (e.g. professional liability, complaints processes) to policy-level contractual requirements variously privileging patient safety, cost-effectiveness, transparency, or equity. Technological developments have been especially consequential, offering new means of oversight and influence in what some commentators have characterized as an ‘audit society’ (Power, 1997). Big data and algorithms are now central in demands for learning health systems (Krumholz, 2014), evidence-based practice (Upshur and Tracy, 2004), and value-based care (Hogle, 2019), all of which are often informed by, and predicated upon, the perceived value and importance of data and AI in addressing various health system challenges and economic and social policy priorities.

In this sense, algorithms can be appreciated as both objects and subjects of accountability in health systems. As objects of accountability, algorithms are increasingly deployed as means to monitor and assess public health and healthcare practices, services, and programmes, as for example in the use of AI for clinical quality improvement (Feng et al., 2022), insurance fraud detection (Cevolini and Esposito, 2020), pharmacist support for identification of atypical medication orders (Woods et al., 2014), or the generation of cardiovascular risk scores (Amelang and Bauer, 2019). Here, algorithms are seen to perform accountability by setting, monitoring, and enforcing specific clinical or health system goals (Hoeyer et al., 2019). As subjects of accountability, algorithms are increasingly recognized by publics, policymakers, system developers, and others as capable of producing unintended consequences, and therefore as in need of oversight, checks and balances, regulations, and more in order to reduce potential harms. These observations are taken up throughout this article, and reflect a position that interventions such as accountability arrangements, and their objects of reference such as algorithms, are co-produced in practices that make those phenomena understandable and relevant for different actors in particular ways. The approach also highlights how algorithmic accountabilities in health systems are always tied to existing health-related practices, and cannot be considered independently of them.

More specifically, drawing on contributions from STS and especially sociomaterial perspectives on governance (Briassoulis, 2019; Woolgar and Neyland, 2013), in this article I review how algorithmic accountability has been conceptualized in the academic and grey literature. To date, most contributions, including those in STS, have focused on individual cases of (health-related) algorithmic accountability in practice, but have not systematically surveyed the variety of forms that accountability takes and the claims that inform them. Going beyond individual cases is essential to understanding the multiple, intersecting, and sometimes competing logics that inform their conceptualization and pursuit in practice. In this sense, the objectives of the review were threefold: (1) to describe normative logics characterizing different approaches to algorithmic accountability in public health and healthcare that appear in the academic and grey literature; (2) to review these logics in terms of the assumptions they implicate about both algorithms and accountability; and (3) to discuss the implications of a sociomaterial approach for pursuing alternative digital health futures. To clarify, by normative logics I mean claims, assumptions, or descriptions of what accountability does, or should do, or how it should be (cf. Ebrahim, 2009). These normative logics are tied to particular value regimes or ‘styles of valuation’, which represent practical arrangements of actors, objects, and calculative practices that shape how accountabilities and algorithms are assessed (Lee and Helgesson, 2020).
While others have examined normative logics of accountability in organizations (Ebrahim, 2009), or approached the topic in relation to transparency (Ananny and Crawford, 2018), as a desired policy outcome (Ada Lovelace Institute, 2021), and as an institutional arrangement (Wieringa, 2020), or introduced more generic types of accountability such as internal or external and vertical or horizontal (Lindberg, 2013), a review of the diversity of normative expectations characterizing algorithmic accountabilities in health systems, and their associated styles of valuation, is lacking. Systematically documenting these logics can point to the kinds of health systems we can expect from a turn to algorithmically mediated health, and how they might be otherwise.

I begin in the second section by briefly describing the approach taken in this article, including key methods, terms, and theoretical concepts employed. The third section introduces five normative logics of algorithmic accountability that appear in the academic and grey literature, and the resulting rules, codes, and practices that constitute different ‘value regimes’ implicated in their assessment. In the fourth section I review the practical implications of a sociomaterial approach to algorithmic accountability, including possibilities for alternative worldings of health-related algorithms and accountabilities. Finally, in the fifth section I briefly conclude with some key questions for future inquiry based on these suggestions.

Approach

Literature reviews, a distinct genre of textual inquiry, are one practical way to identify and engage with the variety of descriptions that characterize diverse goals and practices of accountability (Greenhalgh et al., 2005). Scholarly publications, policy documents, and reports focused on accountability often explicitly describe contrasting visions, goals, or practices of accountability, which implicate different worldings of algorithms and the health-related futures they work to produce. In this sense, these texts make expectations of accountability visible where they might otherwise be taken for granted, and can be used to understand situated practices of accountability, even when they are not taken up unquestioningly in practice. To be clear, the aim of this review was not a thoroughgoing representation of singularly ordered accountabilities represented in textual accounts, but rather a description of the contrasting and sometimes overlapping expectations, assumptions, and normative claims that frame emerging algorithmic accountability regimes, and how the worlds they work to produce might be otherwise (Law, 2004). This matters for accountability, as it is often mobilized as a catch-all justification for action of some kind, however diverse those actions might be.

More practically, this inquiry took the form of a structured review approach (Greenhalgh et al., 2018). Structured reviews differ from systematic reviews in that they do not intend to establish a compilation of every available article that addresses a topic. Rather, they aim to identify a purposive sample of key articles and reports that are illustrative of the literature base (Coyne, 1997; Suri, 2011). Identifying literature sources associated with algorithmic accountability was an iterative process. First, keyword searches were conducted on search engines (for grey literature, such as policy documents, reports, and other generative contributions) and Google Scholar (for academic journal articles, book chapters, and conference papers). Keywords included variations of accountability (e.g. ‘account’, ‘accountable’, ‘accounting for’), algorithms (e.g. ‘algorithm*’, ‘AI’, ‘artificial intelligence’, ‘predic*’, ‘machine learning’), and health (e.g. ‘health*’, ‘public health’, ‘care’, ‘med*’, ‘clinical’). A specific focus on accountability (rather than responsibility, ethics, etc.) was chosen because, although there is considerable overlap among related terms, the conceptual diversity associated with accountability itself was the focus of this article. That is to say, ‘ethics’, ‘responsibility’, or other terms were only included if they were discussed in the context of accountability. Grey literature sources were included in this review as they are often cited in policy and academic discussions of algorithmic accountability in healthcare. Both empirical descriptions of accountability practices, and reports and policy documents describing accountability goals, were included as long as they met the inclusion criteria and described different visions, expectations, or instances of algorithmic accountability that mattered for how accountability was understood or pursued in relation to health.
This reflects a position that practices and expectations of accountability influence each other, and that both have merit when considering different normative logics.

Sources selected for inclusion were expected to meet the following criteria: (a) explicitly addressed accountability, as stated or defined in the source document and (b) did so in relation to AI algorithms, as stated or defined in the source document. Adopting a pluralist approach, rather than narrowly defining algorithms or accountability in advance, aligned with the conceptually oriented objectives of this article, as well as other review methods focused on identifying differences across research traditions, including those focused on accountability (Greenhalgh et al., 2005; Van Belle and Mayhew, 2016). Health in this review was broadly defined to include medicine, healthcare, public health, and health promotion, including administrative, clinical, consumer, research, or population health applications of AI (EU STOA, 2022). Included contributions addressed algorithmic accountability more generally, as well as specifically in relation to public health and healthcare, as many influential contributions originated outside of public health and healthcare literature, but were frequently cited in health-specific sources, warranting their inclusion in analysis. For example, the United States National Institute of Standards and Technology AI Risk Management Framework (NIST, 2023) is not a health-specific contribution, but it has been taken up and referenced by the US Department of Health and Human Services, and other health-related organizations internationally.

This approach generated 117 sources for analysis, which primarily included academic journal articles (n = 84), reports (n = 15), and conference papers (n = 9). Academic sources were highly diverse, and included contributions from across the social sciences, humanities, law, and applied sciences. Reports also originated from diverse settings, including health professions associations, regulatory bodies, non-governmental organizations, research institutes, and government agencies.

Analysis of included sources was informed by interdisciplinary contributions from STS, including especially sociomaterial perspectives on governance (Woolgar and Lezaun, 2013) and the sociology of expectations. While STS broadly engages with how social norms, values, and expectations shape and are shaped by the processes and outcomes of science, technology, and innovation, a focus on governance takes seriously how so-called ‘mundane’ practices of ordering people and things have important consequences (Woolgar and Neyland, 2013). These practices of ordering are shaped by expectations, which help to explain the situated, distributed, and multi-sited character of diverse instances of both accountabilities and algorithms, the practices they are enacted through, and the significance of debates themselves (Musiani, 2015). I draw these notions together through use of the term ‘normative logics’ (cf. Ebrahim, 2009), which describe desirable or legitimized accountability types in combination with practices that involve state and non-state actors, policies, infrastructures, data, and more (Briassoulis, 2019). Analyzing documents in terms of ‘normative logics’ departs from conventional thematic analysis in its explicit focus on how similar themes, concepts, or claims represent framings of a phenomenon, and how those framings overlap, intersect, or overflow their boundaries, producing both intended and unintended results (Callon, 2021).

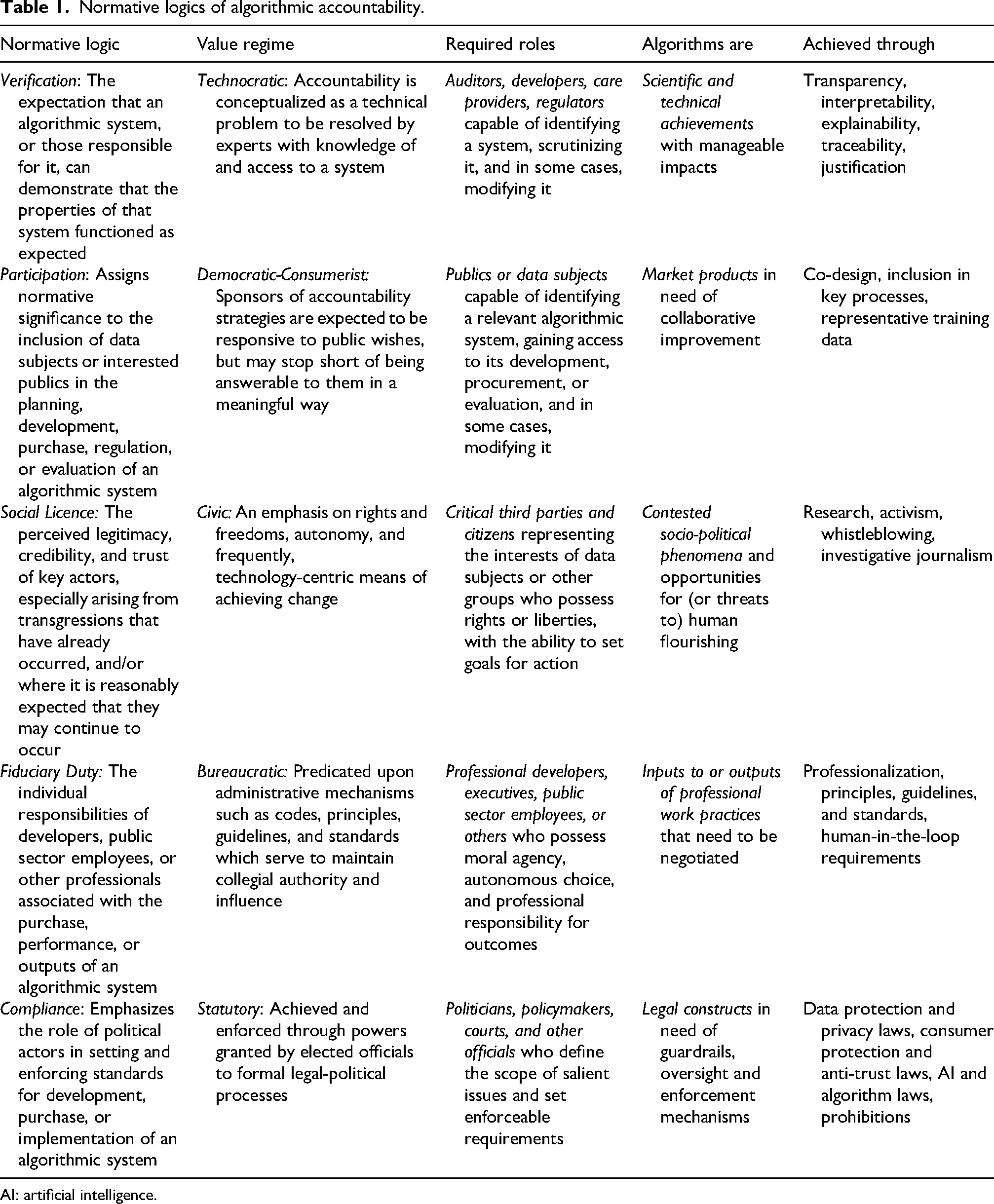

Analysis of sources proceeded abductively (Timmermans and Tavory, 2012), beginning with close reading and the inductive generation and attribution of codes according to different normative assumptions, expectations, and descriptions of practices of accountability, followed by a second-stage grouping of codes according to shared value statements, key roles, practical strategies, methods, and processes in/of/for algorithmic accountability (normative logics, Table 1). Snowballing and additional keyword searches were conducted until sufficient information power was achieved both within and across the normative logics identified (Malterud et al., 2016), that is, until the inclusion of additional sources generated no new insights. The qualitative analysis software NVivo supported this process.

Table 1. Normative logics of algorithmic accountability.

AI: artificial intelligence.

Normative logics of algorithmic accountability

In the following section I review five normative logics characterizing expectations of algorithmic accountability for health systems in the academic and grey literature: (1) accountability as verification, (2) accountability as participation, (3) accountability as social licence, (4) accountability as fiduciary duty, and (5) accountability as compliance.

Accountability as verification

The normative approach of verification as a means to pursue accountability is exemplified by several practical methods that continue to have immense purchase within health services and policy (Ghassemi et al., 2021; Vollmer et al., 2020), representing nearly half of all health-specific literature sources identified. Here, verification refers to the requirement that an algorithmic system, or those responsible for it, can demonstrate that the properties of that system functioned as intended or expected, often as a means to promote trust (Ennab and Mcheick, 2022; Kiseleva et al., 2022). As a code-related goal, verification is typically operationalized through efforts to achieve algorithmic transparency (observing processes or code), interpretability/explainability (comprehending processes or code, or outcomes), traceability (establishing cause-effect), and justification (demonstrating reasonable inference) (Mittelstadt et al., 2016). These code-related goals, while themselves quite diverse (Nazar et al., 2021), can be found to varying degrees in initiatives or policies related to open data and open government (Coglianese and Lehr, 2019; Mayernik, 2017), algorithmic impact assessments (Ada Lovelace Institute, 2021), audits (Raji et al., 2020), and public algorithm registries (City of Amsterdam, 2020).

Brundage et al. (2020) outline three approaches to making and assessing verifiable claims. (1) Institutional mechanisms address incentives for those responsible for creating algorithms, providing greater visibility into their behaviour. This can include bias and safety bounties, red-teaming, and sharing of critical incidents. (2) Software mechanisms focus on enhancing understanding and oversight of algorithms’ properties, including audit trails, interpretability, or privacy-preserving models. Finally, (3) hardware mechanisms focus on how computing hardware can enable transparency, such as secure hardware and high-precision measurement.

What unites all of these approaches is a common focus on the extent to which any process, policy, procedure, or initiative can open up the ‘black box’ of a system in a way that is determined by select actors to be sufficient (Ghassemi et al., 2021; Wadden, 2022). These typically include an auditor, regulator, developer, healthcare provider, or patient capable of identifying a system, scrutinizing it, and in some cases, also changing it. Complex concepts such as ‘openness’, ‘transparency’, and ‘interpretability’ are translated into technical specifications. For example, the European Parliament Research Service report on algorithmic accountability and transparency explicitly identifies transparency measures as key to enabling accountability, and suggests that AI systems should be transparent ‘not to satisfy idle curiosity, but to help achieve important social goals related to accountability’ (EU STOA, 2019: 6). On its own, this is a view of algorithms that privileges their status as primarily scientific and technical achievements, with mostly foreseeable and alterable impacts for those with the means to ‘know’ them.

Practices associated with this accountability regime are therefore largely technocratic, where social and political questions are translated into smaller-scale technical interventions (Ebrahim, 2009). Crafting accountability policies, procedures, or initiatives in this way especially privileges the resources and tools of experts, protecting the exclusivity of their expertise (Ananny and Crawford, 2018). Algorithms are configured as technical objects that can be modified to suit specific purposes or goals, or intervened upon when necessary. As investment in these approaches increases, it will be important to consider how algorithms and related verification practices are situated within broader health systems, and how the tools, methods, practices, and priorities they rely upon interact with publics’ own expectations of programmes and services.

Accountability as participation

Accountability as participation assigns importance to the inclusion of affected groups in the planning, development, purchase, regulation, or evaluation of an algorithmic system. Participation may be invited or ‘active’, referring to participation in design, development, or oversight with or without decision-making authority, or uninvited, ‘passive’, or even non-consensual, as for example in securing representative training data for specific groups or populations. As an active mode, participation has been advocated in algorithmic impact assessments (Acemoglu, 2021; Data & Society and European Center for Not-for-Profit Law, 2021; Metcalf et al., 2021), design and development (Cohen et al., 2014; Zidaru et al., 2021), policy proposals (Madiega, 2023; UK Department for Science, Innovation and Technology, 2023), and efforts to diversify the tech industry more broadly (Dunbar-Hester, 2019). Conversely, as a passive mode, participation can be found in efforts to recruit more diverse populations in order to ensure higher standards for data collection, including efforts to reduce computational bias or improve algorithmic ‘fairness’ (National Academy of Medicine, 2019; Reddy et al., 2020; World Health Organization, 2021). Perhaps more than other forms of accountability, inclusion carries connotations of democratic legitimacy; however, there are a number of ways to assess the fidelity of different forms of inclusion to aims of democratic governance, including especially access to information and, relatedly, decisional influence (Epstein, 2003; Olsen, 2013). For example, Oduro et al. (2022) suggest that ‘challenges to foregrounding the public interest can be addressed through multi-stakeholder convenings and community involvement in the development of transparency documentation requirements, as long as this involvement is pursued equitably and reflexively’.

Actors must therefore be variously capable of identifying a relevant algorithmic system, participating in its development, purchase, or evaluation, and in some cases, modifying or discarding it. In many healthcare contexts, established patient and public participation regimes shape these initiatives, including who is invited to participate, and how. For example, at the health policy level, patients or publics may be invited to citizens’ panels where they are given the opportunity to provide feedback on draft policies or regulations. Health technology assessment agencies also often coordinate patient and public feedback periods (Gauvin et al., 2010; Steffensen et al., 2022). At the same time, debates about specific technologies or policies are often reactive, and intended to respond to scenarios that assume future implementation (Aaronson and Zable, 2023). Moreover, few incentives exist for participation in private settings, where most technology development decisions are made.

This is a regime that blends language and logics from both civic and market spheres, running along a democratic-consumerist spectrum that typically approaches algorithms as market products in need of improvement. In many cases, sponsors of accountability strategies (hospitals, health technology assessment agencies, regulators, technology companies) are expected to be attentive to publics’ wishes, but are primarily accountable to organizational leadership. In cases of passive inclusion (i.e. in data collection schemes), these considerations are foreclosed in favour of the optimization of a given target and the actual output of a model.

Accountability as social licence

Closely related to democratic governance and the perceived challenge of advancing public interests within existing arrangements is the claim that many algorithms lack legitimacy or, especially, social licence to operate. While the concept of social licence initially emerged from debates regarding the development of natural resources, it has increasingly been taken up in health services and policy research related to big data and algorithms (Carter et al., 2015; Paprica et al., 2019). Thomson and Joyce (2008) outline three of its core features: legitimacy (conforming to legal, social, and cultural norms), credibility (the quality of being believed or believable), and trust (the willingness to be vulnerable to risk or loss).

The normative significance assigned to perceptions of social licence to operate appears in many distinct but related conceptualizations of algorithmic accountability, many of which are considered outside the scope of formal policy. These include activism, whistleblowing, and investigative journalism. Unlike the approaches discussed thus far, these do not always set out to enhance or improve the performance of an algorithmic system, but rather serve as ‘critical third party audits’ (Metcalf et al., 2021: 739) which seek to intervene where perceived transgressions have already occurred (legitimacy), and/or where it is reasonably expected that they may occur again with harmful effects (credibility and trust).

Over the last decade, grassroots movements, research and advocacy initiatives, and investigative news stories related to health data, algorithms, and their sponsors have grown in number. For example, in 2016 public backlash followed a New Scientist investigation revealing that Alphabet subsidiary DeepMind had been granted access to NHS medical records in order to develop a diagnostic app without patients’ consent (Hodson, 2016). A now well-known study by Obermeyer et al. (2019) found that an AI-based resource allocation system referred Black patients for additional care half as often as White patients, despite comparable levels of need. At the outset of the COVID-19 vaccination rollout, Stanford University apologized after media reports revealed that a vaccine allocation algorithm left out nearly all medical residents (Wamsley, 2020). In 2023, an investigation by STAT found that the largest health insurer in the United States pressured medical staff to deny payments for patients, in compliance with the insurer's own algorithm, leading to a subsequent lawsuit (Herman, 2023a, 2023b). These cases represent only a few of many responses targeting the legitimacy, credibility, and trust of algorithms or their sponsors, which were viewed as having damaging personal, social, political, or economic consequences.

Individual rights and freedoms, autonomy, and occasionally technology-centric targets of change figure prominently in discussions involving social licence. These typically portray data subjects or other affected parties as acting subjects with inalienable rights and liberties, defined by free will, including especially the ability to set goals for action (Lehtiniemi and Ruckenstein, 2019). This civic regime ties different forms of technological citizenship to particular projects, products, companies, or initiatives with identifiable (often blameworthy) actors, where algorithms are contested socio-political phenomena and opportunities for, or threats to, human flourishing. Less acknowledged is how civic action is generated within, and translated into, more solidaristic aims, and what those aims mean for the data subjects upon which data-intensive health systems rely.

Accountability as fiduciary duty

This normative approach locates accountability squarely within professional practice, where fiduciary duties characterize the individual responsibilities of developers, clinicians, public health officials, or other professionals associated with the development, purchase, or oversight of an algorithmic system (Martin, 2019; Naik et al., 2022; Smith, 2021). This form of accountability can be found in calls for developers of algorithmic systems to become ‘professionalized’, as well as in the proliferation of ethics principles, guidelines, and standards. For example, Eubanks (2018) outlines a series of principles of non-harm for algorithmic systems, which she suggests could serve as a ‘Hippocratic oath for the data scientists, systems engineers, hackers, and administrative officials of the new millennium’. Commentators in healthcare have similarly worried about how algorithms will affect the patient–provider relationship, including notions of responsibility, confidentiality, liability, and other tenets of professional ethics (Char et al., 2018). More technically oriented discussions have included suggestions for a ‘human in the loop’, where decisions are informed by an algorithm, but ultimately adjudicated by a professional, such as a physician (Bodén et al., 2021; Zanzotto, 2019).

Professional codes, principles, guidelines, and standards are not new, however, and there are diverse perspectives on the emergence, role, and function of organized professions in health and medicine, and society more generally (Riska, 2010). For example, organized professions can serve to clarify roles and responsibilities, enhance public understanding and trust of new technologies, practices, or roles, and demarcate social and political influence. At the same time, many of the normative frameworks under which health professions have been held accountable have come under scrutiny for failing to address broader injustices, including the distribution of power and resources in the populations they are meant to serve (Banks and Gallagher, 2008). Beyond functioning to both narrow and formalize professional responsibilities, codes, principles, guidelines, and standards also rest upon the assumption that causal links can be drawn between the actions of developers or healthcare providers, and the outcomes of a system.

As a bureaucratic form of accountability, fiduciary duties are predicated upon professional training, moral agency, autonomous choice, and personal responsibility. Ultimately these are intended to maintain collegial legitimacy, authority, and influence, and especially preserve peer discipline and self-regulation. Algorithms are approached as tools of professional practice that need to be managed. However, public distinctions between those who develop, procure, or adjudicate the decisions of algorithmic systems may challenge core tenets of professional ethics, and the assumptions that underpin these individual-centric approaches to accountability.

Accountability as compliance

Accountability as compliance emphasizes the role of policymakers, regulators, ombuds, and other government actors in defining and enforcing standards and requirements for algorithm development, purchase, or use (Novelli et al., 2023). These represent a diverse and growing collection of strategies that vary by region, each attempting to construct legal regimes for defining and addressing rights, harms, risks, and impacts of algorithms in healthcare and beyond. For the most part, these can be found in three distinct legislative and regulatory regimes.

Privacy and data protection regimes primarily target personal data and the means by which they are generated, protected, and used. Many jurisdictions also have health-specific data laws, such as the United States’ Health Insurance Portability and Accountability Act (HIPAA), which applies to covered entities and their business associates and safeguards protected health information. In some instances, data protection laws have been either expanded or newly created to better account for algorithmic systems and expanding data infrastructures, such as electronic medical records (EMRs). In these cases, accountability primarily refers to individuals’ rights and recourses related to freedom from discrimination or harm arising from improper use of data or algorithms. The GDPR's Article 22, for example, states that data subjects have the right not to be subject to a decision based solely on automated processing, and that, subject to certain exceptions, they should be able to contest such decisions, while Article 5(2) establishes accountability as an overarching principle, including for legally permissible automated decision making (ADM). This ‘meta-principle’ requires data controllers to proactively implement measures ensuring that ADM is justifiable from a legal perspective, notably through the auditing of decisions (Malgieri and Pasquale, 2024).

Privacy and data protection laws are also complemented by consumer protection laws. These include antitrust laws, which target the monopolistic practices of large organizations (Curfman, 2020), as well as advertising laws and safety-related laws for health technologies. These are often overseen by arm's-length agencies, such as the US Food and Drug Administration (FDA) or the Securities and Exchange Commission (SEC). Consumer protections offer additional means by which governments can challenge the power of large technology companies; however, they necessarily focus more narrowly on the activities of only the largest actors, or of those engaged in regulated activities.

Finally, algorithm-specific laws have also been introduced. The US Algorithmic Accountability Act (2022), for example, would have enabled the Federal Trade Commission (FTC) to ensure that certain companies conduct impact assessments of their automated decision systems for risks to data privacy and security, as well as for inaccurate, unfair, biased, or discriminatory decisions. In contrast, the EU's AI Act requires ‘conformity assessments’ (analyzing datasets, bias, how users interact with the system, and the design and monitoring of system outputs) and post-market monitoring for companies using algorithmic systems in EU-regulated products, including medical devices (EU Commission, 2021). Canada's proposed Artificial Intelligence and Data Act (AIDA) defined accountability as ‘governance mechanisms needed to ensure compliance with all legal obligations of high-impact AI systems in the context in which they will be used’ (ISED Canada, 2023).

Legislative approaches are among the few that are tasked with reconciling multiple and at times conflicting accountability logics, blending goals of technical transparency, individual rights, due process, and public participation. Above all, these are processes predicated upon the algorithm as a legal construct that is definable and in need of oversight, enforcement, or reporting requirements (Novelli et al., 2023) in order to mitigate risk of harm, with harms often individualized and narrowly defined (Viljoen, 2021). While necessary, legal routes to algorithmic accountability remain partial and patchwork, contingent on political support, and increasingly shaped by corporate interests (Sharon and Gellert, 2023). Moreover, disconnected legal strategies, and the actions of those responsible for enforcing them, have led to regulatory gaps. In sum, this statutory form of accountability is currently characterized by a lack of consistent, comprehensive, or globally integrated schemes, which has contributed to the proliferation of other accountability regimes, as summarized in Table 1.

Worlding algorithmic-accountabilities: Making good differences in health

These normative logics represent intersecting and occasionally competing ideas about how accountability should be understood, pursued, and evaluated, each implicating different actors (developers, elected officials, journalists, healthcare providers), roles (critical third parties, data subjects, patients, publics), and objects of governance (datasets, dashboards, hardware, organizations, practices, platforms, code). For example, technocratic forms of accountability approach algorithms primarily as technical achievements with mostly knowable impacts, and therefore as especially in need of scrutiny by highly trained and qualified experts. Democratic-consumerist forms of accountability often approach algorithms as market products, where objectives range from improvement of goods and services to involvement of different groups in their development or evaluation. Bureaucratic approaches treat algorithms primarily as outputs of professional work practices, and therefore responsibilize developers or others tasked with identifying potential harms. Civic framings treat algorithms as products of socio-political phenomena and opportunities for (or threats to) human flourishing, and therefore as especially in need of more robust forms of technological citizenship. Political accountability views algorithms as objects of legal intervention, where politically influential actors are especially privileged. These are not just different perspectives on what algorithms are (Table 1, fourth column), but reflect different practices that construct algorithms and accountabilities by prioritizing and ordering their constituent parts, with practical consequences.

Practices of accountability are therefore diverse and situated, and their pursuit is always accompanied by expectations of algorithms and the forms of care that both make possible. This necessarily raises questions about the broader social and material worlds in which health-related algorithms and accountabilities are made, practiced, or valued, and how they might be otherwise. If algorithms and accountabilities partially enact the worlds they seek to intervene upon, then the question becomes how to ‘make good differences’ within and across those worlds (Law, 2016; Lepawsky, 2018). ‘Worlds’ and ‘worlding’ are useful concepts, as they encourage consideration of the diverse material realities that constitute realms of significance for the actors within them (Woolgar and Lezaun, 2013). For algorithmic-accountabilities specifically, they point to multiple and contingent practices that are shaped by expectations of value, and enacted in efforts to impose health-related orders of some kind. These worldings provide a basis for arguments about the adequacy or inadequacy of action, or the in/appropriateness of algorithms and accountabilities in addressing health-related problems. They also legitimize certain styles of valuation (Annisette and Richardson, 2011), and render other values inappropriate or incomprehensible (Birch, 2017), narrowing the scope of imagination, action, or change.

At the same time, this process is never total, and there are always opportunities for alternative worldings of health-related algorithmic futures (Calvert, 2024). What is needed, then, is sensitivity to different worlds, and the actors, roles, and objects they implicate. To pursue such an approach, however, presents a conundrum for policymakers and others who rely on bureaucratic chains of responsibility. Where is accountability to be found when actors, objects, and goals are heterogeneous and distributed? Briassoulis (2019) suggests that ‘the only realistic strategy is to explore the implications of intervening in one assemblage on all other assemblages and be prepared to cope with the repercussions, aiming to prevent assemblages from being locked-in “problematic” states or facilitate the transition to other more desirable states’ (p. 446). Put another way, if scholars, activists, or policymakers wish to make good differences, a useful place to begin is with the transformative changes algorithms and accountabilities are capable of bringing about, and the health systems they rely on to succeed.

More practically, I suggest that these worldings can be surfaced through three focal points. First, already existing worlds should be taken seriously in considering different logics of accountability. For example, an algorithm developed by a large corporation will implicate very different worldings when deployed in the United States versus Denmark (Wadmann and Hoeyer, 2018). Different funding arrangements, service agreements, populations, data infrastructures, and existing accountability practices all make the algorithm unique in each instance. This point highlights the importance of treating ‘context’ as emergent and enacted in practices, rather than as a static explanatory resource, and of considering how designed artefacts and governance practices themselves generate new worldings of phenomena and relations of being. This expanded view of accountability considers not only human participants and technical goals, but also broader entanglements of policies, infrastructures, norms, data, and other objects that are implicated in different digital health futures.

Second, and related, these entanglements require attention and care. Most logics of accountability take as their starting point that individuals, communities, groups, or technologies are independently constituted, and that their interests, functions, or perspectives are independent of their relatings to other phenomena (Chilvers and Kearnes, 2019). In contrast, taking entanglement seriously means considering how algorithmic-accountabilities are productive of the phenomena they intend to represent. This reflects a different starting point, which begins with the problematization of assumed characteristics of those affected by algorithms, beyond users or data subjects, and serves as an opportunity to construct new, temporary, shared categories of being and regimes of value. It also invites consideration of how those tasked with intervening are part of the problem to be solved. This can foster new forms of togetherness which take seriously how problem-solution worlds are formed, and how they might be otherwise.

Third, an emphasis on accountability as an essential component of responsible governance is itself tied to imaginaries that seek quick governance fixes for technological artefacts. For example, the use of algorithms in health and medicine cannot be considered independently of developments that approach health systems as centres of economic production and global competitiveness. Here, value is equated with efficiency, and accountability becomes a marketized product in its own right. Re-orienting algorithmic-accountabilities from purely instrumental pursuits to the kinds of health-related worlds being made and re-made concerns not only accurate decisions, involvement in design, or legal liability, but also data economies, environmental impacts, and the allocation of scarce health-related resources. It also invites debate about the kinds of health systems we want, what role algorithms can play in those visions, and which accountabilities follow from them.

In the context of digital health, these focal points ask: what conditions of being are made possible when a digital health technology is proposed, developed, implemented, or modified? What evaluative assumptions are implicated in making a determination about whether a technology is considered innovative or responsible or accountable? And what relations emerge from our investments in certain problems or solutions?

Conclusion

In this article I have presented contrasting logics of algorithmic accountability in health systems. I have suggested that approaching these normative types from a sociomaterial perspective generates different views on both the object to be governed, and practices of governing. For example, algorithms and accountabilities both provide structuring frames for anticipated technological achievements (reducing costs, transforming obligations, meeting political demands), and also make those achievements possible (e.g. quantifying risk and documenting decisions). I have also outlined the implications of this approach for understanding how different health-related worlds are created and sustained, and how they might be otherwise.

Approaching accountability as a strict arrangement in which an actor is held to account by a forum risks missing the broader circumstances in which objects and subjects of accountability are convened. The result is a depoliticizing of health-related futures which might otherwise be characterized by conflict, contestation, or negotiation. Neglecting the normativity of these arrangements matters, as it forecloses discussion about how to justify distributions of health-related goods. Instead, broadening accountability to consider the worlds within which those practices are situated can call attention to the multiple processes, objects, and values that make up the health systems we rely upon, and how algorithms are (or are not) implicated in those worlds.

Practices of worlding therefore remain a promising area of inquiry for algorithmic accountability. For example, a focus on conflicts could surface different forms of justification, expectations of value generation, or imaginaries that characterize health-related algorithms and accountabilities. In contrast, a focus on consensus could examine how shared geopolitical ambitions shape pursuits of datafication in healthcare across jurisdictions, and how accountabilities are structured to meet those objectives in different ways. The distributed nature of practices of world-making means that these studies can begin in any number of places: the boardroom, the lab, the clinic, or the government office. Each of these locations is implicated in worlding health-related algorithmic futures, and can generate insight into where positive change might be most possible, or most desirable.

Acknowledgements

This work was supported by a Social Sciences and Humanities Research Council (SSHRC) Doctoral Fellowship, and an AI for Public Health Doctoral Scholarship as part of the Canadian Institutes of Health Research (CIHR)-funded AI for Public Health Research Training Platform. I wish to thank Dr. Jay Shaw for his feedback on earlier iterations of this manuscript, and the three anonymous reviewers for their detailed and constructive engagement.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.

Conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.