Abstract

Advancements in generative artificial intelligence have led to a rapid proliferation of machine learning models capable of synthesizing text, images, sounds, and other kinds of content. While the increasing realism of synthetic content stokes fears about misinformation and triggers debates around intellectual property, generative models are being adopted across creative industries, and synthetic media are seeping into cultural production. Qualitative research in the social and human sciences has paid comparatively little attention to this category of machine learning, particularly to the novel research methodologies it both demands and facilitates. In this article, we propose a methodological approach for the qualitative study of generative models, grounded in experimentation with field devices, which we call synthetic ethnography. Synthetic ethnography is not simply a qualitative research methodology applied to the study of the social and cultural contexts developing around generative artificial intelligence; it also strives to envision practical and experimental ways to repurpose these models as research tools in their own right. After briefly introducing generative models and revisiting the trajectory of digital ethnographic research, we discuss three case studies for which the authors have developed experimental field devices to study different aspects of generative artificial intelligence ethnographically. In the conclusion, we derive a broader methodological proposal from these case studies, arguing that synthetic ethnography facilitates insights into how the algorithmic processes, training datasets, and latent spaces behind these systems modulate bias, reconfigure agency, and challenge epistemological categories.

The methods of the model

In 2014, Google engineer Alexander Mordvintsev developed Inception, an image processing program that used a convolutional neural network¹ trained to parse an input image for a specific feature and enhance it, creating strikingly weird images in which original outlines and details blur and expand into recursive fractal patterns (Figure 1). Mordvintsev and colleagues describe the surprising discovery resulting from this program: “neural networks that were trained to discriminate between different kinds of images have quite a bit of the information needed to generate images too” (Mordvintsev et al., 2015). Released by Google in 2015 under the name DeepDream, this program was arguably the first generative model to enter popular culture. Both its codenames, Inception and DeepDream, frame the program's chief purpose (probing and visualizing the hidden layers of neural networks) as “a way to look into the machine's unconscious, into its inner life, its dreams” (Miller, 2020). In the decade since, generative artificial intelligence (AI) has developed in multiple directions, leading to a proliferation of models capable of synthesizing text, images, music, videos, or 3D meshes. Countless datasets have been created with the sole purpose of training generative models for specialized uses (Jacobsen, 2023), and synthetic media have become the focus of heated debates around issues of privacy, intellectual property, and representation (Zeilinger, 2021). Companies like OpenAI, Adobe, Alibaba, Google, and Baidu compete to integrate generative models into their software and services, releasing tools like ChatGPT, Generative Fill, ModelScope, Magic Editor, or ErnieBot, which allow increasing numbers of users to experience media synthesis first-hand. At the same time, open-source models like StyleGAN or Stable Diffusion have been embraced by communities of creators seeking to experiment with their capabilities.

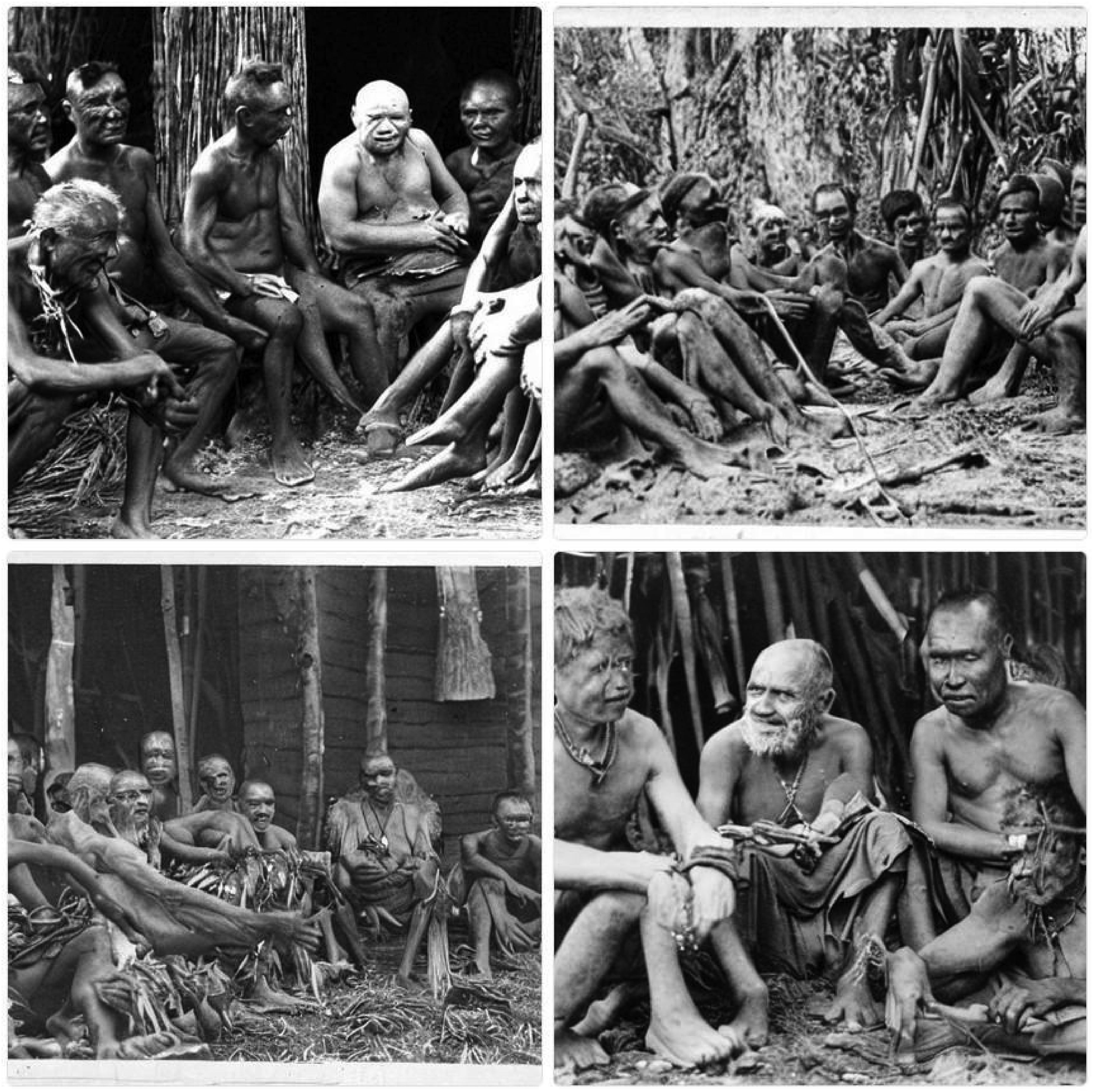

Figure 1. Photo of anthropologist Bronisław Malinowski during his ethnographic fieldwork with Trobriand Islanders in 1917–1918, processed through the Google DeepDream algorithm.

The ease with which generative models can synthesize increasingly realistic content has stoked fears about political misinformation and propaganda and resulted in calls for global regulation (Chesney & Citron, 2019). Despite these anxieties, generative models are being adopted across creative industries and made available to ever larger publics. The complex and distributed nature of these systems, which are assemblages of datasets, models, interfaces, and social practices, also results in unpredictable patterns of adoption across global contexts. Generative models and the synthetic media resulting from their creative use are likely to become increasingly widespread, shaping cultural processes and reconfiguring the production and consumption of media content across different social domains. The architectures behind these models also have several applications beyond the creation of synthetic media: biologists use them to predict the structure of proteins (Jumper et al., 2021), astronomers rely on them to augment their datasets with synthetic data (Michos, 2023), and computational social scientists experiment with them to improve their research workflows (Bail, 2023). As the role of generative AI in domains ranging from experimental science to cultural production becomes more visible and more urgently in need of critical analysis, the qualitative social and human sciences have only begun to assess this application of machine learning (ML) and have yet to formalize the novel kinds of research methods it both demands and facilitates.

In this article, we take a cue from the digital methods proposal of “studying and repurposing […] the methods of the medium” (Rogers, 2013, p. 1) and apply it to digital ethnography in order to develop “field devices” (Criado & Estalella, 2023) for the qualitative study of generative models. Our approach, which we call synthetic ethnography, builds on the main strength of ethnographic research, defined in the social anthropological tradition as long-term, dialogic engagement that actively participates in both the coconstruction of its field and the production of situated knowledge (Clifford & Marcus, 1986), and draws inspiration from contemporary ethnographic approaches to digital data (Knox & Nafus, 2018). Synthetic ethnography is not simply a participatory qualitative research methodology applied to the study of the social and cultural contexts developing around generative models; it also strives to envision practical and experimental ways to repurpose these technologies as research tools in their own right. Our proposal develops as follows: in the first half of the article, we recapitulate the history of generative AI models and synthetic media, define the main concepts of the field, and then revisit the trajectory of digital ethnography from the advent of the internet to the rise of algorithmic systems. In the second half, we discuss three case studies for which the authors have developed experimental field devices to study different kinds of generative models and synthetic media ethnographically. In the conclusion, we derive a broader methodological proposal from the case studies, arguing that synthetic ethnography facilitates insights into how the algorithmic processes, training datasets, and latent spaces behind these systems modulate bias, reconfigure agency, and challenge epistemological categories.

Generative models and synthetic media

To ground our assessment in a solid conceptual scaffolding, we provide a brief history of recent developments in generative artificial intelligence (henceforth “generative AI”) and define key terms such as model, dataset, prompt, and latent space. As of the early 2020s, the term generative AI describes a subset of AI systems dedicated to generating novel outputs across media (text, images, sound, video, 3D meshes, etc.) from the data they have been trained on. This data is usually contained in datasets compiled for training purposes, and the file of neural network weights and biases resulting from training is called a model. Being mostly based on unsupervised or semisupervised training, generative AI differs from other types of ML in its focus on generating rather than predicting patterns: a generative model synthesizes new representations based on the statistical probabilities learned from its training data. The training of generative AI models results in a latent space (related concepts are vector space and embedding space), a high-dimensional manifold in which what the model has learned from the training data is stored in compressed numerical vector form. In generative models, it is the diverse data points embedded in this latent space that allow for the generation of new, and often unexpected, types of representations, such as pictures of dogs and cats in the style of Van Gogh. By combining training on large datasets with a process of fine-tuning, generative models can be primed to respond to prompts, textual inputs through which users can query the model for specific outputs, including combinations the model never encountered during training.
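To make the notion of a latent space slightly more concrete: one common way of exploring it, used for example with GAN generators such as StyleGAN, is to pick two points (latent vectors) and move gradually from one to the other, rendering an output at each intermediate point. The minimal sketch below, in plain Python, computes only this walk, using spherical linear interpolation (slerp), which is often preferred over straight-line interpolation when latent vectors are sampled from a Gaussian distribution. The function names, the 512-dimensional latent size (chosen to mirror StyleGAN's input latent), and the random seed are illustrative assumptions; the trained generator that would turn each vector into an image is hypothetical and not shown.

```python
import math
import random


def slerp(z0, z1, t):
    """Spherical linear interpolation between latent vectors z0 and z1 at fraction t in [0, 1]."""
    dot = sum(a * b for a, b in zip(z0, z1))
    norm0 = math.sqrt(sum(a * a for a in z0))
    norm1 = math.sqrt(sum(b * b for b in z1))
    cos_omega = max(-1.0, min(1.0, dot / (norm0 * norm1)))
    omega = math.acos(cos_omega)  # angle between the two vectors
    sin_omega = math.sin(omega)
    if sin_omega < 1e-6:
        # (Anti)parallel vectors: the arc is ill-defined, so fall back to a straight line.
        return [(1.0 - t) * a + t * b for a, b in zip(z0, z1)]
    w0 = math.sin((1.0 - t) * omega) / sin_omega
    w1 = math.sin(t * omega) / sin_omega
    return [w0 * a + w1 * b for a, b in zip(z0, z1)]


def latent_walk(z0, z1, steps):
    """Evenly spaced points on the arc from z0 to z1, endpoints included (steps must be >= 2)."""
    return [slerp(z0, z1, i / (steps - 1)) for i in range(steps)]


if __name__ == "__main__":
    rng = random.Random(42)
    dim = 512  # assumed latent size, mirroring StyleGAN's input latent
    z_a = [rng.gauss(0.0, 1.0) for _ in range(dim)]
    z_b = [rng.gauss(0.0, 1.0) for _ in range(dim)]
    for i, z in enumerate(latent_walk(z_a, z_b, 8)):
        # Each z would be passed to a trained generator (hypothetical, not shown) to render one frame.
        print(i, round(math.sqrt(sum(v * v for v in z)), 2))
```

With two random 512-dimensional Gaussian vectors, which are nearly orthogonal, the printed norms stay close to those of the endpoints, whereas the midpoint of a straight line between them would be roughly 1/√2 as long and thus atypical for the distribution the generator was trained on.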

The uncanny ability to synthesize new content from existing data in response to user prompting has led to a surge of interest in generative AI for a variety of creative uses and drives the emergence of a host of models fine-tuned to generate text, images, videos, sound, music, or speech that are at times indistinguishable from human-generated content. While models like GPT-4, Midjourney 6, or DALL·E 3 have been at the center of much debate and speculation throughout 2024, the use of ML to generate synthetic media goes back at least a decade. One of the early examples of generative AI is the generative adversarial network (GAN) architecture conceived by Ian Goodfellow and colleagues in 2014 (Goodfellow et al., 2014). The idea behind GANs is to pit two neural networks against one another in a competitive zero-sum game. The task of the first network, the generator, is to create new examples that resemble the training data; the task of the second network, the discriminator, is to determine whether a given example is real, that is, drawn from the training data, or produced by the generator. Given sufficient time and training cycles (epochs), this algorithmic game of forgery allowed GANs to produce strikingly realistic representations that approximated, without reproducing, the data the model was trained on. Experimentation with different GAN models triggered an early wave of debates around the consequences of synthetic media production, as online communities of tinkerers developed new GAN-powered tools to insert or swap faces in images and videos, synthesize realistic portraits of people who “do not exist,” or develop new forms of generative art (Scorzin, 2023).
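For readers who want the formal version of this game, Goodfellow et al. (2014) express it as a minimax problem over a value function V. Here D(x) is the discriminator's estimated probability that a sample x comes from the training data, G(z) is the sample the generator produces from a random noise vector z, and p_data and p_z denote the data and noise distributions:

$$
\min_G \max_D V(D, G) = \mathbb{E}_{x \sim p_{\text{data}}(x)}\big[\log D(x)\big] + \mathbb{E}_{z \sim p_z(z)}\big[\log\big(1 - D(G(z))\big)\big]
$$

The discriminator pushes V up by assigning high probabilities to real samples and low ones to generated samples, while the generator pushes V down by producing samples that the discriminator mistakes for real ones; training alternates between updates to the two networks.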

One decade after Goodfellow's proposal, two main developments have pushed the capabilities of generative AI even further. The first is the ability to train a model on different types of data at the same time: for example, OpenAI's Contrastive Language-Image Pretraining (CLIP) model uses images paired with their captions as its training data. By learning the visual and textual information contained in hundreds of millions of text–image pairs, a model like CLIP can match images with textual descriptions, which allows it both to classify images according to their content and to guide the generation of new images from textual prompts. In short, generative AI becomes multimodal. The rise of text-to-image (TTI) generative models is also propelled by the second key development: the advent of diffusion models. Unlike GANs, diffusion models are trained by progressively adding noise to training data and learning to reverse this process, removing the noise step by step (Yang et al., 2022); generating an image then amounts to starting from pure noise and gradually denoising it. Diffusion powers the most popular image generation models and services, such as Midjourney, DALL·E 3, Stable Diffusion, or RunwayML, contributing to the mainstreaming of media synthesis among both creative professionals and amateur creators who, for the first time in the history of generative AI, do not need domain-specific technical knowledge to experiment with processes like image generation, style transfer, generative fill, or keyframe animation. The widespread availability and cultural relevance of generative models, combined with their collaborative contexts of development and experimentation, also invite qualitative researchers to engage with them in ways that build upon existing efforts in critical analysis and theoretical speculation.
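To make the idea of "adding noise" more concrete, the minimal sketch below, in plain Python, implements only the forward (noising) half of a diffusion model, in the style of denoising diffusion probabilistic models (DDPM). It uses the closed-form jump to step t, x_t = sqrt(ᾱ_t)·x_0 + sqrt(1 − ᾱ_t)·ε, where ᾱ_t is the running product of (1 − β) up to step t. The linear noise schedule (from 0.0001 to 0.02 over 1,000 steps, mirroring the one popularized by DDPM), the three-value toy "image", and all function names are illustrative assumptions rather than the configuration of any model named above; the neural network that learns to predict and remove the noise is deliberately left out.

```python
import math
import random


def linear_beta_schedule(num_steps, beta_start=1e-4, beta_end=0.02):
    """Noise variances beta_1..beta_T, linearly spaced (an assumed, DDPM-style default)."""
    if num_steps < 2:
        return [beta_start] * num_steps
    step = (beta_end - beta_start) / (num_steps - 1)
    return [beta_start + i * step for i in range(num_steps)]


def cumulative_alphas(betas):
    """alpha_bar_t = product of (1 - beta_s) for every step s up to and including t."""
    alpha_bars, running = [], 1.0
    for beta in betas:
        running *= 1.0 - beta
        alpha_bars.append(running)
    return alpha_bars


def add_noise(x0, t, alpha_bars, rng):
    """Jump straight to step t of the forward process:
    x_t = sqrt(alpha_bar_t) * x0 + sqrt(1 - alpha_bar_t) * eps, with eps ~ N(0, 1) per value.
    Returns the noised values and the noise that a denoiser would be trained to predict."""
    a = alpha_bars[t]
    eps = [rng.gauss(0.0, 1.0) for _ in x0]
    xt = [math.sqrt(a) * x + math.sqrt(1.0 - a) * e for x, e in zip(x0, eps)]
    return xt, eps


if __name__ == "__main__":
    rng = random.Random(0)
    alpha_bars = cumulative_alphas(linear_beta_schedule(1000))
    x0 = [0.5, -0.2, 0.9]  # a toy "image" of three pixel values
    for t in (0, 100, 500, 999):
        xt, _ = add_noise(x0, t, alpha_bars, rng)
        # The weight on the original data shrinks toward 0 as t grows: the image fades into noise.
        signal_weight = math.sqrt(alpha_bars[t])
        print(t, round(signal_weight, 4), [round(v, 3) for v in xt])
```

Early steps leave the toy values nearly intact, while by the last step almost nothing of the original survives. A trained denoiser is asked to predict the noise from the noised values and the step number, and applying that prediction repeatedly, starting from pure noise, is what produces a new sample.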

Social science and humanities research has been instrumental in identifying the biases encoded in the development of generative models and the potential harms resulting from their use. Key issues include the appropriation of copyrighted materials for training set construction without compensation for their authors (Samuelson, 2023), the potential for job displacement and worsening economic inequality (Wach et al., 2023), and the environmental burden of computation (Crawford, 2021). While researchers still dispute whether generative models will worsen the manipulation of public opinion (Simon et al., 2023), documented harms include the growing circulation of deepfakes, nonconsensual intimate images, and nefarious practices like spear phishing (Kapoor & Narayanan, 2023). Given their historical familiarity with creative processes and knowledge production, the humanities and social sciences are uniquely positioned to critically examine the risks and consequences of generative models. In our view, to adequately address the issues related to generative AI, research practices cannot remain the same, and researchers need to “not only think about, and often against, but sometimes also with AI” (Bajohr, 2024). Thinking with AI in this sense does not entail being uncritical about generative models; instead, it extends critical attention to both the industry behind AI and academic research methodologies. How can a qualitative method like ethnography adapt to the opportunities and challenges brought about by generative models, the synthetic media they output, and the broader sociotechnical assemblages they are enmeshed in?

Ethnography of the digital, algorithmic, automated

Since the early years of networked communication systems, anthropologists have recognized the need to explore new forms of human–machine interaction developing in cyberspace (Escobar, 1994) or on the internet (Ito, 1996). Ethnographic approaches to online sociality have ranged from extensive field studies following how literacy, access, and connectivity changed local practices (D. Miller & Slater, 2000) to participatory and embodied investigations foregrounding virtual spaces as field sites in their own right (Hine, 2000). Since the early 2000s, scholars across disciplines have proposed a variety of “ethnographic approaches to digital media” (Coleman, 2010), which have found a common descriptor under the label “digital ethnography” (Hjorth et al., 2017). The increasingly pervasive use of algorithms for the querying, indexing, analysis, filtering, and recommendation of online content (Gillespie, 2014) has forced qualitative researchers to grapple with algorithmic black boxes (Diakopoulos, 2015) and to find productive ways of studying them beyond the mythologies and dramas accruing around them (Ziewitz, 2016). In an overview of critical research approaches to algorithms, Rob Kitchin identifies the ethnographic study of programmers, sociotechnical assemblages, and wider contexts of use as a promising path alongside the production of code and reverse engineering (2017). Anthropologists have derived important methodological insights from their ethnographic experiences: Nick Seaver recommends specific tactics that undo rigid definitions of algorithms and instead approach them “as culture,” that is, as the result of human practices (2017); Ann-Christina Lange and coauthors propose four epistemic perspectives from which to analyze the shifting relations between algorithms and their users (2019); Angèle Christin outlines three methodological strategies to move beyond the black box metaphor and enroll algorithms in the research process itself (2020); and Loup Cellard suggests unpacking how algorithms are fabulated from complex sociotechnical assemblages (2022).

Because of its focus on the automation of computational processes, the ethnographic study of algorithms intersects and overlaps with another field of inquiry: socioanthropological research on AI. As early as 1985, writing at the peak of debates around expert systems, Steve Woolgar outlines a sociological perspective on AI that goes beyond the study of computer scientists and their systems and focuses on unraveling the “dichotomies and distinctions” that characterize its discursive field (1985). Anthropologists like Diana E. Forsythe have demonstrated through ethnographic research that AI is as technical as it is cultural (2002). More recently, Alan Blackwell has argued for ethnographic accounts of AI as culturally situated, decentering the field from the Global North (2021). Ethnographers have also examined particular aspects of AI, disaggregating the sociotechnical assemblages of ML models, training datasets, and code (Knox & Nafus, 2018). Database ethnographies aim to complement the collection and categorization of data with qualitative context (Schuurman, 2008), enhance data interpretation (Zhang et al., 2018), and analyze the resulting datasets as field sites (Burns & Wark, 2020); ethnographic approaches can also contribute to structuring statistical inquiry (Ford, 2014), narrating quantitative data qualitatively (Dourish & Gómez Cruz, 2018), and establishing ground truths for big data analyses (Bjerre-Nielsen & Glavind, 2022). Ethnographers working with modeling experts have shown that the complexity of ML models escapes their users (Kolkman, 2022), while those examining code have highlighted its key role as a sociotechnical actor (Rosa, 2022).

As a qualitative methodology tasked with charting rapidly shifting media ecosystems, digital ethnography has developed into a variegated and flexible toolbox of approaches. The rise of algorithmic media has prompted digital ethnographers to devise strategies to peer into black-boxed sociotechnical assemblages and unravel how they are enacted through practices and relationships. The renewed interest in AI brought about by ML and neural networks has also been contextualized by ethnographic studies that foreground the situated ground truths of models and datasets. Generative AI models and synthetic media demand a reconfiguration of ethnographic methodologies capable of operating at the convergence of three sociotechnical domains: first, synthetic media are profoundly entangled with apps and social media platforms, and users often encounter generative models through opaque interfaces; second, as they become more pervasive, these systems further complicate the relationships between users and algorithms; lastly, discursive imaginaries of intelligence and sentience challenge established definitions of key categories such as creativity and personhood. As Anders Kristian Munk has recently observed, “Ethnography now faces a situation like the one it faced twenty years ago with the emergence of virtual online worlds. A new field has suddenly come into being with its own cultural expressions, its own species of interlocutors, and its own peculiar conditions for doing fieldwork” (Munk, 2023).

Synthetic ethnography: Three field devices

In this section, we revisit individual research projects conducted by the three authors that exemplify different qualitative approaches to generative AI, and from these we generalize three “field devices” for synthetic ethnography. We adopt the concept of field device from the edited collection on ethnographic invention by Criado and Estalella (2023), who identify forms of relational inventiveness and collaborative improvisation as essential to the practice of ethnographic fieldwork. Drawing on discussions of the term “device” in science and technology studies, Estalella and Criado define field devices as “situated arrangements that dispose the ethnographic situation” (2023, p. 1), which expand ethnography's methodological toolbox beyond the techniques of participation and observation. In our proposal, synthetic ethnography responds to the methodological challenges that the proliferation of generative AI poses to social science and humanities research. As noted by Nick Seaver, technological novelty does not necessarily require the development of brand-new methodologies (2017, p. 6); similarly, building on the work of Noortje Marres, Phillip Brooker argues for the need to draw on methods that already exist among users of digital technologies (2022, p. 4). Along these lines, synthetic ethnography is an effort to fine-tune, retool, and reconfigure ethnographic research in response to generative AI. The three case studies have distinct objects of research and feature generative models deployed in a variety of ways as part of research devices, experimental ways of “patterning the social, devised to gather data, produce knowledge, and articulate questions” (Criado & Estalella, 2023, p. 5). Writing about the mythologies developing around algorithms, Malte Ziewitz recommends that we “shake the black box” rather than be captivated by it, since “myth does not always have to be debunked but can be generative” (2016, p. 9, our emphasis). The three field devices described in the following sections are our own generative attempts at shaking the multidimensional and colorful boxes used to package the various myths of AI.

Deepfaking the ethnographer: Creative participation as practical probe

While conducting research on the creative uses of AI in China, Gabriele de Seta came across several examples of synthetic media that were gaining societal relevance in the country. After reviewing debates around synthetic media in Chinese news sources, scouring social media for related search terms and hashtags, and chatting with local internet users about the topic, he decided to look into a specific genre of synthetic media: huanlian, or “changing faces,” which roughly overlaps with the English-language term “deepfake” and indicates various forms of facial animation, manipulation, and replacement (de Seta, 2021). By tracing several popular huanlian videos back to their origin, the researcher found an active community of creators uploading their creations to Bilibili, a Chinese video streaming platform. After setting up a Bilibili account, he watched a variety of huanlian content by following hashtags and suggested videos, identified the most prolific creators, and contacted some of them to inquire about their technical skills, software of choice, and creative decisions. When de Seta noticed that some creators also uploaded tutorial videos discussing different aspects of huanlian creation, porting software, and translating techniques to help Chinese viewers bypass local restrictions, he realized that he could learn a lot by trying to create this sort of content himself. The author's goal was to understand more about synthetic media, and by extension about ML algorithms, models, and datasets, by becoming a creator situated in the local sociotechnical context. Engaging in situated practices is a common ethnographic strategy, and in the context of contemporary digital media, creating and sharing content is a key form of participation. So, the ethnographer decided to try deepfaking himself into some popular images and videos.

De Seta's first experiment was based on a specific generative model: the First-Order Motion Model for Image Animation (Siarohin et al., 2020), a framework capable of animating an object in a static image according to the motion of another object in a driving video. Siarohin and colleagues shared the source code for the First-Order Motion Model on GitHub and even set up a demo on Google Colab, allowing users to play with the model in the browser. The convenience of this demo turned it into the perfect tool for creating humorous clips, and users from around the globe started uploading their own content to make, among many other things, countless versions of a specific meme based on “Dame da ne,” a song from the Japanese video game series Yakuza. “Dame da ne” videos made with the First-Order Motion Model were quite popular on Bilibili, and several uploaders used it to animate characters from Chinese popular culture according to the song's lyrics. Making a video of the ethnographer's own face from a still photograph and the original video of a man singing the song in a dramatic way was a quick and convenient process: following the instructions of a news article on the topic and a tutorial video uploaded on Bilibili, the author loaded both files into the Google Colab notebook and ran it for a few minutes. He then uploaded the resulting video to his own Bilibili profile, tagging it like similar pieces of content in the hope of being noticed by the platform's huanlian community. Despite several news reports mentioning that Bilibili was actively deleting huanlian content, his video went through the platform's verification process without problems. Besides offering some insights into the copyright policies of Bilibili, uploading his own video also helped de Seta contact other creators and ask for feedback and suggestions. One of them, for example, told the researcher that they were not able to access the Google Colab notebook since Google services are blocked in China, and they had to write their own Python script to run the model downloaded from GitHub.

De Seta's second experiment was much more involved. The researcher wanted to expand his skills as a huanlian creator beyond smartphone apps and in-browser demos. Following the recommendation of another creator he contacted on Bilibili, de Seta downloaded FaceSwap, an open-source program capable of generating deepfakes through multiple models, which includes a user-friendly interface to fine-tune parameters without the need to write any code. His goal was to create a version of a popular humorous viral video featuring actor Jackie Chan. After watching some tutorials made by other Bilibili creators, de Seta downloaded the original viral video and shot a video of his own face, then extracted the faces from both videos and started training one of the deepfake models included in FaceSwap. While FaceSwap hides much of its computation behind the software's user interface, watching the training process yielded interesting insights: the author could observe how an ML model synthesizes a human face by pulling increasingly accurate probability distributions of pixels from the latent space it builds during training (Figure 2). The process was also clearly asymptotic: FaceSwap homed in on a blurred version of the ethnographer's face within a few thousand iterations, and between 10,000 and 20,000 iterations the improvements in clarity were noticeable. As the training moved into the hundreds of thousands of iterations, improvement was much slower.

Figure 2. Training output preview of the FaceSwap deepfake model after a few thousand iterations, showcasing how facial features emerge from a blur as the model learns to map them between original and swap datasets.

Generating individual frames from the trained model, combining them into a video file, and adding the original audio was a matter of minutes; the result, which de Seta uploaded on Bilibili and shared with a few friends for laughs, was not that different from his first attempt at creating huanlian content: a funny and glitchy animation showcasing the potentials and shortcomings of media synthesis. Despite some trial runs and tinkering with parameters, the video he spent hundreds of hours creating had noticeable flaws. First, the differences in facial structure hindered the transfer of the ethnographer's features onto Jackie Chan's movements, resulting in misalignment and artifacts in the synthetic face. Second, the researcher's lack of experience meant that he shot footage of himself from a different angle than the one at which Jackie Chan appears in the source video, which further compromised the effectiveness of training. These issues could have been mitigated by retraining the model with better footage and more suitable face extraction masks, which would in turn have meant hundreds more hours of computation. For this project's purposes, an imperfect but funny huanlian video was good enough: in its failure of realism, it demonstrates how much skill is still involved in crafting convincing synthetic media. As a professional huanlian creator interviewed by de Seta confirmed, using software like FaceSwap proficiently requires numerous attempts, a willingness to learn by trial and error, and sustained engagement with documentation and fellow creators.

Methodologically speaking, these two experiments highlight the ethnographic value of becoming a user of ML algorithms, models, frameworks, or larger systems. The first experiment allowed the researcher to follow a framework for image animation, developed in collaboration between academia and the tech industry, as it traveled from computer science conferences to social media around the world through the mediation of open-source repositories. Even if creating an animation through the First-Order Motion Model demo was rapid and convenient thanks to remote computation and prescripted routines, the whole process allowed de Seta to peer inside the opaque box of a specific ML model and observe its social “unboxing” through tutorials, local translations, and private communications. The second experiment showed that while consumer apps, pretrained frameworks, and demos sustain an imaginary of accessibility and convenience, training and fine-tuning a model takes more time, skill, and computing power; after hundreds of hours of processing and flawed outputs, the ephemerality of synthetic media is counterbalanced by the accumulation of insights into the amount of tinkering its production requires. Both experiments exemplify a kind of field device: the creation of content through active participation in communities developing around the use of generative models and the circulation of synthetic media. This field device foregrounds creative participation as a probe into the situated practices through which users make sense of, discuss, and speculate about generative AI, expanding participant observation through a hands-on approach.

Probing the limits of historical data: Generative models as trace archives

Matti Pohjonen has experimented extensively with using TTI models as trace archives of historical events. His research is premised on the fact that new generative AI models ultimately reflect, albeit often in nonlinear and unpredictable ways, the data they have been trained on. As such, they can be repurposed as archives of traces about the world: assemblages of text and images (Deleuze, 1988, pp. 47–69) that reflect the historical, cultural, political, and technological dynamics of the society that produced them. As a result, generative models are “likely to amplify existing societal biases and inequities—indeed, to the extent that ML artefacts are constructed by people, biases are present in all ML models” (Luccioni et al., 2023, p. 1). At the same time, because these models reflect the societal context in which they are developed, the information embedded in generative models can be thought of as constituting massive archives of the knowledge produced at a given historical moment. Shifting our analytical focus from how these AI models are biased (that is, how they might misrepresent the world) to how they also re-represent the world can thus provide a magnifying lens on the historical “systems of discursivity” (Deleuze, 1986, pp. 1–23) of the societies that produced them, what Ervik calls the “technology-guided social imagination” of generative AI (2023). Even when the data used to train AI models is not readily available for analysis, using these models as a component of long-term ethnography can thus help the researcher better understand the social, cultural, and political conditions that shape “the field” in which they operate. How, then, could we repurpose generative models as field devices to better understand the conditions of possibility of these discursive systems?

To pursue this question from a methodological angle, Pohjonen's work has probed how historical events, such as the 1984 Ethiopian famine, are reimagined by generative models. This research builds on Pohjonen's long-term engagement with themes of memory and representation, grounded both in his childhood experiences growing up in Ethiopia during the famine and civil war of the 1980s and in his subsequent research on contemporary digital politics in Ethiopia. As part of the broader context for this work, Pohjonen crowdfunded a research project and experimental art book that combined oral histories of people who grew up in Ethiopia during the 1980s with an exploration of his own childhood memories and a 22-day “autoethnographic” walk across the remote mountainous regions of Ethiopia most affected by the famine and civil war. During this trek, he used photography and digital design to explore, among other things, how his childhood memories and the powerful yet selective global media representations of the civil war and famine intersected and clashed with the embodied experience of walking 400 km through harsh landscapes and of talking with the people who lived there (Pohjonen, 2015). Focusing on an “iconic media event” (Franks, 2013) such as the 1984 Ethiopian famine offered his research three methodological advantages: first, the event has been represented through a narrow repertoire of visual content (e.g. the popular images of starving children and desolate landscapes canonized by Western news coverage and by other popular culture imaginaries such as Live Aid); second, despite this limited visual diversity, the famine has become an influential visual trope through which complex problems in Africa have historically been represented (see Gill, 2011; Sorenson, 1991); third, it allowed Pohjonen to situate his exploration of TTI models within the wider context of his long-standing engagement with global representations of the famine.

As one of the strategies of this ongoing work, Pohjonen has been systematically testing how successive generative models represent the 1984 famine over time. For example, when prompted with “1984 famine and drought in Ethiopia,” the VQGAN-CLIP model (Crowson et al., 2022) and early versions of Stable Diffusion produce recurring motifs of dark-skinned, almost skeletal human figures roaming across desolate landscapes. The visual capabilities of TTI models improved rapidly between 2022 and 2023, but despite the increasingly photorealistic quality of their outputs, similar motifs emerge in later model versions as well (Figure 3). These improved capabilities have been aided by the development of a new lexicon of prompts and prompt modifiers that can guide the aesthetic look of images with a surprising degree of nuance. When given a similar prompt augmented with prompting “tricks,” such as qualifiers added to guide the image output (“ultra-detailed, photo realism, dreamlike lighting, magical photography, dramatic lighting, 18 mm lens, f/16”), Midjourney still generates images featuring the visual tropes described above, albeit now rendered in a photorealistic style. This allowed Pohjonen to observe how rapid progress in the visual quality of TTI model outputs contrasts with the relative homogeneity of the visual forms they generate: skinny human figures, protruding bones, ragged crowds, desolate landscapes. This suggests that the homogeneity of these “strong features” (Salvaggio, 2023, p. 90) reflects the latent representational structures that the model has learned to associate with concepts such as “famine” and “Ethiopia” from its training data.
This can be further corroborated by reverse-engineering the image-text assemblages of the Large-scale Artificial Intelligence Open Network (LAION) dataset on which Midjourney is reportedly trained: for example, by searching the dataset for “Ethiopia” and “famine” through an open-source CLIP retrieval tool, Pohjonen found several influential (and, for some, infamous) media representations of the 1984 famine, including dramatic photographs and magazine covers.
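For readers unfamiliar with CLIP retrieval, the underlying operation can be illustrated with a minimal sketch. Assuming that captions or images have already been encoded as vectors in a shared text-image embedding space (which is what CLIP provides), retrieval amounts to ranking dataset entries by their cosine similarity to the embedded query. The three-dimensional vectors and captions below are hypothetical placeholders, not actual LAION entries or CLIP embeddings, and the code does not reproduce the specific retrieval tool Pohjonen used.

```python
import math

def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0

def retrieve(query_vec, index, k=2):
    """Return the k captions whose embeddings are most similar to the query."""
    scored = [(cosine(query_vec, vec), caption) for caption, vec in index.items()]
    scored.sort(reverse=True)  # highest similarity first
    return [caption for _, caption in scored[:k]]

# Hypothetical toy embeddings standing in for CLIP vectors of dataset captions.
index = {
    "press photograph of a relief camp": [0.9, 0.1, 0.0],
    "magazine cover about drought": [0.8, 0.3, 0.1],
    "city skyline at night": [0.0, 0.2, 0.9],
}
# Hypothetical embedding of a text query such as "Ethiopia famine".
query = [1.0, 0.2, 0.0]

print(retrieve(query, index))
```

Real retrieval tools run the same ranking over approximate nearest-neighbour indices of billions of vectors; the point here is only that what comes back is whatever sits closest to the query in the learned embedding space.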

Two outputs of the VQGAN-CLIP model prompted with “1984 famine and drought in Ethiopia” (top) and one output of the Midjourney model (version 5.0) prompted with “real-life photography that shows the devastating effects of the 1984 famine on the people of Ethiopia, images of malnourished and starving people, images of the dry, barren, mountainous landscape that contributed to the famine, ultra-detailed, photo realism, dreamlike lighting, magical photography, dramatic lighting, 18 mm lens, f/16.”

Through these experiments, Pohjonen has probed the limits of historical data by approaching generative models as trace archives: multimodal assemblages that contain mathematical representations of the data used to train them. By focusing on a specific kind of model (TTI) and an iconic media event (the 1984 famine in Ethiopia), the ethnographer can combine deep contextual knowledge of a subject matter with hands-on experimentation, following these traces to explore controversial topics such as the representation of historical events by generative models (Offert, 2023). While training datasets are nearly impossible to explore qualitatively because of their scale (Pipkin, 2020), recent work on the art of prompting (or “prompt engineering”) has framed it as a distinctly qualitative method for interacting with generative models (Carter, 2023). Probing generative models through prompting should not be approached as a “hard science”; rather, as Oppenlaender notes, it resembles a dialogic interaction with the system: “A practitioner typically will run a prompt, observe the outcome, and adapt the prompt to improve the outcome […] prompt engineering, thus, is iterative and practitioners formulate prompts as probes into the generative models’ latent space” (2022, p. 4).

At the same time, it is worth stressing that even skillful prompting does not by itself constitute an ethnographic activity. To add a further layer to existing approaches such as the repurposing of digital methods suggested by Rogers (2013), this field device needs to be used as part of a long-term engagement with a subject matter in order to qualify as “ethnographic,” in a way that moves the focus of research beyond the technical specifications of AI models to include the critical sensibility characteristic of ethnographic research and writing (Clifford and Marcus, 1986). When understood as a dialogic and iterative interaction between a human and an ML system over time, prompting shares some similarities with ethnographic fieldwork, in which knowledge is likewise produced through trial and error in recurrent and sustained engagement, as the nuances of field sites emerge in their relevance and become better known to the researcher. In the case of TTI models, this process can be facilitated by learning heuristic research tricks from other users, such as selecting appropriate subject terms, learning the relevant style modifiers, crafting accurate image prompts, and relying on documentation developed by generative AI communities through their long-term engagement with rapidly evolving models. This approach exemplifies another field device for ethnographic research: the close exploration of trace archives, following their transmutations in the latent spaces of AI models, which complements a more classical ethnographic engagement with the “statements and visibilities” (Deleuze, 1986, p. 41) that these models reproduce about a specific research topic.
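The systematic, comparative side of this prompting practice can be made explicit with a small sketch of a prompt matrix: every subject term is combined with every set of style modifiers and paired with every model version, so that outputs can be generated, archived, and compared across versions over time. The model labels are hypothetical, the modifiers are a subset of those in the Midjourney prompt discussed above, and no model is actually called; this is an assumed bookkeeping protocol, not a description of Pohjonen's own workflow.

```python
from itertools import product

def prompt_grid(subjects, modifier_sets, model_versions):
    """Return one record per (model version, subject, modifier set) combination.

    Each record would be run through the corresponding model's own interface,
    with the outputs archived next to it for later comparison.
    """
    records = []
    for model, subject, modifiers in product(model_versions, subjects, modifier_sets):
        # Style modifiers are appended to the subject as comma-separated qualifiers.
        prompt = ", ".join((subject, *modifiers))
        records.append({"model": model, "prompt": prompt})
    return records

subjects = ["1984 famine and drought in Ethiopia"]
modifier_sets = [
    (),  # bare subject, no style modifiers
    ("ultra-detailed", "photo realism", "dramatic lighting"),
]
models = ["model-A", "model-B"]  # hypothetical labels for successive model versions

for record in prompt_grid(subjects, modifier_sets, models):
    print(record["model"], "|", record["prompt"])
```

Keeping the bare subject alongside the modified prompts makes it possible to see whether motifs persist when only the aesthetic qualifiers change, which is the kind of contrast described for the famine prompts.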

Repurposing latent spaces: Synthetic media generation as speculative modeling

Generative models like DALL·E 2 and ML frameworks like GANs are commonly thought of as accessible ways to generate images. But could they also be used to study social phenomena, such as the visual aspects of social processes in cities? The work of Aleksi Knuutila seeks to answer this question through the strategy of speculative modeling, in which attributes of urban environments (such as recurring visual patterns and spatially stratified data production) are leveraged to repurpose generative models for social scientific inquiry. Speculative modeling repurposes the latent spaces of ML frameworks to create novel relations between disparate types of data, the model, and its users. Knuutila bases his work on street-level photography of London, which he uses to explore the connection between the built environment and social processes, a frequent topic in ethnographic work and in subdisciplines such as human geography and urban studies. This way of applying generative models has not yet been used as part of long-term fieldwork, but the case study discusses how this device could enable ethnographic research.

Knuutila's project

The speculative modeling behind This inequality does not exist is close to the concept of “spawning” proposed by musician Holly Herndon to describe the generation of new music through the musician's interaction with a model trained on a dataset of samples (Wilson, 2022). Rather than reproducing sounds (as with sampling), spawning takes some aspect of the original (such as the timbre of a sound or the style of a piece of music) and applies it to another object (such as a vocal line or a synthesizer pattern). GANs make it possible not only to generate realistic images but also to manipulate them through a form of spawning called “style transfer” (Jing et al., 2019), for instance, by taking a photograph and synthesizing a version that preserves its content or subject but looks as if it had been painted by Van Gogh. The relatively novel aspect of This inequality does not exist is that it applies this process of spawning to aspects of the city whose visual qualities (the style to be transferred, in this case) are not known a priori, and that it allows users to generate unlimited outputs that can be read comparatively (Figure 4). Inspired by a long tradition of walking as a method of knowledge production (for example, the activist group Precarias a la Deriva organized walks through Madrid with nurses trying to reconcile their varying experiences of the city through dialogue; Precarias a la Deriva, 2004), Knuutila has conceptualized the “latent walk” as an analogy for working through assemblages of synthetic data (Knuutila, 2023, p. 8).

A grid displaying synthetic images of London streets generated by the This inequality does not exist GAN according to parameters reflecting visual patterns related to income, education, and health.

Knuutila's walks through latent space, accompanied by two other residents of London, resulted in several observations that exemplify the knowledge-production potential of latent walks. Moving from the portion of latent space associated with low-education areas toward the portion associated with high-education areas produces a shift from 1930s and postwar building stock to Victorian facades. Roof pediments become more frequent, and window frames become more decorated. Hedges and bushes in the front yards grow, giving the houses a sense of privacy and seclusion. Walking instead along the direction associated with higher income produces different visual characteristics, with Victorian bay windows giving way to flat Edwardian edifices. Raw brick edifices become coated with whitewashed stucco, and even the sidewalks change, with bare tarmac turning into paving stones. Walking toward higher levels of health, in turn, appears to be associated less with greenery than with increased space: front gardens become more extensive, and windows added to attics indicate roof conversions for additional living space. More trees appear on the streets, and the houses move further away from the cars. These observations demonstrate how a latent walk can make tangible the visual correlates of abstract qualities such as inequalities in income and education. As a method for producing scientific knowledge, latent walks have obvious limitations: as with other speculative approaches, there is no established way to validate the results, that is, to distinguish the biases and omissions of the model from its representation of social phenomena. Unlike methods with agreed-upon practices of application and verification, speculative modeling is “a provisional arrangement that results not from polished design but from tinkering practices” (Criado and Estalella, 2023, p. 5).
The latent walk—generating a series of synthetic images which are then qualitatively interpreted—could be used as a field device in many different research processes. In what way could latent walks form a part of specifically ethnographic modes of inquiry?

When working on subject matter such as inequality in London, one affinity between latent walks and ethnographic practice is the need for local knowledge in the close reading of generated material, and the potential for collaboration or dialogue in using this knowledge. When walking has been used in ethnographies of urban settings, one common strategy has been the “go-along”: walking together with others and comparing experiences and meanings associated with cities (e.g. Ingold and Vergunst, 2008). Similarly, doing latent walks as part of This inequality does not exist demonstrated to Knuutila the importance of “collaborative hermeneutics” (Criado and Estalella, 2023, p. 5) with participants who are intimately familiar with London's built environment. Through these collaborative “go-along” experiences, the project questions the idea that ML or modeling necessarily displaces or mimics human activities. Instead, latent walks orchestrate a speculative configuration for knowledge production that involves the “homogeneous spaces of calculation” (Amoore & Piotukh, 2015, p. 316) while retaining the importance of human interpretation. Similar speculative models can be used ethnographically in processes that create spaces of collaboration and build novel relationships. For example, in Criado and Estalella's survey of “field devices” for ethnography, one recurring pattern is the collaborative building of digital data collection infrastructures (documenting, for instance, asthma and public health challenges), which results in relationships that also support other forms of inquiry (Criado and Estalella, 2023, pp. 5–6; Fortun & Fortun, 2023). One example of generative models being used specifically to build relations and dialogue comes from Danish experiments in participatory planning, where images generated by inhabitants through prompting facilitate conversations about their surroundings (Vingaard et al., 2023).
One potential benefit of generative models for such purposes is that the interfaces they provide, such as text prompts or sliders, are more accessible than those of more technically demanding ML systems.
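Technically, a latent walk of the kind described in this section can be sketched as a sequence of evenly spaced points along a direction in a GAN's latent space, each of which is then decoded into an image. The sketch below assumes that such an attribute direction (for instance, one associated with area-level income) has already been estimated. How This inequality does not exist derives its directions, and the trained generator that turns each latent code into a street view, are not shown and should be treated as assumptions; the two-dimensional vectors are toy values.

```python
import math

def latent_walk(z_start, direction, steps=5, max_step=2.0):
    """Return `steps` latent codes evenly spaced along `direction`.

    The walk starts at `z_start` and ends `max_step` units away from it,
    measured along the normalized direction. In practice each code would be
    passed to a trained generator (not included here) to synthesize an image.
    """
    if steps < 2:
        raise ValueError("a walk needs at least two steps")
    norm = math.sqrt(sum(d * d for d in direction))
    if norm == 0:
        raise ValueError("direction must be non-zero")
    unit = [d / norm for d in direction]
    codes = []
    for i in range(steps):
        t = max_step * i / (steps - 1)  # distance travelled along the direction
        codes.append([z + t * u for z, u in zip(z_start, unit)])
    return codes

# Toy example: a 2-d latent space and a hypothetical "income" direction.
walk = latent_walk([0.0, 0.0], [3.0, 4.0], steps=3, max_step=2.0)
for code in walk:
    print([round(x, 2) for x in code])
```

A straight line is only the simplest choice of path; the interpretive work described above begins once the decoded images are read side by side, ideally together with people who know the streets they resemble.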

A generative synthesis

Generative AI is here to stay. Or is it? In the constant attempt to catch up with industry innovations and institutional responses, the relevance of specific ML models, frameworks, and approaches might wane before academic research manages to offer substantive theoretical and methodological contributions to the field (Roberge & Castelle, 2021, p. 2). In this article, we have proposed a methodology for the qualitative study of generative models based on experimentation with field devices: adding to the proliferation of methodological buzzwords, we call this approach synthetic ethnography. This methodological proposal does not emerge in a vacuum: researchers across disciplines have already started exploring the social and cultural lives of generative AI. Some ethnographers have studied early adopter communities, documenting the emergence of prompting practices (Oppenlaender, 2022) and representational glitches (Wasielewski, 2023); others have investigated the adoption of generative AI by professional users such as game designers (Vimpari et al., 2023) or fan artists (Lamerichs, 2023). Scholars in the humanities have also begun developing methodological strategies to study synthetic media and generative imagery in particular (Wilde, 2023), including a “genealogical method” to study datasets (Denton et al., 2021), “critical image synthesis” as speculative practice (Carter, 2023), the “promptological approach” to models (Bajohr, 2023), and even a step-by-step process of visual semiotic analysis for AI-generated images (Salvaggio, 2023).

Our proposal builds upon these emerging approaches and offers a generative synthesis of their strengths. With its emphasis on devising collaborative field devices, synthetic ethnography pushes ethnographic studies beyond participant observation and toward a hands-on, experimental engagement with generative models. At the same time, its grounding in long-term, collaborative, and situated qualitative research invites media studies researchers to complement critical readings of synthetic media with participation in the social worlds in which they are created and circulated. Synthetic ethnography is not simply the use of generative AI to “improve” qualitative research (Bail, 2023), nor is it drastically different from other ethnographic approaches to digital media. Rather, it follows decades of developments in digital ethnography, in which “fabrication” has been discussed as an ethical strategy (Markham, 2012) prefiguring synthesis, and shaking the black boxes of algorithmic systems has been recognized as a generative practice (Diakopoulos, 2015). In this article, we have illustrated our proposal through three case studies, each developing a different field device: de Seta's experimentation with deepfake models in the Chinese context demonstrates how creative participation in communities of practice can become an ethnographic probe; Pohjonen's long-term exploration of TTI systems through an iconic media event shows how generative models can serve as trace archives; Knuutila's speculative modeling project, which generates street views of London guided by socioeconomic indices, shows that latent spaces can be repurposed into walkable spaces for collaborative interpretation. These three case studies are not an exhaustive inventory of synthetic ethnography; rather, we expect others to develop their own field devices, inspired by the methods of the model and responsive to rapidly changing technologies and techniques.

To conclude, we circle back to the first illustration of this article: one of the most iconic representations of ethnographic fieldwork, a photograph of anthropologist Bronisław Malinowski sitting with Trobriand Islanders, likely shot by pearl trader Billy Hancock sometime in 1917 or 1918, which we processed through the DeepDream algorithm released by Google nearly a century later. In a sense, not much has changed in the politics of ethnographic image production: a white anthropologist centered among unnamed natives, an uncredited photographer, and unmarked representational politics. This image has also likely ended up in the training sets of multiple generative AI models, skewing what prompts like “anthropologist” or “ethnographic fieldwork” will output in the future (Figure 5). And yet, the algorithmic logics of generative AI and synthetic media throw a spanner in the works of ethnographers. One of the few mentions of “synthetic ethnography” in academic literature is found in Tobias Rees's book After Ethnos, where he discusses Malinowski's ethnographic proposal vis-à-vis Radcliffe-Brown's abstract functionalism:

Malinowski likened anthropology to the arts—the challenge was to immerse oneself in the everyday life of a particular group; to discover, by way of attending to their conversations and habits, the “underlying ideas” that structure the natives’ lives; and to then learn how to vividly describe, as a novelist describes (as a painter paints) the life of the primitive [sic] in such a way that the underlying ideas are rendered visible in the concrete—without rescue into the abstraction. (Rees, 2018, p. 79)

Four images generated by Stable Diffusion following the prompt “Polish–British anthropologist Bronisław Malinowski sitting with Trobriand Islanders” in July 2023.

Footnotes

Declaration of conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

Gabriele de Seta's work on this article was supported by the ERC Starting Grant project “Machine Vision in Everyday Life” (Grant Agreement No. 771800) and the Trond Mohn Foundation Starting Grant “Algorithmic Folklore” (Grant Agreement No. TMS2024STG03).