Abstract

Early enthusiasts imagined a cyberspace free from centralized control. Today, Internet platforms surveil and regulate user activity through systematic governance mechanisms, including content moderation. This article examines how centralized control became the taken-for-granted solution to platform challenges, a shift that abandoned the dream of self-governance. By analyzing the rise of “Trust and Safety” at eBay between 1995 and 2007, I show that platform governance emerged to align eBay with corporate pressures as it transitioned from a startup to a public, multinational corporation. Trust and Safety at eBay came to designate a department, a discipline, and a philosophical approach to questions concerning the governance of users, synthesizing two competing visions: Pierre Omidyar's cyberlibertarian ideal of community self-governance through mutual surveillance, and Meg Whitman's corporate vision of a centrally regulated “well-lit marketplace.” Drawing on internal and external company documents, I demonstrate how Trust and Safety provided a moral justification for centralized governance by framing corporate vigilance as user protection, making control of users compatible with the “community” ethos of early Internet culture. As an early commercial platform, eBay acted as a laboratory for platform governance, where the visions and practices of Trust and Safety were developed and then exported to other major platforms.

Cyberutopians of the early 1990s viewed digital realms as inherently devoid of centralized authority. “Our identities have no bodies, so, unlike you, we cannot obtain order by physical coercion. We believe that from ethics, enlightened self-interest, and the commonweal, our governance will emerge,” said John Perry Barlow (1996) in his “A Declaration of the Independence of Cyberspace.” Had Barlow traveled forward in time, he would likely have been dismayed by the transformation of his digital utopia into a landscape of control. Contemporary platforms detect and restrict user activity they categorize as “deviant” according to prevailing norms; this is why scholars argue that platforms govern user behavior (Gillespie, 2017; Gorwa, 2019). While cyberlibertarians envisioned the digital space as allowing for unbridled speech, today's platforms are premised on content moderation, which information science scholar Sarah Roberts (2022: 33) defines as “the organized practice of screening user-generated content posted to internet sites, social media, and other online outlets.”

How did content moderation and the vigilant monitoring of user activity become taken-for-granted practices in the platform economy? This article explores this question by examining eBay, an early commercial platform and online marketplace, between 1995 and 2007. During this period, eBay pioneered “Trust and Safety”—a governance paradigm that synthesized cyberlibertarian ideals of community self-regulation with centralized surveillance. This paradigm emerged as eBay navigated pressures from investors, regulatory bodies, and corporate boards during its transformation from startup to publicly traded multinational corporation.

Extant scholarship has focused on the logics and practices behind platform governance (Gillespie, 2018; Klonick, 2018; Gorwa, 2019) and has connected governance mechanisms to growth pressures (Goldsmith and Wu, 2008; Lehdonvirta, 2022; Lingel, 2020). eBay's case, as an early example of the dynamics that came to dominate subsequent platforms, offers a window into how centralized governance became institutionalized (Meyer and Rowan, 1977; DiMaggio and Powell, 1983) as the legitimate response to platform challenges. In responding to challenges from investors, regulatory bodies, and corporate boards, eBay developed a governance apparatus that satisfied these actors’ expectations about how organizations should operate. As an early commercial platform, eBay acted as a “laboratory” where governance visions and practices were developed and later exported throughout the platform ecosystem, shaping how governance is understood and practiced across the industry today. 1

While Trust and Safety today refers to an established field of specialists, the term emerged at eBay as a novel discourse that synthesized two seemingly contradictory views. One view came from Pierre Omidyar, who founded the company in 1995. Inspired by cyberlibertarian ideals—the belief that digital technologies would naturally enable decentralized, self-governing communities free from traditional hierarchies (Turner, 2006)—he saw eBay as destined for self-governance and developed a website feature, the Feedback Forum, to achieve this. In contrast, Meg Whitman, who took the company's reins in 1998, sought to transform the site into a “well-lit marketplace,” where specialists and algorithms monitored and regulated user activity. Through an analysis of internal and external company documents, 2 I argue that Trust and Safety provided a moral justification for Whitman's governance ideal by linking centralized control to Omidyar's cyberlibertarian rhetoric. This synthesis transformed the delivery of a “positive user experience”—the perceived value proposition of online platforms (Gillespie, 2018)—into a mandate for sanitization and safeguarding, aligning eBay with American business culture while pioneering governance practices that would define the platform industry.

The article begins by framing eBay's story, reviewing approaches to the rise of platform governance and providing a historical contextualization. The second section examines Omidyar's self-governance ideal, including an analysis of the Feedback Forum. In the third section, the analysis turns to Whitman's tenure, during which she advanced a range of efforts to surveil and regulate user activity. The fourth section shows how Whitman's view matured into Trust and Safety, referring to a department, a discipline, and a philosophy that would synthesize eBay's approach to platform governance. Lastly, the conclusion discusses how Trust and Safety went on to become a powerful institutional myth that outlived eBay's heyday.

From infrastructure to early platforms

The seemingly dramatic reversal from cyberlibertarian self-governance to centralized platform control occurred against the backdrop of an economic transformation: the Internet's primary profit model shifted from selling access to the network to offering access to online services. Cyberspace enthusiasts mostly viewed digital environments as devoid of regulation and governed by the gift economy, a non-market exchange system. With the rise of dot-coms as the key business opportunity in the digital economy, companies began treating interactivity as a service to be commercialized, a shift that would pave the way for centralized platform governance. This section traces that historical evolution.

For thinkers of digital culture in the early 1990s, centrally governing cyberspace was both impossible and undesirable. They believed that, by design, virtual communities like Usenet bulletin boards were structurally incompatible with centralized control, whether governmental or corporate. Howard Rheingold (1994), then editor of The Whole Earth Review, argued that virtual communities could uniquely self-regulate through “netiquette”—implicit principles of online civility rooted in the “gift economy.” Drawing on anthropologist Marcel Mauss ([1925] 2002), Rheingold envisioned cyberspace as fostering non-market exchanges based on reciprocal giving rather than monetary transactions. 3 By sharing information freely, users could filter unwanted content or self-select into communities with compatible values, thereby maintaining peaceful interactions without the need for centralized authority. This gift economy logic, as later argued by legal scholar Yochai Benkler (2002, 2006), enabled “commons-based peer production”—spontaneously formed organizations that developed collaborative projects such as Wikipedia or Linux. In this vision, free access to information combined with social recognition for contributions would naturally maintain order, making centralized moderation unnecessary.

It is worth noting that this dream of self-governance was, for the most part, merely a dream. For one, critics showed how online communities did not offer a haven from the embodied nature of race and gender, and that self-curated information could do little to sway these perceptions (Dibbell, 1994; humdog, 1999; Nakamura, 2002). Moreover, online communities certainly had moderators. For example, online bulletin boards were moderated by volunteer “sysops,” who often acted arbitrarily (Zittrain, 1997; Driscoll, 2022). This mode of governance, however, bore little resemblance to the systematic approaches that would define Trust and Safety. Virtual community governance was unsystematic and not-for-profit; it reflected what governance scholar Robyn Caplan (2018) characterizes as “artisanal” and “community-reliant” approaches to content moderation.

The naturalization of platform governance through systematic vigilance and formalized norms was driven by an economic transformation in which the prime opportunity to profit from the Internet shifted from selling connectivity to offering services that operated on Internet infrastructure. As the U.S. government privatized the Internet by 1995, Internet service provider companies emerged as the primary means of profiting from the Internet's growing popularity by selling users network access for a fee (Greenstein, 2015). Soon after, investors were wooed by the emergent market opportunity of dot-coms: companies that offered services running on the Internet infrastructure and that could “scale up” rapidly. Dot-coms offered graphical HTML-based designs and dynamic interactions that ran on Internet browsers, all thanks to the technical innovations developed by Tim Berners-Lee and his collaborators (Abbate, 1999; Greenstein, 2015).

Among these dot-coms, a particular category emerged: sites that relied on user participation and user-generated content. These were early examples of what would become known as platforms (Gillespie, 2010; Poell et al., 2019). These sites preserved the social interaction aspects of earlier virtual communities while introducing commercial intermediation of those relationships—in other words, they monetized the gift economy (Turner, 2009; Elder-Vass, 2016; Fourcade and Kluttz, 2020). They experimented with new modes of commercialization: for instance, eBay took a cut of users’ sales and auctions, while Match.com charged $9.95 monthly to subscribe to its dating service, thus commercializing romantic connections (Angwin, 1998). This was afforded by the Internet's distributed infrastructure, through which web-based services could theoretically serve millions of simultaneous users (in contrast, earlier bulletin boards were limited by the capabilities of telephone lines, which restricted the number of concurrent users [see Driscoll, 2022]).

Many scholars have identified how platform governance emerged as these companies had to respond to the challenges of user growth and user demands. Communication scholar Jessa Lingel (2020) notes that sanitized online spaces rose “as more people came online and new platforms sprouted to meet their needs” (2). Gillespie (2018) argues that platforms began making decisions regarding content moderation as they came to perceive content as the primary commodity they offered. From that perspective, allowing any content would turn a platform into a “cesspool” (207). Legal scholar Kate Klonick (2018) argues that leaders of platform companies decided to start moderating content to “reflect the normative expectations of users” (1630) and align with their corporate philosophies. Analyzing the specific case of eBay, economic sociologist Vili Lehdonvirta (2022) argues that the site created a system of norms and user policing to counteract the prevalence of “market failures” (52), such as fraud and scams. Similarly, legal scholars Goldsmith and Wu (2008) argue that eBay established a fraud prevention team to address legal and reputational risks as its user base expanded and fraud cases increased.

While these accounts identify important dynamics, they leave open the question of how vigilant monitoring and norms-based control of user activity became commonplace and widely accepted—a striking transformation, given Silicon Valley's libertarian ethos. Neo-institutional theorists offer an account of how particular practices become taken-for-granted in organizational fields through the concept of “institutionalization.” Organizations do not merely seek efficiency when shaping their formal structures (their work practices and divisions of labor), but also pursue legitimacy in the eyes of key stakeholders. They do this by incorporating “myths” from their institutional environments, widely accepted ideas about how organizations should be or should act (Meyer and Rowan, 1977). Through this process, particular practices become institutionalized across an organizational field—they become the taken-for-granted solutions that organizations are expected to adopt to be viewed as legitimate. Institutionalization occurs through “isomorphic pressures”: coercive (from regulators and powerful stakeholders), mimetic (imitating other organizations under uncertainty), and normative (through professionalization efforts) (DiMaggio and Powell, 1983).

Applying this framework to eBay's case reveals how institutional pressures from external actors produced centralized governance as the taken-for-granted solution. Rather than treating the centralized curation of information as an inevitability—a vision that is core to the discursive apparatus of contemporary platforms (Gillespie, 2010)—we can examine what particular institutional pressures and worldviews drove dot-coms to move towards centralization. eBay's integration into new institutional environments subjected it to pressures from financial markets, corporate boards, legal regulators, and established retail companies—pressures that cemented centralized governance as the legitimate solution. The following sections analyze three governance imaginaries that emerged throughout eBay's transformation: Pierre Omidyar's vision of self-governance, Meg Whitman's vision of a well-lit marketplace, and, finally, Trust and Safety as the synthesis of these competing visions.

Pierre Omidyar's self-governing machine (1995–1998)

In the spring of 1995, during dinner with then-boyfriend Pierre Omidyar, Pam Omidyar (they recently married) dreamt of an online destination where she and other Pez lovers could talk about and trade their plastic candy dispensers. Pierre's interest was piqued. So, with his future wife's happiness in mind, the 27-year-old computer programmer wrote the software to power eBay. On Labor Day of that year, Omidyar launched the site from the family room of his Campbell, Calif., home (Cheng, 1999).

This mythical origin of eBay, as narrated by an article in AdWeek, is not factual; it is actually a fabrication of the company's public relations manager (Cohen, 2002). Despite this, it is emblematic of Pierre Omidyar's vision for eBay: a community, inspired by the romanticized sociality that supposedly characterized early online communities. This vision was most evident in a feature Omidyar championed throughout his tenure, from September 1995 to February 1998: eBay's Feedback Forum. This quantified reputation system mediated market relationships between unknown individuals and promised to make community self-governance possible.

While eBay's founding story portrayed Omidyar as an amateur, this was another half-truth. In fact, he was an experienced entrepreneur. He had previously co-founded eShop, a startup that sold e-commerce software for brick-and-mortar stores transitioning to online sales, and sold it to Microsoft in 1996 (Microsoft, 1996). At the same time, eBay did start as a hobbyist's side project. While founding “AuctionWeb” (eBay's first name), Omidyar was a full-time engineer at Mountain View-based General Magic. Silicon Valley provided a welcoming environment for Omidyar's entrepreneurialism, a milieu that combined an experimental attitude, pervasive technological optimism, and growing capital. By the time of his arrival in the Bay Area after transferring to the University of California, Berkeley, Silicon Valley had a bustling investment ecosystem and had already established itself as a hub for technological innovation through companies like Hewlett-Packard, Intel, and Apple (Saxenian, 1996). In Silicon Valley, hobbyists like Omidyar appeared to be rewarded: the region's hobbyist computing clubs—like the Homebrew Computer Club, where Apple co-founders Steve Jobs and Steve Wozniak had famously first connected—were part of a mythology that valorized tinkering as the root of innovation.

Omidyar believed that eBay could free individuals from the oppressive nature of traditional marketplaces, which were centralized, hierarchical, and controlled by powerful corporations that limited individual participation and agency. This reflects what media historian Fred Turner (2006) would later term “digital utopianism,” a belief in the liberatory powers of digital technologies. Ideas like Barlow's and Rheingold's, combining libertarianism, countercultural appeal, and techno-utopianism, were widely shared across Silicon Valley through publications like Wired—what Barbrook and Cameron (1996) called “the California ideology.” Inspired by the Usenet forums in which he had been an active participant, 4 Omidyar invoked the liberatory nature of virtual communities to articulate eBay's uniqueness. 5 In an interview for Time, he explained: “The first commercial efforts were from larger companies that were saying, ‘Gee, we can use the Internet to sell stuff to people’. (…) Clearly, if you're coming from a democratic, libertarian point of view, having corporations just cram more products down people's throats doesn't seem like a lot of fun. I really wanted to give the individual the power to be a producer as well” (Cohen, 1999). Beyond the ideological appeal, Omidyar saw the site's modeling of virtual communities as the key to its commercial success. In a retrospective interview, he argued that eBay was not “just a business about buying and selling,” as behind its success was “the not-so-obvious power” of community (Swisher, 2001).

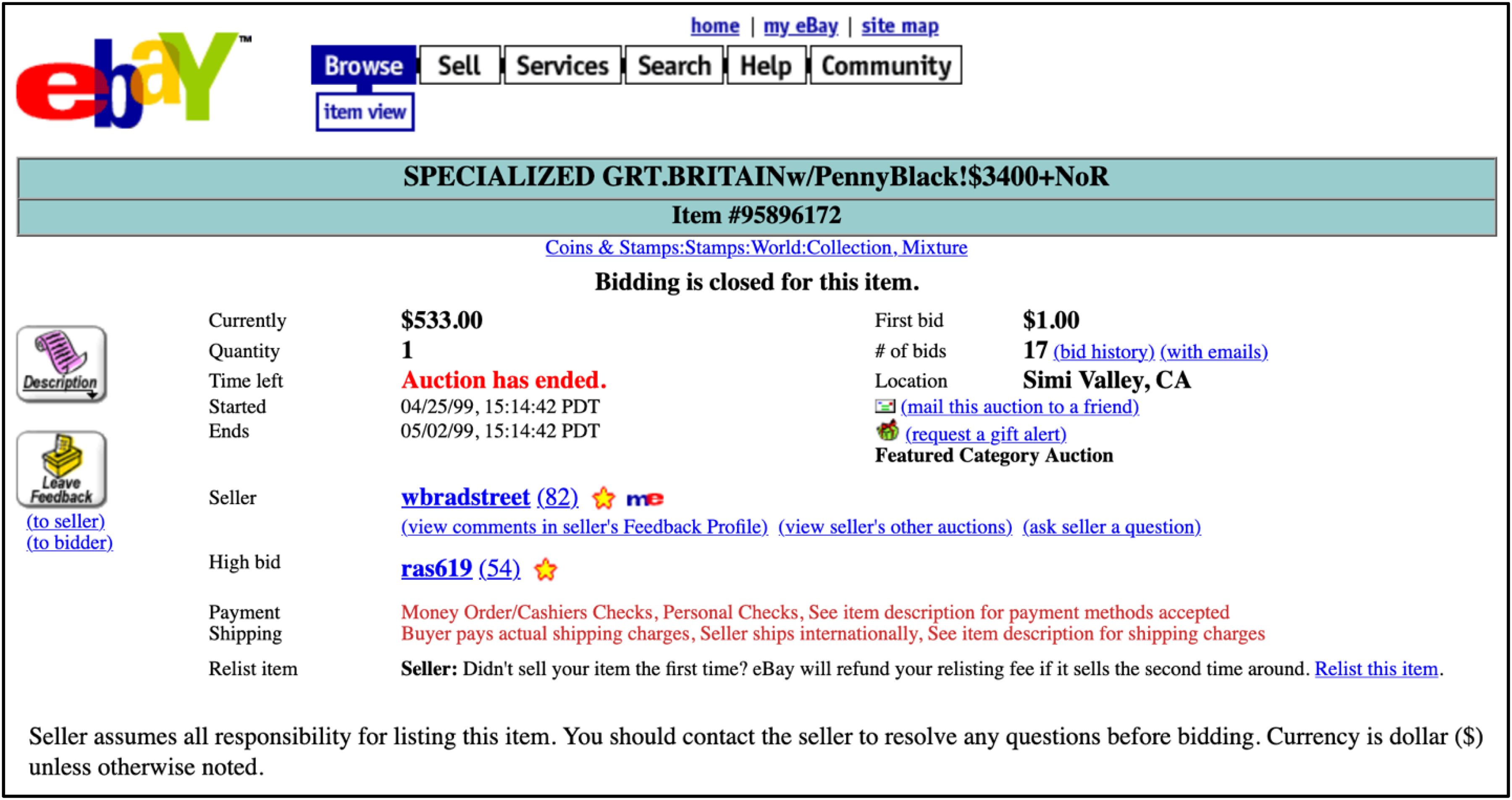

Inspired by the ideas of the gift economy and commons-based peer production, Omidyar designed a system of community-driven mutual surveillance to manage conflicts between users, which he named the “Feedback Forum.” Six months after launching the site, Omidyar announced this feature in a letter addressed to the “eBay Community” (eBay News Team, 2017): “Most people are honest. And they mean well. (…) But some people are dishonest. Or deceptive. This is true here, in the newsgroups, in the classifieds, and right next door. It's a fact of life. But here, those people can't hide. We'll drive them away.” After buying or selling an item, registered users were invited to add a comment on their interaction with the other user (for example, a buyer could note whether they received the item, or a seller could comment on payment timeliness) and rate their transaction as positive, neutral, or negative. Each registered user had an associated rating, visible next to their username (see Figure 1). High ratings gave users “stars” of different types; for example, a “shooting star” was assigned to users with the highest ratings.

Figure 1. eBay auction of a stamp collection that ended in May 1999, archived by the Wayback Machine. Next to the names of the seller and the highest bidder, the parenthetical number indicates the Feedback Rating, which is the aggregate of feedback ratings (users receive +1 point for a positive comment, no points for a neutral one, and −1 for a negative comment). Both users have “Yellow Stars,” assigned to users with 10–99 points (eBay, 1999).
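The arithmetic behind the Feedback Rating can be illustrated with a minimal sketch. It assumes only what the caption states: +1, 0, and −1 points for positive, neutral, and negative comments, and a Yellow Star for totals of 10–99 points. The function and variable names are hypothetical and are not drawn from eBay's software; other star tiers are omitted because their thresholds are not given here.

```python
from typing import Iterable

# Point values per comment type, as described in the Figure 1 caption.
POINTS = {"positive": 1, "neutral": 0, "negative": -1}


def feedback_rating(comments: Iterable[str]) -> int:
    """Aggregate a user's feedback comments into a single rating."""
    return sum(POINTS[comment] for comment in comments)


def has_yellow_star(rating: int) -> bool:
    """Yellow Star range stated in the caption: 10 to 99 points inclusive."""
    return 10 <= rating <= 99


# Hypothetical feedback history for one user.
history = ["positive"] * 12 + ["neutral"] * 3 + ["negative"]
rating = feedback_rating(history)
print(rating, has_yellow_star(rating))
```

In this sketch, a single negative comment cancels exactly one positive comment, while neutral comments leave the total unchanged. The aggregate therefore compresses a user's whole trading history into one publicly visible number.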

The Feedback Forum materialized reputation into metrics that would mediate market relationships between unknown individuals (Draper, 2019; Lehdonvirta, 2022), with the hope that it would enable users to make informed decisions about who to trade with. It created a performative public accountability system, leveraging visibility as a regulatory force. If eBay were a true community, in Omidyar's view, then public accountability could serve as its primary mechanism of governance. As ratings were reciprocal, they would deter users from antisocial behaviors without requiring any policing. The Feedback Forum also positioned users as both consumers of the site and its “producers,” as through their contributions, given away freely, they could shape eBay—the gift economy at its finest. Like Rheingold, Omidyar believed that “non-market” interactions (users were not financially incentivized to provide feedback) could provide the basis for digital self-governance.

For Omidyar, the Feedback Forum provided order without contradicting his cyberutopian, libertarian ideals. He saw it as a grand innovation in the site's governance. As Lehdonvirta (2022) notes, review systems may seem commonplace for contemporary users, but they were relatively innovative as a collective governance tool in 1996. Relying on the free flow of information via the Feedback Forum, users would be able to make decisions themselves. This dream would largely end with Omidyar's departure as CEO.

Meg Whitman's well-lit marketplace (1998–1999)

Omidyar's vision of a self-governing community based on mutual surveillance was challenged by Meg Whitman, who took over as CEO of the company in March 1998. She was not opposed to Omidyar's framing of eBay as a community; in fact, she would frequently draw on this rhetoric to justify her decisions, and it would remain a key part of eBay's marketing efforts (Whitman and Hamilton, 2010). However, while Omidyar saw eBay as a self-governing community that needed only a basic framework to flourish, Whitman believed the community required active regulation and vigilance to become a “well-lit marketplace” that met corporate expectations.

Whitman joined as eBay transformed from a startup to a corporation, bringing corporate business experience that Omidyar and co-founder Jeff Skoll lacked. By the time of her arrival, eBay had 30 employees, half a million users, and revenues of $4.7 million in the United States (The Telegraph, 2011). It had also raised $6.7 million in capital from Benchmark, which acquired a 22.1% stake and suggested bringing on a CEO with a background in consumer marketing who could mature the company in anticipation of a promising IPO. 6 Whitman had launched her career in large, traditional corporations, like Procter & Gamble, Bain & Company, Disney, and Hasbro. Many of these were headquartered on the U.S. East Coast, a radically different world from Silicon Valley's experimental, horizontal culture (Saxenian, 1996). Financial stakeholders viewed her arrival favorably. Three months after she joined, Howard Schultz (then-chairman and CEO of coffee chain Starbucks) and Dan Levitan's firm, Maveron, made a significant investment in the company. eBay went public on 24 September 1998, in a highly successful IPO. By the end of that first trading day, its stock price had increased by 163% (Schwartz, 1998).

While Omidyar fundamentally believed that eBay had to be self-governed by its users to the maximum extent possible, Whitman thought that eBay would have to monitor and shape user activity to become a household name. Her goal was to turn eBay into a “clean, well-lit place to do business,” as described in her memoir, The Power of Many: Values for Success in Business and in Life (Whitman and Hamilton, 2010). Omidyar had seen dishonest activity on the site (fraud and bad-faith transactions) as a “fact of life” (eBay News Team, 2017), which could be addressed through information flow. In contrast, Whitman was critical of this “kind of free for all,” as she called it (Whitman and Hamilton, 2010: 94). She echoed a broader trend in American politics against digital anarchy, a trend at odds with Omidyar's milieu, which had seen the Internet's apparent lawlessness as liberatory. In 1996, Congress had passed the Communications Decency Act, “the first great attack on cyberspace” (Goldsmith and Wu, 2008: 19), which criminalized transmission of “obscene or indecent” materials to minors over the Internet. While the Supreme Court struck down its anti-indecency provisions in 1997, later efforts, such as the 1998 Children's Online Privacy Protection Act, reflected a growing emphasis on corporate, rather than individual, responsibility for online activity.

To achieve this vision of a well-lit marketplace, Whitman oversaw the release of a program of “safety” features and processes, bundled under the “SafeHarbor 2.0” name (Bradley et al., 2000). Whereas the site had previously relied on mutual surveillance for governance, a press release stated that now “eBay vigilantly [looked] to protect its community” (eBay in Boyd, 2006). Although the program shared its name with the legal term “safe harbor” (a provision that protects parties from liability when they meet specific requirements), the two had little actual connection. The laissez-faire approach to user activity spearheaded under Omidyar was largely reliant on legal safe harbors like Section 230 of the Communications Decency Act (CDA) (Klonick, 2018; Kosseff, 2019) and the 1998 Digital Millennium Copyright Act (DMCA), which relieved online service providers of responsibility as long as they provided an avenue for copyright holders to report infringing materials. But under Whitman, eBay would come to lean less on these provisions.

SafeHarbor 2.0 included norms of acceptable use, which would later be known as “community guidelines” or “platform policies” (Gillespie, 2018). Some of these were adopted in response to perceived legal threats, coinciding with legal investigations in the U.S. By February 1999, eBay was under a U.S. federal investigation for its role in facilitating the trade of firearms. The following month, eBay instituted a policy to ban the sale of firearms on the site (The New York Times, 1999). In September, the policy was extended to alcohol and tobacco (Richtel, 1999). In addition, SafeHarbor 2.0 included a “shill bidding policy,” which targeted the practice of bidding on an item to drive up its price without the intention of purchasing it, a practice that could also present a legal challenge (Bradley et al., 2000). This meant that eBay was no longer concerned solely with the digital identity of users, but also with their physical one, closing the door on the cyberlibertarian dream of disembodiment and purely virtual selfhood.

These new norms reflected Whitman's belief in the responsibility of the company itself. Brad Handler, eBay's legal counsel who had defended the company's laissez-faire stance during Omidyar's tenure, argued strongly that eBay could rely on Section 230 to avoid legal liability for most user activity (Cohen, 2002). However, Whitman did not see these issues as just linked to legal penalties. In her memoir, she explained that it was impossible to “control how [tobacco, guns, or alcohol] would be shipped and by whom they would be received” (Whitman and Hamilton, 2010: 104). This concern for corporate responsibility reflected both the declining perception of a lawless Internet and a concern for company reputation. From the start of her tenure in 1998, she was concerned over negative perceptions of activity on the site in the media (Bradley et al., 2000), and her acumen for corporate leadership meant she was aware of the potential harm of negative coverage. Indeed, eBay stock had taken a direct hit as a result of the legal inquiries (The New York Times, 1999).

eBay's norm system would stray beyond the requirements of the law, as the company sought to adjust to the expectations of its new corporate stakeholders. The ban on Nazi items illustrates this: Whitman acted under pressure from the company's newly appointed Board of Directors. The Board included Omidyar, Whitman, Intuit founder Scott Cook, Bob Kagle from Benchmark, and Howard Schultz from Maveron (eBay, 1998). In 1999, Schultz returned from a visit to the Auschwitz concentration camp with a request for Whitman to ban the sale of all Nazi items on the site. Previously, the Board had discussed banning the sale of Nazi memorabilia, but the decision had been to allow all legally permissible items. 7 Schultz told Whitman to ban items with Nazi symbols, or he would consider leaving the Board (Whitman and Hamilton, 2010). Schultz's request went to the core of Omidyar and Whitman's philosophical rift: Omidyar insisted that the Feedback Forum was there to address conflicts on the site and that users had to be empowered to decide what to buy and sell (Whitman and Hamilton, 2010: 95). But Schultz persuaded Whitman, as she believed his concern was essential to protect the eBay brand. Initially, eBay banned Nazi insignia but allowed items more than 50 years old. In 2001, the company expanded the policy to include all items with Nazi symbols, including antiques (Guernsey, 2001).

Corporate actors also swayed Whitman to develop automated vigilance mechanisms, precursors of contemporary automated or algorithmic content moderation (Gray and Suzor, 2020; Gillespie, 2020; Gorwa et al., 2020; Wright, 2022). In 1999, the Interactive Digital Software Association (IDSA), a trade group representing video game companies in the United States, threatened eBay with a lawsuit for distributing “bootleg” copies of video games. eBay's legal team advised Whitman to argue the company was not liable for this, as it was likely protected by the 1998 DMCA's safe harbor for online service providers. During Omidyar's tenure, Handler had established a “Legal Buddy” program that allowed high-selling brands to request takedowns of items that infringed on their copyrights (Cohen, 2002). eBay's legal team argued that constantly screening the site for content that infringed on the copyrights of some holders, like those represented by IDSA, could cost eBay its DMCA safe harbor (Whitman and Hamilton, 2010), and that proactively screening content could make eBay liable for the infringement cases its filtering mechanisms missed.

Whitman's response was once again influenced by the Board, which swayed her to adjust to the expectations of American business. Schultz urged her to consider “the character of the company,” and another Board member said that she could not let “lawyers run the company”; it had to be her “values” as a corporate leader (Stanford eCorner, 2017: 1:00:38). SafeHarbor's 1999 feature update included “Legal Buddy Program 2.0,” under which the site, rather than merely responding to takedown requests from copyright owners, would proactively identify potential violations through an automated daily keyword search (Bradley et al., 2000). With these changes, eBay positioned itself as an ethical corporate citizen.

Trust and Safety at eBay (2002–2007)

Whitman's concern for a well-lit marketplace and Omidyar's mutual surveillance imaginary would be synthesized in the development of Trust and Safety: the department, discipline, and philosophy behind eBay's platform governance apparatus. In 2002, eBay launched its Trust and Safety department under the leadership of Rob Chesnut, a former federal prosecutor who had joined the company as an attorney in 1999 (Chesnut, 2022). Over the following years, Trust and Safety became a fully developed body of knowledge and practices. It leveraged a cultural appeal, the ethos of online communities, while making eBay compatible with the demands and norms of American business culture that had guided Whitman's well-lit marketplace drive.

By launching a department, Whitman intended to develop a proactive approach to eBay's governance, focusing these efforts on a specific division within the company. Chesnut had been involved in implementing the SafeHarbor features by relying on scattered resources across the company. Now, he was allowed to poach employees from the Customer Service division and hire a team of engineers, data analysts, and tooling specialists. He claimed a “jurisdiction” (Abbott, 1988) within the company, capturing all issues related to user governance.

In its external communications, eBay presented its Trust and Safety team as protectors of users and guarantors of the site's safety. Between 2006 and 2008, eBay hosted at least four Town Halls on “eBay Radio” (the company's Internet radio show) dedicated exclusively to Trust and Safety topics. In the April 2007 Town Hall, eBay's Chief Marketing Officer introduced the Trust and Safety speakers as true “leaders in the industry” who would talk about “eBay's efforts to keep out the scammers in the marketplace, so we can keep it safe and well lit” (eBay, 2007: 1). Answering questions from users, they introduced themselves by their full names and company roles (eBay, 2005, 2006a, 2006b, 2007), a performance of transparency that echoed Omidyar's idea of eBay as a true community. By relying on metaphors of civic life (spearheaded by Omidyar, who had named the site's forum “eBay Café”), the Trust and Safety Town Halls positioned users as “citizens” seeking answers from their “governors,” while maintaining corporate control through carefully screened questions. At the same time, the choice of Internet radio as the medium evoked the cultural familiarity of talk radio call-in shows, while drawing on Internet radio's culture of amateurism, once again a core aspect of the Silicon Valley imaginary.

Metaphors of communitarian civic life also appeared in the eBay Trust Playbook (eBay, 2004), a document summarizing eBay's Trust and Safety strategy that Dave Steer, eBay's then-senior manager of “trust marketing,” circulated among the company's employees. The Playbook started with two quotes: a Muslim proverb stating “Trust in Allah, but tie your camel,” and another from “ocean-gypsy, eBay Community Member” that read: “eBay is like having a big city like New York City in your home or your office. Most problems in the ‘big city’ of eBay can be avoided if you remember to use good common sense.” The urban metaphor was used to emphasize self-reliance, reminiscent of Omidyar's vision for the site. However, this was no longer the scoped community that Omidyar had imagined. At eBay's start, the Playbook noted, it “had imagined [its] future as a company in terms of the eBay Marketplace.” Now, its community was a truly “global” one, and the company's goal would be “economic opportunity for people worldwide” (8). This meant that eBay would have to “turn trust and safety into a core competence” (23). eBay was an established brand and a multinational corporation, in line with Whitman's efforts; yet the Playbook drew on the repertoire of Omidyar's California idealism: the site was the realization of the early promises of cyberspace.

To fulfill the mission of achieving a well-lit and global marketplace, the Playbook articulated a philosophy and outlined a strategy for “trust” and “safety,” sometimes treating them as separate goals. Trust was described as a “social, shared good between people that is hard to quantify” (7), “earned through experience” (18). Trust took several forms. For one, it referred to trust among users. The Playbook noted that eBay could encourage its users to reciprocate trust through the free flow of information, which is why the site's feedback system, a descendant of the Feedback Forum, was a crucial element in achieving this goal. This was the strongest echo of Omidyar's vision. Second, trust referred to a relationship between users and eBay. The Playbook stated that eBay was “unlike any other business,” as it worked “because of the vigilance and participation of the global community of buyers and sellers—and because of the unique three-way balance of trust that eBay Inc. cultivates among buyers, sellers, and eBay” (25).

These two dimensions of trust were interconnected, as seen in the hopes behind the design of the Online Dispute Resolution (ODR) system, a technology developed by eBay's Trust and Safety team. In 1999, Ethan Katsh, a professor at the University of Massachusetts Amherst, collaborated with eBay to launch a pilot dispute resolution program for resolving buyer and seller feedback disputes, which ultimately led to the online mediation startup SquareTrade (Rule, 2017). In 2002, eBay's Trust and Safety team hired Colin Rule to launch an in-house system, targeting additional dispute types like cases where sellers claimed buyers had never paid for the items they won in auctions. Rule (2017: 356–357) believed that ODR could improve relationships among users by facilitating communication, and that it could also shape users’ relationships with the marketplace. Rule was concerned with “how the community approached transaction problems,” as non-paying users were often referred to as “deadbeat buyers,” and if a seller did not deliver an item the only recourse was to file a “fraud alert.” Rule saw these as overtly hostile and believed they negatively shaped eBay's culture. Beyond transforming the culture, ODR could also change how users conceived of eBay. In a paper published in the journal Artificial Intelligence and Law, Rule and Friedberg (2005), the latter another employee of eBay's Trust and Safety department, argued that trust was flimsy. If users had repeated positive experiences, they would no longer think of the marketplace on a “brand level,” but instead think of it based on their personal experiences.

While trust gathered many aspects of Omidyar's vision, the Playbook's articulation of safety was emblematic of Whitman's well-lit marketplace aspiration. Safety was “individual, and more simply measured as the everyday odds of staying free from harm” (eBay, 2004: 7). In this sense, the Playbook portrayed safety as a probability. eBay could not ensure safety, but could reduce the risk of harm. How much to reduce that risk was a complicated calculation. This is because, the Playbook argued, trust and safety were complementary concepts, but they needed a careful balance: “Safety should support trust, not smother it (…) Too much in the way of safety mechanisms can actually undermine trust—introducing a sense of oppression, and rendering trade antiseptic and antisocial” (20). Safety was described broadly as involving the areas “beyond the community's control” where eBay would intervene (45). A key aspect of guaranteeing safety was about enabling low-risk payments, which is why making PayPal (which eBay had bought in 2002) the “universal online payment standard” was one of the Playbook's “plays” or “principles of action” (36). Safety was also about ensuring a monitoring system to minimize harmful behavior. It included the site's security and risk management systems: “all the mechanisms, programs, and people working behind the scenes at eBay Inc. (including PayPal) to monitor and clamp down on fraud and deception” (50).

The elaboration of Trust and Safety demonstrated a concern for users’ emotional experiences on the site, as evident in Rule and Friedberg's (2005) emphasis on perceptions of other users and the marketplace, as well as in the framing of safety as an individual sense. In the February 2006 Trust and Safety Town Hall, Chesnut addressed a question from a user who inquired about eBay's decision not to allow many PayPal competitors to serve as payment intermediaries, a result of the site's new “Safe Payments Policy.” “As a venue, you shouldn’t be doing that,” the user argued (eBay, 2006a: 12). Chesnut argued that “the goal of a venue is actually to create a safe environment for the participant. (…) As the operators of the venue, it actually is our responsibility to provide rules and to be involved in the venue to the extent that it actually makes it a better venue for everyone to experience” (12). eBay was a “venue,” yes, but it had to be experienced. And for this experience to be positive, it required the engineering of Trust and Safety specialists.

The notion of a safekept venue shows the extent to which Trust and Safety synthesized Omidyar's vision of self-governance and Whitman's centralized governance ideal. It brought together the construction of eBay as a community (Boyd, 2006), while also solidifying eBay's role as a responsible corporation. For Omidyar, governance had been about creating harmony in a community prone to disorder, where eBay had to provide a frame for user activity. For Whitman, it had been about restoring the integrity of the company and making it responsive to the new capitalistic stakeholders it was now accountable to. Trust and Safety would position these two concepts as fundamental for sustained positive interactions. A sanitized environment, defined in terms of Trust and Safety, was constructed as necessary for eBay's success.

Conclusion

eBay's case shows how centralized governance became an institutionalized practice as dot-coms encountered and adopted the logics, practices, and visions of American business culture. Coercive pressures (DiMaggio and Powell, 1983) from financial and corporate actors—investors, boards, and regulators—pushed eBay to adopt systems of vigilance and regulation of user activity, including content moderation. eBay's governance processes and mechanisms were developed as Whitman aligned the company with the pressures of the corporate landscape. The company responded to legal threats not by strengthening its laissez-faire approach but by adjusting to the expectations of other companies and its Board. In short, it created an apparatus of governance to align with what was recognized in this environment—novel for a Silicon Valley company—as a legitimate, ethical corporate citizen.

Trust and Safety's innovation was creating a discourse that reframed corporate vigilance as user protection. By the end of eBay's first decade, “Trust and Safety” had become an established term at the company, referring to a discipline dedicated to making users self-reliant and turning eBay into a well-lit site that facilitated positive user experiences. This mission synthesized Omidyar's vision for the site—inspired by libertarian and utopian ideas of a self-governed cyberspace—with Whitman's idea of a curated, norm-ridden space that aligned the company with the expectations of regulators, shareholders, and other companies in the American corporate environment. While maintaining Omidyar's community rhetoric, it enabled Whitman's centralized control, making user experience code for “commercially viable space.” Trust and Safety served to align eBay with corporate stakeholder expectations while maintaining the legitimizing language of community self-governance—a template that would define platform governance for decades to come.

In that sense, Trust and Safety became a discourse, a set of “practices that systematically form the objects of which they speak” (Foucault, 2013: 54). This discourse was geared toward the construction of “safe for business” environments online, reframing online interaction from a space of natural self-governance to one inherently requiring centralized surveillance, where individual user judgment must be supplemented by platform-designed safety mechanisms. Through the discourse of Trust and Safety, eBay became aligned with formal, corporate American commerce. This set eBay apart from lightly moderated sites like Craigslist (Lingel, 2020) and from what would become “darknet markets” (Gehl, 2018), a term that draws on a metaphor diametrically opposed to that of a well-lit marketplace. Trust and Safety embedded economic imperatives into safeguarding practices and crystallized the role of platforms as arbiters of acceptable behavior. This made centralized vigilance appear natural and morally justifiable.

eBay acted as a laboratory for platform governance. In addition to coining the term “Trust and Safety,” eBay's team would come to shape the platform industry, leading to the formation of a discipline of specialists now known as Trust and Safety professionals (a driver of normative isomorphism, in neo-institutional terms [DiMaggio and Powell, 1983]). Members of eBay's Trust and Safety team went on to work at other influential technology companies that sought to build their centralized governance apparatuses. For example, Rob Chesnut (n.d.) went on to work on Uber's Advisory Board on Safety and later became Chief Ethics Officer at Airbnb. Matt Halprin (n.d.), eBay's Vice President of Global Trust & Safety from 2002 to 2008, became YouTube's Vice President and Global Head of Trust & Safety in 2017. Trust and Safety would also go on to become a burgeoning professional field, as evident in organizations like the Trust and Safety Professional Association. eBay's effort to advance Trust and Safety as a discipline lives on through academic efforts like the Journal of Online Trust and Safety and courses including Stanford University's “Trust and Safety Engineering.”

The rise of Trust and Safety at eBay illuminates the emergence of platform governance alongside the mass commercialization of the Internet and the resulting financial pressures on for-profit projects. eBay's transformation shows how dot-coms shifted their value proposition from providing access to information and interaction to facilitating positive experiences through the protection of users. In that sense, Trust and Safety ushered the Internet into what business scholars Pine and Gilmore (1998) call “the experience economy”: a new business paradigm in which companies would strive to create positive, memorable events for customers, with the experience itself becoming the central product rather than merely the goods or services being transacted.

While content moderation today is primarily framed as a question of speech and expression—with scholarly and policy debates focusing on the boundaries of acceptable discourse—eBay's case shows how Trust and Safety emerged in the context of the governance of commerce. Political ethnographer Graham Denyer Willis (2023) frames Trust and Safety as a constitutional actor of the “digital market-fortress” of platform capitalism, arguing that its role is to shelter interaction and exchange by guaranteeing trust. eBay pioneered this fusion: the practices and understandings behind content moderation evolved from marketplace governance tools designed not to foster expression, but to create commercially viable digital spaces. This intermingling of the governance of commerce and the governance of speech is precisely what Jürgen Habermas (1985) referred to as the “colonization of the lifeworld”—the intrusion of systemic imperatives into domains that should be governed through democratic discourse. The practices and understandings surrounding the regulation of acceptable expression online are ported from the governance of marketplaces.

Footnotes

Acknowledgments

The author is grateful for the careful feedback offered by Becca Lewis, Collin Knopp-Schwyn, Devesh Narayanan, Elizabeth Fetterolf, Fred Turner, Kate Sim, Marijn Mado, Rachel Bergmann, and Xiaochang Li. He is also grateful for the generous comments offered by the three anonymous reviewers and Associate Editor Matthew Zook.

Ethical considerations

Interviews were conducted following a protocol (#68891) approved by the Stanford Institutional Review Board.

Consent to participate

Verbal informed consent was obtained from interview participants.

Consent for publication

Verbal informed consent for publication was obtained.

Funding

The author disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: The author is supported by a graduate fellowship award from Knight-Hennessy Scholars at Stanford University.

Declaration of conflicting interests

The author declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Data availability statement

The data that support the findings of this study are derived from publicly available sources (which are cited in the article), except for one internal eBay company document. This document was provided by a former employee, who did not authorize its publication.