Abstract

As new online safety laws and advances in AI reshape digital markets, a new class of third-party software vendors has emerged offering platforms for managing trust and safety. This article examines how these vendors reshape platform governance not merely as secondary labor providers but as positioned actors in a social and professional field. Through interviews, fieldwork at an industry conference, and analysis of vendor texts, this study finds that these start-ups are incentivized to build moderation platforms that capture critical chokepoints between social media platforms, AI model developers, regulators, and moderation labor. From this position, vendors translate legal ambiguity into operational norms and recast moderation as a form of preemptive risk management. These findings reframe platform governance by revealing how a growing intermediary field of trust and safety institutions mediates external pressure on platform companies and shapes standard norms and practices for governance across the field.

Introduction

In recent years, social media platforms have come to rely increasingly on external vendors, in addition to their internal teams or hired pieceworkers, to support content moderation, age verification, fraud prevention, and other functions that fall under the umbrella of trust and safety (Gillespie, 2018; Roberts, 2019). A 2024 report from the trust and safety start-up Duco Experts estimated that the market for trust and safety vendors is set to grow by $8 billion by 2028 (Trust & Safety Market Research Report, 2024). The authors cite several reasons, most prominently new advances in artificial intelligence (AI), widespread layoffs of in-house policy teams in recent years, and new online safety regulations creating strong incentives for the industry to spend on trust and safety. However, the report does not project these gains to be evenly distributed. Almost all of the growth is projected in “trust and safety software,” as opposed to “trust and safety services.” The software vendors are a small number of venture capital (VC)-backed start-ups launched in the last decade, many founded by former employees of large platform companies, that specialize in software tools for moderation and AI-powered risk management.

What should scholars of platform governance make of this predicted shift in the market for moderation services? Regardless of whether the predictions of this report are realized, they offer a glimpse into how founders and investors from influential funds like Y Combinator imagine the future of trust and safety. Investigating the products these companies are developing in anticipation of that future can help us to better understand the forces shaping online safety today. The literature has been attentive to vendors that provide labor outsourcing services and industry attempts to automate moderation decision-making (Gillespie, 2018; Roberts, 2019), but the rise of software-as-a-service (SaaS) start-ups capturing millions of dollars in VC suggests a new space in this market—and governance ecosystem—is opening.

This study begins from the premise that in order to understand this shift in the vendor market, scholars must adopt a new understanding of trust and safety. I then proceed to answer two questions through interviews, observational fieldwork, and textual analysis. First, what forces, both internal and external to the field of trust and safety, are shifting the business of trust and safety vendors toward a software-as-a-service platform model? Second, how do the infrastructural and professional nodes occupied by platform vendors enable the active shaping of the practices, norms, and standards of the rest of the field? I find that vendors, far from being neutral service providers, function as field-shaping actors who mediate regulatory uncertainty and reorient governance around risk management logics by building new platforms at infrastructural and professional chokepoints in the market. I argue that they are not merely reacting to new regulations or the prior decisions of executives at platform companies; instead, they are platformizing the market for moderation services, reconfiguring the logics and boundaries of digital governance by building the software on and through which it happens.

Literature review

Early work on platform governance observed that platform companies (especially social media companies) both govern their users’ speech and behavior (Grimmelmann, 2015; Klonick, 2017; Suzor, 2019) and are themselves governed by the law and other external influences (Gillespie, 2018; Gorwa, 2019b; Schulz, 2022). Research has examined both sides of this relationship; however, the literature on the governance of platforms has tended to focus on top-down influence: laws that require companies to remove certain categories of illegal speech, multistakeholder bodies that set best practices, and, to a lesser extent, civil society principles for protecting civil liberties that companies can sign onto to build trust. Gorwa (2019a) models these influences as a triangle, wherein regulatory influences can be characterized as a combination of firm, state, and civil society pressure.

This attention to the vertical flow of governance from law to platform to user has largely overlooked other institutions that influence platform governance horizontally, not through mandates or public pressure but as influential actors in a shared field. Caplan (2023) introduced the concept of “networked platform governance” to describe how companies horizontally distribute accountability to external actors. Platform companies, whether voluntarily or as required by law, consult with advisory bodies, civil society groups, and academics, giving them input but little actual power to shape outcomes. Absent from this network are the third-party companies contracted to carry out the actual enforcement of moderation.

Vendors have likely been excluded from the conversation on the governance of platforms because they are so closely associated with governance by platforms. Where they have been investigated in the literature, it has been in essential research on the highly exploitative, offshore piecework labor that carries out the policy decisions that platform companies have already made (Gray and Suri, 2019; Roberts, 2019). However, vendors can also play a role in shaping the governance of an industry. Research on privacy law has found that vendors often do the work of interpreting ambiguous regulatory requirements and shaping the practices that come to be recognized as signals of compliance (Grover, 2024; Waldman, 2019). The law places requirements on platforms, but before those requirements can influence the practice of managing user data, they must first pass through and be interpreted by a professional field, including software vendors.

Building on Caplan's networked governance, this study positions trust and safety vendors as actors in that network. This shared profession allows for a horizontal, mediating form of influence in which a range of firms contribute to shaping the day-to-day practices of trust and safety professionals inside platform companies. Both in-house and vendor employees attend the same professional conferences, read the same newsletters, and tune in to webinars from field-building organizations like the Trust and Safety Professional Association. Circulating in these shared professional spaces, vendors are not an influence on governance from above, but professional colleagues, many of whom also used to work in-house.

Unlike the advisory boards and non-governmental organizations Caplan examined, platform companies do not engage with vendors in an attempt to establish the appearance of democratic decision-making. However, they do not contract with vendors for purely market-based, rational reasons either. Instead, I draw on neo-institutionalism to examine the social and professional environment in which trust and safety teams in platform companies and trust and safety vendors are embedded (DiMaggio and Powell, 1983; Meyer and Rowan, 1977). Both actors are responding to shared norms, myths, and dominant social logics in the field as they seek legitimacy (Friedland, 1991; Thornton, 2002). Additionally, to understand how they articulate their shared profession of trust and safety, its boundaries, and its expertise, I draw on the sociological theories of professions developed by Abbott (1988).

This perspective of vendors as embedded actors in a shared professional field of trust and safety, in which they shape and mediate the influence of other external actors on platform companies, allows this research to consider the projected shift in the market in a new light. Instead of the question being, “Are their algorithms more accurate?” or “Are they cheaper?” I ask how the shift from labor outsourcing to expert software-as-a-service repositions vendors within the field, and thereby alters their influence on governance. This allows for a clearer understanding of how top-down pressures such as laws, standards, and advocacy campaigns are translated into the practice of the trust and safety profession. I argue that this practice does not simply follow the law or market incentives but is actively mediated within the field's internal contests for capital, legitimacy, and control over infrastructural and professional nodes.

A new wave of trust and safety vendors

In the early days of large social media platforms like Facebook and YouTube, business process outsourcing companies (BPOs) saw an opportunity. These platforms needed to review billions of pieces of digital content a day, and they had neither the desire nor the capacity to do it in-house. They needed external partners who could provide access to cheaper, outsourced piecework (Roberts, 2019). The companies that filled this need were typically not founded as “content moderation providers”; instead, they were specialists in absorbing tasks and workflows that clients could not or would not do in-house. Content moderation was one of these tasks.

In this study, my focus is not on the longstanding labor outsourcing business model but on a more recent wave of start-ups launched since 2018. Whereas the business process outsourcing companies sell access to a large volume of poorly paid decision-makers, these newer companies sell access to their small team of experts and the tools they developed via that expertise. To establish their expertise, founders draw on their backgrounds in trust and safety engineering, product management, and security at large platform companies. In their telling, they are not a distant shore on which to dump unwanted labor but a lean, expert partner there to help customers build a new kind of trust and safety workflow.

Although many of these start-ups launched offering widely different services, most of them have converged on a roughly similar business model. The specifics of these products vary greatly, but they all resemble a set of software-as-a-service products: modular, enterprise-grade tools for making moderation decisions at scale, monitoring outcomes, and enabling compliance reporting. Typically, this means a centralized set of tools with which moderators review content, policy teams create and test new rules, and managers monitor the entire workflow. When a new piece of content from a social media company or a new product listing from an online marketplace enters the system, it is funneled through a set of checks (human or automated) that assess it against a set of rules and, ultimately, result in an action (removing or approving the post, banning the user, etc.).

Centralization allows customers to build powerful decision tree-like workflows for automating the logics of processing content before and after classification steps. For example: if a post contains a photo, pass it through the nudity detector; if it scores greater than 90, remove it; if it scores between 70 and 90, send it to a human for review; if it is removed, send the account a warning. This function provides a kind of underlying, customizable logic of association that translates a range of disparate data into actions, what vendors often refer to as an “operating system.” Some function as “marketplaces,” with AI models the client can choose from external developers like OpenAI and Google. Some function as “walled gardens,” providing access to proprietary moderation tools developed by the vendor and its partners. Others focus on the platform-BPO relationship, building tools to integrate outsourced moderation with in-house enforcement. But they all share a combination of classification and decision-making logic that warrants further investigation.
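The decision tree-like workflow described above can be made concrete with a minimal sketch. It is purely illustrative and does not reproduce any vendor's actual product: the names `Post`, `Outcome`, and `route` are hypothetical, the nudity score stands in for the output of an external classifier, and the handling of posts without photos or with scores below 70 (approval) is an assumption, since the example in the text does not specify it.

```python
from dataclasses import dataclass, field

# Thresholds taken from the illustrative example in the text.
REMOVE_ABOVE = 90   # scores strictly greater than this are removed
REVIEW_FROM = 70    # scores from this value up to 90 go to a human


@dataclass
class Post:
    account_id: str
    has_photo: bool
    nudity_score: float = 0.0  # hypothetical stand-in for a classifier's 0-100 output


@dataclass
class Outcome:
    action: str                               # "approve", "remove", or "human_review"
    follow_ups: list = field(default_factory=list)


def route(post: Post) -> Outcome:
    """Apply the rule chain: classify, then act, then trigger follow-up steps."""
    if not post.has_photo:
        # Assumption: posts without photos skip the nudity check and are approved.
        return Outcome("approve")
    if post.nudity_score > REMOVE_ABOVE:
        # A removal triggers a warning to the account, as in the text's example.
        return Outcome("remove", [f"warn:{post.account_id}"])
    if post.nudity_score >= REVIEW_FROM:
        return Outcome("human_review")
    # Assumption: low-scoring photos are approved.
    return Outcome("approve")


if __name__ == "__main__":
    for post in (Post("a1", True, 95), Post("a2", True, 80), Post("a3", True, 10)):
        print(post.account_id, route(post))
```

Even in this toy form, the governance-relevant choices (where the thresholds sit, what happens to unscored content, which follow-up actions fire) live in the workflow layer rather than in the classifier, which is the layer these vendors seek to own.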

Methods

This study employed a multi-method qualitative design to investigate the role of vendors in the field of trust and safety and their influence over platform governance outcomes. To do this, I triangulate my analysis across three sources of data: semi-structured interviews with trust and safety practitioners, participant observation at TrustCon (the pre-eminent industry gathering for trust and safety vendors, clients, and other stakeholders) in July 2024, and theory-driven thematic analysis of vendor documents, websites, product demos, and other texts. Data from all three sources were iteratively analyzed for common themes in waves as I collected the data, which informed the following round of data collection (e.g. early analysis of vendor marketing material informed my observations at TrustCon). All research was approved by the Cornell University IRB via Protocol #IRB0148691.

Once all data collection was complete, I conducted one final round of coding of all collected text. This iterative process drew themes from each data source as they informed and reinforced each other. Because this study focuses on how vendors articulate their role, frame their products, and seek legitimacy in the field of trust and safety, my recruitment and data collection were aimed at the vendors themselves, rather than the entire field. The start-up vendor ecosystem is still relatively small and concentrated, so this corpus of interviews and observations at the one conference where the majority of these vendors gather captured a substantial portion of the field and provided sufficient variation to reach thematic saturation through triangulation.

Twelve semi-structured interviews of approximately 60 minutes each were conducted with trust and safety practitioners. Participants were recruited through purposive sampling at TrustCon, with the goal of recruiting individuals in leadership roles at vendors or longtime professionals who have experience working with vendors. Nine of the participants are founders or senior executives of trust and safety vendors. The three non-vendor participants all have at least ten years of experience in the field across multiple technology companies, and one has experience investing in early-stage trust and safety start-ups.

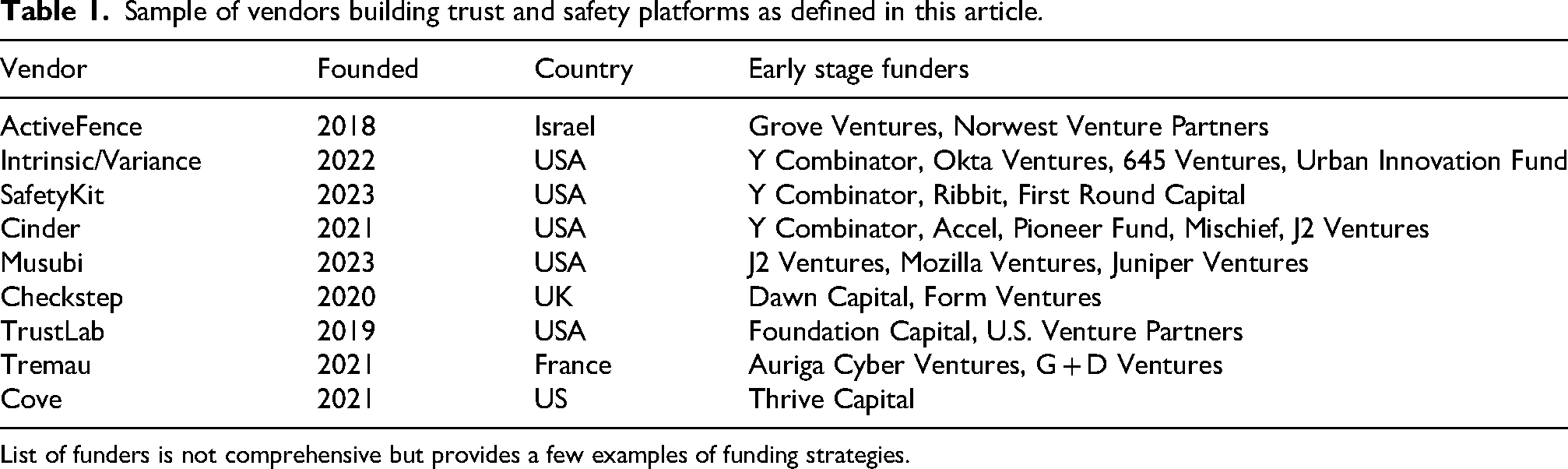

I also participated as an attendee at the 2024 TrustCon conference, which brought together around 3000 participants from the industry, as well as a smaller number of participants from governments, civil society, and academia. As an overt researcher, I participated in and observed panels, workshops, social events, and exhibitor booths. My final source of data is publicly accessible documents published by the nine vendors listed in Table 1, including but not limited to websites, blogs, webinars, reports, and product demos. Given the small number of vendors, I was able to sample documents from a majority of the software-as-a-service vendors that I am aware of, allowing for a comprehensive view of the vendors in this market.

Table 1. Sample of vendors building trust and safety platforms as defined in this article.

Note: List of funders is not comprehensive but provides a few examples of funding strategies.

Data were analyzed throughout the collection process in order to inform subsequent rounds of collection. For example, I first began collecting and analyzing vendor websites and marketing materials to understand how they describe themselves, their vision of trust and safety, and the types of applications they propose for their software. This informed my observations at TrustCon, giving me insights into how certain vendors position themselves in the field and helping me to prioritize my time at the conference. After the conference, I collected new data based on my observations and new vendors I discovered while there. After all data were collected, I then reanalyzed the entire corpus together.

I treated these data as contextually situated artifacts of how vendors seek to position themselves. Interview statements, claims made in webinars, and assertions in marketing materials were analyzed as attempts to project legitimacy and authority and to distinguish vendors in a competitive market. Triangulating these materials with observations from TrustCon allowed me to contextualize the promotional narratives. For example, I was able to better interpret the symbolic meaning of their marketing pitches because I had sat in on presentations they gave, heard industry gossip about their products at happy hours, and read earlier iterations of their websites via the Internet Archive. This approach treats start-up spin as a valuable signifier of the social logic and institutional constraints shaping this market rather than as authoritative statements of what their products can do.

The constellation of forces shaping trust and safety vendors

In this section, I consider two external forces acting on the field of trust and safety that are moving the market for third-party services toward the platform model: regulation and capital investment. A common refrain that I observed in conversations and in panels at TrustCon 2024 was that new regulations like the EU's Digital Services Act (DSA) and the UK's Online Safety Act (OSA) are creating a “compliance floor” for the online safety obligations of platform companies and therefore driving new business to vendors. Before, I heard people argue, companies could simply choose to ignore harm to users as long as they avoided scandals, but now governments around the world are creating significant financial liabilities.

The shape of this new demand depends on the specific requirements of the law. For example, the DSA does not require companies to remove specific categories of speech. Instead, it mostly imposes workflow requirements: transparency reporting, risk assessments, appeals systems, and so on. This is creating a greater need for process and reporting tooling than for content classifiers. Multiple interview participants from vendors informed me that, at the time of the interviews in late 2024, they had not yet experienced the wave of new customers they had anticipated after the DSA went into effect. One vendor founder I interviewed said: “I was thinking back to when I was at [a large platform company] and GDPR was coming into play in 2019, and how this [DSA enforcement], we were thinking would be a similar moment, and so far I don’t think it has. […] These laws take a few years to really have their impact felt, but, so far, at least that we’ve seen, there's not a lot of concern from potential customers about it. As long as you’re roughly checking the boxes, they’re under the assumption that […] they’ll go after the worst offenders with the largest impact.”

However, many regulated companies have traditionally not operated their systems in a way that is amenable to the reporting requirements of the DSA. Articles 34 and 35 of the DSA require large companies to conduct risk assessments for broad categories of “systemic risk” and identify measures to mitigate those risks (Griffin, 2025). A larger range of companies must also report on every moderation decision they make, including whether the decision was automated or not, for inclusion in a public database. Some smaller trust and safety teams have no way of compiling aggregate data on decisions other than by hand. One founder told me: “A lot of the companies I talk to […] are like, ‘Yeah, we get incidents in Slack, and we get incidents in ZenDesk, and stuff comes through Salesforce.’ […] How do we union all of these different sources so we can have a single view of a case?”

Similarly, the imperative for growth created by VC investment in trust and safety start-ups is pushing vendors toward business models that expand the market or create new markets. Most VC funds assume the majority of their investments will fail but that they will more than recoup these losses via the smaller number of companies that return many times their initial investment (Bracy, 2025). This incentivizes funds to push start-up founders to take on risk because success requires capturing an enormous amount of the available market.

In my interviews, some participants expressed concern that the combined trust and safety budgets of the companies that are willing to spend them on external vendors are not enough to support VC-level growth. As one said of the need for more software to support trust and safety teams: “The main thing hindering that is just that there is […] not enough money, right? Ultimately even companies that are of the billion dollar, one to five billion dollar, valuation are dragging their feet in deploying some of these technologies mainly because of cost.” “I’m not confident the trust and safety market [narrowly defined] is really there to support big, big tooling companies. You’d have to sell to Meta and Google and all of the other big companies. [Competitor vendor] is probably the closest to that, but I think they have a lot of government contracts and that's propping them up. […] And that is probably a massive market.”

Vendor strategies: remaking the business model as a platform for decision-making

I have found that vendors are responding to these forces primarily in two ways: expanding the scope of the trust and safety market and building the platform that the existing market for third-party services operates on and through. Some vendors are still offering other services, like custom-trained machine learning models and open source investigations, but all of the vendors I have analyzed have adopted one or both of these strategies.

The first strategy is to increase the overall size of the market of firms that buy trust and safety tools by including a much wider array of services. Vendors that began by offering social media content moderation services are pushing into related industries to grow the total market, making other problems of data classification recognizable as trust and safety problems. One founder I interviewed described how their products have evolved from content moderation to marketplace safety in search of larger markets: “We’re still doing a bunch of trust and safety stuff, but we’ve definitely pivoted from pure content to marketplace and payment processor enforcement. […] Where there's money there's money. The value of protecting a platform from a tweet is much, much, much lower than the value of protecting a platform from a problematic merchant. […] This sort of dictates where companies invest internally. [Investment risk] teams, charge back teams, and teams that literally protect companies from direct impact to revenue get a lot more funding than teams who do, sort of, vibes-based platform quality moderation.”

As multiple interview participants from different vendors told me, they are aiming to sell software to this long tail of smaller companies who have not yet realized that they have a trust and safety need. Typically, trust and safety has been thought of as a “tech company” problem, but one founder named Walmart as the kind of company they would like to sell their products to: one that does not have a long history of trust and safety work, that increasingly functions as an online marketplace, and therefore has a greater need for external support developing and enforcing policies. Another described the long tail of smaller online companies that facilitate small but potentially risky interactions between users as potential customers: “Even if all they have on their video game platform is ability to send screenshots, there's hate speech there and there's bullying, and there's harassment, and there's suicide, self-harm, and all these harms exist. No matter how small or low resolution or short your entry field and upload buttons are, you’ll have harm. So there are new entrants.”

In the summer of 2024, the website for Intrinsic, a Y Combinator-backed moderation start-up founded by former Apple employees, advertised its services as primarily a moderation solution for replacing existing human content review processes. In 2025, the company rebranded as Variance, which now sells “Autonomous agents that detect threats and enforce missions-critical decisions” for “user-generated content moderation,” “insider threat detection,” “fraud and marketplace risk,” and “AI-driven threat intelligence.” Their products, the new website claims, “unveil hidden risks in any system.” Both brands market content moderation services, but whereas the total addressable market for Intrinsic was limited by trust and safety budgets, Variance can sell to almost any business with large amounts of unstructured data and “hidden threats.”

One founder speculated that this field expansion has included government contracts that vendors have not made public: “This is pure speculation, but when I think of the cyber-intelligence and cybersecurity and whatnot at a massive scale, you are basically just reviewing; it is moderation. You’re like, this text message came through or this post on a platform that we ingested or this phone call transcript. Now I feel like I’m in conspiracy theory land, but that's all just the same exact process as content moderation, and you have an entire warehouse of analysts who are just reviewing and connecting and doing deep connects across all of these objects. It is effectively the same problem. […] And so I think that's a huge market.”

Perhaps most significantly, vendors are pushing the market for trust and safety services into the emerging market for AI safety. As hype around the future of generative AI has funneled billions of dollars into start-ups, the authority to declare AI systems safe has emerged as highly valuable professional territory for a profession to stake claim to (Abbott, 1988). ActiveFence, for example, began releasing reports on AI red teaming (the practice of proactively imagining adversarial attacks on AI systems to check for vulnerabilities and unintended behaviors) and marketing red teaming services and “guardrails” to AI developers and end users. In a blog post on red teaming, they argue that in order to anticipate the full range of risks posed by a generative AI model, red teamers need the expertise of trust and safety teams who have been investigating the worst harm of the internet for decades (The Importance of Threat Expertise in GenAI Red Teaming, 2025). In 2025, their marketing materials shifted to primarily focus on AI safety. Cinder, another prominent Y Combinator-backed vendor, built a moderation platform originally intended for social media content that is now used by AI developers such as OpenAI to monitor generative AI models in development and after they launch into the world. The company now brands itself on the banner of its website homepage as “The operating system for safety. From content moderation to responsible AI” (Cinder - Responsible AI, Trust &).

In order to establish legitimacy to sell software and services to AI developers and deployers, vendors are doing the public work of redefining the problems of AI as matters of trust and safety. At TrustCon, I observed one co-founder of a vendor say on a panel that “Generative AI safety is essentially a content moderation problem.” In other words, making generative AI models safe means developing rules for inputs and outputs to the model and building a scalable system for enforcing those rules.

Setting aside the merits of this claim and instead taking it as a discursive act, we can imagine statements like this shaping perceptions of what kind of expertise is needed to make AI safe in industry and among regulators. In the model of Abbott's (1988) system of professions, this is an attempt to fill the jurisdictional vacuum created by the rapid emergence of generative AI as a public problem. In their push to advance into new fields, vendors are doing discursive work to shape what counts as a “trust and safety problem,” and that has implications for the entire field: the skills the field hires for, the forms of knowledge it values, where it fits in an organizational chart, and so on.

The second strategy is to create a new market that undergirds the existing one, inspired by software-as-a-service vendors that came to dominate a market by building platforms at key chokepoints (Poell et al., 2019). The problem for the start-up founders I have spoken to is that they cannot compete in model development with companies like OpenAI and Google. One founder I spoke with said that he let his machine learning team go 6 months after ChatGPT was released because it was clear that they could not compete in the arena of general-purpose language processing.

This founder described two possible responses. One would be to become the world leader in classifying a very specific kind of speech and outperforming BPOs and AI developers in a sliver of the market. The other, which this start-up is now pursuing, is to become the “Salesforce” of moderation: “One of the things I realized. The SaaS [software-as-a-service] business. There are not so many big SaaS companies. The biggest one outside of Microsoft is Salesforce. They built an operating system that is now plugged in by hundreds of companies that are entirely dependent on Salesforce. So it becomes a little bit of a magnet. We could be the equivalent of Salesforce […] but for trust and safety. That's my vision.”

Rather than trying to replace human moderators at BPOs with algorithms or build a better speech classifier than OpenAI, the goal of this model is to build the system that the BPOs and model developers operate atop. Salesforce serves as a common example for these vendors to emulate, an instance of mimetic isomorphism (DiMaggio and Powell, 1983) in which a shared model compels firms to behave in similar ways. Another founder I interviewed pointed to the cybersecurity firm CrowdStrike as the model for their company (while jokingly acknowledging the outage that had occurred 2 weeks prior). Another interviewee summarized this “consolidation” toward a platform business model: “They’re all using the same language. […] I’m like, ‘You’re just stealing our language.’ […] I just feel like there's a funny consolidation towards […] automate your decisioning, send the rest to humans. All of that is a consolidation towards a single platform that you can use to basically triage between AI and humans.”

Infrastructural node/professional node: vendors as mediators of institutional pressure

Existing sociological research on legal compliance and the development of professions has highlighted the importance of professional bodies (such as trade journals, professional associations, and formal credentialing organizations) in shaping the practices of firms (Abbott, 1988; Edelman et al., 1999). In the case of trust and safety, these bodies are either recently launched or non-existent, giving vendors with existing capital in the emerging field the opportunity to play this role. The strategies of boundary expansion and platform building described in the previous sections place the newer wave of vendor start-ups at both an infrastructural and a professional chokepoint in the field. With other professional institutions in the field still maturing, this gives vendors a powerful position from which to influence the practices and norms of the field.

Platform studies scholars have found that platform companies derive their power from control of key infrastructural nodes, where a complex network of interdependencies passes through a bottleneck that can be controlled (Nieborg and Poell, 2025; Poell et al., 2019). As the editors of a 2024 special issue on platform power argued, platform power is located in “the relations of dependence that grow around specific platforms” (Nieborg et al., 2024: 4). As the founder quoted above told me, the power of a company like Salesforce, which they are attempting to emulate, is that of a “magnet” that attracts hundreds of other companies and makes them dependent.

The moderation platforms offered by these vendors are positioned to control a key node between decision-making, platform companies, and policy. Most obviously, they seek to mediate the relationship between companies that offer means of classification and decision-making (model developers and BPOs) and online service providers with moderation needs. They also sit at a chokepoint between online service providers and regulatory bodies, such as the out-of-court moderation dispute resolution bodies required by the DSA. Two of these bodies, Appeals Centre Europe and User Rights, have announced partnerships with Cinder and Tremau, respectively, to enable integration of their platforms with moderation appeals bodies. In the press release announcing their partnership, Tremau describes their role as infrastructural, with User Rights reviewing the appeals and Tremau “facilitating the information flow process” (Tremau, 2025, para. 5).

The power of this node is that vendors become an infrastructural dependency for regulatory compliance, which may create a lock-in effect for the entire ecosystem. The end result they are aiming at is for regulatory databases, appeals bodies, model developers, BPOs, and online service providers to become dependent on a vendor's platform, creating high costs for any one actor that seeks to exit. One founder I interviewed described how unlikely customers would be to switch from one service to another, due to the high cost: “The switching cost fucking sucks. Like, really, really rough. […] The really big companies that could actually resource a team to switching are not going to buy these smaller companies’ products.”

By controlling the node of this system responsible for operationalizing safety, vendor platforms play a vital role in determining the meaning of the metrics that get reported to regulators: what gets measured, how those complex processes are operationalized into something quantifiable, and how the workflow of moderation changes to be optimized for that quantification. These are decisions that, ultimately, the end-user of the platform controls but that the design of the vendor platform influences and constrains.

However, vendors do not cultivate power from these infrastructural nodes alone. The expansion of the boundaries of trust and safety is bringing them into contact with new, adjacent fields. As the vanguards who are often bridging these fields, they gain a position of influence by sitting at professional nodes between those fields. It is therefore important to understand the platform power they wield as coming from their position at both infrastructural and professional chokepoints.

For example, as a vendor expands from traditional content moderation into AI red teaming, their ability to speak to both fields with the epistemological authority of the other grants them unique legitimacy. To trust and safety customers, they bring emergent expertise in urgent new AI threats and capabilities, while to AI developers and deployers, they bring established, years-long, tried-and-true moderation expertise. At TrustCon, speakers repeatedly cited AI in presentations, on panels, and in sponsor keynotes as a threat and opportunity that the field was generally unprepared for. Representatives of vendors were eager to present themselves at their booths and in their talks as experts in AI who were prepared to combat the threat and also provide the engineering talent to realize the opportunities.

Vendors who have developed robust policy operations also possess a similar form of field-spanning capital. They are not merely operationalizing policy decisions made in-house but also shaping what the field sees as content that needs to be mitigated in the first place. For example, the French start-up Tremau pairs their moderation platform, Nima, with a compliance consulting unit that advises customers on how to prepare for new regulations. Tremau has hosted webinars guiding participants on how to prepare for risk assessment requirements for both the DSA and OSA and has hired former European Commission policy officers who worked on drafting the DSA. Tremau did not write the DSA, and they are not telling regulators how to enforce it, but they are playing an important intermediary role in interpreting its requirements for customers and offering compliance strategies.

To leverage this field-spanning position, vendors regularly host webinars and release research reports that not only promote their products but offer a vision of the everyday practice of trust and safety. These include sessions on new regulations and how to prepare for compliance and others on emerging threats such as deepfakes, generative AI, and child exploitation methods. In these sessions, vendors do not merely pitch products in response to existing professional norms because in many cases, those norms do not yet exist. Instead, they operate in uncertainty—describing potential threats that the field is not acting on and shaping norms that have yet to emerge, discursively and through design. As one founder explained to me in an interview, the design of the moderation platform can encode best practices into the safety workflow of smaller customers who have less experience in the field.

Vendors regularly operate in the uncertainty created by new online safety laws to shape new practices, selling tools for compliance norms that have yet to crystalize. At TrustCon, which took place shortly before the first deadline for the first round of DSA risk assessments, I sat in on a panel co-organized by the European Commission and a vendor. In the questions portion of the panel, participants repeatedly pressed representatives of the European Commission for details on how to conduct the newly required risk assessments. There was a clear sense of frustration among these participants, many of whom were lawyers working for online service providers, who felt they were being asked to invent a new kind of assessment on the fly that would be evaluated against some unknown requirements.

Stepping into this uncertainty, vendors like Tremau and ActiveFence have offered public webinars on preparing for compliance with the DSA and OSA. This practice extends into smaller laws that receive less public scrutiny. In 2024, ActiveFence hosted a webinar on strategies for compliance with the REPORT Act, a mostly overlooked law that altered federal requirements for online service providers to report known cases of child sexual abuse material, expanded the scope to include child sex trafficking, and increased penalties for non-compliance. These policy changes created new uncertainties about what and how much content to report. In their webinar, ActiveFence spoke directly to this uncertainty with compliance recommendations that are not required by the law, such as conducting proactive investigations into user behavior on other online services, which ActiveFence's products are well-suited to facilitate. As an ActiveFence representative in the webinar said of the “murkiness” of detecting and reporting child sex trafficking: “ActiveFence works with a lot of major platforms. A lot of major platforms have amazing AI algorithm capabilities in-house. The reason they go to a vendor like ActiveFence is exactly because AI is limited in looking across the open web but even more so the deep and dark web for up-to-date evolution. Which oftentimes can only really be picked up in the closed communities of these threat actors” (ActiveFence, 2024, 45:19).

Not every vendor offers explicit policy services, but almost all of them advertise their moderation platforms as a tool for staying compliant in a rapidly changing global regulatory environment. In interviews, multiple participants reiterated that they are not compliance companies but simply integrate features such as one-click generation of transparency reports. For example, one founder of a software start-up that mentions legal compliance on its website but would not call itself a “compliance company” told me: “Compliance to us is mostly two things. […] [First] It is the ability to handle appeals. On our platform, we have an endpoint through our API where users can appeal things and, as long as our customers set it up, that will also go into [our systems] for review. […] [Second] Transparency reports. So basically we are ingesting all of your data and all of your decisions and all of the automation, so we can generate a transparency report for you, as required by the DSA. […] Compliance is not our main focus, but because we’re in this space we want to have something. […] And we’re already surfacing that data anyway.”

It is not that clear requirements of new online safety laws are driving the adoption of new moderation tools. It is instead the ambiguity and uncertainty created by these laws that enable vendors to at once promote their services using the language of compliance and at the same time deny being a “compliance company.” Mirroring the strategic ambiguity that online platform companies have long used to maintain the appearance of neutrality (Gillespie, 2010), it is not wholly accurate to call these companies “DSA compliance providers” but it is also not accurate to leave them out of the regulatory compliance conversation altogether. They shape practices as intermediaries, from the middle, not themselves subject to these laws and rhetorically able to defer decisions to the customer. All the while, they promote interpretations of requirements, strategies for compliance, and tools for generating reports, appeals, and dashboards.

Trust and safety as risk management

If vendors and their moderation platforms are influencing the governance of the internet, what kind of governance do they suggest? What kinds of problems are these platforms designed to solve? The public's common perception of content moderation is of a case-by-case, quasi-judicial process in which a piece of content is evaluated against pre-existing, fixed rules (Douek, 2022; Gillespie, 2018). In this version, moderation is triggered through user flags or automated systems for detecting violations, and the decision is made by a human or an algorithm, based on an evaluation of past behavior. It is certainly possible to use these moderation platforms in this way, but the marketing for many of these start-ups often describes use-cases that go beyond this typical picture.

In a demo of the “AiMod” system on the vendor Musubi's website (Musubi—Moderation Supercharged, 2025), an account is assigned a risk score of 83 and banned from the platform. That score appears to be derived from four other risk scores, based on behaviors such as rapidly viewing profiles from different continents and having a mismatched GPS location and IP address location. Musubi boasts that this tool can evaluate someone's “entire history holistically” to “deeply understand their intentions.” This is not an evaluation of the probability that a piece of content has already violated a rule but rather an estimation of future harm posed by an account. Regardless of whether their algorithms can actually predict harm, they enable present action.

Other vendors promote similar user-level risk scoring as a best practice, to varying degrees. For example, in a blog post about evaluating content detection systems, ActiveFence describes the value of account-level risk metrics for determining future harms and enabling stricter moderation of accounts deemed high risk (Stark, 2024). Large platforms with sufficient resources to develop moderation tools in-house have been doing similar behavioral moderation for years (Douek, 2022). But vendor platforms, many founded by former employees of those larger companies, now offer—or even encourage—this technique as a service to smaller companies that fall into the expanding market of trust and safety.

This shift from evaluating a specific violation to estimating future potential harm mirrors Amoore's (2013) account of the transformation of security governance from a logic of discipline to one of risk. The traditional view of moderation operates primarily on a disciplinary logic (Foucault, 1977), in which subjects are judged against known rules based on their past actions. Rather than evaluate whether a specific piece of content violates a norm, these systems assess the likelihood that a user will pose a future harm, based on correlations and associations within user data. Amoore describes this turn to risk in terms of the “data derivative,” which is a mode of calculation that does not seek to probabilistically project a past trend into the future but instead acts on the basis of associations across disparate data points that indicate possible futures (Amoore, 2011). These scores are not causal; their value is not in predicting future events but in making present data actionable on the basis of the harms they imply. As Amoore says: “It is not strictly collected data that become an actionable security intervention, but a different kind of abstraction that is based precisely on an absence, on what is not known, on the very basis of uncertainty. […] Mining and analytics draw into association an amalgam of disaggregated data, inferring across the gaps to derive a lively and alert new form of data derivative - a flag, map or score that will go on to live and act in the world” (Amoore, 2011: 27).

Ultimately, the central offering of many moderation platforms lies in their capacity to transform the vast quantities of user data collected by customers (and other sources) into actionable data derivatives and to make those actions reportable. In doing so, they enable and promote a mode of governance oriented toward proactive risk management rather than reactive discipline. This is not moderation as enforcement but moderation as preemption. And in that preemption, the designers of these systems have the opportunity to impose new logics as “best practices” on the field.

This model for risk management is exactly the form of governance vendors are incentivized to produce by the external pressures of VC and regulation discussed above. The shift from governance as discipline to governance as risk mirrors the emphasis on risk management in online safety laws. Firms have been required by the DSA to monitor for systemic risk but given little practical guidance in how to do so, and vendors are stepping in with the language of user risk scoring as a potential strategy. These behavioral risk scores are not mentioned in the DSA, but the open-ended nature of the law's systemic risk mitigation requirements (Griffin, 2025) leaves firms and vendors open to interpret users as a primary source of risk.

Similarly, VC-level growth requires a product that can scale beyond and across traditional markets. A content moderation tool is limited to a relatively small market, but a risk management tool that takes a large amount of disaggregated data and enables preemptive action can be sold to almost any organization facing known or unknown threats. The abstraction of risk into portable data derivatives is what enables this move. It transforms content moderation into a more universal form of preemptive governance and transforms the scope of the field of trust and safety to encompass the broad, blurry category of digital risks, from AI-generated content to disinformation to “insider threat detection.”

Discussion: vendors and the market for networked governance

Caplan (2023) introduced networked governance to the literature on platform governance to describe the array of external organizations platform companies consult in policy development, dispersing accountability for their decisions horizontally. My findings introduce a new, political-economic dimension to this network of external governance actors. The vendors I have described are not merely enforcing the decisions of platform companies; they are shaping them. They interpret the meaning and significance of new regulations, operationalize broad concepts of safety, and shift the underlying logic of platform governance toward risk management.

These start-ups adopt this platform model due to their belief (and the belief of their VC backers) that there is a new, untapped multi-sided market to capture in the governance relationship between AI developers, online service providers, regulators, and the moderation labor outsourcing companies. In other words, networked governance emerges not only from strategic actions of large platform companies but also through markets for governance. While the start-ups I have described here may fail eventually (most of them will), many of the institutional, regulatory, and market forces shaping them will remain. Even if the billion-dollar market for trust and safety-as-a-service software is only imagined, I have shown that these start-ups are already pushing the boundaries of the field, defining compliance strategies, and promoting a logic of risk in response to those forces.

Through their consulting services, marketing materials, webinars, and participation in field-defining spaces like TrustCon, vendors articulate specific best practices in response to new, uncertain regulations. As Griffin (2025) recently observed, the DSA's vague requirements for systemic risk mitigation depoliticize questions of risk and power in platform governance and are likely to serve the interests of the large, powerful companies they are meant to constrain. By reifying this risk-based approach, vendors are furthering what Griffin describes as technocratic policy dominance that closes off broader public contestations of risk.

Beyond these large companies, however, the language of risk management is being mirrored by vendors as the new operating logic of trust and safety. Even though the law's systemic risk requirements only apply to the largest companies and say nothing about scoring users’ risk levels, the shared language of risk represents a broader shift in the field toward a logic of risk. Whether user risk scoring of the kind offered by these vendors becomes an agreed-upon signifier of DSA compliance in the field remains to be seen.

Limitations and future research

This study excluded other forms of vendors, many of which I encountered at TrustCon, that specialize in other services, such as age and identity verification and intelligence investigations. It also focused on new regulations in Western countries, overlooking online safety regulations in countries such as Singapore and South Korea, and the process by which those laws are translated into practice. Data collection was also limited to the vendor side of the market, excluding the perspectives of longer-standing BPO vendors and social media platforms. Because data collection was structured around TrustCon, findings are limited to the particular themes of that conference and to the people and organizations that attend it.

To fully understand how the market for vendors and the field of trust and safety more generally is shaping platform governance outcomes, future research should investigate how other actors and institutions within the field perceive and engage with these vendors. This study examined how these vendors are constructing a market for themselves, and analyzed their own claims about the future of trust and safety. Whether potential customers believe those claims, how regulators are thinking about these new start-ups, the extent to which these start-ups are or are not lobbying lawmakers, and the perspective of the venture capitalists investing in these companies are all outside of the scope of this article and offer fruitful avenues for future research.

Additionally, future research should examine the influence of trust and safety on the practices of other fields, especially AI safety. Crucially, as trust and safety vendors make the push into generative AI safety, they bring with them certain commitments, backgrounds, and notions of safety. Future research should more fully map these approaches to safety, the fields of expertise they emerge from, and the notions of safety they employ.

Conclusion

By examining vendors’ active roles in shaping this field, this analysis advances scholarly understanding of the two-sided model of governance “by” and “of” platforms (Gillespie, 2018), revealing how regulatory influence and moderation outcomes are mediated and transformed into operational practice. Crucially, moderation platforms, which are fundamentally systems for managing and classifying large amounts of disparate data points, shift the logic of content moderation from discipline to risk. While online safety laws like the EU's DSA do not require behavioral risk scoring and proactive moderation of accounts, vendors mirror the language of risk management to promote platforms as compliance tools.

As the boundaries of trust and safety expand into AI safety and corporate risk management more broadly, it is essential to understand the internal and external field dynamics that shape its norms and practices. Trust and safety as a field has its origins in the typical view of content moderation as a juridical, rules-based review process, but the field is rapidly expanding into new domains, with vendors at the vanguard. Largely driven by new, risk-oriented regulations like the DSA and the growth imperative instilled by the VC funding model, vendors are actively shaping this field, not merely providing the labor to enforce decisions that have already been made. Locating those decisions not only within platform companies but across this field is an essential and pressing challenge for the scholarship of platform governance moving forward.

Acknowledgments

The author would like to thank J. Nathan Matias, Tarleton Gillespie, Claire Wardle, Rohan Grover, Tomás Guarna, and two anonymous reviewers for feedback and conversations that greatly assisted in this research. The author would also like to thank the Cornell Communication Department for feedback he received after presenting a version of this research at its colloquium series.

Ethical approval and informed consent statements

This research received approval from the Cornell University IRB via Protocol #IRB0148691. Written consent was obtained from all interview participants. Conference organizers were aware of and consented to the research.

Funding

The author disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: The author received financial support for fieldwork from the Cornell University Graduate School, the Department of Communication, and the Qualitative and Interpretive Research Institute.

Conflicting interest

The author declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Data availability

The data collected for this study consists primarily of interview transcripts. Due to the small number of companies that fit the description of the research and the risk of directing abuse toward participants, the transcripts will not be made available.