Abstract

As algorithmic systems are part of even the simplest actions in our daily lives, the critical issues of meaningful human agency and autonomy in relation to these systems have been increasing. This article aims to introduce a novel way of conceptualizing meaningful human agency and simultaneously address a gap identified in the current predominant sociotechnical solutions. To do so, it reaches out to A.J. Greimas’ actants theory and theory of modalities and further builds on the author’s own empirical findings. Re-reading Greimas’ theories, it argues that current ‘fixes’ for enabling and facilitating meaningful human agency have overlooked a crucial aspect – the willingness of the individuals to act agentially, even when opportunities and mechanisms to do so may be present. By transposing Greimas’ syntactic trajectory for action (the

Introduction

we are unescapably algorithmically processed by calculative devices

(Bucher, 2020:613)

As algorithmic systems are becoming our companion species (Haraway, 2010; Lupton, 2016), becoming progressively part of even the simplest actions in our daily lives, the critical issues of meaningful human agency and autonomy in relation to these systems have become increasingly pressing. Since our daily lives predominantly happen on and through algorithmic infrastructures, and due to a multitude of factors (e.g., algorithmic opacity, complexity, corporate gatekeeping practices), individuals are said to be in an asymmetrical power position in relation to the data and algorithmic systems they interact with, which significantly limits their ability to understand, inspect, and challenge the outputs of these systems. Scholars in media and communication studies, along with related fields like science and technology studies, and critical data and algorithmic studies, have been problematizing the nature of algorithmic power in contemporary society and in individuals’ lives and the nature and degree of human agency within these systems (Couldry, 2014; Feenberg, 2011; Hepp and Görland, 2024; Hildebrandt and O’Hara, 2020; Introna, 2011; Kennedy et al., 2015; Neff and Nagy, 2016; Neff et al., 2012; Passoth et al., 2012; Pop Stefanija and Pierson, 2023; Rammert, 2012; Savolainen and Ruckenstein, 2022; Susser et al., 2019). This problematization proves to be a complex one – individuals do have agency over algorithmic systems, but at the same time, they are increasingly influenced and impacted by them. The interplay of human and algorithmic agency has been conceptualized as

The aim of this article is to introduce a novel way of conceptualizing meaningful human agency while also addressing a recognized gap. It does this by drawing, first, from a lately forgotten place – the theoretical conceptualizations of the structuralist and semiotician A.J. Greimas. The further development of Greimas’ conceptualizations and their transposition in algorithmic contexts is additionally informed by insights collected from previous empirical studies (Pop Stefanija & Pierson, 2023, 2024, forthcoming). In this article, I argue that Greimas’ theories can be productively transposed within the domain of critical algorithmic studies, and I elaborate further on how they can be employed to conceptualize, investigate, and facilitate meaningful human agency within human-algorithm assemblages. Why is this reconceptualization important and necessary? While researching the preconditions and requirements for achieving meaningful human agency (Pop Stefanija & Pierson, 2023, 2024, forthcoming) and examining the solutions proposed by others (academics, regulators, industry), I identified a substantial gap in current dominant socio-technical solutions. Although the empirical studies I conducted differed, they uncovered one common denominator – I observed that what was often missing for a ‘fix’ in agency to be possible was not only the presence of technical affordances or societal initiatives like regulation and literacy. It was the

Following Greimas and supported by my own findings, this modality of wanting is crucial for the transformation of individuals from

Although Greimas’ theories were widely used in the foundational periods of Actor-Network Theory (ANT) (see Beetz, 2013; Boullier, 2018; Høstaker, 2005; Latour, 1999; Lenoir, 1994; Mattozzi, 2019), they have since fallen into a somewhat forgotten and silenced place. Based on Greimas’ actantial model and his theory of modalities, I transpose his theory of actants and actors to investigate and re-problematize the nature of human agency in relation to algorithmic systems. Following his theories, I argue that in their interactions with algorithmic systems, individuals are not fully agential (yet!). Further, translating Greimas’ theory of modalities, considered a theory of agency, allows us to postulate the preconditions and requirements for an individual to act agentially within the human-algorithm assemblage. According to Greimas, this is only possible if a hierarchical organization of four modalities (1.

The arguments and conceptualizations elaborated in this article can contribute in three significant ways. Transposing and translating Greimas’ theory into a new, human-algorithm context allows for a novel conceptualization of human-algorithm agency and the complex power dynamics involved. This reconceptualization can be further developed and used as an analytical lens and tool for investigating existing relationships of power, knowledge, and agency within specific human-algorithm configurations and systems. The prescriptive schema of the modalities –

The article begins by emphasizing the need for a novel conceptualization by discussing the nature of human agency in relation to algorithmic systems and the effects of this type of agency on individuals. It then elaborates Greimas’ complex, and potentially unfamiliar, theories in greater detail, developing them further and transposing them to the human-algorithm context. Discussing in detail what this would entail, the article ends with a discussion of a few points of consideration.

On agency, governmentality, virtuality, and subjectivity

To open the discussion on why a novel conceptualization of meaningful human agency is needed, it is crucial to clarify the understanding of agency adopted in this article. The power and agency of algorithms can only be exerted on free subjects, as Foucault (1982) outlines: ‘power is exercised only over free subjects, and only insofar as they are free’ (791). Individuals, although entangled in these systems, can still engage in corrective, agential, problematizing practices (Weiskopf and Hansen, 2022). Bonini and Treré (2024; see also Pop Stefanija & Pierson, 2023) provide a comprehensive definition of human agency, accounting for all the intricacies of this asymmetric power position – in our entanglement with algorithmic systems, we are able to exercise our agency, albeit to a degree only. The technical affordances of these systems simultaneously constrain us: we can both act agentially and/or not, we are steered by the boundaries imposed by the algorithmic structures and at the same time we still can, in gradations, exercise

This

Relying on this type of knowledge and epistemic processes, algorithmic governmentality bypasses both virtuality and subjectivity – ‘there is no longer any subject in fact’ (Rouvroy & Stiegler, 2016:12), and subjectivity is avoided. This is precisely because of how algorithmic logic operates – built on vast amounts of individual data, devoid of context and information about intentionality, its goal is to categorize individuals without being interested in them – ‘the very notion of subject is itself being completely eliminated thanks to this collection of infraindividual data; these are recomposed at a supra-individual level under the form of a profile. You no longer even appear’ (ibid.:12). Unlike Foucault's governmentality (1991), algorithmic governmentality does not appeal to individuals’ capacity to understand (Rouvroy & Stiegler, 2016:12); it functions at the level of signal, of reflex but not reflexivity. As such, it is a process of objectivation rather than subjectivation – a ‘process in which human subjects and their experiences are rendered visible and transformed into an object of knowledge’ (Weiskopf & Hansen, 2022:7), removing human reflexivity from the process of categorizing. This might lead to processes where individuals relate to the algorithmic prescription, internalize it, and ‘self-regulate to comply with what they think and anticipate algorithms or the designers of algorithms are expecting’ (ibid.:12). This creates epistemic imbalances – ‘a situation when some entity holds information, knows, or understands something about an individual, that the individual themselves do not’ (Delacroix & Veale, 2020; Pop Stefanija, 2023) – and epistemic hegemony, where knowledge is captured, gatekept, and restricted (Ben-David, 2020; Pop Stefanija, 2023), which results in an elimination of the possibility to

The main goal of algorithmic governmentality is ‘uncertainty management’ (Rouvroy, 2013:10) – by affecting the actualization of the virtual, of the dimension of potential and spontaneity, it seeks to minimize and control the (radical) uncertainty of individuals’ potential actions and their agency, understood as their ability to either do or not do what they are capable of, including questioning, resisting, and disobedience. Targeting the inactual but possible, the algorithmic ordering aims to ‘structure the possible, to eradicate the virtual’ (Rouvroy and Berns, 2010: para X) and to steer the realm of possibility and potentiality in a specific way. Virtuality is definitional of the subject and the subjectivity (Rouvroy, 2013) understood as a process, as an ability to be and become oneself, to author oneself. By targeting uncertainty, virtuality, and potentiality, algorithmic governmentality targets the very elements and processes that allow individuals to author themselves, project themselves, relate to themselves, and ultimately become subjects (Rouvroy, 2013). As will be further discussed, Greimas’ theory postulates precisely the notions of potentiality, virtuality, knowledge, capabilities, and subjectivities as essential prerequisites for acting agentially and becoming an actor or a subject.

Unknowing subjects of algorithmic systems

This was the antidote, she realized, to the feeling of distant people whom she’d never meet who held the power of everything over her. To be able to control the computers around her, rather than being controlled by them.

(Doctorow, 2019:62)

In

Salima thus becomes agential in the relation with the systems – for the first time, she ‘had a kitchen full of devices that would obey you’ (Doctorow, 2019:48). One event is crucial for this – she gained knowledge that was previously gatekept, hidden, and inaccessible. By acquiring this knowledge, she transformed from an

Departing from the premise that ‘what may count as a form of agency may be different from who or what counts as an actor’ (Passoth et al., 2012: 5), I start with the question: what counts as an actor according to Greimas’ theory, and why can individuals, in the current socio-algorithmic arrangements, not (yet) be considered one?

On subjects and objects

To answer this, I reach out to Greimas’

As already briefly elaborated, the difference between actants and actors is significant, for both Greimas and the arguments in this article. The

The category of actant is not a simple one, and its definition is complex and scattered across Greimas’ works (e.g., canonical form in 1966 (Greimas, 1984), refined in 1973 (Greimas, 1987a); see also Greimas and Courtés, 1982; Greimas, 1987b). Simply explained, actants are specific elements within a certain constellation (schema, according to Greimas) that follow a predetermined programme – their function is not changeable, their performative boundaries are fixed, and with that, their behaviour is predictable and programmable (Greimas and Courtés, 1982). In this schema, the subject-actant is the origin of the action, and the object-actant is the one that performs the action as requested by the subject-actant. Transposed into human-algorithmic relations, this means that, constrained and steered by the technical affordances of algorithmic systems, individuals follow the programmes of these systems. Thus, algorithmic systems assume the function and position of actants-subjects, while individuals serve as actants-objects. In Greimas’ well-known example of ‘John wants Peter to leave’ (Greimas, 1987c: 72), John is the subject and Peter is the object; the same principle applies to our situation: ‘YouTube wants me to continue watching viral propaganda videos’ or ‘TikTok wants me to keep posting content so I can stay relevant and make my content discoverable’, and similar. The verb ‘want’ is used broadly here and refers to the processes of algorithmic workings where individuals’ actions are steered through the technical affordances of the system: algorithms as artifacts can request, demand, allow, encourage, discourage, and refuse certain actions (Davis, 2020). In these examples,

Further clarifying the idea of viewing individuals as objects in human-algorithmic relationships rather than as subjects is essential for advancing the arguments. According to Greimas, an actor is considered an individualized manifestation of an actant, while an actant can also be an inanimate object (e.g., an algorithmic system). In the current human-algorithm constellations, the individual processed through algorithmic logic is only an objectified operationalization of the human subject (Seberger and Bowker, 2021). Algorithmic systems categorize, sort, and profile individuals according to often simplistic classifications, disregarding the complexity of individuals and the unpredictability of behaviours, in order to produce algorithmic outputs. The algorithmic logic – and the social order it strives to create – ‘requires no subjects at all, but rather seeks to turn them into objects’ (Fisher, 2022:12), devoid of subjectivity and individuality. The result of this objectivization is a subjectivity made redundant and individuals becoming objectified humans (Fisher, 2022; Seberger and Bowker, 2021), or subjects without subjectivity. Relying on categorization, algorithmic systems attach a certain (algorithmically constructed) identity to an individual, further imposed as a ‘law of truth’ (Foucault, 1982:782) that should be recognized in the individual by others and internalized by the individual themselves. This is an algorithmically imposed subjectivity, and this imposition is a form of power (Foucault, 1982). Or as McQuillan (2022) would say – ‘We are not individual subjects of AI but the inferential subjects of AI’ (36). As discussed in more detail above, Rouvroy and Berns (2013) argue that the object of algorithms never manages to become a subject (XX), because algorithmic governmentality prevents and/or complicates the very possibility of subjectification processes of forming subjects.
As actants-objects, individuals are constrained by the programmability of the algorithm that prescribes behaviour and enables or restricts actions.

In a socio-technical constellation like this, the role and status of the individual in these entangled and complex infrastructures can be understood as one of a ‘subject’ only in the sense of

On actants and actors

What is the relation between actants and actors, and what is the crucial difference between them? According to Greimas (in Greimas and Courtés, 1982), an actor is not the same as an actant – each actor was an actant first, but not every actant becomes an actor unless certain conditions are met (Høstaker, 2005; Greimas and Courtés, 1982; Mattozzi, 2019). For an actant to become an actor, the actant needs to become a

This is an essential aspect for the remainder of the article, since it argues that this position of having agency, of being (becoming) an actor through the process of obtaining the ability to

While actants follow a prescribed programme and are devoid of specific characteristics (subjectivity) (Greimas and Courtés, 1982:7), actors are related to the notions of transformation, identity, and individuation – the transformative process of becoming a particular individual – characterized by ‘a set of pertinent traits which distinguish its doing and/or being from those of other actors’ (ibid.). The actor's specific ways of

In that sense, this active principle means (re)gaining subjectivity, understood as the individual's ability to shape their own conduct and personality, and not simply to follow a script, a programmed action. As such, it requires the ability for self-reflection, self-determination, autonomy, and agency. To achieve this, several transformations would need to take place. The element of transformation is a crucial difference between an actant and an actor – to be an actor, one needs to undergo an act of modification and no longer be the same as before. As Doctorow (2019) describes his protagonist Salima, whom we met earlier – ‘her experience with the dishwasher and the toaster changed her, though she couldn’t quite say how at first’ (22). However, there are a few crucial steps – as Fisher (2022) argues, becoming a subject with subjectivity requires the attainment of critical knowledge, which is crucial for ‘transforming individuals from objects to subjects’ (112).

This is how the term

Preconditions for acting agentially

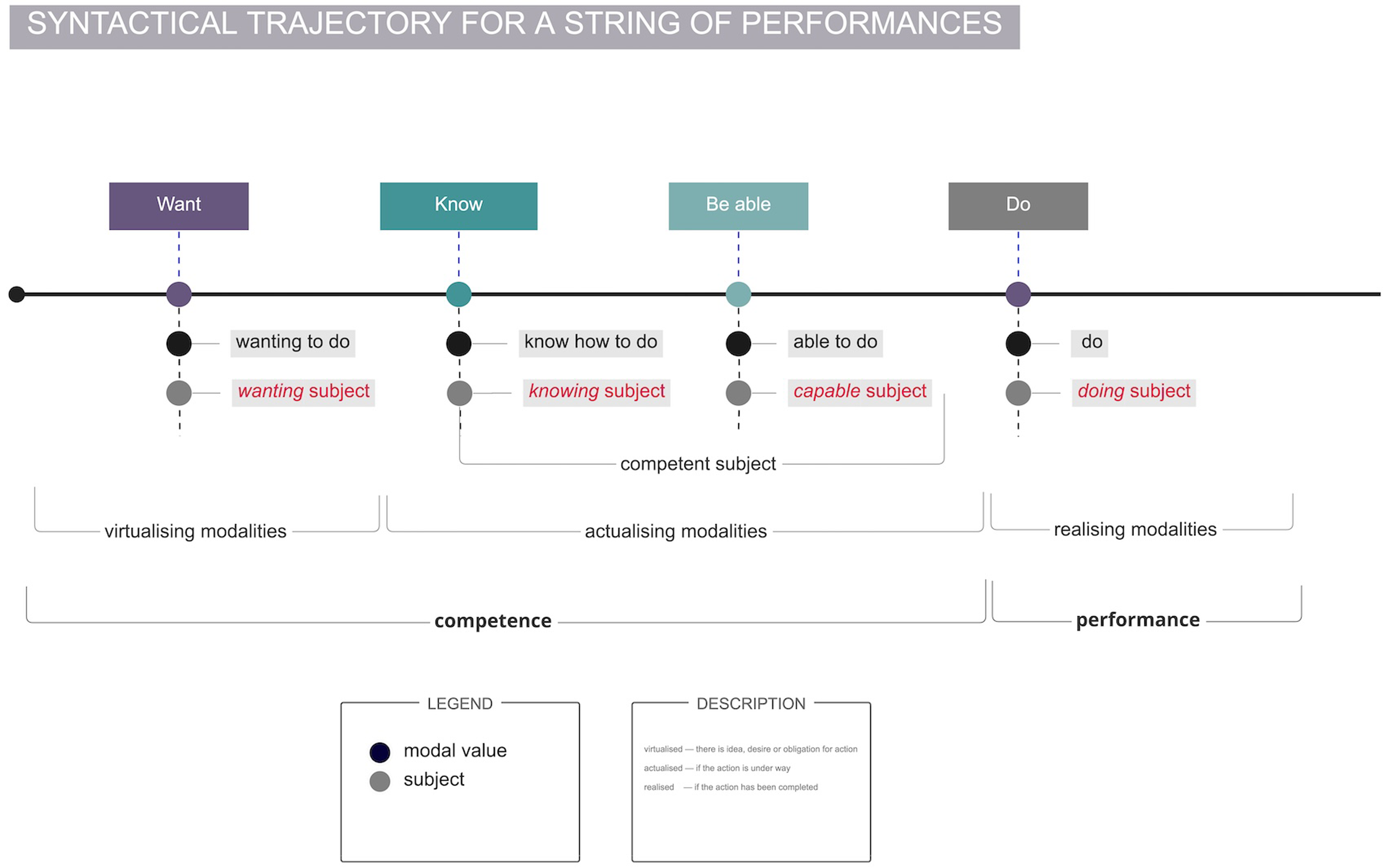

Greimas’ theory of modalities (Greimas and Courtés, 1982), as a theory of agency (Høstaker, 2005:7), postulates the preconditions and requirements for agential doing. Due to its complexity, I provide a brief and simplified summary. For an action, any action to be performed (what Greimas calls an

Illustration of the classification of modalities, reworked according to Greimas (1987a, 1987b, 1987c:132) and Greimas and Courtés (1982:45;195).

Illustration of the hierarchical schema for a string of performances, according to Greimas (1987a, 1987b, 1987c:129) and Greimas and Porter (1977:37).

For our human-algorithm constellations, that means that for an individual to act agentially in relation to or within an algorithmic system and to be able to impose their own requests on it by overcoming the boundaries of the technical affordances, they first need to have the competences to do so. Since data and algorithmic systems are complex socio-technical assemblages, I argue that all these competences (both the virtualizing and the actualizing) need to be possessed or acquired, which would require a ‘redistribution of competencies and performances of actors in a setting’ (Høstaker, 2005:17).

From knowing to capable subjects

To elaborate better on what this process of gaining competences entails, it is necessary that we first define and explain the concept through the theory of modalities. Competence is understood as a hierarchical organization of four modalities (Figure 1):

Thus, the acquisition of modal competence (becoming a wanting/obliged, knowing, capable subject) unfolds in three stages, and is a process (Greimas and Courtés, 1982:6). The acquisition of knowledge (

Transposing this into our human-algorithmic conceptualization means that to enable individuals to act with meaningful human agency, we need to ensure that the preconditions are met for the acting subject (the individual) to be simultaneously a

The wanting subject or making agency desirable

According to Greimas, one of the crucial aspects for realizing an action is

The

The cruciality of the modal competence of

Some tried and implemented mechanisms that can serve as mitigating strategies for the challenges of

However, while these initiatives have been successful to a certain degree, some of my empirical findings (Pop Stefanija & Pierson, 2023, 2024, forthcoming) have shown that they are not sufficient. What did prove productive for facilitating and encouraging this desire to act agentially is the approach of making the algorithmic matters of fact –

But making algorithmic entanglements and agency a matter of concern is not enough; we need to encourage individuals to care (de la Bellacasa, 2011) about their entanglement with the algorithmic systems. Stimulating care is possible when there is personal investment, a personal stake in what we are concerned about. In my research, I aimed to build an emotional connection between the participants and the algorithmic systems they were entangled in. People tend to react more and to care when the issue in question concerns them deeply, especially when they are dealing with something that is closely related to them and that affects them, and whose outcomes are tangible, visible, and known. This is, however, only possible if there are opportunities to see and grasp this entanglement, and when the effects and outcomes are made knowable and understandable. When given time, guidance, and opportunities to understand why this matters and how these human-algorithmic entanglements impact their lives, individuals develop a relationship of care with these systems and, consequently, a willingness to act accordingly.

This process, and this

The doing subject or realizing agency in practice

Knowing (how to do) or making it possible

As discussed earlier, the sequence of modal competences of

Before being able to act, as we saw from Greimas, we need the knowing – the cognitive dimension that precedes pragmatic actions. An individual might want to act agentially but not have the knowledge of how to do so. Seen as taking charge (Greimas and Courtés, 1982:32), knowing enables a shift in existing power relations and asymmetries because whoever holds the knowledge also holds the power. For changes in a socio-technical assemblage to occur, a redistribution of actors’ competencies, including knowledge, is required (Høstaker, 2005).

When the knowledge regarding the algorithmic system is hidden, intentionally made opaque and inaccessible, the withholding of knowledge from someone establishes an asymmetrical position of power. This power is generative (Tseng, 2022) as it is hidden in the background and often unnoticed by those it affects. Establishing and accepting algorithmic knowledge as an epistemic authority leads to epistemic hegemony (Ben-David, 2020; Pop Stefanija, 2023) and hermeneutical injustice (McQuillan, 2022), which diminishes or entirely removes both a person's capacity to know and their ontological position as a knower. Obtaining epistemic insights will shift and reorganize knowledge, both epistemic and critical: epistemic in the sense of validity, credibility, normativity, and authority of the knowledge about the world and individuals; critical in the sense of knowledge about oneself, which is acquired based on information obtained through processes of reflection, learning, and self-determination. As Rouse (2005) says: ‘Both knowing subjects and truths known are the product of relations of power and knowledge’ (107). The ability to know and to have access to knowledge leads to a (re)configuration of that knowledge.

In Greimas’ theory (Greimas and Courtés, 1982), knowledge is regarded as an object in circulation – it can vary in degree, be present or absent, and can be produced or acquired, given or obtained. As such, the epistemic imbalances discussed earlier are not immutable. Knowledge can be provided voluntarily by those who ‘own’ it, or obtained through an informant/helper (someone who possesses this valuable information and knowledge). Or it can be acquired by accident or trickery and through access to secret knowledge (like in Salima's case). In that sense, for example, when we discuss algorithmic knowledge, the knowledge can be provided by the algorithmic system itself (e.g., making the outputs of the algorithmic workings visible, providing transparency and explainability). It can be obtained through various means (e.g., literacy initiatives, transparency tools, and regulation). It can also be forcibly obtained through trickery, when mechanisms and manoeuvres are needed to gain access to it (e.g., hacking, API access, algorithm gaming, data rights, etc.).

This cognitive competence in relation to algorithmic systems can be referred to as

Being able (to do) or making it doable

Knowledge precedes agency, but knowledge is only relevant if it facilitates, and is coupled with, the ability to act. To (re)gain agency, individuals must know and be able to understand how the algorithmic systems governing them work. Knowing and understanding, but not being able to disagree, oppose, correct, change, or in any way react and impose some autonomy over the system strips individuals of their agentic power over these systems. This is how Greimas understands knowledge, too. For Greimas, the modality of

The modality of

Being agential, becoming a subject

When an act, a performance, is completed by an individual (now considered a competent subject that acts independently), a transformation producing a ‘new state of things’ emerges (Greimas and Courtés, 1982: 227), with various outcomes. First, the epistemic imbalances and the epistemic authority positions are shifting. Now individuals can assume a more equal position in relation to the systems that rely on algorithmic knowledge to produce, uphold, and maintain power asymmetries (Foucault's power/knowledge, 1980). This shifts power positions too, and it is not solely the algorithm having

The acquisition of knowledge and the possession of abilities to act upon and enact that knowledge are the crucial elements that make the actualization of meaningful agency possible. Meaningful human agency, considering everything discussed, can be understood as the ability to act, as an intentional and reflective practice that may or may not be realized, but that relies on the abilities, capacities, and opportunities to conduct and direct one's actions according to one's willingness, and thus to self-govern. This change and shift in the ability to act (more) agentially also affects individuals’ autonomy. Closely related to the notions of agency and control, autonomy implies being able to make ‘reflective and informed choice and the ability to enact one's goals and values amid technological constraints’ (Savolainen and Ruckenstein, 2022:1).

All this inevitably instigates and is supported by the processes of self-reflection and self-determination, which, together with the possession of critical knowledge, are essential building blocks for achieving subjectivity (Fisher, 2022). Returning to Greimas and his definition of performance as

In this sense, we are talking about meaningful human agency as a counterbalance to algorithmic governmentality. To return to the opening story of this article and to Doctorow's Salima – ‘“You see”, she said at last, as a realization came out of the blue to her and left her wonderstruck and thunderstruck, feeling like a revelating prophet. […] A computer you can access without supervision is a computer you can change, because all these computers are the same, deep down. […] Once you can seize control over that computer, all of them are yours’’ (Doctorow, 2019:62/63). What this article aimed to achieve is to conceptualize, from a different theoretical perspective, the agential relation between humans and algorithmic systems. By elaborating on the often blurry and narrow boundaries between subjects and objects, actants and actors, this approach aims to outline a way to understand meaningful human agency that will focus not only on the technological and the societal, but will also pay attention to the crucial elements for achieving agency

However, there is one important issue we need to account for when devising interventions for ‘more’ agency –

Finally, I would like to reflect on what this article did not do: prescribe mechanisms for translating this into practice. To borrow from Bucher (2012), the aim was to ‘form responses rather than definite answers’ (69). As such, the aim was to propose novel approaches and to foster and encourage new discussions. By introducing the syntactic trajectory for action (the

Footnotes

Ethical approval

No ethical approval was needed since this is a theoretical research paper.

Funding

The author disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the Fonds Wetenschappelijk Onderzoek (FWO), grant number G054919N.

Declaration of conflicting interests

The author declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.