Abstract

Recent years have witnessed an intensifying debate on the deployment of emerging surveillance technologies in protests. This debate is often framed in terms of “chilling effects,” where activists self-censor due to fear of surveillance repercussions, yet there is limited research on how such surveillance affects both activists and law enforcement. This study explores the technologization of protest policing, moving beyond the oversimplified cat-and-mouse game analogy, to examine its effects on surveillance experiences in more nuanced ways. By analyzing observations and interview data from 2023 road blockades by Extinction Rebellion in The Hague, Netherlands, this paper highlights the intricate consequences of surveillance technologies for both sides. Moving beyond the narrow legal interpretation of “chilling effects,” it uncovers two further socio-psychological sub-manifestations, showing how both groups adapt through hyper-transparency (extreme openness) and hyper-alertness (extreme caution). The study demonstrates how these experiences can be self-reinforcing, where reciprocal suspicion might contribute to a cycle of mutual distrust beyond protest contexts, but also introduces new forms of resilience. This cycle, despite lacking clear causality, bears important implications for society at large. Pervasive suspicion erodes institutional trust among activists and threatens the traditionally communicative and de-escalation-focused approach of Dutch law enforcement. Overall, this extended impact indicates that the technologization of protest policing has resulted in a hybridization of screens and streets, causing its human impacts to stretch beyond the specific times and places of demonstrations. Protest policing now encompasses a multifaceted spectrum of surveillance experiences, affecting a plethora of public values, beyond the right to protest alone.

Introduction

The role of technology in protest management is becoming increasingly significant, renewing debates on how to balance public safety with protest rights. As protests are becoming more diverse, frequent, and complex (Swart and Roorda, 2023), protest policing can be illustrated as a tightrope walk, effectively “getting the balance right” between opposing societal interests: that is, between protecting demonstrators’ right to voice their opinions and ensuring public order (Baker et al., 2017). Digital technology's increasing role adds complexity to this balance by boosting protest organization and mobilization, leading to more unpredictable law enforcement challenges (Douglas, 2023).

To stay on par with this dynamic, police organizations worldwide are increasingly deploying advanced surveillance technologies during protests for efficiency, including CCTV, bodycams, drones, and facial recognition systems, prompting critical human rights questions from the United Nations (UN) Special Rapporteur on the Right to Assembly and Association (Voule, 2022, 2024). Over-reliance on such technology in protest policing can tilt the scales towards “risk-thinking” over protest facilitation (Amnesty International, 2023). Live facial recognition, especially in protests, has drawn particular UN scrutiny in the European Union (EU) during its “AI Act” negotiations (OHCHR, 2023). This “informatization and medialization of protest policing” (Ullrich and Knopp, 2018: 185) raises questions about its impact on surveillance experiences and dynamics, historically seen as “perpetual cat-and-mouse-games” (Huey et al., 2006: 174).

The 2023 civil disobedience actions by the Dutch branch of climate movement Extinction Rebellion (XRNL) in The Hague offer a valuable case study of the effects of advanced surveillance technologies on real-life experiences. Known as the “international city of peace and justice” and the epicenter of Dutch political affairs, The Hague is a prime site for protest policing, hosting over 2000 protests annually (Van Zahnen, 2023). XRNL, furthermore, underwent a significant surge with their “A12-Blockades,” where climate activists obstructed a symbolic “highway” entrance near Dutch ministries to raise awareness about the climate crisis and demand an end to fossil fuel support (XRNL, 2022). These legally ambiguous acts of civil disobedience, defined by non-violent law-breaking to highlight injustices (Weber, 2023), vividly illustrate the authorities’ challenge in striking a balance between fully facilitating protests and legally enforcing (order) restrictions under the Dutch Manifestations Act (WOM).

This article explores the surveillance experiences during these A12-Blockades, seeking to understand the impact of new technologies, including Artificial Intelligence (AI): (a) how do new digital surveillance technologies influence surveillance experiences of activists and police; (b) how do these experiences differ from or resemble one another; and (c) how do such experiences shape their mutual perceptions and overall police-activist dynamics? This research responds to calls for deeper examination of AI-assisted surveillance in activism, as it may have broad implications for democratic participation (Stevens et al., 2023), for more empirical investigation into surveillance experiences (Schuilenburg, 2024), and for attention to the under-researched relationship between police and protestors, considering both sides (Jackson, 2020; Douglas, 2023).

Surveillance experiences as cat-and-mouse-games

Protest policing, the handling of protest events (Della Porta and Diani, 2020), has developed from the 1960s “escalated force” model to “negotiated management” in the 1990s (Gillham and Noakes, 2007), before shifting to a more repressive “strategic incapacitation” post-9/11 (Gillham, 2011; Gillham et al., 2013), and now combining “soft tactics” of liaison policing (Stott et al., 2013) with “hard tactics” including comprehensive surveillance, pre-emptive arrests, less-than-lethal force and no-protest zones (Gillham, 2011; Whelan and Molnar, 2019). This coincides with a global trend towards more technologically advanced and information-centric methods of protest policing (Douglas, 2023; Gillham and Noakes, 2007; Ullrich and Knopp, 2018; Wood, 2015). Drones and helicopters are increasingly supplementing CCTV surveillance (Ataman and Çoban, 2018; Eggert et al., 2018) and bodycams are embraced for potentially fairer police–public encounters (Wright et al., 2023a). Meanwhile, the sporadic use of (retrospective) facial recognition at protests in the Global North—seen in Germany, UK, and USA (Borak, 2024; Novokmet et al., 2023; Zalnieriute, 2024)—remains contentious, and particularly its “live” facial recognition counterpart caused divisions among EU institutions 1 during negotiations over its AI Act (Cocito, 2024).

Understanding how protestors experience surveillance requires examining the actions and counterresponses of both sides (Ellefsen, 2018), often termed cat-and-mouse-games (Huey et al., 2006; Koskela and Mäkinen, 2016; Marx, 2003; Owen, 2017; Wilson and Serisier, 2010). On the mice's side, activists employ various “moves” to dodge data collection (Marx, 2003). Counter-surveillance (Monahan, 2006a) manifests itself through disguise (masks, umbrellas, or black attire) but also extends to proactive filming strategies to reverse surveillance (Ullrich and Knopp, 2018). “Cop-watching” (Schaefer and Steinmetz, 2014; Søgaard et al., 2023) brings “new visibility” to police operations, where citizens use video-activism to expose misconduct, mostly questionable force, potentially eroding police institutions’ legitimacy (Goldsmith, 2010; Brown, 2016). However, while such videos are effective in raising awareness, their impact in changing perceptions may be limited to already sympathetic protestor audiences (Wilson and Serisier, 2010). In Chile, for instance, wider public opinion deemed strong police responses to protestors as justified due to their perceived criminality (Bonner and Dammert, 2022).

As far as the cat's response is concerned, although the earlier view was that police opposed being filmed (“war on cameras,” Wall and Linnemann, 2014), there is a growing consensus that police might actually view citizens’ cameras as opportunities for their public image (Sandhu and Haggerty, 2019). This shift suggests that police behavior might be influenced both consciously, in efforts to present a more favorable image, and subconsciously, as the pervasiveness of social media potentially shapes officers’ awareness on the job (Brown, 2016). This raises another question of whether police's own bodycams similarly offer “civilizing effects” (Patterson and White, 2021: 412). Gaub et al. (2022) contend however that body-cams’ main benefit during Black Lives Matter (BLM) protests was in collecting evidence, rather than conduct improvement. This observation feeds into the debate “that the spread of body-cams is in part legitimized as a way to counter counter-surveillance” (Leman-Langlois, 2019: 359). This reflects Andrejevic's concerns about protestors’ shared digital content inadvertently becoming “tempting honeypots for authorities including police investigators and intelligence organizations” (2011: 180), a vulnerability exemplified by reports of US authorities using social media facial recognition to identify and arrest BLM protestors (Zalnieriute, 2024). Social media is indeed increasingly considered police jurisdiction, encapsulated in the term “social media surveillance assemblage” (Trottier, 2011: 63). Nurik (2022: 32) voices apprehensions that this may involve “proactive monitoring, combing through social media accounts, creating databases, and heavily tracking particular individuals, groups, and communities,” while others discuss covert surveillance chat groups (Schlembach, 2018; Stephens-Griffin, 2021).

Protestors and police thus engage in a learning process (Della Porta and Diani, 2020), “each anticipating, responding to, and feeding off of the moves and activities of the other” (Walsh, 2019:383). This cat-and-mouse dynamic can set in motion a spiral of action-reaction, where successful evasion by activists prompts police to adopt new technologies (Leistert, 2012; Ullrich et al., 2011; Ullrich and Knopp, 2018; Wilson and Serisier, 2010). In Germany, for example, law enforcement countered activists’ encryption by adopting more sophisticated surveillance methods, including advanced metadata analysis and the use of silent SMS tracking (Leistert, 2012). However, this escalation can extend beyond surveillance tactics. Research suggests that when protest policing escalates into violence, protesters may also become more likely to view violence and vandalism as justified responses (Drury and Reicher, 2009; Maguire et al., 2021; Snipes et al., 2019; Stott et al., 2008). This complex dynamic can damage public trust in the police, but depends on context, as this effect seems stronger in the USA than Germany (Nägel and Nivette, 2023; Wright et al., 2023b).

Finally, surveillance's “chilling effect,” potentially deterring protest participation altogether (Owen, 2017), is arguably the most debated potential negative side-effect of technologizing protest policing. The chilling potential of facial recognition vis-à-vis protest rights in particular is increasingly acknowledged by international bodies (FRA, 2019; OHCHR, 2023) and the European Court of Human Rights (Glukhin v. Russia, 2023). Yet, consensus on definition and operationalization is still lacking. Conceived within 1960s US free speech jurisprudence and later adopted by European courts, chilling originally linked fear of sanctions to self-censorship (Fataigh, 2019), often measured in internet research (Stoycheff, 2016). A broader socio-psychological perspective posits, however, that chilling goes beyond mere (individual) speech: qualitative evidence from Zimbabwe (Stevens et al., 2023) and Uganda (Murray et al., 2023) demonstrates that chilling affects both freedom of expression and freedom of assembly, with subtle yet profound impacts on identity, mental health, relationships, and, ultimately, (collective) civic engagement. Crucially, the mere perception of surveillance, whether real or not, induces self-regulation (Stevens et al., 2023). And, even when experiencing chilling effects, activists’ enduring political agency and resilience must not be overshadowed (Stephens-Griffin, 2021). This broader perspective transcends legal definitions but is harder to measure and define; our research aims not to broaden chilling's definition but rather to refine it, by examining how surveillance technology, with its unprecedented viability, shapes protest experiences.

Methods and ethics

This study applies a multi-method case study approach (Yin, 2018) to protest policing (Della Porta and Diani, 2020) in order to understand surveillance experiences of activists and police. Ethnographic and interview data collected over 10 months (March 2023–January 2024) inform the analysis.

First, ethnographic observations included attending an XRNL action training (spring 2023) and three A12-Blockades in The Hague (11 March 2023, 27 May 2023, 10 September 2023), mainly by the first author, and partly by the second. Detailed note-taking followed a protocol, focusing on setting (location/atmosphere?), participants (who/how?), and (counter-) surveillance methods (manual/digital, how were they responded to?). Initial notes were then discussed confidentially within the research team for reflection, using co-authors not involved in data collection as a sounding board.

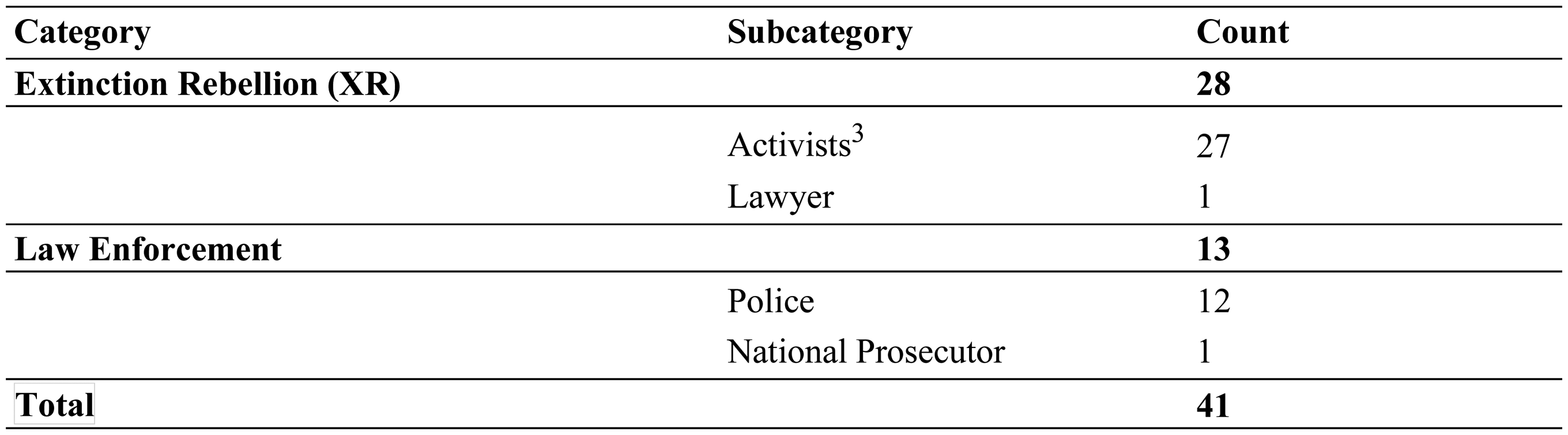

Second, 41 semi-structured interviews were conducted with activists and police (June–December 2023), starting after the first two A12-Blockade observations (March, May). Interview-partners (IPs) 2 were reached via various means including email, X/Twitter, LinkedIn, and snowball sampling, with social media proving most effective for contacting activists. For police contacts, access was obtained through a gatekeeper, granting personal access to officers on duty during A12-Blockades, both on the ground and within the control room. Activists were generally interviewed individually by the first author, whereas police interviews typically took place in group settings (ranging from one-to-two to two-to-two configurations) featuring additional researchers, with the first and second authors predominantly leading these sessions together. Group setups aimed to encourage open dialogue on complex issues and co-create knowledge, reflecting a transparent, collaborative research approach. Interviews (30–90 min) took place at the IP's preferred location (in-person/online).

The semi-structured interview guide for activists (informed by surveillance literature and observations) explored four key themes: pre-protest surveillance, during-protest surveillance, impact beyond protests (personal values and XRNL-culture), and views on future surveillance/AI. Police interviews were subsequently informed by themes from activist interviews, fostering a “feedback loop” between groups and positioning this study as “critical friends” who “do not seek […] quick agreement but rather to complicate by probing for deeper meaning and evidence and seeking possible alternative explanations” (Coghlan and Brydon-Miller, 2015: 205; Jacobs, Van Houdt and Coons, 2024). Interviews were transcribed verbatim and anonymized, with careful attention to omitting activists’ personal details from the transcripts when mentioned. The first author conducted a two-step coding process (Charmaz, 2006) using Atlas.ti software: initial coding, in which data were labeled fragment by fragment, and focused coding, which concentrated on frequently recurring and relevant codes. The team then jointly reviewed these themes, facilitating extraction of patterns previously recognized in the literature and exploration of new, emerging themes (Figure 1).

Figure 1. Study sample.

Ethical considerations

Surveillance science can unintentionally increase activists’ or police officers’ vulnerability by offering “how-to” guidance. Therefore, we deleted details exposing group connections and/or tactics to preserve anonymity, security culture, and prevent research misuse. Our ethical clearance encompassed a review of consent forms, risk assessment, data management, and research design. Extra risk management included data minimization, including pseudonyms on consent forms. During observations, researchers wore identifiable university vests to clearly signal their presence, ensuring transparency and protection of participants and themselves.

The case study is presented in the next section, followed by presentation of the findings, which focuses on select themes and codes identified during the coding process. Briefly, this study goes beyond the traditional legal understanding of the chilling effect, by identifying two new manifestations not yet discussed in literature on chilling, affecting both parties involved.

Case study: the A12-Blockades

XRNL's A12-Blockades started in January 2022 to spotlight the climate crisis and stop governmental fossil fuel subsidies (XRNL, 2022). Despite an earlier, failed attempt resulting in community services, 3 these blockades quickly gained public momentum: arrests surged from 1579 in May to 2400 in a single day by September (IP23), including 30 minors 4 (Police, 2023). This escalation prompted daily blockades until the government agreed to cancel subsidies after a 27-day campaign, ratified by parliament (XRNL, 2023a). Despite perceived success, the movement's methods attracted legal scrutiny, with seven activists convicted of sedition, 5 now on appeal. And, faced with perceived inaction, XRNL has vowed to maintain their campaign (XRNL, 2024).

In the Netherlands, protests do not require “permits” but “declarations” instead (WOM, Art. 3), and mayors have authority to restrict them for public health, traffic, or order (WOM, Art. 2)—a framework deemed by Amnesty International (2022) to be below international standards. Policing protests meanwhile involves so-called “Triangle” deliberations among the mayor(s), police chief, and public prosecutor (Police Act, 2012, Art. 13), indicative of the Dutch political “polder” model emphasizing consensus-based decision-making (Van Dijk and De Waard, 2009). The chief prosecutor, overseeing criminal investigations, and mayors, responsible for public order, both direct the police chief, who, despite being a central figure, has lesser authority and contributes through information and safety analyses (Fijnaut et al., 2014). A special police unit (SGBO) can be activated, with chief officers managing inter alia public order, mobility, and security, using so-called BLOOS/IRS models for assessing the situational information and coordinating responses based on location, social media, and weather (IP34).

Dutch Police did not utilize AI during A12-Blockades. 6 Nevertheless, police can monitor activists on social media (sometimes via fake accounts), increasingly use drones to observe crowd formations, 7 and have access to retrospective facial recognition technology 8 (Amnesty International, 2022, 2024). For “notorious public order violators,” police can also employ automatic license plate recognition (ANPR, Investico, 2023b: 1). Investigative reports, drawing on sedition-related police-files, moreover disclosed infiltration into XRNL chat groups prior to the January 28th blockade using the pseudonym “Inge” (RTL Nieuws, 2023). Additionally, the Dutch riot police have been borrowing their water cannons from Belgium and Germany since mid-2022, with German officers operating under Dutch direction at A12-Blockades (Jong, 2023). While XRNL's lawsuit against water cannons was unsuccessful, 9 the adoption of “liaison policing” (Gorringe and Rosie, 2013: 204), with activists wearing liaison jackets, proved valuable for enhancing dialogue, alleviating protester anxiety, and fostering “self-regulation” (Gorringe et al., 2012: 111). XRNL and police also jointly developed de-escalation tactics, using stretchers to safely remove activists from blockades, addressing XRNL's concerns over officer health hazards and potentially reducing critiques of excessive force (Figures 2 and 3).

Figure 2. Photo of the A12-Blockade (30 September 2023, Regio15, 2023).

Figure 3. Announcement of the A12-Blockade (XRNL, 2023b).

Results

Our investigation into surveillance experiences at protests began by examining chilling from the legalistic definition, thus focusing on activists only, as it stresses impact on those under the law, rather than those above/enforcing it. 10 This initial focus allowed us to identify two sub-forms: hyper-transparency and hyper-alertness. Both activists and police, adapting to surveillance, may exhibit hyper-transparency (extreme openness) or hyper-alertness (extreme caution). We describe these as ideal types, meaning that an experience classified in one category can also show elements of the other.

Chilling

Activists

Social media self-censorship emerged as a prominent theme, reflecting a chilling effect on speech. This followed the arrest of eight activists on sedition charges linked to their online activity, sending a palpable “chill” through the community. Many activists responded by reducing or ceasing their social media engagement, with some even deleting posts or deactivating accounts entirely. IP8 shared how this took shape: I’ve had times when I woke up in the middle of the night and impulsively started deleting things from LinkedIn [and others].

Activists perceived the sedition arrests as pre-planned, sparking fear among core organizers with “that could have been me.” 11

This fear was heightened by the forceful nature of the arrests in activists’ private lives, which led the judge to question their proportionality. 12

As such, fearing psychological strain, online recognizability (as part of XRNL's media strategy) and career risks (time-consuming court appearances, professional judgment, political limitations, and legal expenses), particularly young activists grew hesitant to assume leadership roles: Honestly, I’m just too afraid now [to lead]. And, well, that day was downright traumatic. A really awful day. The fear of ending up in a cell again… I can’t handle that [anymore]. (IP7)

Activists furthermore shared frustration at trying to involve their personal circles in activism, describing the atmosphere as “scary” or “daunting,” suggesting a chilling effect on recruitment. As IP13 stated, “it's tougher to draw new friends into activism because they say, ‘Sure, I'll attend a protest, but I really don't want to deal with police intimidation or violence, or jeopardize getting a Certificate of Good Conduct.’”

Chilling seems powered by fantasies of advanced AI use in protest surveillance, including facial recognition in drones, CCTV, body-cams, and even illegal tools like ClearviewAI. The ambiguity regarding the use of CATCH 13 for identifying protestors at Schiphol by the Dutch border police was repeatedly speculated over by activists, raising their suspicion of AI. IP25 even pondered: I wonder, isn’t facial recognition tech so advanced now that it can see right through those stripes [of face paint]?

Some even wondered about the degree to which “they” kept tabs on their purchases, especially in cases like acquiring movie tickets to “How to Blow Up A Pipeline” or the book itself, hinting at blurring lines in the web of surveillance: “I’ve been overthinking very much whether I would order the book, How to Blow Up A Pipeline, under my own name […] is that something that is monitored?” (IP20). Activists consequently overestimated police surveillance capabilities, seeing them as overwhelmingly “all-mighty,” even if this does not truly match modern protest policing. IP17 shows how this disconnect induces self-regulation: I often see a police-van driving by with 360-cameras filming the protesters [and] who knows what else. Yes, that's registering protesters. It's a huge violation of privacy and a breach of the right to protest. Because it scares people away from participating.

Similar anxiety arose from digital ID checks at protests, which Amnesty International (2023) criticized for violating anonymous protest rights and potentially storing this data illegally. When IP20's ID was requested, it instantly sparked a reaction: “That immediately triggered a feeling of […], bleep, [name] is moving,” pointing to a perceived system where personal information is logged and tracked, highlighting activists’ increasing worries about how AI could reshape their maneuverability. Continuing this AI discussion, IP20 expressed concerns about how AI could expedite the chilling effect: I can picture them using AI to [calculate] a precise response that is impactful enough to create a chilling effect without triggering public outrage. We saw that with [sedition-related] arrests, it was actually a miscalculation: they thought they were sending a message […but] the reaction was the opposite: further hardening and broad societal support. I expect that their toolkit will become more fine-grained, more delicate, thanks to AI.

However, interviews unveiled a more nuanced understanding beyond this legalistic understanding of chilling. We identified two (socio-psychological) sub-manifestations of chilling: hyper-transparency and hyper-alertness, presented below.

Chilling as hyper-transparency

Activists

Among activists, some adopted a stance of absolute openness, or saw surveillance as an opportunity to demonstrate commitment to civil disobedience. In its extreme version, this would mean voluntarily abandoning privacy rights around the clock, eroding the distinctions between personal, public, and professional spheres. We termed this “hyper-transparency,” best encapsulated in IP11's reflections: It's almost an invitation to just be extremely open and transparent […] it's almost a gift to be infiltrated, because it also keeps you on your toes and maybe even morally pure, right? Because you lose your legitimacy the moment you would do things there that can’t bear the light of day.

This argument emerged in discussions on undercover surveillance stories (see case description), highlighting hyper-transparency as a core belief that constant watch strengthens, not weakens, their commitment to civil disobedience. At the core of this belief is the idea that XRNL has “nothing to hide,” viewing their actions as lawful and constitutionally protected, thus rendering surveillance fears irrelevant, typically reserved for criminals. As IP28 shared, “We can openly discuss everything […]. We don’t engage in criminal activities or break the law.” This logic extends to welcoming police in training sessions; as IP3 noted, “everyone is welcome.”

In tandem, activists noted that joining XRNL often means getting accustomed to surveillance, seen as part of civil disobedience's accountability: in other words, a necessary adaptation for the cause. Two hyper-transparents put this succinctly: “Yes, it's part of civil disobedience that you also simply accept the consequences,” as, “It's about the climate crisis, so it's not about prioritizing your own safety or privacy” (IP26; IP8).

Identity and privilege shaped activists’ choices regarding hyper-transparency: IP8, an older, privileged man (self-employed, white), acknowledged that his experience differs from that of younger or marginalized activists. Similarly, IP22 stated: “I am a self-employed entrepreneur […], I don’t mind [surveillance]. In fact, I think it might even benefit my profile!” After sedition-related arrests, a group came together to place a public protest ad in a main Dutch newspaper, garnering over 2000 signatures: [sedition] only fueled my anger, making me believe it was even more crucial to voice myself […]. By listing your first and last name [in the newspaper], you declared “I incite everyone [to block the A12]!” (IP25)

However, some activists embraced hyper-transparency to counter perceived “paranoia,” explaining that paranoia could harm both their activism and their overall well-being. This ranged from resignation (“they've got me on their radar anyway”) to defiance and individual resilience (“I will not become paranoid”). This reveals an understanding of hyper-transparency as a deliberate strategy against surveillance's potential psychological weight: I refuse to succumb to paranoia. I reject it entirely. I believe the government truly defeats us when we start seeing threats in every shadow and camera. What the heck, let it be. Record me, observe me, do what you will. (IP8)

Temporal dimensions of hyper-transparents’ disregard for surveillance were notable. Hyper-transparents felt that “until now” surveillance had not harmed them, but recognized they might need to adjust their transparency with socio-political developments. The UK's jailing of environmentalists served as a cautionary tale (Laville, 2024): “For now, I'm comfortable sharing my name and thoughts openly […]. Even if someone snaps my photo at a demonstration, that's OK. But I really hope we don't head in UK's direction; because that's really really really scary,” remarked IP4, indicating their readiness to adjust their mentality (and even consider complete surrender) as circumstances evolve.

While hyper-transparents acknowledged potential future AI surveillance, many dismissed its effectiveness, suggesting that direct communication would be more worthwhile. IP13 even mocked digital surveillance, a resilience strategy of refusing to be intimidated: It all looks like a grand, orchestrated conspiracy of data collection. I’m not buying it. They’ll probably end up [clueless]… But I find it somewhat pitiful, I mean, really, is [AI] the government's response to citizens who are simply deeply concerned? Oh, what a downfall. So, there you are with all your AI and drones, and citizens keep blocking the A12, and there's nothing you can do about it. IP22 reiterated: My greatest concern is the escalating climate crisis. When we’re all submerged underwater, those [AI-]computers won’t work anyway. I’m thinking beyond the immediate; looking ahead at the bigger picture.

Police

Hyper-transparency was also a ubiquitous theme among police, impacting their operations, private life and professional identity. The dominant issue they grappled with was being “the most visible arm of the government” (IP37), placing them at the frontline of public dissatisfaction. IP41 noted that public opinion on protest policing is irrevocably split, with criticism for both inaction and use of force. IP33, highlighting the perceived unspoken rule governing their profession, stated: Do our job, no news […]. Excel, we maybe hit the fifth page of a local newspaper. But drop the ball, and we’re front-page material. You have to understand that's the game.

Yet, this sentiment intensified during the media frenzy over the A12-Blockades. While some officers conveyed sympathy with the activists’ objectives, and sometimes even with the impact of civil disobedience, they also struggled with being cast as “villains” and with managing public perception (a central theme in the interviews), especially when arresting “peaceful protesters” and dealing with press freedom issues. Lifting the veil was therefore deemed necessary, with IP33 urging colleagues: We’ve got nothing to forbid, nothing to hide. Just do your job right, let everyone film.

Police also opened doors to media and used social media to clarify protest policing responsibilities, clearly conveying that decision-making lies with the municipality, not them. “We had to clarify our position. We are often wrongly associated with [political] issues like the childcare benefits scandal. 14 No, that's poli-tics, not poli-cing […]. Municipality, do your part, you’re in charge! Politicians[…], speak up!” (IP36).

Interviews confirmed initial observations (27 May 2023) about technology's potent role—CCTV, 360-cameras, drones, bodycams, helicopters, and social media—in hyper-transparency. Officers highlighted body-cams as essential for justifying force, supporting proportionality reviews, and providing evidence in legal cases involving insults. Drones were meanwhile praised for gathering evidence and disputing claims of excessive force, particularly on social media: “Drone footage [lets us] analyze performance,” explained IP24, “imagine pairing that with AI … for example from a video vehicle, street-level colleagues, or a real-time live stream […], a comprehensive package. Then, media reports [can be countered] very nicely.”

Officers nevertheless acknowledged transparency challenges, including delays in live footage access and limitations of body cams (shaky video, limited angles), and the rise of AI-generated deepfakes, making it harder to debunk false narratives: You have the physical demonstration […], and you have the demonstration-after-the-demonstration. And, that demonstration, is always online. [Then,] I’m dependent on the CCTV, surveillance-car, body-cam, or what-not; […but] those videos are live-streamed, not recorded. So I could say, “I need that clip now,” but then I have to stand next to a screen with my phone to film it, which doesn’t work. And validated, reliable police info is key—you’re lost if you start spreading rumors [which takes time]. (IP36)

Chilling as hyper-alertness

Adding to the spectrum of reactions, both activists and police also became extremely cautious in their behavior out of fear of being surveilled. We termed this hyper-alertness.

Activists

Among activists, hyper-alerts displayed a heightened fear of surveillance and its consequences. This constant state of alertness manifested in both mental and physical preparation for potential confrontations with authorities.

Several dynamics fueled hyper-alertness. Activists often first mentioned media stories on undercover surveillance, hinting at how these reports influenced their inclination towards hyper-alertness. Secondly, claims by journalism-collective Investico (2023a) regarding the extensive use of the digital records database (BRP) further heightened anxieties, as activists perceived an increase in database activity around blockades.

Direct encounters with suspected undercover agents (“Romeos”) also influenced their caution. In IP4’s words: “they’re easy to spot, distinct in their behavior. While others chat, they scan the scene, moving purposefully.” IP3 meanwhile portrayed encounters with suspected infiltrators, further reflecting heightened vigilance: [infiltrators] throw out wild ideas like “What if I slash the tires on those planes?” […] But you know, you can never be too sure: I also met someone who got expelled from a group, suspected of being undercover, when they were [innocent].

Hyper-alertness manifests mainly beyond protests (in fact, it appears common for activists to adopt hyper-transparency during demonstrations and get arrested with IDs); its seeds tend to germinate in activists’ daily lives. A common behavioral shift among hyper-alerts is heightened vigilance at the sound of a doorbell: [After sedition-related arrests] whenever the doorbell rang, I was afraid they were coming to get me. It caused a lot of stress and feelings of injustice. (IP1)

Many acknowledge a touch of irrationality, often adding, “I’m not paranoid, but…” Despite never being arrested at home (and doorbells usually signaling deliveries), this highlights how activists strive to stay rational, yet can’t transcend their lingering unease, amidst hyper-alertness. In other words, the pervasive ambiguity, the not knowing, significantly shapes hyper-alerts’ behavior. Due to the constant uncertainty, as IP20 said, they “suspect surveillance where it maybe isn’t.” You never know if the police might just show up […]. I always play it safe, hiding certain things just in case. It's like living in a constant state of fear, which is incredibly draining. (IP13)

Facing surveillance's “black box” (IP13), hyper-alerts thus embrace a “better-safe-than-sorry” ethos, preparing for unexpected events like surprise visits, informants, or wiretaps. Their counter-surveillance toolkit is varied and includes, but is not limited to, “action names,” creative methods to evade biometric detection upon arrests, and implementing cybersecurity measures more generally. As such, a “security culture” came to be considered more broadly and seriously as a form of collective resilience: It used to be like, “Hey, there's this cool event, want to join?” […] But now, if you can’t join, we won’t spill the beans […]. We’re becoming more discreet and selective about [sharing]. (IP13)

This caution also arises from stories of police visits, yet no concrete verification of these incidents was obtained due to a lack of firsthand accounts. While such a visit might just involve a community officer looking to understand societal dynamics, it may still intimidate activists due to information imbalances (IP21). Nevertheless, the impact of these stories is profound; even the most mundane police presence can elicit stress, as IP32 described: I was recently at the community center, enjoying coffee with some elderly, when a local police officer entered. Immediately, stress washed over me […]. I couldn’t shake off the tension […]. So yes, over the past years, my view of the police has definitely changed.

Effects on mental health and individual relationships were also present. IP3 said, “I recall telling my then-partner, look, don’t be surprised if someone suddenly knocks on our door asking for me. It was one of those things you just have to brace someone for.” IP18 discussed caution in new friendships: “I find it harder to trust people […]. I am always a bit on guard, thinking, ‘Okay, I don’t know who you are, who you talk to.’ We know people get shadowed,” later adding, “I have mental health problems at the moment, I see a psychologist and this definitely plays a role in that.” This was not unique: “I have friends who are sometimes afraid to deal with me, because they think, ‘yes, but then I will also be in the crosshairs of the police’” (IP6). Sometimes mere perceptions were hard: “Because how the police criminalize us, we start to behave more criminally. […We] recently bought a burner phone […], that we didn’t want to be traceable to us, so we bought it with cash […frustrated:] I feel so damn criminal because of it!” (IP13)

The topic of AI-enabled surveillance pushed a button for hyper-alerts, particularly due to fears of being included on “police lists,” and driven by stories of difficulties removing themselves from such records, along with their impact on legal matters, career prospects, and, as frequently noted, no-fly lists. When pressed more specifically on these data collection concerns, the foremost issue highlighted was the lack of context, which would reduce them, as colorful individuals, to a simplistic, black-and-white portrayal. Many drew parallels to the Dutch childcare benefits scandal. As an illustration, IP3 remarked: The real issue with data collection, as I see it, is the frequent loss of context. You become just a name in an enormous sea of data, a mere entry in some vast datafile […]. It scares me, because I can’t assess in what context they read this, and in what context they now see me. Does my name in their files imply that I’m under surveillance, and in more ways than one?

However, hypertransparents’ drive for anonymity was occasionally juxtaposed with a collective drive for visibility, mostly via XRNL's Instagram livestreams and filming of officers. Observations (27 May 2023) unveiled what is best described as a “clash of cameras,” with demonstrators, supporters, counter-protesters, media photographers, police body-cams, and drones overhead, all simultaneously recording the same events. While most activists valued cop-watching (IP25), one activist offered a counter-perspective (IP26): The police sometimes resort to force, and while they have the right to do so, it's not always appropriate […]. It's crucial to document these incidents for lodging complaints and saying, “Hey, this is not how the police should behave!” (IP25) I don’t like [police] snapping photos and filming with their phones. I mean, we film too, so that feels … hypocritical, I guess. If you don’t cooperate, they may use force. We’ve agreed to always stay non-violent, so when you start yelling [and filming] “Give me your badge number” [looks uncomfortable] that backfires. (IP26)

Police

Mirroring wary activists, some officers also adopted hyper-alertness, particularly with cop-watching by bystanders now being the norm, a key insight noted across all observations. “Citizens nowadays seem to prioritize filming incidents over helping,” IP40 claimed. This was not always welcomed: “We are a very free country where [filming] is allowed. There are plenty of countries where [this] leads to very ugly scenes,” IP33 contemplated, “I do not want to promote that we go in that direction, by the way. But it can be difficult, when it's all so easy… When you have all these men standing around […], knowing they are only out for one thing: to catch you out and frame you.” In this respect, officers feared being “doxed” by such recordings. IP37 was quite candid in expressing that, while cases might be rare, the fear remains real: The actual number of colleagues exposed by name in the media isn’t that high. But the feeling of insecurity among colleagues is very high. Just one instance of a colleague's window being smashed can spread fear through the ranks like wildfire. It's like the old days of the neighborhood cop; everyone knew and could find their local officer, but that was a small community. Now, being recognized means being known to 18 million people. So, the impact and risk have magnified significantly.

When probed about the comparability between the impact of doxing on officers and activists’ fear of recognition, IP39 responded with perplexity and forthrightness, highlighting the profound seriousness of these emotions: “What do you mean with a mirror image?! That activists are afraid that the police know who they are? I don’t think that as a citizen you have to be afraid that the police will show up at your door … to beat you up or something,” they stressed. The riot police's undercover unit (“Romeos”) seems the most vulnerable to doxing by, in the officers’ words, “keyboard warriors,” unlike liaison officers (see IP41 below). “Yes, sometimes we pick up from streams, them saying, ‘Hey, hold on, those Romeos over there, we’ve spotted them again,’ and then asking, ‘Who knows who they are?’” (IP24). [Discussing doxing] Within the group I’m involved with, the police network team, fears are non-existent. This is largely due to our distinct function. We are liaisons, in direct contact with action leaders. We stand side-by-side. What do you wanna do? (IP41)

The most visible outcome of this fear is officers resorting to balaclavas for anonymity as a resilience strategy. Some linked the rise of balaclavas to the tactical deployment of face masks during COVID-19 riots, suggesting it may have evolved into the habitual wearing of balaclavas. As IP41 recounted, “during COVID, leadership eventually stepped in, urging us to ditch the face coverings: ‘it looks too aggressive’, they said. Imagine facing a lineup of riot police, all masked; it sends [protestors] a message we’re ready to fight.” This view resonated: You might see officers pulling their balaclavas over their noses, but I’m totally against it. We aim to be a police “in connection”, but you just can’t “connect” hiding behind a balaclava. During COVID, it was simpler; everyone wore face masks, so blending in was easy. But those days are gone. Now we are like: “from the employer's side we’ll help you […], try to protect you against doxing, but … That is not always possible.” (IP36)

Discussion and conclusion

This article delves into surveillance experiences of activists and police during protest policing at the 2023 Extinction Rebellion A12-Blockades in The Hague, the Netherlands, and examines the impact of new surveillance technologies on these experiences, to address calls for more empirical data on surveillance experiences (Schuilenburg, 2024), police-protestor interactions (Douglas, 2023; Jackson, 2020) and AI-assisted chilling effects (Stevens et al., 2023). It found complex experiences beyond the legal interpretation of chilling, encompassing both hyper-transparency and hyper-alertness, evoking parallel emotions in observers and observed, in spite of power imbalances.

This paper, therefore, argues that protest policing experiences are far more complex than the rather legalistic chilling effects the “cat-and-mouse” analogy suggests. This traditional “game-metaphor” (Koskela and Mäkinen, 2016: 1523) implies a limited engagement of surveillance and countersurveillance between police and protestors, confined to the time and space of the demonstration (e.g. Leistert, 2012; Ullrich et al., 2011; Ullrich and Knopp, 2018; Wilson and Serisier, 2010), with the primary risk being the chilling of protesters (Owen, 2017). While corroborating the chilling effect with evidence of surveillance's role in activist self-censorship and mental health deterioration, which reflects studies in different political and regional contexts (Ali, 2016; Murray et al., 2023; Starr et al., 2008; Stephens-Griffin, 2021; Stevens et al., 2023), and showing how “AI imaginaries” intensify these effects, our study also reveals that the surveillance experiences are further-reaching.

The findings specifically show how the effects of protest policing now stretch beyond the specific times and places of demonstrations. The increasing technologization of protest policing (Douglas, 2023; Gillham and Noakes, 2007; Ullrich and Knopp, 2018; Wood, 2015) has led to a blending of the digital and physical worlds, making it unclear where a demonstration actually begins and ends. On the one hand, this hybridization of screens and streets leaves activists uncertain about the extent and duration of the surveillance they are subjected to. Police, on the other hand, contend with extended duties in policing the digital-post-protest, encompassing the wide-ranging digital aftermath. This change in protest policing signifies a shift away from the view of law enforcement's presence at protests as time-bound and physical, creating an elevated hybrid situation characterized by hyper-experiences.

This research demonstrates how this hyper-layer unfolds into two novel and diametrically opposing chilling experiences, hyper-transparency and hyper-alertness: two socio-psychological manifestations of chilling that have not yet been discussed and that permeate into private life, well beyond the confines of weekend protests scheduled from twelve-to-six. It reveals that these constant effects impact activists and officers alike, 24/7, with new resilience strategies (Stephens-Griffin, 2021), indicating that the repercussions extend beyond activists alone, contrary to what is typically assumed. For police, hyper-transparency highlights two aspects: a personal dimension in discussions on transparency (Sandhu and Haggerty, 2019; Horton et al., 2023) and operational limitations that render certain actions impossible. Echoing Marx's (2003: 374) warning that “the rules may not be equally binding on all players,” it is important to acknowledge that these experiences, while reflective of each other, are not necessarily equivalent. Yet, the persistence of these mirrored experiences, precisely in the face of significant power imbalances, highlights limitations of traditional “cat-and-mouse”-views.

What does this imply for the future of protest policing? Most crucially, this dynamic risks becoming self-reinforcing, further deepening societal divisions. When police wear balaclavas due to fears of being identified, for example, protestors’ perception of police as threatening can strengthen, further fueling distrust on both sides with spillover effects into private lives. And, while this ongoing cycle lacks clear causality, our findings allude to important implications for society at large beyond our research focus. Previous studies have already highlighted how (cyber-)security measures to evade surveillance can hinder the long-term success of protest movements (Gunzelmann, 2022; Starr et al., 2008, see also Martin et al., 2023), and in this case feed misperceptions of “eco-terrorism” (Posłuszna, 2015: 5), which risks activists’ stigmatization and alienation, reduced institutional trust, and their eventual withdrawal from public life. Simultaneously, these are tensions that movements must increasingly navigate in their culture: whether to balance or prioritize hyper-alertness or hyper-transparency, and to what extent this influences protest potency.

The negative impact is not limited to activists, however: officers increasingly find themselves viewed as representations of the government's failures or even “enemies” of demonstrators, instead of in their traditional role as facilitators of protest rights and bridge-builders between the two groups (van Benthem et al., 2023). One could even argue this works as a “chilling effect” on the communication-based approach of officers that traditionally defines Dutch policing: fearing they might be unfairly portrayed on social media, officers may be less inclined to use de-escalation tactics with protesters. The perceived involvement of AI in protest policing, even when unfounded, threatens to exacerbate this rift, as it is ripe for misconceptions of omnipresent surveillance, and can thereby ultimately erode wider public trust in core policing principles of proportionality and subsidiarity.

A way forward for protest policing could be to acknowledge that the growing use of digital technologies in protest policing, especially (non-)AI, requires increased transparency. Detailed information about tools, the why/how, and legal basis should be publicly accessible, effectively dispelling fears of “unchecked” police discretion and reinforcing that policing is grounded in democratic principles and parliamentary oversight. Legal frameworks for technology should be developed collaboratively with policymakers, police, civil society, and privacy scholars. While the EU AI Act provides some foundation, its ambiguities permit much police discretion. The Dutch government's roundtable on protest rights (with XRNL, police and mayor present; Parliament, 2023b) is therefore a more promising example, showcasing both democratic resilience and de-escalation. Such democratic formats could be extended to reflections on technology experiences; as our research reveals, the perception of surveillance technology ripples through society, influencing public values far beyond protest rights alone.

Limitations

Acknowledging its case study approach, this study has several limitations. Our search for activists via social media likely biased the sample towards already publicly “transparent” ones (and, while some without social media helped, most remained online-active). Many online activists furthermore declined to participate for privacy reasons, leaving these perspectives unexplored. In addition, the extent to which these findings can be generalized, especially to different legal or political contexts, deserves further reflection. Acknowledging that this study focuses mainly on white, upper-class activists (Hassouni, 2023), future research could examine how intersectionality shapes surveillance experiences. Although this study did not examine demographic differences among protesters, results on hyper-transparency offered initial insights that suggest that incorporating intersectionality can deepen understanding, facilitate comparisons, and benefit future research in other contexts.

Acknowledgements

We are grateful to our interview partners from Extinction Rebellion and the Dutch police for their openness and willingness to share their experiences and perspectives with us and with each other. We owe deep gratitude to our project colleagues and partners for their active support during the field research and for their valuable feedback. This work was made possible by this collaborative learning ecosystem.

Declaration of conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This research was conducted as part of AI-MAPS (Artificial Intelligence—Multistakeholder Public Safety Issues), funded by the Dutch Research Council (NWO, NWA.1332.20.012).