Abstract

Local review platforms like Yelp and Google Maps use systems combining automated and human judgment to delineate the limits of acceptable speech, allowing some reviews to remain public and removing or obscuring others. This article examines the phenomenon of “review bombing,” in which controversial businesses receive an influx of reviews. It uses spatiotemporal analysis of review activity to map the shifting catchment areas of these businesses and to measure what sociologist Richard Ocejo calls the “extraterritoriality” of their “taste communities.” Specifically, this article examines businesses in the United States that are caught up in political controversies, using the locations of their consumer-reviewers on Yelp. The author compiles a test dataset of affected businesses encompassing national and local politics, including the 2016 and 2020 U.S. elections, the #BlackLivesMatter and #MeToo movements, and the COVID-19 pandemic. Two of these are selected for in-depth case studies and spatial analysis: Washington, D.C.-based pizzeria Comet Ping Pong (subject of the #Pizzagate conspiracy theory) and St. Louis-based Pi Pizzeria (caught up in debates about policing and the Black Lives Matter movement). In Comet Ping Pong's case, review bombing resulted in a wider spatial distribution of primarily negative reviewers. Pi shows a much more local pattern, with a fairly even split of supporters and detractors. The contrast illustrates how different political controversies resonate at different scales. The article contrasts Yelp's interventionist approach to content moderation with the relatively laissez-faire attitude of competitors like Google. It then considers the consequences of this form of “algorithmic censorship” for small businesses, communities, and online speech.


Introduction

Millions of people rely on digital location-based services (LBS), including local review and digital mapping platforms, to make decisions about where to spend time and money in cities around the world. This article draws on sociologist Richard Ocejo's research on the extraterritorial nature of “taste communities” brought together at upscale consumption sites like gourmet restaurants, coffee shops, and cocktail bars (Ocejo, 2014), in which “the clientele they serve are different from the communities within which they are embedded” (p. 147). It uses spatiotemporal analysis of Yelp review activity to depict and analyze the shifting catchment areas of local businesses. These catchment areas are measured through the locations of the businesses' reviewers, over time and across review categories (Recommended, Not Recommended, and Removed). In some cases, extraterritoriality changes gradually, for example when a new upscale restaurant draws diners from outside a gentrifying neighborhood. In other cases, outside events rapidly thrust a business into the spotlight. People who may be thousands of kilometers away then attempt to publish their opinions about it, in so-called “review bombing” incidents.

This article considers the change in extraterritoriality for businesses that are caught up in political controversies by using a novel data source. That source is the locations of consumer-reviewers contributing to a business profile page on the popular local review platform Yelp, between the site's founding in 2004 and the time of data collection in April 2021. The analyses below examine the different distributions of Yelp reviewers’ home locations relative to two case study businesses, and to the overall population distribution of the United States. One business involves a political controversy with national reach (Comet Ping Pong), and the other involves one that remained a local issue (Pi Pizzeria). The article also presents the results of statistical tests comparing the distance distributions of different subsets of reviewers. While review bombing is often assumed to be the work of far-flung Internet trolls, the actual spatial pattern of political reviewers can vary significantly from one case to another. In Comet Ping Pong's case, review bombing resulted in a much wider distance distribution of primarily negative reviewers. Pi, by contrast, shows a much more local pattern, with a fairly even split of supporters and detractors, the overwhelming majority of them located in the St. Louis metropolitan area.

Given these vastly different kinds of communities being mobilized in response to political controversy, the article concludes by considering the consequences of Yelp's interventionist approach to moderating political speech in reviews. Moderating political content in reviews can protect small businesses from the very real impacts that review bombing can have on their ratings and business performance, as seen in the Comet Ping Pong case. But this protection comes at the expense of enforcing a narrowly consumerist vision of spatial media. It also forecloses opportunities for free expression online, including for local supporters of businesses like Pi Pizzeria, who legitimately consider aspects of the business beyond the in-person consumer experience to be relevant (Kitchin et al., 2017; Kuehn, 2013; Medeiros, 2019).

Literature review

Local review platforms like Yelp and Google Maps constitute one of the most widely used software interfaces to contemporary cities in the United States and elsewhere. Recent work in different regional contexts has used local review data to identify spatial clusters of foreign and regional Chinese cuisine in restaurants in China (Zhang et al., 2021), build deep learning models of neighborhood descriptions to understand urban development patterns over time in Toronto (Olson et al., 2021), compare the difference in sentiment distribution between Japanese and English-language Yelp reviews in Tokyo (Nakayama and Wan, 2019), and create a synthetic index of places affected by overtourism in the historic center of Venice (Ignaccolo et al., 2023).

Local reviews, like many forms of spatially referenced data, are not merely objective measurements of the underlying quality of a business, but a form of spatial media, which geographer Jim Thatcher argues must always “recursively shape and be shaped by larger processes of social and spatial production” (Thatcher, 2016). Customers who leave online reviews are only a small portion of the total customer base of a business, and local review usage patterns are uneven between and within metropolitan areas in the United States based on socioeconomic status and race (Baginski et al., 2014; Motoyama and Usher, 2020). Research in the Netherlands has also demonstrated a significant metropolitan spatial bias to local reviews compared to government datasets on building use (Arribas-Bel and Bakens, 2019).

The demographic of power users (i.e. Yelp “Elites” and Google “Local Guides”) that contribute the majority of reviews overlaps significantly with the population of new residents in gentrifying neighborhoods (Kuehn, 2016; Payne, 2021). These users are whiter, younger, richer, and more educated than the populations of the areas in which they live, and local review coverage is more comprehensive in denser urban areas (Folch et al., 2018; Hecht and Stephens, 2014; Tarr and Alvarez León, 2019). Despite this demographic bias, local reviews have a major impact on small business performance, with one study showing that a one-star increase in average Yelp rating causes a 5% to 9% increase in revenue for nonchain restaurants (Luca, 2016; see also Anderson and Magruder, 2012; Kuang, 2017), with reviews from Elite users proving especially decisive (Liu and Park, 2015; Park and Nicolau, 2015).

The power that local review platforms wield, like that of many of the digital marketplaces that make up contemporary platform urbanism (Bissell, 2023; Leszczynski, 2020), is intrinsically tied to their ability to be perceived as trustworthy and objective intermediators. To that end, the word “local” in local reviews has a dual nature: both denoting reviews that are spatially referenced, and staking a claim to “local knowledge” associated with values of authenticity and trustworthiness (Burns and Welker, 2022; Payne, 2021; Tarr and Alvarez León, 2019). Unlike local social networks restricted to area residents like Nextdoor (Payne, 2017), however, anyone can write a review on services like Yelp, Google Maps, and TripAdvisor, even about a place they have never patronized.

This article approaches the resulting spatial patterns in local review data from the opposite direction to many researchers working in similar areas. A growing body of scientific literature uses anonymized LBS data to understand human mobility patterns and home locations (see Chen and Poorthuis, 2021). Most of this work, however, focuses on what spatially referenced data can reveal about individuals and groups of people, not about the catchment areas of the businesses they visit.

Background: Yelp

Founded in San Francisco in 2004 by former PayPal employees Jeremy Stoppelman and Russel Simmons (Fost, 2008), Yelp is the leading local review platform in the United States. Yelp's core labor force and audience of unwaged review writers is younger, wealthier, and more educated than the United States as a whole. It also skews white and Asian, and more female-identifying on average (Payne, 2021; Tarr and Alvarez León, 2019; Yue, 2011). The service had an archive of 265 million reviews at the end of 2022, and averaged ∼80 million monthly users in 2023 (Chmura et al., 2023; Sampey, 2024). Competitors like Google and Facebook that offer local review products of their own have more diversified revenue streams. By contrast, 95% of Yelp's ∼$1.2 billion in revenue in 2022 came from advertising (Yelp Inc, 2023a). This creates an imperative to have “brand-safe” content without obscene or low-quality information. In addition, efforts by business owners to pad their own scores with illegitimate reviews threaten consumer trust in the platform (Cardoso et al., 2018; Luca and Zervas, 2016). For both reasons, Yelp takes an explicitly interventionist approach to content moderation.

Yelp's review filter

Yelp heavily promotes the effectiveness of its automated review process, which determines which reviews might be spam and “filters” them. The company's content guidelines include narrow exceptions, added in the wake of the 2020 George Floyd protests, for reports of racist behavior by owners or employees (Yelp Inc, 2020). Outside these exceptions, reviews that depart from this “ideology of consumption” are treated more harshly than those suspected of being fraudulently posted by business owners. Yelp retains the full contents of “Not Recommended” reviews, which are presumed to be low-quality or spam. However, it quarantines them on a page that is relatively hard to find, accessible only via a small link that says “view reviews that are not currently recommended.” It also excludes their ratings from a business’ average. Reviews that violate the company's Terms of Service, on the other hand, are removed, leaving only metadata. These include any review that does not refer to an in-person experience with a business, or that focuses primarily on cultural or political factors. Yelp describes its mission in brand advertising as “review democracy.” Kathleen Kuehn has analyzed how this narrow framing serves as “a form of post-politics that reconfigures democratic participation in economic terms” (Kuehn, 2013).

Yelp's Trust and Safety Report for 2022 (Yelp, 2023) breaks down the 21 million reviews posted to the site that year. Of these, 75% were Recommended (included in rating averages and readily visible) and 19% were Not Recommended (excluded from rating averages and displayed on a secondary page). A further 4% were removed by Yelp's moderators, and 2% were removed by users themselves. The company's review filter is an “algorithm” not in the strict computer science sense. It is closer to Nick Seaver's (2017) definition of a “heterogeneous and diffuse sociotechnical system,” one that relies extensively on human judgment about what counts as political or controversial. Since the filter is intended to counter spam and other inauthentic behavior, Yelp does not publicly reveal the full set of parameters it considers. This opacity has provoked a backlash from businesses that argue it ends up suppressing real reviews, especially for businesses that do not pay for advertising (Burke, 2019; Diakopoulos, 2013; Orsini, 2013). That said, publicly available information shows that the company employs data signals including user behavior, location, friend count, and recency of account creation (Kamerer, 2014; Mukherjee et al., 2013; Yelp Inc., 2010). It also shows that the algorithm's evolution over time can move reviews between categories long after their original posting (Amos et al., 2022).

“Yelp bombing”

While most popular businesses on Yelp have a handful of filtered and removed reviews, the practice of “Yelp bombing” throws the company's moderation practices into starker relief. In Yelp bombing, a large number of people leave reviews in response to a political or media controversy. Like other online forums that rely on user-submitted content, location-based services are vulnerable to coordinated inauthentic behavior. This includes techniques made common on sites like Reddit and 4chan, such as “brigading,” the formation of organized online harassment collectives that target members of marginalized groups (Marwick and Lewis, 2017). The term “Yelp bombing” was coined by marketing blogger Daniel Newman in 2009 by analogy with “Google bombing,” the deliberate manipulation of the Google search algorithm to produce a humorous or political result (Newman, 2009). In October 2003, a number of left-wing netizens led a concerted effort to link the words “miserable failure” to then-President George W. Bush's official White House page until it became Google's top search result for that phrase (Tatum, 2005; see also Gillespie, 2019 on a similar issue involving Pennsylvania Senator Rick Santorum). Perhaps surprisingly, the two initial examples that Newman provides have little to do with politics; instead, they involve typical customer service issues. In one, a couple was arrested at a Pennsylvania restaurant for refusing to pay a mandatory tip (Chang, 2009). In the other, the owner of a New York City restaurant allegedly yelled at his employees (Somaiya, 2009).

The phenomenon of review bombing became more prominent by the mid-2010s through a variety of culture war and partisan political issues, including gay marriage, gun laws, and the Obama presidency (Danovich, 2016; Dellinger, 2018; Dewey, 2015; Forbes, 2014; North, 2011). Psychologist Regina Tuma has argued that Yelp defined itself as the “go-to spot for dining opinions.” As a result, it became the natural digital forum for protest when people perceive restaurant owners or staff as committing or supporting injustices (quoted in McKeever, 2015). One 2014 project by programmers Sam Levigne and Fletcher Bach even took the review bombing concept back into the material realm of protest. They created a Python script that automatically faxed any new Yelp reviews of 12 prisons around the United States to facility staff (Koebler, 2014).

Algorithmic and human moderation

When media attention suddenly leads to unexpected review activity, Yelp staff post an alert that reviews for that business will be subject to manual scrutiny and may be removed (Sollitto, 2016). This feature was introduced as an “Active Cleanup Alert” in 2015 and renamed “Unusual Activity Alert” in 2018, alongside other alerts for suspicious or spammy review activity. Some sites, like Yelp, require users to declare a home location. On these sites, sudden increases in review activity from new users and from people located outside a business’ local area can trigger one of these alerts. The alert locks the profile page and warns users not to post reviews that don’t reflect “firsthand consumer experiences.” In 2022, over 1900 businesses in the United States and Canada had Unusual Activity Alerts (Yelp Inc, 2023b). This was up from 940 in 2021 (Yelp Inc, 2022), 850 in 2020 (Yelp Inc, 2021), and 450 in 2019 (Malik, 2020a), an increase of over 300% in three years. Over the same period, the number of reviews contributed annually on Yelp dropped from 28 million in 2019 to 21 million in 2022, a 25% decrease (Yelp Inc, 2019, 2020, 2023b).
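The percentages above can be checked directly from the reported figures. The sketch below reproduces them and adds a rough normalization that is an illustration only: alerts per million reviews contributed that year. The two series may not cover the same geography (alerts are reported for the United States and Canada), so the normalized rate should be read loosely.

```python
# Figures as reported in the text. "Over 1900" is treated as 1900,
# so the computed growth in alerts is a lower bound.
alerts = {2019: 450, 2022: 1900}          # businesses with Unusual Activity Alerts
reviews_millions = {2019: 28, 2022: 21}   # reviews contributed that year (millions)


def pct_change(old: float, new: float) -> float:
    """Percentage change from `old` to `new`."""
    return (new - old) / old * 100


alert_growth = pct_change(alerts[2019], alerts[2022])
review_change = pct_change(reviews_millions[2019], reviews_millions[2022])

# Rough normalization (illustrative): alerts per million reviews that year.
rate_2019 = alerts[2019] / reviews_millions[2019]
rate_2022 = alerts[2022] / reviews_millions[2022]

print(f"alerts: {alert_growth:+.0f}%")    # alerts: +322%
print(f"reviews: {review_change:+.0f}%")  # reviews: -25%
print(f"alerts per million reviews: {rate_2019:.1f} -> {rate_2022:.1f}")  # 16.1 -> 90.5
```

The roughly 322% rise in alerts, set against a 25% fall in review volume, is what makes the growth in alerts notable.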

In a 2016 blog post describing the company's policy toward “social activism review storms,” Yelp executive Vince Sollitto suggests an alternate venue for users to post political critiques, called Yelp Talk: a series of disconnected message boards for each of Yelp's hundreds of regional communities, unindexed by search engines and barely moderated (Sollitto, 2016). The company claims that Yelp Talk posts are removed when users post “threats, harassment, lewdness, hate speech, or other displays of bigotry,” but many such posts remain visible, given the lack of incentives for Yelp to clean up such marginal areas of the site. Discussing two cases of political controversy in Yelp reviews that occurred in 2018, Ben Medeiros argues that Yelp Talk is “analogous to the often-maligned ‘free speech zones’ in physical protest[s],” moving speech that is meant to be “picketing the virtual storefront” to a sequestered digital space, where it could never have its intended effect (Medeiros, 2019). Sometimes, Yelp staff censor political viewpoints that the company promotes in other forums: writing about a pizzeria whose co-owner had refused to cater same-sex weddings, Amy McKeever notes that “Yelp had itself publicly denounced the Indiana religious freedom law that Memories Pizza supported, and yet here it was flagging and removing user reviews making the same point” (McKeever, 2015).

Political reviews on Google and TripAdvisor

Yelp's interventionist approach to policing political speech is not the only model for local review companies. The company's biggest local review competitor, Google Maps, is much less aggressive in its approach to content moderation. Local reviews on Google Maps are contributed by millions of Local Guides (frequent users) around the world, and the service has steadily gained reviews and importance relative to Yelp over the past decade. Google Maps is the default mapping application on all Android devices. It is also preferred by the majority of Apple iOS users over Apple's own Apple Maps, which displays review data both from Yelp and from its own contributors (Alcántara, 2023).

The case studies below incorporate overall rating trends and selected political reviews from Google Maps. The spatial analysis, however, is currently limited to Yelp, since Google's moderation guidelines are vague and unevenly enforced, and removed reviews are not publicly accessible. Google also does not include user locations in local review metadata. Inferring them requires complex workarounds that are less effective for the kinds of infrequent reviewers who are more likely to contribute political content to local reviews. For example, Rosen and Alvarez León (2023) use the locations of each reviewer’s top 10 most-viewed photos.

Finally, the competing local review site TripAdvisor is primarily aimed at tourists and operates across many countries. Its user profiles do contain location information, but most businesses in the United States have considerably fewer reviews there than on either Yelp or Google. Furthermore, TripAdvisor's moderation strategy combines elements of both Yelp and Google in ways that make it challenging to study political speech. As on Yelp, political reviews are removed (e.g. Comet Ping Pong has no #Pizzagate-inspired negative reviews on TripAdvisor). As on Google, however, no metadata about them is preserved in a publicly accessible location.

Case study selection

While Yelp has put thousands of Unusual Activity Alerts in place since 2015, putting together a comprehensive dataset of businesses targeted by review bombing is not feasible. Only Yelp has an exhaustive list of affected businesses, and there may be other cases that never rose to the level of an alert. To assemble a list of cases to consider for analysis, I searched for news stories containing keywords related to “Yelp bombing,” “review bombing,” or other terms combining “Yelp” with controversial topics, which resulted in a set of 38 businesses affected between 2009 and 2020, primarily in the United States (and one each in Canada and Ireland), on a wide variety of topics and degrees of intensity. The points of controversy ranged from allegedly racist or anti-gay owners to Black Lives Matter protests, Israel/Palestine, gun laws, the #Pizzagate conspiracy theory, and a variety of incidents related to the Obama and Trump presidencies. 1

Table 1, based on data assembled between April and July of 2021, contains the following information for each business: name, type of business, location, controversial issue, year of controversy, and the total number of Yelp reviews, followed by the number and percentage of reviews in each of the three types (recommended, not recommended, and removed; abbreviated to “Rec,” “NR,” and “Rem”). Data bars visualize the percentages of each review type (blue for Recommended, yellow for Not Recommended, and red for Removed), and the table is sorted in descending order by the percentage of Recommended reviews. The percentage of filtered and removed reviews ranged from around 20% (for two New York City controversies not prominently featured in national media) to 99.9% for Manhattan lawyer Aaron Schlossberg, captured on video in 2018 threatening to call immigration authorities after hearing a restaurant worker and customer talking to each other in Spanish.

Prominent targets of Yelp bombing from 2009 to 2020 (case study businesses highlighted).

I then used several screening criteria to select two case studies. I used a starting date of 2015, since by this time every major city in the United States had a significant roster of Yelp reviews and paid Yelp staff moderators monitoring local review activity (Payne, 2021). I also limited the case study pool to issues of broad national importance. This eliminated places like the Boca Raton Resort and Club, targeted for kicking out a YouTube prank star (Matyszczyk, 2018). To better compare spatial patterns, I required at least 100 reviews in each of the Recommended and Removed categories. I also limited my case studies to businesses in the United States, the country where Yelp was founded and where it remains most relevant and widely used. This ruled out a business in Vancouver whose staff ejected a customer wearing a red MAGA hat (“Make America Great Again,” the campaign slogan of former president Donald Trump) in 2018. Since Yelp is less profitable in Canada, the company's moderation efforts are less extensive there. This is reflected in the Vancouver business having the second-highest “Not Recommended” review percentage in Table 1. The business with the highest Not Recommended rate was Human Village Brewing in Pitman, NJ, at 87.7%. Its local controversy concerned hosting an event with “alt-right” political personalities. Given the brewery's relatively remote location in the South Jersey suburbs of Philadelphia, that controversy was likely never assigned to Yelp staff for moderation, which would explain the high rate.
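The screening step amounts to a simple filter over the candidate list. The sketch below assumes a hypothetical record layout. The field names are illustrative, the "national importance" flag is a researcher's manual coding, and the 100-review threshold is read as "at least 100" in each of the two categories. It is a sketch of the criteria as described, not the author's actual tooling.

```python
from dataclasses import dataclass


@dataclass
class Candidate:
    """One row of the candidate list (hypothetical field names)."""
    name: str
    country: str
    year: int              # year of the controversy
    national_issue: bool   # coded manually by the researcher
    recommended: int       # count of Recommended reviews
    removed: int           # count of Removed reviews


def passes_screen(c: Candidate, start_year: int = 2015, min_reviews: int = 100) -> bool:
    """Apply the screening criteria described in the text."""
    return (
        c.year >= start_year             # controversy in 2015 or later
        and c.national_issue             # issue of broad national importance
        and c.country == "US"            # United States only
        and c.recommended >= min_reviews
        and c.removed >= min_reviews
    )


# Comet Ping Pong, using the April 2021 counts reported in the case study.
comet = Candidate("Comet Ping Pong", "US", 2016, True, recommended=836, removed=283)
print(passes_screen(comet))  # True
```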

Two potential test cases were eliminated due to methodological challenges. The first is the Red Hen in Lexington, VA, which received a firestorm of reviews after President Trump's then-Press Secretary Sarah Huckabee Sanders was denied service there. Several unrelated restaurants with the same name elsewhere were also targeted. The Red Hen would otherwise be a suitable test case (Matsakis, 2018). Unfortunately, the Yelp website crashes when trying to load all of the Red Hen's more than 37,000 Not Recommended and Removed reviews. The Internet Archive's Wayback Machine does not archive full Yelp review data either, only business profile pages. The second is Reem's, a group of restaurants serving “Arab street food” in the San Francisco Bay Area, which would be another interesting case study given more space. It is complicated, however, by having multiple locations and by being the subject of several different controversies, which could be challenging to disentangle. These concern both the Israel–Palestine conflict (Dalton, 2017) and attitudes toward policing in American cities (Lin, 2023).

These steps left two restaurants with around 1200 reviews each: Comet Ping Pong in Washington, D.C., and Pi Pizzeria in St. Louis. Both had significant political controversies, and both had review compositions that allow for comparison of spatial patterns within Recommended and Removed reviews. Coincidentally, both happen to be gourmet pizzerias. Yet their politically charged reviews differ in spatial distribution and relative impact, and this difference illustrates how the moderation of speech on local review platforms can have real consequences for businesses and communities.

Methodology

I collected information on all Yelp reviews for each of the two case study businesses, including metadata that is public on Yelp's site but is not included in their API (Application Programming Interface) like the listed location of each reviewer. Not Recommended and Removed reviews are also not included in the Yelp API, and while less information is available for these reviews (Removed reviews do not display their contents), all of them include metadata like date, username, star rating, and user location. Duplicate reviews were somewhat common for Not Recommended and Removed reviews; I merged these by using date, rating, and username. Next, I put each user location through the ESRI World Geocoding service using a Python API to generate a latitude-longitude pair for that location, and compared each coordinate pair to the location of the corresponding business to get a Euclidean (as the crow flies) distance between the two points in kilometers. These distances, grouped by review type (Recommended, Not Recommended, and Removed) and star rating, are the basis for the density plots below.
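The deduplication and distance steps can be sketched as follows. Two assumptions are labeled here. First, the `geocode` argument is a hypothetical stand-in for the ESRI World Geocoding call: any callable that maps a location string to a `(lat, lon)` tuple, or to `None` when it fails. Second, the text describes the distance as Euclidean. For latitude-longitude pairs, however, the usual "as the crow flies" measure is the great-circle distance, so this sketch swaps in the haversine formula. A true Euclidean distance would require projecting the coordinates first.

```python
import math
from typing import Callable, Iterable, Optional, Tuple

EARTH_RADIUS_KM = 6371.0088  # mean Earth radius

LatLon = Tuple[float, float]


def dedupe(reviews: Iterable[dict]) -> list:
    """Merge duplicate reviews sharing date, rating, and username (keep first seen)."""
    seen = set()
    unique = []
    for r in reviews:
        key = (r["date"], r["rating"], r["username"])
        if key not in seen:
            seen.add(key)
            unique.append(r)
    return unique


def haversine_km(lat1: float, lon1: float, lat2: float, lon2: float) -> float:
    """Great-circle distance in kilometers between two lat/lon points."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = phi2 - phi1
    dlam = math.radians(lon2 - lon1)
    a = math.sin(dphi / 2) ** 2 + math.cos(phi1) * math.cos(phi2) * math.sin(dlam / 2) ** 2
    return 2 * EARTH_RADIUS_KM * math.asin(math.sqrt(a))


def reviewer_distances(
    reviews: Iterable[dict],
    geocode: Callable[[str], Optional[LatLon]],  # hypothetical geocoder stand-in
    business_latlon: LatLon,
) -> list:
    """Attach distance_km to each deduplicated review whose location geocodes."""
    out = []
    for r in dedupe(reviews):
        point = geocode(r["location"])
        if point is None:  # skip locations the geocoder could not resolve
            continue
        out.append({**r, "distance_km": haversine_km(*point, *business_latlon)})
    return out
```

The resulting `distance_km` values, grouped by review type and star rating, are the kind of input a density plot of reviewer distances would take.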

I used a cutoff time of April 2021 for reviews to adequately capture the effects of each controversy. One controversy occurred in 2016 and the other in 2020, and by early 2021 neither business was receiving regular media attention for these incidents. Also, the more time passes after a controversy, the more likely it is that users delete their accounts or that Yelp moves reviews between categories. Researchers have shown that such moves can happen even in the absence of significant new review activity (Amos et al., 2022).

There are several potential methodological limitations worth mentioning. The first is the possibility that the self-reported locations of Yelp users are inaccurate, giving a false sense of reliability to the available spatial data (see Dalton and Thatcher, 2015 on “inflated granularity”). This could be the case for several reasons. Users could have deliberately chosen imprecise locations (e.g. “New York City” instead of “Highland Park, New Jersey”) or false ones to mask their identity. They could also have moved between the time they set up their Yelp profile and the time they wrote a review, or the time I accessed it. Yelp review contents and posting dates cannot be changed without leaving metadata traces. By contrast, aspects of a user's profile like their name, location, and counts of reviews and friends reflect only their current state at the time the page is accessed.

That said, unrecorded long-distance moves are not likely to have a large effect on the spatial pattern of Yelp reviewers. According to the U.S. Census Bureau, in 2021 only 5.7% of Americans moved to a different county, and only 2.4% to a different state (Palarino et al., 2023). For the two case study businesses, these out-of-state moves would include regional ones. Examples are moves between St. Louis, Missouri, and East St. Louis, Illinois, or between Washington, D.C., and Virginia or Maryland. Yelp users may be more likely to move given their youth and higher education levels. Even so, overall turnover within a few years is unlikely to be high enough to drastically affect this data. Also, these case studies examine the change in reviewer location patterns before and after political controversies. There is no a priori reason to assume that mislocation issues would affect one set of reviews more than another. The likelihood that they meaningfully obscure the spatial patterns of customer-reviewers therefore seems low.
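A rough sense of cumulative turnover can be had by compounding the annual rates above. This rests on two simplifying assumptions that are not from the source. First, the 2021 rates are assumed to hold in every year. Second, moves are treated as independent across years. Horizons of one and five years roughly correspond to Pi (2020) and Comet (2016) relative to the April 2021 cutoff.

```python
def share_moved(annual_rate: float, years: int) -> float:
    """Share expected to have moved at least once over `years`, assuming a
    constant annual rate and independence across years (simplifications)."""
    return 1 - (1 - annual_rate) ** years


# 2021 Census rates reported in the text.
for label, rate in [("different county", 0.057), ("different state", 0.024)]:
    for years in (1, 5):
        print(f"{label}, {years} yr: {share_moved(rate, years):.1%}")
# different county, 1 yr: 5.7%
# different county, 5 yr: 25.4%
# different state, 1 yr: 2.4%
# different state, 5 yr: 11.4%
```

Some people move repeatedly, so the independence assumption likely overstates the share of distinct reviewers who relocated. In addition, many cross-state moves, such as D.C. to Virginia or Maryland, stay within the same metropolitan area.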

Case study 1: Comet Ping Pong (Washington, D.C.)

Comet Ping Pong is an upscale pizzeria and music venue in the Chevy Chase neighborhood of Washington, D.C. The restaurant opened in 2006, and for the first decade of its operation was primarily known for its thin-crust pies (Guy Fieri featured Comet on his TV show Diners, Drive-Ins and Dives in 2010), ping-pong tables, and occasional concerts. Just before the 2016 U.S. presidential election, WikiLeaks released Hillary Clinton advisor John Podesta's hacked emails, which included reference to pizza at Comet Ping Pong that Internet conspiracy theorists interpreted as revealing a child sex trafficking ring operating from the restaurant's (nonexistent) basement (Sommer, 2016). The ensuing #Pizzagate conspiracy, which continues to circulate among some right-wing online networks (Harwell, 2023), inspired one man to drive from North Carolina to the restaurant and fire an assault rifle before police were able to convince him to stand down (Kang and Goldman, 2016).

This was the most dramatic real-world spillover of the online-first conspiracy theory. But as Pizzagate spread through right-wing misinformation networks online, Comet's owner James Alefantis saw the consequences immediately in abusive social media messages and online reviews. Yelp placed an Unusual Activity Alert on Comet's page and temporarily blocked new reviews there. It also moved conspiratorial reviews to the Removed page (Kang, 2016). As of April 2021, Comet had a total of 1242 Yelp reviews: 836 Recommended (67% of the total), 123 Not Recommended (10%), and 283 Removed (23%).
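The percentages follow directly from the counts. The small helper below is illustrative and is not taken from the article. It computes a business's review-type shares, the same quantity that Table 1 visualizes with data bars.

```python
def review_type_shares(counts: dict) -> dict:
    """Share of a business's total reviews falling in each review type."""
    total = sum(counts.values())
    return {review_type: n / total for review_type, n in counts.items()}


# Comet Ping Pong's Yelp counts as of April 2021, as reported above.
comet = {"Recommended": 836, "Not Recommended": 123, "Removed": 283}

for review_type, share in review_type_shares(comet).items():
    print(f"{review_type}: {share:.0%}")
# Recommended: 67%
# Not Recommended: 10%
# Removed: 23%
```

Table 1's ordering could be reproduced by applying the same helper to each business's counts and sorting in descending order of the Recommended share.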

Comet has four Recommended reviews that mention Pizzagate, but they all consist of people referring to the publicity the conspiracy theory brought to the restaurant (one review starts with “I’m that guy who wanted to go because of #Pizzagate”), not the allegations themselves, and they all also contain fairly plausible consumer-framed reviews of the business. Some more recent Pizzagate reviews have snuck past the Yelp moderators, if not their automated filter; one Not Recommended review by Alejandro G. in 2020 says: “Creepy pedophiles run this place. Their Instagram is full of child pornography and they host speakers and bands who love to talk about their pedophilia.”

One user exercised considerable creativity in attempting to get around Yelp moderation policy by selecting “Johnpodesta P.” as their Yelp username, though their review was still removed.

Comet's review activity on Google Maps is significantly more chaotic. Apart from what is presumably an automated profanity blocker, there is no visible effort to moderate its 1214 total reviews. The one-star reviews in particular include dozens referencing Pizzagate, almost as many as the one-star reviews about the food or service. It is impossible to tell whether these reviews represent sincere belief or ironic “shitposting” (Woods, 2023), but their effect on Comet's average review score is real. As a result of these reviews, the pizzeria's average rating on Google is only 3.9, putting Comet behind dozens of other nearby pizzerias. The average restaurant rating on Google is 4.2 (Li and Hecht, 2021), compared to 3.7 on Yelp (Bialik, 2018). A 3.5 out of 5 on Yelp, about 95% of the average Yelp restaurant rating, would therefore be equivalent to roughly 4.0 on Google. If the Pizzagate-themed Google reviews were all deleted, Comet's Google score would likely be higher than its current 3.9. Given the rising popularity of Google Maps reviews, this lack of moderation is likely costing Comet revenue. Users can narrow down their search with two rating filters: 4.5 and higher, which comparatively few businesses achieve, and 4.0 and higher, which is much more common. A 3.9 on Google misses the cutoff for both.
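The equivalence above rescales a Yelp rating by its ratio to the Yelp restaurant average, then applies that ratio to the Google average. The proportional rescaling is a simplifying assumption. Rating distributions on the two platforms need not be proportional.

```python
YELP_MEAN = 3.7    # average Yelp restaurant rating (Bialik, 2018)
GOOGLE_MEAN = 4.2  # average Google restaurant rating (Li and Hecht, 2021)


def yelp_to_google(yelp_rating: float) -> float:
    """Proportionally rescale a Yelp rating to the Google scale (assumption)."""
    return yelp_rating / YELP_MEAN * GOOGLE_MEAN


ratio = 3.5 / YELP_MEAN
print(f"{ratio:.1%} of the Yelp average")               # 94.6% of the Yelp average
print(f"Google equivalent: {yelp_to_google(3.5):.1f}")  # Google equivalent: 4.0 (3.97 unrounded)
```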

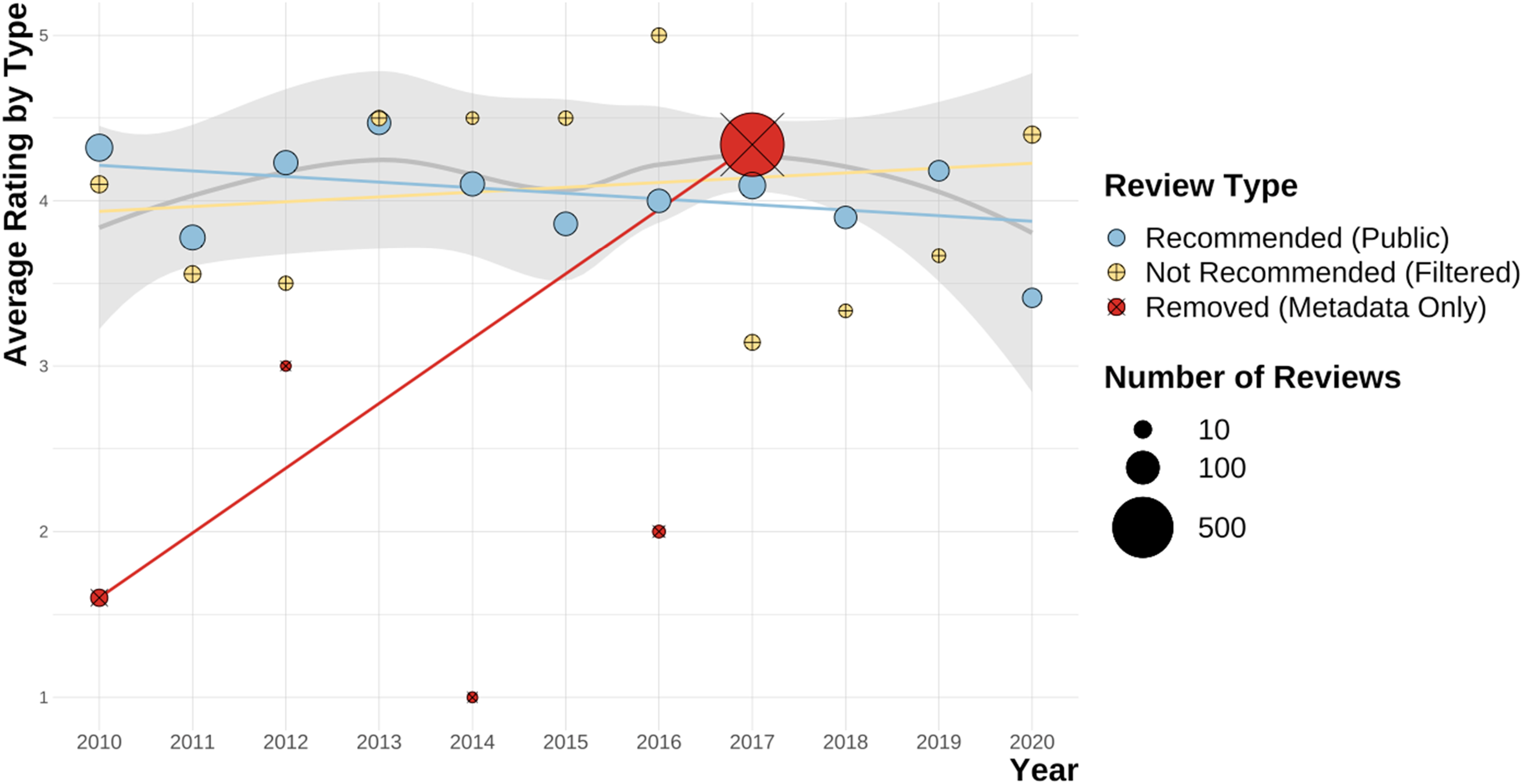

Figure 1, which shows the annual average rating by review type for Comet Ping Pong, gives a sense of how bad things could have gotten for the pizzeria if Yelp had not been actively moderating reviews. The restaurant's mean rating across all reviews, including Not Recommended and Removed, would be 3.13, which would round down to 3 stars in Yelp's interface, instead of its current 3.5 stars (see Luca, 2016 for the revenue implications of lower Yelp ratings). The gray line is the local (loess) regression for all reviews, weighted by the number of reviews, and the three linear regression lines, one for each review type, omit years with fewer than five reviews in a category.

Comet Ping Pong: annual total and average rating of Yelp reviews by type.
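Two computations described in the Figure 1 discussion, rounding a mean rating to a half-star display and fitting a per-type linear trend that skips sparse years, can be sketched in Python as follows. The round-to-nearest-half-star rule, fitting the trend to annual means rather than to individual reviews, and the toy data are all assumptions; the loess curve is not reproduced here.

```python
import math
from collections import defaultdict


def to_half_stars(mean_rating: float) -> float:
    """Round a mean rating to the nearest half star (assumed display rule)."""
    return math.floor(mean_rating * 2 + 0.5) / 2


def annual_trend(reviews, min_reviews=5):
    """Fit rating ~ year by ordinary least squares on annual mean ratings,
    dropping years with fewer than `min_reviews` reviews.

    `reviews` is an iterable of (year, stars) pairs for one review type.
    Returns (slope, intercept), or None if fewer than two years remain.
    """
    by_year = defaultdict(list)
    for year, stars in reviews:
        by_year[year].append(stars)
    points = [(y, sum(s) / len(s)) for y, s in sorted(by_year.items())
              if len(s) >= min_reviews]
    if len(points) < 2:
        return None
    n = len(points)
    mean_x = sum(x for x, _ in points) / n
    mean_y = sum(y for _, y in points) / n
    sxx = sum((x - mean_x) ** 2 for x, _ in points)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in points)
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


# Hypothetical toy data for one review type; 2018 has only two reviews.
toy = [(2015, 4)] * 5 + [(2016, 3)] * 5 + [(2017, 2)] * 5 + [(2018, 5)] * 2
print(to_half_stars(3.13))  # 3.0
print(annual_trend(toy))    # (-1.0, 2019.0); 2018 is dropped
```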

Given that there is a significant “Pizzagate effect” on Comet Ping Pong's local reviews, one that Yelp has mostly attenuated through moderation, a logical next question would be to what extent these conspiratorial reviews are local, or whether they have primarily been contributed by people in other areas who had heard of the conspiracy theory and made their opinions known online. Put differently, is there an observable difference in the extraterritoriality of Comet's reviewers as a result of this political controversy?

As a first attempt to answer this question, Figure 2(a) aggregates Comet's 836 Recommended Yelp reviews by the location of the reviewer at the Core-Based Statistical Area (CBSA) level, labeling CBSAs with over 10 unique reviews. The vast majority of these reviews come from the Washington-Arlington-Alexandria CBSA, followed by other CBSAs along the Northeast Corridor, Chicago-Naperville-Elgin, and the two primary CBSAs in California (Los Angeles-Long Beach-Anaheim and San Francisco-Oakland-Hayward). This distribution reflects a balance among several tendencies: the expected hyperlocal skew of a restaurant, Yelp's metropolitan skew (particularly concentrated on both coasts) toward highly educated and wealthier users, and the way that frequent reviewers employ distant travel destinations as what Rosen and Alvarez León call “signaling hinterlands,” demonstrating their worldliness through curation of their online personas (Rosen and Alvarez León, 2023).

Comet Ping Pong: recommended Yelp reviews (a) and removed one-star Yelp reviews (b) from 2006 to 2020, mapped and shaded by Core-Based Statistical Area (CBSA).
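Aggregating reviews by reviewer CBSA and choosing which CBSAs to label, as in Figure 2, reduces to a count and a threshold. The Python sketch below assumes each review has already been assigned a CBSA name (for example, by geocoding the reviewer's profile location against Census CBSA delineations, a step not shown); the helper `cbsa_counts` and the toy data are hypothetical.

```python
from collections import Counter
from typing import Iterable, Optional


def cbsa_counts(reviewer_cbsas: Iterable[Optional[str]], label_threshold: int = 10):
    """Count reviews per CBSA and return the CBSAs with more than
    `label_threshold` reviews, which would be labeled on the map.

    Reviews whose location could not be matched to a CBSA are passed as None
    and skipped.
    """
    counts = Counter(c for c in reviewer_cbsas if c is not None)
    labeled = {cbsa: n for cbsa, n in counts.most_common() if n > label_threshold}
    return counts, labeled


# Hypothetical toy input: one CBSA name (or None) per review.
toy = (["Washington-Arlington-Alexandria"] * 12
       + ["Chicago-Naperville-Elgin"] * 10
       + ["Los Angeles-Long Beach-Anaheim"] * 11
       + [None] * 3)
counts, labeled = cbsa_counts(toy)
print(labeled)
```

Because the threshold is strict (“over 10”), a CBSA with exactly 10 reviews is counted but not labeled.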

By contrast, Figure 2(b) applies the same process to the restaurant's 229 Removed Yelp reviews with 1-star ratings. While some one-star reviews could have been removed for other reasons, only one of these reviews was posted before November 2016, when the Pizzagate conspiracy theory spread, and 143 were posted within three months of that point, so it is reasonable to assume that the majority of the Removed one-star reviews were removed for expressing sympathy with the Pizzagate narrative and attempting to damage Comet's business. As such, this map shows the spatial distribution of conspiracy-minded Yelp reviewers as a proxy for Pizzagate sympathizers in the broader population, filtered through the demographic and psychographic biases that lead people to contribute reviews. Much of this pattern follows overall population density, but there is a distinct Southwestern bias to the distribution, especially compared to Figure 2(a); the Los Angeles-Long Beach-Anaheim CBSA actually has more Removed reviews (21) than Recommended ones (18).
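The timing argument above amounts to counting removed reviews before a cutoff date and within a window after it. A minimal Python sketch follows; dating the controversy to 1 November 2016 and approximating “three months” as 90 days are assumptions, and the helper `timing_summary` and toy dates are hypothetical.

```python
from datetime import date, timedelta
from typing import Iterable

# Assumptions for illustration: the controversy is dated to 1 November 2016,
# and "within three months" is approximated as a 90-day window.
EVENT_START = date(2016, 11, 1)
WINDOW = timedelta(days=90)


def timing_summary(review_dates: Iterable[date]):
    """Return (posted before the event, posted within the window, total)."""
    dates = list(review_dates)
    before = sum(d < EVENT_START for d in dates)
    within = sum(EVENT_START <= d < EVENT_START + WINDOW for d in dates)
    return before, within, len(dates)


# Hypothetical posting dates for removed one-star reviews.
toy = [date(2015, 6, 1), date(2016, 12, 4), date(2017, 1, 10), date(2018, 3, 3)]
print(timing_summary(toy))  # (1, 2, 4)
```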

One problem with analyzing the maps in Figure 2 is that meaningful differences in spatial patterns can be quite difficult to discern visually, both because of the underlying spatial structure of the United States’ population distribution and because the expected distance distribution for restaurant patrons is tightly focused on the immediate area, with a “long tail” of reviews from more distant visitors (MacEachern and Taylor, 2013; Payne and McGlynn, 2024). By using a kernel density estimation (KDE) function in the

Comet Ping Pong: distance distribution of recommended and removed Yelp reviews.

In this case, the distance distribution of the 836 Recommended reviews follows a fairly predictable spatial pattern, in which the vast majority of reviews are extremely local, with a secondary peak between 3500 and 4000 km away, a range that corresponds to the West Coast of the United States (note how the U.S. population distribution also has a secondary spike here). The 283 Removed reviews, on the other hand, are most concentrated in this West Coast distance band: 90 removed reviews (32% of the total) come from Yelp users in California, which contained 12% of the population of the United States in 2016, according to the U.S. Census Bureau. In addition to being the home of Yelp, California is significant here as the country's most populous state; a pattern with many reviewers from the state is consistent with increased interest in Comet across the entire country, which can also be seen in the consistent density across shorter distances.
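Distance distributions like those in Figure 3 can be built by computing each reviewer's great-circle distance to the restaurant and smoothing the result with a kernel density estimate. The Python sketch below is not the author's code: it uses the haversine formula, a Gaussian kernel with Silverman's rule-of-thumb bandwidth, approximate coordinates assumed for Comet Ping Pong, and hypothetical reviewer locations.

```python
import math
from statistics import stdev
from typing import Callable, Sequence, Tuple

EARTH_RADIUS_KM = 6371.0088  # mean Earth radius

# Approximate coordinates assumed for Comet Ping Pong (Connecticut Ave NW, Washington, D.C.).
COMET = (38.955, -77.070)


def haversine_km(a: Tuple[float, float], b: Tuple[float, float]) -> float:
    """Great-circle distance in km between two (lat, lon) points in degrees."""
    lat1, lon1, lat2, lon2 = map(math.radians, (*a, *b))
    h = (math.sin((lat2 - lat1) / 2) ** 2
         + math.cos(lat1) * math.cos(lat2) * math.sin((lon2 - lon1) / 2) ** 2)
    return 2 * EARTH_RADIUS_KM * math.asin(math.sqrt(h))


def gaussian_kde(samples: Sequence[float]) -> Callable[[float], float]:
    """1-D Gaussian kernel density estimate with Silverman's rule-of-thumb
    bandwidth h = 1.06 * sd * n**(-1/5). Needs at least two distinct samples."""
    n = len(samples)
    h = 1.06 * stdev(samples) * n ** (-1 / 5)
    norm = 1 / (n * h * math.sqrt(2 * math.pi))

    def density(x: float) -> float:
        # Sum of Gaussian bumps centered on each sample, normalized to integrate to 1.
        return norm * sum(math.exp(-0.5 * ((x - s) / h) ** 2) for s in samples)

    return density


# Hypothetical reviewer locations: two near D.C., one in Los Angeles, one in San Francisco.
reviewers = [(38.90, -77.03), (38.98, -77.10), (34.05, -118.24), (37.77, -122.42)]
distances = [haversine_km(COMET, r) for r in reviewers]
density = gaussian_kde(distances)
```

With these coordinates the Los Angeles reviewer falls roughly 3,700 km from the restaurant, inside the West Coast band discussed above. In practice, `scipy.stats.gaussian_kde` offers a vectorized alternative with its own default bandwidth rule.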

Selecting the one-star (n = 229) and five-star (n = 24) reviews from the 283 removed reviews and plotting kernel density functions for their distance distributions shows that the negative removed reviews are concentrated farther away (see Figure 4), specifically on the West Coast of the United States (around 3700 km from Washington, D.C.), while the five-star removed reviews are somewhat more evenly distributed. A two-sample Kolmogorov-Smirnov test on these two distributions yields a p-value of 0.008 (significant at the 0.01 level), indicating that it is very unlikely that these two samples come from the same underlying distribution.

Comet Ping Pong: distance distribution of removed 1- and 5-star Yelp reviews.
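The two-sample Kolmogorov-Smirnov test used for Figure 4 compares the largest gap between two empirical cumulative distribution functions. The Python sketch below computes that statistic and an asymptotic p-value using the common series approximation to the Kolmogorov distribution with a small-sample correction; it is an illustration with toy data, not the author's implementation, and it will not reproduce the reported p-values exactly. In practice, `scipy.stats.ks_2samp`, which can compute exact p-values for small samples, would be the usual choice.

```python
import math
from bisect import bisect_right
from typing import Sequence


def ks_2samp_stat(a: Sequence[float], b: Sequence[float]) -> float:
    """Largest absolute difference between the two empirical CDFs."""
    a, b = sorted(a), sorted(b)
    d = 0.0
    for x in a + b:
        fa = bisect_right(a, x) / len(a)  # share of sample a that is <= x
        fb = bisect_right(b, x) / len(b)  # share of sample b that is <= x
        d = max(d, abs(fa - fb))
    return d


def ks_pvalue_asymptotic(d: float, n1: int, n2: int, terms: int = 100) -> float:
    """Approximate two-sided p-value from the Kolmogorov distribution series."""
    ne = n1 * n2 / (n1 + n2)  # effective sample size
    lam = (math.sqrt(ne) + 0.12 + 0.11 / math.sqrt(ne)) * d
    if lam < 0.2:  # the series converges poorly here, and the p-value is essentially 1
        return 1.0
    total = sum(2 * (-1) ** (j - 1) * math.exp(-2 * j * j * lam * lam)
                for j in range(1, terms + 1))
    return min(max(total, 0.0), 1.0)


# Hypothetical toy distance samples (km): one clearly shifted relative to the other.
near = list(range(50))
far = list(range(40, 90))
d = ks_2samp_stat(near, far)
p = ks_pvalue_asymptotic(d, len(near), len(far))
```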

In all, there were 229 removed 1-star reviews, only one of which predates the Pizzagate conspiracy theory. By contrast, there were only 24 removed reviews with 5-star ratings, mostly from D.C., with a scattering of cases across the country and one in Mexico City. So, to the extent that there was a pro-Comet backlash to Pizzagate, it does not seem to have played out as review bombing, though, as the several Yelp reviewers who mention Pizzagate in their reviews attest, it did seem to attract some new in-person customers to the pizzeria. Comet Ping Pong follows the intuitively expected postcontroversy pattern of increased interest from distant reviewers, but this is not the only way that review bombing can play out spatially.

Case study 2: Pi Pizzeria (Saint Louis, Missouri)

The second case study business is Pi Pizzeria, in St. Louis’ Central West End neighborhood, a hotspot for gentrification since the 1990s due to its intermediate position between the mostly poor and Black neighborhoods north of Delmar Boulevard and the richer and whiter western neighborhoods of the city (Swanstrom and Plöger, 2022). After working in the technology industry in San Francisco in the early 2000s, founder Chris Sommers bought a Chicago-style deep dish recipe from Bay Area pizzeria Little Star and took it back to the Midwest in 2008 with the opening of Pi in the Central West End. Now a local chain, Pi had its first brush with politics early on; while on a campaign stop in Missouri, then-Senator Barack Obama tried and loved Pi, and Sommers was later invited to serve his pizza inside the White House (Wagman, 2009). This early episode came before Yelp became popular in St. Louis; the company only hired a paid staffer to oversee local reviewers in 2010 (Payne, 2021). A different pizzeria, Big Apple Pizza in Ft. Pierce, FL (see Table 1), was the subject of a review bombing campaign after the owner hugged President Obama in 2012 (Danovich, 2016).

In September 2017, Sommers was handing out water during the protests that followed the acquittal of St. Louis police officer Jason Stockley, who had been charged with murdering Anthony Lamar Smith, a Black man, in 2011; Sommers and his staff were tear-gassed and shot with pepper pellets (Sommers, 2017). The experience profoundly affected Sommers, who was hardly a stereotypical “defund the police” supporter. As Sommers points out, uniformed cops got free or half-price meals at Pi, and the business donated regularly to law enforcement organizations (Castrodale, 2017). This record of support did not matter. Sommers’ blog posts and interviews with local media about his experience led outraged law enforcement supporters to call the business and yell at staff, place large fake orders, and leave negative reviews online, primarily on Yelp (Kloepple, 2017).

This on- and offline harassment was encouraged by a blog post by Blue Lives Matter (a pro-police group that emerged in 2014 as a direct response to Black Lives Matter) that was reposted on Facebook and later deleted by the St. Louis County Police Association (Reilly, 2017). The review bombing that ensued is clear in the restaurant's Yelp review data (see Figure 5), but so is the subsequent defense of the business by its political supporters, particularly in the St. Louis area. As of April 2021, Pi Pizzeria had a total of 1119 Yelp reviews: 467 Recommended (42% of the total), 64 Not Recommended (6%), and 588 Removed (52%). Perhaps surprisingly, in contrast to the very negative skew of Comet Ping Pong's Removed reviews, the average rating of Pi's removed reviews is over four stars, very close to the restaurant's average rating among recommended reviews. This high average arises because, while there are 98 removed 1-star reviews, there are also 470 removed 5-star reviews.

Pi Pizzeria: annual total and average rating of Yelp reviews by type.
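The claim that Pi's removed reviews average over four stars can be checked from the counts given in the text alone. In the Python sketch below, the 20 removed reviews that are neither one- nor five-star are not broken down in the text, so the code bounds the average by assuming they are all two-star or all four-star; the helper name `mean_rating` is hypothetical.

```python
def mean_rating(star_counts: dict) -> float:
    """Mean star rating from a {stars: number_of_reviews} mapping."""
    total = sum(star_counts.values())
    return sum(stars * n for stars, n in star_counts.items()) / total


REMOVED_TOTAL = 588
extremes = {1: 98, 5: 470}                      # counts reported in the text
other = REMOVED_TOTAL - sum(extremes.values())  # 20 reviews rated 2 to 4 stars

extremes_only = mean_rating(extremes)           # 1- and 5-star reviews only
lower = mean_rating({**extremes, 2: other})     # if all 20 others are 2-star
upper = mean_rating({**extremes, 4: other})     # if all 20 others are 4-star
print(round(extremes_only, 2), round(lower, 2), round(upper, 2))  # 4.31 4.23 4.3
```

Whatever the ratings of the remaining 20 reviews, the removed-review average stays above 4.2 stars.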

Yelp's moderation of political posts has been fairly thorough on Pi's profile page. No recommended reviews contain the terms “cop,” “LEO” (law enforcement officer), “blm” (Black Lives Matter, a decentralized political movement in the United States since 2013 that aims to combat racial discrimination and police violence against Black people), “BLM,” or “protests.” There is one recommended review each for “police” and “protest.” Both are five-star reviews posted by established Yelpers who express support for restaurant staff against the backlash from police advocates, but who also mention in-person customer experiences, just like the Comet reviews alluding to Pizzagate. One of the Not Recommended reviews, from user Steve H. in 2020, includes an extended political diatribe, comparing Pi staff to members of “antifa” (short for “anti-fascist,” a loose array of anarchist-inspired protestors regularly seen at political demonstrations) and residents of famously left-leaning Portland, Oregon: “StL's second home of cancel culture. Left Bank Books across the street is the headquarters. You shouldn’t eat with these people or shop in their neighborhood. They hate diversity of opinion and really detest white people and cops, it's very obvious. Stay away. It's like a mini-Portland Antifa wannabe.”
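Counts like these depend on how terms are matched: listing “blm” and “BLM” separately suggests a case-sensitive search, and reporting different counts for “protest” and “protests” suggests whole-word matching. The Python sketch below assumes both (the article does not say how the search was run); `count_reviews_with_term` and the toy reviews are hypothetical.

```python
import re
from typing import Dict, Iterable, List

TERMS = ["cop", "LEO", "blm", "BLM", "protests", "police", "protest"]


def count_reviews_with_term(reviews: Iterable[str], terms: List[str] = TERMS) -> Dict[str, int]:
    """Count reviews containing each term as a whole word, case-sensitively.

    `\\b` word boundaries keep "cop" from matching "copy" and "protest" from
    matching "protests"; no re.IGNORECASE flag is used, so "Leo" is not "LEO".
    """
    texts = list(reviews)
    counts = {}
    for term in terms:
        pattern = re.compile(rf"\b{re.escape(term)}\b")
        counts[term] = sum(1 for text in texts if pattern.search(text))
    return counts


# Hypothetical toy reviews.
toy = [
    "Great pizza, friendly staff.",
    "Stood with the staff during the protest. Also the crust is great.",
    "The police came by; LEO discount",
    "Leo was our server",
]
print(count_reviews_with_term(toy))
```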

On Google Maps, by comparison, Pi's flagship Central West End location had 1126 total reviews, with an average rating of 4.4 out of 5. Seven of these reviews mention the word “police,” “LEO,” or “cop”: five one-star reviews complaining about Sommers’ lack of support for the St. Louis Police Department, and two five-star reviews supporting him for speaking out on this issue and being targeted by conservatives as a result. Notably, at least two of the negative reviews referred to in-person experiences: one reviewer said they had a bad experience while wearing a shirt supporting police, and another said they visited with a police officer friend and were treated poorly. Four five-star reviews mention “BLM,” “blm,” “protests,” or “protest.” Four more one-star Google reviews refer obliquely to the controversy, describing Sommers with phrases like “loser owner” and “horrible owner.” One says that the “owner needs his priorities checked […] focus on making good pizza, not politics,” and another says “[w]ill not dine here again after watching owner on the news.”

Despite the vitriol in these posts, Pi does not have nearly as many negative Google reviews as Comet Ping Pong, perhaps because the controversy here was more local in nature and was not covered on national news broadcasts. Since Pi's Google reviews average 4.4, compared to its 4-star rating on Yelp, there does not seem to be a significant penalty or bonus to Pi's reputation on either platform once their different overall rating averages are taken into account; the main effect of Yelp's moderation here is to limit speech supporting the business owner for reasons unrelated to the in-person customer experience.

As seen above, Comet Ping Pong's review catchment area showed a clear “scale jumping” effect, in which the Pizzagate conspiracy theory brought a much more geographically dispersed group of conspiratorial reviewers to the pizzeria's profile page; Virginie Mamadouh (2004) defines scale jumping as “shar[ing] information and ideas across space” in order to more effectively articulate and frame debates among far-flung participants. Pi's spatial distribution of removed reviews, on the other hand, demonstrates an inverse pattern. As shown in Figure 6, there are significantly more Removed reviews from the St. Louis metropolitan area than Recommended reviews. A two-sided Kolmogorov-Smirnov test of these two distributions yields a p-value of 0.0000006, meaning that it is extremely unlikely that they are samples from the same underlying distribution. Pi's regular customer base had a moderately local focus, with additional spikes on the East and West coasts of the United States, but locals were much more likely to weigh in on the controversy around the protests in ways that led Yelp's human and automated moderation to remove their reviews.

Pi Pizzeria: distance distribution of recommended and removed Yelp reviews.

Focusing only on the removed reviews with one or five stars shows these spatial patterns more clearly. Figure 7 shows that within the removed reviews, one-star reviews are even more concentrated in the immediate vicinity of St. Louis, but the five-star reviews are also quite local. However, a two-sided Kolmogorov-Smirnov test yields a p-value of 0.67, so the null hypothesis that the two are samples from the same distribution cannot be rejected.

Pi Pizzeria: distance distribution of removed 1- and 5-star Yelp reviews.

At first glance, the local focus of political Yelp reviews for Pi seems like a counterintuitive result for a communications technology designed to bridge distance and bring local knowledge to outsiders. However, previous research on other social media platforms, like Twitter, that offer the theoretical possibility of transcending distance and scale has shown that many of these networks are more effectively connected at the local level (Stephens and Poorthuis, 2015). Furthermore, the outspoken stance that Sommers took criticizing police violence appears to have struck a nerve primarily among local BLM and police oversight advocates, without reaching a significant national or international audience.

In addition to its spatial pattern contrasting markedly with Comet's, Pi shows how review “bombing” can come from supporters of a business as well as detractors, and is not limited to reviewers of one political tendency. In fact, Pi is far from the only case of progressive or left-wing groups using Yelp to send a political message. In 2014, for example, the Tenants Union of Washington State encouraged its supporters to leave negative reviews on multiple Yelp profile pages associated with properties owned by a Seattle developer and landlord that it argued was engaged in predatory practices (Tenants Union of Washington State, 2014). Other issues associated with liberals and leftists have also led to review bombing incidents; participants in the January 6th riots at the U.S. Capitol have been identified and targeted on social media, including Yelp and Google Maps (Associated Press, 2021), and businesses that have violated COVID-19 lockdowns or masking policies have also come under attack (Bell, 2022).

Future research

A next step to explore the impact of review bombing on online speech, small businesses, and communities would be to expand the temporal and topical scope of the dataset to include more subjects of controversy, including responses to COVID-19 lockdown and mask policies and the Israel-Hamas war in Gaza. As discussed above, the highly variable number of reviews between businesses can make comparisons across different kinds of controversy difficult, but with a bigger dataset it might be possible to make directional claims about which kinds of topics are more likely to induce distant users to leave politically charged reviews. In addition, the author is investigating ways to apply the methods used here to study the changing catchment areas of politically controversial businesses to more subtle shifts, especially those associated with gentrification and urban redevelopment.

Discussion

This article has demonstrated a number of features of review bombing and political speech moderation practices on online local review platforms. At the highest level, this research has shown how local review data can document the ways that local businesses’ spatial contexts shift when they become the subject of regional or national cultural controversies. Because local review platforms provide an easy outlet for noncustomers to express outrage or support, businesses that become the subject of political controversy leave clear signals in Yelp review data, especially in the spatial patterns and average ratings of both the recommended and removed reviews. Second, the radically different reviewer coalitions in the two case studies examined above show that review bombing can have multiple political valences. The dramatically different spatial patterns of removed reviews between the two businesses also show that review bombing is not always conducted by outside groups, but can be the product of local grassroots movements as well.

Finally, the platforms that monetize place-based speech have varied incentives behind their moderation decisions, which can lead to inconsistency in what is considered acceptable political speech between platforms and across time. Google's light hand in moderation, which makes business sense for a company that operates at a global scale and is not particularly dependent on small business advertising served adjacent to review content, can allow more abusive and false reviews than appear on Yelp, as in the case of Comet Ping Pong, where it could be hurting the restaurant's business with Google Maps users. That said, Google allows for the expression of more varied political messages in reviews than Yelp's form of “algorithmic censorship” (Gillespie, 2018), as seen in the case of Pi Pizzeria.

Beyond the potential for local review moderation decisions to affect the rating averages and revenue potential of small businesses, the consolidation of moderation decisions in the hands of just a few companies raises questions about what the role of political speech in local reviews should be. Even a company like Yelp, which takes a more active role in moderating online speech, can respond to changing social attitudes; this responsiveness has led Yelp to depart from an antipolitical moderation strategy to one that explicitly supports marginalized groups who have been the target of harassment.

In October 2020, in the wake of a growing national antiracist movement (and an attendant backlash from political conservatives), Yelp released a blog post saying that after George Floyd's killing on May 25, 2020, there had been over 450 Unusual Activity alerts on businesses “that were either accused of, or the target of, racist behavior related to the Black Lives Matter movement” (Malik, 2020b). The company responded to the shift in the national political climate after Floyd's death by introducing two new alerts: one for alleged in-person incidents of racist harassment, and one, requiring documentation with a credible news article, for places where there are “egregious acts of racism.” Right-wing commentators, including Donald Trump Jr., reacted to the rollout of these alerts by claiming that the feature would be weaponized by left-wing groups to punish conservatives (Palmer, 2020).

Although these developments stand out against Yelp's previous policy, with the company taking a political stance and aiming to safeguard its customer base from racist harassment, it is notable that the positive reviews that Pi Pizzeria received for supporting Black Lives Matter are still prohibited under this policy; only warnings about discrimination are allowed. Reasonable people can disagree on whether the political positions of a business owner should guide decisions on whether to patronize an establishment, but Yelp users do not get to make that choice; the company's filter is unyielding. It remains to be seen how local review platforms will adapt to debates about free speech and online harassment related to the ongoing war in Gaza, which has already left its mark in negative Yelp reviews for both Israeli and Palestinian restaurants in the United States (Javaid and Ferguson, 2023).

Footnotes

Declaration of conflicting interests

The author declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author received no financial support for the research, authorship, and/or publication of this article.