Abstract

Mainstream coverage of artificial intelligence often appears to emphasise the technology's benefits and economic potential over its growing downsides. How does a technology poised to be so disruptive become so uncritically embraced? Why is it, simply put, that artificial intelligence's representations in legacy media do not normally convey the controversialities otherwise found in research or policy debates? We introduce the concept of ‘freezing out’ to describe processes of translation that cool down debates over the merits of technology. Freezing out looks at the other side of controversy studies to study the production of uncontroversies or cold controversies rather than hot topics and debates. We use the coverage of artificial intelligence in Canadian national news outlets to analyse how controversiality becomes ‘frozen out’. Since Canadian academics won the prestigious ImageNet prize in 2012, introducing the modern turn toward machine learning approaches, Canada has promoted itself as a global leader. Using in-depth interviews with Francophone and Anglophone journalists as well as topic modelling on data collected from five major newspapers, we find that routine news making processes between journalists, experts, entrepreneurs, and governments build, maintain, and promote Canada's artificial intelligence ecosystem. Freezing out contributes to a broader interest in how heterogeneous actors traverse their domain of expertise across policy, media, and research circles to cool down artificial intelligence controversies.

This article is part of the special theme on Analysing Artificial Intelligence Controversies. A full list of the articles in this special theme is available at: https://journals.sagepub.com/page/bds/collections/analysingartificialintelligencecontroversies

Introduction

A Canadian journalist shares their reflections and frustrations about covering artificial intelligence (AI) for a major newspaper in Québec. They explain: These companies working on AI, they had partnerships, money, and customers. … At some point, my boss put three journalists, including himself, on the project. The challenge was to find examples of good industrial or commercial applications of AI in Québec. And this is when we realized: we had been fooled for two years! … There were partnerships and projects, but there was not a damn [company] that could come up with a concrete project. In the end, on trouvait juste des peanuts [we only found peanuts]: some little ridiculous ventures with weak AI.

In what follows, we define a particular politics of translation happening in news production. What we call the freezing out of AI's controversiality is a process that is co-constructed by legacy media, journalists, and cited experts who are increasingly entrepreneurial. Our contention is that such emergence of a cold situation involves mundane practices and processes of news making that frame a particular set of visions and promises about AI. This framing implies that journalists and newsrooms are active participants in AI's cold situation; they are an ‘engine, not a camera’ (Mackenzie, 2017).

We apply our concept of freezing out to Canada, a critical case of a traditional middle power attempting to position itself as a world leader in AI. We begin by situating our study of Canadian news coverage within the wider literature of news making and AI's national representations. Using quantitative and qualitative news analysis, we examine which heterogeneous actors produce AI stories and how they do so. In both Francophone and Anglophone outlets, we find a convergence of expectations around AI between journalists, newsrooms, and entrepreneurial experts. AI has been promoted by legacy news media whose newsrooms have become concentrated around business desks, by journalists trying to interpret an amorphous technology, and by scientists increasingly forced into entrepreneurship to sell their science. The result, we contend, is that coverage of AI uncontroversially frames it as a national resource that needs to be unreservedly exploited (Colleret and Gingras, 2022). We conclude by illustrating how the freezing out of AI's controversiality not only frames debates on the merits of AI, but also the political economy that supports this so-called disruptive technology.

News making, legacy media, and artificial intelligence

Journalists and newsrooms are not neutral observers of the production and dissemination of news; they are participants in what Sarah Whatmore calls ‘moments of ontological disturbance’ during which what makes the news is intricately constructed ‘as unexamined parts of the material fabric of our everyday lives [that] become molten and make their agential force felt’ (Whatmore, 2009: 587; see also Stengers, 2005). Scientific controversies, for example, extend to public domains and settings outside academia, often through journalism that, at least in theory, invites the participation of heterogeneous actors and institutions (Seurat and Tari, 2021; Venturini, 2010). Controversy analysis teaches us that technoscientific objects are eminently political (Latour, 2005; Marres, 2015, 2020; Ricci, 2019). Journalists then act as key translators, as Callon puts it, ‘creating convergences and homologies by relating things that were previously different’ (1981: 3). If journalists translate, then what type of translation does news coverage, particularly by legacy media, do amidst an expanded range of actors?

The legacy media's status as a democratic institution has been questioned by the turn toward hybrid media systems where newspapers are merely one category of actors in the production of the political information cycle (Chadwick, 2013). We focus on legacy media because of its traditional and elite status in media systems. There is no canonical definition of legacy media. Legacy media, to us, refers to established journalistic organisations that published before the Internet with strong public reputations and special protections by governments. These are important institutions of liberal democracies that can amplify controversy, sustain public attention, and compel authorities to respond (see Langer and Gruber, 2020). In liberal democracies, legacy media are traditionally expected to provide a unique space for democratic debate and civic engagement (Cefaï, 1996; Habermas, 1992; Joseph, 1984). As a liberal profession, journalism has been institutionalised around norms of impartiality and neutrality, which are reflected in the duties reporters often set for themselves of covering events and situations rigorously, faithfully, quickly, and objectively (Thibault et al., 2020). In that regard, legacy media has historically sought to form a symbolic arena, where discourses on science, technologies, and their related controversies and societal expectations are communicated and subjected to unrestricted deliberations (Konrad, 2006; Marres, 2007). Legacy media aspires to create an interpretative space—a place that makes room for a ‘politics of expectations’ (Borup et al., 2006: 295) in which news making practices and processes construct and circulate contrasting visions of the technological future (van Lente, 2012). Callon notes that newspapers are ‘devices that allow us to visualise the existence of externalities’ such as ‘when Parisians read in their daily newspapers that the pollution index has risen’ (1998: 258). Translation makes worlds sensible to publics.
As Callon (1981, 1986) teaches us, translation frames the controversiality of objects—that is, their quality of being controversial—by shaping them from one context, situation, or state to another (Roberge et al., 2020).

Legacy media has become a powerful site of discourse formation in which certain voices and tropes about AI are authoritatively put forth while others are not (Bareis and Katzenbach, 2022; Bunz and Braghieri, 2022). Since 2018, studies on AI coverage have tended to focus on AI's national representations, often through a few prominent national newspapers. In the United Kingdom, J. Scott Brennen and colleagues (2018) studied six mainstream news outlets to conclude that 60% of all articles on AI in the UK are indexed to industry products, initiatives, and announcements. Similar findings exist in the United States, where primary topics in news coverage of AI focused on business and technology (Chuan et al., 2019). In Denmark, a discourse analysis of AI articles from all main news outlets since 1956 reveals that media representations of AI largely serve the Danish political economy of AI (Hansen, 2021). In Australia (James and Whelan, 2022), China (Zeng, 2021), and Germany (Köstler and Ossewaarde, 2022), legacy media also tend to present AI in a favourable light for its national development and implementation. Another trend is the high proportion of AI stories where few experts were cited (Brennen et al., 2019; Bunz and Braghieri, 2022; Nguyen and Hekman, 2022). In Canada, our own analysis is consonant with these findings and concludes that AI coverage is primarily business news (Dandurand et al., 2022).

International coverage participates in making, or more exactly in not making, AI controversial. As Noortje Marres lucidly puts it, controversy analysis is an investigation into how ‘the formulation of knowledge claims and the organisation of political interests tend to go hand in hand’ (2015: 656). AI is an object of debates and its related controversies continue to be present in scientific circles (Dandurand et al., 2022) and on social media (Marres, 2020). However, these debates have not been covered with as much consistency and depth in legacy media. This gap is part of a longer trend where economic forces are overrepresented in news coverage and steer knowledge formulation. For example, hyperbolic narratives positioned nanotechnology as key to economic growth two decades ago (Chateauraynaud, 2005). Just as promoters constructed narratives about nanotechnology leading to a third industrial revolution in the early 2000s (Colleret and Khelfaoui, 2020), AI is promoted as promising to bring about a fourth industrial revolution (Vicente and Dias-Trindade, 2021). The convincing nature of these representations—visions of what science and technologies will one day be able to achieve—makes these news stories performative: they rally actors, attract funding, coordinate research activities, form the basis of policy making, and organise entire technoscientific communities in and outside of academia (Ananny and Finn, 2020; Borup et al., 2006; Konrad, 2006). As the nanotechnology case study teaches us, representations of the future of AI can ‘colonize the present’ (Joly, 2010, 2015) and serve the purpose of steering debates in a direction where they could become non-debatable.

The uncritical embrace of AI as good—or techno-positivist—points to another side of a controversy. Where controversy studies usually look for flashpoints of conflicted definitions and uncertainty, what are we to do when AI's coverage involves so much consensus about its benefits? Here we draw directly on Callon's (1998) work on controversy studies that contrasts hot and cold situations. As Callon explains, ‘in “hot” situations, everything becomes controversial’; things and performances are chaotic, unsettled, and indeterminate. The involvement of actors and their interests fluctuate greatly. Controversies are volatile and, seemingly, consensus cannot be reached. Conversely, ‘in “cold” situations’, Callon continues, ‘agreement … is swiftly achieved. Actors are identified, interests are stabilized, preferences can be expressed, responsibilities are acknowledged and accepted’ (1998: 260–261). Over two decades ago, Callon noted that our ‘“hot” world’ was ‘becoming increasingly difficult to cool down’ (1998: 263). Indeed, controversy studies have had to contend with a ‘hot’ world when ‘contestation has become a matter of routine’ (Whatmore, 2009: 588). AI's positive representation, however, prompts us to interrogate how a cold situation, rather than a hot one, might be the appropriate framing.

How do cold situations happen? One answer is our concept of freezing out. Freezing out cools down potentially hot situations through interactions between key actors and institutions that create a mutually beneficial framing of the said situation—resulting in what looks like a cool situation. Here, indeterminacies and uncertainties become settled when a few experts close debates, obfuscate contrasting expectations, and shape the political economy of science and technologies (Borup et al., 2006; Nahuis and van Lente, 2008). In this sense, freezing out results in something different from Callon's (1998) cold situation since the results do not lead to a true consensus or something taken-for-granted. Callon's ‘“cold” situations’ imply a certain consensus or business as usual attitude that allows for controversies to be settled through routine. Freezing out is more exclusionary, like the slang expression ‘to freeze someone out’. The exclusion of technology's controversiality, and specifically of AI here, can neither be stable nor settled as AI relies on narratives of disruption and revolution. Freezing out—in its incompleteness, its non-closure—holds the potential for matters to fluctuate, oscillate, or rapidly heat up. The results are as thin as the ice floes on the St Lawrence River today in our increasingly hot world. Without active participation, interjections may potentially melt matters at any time during or after the news cycle.

Using freezing controversiality as an analytical device, we question how a small group of actors engage in the practices and processes of news making to frame AI as a (future) successful disruptive technology. To explore news making practices and processes is to interrogate by whom and how, in fact, public discourse on deep learning techniques has frozen AI in Canada's newspaper coverage. In the next section, we examine which actors make it into local AI coverage, how they become actors and spokespeople, and how they promote a specific, yet paradigmatic, AI ecosystem. What we find is a politics of expertise at work where journalists must translate a new type of expertise, what Nik Brown and Mike Michael call ‘entrepreneurial technoscientists’ (2003: 13).

Research design: Leveraging mixed methods for controversy analysis

Given its purported role as an institutional space for democratic deliberation on controversies in Canada (see Gingras, 1999), we focused on legacy media. We selected established French and English language newspapers that were in print before the popularity of the Internet and that are recognised as journalistic organisations through a government tax credit, a proxy for special status. We first interviewed 14 journalists who covered AI over the last decade. We conducted the interviews online in the summer of 2021. Each lasted 60 to 120 min, was recorded via Zoom, and was transcribed in French or English through a combination of automated and manual transcription. The questionnaire used in all interviews consisted of 19 questions that spanned four broad themes: (a) the interlocutor's biography; (b) their media environment; (c) AI controversies and consensus; and (d) AI actors and institutions. We complemented the situated insights collected from interviews with a computational analysis that provided a broader outlook on Canadian AI coverage since 2012. We curated a list of news stories from two French-speaking (n = 3447) and three English-speaking (n = 3797) newspapers: La Presse (n = 2295), Le Devoir (n = 1152), the Globe and Mail (n = 2788), the Toronto Star (n = 954), and Maclean's (n = 55). To extract our corpus, we used the following search query: AI, artificial intelligence, algorithm*, machine learning, ML, and deep learning. Analysing this corpus, we used (a) an unsupervised topic modelling approach called Top2Vec (Angelov, 2020) to identify controversies, debates, and narratives that framed AI coverage and (b) named entity recognition (NER) to create lists of actors, institutions, and organisations that were prominently featured in the coverage and, thereby, participated more than others in the stabilisation of AI.
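
The search query described above can be read as a simple keyword filter. The following is a minimal sketch in Python, not the authors' actual retrieval procedure: the text does not say which database or query syntax was used, so the case-sensitive handling of the acronyms `AI` and `ML`, the prefix reading of `algorithm*`, and the helper names `compile_query` and `matches_query` are all assumptions made for illustration.

```python
import re

# Search terms exactly as listed in the text.
QUERY_TERMS = [
    "AI",
    "artificial intelligence",
    "algorithm*",
    "machine learning",
    "ML",
    "deep learning",
]


def compile_query(terms):
    """Build one regular expression from the search terms (hypothetical helper)."""
    parts = []
    for term in terms:
        if term.endswith("*"):
            # Assumption: a trailing * is a prefix wildcard
            # (algorithm, algorithms, algorithmic), matched ignoring case.
            parts.append(r"(?i:\b" + re.escape(term[:-1]) + r"\w*)")
        elif term.isupper():
            # Assumption: acronyms match case-sensitively as whole words,
            # so "AI" does not match the letters "ai" inside ordinary words.
            parts.append(r"\b" + re.escape(term) + r"\b")
        else:
            # All other terms match as whole words, ignoring case.
            parts.append(r"(?i:\b" + re.escape(term) + r"\b)")
    return re.compile("|".join(parts))


QUERY = compile_query(QUERY_TERMS)


def matches_query(text):
    """Return True if the text contains at least one search term."""
    return QUERY.search(text) is not None
```

A filter of this kind is deliberately broad: the `algorithm*` term alone will retrieve stories that mention algorithms without discussing AI, which is one reason a later topic modelling step is useful for sorting the corpus.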

Making AI simple: Journalists and the practices of translation

In making AI accessible, journalists address AI's interpretative flexibility (see Jasanoff, 2019; Marres, 2015, 2020). Their efforts are bolstered by their gradual enrolment in what we will describe as Canada's AI ecosystem. A journalist's first task, one freelancer explains, ‘is to make complicated things simple’. Ideally, the practice of journalism is to turn contextualized, intricate, at times indigestible controversies and ideas into an engaging and structured narrative that is intelligible to the layperson. ‘That's what I like to do, anyway’, continues the interlocutor, who mentioned in passing that a good grasp of the topic in question is necessary to present it clearly to an audience. ‘If you do not know AI, if you haven’t read on that specific topic, it's certain that you will not have the reflexes to ask some questions’, the freelancer adds. ‘It requires a certain understanding… You have to be informed. … You know, it is not the kind of reflection that will necessarily come to you naturally’.

Journalists rely heavily on experts to cover technical matters like AI. Experts remedy what Eric Merkley concisely refers to as ‘the appearance of bias when casting an interpretative lens to a story’ (2020: 531). As one journalist puts it, ‘who is the best person to talk about AI, other than the one who is actually making it?’ Journalists use experts to modulate the construction of news stories since highly specialised knowledge can often pass for factual, objective, or unbiased information in journalistic discourse (Albæk, 2011; Merkley, 2020).

Relying on computer scientists for knowledge and quotes pulls journalists into Canada's AI ecosystem to access the necessary expertise. These computer scientists have an intricate understanding of their field and object of research which they can describe in detail. As sources of information, these specialists possess legitimacy and specialised knowledge that empower them to shape coverage. However, having a detailed understanding of a domain does not make these actors objective or accurate when they share their expectations of how a technology will shape the world (Dandurand et al., 2020). ‘Even a reporter that is really, really good in math or in data science’, explains a freelancer, may find it difficult to challenge experts who possess acute technical knowledge, especially when you face Yoshua Bengio or other similar personalities [that are] good communicators. [AI] remains a domain of specialists, and I think that not everyone can understand it. I think I have a good understanding of what AI is, but I don’t pretend to understand it like the specialists. So yeah, it is difficult to explain something that is very complex when we don’t grasp it ourselves. At the time [late 2000s/early 2010s], I attended Yoshua Bengio's conferences at the Université de Montréal. There was a buzz in the room when Bengio was explaining what AI was. It was very technical, but that's the trick: for us, we have to convey [AI] to the public. But Bengio, he was zooming on the screen to the scale of the pixels in his images to explain how the computer differentiated between a dot that was nothing and, say, a dog's hair. It was extremely technical. Students in the room loved it, and that was a bit weird because it was really too geeky [for me].

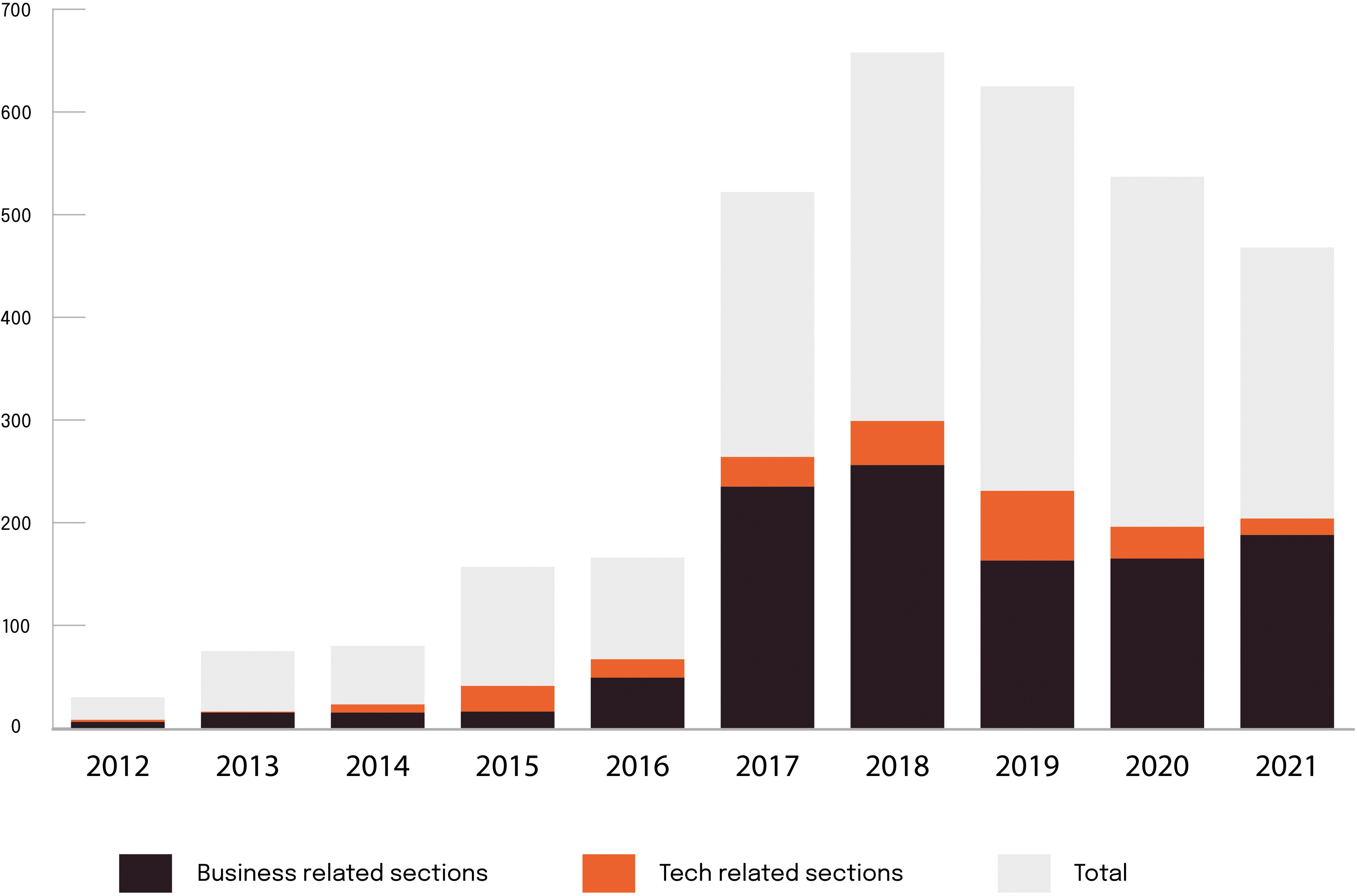

In Canada, AI coverage has become business news. Compared to science and technology, business news has a broader readership and a paying pool of advertisers that results in dedicated sections, reporters, and resources. Figure 1 shows that AI stories began to be prominently featured in 2017. About a third of all AI news coverage took place in the business pages of the French-language newspaper La Presse or Le Devoir. Figure 2 shows that, in relative numbers, AI-related stories appear more prominently in business sections than they do in tech or any other section. 1 Interlocutors concurred that AI is more consistently covered from a business angle. ‘It's very much through a business lens’, one of them insists, ‘through who's raised what funding, what executive shakes up here and there, who's turning around X company, etc. So, when you do see coverage of a new technology … it tends to be either as a sort of subset of that business coverage or like a general interest kind of approach’. The result is a narrowing of AI's framing, or the range of expectations that make their way into AI stories along business lines. ‘We all have our own autonomy’, explains a journalist with more than 20 years of experience, ‘but up to a certain point … we end up looking a lot alike between colleagues … . We are apostles for technology [apôtres de la technologie] in general’. As this interlocutor suggests, this shared a priori interest in technology, or in AI, positions tech reporters as having stakes in AI's positive or economically beneficial coverage.

Figure 1. Volume of business vs. tech articles.

Figure 2. Ratio of business vs. tech articles.

An examination of journalists’ relationships with experts shows how freezing controversiality is a framing process centred on an uncontested interpretation of AI as an economic imperative and a chance for Canada to build a world-class AI ecosystem. This is how the mundane practices of tech journalism contribute to freezing out AI controversiality. The result resembles what Meredith Broussard (2019) terms ‘techno-chauvinism’, where stories about AI become opportunities to reassert the technology as a hyperbolic engine of a revolutionary future. Translating highly technical promises that have yet to be actualised in a way that is intelligible (and interesting) to an audience is a challenging task, but it is also a significant one as it frames the arena of political deliberation about the future role of science and technology in society.

Canada's AI hinterland: Experts as entrepreneurs

When computer scientists intervene in legacy media as cited sources, they are not only experts, but representatives of the networks formed of private and public actors, institutions, and organisations that have vested interests in AI's success (Colleret and Gingras, 2022).

2

These AI experts have become Our world is changing in many ways, and one of the things which is going to have a huge impact on our future is artificial intelligence—AI, bringing another industrial revolution. Previous industrial revolution expanded human's mechanical power. This new revolution, this second machine, is going to expand our cognitive abilities, our mental power. Computers are not just going to replace manual labour, but also mental labour. (2016 in Colleret and Gingras, 2022: 82)

4

Such excitement spills over. Experts in computer science shape even AI-related stories that are not directly related to their expertise (cf. Merkley, 2020). For instance, at the beginning of the pandemic, just a few months before ServiceNow acquired Element AI, Bengio did a media tour to promote his own research centre's (MILA) AI-based app to fight Covid-19 (Deschamps, 2020; Marquis, 2020). Here is an excerpt of an interview with Bengio in the Montréal Gazette: “People are ready to share information, but they need to be taken by the hand,” Bengio said. “You need political leaders to get involved. We saw this play out with masks. How often did we talk about masks? A lot. So if we want people to acquire certain habits, make certain changes, we have to convince them. People have to have confidence.” (cited in Tomesco, 2020)

What is striking in this excerpt is that Bengio's intervention in the media is not related to the AI research for which he gained credibility and notoriety. He uses his legitimacy to normalise the broader use of AI as an instrument of governance and to share his expectations about what AI could eventually accomplish—let alone what his own research centre's AI-based application could achieve. He is aware of the risks but considers them acceptable. 5 Canada's AI strategy has promoted its AI leaders to become entrepreneurs, 6 a framing that key experts actively co-construct. Bengio is a computer scientist who is a professor at Université de Montréal and scientific director at Mila and IVADO (Institut de valorisation des données), two non-profit organisations. He cofounded the startup Element AI with Jean-François Gagné in 2016 and is now a research advisor for ServiceNow, the American company that bought Element AI in 2020. Geoffrey Hinton was a professor in the department of computer science at the University of Toronto and chief scientific advisor for the Vector Institute and Google. As for associate professor Joëlle Pineau, to give another example, she ‘shares her time’, according to her website, 7 between McGill University and the Facebook AI Lab in Montréal, where she is a managing codirector. Bengio, Hinton, and Pineau hold, or have held, decision-making positions at CIFAR (the Canadian Institute for Advanced Research), an organisation that has channelled governmental funding to labs and research centres—including ones run by Bengio, Hinton, and Pineau—through the federal government's Pan-Canadian AI Strategy, to which more attention will be devoted in the next section. These computer scientists have been well represented in Canadian media since 2012. Coming shortly after the names of socialites/platform owners (e.g. Mark Zuckerberg, Jeff Bezos, Elon Musk) and politicians who marked the Canadian political landscape over the last decade (e.g. Justin Trudeau, François Legault, Doug Ford, Donald Trump), 8 Yoshua Bengio appeared 491 times in 344 distinct articles across the corpus. 9 Other computer scientists and related AI entrepreneurs are also prominently featured in our corpus: Geoffrey Hinton appears 190 times in 117 articles; Jean-François Gagné appears 65 times in 32 articles; Joëlle Pineau appears 48 times in 30 articles; and Yann LeCun appears 24 times in 15 articles. 10
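
The figures above pair two counts for each name: total mentions and the number of distinct articles in which the name occurs. The following Python sketch illustrates how such a pair of counts can be derived. It is an illustration only: exact substring matching stands in for the named entity recognition step, the function name `count_mentions` is hypothetical, and the toy corpus and the names `Expert A` and `Expert B` are invented placeholders rather than data from the study.

```python
from collections import Counter


def count_mentions(articles, names):
    """Return (total mentions, distinct articles) per name.

    Assumption: exact substring matching stands in for named entity
    recognition, which would also need to resolve name variants.
    """
    total_mentions = Counter()
    distinct_articles = Counter()
    for text in articles:
        for name in names:
            hits = text.count(name)
            if hits:
                total_mentions[name] += hits   # every occurrence counts
                distinct_articles[name] += 1   # each article counts once
    return total_mentions, distinct_articles


# Invented placeholder corpus, not data from the study.
toy_articles = [
    "Expert A spoke at the launch. Expert A praised the funding.",
    "Expert B and Expert A shared a panel.",
    "A story with no named expert.",
]
mentions, articles_with_name = count_mentions(toy_articles, ["Expert A", "Expert B"])
```

On this toy input, `Expert A` has three mentions across two articles. The gap between the two counts indicates how often a source is named repeatedly within a single story.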

Like journalists, entrepreneurial technoscientists engage in the framing of AI as a national resource, a process that freezes out AI's controversiality. When these actors intervene in legacy media as AI experts, implicitly or explicitly, they campaign for the construction or maintenance of political structures necessary for the development and implementation of AI—Canada's AI ecosystem as we will also examine in the next section. To illustrate, one journalist mentions: There has been a definite alignment [‘rapprochement’] of these different parts of the chain and we see it in the technology sector. I don’t know if it's a coincidence or if one inspired the other but … the conversation is easier to have between the private and the public, and the academic, all of that. It definitely improved a lot … I’m not saying it is perfect, but it's a lot more harmonized that it was. … You realize it when you talk to everyone: they all say the same thing. They speak to each other. Clearly, there is a channel of communication that has opened up that was not there before.

The political economy of cold controversies in Canada

In the two sections above, we analysed the process of freezing AI's controversiality. First, we examined how the coverage of science and technology in legacy media lends itself to the intervention of a few experts who tend to support AI. Such a framing, we contended, narrows the range of possible representations for the technological future of AI and freezes out its controversiality. Second, we debunked the neutral or objective posture of the experts, often central in Canadian AI news stories, to reinsert them instead in a network of actors, institutions, and organisations. We showed that these experts participate in a particular framing of AI controversialities in the name of, and in favour of, the actors, institutions, and organisations for which they are spokespeople. These networks, we argue in this last section, are Canada's AI ecosystem that has entangled legacy media to build, maintain, and promote this political economy.

The implications of freezing AI controversiality appear in the results of topic modelling. Since our corpus is bilingual, we analysed our collections of articles in French and in English separately to identify three broad shared meta-topics between corpora. 11 The first meta-topic encompasses articles on how AI is, or could be, implemented in many different economic sectors and includes stories on potential applications of AI in healthcare, communication, transport, retail, agriculture, banking, and smart cities. It also comprises the many stories on gadgets that populate AI coverage. The second meta-topic includes news stories on business, economics, funding, finance, international relations, and commerce as well as governmental announcements and private and public investment in AI. As we will examine later in this section, these topics typically frame AI as a national resource that ought to be exploited. Finally, the third meta-topic features articles on public debates that have traversed AI coverage between 2012 and 2021. But even this meta-topic, which is much smaller than the previous two, epitomises the freezing out of AI controversiality. As we show at the end of the section, reports on AI ‘ethics’ rarely question—and to some extent defend—the technological visions of AI promoters and the alignment of interests in the political economy of AI (Jobin et al., 2019; Munn, 2022; Scharenberg, 2021).

According to one journalist interviewed, ‘one thing that is obvious is that I don’t hear anyone saying that AI will disappear!’ Our computational analysis certainly illustrates that AI is indeed propelled by what seems like the unstoppable technological progress of deep learning. These broad meta-topics come into focus through the mundane efforts to frame AI as a favourable technology that should be developed and deployed in many sectors of the economy. Here, federal and provincial governments are active participants as well. Over the last decade, AI has been so hyped and its economic projections so positive, both in Canadian legacy media and in public discourse, that an ‘ecosystem’ has been constituted through a government initiative called the Pan-Canadian AI Strategy, which aims to implement AI in as many sectors as possible. This Strategy comes with substantial public funding directed at only a few research institutions (Colleret and Gingras, 2022) and contributes to the increasingly close entanglement of the state, academia, start-ups, and multinational corporations (Dandurand et al., 2022). Although this kind of alignment of positions and interests is unusual, it remains largely unquestioned in legacy media, which again contributes to freezing AI's controversiality.

Journalists, newsrooms, and entrepreneurial technoscientists cooperate in this framing of AI. When the Canadian government announced $400 million CAD in venture capital to fund research on AI in 2017, cofounder of Element AI Jean-François Gagné made his way into the news to state that this was the ‘kind of leadership and foresight needed to ensure that our businesses and citizens will thrive in the twenty-first century’ (cited in Silcoff, 2017). Similar sentiments were conveyed later in 2018, when the government released another $1 billion CAD for the constitution of five superclusters, including one (opaquely) managed in Montréal by Scale AI, a consortium of private corporations, research centres, academic actors, and start-ups. Most coverage framed the government's announcement with the same citation from Navdeep Bains, then Canadian Minister of innovation, science, and industry, who compared the idea of superclusters to Silicon Valley's conglomeration of big tech companies (Balingall, 2018; Bellavance, 2018a, 2018b; La Presse Canadienne, 2018). Bains made the objective of the AI superclusters clear: to make Canada into a global leader in AI, to create highly qualified jobs across the country, and to stimulate economic growth.

The government's major investments had ample reason to be controversial, yet only a few critics came to the fore. Columnist Konrad Yakabuski highlighted that no metrics existed to measure the superclusters’ objectives. Moreover, Yakabuski argued, $1 billion of funding in a $2 trillion national economy ‘was never going to generate transformational change’ (2020). Others reported that the AI supercluster was ‘by far the slowest’ (Hemmadi, 2021), and that businesses in the region were struggling with AI (Benessaieh, 2021; Desrosiers, 2020). The issue has lingered. In 2022, 5 years after Bains’ press conference, the French-language newspaper La Presse reported that only 6% of Québec-based businesses use AI applications (Décarie, 2022). Yet again, such facts do not hinder AI promoters in spreading the myth of the ‘fourth industrial revolution’. In an article called ‘Redynamiser l’écosystème de l’IA’ (‘Revitalize the AI Ecosystem’), columnist Jean-Philippe Décarie argues for broader adoption of AI across Québec's industries. Décarie interprets the 6% figure as a missed opportunity or a lack of entrepreneurship from businesses in the region rather than an invitation to warm up its controversiality. ‘Despite an ecosystem that is teeming with advanced technological solutions, created and developed at home [in Montréal]’, Décarie states, This underutilized expertise must make itself better known in order to quickly promote better penetration of AI to ensure real optimization of its impact on the entire economy. … We already know that since 2017, more than $1.5 billion in private funding has been achieved in the AI ecosystem, but Québec must not slow down if it wants to maintain its competitive position. (2022)

Coverage relied on a small group of experts invested in the positive framing of the supercluster and the protection of Canada's AI ecosystem. Décarie's column quoted above is based on an interview with Marie-Paule Jeansonne, CEO of the non-profit organisation Forum IA Québec, the provincial equivalent of Scale AI. In the column, Jeansonne promises that a new study will soon be released on the socioeconomic potential of AI in Québec, thanks notably to the massive governmental investments via Scale AI. Just a few weeks later, a study commissioned by Forum IA Québec made the news. The report reveals that Québec ranked as a global leader in AI and gave a good grade to the provincial government's AI strategy (Benessaieh, 2022). 12 In other words, research findings sponsored by Forum IA Québec, itself set up by the Government of Québec to promote AI, suggest that the substantial investment made by federal and provincial governments ‘confirms that we have succeeded in building a very strong, world-class ecosystem’ (Jeansonne cited in Benessaieh, 2022).

Commissioned studies such as these and uncritical interventions in legacy media such as Jeansonne's freeze out AI's controversiality. These framing efforts contribute to making AI into a national resource that must be exploited. Perhaps more importantly, if left unchallenged, reports such as these create conditions for cold situations: interpretative spaces visited by few actors, meant to align interests behind the economic merits of AI while short-circuiting its controversiality (e.g. the pressing need to regulate the deployment of AI, the inequalities it exacerbates, and the balance of power between the state, the small number of multinational corporations that control AI instruments, and citizens). In fact, these favourable stories legitimise the current state of the political economy of AI in Canada, while promoting the networks of experts/researchers/entrepreneurs who occupy key positions of power within these para-public planning committees and organisations that channel public funding to AI, including Jeansonne's Forum IA Québec and Scale AI. As a matter of fact, many continue to argue in legacy media and elsewhere that AI is increasingly integrated in concrete chains of production (Gagnon, 2021), including the government of Québec, which asserts that AI's uses and potential ‘no longer have to be proven’. 13

The promotion of an AI ecosystem and the repeated interventions of its spokespeople take up so much space in legacy media that the claim that AI is a national resource to be exploited is seldom questioned, which in turn contributes to freezing AI's controversiality. As a journalist remarks, the activities and influence of organisations set up by governments to construct and champion this AI ecosystem rarely make the news, unless they have an AI-related product or service to endorse: There is a supercluster that is managed by a company that is called Scale AI, based in Montréal. We don’t talk about it as much because it is very p2p [peer to peer] in the world of inter-business [supply chain], so it's pretty foggy, but it exists and they have a lot of money. But what is newsworthy is not so much those who invest, but in what they invest. So often, we report on the final product, the business that receives funding. … We don’t talk much to those who have a handle on the purse strings.

The ethical AI beat is another telling example of freezing out AI's controversiality. During interviews with journalists, many stated that ‘ethics’ of AI served as a counterpoint to AI promotion—a balanced position against the unabated development and deployment of AI. ‘I think that the ethics question was very well addressed, and sometimes perhaps over-addressed’, a freelancer noted. ‘That said, I think that it brought these [ethical] issues to the audience. There has been this popularization of [ethics] that has been done through the media for the public. It worked well’. Another interlocutor agrees: ‘I think that the ethical risks were present [in the coverage of AI]. It is now something taken for granted that there is some ethical work to be done [in order to deploy AI], informed people now know that’. The coverage of AI-related ethics issues was indeed rather informative, especially around 2018 when The Montréal Declaration for Responsible AI was first ratified. AI ethics was then introduced as cardinal points of principles on a ‘moral compass’, 14 as the Declaration stipulates, that in practice results in toothless guidelines for corporations, research institutions, and governments to follow in the development and deployment of AI. The Declaration and the values it promotes are certainly rallying—no one has issued a declaration for the promotion of irresponsible AI—but in the Canadian context, voluntary commitments to vague principles of ethics have frozen out controversiality on the AI ecosystem and the utter lack of regulation of AI, supposedly designed and deployed to ‘revolutionise’ our society and economy (Roberge et al., 2020).

Ultimately, the Declaration helped redefine the discourse on the structural inequalities exacerbated by the complex distribution of power that underlies AI in the vague terms of a simplistic understanding of ‘ethics’. It created a language that frames AI controversies under a liberal nomenclature of ethics (ethical AI, responsible AI, AI for good, etc.) that precludes genuine critical engagement with the current state of the political economy of AI. Perhaps more importantly, the Declaration sublimated the pressing need to form regulatory frameworks in Canada (such as the European Union's General Data Protection Regulation). It was put in place to stabilise the formation of networks and the alignment of interests among actors from academia, the state, research institutions, start-ups, and multinational corporations, 15 all of which work in concert to freeze out AI's controversiality and create conditions for the promotion of the AI ecosystem in which these actors and organisations thrive.

Conclusion

Our research makes three important contributions. First, we introduce freezing out as an important, yet understudied process in controversy studies. Our qualitative and quantitative analysis adds to a needed discussion of how to approach a cold situation, in our case, by attending to the performance of translation and framing. Second, our explanation of freezing out demonstrates how a convergence of expectations results in a certain frame of AI coverage. What we observe are AI-related stories streamlined for a mainstream audience, built on predigested narratives about the ‘natural’ progress of technological development that aim to make AI into a much-needed national resource (Colleret and Gingras, 2022). The result, in Canadian media and abroad, is a particular kind of techno-chauvinism as a desirable future (Broussard, 2019; Dandurand et al., 2022; Joly, 2010, 2015; Joly and Le Renard, 2022).

Third, our findings call further into question the status of legacy media when covering science and technology controversies. Beyond debates about the role of legacy media in liberal democracies, freezing out controversiality is a process that takes place in the mundane practices of translation. These practices create something like a cold situation in news making processes about science and technology, and in some ways, manufacture consensus and freeze needed debates on the place of AI in our economy and society.

What might be AI's antifreeze? Diversifying sources in legacy media (or making it a hot situation, in Callon's (1998) characterisation) would certainly contribute to public debates on AI and create conditions for inflaming its controversiality. Adding new (critical) voices to AI coverage might open debate and warm AI's cold situation. However, the everyday pressure to produce journalistic content in a short time span, with fewer resources available to journalism now than ever (Winseck, 2021), complicates these efforts, according to a freelancer: There are shortcuts that have to be taken. And that's a shame. But it's also a little bit our reality as well. So the reason why [other experts] are not contacted that much is often because it's more efficient to contact people you know. … It's something we should not be doing, but we do it anyway: we contact people because we know [in advance] what they are going to tell us and we [need] what they have to say for our article.

The implicit question that we sought to tackle is addressed to the porous field of controversy studies: do researchers report controversies or produce them? Throughout our paper, we implied that AI should be controversial. Given its expected propensity to generate the next industrial revolution, AI should be magma, but instead has cooled. Researchers then must consider whether they are willing to re-kindle AI's uncertainties, undoing their own identities as authoritative expert witnesses.

Footnotes

Acknowledgments

Writing this paper would not have been possible without the collaboration and enthusiastic support of Marek Blottière, Guillaume Jorandon, Meaghan Wester, Nick Gertler, Rob Hunt, Natalia Balska, and Nicolas Chartier-Edwards. Special thanks go to Colette Brin, Alain McKenna, Chloé Sondervorst, and Sophie Toupin for the fruitful discussions on the state of AI coverage in Canada during the launch of our research report, Training the News: Coverage of Canada's AI Hype Cycle (2012-2021). We would also like to thank the guest editors of this special issue, the entire Shaping AI team, Sachil Singh, and Matthew Zook.

Declaration of conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the Open Research Area (grant number 2004-2020-0004).