Abstract

Despite the immense societal importance of ethically designing artificial intelligence, little research exists on public perceptions of ethical artificial intelligence principles. This gap is all the more striking given that ethical artificial intelligence development aims to be human-centric and to benefit society as a whole. In this study, we investigate how ethical principles (explainability, fairness, security, accountability, accuracy, privacy, and machine autonomy) are weighted in comparison to each other. This is especially important, since considering all ethical principles simultaneously is not only costly but sometimes even impossible, so developers must make specific trade-off decisions. In this paper, we offer initial answers on the relative importance of ethical principles for a specific use case: the use of artificial intelligence in tax fraud detection. The results of a large conjoint survey (

Introduction

Artificial intelligence (AI) has enormous potential to change society. While the widespread implementation of AI systems can certainly generate economic profits, policymakers and scientists alike also highlight the ethical challenges that accompany AI. Most scholars, politicians, and developers agree that AI needs to be developed in a human-centric and trustworthy fashion so that it benefits the common good (Berendt, 2019; Jobin et al., 2019). Trustworthy and beneficial AI requires that ethical challenges be considered during all stages of the development and implementation process. While plenty of work addresses ethical AI development, there is surprisingly little research investigating public perceptions of those ethical challenges. This lack of citizen involvement is striking because developing ethical AI, in a normative sense, aims to be human-centric and to benefit society as a whole. Moreover, insights into citizens’ perceptions of ethical principles will inform developers tasked with designing ethical AI systems and decision-makers entrusted with implementing such systems in social contexts (Berendt, 2019). We, therefore, set out to shed light on public perceptions of the ethical principles outlined in ethical guidelines. In particular, we investigate how people prioritize different ethical principles. Accounting for the trade-offs between the different ethical principles is especially important because maximizing them simultaneously often proves challenging or even impossible when designing and implementing AI systems. For instance, aiming for a high degree of explainability of AI systems can conflict with the ethical principle of accuracy, since a high degree of accuracy tends to require complex AI models that cannot be fully understood by humans, especially laypersons. Thus, taking the goal of ethical AI development seriously requires decision-makers to take the opinions of the (affected) public into account.

This paper offers initial answers on the relative importance of ethical principles. We use an AI-based tax fraud detection system as a case in point. Such systems, already in use in several European countries, detect patterns in large amounts of tax data and flag suspicious cases, which are then analysed in depth by a human. In a large (

Ethical guidelines of AI development

AI increasingly permeates most areas of people’s daily lives, whether in the form of virtual intelligent assistants, as a recommendation algorithm for movie selection, or in hiring processes. Such areas of application are only made possible by the accumulation of huge amounts of data, so-called Big Data, that people constantly leave behind in their digital lives. Although these AI-based technologies aim to take tasks off people’s hands and make their lives easier, collecting and processing personal data is also associated with major concerns. boyd and Crawford (2012) emphasize the importance of handling Big Data in an ethically responsible way. Scandals such as Snowden’s revelations about mass surveillance by US intelligence agencies (Steiger et al., 2017) or the collection of data from millions of Facebook users by Cambridge Analytica for the purpose of personalized advertising and election interference in the 2016 US presidential election (Hinds et al., 2020) have caused great public outcry. As a result, public attention was drawn more strongly to the issue of privacy. Policymakers are increasingly reacting to these concerns. For example, shortly after the Cambridge Analytica scandal became public, the European Union’s General Data Protection Regulation came into force. This is considered an important step forward in the field of global political convergence processes and helps to create a global understanding of how to handle personal data (Bennett, 2018).

However, Big Data not only leads to privacy concerns, but can also undermine transparency for users of online services. This lack of transparency is further exacerbated by the fact that algorithms are sometimes too complex for laypersons to understand, which is often referred to as a black box (Shin and Park, 2019). Questions regarding comprehensibility and explainability are therefore at the core of algorithmic decision-making and its outcome (Ananny and Crawford, 2018). These questions become particularly relevant when algorithms make biased decisions and systematically discriminate against individual groups of people. For example, the COMPAS algorithm used by some US courts systematically disadvantaged black defendants by giving them a higher risk score for the probability of recidivism than white defendants (Angwin et al., 2016). Similarly, a hiring algorithm that Amazon developed and ultimately decided not to use systematically discriminated against female candidates (Köchling and Wehner, 2020). Algorithmic discrimination can be caused by flawed or biased input data or by the mathematical architecture of the algorithm (Shin and Park, 2019). Thus, AI systems run the risk of reproducing or even exacerbating existing social inequalities with detrimental effects for minorities. Such algorithmic unfairness then leads to the question of who is accountable for possibly biased decisions by an AI system (Busuioc, 2020; Diakopoulos, 2016). All of these questions have been extensively discussed in the fairness, accountability, and transparency in machine learning (FATML) literature (Shin and Park, 2019). The different concepts are closely intertwined. For example, Diakopoulos (2016) points out: ‘Transparency can be a mechanism that facilitates accountability’ (p. 58).

To address these concerns and to define common ground for (self-)regulation, governments, private sector companies, and civil society organizations have established ethics guidelines for developing and using AI. The goal is to address the challenges outlined by the scientific community and thus to ensure so-called ‘human-centered AI’ (Lee et al., 2017), or ‘human-centric’ AI (European Commission, 2019). For example, the High-Level Expert Group on AI (AI HLEG) set up by the European Commission calls for seven requirements of trustworthy AI: (1) human agency and oversight, (2) technical robustness and safety, (3) privacy and data governance, (4) transparency, (5) diversity, non-discrimination and fairness, (6) societal and environmental well-being, and finally (7) accountability (European Commission, 2019). Along similar lines, the OECD recommends a distinction between five ethical principles, namely (1) inclusive growth, sustainable development, and well-being; (2) human-centred values and fairness; (3) transparency and explainability; (4) robustness, security, and safety; and (5) accountability (OECD, 2021).

Some researchers have taken a comparative look at the numerous guidelines published in recent years and have highlighted which ethical principles are emphasized across the board (e.g. Hagendorff, 2020; Jobin et al., 2019). There is widespread agreement on the need for ethical AI, but not on what it should look like in concrete terms. Hagendorff (2020) highlights that the requirements for accountability, privacy, and fairness can be found in 80% of the 22 guidelines he analysed. Thus, to a large extent, the guidelines mirror the primary challenges for human-centric AI discussed in the FATML literature. At the same time, however, Hagendorff (2020) points out that it is precisely these principles that can be most easily operationalized mathematically and thus implemented in the technical development of new algorithms.

Jobin et al. (2019) conducted a systematic review of a total of 84 ethics guidelines from around the globe, although the majority of the documents originate from Western democracies. In total, the authors identify 11 overarching ethical principles, five of which (transparency, justice and fairness, non-maleficence, responsibility, and privacy) can be found in more than half of the guidelines analysed. The attributes of beneficence and of freedom and autonomy can still be found in 41 and 34 of the 84 guidelines, respectively. The ethical principles of trust, sustainability, dignity, and solidarity, on the other hand, are mentioned in fewer than a third of the documents (Jobin et al., 2019).

In this paper, we focus on the seven most prominent ethical principles, which are discussed in most of the existing guidelines analysed by Jobin et al. (2019). In addition to the aforementioned principles of transparency (or explainability), fairness, responsibility (accountability), and privacy, Jobin et al. (2019) list non-maleficence, freedom and autonomy, and beneficence. They conceive ‘general calls for safety and security’ (p. 394) as non-maleficence. At the core of this principle lies the requirement for the technical security of the system, for example, in the form of protection against hacker attacks. In this way, unintended harm from AI, in particular, should be prevented (European Commission, 2019). According to Jobin et al. (2019), the freedom and autonomy issue addresses, among other things, the risk of manipulation and monitoring of the process and decisions, as also addressed by the AI HLEG. In light of this challenge, implementing human oversight in the decision-making process can ensure that human autonomy is not undermined and unwanted side effects are thus avoided (European Commission, 2019). However, decision-making procedures are perceived as fair when the procedure guarantees a maximum degree of consistency on the one hand and is free from personal bias on the other hand (Leventhal, 1980). Therefore, the neutrality of an algorithmic decision, that is, the absence of human bias, might explain why algorithmic decision-making is perceived as fairer than human decisions (Helberger et al., 2020; Marcinkowski et al., 2020). Even though these perceptions are context-dependent (Starke et al., 2021), it can be assumed that in some use cases no human control is desired. This is especially important to consider when personal bias or, at worst, corruptibility of human decision-makers could be suspected, that is, in tax fraud detection (Köbis et al., 2021). In this sense, the use of AI can lead to less biased decisions (Miller, 2018).
Finally, beneficence refers to the common good and the benefit to society as a whole. However, reaping this benefit requires algorithms that do not make any mistakes. The accuracy of AI is therefore decisive for societal benefit. This is because only a high level of predictive accuracy or correct decisions made by an AI can generate maximum benefit (Beil et al., 2019). Accordingly, AI systems used in medical diagnosis, for instance, can only improve personal and public health if they operate as accurately as possible (Graham et al., 2019).

While all ethical principles highlighted in the AI ethics guidelines seem desirable in principle, they can cause considerable challenges in practice. The reason is that when designing an AI system, it is often infeasible to maximize the different ethical aspects simultaneously. Thus, multiple complex trade-off matrices emerge (Binns and Gallo, 2019; Köbis et al., 2021). Two examples help to illustrate this point. First, the more information is available about a user’s wants, needs, and actions, the more helpful and accurate the recommendations that algorithms can make on social media platforms. This information includes not only private data about a user, such as the browsing history, but also sensitive data, such as gender. Collecting this data and simultaneously improving the recommendation can result in accuracy–privacy (Machanavajjhala et al., 2011) or accuracy–fairness trade-offs (Binns and Gallo, 2019). Second, for a company to assess whether its hiring algorithm discriminates against social minorities, it needs to collect sensitive information from its applicants, such as ethnicity, which may violate fundamental privacy rights, leading to a fairness–privacy trade-off (Binns and Gallo, 2019). By adding more variables such as transparency, security, autonomy, and accountability to the mix, highly complex trade-offs between the various ethical principles emerge.

Public preferences for AI ethics guidelines

Human-centric AI is an essential, yet fuzzy concept used in ethical AI research. For instance, some authors argue that AI can only be human-centric if, on the input side, it considers the sociocultural complexity of humans and, on the output side, it provides explanations that are easy to understand for laypeople (Riedl, 2019). Another concept of human-centric AI focuses on the overarching objective of AI, namely that it is used ‘in the service of humanity and the common good, with the goal of improving human welfare and freedom’ (European Commission, 2019, p. 4). A common denominator of those different concepts is that accounting for perceptions of those most affected by decisions made by algorithmic systems is a key strategy to achieve human-centric AI. A recent example from the UK illustrates that violating ethical principles when designing and implementing AI—in this case, an automated system that graded students in schools—can lead to substantial public outrage (Kelly, 2021). Empirical research further suggests that perceiving AI as unethical has detrimental implications for an organization in terms of a lower reputation (Acikgoz et al., 2020) as well as a higher likelihood for protests (Marcinkowski et al., 2020) and for pursuing litigation (Acikgoz et al., 2020). Thus, to address the fundamental question of which kind of AI we want as a society, detailed knowledge about public preferences for AI ethics principles is key. A surging strand of empirical research addresses this question and finds that public preferences for AI are highly dependent on the context, as well as on individual characteristics (Pew Research Center, 2018; Starke et al., 2021). While some people perceive algorithms to be acceptable in some domains (e.g. social media recommendation), they reject them in others (e.g. predicting finance scores). Also, judgments about AI hinge considerably on sociodemographic features, such as age or ethnicity. 
In the US, a study by the Pew Research Center (2018) identifies four major concerns voiced by respondents: (1) privacy violation, (2) unfair outcomes, (3) removing the human element from crucial decisions, that is, the belief that some tasks can be better evaluated by humans, who do not rely solely on measurable characteristics (e.g. the inclusion of empathy in a decision), and (4) the inability of AI systems to capture human complexity, which refers to the notion that algorithms cannot take into account individual aspects of humans in their decisions and therefore make inaccurate decisions.

The empirical literature further shows that people largely want the ethical principles advocated in the legal guidelines to be incorporated. First, people base their assessment of an AI system on its accuracy. The seminal study by Dietvorst et al. (2015) finds that people avoid algorithms after seeing them make a mistake, even if the algorithm still outperforms human decision-makers. Along similar lines, people lose trust in faulty AI systems (Robinette et al., 2017). However, studies have found that people still follow algorithmic instructions even after seeing them err (e.g. Salem et al., 2015). Second, fairness is a crucial indicator for evaluating AI systems (Starke et al., 2021). When an AI system is perceived as unfair, it can lead to detrimental consequences for the institution implementing such a system (Acikgoz et al., 2020; Marcinkowski et al., 2020). Third, empirical evidence suggests that keeping humans in the loop of algorithmic decisions, that is, ensuring human oversight at least at some points of the decision-making process, is perceived as fairer (Nagtegaal, 2021) and more legitimate (Starke and Lünich, 2020) compared to leaving decisions to algorithms. Fourth, in terms of transparency, the literature yields mixed results. On the one hand, more openness about the algorithm is essential for building trust in AI systems (Neuhaus et al., 2019), involving the users (Kizilcec, 2016), reducing anxiety (Jhaver et al., 2018), and improving user experience (Vitale et al., 2018). On the other hand, studies show that too much transparency can impair user experience (Lim and Dey, 2011) and confuse users, complicating the interaction between humans and AI systems (Eslami et al., 2018). Further, the results of a conjoint survey conducted by König et al. (2022) show that while the transparency of AI systems is valued, the cost consumers may have to pay is seen as a more important feature.
Fifth, privacy protection can be an essential factor for evaluating AI systems, leading people to reject algorithmic recommendations based on personal data (Burbach et al., 2018). However, other studies suggest that users are often unaware of privacy risks and rarely use privacy control settings on AI-based devices (e.g. Zheng et al., 2018). Sixth, empirical research suggests that people perceive unclear responsibility and liability for algorithmic decisions as one of the most crucial risks of AI (Kieslich et al., 2020). Furthermore, accountability and clear regulations are viewed as highly effective countermeasures to algorithmic discrimination (Kieslich et al., 2020). Along similar lines, other studies found that perceptions of accountability increase people’s satisfaction with algorithms (Shin and Park, 2019) as well as their trust in algorithms (Shin et al., 2020). Lastly, in terms of security, people consider a loss of control over algorithms as a crucial risk of AI systems (Kieslich et al., 2020).

Only a few studies, however, compare the influence of different ethical principles on people’s preferences. In several studies, Shin and colleagues tested the effects of three crucial aspects of ethical AI: fairness, transparency, and accountability. The results, however, are mixed. While fairness had the most substantial impact on people’s satisfaction with algorithms (followed by transparency and accountability) (Shin and Park, 2019), transparency was the strongest predictor for people’s trust in algorithms (followed by fairness and accountability) (Shin et al., 2020). Another study found that explainability had the most decisive influence on algorithmic trust (Shin, 2020). However, to the best of our knowledge, no empirical study has looked at different trade-off matrices between the various ethical principles and investigated people’s preferences for single principles at the expense of others. Therefore, we propose the following research question:

However, considering the diversity of social settings and beliefs in society, it is probable that members of the public differ in how they prioritize ethical principles, that is, that distinct ethical preference patterns for AI systems exist. Hence, we ask the following research question:

The literature suggests that human-related factors influence the perception of AI systems. For example, empirical studies have found that age (Grgić-Hlača et al., 2020; Helberger et al., 2020), educational level (Helberger et al., 2020), self-interest (Grgić-Hlača et al., 2020; Wang et al., 2020), familiarity with algorithms (Saha et al., 2020), and concerns about data collection (Araujo et al., 2020) affect the perception of algorithmic fairness. Hancock et al. (2011) performed a meta-analysis of factors influencing trust in human–robot interaction and identified, among others, demographics and attitudes towards robots as possible predictors. Building on this literature, we test whether the emerging ethical design patterns differ with respect to such human-related factors. Hence, we ask RQ3.

Method

Sample

The data was collected via the online access panel (OAP) of the market research institute

We cleaned the data according to three criteria: (1) low response time for the entire questionnaire (1

After data cleaning, 1099 cases remained. In total, our sample consisted of 593 (54.0%) women and 503 (45.8%) men, while 3 (0.3%) indicated nonbinary. The average age of the respondents was 47.1 years (

Procedure

Initially, respondents were asked to answer several questions concerning their perceptions of and opinions on AI. To evaluate the preference for ethical principles in the design of AI systems, we integrated a conjoint survey with seven attributes into the questionnaire. The use case was an AI-based tax fraud detection system. Such systems are already in use in many European countries, for example, France, the Netherlands, Poland, and Slovenia (Algorithm Watch, 2020), and also in the state of Hesse in Germany (Institut für den öffentlichen Sektor, 2019). In our study, respondents were presented with a short text (179 words) describing the use case of AI in tax fraud detection. The text stated that these systems can be designed differently. We then described the seven key principles of AI ethics guidelines, which we derived from the review article by Jobin et al. (2019): explainability (as a measurement for the dimension ‘transparency’), fairness, security (as a measurement for the dimension ‘non-maleficence’), accountability (as a measurement for the dimension ‘responsibility’), accuracy (as a measurement for the dimension ‘societal well-being’), privacy, and limited machine autonomy (for the exact wording of the attributes, see Table 1). Notably, we chose to include machine autonomy because we assumed that in the special case of tax fraud detection, the absence of human oversight might be preferred due to possible bias reduction. In the following, the ethical principles are also called ‘attributes’.

Description of the attributes.

After reading the short introductory text, respondents were told that an AI system can have different configurations of the ethical principles. If the system satisfied a principle, it was indicated with a green tick; if the principle was not met, it was marked with a red cross. Respondents were presented with a total of eight cards showing different compositions of AI systems in randomized order. The configurations varied only in which ethical principles were fulfilled. For each card, we asked respondents to indicate how much they preferred the configuration of ethical principles shown on the card. At the end of the questionnaire, respondents provided some sociodemographic information.

Measurement

Conjoint design

The strength of conjoint surveys lies in the ability to analyse a variety of possible attributes simultaneously (Green et al., 2001). This is particularly relevant for attributes that can potentially offset each other in reality, as argued for the trade-offs between the ethical principles. While asking for the approval of the principles separately is likely to yield high scores across the board, conjoint surveys force respondents to make a choice between imperfect configurations of the principles. Furthermore, conjoint surveys can also be conducted with a fractional factorial design. Thus, it is possible to predict respondents’ preferences for all possible combinations, even if they only rate a small fraction of them. As described above, we treated the seven most prominent ethical design principles outlined by Jobin et al. (2019) as attributes (transparency, fairness, non-maleficence, responsibility, beneficence, privacy, and machine autonomy). We chose sub-codes for some ethical principles to tailor the broad concepts to our use case of tax fraud detection. As attribute levels, we simply marked whether an ethical principle was complied with or not.

To determine the different compositions of the cards used in our study, we calculated a fractional factorial design using a standard ‘order’ allocation method and random seed. This method produces an orthoplan solution in which combinations of attributes are well balanced (see Table 2).
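To illustrate what a balanced fractional factorial design with eight cards and seven two-level attributes can look like, the following minimal Python sketch builds one such design from three base columns and their interaction products. This is an illustration under our own assumptions, not the orthoplan actually used in the study: the attribute order, the function names, and the construction method are hypothetical, and the resulting cards will generally differ from those in Table 2.

```python
from itertools import product

# Attribute names follow the paper; their order here is an arbitrary assumption.
ATTRIBUTES = ["explainability", "fairness", "security", "accountability",
              "accuracy", "privacy", "machine_autonomy"]


def build_cards():
    """Return 8 cards; each maps an attribute to 1 (fulfilled) or 0 (not)."""
    cards = []
    for a, b, c in product((-1, 1), repeat=3):
        # Three base columns plus all of their interaction products give
        # seven mutually orthogonal +/-1 columns (a 2^(7-4) design).
        signs = (a, b, c, a * b, a * c, b * c, a * b * c)
        cards.append({name: (s + 1) // 2 for name, s in zip(ATTRIBUTES, signs)})
    return cards


def is_balanced_and_orthogonal(cards):
    """Check that each attribute is fulfilled on half of the cards and that
    every pair of attribute columns is uncorrelated."""
    cols = [[2 * card[name] - 1 for card in cards] for name in ATTRIBUTES]
    balanced = all(sum(col) == 0 for col in cols)
    orthogonal = all(
        sum(x * y for x, y in zip(cols[i], cols[j])) == 0
        for i in range(len(cols))
        for j in range(i + 1, len(cols))
    )
    return balanced and orthogonal


cards = build_cards()
```

In such a design, every principle is fulfilled on exactly four of the eight cards, and no two principles are confounded with each other, which is what allows main effects to be estimated from only eight of the 128 possible configurations.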

Orthoplan.

Measures

Results

All calculations were performed in R (V4.0.3). The analysis code, including R packages used, is available upon request.

Relative importance of ethical principles

To answer RQ1, we conducted a conjoint analysis in R. In particular, we computed a linear regression for every respondent with the attributes as independent variables (dummy coded) and the ratings of the cards as the dependent variable. Thus, 1099 regression models were calculated to show the preferences of every respondent; the regression coefficients are called the part-worth values (Härdle and Simar, 2015). We then calculated the average value of the regression coefficients to determine the preferences of the German population for an ethical design of AI.
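The per-respondent estimation described above can be sketched as follows. The original analysis was carried out in R; this Python version is only an illustrative reimplementation under our own assumptions. All function names are hypothetical, and the toy design (two attributes, four cards) and toy ratings are invented for the example rather than taken from the study.

```python
def solve_linear_system(a, b):
    """Solve a @ x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    m = [row[:] + [b[i]] for i, row in enumerate(a)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= factor * m[col][c]
    x = [0.0] * n
    for i in reversed(range(n)):
        tail = sum(m[i][j] * x[j] for j in range(i + 1, n))
        x[i] = (m[i][n] - tail) / m[i][i]
    return x


def part_worths(design, ratings):
    """Ordinary least squares for one respondent.

    design:  one row of 0/1 dummies per card (1 = principle fulfilled)
    ratings: that respondent's rating of each card
    Returns the attribute coefficients (part-worths) without the intercept.
    """
    x = [[1.0] + [float(v) for v in row] for row in design]
    k = len(x[0])
    xtx = [[sum(r[i] * r[j] for r in x) for j in range(k)] for i in range(k)]
    xty = [sum(r[i] * y for r, y in zip(x, ratings)) for i in range(k)]
    return solve_linear_system(xtx, xty)[1:]


def mean_part_worths(design, all_ratings):
    """Average the individual part-worths across respondents."""
    per_person = [part_worths(design, r) for r in all_ratings]
    n = len(per_person)
    return [sum(p[j] for p in per_person) / n for j in range(len(design[0]))]


# Toy data (invented): two attributes, four cards, two respondents.
toy_design = [[0, 0], [1, 0], [0, 1], [1, 1]]
toy_ratings = [[1, 3, 2, 4],   # part-worths 2 and 1
               [2, 2, 5, 5]]   # part-worths 0 and 3
toy_means = mean_part_worths(toy_design, toy_ratings)  # approximately [1.0, 2.0]
```

Estimating one model per respondent, rather than one pooled model, keeps the individual coefficients available for later steps such as clustering respondents by their preference profiles.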

Part-worth of attributes

Predictably, all regression coefficients (part-worths) were positive (

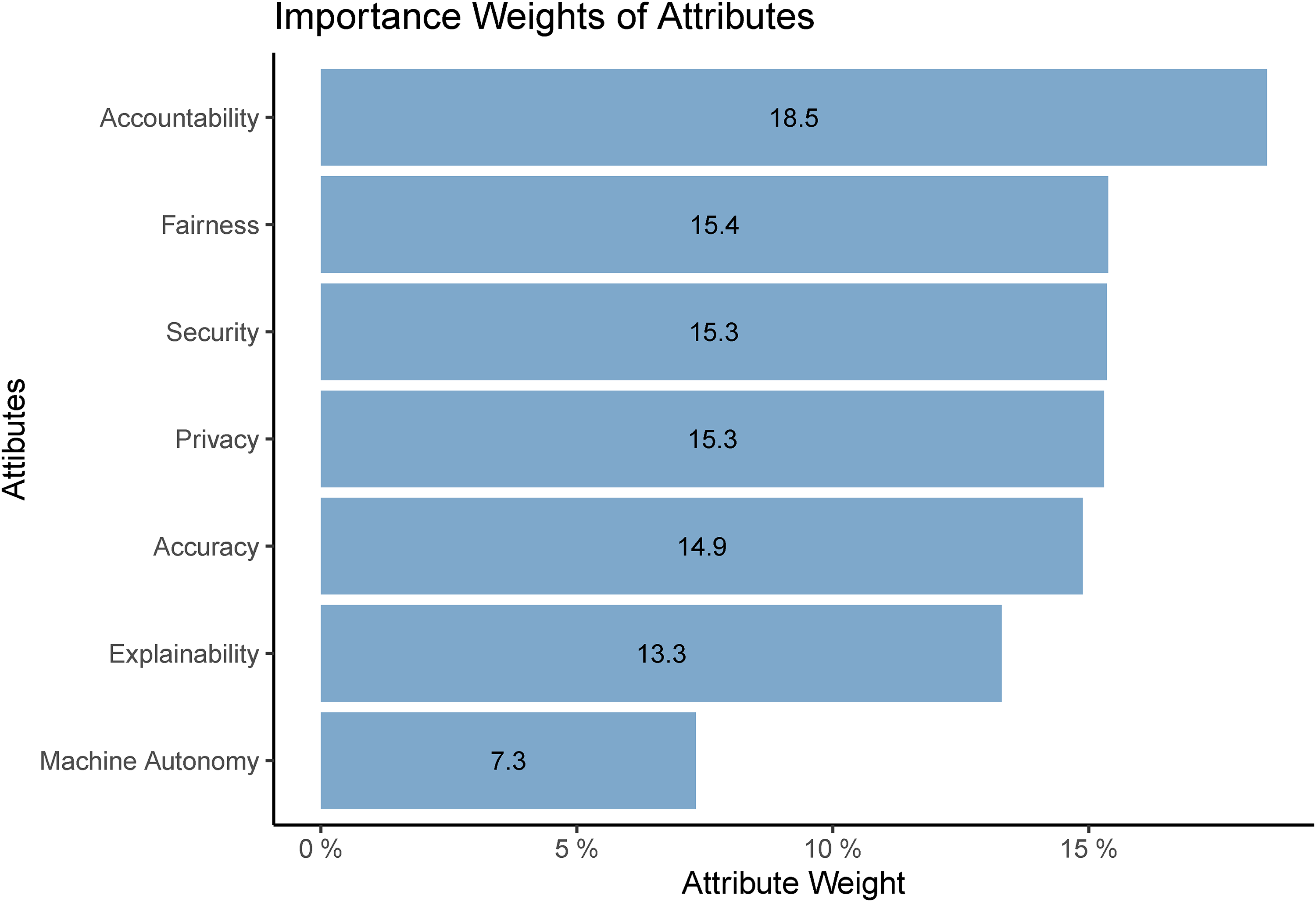

We looked more closely at the differences in the importance of fulfilling the ethical principles, or, in other words, people’s preferences for certain ethical principles over others. For that, we calculated the importance weights for each attribute (see Figure 1). Importance weights can be obtained by dividing each mean attribute part-worth by the total sum of the mean part-worths.
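The normalization just described amounts to a single division per attribute. The short Python sketch below shows the computation; the function name is hypothetical and the numbers are invented for illustration, so they are not the study's results. The calculation presupposes that all mean part-worths are positive.

```python
def importance_weights(mean_part_worths):
    """Divide each mean part-worth by the sum of all mean part-worths.

    mean_part_worths: dict mapping attribute name -> mean part-worth.
    Assumes every value is positive, so the weights lie between 0 and 1.
    """
    if any(v <= 0 for v in mean_part_worths.values()):
        raise ValueError("all mean part-worths must be positive")
    total = sum(mean_part_worths.values())
    return {name: value / total for name, value in mean_part_worths.items()}


# Invented example values, not taken from the study.
example = {"accountability": 3.0, "fairness": 2.0, "machine_autonomy": 1.0}
weights = importance_weights(example)
```

By construction the weights sum to one, so each can be read as the share of the total preference shift that is attributable to fulfilling the respective principle.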

Overall importance weights of attributes.

The results suggest that accountability is, on average, perceived as the most important ethical principle. Fairness, security, privacy, and accuracy are on average equally important to the respondents. The explainability of AI systems is slightly less important. Lastly, machine autonomy is the least important for the respondents. However, the importance weights of the attributes are relatively balanced in aggregate.

Preference patterns among the public

To answer RQ2 and RQ3, we conducted

We used respondents’ regression coefficients as cluster-forming variables. The number of clusters was determined using the within-cluster sum of squares (‘elbow’) method with the Euclidean distance measure. The Euclidean distance was chosen because the cluster variables follow a Gaussian distribution and have few outliers. The results suggest a solution of
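The elbow method can be sketched as follows: for each candidate number of clusters, a partition is computed and its within-cluster sum of squares (WCSS) is recorded; the number of clusters is then chosen where the curve flattens. The Python sketch below uses k-means with squared Euclidean distance as the clustering algorithm, which is our assumption for illustration, since the clustering procedure is not fully specified in the text above. The function names, the number of restarts, and the toy points are likewise hypothetical.

```python
import random


def squared_distance(p, q):
    """Squared Euclidean distance between two points."""
    return sum((a - b) ** 2 for a, b in zip(p, q))


def kmeans_wcss(points, k, seed=0, iterations=50):
    """Run Lloyd's k-means once and return the within-cluster sum of squares."""
    rng = random.Random(seed)
    centroids = [list(p) for p in rng.sample(points, k)]
    for _ in range(iterations):
        clusters = [[] for _ in range(k)]
        for p in points:
            nearest = min(range(k),
                          key=lambda c: squared_distance(p, centroids[c]))
            clusters[nearest].append(p)
        # Recompute each centroid as the mean of its members; an empty
        # cluster keeps its previous centroid.
        new_centroids = [
            [sum(dim) / len(members) for dim in zip(*members)]
            if members else centroids[c]
            for c, members in enumerate(clusters)
        ]
        if new_centroids == centroids:
            break
        centroids = new_centroids
    return sum(min(squared_distance(p, c) for c in centroids) for p in points)


def elbow_curve(points, max_k, restarts=10):
    """Best WCSS over several random starts for k = 1 .. max_k."""
    return {
        k: min(kmeans_wcss(points, k, seed=s) for s in range(restarts))
        for k in range(1, max_k + 1)
    }


# Toy data (invented): three visually separated groups in two dimensions.
toy_points = [(0, 0), (0, 1), (1, 0),
              (10, 10), (10, 11), (11, 10),
              (20, 0), (20, 1), (21, 0)]
curve = elbow_curve(toy_points, max_k=4)
```

In an application to the survey data, each point would be one respondent's vector of seven part-worths, and the curve would be inspected for the value of k beyond which additional clusters reduce the WCSS only marginally.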

Figure 2 shows the preference profiles of the five cluster groups. The yellow group includes people who do not seem to care much about the ethical design of systems. The purple group values fairness, accuracy, and accountability. The green group demands privacy, security, and accountability. The blue group considers all ethical principles equally important. Finally, the main characteristic of people belonging to the red group is their strong disapproval of machine autonomy.

Preference profiles.

In the next step, we labelled the clusters and calculated the cluster sizes. Cluster 1 (red) was labelled as ‘Human in the Loop’, cluster 2 (blue) as ‘Ethically Concerned’, cluster 3 (green) as ‘Safety Concerned’, cluster 4 (purple) as ‘Fairness Concerned’, and cluster 5 (yellow) as ‘Indifferent’. Table 3 depicts the average approval ratings for each cluster group per card and in total across all cards.

Mean card ratings per group.

The results shown in Figure 2 suggest that the largest group consists of people who treat all ethical principles equally and highly important,

In contrast, the second largest group consists of people whose system approval ratings are only slightly affected by an ethical design of an AI system,

A total of 167 (15.2%) respondents were considered as

The group of

In the fifth cluster, people oppose machine autonomy and accordingly strive for human control,

Cluster description

We address RQ3 by describing the five cluster groups based on several characteristics, which we group into two categories: socio-demography (

As the MANOVA shows statistical significance,

Cluster description.

The ANOVA results show that the clusters significantly deviate from each other in all characteristics analysed. In the following, we will describe the profile of each cluster group in detail. All mean scores of the variables are displayed in Table 4.

Human in the loop

This group overwhelmingly demands human control and, thus, is strongly opposed to machine autonomy. Persons belonging to this group tend to be older and less educated. Regarding AI opinions, they are rather uninterested in AI and have a low acceptance of AI. Moreover, they are comparatively more aware of risks, have quite low opportunity perceptions, and low levels of trust in AI.

Ethically concerned

People who demand high standards on all ethical principles are comparatively young and well educated. They also have a high interest and trust in AI. Furthermore, they tend to accept AI in various domains and have a relatively high opportunity perception and a relatively low risk perception.

Safety concerned

Respondents belonging to the

Fairness concerned

The

Indifferent

The

Discussion

This study sheds light on a crucial area of ethical AI, namely public perceptions of ethical challenges that come along with developing algorithms. Empirical insights into citizens’ preferences for fundamental principles of ethical AI and the trade-offs between them are essential to advance the notion of human-centric AI. Our results can further have practical implications: They can inform developers about prioritizing certain ethical principles when designing AI systems. The findings also provide vital information for decision-makers tasked with implementing AI systems into society according to fundamental societal values.

In this paper, we investigated opinions about the ethical design of AI systems by jointly considering different essential ethical principles and shedding light on their relative importance (RQ1). We further explored different preference patterns (RQ2) and how these patterns can be described by sociodemographics as well as AI-related opinions (RQ3).

From ethical guidelines to legal frameworks?

Our results show that there are no major differences within the German population with regard to the relative importance of ethical principles. However, we find a slight accentuation of

Thus, ethical guidelines do not exist in a vacuum but address the needs of the public. Among German citizens, accountability is demanded first and foremost. In the context of our study, accountability is equal to liability; hence, there is a need to clearly identify an actor who can be held accountable for losses and who, in the end, can be regulated. This is in line with empirical evidence showing that legal regulations are perceived not only as effective, but also as demanded countermeasures against discriminatory AI (Kieslich et al., 2020). As AI technology is considered a potential risk or even threat, at least among a share of the public (Kieslich et al., 2021; Liang and Lee, 2017), setting up a clear legal framework for regulation might be a way to further enhance trust in and acceptance of AI. In this respect, the EU has already taken on a pioneering role, as the European Commission recently proposed a legal framework for the handling of AI technology (European Commission, 2021). This proposal sets up a classification framework for high-risk technology and even lists specific applications that should be closely controlled or even banned. Considering the results of our study, this process might be a fruitful opportunity to include citizen perceptions and, for example, to make clear who takes responsibility for poor decisions made by AI systems. Besides, it is the articulated will of the European Commission to put humans at the centre of AI development. Our empirical results suggest that ethical design matters and, if the EU takes its goals seriously, ethical challenges should play a major role in the future. Strictly speaking, ethical AI primarily requires regulatory political or legal actions. Hence, the implementation of ethical AI is a political task, which need not necessarily include computer scientists. However, from our results, we can also draw conclusions for the ethical design of AI systems in a technological sense.

Ethical design and demands of potential stakeholder groups

Our results also suggest that citizens value ethical principles differently. After clustering the respondents’ preferences, we found five different groups that differ considerably in their preferences for ethical principles. This suggests that there might not be a universal understanding and balance of the importance of ethical principles in the German population. People have different demands and expectations regarding the ethical design of AI systems. Thus, these different preference patterns have implications for the (technical) design and implementation of AI systems. For example, the

To illustrate with our results, consider an algorithmic admission system in universities (Dietvorst et al., 2015): the system requirements articulated by the affected public (in this case, students) might differ widely from those for a job seeker categorization system (e.g. the algorithmic categorization system used by the Austrian job service) (Allhutter et al., 2020). While students are presumably younger, well educated, and more interested in AI, those affected by a job seeker categorization system are presumably older and less positive about AI. Our results suggest that operators of AI systems should address the needs of these stakeholders differently if they aim for greater acceptance. For the admission system, it might be useful to highlight that such systems deliver precise results and treat students equally, since students, based on their group characteristics, primarily belong to the group of the

Notably, there is also a group of people of substantial size (the

The largest share of the German population values all ethical principles equally and, thus, sets very high standards for ethical AI development. Consequently, the bar for approval of AI systems is very high for this group. However, if the principles are complied with, ethical AI can lead to high acceptance of AI. Common characteristics of this group are a high level of education, young age, and high interest in AI as well as high acceptance of AI. Because this group is especially demanding in terms of AI design, it may pose a serious problem for developers. As outlined, some trade-off decisions must eventually be made, as simultaneously maximizing all ethical principles is very challenging. Our results suggest that it will not be easy to satisfy the demands of ethically concerned people: if ethical trade-offs are made, they may very well lead to reservations against AI.

However, considering only the public perspective in AI development and implementation might also have serious ramifications. Srivastava et al. (2019) show, regarding algorithmic fairness, that the broad public prefers simple and easy-to-comprehend algorithms to more complex ones, even if the complex ones achieve higher factual fairness scores. As AI technology is complex by nature, it is possible that many people will not understand some design settings. In the end, this might lead to a public demand for systems that are easier to understand, even though a more thorough design of those systems might follow ethical principles to an even greater extent. Thus, we highlight that the public perspective on AI development needs more attention in science as well as in technology development and implementation. At the same time, we emphasize that the public perspective should complement rather than dominate other perspectives on AI development and implementation.

Limitations

This study has some limitations that need to be recognized. We used an algorithmic tax fraud identification system as the use case in our study; hence, our results are only valid for this specific context. We decided to present only one use case because we wanted to describe preference profiles and cluster characteristics. This approach is similar to studies in the field of fairness perceptions (Grgić-Hlača et al., 2018; Shin and Park, 2019; Shin, 2021). However, public perceptions of AI are highly context-dependent, and importance weights and cluster profiles might differ depending on the particular use case. Therefore, further studies should test various use cases simultaneously and compare the results across those contexts. Context-comparing studies have already been performed for public perceptions of trust in AI (Araujo et al., 2020) and threat perceptions (Kieslich et al., 2021).

Additionally, the survey was conducted in Germany, and the findings are thus only valid for the German population. We encourage further studies that replicate and extend our study in other countries. Cross-national studies could detect nation-specific patterns regarding the importance weights and preference profiles of ethical principles. A comparison with the US, Chinese, and UK populations would be especially interesting, since those countries follow different national strategies for the development of AI than Germany.

Conclusion

Ethical AI is a major societal challenge. We showed that compliance with ethical requirements matters for most German citizens. To gain wide acceptance of AI, these ethical principles have to be taken seriously. However, we also showed that a notable portion of the German population does not demand ethical AI implementation. This is critical, as compliance with ethical AI design depends, at least to some degree, on the broad public. If ethical requirements are not explicitly demanded, one might fear that the implementation of those principles will not meet the highest standard, especially because ethical AI development is expensive. However, we also showed that people who demand high ethical standards are interested in AI as well as aware of its risks. Thus, raising public interest in the technology would be a promising way to raise demands for ethical AI.

Supplemental Material

Supplemental material, sj-pdf-1-bds-10.1177_20539517221092956 for Artificial intelligence ethics by design. Evaluating public perception on the importance of ethical design principles of artificial intelligence by Kimon Kieslich, Birte Keller and Christopher Starke in Big Data & Society

Footnotes

Acknowledgements

The authors would like to thank Pero Došenović, Marco Lünich and Frank Marcinkowski for their feedback on the paper. Furthermore, the authors would like to thank Pascal Kieslich and Max Reichert for their feedback on the methodological implementation and evaluation of the results.

Declaration of conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This study was conducted as part of the project

Supplemental material

Supplemental material for this article is available online. The analysis code is available on request.

References
