Abstract

This article examines the social acceptability and governance of emotional artificial intelligence (emotional AI) in children’s toys and other child-oriented devices. To explore this, it conducts interviews with stakeholders with a professional interest in emotional AI, toys, children and policy to consider implications of the usage of emotional AI in children’s toys and services. It also conducts a demographically representative UK national survey to ascertain parental perspectives on networked toys that utilise data about emotions. The article highlights disquiet about the evolution of

Introduction

Against a backdrop of increasing datafication of children (Lupton and Williamson, 2017) and surveillance capitalism (Zuboff, 2019), this article investigates children’s toys and services that make use of emotional AI. Although smart and connected toys are growing in popularity (Mascheroni and Holloway, 2019), toys that use emotional AI (emotoys) remain few and limited in scope. Yet, on the basis of rising interest in the use of emotional AI and biosensing across diverse life domains (McStay, 2018), growth in networked objects, and the long history of toys and care (Turkle, 2011), it is likely that emotion and affect-based entanglement between children, networked objects and AI-based playthings is picking up speed. While we are sensitive to debates around the psychology of childhood development, this is not our area of expertise. Rather, we are interested in the ethical and governance concerns that arise, the terms (if any) on which child emotional AI may be acceptable, and what parents think of these technologies.

To explore these issues we conducted interviews with data protection regulators, leading academics, privacy-oriented non-governmental organisations (NGOs), and diverse industrial and service sectors that use emotional AI. Against a context of literature on dataveillance and children, connected and smart toys, emotional AI and criticism of affective computing, the leading theme to emerge from interviewee concerns is

Emotional AI in children’s toys and services

Emotional AI refers to technologies that use affective computing and artificial intelligence techniques to try to sense, learn about, and interact with human emotional life (Emotional AI Lab, 2020). The practice of using computer sensing to interact with emotional life has origins in the 1990s, with the field of affective computing (Picard, 1997). What McStay (2018) terms ‘emotional AI’ and ‘empathic media’ are made possible through weak, narrow and task-based AI efforts to see, read, listen, feel, classify and learn about emotional life. This involves data about words, images, facial expressions, gaze direction, gestures, voices and the body, which itself encompasses heart rate, body temperature, respiration and electrical properties of skin.

Applied to children, emotional AI raises concern about the conversion of child behaviour and subjectivity into biocapital (Lupton and Williamson, 2017). Questions about child bodies and profiling sit within a context of longstanding concern about media effects on children, online screen-based media, and the increased tendency for children to be ‘always on’ in all contexts of childhood (such as play, communication, education and promotion of wellbeing; Livingstone et al., 2018). Leaver’s (2017) work on parents is also relevant to this article, questioning the positioning of datafied surveillance as a normalised part of childcare where

Despite the seeming novelty of emotional AI in toys, there is a historical context. Toys with robo-qualities of automata reach back to the 1800s and beyond. For example, in the 1920s,

The 1990s saw a new development. Whereas toys in the past were typically Rorschach objects, upon which a child projects feelings and situations, ‘smart toys’ involved new forms of mutual engagement that involved awareness of

Emotoys themselves are still relatively rare although home social robots, such as Anki’s

Research design

To understand ethical questions around emotoys and parental perspectives we conducted 13 in-depth interviews (Miller and Crabtree, 1992) and a demographically representative UK national survey of parental attitudes to emotional AI in child-focused technologies (

Thematic analysis of interviews

Due to familiarity with the topic of emotional AI (McStay, 2018) and Internet of Things (IoT) data protection policy (Rosner and Kenneally, 2018), analysis of the 13 interview transcripts followed an adaptive approach (Layder, 1998). This balances deductive theory with inductive insights from data. To analyse the interview data, a hand-coded approach was employed (Cresswell, 1994; Miles et al., 2014). This was preferred to a software-led approach due to low interviewee numbers, sensitivity to the context of the interviews, voice and behaviour cues, and other contextual factors that might have been missed by an automated approach. The practicalities of coding were done by annotating sentences and paragraphs of

Survey design

To explore issues arising from analysis of the interview data, we sought insights from parents regarding: (1) acceptability of emotoys and use of emotional AI in child-focused technologies; and (2) by what terms use of these intimate technologies in toys should be governed. Conducted in the UK in February 2020 and executed online via commercial survey organisation, ICM Unlimited, the survey (

Key findings

The interviews generated four key themes: (1)

Generational unfairness

The principle of

When your own play in your own bedroom is no longer private, when your own home is no longer private, when your own feelings and emotions are no longer yours to share when you choose to share them, but are being analysed and shared with others even when you're not ready to share them – I think we've done those kids a tremendous disservice […]. (Interview, 2019)

I have concerns there will be emotional tracking of children throughout their adulthood, and that it starts to become available to the university that they're applying to, or the healthcare that they would like to obtain, or the job that they're after. (Interview, 2019)

Emotional AI amplifies informational asymmetry because children are unable to challenge the framings of emotion embedded in technology. For example, Jag Minhas, of emotional AI company

Manipulation

Key among unfairness concerns was manipulation by companies that do not have child wellbeing foremost in mind, economic value of data about emotion, and power differentials. Industry expert Ben Bland (Sensum) remarked that ‘if anyone [in the emotion analytics industry] is focusing their research on children as a data set, they're going to have a huge advantage in the market if they're going to start creating toys and things off the back of it’. On manipulation and third parties, Bland notes this data would be a premium product and that negative emotion would be most valuable because ‘people spend more when depressed’. Indeed, studies of social media companies show that they have long profiled the full gamut of child emotions and profited as a result (Barassi, 2020). For Jenny Radesky (Developmental Behavioural Paediatrician with a focus on digital media) emotional AI used with children creates generational unfairness through power imbalances between companies and children, largely due to children’s ‘limited awareness about their own emotions … about data collection and about machine learning and all of the other technical aspects that go into their interactions with technology …’ (Interview, 2019). Interviewees recognised the ease with which these technologies might be woven into a dystopian narrative, but were broadly keen to avoid this. Amelia Vance (Director of Youth and Education Privacy at the Future of Privacy Forum) for example saw scope for positive uses of child nudging, but also stated that technologies that influence emotions ‘are pushing past nudging to outright manipulation’, which in turn creates a ‘discrepancy of power between the companies offering a convenience, and people adopting it without understanding the implications’ (Interview, 2019).

Memory: Data longevity and the right to a past

Another aspect of generational unfairness is concern about the right to have parts of childhood forgotten. With pre-existing concern about ‘sharenting’ (Blum-Ross and Livingstone, 2017) and right to data erasure debates (Lambert, 2019), emotoys expand concerns about data longevity. For example, Golin observes that increasing toys’ capacity for conversational ability means that children would ‘tend to see them as confidants and confide in them’ (Interview, 2019). This is a reasonable assertion given human–computer interface research on Amazon’s Alexa that finds ‘young children are highly influenced by the spoken nature of conversational agents, respond to the agents at a very young age, and imbue the agent with human-like qualities’ (Sciuto et al., 2018: 866). For Golin, confidence is problematic because, in the case of

Amplifying Leaver’s (2017) concerns about intimate surveillance in social media, apps and smart objects, it is instructive to recollect that toys such as

Living with ontologically uncertain objects

Already introduced in relation to unfairness and data longevity is the issue of synthetic personalities that may simulate empathy without meaningful testing or oversight. Pasquale (2020) has taken issue with simulated empathy due to potential for deceptive mimicry and the imbalance of care between the child (who cares) and the synthetic (that mimics). On this point, Minhas remarks, ‘there's a risk that emotional and AI-powered toys could encourage a shift away from the human touch’ (Interview, 2019). A key problem for interviewees with a professional interest in children is that the term ‘child’ represents a massive span of intellectual and emotional abilities. Aligning with Pasquale on mimicry, Radesky pointed out that young children are ‘more likely to take things at face value and are magical thinkers’ (Interview, 2019), thus confusing appearance with reality. A consequence for Radesky is that ‘they think it [the synthetic] should be treated morally,’ which as Pasquale (2020) notes, risks manipulation by manufacturers (and potentially third-party companies).

Susceptibility of parents

The second main theme emerging from our study is

Aligned with concern about data literacy is parental emotional susceptibility. Radesky remarks that having an infant child is a ‘huge growth period for parents’ and that [emotionally] ‘you don't stop developing just when you reach the age of 18’ (Interview, 2019). With adults still developing, learning to cope with being parents, and children who may refuse to be calm or sleep, this is not an ideal situation for making rational decisions about privacy and data protection. The susceptibility is that a technology may solve an immediate short-term problem (such as calming a crying child) at the cost of a long-term trade-off. This issue is understood in privacy scholarship where, even with sufficient information, people in general (never mind frazzled parents) are likely to trade off long-term privacy for short-term benefits (Acquisti and Grossklags, 2005). Radesky worries that emotion-sensing may come to be relied upon by stressed and worn-out parents to understand why a child is behaving in a given way. As a consequence, child-oriented emotional AI will, for Radesky, be first marketed to parents to tell them what their child is thinking and feeling when their child is distressed, not sleeping, behaving in unusual ways, or refusing to calm down.

Susceptibility also comes in the form of fear of failure (Leaver, 2017; Stark and Levy, 2018). For Radesky this creates an unethical business opportunity:

I worry that some simplistic technology is going to jump in and say, “Hey, we'll be able to predict your child's tantrums and stop them before they start” [and] “We'll be able to tell you what sort of things make your child happy.

Immature signals

Interviewees both with and without technical expertise in emotion-sensing see it as methodologically problematic. Radesky remarks, ‘With an algorithm like deep learning, which is just trial-and-error, emotions are not trial-and-error. They are so much more complex than that and they represent a deep network of different regulatory, physiological and mental states within the brain’ (Interview, 2019). A key problem is industrial overreliance on Ekman and Friesen’s (1971) six basic emotions (Stark and Hoey, 2020). Fifty years later, even psychologists who support face-based emotion research recognise complexity, this taking the form of an expanded repertoire of emotions, context specificity, multimodal sensing, situation appraisals, expressive tendencies, temperament, signal intent, subjective feeling states and multiple meanings of expressions (Cowen et al., 2019).

On children specifically, Barrett et al. (2019) problematise face-based basic emotion systems due to their lack of contextual awareness (e.g. who, why and where a facial expression was made). They observed that facial coding is especially poor with children, due to their immaturity and lack of development in emoting. There are also more subtle problems. Comparing supervised and unsupervised machine learning about emotion, Azari et al. (2020) find ‘above-chance classification accuracy in the supervised analyses … suggesting that there is some information related to some of the emotion category labels’ (p. 9). However, the unsupervised learning stage did not replicate the emotion data clusters that supervised learning began with. The significance is that machine learning in emotional AI may discover a signal in data that is stipulated by a system that seeks to stimulate an emotion (such as a toy). Yet, when the stipulated signal is removed, ‘that signal may not be sufficiently strong to be detected with unsupervised analyses alongside other real world variability in other features’ (2020: 10). In other words, the creation of emotion labels imposes an unnatural (or at least contingent) vantage point on the world, where one may find what one wishes to see. These emotion labels are problematic because of their contextual, cultural and ethnocentric nature. This is a well-rehearsed argument in social constructionist accounts of emotions (McStay, 2018), but through empirical work testing supervised and unsupervised machine learning, Azari et al. (2020: 10) conclude that ‘emotion categories are better thought of as populations of highly variable, context-specific instances.’ This leads them to challenge predetermined labels in building supervised classifiers in emotional AI systems.
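The logic of this point can be illustrated with a minimal toy sketch in Python. It is not a reproduction of Azari et al.'s data or method: the data set, the nearest-centroid classifier, the two-cluster k-means routine and every function name below are hypothetical choices made purely for illustration. One feature (`x`) carries a weak signal about a stipulated label, while a second feature (`y`) stands in for larger, label-unrelated real-world variability.

```python
# Toy illustration only: hypothetical data, not taken from Azari et al. (2020).
# x carries a weak signal about the stipulated label ("A" or "B");
# y stands in for larger variability that is unrelated to the label.

def make_data():
    points, labels = [], []
    for label, xs in (("A", (0, 1, 2)), ("B", (1, 2, 3))):
        for x in xs:
            for y in (-10, 10):
                points.append((float(x), float(y)))
                labels.append(label)
    return points, labels

def sq_dist(p, q):
    return (p[0] - q[0]) ** 2 + (p[1] - q[1]) ** 2

def mean(points):
    n = len(points)
    return (sum(p[0] for p in points) / n, sum(p[1] for p in points) / n)

def supervised_accuracy(points, labels):
    """Nearest-centroid classifier fitted WITH the labels.

    Returns accuracy on the same data it was fitted to (resubstitution),
    which is enough for this illustration but optimistic in general.
    """
    names = sorted(set(labels))
    centroids = {
        name: mean([p for p, l in zip(points, labels) if l == name])
        for name in names
    }
    predicted = [min(names, key=lambda n: sq_dist(p, centroids[n])) for p in points]
    return sum(a == b for a, b in zip(predicted, labels)) / len(labels)

def two_means(points, max_iter=20):
    """Plain k-means with k=2, fitted WITHOUT labels.

    Deterministic start: the first point and the point farthest from it.
    """
    c0 = points[0]
    c1 = max(points, key=lambda p: sq_dist(p, c0))
    centroids = [c0, c1]
    assignment = None
    for _ in range(max_iter):
        new_assignment = [
            0 if sq_dist(p, centroids[0]) <= sq_dist(p, centroids[1]) else 1
            for p in points
        ]
        if new_assignment == assignment:
            break
        assignment = new_assignment
        centroids = [
            mean([p for p, a in zip(points, assignment) if a == k]) for k in (0, 1)
        ]
    return assignment

def cluster_label_agreement(assignment, labels):
    """Best agreement between the two clusters and the two labels."""
    names = sorted(set(labels))
    direct = sum(names[a] == l for a, l in zip(assignment, labels)) / len(labels)
    return max(direct, 1 - direct)

if __name__ == "__main__":
    points, labels = make_data()
    print("supervised accuracy:", supervised_accuracy(points, labels))
    print("cluster/label agreement:", cluster_label_agreement(two_means(points), labels))
```

In this constructed case the supervised classifier scores above the 50% chance level because it is told which labels to look for, whereas the unsupervised clusters follow the larger, label-unrelated variability and agree with the labels only at chance. The sketch says nothing about real emotion data; it only shows how a stipulated label can be recoverable with supervision yet absent from the structure an unsupervised method finds.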

The lack of high-quality training data for analysing emotion expressions and affect was raised by multiple interviewees. Minhas observed ‘a lot of the training data that's out there is still at a very early stage, it’s kind of academic research’ (Interview, 2019). As to achieving high confidence in automated emotion detection, Minhas adds, ‘I think it will be multimodal’ – necessitating extra signals and data sources to triangulate and identify a child’s state. Although this may improve accuracy, it perforce increases surveillance breadth (McStay and Urquhart, 2019). As a consequence, Minhas adds, ‘And certainly as a business, our ethos would probably steer us away from … building our technology into objects where the main recipients of which would be children’. This takes us beyond the scope of this article, but it is worth noting that emotional AI companies operating in public spaces (such as retail, in the case of

Guarded interest

No interviewee expressed unreserved support for toys that purport to sense emotion, but some did express

So, partly, I'm with that large proportion of the general public that just wants to say, “Stop!” But I can see many incredibly well-meaning claims from decent organizations about how this can be used to do good. So the health industry or the health sector is probably the best case of that. And I think this is … the debate to be had. We have to decide what is going to benefit children. (Interview, 2020)

Eva Lievens, a European child law expert and smart toy researcher, is less negative about smart toys and emotoys: ‘Some of these toys are really, really entertaining and really interesting for children; sometimes educational’ (Interview, 2019). Lievens adds that the value of emotoys depends on their purpose: ‘I could imagine that, for instance, very sick children who are in hospital and who are lonely, that maybe such kind of toys can enhance their wellbeing.’ Also seeing potential for positive uses, Amelia Vance (Future of Privacy Forum) described tests with CogniToys’s conversational toy

Need for good governance

European legal experts were cautious and critical, but did not reject the premise of emotoys. They did however insist on the

if a toy company [were to] develop such an IoT [Internet of Things] toy … what they should really do is also listen to children during the development phase […] Children also have “a right to be heard” because it is in ‘the best interest principle of the child. (Council of Europe, 2016)

Governance of emotional AI (and emotoys) is further complicated by GDPR being only one part of a wider governance system, especially as it overlaps with consumer protection. Damian Clifford (legal specialist in emotional AI) raised the issue of consent, which the GDPR requires to be freely given (Art. 7). Clifford is sceptical that consent to data processing is ‘freely given’ and has reservations about whether access to emotoys should be conditional upon consent. In addition to well-known problems of complex language and the length of time it takes to read privacy notices (Cranor et al., 2014), a more distinctive problem is that emotional AI may be integrated into a given service. This leads Clifford to suggest that ‘the boundaries between processing that is necessary for the provision of the service might come under something like a contract as grounds for processing as opposed to consent.’

In addition to minimal attention to emotion and consent, extra-territoriality was also raised by legal experts. The GDPR applies beyond the territorial boundaries of the EU for data processing activities. It applies for (1) offering of goods or services to data subjects

Special protections for emotion data?

In addition to unique provision for children, we were also interested in whether there should be special protections for data about emotion. McStay (2016, 2018) has argued for a class of privacy protection based on intimacy, but there is a good case to be made that existing provisions for biometrics and personal data are sufficient. Interviewees from a legal background agreed that emotion is of innate interest. However, they did not agree that special legal provisions are needed, although certain practices might need further restrictions. Asked whether more legislation is required, Clifford responded, ‘the more [laws] we adopt the more it seems to contradict what we've got…’ Rather: ‘I think what we need at the moment is some enforcement’ (Interview, 2019). Clifford also sees need for greater regulatory clarity on whether data about human emotion is personal data or sensitive personal data, where the boundaries lie, and at what point data about emotion fits into one category or the other. Eva Lievens similarly sees the intrinsic value in emotion, noting that emotion is inherent to a person’s identity, personality and to human intimacy. She adds that the goal of data protection regulation is not only to realise the right to data protection, but that it is also closely linked to privacy rights. Yet, on sensitive data, she observes that ‘there is already some kind of specific protection in relation to biometric data,’ meaning no extra law is required (Interview, 2019).

Timing and consent

The GDPR provides for six legal bases that allow personal data to be processed: consent, contractual obligations, legal obligations, vital interests, public interest and legitimate interests (Art. 6). It is thus conceivable that children’s emotional data could be processed without gaining consent. However, Verdoodt argued that ‘consent appears to be the only legal basis,’ adding:

as a company, if you opt for the ground of legitimate interest, you have to balance your legitimate interests with the data subject rights, with the rights of the data subject and the interests of the data subject. In this balancing test, the interests of children will be stronger or taken more into account than adult data subjects. So I think it will be difficult to use this as ground for processing. (Interview, 2019)

it's a reference to national contract law or law on capacities of children, or it is a reference to the US Children's Online Privacy Protection Act (COPPA), or it is a reference to, “Oh well, our neighbouring country is using that age.” But it's almost never developmentally justified reasoning on why a child is considered competent to give consent from a certain age (Interview, 2019).

Interviewees with legal expertise were also asked

I do think efforts in really stark and clear language that talk about what [devices] collect and what's happening with the information at point-of-sale is really important because I don't believe that it’s really informed consent once you get to point of downloading the app and you've already got this product that your child is excited about (Interview, 2019)

Survey findings: Parental perspectives

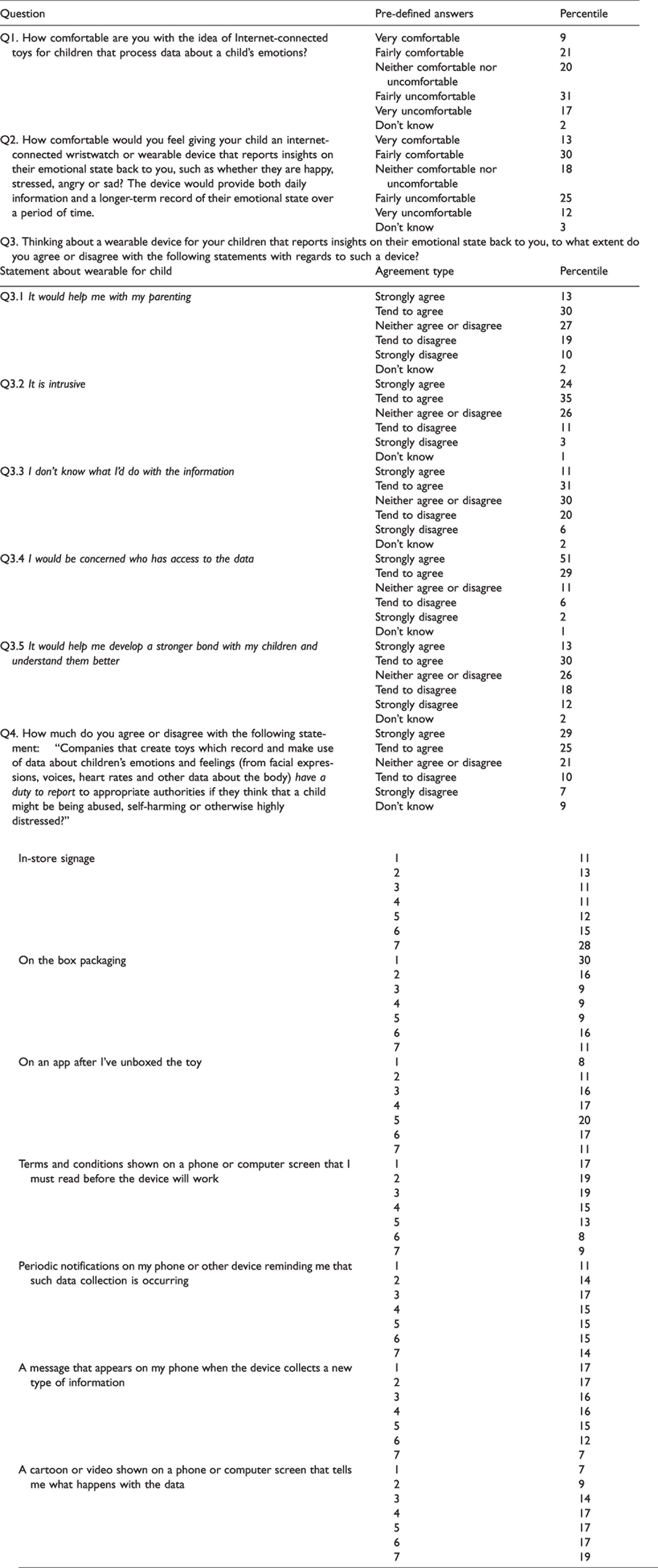

Having established four primary themes from the interviews (generational unfairness, susceptibility of parents, guarded interest and the need for good governance), we next sought survey-based insights from parents regarding the acceptability of emotoys and use of emotional AI in child-focused technologies, and by what terms use of these intimate technologies in toys should be governed. (See Table 2 for questions and findings.)

Interviewees asked about emotoys.

Parental perceptions of child-focused emotional AI.

Q1 asks: ‘How comfortable are you with the idea of Internet-connected toys for children that process data about a child’s emotions?’ Parents were not given extended descriptions of the technology, but were in effect asked to consider the principle of emotoys. We were especially interested in creepiness (McStay, 2018; Tene and Polonetsky, 2014), parental alarm and comfort with new technology (Livingstone, 2009). Parents display neither high comfort nor very low comfort, with 48% overall registering as ‘Uncomfortable’ (versus 30% overall as ‘Comfortable’). This suggests that while parents are clearly not comfortable with the idea of these technologies for a variety of reasons (some unpacked below in answers to other questions), parental alarm was not a leading sentiment.

Q2 asked parents: ‘How comfortable would you feel giving your child an internet-connected wristwatch or wearable device that reports insights on their emotional state back to you, such as whether they are happy, stressed, angry or sad? The device would provide both daily information and a longer-term record of their emotional state over a period of time.’

Q3 asked parents to consider wearable devices for children that report insights on a child’s emotional state back to parents. A key finding in Q3.1 is that 43% agreed that an emotion-sensing wearable would help them with their parenting, while 29% disagreed. These findings should be treated cautiously since the technologies were not explained, nor was the questionable effectiveness of using biometrics to infer emotion (Azari et al., 2020; Barrett et al., 2019; McStay, 2018). Nevertheless, the idea that these technologies could be a benefit to parenting was not dismissed. Follow-up work is required to explore this, but our initial reactions connect with Lupton and Pederson’s (2016a) aforementioned interest in tracking technologies, reassurance through quantification, and Leaver’s (2017) observation that

Q3.2 finds that 59% of parents agreed that a child-oriented wearable that tracks emotion is intrusive, although this too should be seen in light of the finding that parents broadly think it would help them with their parenting. Again, this gives support for Leaver’s (2017) point about intimate surveillance: parents are aware of being potentially invasive, yet this is justified on grounds of what is understood as ‘good parenting’. We agree with parents that it is intrusive, but add that if emotion trackers are used to assist with mental and emotional wellbeing (such as combatting child anxiety), it is questionable whether wrist-based surveillance devices will help given privacy concerns.

Q3.3 raises an apparent paradox: it finds that despite the perceived potential to help with parenting established in Q3.1, 42% in Q3.3 ‘strongly’ or ‘tended’ to agree that they did not know what they would do with information about their children’s emotions. Further qualitative work will help resolve this, but we think it is partially explained by: (1) longstanding acculturation of digital media into parenting and (2) lack of familiarity with devices that collect data about emotions. The question becomes what social adoption would look like as parents become more familiar with such devices, and what conventions would form around the data they produce. As Lupton and Pederson (2016b) have explored, it is useful to take lessons from acculturation processes in digital media, such as asynchronous messaging (like texting and emoji), real-time more intimate communication (phone) and video calls (to see visual images of their children as they chat). Indeed, this care for wellbeing from a distance helps explain parental interest in emotion trackers even if parents are not yet clear on what they would do with the data. Longhurst (2016) grants a glimpse of the nature of acculturalisation as she discusses the mediation of emotion in hybrid terms as the organic and inorganic commingle through communication technologies. McLuhanite in its vision of an extended sensorium (McLuhan and Fiore, 1967), the appeal is that emotion-based devices may promise ambient awareness of wellbeing, even if it is not clear to parents what they would do with that information. It will be interesting to see how social adoption and the technologies develop, especially in light of: (1) Longhurst’s argument that mothers perform the majority of emotional labour; (2) that emotion in relationships has long been mediated; and (3) that mediation enriches inter-connections.
For example, wearable manufacturers continue research into languages of haptic communication (McStay, 2018), which provides not only for communication, but touch-based intimacy from afar. We look forward to close-up study of these technologies in lived contexts, just as social media and messaging are prevalent today. This will help to understand child-focused emotional AI not only in terms of unfairness and the concerns posed in this article, but also in terms of acculturalisation among parents and children.

Q3.4 is about levels of concern over who would access data about the child. Eighty per cent of parents agreed that they would be concerned about who has access to the data, with only 8% disagreeing. Despite relatively extensive literature pointing to a low level of parental concern about data privacy and children (Lupton and Pederson, 2016b), we see a parallel with Livingstone et al.’s (2018) finding that while parents

Q4 was interested in reactions to the proposition that toy companies might have a duty to report to appropriate authorities if systems flag that a child might be being abused, self-harming or otherwise highly distressed. The question was motivated by: (1) an extension of research focus from parental to institutional surveillance; (2) the thorny ethics of child protection and intervention (Hall et al., 2016); and (3) concern from the technology ethics field on the need to defend human expertise from technophilic AI claims (Pasquale, 2020). Overall, 54% agreed with the premise versus 16% who disagreed. The point about human expertise is vital, especially given that emotion-sensing is a deeply problematic method (Barrett et al., 2019; McStay, 2018). As discussed above in the interview section on immature signals, Azari et al.'s (2020) findings about over-reliance on basic, folk and ethnocentrically specific categories of emotion are central because they confirm that emotion labels are social and contingent. Applied to our question of sensing whether a child might be being abused, self-harming or otherwise highly distressed, the scope for misunderstanding in emotional AI is profoundly problematic due to scope for false positives (and negatives) in the most sensitive of circumstances (child abuse).

Given longstanding concern about privacy and consent notification (Cranor et al., 2014), Q5 was interested in the means by which parents should be informed of the types and methods of data collection for these toys. Options given were: in-store signage; on the box packaging; on an app after unboxing; on a range of networked devices that must be shown before the toy will work; through periodic notifications reminding parents that data about their child is being collected; notification when a new type of data is being collected; and cartoons or a video that tell parents what data is being collected and how. Parents did not express a strong preference for any option and it is possible that respondent fatigue may have occurred. There are however some clear insights: Q5.1 finds that parents are not keen on in-store signage as a means of informing them about what data is collected, but

The finding of parental preference for on-the-box packaging may not be a scintillating insight, but it is very practical. It also resonates with the statement from the UK Information Commissioner’s Office that customers should be able to view privacy information, terms and conditions of use and other relevant information online without having to purchase and set up the device first, meaning customers can make informed decisions about whether to buy the device in the first place (ICO, 2020). The time-based dimension is important because it answers a problem in data protection provision and, with appropriate easy-to-digest information on the box, begins the informed consent process before the item has been bought. In relation to toys, it also helps address problematic consent stipulations in GDPR: that consent should be given

Conclusions

Emotional AI is the use of affective computing and artificial intelligence techniques to try to sense, learn about, and interact with human emotional life. This article has explored usage in toys (emotoys) and related child-focused objects (such as wearables that purport to track wellbeing). To explore the significance of these in relation to ethics, governance and data protection, the article made use of expert interviews and a UK national survey of parents. The interview stage raised diverse concerns about generational unfairness. Having digital origins in ‘sharenting’ and exploitation of children’s digital footprints, generational unfairness itself is not a new idea. Yet, unfairness and injustices through the datafication of emotional life introduce new concerns and questions. Interviewees opined that adults are doing a disservice to children, that they are not envisaging consequences and that they are creating gross power imbalances between children and corporations. Unfairness through imbalances took a range of forms, including manipulation of child vulnerability, that parts of childhood should remain in the past, unwanted labelling of behaviour, the need to try-for-size adult concepts like sexuality without a corporate data gaze, and socially unhealthy corporate strategic interest in the construction of synthetic behaviours.

Interviewees also saw parents as vulnerable, especially in relation to data literacy, inexperience of raising children, fatigue, fear of failure, concern about the loss of human skill in parenting and the outsourcing of these skills to questionable technologies. Interviewees flagged problems with methods behind these technologies, a point this article bolstered with discussion of contextual, cultural, ethnocentric and labelling problems in supervised machine learning. Methods aside, interviewees saw scope for good in child-focused emotional AI but insisted that it must be used in the best interests of the child. Governance was shown to be problematic, with all recognising that laws remain limited for children and questions of datafied emotion. However, expert interviewees did not recommend new law, but better enforcement of existing law. Regarding toys and other emo-objects themselves, consent was the preferred legal basis for personal data to be processed.

The survey stage generated ambivalent responses. Parents were concerned about privacy, yet also saw merit in emotion-enabled devices. Leaver’s (2017) work on parents and intimate surveillance provides a useful prism in that it recognises the affective context of parenting, fear of failure, inherent surveillance in parenting and longstanding datafication in parenting (such as pregnancy apps and sensors in infant cots). What might be seen as contradictory findings – such as broad agreement that emotion sensing wearables could help with parenting, but that parents did not know what they would do with this information about their children’s emotions – are to an extent resolved by seeing that parents want to do the right thing. With very high concern for privacy added, parents are pulled in multiple directions. We suggest that the survey results reflect the technology, parenting and governance landscape well. Given that parenting is inherently about care, communication, wellbeing, emotion, oversight, modulation of control, interest in new tools to help with parenting, and that parents are exploring the mediation of these in a highly imperfect governance context, the results make sense. We recommend close regulatory attention to these technologies due to questionable effectiveness, potential trading of long-term privacy for short-term benefits, and the conceivable severity of outcomes resulting from the processing and sharing of child data (we have the finding on potential child abuse in mind).

As a practical final point,

Footnotes

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work is supported by Economic and Social Research Council [grant number ES/T00696X/1] and Engineering and Physical Sciences Research Council [grant number EP/R045178/1].