Abstract

The integration of artificial intelligence (AI) into children's toys has transformed interactive play, and such toys are increasingly used for learning, leisure, and socialization. This article examines the evolution of AI in toys, exploring both its potential benefits and emerging concerns. A systematic review of 13 academic prototypes evaluates their features, purposes (e.g., learning, therapy, leisure), and usability tests, providing a comprehensive overview of the field. Additionally, the article conducts an industrial mapping of 91 AI toys released from November 2015 to January 2024, offering insights into the current market landscape. Drawing on Piaget's Theory of Cognitive Development, the study suggests that AI toys can enhance cognitive and social-emotional development, particularly in STEM skills and early digital literacy. However, it identifies a critical gap between the rapid growth of AI toys and the limited academic research on their educational value. This disconnect impedes independent assessment of industry claims, especially regarding emotional AI. The article concludes by emphasizing the need for interdisciplinary collaboration and regulatory frameworks to ensure the ethical design, marketing, and use of AI toys.

Introduction

The advent of artificial intelligence (AI) has transformed various industries. Among these, the toy industry stands out as a particularly vibrant field where AI is being integrated to create intelligent, interactive toys. AI integration in toys has redefined traditional play patterns, merging education and entertainment in unprecedented ways. This evolution underscores the growing significance of AI toys in child development, as they increasingly serve as tools for leisure, learning, and socialization (Rafferty and Hung, 2015).

This study addresses the gap between rapid technological advancements in the toy industry and the academic frameworks designed to understand and regulate these developments. By advocating for ethical and healthy standards in AI toy marketing and advertising, it aims to inform stakeholders and guide future innovation in this burgeoning sector.

The analysis focuses on AI toy prototypes developed by researchers, highlighting their features, methodologies, usability tests, and major innovations. It also explores the broader AI toy market, linking practical applications to academic discourse.

Background

AI toys

AI toys have emerged as a distinct and growing subset of smart and connected toys. While no standardized definition exists, this study defines AI toys as physical toy devices that incorporate artificial intelligence, such as machine learning, natural language processing, or adaptive algorithms, to personalize interactions, simulate decision-making, and respond dynamically to users.

In contrast, smart toys use sensor-based feedback and network connectivity, typically relying on rule-based responses (Tang and Hung, 2017). Internet of Toys (IoToys) refers to toys connected via the internet that enable data exchange and app integration but lack autonomous behavior (Mascheroni and Holloway, 2019).

AI toys are distinguished by their adaptive, semi-autonomous capabilities. Techniques such as machine learning, natural language processing, and computer vision enable them to personalize interactions, simulate decision-making, and learn from user behavior over time. These dynamic, context-sensitive features mark a significant departure from the static or reactive nature of earlier toy technologies.

While AI toys share surface similarities with smart toys and the Internet of Toys, they raise distinct ethical, legal, and developmental concerns not typically addressed by frameworks for general smart toys (McStay and Rosner, 2021). These concerns include algorithmic bias, persistent surveillance, the personalization of emotional responses, and long-term behavioral shaping (Fosch-Villaronga et al., 2023; Jones and Meurer, 2016). Recognizing AI toys as a separate category enables more effective evaluation of their pedagogical value, psychological impact, and implications for children's rights and data privacy.

Early examples (Figure 1) include Hello Barbie and Dino, which used speech recognition and IBM Watson (Albuquerque et al., 2020). Recent products such as Grok (Curio, 2024) incorporate ChatGPT to enable conversational interaction with children. Kai (Thames & Kosmos, 2023) combines cameras with facial, sound, and gesture recognition, allowing it to react to the gestures and sounds children make.

AI toy examples: (1) Hello Barbie (Mattel, 2015), (2) Dino (Moynihan, 2015), (3) Kai (Thames & Kosmos, 2023), (4) Grok, Grem, and Gabbo (Curio, 2024).

Jean Piaget's stages of cognitive development

AI toys aim to blend play with learning, fostering children's cognitive and social development. Jean Piaget's stages of cognitive development provide a useful framework for understanding how intelligent toys can be designed to support growth across different ages (Lobra, 2020).

Piaget identified four stages of cognitive development: the sensorimotor stage (birth to 2 years), the preoperational stage (2–7 years), the concrete operational stage (7–12 years), and the formal operational stage (12 years and up) (Piaget, 1950). In the sensorimotor stage, infants learn primarily through sensory and motor interactions with their environment, developing motor skills and object permanence; early language skills also begin to emerge at this stage. During the preoperational stage, children engage in symbolic thinking and develop language, although their logical reasoning remains limited. The concrete operational stage is marked by more logical thinking about concrete objects and events, such as understanding conservation, although reasoning about hypothetical situations remains difficult. Finally, in the formal operational stage, adolescents develop the capacity for abstract thought and hypothetico-deductive reasoning (Piaget, 1989).

AI toys can align with these stages by offering tailored experiences that match developmental needs (Miglino et al., 1999; Singh et al., 2024). Piaget's theory emphasizes active learning through interaction with the environment. AI toys that adapt to children's responses align with this concept by providing dynamic feedback that supports cognitive development. For example, an AI toy that reacts to a toddler's actions reinforces sensorimotor learning, while toys for older children can encourage problem-solving and critical thinking. This article applies Piaget's theory to understand how AI toys in the current market align with developmental stages and calls for academic research to validate manufacturers’ educational claims.

Related works

As AI toys become more sophisticated and integrated into children's lives, research has not kept pace with these rapid advancements. Much of the existing literature addresses broader categories like smart or connected toys rather than AI toys specifically. While these studies explore issues such as privacy and security, they often fail to recognize AI toys as a distinct category requiring focused academic attention.

For example, Fosch-Villaronga et al. (2023) address the ethical and social concerns raised by smart connected toys, yet many of the examples they discuss, such as social robots and AI-powered decision-making systems, align more closely with AI toys. Loukil et al. (2017) reviewed privacy issues in IoT applications but did not treat AI toys as a distinct category. Mahmoud et al. (2018) analyzed privacy policies and terms of use of 11 smart toys, highlighting the urgent need to assess how these toys handle personal data. Similarly, Albuquerque et al. (2020) conducted a scoping review, identifying major privacy risks and mitigation solutions related to connected toys. Pontes et al. (2019) mapped smart toy security research, while Jones and Meurer (2016) focused specifically on one AI toy, Hello Barbie, demonstrating how its interactive design and data practices raise ethical and regulatory concerns.

Despite these contributions, no prior work has systematically mapped the industrial landscape of AI toys or compared it with academic research. While de Albuquerque and Kelner (2019) conducted an industrial mapping of toy user interfaces (ToyUI) from 2012 to 2017, their focus was on the evolution of toy interfaces rather than on the toys themselves. Our study fills this gap by analyzing recent industry innovations in AI toys and comparing them with academic developments. Instead of focusing solely on privacy and ethics, this study integrates technological trends, academic gaps, and industry developments, highlighting the need to treat AI toys as a distinct research category.

Method

This study consists of three parts. The first part presents the results of the academic mapping (AM), a systematic mapping of the existing research literature. We reviewed ten years of research, from January 2014 to January 2024, a period in which AI was evolving rapidly. The focus was on identifying relevant research prototypes.

The second part presents an industrial mapping (IM) of AI toy releases from November 2015 to January 2024. Hello Barbie, the first AI-powered doll on the market, which used speech recognition and progressive learning to engage with children, was released in November 2015. Starting with Hello Barbie, we searched multiple sources to curate a list of AI toys currently on the market.

The third part compares the results of the industrial mapping (IM) with those of the academic mapping (AM). By identifying the potential gaps between the rapid technological advances in the toy industry and the academic frameworks, we seek to understand and critique these developments.

Academic mapping

The academic mapping (AM) included data from five major digital libraries, selected for their strong focus on human-computer interaction (HCI), robotics, educational technology, and AI system design: the ACM Digital Library, SpringerLink, IEEE Xplore, ScienceDirect, and SAGE Journals. These fields closely align with the study's objective of identifying and analyzing academic prototypes of AI-enabled toys.

The initial search terms, “Internet of Toys” and “artificial intelligence toys,” returned only 98 results. Further searches were conducted to refine the search query by identifying alternative spellings and synonyms. We concluded that “smart toys,” “internet of toys,” and “connected toys” are the three most commonly used terms and are often used interchangeably in the literature, although some scholars emphasize fundamental differences between them (e.g., Fosch-Villaronga et al., 2023; Hall et al., 2022). The term “AI toys” was coined later and is often used interchangeably with these three terms. Final search queries (Figure 2) were formatted in both simple and Boolean forms to suit different library interfaces.

Search string used in the search process.

The refined queries yielded 1574 search results. Following the guidelines suggested by Garousi et al. (2019), we applied seven inclusion (IC1-7) and seven exclusion (EC1-7) criteria. Each exclusion criterion is the inverse of an inclusion criterion. Titles and abstracts were screened first, followed by introductions and conclusions. Full texts were reviewed only if prior sections passed. Table 1 summarizes the rules and exclusions.

Inclusion and exclusion criteria. Number of excluded articles.

The first exclusion criterion (EC1) was duplicate studies, resulting in the removal of 113 articles. Next, we excluded 1210 articles (EC2) describing smart/connected toys that lacked AI functions such as learning or personalization. Though interactive, these toys used only sensors and static code, without adaptive feedback or behavioral learning.

To maintain analytical focus, we limited our selection to fully developed, physical AI toy prototypes, excluding 141 articles that did not meet this criterion (EC3). These included articles focused on conceptual designs, VR/AR games, workshop reports, proposals for hardware or software toolkits, as well as studies centered on HCI frameworks, engineering principles, or computing methods.

We fully acknowledge that social, ethical, and legal perspectives are essential to understanding the broader implications of AI toys. However, addressing these dimensions in depth requires a distinct analytical framework and more space than this article allows. To ensure conceptual clarity, we have chosen to treat these issues in a separate, companion manuscript currently in preparation.

By narrowing the scope here, we aim to provide a clear and structured view of system-level innovation while laying a foundation for future interdisciplinary research. We believe this phased approach helps avoid conflating distinct lines of inquiry and better supports a comprehensive understanding of the AI toy ecosystem.

Further exclusions included non-English papers (EC4, n = 9), incomplete documents like posters or book chapters (EC5, n = 32), and inaccessible full texts (EC6, n = 21). Finally, only articles directly describing prototypes were retained (EC7, n = 36), leaving 13 for inclusion (Table 2). This comprehensive evaluation ensured that each study met our standards for relevance, quality, and adherence to our research objectives.

Studies included in the academic mapping (AM).

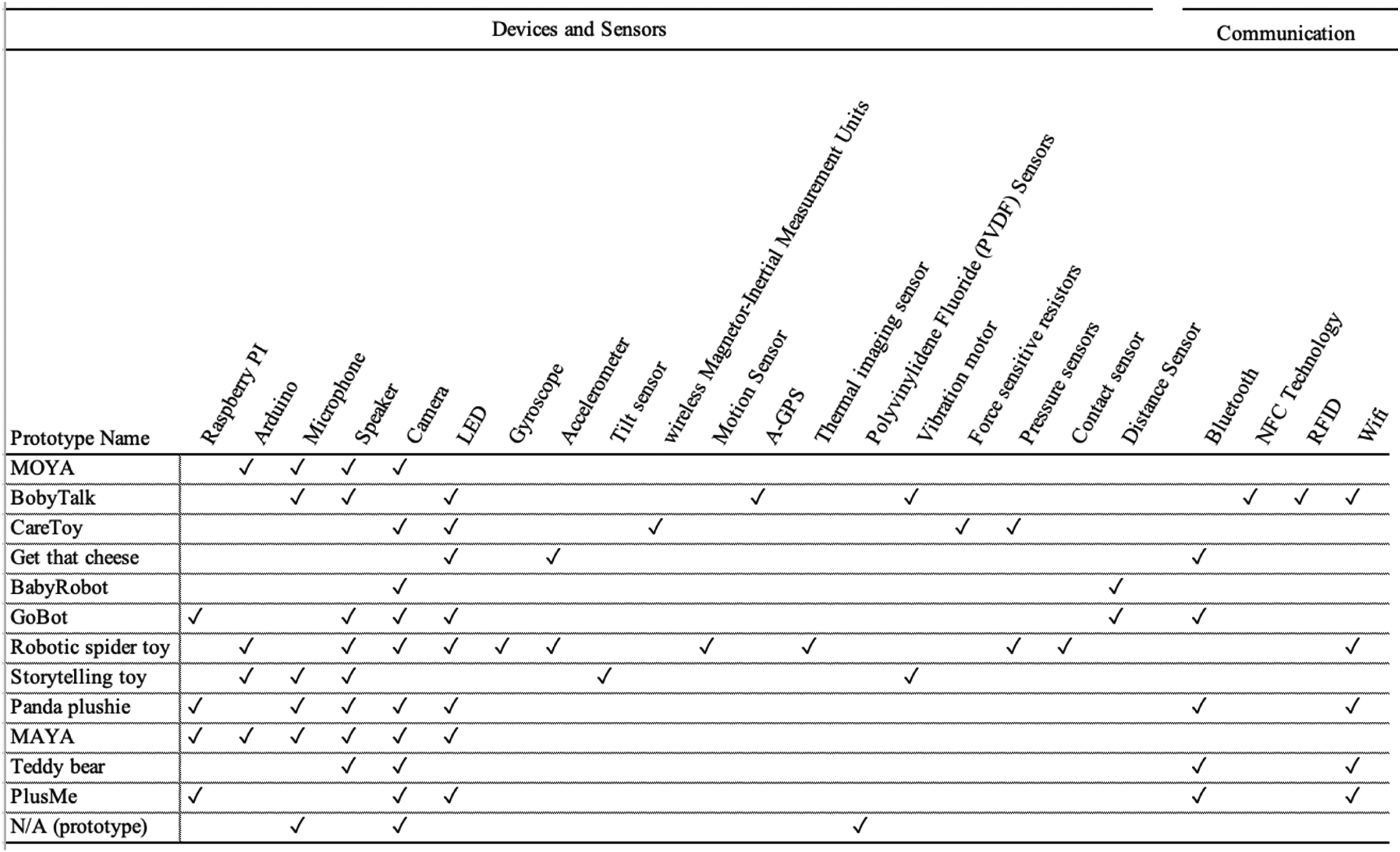
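The sequential screening described above can be illustrated as a pipeline in which each record is counted under the first exclusion criterion that removes it, so the retained records and the per-criterion exclusions always add up to the initial total. The sketch below is a minimal illustration, not the study's tooling: the `Record` class, its boolean fields, and the sample DOIs are hypothetical stand-ins for judgments that the authors made by reading titles, abstracts, and full texts.

```python
from dataclasses import dataclass


@dataclass
class Record:
    """Hypothetical bibliographic record; each flag stands in for a manual judgment."""
    doi: str
    has_ai_function: bool = True        # EC2: learning, personalization, etc.
    is_physical_prototype: bool = True  # EC3: fully developed physical toy
    language: str = "en"                # EC4: English only
    is_full_paper: bool = True          # EC5: not a poster, chapter, etc.
    full_text_available: bool = True    # EC6: accessible full text
    describes_prototype: bool = True    # EC7: directly describes a prototype


# Each criterion returns True when the record should be excluded.
CRITERIA = [
    ("EC2", lambda r: not r.has_ai_function),
    ("EC3", lambda r: not r.is_physical_prototype),
    ("EC4", lambda r: r.language != "en"),
    ("EC5", lambda r: not r.is_full_paper),
    ("EC6", lambda r: not r.full_text_available),
    ("EC7", lambda r: not r.describes_prototype),
]


def screen(records, criteria=CRITERIA):
    """Apply EC1 (duplicates) and then each criterion in order.

    A record is counted only under the first criterion that removes it, so
    len(records) == len(retained) + sum(excluded.values()).
    """
    seen, unique = set(), []
    for r in records:
        key = r.doi.lower()  # DOIs are case-insensitive
        if key not in seen:
            seen.add(key)
            unique.append(r)
    excluded = {"EC1": len(records) - len(unique)}
    retained = unique
    for label, excludes in criteria:
        kept = [r for r in retained if not excludes(r)]
        excluded[label] = len(retained) - len(kept)
        retained = kept
    return retained, excluded


records = [  # hypothetical records
    Record("10.1000/a"),
    Record("10.1000/A"),  # duplicate of the first record
    Record("10.1000/b", has_ai_function=False),
    Record("10.1000/c", language="de"),
    Record("10.1000/d"),
]
retained, excluded = screen(records)
assert len(records) == len(retained) + sum(excluded.values())
print([r.doi for r in retained], excluded)
```

The closing assertion is a cheap consistency check: if the per-criterion counts do not sum to the starting total, a record has been double-counted or lost.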

We then analyzed those 13 scientific articles based on 10 features, detailed in Table 3. These features covered various aspects of the toys’ designs and goals, such as their purpose, physical environment, social environment, methods of play, modes of engagement, interactivity, accompanying mobile applications, and AI functionalities like speech recognition and emotion prediction.

AI toy prototype features.

Next, we examined the methods used in the 13 research articles. Researchers often conduct usability tests to provide evidence of the prototypes’ effectiveness and practicality. By assessing user interactions with AI toys in both real-world and controlled settings, these tests highlighted strengths and areas for improvement. We identified whether studies used qualitative, quantitative, or mixed methods, documenting research designs such as surveys, behavioral measures, experiments, and interviews.

In addition, we reviewed the hardware components and communication technologies described in the prototypes. Sensors included motion detectors, cameras, and microphones, while communication channels encompassed Wi-Fi, Bluetooth, and other options. This evaluation provided insights into the technological capabilities and limitations of AI toys developed in academic settings.

The data synthesis process of the academic mapping (AM) involved a thematic analysis to identify common themes across the prototypes, revealing broader AI toy development trends such as interactive storytelling, educational use, and therapeutic applications. Figure 3 illustrates the full academic mapping process.

Outline of the systematic mapping process.

Industrial mapping

For the industrial mapping (IM) process, we used multiple search strategies to ensure a comprehensive collection of data on AI toy products. First, we examined AI toy products from toy brands with top market shares (e.g., Mattel, Disney, LEGO, and WowWee) (Gupta, 2025). Second, we added AI toy products mentioned in scholarly articles during the academic mapping (AM) process. Third, we scraped data from major online shopping platforms (Amazon and Google Shopping) using search terms “AI toys,” “AI-powered toys,” and “AI-enabled toys.” This step enabled us to explore the current market offerings and consumer preferences based on product availability.

The initial dataset included 735 observations. During the third step of the IM process, we observed a notable issue: many sellers falsely claimed their products were AI-enabled when, in fact, they did not incorporate any AI technology. For example, items like the “Smart AI Electronic Pet” (Amazon, 2025) and the “AI Flying Orb Ball Toy” (Amazon, 2024) featured buzzwords like “smart AI chip” or “interactive AI functions.” Yet upon inspection, they relied entirely on basic pre-programmed responses, mechanical movement, or gyroscopic sensors. To ensure data accuracy, we manually reviewed and removed all non-AI products. After data cleaning and deduplication, the final dataset comprised 91 items. The majority of products (51) were sourced from Amazon, followed by Google Shopping (20 products), top brand websites (11 products), and academic literature (9 products).
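Although the final classification relied on manual review, the cleaning step can be partly supported in code. The sketch below assumes hypothetical listing dictionaries with `title` and `description` keys; it removes duplicate listings by normalized title and flags descriptions containing the buzzwords quoted above so they can be inspected first. Keyword matching cannot establish whether a toy actually uses AI, so such flags would only prioritize, not replace, manual inspection.

```python
import re
import unicodedata

# Phrases quoted in the text; a real review list would be longer.
BUZZWORDS = ("smart ai chip", "interactive ai functions")


def normalize_title(title: str) -> str:
    """Lowercase, strip accents and punctuation, and collapse whitespace.

    Non-Latin characters are dropped, so this suits English listings only.
    """
    text = unicodedata.normalize("NFKD", title)
    text = "".join(ch for ch in text if not unicodedata.combining(ch))
    text = re.sub(r"[^a-z0-9]+", " ", text.lower())
    return " ".join(text.split())


def deduplicate(listings):
    """Keep the first listing seen for each normalized title."""
    seen, unique = set(), []
    for item in listings:
        key = normalize_title(item["title"])
        if key not in seen:
            seen.add(key)
            unique.append(item)
    return unique


def needs_manual_review(item) -> bool:
    """Flag listings whose description leans on AI buzzwords.

    A flag only prioritizes inspection; it cannot show whether a toy uses AI.
    """
    description = item.get("description", "").lower()
    return any(phrase in description for phrase in BUZZWORDS)


listings = [  # hypothetical listings
    {"title": "Robo-Pal AI Robot", "description": "Chats using speech recognition"},
    {"title": "robo pal  AI robot!", "description": "Same product, second seller"},
    {"title": "Glow Orb", "description": "Smart AI chip with interactive AI functions"},
]
unique = deduplicate(listings)
flagged = [item["title"] for item in unique if needs_manual_review(item)]
print(len(unique), flagged)  # 2 ['Glow Orb']
```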

We then classified the manufacturer's recommended age into “0–2,” “3–7,” and “8–12” based on Piaget's four cognitive development stages (Piaget, 1950, 1960). Because Piaget's stage boundaries meet at ages 2, 7, and 12, we slightly adjusted these ranges so that each toy is assigned to exactly one stage; for instance, age 2 is placed within “0–2.” We also added “13–17” and “18+” to reflect older age groups, with 18+ toys typically intended for adults due to their complexity or collectability.
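One way to implement this one-toy-one-band rule is to bin each toy by the minimum age on its label. This is an assumption made for illustration: manufacturer labels such as “5-12 years” span several bands, and the study does not state how such ranges were resolved. The parser below is hypothetical and handles only simple formats.

```python
import re

# Age bands used in the industrial mapping (bounds inclusive; None = no upper bound).
AGE_BANDS = [
    (0, 2, "0–2"),
    (3, 7, "3–7"),
    (8, 12, "8–12"),
    (13, 17, "13–17"),
    (18, None, "18+"),
]


def parse_min_age(label: str) -> int:
    """Hypothetical parser: take the first number in labels such as '8+' or '5-12 years'.

    Labels given in months (e.g. '18 months+') are converted to whole years.
    """
    match = re.search(r"\d+", label)
    if match is None:
        raise ValueError(f"no age found in {label!r}")
    age = int(match.group())
    if "month" in label.lower():
        age //= 12
    return age


def age_band(label: str) -> str:
    """Assign a toy to exactly one band, using its minimum recommended age."""
    age = parse_min_age(label)
    for low, high, name in AGE_BANDS:
        if age >= low and (high is None or age <= high):
            return name
    raise ValueError(f"age {age} is outside all bands")


for label in ["18 months+", "2+", "5-12 years", "8+", "14+", "18+"]:
    print(label, "->", age_band(label))
```

Using the minimum age keeps the bands non-overlapping by construction; an alternative such as the midpoint of the stated range would shift wide-range toys into older bands.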

Next, we analyzed the overall market trends, focusing on the market release patterns and price ranges of AI toys. This provided insights into the current state and trajectory of the AI toy market. To further enrich our understanding, we conducted word frequency and term frequency-inverse document frequency (TF-IDF) analyses on toy descriptions. Word frequency identified the most common terms in product descriptions, highlighting key features emphasized by sellers. In contrast, the TF-IDF analysis revealed unique terms that differentiated specific products, offering a nuanced view of the market landscape.
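The TF-IDF weighting can be sketched in a few lines of standard-library Python, treating each product description as one document. This assumes regex tokenization, a small hypothetical stopword list, length-normalized term frequency, and unsmoothed inverse document frequency; the study does not report which variant it used, and libraries such as scikit-learn's `TfidfVectorizer` apply a smoothed idf by default. A term that appears in every description receives zero weight, which is why TF-IDF surfaces distinguishing terms rather than merely common ones.

```python
import math
import re
from collections import Counter

# Minimal illustrative stopword list (the study's list, if any, is not specified).
STOPWORDS = {"a", "an", "and", "the", "to", "of", "with", "for", "in", "on"}


def tokenize(text):
    """Lowercase alphabetic tokens with stopwords removed."""
    return [w for w in re.findall(r"[a-z]+", text.lower()) if w not in STOPWORDS]


def tf_idf(documents):
    """Return one {term: weight} dict per document.

    tf  = term count / number of tokens in the document
    idf = ln(N / df), with df the number of documents containing the term.
    A term that occurs in every document therefore gets weight 0.
    """
    tokenized = [tokenize(doc) for doc in documents]
    n_docs = len(tokenized)
    df = Counter()
    for tokens in tokenized:
        df.update(set(tokens))
    weights = []
    for tokens in tokenized:
        counts = Counter(tokens)
        weights.append({
            term: (count / len(tokens)) * math.log(n_docs / df[term])
            for term, count in counts.items()
        })
    return weights


def top_terms(weights, k=3):
    """Highest-weighted terms per document (ties broken alphabetically)."""
    return [[t for t, _ in sorted(w.items(), key=lambda kv: (-kv[1], kv[0]))[:k]]
            for w in weights]


descriptions = [  # hypothetical product descriptions
    "Robot puppy that tells stories and sings songs, with new stories every night",
    "Coding robot that teaches coding and programming",
    "Robot chess partner that plays chess against any opponent",
]
weights = tf_idf(descriptions)
print(top_terms(weights, k=1))  # [['stories'], ['coding'], ['chess']]
```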

We also retrieved data from the Google Trends website spanning May 1, 2015, to May 1, 2024, using global search data. The keywords we used were “AI toy” and “smart toy” in both singular and plural forms. These queries aimed to capture Google Search users’ behavior and identify emerging trends.

Finally, we integrated findings from the academic mapping (AM) and the industrial mapping (IM) to complete the categorization of toy features. A comparative analysis between academic and commercial prototypes was conducted to assess the diversity of AI toy designs, highlight recurring challenges, and identify future research opportunities.

Results

Academic mapping

As described in the Method section, we classified the 13 academic AI toy prototypes by their primary purpose into three groups: leisure, learning, and therapy and rehabilitation. Of these, five were designed for therapy and rehabilitation, while the learning and leisure categories each contained four prototypes. This nearly even distribution suggests the multifunctional potential of AI in children's toys. Researchers increasingly view AI toys not only as entertainment tools but also as valuable for educational and therapeutic applications, particularly for children with special needs.

Table 4 summarizes the features of these prototypes. While we broadly classified these prototypes based on their three primary purposes (learning, leisure, and therapy and rehabilitation), the selected AI toy prototypes can also be grouped into seven thematic domains, namely: language learning, interactive storytelling, interactive learning, autism spectrum disorder (ASD) treatment, locomotor training, child monitoring, and motor skill treatment.

Selected AI toy prototype features.

Therapy and rehabilitation toys provide tailored, engaging interventions that utilize real-time data for continuous optimization (e.g., Canas et al., 2022; Mironcika et al., 2018; Montedori et al., 2022). Those toys often target specific body parts to improve particular motor skills, such as gross motor skills through limb-focused activities like crawling and walking (Canas et al., 2022; Mayoral et al., 2023) and fine motor skills through tasks involving hand and finger movements (Mironcika et al., 2018). Such activities are beneficial for physical development and therapeutic interventions, especially for children with disabilities or developmental delays.

Learning-oriented prototypes extend beyond knowledge acquisition, covering locomotor skills (e.g., Mayoral et al., 2023), language learning (e.g., Ahn et al., 2018), interactive learning (e.g., Akdeniz and Özdinç, 2021; Pap et al., 2021), and interactive storytelling (e.g., Hu et al., 2016; Ni and Wang, 2019). These applications demonstrate that AI toys can support not only therapeutic applications but also a range of learning activities, promoting physical training, language development, creative thinking, and knowledge acquisition.

Monitoring toys, often designed for leisure or for therapy and rehabilitation, track children's activities or health metrics. These toys use AI to gather and analyze data on movements, behaviors, or health, providing insights for parents and caregivers (e.g., Cecchi et al., 2016). They may be particularly beneficial for children with disabilities or neurodevelopmental disorders, offering parents a tool to better understand and meet their child's needs.

Most toys are designed for solo play in indoor environments, often with full-body or limb-based interactions. The majority are toy robots or dolls and stuffed toys, reflecting the importance of materials in toy design, since materials affect both safety and user experience. Soft, ductile materials are not only safe for children but also help create a sense of intimacy and comfort (Goula-Dimitriou and Dasygenis, 2016; Hu et al., 2016). Meanwhile, robotic AI toys are designed to be visually appealing to attract children's attention and enhance engagement (Ni and Wang, 2019).

While most toys are intended for single-player engagement, a few also support collaborative or competitive play. Interactivity primarily centers on toy-to-player engagement, though some prototypes, such as MAYA and BabyTalk, incorporate player-to-player interactions. Additionally, only MAYA and PlusMe feature accompanying mobile applications, indicating an opportunity for further development in enhancing the digital capabilities of AI toys.

About half of the academic articles included usability testing to evaluate their AI toy prototypes’ effectiveness, as detailed in Table 5. Qualitative methods, such as surveys, interviews, and observational studies, provided in-depth insights into user interactions and were frequently employed to refine toy design. For instance, Hu et al. (2016) and Akdeniz and Özdinç (2021) used surveys and interviews to assess preschoolers’ engagement with an interactive toy, generating a robust dataset to guide design improvements. Conversely, quantitative methods, including experimental designs and behavioral measurements, delivered objective data on prototype effectiveness. Poon et al. (2017), for example, conducted experiments to evaluate a memory-efficient multimodal emotion recognition system, offering evidence of the toy's capability to meet its intended goals.

AI toy prototype articles with usability test.

There were also mixed-methods approaches, which integrate both qualitative and quantitative techniques. For example, Mironcika et al. (2018) and Mayoral et al. (2023) combined behavioral measures, interviews, and questionnaires to assess fine motor skills development and physical activity engagement.

A usability test not only validates the prototypes’ effectiveness but also identifies areas for improvement, contributing to the development of more sophisticated and impactful AI toys. However, the remaining prototype articles did not report any usability tests. Without empirical usability assessments, it is difficult to determine whether these toys achieve their intended educational or developmental goals. The absence of usability testing in these studies also suggests potential gaps in the design process, highlighting an area for improvement in future research.

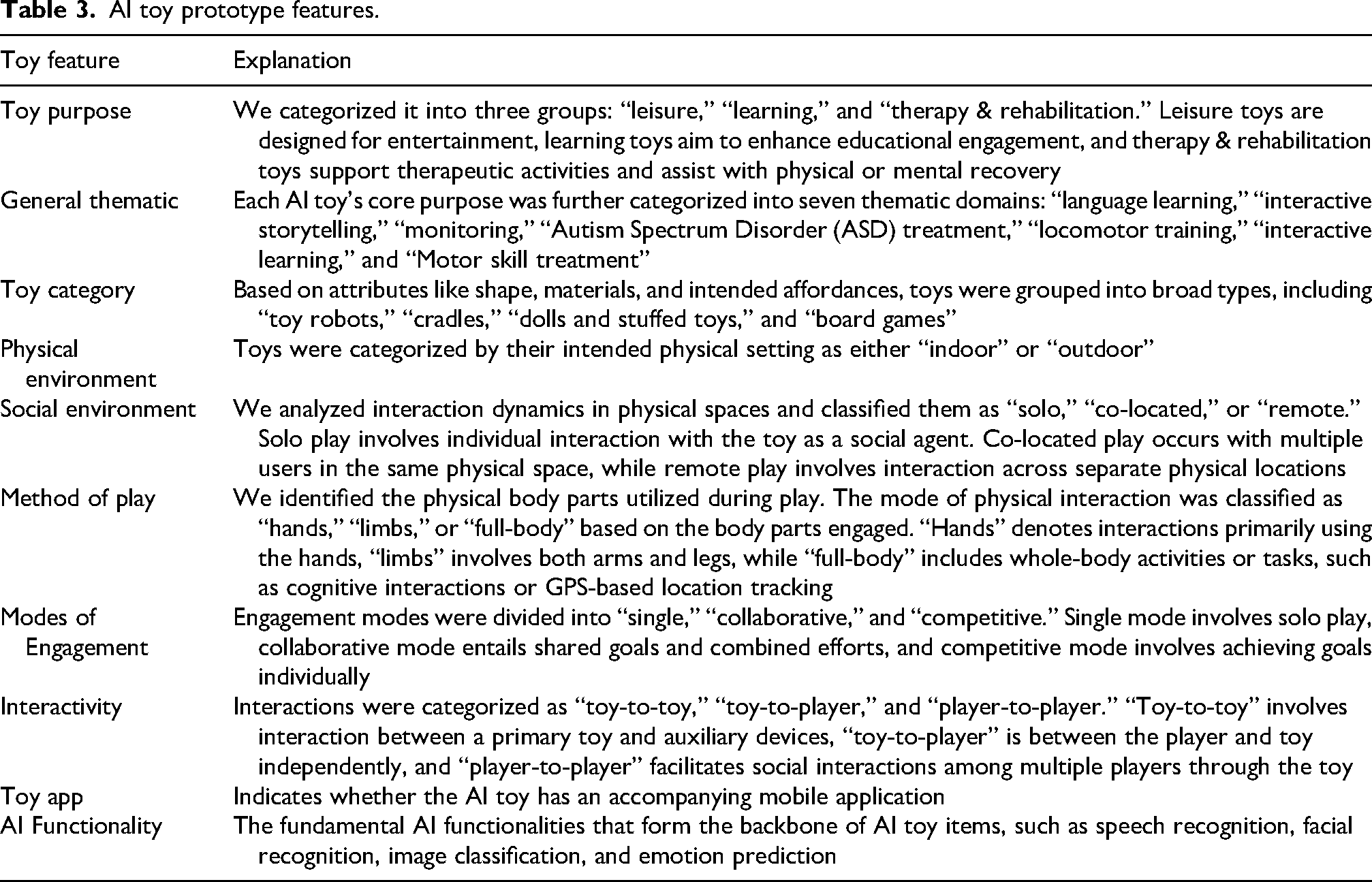

The academic AI toy prototypes incorporated various sensors and communication channels to enhance functionality. Figure 4 summarizes the hardware used in the selected prototypes, highlighting the diversity of integrated components.

Available sensors and communication channels in selected smart toy prototypes.

Additionally, the analysis revealed core AI functionalities aimed at improving interactivity and effectiveness, summarized in Table 6. Some common AI features included speech recognition, facial expression recognition, emotion analysis/prediction, adaptive gameplay, and behavior assessment.

Selected AI toy prototype functionality.

One of the most prevalent functionalities is speech recognition (e.g., Hu et al., 2016; Ni and Wang, 2019). This technology allows toys to understand and respond to children's verbal inputs, fostering dynamic interaction. It is particularly important for educational toys, supporting language acquisition and helping children develop communication skills (Pap et al., 2021).

Sensor-based analysis of children's activities and health metrics is another significant feature. These toys use sensors to promote physical activity and provide real-time feedback. This functionality is essential for therapeutic toys, as it allows caregivers and therapists to monitor the child's development and make necessary adjustments to interventions (Cecchi et al., 2016; Mironcika et al., 2018; Murphy et al., 2015).

Meanwhile, adaptive gameplay adjusts the difficulty level and content of games based on the child's performance. This ensures that the activities remain challenging yet achievable, fostering sustained engagement and learning. This functionality is crucial for both therapeutic and educational toys, providing a personalized experience tailored to individual needs (Akdeniz and Özdinç, 2021; Canas et al., 2022; Mayoral et al., 2023; Mironcika et al., 2018; Ni and Wang, 2019).
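Adaptive gameplay of this kind is often implemented as a staircase rule. The sketch below is a hypothetical 2-up/1-down controller, not the mechanism of any reviewed prototype: two consecutive successes raise the difficulty level, a single failure lowers it, and levels are clamped to a fixed range so the task stays challenging yet achievable.

```python
from dataclasses import dataclass


@dataclass
class DifficultyController:
    """Hypothetical 2-up/1-down staircase for adaptive gameplay.

    Two consecutive successes raise the level by one; any failure lowers it by
    one. Levels are clamped to [min_level, max_level].
    """
    level: int = 1
    min_level: int = 1
    max_level: int = 5
    streak: int = 0  # consecutive successes since the last level change

    def update(self, success: bool) -> int:
        if success:
            self.streak += 1
            if self.streak == 2:
                self.level = min(self.level + 1, self.max_level)
                self.streak = 0
        else:
            self.level = max(self.level - 1, self.min_level)
            self.streak = 0
        return self.level


controller = DifficultyController()
outcomes = [True, True, True, True, False, True]
print([controller.update(ok) for ok in outcomes])  # [1, 2, 2, 3, 2, 2]
```

Requiring two successes before stepping up, but only one failure before stepping down, biases the controller toward levels the child completes most of the time, which matches the "challenging yet achievable" goal described above.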

Emotion analysis and prediction also play a significant role in AI toys. Some toys employ facial expression recognition to analyze children's emotions (Goula-Dimitriou and Dasygenis, 2016). These toys detect emotions and respond with appropriate songs, supporting emotional observation and parental monitoring. Poon et al. (2017) integrate speech recognition, facial expression analysis, and physical action detection to assess emotions from multiple data sources. Murphy et al. (2015) and Ni and Wang (2019) also expressed interest in incorporating emotion recognition in future studies, highlighting emotion analysis as an emerging trend in AI toy development.
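Combining several data sources, as in multimodal emotion assessment, is commonly done by late fusion: each modality produces its own probability estimate and the estimates are then merged. The sketch below shows weighted late fusion under stated assumptions; the emotion labels, modality names, and weights are hypothetical, and it is not a reconstruction of the system by Poon et al. (2017), whose fusion method is not described here.

```python
EMOTIONS = ("happy", "sad", "angry", "neutral")


def fuse(predictions, weights):
    """Weighted late fusion of per-modality emotion probabilities.

    predictions: {modality: {emotion: probability}}
    weights:     {modality: non-negative weight}
    Modalities absent from `predictions` (e.g. the face is not visible) are
    skipped and the remaining weights are renormalized.
    """
    used = [m for m in predictions if weights.get(m, 0) > 0]
    if not used:
        raise ValueError("no usable modality")
    total = sum(weights[m] for m in used)
    fused = {
        e: sum(weights[m] * predictions[m].get(e, 0.0) for m in used) / total
        for e in EMOTIONS
    }
    return max(fused, key=fused.get), fused


weights = {"speech": 0.4, "face": 0.4, "motion": 0.2}  # hypothetical weights
predictions = {
    "speech": {"happy": 0.6, "neutral": 0.4},
    "face": {"happy": 0.3, "sad": 0.5, "neutral": 0.2},
    "motion": {"angry": 0.1, "neutral": 0.9},
}
label, scores = fuse(predictions, weights)
print(label)  # neutral
```

Renormalizing over the available modalities lets the toy still produce an estimate when one sensor drops out, at the cost of relying more heavily on the remaining signals.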

Industrial mapping

Next, we used Google Trends data in the industrial mapping (IM). The search-interest results show a clear cyclical pattern, with distinct peaks at the end of each year, especially for “smart toy(s)” (Figure 5). This pattern aligns with the Christmas shopping season, when consumers search more actively for toys. Although “AI toy(s)” had lower overall search interest, it also shows an annual peak, suggesting that interest in AI-enabled toys follows general toy purchasing cycles.

2015–2024 Google search count.

Before 2022, users appeared more familiar with, or interested in, “smart toys” than “AI toys.” From 2022 to 2024, however, searches for “AI toy(s)” increased noticeably, reflecting growing awareness of and interest in AI-marketed toys. The two terms began to converge in 2023, and by 2024, search interest in “AI toy(s)” was nearly equal to that in “smart toy(s),” suggesting broader acceptance of and interest in AI toys.
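The annual peaks can be checked programmatically by locating the highest-interest month in each year. The sketch below assumes the monthly data have already been exported as (date, value) pairs and uses made-up values; note that Google Trends reports relative interest on a 0–100 scale rather than absolute search counts.

```python
from datetime import date


def peak_month_by_year(series):
    """Return {year: month} for the month with the highest value in each year.

    series: iterable of (date, value) pairs, e.g. monthly Google Trends values,
    which are relative interest scores (0-100), not absolute search counts.
    Ties go to the earliest month.
    """
    best = {}
    for day, value in sorted(series):
        if day.year not in best or value > best[day.year][1]:
            best[day.year] = (day.month, value)
    return {year: month for year, (month, _) in best.items()}


# Hypothetical monthly values with year-end spikes
series = [(date(2022, m, 1), 80 if m == 12 else 20) for m in range(1, 13)]
series += [(date(2023, m, 1), 95 if m == 11 else 30) for m in range(1, 13)]
peaks = peak_month_by_year(series)
print(peaks)  # {2022: 12, 2023: 11}

# Share of years whose peak falls in the holiday season (November or December)
share_year_end = sum(m in (11, 12) for m in peaks.values()) / len(peaks)
print(share_year_end)  # 1.0
```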

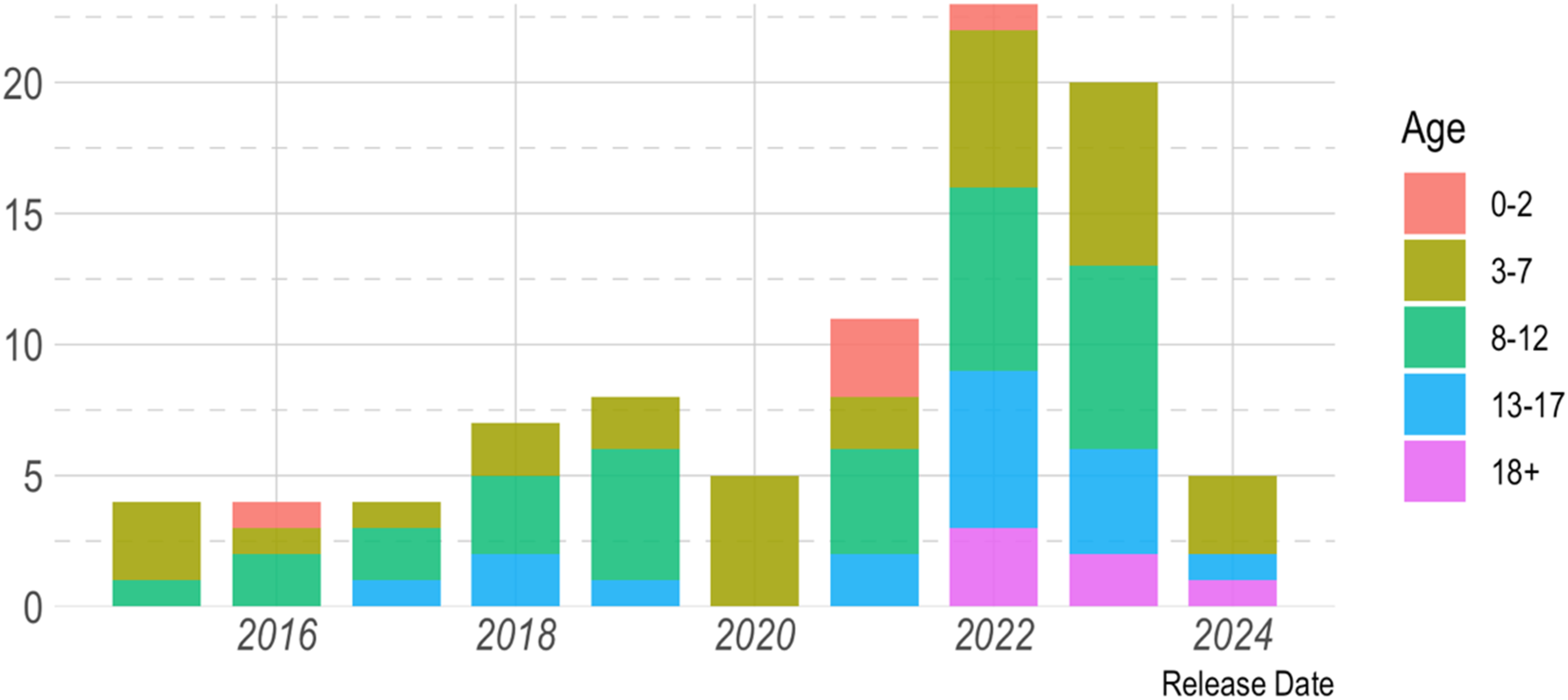

Since the release of Mattel's Hello Barbie in 2015, AI toy releases have increased steadily (see Figure 6). A significant peak occurred in 2022, coinciding with rapid advances in AI technology; the launch of OpenAI's ChatGPT in late 2022 further accelerated innovation within the AI sector and influenced the toy industry.

Distribution of AI toys released annually (2014–2024), categorized by target age group.

AI has captured considerable public attention and imagination, generating excitement about, and confidence in, its capabilities. AI features appear increasingly central to both product strategy and consumer satisfaction, and most AI toys are aimed at children aged 3 to 12 (see Figure 7). Notably, AI toys for adults (18+) emerged in 2022, suggesting that technological advances have enabled more complex functionalities that cater to a mature audience.

Distribution of AI toys by age group.

However, AI toy releases declined notably in 2020, most likely as a result of the global COVID-19 pandemic. The widespread logistical disruptions and operational challenges during this period temporarily hindered production and innovation across many sectors, including toy manufacturing (Perry, 2021; The Toy Association, 2022). This temporary decline was followed by a robust recovery, as reflected in the subsequent rise in AI toy releases (Lv et al., 2024).
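The yearly counts behind a chart like Figure 6 amount to a tally over (release year, age band) pairs, from which year-over-year changes such as the 2020 dip can be read off. The sketch below uses hypothetical records with assumed `year` and `age_band` keys, not the study's dataset.

```python
from collections import Counter


def tally_releases(toys):
    """Count releases per year and per (year, age band).

    toys: iterable of dicts with hypothetical keys 'year' and 'age_band'.
    """
    toys = list(toys)
    by_year = Counter(t["year"] for t in toys)
    by_year_band = Counter((t["year"], t["age_band"]) for t in toys)
    return by_year, by_year_band


def year_over_year(by_year, first, last):
    """Change in release count from the previous year (missing years count as 0)."""
    return {y: by_year[y] - by_year[y - 1] for y in range(first + 1, last + 1)}


toys = (  # hypothetical records, not the study's dataset
    [{"year": 2019, "age_band": "3–7"}] * 3
    + [{"year": 2020, "age_band": "8–12"}]
    + [{"year": 2021, "age_band": "8–12"}] * 4
)
by_year, by_year_band = tally_releases(toys)
print(year_over_year(by_year, 2019, 2021))  # {2020: -2, 2021: 3}
print(by_year_band[(2021, "8–12")])  # 4
```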

Next, Figure 8 presents the price distribution of AI toys from 2015 to 2024, categorized by age range. Most AI toys are priced under 500 US dollars. A second, zoomed-in graph focusing on the 0–250 US dollar range shows that most AI toys (82.4%, n = 75) fall within this range. This finding highlights the market's focus on affordability to foster adoption, likely a strategic response to increasing demand for accessible AI-driven learning and interactive tools for children (Aggarwal et al., 2023).

Price of toys per age group and year. All toys in our dataset (left) and only toys that cost less than $250 (right).

Additionally, over half of the toys (60.4%, n = 55) are priced at 200 US dollars or below, primarily targeting children aged 8 to 12. These lower-priced toys typically feature basic AI functions, such as speech and gesture recognition. This pattern suggests that manufacturers are keen to capture the larger market segment of middle-income families by making these toys more accessible and affordable. In contrast, AI toys designed for adolescents and adults (ages 13 and older) are priced significantly higher because they rely on more advanced hardware, such as Raspberry Pi single-board computers, which support sophisticated functions like inverse kinematics, facial recognition, and personality development. For the other age groups, the data do not indicate a significant correlation between toy price and target age, nor a discernible trend between release year and price.
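The reported shares follow directly from the counts in the 91-toy dataset: 75/91 ≈ 82.4% and 55/91 ≈ 60.4%. The sketch below reproduces that arithmetic with a hypothetical price list constructed only to match those counts; the individual prices are placeholders.

```python
def share_at_or_below(prices, threshold):
    """Number of toys priced at or below `threshold` and their share in percent (1 d.p.)."""
    count = sum(1 for p in prices if p <= threshold)
    return count, round(100 * count / len(prices), 1)


# Hypothetical price list with the same counts as reported in the text:
# 55 toys at or below $200, 20 more between $200 and $250, and 16 above $250 (91 total).
prices = [150.0] * 55 + [230.0] * 20 + [400.0] * 16
print(share_at_or_below(prices, 200))  # (55, 60.4)
print(share_at_or_below(prices, 250))  # (75, 82.4)
```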

Despite technological advancements potentially leading to lower production costs, AI toy prices have remained relatively stable over time. This could be attributed to the steady demand and the ongoing refinement and enhancement of AI capabilities, which may not yield immediate cost reductions.

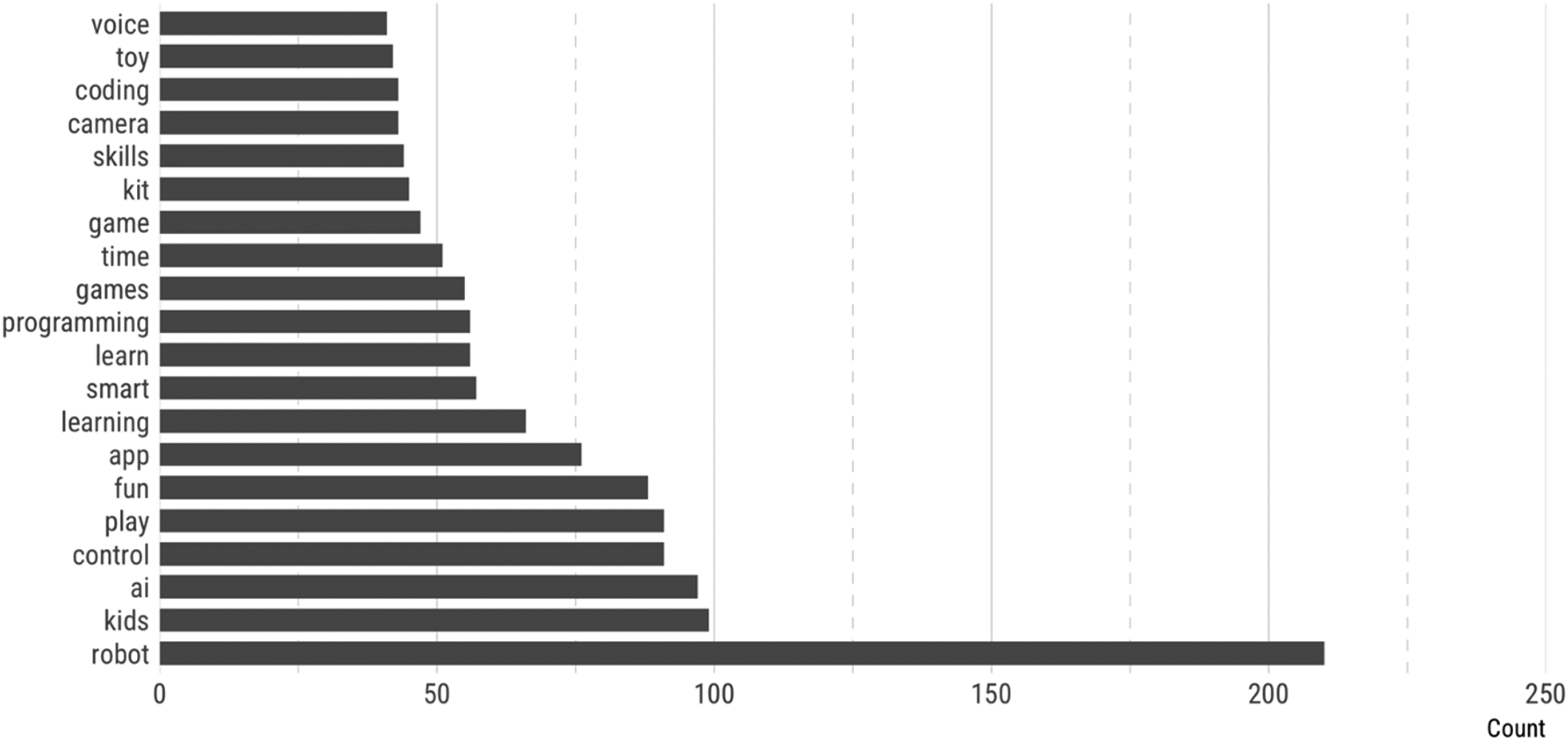

Figure 9 provides a detailed analysis of word frequency in AI toy descriptions. The term “robot” appears most frequently, underscoring a dominant focus on robotic functionalities. Other frequently used terms include “fun,” “app,” “learning,” “smart,” “learn,” “programming,” “time,” and “game.” These words emphasize both the entertainment and educational value of AI toys. For parents, the emphasis on “learning” and “coding” addresses the growing demand for educational play, positioning these toys as tools for developing Science, Technology, Engineering, and Mathematics (STEM) skills, such as coding and problem-solving. For children, the emphasis on “fun” and “game” ensures that these toys remain engaging and enjoyable, which is crucial for sustaining a child's interest and enthusiasm.

Word frequency in AI toy descriptions.

Additionally, the frequent use of words like “camera,” “skills,” “coding,” and “voice” highlights the integration of technological features such as cameras, voice interaction, and coding education, which are often framed in terms of skill development.

Overall, the linguistic patterns in AI toy descriptions reflect the growing sophistication of these products, particularly in robotics, app integration, voice recognition, recording, and remote control. This choice of terms suggests that manufacturers strategically emphasize advanced technological features to differentiate their products in a competitive market.
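Word-frequency counts of the kind summarized in Figure 9 can be sketched as follows. This is a minimal illustration, assuming simple lowercase tokenization and a small hand-written stop-word list; the study's actual preprocessing (tokenizer, stop words, any lemmatization) is not described in this section, and the names `top_terms` and `STOP_WORDS` are hypothetical. Like the separate entries for “learning” and “learn” in Figure 9, this sketch does not merge word forms.

```python
import re
from collections import Counter

# Hypothetical stop-word list; a real analysis would use a fuller one.
STOP_WORDS = {"a", "an", "and", "the", "to", "of", "for", "with", "your", "is", "it", "in"}


def top_terms(descriptions, n=10):
    """Count word frequencies across all product descriptions and return the n most common."""
    counts = Counter()
    for text in descriptions:
        # Lowercase, then keep runs of letters and apostrophes as tokens.
        tokens = re.findall(r"[a-z']+", text.lower())
        counts.update(t for t in tokens if t not in STOP_WORDS)
    return counts.most_common(n)
```

Raw counts like these favor terms that appear across many products, which is why a weighting such as TF-IDF is useful for isolating vocabulary specific to one age group.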

The results of the TF-IDF analysis offer valuable insights into how AI toy marketing is tailored to appeal to the cognitive and developmental stages of different age groups (Figure 10).

TF-IDF of AI toy descriptions.
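As a rough illustration of how TF-IDF surfaces age-specific vocabulary, the sketch below treats all descriptions for one age group as a single pooled document, so that terms shared by every group (such as “robot”) score zero while group-specific terms (such as “lidar”) score high. The pooling scheme, the unsmoothed weighting tf(t, g) × ln(N / df(t)), and the helper names `tfidf_by_group` and `top_k` are assumptions for illustration; they are not necessarily the weighting or preprocessing the study used.

```python
import math
import re
from collections import Counter


def tokenize(text):
    """Lowercase a description and split it into alphabetic tokens."""
    return re.findall(r"[a-z]+", text.lower())


def tfidf_by_group(groups):
    """Score terms per age group, treating each group's pooled text as one document.

    groups maps a group label (e.g. "0-2") to a list of description strings.
    tf is a term's relative frequency within the group; idf is ln(N / df),
    where N is the number of groups and df is how many groups use the term.
    """
    counts = {g: Counter(tok for d in docs for tok in tokenize(d)) for g, docs in groups.items()}
    n_groups = len(counts)
    # Iterating a Counter yields each distinct term once, so df counts groups, not occurrences.
    df = Counter(term for c in counts.values() for term in c)
    scores = {}
    for g, c in counts.items():
        total = max(sum(c.values()), 1)  # guard against an empty group
        scores[g] = {t: (k / total) * math.log(n_groups / df[t]) for t, k in c.items()}
    return scores


def top_k(scores, group, k=3):
    """Return the k highest-scoring terms for one group."""
    return sorted(scores[group], key=scores[group].get, reverse=True)[:k]
```

Pooling by age group matches the comparison in Figure 10 (one ranking per group); scoring individual descriptions instead would highlight product-specific rather than age-specific terms.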

For infants aged 0–2 years, product descriptions often emphasize features such as telling “stories” and playing “songs.” Because infants at this stage are not yet capable of complex interaction, such auditory stimuli can support early language acquisition and comprehension. Some descriptions also highlight the toys’ role in assisting “grandparents,” “parents,” and “divorced” caregivers, positioning them as supplemental caregiving tools.

Additionally, these toys frequently mimic familiar objects like “puppies” and perform simple actions like fetching and walking, offering comfort and sparking curiosity through recognizable forms. Their predictable actions suit young children, who thrive on routine and repetition while exploring their environment. By incorporating sensory elements like sound and movement, these toys also foster early sensory and motor development.

For children aged 3–7, AI toys focus on stimulating cognitive, linguistic, and social development. They introduce more complex tasks, including role-play (“doctor”), strategy games (“chess”), and problem-solving “challenges” that require critical thinking and sequencing while encouraging fine motor skills and logical reasoning. Social elements, such as playing against other children as “opponent[s],” promote peer interaction and teamwork.

As children in this age range develop greater vocabulary and communication skills, these AI toys also offer interactive features such as holding “conversations” and “repeat[ing]” children's words, which support language development. Through dialogues based on real-life scenarios, children can practice essential social behaviors, including negotiation, collaboration, and understanding rules.

For children aged 8–12, descriptions increasingly emphasize educational features through terms like “drawing,” “STEM,” and “kits.” These toys blend entertainment with education (edutainment) and align with school STEM curricula. Terms like “teach,” “guide,” and “assemble” point to instructional components that foster practical skills and cognitive abilities through hands-on learning. This approach reflects manufacturers’ efforts to meet educational needs while keeping content entertaining.

A notable pattern also emerges in the use of gendered pronouns across age groups. Descriptions of toys for children aged 3–7 often refer to the toy as “she,” giving it a female persona, whereas descriptions of toys for children aged 8–12 frequently use “he.” This gendered framing may reflect traditional societal norms about gender roles, and it raises important questions about how such roles are reinforced through educational products.

For adolescents aged 13–17, AI toy descriptions shift toward more advanced educational themes, with terms such as “physics,” “kinematics gait,” “positioning,” and “bionic” becoming prominent. These features align with the logical and abstract reasoning typical of this age group. AI toys for this demographic offer more sophisticated robotics and physics tasks intended to help adolescents apply theoretical knowledge in practical, real-world scenarios. By aligning with secondary-school curricula, these toys reflect manufacturers’ goal of deepening engagement with STEM subjects, encouraging critical thinking and experimental learning, and providing tools for hands-on experimentation with advanced scientific concepts.

Lastly, for adults (age 18 and above), AI toys are characterized by advanced functionality. Frequent terms such as “radar,” “lidar,” “depth,” “encoder,” “chassis,” “microros,” and “mapping” indicate a significant leap in complexity, catering to a technologically adept audience. For example, “lidar” (light detection and ranging) refers to a laser-based sensing technology, common in autonomous vehicles and robotics, that enables precise distance measurement and object detection. Similarly, “depth” and “mapping” suggest spatial awareness capabilities that allow complex interactions within a three-dimensional environment. The terms “encoder,” “chassis,” and “microros” (likely micro-ROS, a framework that runs the Robot Operating System 2 on microcontrollers) highlight these toys’ focus on the mechanical and software engineering needed to build and program sophisticated robotic systems.

Discussion

The industrial mapping findings suggest that AI toys are positioned not merely as playthings but as tools designed to support cognitive and social development. This framing aligns with Jean Piaget's stages of cognitive development (Piaget, 1950, 1960), a useful framework for understanding how these toys may influence children at various developmental stages. While this developmental framing is not unique to AI toys, the affordances AI introduces distinguish these toys from their non-AI counterparts in important ways.

In the sensorimotor stage (0–2 years), both traditional and AI toys aim to stimulate sensory and motor engagement. However, AI toys can respond dynamically to infants’ actions, adjusting feedback based on movement, vocalizations, or patterns of interaction. This adaptive responsiveness, which static or pre-programmed toys cannot replicate, can make cause-and-effect learning more interactive and personalized.

In the preoperational stage (ages 2–7), children begin using words and images to represent objects but lack logical operations. Traditional toys often encourage symbolic thinking and imaginative play, but AI toys expand this through simulated conversation-like interactions, voice recognition, and emotional feedback. Unlike traditional toys that rely on the child's projections, AI toys co-construct these interactions with children, providing responsive engagement that potentially fosters social-emotional growth and perspective-taking.

During the concrete operational stage (ages 7–12), children begin to attend school regularly, and their reasoning becomes more logical and organized, though still tied to concrete situations. AI toys scaffold these processes through real-time task adjustment and personalized challenges that exercise the operations characteristic of this stage, such as classification, seriation, and inductive reasoning. Whereas traditional puzzles or games offer fixed challenges, AI toys tailor content to the user's performance, allowing for individualized cognitive development.

In the formal operational stage (12+ years), abstract reasoning and hypothetical thinking through deductive logic emerge. Here, AI toys often embed advanced STEM content, machine learning elements, or adaptive tutoring functions. Traditional educational toys (science kits, strategic games, etc.) often require independent exploration, but AI toys serve as interactive tutors, employing speech or vision recognition to guide inquiry-based learning and project-based exploration. These AI affordances potentially offer a more immersive and scaffolded educational experience.

The cumulative effect of these stage-specific interactions, from dynamic sensory responses in infancy to collaborative learning partnerships in adolescence, reshapes how children conceptualize their relationship with these technologies. From a child's perspective, AI toys move beyond the role of passive media to become conversational partners in play. Through repeated interaction, children may come to perceive them as friends rather than mere objects. This perception fosters trust and emotional attachment, which can encourage children to share information they might otherwise keep private (Jones and Meurer, 2016).

This phenomenon reflects what Guzman and Lewis (2020) identify as a fundamental shift in human-technology relations: technologies move from serving as channels for human communication to becoming communicative subjects themselves, blurring the traditional boundary between human communicators and technological mediators. This blurring may be particularly potent in childhood, when cognitive barriers to accepting non-human entities as social partners are lower.

As Sundar (2020) observes, users “cannot help themselves but be social toward machines,” a tendency that manifests strongly when children encounter AI toys. Sundar's Theory of Interactive Media Effects (TIME) explains how AI toys trigger social responses through both their design features (the cue route) and the interactive exchanges they enable (the action route). The toys’ apparent agency, that is, their autonomy and reciprocal engagement, positions them as what Sundar calls “co-operative” machines. They create social presences convincing enough to shape how children share information and form relationships, ultimately transforming the nature of play itself. This transformation, however, depends on whether these toys actually deliver the AI capabilities they promise.

Yet our industrial mapping (IM) revealed significant discrepancies between marketing claims and actual capabilities. Several toys were excluded from our analysis because they showcased surface-level “smartness” while concealing the absence of genuine AI, a strategy that appears designed to lead caregivers to overestimate the toys’ intelligence and reliability.

For example, the AI Flying Orb Ball Toy (Amazon, 2024) claims “intelligent flight” and “AI-powered motion sensors” but is merely a drone with preset gyroscopic patterns. Similarly, the Interactive Robot Dog (Amazon, 2025) is marketed as a “lifelike AI companion” yet performs a narrow repertoire of actions triggered by simple sensors. Marketing presents these actions as autonomy in the “frontstage,” but the “backstage” reality is static, non-learning technology.

Beyond these false claims, numerous AI toy categories, including chatbot dolls, storytelling companions, and therapeutic devices, often add superficial AI features to traditional toy designs. This approach generates short-term novelty but delivers little sustained value (Hall et al., 2022; McStay and Rosner, 2021). Such toys may initially excite children, who can quickly lose interest once they discover the “backstage” limitations behind the “frontstage” promises of creativity.

This practice of capitalizing on parental aspirations for educational toys by mislabeling products as “AI-powered” goes beyond mere marketing hype, or what Markelius et al. (2024) termed “AI snake oil.” It exemplifies Taylor's (2018) concept of fauxtomation: falsely claiming full automation while much of the work is still performed by humans who are often unpaid, underpaid, or hidden. This manipulation also aligns with Newlands’s (2021) concept of strategic visibilization, whereby companies deliberately control the visibility of human labor (rendering it invisible, visible, or hypervisible) to serve commercial interests.

With AI toys, this manipulation specifically conceals parental labor, mirroring how tech companies obscure the contributions of data workers, particularly those in the Global South whose work enables machine learning algorithms (Muldoon et al., 2024). Rather than reducing parental labor as advertised, these toys merely redirect it into new, invisible tasks: device setup, troubleshooting, usage monitoring, and data management. As Montiel Valle and Shorey (2024) observe, these persistent “frictions” and “glitches” not only undermine the supposed autonomy of AI systems but also reveal the hidden human work sustaining them.

Given these concerns, industry claims require urgent independent verification. Indeed, our academic mapping (AM) suggests that researchers are only beginning to grasp the implications of this AI-driven revolution in toy design. While researchers continue to categorize these products under broader terms like “smart” or “connected” toys, the industry has moved far beyond basic connectivity. This failure to recognize AI toys’ distinctive features and implications has created a dangerous disconnect, one in which children are already engaging with technologies whose impacts remain largely unexamined.

This gap becomes especially apparent in the realm of emotional AI. Consider the current marketplace: the Miko 3 robot already detects and responds to children's emotions with the stated goal of enhancing empathy (Miko, 2021). MOXIE uses machine learning to analyze expressions and vocal tone, providing tailored emotional support (Lee, 2024). Perhaps most sophisticated is the LOVOT 3.0, marketed under the concept of “Emotional Robotics,” which uses 50 sensors, a microphone, and three cameras to recognize up to 1,000 people and detect emotions, deliberately fostering emotional attachment through affectionate behaviors (Lee, 2019). While these emotionally responsive toys are already forming bonds with children worldwide, researchers are only beginning to explore their potential psychological impacts.

The urgency of bridging this gap between commercial reality and academic understanding demands immediate action across several fronts. Longitudinal studies must evaluate the long-term cognitive and emotional effects these already-deployed AI toys have on children. Interdisciplinary collaboration between psychologists, educators, and computer scientists will enable more comprehensive assessments of both current and emerging AI toy functionalities. Equally critical is establishing standardized frameworks that recognize AI toys as a distinct category, enabling more targeted research questions and regulatory responses. Finally, developing ethical guidelines for AI toy design and marketing will help ensure these technologies are deployed responsibly, safeguarding children's rights and well-being.

Conclusion

This study provides critical insights into the evolving landscape of AI toys and highlights the need for academia and industry to align their efforts in addressing rapid technological change. AI toys are not just a reflection of technological progress but also tools capable of influencing cognitive and social development, as Piaget's Theory of Cognitive Development helps explain. The increasing integration of STEM elements into AI toys aimed at older children further emphasizes the industry's role in promoting early digital literacy and problem-solving skills.

Meanwhile, the study reveals a significant gap between industry innovation and academic research. While the toy industry has rapidly incorporated emotional AI and advanced functionalities, academic discourse has lagged in recognizing AI toys as distinct from broader categories like smart toys, connected toys, and the Internet of Toys. This disconnect creates an urgent need for research on AI toys, particularly regarding ethics, privacy, and child development. The industry's advancements in emotional AI demand immediate attention from both scholars and policymakers to ensure that these technologies are implemented responsibly, with proper safeguards in place.

This study has some limitations. First, some insights drawn from industry sources, such as product descriptions and marketing materials, may reflect promotional bias. These materials are often designed to emphasize benefits rather than critically assess risks, which may result in an overly optimistic portrayal of AI toy capabilities.

Second, the study's focus on academic prototypes excluded a broader body of literature addressing ethical, legal, and privacy-related concerns. This choice was made to maintain analytical clarity and stay within space constraints, allowing for a structured analysis of system-level innovation. However, it limits the interdisciplinary scope of this article. These broader concerns are addressed in a companion manuscript currently in preparation. We also acknowledge the value of incorporating user studies, regulatory analyses, and investigations of commercially available AI toys in future work.

Third, patent literature was not included, as most prototypes examined are early-stage academic projects not consistently linked to formal intellectual property filings. Future studies could benefit from including patent databases to track innovation trajectories and commercialization potential.

Fourth, our reliance on five publisher-hosted databases—ACM Digital Library, Springer Link, IEEE Xplore, ScienceDirect, and SAGE Journals—represents a limitation of this review. In February 2024, we conducted a preliminary search in publisher-agnostic databases (Scopus, Web of Science, and Google Scholar) and additional major publishers (Wiley and Taylor & Francis). These searches either returned no meaningful findings on AI toy prototypes or works already encompassed by the five publishers on which our review relied. In hindsight, we acknowledge that incorporating these sources into our formal analysis would have been preferable for completeness, even if they had contributed no additional findings. Moreover, since February 2024, relevant works have appeared in these omitted platforms. Future reviews should therefore incorporate them.

Expanding the scope in these ways would allow future research to offer a more balanced understanding of both the technological advancements and the societal implications of AI toys. Such work would give parents, educators, policymakers, and manufacturers a clearer basis for evaluating AI toy technology and would guide the development of more responsible and ethical AI toy practices.

Footnotes

Acknowledgments

The authors thank the two anonymous reviewers, Editor-in-Chief Matthew Zook, Associate Editor Jing Zeng, and our friend Stephanie Craft for their valuable feedback and suggestions on earlier drafts of this article.

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.

Declaration of conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.