Abstract

Scholars are increasingly concerned about social biases in facial analysis systems, particularly with regard to the tangible consequences of misidentification of marginalized groups. However, few have examined how automated facial analysis technologies intersect with the historical genealogy of racialized gender—the gender binary and its classification as a highly racialized tool of colonial power and control. In this paper, we introduce the concept of auto-essentialization: the use of automated technologies to re-inscribe the essential notions of difference that were established under colonial rule. We consider how the face has emerged as a legitimate site of gender classification, despite being historically tied to projects of racial domination. We examine the history of gendering the face and body, from colonial projects aimed at disciplining bodies which do not fit within the European gender binary, to sexology's role in normalizing that binary, to physiognomic practices that ascribed notions of inferiority to non-European groups and women. We argue that the contemporary auto-essentialization of gender via the face is both racialized and trans-exclusive: it asserts a fixed gender binary and it elevates the white face as the ultimate model of gender difference. We demonstrate that imperialist ideologies are reflected in modern automated facial analysis tools in computer vision through two case studies: (1) commercial gender classification and (2) the security of both small-scale (women-only online platforms) and large-scale (national borders) spaces. Thus, we posit a rethinking of ethical attention to these systems: not as immature and novel, but as mature instantiations of much older technologies.

Introduction

Race and gender are enculturated via everyday reality, shaping our interactions with each other and the world. Such identities are often interpreted through visible characteristics that become marked as socially meaningful forms of difference. We have been taught to associate specific skin colors and physical characteristics (like eye, nose, and mouth shape) with certain racial categories. So, too, might we quickly label a stranger across the street as a man or woman based on our perception of their visual presentation. To many, these processes of classification may seem mundane and intuitive. At the very least, they have been historically viewed as objective, measurable, and scientific.

As various social theorists and Science and Technology Studies (STS) scholars have argued, technologies of classification do not offer up passive reflections of embodied difference; rather, they serve as a key means by which socially constructed ideologies of difference become material (Bowker and Star, 1999; Barad, 2007; Foucault, 2012; Benjamin, 2019). Classification technologies have frequently been bound up in political projects that racialize and gender specific bodies, with the consequence of justifying their marginalization, exclusion, and even their extermination. The face has served as a particularly productive site for such projects, with its classification tied to determinations of personality, ability, and morality in ways that justify hierarchies of race and gender/sex.1 Pseudoscientific practices such as physiognomy—the use of facial features to make claims about qualities of mind and character—and phrenology—the linking of cranial metrics to intellect and personality traits—have frequently been put to work to advance colonial regimes of gender and racial subjugation, as demonstrated in American slavery, South African apartheid, the Holocaust, and the exclusion of women from leadership (Staum, 2003).

The notion that gender/sex can simply be read from the body—and face—is now being embedded into new digital technologies. Such a shift is particularly apparent in computer vision research and practice—specifically in automated facial analysis and body analysis technologies, a broad collection of machine learning methods being employed to detect and classify attributes of the human face and body. The response outside of machine learning communities has largely been that of growing concern, particularly with regard to how computer vision will contribute to discrimination against marginalized racial and gender groups. Misrepresentation of identity (e.g. Scheuerman et al., 2020), loss of opportunity (e.g. Chen et al., 2018), and discriminatory policing (e.g. Angwin et al., 2016) all stand to be exacerbated by computer vision tools. In response, machine learning researchers have sought to develop increasingly diverse training and evaluation datasets (e.g. Merler et al., 2019) and de-bias otherwise skewed data (e.g. Wang et al., 2019). In doing so, these machine learning researchers hope to create tools whose effects are equal across salient categories of difference.

However, recent interventions from the field of algorithmic fairness—such as those that attempt to balance prediction error rates across subgroups (e.g. Hardt et al., 2016)—do not consider the racialized and gendered historical analogs of facial classification technologies. Nor do they question their underlying premise: that social systems of classification have an essence, an intrinsic, biological substrate that can be detected via the face once and for all with the assistance of “objective” automated tools. We call this process auto-essentialization: the use of automated technologies to re-inscribe essential notions of difference, with the face currently serving as the medium of choice.

Auto-essentialization bears a family resemblance to Browne’s concept of digital epidermalization (2010; 2015), which extends Fanon’s classic formulation of epidermalization (1967). For Fanon, epidermalization is “a way of thinking of the ontological insecurity of the racial body as it experiences its ‘being through others’” (Browne, 2010). Browne carries this colonial act of reading race onto racialized bodies into the realm of the digital, the electronically mediated, and the biometric.

By contrast, in this paper, we focus in particular on the search for the essence of gender/sex in the face. We argue that the contemporary auto-essentialization of gender/sex via the face is both racialized and trans-exclusive: it asserts a male/female binary as biological and fixed, and it elevates the White face as the ultimate model of gender/sex difference. We argue further that it is necessary to view such technologies through the prism of colonialism since, as we will show, the gendering/sexing and racializing of the body and face was and continues to be a core technology of imperialist empire-building. In seeking to locate the supposed essence of gender/sex in the face, automated facial analysis tools echo the imperialist ideologies that underpinned the development of physiognomy and other scientific projects of classification, meaning that these contemporary technologies have the potential to reify racist, sexist, and cisnormative beliefs and practices.

In what follows, we begin with a brief overview of automated facial analysis, describing when it emerged and how it operates as a technology of classification. We then trace specific moments in the joint lineage of efforts to classify the racialized face and gendered/sexed body, focusing first on the colonial origins of the ideology of the gender binary and its relationship to the scientific pursuit of biological sex. We then turn to the tools that have served as historical analogs to contemporary facial classification efforts, such as criminal sketches and facial measurements, and show how they were used for colonial projects of domination. Next, we draw the connections to two contemporary cases of automated facial analysis: (1) commercial systems of gender/sex classification and the ideologies of racial hierarchy that they perpetuate, particularly through the lens of the scholarly and artistic work of Joy Buolamwini (2018) and the Gender Shades project (Buolamwini and Gebru, 2018); and (2) the use of facial analysis to cast transgender bodies and faces as inherently suspect when crossing virtual and national borders, focusing on the “women’s only” app, Giggle, and biometric security systems. We conclude by reflecting on the implications of auto-essentialization as a guiding concept for critical engagement with practices of automation.

The site of inquiry: Automated facial analysis

Computational facial analysis technologies first emerged in the 1960s but have rapidly advanced in the last decade. Such technologies are now embedded into everyday life in many countries across the globe. Facial recognition is commonly used in airports, by local police departments, and by government agencies, like the FBI and ICE in the United States (Ghaffary and Molla, 2019). In everyday commercial activity, facial classification is often sold to businesses for determining demographic information about potential customers for targeted advertising (e.g. Kuligowski, 2019). Almost every large technology company in the United States—Google, Microsoft, IBM, Amazon—offers its customers access to a facial analysis service. In a more blatantly racialized and nefarious case, facial classification is being used by the Chinese government to target Uighurs and send them to “re-education” camps (Mozur, 2019).

Broadly, facial analysis systems work much like anthropometric measurements of facial features performed by human beings, but through automated, large-scale pattern recognition. Facial recognition, like other biometric technologies, attempts to match an individual’s identity to records in a database based on images of their face. Facial classification, on the other hand, attempts to classify individuals according to socially meaningful categorical schemas, such as age, gender, and race. Scheuerman et al. (2019) detail the distinctions among these facial analysis techniques.

All automated analysis systems are premised on their data: the hundreds to millions of images that are used to train a model to complete a specific task. In facial detection tasks, images of faces are used to train a model to detect which patterns in an image correspond to a human face. In facial recognition tasks, the system is trained to distinguish an individual’s face from others in a database; the more diverse the images of a single individual available to the system, the more successful it should become at accurately recognizing that individual. Classification systems, on the other hand, are trained to recognize predefined features of a face or body. Such systems use these facial features to classify an individual in terms of specific demographic categories of interest, such as those associated with race or gender (Scheuerman et al., 2020). To do so, (human) annotators assign a category to each image in the dataset, often with little explanation of how they made their determination. The model is then trained on these labeled images to learn visual patterns in the data, such as which patterns are present in the images that annotators have labeled “female.” The system then uses what it has learned to classify new, previously unseen images.
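
The classification pipeline described above can be sketched in a few lines of Python. This is a minimal, hypothetical illustration rather than the method of any cited system: it assumes each image has already been reduced to a two-number feature vector, the feature values and labels are invented, and a simple nearest-centroid rule stands in for the neural networks that commercial systems use. What it does share with those systems is the structural point made above: the set of possible outputs is fixed in advance by the annotators' label schema.

```python
from math import dist  # Euclidean distance (Python 3.8+)

# Hypothetical annotated training data: each "image" is already reduced to a
# feature vector and paired with the label a human annotator assigned to it.
training_data = [
    ([0.9, 0.2], "female"),
    ([0.8, 0.3], "female"),
    ([0.2, 0.9], "male"),
    ([0.3, 0.7], "male"),
]

def train(examples):
    """Learn one centroid (mean feature vector) per annotator-supplied label."""
    sums, counts = {}, {}
    for features, label in examples:
        if label not in sums:
            sums[label] = [0.0] * len(features)
            counts[label] = 0
        sums[label] = [s + f for s, f in zip(sums[label], features)]
        counts[label] += 1
    return {label: [s / counts[label] for s in sums[label]] for label in sums}

def classify(model, features):
    """Assign a new, unseen vector to the label whose centroid is nearest.

    The result is always one of the training labels: the category schema is
    fixed by the annotation step, not discovered from the face.
    """
    return min(model, key=lambda label: dist(model[label], features))

model = train(training_data)
print(classify(model, [0.5, 0.5]))
```

Because `classify` can only choose among the labels seen during training, even a deliberately ambiguous input such as `[0.5, 0.5]` is assigned one of the two annotator-supplied categories; the sketch has no way to return anything else.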

One method that researchers use to assess whether the data being fed into automated systems is sufficiently diverse is to measure variation in facial landmarks: features of the face believed to be commonly associated with particular racial and gender categories. For example, IBM’s Diversity in Faces dataset, which was created as a response to the lack of diversity in prior datasets, employs what researchers call “craniofacial science”: “[T]he measurement of the face in terms of distances, sizes and ratios between specific points such as the tip of the nose, corner of the eyes, lips, chin, and so on” (Merler et al., 2019, 9). Such facial landmarks are not generated by facial analysis technologies themselves but rather are identified and used by human researchers to compare facial variation across categories of race and gender. In doing so, researchers imply that certain craniofacial distances and shapes are objectively associated with specific races and genders, and that this diversity has thus been adequately accounted for by the model. As we explore in the following section, however, the search for an essence of gender/sex in the face has also been taken up as its own project within computer science.
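
The craniofacial measurements quoted above reduce to elementary geometry on points that researchers select in advance. The Python sketch below is illustrative only: the landmark names and pixel coordinates are hypothetical, and the two distances and one ratio are examples of the kind of measure described, not the measures Merler et al. (2019) actually compute.

```python
from math import dist  # Euclidean distance (Python 3.8+)

# Hypothetical landmark coordinates (x, y) in pixels for one face image.
# Which points count as "landmarks" is a choice made by researchers.
face = {
    "left_eye_corner": (30.0, 40.0),
    "right_eye_corner": (70.0, 40.0),
    "nose_tip": (50.0, 60.0),
    "chin": (50.0, 110.0),
}

def craniofacial_measures(points):
    """Return example distances and a ratio between chosen landmark points."""
    eye_span = dist(points["left_eye_corner"], points["right_eye_corner"])
    nose_to_chin = dist(points["nose_tip"], points["chin"])
    return {
        "eye_span": eye_span,
        "nose_to_chin": nose_to_chin,
        # A ratio does not change when the whole image is uniformly rescaled.
        "nose_chin_to_eye_ratio": nose_to_chin / eye_span,
    }

measures = craniofacial_measures(face)
print(measures)  # {'eye_span': 40.0, 'nose_to_chin': 50.0, 'nose_chin_to_eye_ratio': 1.25}
```

Because the ratio is unaffected by uniform rescaling, it is one way of comparing faces photographed at different sizes. Nothing in the arithmetic itself links these numbers to race or gender; that association is supplied by the researchers who interpret variation in them across labeled groups.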

Towards automated gender/sex classification

Automated gender/sex classification has not been restricted to making inferences based on the face; it has also incorporated other aspects of the body, appearance, and human behavior. For instance, Lin et al. (2016) provide a survey of automated gender/sex classification methods, which they characterize as relying on a number of different categories, including static body features (such as bodies, eyebrows, hand shapes, and fingernails), dynamic body features (such as gait, motion, and gesture), apparel-based features (such as hairstyle, clothing, and footwear), and other biometrics (such as fingerprints, iris, voice, and ears). However, computer science literature has centered the face as the primary site and means of automated gender/sex classification. Some early texts justify this focus by reference to cognitive neuroscience literature, which claims that humans can correctly classify the gender/sex of individuals primarily from the face.

Some of the earliest work on facial gender/sex classification, by Golomb et al. (1991), adopts a neural network approach underpinned by the notion that there exists some biological essence of sex in the face that transcends gendered features (such as hairstyle, facial hair, and make-up) and that the human brain is capable of accurately detecting when classifying faces according to a binary male/female schema. According to this early research, “[p]eople can capably tell if a human face is male or female,” relying on the responsiveness of “single neurons” in the visual cortex and amygdala to identify patterns across individual faces (Golomb et al., 1991, 1). Golomb et al. (1991) then replicated this visual-neuronal concept of gender/sex in their “SexNet” technology, improving upon the classification performance of humans. However, with an average error rate of 8.1% (compared to 11.6% for humans), this technology revealed the complexity of human faces rather than their binary essence.

Other early researchers relied on “a geometrical feature vector,” identifying individual features of the face to categorize by gender/sex (Brunelli and Poggio, 1992). One alleged virtue of this method is that it draws attention to which “features” (in this case, facial landmarks in the form of facial components such as eyebrows, nose shape, pupillary distance, and face shape) are most significantly associated with gender/sex. Figure 1, from Brunelli and Poggio’s (1992) study, highlights the features they found to be most important: “[o]f the sixteen features only three are given a noticeable weight: distance of eyebrow from eyes, eyebrows thickness and nose width. These are followed by the vertical position of the nose and mouth and the two radii describing the lower chin shape” (Brunelli and Poggio, 1992). While Brunelli and Poggio (1992) claimed that their vector approach “proved sufficient for obtaining good performance” and could be used for gender classification purposes going forward, their technique was only 87.5% accurate when applied to new images (and only 92% accurate on the images that were used to build the model). That is, the “discriminating features” of the face do not map neatly onto a male/female binary. Given that the study relied on predominantly White undergraduate students,2 this raises further questions about who was among the 12.5% not accurately classified by the model, or, put differently, whose faces were privileged as “accurately” modeling maleness/femaleness.

Figure 1. Feature weights for gender classification from Brunelli and Poggio (1992).

With the emergence of eigenfaces in 1987, it was no longer necessary to label every face in a dataset manually with facial feature information (see Stevens, 2021). Now, with the advent of deep learning methodologies and the large amounts of facial imagery available via the web, most modern facial analysis systems rely on some kind of deep learning method, which, like eigenfaces, does not require manual measurement or annotation of facial landmarks. As we will show, however, the very notion that facial features can be linked to systems of classification—and that the “essence” of gender/sex (and race) can be located in the face—is a worldview that has been put to work with devastating effects in colonialist projects.
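
The eigenface idea can be illustrated with a short Python sketch. Each image is treated as a flat vector of pixel intensities, the mean face is subtracted, and the first principal component of the resulting covariance matrix (the first "eigenface") is approximated by power iteration. This is a hypothetical toy under stated assumptions: the four-pixel "images" are invented, and practical implementations compute many components with a linear-algebra library rather than this hand-written loop. The point is that no landmark is located or labeled by hand; the axis of variation is derived from pixel statistics alone.

```python
import random

# Hypothetical toy "images": each face is flattened into a vector of pixel
# intensities. No landmark is located or labeled by hand.
images = [
    [0.9, 0.8, 0.2, 0.1],
    [0.8, 0.9, 0.1, 0.2],
    [0.2, 0.1, 0.9, 0.8],
    [0.1, 0.2, 0.8, 0.9],
]

def mean_image(vectors):
    """Pixel-wise average of all images (the "mean face")."""
    n = len(vectors)
    return [sum(col) / n for col in zip(*vectors)]

def covariance(vectors):
    """Sample covariance matrix of pixel values after subtracting the mean face."""
    mu = mean_image(vectors)
    centered = [[x - m for x, m in zip(vec, mu)] for vec in vectors]
    d, n = len(mu), len(vectors)
    return [[sum(c[i] * c[j] for c in centered) / (n - 1) for j in range(d)]
            for i in range(d)]

def first_eigenface(vectors, iterations=200, seed=0):
    """Approximate the top eigenvector of the covariance matrix by power iteration."""
    cov = covariance(vectors)
    rng = random.Random(seed)
    v = [rng.random() for _ in cov]
    for _ in range(iterations):
        w = [sum(row[j] * v[j] for j in range(len(v))) for row in cov]
        norm = sum(x * x for x in w) ** 0.5
        v = [x / norm for x in w]  # renormalize to unit length each step
    return v

def project(vector, mu, component):
    """Weight of one image along the eigenface: derived, not hand-measured."""
    return sum((x - m) * c for x, m, c in zip(vector, mu, component))

mu = mean_image(images)
face_axis = first_eigenface(images)
print([project(img, mu, face_axis) for img in images])
```

Projecting each toy image onto the resulting component gives the first two images weights of one sign and the last two weights of the opposite sign (the overall sign of an eigenvector is arbitrary). The component itself carries no label; which categories are said to lie along it is decided only when annotated data are attached.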

Racializing the face, sexing the body: A colonial project

It is no accident that the emergence of race as a system of population classification coincided with the expansion of Western European colonial projects. Nor, as we will show, is it a coincidence that the scientific pursuit of “sex” as a supposedly binary form of embodied variation, and the related effort to impose a Western model of binary gender/sex difference onto non-Western bodies, gained momentum in this same period. Rather, the essentialization of gender/sex and race via practices of measurement and classification, including of the face and skull, has been used to assert “natural” hierarchies of gendered/sexed and raced bodies and, in the process, to legitimize projects of colonial control and exploitation.

In what follows, we trace the analog lineage of contemporary practices of auto-essentialization. We first outline the history of imperialist interventions aimed at erasing bodies deemed to fall outside a binary gender/sex schema. We then turn to the scientific classification of sex, with a particular focus on how the ideology of sex as a biological binary has been put to work to assert the superiority of the White Western body. In contrast with contemporary automated techniques, these analog predecessors sought the essence of the binary everywhere but the face, a point that, to our knowledge, existing literature has not addressed. We suggest, however, that they normalized the pursuit of a gender/sex essence as a scientific project. Importantly, the face was simultaneously being established as a site of classification in other ways, namely with respect to ideologies of racial difference. In outlining the history of physiognomy as a technology of racialization that positioned whiteness as inherently superior and as written on the face, we argue that the parallel, entangled, and often mutually constitutive efforts to scientifically classify gender/sex and race serve as the foundations for the practices of auto-essentialization that we are witnessing today.

Gender/sex binary as colonial project

The notion that faces and bodies can be classified in terms of a distinct gender/sex category has been a constitutive element of a wide range of imperialist projects intended to assert White and Western supremacy. Within such projects, it is specifically the binary classification of gender/sex that has been valorized and then claimed as evidence of the alleged superiority of European civilization. This endeavor has involved at least two simultaneous moves: first, the imposition of a gender/sex schema onto diverse identities and bodies, including those viewed under a Western gaze as deviating from the gender binary; and second, the association of such bodies with non-Western peoples, often with the assistance of the scientific and medical community (Schiwy, 2007; Stryker, 2008). The underlying logic is as follows: binary gender/sex variation is superior and, as such, must be constructed and claimed as a defining feature of more “pure” and “advanced” European societies. As such, the search for an “essence” of binary gender/sex, including in those societies where such a schema was not pervasive, has always already been entangled with projects of racial supremacy and domination.

As various scholars have shown, the binarization of identity and body is a culturally and historically specific phenomenon, while bodies that deviate from the binary both predate and have persisted in spite of European colonial empire (Vincent and Manzano, 2017; BSF, 2015). With respect to gender/sex expression, Crouch and David (2017) detail how various non-binary identities, including those that might in universalizing Western projects be understood as “transgender,” were present in Navajo, Native Hawaiian, and Indian cultures, presenting a challenge to the hierarchical gender/sex binary promulgated under colonial rule. Indeed, in Yoruba societies even the concept of ‘woman’ and associated notions of gender hierarchy did not exist prior to the arrival of colonial rule (Oyěwùmí, 1997). In the European context, diverse gender/sex expression can be seen in the examples of Roman eunuchs, the köcek of the Ottoman Empire, and the Femminielli of Italy. Importantly, these identities were not necessarily stigmatized prior to the rise of colonial empire (Vincent and Manzano, 2017).

As demonstrated by Meissner and Whyte (2017), settler colonialism, such as that practiced in the North American context, sought to erase the cultures of indigenous peoples—and purportedly “save” them from their own “savagery”—in part by erasing gender/sex fluidity, polyamory, and homosexuality and replacing them with a monogamous, heterosexual binary. The binarizing of gender/sex was imposed through boarding schools and missionization, where indigenous women, girls, and Two-Spirit people were subject to higher rates of sexual violence than others (Driskill, 2016). In India, British colonialists sought ‘to bring about the gradual “extinction” of

Further, we highlight that Two-Spirit, hijra, and many other non-Western identities have often been interpreted as non-binary or trans expressions of gender/sex, despite not necessarily being experienced as a form of gender/sex variation at all (Driskill, 2016). As scholars like Dutta and Roy have noted, various minority groups have been forced to adopt such labels in order to be recognized and supported by Western non-government organizations, revealing how “transgender” movements themselves, while designed to liberate Western trans people from the confines of the sex/gender binary, have resulted in trapping non-Western identities within Western conceptions of gender/sex (Dutta and Roy, 2014).

In sum, gender/sex was made to align with binary ideology via a range of discursive, regulatory, and violent acts that positioned indigenous, non-binary, and non-gendered identity variation as a distinctly non-European phenomenon, in the process shoring up the alleged superiority of Western civilization and justifying the presence of European colonial powers. As we will show in the following section, a similar process occurred as scientific and medical knowledge-making was put to work to uncover the “essence” of binary gender/sex in the body, again constructing White Europeans as embodying this essence in its purest form.

Faceless gendered/sexed bodies

Feminist science studies has long criticized the scientific search for the biological roots of “sex” as a presumed binary system of classification (Fujimura, 2006; Sanz, 2017; Jordan-Young, 2019). Richardson (2013), for example, has shown how 20th-century geneticists pursued the notion of X and Y chromosomes as determinants of “sex,” overlooking numerous ambiguities in their research to do so. Jordan-Young (2011) has similarly illustrated the ideological character of neuroscientific efforts to find the binary reflected within the organization of the brain. At every step, such scientific projects have ignored the “awkward surpluses” of data that contradict the notion that brains, hormones, chromosomes, and other body parts must be essentially female or male (Fujimura, 2006).

Similarly, feminist scholars such as Farrell and Kessler (1998) and Davis (2015) have shown how the rush to medically “correct” intersex bodies reveals the intensely social rather than natural origins of binary “sex,” with a range of social institutions obscuring any ambiguities that might undermine its stability as a “natural” system of classification. Entangled with medical notions of the appropriately gendered/sexed body is the primacy of heterosexuality: how intersex bodies are “corrected,” and genitalia in particular, has been guided by the goal of heterosexual penetrative intercourse—a goal that is also reflected in colonialist, imperialist, and state population practices (Canaday, 2009).

Critically, the feminist interrogation of the scientific and medical management of gender/sex reveals how such efforts have very often been entangled with—and put to work in service of—projects of racial hierarchy and Western imperialism. Focusing on European physician and sexologist Havelock Ellis, Markowitz (2001) has documented how he relied on measurements of the female pelvis to claim White European superiority: the broader hips of White women, he claimed, were “a characteristic of the highest human races, because the largest heads must be endowed also with the largest pelvis to enable their large heads to enter the world” (p. 402). By contrast, Ellis describes the pelvis of Black women as “the least developed, the narrowest, and the flattest” (Markowitz, 2001, 402). To signal racial superiority, this contrast also needed to be in the appropriate direction: Ellis claimed that Jewish people were characterized by wide hips in their (allegedly effeminate) men, and narrow hips in their (allegedly masculine) women, which he presented as evidence of their “racial” inferiority (Markowitz, 2001). The greater (and more “correct”) the dimorphic contrast between women and men, the more physically and intellectually advanced a population could claim to be, with the gender/sex binary serving as “a human ideal against which different races may be measured and all but White Europeans found wanting” (Markowitz, 2001, 51).

Other scientists at this time were preoccupied with measuring and classifying the genitalia of women of color, in a search for the essence of gender/sex that was again racialized from the outset (e.g. Fausto-Sterling, 1995). Magubane (2014) reveals how this logic shaped the historical accumulation of clinical knowledge about intersex variation, which in the mid-1800s capitalized on the European colonization of Sub-Saharan Africa. European physicians sought to document and classify what they asserted was the prevalence of “ambiguous genitalia …among southern African blacks” (p. 770). One doctor described the labia of African, Persian, and Turkish women as “naturally much longer than in our European regions” (p. 770). Echoing Markowitz, Magubane observes that it was the alleged “lack of social and biological differentiation between men and women [that] marked Blacks as Black while also indexing their fundamental difference from and inferiority to whites” (p. 770). Magubane notes that in the U.S. context, from the era of slavery to Jim Crow, the urgency that has characterized medical efforts to “normalize” intersex genitalia has focused first and foremost on White bodies, since “no one cared if a black person was threatened with the ‘ruin of character and peace of mind’ brought on by doubtful sex” (p. 776).

Gender/sex, then, has frequently been enacted via scientific and medical practice as essentially binary, biological, and White. But what role did the face play as a medium for such projects? Cranial measurements did at times figure in this history, as documented by neuroscientist Rippon (2020), who explains how craniologists in the mid-to-late 1800s adapted their theory of orthognathism—the notion that well-balanced angles in the face indicated intellectual capacity—to affirm the superiority of the (White European) male brain. Upon discovering that women were in fact more orthognathic than men (on average), craniologists argued that children, too, often had “advanced orthognathism,” meaning women could simply be classed as intellectually underdeveloped. Primarily, however, scientists seeking the biological essence of binary gender/sex have relied on body parts other than the face. Yet critically, these analog precedents to auto-essentialization established the scientific (and racialized) pursuit of binary, biological sex as a legitimate enterprise. As we will show in the following section, the parallel pursuit of the biological essence of racial hierarchy ensured that the face would be readily available for contemporary efforts of gender/sex classification.

Classifying the racialized face

The European imperialist projects that transformed global relations from the 15th century onward can be understood as motivated by both the pursuit of material wealth and the ideology of White supremacy (Du Bois, 1947; Jalata, 2013; Mills, 2015). Scientific schemas of racial classification, aimed at reading the biological “truths” of racial difference and hierarchy from the body, developed as a key technology alongside lucrative exploits like the trade in slave labor and the occupation and indoctrination of non-European civilizations. In the words of Browne (2015), the “naturalization of difference

Anthropometry in particular—the measurement and classification of variation in the outward physical form of human bodies—emerged as the technology for distinguishing White Europeans from racialized “others.” In the process, race became a classification of both body and mind. Once differences in outward physical appearance were established as categorically meaningful, Western scientists could assert links to “innate characteristics, such as temperament, predispositions, and [cognitive] abilities” (Hirschman, 2004, 399). Writing in the late 1700s, slave owner Edward Long published a taxonomic account of racial difference, according to which Black Jamaican men were “innately primitive and corrupting” while Black Jamaican women were depicted as simultaneously resistant and hypersexual (Browne, 2015, 96).

Beliefs about measurable physical difference flourished in the field of anthropological criminology, which sought to establish the relationship between criminal inclination and physiological characteristics, particularly those associated with the head and face. The tools of the trade included rulers, calipers, eye color charts, and Bertillon cards (Pavlich, 2009). The founder of the field, the 19th-century Italian criminologist Cesare Lombroso, was inspired by previous work in both phrenology—the notion that personality characteristics are determined by the morphology of the cranium, founded by the 18th-century German neuroanatomist Franz Joseph Gall—and physiognomy—the more ancient notion that moral and personality characteristics can be read from the physical form, especially the face (Gray, 2004). Phrenologists and physiognomists focused on developing systematic bodily measures for degeneracy, criminality, and intellect, providing the foundations for what Gray terms “the physiognomic decade of German intellectual-cultural history” that would later influence eugenic policy (p. xxix).

In the hands of criminologists like Lombroso, the tools of physiognomy were put to work to associate criminal degeneracy with racial difference. Influenced by Darwin’s theories of evolution, Lombroso used cranial measures taken from southern Italian inmates, as well as those of colonized peoples in Asia, Africa, Australia, and New Zealand, to develop his theory of criminality as atavist: heritable and ingrained in certain bodies, with northern European men emerging as the most advanced (Horn, 2003; Bradley, 2010; Carrington and Hogg, 2017). While there has been much discussion of the links between Lombroso’s theories of degeneracy and race-making in the 19th century, his project can also be understood as thoroughly gendered. Much of Lombroso’s work involved the extensive examination of the male face and body, by default casting women as “other.” While he concluded that women had less propensity for criminality than men, Lombroso also used his limited measurements of the female body to argue that women as a group were intellectually and morally inferior to men (Harrowitz, 1994; Jacobson, 1995). So, too, were women characterized as having their own innate vices, namely a physiological drive towards cruelty and lying (Lombroso et al., 2004). In this way, White European men were positioned as the physiologically advanced standard against which all “others” could be marked as deficient.

In the 1930s, physiognomy became a scientific colonial export with dramatic consequences. One setting was Rwanda, where Belgian scientists traveled to measure the skulls and features of different Rwandan ethnic groups (Straus, 2006; Curtis, 2011; André, 2018). The Belgian imperialists concluded that there existed three essentially different “racial” groups that could be ranked in order of “superiority”: the Tutsi, the Hutu, and the Twa. Before colonization, the Tutsi and Hutu did not consider themselves separate races; rather, this classification came into being via the Belgian colonial project (Berry and Berry, 1999, 32). It was Europeans who instilled the Hamitic myth, which cast the Tutsi as invaders in their own country, descended instead from Egypt and possessing a superior intellect, supposedly demonstrated by their European features, which “[resembled] the Negro only in the colour of his skin” (Krüger, 2010, 95). The Belgian government formalized this hierarchy in 1931 by creating a system of racial segregation in which Tutsis were placed in positions of power while Hutus were kept subservient (Berry and Berry, 1999, 32). In 1933, the Belgian forces instituted identity cards that listed the card-holder’s ethnicity (Berry and Berry, 1999). These cards were later relied upon during the Rwandan genocide of 1994, though subjective determinations based on outward physical appearance were also common, fueled in part by racialized caricatures that originated in the colonial period of the 1930s and were disseminated through extremist media propaganda (Baisley, 2014).

Again, the intersection of race with gender/sex is consequential for this catastrophic period in Rwandan history. The destruction of victims’ bodies during the genocide was fueled by the notion of Tutsi beauty as European, particularly through sexualized caricatures of Tutsi women that were used to emasculate Hutu men. Thus, the mutilation of Tutsi noses (Krüger, 2010) and the rape and torture of Tutsi women can be understood as the devastating outgrowth of the simultaneously racialized and gendered systems of classification established under colonial rule (Sharlach, 1999; Holmes, 2008).

In sum, Lombroso and other physiognomists worked to establish a “scientific” discourse of racial essence and hierarchy that not only consolidated a Black/White color line but also sowed the seeds of violence among different non-White groups. Critically, this discourse forged a link between the racialized face and the morality and criminality of a given individual. This period also supplied the theories, tools, and methodologies that supported the development and implementation of eugenic policies. While physiognomy and phrenology are now largely regarded as pseudoscientific, their legacy was to normalize the scientific use of the face as a resource believed capable of revealing the supposed essence underlying social systems of classification.

Contemporary practices of auto-essentialization

While the face has not featured extensively in scholarship on the scientific and cultural assignment of gender/sex, the preceding analysis reveals an analog logic of classification that is echoed in contemporary automated facial analysis practices. Whereas physiognomy focused more on intellect and character in developing a taxonomy of race, the practices of bodily classification implicated in defining gender/sex as binary also established racialized norms for what female and male bodies should properly look like. Contemporary automated facial analysis projects reinscribe this colonial order by reproducing—and automating—essentialized (and racialized) notions of gender/sex difference. In what follows, we show that the automated classification of gender/sex is less a novel development inaugurated by computing than one that shares a common lineage with colonial practices of essentialized measurement. We do so by highlighting two cases: (1) the privileging of racial hierarchies within commercial applications of automated gender classification; and (2) the use of automated facial recognition to protect cisgender norms of womanhood and state borders.

Case study 1: Euro-centric gender classification

The epigraph of this article is drawn from Joy Buolamwini’s spoken word piece, AI, Ain’t I A Woman? (2018). Buolamwini is best known for her studies of the failures of AI systems in detecting and classifying the faces of Black women (Buolamwini and Gebru, 2018; Raji and Buolamwini, 2019). Buolamwini and Gebru (2018) assess the accuracy of commercial gender classification systems, including the commercial APIs of Microsoft, IBM, and Face++. They show that these systems, at the time of testing, had a gap in error rates of up to 34.4 percentage points between darker-skinned women and lighter-skinned men, the group for which the systems performed best. Raji and Buolamwini (2019) show how auditing the algorithms of private companies can promote change by revealing the extent to which Black female subjects are misgendered. In this case, all three companies released updated facial analysis platforms that narrowed the accuracy gap between racialized groups of women and men, which in turn improved the accuracy of these models overall.
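To make concrete what it means for an audit to disaggregate accuracy by intersectional subgroup, the following is a minimal Python sketch, assuming hypothetical records of the form (skin type, labeled gender, predicted gender). It is not the procedure or data used by Buolamwini and Gebru (2018); the function names are illustrative and the records are invented. Note that the sketch necessarily inherits the binary labels of the systems being audited, which is precisely the premise questioned in the critique that follows.

```python
from collections import defaultdict


def subgroup_error_rates(records):
    """Misclassification rate for each (skin_type, labeled_gender) subgroup.

    `records` is an iterable of hypothetical (skin_type, labeled, predicted)
    tuples; the field layout is an illustrative assumption, not any vendor's API.
    """
    totals = defaultdict(int)
    errors = defaultdict(int)
    for skin_type, labeled, predicted in records:
        key = (skin_type, labeled)
        totals[key] += 1
        if predicted != labeled:
            errors[key] += 1
    return {key: errors[key] / totals[key] for key in totals}


def aggregate_error_rate(records):
    """Single error rate over all records, ignoring subgroup membership."""
    records = list(records)
    wrong = sum(1 for _, labeled, predicted in records if predicted != labeled)
    return wrong / len(records)


# Invented toy records for illustration only; not data from any audit.
records = (
    [("darker", "female", "male")] * 2
    + [("darker", "female", "female")] * 2
    + [("lighter", "male", "male")] * 12
)

rates = subgroup_error_rates(records)
gap = rates[("darker", "female")] - rates[("lighter", "male")]

print(rates)  # {('darker', 'female'): 0.5, ('lighter', 'male'): 0.0}
print(aggregate_error_rate(records))  # 0.125
print(f"gap: {gap:.2f}")  # gap: 0.50
```

In this toy case an aggregate error rate of 12.5% conceals a 50% error rate for one subgroup; disaggregation makes the disparity visible, but it leaves untouched the assumption that gender/sex can be read from the face at all.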

These projects can be read in two ways. The first, limited critique fits squarely within the auto-essentialist frame, in that it assumes commercial classification systems can indeed read gender/sex from the face accurately, and that what is required is simply more data, incorporating training images of women of color. Corporate interventions have capitalized on this interpretation: IBM quickly offered up the Diversity in Faces dataset noted above (Merler et al., 2019), while Facebook recently published its Casual Conversations dataset, featuring individuals of various skin tones and genders (Hazirbas, 2021). These interventions, however, continue to reproduce auto-essentialist assumptions about the utility and scientific basis of computational identity classification.

The second critique takes a broader lens, highlighting how these systems have racialized gender/sex in such a way that, like past systems of classification, they treat whiteness as the standard for binary facial classification. By showing that gender/sex classification works less effectively on Black women, this critique reveals computer vision systems as reinscribing a history that has weaponized gender categories against women of color, portraying them as less feminine and, as a consequence, dehumanizing them.

In AI, Ain’t I A Woman?, Buolamwini makes this historical connection explicit by referencing Sojourner Truth’s historic 1851 speech “Ain’t I A Woman.” The speech, delivered to a room filled with (mostly White) women, spoke to the inability of her contemporaries in the women’s rights movement to comprehend or incorporate advocacy for and by Black women. The speech challenged the “essentialist thinking that a particular category of woman is essentially this or essentially that” (Brah and Phoenix, 2004).

In her spoken word piece, Buolamwini (2018) invokes the legacies of prominent Black women, including Truth, Ida B. Wells, and Michelle Obama, to highlight how computer vision systems misrecognize and misgender them. While displaying an image of Wells, Buolamwini describes her as the “data science pioneer//Hanging facts, stacking stats on the lynching of humanity” as the screen pans to a classification result stating that she “appears to be male” with 92.9% certainty (see Figure 2). Numerous other errors of this kind masculinize the women or misrecognize elements of their images, such as their hair or clothing: for example, a high-school image of former U.S. First Lady Michelle Obama is labeled as wearing a toupee, and an image of Wells is labeled as wearing a “coonskin cap.”

Screenshot from Buolamwini (2018)’s AI, Ain’t I A Woman? showing Ida B. Wells being labeled as male by Amazon Rekognition.

Such misclassifications expose the colonialist and racist paradigms that have tied essentialized gender/sex to whiteness. This artistic intervention highlights that, despite accuracy gains in commercial AI systems, the relationship between gender/sex and race that was established via science under colonial rule and promulgated during the era of chattel slavery in the Americas—that White faces and bodies shall be the embodiment of the binary, to the denigration of all “others”—continues to serve as the foundation upon which new modes of auto-essentialization are built. Further improvement in the accuracy of these systems may decouple whiteness from current formulations of gender/sex; however, doing so still replicates auto-essentialization by seeking the essence of gender/sex difference in the structure of the face. Here we see the trap into which many marginalized researchers have been pushed: to participate on the terrain of corporate interventions, they must operate within the seemingly inescapable modern reality that these tools exist and are regularly deployed, and thus should be improved within the confines of that reality. This is akin to the critique of “inclusion” offered by Hoffmann (2020): “[i]nclusion positions a certain kind of technology (and, more often than not, tech company) as integral to social progress” (2020, 12).

Case study 2: Gendered “Others” as sexualized security threats

As in early examples of automated gender classification techniques, the implicit claim in using computer vision to secure “female-only” spaces is that it can reveal the “real” identity of a suspect individual: that there is some essence of (binary) gender/sex that might escape the untrained human eye, but which technology can unearth. Giggle, which bills itself as a “female social network” and a “girls-only space,” uses facial analysis technology (“bio-metric gender verification software”) to bar people whom its algorithms do not recognize as women from entering the space. At the time of writing (April 2021), the app’s website reports that it relies on technology developed by Kairos, which is described as “a leading face recognition AI company with an ethical approach to verification, that reflects our globally diverse communities. Through computer vision and deep learning, they recognise females in videos, photos, and the real world”. That is, Giggle’s representatives believe in the existence of a female essence, one that is discoverable via the face and that rises above the confounding effects of “diversity” (which they claim technology can account for). The policing of gendered spaces can be seen in action in the app’s use of classification technologies: the app’s creators explicitly acknowledge that “trans-girls [sic] will experience trouble with being verified,” and require them to undergo extra verification steps (Schiffer, 2020).

Rather than inclusion, however, the goal appears to be to exclude trans women from this essentially “female” space. Instead of abandoning facial analysis technology, the creators of the app doubled down. Giggle CEO Sall Grover wrote a series of blog posts on Medium to defend her decision to use automated gender classification. She describes her experiences with sexual violence, which led her to create “female-only” spaces to guard against the advances of men, who are depicted as using deception to infiltrate women-only spaces and assault “real” women.

Grover also appeals to the supposed authority of “science” on binary sex: “Because I agree with science, and biological sex, I’m a TERF.” The Giggle website claims that the science it relies upon is sound, in the process distancing the company from controversial classification projects of the past: “It’s Bio-Science, not pseudo-science like phrenology” (Schiffer, 2020). Here, Grover leans into the auto-essentialist frame while explicitly attempting to distance it from its colonial antecedents. Yet, as we have explicated above, one can “follow the science” of reading gender/sex from the face back to its colonialist origins.

Grover defends the platform on the grounds of its transnational reach, stating that “Giggle is used in 88 countries around the world. We have users in countries where female rights are virtually non-existent. All of these women, plus women in the USA, Australia, UK and Europe, use Giggle to connect in a safe, private and female environment.” The impulse to single out “the USA, Australia, UK and Europe” as separate from “countries where female rights are virtually non-existent” has its own colonialist undertones, suggesting that White, former imperial and neoimperial states are those in which femininity is properly defended and articulated, all while ignoring the role of colonial projects in enforcing the binary in the first place. This move privileges the White European binary as a corrective to gender non-conforming identities and presents the platform as a virtual border that excludes “male” predators located in those “oppressive,” non-White nations.

Giggle’s position may be an outlier in the business of building social platforms. However, the desire to locate the “real” or “essential” female for the purpose of securing borders, virtual and physical, is perpetuated by computer scientists and by machine learning and computer vision researchers more broadly. For example, computer scientist Karl Ricanek collected transition videos in which transgender individuals documented their use of hormone replacement therapy (HRT) (Mahalingam, 2013). These videos were taken from YouTube, largely without consent, and compiled into the HRT Transgender Dataset (which has since been silently retracted). Ricanek characterizes the people in the dataset as edge cases: a threat to the “accuracy” of facial recognition models. While Ricanek apologized for taking these videos without consent, he justified his research problem with recourse to security and border crossing: “What kind of harm can a terrorist do if they understand that taking this hormone can increase their chances of crossing over into a border that’s protected by face recognition? That was the problem that I was really investigating” (Vincent, 2017). Ricanek’s computer vision research boasts over $18 million in funding, much of it from the US national security apparatus, including IARPA, the FBI, and other federal security agencies.

As Beauchamp argues, however, the use of the HRT dataset in this way is not a new practice; it is consistent with the existing logic of U.S. border security, which has long relied on surveillance techniques to racially profile travelers (Beauchamp, 2016). As a consequence, in addition to positioning transgender bodies as “new objects of study,” the HRT dataset also increases the vulnerability of trans people whose racial and ethnic identities are already highly surveilled (Beauchamp, 2016). It is further likely that the assigned-male body attracts particular scrutiny in such settings, given that applications of facial recognition technology regularly position such faces as inherently threatening and suspicious (Keyes, 2018).

In his statement above, Ricanek echoes the sentiment of Grover: that transgender people are suspect imposters who rely on deceit to trespass the borders guarding female-only spaces or the borders of empire. Ricanek and others are not outliers within computer vision research; many of the justifications proffered for essence-seeking facial analysis and automated gender/sex classification focus on border security. Like earlier scientific efforts to reveal (binary) gender/sex once and for all, auto-essentialist technologies contribute to shoring up the White, cisgender face as the “natural” standard that must be protected from racialized, gender non-conforming “others.”

Conclusion

We have argued that modern automated facial analysis can be understood as a form of auto-essentialization: the latest chapter in the search for an “essence” of gender/sex, this time focusing on the face and relying on the depoliticized tools of automated (and hence allegedly objective) digital technologies to confirm the racialized ideology of the female/male binary. It is rooted in the complex entanglement of imperialist ideologies found in historical practices of measurement, quantification, and classification of human beings, particularly for the control and disciplining of marginalized groups. We also add the following nuance to our argument: historically, the cranium and the face were sites of racialization through the practices of phrenology and physiognomy, while it was the body that served as the major site for the colonial regulation of gender/sex. By contrast, contemporary automated analysis technologies largely rely on the face over the body as a site for both racialized (and cisnormative) gendering.

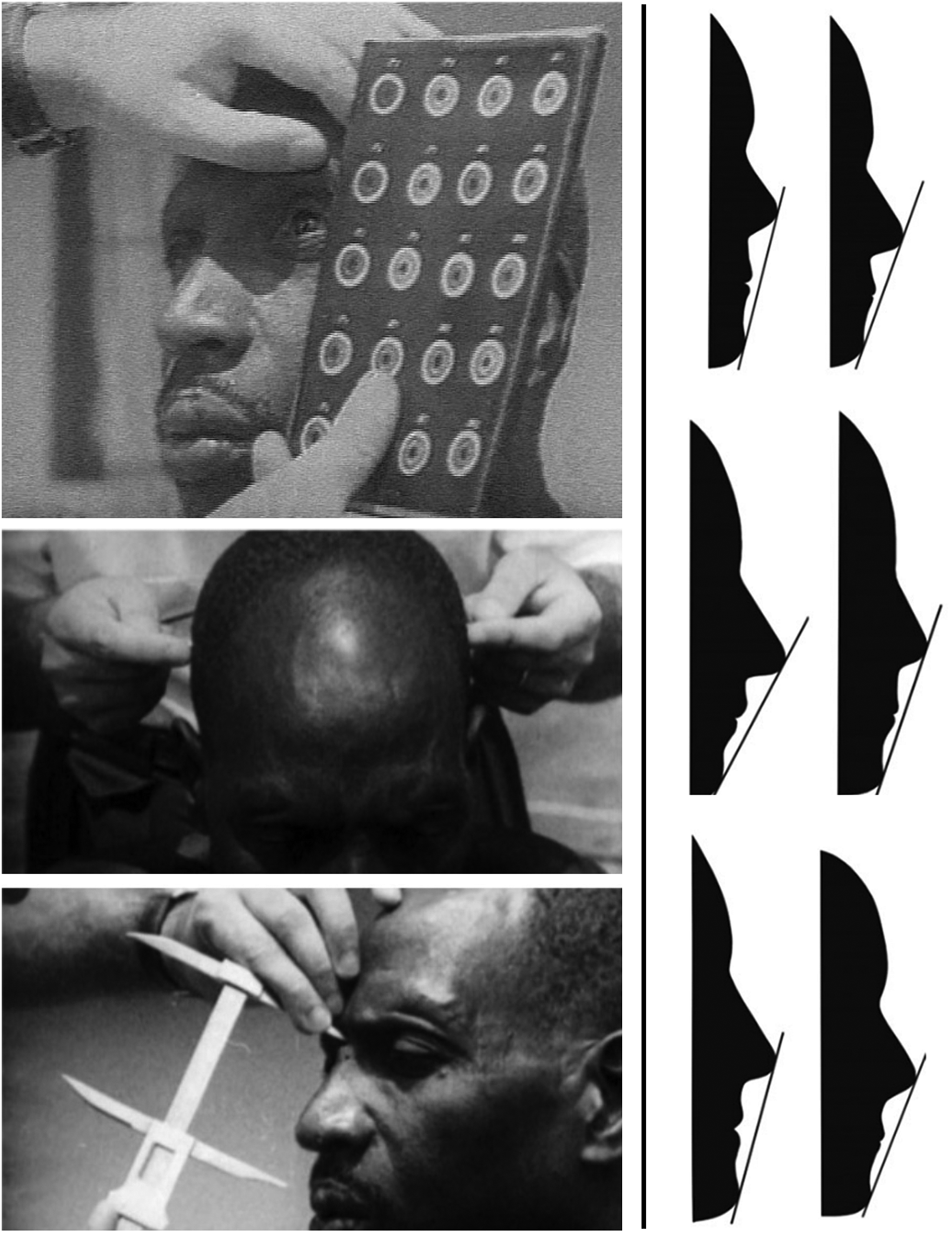

Thus, while the auto-essentialization process is rooted in the colonial analog precedents that we have outlined, it is also doing something new: it is combining these histories to claim the face as a new site for “scientifically” classifying gender/sex. It is therefore not merely an example of historical repetition but also a technological shift that is actively creating new possibilities of, and justifications for, classification projects. The face likely serves as a convenient medium for automated classification efforts for a number of reasons, such as the accessibility of faces and facial images to cameras, the purported lack of bodily invasiveness compared to other biometric approaches, and the fact that the consent of those being classified need not be obtained. However, we have argued that such technologies only become possible, even imaginable, because of the worldview and practices espoused by colonial projects of gendered and racialized classification (Figure 3).

Left: Belgian scientists taking physiological measurements. Photos from Curtis (2011). Right: Silhouettes of racial profiles from Fu et al. (2014), stating: “From left to right: African-American (Male/Female), Asian (Male/Female), Caucasian American (Male/Female).” The figure was originally published in Davidenko (2007).

While gender/sex was previously located in an amalgamation of bodily sources, from genitals to chromosomes, the rise of automated facial analysis tools has transformed the face into a window onto the essence of binary gender/sex. This essence is conceptualized in terms of the categories reified through colonialist Western histories and medical science practices: a dichotomy of male/female, man/woman, masculine/feminine, which are made to align in a unidirectional and heteronormative binary. This essence is further racialized through a reliance on data that has been (and remains) systematically biased towards White faces, reinscribing binary embodiments of gender/sex as inherently White, with faces of other races as less pure, and Black women in particular posited as inherently masculine.

As racialized worldviews of gender/sex become embedded in real-world applications, they reinscribe colonialist histories and imperialist agendas in everyday practice. Gendered classifications in applications like Giggle, which are presented as scientific and therefore objective, narrow the possibilities of seeing and knowing gender/sex otherwise, reinforcing binary categories that actively exclude trans and non-binary identities, while ignoring the unique social locations of Two Spirit, hijra, and a wide spectrum of indigenous and other modern gendered (and non-gendered) identities. Such exclusion directly impacts individuals attempting to engage with or benefit from digital services; but, beyond individuals, it also impacts entire communities of people through a familiar legacy of othering: conform or be erased.

Automated facial analysis technologies aimed at recognizing and verifying specific trans individuals across gender transition operate at an even larger scale, representing state biopolitics: in particular, an inherent distrust of specific groups at the intersection of race and gender/sex. This distrust of minoritized groups is manifest in an entanglement of public, private, and larger state interests. An alarming example is academic research aimed at identifying transgender people across transition out of concern for malicious “biometric obfuscation,” funded by national surveillance entities like the FBI Biometric Center of Excellence (e.g. Mahalingam, 2013; Vijayan et al., 2016). Furthermore, domestic programs police access to social services and spaces, such as health care, women’s shelters, and, increasingly, sports for transgender girls and children (Bettcher, 2007; Spade, 2008; American Civil Liberties Union, 2021). Like so many other modern technological development agendas, such as blockchain (Jutel, 2021), big data efforts (Couldry and Mejias, 2019), data privacy laws (Coleman, 2019), and social media (Oyedemi, 2019), the intricate web of the modern “artificial intelligence arms race” weaves together commercial and state interests with globalization and “trans panic” about the encroachment of gender non-conforming people into sex-segregated spaces.

Historical legacies of race and gender/sex hierarchies cannot be separated from the modern sociopolitics of identity; in their current state, automated facial analysis tasks, from classification to recognition, are intrinsically tied to biopolitical projects, whether designed to explicitly classify characteristics like gender/sex or to implicitly privilege specific racialized and gendered faces (and bodies). Therefore, an open question remains: is it possible for automated facial analysis to be separated from the legacy of gender colonialism and imperialist logics we have detailed in this paper? Perhaps highly constrained efforts, outside the purview of militaristic nation states and corporate interests, focused for example on increasing equity for historically marginalized groups, can mitigate some of the problematic origins of auto-essentialization. Regardless, auto-essentialist tools like automated facial analysis must be accountable to the histories that have shaped them.


Acknowledgements

We’d like to thank Janet Jacobs, not only for her feedback on a preliminary version of this work, but for all the insights gleaned from her course on Gender, Genocide, and Collective Memory. We’d also like to thank Emily Denton, Anthony Pinter, Junnan Yu, Samantha Dalal, and Jed R. Brubaker for their feedback throughout the process of writing this paper.

Declaration of conflicting interest

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

Publication of this article was funded by the University of Colorado Boulder Libraries Open Access Fund.