Abstract

The rapid development of machine-learning algorithms, which underpin contemporary artificial intelligence systems, has created new opportunities for the automation of work processes and management functions. While algorithmic management has been observed primarily within the platform-mediated gig economy, its transformative reach and consequences are also spreading to more standard work settings. Exploring algorithmic management as a sociotechnical concept, which reflects both technological infrastructures and organizational choices, we discuss how algorithmic management may influence existing power and social structures within organizations. We identify three key issues. First, we explore how algorithmic management shapes pre-existing power dynamics between workers and managers. Second, we discuss how algorithmic management demands new roles and competencies while also fostering oppositional attitudes toward algorithms. Third, we explain how algorithmic management affects knowledge and information exchange within an organization, unpacking the concept of opacity at both a technical and an organizational level. We conclude by situating this piece within broader discussions of the future of work and accountability, and by identifying future research steps.


Introduction

From restaurants to try, movies to watch, or routes to take, machine learning (ML) algorithms¹ increasingly shape many aspects of everyday human experience through the recommendations they make and the actions they suggest. Algorithms also shape organizational activity by semi- or fully automating the management, coordination, and administration of a workforce (Crowston and Bolici, 2019). Termed “algorithmic management” or “management-by-algorithm”, this trend has come to be understood as the delegation of managerial functions to algorithms (Lee, 2018; Lee et al., 2015; Noponen, 2019). A defining feature of algorithmic management is the data that fuel its predictive modeling techniques, and many scholars acknowledge that the political economy of data capture is a significant driver in transforming labor norms (Dourish, 2016; Newlands, 2020; Shestakofsky, 2017).

Prior research on algorithmic management has focused on platform-mediated gig work, where workers on non-standard contracts usually make only short-term commitments to a given organization (Harms and Han, 2019; Jarrahi and Sutherland, 2019). In what Huws (2016) describes as a “new paradigm of work”, algorithmic systems in the gig economy track worker performance, perform job matching, generate employee rankings, and can even resolve disputes between workers (Duggan et al., 2019; Wood et al., 2018). Operating as the primary mechanism of coordination in the gig economy (Bucher et al., 2021; Lee et al., 2015), platforms can support millions of transactions a day across disaggregated workforces (Mateescu and Nguyen, 2019). Much of what we know about algorithmic management comes from nascent research in this domain (e.g. Meijerink and Keegan, 2019; Sutherland and Jarrahi, 2018), which has focused in particular on how algorithmic management both substitutes for and complements traditional managerial oversight (Cappelli, 2018; Newlands, 2020).

However, algorithmic management is not confined to platform-mediated gig work (Möhlmann and Henfridsson, 2019). Recent years have also witnessed the parallel development of algorithmic management in more standard work settings, that is, work arrangements that are stable, continuous, and full-time and that involve a direct relationship between the employee and their unitary employer (typically organizations with clearer structures and boundaries) (Schoukens and Barrio, 2017). In contrast to most gig work settings, algorithmic systems in standard organizations emerge within pre-existing power dynamics between managers and workers. As a sociotechnical process emerging from the continuous interaction of organizational members and algorithmic systems, algorithmic management in standard work settings both reflects and redefines pre-existing roles, relationships, power dynamics, and information exchanges. Deeply embedded in the social, technical, and organizational structures of the workplace, algorithmic management emerges at the intersection of managers, workers, and algorithms. As von Krogh (2018) explains, both traditional and non-traditional work settings will be increasingly shaped by the “interaction of human and machine authority regimes” (p. 406).

For instance, algorithms can assist Human Resources (HR) departments in filtering job applicants (Leicht-Deobald et al., 2019), fire warehouse workers deemed insufficiently fast, and improve work morale through fine-grained people analytics (Gal et al., 2020). The growth of algorithmic management should thus be understood as the outcome of a plurality of decisions made by human managers to alter work processes. Each decision occurs not in a vacuum but entwined with diverse considerations and consequences. However, the in-situ interactions between workers, managers, and algorithms in standard work contexts remain relatively uncharted (Duggan et al., 2019; Jarrahi, 2019; Wolf and Blomberg, 2019). As algorithmic management systems move from cutting-edge research to routine aspects of everyday organizations, research is needed to explore the moral implications of algorithmic management and the labor conditions such systems create (Leicht-Deobald et al., 2019).

This article’s key contribution, therefore, is to examine how the emergence of algorithmic management across both standard and non-standard work settings interfaces with pre-existing organizational dynamics, roles, and competencies. The article is structured as follows. First, we establish algorithmic management as a sociotechnical concept, discussing how it develops through organizational choices. We then explain how algorithmic management affects power dynamics at work, increasing the power of managers over workers while simultaneously decreasing the authority of individual managers. Next, we explore how algorithmic management shapes organizational roles through the development of both algorithmic competencies and oppositional attitudes, such as algorithm aversion and cognitive complacency. In doing so, we directly address how the intertwined technical and organizational opacity of algorithms shapes workers’ competencies, as well as information and knowledge exchange. We conclude by situating this piece within broader discussions of the future of work and accountability, and by identifying future research steps.

Algorithmic management as a sociotechnical concept

Discourse around algorithmic management often translates into a simplified narrative of algorithmic systems progressively replacing human roles (Jabagi et al., 2019). However, examining algorithmic management in standard organizational contexts means looking beyond the idea that algorithms will operate autonomously as technological entities (von Krogh, 2018). Algorithmic management should rather be understood as a sociotechnical process emerging from the continuous interaction of organizational members and the algorithms that mediate their work (Jarrahi and Sutherland, 2019). This sociotechnical perspective underscores the mutual constitution of technological systems and social actors, whose relationships are socially constructed and enacted (Sawyer and Jarrahi, 2014). In this view, humans and algorithms form “an assemblage in which the components of their differing origins and natures are put together and relationships between them are established” (Bader and Kaiser, 2019: 656). Mutually constituted with its organizational surroundings, algorithmic management both reflects and redefines existing relationships between managers and workers. The boundaries between the responsibilities of managers, workers, and algorithms are not fixed; they are constantly negotiated and enacted in management practices. In other words, understanding the emerging role of algorithms in organizations means taking a sociotechnical perspective and moving from questions of replacement or substitution toward questions of balance, coordination, contestation, and negotiation.

Standard organizations implement algorithmic management by drawing on a variety of data-driven technological infrastructures, such as automated scheduling, people analytics, or recruitment systems. Automated scheduling systems, for instance, have been widely used in the retail and service industries to predict labor demand and schedule workers based on data on customer demand, seasonal patterns, and past sales (Pignot, 2021). In the case of people analytics, algorithmic systems leverage data on worker behavior to offer managers actionable recommendations on key decisions such as motivation, performance appraisal, and promotion (Gal et al., 2020). Through systems such as Microsoft Teams, organizations can also collect data about the minutiae of a worker’s activity, productivity, and other granular aspects of performance, ranging from the daily behavioral patterns of a specific employee to macro trends in how an organization manages its HR over time toward strategic goals (Galliers et al., 2017). Voice analysis algorithms, for example, are used in call centers to decide whether workers express adequate empathy. Meanwhile, algorithms in Amazon warehouses closely monitor workers’ performance, rate their speed, and terminate workers who fall behind (Dzieza, 2020). Algorithmic decision-making is also becoming popular in recruitment, as manifested in CV screening and algorithmic evaluations of telephone or video interviews (Köchling and Wehner, 2020; Yarger et al., 2020).

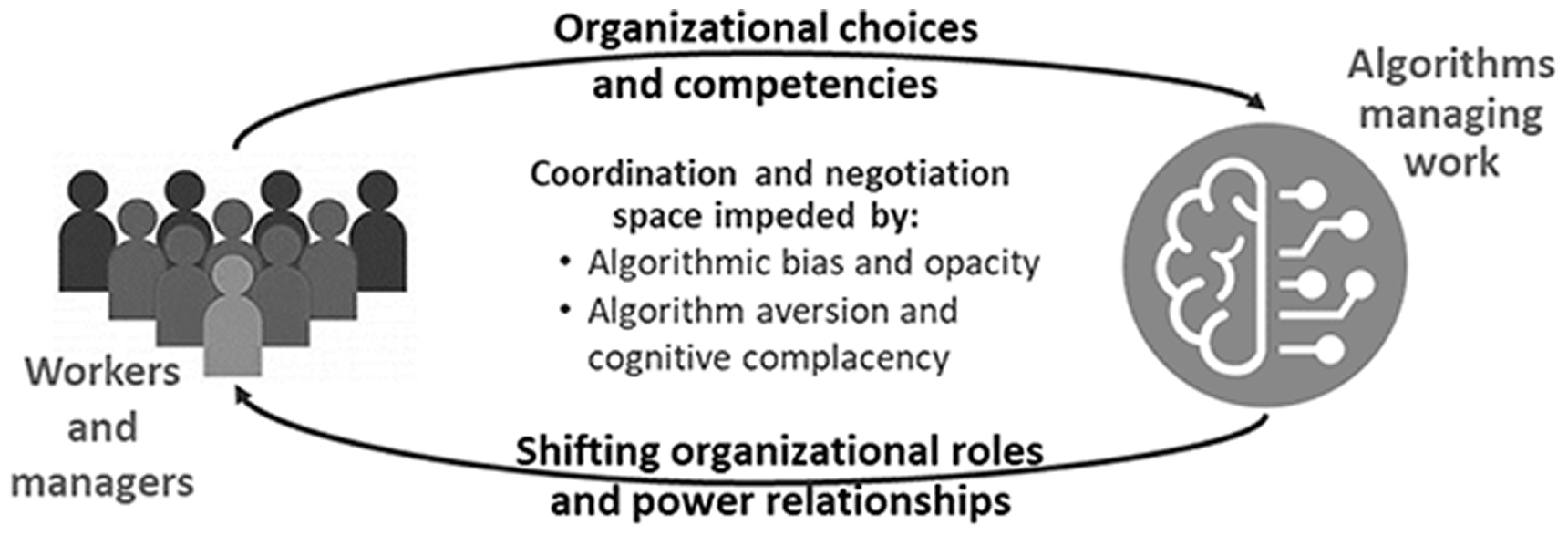

Focusing primarily on the technology involved in algorithmic management, some perceive algorithms as “technocratic and dispassionate” decision makers that can provide more objective and consistent decisions than humans (Kahneman et al., 2016; Kleinberg et al., 2018). However, it is important to underline that algorithms lack any sense of individual purpose: their objectives must be defined for them, and they must be deliberately fed and trained on organizational data. As such, there is a significant element of organizational choice in where algorithmic management is implemented and in which processes are replaced or augmented. Moreover, there is considerable organizational choice around whether to implement the suggestions and recommendations made by algorithmic systems. In this sense, automatically implementing algorithmic suggestions is itself a distinct organizational choice. As Fleming (2019: 27) notes: “It is not technology that determines employment patterns or organizational design but the other way around. The specific use of machinery is informed by socio-organizational forces, with power being a particularly salient factor.” We go one step further and, as illustrated in Figure 1, present algorithmic management as a sociotechnical phenomenon shaped by both social and technological forces. In the rest of this article, we examine how algorithmic management may shift organizational roles and power structures while being shaped by organizational choices and competencies.

Figure 1. Algorithmic management as a sociotechnical phenomenon representing both social and technological forces.

Considering algorithms within a broader notion of management thus makes visible the wider ramifications of algorithmic management. It requires that we think about interactions between humans and algorithms in terms of the direction and development of organizations over the long term, and not just in terms of the day-to-day experiences of the worker. Understanding that algorithmic systems are socially constructed and embedded in existing power dynamics also means recognizing that these systems are neither created nor operated outside the biases rooted in the organizational cultures in which they are implemented (Kellogg et al., 2020). For this reason, algorithmic systems can inherit biases embedded in previous decisions and reenact historical patterns of bias, discrimination, and inequality (Chan and Wang, 2018; Lepri et al., 2018). If an organization has demonstrated historical biases in hiring, firing, pay, or other managerial decisions, these biases will be “learned” by an algorithm trained on those decisions (Keding, 2021). Lambrecht and Tucker (2019), for example, found that fewer women than men were shown ads about STEM careers, even though the ads were gender neutral by design. Amazon also scrapped its AI-powered recruiting engine because it did not rank applicants for technical posts in a gender-neutral manner (The Guardian, 2018). The company discovered that the underlying algorithm had been built on patterns in resumes submitted to the company over a 10-year period, which reflected the broader male-dominated IT industry.

Algorithmic management shapes power relationships

Increased power to managers

First and foremost, algorithmic systems help managers overcome cognitive limitations in dealing with data overload (Jarrahi, 2018). Algorithmic systems for tasks such as CV filtering or scheduling can streamline work processes and improve overall job quality for those involved. However, the use of advanced algorithms also creates new opportunities for managers to exercise control over the workforce (Kellogg et al., 2020; Shapiro, 2018). As with the implementation of organizational information systems, it is often workers who are the subjects of algorithmic management and managers who implement its decisions. In tune with the long-standing spirit of Taylorism and scientific management, algorithmic management carries the risk of treating workers as mere “programmable cogs in machines” (Frischmann and Selinger, 2018). As Bucher et al. (2019) note in their study of Amazon Mechanical Turk workers, this commodification and alienation of workers is a widespread issue, particularly in digitally mediated work, where power imbalances can have wide social impacts.

Platform-mediated gig work is critically dependent upon algorithmic systems for control, and there is a clear hierarchy of power between the workers, the platform, and the service recipients (which are, in some cases, organizations). Current research highlights implicit and explicit power asymmetries between workers and service recipients, as well as between workers and platforms (Newlands, 2020; Shapiro, 2018). As clients, service recipients enjoy the upper hand in transactions. Likewise, platforms use various control mechanisms to withhold information from workers or even remove them from the platform. These control mechanisms are visible and often focus on three major outcomes: ensuring the integrity of transactions, protecting the platform from disintermediation, and monitoring workers’ performance (Jarrahi et al., 2019; Newlands, 2020). Since many work activities are mediated via platforms, gig work organizations can use “soft” forms of workforce surveillance and control to monitor how workers spend their time and where they are located (Duggan et al., 2019; Shapiro, 2018). Wood et al. (2018) also found that algorithmic management creates symbolic power structures around features such as reputation and ratings. Yet, while “analogue” parallels to gig work exist, such as standard taxi driving, food-delivery work, or creative freelancing, gig work platforms usually emerged as new organizations without pre-existing relationships between management and the workforce. Deliveroo, for instance, was founded ex nihilo rather than as the digital transformation of a pre-existing food-delivery company. Uber, similarly, was never a taxi company with “standard” workplace relationships. Because research on algorithmic management has to date focused primarily on gig work settings, it is important to highlight that key lessons and findings from gig work do not translate perfectly to a standard context.

In more standard work settings, where digital platforms and algorithmic systems are not the primary means of organizing work, algorithmic control adds to pre-existing power dynamics and regimes of control. In these contexts, most workers are connected to the organization through more conventional coordination mechanisms such as organizational hierarchies and traditional employment arrangements. As such, the way algorithmic management emerges within these existing sociotechnical structures is a critical factor in determining the motivation, scope, and implementation of algorithmic systems. Rather than the mass-scale algorithmic management observed in gig work, standard organizations may replace or augment only a small number of managerial functions with algorithmic systems. One key reason is the high cost and uncertain returns of algorithmic systems, which usually have to be sourced from third-party vendors (Christin, 2017). Drawing on algorithmic decisions also requires heavy investments of time and energy, such as aligning human and algorithmic cognitive systems (Burton et al., 2020).

A primary consideration behind the more gradual roll-out of algorithmic management in standard work settings is that augmenting or replacing managerial processes directly increases or decreases the managerial prerogatives already in place. Accordingly, we observe that algorithmic systems are rolled out most swiftly when they increase managerial power. Automated scheduling systems, widely adopted in the retail industry, shift more power from workers to managers. The dynamic, “just-in-time” approach of these systems provides a justification for allocating shifts at short notice and in smaller increments in response to changes in customer demand. The fluctuating schedules imposed by these automated systems have negative consequences for workers, such as higher stress, income instability, and work–family conflicts (Mateescu and Nguyen, 2019).

Using algorithms to nudge workers’ behaviors can be more subtle, but no less effective. For example, organizations may use sentiment analysis algorithms in their people analytics efforts to assess the “vibes” of teams and to identify ways to increase productivity and compliance (Gal et al., 2020). Inspired by the gig economy, where gig workers may receive pay raises based on client reviews, firms such as JP Morgan use algorithms to collect and analyze constant feedback in order to evaluate employee performance and determine compensation (Kessler, 2017). Darr (2019) also describes the use of “automatons” in a computer chain store, where the company leverages algorithmically generated sales contests to control workers’ behavior.

Algorithmic surveillance techniques have also emerged in more totalizing organizations, which use wearables such as wristbands or harnesses to analyze workers’ performance and nudge them toward desirable behaviors through vibration (Newlands, 2020). In warehouses, for example, algorithms can automatically enforce the pace of work, in some cases demoralizing workers or even causing physical injuries (Dzieza, 2020). Truckers’ locations and behaviors can also be monitored through GPS systems, allowing dispatchers to algorithmically evaluate their performance (Levy, 2015). Algorithmic surveillance has also been implemented to manage flexible and remote workforces. During the Covid-19 pandemic, which required millions of people to work remotely, organizations began to roll out systems such as InterGuard to monitor the remote workforce by collecting and analyzing computer activity, such as screenshots, login times, and keystrokes, and by measuring productivity and idle time (Laker et al., 2020; Newlands et al., 2020). Although researchers have identified strategies that workers use to work around algorithmic control and reclaim their agency (Bucher et al., 2021), workers often find algorithmic systems difficult to confront and change (Watkins, 2020).

Surveillance through algorithms in the workplace can also take a “refractive” form, in which the “monitoring of one party can facilitate control over another party that is not the direct target of data collection” (Levy and Barocas, 2018: 1166). Retailers, for example, are known to leverage customer-derived data (gathered from many data points, such as close monitoring of foot traffic) and algorithm-empowered operational analysis to optimize scheduling decisions, automate self-service, and even replace workers. Brayne (2020) examines how big data policing strategies and algorithmic surveillance technologies introduced to monitor crime came to be used to surveil police officers themselves. The officers’ reaction against this effort is telling of the ways in which surveillance can reconfigure work and professional identity. Officers objected to the surveillance not only as an entrenchment of managerial oversight and a threat to their independence, but also as a move away from the kind of experiential knowledge that an officer brings to their work.

Decreased power to managers

While emerging as a powerful mechanism to increase the control of management as a whole, algorithmic management can also decrease the power and agency of individual managers. For years, research has documented the decline of middle managers in post-bureaucratic organizations (Lee and Edmondson, 2017; Pinsonneault and Kraemer, 1997) and has sought to define what functions and identity these positions may entail (Foss and Klein, 2014). Recent advances in AI and the prospect of introducing algorithmic management may further complicate these roles (Noponen, 2019).

In many traditional organizational settings, the implementation of algorithms is still based on dashboards or “decision support” systems, which generate recommendations to managers on actions to take. Explaining how managers may work alongside algorithms, Shrestha et al. (2019) describe three categories: full delegation, sequential decision-making, and aggregated decision-making. In cases of full delegation, managerial agency is almost entirely subsumed into the algorithmic system, and the key moment of managerial intervention becomes the design and development of the system.

Yet delegating decision-making to algorithms may deprive managers of critical opportunities to develop tacit knowledge, which emerges primarily from experiential practice. Tacit knowledge often derives from opportunities to exercise judgment when directly involved in decision-making, and social practices of decision-making through trial and error help humans retain and internalize practical knowledge. A recent empirical study of recruiters using AI-based hiring software provides a nuanced picture (Lin et al., 2021). While AI-based sourcing tools provided opportunities to identify new talent pools, as well as newer keywords and skill sets associated with different jobs, recruiters did not have enough control over the algorithms’ recommendation criteria. This lack of control left recruiters merely accepting good recommendations and sometimes deprived them of the chance to develop their own strategies. Taking away these first-hand experiences may risk turning managers into “artificial humans” who are shaped and used by smart technology, rather than the other way around (Demetis and Lee, 2018). While a significant amount of work on algorithmic management has focused on decision-making, contemporary notions of management imply a much broader array of skills and responsibilities. This prompts us to consider what it would mean for an algorithm to take on the role of liaison, spokesperson, or figurehead, for instance.

An interesting research direction along these lines focuses on whether and how algorithmic management may extend into the realm of what Harms and Han (2019) call “algorithmic leadership,” where smart machines assume leadership activities of managers such as motivating, supporting, and transforming workers. Future research may ask whether algorithms can go beyond effectively handling the task-related elements of leadership and automate its relational aspects as well, given the self-learning capacities of cutting-edge techniques such as AlphaZero.² Researchers may also explore “how humans come to accept and follow a computer leader” (Wesche and Sonderegger, 2019: 197) or “whether, or when, humans will prefer to work with AIs that appear to be human or are clearly artificial” (Harms and Han, 2019: 75). An alternative argument holds that soft skills and human intuition will command a premium (Ferràs-Hernández, 2018). In a more collective, cultural sense, workers and their supervisors enter relationships based on social exchange and view their supervisors’ decisions in the context of norms of reciprocity and commitment (Cappelli et al., 2019). However, such an empathetic relationship based on goodwill is almost impossible to develop with an algorithmic manager (Duggan et al., 2019).

Competencies for an algorithmic organization

Shifting roles

The emergence of algorithmic management is changing the roles of workers and managers, who now need to manage and interact with algorithmic systems. A sociotechnical perspective holds that social actors play an active role in shaping and appropriating technological systems. In other words, workers and managers are not passive recipients of algorithmic outputs; they can find ways to develop a functioning understanding of algorithmic systems, work around issues such as trust, and align the systems with their needs and interests.

Addressing the need for new organizational roles to handle algorithmic systems, Wilson et al. (2017) identify three emerging jobs: trainers, explainers, and sustainers. Trainers teach algorithms how to perform organizational tasks; explainers open the “black boxes” of algorithmic systems by explaining their decision-making approach to business leaders; and sustainers ensure the fairness and effectiveness of algorithms to minimize their unintended consequences. Gal et al. (2020) similarly suggest that organizations need to establish what they term “algorithmists”, who monitor algorithmic ecosystems. They are “not just data scientists, but the human translators, mediators, and agents of algorithmic logic” (Gal et al., 2020: 10). Given a long-standing organizational culture obsessed with efficiency and the extraction of maximum value from workers, there is a risk that algorithmic competencies will be siloed within a small number of organizational members. One consequence of this culture is a mixture of upskilling and deskilling across disparate groups of workers with regard to managerial systems. While some groups in organizations will be trained to use the new algorithmic systems, thus increasing their power and position, others will not be trained and will instead be forced to implement decisions that they previously had the power to shape. Both the organization and the worker may come to view the deskilled manager as merely an “appendage to the system” (Zuboff, 1988).

Indeed, years of research have documented a common system design mindset that defines workers’ roles and responsibilities around automation demands: the designer seeks to automate as many sub-components of the sociotechnical work system as possible and leaves the rest to human operators. An unintended consequence of such design approaches is to deskill humans and relegate them to uninspiring roles. Research on algorithmic management in the gig economy reflects the same findings: workers are treated as replaceable “human computers” and may engage in (ghost) work that is repetitive, results in no new learning, and serves only to train AI systems (Gray and Suri, 2019).

Algorithmic competencies

In a workplace where algorithmic management is implemented, it is essential for managers and workers to develop algorithmic competencies (Jarrahi and Sutherland, 2019). However, research on algorithmic competencies, particularly in standard work contexts, is still in its infancy. Algorithmic competencies can be understood as skills that help workers develop symbiotic relationships with algorithms. In addition to data-centered analytical skills that facilitate interactions between workers and algorithms, algorithmic competencies involve critical thinking. Workers “have a growing need to understand how to challenge the outputs of algorithms, and not just assume system decisions are always right” (Bersin and Zao-Sanders, 2020). Since algorithmic management involves a complicated network of people, data, and computational systems, the ability to understand, manipulate, and address algorithmic systems at both a technical and an organizational level will determine an individual’s agency and power at work. Grønsund and Aanestad (2020), for instance, observed that the role of human workers shifted with the introduction of algorithmic systems and that algorithmic competencies include auditing and altering algorithms.

An individual’s power in relation to the algorithm, and in relation to the organization as a whole, will therefore depend on their ability to understand and interact with algorithmic systems. A lack of competency with the tools of work can reduce workers’ sense of autonomy over their work, as well as their ability to make informed decisions and to self-reflect (Jarrahi et al., 2019). Without algorithmic competencies, and without workers taking an active role in constructing them, artificial and human intelligence do not yield “an assemblage of human and algorithmic intelligence” (Bader and Kaiser, 2019). Conversely, because understanding algorithms confers power, algorithmic opacity can be employed as a control mechanism to maximize the organization’s objectives. Indeed, recent research shows how gig work platforms withhold information about how algorithms operate to maintain soft control of the workforce (Shapiro, 2018). Withholding information on how platforms’ payment algorithms operate, for instance, serves to prevent gig workers from collectivizing, as Van Doorn (2020) details in his study of Berlin-based delivery workers.

Attitudes toward algorithms (algorithm aversion and cognitive complacency)

The development of algorithmic competencies is, however, bounded by individual attitudes toward algorithms (Lichtenthaler, 2018). Two such attitudes, algorithm aversion and cognitive complacency, can be viewed as oppositional because they reflect resistance either to using algorithms or to understanding and actively shaping their deployment.

Algorithm aversion refers to people’s hesitance to use algorithmic results, usually after observing imperfect performance by algorithms; it can occur even in situations where the algorithm may outperform humans on certain accuracy measures (Dietvorst et al., 2016; Prahl and Van Swol, 2017). Algorithm aversion reveals a lack of trust in algorithm-generated advice and has been observed among professional forecasters in various domains, where managers resisted integrating available forecasting algorithms into their work practices. The reasons behind algorithm aversion are not fully understood. Recent experimental studies suggest that, after receiving bad advice from both human and computer advisors, decision-makers’ use of computer-provided advice decreased more sharply, and that human decision-makers still find more common ground with their human counterparts than with non-human, artificial systems (Prahl and Van Swol, 2017). Dietvorst et al. (2016) also suggest that algorithm aversion decreases if decision-makers can retain some control over the outcome and the option to modify the algorithm’s inferences. Algorithm aversion can lead to a lack of functioning understanding of how algorithms actually operate and how they affect one’s own work.

However, a critical distinction can be drawn between aversion to algorithms in general and aversion to specific algorithmic systems. For instance, workplace monitoring is more likely to be accepted if it enhances labor productivity, but tends to be rejected if it is used to monitor health and performance (Abraham et al., 2019). It must be underlined that the implementation of algorithmic management is usually top-down and imposed upon the majority of the workforce, who have limited power to resist. Kolbjørnsrud et al. (2017), for instance, demonstrate that support for the introduction of algorithmic management correlates with rank and is affected by cultural and national differences. As they explain: “top managers relish the opportunity to integrate AI into work practices, but mid-level and front-line managers are less optimistic”. Isomorphic pressures to “keep up” with rival organizations, or to maintain a sense of digital innovation, can thus result in top management implementing algorithmic management systems that are neither welcomed nor necessary.

Counterbalancing algorithm aversion, however, is a tendency toward cognitive complacency (Logg et al., 2019). Cognitive complacency may manifest itself when human decision-makers do not inquire into the factors driving algorithmic inferences (Newell and Marabelli, 2015). In organizational contexts, algorithmic decision-making can “lead to very superficial understandings of why things happen, and this will definitely not help managers, as well as ‘end users’ build cumulative knowledge on phenomena” (Newell and Marabelli, 2015: 10). Previous research suggests that increased workload and time pressure in organizations may raise the likelihood that workers overrely on automated systems and overuse automated advice. That is, as workload increases, workers may cut back on actively monitoring automated systems and uncritically accept their decision outputs to keep up with task demands (Chien et al., 2018). The procedural character of an algorithm may also be read as a kind of neutrality or objectivity, leading its decisions to be taken as authoritative by default.

This reluctance to either understand or critique algorithmic systems echoes similar observations regarding a “machine heuristic”, a mental shortcut whereby people assume that interactions with machines (as opposed to other human beings) can be more trustworthy, unbiased, or altruistic (Sundar and Kim, 2019). As such, people may overtrust intelligent agents and defer to them in high-stakes decision-making, such as in emergency situations (Wagner et al., 2018). Earlier research on automation suggests that, in some contexts, humans may attribute more value and less bias to decisions generated by automated systems than to other sources of expertise (Parasuraman and Manzey, 2010). However, specific elements of an organizational context can also fuel cognitive complacency. In this regard, what is termed cognitive complacency may often be a manifestation of helplessness arising from power imbalances.

Opacity

In a context where knowledge is power, how algorithmic management develops in standard work settings is fundamentally shaped by access to knowledge, specifically information about and understanding of the algorithmic systems. This is primarily an issue of algorithmic opacity, which operates sociotechnically through an intertwining of technical and organizational features. For example, Burrell (2016) distinguishes among opacity as intentional concealment (such as with corporate trade secrets), opacity due to a lack of technical literacy, and opacity due to algorithmic complexity and scale. Here, we discuss two important, intertwined facets of opacity that affect the performance of algorithmic management in organizations: technical opacity and organizational opacity. While technical opacity is rooted in the specific material features and design of emerging algorithmic systems, organizational opacity concerns how algorithms may reinforce the opacity of broader organizational choices.

Technical opacity

In terms of technical opacity, a frequent concern around algorithmic management is the opaque or “black box” character of AI systems (Burke, 2019). This term should be disaggregated, however, as the literature describes several different ways in which algorithms are “black-boxed” (Obar, 2020). First, an algorithm may be black-boxed in the way that any computational or bureaucratic system can be: once in place, its functioning is taken for granted, and the decisions or contingencies that went into its design may never be revisited. Newell and Marabelli (2015: 5) observe that “discriminations are increasingly being made by an algorithm, with few individuals actually understanding what is included in the algorithm or even why.” Second, black-boxing has been ascribed to algorithms as an inherent property of the computationally convoluted ways in which they operate. Many ML techniques, such as neural networks, are immensely complex, often involving many layers of computational processing over high-dimensional data. Such complexity often makes it difficult for those who are not AI experts to understand, even at a high level, how these techniques operate (Shrestha et al., 2019).

In response to this need for greater human comprehension of AI systems, a rapidly growing subfield called “Explainable AI” (XAI) has emerged within the technical AI community (Adadi and Berrada, 2018). Spurred by the U.S. Defense Advanced Research Projects Agency program of the same name, XAI techniques attempt to render highly complex models more understandable to humans through various technical methods. These can produce “local” or “global” explanations: a local explanation accounts for a particular input/output prediction (e.g. why did the model assign label X to input Y?), whereas a global explanation relates to the model overall (e.g. which features does the model weigh most heavily when reaching a prediction?) (Adadi and Berrada, 2018). Such methods mainly target the form of black-boxing associated with ML’s inherent complexity. Yet scholars have noted a gap between technical XAI advances (which are often most useful to AI developers for debugging) and the kinds of explanations that workers might need or want in actual use settings (Gilpin et al., 2019). In the context of algorithmic management, different concerns around explainability become salient, one of which is the varying technical literacy of workers who might interact with AI systems (Wang et al., 2019).
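To make the local/global distinction concrete, a minimal Python sketch follows. It assumes a simple linear scoring model rather than a deep neural network, and the feature names, weights, and worker records are hypothetical, invented purely for illustration. Under that assumption, a local explanation can attribute one prediction to per-feature contributions relative to an average input, and a global explanation can be summarized as the average magnitude of those contributions across a dataset.

```python
from statistics import mean

# Hypothetical linear scoring model: score = BIAS + sum(weight * feature value).
# Feature names and weights are invented for illustration only.
WEIGHTS = {"tasks_completed": 0.5, "avg_response_minutes": -0.2, "customer_rating": 1.5}
BIAS = 1.0


def predict(x: dict[str, float]) -> float:
    """Score a single input with the linear model."""
    return BIAS + sum(WEIGHTS[f] * x[f] for f in WEIGHTS)


def feature_means(data: list[dict[str, float]]) -> dict[str, float]:
    """Average value of each feature, used as the reference ("typical") input."""
    return {f: mean(row[f] for row in data) for f in WEIGHTS}


def local_explanation(x: dict[str, float], baseline: dict[str, float]) -> dict[str, float]:
    """Local explanation: each feature's contribution to one prediction.

    For a linear model, the contributions sum exactly to
    predict(x) - predict(baseline).
    """
    return {f: WEIGHTS[f] * (x[f] - baseline[f]) for f in WEIGHTS}


def global_explanation(data: list[dict[str, float]]) -> dict[str, float]:
    """Global explanation: mean absolute contribution of each feature over the dataset."""
    baseline = feature_means(data)
    contributions = [local_explanation(row, baseline) for row in data]
    return {f: mean(abs(c[f]) for c in contributions) for f in WEIGHTS}


# Hypothetical records for three workers.
example_data = [
    {"tasks_completed": 10, "avg_response_minutes": 5, "customer_rating": 4.0},
    {"tasks_completed": 14, "avg_response_minutes": 15, "customer_rating": 4.5},
    {"tasks_completed": 6, "avg_response_minutes": 10, "customer_rating": 3.5},
]

if __name__ == "__main__":
    baseline = feature_means(example_data)
    print("local:", local_explanation(example_data[1], baseline))
    print("global:", global_explanation(example_data))
```

For the second hypothetical worker, the local explanation attributes +2.0 to `tasks_completed`, -1.0 to `avg_response_minutes`, and +0.75 to `customer_rating`; together these account for the gap between that worker’s score and the score of an average input. Globally, `tasks_completed` carries the largest average contribution. This exact decomposition holds only because the model is linear; the complex models discussed above require dedicated XAI methods that approximate such attributions.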

Organizational opacity

In addition to technical opacity, there is organizational opacity: the withholding of information by the organization for strategic reasons or to protect intellectual property. Algorithmic opacity can be compounded by organizational relationships or power dynamics within and outside the organization. Developing algorithmic management systems entails high upfront costs with uncertain long-term benefits (Keding, 2021). The scarcity and high cost of AI talent explain why most gig work platforms require extensive venture capital funding to develop the underlying technological infrastructure. While gig work platforms are opaque in their processes, most of their algorithms are developed “in-house”, giving their management the ability to continuously “tweak” them. Indeed, Uber has become notorious for continuously changing its algorithms, to the detriment of both workers and customers. In standard (non-platform) organizations, however, technological infrastructure is often provided through the cloud by third-party AI suppliers on an AI-as-a-service basis (Parsaeefard et al., 2019). Organizations using Microsoft 365, for instance, may rely on its “Productivity Score” to rate worker performance based on how much workers use Microsoft 365 products (e.g. Word, Excel, or Teams). This externalization, however, can not only restrict managers’ agency in “tweaking” the algorithms but also prevent them from understanding how the algorithmic systems operate.

From an intra-organizational perspective, another major concern is the opacity of the broader organizational workflow in which AI systems are embedded, and of the “high” or “low” stakes involved along that flow (Rudin, 2019). The overarching workflow may itself be complex, with several processes unfolding across time and space. In connection with the concept of algorithmic authority, Polack (2020) describes how professional gatekeeping may hinder algorithmic transparency, since only ML developers or top managers may feel the need (or be given permission) to understand how the algorithmic system works.

Overcoming opacity

Recently, regulatory efforts have sought to make algorithms more transparent and explainable (Felzmann et al., 2019). Transparency techniques can be applied to some degree within organizational settings. One approach is the algorithmic audit, which is predicated on the idea that algorithmic biases may produce a record that can be read, understood, and altered in future iterations (Diakopoulos, 2016; Silva and Kenney, 2018). Alternatively, Polack (2020) has proposed that, instead of reverse-engineering algorithms, effort be invested in “forward-engineering” them to establish how their design is shaped by varying constraints. Auditing is especially important in enterprise applications of AI, such as in regulated industries (e.g. health/medical, finance), as well as in the European Union, where the GDPR has been argued to grant individuals a “Right to Explanation” (Casey et al., 2019). Disclosing several significant types of information (including human involvement, data, the model, inferencing, and algorithmic presence) could help foster a kind of algorithmic literacy (Diakopoulos, 2016; Jarrahi and Sutherland, 2019). As organizations increasingly adopt (or even rely on) algorithms as the backbone of people management, workplace-related concerns may make algorithmic management a pressing issue for contemporary labor law.
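As a minimal sketch of what reading such a record might involve, the Python below assumes (hypothetically) that an organization logs each algorithmic decision together with a worker-group label and whether the outcome was favorable to the worker. It compares favorable-outcome rates across groups and flags groups that fall well below the best-treated group. The log format, group labels, and 0.8 threshold are illustrative assumptions; an actual audit would also examine the data, the model, and human involvement, not only outcome disparities.

```python
from collections import defaultdict

# Hypothetical decision record: one (worker_group, favorable_outcome) entry per
# algorithmic decision, e.g. whether a requested shift was granted.
DecisionLog = list[tuple[str, bool]]


def favorable_rates(log: DecisionLog) -> dict[str, float]:
    """Share of favorable outcomes for each group in the log."""
    totals: dict[str, int] = defaultdict(int)
    favorable: dict[str, int] = defaultdict(int)
    for group, outcome in log:
        totals[group] += 1
        favorable[group] += int(outcome)
    return {group: favorable[group] / totals[group] for group in totals}


def audit(log: DecisionLog, threshold: float = 0.8) -> dict[str, float]:
    """Flag groups whose favorable rate is below `threshold` times the best group's rate.

    Returns {group: ratio to best rate} for flagged groups only. The 0.8 default
    is an illustrative assumption, not a legal or organizational standard.
    """
    rates = favorable_rates(log)
    best = max(rates.values(), default=0.0)
    if best == 0:
        return {}  # no favorable outcomes at all, so no ratio can be computed
    return {g: r / best for g, r in rates.items() if r / best < threshold}


if __name__ == "__main__":
    log = [("A", True)] * 8 + [("A", False)] * 2 + [("B", True)] * 5 + [("B", False)] * 5
    print(favorable_rates(log))  # {'A': 0.8, 'B': 0.5}
    print(audit(log))            # {'B': 0.625}
```

Comparing each group with the best-treated group, rather than with a fixed target rate, keeps the check meaningful when overall approval levels shift between iterations of the algorithm. A flagged group is a prompt for further inquiry into the record, not proof of bias.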

However, in the absence of labor-specific and organization-specific policies governing algorithmic management, access to knowledge about which algorithms are deployed, how they are enacted, and what impact they have on each worker is limited. In platform-mediated gig work, considerable attention has been devoted to “unboxing” the algorithms, that is, to ascertaining how the different algorithmic systems function (Chen et al., 2015; Van Doorn, 2020). Algorithmic management has become a target of intense analysis and critique by the disparate workforce, the media, and the research community. As a result, more information is available about how gig work platforms operate than about how more everyday instances of algorithmic management function, which are easier to overlook and are not centralized within a single platform. Continuous audits of algorithmic systems within an organization may provide an internal form of inquiry into how algorithms organize work-related processes (Buhmann et al., 2020). Organizations can also coordinate regular opportunities for communication and deliberation that allow stakeholders to collectively assess the development and impact of algorithmic management systems. The transparency by design framework (Felzmann et al., 2020) or a participatory AI design framework (Lee et al., 2019) can provide procedures and tools that enable such stakeholder participation. Yet one caveat is that developing such forms of transparency still requires the active engagement of workers to uncover the information. As the gig work setting demonstrates, algorithmic opacity is not overcome without struggle, effort, and risk.

Discussion and conclusion

Algorithmic management is changing the workplace and the relationships among workers, managers, and algorithmic systems. Three key trends have supported its rise: first, shifting norms of what constitutes work (e.g. project-centric work arrangements, platform work, and non-standard contracts); second, the expanding technical capabilities of machine-learning-based algorithms to perform discrete managerial tasks (Khan et al., 2019); and third, widespread local organizational choices to adopt algorithmic management in pursuit of economic and strategic goals.

As intelligent systems continue to reshape our conceptions of work knowledge, boundaries, power structures, and overall organization, self-learning algorithms have the potential to chip away at several foundational notions of work and organization. For example, the deployment of intelligent systems will usher in new work systems with different divisions of labor between machines and humans, in which more mundane and data-centric tasks are assigned to machines while humans engage in tasks requiring social intelligence, tacit understanding, or imagination. Moreover, managers’ roles will be redefined around strategic and creative thinking rather than solely the coordination of organizational transactions. While public concerns today focus on machines taking jobs from humans, care and attention are also needed regarding how these machines will contribute to the management of human actions.

Adopting a sociotechnical perspective, we have argued that algorithmic management embodies both social and technological elements that interact with one another and together shape algorithmic outcomes. Algorithms must be understood within the social and political context of employment relationships (Orlikowski and Scott, 2016). Issues such as opacity in the application of algorithms in organizations derive from the interaction between the technological and material characteristics of algorithms and their surrounding organizational dynamics. For example, unique characteristics of emerging AI algorithms (powered by deep learning) set them apart from previous generations of AI systems and render their inferences intrinsically opaque owing to the complexity of the underlying neural networks. However, organizational politics and an interest in minimizing public disclosure of decision-making processes can further deepen, or even capitalize on, the opacity of algorithmic management to deflect accountability. As such, it is often hard to pinpoint the origin of these problems or to separate the two elements, since the social and the technical are entangled in current practices and are co-constitutive.

A driving concern throughout the implementation of algorithmic management is organizational accountability: holding algorithmically driven decisions accountable to various stakeholders within and outside the boundaries of the organization (Diakopoulos, 2016; Mateescu and Nguyen, 2019). Discussions of algorithmic accountability frequently address the transparency of algorithms, how organizations practically engage with opaque algorithms, and how to foster trustworthiness among organizational stakeholders (Buhmann et al., 2020). When addressing systemic discrimination, even simple oversight of algorithmic functions is problematic owing to the multiplicity of stakeholders, interests, and sociopolitical factors innate to algorithms. For example, when controversy erupted at Stanford Medical Center over the misallocation of Covid-19 vaccines among different stakeholder groups, blame was placed on a distribution “algorithm”: administrators had been prioritized over frontline doctors. However, the algorithm was not a complicated deep learning model but a rather simple decision-tree algorithm designed by a committee (Lum and Chowdhury, 2021). A technocentric culture often fails to provide full rationales for decision-making and presents algorithmic results to users as “facts” rather than probabilistic predictions. Rubel et al. (2019) call this “agency laundering”, whereby human decision-makers launder their agency by distancing themselves from morally suspect decisions and assigning the fault to automated systems. This organizational approach runs counter to algorithmic accountability, which “rejects the common deflection of blame to an automated system by ensuring those who deploy an algorithm cannot eschew responsibility for its actions” (Garfinkel et al., 2017).

Algorithmic management touches upon many stakeholders, but no one inside the work context is affected by it more than managers and workers themselves. The relationship between the two parties will continue to be reconfigured and negotiated through the use of algorithms in organizations. Not only does algorithmic management reconfigure power dynamics in the workplace, but it also embeds itself in the organization’s pre-existing power and social structures. In short, the deployment of algorithmic management in organizational work introduces novel “human–machine configurations” that could transform the relationships between managers and workers and their respective roles (Grønsund and Aanestad, 2020). For example, algorithmic management may translate into cost-cutting and, consequently, labor-cutting measures (Mateescu and Nguyen, 2019).

With the rise of algorithmic systems, human managers are tasked with deciding what kinds of algorithmic software to adopt in their organization, whether for performance reviews, incentive amounts, or departure alerts. While such systems do not entirely remove human decision-making from the equation, they do encourage new ways of approaching, understanding, and acting upon the information they produce. Workers who face algorithmic management in their workplace, however, currently have little recourse to detect, comprehensively understand, or work around undesirable outcomes. Protecting the dignity and wherewithal of workers, and of those who manage their work, remains a critical concern as algorithmic management becomes more commonplace. Yet, if the gig economy is any template, reward-oriented algorithmic management processes are unlikely to favor workers over other stakeholders. While this scenario is hypothetical, more public awareness and scrutiny are needed regarding the role of learning and self-learning algorithms in shaping algorithmic management trends.

Throughout this article, we have argued that algorithmic management is a sociotechnical phenomenon. This means that while algorithms have a central bearing on how work is managed, their outcomes in transforming management and the relationships between workers and managers are socially constructed and enacted. Future research is, however, needed to investigate the mutual shaping of algorithms that manage work and the unique dynamics of different work contexts. In this regard, we envision three specific directions for future research.

First, as noted, much of the discourse around algorithmic management is rooted in platform-mediated gig work. Gig work’s unique characteristics, such as a lack of pre-existing management systems and non-standard work arrangements, limit the transferability of current findings to standard work settings. It is therefore important to explore contextual variations in how algorithmic management unfolds, and the corresponding sociocultural differences across industries and organizational contexts. For example, algorithmic management may look different in organizations with flat hierarchies and more democratic organizational cultures than in bureaucratic organizations. Studies of non-platform counterparts to popular gig work industries, such as food delivery and freelancing, would be particularly valuable, since they would let us observe differences in power dynamics when algorithmic management is implemented from the start versus later in an organization’s history.

Second, the use of intelligent systems powered by deep learning in more traditional organizations is still embryonic. As such, most research on algorithmic management and the impact of algorithms in organizations concerns previous generations of AI (i.e. rule-based AI), so this work may not fully reflect some of the technological characteristics of emerging AI systems and their growing applications in algorithmic management. Future research is needed to examine the interplay between these technological capabilities and the ways organizations manage work in practice.

Third, empirical research into the worker perspective, such as through ethnography, is vitally important in determining how algorithmic management is experienced “on the front lines” in standard organizations. Participatory action research, aimed at understanding the multi-stakeholder and sociotechnical nature of algorithmic management implementation, would also offer considerable value in determining how to ensure a fair and democratic roll-out of algorithmic management. Conversely, direct participatory research may identify normative parameters of algorithmic management, highlighting where organizations should resist isomorphic pressures and avoid implementing algorithmic management altogether.

Footnotes

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

This work was supported by the Research Council of Norway within the FRIPRO TOPPFORSK (275347) project ‘Future Ways of Working in the Digital Economy’ and by National Science Foundation Award CNS-1952085.