Abstract

Algorithms have become a widespread trope for making sense of social life. Science, finance, journalism, warfare, and policing—there is hardly anything these days that has not been specified as “algorithmic.” Yet, although the trope has brought together a variety of audiences, it is not quite clear what kind of work it does. Often portrayed as powerful yet inscrutable entities, algorithms maintain an air of mystery that makes them both interesting and difficult to understand. This article takes on this problem and examines the role of algorithms not as techno-scientific objects to be known, but as a figure that is used for making sense of observations. Following in the footsteps of Harold Garfinkel’s tutorial cases, I shall illustrate the implications of this view through an experiment with algorithmic navigation. Challenging participants to go on a walk, guided not by maps or GPS but by an algorithm developed on the spot, I highlight a number of dynamics typical of reasoning with running code, including the ongoing respecification of rules and observations, the stickiness of the procedure, and the selective invocation of the algorithm as an intelligible object. The materials thus provide an opportunity to rethink key issues at the intersection of the social sciences and the computational, including popular concerns with transparency, accountability, and ethics.

We watch an ant making his laborious way across a wind- and wave-molded beach. He moves ahead, angles to the right to ease his climb up a steep dunelet, detours around a pebble, stops for a moment to exchange information with a compatriot. Thus he makes his weaving, halting way back to his home. So as not to anthropomorphize about his purposes, I sketch the path on a piece of paper. It is a sequence of irregular, angular segments—not quite a random walk, for it has an underlying sense of direction, of aiming toward a goal. (Simon, 1996: 51)

The search for an “underlying sense of direction” has long been a concern in the study of social and natural phenomena. Markets have their invisible hand, biological populations their natural selection, and communities their social norms. In the case of computation, this fascination with hidden agencies has recently found its object in the notion of the algorithm. Following the script of a seductive drama, algorithms have come to be portrayed as powerful yet inscrutable entities that somehow govern, shape, or otherwise control our lives (Gillespie and Seaver, 2015; Neyland, 2015: 121–122; Ziewitz, 2016: 5). Yet, as captivating as such talk may be, it often ends up further mystifying the phenomenon it seeks to clarify. For example, while the purported power of algorithms has raised concerns about the opacity of computation, this opacity is often taken as another sign of power (see Burrell, 2016: 1). This raises some important questions for the emerging field of critical data and algorithm studies. If it is so hard to know what algorithms actually do, then how should we think about their recent rise as both a topic and a resource in the social sciences and humanities? What would it take to understand algorithms not as techno-scientific artifacts, but as a figure that is mobilized by both practitioners and analysts? How are we to study something that is widely thought to be inscrutable?

This article aims to explore these questions by examining the role of algorithms in practical reasoning, i.e. the practical considerations involved in getting things done on a day-to-day basis. Rather than understanding algorithms as a formal-analytic category whose meaning is to be established in advance, I shall examine how people see and recognize what is going on around them when they do so through the figure of the algorithm. My goal is therefore not to study algorithms as a term of art in mathematics and computer science, but as a device for making sense of observations. While this approach may seem parochial at first, it allows us to explore a kind of reasoning that has become pervasive not only in the social sciences and humanities, but also in law, design, and public policy. As “algorithms” are increasingly invoked by researchers, policy-makers, and designers in public talks, reports, and briefings, it is important to get a grip on how this specific form of reasoning is implicated in and implicates our actions.

Empirically, I shall explore this problem through an ethnographic experiment. Following in the footsteps of Harold Garfinkel’s (1967) tutorial cases, the experiment was designed around a simple task: go on a walk, guided not by maps or GPS but by an algorithm you devise ad hoc to give directions. By carefully recording interactions and paying close attention to the work of walking under the guidance of an algorithm, we were able to explore a number of contingencies that come with using algorithms as a figure to account for practice. While the exercise has been conducted many times in classroom and workshop settings, I shall draw here mainly on materials from one specific walk in 2011.

The materials provide an opportunity to reflect on three key issues at the intersection of science and technology studies (STS) and the computational. First, what appears to be a case of following a set of rules turns out to be a game of careful indexing of observations. Attending to how these come about and are resolved in situ illustrates the ongoing work of thinking and acting with algorithms. Second, the shift from analysis to reasoning allows us to respecify a number of concerns with algorithmic power. Issues like transparency, agency, and ethics appear in a rather different light when seen through the lens of running (as opposed to static) code. Finally, while analyzing algorithms through ethnographic experimentation is a rather playful approach, such demonstrations can be a useful methodological as well as pedagogical device.

Travelling algorithms

Scholars in the social sciences and humanities have started to rethink an increasing number of activities as “computational.” Science, finance, journalism, and policing are just some domains now analyzed as deeply implicated in the management and processing of data. In this emerging field of research and practice, algorithms have taken on a peculiar role as both familiar and strange. Originally a term of art in mathematics and computer science (Knuth, 1974: 1), they are familiar because whatever system we engage with, it can be claimed that, from a software engineering point of view, algorithms are already at work. Understanding them as seemingly complete and self-sufficient routines, we do not usually ask about the circumstances of their use. At the same time, algorithms appear strange because their workings are difficult to account for from outside the analytic worlds in which they were conceived. Running code is not readily available to common sense, an impression further exacerbated by the secrecy surrounding information systems. Taken together, these considerations lead to what may be called an “algorithmic drama” (Ziewitz, 2016: 5), in which assumptions about agency and inscrutability reinforce each other to enact the mysterious and seemingly autonomous figure of the algorithm.

Not surprisingly, this conundrum has provoked a wealth of speculation. Among the many issues raised are impact, i.e. the effects that algorithms have in various domains of public life (Beer, 2009); accountability, i.e. a concern about how algorithms and their makers might be held responsible for widespread impact (Diakopoulos, 2015); transparency, i.e. a demand for disclosure as a necessary condition for regulating impacts (Pasquale, 2015); and ethics, i.e. the idea of algorithms as embodying values that might lead to bias and discrimination (Kraemer et al., 2010). A key concern across these fields is thus an epistemic one with “knowing algorithms” (Seaver, 2013). Understanding what exactly algorithms do, the story goes, will help us better understand their implications, open up the black box of control, and respond to injustices that have been coded into them.

As a result, the question of how to study algorithms has become a topic in its own right. One strategy is to render the problem as one of expertise. Stephen Graham (2005: 575), for example, has called for “a concerted multidisciplinary effort to try and open up the ‘black boxes’ that trap software sorting.” While computer scientists explain the intricacies of computation, social scientists analyze their social and ethical implications. Accordingly, a number of initiatives have tried to foster interdisciplinary dialogue, knowledge exchange, or, in a more lopsided fashion, coding literacy among non-computer scientists (Wing, 2006: 33). Another strategy would be to apply the conventional register of social-scientific methods. In a recent overview, Rob Kitchin (2014: 17–24) lists six different approaches to studying algorithms in the social sciences: examining pseudocode/source code, reflexively producing code, reverse engineering, interviewing designers and conducting an ethnography of a coding team, unpacking the full sociotechnical assemblages of algorithms, and examining how algorithms do work in the world. 1 What these approaches have in common is that they start from an understanding of algorithms as things that, in principle, can be known. In doing so, they posit “the algorithm” as an epistemic object that produces social consequences.

From documents to figures

On closer inspection, the descriptive practice of turning algorithms into knowledge objects follows a familiar “two step” (Button et al., 2015: 78), according to which the particular is taken as a product of the general. This practice, first articulated by Karl Mannheim (1952: 53–63) and later developed by Harold Garfinkel, is better known as the documentary method of interpretation. In Garfinkel’s (1967: 78) words, the method “consists of treating an actual appearance as ‘the document of,’ or as ‘pointing to,’ as ‘standing on behalf of’ a presupposed underlying pattern. Not only is the underlying pattern derived from its individual documentary evidences, but the individual documentary evidences, in their turn are interpreted on the basis of ‘what is known’ about the underlying pattern. Each is used to elaborate the other.”

This way of theorizing algorithms has been a useful strategy. Among other things, it has allowed us to account for the recent proliferation of the trope across such diverse fields as journalism, finance, marketing, criminal justice, and gaming. It has facilitated conversations across a range of disciplinary and professional boundaries in sociology, media studies, history, political science, information science, and STS. It has bundled resources and attention to address concerns that might not otherwise have been articulated. At the same time, it has raised important questions. What would happen if we rethought algorithms not as objects to be known through observations but as figures to be used for making sense of observations? How to account for the seductive force or, as Adrian Mackenzie (2006: 43) called it, “cognitive-affective stickiness” of this figure? And how can these considerations help us rethink widespread concerns about ethics and accountability in practice?

Answering these questions is not an effort to debunk algorithm talk or to say that algorithms should not matter. On the contrary, it is an attempt at taking seriously the algorithm as a trope and understanding what its widespread use accomplishes. In doing so, I follow Paul Dourish’s (2016: 3) suggestion that “the limits of the term algorithm are determined by social engagements rather than by technological or material constraints.” This way of thinking also shares important sensibilities with the idea of figuration as employed by Claudia Castañeda (2002) and Lucy Suchman (2012). As “both a method through which things are made, and a resource for their analysis and un/remaking” (Suchman, 2012: 49), figuration can be seen as a descriptive tool “to unpack the domains of practice and significance that are built into each figure” and to consider “categories of existence … in terms of their use” (Castañeda, 2002: 3). Applying this idea to algorithms, we can examine just how the figure of the algorithm comes to shape and be shaped in the specific circumstances of its use. In other words, this paper is not so much concerned with what algorithms actually are, but with what kind of work our reasoning with algorithms does. Attending to this work will allow us to study the otherwise elusive trope of code in action or, to borrow Lucy Suchman et al.’s (2002: 164) term, the ethnomethods of the algorithm. 2

An algorithmic walk

A key challenge in exploring these ideas is to find a perspicuous setting, i.e. a setting in which the ethnomethods of the algorithm are “the organizational thing that [people] are up against” (Garfinkel, 2002: 182, emphasis in the original). In this case, I followed a tradition of research that combines experimental and ethnographic sensibilities into a social-scientific technique that has more recently been called “provocative containment” (Lezaun et al., 2013: 278). Historically most prominent in social psychology and art (Lewin, 1999; Milgram, 1974), this technique has been used in fields like STS (Izquierdo-Martín, 2004; Suchman, 2007), information science (Brown and Laurier, 2012), and sociology (Liberman, 2014: 49). Similar to ethnomethodological breaching experiments, this mode of inquiry is designed deliberately to provoke and induce trouble for analytic purposes (Schwartz and Jacobs, 1979: 272). A good example is Lucy Suchman’s (2007) canonical study of human–machine interaction at Xerox PARC. By inviting colleagues to operate a photocopy machine in groups of two, Suchman (2007: 121–122) constructed a situation to make certain issues observable; once given those tasks, the subjects were left entirely on their own. The strategy is therefore not experimental in the scientific sense. Rather, it acts as an “aid to a sluggish imagination” and helps produce “reflections through which the strangeness of an obstinately familiar world can be detected” (Garfinkel, 1967: 38). 3

In this case, I came up with the following task. In a group of two or three, go on a walk; be guided by an algorithm devised specifically to give directions; take careful notes about what happens and report back on your experience. Challenging participants to take a walk with the constraint of algorithmic navigation allowed me to observe how the figure of the algorithm came to life in the reasoning and deliberations of a small group of people. 4 Between 2011 and 2017, I observed such algorithmic walks on six occasions. Some of these walks I participated in myself; others were conducted in the form of classroom and workshop exercises with a total of 37 small groups of students and researchers from different disciplinary backgrounds in the United States and the United Kingdom. As these experiments produced a set of strikingly consistent observations, I shall illustrate my findings with materials from one specific walk I conducted with a colleague at the University of Oxford in 2011. To capture the details of the walk, I recorded our conversations, took pictures, and wrote up extensive notes upon return. I then reconstructed parts of our experience by combining field notes, photographs, and excerpts from our conversations. The account itself is organized around five telling moments I call “stops.” At each stop, the walk was interrupted by what Bittner and Garfinkel (1967: 187) call a set of “normal, natural troubles,” i.e. troubles that are “normal, natural” in that they are part of our routine attempts to act “in accord with prevailing rules of practice.” Analytically, these troubles provide an important resource for understanding reasoning with algorithms.

Stop 1: A story for discovery

We decide to start our walk at the iconic Carfax Tower in the city center (Figure 1). “We,” in this case, are myself and Torben, a visiting scholar. On our way to Carfax, we wonder what an appropriate algorithm should look like. As non-computer scientists, we opt for a common-sense approach to algorithms as captured by countless dictionaries, textbooks, and encyclopedias: a step-by-step procedure for calculating the answer to a problem from a given set of inputs. What kind of instructions could produce some usable directions from what kind of data? And how would we even know it worked? We start by listing a number of ideas: take every third on the left, take right turns only, turn in the opposite direction if you see a yellow backpack, or take the street that starts with the letter closest to “A” in the alphabet. All these seem useful in that they define events that trigger our algorithm to produce directions. However, they also appear to be somewhat arbitrary. After some discussion, we settle on the following procedure:

At any junction, take the least familiar road. Take turns in assessing familiarity. If all roads are equally familiar, go straight.

Carfax Tower.

As the materials show, coming up with an initial set of instructions was not an easy task. Guided by a common-sense understanding of algorithms, we devised a set of rules that would be specific enough to generate clear directions and general enough to work in any situation. This required first of all an act of imagination. What was our purpose? What were we likely to encounter? How could we guard ourselves against contingencies? Perhaps most importantly, we had to come up with a compelling story for our walk. While the options we had initially considered would have all been viable, they lacked a purpose and were difficult to parse. Only when we decided to explore new parts of the city did we have a baseline for making sense and judging the potential of our ideas. An important part of our operation was therefore to begin by posing a problem to ourselves; a problem which could then be claimed to justify choices in design that made—quite literally—sense.

The need to articulate a problem as a requirement for algorithmic processing is a well-known challenge. As a widely used computer science textbook suggests, “[a]lgorithmic problems form the heart of computer science, but they rarely arrive as cleanly packaged, mathematically precise questions” (Kleinberg and Tardos, 2005: xiii). In practice, however, these processes of problematization are hardly ever talked about or problematized themselves. As the literature on social problems has been arguing for a while, this moment of definition is crucial for facilitating action and allowing judgment. For example, while our approach made perfect sense for exploring new areas of the city, it would have been irrelevant had we intended to spend time in parks and nature. Our problem therefore set the discursive scene on which the algorithmic walk could be accounted for: a “tellable story, … which narrates boundaries, relations, agencies and identities for entities” (Simakova and Neyland, 2008: 96). Exploring the less familiar parts of the city made intuitive sense and came in handy in the following days when we explained to others what we had been up to. In short, our first stop highlighted a key feature of reasoning with algorithms: the need to articulate a suitable problem that made the situation analytically tractable.

Stop 2: What is a junction?

We walk down High Street, a busy road with buses, taxis, and pedestrians. Then, only 60 feet away from our starting point, our walk comes to a halt. On the right-hand side, between a jeweler and a chocolatier, we spot a little alleyway, indicating a “Historic Pub” (Figure 2).

A junction?

Neither of us has been down that alleyway before, so this would be the way to go. Is this a junction that will trigger our algorithm? Or shall we just ignore it and move on? With some good will, the alleyway might qualify as a road for pedestrians. Calling this a fully-fledged junction seems strange, though. After discussing our predicament, we realize we need to qualify our understanding of a road. As Torben suggests, “let’s say we only consider streets that are wide enough to walk a bike.” For our purposes, this seems to be a reasonable and unambiguous test:

At any junction, take the least familiar road. Take turns in assessing familiarity. If all roads are equally familiar, go straight. It is only a road if you can walk a bike on it.

The episode highlights another source of trouble in our attempt at reasoning with algorithms. As our difficulties on High Street show, the environment was not always readily available for processing. Instead, we had to make it up in the image of the task at hand. What counted as a junction for the purpose of the algorithm could not be resolved by recourse to the rule itself; we had to introduce another set of considerations that would allow us to determine the analytic status of the alleyway. Making our observations algorithmically tractable (or “indexing” them) did not simply consist of spotting patterns or identifying objects that already existed. We respecified them, first in conversation and then in principle, to keep us going. Specifically, we tried to overcome the trouble not with a one-off decision for this specific situation, but with another rule that would apply to other situations of this kind. In other words, to preserve the workability of our algorithm in view of observations that might not register intuitively as “roads,” we resorted to a further test that would be workable on any such occasion.

It could be argued that the moment simply illustrated a long-standing lesson in the philosophy of language. Rules are never just “applied,” but need to be enacted in the specific circumstances of their use (Wieder, 1974; Wittgenstein, 2009). Yet, while the idea that rules cannot determine the conditions of their application is not new, it is worth recalling in this specific context. In order to keep going, we continuously had to re-enact the world in the image of the algorithm. Enactment here meant recasting our observations so that they fit the grammar of our initial set of statements. This operation did not just allow us to overcome the challenge of the alleyway, but also reminded us that the distinction between algorithm and environment was itself a practical accomplishment. Only by maintaining this distinction in view of things that did not fit could we produce results consistent with the premises of the experiment.

Stop 3: The Christ Church incident

After a right turn into Alfred Street and a further turn into Blue Boar Street, we find ourselves in unknown territory. Following a small street paved with cobblestones and lined by yellow sandstone walls on either side, we take a few turns, walking past another pub. Eventually, we hit St. Aldate’s, a busy road with bus stops and a crowd of foreign language students talking outside the George & Danver coffee shop.

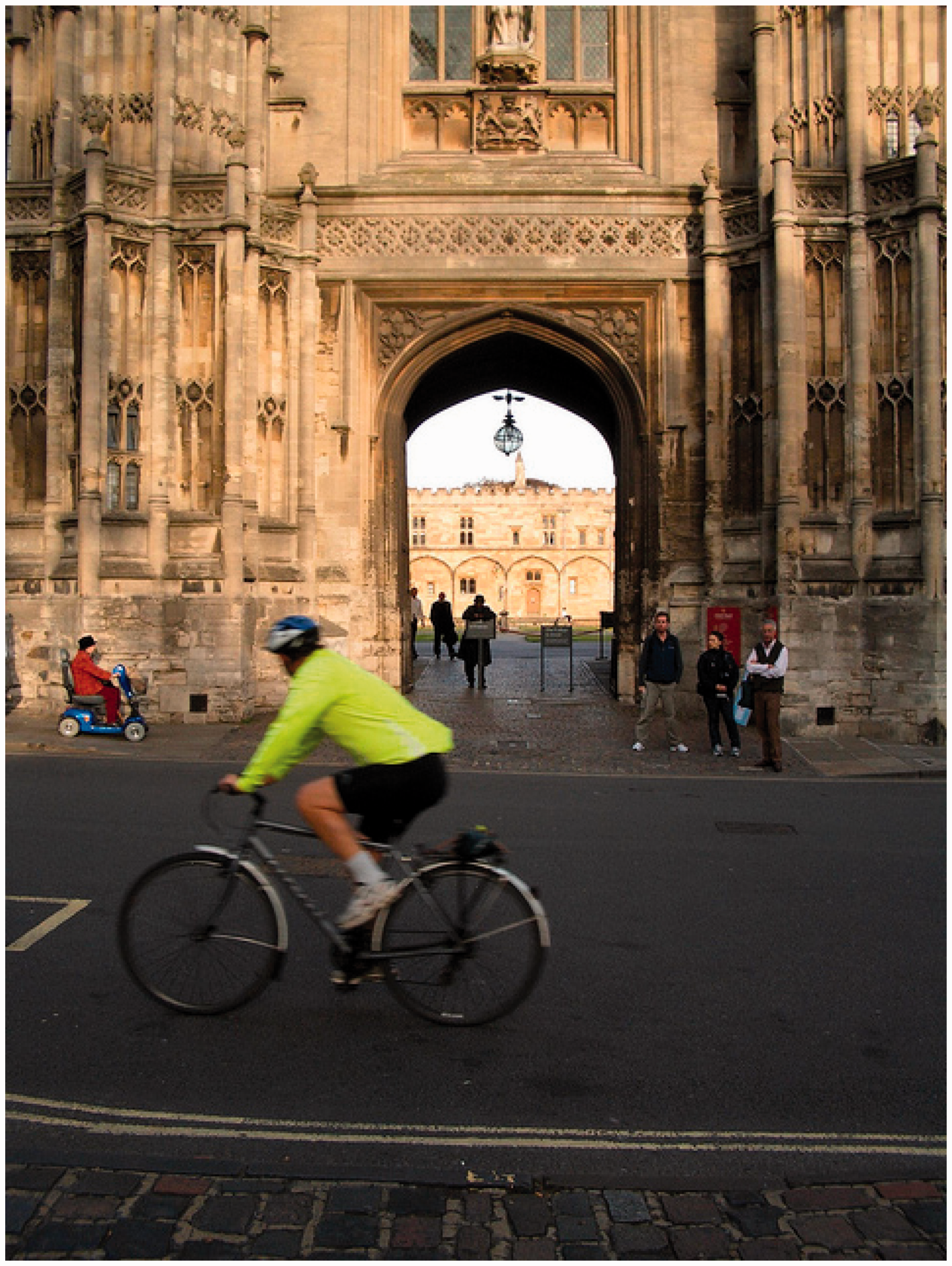

When we pass the entrance gate of Christ Church College (Figure 3), Torben stops and asks whether we might have time to have a closer look. Christ Church College, whose dining hall featured famously in Harry Potter movies, is a popular destination for tourists from around the world. Having been there many times before, I am not too keen on visiting the college. Luckily, it is my sense of familiarity that counts.

Me: OK, sooo … let’s see … this is definitely broad enough to walk a bike, so we are definitely dealing with a junction. Good–

Entrance gate of Christ Church College. Torben: –good–

Me: –so which of these is, u:m, which of these is less familiar, so, I have been to both, really, so … I guess … we’re going straight.

Torben: ((frowns))

Me: ((laughs)) Sorry … let’s go.

The third stop highlights another moment in which the algorithm came to figure in our interactions. In view of our diverging preferences, it was convenient for me to invoke the algorithm and defer accountability without having to get into a discussion about the relative merits of a visit to the famous college. In order to resolve the situation, I only had to recount it in the language of our script. Concluding with the line “let’s go,” I could conveniently invoke the algorithm as a discursive resource in order to avoid what otherwise would have required a more personal argument and explication of my own appreciation of the college.

One way to look at this phenomenon would be to say that the figure of the algorithm became itself an object in our interactions that could plausibly be imbued with some degree of agency. In this case, accounting through the lens of algorithms produced accountabilia, i.e. objects mobilized to enact relations of accountability (Sugden, 2010). Recasting the situation through a set of statements we called “our algorithm” provided a comparatively easy way to avoid individual responsibility and hide behind a seemingly autonomous operation. This process of dissociating oneself from the situation, then, could be said to account at least partially for the impression of the “hidden agency” commonly attributed to algorithms. Invoking “the algorithm” allowed me to recast the situation in terms of another set of actors, which in this instance played out in my favor. Given the careful work put into devising our set of rules, my reasoning was reasonably persuasive. The only way for Torben to resist would have been to question the purpose of our exercise more fundamentally.

Stop 4: The Y-junction

About 50 yards down the road, we discover a small and unfamiliar walkway to our right. It is for pedestrians only, but it meets our earlier criterion of broad-enough-to-walk-a-bike. We follow the narrow path between buildings until it opens up into a small square. We also notice that we are growing more confident. Whereas initially we had to pause and revisit our notes, we now navigate rather seamlessly through the residential parts of South Oxford. We cross the square and continue on Faulkner Street until we arrive at the next junction, and the next problem (Figure 4).

Confusing Faulkner Street.

It is Torben’s turn. But since neither of us has been here before, it does not really matter. Running through the routine, Torben suggests that we go straight as posited for equally familiar options. However, what counts as straight here is not clear. The street forks at more or less even angles, which brings our operation to a halt.

After some discussion, we realize that we need to extend our set of rules once more. As our goal of exploration does not suggest an obviously “better” route, we decide to flip a coin whenever our rules are not conclusive.

At any junction, take the least familiar road. Take turns in assessing familiarity. If all roads are equally familiar, go straight. It is only a road if you can walk a bike on it. When all else fails, flip a coin.

Once again, we had to rethink our script and fine-tune it to unanticipated circumstances. As our initial thinking had not accounted for the possibility of a Y-junction with two unknown roads, we had to add another element to the procedure in order to accommodate the case. In contrast to previous modifications, though, this situation could not be resolved by following the rationale of exploration we set for ourselves. Faced with two equally unfamiliar roads, we did not have a clear criterion to choose one over the other. The solution therefore had to introduce a new consideration that had not yet been captured in our reasoning.

Against this backdrop, the resolution we accomplished here was interesting. For one, a coin throw served our purposes well enough: a practical device that did not require further reference to outside entities. Of course, it could be argued that this device might fail should we ever arrive at an equally familiar four-pronged junction with no straight option. But at the time the possibility did not occur to us. As it stood, the aleatory mechanism of the coin throw allowed us to proceed even when our initial set of instructions failed. In practice, this move thus further increased the robustness of our walk. This robustness was not achieved by specifying particularities as we had done before, but by adopting a device that was more semantically inclusive. The coin throw thus prevented us from having to step “out” of the procedure.
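Since the final rule set is itself a step-by-step procedure in the common-sense sense adopted at Stop 1, it can be sketched as running code. The Python below is a hypothetical illustration, not something Torben and I wrote: the `Road` class, the numeric familiarity scores, the `choose_road` function, and the use of `random.Random.choice` as a stand-in for the coin are all assumptions. Even so, writing it down forces decisions the prose rules leave open, such as what to do when only some roads tie, or when more than two roads tie.

```python
import itertools
import random
from dataclasses import dataclass, field


@dataclass
class Road:
    """A path leaving a junction (hypothetical model for illustration)."""
    name: str
    bike_walkable: bool     # "It is only a road if you can walk a bike on it."
    straight: bool = False  # does this path continue straight ahead?
    # walker -> familiarity score; higher means more familiar, unknown = 0
    familiarity: dict = field(default_factory=dict)


def choose_road(options, assessor, rng):
    """Apply the walk's final rule set at one junction.

    Returns the chosen Road, or None when nothing counts as a road.
    """
    # Indexing: only bike-walkable paths count as roads at all.
    roads = [r for r in options if r.bike_walkable]
    if not roads:
        return None          # dead end: the rules are silent here
    if len(roads) == 1:
        return roads[0]      # no real junction, so just keep walking

    # "Take turns in assessing familiarity": only this walker's view counts.
    scores = [r.familiarity.get(assessor, 0) for r in roads]
    lowest = min(scores)
    least = [r for r, s in zip(roads, scores) if s == lowest]
    if len(least) == 1:
        return least[0]      # "take the least familiar road"

    # "If all roads are equally familiar, go straight."
    if len(least) == len(roads):
        straight = [r for r in roads if r.straight]
        if len(straight) == 1:
            return straight[0]

    # "When all else fails, flip a coin." rng.choice stands in for the coin
    # and also covers three or more tied roads, which one coin cannot split.
    return rng.choice(least)


# Usage: assessors alternate junction by junction. At Christ Church,
# "Me" knows both roads equally well, so the walk goes straight.
turns = itertools.cycle(["Me", "Torben"])
rng = random.Random(2011)
gate = Road("Christ Church gate", True, familiarity={"Me": 1})
aldates = Road("St Aldate's", True, straight=True, familiarity={"Me": 1})
choice = choose_road([gate, aldates], next(turns), rng)  # -> St Aldate's
```

At the Faulkner Street fork, two unknown roads with neither marked straight fall through to the final line, which is the point at which the coin entered our walk.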

Stop 5: The forbidden car park

After some narrow turns and crossing a busy four-lane road, we end up in front of a police station and turn left into Floyd’s Row. We follow the lane and arrive at a big gate with name plates of local businesses. The gate is locked. Fortunately, we find a way around the building. To our right, there is a paved lane with marked parking spots between two buildings. We follow the lane, turn right, and walk along a small river. Eventually, the lane opens up into a car park with a tree-lined fence. As we follow the trail around the building, a door opens and a man in a white shirt and a yellow security badge steps out (Figure 5).

The forbidden car park.

Before we can say anything, he shouts: “Excuse me! What are you doing here. This is private property.” For a moment, I consider explaining the nature of our walk. However, on second thought, the idea appears too difficult to get across without looking even more suspicious. So instead we apologize and quickly continue our walk around the building, exiting the car park right next to where we entered it.

The incident in the forbidden car park highlights another difficult issue: the role and place of normativity and ethics in reasoning with algorithms. In light of recent concerns about the social implications of new data services, it has been suggested that algorithms contain “essential value judgments” (Kraemer et al., 2010: 251). In this case, for instance, it could be argued that the algorithm did not account for the possibility of private property and thus embodied socialist or egalitarian biases that simply treated any space as public. However, while reading ethical or political commitments into algorithms might serve a practical purpose of its own, it did not reflect what happened in this case. Neither Torben nor I had thought about the possibility of trespass. No warning signs or gates prevented entry. As we had entered the car park through a back entrance, the nature of the space had not been clear to us. Only when the uniformed security guard stepped up and redefined the car park as a “private” one did we find ourselves in violation of a norm. Had this not happened, we would have simply left the car park on the other side.

The ethics here were thus not coded into our algorithm, but achieved as part of our collective reasoning. For ethics to become an issue, the space itself had to be actively rendered as forbidden. While for Torben and myself the car park seemed like any other public space we had passed through that afternoon, the security guard had indexed it as private property. It was this respecification of the space that rendered our presence as either willful trespassing or casual passage. The episode thus shows that there was nothing “in” our routine, the car park, or the algorithm that would have prevented us from entering. Instead, normativity came about as an upshot of our interactions in specific situations. The ethicality provoked by the incident was a practical accomplishment that involved far more than pointing to a value straightforwardly “embedded” in the algorithm.

After our detour into the forbidden car park, we find ourselves back on St. Aldate’s. Having been on the road for two hours, Torben suggests that we return to the department. Fortunately, we are headed right back into the shopping area, passing Clarendon Centre and taking a left into St. Michael’s Street. At the end of the street, we stop. The algorithm would demand that we flip a coin. But we decide to end our experiment right here. Without further discussion, we turn right into New Inn Hall Street, then George Street and walk back to Saïd Business School. This last stretch home, we note, feels strangely naked.

Reasoning with running code

As the materials have shown, the role of algorithms in guiding our walk cannot be captured in a simple definition. Far from the straightforward or intuitive affair it is sometimes portrayed to be, reasoning with running code turned out to be a complex and ambiguous exercise. Understanding algorithms not as Galilean objects to be known but as a figure to be mobilized in practice, we identified a number of everyday troubles typical of algorithmic reasoning. These included the role of problematization, which—once established—was not further challenged; the work of parsing observations through the language of the algorithm; the moments in which we carved the figure of the algorithm out of our practices to defer accountability; the struggle to preserve the robustness of the procedure through additional provisions; and the situated and selective rendering of actions as unethical when challenged by an outside intervention. In this section, I shall offer three observations that cut across these themes and relate them to selected work on algorithms in the social sciences and humanities.

A first observation concerns the extent to which the walk brought out the work of respecification involved in algorithmic reasoning. Specifically, it was interesting to see how the experiment exhibited the previously mentioned double role of figuration as “both a method through which things are made, and a resource for their analysis and un/remaking” (Suchman, 2012: 49). In order to make any progress at all, we had to continuously revisit our assumptions about the world while recreating the world in light of these assumptions. For example, for roads and junctions to be recognized as such, we not only had to come up with an initial concept of a road or junction, but also had to put it to the test of a specific situation. Starting from the common-sense idea of algorithms as recursively applied decision rules, we grounded our walk in analytic language we had initially considered useful for the purposes of exploration. On the road, however, we had to put this grammar into practice and found ourselves confronted with a steady stream of situations that challenged our accounts. While some of these challenges were foundational in that they required us to change instructions to account for circumstances we had not anticipated, others were rather subtle and occurred as part of “normal” use.

This iterative and experimental process resonates with a number of attempts to theorize the work of making things algorithmic. For example, in a study of the computerization of the Arizona Stock Exchange, Fabian Muniesa (2011) observed a phenomenon he called “trials of explicitness.” Rather than understanding computerization as a linear process of translating markets into software code, Muniesa (2011: 3) suggests that “a call for explicitness often translates into the emergence of grey areas, the discovery of new problems and, sometimes, the development of controversies about what is exactly to be made explicit and how.” These ambiguities thus indicate a more recursive process, in which the grammar of accounts is itself subject to respecification. A similar point has been made by Paul Kockelman (2013), who wrote about algorithmic filtering and the kinds of ontological transformation that come with it. Much like sieves, Kockelman suggests, algorithms articulate assumptions that shape our observations. Unlike sieves, however, these observations also update our assumptions. While not a Bayesian operation in any formal sense, our walk illustrated these dynamics nicely. Because we had to parse our observations in a constant struggle to respecify the situation in the image of the self-imposed constraint, the walk was not so much a case of recognizing patterns as an exercise in explicating observations in the language of the algorithm while figuring out whether and to what extent they could facilitate the job at hand—a determination that itself was subject to the contingencies of real-time navigation.

A second observation has to do with the absorbing nature of the walk. As hidden alleyways became either “roads” or “not roads” and divergent views about the need to visit Christ Church were being reconciled, it was striking to see how willing we were to adhere to our routine and maintain its workability. Over time, parsing observations through the figure of the algorithm (and vice versa) began to constitute a rhythm, in which our reasoning made sense and was perceived to be internally consistent. While it could be argued that this was simply a result of the design of the experiment, which—after all—required us to follow self-imposed constraints, we became so immersed in the recursive process that it was hard to step away. For example, rather than abandoning the walk in view of yet another challenge like the Y-junction, we keenly added the catch-all provision that would allow us to proceed in the face of unanticipated circumstances. An even more telling example is the sense of abandonment we experienced on our way back, captured in the remark that the last stretch felt “strangely naked.” No more than a passing comment at the time, the observation indicates an almost visceral dependence on this way of reasoning.

This experience of being drawn into and caught up in rule-based practices is a much-discussed phenomenon in sociology and anthropology. An early example is the work of Johan Huizinga (1955), who coined the concept of the magic circle. Like arenas, screens, and card tables, the magic circle constitutes a kind of playground, consisting of “forbidden spots, isolated, hedged around, hallowed, within which special rules obtain” (1955: 10). A more recent example is Natasha Schüll’s (2005: 74) concept of the “zone,” a state of being in the world described by machine gamblers in Las Vegas, whose “attention is thoroughly absorbed by a steady repetition of choosing operations.” A key theme here is how repeated practical engagement with a set of more or less explicit rules provokes a sense of “getting lost” in processes of figuration. Like Lucy Suchman’s employees at Xerox PARC, we quickly started losing sight of the initially constructed nature of the situation. At the same time, the walk demonstrated that this capture was not necessarily absolute or final. Edward Castronova (2005) made this point when analyzing governance in online games. Building on the notion of the magic circle, he described how games provide “a shield of sorts, protecting the fantasy world from the outside world,” but nevertheless have porous boundaries that “people are crossing … all the time in both directions” (2005: 147). Just as economic currencies and legal rules circulate in and out of games, our own “synthetic” version of the city was occasionally pierced. For example, as the incident at the forbidden car park shows, our rendering of space was not immune to challenge by a person not accounted for in our routines.

A third observation, then, concerns the fate of the algorithm as an intelligible entity. In light of ongoing respecification and gradual immersion, it became increasingly difficult to differentiate “the algorithm” from the various practices of observation, negotiation, and decision-making. In fact, temporarily objectifying and bringing back “the algorithm” was itself a practical accomplishment. Among other things, this involved the verse-like notation of the algorithm in the form of pseudocode. Materializing the instructions in typographic form on a piece of paper allowed us to refer to “them” at different stages of the walk. For example, invoking “the algorithm” while holding up my notebook was a handy shortcut in the Christ Church incident. Conversely, we resisted this temptation during our foray into the forbidden car park, a context in which the same maneuver might have complicated things.

As these selective invocations show, the status of “the algorithm” in our operations was rather fleeting. While our walk could easily be recognized as “algorithmic,” the precise object “algorithm” was virtually impossible to pin down. Rather, mobilizing “the algorithm” as an object was a way to make or break a certain version of the world. While the distinction between algorithm and environment or—more pertinently—algorithm and data is often assumed to be self-evident, the walk has shown how singling out one or the other is itself a practical accomplishment. In fact, it could be argued that achieving separation for the specific purpose of the walk was an important part of reasoning with algorithms in its own right.

Conclusion

This paper started with a challenge to explore how reasoning with algorithms is “not quite random.” Not unlike Herbert Simon’s ant, we made our way through the city of Oxford in “a sequence of irregular, angular segments.” While the resulting route may indeed look quite random, the study showed the systematic work involved in navigating built environments through a recursive set of rules. Rather than being a straightforward exercise in following directions, the experimental walk highlighted the work of reasoning with algorithms, including the ongoing respecification of rules and observations, the stickiness of the procedure, and the selective invocation of the algorithm as an intelligible object.

Together, these insights complicate the widespread interest in algorithms as powerful yet inscrutable entities. If we look at algorithms not as objects to be known in theory but as figures to be used in practice, then calls for transparency, accountability, or ethical design appear in a rather different light. While transparency is usually predicated on the possibility of knowing and accountability requires the existence of an intelligible object, calls for fairer algorithms tend to assume that their ethicality can be judged in its own right. Yet, as the experiment has shown, none of these assumptions was particularly viable in practice. This opens up some new directions for understanding settings that are considered “algorithmic.” For example, what would it take to think of algorithmic ordering as an exercise in respecification rather than an application of a set of rules? What would a politics of algorithms look like if it started from the necessity of tinkering and testing rather than from impacts and design? What if there is simply no way to know the ethicality of any given system in advance, and the best we can do will always be only the second best?

While it would be tempting to dismiss these findings as “not really” about algorithms, it is important to remember that this peculiar status of “not really” is what much work on algorithms in the social sciences and humanities is about. Perhaps most importantly, the experiment has shown that any recourse to the figure of the algorithm is itself a practical accomplishment. Examining in detail why and how this is the case can open up a new understanding not only of algorithms, but also of algorithm studies as practiced by scholars, policy-makers, and designers.

Acknowledgments

This article benefited from generous feedback at the Symposium on ‘Knowledge Machines between Freedom and Control’ in Hainburg, Austria (2011), the Annual Meeting of the Society for the Social Studies of Science in Copenhagen (2012), the Science Studies Research Group and the Information Science Colloquium at Cornell (2014), as well as the UC Berkeley CSTMS Master Class (2015). Many thanks to Samir Passi, Nick Seaver, the Digital STS folks, and three anonymous reviewers for their comments. Special thanks to Torben Elgaard Jensen and the students of ‘Computing Cultures’ and ‘Governing Everyday Life’ for being such excellent walking companions.

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.