Abstract

Surveys frequently report widespread belief in conspiracy theories, prompting concerns about their democratic consequences. Yet, standard survey measures often implicitly treat agreement as equivalent to politically consequential belief, even though agreement can reflect a range of engagement, from momentary reactions to durable worldviews. This paper argues that an important dimension of belief is often insufficiently captured in existing approaches: salience. I introduce a salience-based measure that incorporates certainty and prior familiarity to distinguish more tentative or situational endorsement from internalized, action-relevant belief. Using original survey data, I show that this measure correlates more strongly with psychological traits associated with conspiracism and better predicts self-reported engagement, including discussing, posting about, and researching conspiracy theories. These results suggest that traditional measures may overstate the prevalence of politically meaningful conspiratorial belief and obscure substantial heterogeneity among those who agree with conspiracy claims. By refining how belief is measured, this paper offers a tool to more accurately identify which survey endorsements are likely to reflect consequential belief.

Introduction

Belief in conspiracy theories is often described as widespread in the American electorate, with survey research finding that a majority of Americans will endorse at least one (Enders et al., 2022; Oliver and Wood, 2014; Smallpage et al., 2017). These findings have fueled growing concern about the consequences of conspiracy belief for democratic norms, institutional trust, and political behavior (Enders et al., 2022; Muirhead and Rosenblum, 2019).

Yet, what survey agreement reveals about belief remains conceptually and empirically unclear. Many studies treat agreement as evidence of belief, but agreement can reflect heterogeneous response processes, including casual endorsement, expressive responding, or partisan signaling. Some respondents even agree with conspiratorial claims they report never having encountered before, suggesting that endorsement may sometimes be constructed in response to the survey prompt itself (Oliver and Wood, 2014). As a result, agreement alone likely overstates the prevalence of consequential belief, blurring distinctions between surface-level endorsement and attitudes that are sufficiently internalized to guide judgment and action.

These distinctions matter because the political implications of conspiracy endorsement are clearest when beliefs are stable, accessible, and capable of motivating action. When agreement instead reflects fleeting impressions or expressive responses, inference about consequences becomes more uncertain. Distinguishing between these possibilities requires moving beyond agreement alone. Drawing on original survey data, this paper incorporates two theoretically motivated indicators—certainty and familiarity—to distinguish forms of conspiracy endorsement that are more closely associated with political behavior and relevant psychological traits.

Although this paper focuses empirically on conspiracy endorsement, the measurement problem it addresses is not unique to conspiracy theories. In survey research more broadly, agreement often captures a mix of internalized belief, expressive responding, and situational judgment, particularly when respondents are prompted to evaluate claims they might not otherwise articulate spontaneously (Tourangeau et al., 2000). Beliefs of any kind become politically consequential when they are sufficiently salient to guide judgment or action. Conspiracy theories therefore serve as a substantively important case for examining a broader challenge in measuring belief.

Literature review

To clarify when survey endorsement reflects internalized, action-relevant belief, I draw on work on attitude strength from social psychology, which shows that attitudes vary along multiple dimensions, and that these properties shape stability and behavioral impact (Abelson, 1988; Krosnick et al., 1993; Petty and Cacioppo, 1986). In the survey context, two indicators are especially useful for separating internalized belief from on-the-spot endorsement.

First, certainty captures conviction: more certain judgments tend to be more stable and resistant to counter-arguments (Krosnick et al., 1993; Petty and Cacioppo, 1986). Second, prior familiarity captures whether respondents have encountered a claim before the survey, making it more likely that an evaluation already exists in memory and can be retrieved when asked—a precondition for attitude accessibility (Fazio et al., 1983; Krosnick et al., 1993). While attitude strength literature identifies dimensions such as importance or identity centrality, certainty and prior familiarity are especially useful for capturing whether an evaluation is stored, accessible, and capable of guiding judgment when elicited. Together, these indicators help distinguish pre-existing, action-relevant belief from situational endorsement.

Why agreement is noisy

From a belief-salience perspective, agreement is noisy because it does not distinguish situational responses from internalized ideas that guide judgment or action. A growing body of work shows that agreement can function as an expressive signal rather than a cognitive commitment, especially in politically charged contexts. Acquiescence bias, expressive responding, and partisan cheerleading are well documented in public opinion research (Bullock and Lenz, 2019; Hill and Roberts, 2023; Peterson and Iyengar, 2021), including in work on conspiracy theories (Berinsky, 2017; Uscinski and Parent, 2014).

Although some work finds expressive responding to be limited (Berinsky, 2017), other studies show that even modest levels can meaningfully inflate belief estimates, particularly for low-baseline claims (Hill and Roberts, 2023; Peterson and Iyengar, 2021; Yair and Huber, 2021).

When agreement reflects internalized belief: Familiarity and certainty

Agreement can also reflect limited prior knowledge or on-the-spot evaluation. In conspiracy surveys, endorsement often exceeds respondents' self-reported familiarity with a theory (e.g., Oliver and Wood, 2014). This raises a central question: can endorsement of a previously unknown theory be treated as meaningful belief?

Survey psychology suggests that attitudes are often constructed on the spot based on accessible considerations, not stored beliefs (Tourangeau et al., 2000; Zaller and Feldman, 1992). In this view, agreement with a conspiracy item may reflect a momentary inference, heuristic, or associative response. Thus, agreement may reflect ongoing, internalized belief, but it may also reflect partisan loyalty, social signaling, or snap judgment instead. Yet, many studies operationalize conspiracy belief in ways that ultimately treat agreement as substantively equivalent, either by dichotomizing responses or by collapsing variation in certainty in downstream analyses, obscuring differences that may matter for political behavior.

How salient belief should behave: Action and dispositional roots

A longstanding literature shows that attitudes only sometimes translate into behavior (e.g., Fishbein and Ajzen, 1975). Measuring conspiracy agreement without measuring action risks overstating its civic implications. Not all believers act, and not all action is belief-driven. Distinguishing between endorsers who act and endorsers who do not helps identify when conspiratorial beliefs become politically consequential.

In addition to behavior, research links conspiracy belief to underlying psychological traits such as paranoia, magical thinking, and distrust (Abalakina-Paap et al., 1999; Brotherton and Eser, 2015; Douglas et al., 2017). These traits are thought to form the psychological basis of a conspiratorial predisposition. Therefore, if a measure captures meaningful conspiracy belief, it should correlate with these traits more strongly than simple agreement does. Behavior and dispositional traits thus provide empirical benchmarks for evaluating belief measures: meaningful, internalized belief should be more tightly linked to action and to these underlying psychological predispositions than surface-level agreement.

Thus, salient belief (belief that is both familiar and held with certainty) would be expected to be the component of endorsement that is most consistently predictive of political behavior and most aligned with conspiratorial dispositions. These criteria offer a way to assess whether a measure captures stable, internalized cognition, rather than a tentative or situational endorsement.

Theory

Belief, as it is typically measured in this literature, is an imperfect proxy for epistemic conviction. Survey agreement is often interpreted as belief, though respondents may endorse conspiratorial claims for a variety of reasons. As a result, agreement-based measures may capture a combination of affect, identity, and conviction, rather than a single underlying construct.

Binary agreement measures can therefore conflate superficial endorsement with more strongly held beliefs, potentially overstating both the prevalence and apparent strength of conspiratorial thinking. This paper focuses on two dimensions that help differentiate forms of endorsement: certainty and familiarity. Together, these dimensions capture not only whether a respondent agrees with a statement, but how strongly the belief is held and whether it existed prior to the survey encounter.

Dimensions of belief salience.

Certainty: Is the belief strong?

Agreement with a claim does not necessarily indicate conviction. Some respondents endorse conspiratorial theories with hesitation or uncertainty, while others express high confidence. Certainty captures this distinction by reflecting respondents’ perceived strength of belief—whether they suspect a claim might be true or are confident that it is.

Existing research shows that higher-certainty attitudes tend to be more stable over time and more resistant to counter-arguments (Graham, 2023). Beliefs held with greater confidence are therefore more likely to shape decisions, reflect underlying worldviews, and persist beyond the survey context. I operationalize certainty using respondents’ self-reported confidence in each endorsed theory, distinguishing between lower- and higher-certainty endorsement.

Familiarity: Was the belief already known?

Beliefs that are constructed in response to a survey prompt may differ meaningfully from beliefs that existed prior to measurement. When respondents encounter unfamiliar claims, their agreement may reflect momentary inference or contextual cues rather than a stable conviction. Research in survey psychology demonstrates that individuals often form opinions in real time, relying on accessible considerations rather than stored attitudes (Zaller and Feldman, 1992).

Familiarity therefore serves as an indicator of whether an endorsement reflects a belief that predates the survey encounter. Beliefs that respondents recognize as previously encountered are more likely to be integrated into existing cognitive frameworks, whereas unfamiliar claims are more susceptible to tentative or affective responses. I measure familiarity using a pre-knowledge indicator that captures whether respondents had heard of a given theory prior to the survey.

Methods

Survey design and sample

Data were collected via Prolific in December 2024, yielding an initial sample of 1,089 U.S. adults quota-balanced by Prolific on age, gender, race, and partisanship to approximate national distributions; no post-stratification weights were applied. After applying response-bias procedures, the final analytic sample was 1,022. Exclusions were respondent-level and applied listwise, treating these cases as measurement error rather than substantive variation. Analyses used endorsement-level data (one row per respondent per theory).

Participants evaluated nine conspiracy theories, selected to vary in partisan alignment (three Republican-aligned, three Democratic-aligned, and three nonpartisan) and prior visibility in public discourse (see Appendix A). Each item was rated on a five-point certainty scale ranging from “I am certain this is false” (1) to “I am certain this is true” (5). For each item, respondents were asked whether they had heard the theory prior to the survey (1 = yes, 0 = no). Although the data were collected online, research suggests that Prolific yields high-quality, attentive respondents comparable to traditional survey vendors (e.g., Douglas et al., 2023; Peer et al., 2022).

Operationalization of belief

Agreement was coded 1 if a respondent selected 4 or 5 (“think this is true” or “certain this is true”), and 0 otherwise. Certainty was coded 1 only when a respondent selected the top category (“I am certain this is true”), and 0 otherwise. As a robustness check, I also estimate models treating certainty as an ordinal (1–5) measure. Familiarity was coded 1 when respondents reported having heard the theory before.

To distinguish internalized belief from tentative or constructed agreement, I defined a binary salience indicator coded 1 when a respondent is both certain a theory is true and reports prior familiarity, and 0 otherwise. This binary measure is used when treating belief salience as an outcome (e.g., in trait validation models and descriptive comparisons).

When predicting behavior, I use a joint certainty-familiarity specification which includes certainty and familiarity as two distinct predictors rather than collapsing them into a single binary indicator. This allows the models to retain information about each component’s contribution to behavior. Models used listwise deletion.

The binary salience indicator is instead used for construct validation and reporting descriptive behavior rates. It serves as a conceptual measure of cognitively stable belief, whereas the joint specification is better suited for prediction because it retains the full variation in certainty and familiarity.
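The coding rules in this section can be illustrated with a minimal sketch. The `Endorsement` class, its field names, and the example rows are hypothetical and introduced only for illustration; the thresholds follow the text (agreement = 4 or 5, certainty = 5, familiarity = heard before, salience = certain and familiar).

```python
from dataclasses import dataclass


@dataclass
class Endorsement:
    """One row per respondent per theory (hypothetical field names)."""
    respondent_id: int
    theory: str
    rating: int  # 1 = "I am certain this is false" ... 5 = "I am certain this is true"
    heard: int   # 1 = heard the theory before the survey, 0 = had not


def code_belief(row: Endorsement) -> dict:
    """Apply the binary coding rules described in the text to one row."""
    agreement = int(row.rating >= 4)    # "think this is true" or "certain this is true"
    certainty = int(row.rating == 5)    # top category only
    familiarity = int(row.heard == 1)   # prior exposure to the theory
    salience = int(certainty == 1 and familiarity == 1)
    return {
        "agreement": agreement,
        "certainty": certainty,
        "familiarity": familiarity,
        "salience": salience,
    }


# Illustrative rows only; these are not observations from the study.
rows = [
    Endorsement(1, "theory_a", 5, 1),  # certain and familiar: salient
    Endorsement(1, "theory_b", 4, 1),  # agrees, but not certain
    Endorsement(2, "theory_a", 5, 0),  # certain, but never heard it before
    Endorsement(2, "theory_b", 2, 1),  # familiar, does not agree
]
coded = [code_belief(r) for r in rows]
```

Only the first illustrative row is coded as salient; the second and third count as agreement under the standard measure but fail one of the two salience conditions.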

Behavioral outcomes

Behavioral questions were administered only when respondents expressed agreement or familiarity with the theory. Respondents indicated whether they had: spoken about the theory with others, posted about it on social media, sought information from like-minded sources, or searched for news coverage to verify it (all coded 0/1).

Trait validation models

The survey also included scale measures of paranoid ideation, odd beliefs/magical thinking, and political trust. All items were adapted from validated scales (Grimmelikhuijsen and Knies, 2017; Fenigstein and Vanable, 1992; Mason, 2015; ANES). To assess construct validity, I estimated endorsement-level bivariate logistic regressions in which each belief indicator (agreement, certainty, familiarity, and the binary salience indicator) was regressed on each psychological trait. Model performance is summarized by pseudo-R² and AIC, averaged across theories.
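As a rough illustration of one such bivariate model, the sketch below fits a logistic regression of a binary belief indicator on a single trait score and computes the two fit statistics. It is not the estimation code used for the paper: the text does not say which pseudo-R² was reported, so McFadden's version is an assumption here, and the function names and toy data are hypothetical.

```python
import math


def _prob(b0, b1, x):
    """Predicted probability under the logistic model."""
    return 1.0 / (1.0 + math.exp(-(b0 + b1 * x)))


def fit_logit(x, y, iters=50, tol=1e-10):
    """Fit P(y=1) = logistic(b0 + b1*x) by Newton-Raphson; return (b0, b1, loglik)."""
    b0 = b1 = 0.0
    for _ in range(iters):
        g0 = g1 = h00 = h01 = h11 = 0.0
        for xi, yi in zip(x, y):
            p = _prob(b0, b1, xi)
            w = p * (1.0 - p)
            g0 += yi - p            # score for the intercept
            g1 += (yi - p) * xi     # score for the slope
            h00 += w                # entries of X'WX (negative Hessian)
            h01 += w * xi
            h11 += w * xi * xi
        det = h00 * h11 - h01 * h01
        step0 = (h11 * g0 - h01 * g1) / det
        step1 = (h00 * g1 - h01 * g0) / det
        b0 += step0
        b1 += step1
        if abs(step0) + abs(step1) < tol:
            break
    loglik = 0.0
    for xi, yi in zip(x, y):
        p = _prob(b0, b1, xi)
        loglik += yi * math.log(p) + (1 - yi) * math.log(1.0 - p)
    return b0, b1, loglik


def null_loglik(y):
    """Log-likelihood of the intercept-only model."""
    n, n1 = len(y), sum(y)
    pbar = n1 / n
    return n1 * math.log(pbar) + (n - n1) * math.log(1.0 - pbar)


def mcfadden_r2(ll_model, ll_null):
    """McFadden pseudo-R2 (assumed variant): 1 - ll_model / ll_null."""
    return 1.0 - ll_model / ll_null


def aic(ll_model, n_params):
    """Akaike information criterion: 2k - 2*loglik."""
    return 2 * n_params - 2.0 * ll_model


# Toy data for illustration only: a trait score and a binary belief indicator.
trait = [1, 2, 3, 4, 5, 1, 2, 3, 4, 5]
salient = [0, 0, 1, 0, 1, 0, 1, 0, 1, 1]

b0, b1, ll = fit_logit(trait, salient)
ll0 = null_loglik(salient)
r2 = mcfadden_r2(ll, ll0)
aic_value = aic(ll, n_params=2)
```

In practice a statistical package would be used for estimation; the point of the sketch is only that a higher pseudo-R² and a lower AIC both indicate that the trait accounts for more of the variation in a given belief indicator.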

Behavioral models

To assess behavioral relevance, I estimate logistic models for each theory and each behavioral outcome, with behavior as the dependent variable and agreement only, certainty only, familiarity only, or certainty and familiarity entered jointly. Results are summarized by behavior type by averaging pseudo-R² and AIC across theories.
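The summarizing step described here, averaging fit statistics across theories and comparing specifications, might look like the following sketch. The specification labels and all numbers are placeholders invented to exercise the function; they are not results from the paper.

```python
from statistics import mean

# Placeholder per-theory fit statistics for two generic specifications.
# Each list holds one (pseudo_r2, aic) pair per theory.
fits = {
    "spec_a": [(0.02, 410.0), (0.04, 390.0)],
    "spec_b": [(0.06, 400.0), (0.08, 380.0)],
}


def summarize(fits_by_spec):
    """Average pseudo-R2 and AIC across theories for each specification."""
    summary = {}
    for spec, per_theory in fits_by_spec.items():
        summary[spec] = {
            "mean_r2": mean(r2 for r2, _ in per_theory),
            "mean_aic": mean(a for _, a in per_theory),
        }
    return summary


summary = summarize(fits)
# The preferred specification has the lowest mean AIC.
best_by_aic = min(summary, key=lambda s: summary[s]["mean_aic"])
```

Because AIC values are comparable only across models fit to the same observations, this kind of averaging assumes every specification is estimated on the same rows for a given theory and outcome.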

Response-bias procedures

To account for acquiescence bias, the survey included randomized positive and negative framings of one of three conspiracy items. For example, respondents were asked both “The government hides evidence of alien life” and “The government is transparent about alien evidence.” Contradictory agreement flagged potential acquiescent respondents.

To assess partisan cheerleading, participants were shown two versions of a substantively identical conspiracy theory regarding secret elites: one framed with an in-party partisan cue and one with a neutral cue. Cheerleading was defined as agreement four or more points higher with the partisan-framed version than with the neutral version.
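A minimal sketch of the two flags follows. It assumes that both framings were rated on the same five-point scale as the main items and that "agreement" again means a rating of 4 or 5; neither detail is stated explicitly for these items, and the function and field names are hypothetical.

```python
def is_agree(rating: int) -> bool:
    """Assumption: agreement means 4 or 5 on the five-point scale."""
    return rating >= 4


def flag_acquiescence(positive_rating: int, negative_rating: int) -> bool:
    """Flag respondents who agree with both the claim and its reversal."""
    return is_agree(positive_rating) and is_agree(negative_rating)


def flag_cheerleading(partisan_rating: int, neutral_rating: int, threshold: int = 4) -> bool:
    """Flag respondents whose partisan-framed rating exceeds the neutral one by >= threshold."""
    return (partisan_rating - neutral_rating) >= threshold


# Illustrative respondents only; not data from the study.
respondents = [
    {"id": 1, "pos": 4, "neg": 2, "partisan": 3, "neutral": 3},
    {"id": 2, "pos": 5, "neg": 4, "partisan": 4, "neutral": 4},  # agrees with both framings
    {"id": 3, "pos": 2, "neg": 4, "partisan": 5, "neutral": 1},  # four-point partisan gap
]

# Respondent-level, listwise exclusion of anyone flagged by either check.
kept_ids = [
    r["id"]
    for r in respondents
    if not (
        flag_acquiescence(r["pos"], r["neg"])
        or flag_cheerleading(r["partisan"], r["neutral"])
    )
]
```

With a five-point scale, a four-point threshold is met only when the partisan version is rated 5 and the neutral version 1, so the `threshold` parameter is exposed to make the stringency of the rule easy to vary.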

Results

This section evaluates whether survey agreement reflects meaningful belief. I first identify response patterns that complicate interpretation of agreement, including cheerleading, acquiescence, and endorsement of unfamiliar claims. I then assess whether endorsement predicts downstream behavior and whether stronger belief signals sharpen that relationship. Finally, I compare how agreement, certainty, familiarity, and the combined certainty-familiarity specification perform in predicting psychological traits and behavioral engagement.

Patterns in belief expression

Analysis of these indicators suggests that binary agreement reflects heterogeneous forms of endorsement rather than a uniform belief state. First, 6.6% of respondents agreed more strongly with a partisan-framed version of a theory than with a substantively identical neutral one, consistent with the idea that people may agree in order to reflect a partisan attitude. Another 3.2% agreed with both positively and negatively framed versions of the same claim, indicating acquiescence bias (see Appendix B).

Particularly striking, 18.8% of respondents endorsed at least one theory they had not heard before, suggesting that a meaningful portion of agreement may be constructed in real time rather than drawn from a pre-existing belief schema. Disaggregating by certainty further highlights this variation. Only about one-third of agreement responses reflected high-certainty belief; the remainder were more tentative or provisional (see Appendix B for breakdown by theory).

To ensure these results were not an artifact of a particular survey or platform, I pulled data from the CCES 2011 conspiracy theory module and calculated the percent of respondents who agreed with theory items they reported not hearing. Data for this robustness check come from Oliver and Wood’s (2014) replication materials. Among the CCES battery of seven questions, 16.7% of respondents agreed with at least one theory they reported not having heard before. Additionally, although the rates varied by item, 1–5% of respondents who endorsed a conspiracy theory did so despite reporting no prior familiarity with it—evidence that some portion of agreement reflects on-the-spot judgment rather than stored belief. This pattern appears across theories in multiple surveys (see Appendix E for results by theory).
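The respondent-level share reported above (respondents who agreed with at least one theory they had not heard before) can be computed from endorsement-level rows along these lines. The tuple layout and the toy rows are assumptions for illustration and are not drawn from either dataset.

```python
def share_endorsing_unfamiliar(rows):
    """Share of respondents with at least one agreed-with, previously unheard theory.

    rows: iterable of (respondent_id, agreement, heard) tuples, one per
    respondent per theory, with agreement and heard coded 0/1.
    """
    respondents = set()
    flagged = set()
    for respondent_id, agreement, heard in rows:
        respondents.add(respondent_id)
        if agreement == 1 and heard == 0:
            flagged.add(respondent_id)
    return len(flagged) / len(respondents)


# Toy rows for illustration only: four respondents, two theories each.
toy_rows = [
    (1, 1, 1), (1, 0, 0),
    (2, 1, 0), (2, 1, 1),  # respondent 2 agrees with a theory they had not heard
    (3, 0, 0), (3, 0, 1),
    (4, 1, 1), (4, 1, 1),
]
share = share_endorsing_unfamiliar(toy_rows)  # 1 of 4 respondents
```

Note that this is a respondent-level share; the item-level rates among endorsers use a different denominator (endorsements of a given theory), which is one reason the two kinds of figures differ in size.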

Behavioral outcomes

Proportion of agreement leading to behavior.

Across all endorsements, 25.5% resulted in no action at all, and 16.6% of respondents never acted on any theory they claimed to believe. When limited to higher-risk behaviors (excluding information seeking), 36.4% of endorsements produced no engagement. These patterns suggest agreement is a weak proxy for consequential belief.

Certainty sharpened this relationship: respondents who were certain a theory was true reported more behaviors on average than those who “thought” it was true (1.63 vs 1.13) and were less likely to take no action on theories they reported believing (16.6% vs 25.9%, p < .001). This pattern anticipates the model-based results below, in which certainty alone improves prediction.

Trait models

To validate the belief measures, I estimate separate logistic models predicting agreement, certainty, familiarity, and the joint certainty-plus-familiarity measure from three psychological traits associated with conspiracism: paranoia, odd beliefs, and low trust. These models assess construct validity by testing whether each indicator correlates with known psychological predictors of conspiracism.

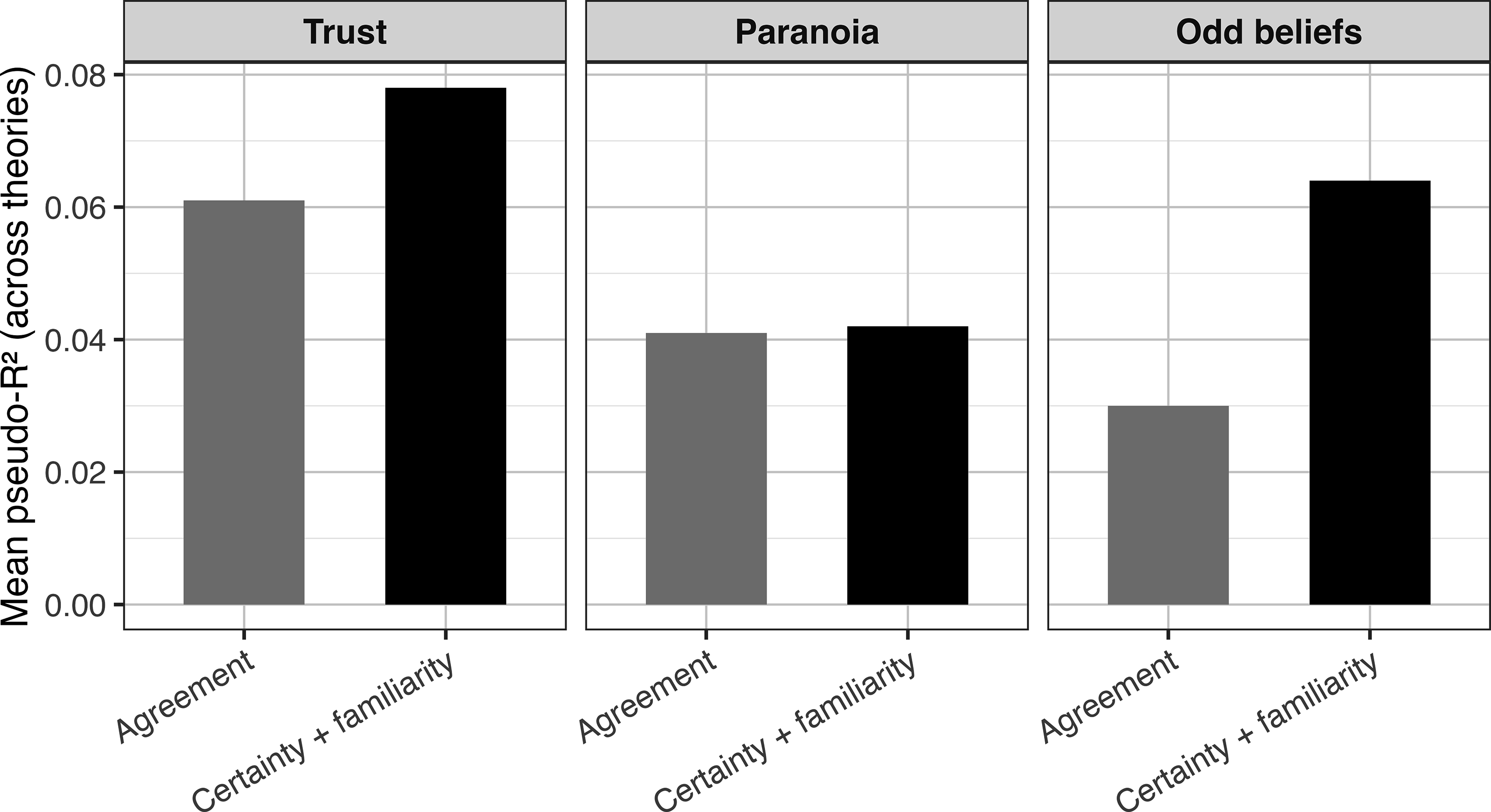

Across traits, the joint certainty-plus-familiarity specification exhibited higher pseudo-R² and lower AIC than agreement alone. The improvement in model performance was largest for odd beliefs and generalized trust, and small but consistent for paranoia, as shown in Figure 1.

Figure 1. Certainty and prior familiarity outperform agreement in predicting psychological correlates of conspiracism.

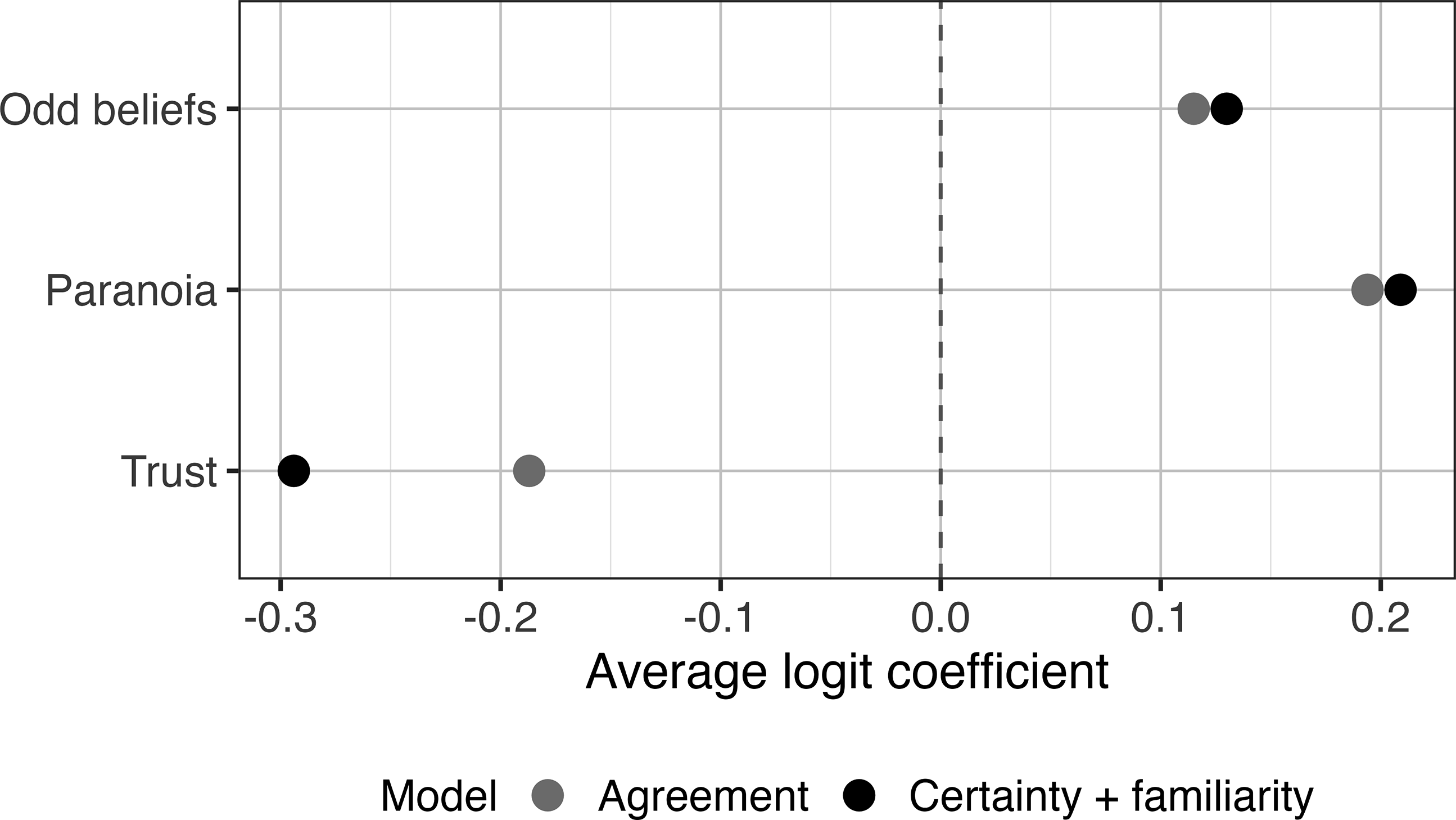

The salience indicator (a binary indicator of whether the respondent was both certain of and familiar with a theory) also better predicted traits associated with conspiracy belief. Trait associations were also more discriminating under the joint specification: coefficients were higher for paranoia and odd beliefs and lower for trust, suggesting that combining certainty and familiarity isolates a conspiratorial worldview more directly than agreement alone. Results are shown in Figure 2.

Figure 2. Trait associations are stronger for certainty + familiarity than for agreement alone.

Behavioral models

The behavioral models test whether the proposed belief indicators meaningfully distinguish respondents who act on conspiracy theories from those who do not. Across all behaviors, the joint certainty-plus-familiarity specification achieved the best fit (lowest AIC and highest pseudo-R²), outperforming agreement and each component alone. Familiarity alone predicted information-seeking behaviors but was weaker for communication behaviors, potentially indicating distinct pathways from belief to action (Figure 3).

Figure 3. Behavioral prediction improves when certainty and familiarity are included.

Substantively, respondents expressing salient belief (both certain of and familiar with a theory) were markedly more likely than agreement-only believers to report acting on it across all four behaviors. Salient believers were 34% more likely to discuss a theory with others (63% vs 47%), more than twice as likely to post about it online (15% vs 7%), 50% more likely to seek out like-minded information (42% vs 28%), and 44% more likely to search for verification (52% vs 36%). See Figure 4 for behavior rates across models. These differences are consistent across behaviors, and because posting and search behaviors are rare in the general population, differences of this magnitude represent large behavioral shifts.

Figure 4. Salient believers are substantially more likely to engage in conspiracy-related behaviors.
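The relative differences quoted above follow directly from the reported behavior rates. The short sketch below reproduces the arithmetic; the rates are those given in the text, while the dictionary keys and function name are labels introduced here.

```python
# Reported behavior rates in percent: (salient believers, agreement-only believers).
rates = {
    "discuss": (63, 47),
    "post": (15, 7),
    "seek_like_minded": (42, 28),
    "search_verification": (52, 36),
}


def relative_increase(salient: float, agreement_only: float) -> float:
    """Percent by which the salient-believer rate exceeds the agreement-only rate."""
    return (salient / agreement_only - 1.0) * 100.0


increases = {name: round(relative_increase(s, a)) for name, (s, a) in rates.items()}
```

These are ratios of rates rather than percentage-point gaps: the percentage-point difference for posting (8 points) is the smallest of the four, even though its relative increase is the largest.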

As a supplementary analysis, I re-estimated the behavioral models treating certainty as an ordinal (1–5) measure rather than a binary indicator. The results were substantively similar: models incorporating certainty and familiarity continued to outperform agreement-only models across all outcomes (see Appendix D).

Overall, incorporating certainty and prior familiarity distinguishes a smaller subset of endorsements that is more consistently linked to both behavioral engagement and psychological correlates of conspiratorial predisposition than agreement alone.

Discussion

This study challenges the practice of treating survey agreement as synonymous with conspiracy belief. A notable share of respondents endorsed claims they reported never having encountered before, consistent with evidence that survey attitudes are often constructed in real time (Tourangeau et al., 2000; Zaller and Feldman, 1992). When standard measures treat such agreement as a pre-existing belief, prevalence estimates and downstream inferences can be distorted. Binary agree/disagree formats obscure variation between tentative acceptance and committed conviction; measuring belief salience provides a clearer basis for inference.

These findings carry two implications. First, public concern about the prevalence of conspiratorial belief may rest in part on measurement practices that treat heterogeneous forms of endorsement as equivalent. This does not imply conspiracy theories are inconsequential, but that agreement alone may overstate behaviorally meaningful endorsement. When respondents report low confidence, lack prior familiarity, and show limited behavioral engagement, agreement may capture a form of endorsement that differs from internalized belief.

Second, for scholars, the results highlight the importance of measurement strategies that capture variation in conviction and prior exposure. Belief salience helps distinguish which forms of endorsement are more closely associated with political behavior, psychological predispositions, and potential downstream consequences. Although developed in the context of conspiracy theories, the salience-based approach may also be useful for studying other political beliefs commonly measured with agreement items, including issue positions and candidate evaluations.

An important implication of this framework is that belief salience may condition how endorsement translates into downstream political processes. Endorsements that are held with greater certainty and prior familiarity may be more resistant to corrective information, more stable over time, and more likely to motivate political participation or information-seeking behavior. By contrast, low-salience agreement may be more responsive to corrections and less likely to persist or guide action. While the present study does not test these dynamics directly, distinguishing between salient and non-salient endorsement provides a foundation for future work examining when conspiracy beliefs endure, when they are politically mobilizing, and when they are most amenable to intervention.

Several limitations warrant note. Behavioral outcomes are self-reported and may be subject to social desirability bias, particularly for behaviors that carry reputational or normative implications. In addition, the data were collected from a quota-balanced Prolific sample, which improves demographic coverage relative to convenience samples but does not fully represent the U.S. population. As a result, the findings speak to measurement dynamics in survey contexts rather than to population-level prevalence estimates. Moreover, the findings speak specifically to survey measurement rather than all contexts in which beliefs are expressed. Future research should examine whether belief salience predicts costlier behaviors, such as protest, political mobilization, or policy noncompliance, and whether such beliefs are more resistant to correction or more likely to become central to political identity.

Conclusion

Conspiratorial belief is often framed as a rising threat to democracy. But before we can assess that threat, we must measure belief in a way that reflects more than surface-level agreement. This paper proposed a new approach for differentiating forms of endorsement by incorporating certainty and prior familiarity.

The salience measure offers a sharper tool for distinguishing internalized conviction from situational endorsement. Across multiple analyses, the measure helped isolate which beliefs are likely to shape action, persist over time, and reflect deeper worldviews. Its inclusion improved models of behavior and aligned more closely with psychological traits associated with conspiracism. Researchers relying on agreement items alone may benefit from incorporating certainty and prior knowledge to avoid overstating prevalence and misidentifying politically relevant believers.

These findings suggest a valuable path forward for refining studies of conspiracy belief, distinguishing between factors that drive mere agreement and those that foster behaviorally relevant belief. The measure not only clarifies when endorsement is likely to be politically meaningful but also provides a tool for capturing the kind of belief we’re most concerned with—the kind that is cognitively salient and action-oriented. By integrating salience into belief measurement, the measure advances both the theoretical and statistical precision of conspiracy research.

Supplemental material

Supplemental material for Do they really believe that? Measuring salient conspiracy endorsement by Lisa Basil in Research & Politics.

Footnotes

Acknowledgments

I thank my committee members, Dennis Chong, Morris Levy, and Dan Schrage, for feedback on earlier versions of this project. I am also grateful to Sherry Zaks for helpful advice and encouragement during the revision process. All remaining errors are my own.

Ethical considerations

This study was approved by the University of Southern California Institutional Review Board. Informed consent was obtained from all participants.

Funding

The author disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This research was supported by a POIR Research and Development Fund.

Declaration of conflicting interests

The author declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Data Availability Statement

Replication data and materials available at the Harvard Dataverse (https://doi.org/10.7910/DVN/QGZRIE).

Carnegie Corporation of New York Grant

This publication was made possible (in part) by a grant from the Carnegie Corporation of New York. The statements made and views expressed are solely the responsibility of the author.
