Abstract

We present ConflLlama, demonstrating how efficient fine-tuning of large language models can advance automated classification tasks in political science research. While classification of political events has traditionally relied on manual coding or rigid rule-based systems, modern language models offer the potential for more nuanced, context-aware analysis. However, deploying these models requires overcoming significant technical and resource barriers. We demonstrate how to adapt open-source language models to specialized political science tasks, using conflict event classification as our proof of concept. Through quantization and efficient fine-tuning techniques, we show state-of-the-art performance while minimizing computational requirements. Our approach achieves a macro-averaged AUC of 0.791 and a weighted F1-score of 0.753, representing a 37.6% improvement over the base model, with accuracy gains of up to 1463% in challenging classifications. We offer a roadmap for political scientists to adapt these methods to their own research domains, democratizing access to advanced NLP capabilities across the discipline. This work bridges the gap between cutting-edge AI developments and practical political science research needs, enabling broader adoption of these powerful analytical tools.

Keywords

Introduction

How can we adapt large language models to accurately classify and interpret complex political phenomena while maintaining computational efficiency and interpretability? We look at three fundamental challenges in computational analysis of political violence and conflict events. First, the temporal evolution of conflict patterns and tactics, addressing how pre-trained models can adapt to changing forms of political violence (Gururangan et al., 2020). Second, the complexity of modern conflict events, where single incidents often manifest multiple forms of violence simultaneously, requiring sophisticated multi-label classification capabilities. Third, model interpretability for security applications, ensuring that classification decisions can be understood and validated by political science researchers and security practitioners. Through careful architectural modifications and novel training approaches, we enhance Llama 3.1’s capabilities to capture these nuanced aspects of political violence while maintaining computational efficiency. Our results demonstrate significant improvements in understanding both common and rare forms of political violence, with particular advances in detecting emerging patterns of conflict. These challenges are particularly salient given the historical difficulties in event data collection and analysis. Human coding of events is neither as good nor as consistent as coders tend to believe, while traditional computational approaches have struggled with the temporal scales relevant to political analysis. ConflLlama addresses this gap, while maintaining the consistency and scalability needed for systematic analysis. In this paper, we make the following contributions: (1) We demonstrate how efficiently fine-tuned LLMs can improve multi-label conflict event classification; (2) We analyze performance across common and rare event types; and (3) We provide a practical pathway for political scientists to adapt these methods to their own research.

The Global Terrorism Database (GTD) (LaFree and Dugan, 2007) represents one of several terrorism event databases, following earlier systematic data collection efforts like International Terrorism: Attributes of Terrorist Events (ITERATE) and Worldwide Incidents Tracking System (WITS). Meta’s open-source Llama models (Touvron et al., 2023) make it possible for early term researchers with low to medium computational resources to prototype their own event classifiers. 1

The application of machine learning to conflict analysis has evolved significantly, from early statistical approaches described by LaFree and Dugan (2007) to modern deep learning methods. While traditional approaches relied heavily on manual feature engineering and classical machine learning techniques, the emergence of transformer-based models has enabled more sophisticated analysis of event narratives (Devlin, 2018; Hu et al., 2022). The challenge of adapting these models to specialized domains while maintaining computational efficiency remains significant (Dettmers et al., 2024).

ConflLlama advances machine learning in conflict event detection by leveraging recent Large Language Models (LLMs), such as Llama 3.1 (8B), while incorporating domain-specific knowledge through fine-tuning on the Global Terrorism Database (GTD) dataset. We employ Quantized Low-Rank Adaptation (QLoRA) for efficient adaptation, building on the work in low-rank adaptation of large language models (Hu et al., 2021). QLoRA enables efficient fine-tuning by quantizing model weights (reducing numerical precision) and training only a small number of parameters while keeping most of the model frozen. Through extensive temporal analysis of historical periods from 1990 to 2014, we demonstrate how broader historical context enhances the model’s classification capabilities. Additionally, prompt engineering (systematic optimization of input text) reveals remarkable consistency, suggesting robust internal representations of event characteristics.

We build upon and extend foundational research demonstrating large language models’ effectiveness in political science applications (Heseltine and Clemm Von Hohenberg, 2024; Mellon et al., 2024), showing how targeted fine-tuning of open source models can substantially enhance performance in specialized tasks. Leveraging Llama 3.1 8B, which offers several key advantages for conflict event detection: First, its architecture achieves strong performance with only 8 billion parameters (Touvron et al., 2023), making it accessible for resource-constrained research environments. Second, the model exhibits exceptional versatility in domain adaptation tasks (Lu et al., 2024), with documented success in specialized classification tasks (Li et al., 2023) and extended context processing capabilities—essential features for processing detailed conflict event descriptions. Third, the model’s architecture facilitates efficient feature extraction and effective fine-tuning through low-rank adaptation (Hu et al., 2021), making it well-suited for capturing the nuanced patterns in conflict event classification. While our current work focuses specifically on attack type classification rather than actor or location identification, the approach demonstrated here could be extended to these tasks in future work.

Background and related work

Evolution of conflict event classification

Building on Schrodt (2015)’s foundational work in event coding systems, modern approaches have progressed from basic natural language processing to sophisticated neural architectures. The Global Terrorism Database (GTD), curated by START (LaFree and Dugan, 2007), has emerged as a critical resource—documenting over 200,000 terrorist events with structured classification. This progression from manual coding to automated classification represents a fundamental shift in conflict analysis methodology. Recent advancements include automated extraction tools that rival human coders in performance (King and Lowe, 2003), domain-specific language models tailored for conflict detection (Hu et al., 2022), and machine learning-based forecasting systems for political violence (D’Orazio and Lin, 2022). Additionally, active learning methods have been introduced to improve data extraction efficiency while reducing annotation costs (Croicu, 2024). Research on cross-document event resolution further enhances the reliability of automated conflict classification by linking dispersed references to political events across texts (Radford, 2020). These innovations collectively push the boundaries of conflict event detection, increasing both the accuracy and scalability of political violence monitoring systems.

While previous work in conflict event classification has utilized BERT-based approaches such as ConfliBERT (Hu et al., 2022), recent evidence demonstrates that LLM-based approaches with efficient fine-tuning represent a more promising direction. Wang (2025) notes that “few-shot learning with GPT can outperform fine-tuning BERT and its variants” particularly when working with limited labeled data. Moreover, as highlighted by Ornstein et al. (2023), LLM approaches “significantly outperform existing automated approaches…at a small fraction of the time and financial cost.” This dramatic improvement in efficiency, combined with our quantization approach, enables deployment on consumer-grade hardware while maintaining strong performance. Even researchers previously committed to BERT-based approaches acknowledge that LLM-based methods may exceed their performance (Gabriel, 2025). Given these developments, we focus our comparison on the base (zero-shot) and fine-tuned variants of our LLM approach rather than comparing with older BERT architectures. For readers interested in encoder model comparisons, we provide benchmarks against the recently developed modernBERT in the appendix. The current implementation of ConflLlama focuses specifically on attack type classification rather than actor or location identification. As Wang et al. (2023) demonstrate, adapting LLMs for named entity recognition requires specialized approaches to bridge sequence labeling and text generation paradigms.

Distribution of Attack Types in the Global Terrorism Database.

Distribution of Attack Types in the Global Terrorism Database (GTD). The GTD is a comprehensive database documenting over 200,000 terrorist events worldwide from 1970 to 2020, maintained by the National Consortium for the Study of Terrorism and Responses to Terrorism (START). Each event is classified into one or more attack types, with significant imbalance across categories.

Large language models and efficient domain adaptation

Adapting large language models for specialized domains presents both opportunities and challenges. Gururangan et al. (2020) demonstrated the importance of domain-adaptive pretraining, showing how targeted adaptation can significantly improve performance in specific fields. The computational demands of such adaptation have spurred innovative solutions. LoRA, introduced by Hu et al. (2021), showed that effective model adaptation could be achieved by modifying a small number of task-specific parameters, making specialized applications more feasible. This approach was further refined through QLoRA by Dettmers et al. (2024), which combined quantization with low-rank adaptation to reduce memory requirements while maintaining model performance. These advances in efficient fine-tuning techniques have made domain-specific adaptation more accessible, particularly in resource-constrained environments where full model fine-tuning would be impractical. A critical challenge in conflict event classification is to generalize across time. Recent work by Lazaridou et al. (2021) highlighted how language models struggle with temporal adaptation, particularly evolving terminology and patterns. This challenge is relevant for conflict analysis, where these continue to evolve.

Methodology

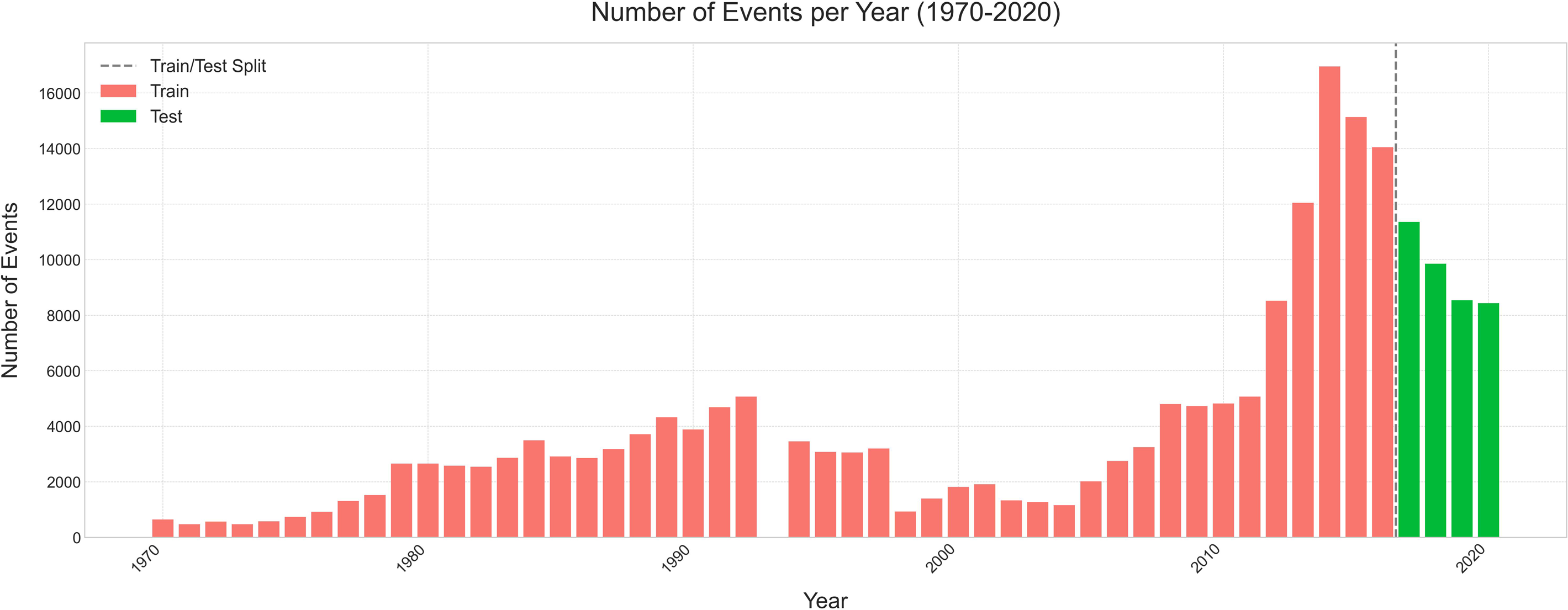

Our research aims to automate the classification of terrorist events by developing a language model capable of extracting key attack features directly from incident descriptions. To accomplish this, our preprocessing pipeline implements temporal filtering by establishing a cutoff date of January 1, 2017, which creates a natural evaluation set while ensuring temporal consistency in the training data. Our strategy divides the dataset into 171,514 training events (pre-2017) and 38,192 test events (2017 onwards), ensuring a robust evaluation of model generalization to recent incidents. The textual data preparation combines event summaries with recorded attack classifications, processed through a standardization pipeline that handles special characters, whitespace normalization, and date standardization while filtering empty entries. 3 For label processing, we preserve the multi-label nature of events by combining primary, secondary, and tertiary attack types when available, maintaining the natural hierarchy of attack classifications. Multi-label characteristics are rare in the data, with less than 5.0% of events having secondary attack types and 0.3% having tertiary classifications.

We leverage the Unsloth package, which provides crucial optimizations for efficient fine-tuning of large language models. 4 The training pipeline consists of three main components: data preprocessing, model setup, and training optimization. The GTD dataset undergoes initial processing to standardize event descriptions and attack type labels, with careful handling of temporal information and multi-label scenarios. 5

Conceptually, fine-tuning adapts the language model’s internal representations to better capture domain-specific patterns in conflict events. While general language models understand terms like “bombing” or “assault” broadly, fine-tuning helps the model recognize nuances between similar attack types and learn the contextual signals that distinguish between categories like Armed Assault and Assassination. This domain adaptation occurs through exposure to thousands of labeled examples, allowing the model to adjust its internal parameters while maintaining its general language understanding capabilities.

Results and analysis

To ensure clarity in our experimental design, we explicitly define the different model variants used in this study. The base model refers to the original, unmodified Llama-3.1 evaluated in a zero-shot manner, with no domain-specific fine-tuning applied. For this base model evaluation, we provided a structured prompt that explicitly listed all possible attack classifications and requested probabilities in JSON format (see Appendix for the complete prompt). This zero-shot approach serves as our baseline, representing what is achievable without any GTD-specific training. In contrast, our ConflLlama variants (Q4 and Q8) combine both fine-tuning on the GTD dataset and quantization at different precision levels. The Q4 variant uses 4-bit precision for most weights (with selective 6-bit precision for attention layers), while the Q8 variant uses 8-bit precision throughout, representing different trade-offs between memory efficiency and classification performance. Additionally, we provide a BF16 (unquantized) fine-tuned model for researchers with access to larger computational resources. However, this unquantized model requires approximately 16 GB of VRAM to load completely, making it impractical for researchers with consumer-grade hardware. In contrast, our quantized models (Q4 and Q8) can run efficiently on typical research workstations, democratizing access to state-of-the-art conflict event classification.

As shown in Figure 2, which presents a Receiver Operating Characteristic (ROC) curve plotting the true positive rate against the false positive rate at various classification thresholds, ConflLlama variants substantially outperform the base model. The ROC plot allows us to evaluate classifier performance independent of class distribution, with the Area Under the Curve (AUC) serving as a comprehensive metric of discriminative ability. ConflLlama-Q8 achieves an AUC of 0.791 compared to Llama-3.1’s 0.575. Q8 quantization achieves higher performance (AUC = 0.791) than Q4 quantization (AUC = 0.749).

6

This performance difference suggests that the higher precision retention in Q8 quantization better preserves the nuanced features necessary for accurate conflict event classification (Figure 3). Macro-averaged ROC curves showing performance comparison between ConflLlama variants and base Llama-3.1. ConflLlama-Q8 achieves the highest AUC (0.791), demonstrating significant improvement over the baseline. Comprehensive performance metrics across models. Bars show accuracy, macro F1, and weighted F1 scores for each variant.

Per-Class F1 Scores and Relative Improvements.

The most substantial performance gains occurred across several attack types. ConflLlama-Q8 achieved a remarkable 1463.6% improvement in Unarmed Assault classification, increasing the F1 score from 0.035 to 0.553. Similarly, Hostage Taking (Barricade Incident) saw a 692.0% improvement, while Hijacking events showed a 527.2% enhancement in classification accuracy. These improvements demonstrate the effectiveness of our fine-tuning approach in capturing subtle discriminative features. High-frequency event types also saw substantial gains in classification performance. Bombing/Explosion events, which represent a significant portion of the dataset, showed a 65.4% improvement, with the F1 score rising from 0.549 to 0.908. Armed Assault classification improved by 83.5%, with the F1 score increasing from 0.374 to 0.687. These improvements in common event types are particularly significant as they impact a large proportion of the classification tasks.

The performance improvements show a consistent pattern across attack types, with both variants outperforming the base model. The Q8 variant consistently achieves better results than Q4, with the margin of improvement being particularly notable in complex event types. This suggests that higher precision quantization is especially beneficial for distinguishing subtle differences between related attack types.

Analysis reveals ConflLlama’s sophisticated handling of complex conflict events where multiple attack types frequently co-occur. ConflLlama-Q8 achieves a Hamming Loss of 0.052, substantially outperforming both its Q4 variant (0.061) and the base model (0.148). 7 This precision in label-level predictions is particularly valuable for conflict event classification, where nuanced distinctions between attack types can significantly impact analysis.

The models capture complete attack patterns is equally impressive, with ConflLlama-Q8 achieving a Subset Accuracy of 0.724 compared to the base model’s 0.320. 8 This improvement suggests that the higher precision quantization of Q8 better preserves the model’s understanding of label co-occurrence patterns compared to the Q4 variant (0.688).

Beyond perfect matches, ConflLlama-Q8’s Partial Match score of 0.738 (up from the base model’s 0.356) demonstrates robust performance even in cases where exact label matching proves challenging. The model maintains remarkable fidelity to real-world event complexity, with its predicted label density of 0.975 closely tracking the true density of 0.963. 9 This balanced prediction proves crucial for practical applications, where both missing attack types and false positives can significantly impact conflict analysis and response planning. The multi-label performance metrics collectively demonstrate that our fine-tuning approach successfully maintains the complex relationships between different attack types while improving classification accuracy. 10 The performance of the Q8 variant (0.765 accuracy vs 0.729 for Q4) demonstrates that higher precision quantization better preserves the nuanced differences between conflict event types, while the dramatic improvement over the base model’s accuracy (0.346) and macro F1 score (0.012 vs 0.582) validates our approach to domain-specific adaptation. This performance gap underscores that general-purpose language models require appropriate fine-tuning for specialized classification tasks, and demonstrates that our efficient adaptation strategy yields substantial improvements while maintaining computational feasibility for resource-constrained research environments.

Analysis of misclassifications reveals interesting patterns in model behavior. The most challenging distinctions occur between semantically related categories, such as different types of armed conflicts. For example, the model occasionally struggles to distinguish between Armed Assault and Assassination events when both involve targeted violence with firearms. Similar confusion arises between Hostage Taking (Kidnapping) and Hostage Taking (Barricade) categories, where the temporal and spatial dimensions of the event represent the primary distinguishing factors rather than the core violent action. The model shows particular strength in identifying events with distinct characteristics (such as bombings) but faces greater challenges with events that share overlapping tactical elements, target selection patterns, or weaponry. Future research could address these challenges through hierarchical classification approaches that first identify the broad category of violence before making finer distinctions, or through expanded feature engineering that better captures the contextual cues human analysts use to differentiate between related attack types.

Impact of temporal training data scope & prompts

ConflLlama’s performance across expanding temporal windows, building on recent research showing that data distribution significantly impacts downstream task performance. By progressively incorporating data from 1990 to 2005 through to the complete dataset, we investigate how temporal coverage affects classification.

Our results show improvements across all macro metrics when incorporating recent examples. Model accuracy climbs from 0.69 to 0.76, while precision stabilizes around 0.70. Recall and F1-score show marked improvements—from 0.49 to 0.63 and 0.51 to 0.66. This aligns with recent findings that broader data distribution enhances model generalization (Parmar et al., 2018). The consistent upward trend suggests that access to more recent historical examples helps the model better capture evolving patterns in conflict events. However, the non-linear improvements in precision highlight the complex relationship between temporal coverage and classification performance—adding more years of data do not automatically translate to proportional gains in all metrics.

A closer examination of per-class F1-scores reveals striking patterns in how temporal coverage affects different types of conflict events. Bombing/Explosion events maintain consistently high performance across all temporal windows, due to their abundant representation in the training data. The most dramatic improvements appear in previously challenging categories. Hijacking classification jumps significantly from 0.129 to 0.614 between 2005 and 2010, while Unarmed Assault shows steady progression from 0.364 to 0.560. Hostage Taking (Kidnapping) demonstrates strong upward trajectory, reaching 0.821 in the full model.

Some categories reveal persistent challenges. Hostage Taking (Barricade Incident) shows modest improvement from 0.168 to 0.388, suggesting that even expanded temporal coverage may not fully address classification difficulties for rare event types. Assassination exhibits volatile performance, dropping sharply in 2010 before recovering to 0.629 in the full model, highlighting how temporal distribution can significantly impact specialized event classification.

Multi-Label Classification Performance Metrics and Relative Improvements.

The improvement in subset accuracy in Table 3 demonstrates the model’s enhanced ability to capture complete event characterizations. This is vital for security analysts who need to identify all aspects of an incident—for instance, distinguishing between a simple armed assault and one that escalates into a hostage situation. This 15.33% improvement suggests better reliability in comprehensive threat assessment. Noteworthy is the convergence of predicted labels (from 1.104 to 0.969) toward the true average of 0.963. This indicates the model’s sophistication in avoiding over-classification—a critical factor when multiple agencies may need to coordinate responses based on event type.

12

The consistent partial match accuracy (

This multi-label performance becomes especially relevant in real-world scenarios where events often defy simple categorization. For instance, in analyzing insurgency tactics, the ability to simultaneously identify multiple aspects of an attack (e.g., Facility/Infrastructure Attack combined with Bombing/Explosion) provides crucial insight into evolving threat patterns. The improved metrics suggest better capability in capturing such nuanced classifications, potentially enhancing early warning systems and response planning.

To evaluate ConflLlama’s robustness to prompt variations, we compared two prompting strategies: one explicitly framing the task within a terrorism event classification context, and another adopting a neutral stance referring to general “incidents” and “conflict-related events.” The results demonstrate remarkable stability across these approaches, with nearly identical macro F1-scores (0.635 vs 0.634) and only minor variations in class-wise performance, suggesting the model has developed “classification invariance” (Peyrard et al., 2021; Zhu et al., 2023). Detailed performance metrics and class-wise analysis are provided in the Appendix.

Conclusion

ConflLlama demonstrates significant advances in computational conflict analysis across multiple dimensions. Through efficient quantization strategies, we achieved remarkable classification improvements—from 65.4% for bombing events to over 1400% for categories like unarmed assaults—while maintaining modest computational requirements (processing 1000 events in under 2 minutes on standard GPUs). The model’s sophisticated multi-label capabilities (0.724 subset accuracy) capture the complex, overlapping nature of modern political violence, while temporal analysis reveals steady improvements in classification performance as historical coverage expands.

The success of our quantization approach demonstrates that high-quality conflict analysis is achievable with limited computational resources. Furthermore, the model’s resilience to prompt variations, maintaining consistent F1-scores (0.635 vs 0.634) across different prompting strategies, suggests robust internal representations of conflict patterns rather than superficial pattern matching. These advances in efficiency and robustness make sophisticated conflict analysis accessible to a broader research community.

Several promising directions emerge for future research in conflict studies. First, investigating how the model interprets emerging forms of political violence and novel attack patterns could help address the evolving nature of modern conflict. Second, improving the detection of rare forms of violence while maintaining efficiency could enhance our understanding of less common but significant conflict events. Third, examining applications to related domains such as civil unrest patterns or crisis dynamics could expand our understanding of political violence more broadly.

As political violence continues to evolve in both complexity and scope, tools that can accurately distinguish between different forms of conflict become increasingly crucial for both academic research and security practice. ConflLlama represents a step forward in sophisticated analysis of political violence more accessible while maintaining high standards of accuracy and efficiency. Its success demonstrates how computational advances can deepen our understanding of political violence while remaining accessible to the broader research community studying conflict and security.

Supplemental Material

Supplemental Material - ConflLlama: Domain-specific adaptation of large language models for conflict event classification

Supplemental Material for ConflLlama: Domain-specific adaptation of large language models for conflict event classification by Shreyas Meher and Patrick T. Brandt in Research & Politics

Footnotes

Declaration of conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This research was supported by NSF award 2311142 and utilized Delta at NCSA / University of Illinois through allocation CIS220162 from ACCESS.

Data Availability Statement

The code and models used in this study are available at Harvard Dataverse and Huggingface. The Global Terrorism Database used for training is available through START at the University of Maryland.

Supplemental Material

Carnegie Corporation of New York Grant

This publication was made possible (in part) by a grant from the Carnegie Corporation of New York. The statements made and views expressed are solely the responsibility of the author.

Notes

References

Supplementary Material

Please find the following supplemental material available below.

For Open Access articles published under a Creative Commons License, all supplemental material carries the same license as the article it is associated with.

For non-Open Access articles published, all supplemental material carries a non-exclusive license, and permission requests for re-use of supplemental material or any part of supplemental material shall be sent directly to the copyright owner as specified in the copyright notice associated with the article.