Abstract

Can the media effectively hold politicians accountable for making false claims? Journalistic fact-checking assesses the accuracy of individual public statements by public officials, but less is known about whether this process effectively imposes reputational costs on misinformation-prone politicians who repeatedly make false claims. This study therefore explores the effects of exposure to summaries of fact-check ratings, a new format that presents a more comprehensive assessment of politician statement accuracy over time. Across three survey experiments, we compared the effects of negative individual statement ratings and summary fact-checking data on favorability and perceived statement accuracy of two prominent elected officials. As predicted, summary fact-checking had a greater effect on politician perceptions than individual fact-checking. Notably, we did not observe the expected pattern of motivated reasoning: co-partisans were not consistently more resistant than supporters of the opposition party. Our findings suggest that summary fact-checking is particularly effective at holding politicians accountable for misstatements.

Fact-checking websites have changed how media outlets cover politics. Sites like PolitiFact, FactCheck.org, and The Washington Post Fact Checker seek to correct misinformation and hold politicians accountable by providing extensive coverage of the accuracy of claims made by political figures. Nevertheless, inaccurate claims made by politicians continue to mar public debate. How can journalists more effectively hold elites accountable when they spread misinformation?

Extensive research has been conducted on the effects of fact-checking on people’s factual beliefs (e.g., Flynn, Nyhan and Reifler, 2017) and the effects of media scrutiny on how the public views politicians (e.g., Snyder and Strömberg, 2010). However, less is known about how and under what conditions journalistic scrutiny might increase the reputational costs to politicians for promoting misinformation (though see Nyhan and Reifler, 2015). Even successful fact-checks that change respondents’ beliefs about a false statement have relatively little effect on the image of the offending politician (Nyhan et al., Forthcoming; Swire-Thompson et al., Forthcoming).

One promising alternative approach is summary fact-checking, which seeks to paint a more comprehensive picture of a politician’s accuracy by aggregating all existing ratings of statements they have made. For example, when Donald Trump said Hillary Clinton “wants to abolish the Second Amendment,” PolitiFact conducted a traditional (individual) fact-check of this single statement and rated it False using its “Truth-O-Meter” system (Qiu, 2016). A summary fact-check, on the other hand, would describe the distribution of fact-check ratings for a given politician. For instance, PolitiFact editor Angie Drobnic Holan wrote a New York Times op-ed in December 2015 noting that the site had “fact-checked more than 70 Trump statements and rated fully three-quarters of them as Mostly False, False or ‘Pants on Fire’” (Holan, 2015). By drawing on a larger number of ratings, this form of fact-checking could potentially provide stronger evidence of inaccuracy and have a greater influence on how the public perceives politicians than individual fact-checks do.

This study therefore compares the effects of summary fact-checking data and individual fact-check ratings on views of politicians who make misleading claims. Consistent with our preregistered hypotheses, summary fact-checking data reduced perceptions of politicians’ accuracy and favorability more than exposure to a negative individual fact-check rating did. These results, which were not consistently moderated by other factors such as partisanship, political knowledge, or education, demonstrate that fact-checking, especially when presented in a summary format, can play an important role in holding politicians accountable for misleading statements.

Theory

Existing research offers mixed conclusions about the effects of fact-checks and corrective information. Meta-analyses conclude that corrections can moderately reduce misinformation (Chan et al., 2017; Walter and Murphy, 2018). Similarly, recent work shows that individuals update their perceptions in the direction of corrective information (Wood and Porter, 2019). Several studies also argue that fact-checks can increase political knowledge and affect voter behavior (e.g., Fridkin, Kenney and Wintersieck, 2015; Gottfried et al., 2013), but others find that fact-checks may have limited effects or be counterproductive (e.g., Garrett, Nisbet and Lynch, 2013; Garrett and Weeks, 2013). Fact-checks may be less effective when a misperception is salient or invokes strong cues, such as partisanship or outgroup membership (Flynn et al., 2017).

However, less is known about the effects of summary fact-checks, which aggregate fact-check ratings of politicians, and how those effects compare to those of fact-checks of individual statements by politicians. Though the summary fact-check format is relatively uncommon, fact-checkers and other media outlets increasingly provide these statistics for politicians to help readers differentiate between candidates who have made a few false statements and those with long histories of spreading misinformation. For instance, fact-checkers like Holan and media outlets frequently compile multiple ratings of a given politician on PolitiFact’s Truth-O-Meter or the Pinocchios scale of The Washington Post Fact Checker.

To date, most studies have focused on how fact-checks affect belief accuracy. However, summary fact-checking does not attempt to correct specific false or misleading claims. We therefore assessed its effects on perceptions of politicians (a key mechanism of democratic accountability) rather than factual beliefs. If the images of politicians suffer as a result of getting repeatedly fact-checked, politicians would face a stronger reputational incentive to avoid making false statements (Nyhan and Reifler, 2015).

Our theoretical expectations were that people would be less likely to dismiss a falsehood as an isolated incident and would instead view a politician’s behavior as more problematic when presented with summary data, which offers stronger evidence of a pattern of inaccuracy. 1 Exposure to summary fact-checking might promote greater updating of respondent views toward a candidate compared to a fact-check of an individual statement through various mechanisms. These include a memory-based “running tally” (e.g., Fiorina, 1981) in which candidate inaccuracy is more likely to be registered as a negative consideration, online processing of negative affect inspired by information about a sustained record of inaccuracy (e.g., Lodge and Taber, 2005), or a Bayesian process in which more information about past inaccuracy leads to greater updating of candidate attitudes (Zechman, 1979). 2

There are reasons to doubt this hypothesis, however. First, the way in which information is presented can sometimes matter more than the strength of evidence presented. For instance, one recent study found that a compelling narrative about a single event was more important than broader statistical information about a topic in changing public opinion (Norris and Mullinix, Forthcoming). In addition, Swire-Thompson et al. (Forthcoming) found that presenting numerous fact-checks only affected ratings of target politicians when false statements outnumbered true ones and even then generated very small effects. Adjudicating between the effects of summary and individual fact-checks thus merits scholarly attention.

We specifically proposed three hypotheses and two research questions, all of which were preregistered. First, drawing on the experimental literature supporting the efficacy of fact-checks, we predicted that individuals exposed to negative fact-checking of a politician in either format would view that politician less favorably and perceive them as less accurate (H1). For reasons discussed above, we also predicted that summary fact-checking data would have a larger effect on these outcome variables than an individual fact-check rating would (H2). Finally, as per theories of motivated reasoning (e.g., Kunda, 1990; Taber and Lodge, 2006) we predicted that the favorability and perceived accuracy of politicians would decrease more when individuals viewed fact-checking of the opposition party compared to their own (H3).

In addition, we proposed two related research questions, asking whether participants’ political knowledge or level of education would moderate the effects of fact-checking (RQ1) and whether exposure to a summary or individual fact-check would affect attitudes toward fact-checking (RQ2). Existing evidence is limited on both points. Fact-checking may be more effective among the politically knowledgeable (Fridkin et al., 2015), but people who are more sophisticated may also be more skilled at resisting corrective information (Taber and Lodge, 2006). No published studies examine the effects of fact-checking on attitudes toward the practice.

The following sections discuss three survey experiments that test these hypotheses and research questions. We describe Study 1 in detail and more briefly review Studies 2 and 3, which are slight variants of Study 1 that address limitations in the design of the preceding studies.

Study 1

Methods

Prior to conducting the study, we preregistered the design, hypotheses, and analysis plan in the EGAP archive, which is an online platform where researchers preregister study designs to promote scientific accountability. 3 The sample consisted of 2825 participants recruited via Amazon Mechanical Turk, an online marketplace frequently used to recruit research participants (e.g., Berinsky, Huber and Lenz, 2012). 4 Data collection took place from May 7–10, 2016. Participants were required to be US residents aged 18 or older with at least a 95% HIT (“Human Intelligence Task”) approval rating on Mechanical Turk. Demographically, our sample mirrors other Mechanical Turk studies in being younger and more liberal, educated, and white than the general US population. Specific demographic distributions can be found in Online Appendix C.

Experimental design

Our study used a 3 × 2 between-subjects design that randomly varied fact-check type and politician partisanship. Respondents were randomly assigned to one of three treatments: an individual fact-check rating of a fictitious statement about job creation, a summary fact-check rating, or the control condition. Fact-checks used in all three studies were negative, indicating that the statements in question were not accurate. Participants were also randomly assigned to a target politician: Mitch McConnell (R-KY), the Senate majority leader at the time, or Harry Reid (D-NV), the Senate minority leader at the time. McConnell and Reid were chosen because they belong to different parties but are comparable figures.
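As a rough illustration of this design, the sketch below assigns participants to one of the six cells. It assumes simple (unblocked) randomization with equal probabilities, which the text does not specify. The names `FACT_CHECK_TYPES`, `TARGETS`, and `assign_conditions` are hypothetical and are not taken from the replication materials.

```python
import random
from itertools import product

# Hypothetical labels for the two randomized factors described above.
FACT_CHECK_TYPES = ("control", "individual", "summary")
TARGETS = ("McConnell", "Reid")

# The 3 x 2 design yields six between-subjects cells.
CELLS = list(product(FACT_CHECK_TYPES, TARGETS))


def assign_conditions(n_participants, seed=0):
    """Assign each participant to one cell with equal probability.

    Assumption: simple randomization; the study may have used a
    different procedure (for example, blocking).
    """
    rng = random.Random(seed)
    return [rng.choice(CELLS) for _ in range(n_participants)]


# Sample size reported for Study 1; the seed is arbitrary.
assignments = assign_conditions(2825, seed=2016)
```

Because every participant lands in exactly one cell, comparisons across fact-check types within a target politician are between-subjects comparisons.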

The graphics in our individual fact-check rating treatment were adapted from PolitiFact’s Truth-O-Meter; both senators were presented as making the same false claim. Participants in the summary fact-checking data condition were exposed to a graphic adapted from The New York Times (Holan, 2015) presenting either McConnell or Reid as making more false statements than the average senator. Figure 1 presents the graphics used for respondents in the treatment conditions. 5

Figure 1. Treatment graphics.

Finally, participants in the control group were shown a graphic displaying predicted weather for Des Moines, Iowa. We included a caption for each graphic to ensure that participants understood the information presented and to match the format and design of the stimuli between conditions as closely as possible (see Online Appendix A).

Procedure

Participants were first required to provide informed consent and their age. They then answered questions regarding their demographics, party affiliation, and political knowledge before the experimental manipulation. Demographic and knowledge characteristics did not vary significantly between our three experimental groups (see Table C1 in Online Appendix C). After a brief task intended to conceal the study’s purpose, participants answered questions measuring three outcome variables: favorability toward McConnell or Reid, perceived accuracy of that senator, and favorability toward fact-checking (see Online Appendix A for full survey text). 6 Participants were then debriefed and compensated for their time.

Measures

Our study measured two primary outcome variables on five-point scales: how often participants thought statements made by the senator were accurate, from “never” (1) to “all of the time” (5), and how favorably they viewed the senator, from “very unfavorable” (1) to “very favorable” (5). For our second research question, we asked four questions about participants’ perceptions of fact-checking (see Online Appendix A for details). We also considered several pre-treatment moderators. For H3, we classified participants’ partisanship according to which political party they identified with or leaned toward. To explore RQ1, we measured the education level and political knowledge of participants. We classified those with a bachelor’s degree or above as having a high level of education. Using a median split, we classified those who correctly answered at least four of five questions on a standard political knowledge battery as high knowledge.
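The moderator coding described above can be sketched as follows. This is a minimal illustration rather than the authors' code: the function names, argument names, and party labels are hypothetical, and only the thresholds (a bachelor's degree or above; at least four of five knowledge items correct; leaners grouped with partisans) come from the text.

```python
def code_moderators(has_bachelors, knowledge_correct):
    """Return the two binary RQ1 moderators for one participant."""
    if not 0 <= knowledge_correct <= 5:
        raise ValueError("knowledge_correct must be between 0 and 5")
    return {
        # High education: bachelor's degree or above.
        "high_education": bool(has_bachelors),
        # High knowledge: at least four of five items correct.
        "high_knowledge": knowledge_correct >= 4,
    }


def code_party(identification, lean=""):
    """Group leaners with the party they lean toward (labels are assumed)."""
    parties = ("Democrat", "Republican")
    choice = identification if identification in parties else lean
    return choice if choice in parties else "Independent"
```

For example, `code_party("Independent", "Republican")` returns `"Republican"`, while a respondent with no stated lean stays in the `"Independent"` group.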

Results

We analyzed the effects of our experiment using ordinary least squares (OLS) regression with robust standard errors. 7
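To make the estimation approach concrete, the sketch below uses the fact that an OLS regression of an outcome on treatment indicators (with the control group as the baseline) returns differences in group means. The standard error shown corresponds to the HC2 variant of robust standard errors; the text does not say which variant was used, so that choice is an assumption. The ratings are invented for illustration and are not the study's data.

```python
from math import sqrt
from statistics import mean, variance


def effect_vs_control(treated, control):
    """Difference in means and a heteroskedasticity-robust (HC2-style) SE.

    With only treatment indicators as regressors, the OLS coefficient on
    a treatment dummy equals this difference in means.
    """
    estimate = mean(treated) - mean(control)
    se = sqrt(variance(treated) / len(treated) + variance(control) / len(control))
    return estimate, se


# Hypothetical 1-5 accuracy ratings, invented for illustration only.
ratings = {
    "control": [3, 3, 2, 4, 3, 2],
    "individual": [3, 2, 2, 3, 3, 2],
    "summary": [2, 2, 1, 3, 2, 2],
}

effects = {
    arm: effect_vs_control(ratings[arm], ratings["control"])
    for arm in ("individual", "summary")
}
```

In this framing, H1 corresponds to both estimates being negative, and H2 to the summary estimate being more negative than the individual one.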

Main effects of fact-check type

Consistent with our first preregistered hypothesis (H1), exposure to either negative summary fact-checking data or a negative individual fact-check rating led to significantly lower accuracy and favorability ratings than we observed in the control condition. These findings hold for both outcome measures and both target politicians (see Table 1). Consistent with H1, respondents provided with an individual fact-check rating (

Table 1. Effects of fact-check type on politician accuracy and favorability ratings.

*p < .05, **p < .01 (two-sided). OLS = ordinary least squares models with robust standard errors.

Our second preregistered hypothesis (H2) predicted that the summary fact-checking data group would rate politicians lower than those exposed to negative individual fact-check ratings. The results corresponded directly with our hypothesis: the summary fact-checking data group rated McConnell lower on accuracy (

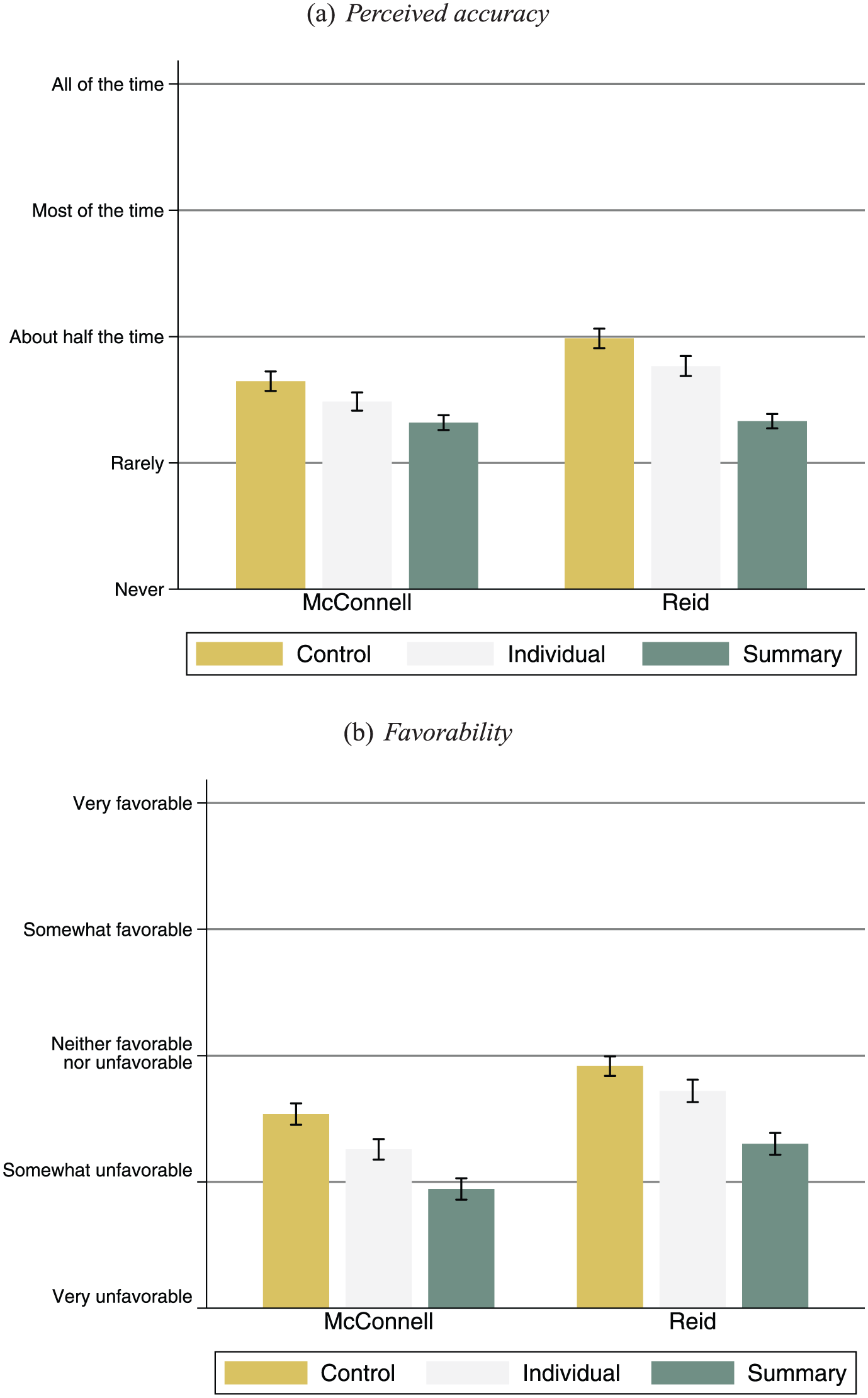

Figure 2 illustrates the substantive magnitude of these effects.

Figure 2. Perceived accuracy and candidate favorability by fact-check type.

As displayed in Figure 2a, control group participants perceived the senators’ statements as more accurate (McConnell: mean = 2.64, SE = 0.04; Reid: mean = 2.98, SE = 0.04) than did the individual fact-checking group (McConnell: mean = 2.49, SE = 0.04; Reid: mean = 2.77, SE = 0.04), but the summary fact-checking group rated them as least accurate of all (McConnell: mean = 2.32, SE = 0.03; Reid: mean = 2.33, SE = 0.03). Figure 2b presents similar results for favorability ratings. Participants in the control condition viewed the senators more favorably (McConnell: mean = 2.54, SE = 0.04; Reid: mean = 2.92, SE = 0.04) than those who viewed individual fact-checks (McConnell: mean = 2.26, SE = 0.04; Reid: mean = 2.72, SE = 0.05). Those who viewed summary fact-checking data rated the senators the least favorably (McConnell: mean = 1.95, SE = 0.04; Reid: mean = 2.30, SE = 0.04).
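The size of these gaps can be read off directly from the reported means. The short sketch below only does that arithmetic. The differences are computed from rounded group means, so they need not match the regression coefficients in Table 1 exactly, and the names `REPORTED_MEANS` and `raw_differences` are hypothetical.

```python
# Group means reported in the text for Figure 2, ordered as
# (control, individual fact-check, summary fact-check).
REPORTED_MEANS = {
    ("accuracy", "McConnell"): (2.64, 2.49, 2.32),
    ("accuracy", "Reid"): (2.98, 2.77, 2.33),
    ("favorability", "McConnell"): (2.54, 2.26, 1.95),
    ("favorability", "Reid"): (2.92, 2.72, 2.30),
}


def raw_differences(means):
    """Pairwise differences between conditions on the five-point scale."""
    control, individual, summary = means
    return {
        "individual_vs_control": round(individual - control, 2),
        "summary_vs_control": round(summary - control, 2),
        "summary_vs_individual": round(summary - individual, 2),
    }


differences = {key: raw_differences(vals) for key, vals in REPORTED_MEANS.items()}

for (outcome, senator), diff in differences.items():
    print(outcome, senator, diff)
```

On these reported means, the summary condition is lower than the individual condition by between 0.17 and 0.44 scale points across the four outcome and senator combinations.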

Party interactions

To check for directionally motivated reasoning (H3), we estimated heterogeneous treatment effects by party in Table 2. 8
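One way to write such a heterogeneous-effects model is sketched below. The notation is ours rather than the authors', and treating independents as the omitted party category is an assumption: $\mathrm{Ind}_i$ and $\mathrm{Sum}_i$ indicate assignment to the individual and summary fact-check conditions, and $\mathrm{Dem}_i$ and $\mathrm{Rep}_i$ indicate party identification including leaners.

```latex
\[
\begin{aligned}
Y_i ={}& \beta_0 + \beta_1 \mathrm{Ind}_i + \beta_2 \mathrm{Sum}_i
        + \beta_3 \mathrm{Dem}_i + \beta_4 \mathrm{Rep}_i \\
      &+ \beta_5 (\mathrm{Ind}_i \times \mathrm{Dem}_i)
        + \beta_6 (\mathrm{Ind}_i \times \mathrm{Rep}_i) \\
      &+ \beta_7 (\mathrm{Sum}_i \times \mathrm{Dem}_i)
        + \beta_8 (\mathrm{Sum}_i \times \mathrm{Rep}_i) + \varepsilon_i
\end{aligned}
\]
```

Under this coding, the effect of summary fact-checking is $\beta_2$ among independents, $\beta_2 + \beta_7$ among Democrats, and $\beta_2 + \beta_8$ among Republicans. For a Democratic target such as Reid, H3 would imply $\beta_2 + \beta_8 < \beta_2 + \beta_7$, that is, a more negative effect among Republicans.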

Contrary to our hypothesis, these models did not suggest a directionally motivated response to negative fact-checking information. For instance, we expected the negative effect of summary fact-checking data on perceptions of Reid’s accuracy to be greater among Republicans than among independents and Democrats. Instead, the negative effect was greater among Democrats than among both independents (

Table 2. Effects of fact-check type by party.

*p < .05, **p < .01 (two-sided). OLS = ordinary least squares models with robust standard errors. FC = fact-check.

Research questions

Our first research question asked whether political knowledge or education would moderate treatment effects overall or among partisans. The results were not consistent. Both fact-check types reduced McConnell favorability ratings more among people with low knowledge than among those with high knowledge. However, summary fact-checking data reduced Reid accuracy ratings more among respondents with high knowledge. The evidence on political knowledge is thus inconclusive. For education, we compared participants with and without a bachelor’s degree and found only one significant difference in fact-check effects. Thus, we cannot conclude that education affects participants’ responses toward fact-checks of either type. 9 Similarly, fact-checking exposure had no measurable effect on perceptions of fact-checking for the four outcome measures we examined: favorability toward fact-checking, demand for more fact-checking, and the perceived accuracy and fairness of fact-checking. 10 We obtained similar results for both research questions in Studies 2 and 3 (see Online Appendix C for full results from all three studies) and therefore do not discuss them further.

Discussion

The results of this study confirmed that summary fact-checking is more effective at influencing the perceived accuracy and favorability of selected politicians than is individual fact-checking. These results did not vary consistently by party or the other preregistered moderators. The design we employed has two principal limitations. First, the summary fact-check we tested also includes fact-check information for an average senator; perhaps comparisons to the average senator drove the summary fact-check’s larger effects rather than the rating aggregation itself. Second, summary fact-checks explicitly lay out the politician’s record of accuracy, while an individual fact-check is centered around a single statement. Asking about overall accuracy might result in participants repeating back what they saw in the summary, rather than acting on an updated belief. We conducted two additional experiments to address these concerns. 11

Study 2

Study 2 replicated Study 1 except for two changes. In addition to measuring accuracy and favorability ratings of senators Reid and McConnell, we included a new question that tested participants’ perceived accuracy of a new statement putatively made by the senator about whom they saw a fact-check (“Nevada/Kentucky has more private sector jobs than ever before”). This measure addressed a potential concern about response bias in Study 1. By asking respondents to rate a novel statement, we could better test whether respondents were actively updating their beliefs rather than merely reporting what they saw in the fact-check graphics. In addition, we altered the summary fact-check graphic to remove the comparison to an average senator to address a potential design confound. This change clarified whether the aggregate information provided by the summary fact-check accounted for its stronger effects or whether they were the result of a contrast effect with an average senator. 12 Study 2 was conducted November 4–8, 2016 among a sample that was similar demographically to Study 1’s sample. It was also preregistered at EGAP.

Results

Study 2 results were similar to Study 1 for the two existing outcome measures (see Table B1). As in Study 1, respondents perceived the general accuracy of statements made by McConnell and Reid as lower when exposed to summary fact-checking versus an individual fact-check rating (−.11,

Discussion

Study 2 largely replicated the results of Study 1. Summary fact-checking information typically had more negative effects on the perceived accuracy of a politician and favorability toward that figure than an individual fact-check rating did. However, we found an anomalous result in how respondents evaluated the accuracy of a new statement attributed to the politician in question. This difference in accuracy rating may have been the result of an inadvertent confound between the topic of the individual fact-check (a claim about job creation during their tenure as majority leader) and the topic of the novel statement that participants rated afterward (private sector jobs in the majority leader’s state). We therefore replicated our findings in Study 3 using a design that removed this confound.

Study 3

Study 3 corrected a confound in the design of Study 2. Due to concerns about the close conceptual relationship between the topic for the fact-check of an individual statement (job creation) and the topic of the new statement whose accuracy respondents were asked to assess in Study 2 (private sector jobs), respondents were instead asked in Study 3 to evaluate the accuracy of the following statement from either McConnell or Reid: “I haven’t switched my position on the Trans-Pacific Partnership trade deal.” Study 3 was conducted January 12–16, 2017, had sample demographics that were similar to those in the previous studies, and was also preregistered with EGAP.

Results

Study 3 replicated the findings in Studies 1 and 2 (see Table B2 in Online Appendix B). The perceived accuracy of statements made by McConnell and Reid and favorability toward them were lower when respondents were shown summary fact-checking data compared to an individual fact-check rating (

Discussion

The results of Study 3 helped explain the unexpected finding in Study 2, where participants who saw an individual fact-check rating viewed a new statement by that politician as less accurate than those who saw summary fact-checking information. We hypothesized that this finding was the result of the topic of the fact-check graphic and the novel statement being closely related. When this confound was removed and we asked respondents to evaluate a novel statement on an unrelated issue, we found the expected relationship: participants who were shown summary fact-checking data rated the novel statement as less accurate than those who were shown an individual fact-check rating.

Conclusion

Summary fact-checking data had significantly greater effects on perceptions of political figures than fact-check ratings of an individual statement did. Compared to respondents who saw an unfavorable or negative fact-check rating of a single statement, those who saw unfavorable summary fact-checking data viewed the politicians in question less favorably and perceived statements they made as less accurate. These effects were also not consistently moderated by other factors, including partisan affiliation, political knowledge, or education. The lack of partisan heterogeneity is particularly important given frequent concerns that directionally motivated reasoning undermines fact-checking effectiveness (e.g., Graves and Glaisyer, 2012).

These results suggest that news organizations should use summary fact-checking to encourage responsible conduct by political figures. However, caution is still required. First, fact-checking individual statements is still the best way to set the record straight about a specific claim. In addition, reporters and editors must consider whether aggregated fact-checks accurately represent a political figure’s overall record or will leave a distorted impression (Uscinski and Butler, 2013).

Future research should consider other research questions and approaches we did not evaluate. First, it would be valuable to test fact-checks of non-quantitative claims as well as different stimulus graphics or ratings. Additional studies could also consider more controversial targets or issues, vary the source of fact-checks, or test the effects of positive fact-checks. Second, we did not directly assess factual beliefs about a specific statement. Third, it would be worthwhile to further investigate the mechanisms for this effect (a difficult question under any circumstances).

Nonetheless, these results are an important first step toward understanding the new summary fact-checking format, which we found had greater effects on perceptions of politicians than did an individual fact-check rating. By increasing the reputational risk of making false claims in this way, it may help to discourage politicians from promoting misinformation in the first place.

Supplemental Material

Online_Appendix – Supplemental material for Counting the Pinocchios: The effect of summary fact-checking data on perceived accuracy and favorability of politicians

Supplemental material, Online_Appendix for Counting the Pinocchios: The effect of summary fact-checking data on perceived accuracy and favorability of politicians by Alexander Agadjanian, Nikita Bakhru, Victoria Chi, Devyn Greenberg, Byrne Hollander, Alexander Hurt, Joseph Kind, Ray Lu, Annie Ma, Brendan Nyhan, Daniel Pham, Michael Qian, Mackinley Tan, Clara Wang, Alexander Wasdahl and Alexandra Woodruff in Research & Politics


replication – Supplemental material for Counting the Pinocchios: The effect of summary fact-checking data on perceived accuracy and favorability of politicians

Supplemental material, replication for Counting the Pinocchios: The effect of summary fact-checking data on perceived accuracy and favorability of politicians by Alexander Agadjanian, Nikita Bakhru, Victoria Chi, Devyn Greenberg, Byrne Hollander, Alexander Hurt, Joseph Kind, Ray Lu, Annie Ma, Brendan Nyhan, Daniel Pham, Michael Qian, Mackinley Tan, Clara Wang, Alexander Wasdahl and Alexandra Woodruff in Research & Politics

Footnotes

Acknowledgements

We thank the Dartmouth College Office of Undergraduate Research for generous funding support. Our title comes from The Washington Post headline on a letter to the editor about fact-checking (Morris, 2013).

Authors’ note

Brendan Nyhan is professor of Government at Dartmouth College, Hanover, USA. Other co-authors are former undergraduate students at Dartmouth. Alexander Agadjanian is currently a research associate in the MIT Election Lab.

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: The authors received funding support from the Dartmouth College Office of Undergraduate Research.


Carnegie Corporation of New York Grant

The open access article processing charge (APC) for this article was waived due to a grant awarded to Research & Politics from Carnegie Corporation of New York under its ‘Bridging the Gap’ initiative.

