Abstract

Objective:

Recent studies have identified the neutrophil/lymphocyte ratio, platelet/lymphocyte ratio, and neutrophil/lymphocyte × platelet ratio as promising prognostic markers in patients with sepsis. This study aims to evaluate the discriminatory ability of these ratios to predict mortality and requirement for renal replacement therapy at discharge, in patients with septic acute kidney injury.

Methods:

Diagnostic test study based on a multicenter retrospective cohort of adult patients with septic acute kidney injury requiring renal support. Hematologic ratios were calculated for three disease moments (admission, diagnosis of acute kidney injury, initiation of renal replacement therapy). Receiver operating characteristic curves were used to analyze the discriminative ability of the different hematological ratios at each disease moment.

Results:

A total of 152 patients were included. In-hospital mortality occurred in 61.8%, and 22.4% of survivors required renal replacement therapy at discharge. Measurements taken at the initiation of renal replacement therapy had the best discriminatory ability to predict adverse outcomes. For the neutrophil/lymphocyte ratio, the area under the curve was 0.596 (95% CI: 0.500–0.692) for mortality and 0.592 (95% CI: 0.286–0.898) for the requirement of renal replacement therapy at discharge. In all proposed scenarios, the neutrophil/lymphocyte ratio and neutrophil/lymphocyte × platelet ratio performed better than the platelet/lymphocyte ratio. Overall, all three ratios exhibited comparably poor discriminatory ability.

Conclusions:

Hematological ratios have poor discriminatory capacity for predicting adverse outcomes in cases of septic acute kidney injury. The neutrophil-to-lymphocyte ratio taken at the initiation of renal replacement therapy is a potentially useful, economical, and easily applicable tool to be included in predictive models of mortality and dialysis dependence.

Keywords

Introduction

Acute kidney injury (AKI) is a prevalent clinical condition, with an incidence ranging from 20% to 50% in critically ill patients. It is associated with a poorer prognosis, prolonged hospitalization and increased healthcare costs.1,2

Patients who survive AKI are at an elevated risk of in-hospital mortality, progression to chronic kidney disease (CKD), cardiovascular morbidity, arterial hypertension, and progression to end-stage renal disease.3–5 The most prevalent cause of AKI in critically ill patients is sepsis,6–8 as evidenced by the multinational AKI-EPI study, which identified sepsis as the underlying cause in 271 of 666 cases (40.7%).

Specific biomarkers have been described that predict renal function deterioration, intensive care unit (ICU) requirements, renal replacement therapy at discharge, and mortality.2,9–19 However, these biomarkers are costly and not widely available in clinical practice, so there is a need for simpler, reproducible, and more accessible markers. The development of such markers is therefore an important area for future research.

Ratios of hematological and inflammatory variables20,21 have been used as serum markers involved in the pathophysiological cascade of septic processes, which can predict adverse outcomes.22,23 These include the neutrophil/lymphocyte ratio (NLR), platelet/lymphocyte ratio (PLR), and neutrophil/lymphocyte × platelet ratio (NLPR), which have demonstrated adequate discriminatory ability to predict the development of AKI or mortality in patients with sepsis.24,25 However, the role of these ratios in predicting mortality in patients with sepsis associated with AKI remains unclear, with conflicting information being reported, particularly in patients with severe AKI requiring renal supportive therapy, an area where evidence is scarce.22,25 Furthermore, there is no evidence to predict the requirement for dialysis at discharge.

The objective of this study is to determine the discriminative ability of the aforementioned indices in predicting mortality and the need for renal replacement therapy (RRT) at discharge in a population diagnosed with severe AKI associated with sepsis requiring dialysis. To this end, we compare the different indices at the same time point, and we compare each index across three time points during the patient’s hospitalization: at admission, at AKI diagnosis, and at initiation of RRT. The study also seeks to identify the ideal cutoff point for the prediction of these outcomes.

Methods

Study design

A multicenter retrospective cohort study was conducted as a diagnostic test study. The study included patients over 18 years of age who had been diagnosed with severe AKI associated with sepsis requiring RRT. These patients were admitted to the Hospital Universitario San Ignacio and the Keralty hospital network in Bogotá D.C., Colombia, between November 2016 and December 2022.

All data were anonymized, and all patient information was de-identified. This study was conducted in accordance with the ethical principles of the Declaration of Helsinki. The study protocol was approved by the ethics committees of the Hospital Universitario San Ignacio and the Pontificia Universidad Javeriana (approval number FM-CIE-0280-24). Written informed consent was exempted by the Institutional Review Board.

Participants and definitions

Patients over 18 years of age who had been diagnosed with sepsis and severe acute kidney injury and who required renal supportive therapy were eligible for inclusion.

Patients with an admission time of less than 48 h, pregnant women, renal transplant recipients, patients with obstructive uropathy, rapidly progressive glomerulonephritis or nephritic syndrome, those with a previous need for RRT, and those with stage 5 chronic kidney disease were excluded from this study. Patients with a documented history of human immunodeficiency virus infection, solid or hematological malignancies, systemic lupus erythematosus or other connective tissue diseases, and those currently undergoing immunosuppressive therapy were also excluded, because these conditions are known to affect lymphocyte counts and could lead to erroneous interpretation of the hematological ratios.

The definition of sepsis used in this study was the one proposed by the third international consensus definitions for sepsis, which requires suspected infection together with a sequential organ failure assessment (SOFA) score of ≥2 points. 26 The definition of AKI used in this study was in accordance with the Kidney Disease Improving Global Outcomes (KDIGO3) criteria. 27 Septic shock was defined as sepsis with persistent hypotension requiring vasopressors to maintain a mean arterial pressure of ≥65 mmHg and a serum lactate level >2 mmol/L (18 mg/dL) despite adequate volume resuscitation. 26

Data sources

The data originate from a cohort of patients diagnosed with severe AKI associated with sepsis who were followed up by a nephrology specialist. The data were retrieved from the institutional electronic health records (EHR). An active search of the EHR and clinical laboratory records was conducted to identify patients who satisfied the inclusion criteria and to exclude those who met any exclusion criterion. The data were collected anonymously and recorded in a standardized format. Two investigators reviewed the accuracy of the data, and in cases of doubt or disagreement, a third researcher reviewed the data.

A comprehensive dataset was collated, encompassing sociodemographic and clinical variables such as age, sex, and body mass index; comorbidities, including diabetes mellitus, hypertension, cardiovascular disease, and cirrhosis; the source of infection; previous use of nephrotoxic drugs; and estimated glomerular filtration rate (eGFR) at admission. In addition, information pertaining to treatment and laboratory results, including complete blood count at various intervals during the period of hospitalization, was documented. The primary outcome was in-hospital mortality, and the secondary outcome was the requirement for RRT at discharge.

In addition, the dataset includes variables related to the duration of hospital and ICU stays, time from symptom onset to admission and AKI, need for vasopressor support, nonrenal sequential organ failure assessment (SOFA),28,29 diuresis and volume overload, whether the infection was caused by gram-negative bacteria, modality and indication for RRT, hospital mortality and requirement of RRT at discharge.

NLR, PLR, and NLPR were calculated at three standardized time points during the patient’s hospitalization: at admission, at the diagnosis of AKI (defined as the time the KDIGO criteria were met), and at the initiation of RRT. NLR is the absolute neutrophil count divided by the absolute lymphocyte count; PLR is the absolute platelet count divided by the absolute lymphocyte count; and NLPR is the absolute neutrophil count (×10⁹/L) multiplied by 100, divided by the product of the absolute lymphocyte count (×10⁹/L) and the absolute platelet count (×10⁹/L). 22
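The three definitions above can be written out as a short calculation. The following is a minimal sketch in Python, not the code used in the study; the function name `hematologic_ratios` and the example counts are hypothetical and chosen only for illustration.

```python
def hematologic_ratios(neutrophils, lymphocytes, platelets):
    """Return (NLR, PLR, NLPR) from absolute counts expressed in x10^9/L.

    Hypothetical helper for illustration; it follows the definitions
    given in the text above.
    """
    if lymphocytes <= 0 or platelets <= 0:
        raise ValueError("lymphocyte and platelet counts must be positive")
    nlr = neutrophils / lymphocytes                         # neutrophil/lymphocyte ratio
    plr = platelets / lymphocytes                           # platelet/lymphocyte ratio
    nlpr = (neutrophils * 100) / (lymphocytes * platelets)  # neutrophil/lymphocyte x platelet ratio
    return nlr, plr, nlpr


# Hypothetical counts (x10^9/L), not taken from the study cohort.
nlr, plr, nlpr = hematologic_ratios(neutrophils=12.0, lymphocytes=0.5, platelets=150.0)
print(f"NLR={nlr:.3f}  PLR={plr:.3f}  NLPR={nlpr:.3f}")
```

Because platelets appear in the denominator of the NLPR, a falling platelet count raises the NLPR but lowers the PLR, so the two indices can move in opposite directions in the same patient.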

Statistical methods

We calculated the power of a test to compare two AUCs in a single sample for mortality prediction. With a sample size of 152 patients and AUCs of 0.6 and 0.5, the power of the analysis was 0.6.

Categorical variables are presented as absolute and relative frequencies, while continuous variables are presented as mean and standard deviation or median and interquartile range, depending on the distribution of the data. The Shapiro–Wilk test was used to assess the assumption of normality. For the evaluation of the primary outcome (mortality), we considered the entire cohort. For the evaluation of the secondary outcome (requirement for RRT at discharge), we considered only survivors, treating mortality as a competing risk. Receiver operating characteristic (ROC) curves were utilized to analyze the discriminative ability of the different hematologic indices at admission, at diagnosis of AKI, and at the start of RRT; the areas under the ROC curve (AUC) were compared using the method proposed by DeLong. 30 Sensitivity, specificity, positive predictive value, and negative predictive value were calculated in different quintiles to provide detailed information on cutoff point performance. The point with the highest simultaneous sensitivity and specificity was defined using Liu’s method. 31 A p-value of less than 0.05 was considered to be statistically significant. The statistical analyses were performed using Stata version 17 statistical software (StataCorp LLC, College Station, TX, USA). The datasets used and analyzed in this study are available upon reasonable request. The reporting of this study conforms to the guidelines for Strengthening Reporting of Observational Studies in Epidemiology. 32
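For readers less familiar with the AUC, it can be read as the probability that a randomly chosen patient with the outcome has a higher marker value than a randomly chosen patient without it, with ties counted as one half. The sketch below computes the empirical AUC in this way. It is an illustration only: the study's analyses were performed in Stata, and the function name `empirical_auc` and the NLR values are hypothetical.

```python
def empirical_auc(values_with_outcome, values_without_outcome):
    """Empirical AUC as the proportion of concordant pairs (ties count 0.5).

    This is the Mann-Whitney formulation of the area under the ROC curve.
    """
    if not values_with_outcome or not values_without_outcome:
        raise ValueError("both groups must contain at least one value")
    concordant = 0.0
    for x in values_with_outcome:
        for y in values_without_outcome:
            if x > y:
                concordant += 1.0
            elif x == y:
                concordant += 0.5
    return concordant / (len(values_with_outcome) * len(values_without_outcome))


# Hypothetical NLR values, not taken from the study cohort.
nlr_died = [14.2, 9.8, 21.5, 6.1]
nlr_survived = [7.3, 11.0, 5.4, 9.8]
auc = empirical_auc(nlr_died, nlr_survived)
print(f"AUC = {auc:.3f}")
```

An AUC of 0.5 corresponds to no discrimination, and values below 0.5 indicate that higher marker values are more frequent in the group without the outcome.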

Results

Details of cohort

A total of 242 patients with a diagnosis of severe AKI associated with sepsis who required dialysis were identified; 90 of these patients were excluded according to the established criteria, leaving a final cohort of 152 patients for analysis. The process of patient selection is illustrated in Figure 1.

Figure 1. Flowchart of patient selection.

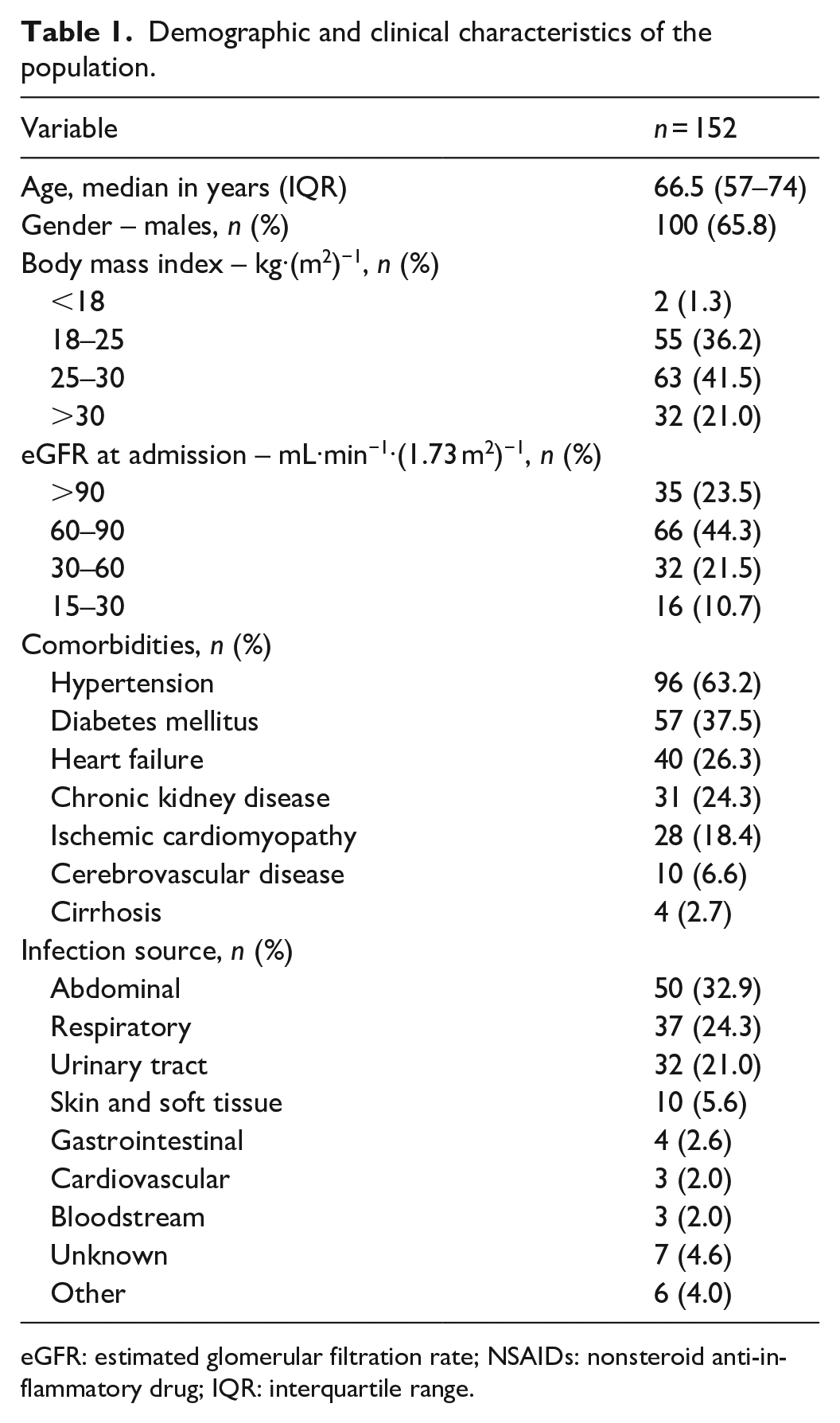

Demographic and clinical variables are shown in Table 1. The median age was 66.5 years (interquartile range (IQR): 57–74), and the most common comorbidities were hypertension (63.2%), diabetes mellitus (37.5%), and heart failure (26.3%). Furthermore, 62.5% of the participants were overweight or obese, and 67.8% had an eGFR greater than 60 mL/min/1.73 m² at admission. The most prevalent sources of infection were abdominal in 32.9%, respiratory in 24.3%, and urinary tract in 21%.

Table 1. Demographic and clinical characteristics of the population.

eGFR: estimated glomerular filtration rate; NSAIDs: nonsteroidal anti-inflammatory drugs; IQR: interquartile range.

The median length of hospitalization was 19 days (IQR: 12–33.5). The great majority of infections were newly diagnosed, 86.7% of patients had a nonrenal SOFA score of 5 or more points, the median C-reactive protein was 31.4 mg/dL, and a small proportion had a history of prior use or in-hospital administration of nephrotoxic drugs. At the time of RRT, the recorded fluid balance was markedly positive, with a median of 4062 mL (IQR: 2135.5–7314.2). Most patients received continuous modalities of RRT (64.2%), mainly continuous venovenous hemodiafiltration (CVVHDF) in 38.2% of patients, followed by intermittent hemodialysis in 34.2%. The most common indication for initiating RRT was anuria (51.3%), followed by acidemia (33.5%) and volume overload (26.3%); several patients had more than one indication. Complete data are presented in Table 2.

Table 2. Outcomes of the study population.

ICU: intensive care unit; SOFA: sequential organ failure assessment; RRT: renal replacement therapy; CVVHDF: continuous venovenous hemodiafiltration; IHD: intermittent hemodialysis; CVVHF: continuous venovenous hemofiltration; CVVHD: continuous venovenous hemodialysis; SLED: slow low-efficiency dialysis; SCUF: slow continuous ultrafiltration; IQR: interquartile range; EHR: electronic health records.

Measured from symptoms start to AKI diagnosis registered in EHR.

During and before admission.

Calculated for survivors.

Comparison of differing hematological markers

The primary outcome evaluated was in-hospital mortality, which occurred in 61.8% of patients.

The secondary outcome was the requirement for RRT at discharge. For this analysis, we considered exclusively the 58 patients who survived, and the outcome occurred in 22.4% of them.

In all proposed scenarios, the NLR and NLPR demonstrated superior performance in comparison to the PLR (Table 3). At admission, the area under the curve (AUC) for the prediction of mortality was 0.437 (95% CI: 0.343–0.532) for the NLR, 0.390 (95% CI: 0.298–0.483) for the PLR, and 0.513 (95% CI: 0.418–0.608) for the NLPR (p = 0.024). A similar pattern was seen at RRT start for the prediction of mortality, with better performance of the NLR (AUC: 0.596; 95% CI: 0.500–0.692) and NLPR (AUC: 0.608; 95% CI: 0.511–0.705) than of the PLR (AUC: 0.491; 95% CI: 0.393–0.589), although this difference was not statistically significant (p = 0.108) (Figure 2(a)). For NLR, the AUC to predict the requirement of RRT at discharge was 0.592 (95% CI: 0.286–0.898).

Table 3. Discriminatory ability of NLR, PLR, and NLPR for different outcomes at admission, AKI diagnosis, and RRT start.

NLR: neutrophil/lymphocyte ratio; PLR: platelet/lymphocyte ratio; NLPR: neutrophil/lymphocyte × platelet ratio; AKI: acute kidney injury; RRT: renal replacement therapy.

Figure 2. Receiver operating characteristic curve analyses for prediction of mortality. (a) Comparison of NLR, PLR, and NLPR at RRT start. (b) Comparison of NLR at admission, AKI diagnosis, and RRT start.

Comparison of differing time points of measurement

Measurements taken later in the course of the disease showed consistently higher AUCs for both outcomes: values were highest when the ratios were measured at the initiation of RRT, followed by those measured at the time of AKI diagnosis (see Table 3). Regarding the prediction of mortality using the NLR, the AUC (95% CI) was 0.437 (0.343–0.532) at admission, 0.474 (0.368–0.580) at AKI diagnosis, and 0.596 (0.500–0.692) at the start of RRT (Figure 2(b)). Similarly, for the requirement of RRT at discharge, the NLR had a higher AUC at RRT start, 0.592 (0.286–0.898), than at admission or at AKI diagnosis. The AUC differences were not statistically significant.
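The width of these confidence intervals depends strongly on the number of patients with and without the outcome. The sketch below uses the Hanley and McNeil approximation for the standard error of an AUC to illustrate this. It is an assumption made for illustration, not the method used in this study, and the group sizes are hypothetical rather than the study's actual counts.

```python
import math


def hanley_mcneil_ci(auc, n_with_outcome, n_without_outcome, z=1.96):
    """Approximate 95% CI for an AUC using the Hanley-McNeil standard error.

    Illustrative approximation only; intervals reported by statistical
    packages may be computed differently and need not match.
    """
    q1 = auc / (2 - auc)
    q2 = 2 * auc ** 2 / (1 + auc)
    variance = (
        auc * (1 - auc)
        + (n_with_outcome - 1) * (q1 - auc ** 2)
        + (n_without_outcome - 1) * (q2 - auc ** 2)
    ) / (n_with_outcome * n_without_outcome)
    se = math.sqrt(variance)
    return max(0.0, auc - z * se), min(1.0, auc + z * se)


# Same AUC, two hypothetical group sizes: a larger and a smaller sample.
ci_large = hanley_mcneil_ci(0.6, n_with_outcome=90, n_without_outcome=60)
ci_small = hanley_mcneil_ci(0.6, n_with_outcome=13, n_without_outcome=45)
print("larger sample :", tuple(round(v, 3) for v in ci_large))
print("smaller sample:", tuple(round(v, 3) for v in ci_small))
```

Under this approximation, the same point estimate gives a much wider interval when few patients experience the outcome, which is consistent with the imprecise estimates obtained in the analysis restricted to survivors.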

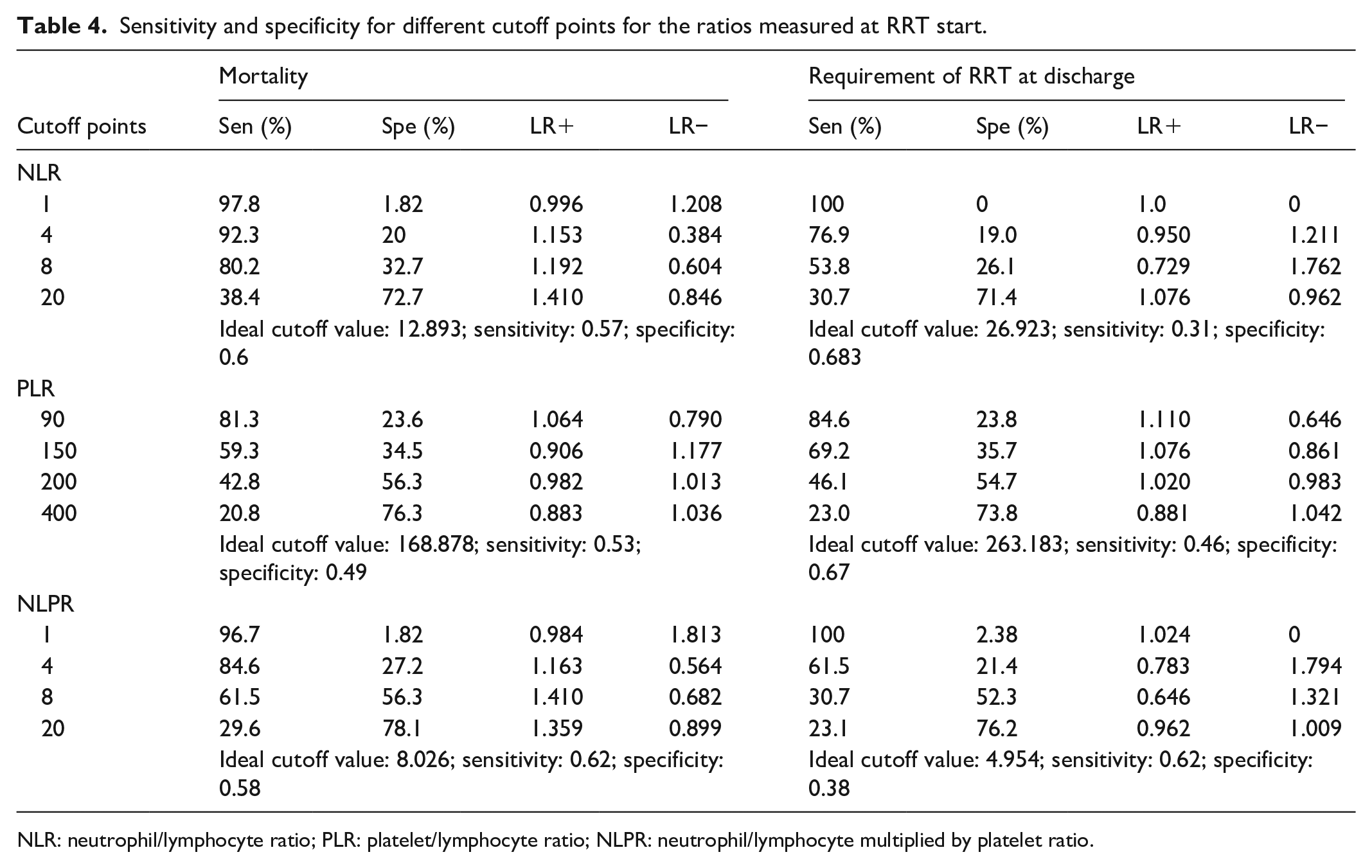

Optimal cutoff points

For each ratio, the sensitivity and specificity were determined for different cutoff points, and the ideal cutoff point was determined for each outcome (see Table 4). The ideal cutoff points differed between the mortality and requirement of RRT at discharge outcomes, being higher for the latter outcome for the NLR and PLR (12.893 vs 26.923 and 168.878 vs 263.183, respectively). In contrast, the optimal cutoff point for the NLPR was higher for the mortality outcome (8.026 vs 4.954). The NLPR also had the best sensitivity for both outcomes at these cutoff points, while the NLR had the best specificity.
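Liu's method is usually described as choosing the cutoff that maximizes the product of sensitivity and specificity. The following sketch applies that rule to a small set of values. It assumes that a value at or above the cutoff counts as a positive test; the function name `liu_cutoff` and the NLR values are hypothetical and do not come from the study cohort.

```python
def liu_cutoff(values_with_outcome, values_without_outcome):
    """Return (cutoff, sensitivity, specificity) maximizing sensitivity * specificity.

    Assumes higher values indicate higher risk, so a value >= cutoff is
    treated as a positive test. Every observed value is tried as a cutoff;
    on ties in the product, the lowest cutoff is kept.
    """
    best = None
    candidates = sorted(set(values_with_outcome) | set(values_without_outcome))
    for cutoff in candidates:
        sensitivity = sum(v >= cutoff for v in values_with_outcome) / len(values_with_outcome)
        specificity = sum(v < cutoff for v in values_without_outcome) / len(values_without_outcome)
        product = sensitivity * specificity
        if best is None or product > best[0]:
            best = (product, cutoff, sensitivity, specificity)
    _, cutoff, sensitivity, specificity = best
    return cutoff, sensitivity, specificity


# Hypothetical NLR values, not taken from the study cohort.
nlr_died = [14.2, 9.8, 21.5, 6.1]
nlr_survived = [7.3, 11.0, 5.4, 9.8]
cutoff, sensitivity, specificity = liu_cutoff(nlr_died, nlr_survived)
print(f"cutoff={cutoff}  sensitivity={sensitivity:.2f}  specificity={specificity:.2f}")
```

Because the rule weights sensitivity and specificity equally, the selected cutoff can change considerably between outcomes when the number of events is small, as in the analysis of RRT at discharge.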

Table 4. Sensitivity and specificity for different cutoff points for the ratios measured at RRT start.

NLR: neutrophil/lymphocyte ratio; PLR: platelet/lymphocyte ratio; NLPR: neutrophil/lymphocyte multiplied by platelet ratio.

Discussion

In this cohort of 152 patients with severe AKI associated with sepsis, the discriminative ability of all hematologic indices was poor. Regarding the prediction of mortality, NLR and NLPR showed a better performance than PLR (p = 0.0243). Furthermore, the measurement of NLR at the time of initiation of RRT tended to be better than at other time points (p = 0.079). Conversely, for the outcome of the RRT requirement, all three ratios exhibited comparable poor discriminatory ability.

For each of the three ratios, the ideal cutoff value for predicting each of the established outcomes was identified. For mortality, the optimal cutoff values were determined to be 12.893 for NLR, 168.878 for PLR, and 8.026 for NLPR, with the latter demonstrating the most optimal operating characteristics (sensitivity = 0.62 and specificity = 0.58). For the prediction of the requirement of RRT at discharge, the ideal cutoff points were determined to be 26.923, 263.183, and 4.954, respectively, with NLPR once again demonstrating optimal operating characteristics (sensitivity = 0.62 and specificity = 0.538).

Several studies have previously evaluated the predictive ability of these indices, with a particular focus on the NLR, in patients diagnosed with sepsis. In a retrospective study involving 507 patients diagnosed with sepsis, the NLR was measured 24 h after admission to predict sepsis-associated AKI, obtaining an AUC of 0.749 (95% CI: 0.722–0.777, p < 0.001). 23

In parallel, the relationship of these ratios with mortality has also been evaluated. One study included 309 patients and compared the predictive capacity of the NLR measured at hospital admission versus at the time of AKI diagnosis. The ratio obtained at the time of admission had a higher AUC (0.618) for predicting 30-day mortality than that taken at the time of AKI diagnosis (0.593). 33 In contrast to the findings of our study, they found a superior performance for the measurement of NLR at hospital admission in predicting hospital mortality. These differences could be explained by differences in the study population, as that study included patients with septic shock and AKI who did not undergo dialysis. 33

In relation to the NLPR, its application in predicting mortality was also investigated, yielding an AUC of 0.565 (95% CI: 0.515–0.615, p = 0.034), 34 a finding that aligns with the results obtained in this study. To the best of our knowledge, this study is the first to assess the efficacy of these ratios in patients with severe AKI who require renal support as well as renal recovery upon discharge.

Some biomarkers have been specifically studied for their ability to predict renal outcomes, including the need for RRT, renal recovery and mortality. Cystatin C has been the focus of numerous studies, which have reported an AUC of 0.7 for mortality at 1 year (95% CI: 0.66–0.73). 12 Other studies have identified an AUC of 0.74 for cystatin C in terms of renal recovery.11,35 Other markers, such as osteopontin, have shown similar results, with an AUC for mortality of 0.81 (95% CI: 0.71–0.91) and for renal recovery of 0.61 (95% CI: 0.44–0.77). 11 NGAL (neutrophil gelatinase-associated lipocalin) demonstrated an AUC for mortality of 0.7 (95% CI: 0.61–0.78) in plasma and 0.62 (95% CI: 0.51–0.73) in urine. Regarding the prediction of the need for RRT, the AUC was 0.55 (95% CI: 0.47–0.63) in plasma and 0.61 (95% CI: 0.53–0.70) in urine. 18 Given the limited availability of biomarkers such as cystatin C and NGAL, and given the similarity of their AUCs to those of the hematological ratios, it is suggested that these ratios could be used as predictive tools, given their wide availability and ease of use.

The inclusion of platelets in the calculation of the indices, that is, extending the NLR to the NLPR, has been reported to improve prognostic ability. 34 In our data, although the AUC of the NLPR was higher than that of the NLR, the difference did not reach statistical significance.

A few studies have been conducted to determine the optimal cutoff points for these ratios in analogous scenarios. In one particular study, the optimal NLR cutoff point for predicting 30-day mortality was determined to be 9.54, with a sensitivity of 75.3% and a specificity of 63.1%. This result is similar to our results, although it shows a lower sensitivity and specificity (see Table 4). In this context, the other indices were not examined to determine these limits. However, higher ideal cutoff values of up to 20 or 60 have been documented in other conditions, 36 suggesting that thresholds may be lower in patients with severe AKI and that more extreme values may indicate a higher risk of mortality or need for renal support at discharge.

In the natural history of sepsis-associated AKI, there are multiple stressors that explain the hematological changes in these ratios, including proinflammatory factors and neuroendocrinological mediation by endogenous catecholamines, cortisol, and prolactin, generating an early innate immune response. 24 This response manifests within the initial 6 h and is marked by an increase in neutrophils and monocytes, with a goal to stimulate phagocytosis and augment the secretion of inflammatory mediators and cytokines. This process culminates in lymphopenia, concurrent with an adaptive immune response that entails a redistribution of lymphocytes into the lymphatic system and accelerated lymphocyte apoptosis. In addition to their role in modulating thrombus formation, platelets release cytokines and chemokines with chemotactic function for cells of the innate immune response, as well as signaling functions, neutrophil extracellular trap formation, and phagocytosis. 37 From these events, the relationship between the number of hematological cells has been studied to determine states of systemic inflammation in the presence of pathological stress.22,23 A significant stressor in this context is the onset of RRT, where homeostasis is altered because of direct mechanisms related to infection or host response to infection, as well as indirect mechanisms generated by unwanted effects of sepsis or its therapies, 38 leading to more pronounced serological inflammatory changes.

This study has important strengths; it included a substantial number of patients with AKI associated with sepsis and evaluated the predictive ability of three widely available and user-friendly hematological indices for hospital mortality and the need for RRT at three stages of the disease. However, we must acknowledge several limitations. First, the retrospective nature of the study may result in residual confounding factors that could not be controlled. Second, the sample size for the analysis of RRT requirement at the time of hospital discharge was small, which explains the low precision of the estimates.39,40 Another limitation is that, despite excluding conditions that may cause lymphopenia or atypical lymphocytosis, it is not possible to fully control for the use of some medications in refractory septic shock that may affect lymphocyte counts and these ratios. Atypical lymphocyte counts and lymphocyte subgroups were also not distinguished in this cohort. However, this reflects the way these ratios would be used in typical clinical practice. Furthermore, the discriminatory ability of these ratios was found to be inadequate for the outcomes under study, which will limit their applicability in clinical practice, a limitation shared by less widely available serum biomarkers developed specifically for these outcomes.

Conclusion

To conclude, the present study has shown that the NLR, PLR and NLPR are measurements with poor ability to predict the requirement for dialysis at hospital discharge and mortality in patients with sepsis-associated AKI requiring dialysis. The most opportune time to evaluate these indices was at the initiation of RRT. The NLR taken at the initiation of RRT is a potentially useful, economical and easily applicable tool. Future studies can evaluate its inclusion in multidimensional scales or in complex predictive models of mortality and dialysis dependency.

Footnotes

Acknowledgements

This work was funded by the authors' own resources. The authors thank the Faculty of Medicine, Pontificia Universidad Javeriana.

Ethical considerations

The Institutional Review Board of the Hospital Universitario San Ignacio and the Pontificia Universidad Javeriana approved the study (FM-CIE-0280-24).

Consent to participate

Written informed consent was exempted by the Institutional Review Board.

Author contributions

DCVÁ, LVG, CAC, and DAM: conceived of the presented idea. OMM: developed the theory and performed the computations. OMM: verified the analytical methods. DCVÁ, CAG, and KMC: investigated and supervised the findings of this work. All authors discussed the results and contributed to the final manuscript.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.

Declaration of Conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Data availability Statement

The data described in this article are openly available as supplemental material.