Abstract

Student-student interaction can benefit learning as well as provide a sense of community in online courses. Blogging is a common way to give students opportunities to communicate with each other. This study used Social Network Analysis to depict commenting behaviour between students in an online graduate-level course. By examining weekly interaction data, the study revealed how students’ commenting behaviours changed during the semester. Student participation and interaction in the blogging activity were influenced by various pedagogical elements that were either directly or indirectly related to the blogging activity.

Introduction

Moore (1989) once predicted that student-student interaction, as a new dimension, would challenge the thinking and practice of distance education. Nowadays, communication between students is no longer a novel element in online courses. Research shows that student-student interaction can benefit students’ learning from both social and cognitive perspectives (Kurucay and Inan, 2017; Luo et al., 2017). Interaction between students can lessen psychological distance, thus building a sense of community in online classes (Rovai, 2002). Moreover, feelings of community are related to the flow of information among learners (Rovai, 2002). The direct effect of student-student interaction on learning achievement is equal to that of teacher-student interaction and higher than that of student-content interaction (Bernard et al., 2009).

Blogging is an advanced journal format that allows other people to read and comment on entries (Deng and Yuen, 2013), and it has been increasingly used in online learning to support interaction between students. Educational blogging research mostly investigates the influence of blogging on student participation and interaction. Student participation and interaction in a blogging activity are usually described as an aggregate over the whole period of the intervention (e.g. Pavo and Rodrigo, 2015; Sharma and Tietjen, 2016; Wopereis et al., 2010). However, in classroom settings, pedagogical elements other than the blogging activity itself could also influence interaction between students (Kerawalla et al., 2009).

This study employed measures from Social Network Analysis (SNA) to depict the dynamics of student interaction over time in a blogging activity in an online course. Moreover, by describing and comparing the weekly patterns in the two blogging cycles, this study investigated how pedagogical elements might be related to the interaction between students during this activity.

The communication function of a blogging activity and its educational value

When students participate in a blogging activity, they need to write, read, and comment on each other’s blogs. In the writing process, students are expected to apply a variety of thinking skills, such as gathering, organizing, and reflecting on materials, to compose articles for their blogs. Educational blogs are frequently used as reflection journals for students (e.g. Chu et al., 2012; Jimoyiannis and Angelaina, 2012; Robertson, 2011). Additionally, by creating and maintaining a blog, students can also improve their information literacy skills (Goktas and Demirel, 2012).

In contrast to private journals, blog articles are public to the class, and students tend to think carefully and write clearly when they perceive that they have an audience (Kerawalla et al., 2009). One significant aspect of blogging’s educational value is its communication function. The information flow through reading helps students share ideas and resources. In Goh et al.’s (2010) study, even though some students doubted their own contributions to sharing resources or proposing valuable ideas, they agreed that blogging was a convenient and efficient way to learn from peers. In addition, commenting gives students opportunities to provide feedback on each other’s thoughts. The exchange of varied ideas allows the blog audience to reflect on the initial topic and prompts deeper thinking. Novakovich (2016) found that students earned higher writing grades when peer feedback was provided through blog platforms than through in-class draft workshops, as students provided more directive and critical feedback in the blogging setting. Specific and helpful feedback from instructors and peers can help cultivate a friendly class environment, which students perceive as an essential function of blogging (Tang and Lam, 2014). Furthermore, writing comments requires various cognitive skills, for example reading, understanding, and critical thinking (Van Popta et al., 2017), making it a valuable experience for comment providers. Based on this research, active and broad participation in commenting could indicate the success of a blogging activity in educational settings.

The influence of pedagogical elements on students’ blogging

Although previous literature has suggested the educational value of blogging, assigning a blogging activity alone does not guarantee a benefit to students. Students might have different expectations and perform differently depending on the design of the blogging activity (Hall, 2018; Kerawalla et al., 2009). For example, students did not necessarily retain much technical knowledge about creating and maintaining a blog site when they all added articles to a single class blog site (Jimoyiannis and Angelaina, 2012). Having individual blog sites gave students a sense of ownership; by contrast, when the whole class worked on the same case study, the content became repetitive (Kerawalla et al., 2009). Besides differences in the design of blogging activities, other pedagogical elements may also influence participation. For example, some students expressed confusion about posting and commenting because the course also offered online forums for students to communicate (Kerawalla et al., 2009).

Although all of the above studies used blogging, its effectiveness might vary with activity design and other pedagogical elements. Since educational blogging activities usually last for weeks, sustaining consistent participation in blogging and commenting can also be a hurdle for some students. Therefore, presenting aggregate blogging and commenting data for a whole semester (e.g. Pavo and Rodrigo, 2015; Sharma and Tietjen, 2016; Wopereis et al., 2010) is not enough to fully reveal how student interaction in a blogging activity changes over time.

Social network analysis

SNA measures in online learning studies

Interaction and communication in online learning have consistently been an important research focus (Zawacki-Richter et al., 2009). Detailed, in-depth descriptions and understanding of student interaction are necessary for investigating any interaction intervention in online learning and for informing further online learning and instructional design (Bernard et al., 2009). In recent years, SNA has been used by a growing number of researchers to study networked behaviours in online learning environments (Cela et al., 2015; Jeong, 2014) and is considered a promising approach to describing the nature of interaction in online courses (Gruzd et al., 2016).

Centrality analysis and cohesion analysis are both common methods in SNA research on online education. Degree centrality, the number of relationships that a student has in the network, is a frequently used measure in centrality analysis. Specifically, in-degree centrality measures incoming relations and out-degree centrality measures outgoing relations (Kovanovic et al., 2014). Researchers have used in- and out-degree measures to visualize peer feedback dynamics (Wopereis et al., 2010), to describe students’ engagement (Sharma and Tietjen, 2016), and to compare different types of interaction in blogging activities (Pavo and Rodrigo, 2015).

For collaborative blogging activities, Jimoyiannis and Angelaina (2012) used cohesion analysis to detect subgroups and power analysis to describe role equivalence in the group. In their study of the blogging of 21 students aged 14–15, they proposed combining cohesion analysis and power analysis with centrality analysis to investigate student engagement in blogging activities. Similarly, Jimoyiannis et al. (2013) used cohesion analysis and power analysis to examine group dynamics in a collaborative group blogging activity.

Overall, the measures and methods most frequently used in online learning studies include degree centrality, density, and cohesion analysis (Cela et al., 2015). Compared with participation data (e.g. the number of comments or blog posts), these relational measures capture the dynamics of student interaction in more detail, for example by revealing students’ social capital in learning communities (Kovanovic et al., 2014). SNA therefore allows researchers to describe and investigate interaction data more precisely. However, most studies examine interaction patterns aggregated over a whole course, which may overlook week-to-week changes resulting from the blogging activity design and other pedagogical elements of the course.

Social network graphs used as pedagogical intervention

Besides using SNA measures to analyze student interaction after an online course ends, a growing number of researchers have suggested using SNA results during an online course for teaching purposes. For example, Hernández-García and Suárez-Navas (2017) introduced the combined use of Gephi (https://gephi.org/) and GraphFES (https://github.com/TIGE-UPM/graphfes) to visualize interaction data from a Learning Management System. They argued that the visualization and analysis results from such tools can help instructors identify disengaged and disconnected students so that they can intervene in time.

Researchers have also studied the influence of SNA visualizations used as formative feedback on student interaction in various settings. Yoon’s (2011) study, for example, took place in a seventh-grade classroom. The social network graphs in the study documented the face-to-face discussions that each student had with classmates of her or his choice. The class-level social network graph also showed each student’s position on the discussion question. Students received the social network graph before the fourth discussion in the 12-week course. The researcher provided minimal interpretation of the graph; students were only told that the graph was a snapshot of the discussions they had held. Yoon (2011) found that some students’ choices of discussion partner shifted from socially based to information based. She suggested that the shift might have been due to the graph; that is, visualizing previous interaction could influence how some students choose a discussion partner. Chen et al. (2018) designed a social learning analytics toolkit for college students. The toolkit reflected students’ online discussion by presenting a social network visualization of student interaction patterns and a word cloud of the messages posted in the discussion forums. Students reported positive attitudes towards the toolkit, which they used to monitor their own commenting behaviours. However, the toolkit was not intuitive to all students, and the quality of the discussion did not benefit much from its use. These studies showed promising outcomes, but their mixed findings reveal the need to explore how to use SNA results appropriately as formative feedback for students.

This study examined the weekly patterns of students’ commenting behaviour, focusing on the number of peers to whom students sent comments and whether this number changed under the influence of other course assignments and network diagram formative feedback. The study aimed to answer two questions: 1) What are the patterns of students’ commenting behaviours in the blogging activities in terms of SNA measures? 2) What are the influences of the pedagogical elements (feedback and other assignments) on students’ commenting behaviours?

Method

Research context

The blogging activity studied was part of a graduate-level online course on educational technology at a research university in the northeastern United States in the fall semester of 2016. The course lasted 14 weeks, and the blogging activity was one of the course requirements. Students were also required to participate in the discussion forum each week, to evaluate an educational website individually, and to complete a collaborative project with peers and/or professionals from their working environments. Of the 27 students enrolled in the course, three were excluded from the data because they either dropped or did not complete the course.

Blogging group assignment

The students were divided into four groups based on their professional experiences as posted in the first week’s self-introduction discussion. In week 9, students were reassigned to groups based on their participation in the first blogging cycle to balance activity levels.

Blogging topics

In the first week, students chose from various educational technology topics suggested by their instructor. They could also choose a blog platform from a recommended list (e.g. Blogger, Wordpress, Weebly) on which to create their own blog. The students then sent their topics and blog addresses to the instructor for review. By the end of the first week, the instructor posted a blog directory with the group assignments and blog topics in the announcement area of Blackboard. To promote exploration of diverse educational technologies, students were encouraged to change their blog topics in week 9. This time, students could choose any topic related to educational technology, and no list of suggested topics was provided. Of the 24 students, one continued writing about the same topic and all the others chose new topics.

Blogging feedback

From week 2 onward, students were required to update their blog once a week and to make at least three comments on their peers’ blogs. A teaching assistant was assigned to record weekly student participation in the blogging activity. If a student did not meet the requirement for two consecutive weeks, an email reminder was sent to the student on Monday or Tuesday.
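The reminder rule can be written as a small check. This is a minimal sketch, assuming a hypothetical weekly log that stores each student's number of posts and comments per week; the names `students_to_remind` and `met_requirement` are illustrative, and a week with no record is treated as zero activity (an assumption, not a detail from the course).

```python
# Minimal sketch of the reminder rule; the log format and names are hypothetical.
FIRST_REQUIRED_WEEK = 2   # requirements applied from week 2 onward
REQUIRED_POSTS = 1        # one blog update per week
REQUIRED_COMMENTS = 3     # at least three comments on peers' blogs per week


def met_requirement(posts: int, comments: int) -> bool:
    """True if one week's activity satisfies the blogging requirement."""
    return posts >= REQUIRED_POSTS and comments >= REQUIRED_COMMENTS


def students_to_remind(log: dict, week: int) -> list:
    """Students who missed the requirement in both `week - 1` and `week`.

    log maps student -> {week: (posts, comments)}; a missing week counts as (0, 0).
    """
    if week - 1 < FIRST_REQUIRED_WEEK:
        return []  # two consecutive required weeks have not yet elapsed
    flagged = []
    for student, weeks in log.items():
        previous = weeks.get(week - 1, (0, 0))
        current = weeks.get(week, (0, 0))
        if not met_requirement(*previous) and not met_requirement(*current):
            flagged.append(student)
    return sorted(flagged)


log = {
    "A": {2: (1, 3), 3: (1, 2)},  # met week 2, missed week 3
    "B": {2: (0, 1), 3: (1, 1)},  # missed both weeks
    "C": {2: (1, 1), 3: (1, 4)},  # missed week 2, met week 3
}
print(students_to_remind(log, 3))  # ['B']
```

Only student B is flagged, because A and C each met the requirement in one of the two weeks.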

At the end of the first blogging cycle, all students received formative feedback on their group interaction. The feedback contained a network diagram of the group, which laid out student interactions in the first blogging cycle. It showed whom each student received comments from as well as whom each student commented on. By varying the shade of color and the size of each node, the diagram also showed which students made the most comments and which received the most comments in their group. An explanation of the diagram and the expected behaviours was written underneath the picture. Students were encouraged to visit blogs both within and across groups.

Pedagogical elements related to the blogging activities

The blogging activity was not a stand-alone activity; it was intertwined with the subject content. In week 2, the course topic was asynchronous and synchronous communication, and the instructor assigned one required reading and two selected readings about blogging. In week 4, the course topic was Web 2.0. The instructor encouraged students to find blogs about Web 2.0 and recommended several relevant student blogs on Blackboard. Similarly, whenever a student’s blog topic was related to the topic of the week, the instructor included a link to the blog in that week’s introduction.

Data collection

The data used in this study consisted of the interactions among 24 students on their blogs and through their comments. In the blogging context, interaction refers to students’ behaviours in responding to their classmates’ blog articles or comments. Interaction therefore included two types of message chains: blog-comment and comment-comment. Student interactions were documented by group and week in Excel. As this study focused on the way students commented, the timestamp of an interaction was determined by when the comment was made. For example, if student A commented on student B’s blog in week 5, this interaction was recorded as occurring in week 5, even if B’s blog article was published in week 3.
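The recording rule can be sketched as a data structure. This is a minimal sketch, assuming each comment is stored as a hypothetical `Interaction` record rather than in the spreadsheet layout actually used; the point it illustrates is that edges are keyed by the week the comment was written, not the week the answered article or comment was published.

```python
from collections import defaultdict
from dataclasses import dataclass

KINDS = {"blog-comment", "comment-comment"}  # the two message chains in the study


@dataclass(frozen=True)
class Interaction:
    """One comment; field names are illustrative, not the study's spreadsheet columns."""
    commenter: str
    receiver: str       # author of the blog article or comment being answered
    kind: str           # "blog-comment" or "comment-comment"
    comment_week: int   # week in which the comment was written
    target_week: int    # week in which the answered article/comment appeared

    def __post_init__(self):
        if self.kind not in KINDS:
            raise ValueError(f"unknown interaction kind: {self.kind}")


def edges_by_week(interactions):
    """Group directed (commenter, receiver) edges by the week of the comment."""
    weekly = defaultdict(list)
    for item in interactions:
        weekly[item.comment_week].append((item.commenter, item.receiver))
    return dict(weekly)


# A comments in week 5 on B's article from week 3: recorded under week 5.
records = [Interaction("A", "B", "blog-comment", comment_week=5, target_week=3)]
print(edges_by_week(records))  # {5: [('A', 'B')]}
```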

Data analysis

In this study, we used several SNA measures to provide a comprehensive understanding of student interaction in the blogging activity. These measures captured the features of the interaction at both individual and group levels. Visual analysis was used to compare the weekly SNA measures in the first and second blogging cycles. Gephi 0.9.2 was used to calculate SNA measures, and SPSS 21 was used for the statistical analysis in the study.

Out-degree

Out-degree, a local centrality measure (Prell, 2012), refers to the number of classmates to whom a student sent comments. Interaction in this study included comments on blog articles and replies to comments. Rather than how many comments a student made, out-degree captured how many different classmates a student wrote comments to during a given period (a week, a blogging cycle, or the semester). Since each student wrote about a unique educational technology, the more blogs a student visited, the more types of educational technology the student had read about. Therefore, out-degree reflects interaction patterns in the blogging activity more accurately than quantity measures such as comment counts. In this study, out-degree was calculated for each week, each blogging cycle, and the semester.
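The difference between counting comments and counting distinct receivers can be sketched as follows. This is a minimal sketch, assuming interactions are available as hypothetical `(commenter, receiver, week)` tuples; skipping comments on one's own blog is an assumption made here because such comments do not link two classmates.

```python
def out_degree(edges, students=(), weeks=None):
    """Number of distinct classmates each student sent comments to.

    edges: iterable of (commenter, receiver, week) tuples.
    students: optional roster so that students with no comments get 0.
    weeks: optional set of weeks to include (a week, a cycle, or None for all).
    """
    receivers = {s: set() for s in students}
    for commenter, receiver, week in edges:
        if commenter == receiver:
            continue  # assumption: replies on one's own blog are not a tie
        if weeks is not None and week not in weeks:
            continue
        receivers.setdefault(commenter, set()).add(receiver)
    return {s: len(targets) for s, targets in receivers.items()}


CYCLE_1 = set(range(2, 9))    # weeks 2-8
CYCLE_2 = set(range(9, 15))   # weeks 9-14
edges = [("A", "B", 2), ("A", "B", 2), ("A", "C", 3), ("A", "B", 9), ("A", "D", 10)]
print(out_degree(edges, weeks=CYCLE_1))  # {'A': 2}: two comments to B count once
print(out_degree(edges, weeks=CYCLE_2))  # {'A': 2}
print(out_degree(edges))                 # {'A': 3}: B appears in both cycles
```

The toy example also shows why a semester out-degree can be smaller than the sum of the two cycle out-degrees: a classmate commented on in both cycles is counted once for the semester.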

Density and centralization scores

The density of a network was calculated as the ratio of actual links to the total potential links in the network (Prell, 2012). Density represents the connectedness of the network and is a widely used measure of student interaction in online courses. Density was calculated for each blogging cycle and for the semester. Caution is needed when using density to represent how well a group of students is connected (Fahy et al., 2001): a high density value might result from a small number of students reaching out to all classmates rather than from an evenly distributed network. Therefore, Fahy et al. (2001) suggested using density together with degree centrality to gauge connectedness.
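The density ratio can be sketched in a few lines. The text does not state whether ties were treated as directed, so this minimal sketch, with a hypothetical `density` helper, supports both: directed networks have n(n − 1) possible ties, undirected ones n(n − 1)/2. Repeated comments between the same pair count as one tie.

```python
def density(edges, n, directed=True):
    """Share of possible ties that are present (Prell, 2012).

    edges: iterable of (a, b) pairs; repeated comments between a pair count once.
    n: number of students in the network.
    """
    if directed:
        ties = {(a, b) for a, b in edges if a != b}
        possible = n * (n - 1)
    else:
        ties = {frozenset((a, b)) for a, b in edges if a != b}
        possible = n * (n - 1) // 2
    return len(ties) / possible if possible else 0.0


# One student comments on everyone: density looks reasonable, yet the
# network is concentrated on a single student (the caution from Fahy et al.).
edges = [("A", "B"), ("A", "C"), ("A", "D"), ("B", "A")]
print(round(density(edges, 4), 3))        # 0.333
print(density(edges, 4, directed=False))  # 0.5
```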

The centralization score is based on degree centrality. Unlike out-degree centrality, which describes a single member, the centralization score describes the centrality of the whole group. According to Freeman (1978), the centralization score is calculated as “the variation in the degree centrality of the actors is divided by the maximum possible degree centrality variation” (quoted in Prell, 2012, pp. 169–170). In this study, centralization scores were calculated for each blogging cycle and for the semester.
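Freeman's ratio can be illustrated for out-degree in a directed network. Which degree variant the study used is not stated, so this minimal sketch (hypothetical helper `out_degree_centralization`) assumes raw out-degree, where the largest possible variation, (n − 1)², occurs when one student comments on every classmate and no one else comments.

```python
def out_degree_centralization(out_degrees):
    """Freeman-style centralization of out-degree in a directed network.

    out_degrees: dict student -> out-degree, including students with 0.
    Returns the summed gap to the highest out-degree divided by the largest
    possible gap, (n - 1) ** 2, reached when one student comments on every
    classmate and no one else comments.
    """
    n = len(out_degrees)
    if n < 2:
        return 0.0
    highest = max(out_degrees.values())
    variation = sum(highest - d for d in out_degrees.values())
    return variation / (n - 1) ** 2


print(out_degree_centralization({"A": 3, "B": 0, "C": 0, "D": 0}))  # 1.0 (star)
print(out_degree_centralization({"A": 1, "B": 1, "C": 1, "D": 1}))  # 0.0 (even)
```

For undirected degree the corresponding maximum is (n − 1)(n − 2); either way, lower scores mean activity is spread more evenly across students.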

Modularity analysis

Modularity analysis is an SNA approach to detecting subgroups by comparing the density of links inside subgroups with the density of links between subgroups (Blondel et al., 2008). In this study, the instructor assigned students to subgroups in the blogging activity; however, students were not instructed to interact only with peers in the same subgroup. By comparing the detected, tightly connected subgroups with the assigned subgroups, we could examine whether subgroup assignments limited student interaction. The detected subgroups and the assigned subgroups were drawn in class-level social network diagrams for both blogging cycles.
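The Louvain method of Blondel et al. (2008) searches for the partition with the highest modularity Q. Implementing that search is beyond a short example, so this minimal sketch instead scores a given partition, which is enough to compare assigned groups with detected classes on the same scale. It assumes ties are undirected and unweighted; the helper name `modularity` and the toy graph are illustrative.

```python
from collections import defaultdict


def modularity(edges, groups):
    """Modularity Q of a given partition, treating ties as undirected and unweighted.

    edges: iterable of (a, b) pairs; direction and repeated comments are ignored.
    groups: dict mapping every student to a group label.
    Q = sum over groups of (internal ties / m) - (group degree total / 2m) ** 2.
    """
    ties = {frozenset(pair) for pair in edges if pair[0] != pair[1]}
    m = len(ties)
    if m == 0:
        return 0.0
    internal = defaultdict(int)    # ties with both ends in the group
    degree_sum = defaultdict(int)  # summed degree of the group's members
    for tie in ties:
        a, b = tuple(tie)
        degree_sum[groups[a]] += 1
        degree_sum[groups[b]] += 1
        if groups[a] == groups[b]:
            internal[groups[a]] += 1
    return sum(internal[g] / m - (degree_sum[g] / (2 * m)) ** 2 for g in degree_sum)


# Two triangles joined by one tie (illustrative data, not from the study).
edges = [(1, 2), (2, 3), (1, 3), (4, 5), (5, 6), (4, 6), (3, 4)]
assigned = {1: "X", 2: "X", 3: "X", 4: "Y", 5: "Y", 6: "Y"}
print(round(modularity(edges, assigned), 3))  # 0.357
```

Putting everyone in one group gives Q = 0, so a partition that matches the real clustering scores clearly above a partition that cuts across it.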

Visual analysis

Visual analysis (Kratochwill et al., 2010) was used to compare the patterns of weekly out-degree change in the two blogging cycles. At the start of the second cycle in week 9, many students changed their blog topics, the instructor rearranged the subgroup assignments, and a network diagram of student interaction was distributed as formative feedback. In response to this feedback, students might have changed their commenting behaviours. However, there were only two blogging cycles in the study, whereas demonstrating a causal relationship through visual analysis requires more than two phases (Kratochwill et al., 2010). Therefore, visual analysis was used here to describe the patterns of weekly out-degree over the semester rather than to examine the causal effects of the network diagram on weekly out-degree.

Visual analysis results, including level, trend, variability within each phase, overlap between phases, and immediacy of effect (Kratochwill et al., 2010), are reported in the Findings section. Following Kratochwill et al. (2010), level refers to the mean out-degree in each blogging cycle. Trend is the straight line that best fits the weekly out-degrees in each cycle. Variability describes the fluctuation of the weekly out-degree around the mean in each cycle. Overlap is the proportion of weekly out-degree values in the second blogging cycle that fall within the range of weekly out-degree values in the first blogging cycle. Finally, immediacy of effect is the change in level between the last three weekly out-degree values in the first blogging cycle and the first three weekly out-degree values in the second blogging cycle.
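These five quantities reduce to simple arithmetic on two lists of weekly means. This is a minimal sketch under stated assumptions: the trend is an ordinary least-squares line against week index, variability is the population standard deviation around the phase mean, and overlap counts second-phase points inside the first phase's range. Helper names and the toy values are hypothetical.

```python
from statistics import mean, pstdev


def trend_line(values):
    """Least-squares slope and intercept of weekly values against week index."""
    if len(values) < 2:
        raise ValueError("need at least two weekly values")
    xs = list(range(len(values)))
    x_bar, y_bar = mean(xs), mean(values)
    sxx = sum((x - x_bar) ** 2 for x in xs)
    sxy = sum((x - x_bar) * (y - y_bar) for x, y in zip(xs, values))
    slope = sxy / sxx
    return slope, y_bar - slope * x_bar


def phase_summary(values):
    """Level (mean), trend slope, and variability (SD around the mean) of one phase."""
    slope, _ = trend_line(values)
    return {"level": mean(values), "trend_slope": slope, "variability": pstdev(values)}


def overlap(phase_a, phase_b):
    """Share of phase-B values inside the range of phase-A values."""
    low, high = min(phase_a), max(phase_a)
    return sum(low <= v <= high for v in phase_b) / len(phase_b)


def immediacy(phase_a, phase_b, k=3):
    """Change in level from the last k points of A to the first k points of B."""
    return mean(phase_b[:k]) - mean(phase_a[-k:])


phase_a = [3, 1, 2, 2, 1]   # toy weekly means, not the study's data
phase_b = [2, 2, 2]
print(overlap(phase_a, phase_b))              # 1.0
print(round(immediacy(phase_a, phase_b), 3))  # 0.333
```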

Findings

In this section, we describe students’ blog commenting behaviours over the 13 weeks and discuss the possible influences of the pedagogical elements in the course.

A description of students’ commenting behaviours in the blogging activity

The 24 students posted 235 blog articles and 706 comments during the 13 weeks. The average blog article was 245.47 words long (SD = 167.07), and the average comment contained 53.12 words (SD = 36.80). Of all the comments, 512 were replies to blog articles and the remaining 194 were replies to other comments. In total, 352 comments were created from week 2 to week 8 (the first blogging cycle) and 354 from week 9 to week 14 (the second blogging cycle). On average, each student posted 2 comments per week in the first cycle and 2.4 comments per week in the second cycle. In both cycles, this average was below the minimum requirement of three comments per week. Additionally, there was no significant difference between the average numbers of comments that students made in the two cycles.

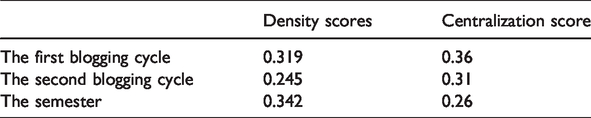

Table 1 presents the density and centralization scores for each blogging cycle and the semester. Density is the ratio of existing links to all possible links (Prell, 2012). In this study, the density of the whole class network for the semester was 0.342, indicating that 34.2% of all pairs of students commented on and/or received comments on each other’s blog articles or comments. This value seemed modest compared with density scores reported in other studies (e.g. Lipponen et al., 2003; Sharma and Tietjen, 2016; Sing and Khine, 2006). Density declined from 0.319 in the first cycle to 0.254 in the second cycle, meaning that a smaller proportion of student pairs exchanged comments in the second cycle than in the first. The centralization score also declined, from 0.36 in the first cycle to 0.31 in the second cycle. Although students were less connected at the class level in the second cycle, the lower centralization score indicates that, compared with the first cycle, comment exchanges in the second cycle were spread more evenly among students rather than concentrated around a few of them.

Density and centralization scores of the two blogging cycles and the semester.

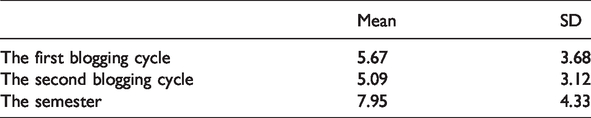

Out-degree of the two blogging cycles and the semester.

Density and centralization scores describe student connectivity at the class level, whereas out-degree shows centrality at the student level. One student might write multiple comments in one week, but out-degree in this study captured how many classmates a student sent comments to. If a student sent comments to only one classmate in a week, that student’s out-degree for the week equaled one, regardless of how many comments were sent. In the blogging activity, each student researched one unique educational technology. Therefore, a higher out-degree indicated that a student had access to more varied resources.

Overall, as shown in Table 2, students sent comments to an average of 7.95 classmates (SD = 4.33) over the semester. The average out-degree was 5.67 (SD = 3.68) in the first cycle and 5.1 (SD = 3.12) in the second cycle. A repeated-measures t-test showed no significant difference between the out-degrees in the two cycles, indicating that on average students sent comments to a similar number of classmates in both blogging cycles. It is also notable that the semester out-degree was smaller than the sum of the two cycle out-degrees (7.95 versus 5.67 + 5.1 = 10.77). This means that, on average, students sent comments to some of the same classmates in both cycles, even though the group assignment was updated in the second cycle.
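The repeated-measures comparison is a paired t-test on per-student differences. This is a minimal sketch of the t statistic only (the study used SPSS; the helper `paired_t` and the toy values are hypothetical). It takes differences as first minus second, so a drop gives a positive t.

```python
from math import sqrt
from statistics import mean, stdev


def paired_t(first, second):
    """Paired (repeated-measures) t statistic and degrees of freedom.

    Differences are first - second, so a drop from the first to the second
    measurement gives a positive t.
    """
    if len(first) != len(second) or len(first) < 2:
        raise ValueError("need two equal-length samples with at least two pairs")
    diffs = [a - b for a, b in zip(first, second)]
    sd = stdev(diffs)
    if sd == 0:
        raise ValueError("differences have zero variance; t is undefined")
    t = mean(diffs) / (sd / sqrt(len(diffs)))
    return t, len(diffs) - 1


t, df = paired_t([3, 4, 5, 6], [1, 3, 3, 5])  # toy out-degrees, not the study's data
print(round(t, 3), df)  # 5.196 3
```

The p-value comes from the t distribution with `df` degrees of freedom; `scipy.stats.ttest_rel(first, second)` returns the same statistic together with a p-value.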

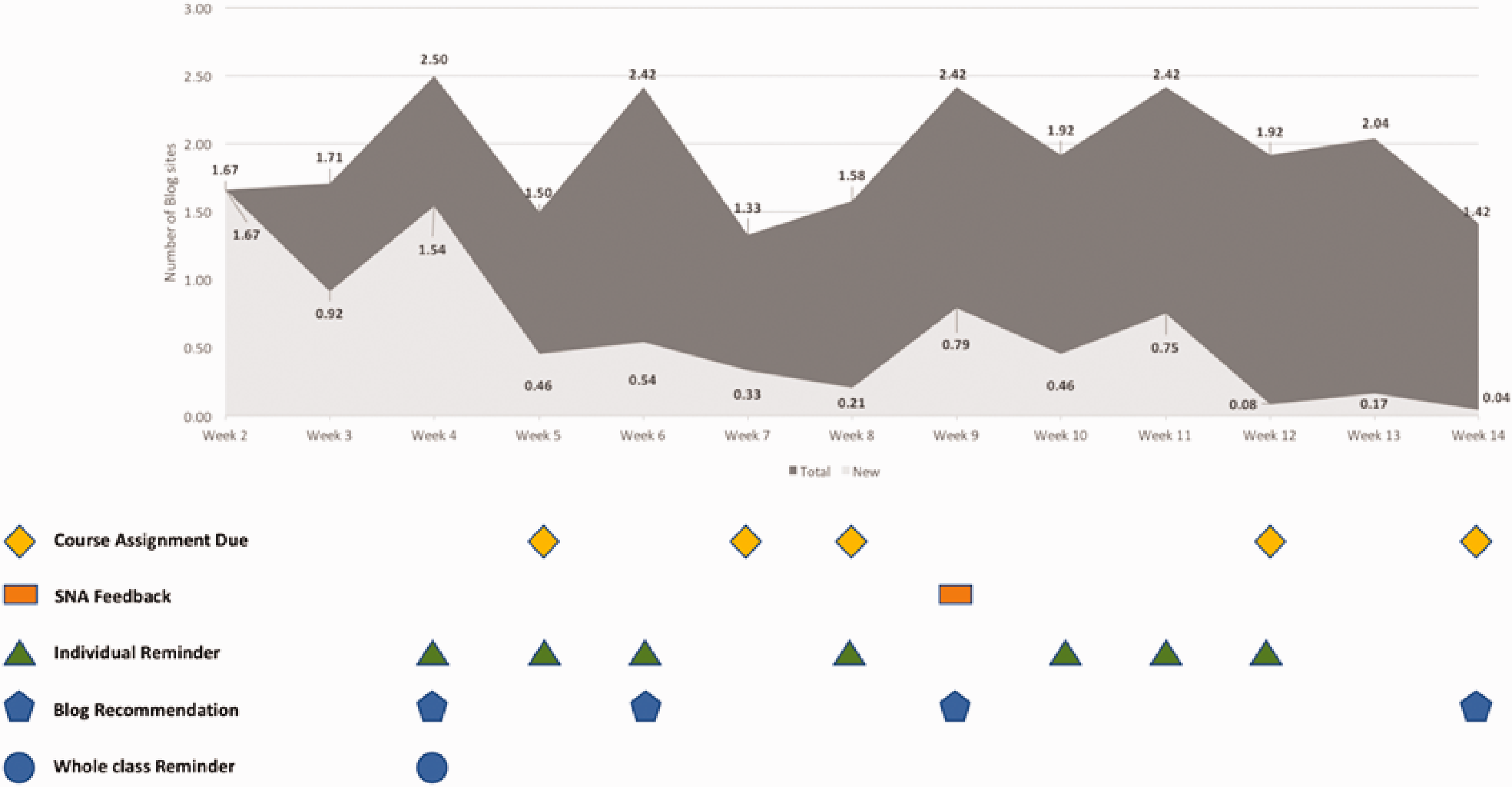

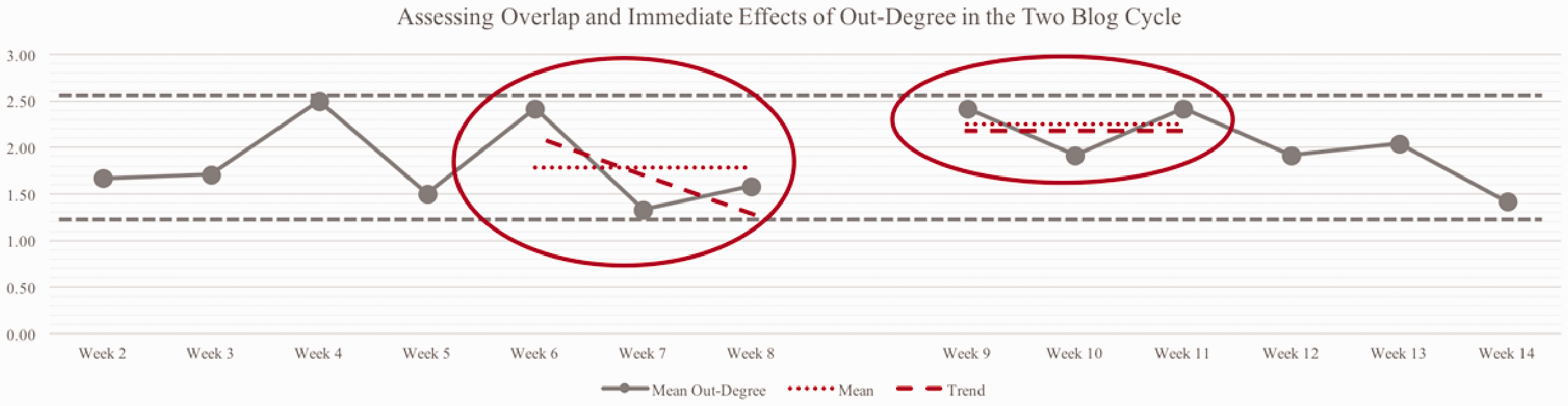

Figure 1 displays the average out-degree value in each week in the darker shade. The out-degree fluctuated from week to week. On average, each student sent comments to 1.82 classmates in each week in the first cycle and sent comments to 2.02 classmates in each week in the second cycle. The number of classmates that students sent comments to was almost the same in the two cycles.

Mean out-degree in each week and the related pedagogical conditions.

Next, we examined whom students sent comments to in each week. Specifically, we compared the receivers of a student’s comments in one week with those in the following week and labeled any receiver who differed as a new interaction. These data showed whether students sent comments to different students from week to week or kept interacting with the classmates they had commented on in the first several weeks. New interactions are presented in the lighter shade in Figure 1. In both cycles, most students explored their classmates’ blogs during the first several weeks. From the fourth week of each cycle onward, few students kept exploring; many were comfortable interacting with the peers they had commented on previously. Comparing the two cycles, students had fewer new interactions in the second blogging cycle.
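The new-interaction count can be sketched per student. This is a minimal sketch, assuming a student's weekly receivers are available as a list of sets for consecutive weeks; the helper `new_interactions` is hypothetical. By default it compares each week with the preceding week, as described above. The `cumulative=True` option, which compares against all earlier weeks, is an alternative reading added for illustration, not the study's stated procedure. In the first week every receiver counts as new.

```python
def new_interactions(weekly_receivers, cumulative=False):
    """Count receivers in each week who were not receivers in the reference set.

    weekly_receivers: list of sets, one per consecutive week, for one student.
    cumulative: False compares with the previous week only; True compares
    with every earlier week (an alternative, illustrative variant).
    """
    counts = []
    seen = set()       # every receiver so far
    previous = set()   # receivers in the previous week
    for receivers in weekly_receivers:
        reference = seen if cumulative else previous
        counts.append(len(receivers - reference))
        seen |= receivers
        previous = receivers
    return counts


weeks = [{"B", "C"}, {"B", "D"}, {"B"}, {"C"}]
print(new_interactions(weeks))                   # [2, 1, 0, 1]
print(new_interactions(weeks, cumulative=True))  # [2, 1, 0, 0]
```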

Pre-course relationships among students may affect whom students interact with in blogging activities (Deng and Yuen, 2013). In this study, the course was the first one in the program, so students did not necessarily know each other at the beginning of the course. However, because of the class size and the number of groups, group assignments overlapped across the two cycles. As a result, students had visited almost all of their group members’ blogs within the first few weeks of the second cycle, leaving fewer “new blogs” in the group. This could explain why students in the second cycle visited fewer and fewer new blogs in the last few weeks. Another reason could be the irregular updating pattern of some students. From the beginning of the semester, some students did not update their blogs on time, so towards the end of the semester students frequently visited the blogs that were updated regularly.

Impact of other assignments on weekly changes in out-degree

Figure 1 suggests that various pedagogical elements could be related to out-degree in the study. In particular, students sent comments to fewer peers in weeks when other assignments were due. However, the influence of assignment due dates on interaction was smaller in the second cycle than in the first. The number of classmates that students sent comments to decreased significantly from week 4 to week 5 (t = 3.811, p = 0.01) and from week 6 to week 7 (t = 3.347, p = 0.03), whereas no significant difference was found between week 7 and week 8, week 11 and week 12, or week 13 and week 14.

Impact of the network diagram formative feedback

A network diagram formative feedback was sent to students at the end of the first cycle. The formative feedback informed students of their interaction patterns with classmates in the first cycle.

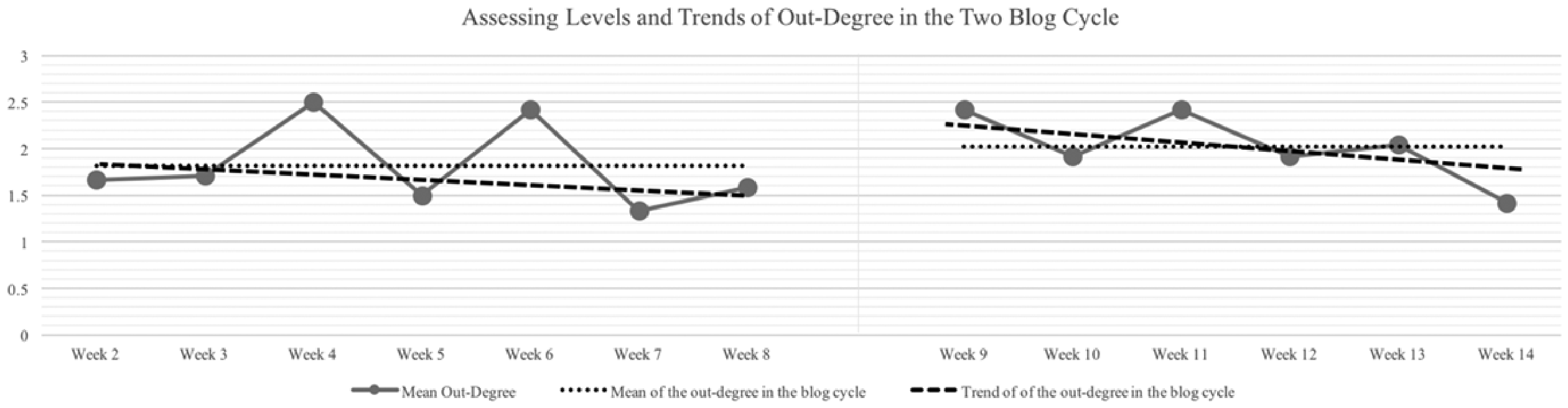

Out-degree in the two blogging cycles

The two blogging cycles lasted seven weeks and six weeks, respectively. In both cycles, out-degree followed a wave-like pattern. A visual analysis (Kratochwill et al., 2010) was performed to compare the patterns of out-degree change in the two cycles, using the weekly out-degree as the repeated measure across the 13 weeks.

Student interaction was more stable in the second blogging cycle

In Figure 2, the level analysis compares the means of the two cycles and shows a slight increase in the second cycle, after the network diagram formative feedback was administered. This suggests a minor improvement in the variety of student interactions in the blogging activity. The trend analysis depicts the direction of change in each cycle and shows a slight decreasing trend in both. The weekly mean out-degrees in the second cycle were closer to the trend line than those in the first cycle, indicating a more stable pattern in the second cycle. Figure 3 shows complete overlap of the weekly mean out-degrees in the two cycles, meaning the highest and lowest weekly means in the second cycle did not go beyond the range of weekly means in the first cycle.

Assessing levels and trends of out-degree in the two blogging cycles.

Assessing overlap and immediate effects of out-degree in the two blogging cycles.

The formative feedback exerted an immediate effect on student interaction

Comparing the last three data points in the first cycle with the first three data points in the second cycle helped us determine whether the group reassignment, together with the network diagram formative feedback, had an immediate effect. These six data points are circled separately in Figure 3. The level of the first three data points in the second cycle was higher. Moreover, unlike the decreasing trend in the last three data points of the first cycle, the first three data points of the second cycle showed a stable trend and smaller variability. Therefore, the new group assignment combined with the network diagram formative feedback appeared to have an immediate effect on students.

In short, the visual analysis showed limited differences in out-degree patterns between the two cycles. In both cycles, student interaction varied from week to week and tended to decrease, although the variation was slightly smaller in the second cycle. Additionally, an immediate effect occurred when the second cycle started: the average out-degree increased and did not show the declining pattern seen in the first cycle. It is possible that the network diagram formative feedback reminded students to maintain interaction, so that the interaction pattern was more stable in the second cycle and less influenced by other assignments’ due dates.

Group cohesion in the two blogging cycles

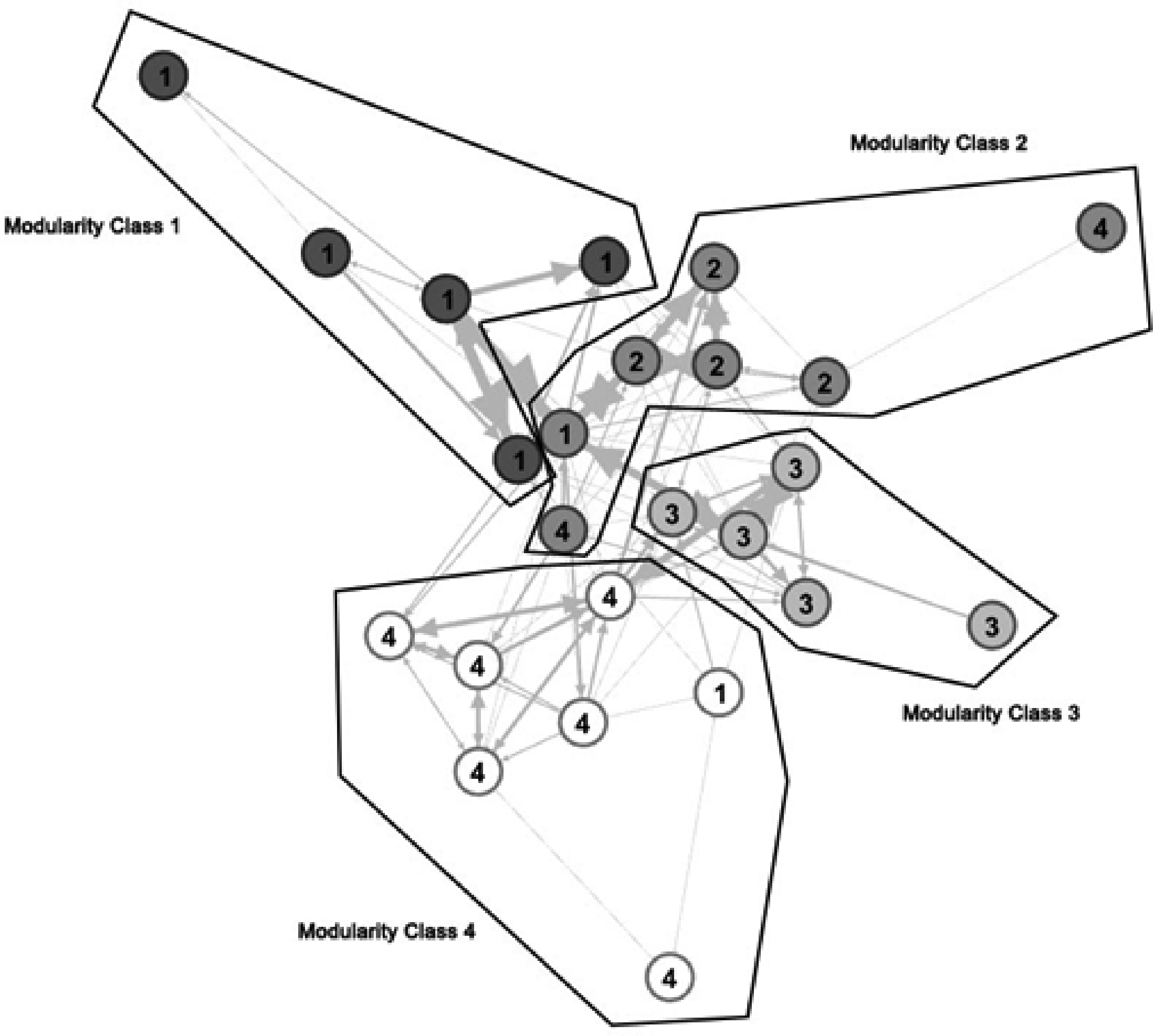

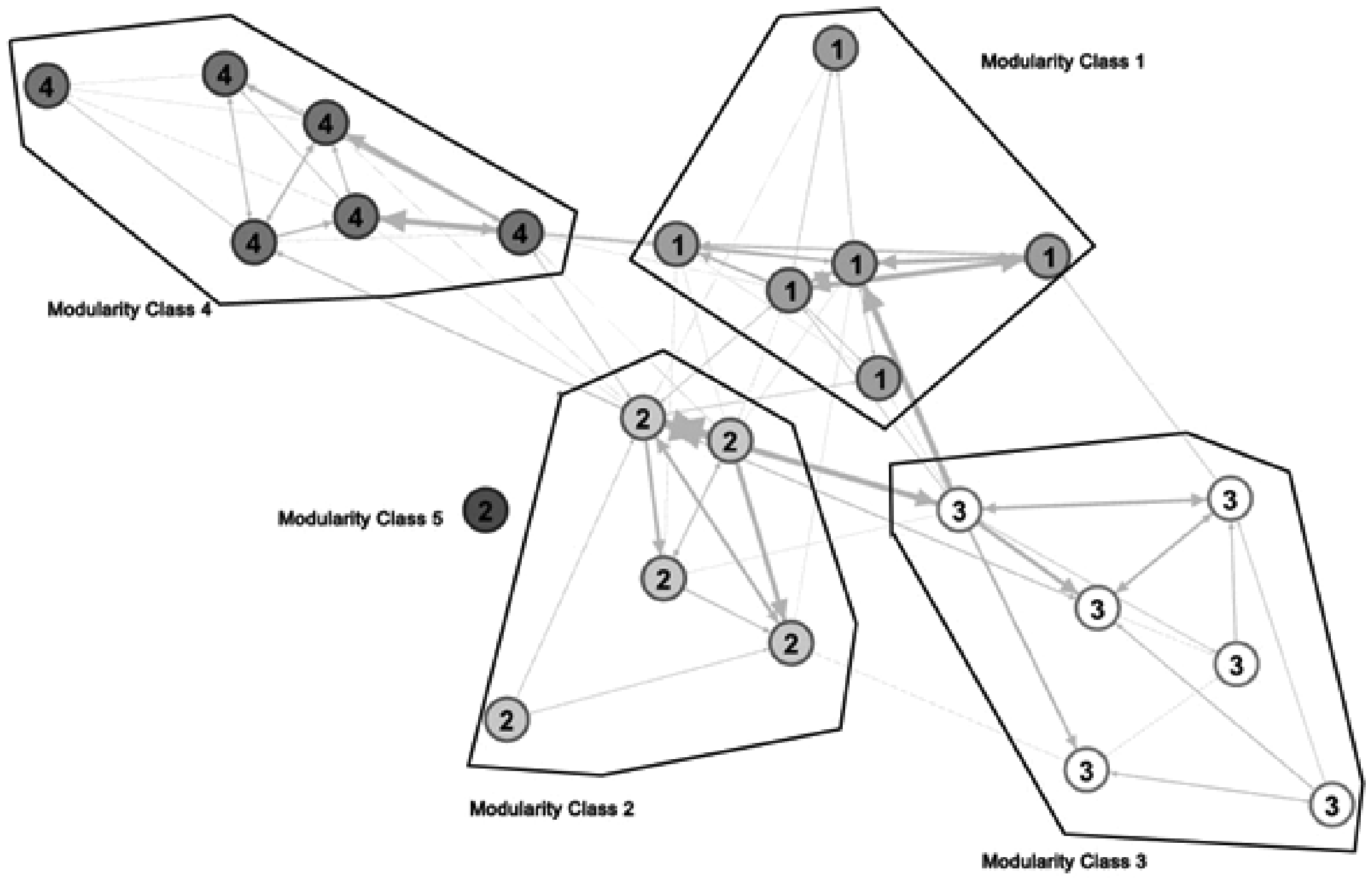

The out-degree comparison showed an immediate effect of the network diagram formative feedback on students’ commenting behaviours. We then used modularity analysis to examine, in each cycle, whose blogs students interacted with more often: those of group members or those of non-group members. Modularity analysis detects subgroups of students who were more connected to each other in the blogging activity. The analysis revealed a four-subgroup structure in the first cycle and a five-subgroup structure in the second cycle.

Next, we compared the subgroups that emerged from the modularity analysis with the groups assigned by the instructor. Figure 4 presents the modularity classes and the assigned groups in the first cycle. Each circle represents one student, and the number is the group assigned by the instructor. Circles of the same colour belong to the same modularity class, meaning that those students interacted more with each other than with other students. Modularity class 2 was composed of members from assigned groups 1, 2 and 4. Modularity class 4 included students from assigned groups 1 and 4. The diagram shows that some students interacted with non-group members more frequently than with group members. However, this did not occur in the second cycle. As Figure 5 shows, almost all the modularity classes matched the assigned groups; the only exception was one student who did not interact with either group members or non-group members. In short, in the first cycle the detected subgroups differed from the assigned groups, whereas in the second cycle they matched the assigned groups except for the one student who did not interact with any classmate.

Modularity classes and group assignment in the first blogging cycle.

Modularity classes and group assignment in the second blogging cycle.
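Comparing detected subgroups with instructor-assigned groups amounts to cross-tabulating two labels per student. The minimal sketch below lists the assigned-group composition of each modularity class and flags classes that mix groups. The `compare_with_assigned` helper, student IDs and labels are hypothetical; the example class 2 is loosely shaped like modularity class 2 in the first cycle. An agreement index such as the adjusted Rand index could summarize the same comparison in a single number.

```python
from collections import Counter


def compare_with_assigned(classes, assigned):
    """Hypothetical helper. classes: {student: modularity class};
    assigned: {student: instructor-assigned group}.
    Returns {class: Counter of assigned groups} and the classes that mix groups."""
    composition = {}
    for student, cls in classes.items():
        composition.setdefault(cls, Counter())[assigned[student]] += 1
    mixed = sorted(c for c, groups in composition.items() if len(groups) > 1)
    return composition, mixed


# Illustrative labels, NOT the study's data.
classes = {"s1": 1, "s2": 1, "s3": 2, "s4": 2, "s5": 2}
assigned = {"s1": 1, "s2": 1, "s3": 1, "s4": 2, "s5": 4}

composition, mixed = compare_with_assigned(classes, assigned)
print(composition)
print(mixed)
```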

The modularity analysis found a clearer subgroup structure in the second cycle. This resonates with the finding from the out-degree comparison that students in the second cycle explored fewer new blogs. The decrease in density scores from the first cycle to the second cycle was consistent with the findings from the out-degree comparison and the modularity analysis: students were more likely to keep their interactions within groups and explored fewer new blogs. Taken together, these findings led to the postulation that the network diagram formative feedback prompted students to focus more directly on interaction with classmates from the same group, which led to less exploration of new blogs and a clearer subgroup structure. The network diagram formative feedback presented students with only the within-group interaction pattern, although the feedback included a paragraph encouraging students to interact with both group members and non-group members. The message students perceived might therefore have been that they should focus more on within-group interaction.
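The postulation above combines two quantities: the share of commenting ties that stay within an assigned group, and network density. A minimal sketch follows, assuming binary directed ties (a tie exists if a student commented at least once on a classmate's blog) and density defined as observed ties divided by the n(n - 1) possible directed ties. The study does not specify whether its density was weighted or directed, and the `tie_profile` helper and data are hypothetical.

```python
def tie_profile(edges, assigned, n_students):
    """Hypothetical helper. edges: directed (commenter, blog_owner) pairs.
    Returns (share of ties within an assigned group, directed density)."""
    ties = {(u, v) for u, v in edges if u != v}  # binary ties, no self-loops
    within = sum(1 for u, v in ties if assigned[u] == assigned[v])
    share_within = within / len(ties) if ties else 0.0
    density = len(ties) / (n_students * (n_students - 1))
    return share_within, density


# Illustrative four-student class in two groups, NOT the study's data.
assigned = {"A": 1, "B": 1, "C": 2, "D": 2}
cycle_1 = [("A", "B"), ("A", "C"), ("B", "D"), ("C", "D"), ("D", "A")]
cycle_2 = [("A", "B"), ("B", "A"), ("C", "D"), ("D", "C")]

share1, dens1 = tie_profile(cycle_1, assigned, 4)
share2, dens2 = tie_profile(cycle_2, assigned, 4)
print(share1, dens1)
print(share2, dens2)
```

In this toy case the second cycle keeps every tie inside a group while density drops, the combination the paragraph above associates with a clearer subgroup structure and less exploration of new blogs.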

Discussion

The description of students’ commenting behaviours in the blogging activity revealed different interaction patterns in the two blogging cycles and week-to-week fluctuation in out-degree. In particular, students sent comments to fewer peers in weeks when another assignment was due. This section discusses these findings and compares the results with those of similar studies.

In this study, students were required to post at least three comments per week. When other assignments took up their time, some students chose not to meet even this minimum requirement. The blogging activity was designed to have students research and write about educational technologies. To expose students to, and have them reflect on, a wider range of educational technologies, students were given access to their classmates’ blogs. Although the blogging activity gave students channels to express themselves and communicate with each other, students’ commenting behaviours were related to both the blogging activity design and other pedagogical elements (Hall, 2018). In this case, the quantity requirement was not met because of pressure from other course assignments. This finding resonates with previous research. Peters and Hewitt (2010) found that students tended to use coping strategies that demanded less effort to meet the participation requirement, and that these strategies might undermine learning. One reason for such coping strategies was the pressure of the weekly “quota” in the discussion forums of the course they examined. Similarly, in this study, some students chose to compromise one requirement in order to submit another assignment on time. Although this study only observed compromises in quantity, compulsory participation could also result in many complimentary comments with limited substance (Peters and Hewitt, 2010). In the future, a flexible quantity requirement might ease students’ workload and reduce the competition between course activities for students’ attention and effort. Moreover, the activity design should focus on encouraging meaningful and authentic discussion; for example, role-assignment strategies are often used in online discussion activities to improve discussion quality (e.g. Chen et al., 2019; Xie et al., 2014).

In this study, a network diagram was given to students as formative feedback at the end of the first blogging cycle. Formative feedback is usually designed to communicate information about students’ previous performance and to guide students toward expected performance (Shute, 2008). Here, the feedback had an immediate effect on students’ commenting behaviour: the activity level of student interaction increased. Nonetheless, it also led to an unforeseen result, as fewer across-group comments were made in the second cycle.

Some researchers have described learning analytics visualization feedback as a double-edged sword (Tan et al., 2017): it allows students to become more aware of their performance and to adjust their subsequent learning behaviours, but at the same time it introduces a new pedagogical complexity. This complexity has also been discussed in other studies that explored the use of SNA visualizations as formative feedback on student interaction (Chen et al., 2018; Yoon, 2011). Yoon (2011) found that after viewing the social network graph, students developed rules of communication. Some of them reflected on their own positions on the discussion topic and thus chose their next discussion partners based on those partners’ points of view, while other students did not engage in such reflection and chose partners randomly. These different communication approaches eventually affected students’ levels of understanding of the content knowledge. Similarly, Chen et al. (2018) found that some students saw the visualization feedback as “an inconvenient ‘add-on’” rather than a chance to reflect on their previous course engagement, and therefore did not adjust their subsequent behaviours in the online course. These studies show the importance of guiding students to make effective use of formative feedback. In this study, there was less across-group interaction after students received the network diagram. Students might have interpreted the feedback as a directive to interact within their group, since the feedback labeled only the names of students in their own group. This finding shows that simply presenting SNA results to students cannot guarantee that their interpretation of the formative feedback is aligned with its intended purpose. The network diagram may have been such a novel element to most students that its layout influenced their understanding. As in previous studies, student agency plays a key role when these analytic results are used as feedback. Therefore, a meaningful and explicit interpretation of the network diagram may better help students decide how to adjust their subsequent behaviours. More empirical studies are needed to examine how SNA results can be used as formative feedback to improve students’ performance (Wise, 2014).

Finally, the study demonstrated the necessity of documenting and reporting pedagogical elements. Especially in intervention studies conducted in real educational settings, these pedagogical elements, which constitute the context of the intervention, can either facilitate or obstruct students’ participation. Such detailed documentation can help clarify how and why a blogging activity design succeeded or failed, providing in-depth design implications for other researchers (Kelly et al., 2008).

Conclusions

This study investigated commenting behaviour in a blogging activity in a graduate-level online course and revealed the impact of pedagogical elements on students’ commenting behaviour. The number of classmates to whom each student sent comments was examined, and the patterns of student interaction in two blogging cycles were compared. Cohesion analysis was employed to examine interaction dynamics within and across groups. Density and centralization scores were calculated to describe class-level connectedness.
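Centralization indicates how unevenly commenting activity is concentrated in a few students. The study does not specify which centralization index it used; the sketch below assumes Freeman's degree centralization applied to out-degree in a directed, unweighted network. Under that assumption, the largest possible sum of differences from the maximum out-degree is (n - 1)^2, reached when one student comments on every classmate and nobody else comments. The helper name and the toy networks are hypothetical.

```python
def out_degree_centralization(ties, students):
    """Hypothetical helper: Freeman out-degree centralization of a directed,
    unweighted network. 0 = every student comments on the same number of
    classmates; 1 = one student comments on everyone and no one else comments."""
    n = len(students)
    out_deg = {s: 0 for s in students}
    for u, v in set(ties):  # duplicate ties count once
        if u != v:
            out_deg[u] += 1
    d_max = max(out_deg.values())
    # Normalize by the directed-star maximum, (n - 1) * (n - 1).
    return sum(d_max - d for d in out_deg.values()) / ((n - 1) ** 2)


students = ["A", "B", "C", "D"]
star = [("A", "B"), ("A", "C"), ("A", "D")]               # one very active commenter
ring = [("A", "B"), ("B", "C"), ("C", "D"), ("D", "A")]   # perfectly even activity
print(out_degree_centralization(star, students))
print(out_degree_centralization(ring, students))
```

Read together with density, a low-density, low-centralization network suggests sparse but evenly spread commenting, whereas high centralization points to a few students carrying most of the interaction.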

The study provides an example of using SNA measures to describe students’ week-to-week interactions in a blogging activity. Both the blogging activity design and other pedagogical elements can influence student interaction. A minimum comment requirement might not guarantee students’ activity level in the blogging activity, since other course assignments also demand students’ attention and effort. Network diagram formative feedback can affect whom students choose to interact with. Moreover, an explicit explanation of the diagram should be provided to students, accompanied by a clearly stated performance expectation.

Nevertheless, the small class size makes this study exploratory in nature. The study focused only on students’ commenting behaviours rather than on their motivation or learning outcomes. Further research could add content analysis to investigate the quality of students’ comments, and could go a step further by comparing ego-networks to examine how individual students’ interaction patterns change over time.

Footnotes

Research data

The datasets used and/or analyzed during the current study are available from the corresponding author on reasonable request.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.