Abstract

Clinical guidelines advocate stroke prevention therapy in atrial fibrillation (AF) patients, specifically anticoagulation. However, the decision to initiate treatment is based on the risk (bleeding) versus benefit (prevention of stroke) of therapy, which is often difficult to assess. This review identifies available risk assessment tools to facilitate the safe and optimal use of antithrombotic therapy for stroke prevention in AF. Using key databases and online clinical resources to search the literature (1992–2012), 19 tools have been identified and published to date: 11 addressing stroke risk, 7 addressing bleeding risk and 1 integrating both risk assessments. The stroke risk assessment tools (e.g. CHADS2, CHA2DS2-VASc) share common risk factors: age, hypertension, previous cerebrovascular attack. The bleeding risk assessment tools (e.g. HEMORR2HAGES, HAS-BLED) share common risk factors: age, previous bleeding, renal and liver impairment. In terms of their development, six of the stroke risk assessment tools have been derived from clinical studies, whilst five are based on refinement of existing tools or expert consensus. Many have been evaluated by prospective application to data from real patient cohorts. Bleeding risk assessment tools have been derived from trials, or generated from patient data and then validated via further studies. One identified tool (i.e. Computerised Antithrombotic Risk Assessment Tool [CARAT]) integrates both stroke and bleeding, and specifically considers other key factors in decision-making regarding antithrombotic therapy, particularly those increasing the risk of medication misadventure with treatment (e.g. function, drug interactions, medication adherence). This highlights that whilst separate tools are available to assess stroke and bleeding risk, they do not estimate the relative risk versus benefit of treatment in an individual patient nor consider key medication safety aspects. 
More effort is needed to synthesize these separate risk assessments and integrate key medication safety issues, particularly since the introduction of new anticoagulants into practice.

Introduction

The increasing incidence of stroke is due to an increase in the prevalence of key risk factors such as advancing age and other underlying cardiovascular conditions, particularly atrial fibrillation (AF). In Europe, the prevalence of stroke is about 2% and increasing [Kirchhof et al. 2007]. In the US, the prevalence of stroke is approximately 3% of the adult population (approximately 7 million individuals), and it is estimated that by 2030, the prevalence of stroke will increase by 24.9% to 4.0%, affecting an additional 4 million people [Heidenreich et al. 2011; Roger et al. 2012]. In Australia, recent health reports (2009) have estimated that 375,800 Australians (205,800 men and 170,000 women) have suffered a stroke at some time in their lives, which makes it the third leading cause of death for men and the second leading cause of death for women [Australian Institute of Health and Welfare, 2012].

Among persons with AF (nonvalvular form), the risk of stroke is approximately five times higher than that in persons without AF [Benjamin et al. 1994; Roger et al. 2012; Wolf et al. 1991]. The relationship between advancing age and AF and stroke is also important, as AF is the most common irregular heart rhythm encountered in clinical practice and is most prevalent in the elderly [Benjamin et al. 1994; Wolf et al. 1991]. Aging itself is a strong risk factor for stroke [Benjamin et al. 1994]; around half of all strokes occur in people over the age of 75 years. In the US, the incidence of stroke increases dramatically from around 30–120 per 100,000 persons per year in the age group 35–44 years old, rising to 670–970 per 100,000 persons per year for those aged 65–74 years [Roger et al. 2011]. It is estimated that the risk of hospitalization for stroke in people aged 75–84 years is more than 10 times the risk for those in the 55–64 year age group [Australian Institute of Health and Welfare]. As the population ages, the number of stroke incidents is expected to increase; for example, in Australia, there were approximately 60,000 new or recurrent strokes in the year 2010 [Boddice et al. 2010] compared with 50,000 in 2008 [Australian Institute of Health and Welfare, 2008]. Overall, because the prevalence of AF rises with age, the risk of stroke due to AF is highest in the very elderly, such that the percentage of strokes attributable to AF increases dramatically from 1 in 67 persons in the 50–59 year age group to 1 in 4 for persons in the 80–89 year age group [Roger et al. 2012].

Clinical guidelines [Boddice et al. 2010; Camm et al. 2012; Skanes et al. 2012; Wann et al. 2011; You et al. 2012] advocate stroke prevention therapy in persons with AF, recommending the use of antithrombotic agents (e.g. warfarin, aspirin). Pooled analyses of many clinical trials have provided strong evidence that antithrombotics (anticlotting agents) can prevent stroke in patients with AF; warfarin (anticoagulant) reduces the risk of stroke by approximately 60%, while aspirin (antiplatelet) is less effective, reducing the risk by about one-fifth [Hart et al. 2007; van Walraven et al. 2002]. Prevention of stroke therefore currently relies on the use of antithrombotic therapy (anticoagulants as first line), although these agents inherently carry risks of adverse events (e.g. haemorrhage). For this reason, much attention has been focused on the research and development of alternative drugs (e.g. new antithrombotics such as dabigatran, rivaroxaban, apixaban). Unfortunately, none of these agents are devoid of significant risks to the patient. Therefore, the decision-making process regarding stroke prevention relies on a risk versus benefit assessment for each individual patient (i.e. an assessment of the potential risk of haemorrhage in the patient versus the benefit of the treatment in terms of reduction in the risk of stroke).

To this end, much emphasis has been placed on the development of tools to facilitate these risk assessments and support the decision-making process. In particular, there is a need to address a range of factors that contribute to medication safety in this clinical context, including patients’ age, cognition, function, falls risk, and medication adherence [Bajorek et al. 2007; De Breucker et al. 2010; Tulner et al. 2010]. Therefore, the decision-making process should necessarily consider both the stroke risk and bleeding risk as well as other medication safety issues. This narrative review focuses on the contemporary issues surrounding decision-making for stroke prevention in AF, specifically identifying the available risk assessment tools that help facilitate the safe selection of therapy in at-risk elderly persons. This review describes the features of the various tools developed to date and their relevance and potential application to clinical practice.

Methods

A review of the literature was undertaken via key electronic databases (PUBMED, OVID, EMBASE) and other online resources (e.g. Google, Google Scholar) using the search terms ‘atrial fibrillation’, ‘stroke risk factors’, ‘stroke risk assessment’, ‘stroke risk stratification’, ‘bleeding risk factors’, ‘bleeding risk assessment’, and ‘bleeding risk stratification’. The search was limited to peer-reviewed, English language publications (journal articles, reviews, consensus statements, published guidelines) within the 20-year period 1992 to 2012 (the period immediately following the publication of the pivotal clinical trials of stroke prevention in AF [Connolly et al. 1991; European Atrial Fibrillation Trial Study Group, 1993; Ezekowitz et al. 1992; Petersen et al. 1989; Poller et al. 1991; The Boston Area Anticoagulation Trial for Atrial Fibrillation Investigators, 1990]). With regard to guidelines and consensus statements, only the latest (current) versions were included for review. Each publication was searched to identify risk assessment or risk stratification tools/schemes to support decision-making. Overall, 19 tools were identified: 11 addressing stroke risk, 7 addressing bleeding risk, and 1 tool addressing both stroke and bleeding risk.

Stroke risk assessment tools

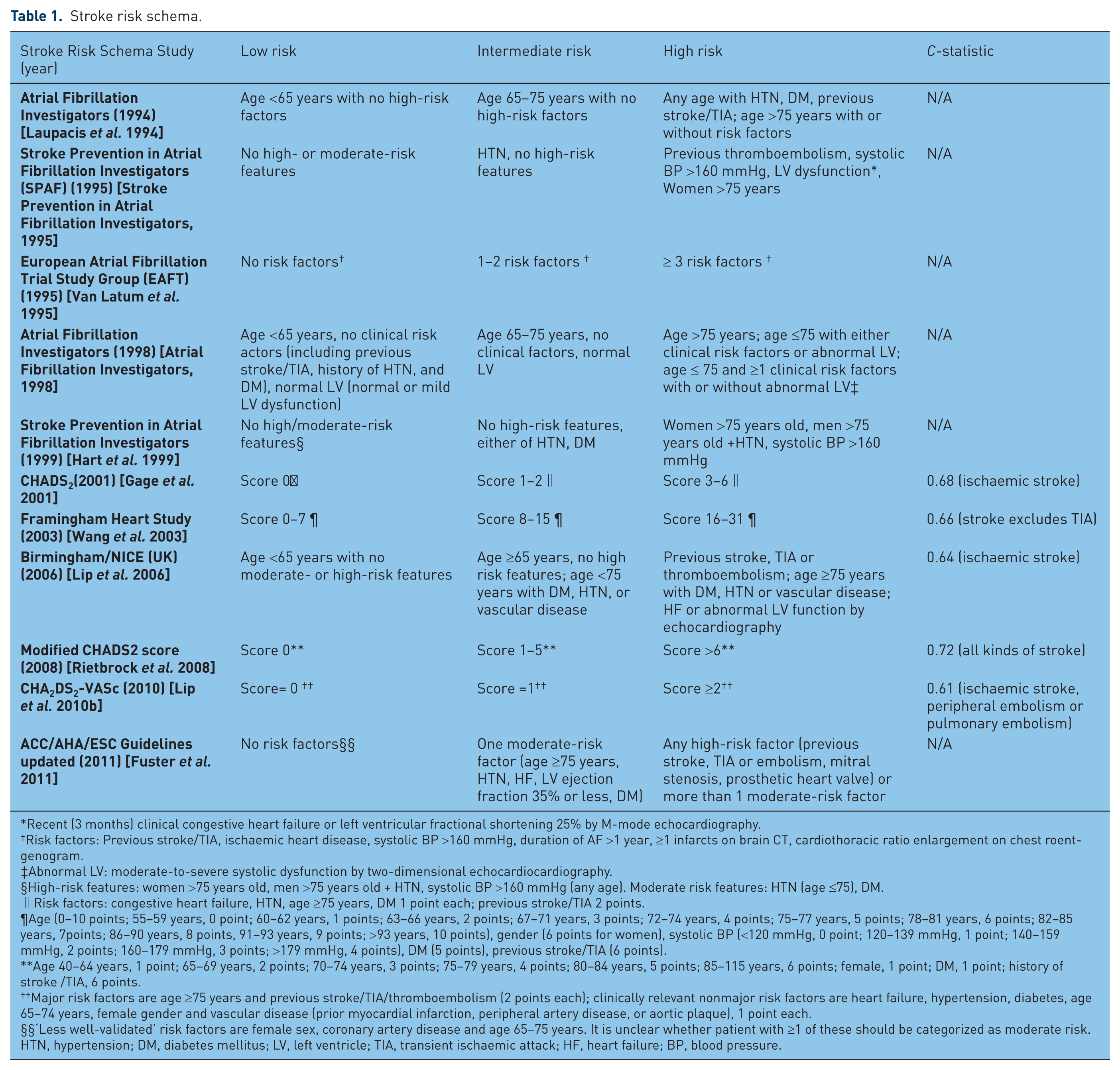

A number of tools have been developed to assess stroke risk (Table 1), although few guidelines to date specifically include a stroke risk stratification scheme alongside recommendations for antithrombotic therapy (e.g. guidelines published by the American College of Cardiology Foundation/American Heart Association/European Society of Cardiology (ACC/AHA/ESC; updated 2011) [Fuster et al. 2011]). Overall, among the available stroke risk assessment tools, the CHADS2 [Gage et al. 2001] and CHA2DS2-VASc [Lip et al. 2010b] have been the most frequently advocated tools, sharing the following common risk factors: age, hypertension, diabetes mellitus (DM), previous stroke/transient ischaemic attack (TIA). The stroke risk schemes vary significantly in complexity, with the number of variables included ranging from 4 to 7, with a median of 5 (Table 1). The most frequently mentioned inputs across all of the stroke risk tools are previous stroke/TIA (11 out of 11 tools), followed by age (10 out of 11), hypertension (HTN; 10 out of 11), and DM (9 out of 11). Heart failure (HF; 5 out of 11), left ventricular (LV) systolic dysfunction (4 out of 11), and female gender (4 out of 11) are also often considered. Other risk factors incorporated into some tools relate to cardiovascular diseases (e.g. coronary heart disease, myocardial infarction [MI], peripheral vascular disease, aortic plaque). Most of these schemes are based on scoring systems (e.g. CHADS2, Framingham Heart Study (2003), Modified CHADS2 score (2008) and CHA2DS2-VASc), where the included risk factors have been weighted (i.e. assigned different numbers of points) according to their relative contribution (i.e. relative risks) in causing stroke; the overall stroke risk is then estimated by summing the scores (Table 1). This means that these schemes are not mere checklists, but rather provide some indication of the level of predicted risk in an individual patient.

Stroke risk schema.

Recent (3 months) clinical congestive heart failure or left ventricular fractional shortening 25% by M-mode echocardiography.

Risk factors: Previous stroke/TIA, ischaemic heart disease, systolic BP >160 mmHg, duration of AF >1 year, ≥1 infarcts on brain CT, cardiothoracic ratio enlargement on chest roentgenogram.

Abnormal LV: moderate-to-severe systolic dysfunction by two-dimensional echocardiography.

High-risk features: women >75 years old, men >75 years old + HTN, systolic BP >160 mmHg (any age). Moderate risk features: HTN (age ≤75), DM.

Risk factors: congestive heart failure, HTN, age ≥75 years, DM 1 point each; previous stroke/TIA 2 points.

Age (0–10 points; 55–59 years, 0 points; 60–62 years, 1 point; 63–66 years, 2 points; 67–71 years, 3 points; 72–74 years, 4 points; 75–77 years, 5 points; 78–81 years, 6 points; 82–85 years, 7 points; 86–90 years, 8 points; 91–93 years, 9 points; >93 years, 10 points), gender (6 points for women), systolic BP (<120 mmHg, 0 points; 120–139 mmHg, 1 point; 140–159 mmHg, 2 points; 160–179 mmHg, 3 points; >179 mmHg, 4 points), DM (5 points), previous stroke/TIA (6 points).

Age 40–64 years, 1 point; 65–69 years, 2 points; 70–74 years, 3 points; 75–79 years, 4 points; 80–84 years, 5 points; 85–115 years, 6 points; female, 1 point; DM, 1 point; history of stroke/TIA, 6 points.

Major risk factors are age ≥75 years and previous stroke/TIA/thromboembolism (2 points each); clinically relevant nonmajor risk factors are heart failure, hypertension, diabetes, age 65–74 years, female gender and vascular disease (prior myocardial infarction, peripheral artery disease, or aortic plaque), 1 point each.

‘Less well-validated’ risk factors are female sex, coronary artery disease and age 65–75 years. It is unclear whether patients with ≥1 of these should be categorized as moderate risk.

HTN, hypertension; DM, diabetes mellitus; LV, left ventricle; TIA, transient ischaemic attack; HF, heart failure; BP, blood pressure.

Age is an important risk factor for stroke, particularly in the context of AF management. These stroke risk schemes vary in how age is considered within the risk assessment, with different age categories used in various schemes. For example, the CHADS2 uses age 75 years as a cut-off to denote risk associated with advancing age, while the Modified CHADS2 score (2008) employs a range of age categories to better reflect increasing stroke risk over time, such that a score of 1 is assigned to persons aged 40–64 years and a score of 6 is assigned to those persons aged 85 years and older.

Tools from the ‘Atrial fibrillation investigators’

Atrial Fibrillation Investigators (1994)

The Atrial Fibrillation Investigators (AFI) (1994) [Laupacis et al. 1994] stroke assessment tool was derived from the pooled analysis of five clinical studies (AFASAK [Petersen et al. 1989], SPAF [Poller et al. 1991], BAATAF [The Boston Area Anticoagulation Trial for Atrial Fibrillation Investigators, 1990], CAFA [Connolly et al. 1991], and SPINAF [Ezekowitz et al. 1992]) of stroke prevention therapies in AF; CAFA, BAATAF, and SPINAF trialled warfarin versus placebo, whereas AFASAK and SPAF participants were treated with aspirin or warfarin versus placebo. Collectively, over 1800 patients received warfarin or placebo while over 1130 patients received aspirin or placebo; the mean age of patients was 69 years (range 38–91 years). BAATAF, AFASAK, and SPAF excluded patients with previous thromboembolism or cerebrovascular diseases. All studies, except CAFA, sought to identify stroke risk factors (such as history of stroke/TIA, age) according to their relative risks via univariate and multivariate analyses. These factors were then evaluated using the data from all of these studies (BAATAF, AFASAK, SPINAF, SPAF, and CAFA) to derive a risk assessment tool which categorizes patients into different levels of stroke risk (ranging from 1.0% relative risk in the low-risk group to 8.1% in the high-risk group; see Table 1).

Atrial Fibrillation Investigators (1998)

Following from the development of the first tool (1994), this risk assessment tool was based on a further pooled analysis of three randomized trials [Atrial Fibrillation Investigators, 1998]: BAATAF [The Boston Area Anticoagulation Trial for Atrial Fibrillation Investigators, 1990], SPAF I [Poller et al. 1991], and SPINAF [Ezekowitz et al. 1992]. Here, data was analysed for the control group patients only; over 1060 patients (mean age 67 ± 10.4 years) were followed up for an average of 1.6 years. The patients’ echocardiograms as well as clinical parameters were reviewed and then analysed (using univariate and multivariate analyses) with regard to their impact on the relative risk of stroke. Age, previous stroke, and hypertension were identified as key predictors of stroke in AF (Table 1). The annual stroke rate ranged from 0.8% in those patients less than 65 years old with no additional risk factors and normal left ventricular function, up to 19.7% in those patients more than 75 years old with one or more additional risk factors and abnormal left ventricular function.

Birmingham/NICE (UK) (2006)

In another analysis of the data from the AFI (1995) study, the Birmingham/NICE (UK) (2006) [Lip et al. 2006] assessment tool (Table 1) was based on the refinement of the AFI (1995) risk stratification tool and subsequently incorporated within the UK National Institute for Health and Clinical Excellence (NICE) guidelines for AF management. The tool itself was evaluated using data from over 990 patients from the SPAF III trial, who received treatment with either aspirin alone or aspirin combined with low-dose warfarin (target international normalized ratio [INR] 1.2–1.5), and followed up for a mean of 2 years (including blood sampling for von Willebrand factor [vWf]). The evaluation of this tool included a comparison with CHADS2 (described later). Cox modelling and multivariate analyses were used to determine the association of vWf with ischaemic and vascular events. The annual stroke and vascular event rates ranged from 0.0% in the low-risk group up to 5.75% in the high-risk group. This Birmingham scheme was shown to have a similar predictive value to the CHADS2 scheme for both ischaemic stroke and vascular events. Also, vWf was shown to be an independent risk factor for vascular events.

Tools from the ‘Stroke prevention in atrial fibrillation investigators’

Stroke Prevention in Atrial Fibrillation Investigators (1995)

Since aspirin was shown to be less effective than warfarin in the Atrial Fibrillation Investigators Study (1994), data from a large cohort of AF patients (Stroke Prevention in Atrial Fibrillation Investigators [SPAF] (1995) [Stroke Prevention in Atrial Fibrillation Investigators, 1995]) in SPAF I and II were analysed to identify patient characteristics related to arterial thromboembolism occurring during aspirin therapy. It was hypothesized that thromboembolism risk factors were different in AF patients receiving aspirin compared to those who were untreated. Over 850 patients receiving aspirin (mean age 69 ± 11 years) were followed for 1987 patient-years (range 4 days to 5.3 years) and risk factors (such as age, hypertension, impaired LV function) were identified according to their relative risks via multivariate analysis. The annual risk of stroke and systemic thromboembolism in patients ranged from 1.9% in the low-risk group to 5.9% in the high-risk group (Table 1).

SPAF (1999)

Following from the 1995 tool, over 2010 patients (mean age 69 ± 10 years) from the series of Stroke Prevention in Atrial Fibrillation trials (trials I to III) who received either aspirin alone or low-dose warfarin were followed up for an average of 2.0 years to explore potential stroke risk factors [Hart et al. 1999]. The SPAF I and II trials excluded patients with previous stroke or TIA, whereas SPAF III included them. Risk factors were explored using multivariate logistic regression analysis to determine their relative risks, from which a risk stratification scheme was then developed for patients without a previous stroke or TIA (Table 1). When applied to patient data, the scheme showed a statistically significant difference in stroke prevalence among low- (0.9%), moderate- (2.6%), and high-risk groups (7.1%).

The ‘CHADS’-based tools

CHADS2 (2001)

The CHADS2 (2001) [Gage et al. 2001] risk assessment tool is currently one of the most widely used, despite the development of others since it was first introduced into practice. Two previous stroke risk stratification schemes (from the AFI (1994) and SPAF (1995)) were combined to derive this new scheme. Independent risk factors identified in the two schemes (such as prior cerebral stroke, hypertension, DM, age) were selectively included. In the scoring process, one point was assigned to all risk factors except stroke/TIA history, which was assigned two points (Table 1). To validate this new scheme, the tool was applied to data from the National Registry of AF (NRAF in the USA), which included over 1700 nonrheumatic AF Medicare beneficiaries (aged 65–95 years) not receiving warfarin at hospital discharge. The stroke risk ranged from 1.9 per 100 patient-years (score of 0) to 18.2 per 100 patient-years (score of 6). Overall, CHADS2 has shown high predictive value, better than that of either the AFI or SPAF scheme.
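The additive scoring described above can be illustrated with a minimal Python sketch. It uses the point values listed in the notes to Table 1 (congestive heart failure, HTN, age ≥75 years, and DM, 1 point each; previous stroke/TIA, 2 points). The class and function names are hypothetical and chosen only for illustration; the sketch is not a validated clinical calculator.

```python
from dataclasses import dataclass


@dataclass
class Chads2Inputs:
    """Hypothetical input record; field names are illustrative only."""
    congestive_heart_failure: bool
    hypertension: bool
    age_years: int
    diabetes: bool
    prior_stroke_or_tia: bool


def chads2_score(p: Chads2Inputs) -> int:
    """Sum CHADS2 points: 1 each for CHF, HTN, age >= 75 and DM; 2 for prior stroke/TIA."""
    score = 0
    score += 1 if p.congestive_heart_failure else 0
    score += 1 if p.hypertension else 0
    score += 1 if p.age_years >= 75 else 0
    score += 1 if p.diabetes else 0
    score += 2 if p.prior_stroke_or_tia else 0
    return score


# Example: a 78-year-old with hypertension and a previous TIA.
example = Chads2Inputs(False, True, 78, False, True)
print(chads2_score(example))  # 1 (HTN) + 1 (age) + 2 (stroke/TIA) = 4
```

The score ranges from 0 to 6, which matches the range over which stroke rates were reported in the NRAF validation.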

Modified CHADS2 score (2008)

A limitation of the original CHADS2 tool is regarded to be its inability to clearly distinguish patients with high stroke risk from those with a moderate risk [Baruch et al. 2007]. Thus, the modified CHADS2 score (2008) [Rietbrock et al. 2008] (Table 1) was proposed and tested against the original CHADS2 score by using data from over 51,800 chronic AF patients aged 40 years or older from the General Practice Research Database (GPRD; the computerized medical records of general practitioners in the UK). The investigators evaluated the inclusion of additional factors such as sex, extension of age categories, and also reweighting the previously included risk factors. Overall, the stroke risk was found to range from 0.72% for a risk score of 1 up to 15.64% for a risk score of 14. The revised CHADS2 was shown to have better classification and predictive value than the original CHADS2.
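As a rough illustration of how the extended age categories and reweighted factors combine, the following Python sketch applies the point values given in the notes to Table 1 for the modified CHADS2 score (age bands 1 to 6 points; female, 1 point; DM, 1 point; history of stroke/TIA, 6 points). The function name and the decision to reject ages outside 40–115 years are assumptions made for this example.

```python
def modified_chads2_score(age_years: int, female: bool, diabetes: bool,
                          prior_stroke_or_tia: bool) -> int:
    """Sum the modified CHADS2 points as listed in the Table 1 notes."""
    if not 40 <= age_years <= 115:
        # Assumption: the published age bands only cover 40-115 years.
        raise ValueError("age outside the 40-115 year range covered by the scheme")

    # (lower bound of age band, points), checked from oldest band to youngest.
    age_bands = [(85, 6), (80, 5), (75, 4), (70, 3), (65, 2), (40, 1)]
    age_points = next(points for lower, points in age_bands if age_years >= lower)

    return (age_points
            + (1 if female else 0)
            + (1 if diabetes else 0)
            + (6 if prior_stroke_or_tia else 0))


# Example: a 72-year-old woman without DM or prior stroke/TIA.
print(modified_chads2_score(72, True, False, False))  # 3 (age) + 1 (female) = 4
```

Because every eligible patient receives at least 1 point for age, the total runs from 1 to 14, consistent with the range of scores for which stroke risk was reported.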

CHA2DS2-VASc (2010)

The CHA2DS2-VASc (2010) [Lip et al. 2010b] tool is a further evolution of the modified CHADS2 tool and a refinement of the Birmingham (2006) scheme, to include risk factors such as female gender and vascular disease (Table 1). It has been evaluated by application to a cohort of real AF patients from the Euro Heart Survey [Nieuwlaat et al. 2008], and compared against several other schemes such as the AFI (1994), SPAF (1999), CHADS2, CHADS2 modified, Framingham (2003), and Birmingham (2006) tools. With this tool, the annual rate of hospitalization and death due to stroke and other thromboembolism ranges from 0.78% for a score of 0 up to 23.64% for a score of 9 [Olesen et al. 2011]. CHA2DS2-VASc (2010) has been shown to have a modest predictive value and to be better than either CHADS2 or the modified CHADS2 for predicting the risk of stroke and systemic thromboembolism.
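The weighting of major versus clinically relevant nonmajor risk factors can be sketched in Python as follows, using the point values from the notes to Table 1 (age ≥75 years and previous stroke/TIA/thromboembolism, 2 points each; heart failure, hypertension, diabetes, age 65–74 years, female gender, and vascular disease, 1 point each). The function and parameter names are hypothetical, and the sketch is illustrative rather than a clinical implementation.

```python
def cha2ds2_vasc_score(age_years: int, female: bool, heart_failure: bool,
                       hypertension: bool, diabetes: bool,
                       prior_stroke_tia_or_thromboembolism: bool,
                       vascular_disease: bool) -> int:
    """Sum CHA2DS2-VASc points as listed in the Table 1 notes."""
    # Age contributes 2 points at >= 75 years, 1 point at 65-74 years, else 0.
    if age_years >= 75:
        age_points = 2
    elif age_years >= 65:
        age_points = 1
    else:
        age_points = 0

    return (age_points
            + (2 if prior_stroke_tia_or_thromboembolism else 0)
            + (1 if heart_failure else 0)
            + (1 if hypertension else 0)
            + (1 if diabetes else 0)
            + (1 if female else 0)
            + (1 if vascular_disease else 0))


# Example: a 70-year-old woman with hypertension only.
print(cha2ds2_vasc_score(70, True, False, True, False, False, False))  # 1 + 1 + 1 = 3
```

The two age bands are mutually exclusive, so the maximum total is 9, which is the top of the score range quoted above.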

Other tools

European Atrial Fibrillation Trial Study Group (1995)

The European Atrial Fibrillation Trial (EAFT) (1995) [Van Latum et al. 1995] assessment tool was based on the analysis of data from over 370 patients (mean age 71 ± 8 years, with the majority over 60 years) enrolled in the EAFT. In EAFT, patients with one or more nondisabling episodes of cerebral ischaemia and concomitant nonrheumatic AF (NRAF) were randomized to receive anticoagulant therapy, aspirin or placebo, and followed up for an average of 1.5 years [European Atrial Fibrillation Trial Study Group, 1993]. The data pertaining to those in the placebo-treated group were used to derive this risk tool; clinical predictors (including previous stroke/TIA, systolic blood pressure (BP) >160 mmHg) were selected according to their relative risks via multivariate analysis (Table 1). Unlike other tools, age was not included as an independent risk factor because of the relatively higher average age of this subgroup of placebo-treated patients, although age was identified as a risk factor in the broader EAFT trial [Van Latum et al. 1995]. The annual event rate of stroke and other major vascular events ranged from 0.0% in those aged more than 75 years with no risk factors up to 37% in those more than 75 years old with 3 or more additional risk factors.

Framingham Heart Study (2003)

The Framingham Heart Study (2003) [Wang et al. 2003] tool was based on observational data from the Framingham Heart Study, pertaining to a cohort of over 700 patients (aged from 55 to 94 years). The selected patients had a diagnosis of new-onset AF, were not receiving warfarin, and were followed up for a mean of 4.0 years. A Cox model was used to identify risk factors and points were assigned to each to derive an overall risk score. A linear function was computed for each score to produce an estimate of 5-year stroke risk, ranging from 5% for a calculated score of 0–1 points, up to 75% for a score of 31 points. This risk assessment tool was shown to have modest predictive value for the 5-year risk of a stroke event in individuals with AF (Table 1) as well as the 5-year risk of stroke or death.
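The point assignment for this tool can be sketched in Python using the bands listed in the notes to Table 1 (age, 0–10 points; women, 6 points; systolic BP, 0–4 points; DM, 5 points; previous stroke/TIA, 6 points). The sketch computes only the point total; the mapping from points to 5-year stroke risk is not reproduced here because only its end points are quoted above. The function name and the choice to reject ages below 55 years (the lower limit of the cohort) are assumptions for this example.

```python
from bisect import bisect_right

# Lower bounds of the age bands that score 1..10 points (55-59 years scores 0).
AGE_BAND_STARTS = [60, 63, 67, 72, 75, 78, 82, 86, 91, 94]
# Lower bounds (mmHg) of the systolic BP bands that score 1..4 points (<120 scores 0).
SBP_BAND_STARTS = [120, 140, 160, 180]


def framingham_af_points(age_years: int, female: bool, systolic_bp_mmhg: float,
                         diabetes: bool, prior_stroke_or_tia: bool) -> int:
    """Sum the Framingham (2003) AF stroke points as listed in the Table 1 notes."""
    if age_years < 55:
        # Assumption: the scheme is undefined below the cohort's minimum age.
        raise ValueError("the point table starts at 55 years")

    # bisect_right counts how many band lower bounds are <= the value,
    # which is exactly the number of points for that band.
    age_points = bisect_right(AGE_BAND_STARTS, age_years)
    sbp_points = bisect_right(SBP_BAND_STARTS, systolic_bp_mmhg)

    return (age_points
            + sbp_points
            + (6 if female else 0)
            + (5 if diabetes else 0)
            + (6 if prior_stroke_or_tia else 0))


# Example: a 76-year-old man, systolic BP 150 mmHg, with DM, no prior stroke/TIA.
print(framingham_af_points(76, False, 150, True, False))  # 5 + 2 + 5 = 12
```

The maximum attainable total is 10 + 4 + 6 + 5 + 6 = 31 points, which corresponds to the highest score for which a 5-year risk is reported.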

ACC/AHA/ESC Guidelines updated (2011)

The ACC/AHA/ESC Guidelines updated (2011) [Fuster et al. 2011] tool has been proposed by expert consensus, not only to stratify stroke risk in AF patients, but also to recommend antithrombotic therapy for patients in each risk category (Table 1). It was derived by expert review of several risk stratification schemes such as the AFI (1994, 1998), SPAF (1995, 1999), Framingham Heart Study (2003), and CHADS2 tools, but has not yet been evaluated via application to data from patient cohorts or clinical databases.

Summary of features of stroke risk assessment tools

Overall, a history of stroke or TIA is the most frequently included risk factor in these stroke risk assessment tools, followed by age, hypertension, and DM. Many of the stroke risk assessment tools have been generated by review of previous risk factors but have not specifically sought to investigate or identify any new risk factors. Six of the stroke risk assessment tools [Atrial Fibrillation Investigators, 1998; Hart et al. 1999; Laupacis et al. 1994; Stroke Prevention in Atrial Fibrillation Investigators, 1995; Van Latum et al. 1995; Wang et al. 2003] have been derived from clinical or epidemiological studies of AF patients, while five are largely based on expert consensus. Furthermore, several tools have been based on selected patient cohorts or databases (where verification of data was not possible), and are potentially not representative of the broader target population (selection bias). Since each trial has defined risk factors differently, and risk factors were only assessed at the time of randomization, the true magnitude of impact of each factor (according to its relative risk) may be underestimated. Overall, CHA2DS2-VASc has been reported to be a better predictor than the AFI (1994, 1998), SPAF (1995), CHADS2 modified, CHADS2, Framingham (2003), and NICE (2006) tools in AF patients [Lip et al. 2010a; Van Staa et al. 2011].

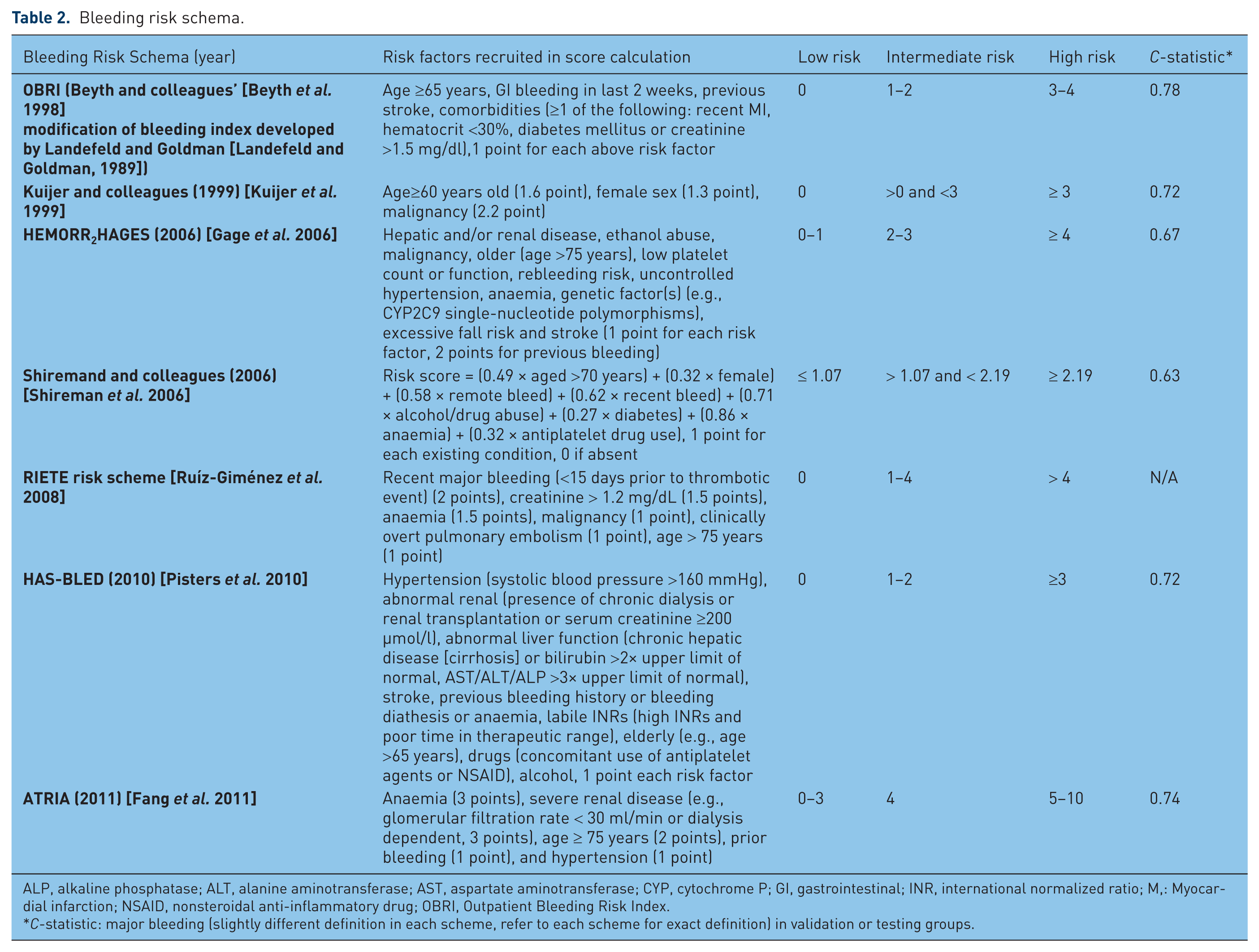

Bleeding risk assessment tools

Altogether, seven bleeding risk tools have been developed and employed in evaluating bleeding risk among AF patients (Table 2), although not all have been specifically developed for patients with AF. All of these bleeding risk tools stratify patients into low, intermediate, or high bleeding risk categories. Among them, HEMORR2HAGES [Gage et al. 2006] and HAS-BLED [Pisters et al. 2010] have been the most commonly advocated, both sharing common risk factors such as age, previous bleeding, and renal and liver impairment. Although each scheme uses different age cut-offs, ‘increased age’ per se is the only risk parameter common to all seven risk tools. The other most frequently mentioned inputs in these tools are history of bleeding/prior bleeding (six out of seven tools), followed by anaemia/thrombocytopenia (five out of seven tools), renal dysfunction (five out of seven tools), previous stroke (three out of seven tools), hypertension (three out of seven tools), alcohol (three out of seven tools), DM (two out of seven tools), prior MI or ischaemic heart disease (two out of seven tools), liver dysfunction (two out of seven tools), malignancy (three out of seven tools), and female gender (two out of seven tools). Antiplatelet drug use, genetic factors, and excessive falls risk are also considered in certain tools. To account for the different levels of risk attributed to the various factors, different points have been assigned to each to derive an overall summative score (Table 2).

Bleeding risk schema.

ALP, alkaline phosphatase; ALT, alanine aminotransferase; AST, aspartate aminotransferase; CYP, cytochrome P; GI, gastrointestinal; INR, international normalized ratio; MI, myocardial infarction; NSAID, nonsteroidal anti-inflammatory drug; OBRI, Outpatient Bleeding Risk Index.

C-statistic: major bleeding (slightly different definition in each scheme, refer to each scheme for exact definition) in validation or testing groups.

OBRI

The OBRI [Beyth et al. 1998] bleeding risk tool (Table 2) was refined from the bleeding index developed by Landefeld and Goldman in 1989 [Landefeld and Goldman, 1989], and designed for application to all types of patients at risk of haemorrhage, not specifically for AF patients. Development of the tool was based on the records of over 560 patients aged 18–92 years (mean age 61 ± 14) who were discharged from hospital on long-term warfarin therapy for indications such as AF, stroke, and other thromboembolism. Four risk factors (age ≥65 years, history of gastrointestinal bleeding, history of stroke, and severe comorbid conditions such as recent MI, renal insufficiency, severe anaemia) were identified by their relative risks as calculated in univariate and multivariate analyses. This OBRI scheme was then further tested on 264 outpatients who were commenced on warfarin after hospital discharge, and who were followed for a period of up to 7 years. The major bleeding incidence reportedly ranged from 3% in the low-risk group to 53% in the high-risk group, yielding modest predictive value for the tool.

Kuijer and colleagues (1999)

A literature review (comprising 15 papers) was conducted to identify risk factors for bleeding in a range of patients using anticoagulant therapy [Kuijer et al. 1999]. The risk stratification scheme (Table 2) was constructed according to the odds ratios of the various risk factors, and then initially evaluated in a subset of over 240 patients, followed by more extensive testing in an independent cohort of 780 patients (all from the database of the Columbus Investigators Study [The Columbus Investigators, 1997]); in the Columbus Investigators study, over 1020 patients with venous thromboembolism (VTE) were allocated to receive heparin-based therapy plus an oral anticoagulant (OAC). In the initial subgroup of 240 patients, this tool was shown to have modest predictive value for all bleeding complications and major bleeding complications. Then, in the subsequent patient cohort, the tool was able to categorise one-fifth of the patients as high risk, where the absolute risk of bleeding was found to be significantly higher than in the low-risk group (10% versus 1%).

HEMORR2HAGES (2006)

The HEMORR2HAGES (2006) [Gage et al. 2006] tool was derived from three previous risk schemes (the OBRI (1998) [Beyth et al. 1998], the scheme of Kuijer and colleagues [Kuijer et al. 1999], and the scheme of Kearon and coworkers [Kearon et al. 2003]), a systematic review [Beyth et al. 2002], and results from a literature (i.e. Pubmed) search. Overall, 11 risk factors (Table 2) were selected, with prior bleeding assigned 2 points (a higher weighting) and all other risk factors assigned 1 point, according to expert consensus. The scheme was then tested and compared with the other 3 schemes using data from over 3790 Medicare beneficiaries (mean age 80.2 years) listed in the NRAF database (the same database used for validation of the CHADS2). The bleeding risk ranged from 1.9 for a score of 0 up to 12.3 per 100 patient-years for a score over 4. Among patients prescribed warfarin, HEMORR2HAGES was shown to predict major bleeding better than the schemes by Kearon and colleagues (2003), Kuijer and coworkers (1999), or OBRI (1998).
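The weighting described above (2 points for prior bleeding, 1 point for each of the other 10 factors) can be sketched in Python as follows. The individual factors are listed in Table 2 rather than in the text, so the factor names below are taken from the commonly cited expansion of the HEMORR2HAGES acronym and should be checked against Table 2; the dictionary keys and function name are hypothetical.

```python
# Points per risk factor. Key names are illustrative and follow the usual
# expansion of the acronym (an assumption to be verified against Table 2).
HEMORR2HAGES_POINTS = {
    "hepatic_or_renal_disease": 1,
    "ethanol_abuse": 1,
    "malignancy": 1,
    "older_age": 1,
    "reduced_platelet_count_or_function": 1,
    "prior_bleeding": 2,  # the "R2" term (rebleeding risk), weighted double
    "uncontrolled_hypertension": 1,
    "anaemia": 1,
    "genetic_factors": 1,
    "excessive_fall_risk": 1,
    "stroke": 1,
}


def hemorr2hages_score(present_factors: set) -> int:
    """Sum the points for every risk factor present in the patient."""
    unknown = present_factors - HEMORR2HAGES_POINTS.keys()
    if unknown:
        raise ValueError(f"unrecognised risk factors: {sorted(unknown)}")
    return sum(HEMORR2HAGES_POINTS[name] for name in present_factors)


# Example: a patient with prior bleeding and anaemia.
print(hemorr2hages_score({"prior_bleeding", "anaemia"}))  # 2 + 1 = 3
```

Keeping the weights in a single table makes the double weighting of prior bleeding explicit and keeps the summation itself trivial.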

Shireman and colleagues (2006)

The tool from Shireman and colleagues (2006) [Shireman et al. 2006] was developed and validated via a retrospective analysis of data from a cohort of over 26,300 AF patients aged over 65 years, who were identified in a national registry (the same database that was used for validation of CHADS2) and followed up for 90 days. A total of 18 variables (such as age, gender, and stroke) (Table 2) were initially explored in multivariate modelling, and 8 were finally selected for the risk scheme. The major bleeding rate ranged from 0.9% in the low-risk group up to 5.4% in the high-risk group. Overall, this tool was shown to have better predictive value than the OBRI and Kuijer and colleagues (1999) schemes.

RIETE risk scheme (2008)

The RIETE risk scheme (2008) [Ruíz-Giménez et al. 2008] tool was based on the RIETE Registry of patients (mean age 66 ± 17 years) with acute VTE, who were receiving anticoagulant therapy and followed up for 3 months. Over 13,000 patients were used as the derivation sample and over 6500 patients were used as the validation sample. Risk factors such as recent major bleeding, anaemia, malignancy, clinically overt pulmonary embolism, and age were identified based on their odds ratio in multivariate analysis (Table 2). During validation, the scheme was able to identify significant differences in the risk of major bleeding, ranging from 0.1% in low-risk patients to 6.2% in high-risk patients. Since this tool was developed using data from patients with VTE, its application to patients with AF or at risk of stroke is uncertain.

HAS-BLED (2010)

The HAS-BLED (2010) [Pisters et al. 2010] scheme was developed using data from a real-world cohort of 3450 AF patients (mean age 66.8 ± 12.8 years) receiving antithrombotic therapy: OAC, antiplatelet only, OAC plus antiplatelet combined, or no therapy at all. The patient data came from the prospective Euro Heart Survey [Nieuwlaat et al. 2008] on AF, where patients were followed up for up to 1 year. The risk factors (such as age, female sex, hypertension, renal failure, prior major bleeding episode; see Table 2) were identified from univariate and multivariate analysis, with the resultant tool shown to have better predictive value than HEMORR2HAGES. The yearly major bleeding rate varied from 1.13% for a score of 0 up to 12.5% for a score of 5.

ATRIA (2011)

ATRIA (2011) [Fang et al. 2011] was developed using clinical data from over 13,559 nonvalvular AF patients taking warfarin therapy (mean age 71 years), who were enrolled and followed up for up to 3.5 years in the ATRIA study [Go et al. 1999, 2003]. This cohort was separated into ‘derivation’ and ‘validation’ groups. Risk factors were initially selected from six previously published risk stratification schemes [Beyth et al. 1998; Gage et al. 2006; Kearon et al. 2003; Kuijer et al. 1999; Ruíz-Giménez et al. 2008; Shireman et al. 2006] and evaluated by univariate and multivariate analyses of data from the derivation group of patients. Five risk factors (Table 2) were finally selected and assigned scores based on their regression coefficients. The scheme was then tested in the validation group of patients from the ATRIA study and compared with other risk stratification schemes. The risk of major bleeding ranged from 0.4% (0 points) to 17.3% (10 points). The predictive value of this tool for major bleeding was shown to be higher than that of the OBRI, Kuijer and colleagues (1999), Kearon and colleagues (2003), HEMORR2HAGES (2006), Shireman and colleagues (2006), and RIETE (2008) risk schemes.

Summary of features of bleeding risk assessment tools

In reviewing these tools, it is important to note their origins and therefore their relevance in the context of AF management. Three of these bleeding risk assessment tools were derived via refinement of previous risk assessment schemes [Beyth et al. 1998; Gage et al. 2006] or literature review [Kuijer et al. 1999]. One was derived from retrospective data extraction from clinical databases [Shireman et al. 2006]. Only HAS-BLED, the RIETE risk scheme, and ATRIA were derived from prospective studies of selected patient cohorts, and all of them excluded patients who could not be followed up (a source of selection bias). Although most of the data from which the tools were derived included a follow-up period of approximately 1 year, the schemes by Shireman and colleagues (2006) and RIETE (2008) had relatively minimal follow up (only 90 days) and did not include review of the INR during follow up. Furthermore, among these tools, only HAS-BLED, ATRIA, and Shireman and colleagues (2006) were specifically derived from AF patients, whilst HAS-BLED, ATRIA, HEMORR2HAGES, and Shireman and colleagues (2006) have all been validated in AF patients. The schemes by Kuijer and colleagues (1999) and RIETE (2008) are limited in their application by the fact that they were based on VTE patients, whilst OBRI was based on a broad range of patients discharged from hospital using antithrombotics. Indeed, these non-AF specific tools have been shown to be inferior in their application to the target patient population compared with those tools which were validated in AF patients [Fang et al. 2011; Gage et al. 2006]. In some recent reports, HAS-BLED has been shown to perform better in predicting bleeding risk than the ATRIA, HEMORR2HAGES, Shireman and colleagues (2006), Kuijer and colleagues (1999), and OBRI tools in AF patients [Apostolakis et al. 2012, 2013; Lip et al. 2012; Roldan et al. 2013].

Overall, in considering the inputs in these tools, advancing age has been the most frequently cited risk factor for bleeding, followed by a history of bleeding/prior bleeding, anaemia/thrombocytopenia, and renal dysfunction. The impact of age on the risk assessment process is again evident, which underlines the need to carefully assess the medication safety aspects of the decision-making process.

Assessment of medication safety in elderly patients

When exploring the utilization of anticoagulant therapy for stroke prevention in AF, issues impacting on medication safety must necessarily be explored. Age per se has often been cited as a key consideration in decision-making and a major barrier to the use of warfarin, reflecting the challenges of using high-risk anticoagulant therapies in the at-risk elderly population. However, a patient’s age per se is not a contraindication to therapy, but rather it represents an over-arching marker of other age-related factors that impact on their ability to manage complex regimens or which may increase their risk of adverse clinical outcomes. These factors include: impaired cognitive function (e.g. dementia), frailty (e.g. falls risk), comorbidities, decreased renal function, polypharmacy, and poor medication adherence [Alberts et al. 2013; Bajorek, 2011; Bajorek et al. 2007; Bereznicki et al. 2006; De Breucker et al. 2010; Hylek, 2008; Tulner et al. 2010]. Therefore, it is important to consider medication safety assessments alongside stroke and bleeding risk.

In reviewing the spectrum of risk assessment tools developed to date, only one has been identified that purposefully considers medication safety. The CARAT (Computerised Antithrombotic Risk Assessment Tool) is a web-based tool, which comprises both stroke and bleeding risk assessments (the CHADS2 and HEMORR2HAGES schemes, respectively) alongside medication safety issues. The tool evolved from an earlier risk assessment process that was paper-based [Bajorek et al. 2012], and which had been shown to be effective, as part of a collaborative and multidisciplinary review process, in optimizing the use of antithrombotic therapy in older persons with AF [Bajorek et al. 2005, 2012]. The utility of the tool lies in integrating the risk versus benefit assessment and systematically reviewing key medication safety issues such as the individual’s function, cognition, drug interactions, medication adherence, medication management capabilities, and relevant social factors. In applying this tool, the clinician can calculate the estimated risk of stroke and risk of bleeding, and identify any key contraindications to the use of treatment options, before the tool provides a treatment recommendation for an individual patient [Bajorek et al. 2005, 2012].

Whereas previous risk assessment tools for stroke and bleeding have been principally evaluated for their ability to predict risk, the evaluation of the CARAT has focused on canvassing clinicians’ application of this tool in the decision-making process. In an initial scenario-based survey, four cases (patient profiles describing different levels of risk) were used to test the agreement between clinicians’ independent treatment recommendations and those generated by CARAT. The majority of clinicians (71%, n = 77) ‘agreed’ with CARAT’s treatment recommendations (four questions; n = 108 responses), and importantly ‘agreed’ with its estimation of bleeding risk (three questions on bleeding risk; n = 81 responses). Regarding the overall usefulness and applicability of CARAT to clinical practice, out of 189 responses, 51% were ‘agree’ or ‘somewhat agree’ and 25% were neutral or undecided. In their feedback, clinicians provided commentary on the CARAT to identify its potential role in the decision-making process:

‘Rapid calculation of risks is very useful’ (Cardiologist)

‘Bleeding risk assessment section is very useful’ (Cardiologist)

‘Warfarin is not a lifelong decision; people can fail a trial of anticoagulation but embolic stroke is irreversible [this tool helps re-focus away from bleeding risk, highlighting stroke risk]’ (Neurologist)

‘This tool should ideally be applied in ED and result should go to Local Medical officer’ (Cardiologist)

Discussion

This review highlights that there are a number of tools to assess either stroke risk or bleeding risk in patients with AF. However, the tools are not uniform and their differences (including their limitations) need to be considered prior to application in decision-making. It is important to consider the development of these tools, and how their inputs were derived, acknowledging that not all risk factors can be treated equally since they present different relative risks. Indeed, each of the tools presented in this review weights its input factors differently, and this is particularly reflected in the evolution of the CHADS2 to the CHA2DS2-VASc, where different age groups are assigned different points (i.e. the older age group is assigned more points).
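The change in age weighting between the two tools can be illustrated with a short sketch. The thresholds below are those of the published schemes as commonly stated (CHADS2: 1 point for age 75 years or over; CHA2DS2-VASc: 1 point for 65 to 74 years and 2 points for 75 years or over); the function names are hypothetical, and only the age component of each score is shown.

```python
def chads2_age_points(age_years):
    """Age component of CHADS2: a single band, 1 point at 75 years or over."""
    return 1 if age_years >= 75 else 0


def cha2ds2_vasc_age_points(age_years):
    """Age component of CHA2DS2-VASc: two bands, the older band weighted more."""
    if age_years >= 75:
        return 2
    if age_years >= 65:
        return 1
    return 0
```

Under these assumptions a 70-year-old patient scores 0 age points with CHADS2 but 1 with CHA2DS2-VASc, and an 80-year-old scores 1 and 2, respectively.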

The inclusion of ‘age’ as an important risk factor in both stroke and bleeding risk assessment needs examination. The age ‘cut-off’ to define an ‘older’ person differs across tools, ranging from 60 years up to 75 years, often below the average age (approximately 75 years old) of most AF patients. Whilst a few tools use cohort data to derive their age groupings, in others the groupings have been determined by expert consensus only. The inclusion of ‘age’ as a risk factor is not unexpected, given what is known about the increasing prevalence of AF and risk of stroke with advancing age. However, care must be taken in selecting arbitrary age ‘cut-offs’, noting that age per se is often an over-arching marker of other risk factors such as key comorbidities that are more prevalent with age (e.g. cardiovascular disease, diabetes, hypertension) and/or measures of frailty (e.g. falls risk), medication management ability (e.g. adherence), as well as cognition and function (e.g. dementia), although being elderly does not necessarily imply that these risks are present.

Overall, this review shows that most effort to date has focused on the development of tools to predict the risk of stroke, and less so on predicting the risk of bleeding. For stroke risk assessment, current guidelines recommend either CHA2DS2-VASc (e.g. European Society of Cardiology (ESC) [Camm et al. 2012]) or CHADS2 (e.g. American College of Chest Physicians (ACCP) [You et al. 2012], Canadian Cardiovascular Society (CCS) [Skanes et al. 2012]). The use of CHA2DS2-VASc may increase over time, since it is reported to better predict stroke risk than the AFI (1994, 1998), SPAF (1995), CHADS2 modified, CHADS2, Framingham (2003), and NICE (2006) tools in AF patients [Lip et al. 2010a; Van Staa et al. 2011].

The availability of bleeding risk tools has certainly assisted clinicians in decision-making, enabling a balanced risk versus benefit assessment. Tools such as the HAS-BLED have now been incorporated in some guidelines (e.g. ESC guideline), where a score of 3 or more is considered to be an indicator of a high bleeding risk. However, it is important to note that the purpose of these bleeding risk tools is not to identify patients in whom treatment should be excluded; rather, these tools should be used to identify the potential for bleeding in an individual and identify appropriate risk reduction measures, i.e. treating modifiable risk factors (e.g. anaemia, drug use, alcohol use, uncontrolled hypertension, labile INRs, reduced platelet count), and providing support services to ensure close monitoring and regular review. In other words, a high bleeding risk score indicates the need to correct reversible risk factors and provide additional follow-up services, rather than providing a reason to withhold anticoagulants [Alberts et al. 2013; Camm et al. 2012].
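The interpretation described above, in which a high score prompts review rather than exclusion from treatment, might be sketched as follows. This is an illustration under stated assumptions: the threshold of 3 and the list of modifiable factors are taken from the paragraph above, whereas the function name, factor labels, and returned structure are hypothetical and are not part of HAS-BLED or any guideline.

```python
# HAS-BLED score of 3 or more is treated as high bleeding risk (from the text).
HIGH_BLEEDING_RISK_THRESHOLD = 3

# Modifiable risk factors named in the text; the labels are hypothetical.
MODIFIABLE_FACTORS = {
    "anaemia",
    "drug_use",
    "alcohol_use",
    "uncontrolled_hypertension",
    "labile_inr",
    "reduced_platelet_count",
}


def review_bleeding_risk(has_bled_score, factors_present):
    """Flag high bleeding risk and list the modifiable factors to address.

    The result deliberately contains no 'withhold treatment' field: a high
    score here only triggers risk reduction measures and closer follow-up.
    """
    high_risk = has_bled_score >= HIGH_BLEEDING_RISK_THRESHOLD
    return {
        "high_bleeding_risk": high_risk,
        "factors_to_address": sorted(MODIFIABLE_FACTORS & set(factors_present)),
        "closer_follow_up": high_risk,
    }
```

Calling `review_bleeding_risk(3, {"labile_inr", "anaemia"})` in this sketch flags high bleeding risk, lists both factors for correction, and requests closer follow-up.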

In reviewing the available risk tools collectively, it can be seen that there is a certain level of overlap between bleeding risk factors and stroke risk factors, specifically age, hypertension, previous stroke, and diabetes. Indeed, some studies using the CHADS2 and CHA2DS2-VASc tools have reported that patients with high bleeding risk also have high stroke risk: over 90% and over 99% of patients with high bleeding risk (HAS-BLED 3 or more) were categorized as high stroke risk by CHADS2 and CHA2DS2-VASc, respectively [Lip et al. 2011, 2012]. Whether it is sufficient to use tools such as CHADS2 and CHA2DS2-VASc to predict both stroke risk and bleeding risk needs further exploration, but doing so would certainly help to simplify the risk assessment.

Integrating bleeding and stroke risks

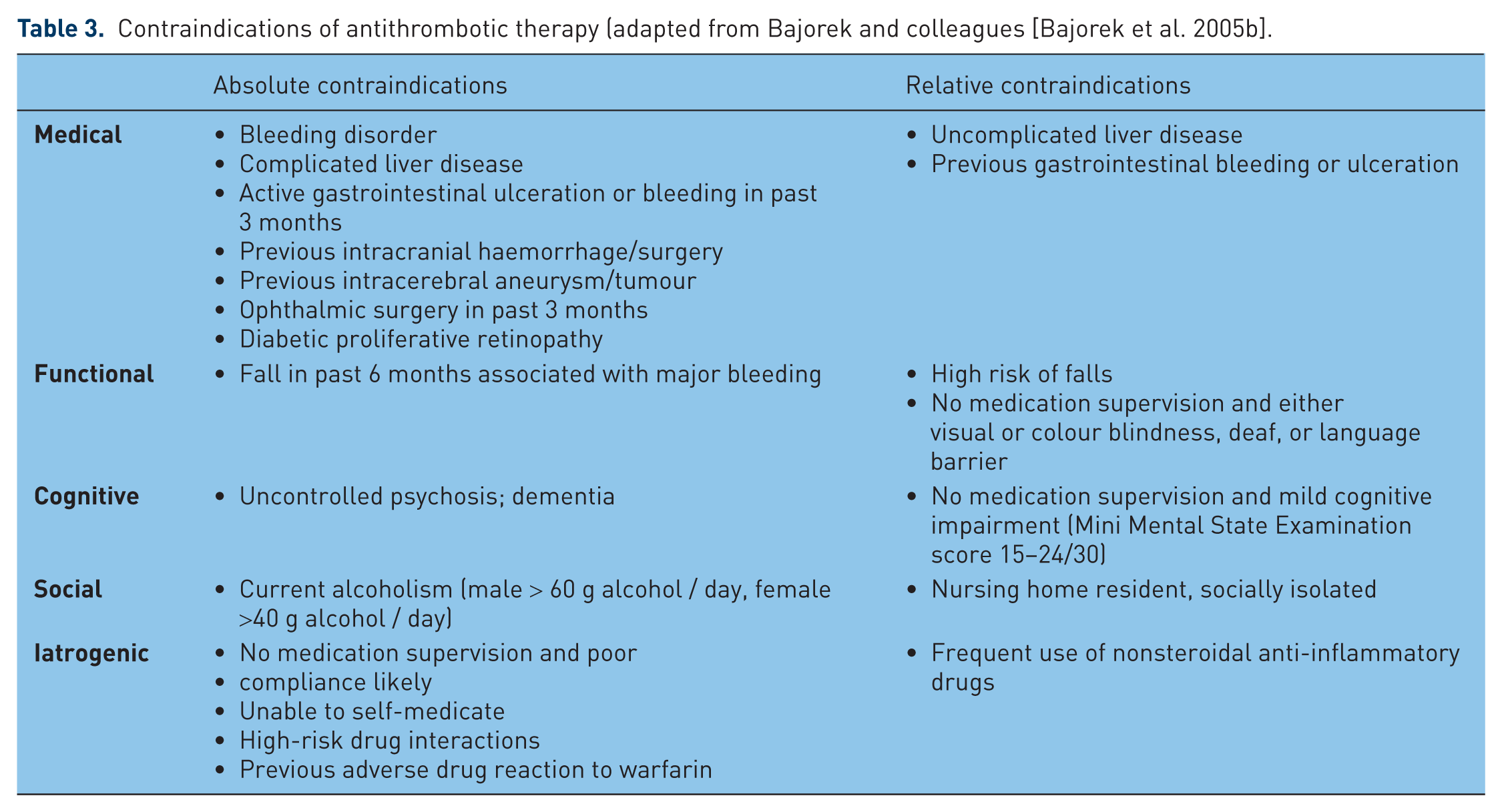

The simplification of decision-making through the use of such tools is an important goal in this context, recognizing that the initiation of antithrombotic therapy is always complex for clinicians, since it involves weighing the risk (e.g. bleeding) versus benefit (prevention of stroke) of therapy, as well as other clinical characteristics, and these may vary widely among patients [Bajorek, 2011; Bajorek et al. 2007]. This review highlights that a number of tools are available to assess stroke risk or bleeding risk separately, and thus provide some information for antithrombotic therapy decision-making. In this regard, they are all helpful in identifying reversible risk factors (e.g. anaemia, uncontrolled hypertension) that can be modified through targeted intervention. However, the two assessments need to be brought together to complete the decision-making process for the selection of appropriate treatment, and ideally should estimate the relative risk versus benefit of available treatment options in an individual AF patient. Furthermore, decision-making in AF is not solely based on stroke risk versus bleeding risk. Previous studies have highlighted that key barriers to the use of anticoagulants often relate to other patient factors that potentially increase the risk of medication misadventure [Bajorek et al. 2007, 2009]. Assuring medication safety is especially important for anticoagulants (e.g. warfarin) because they carry a higher potential for adverse events due to their inherent risk of haemorrhage and/or complex pharmacology. Of the available tools, only CARAT has provided this functionality, yet it is important to the whole process (Table 3) [Bajorek et al. 2005].

Contraindications of antithrombotic therapy (adapted from Bajorek and colleagues [Bajorek et al. 2005b]).

Integration of risk schemes and consideration of additional factors does provide a more comprehensive assessment of an individual’s suitability for specific antithrombotic therapies. However, this potentially increases the complexity of the risk assessment process; in considering the usability of any of these tools, the critical issue relates to simplicity and practicality, so that they can be readily applied in everyday clinical practice. Compounding this is the need for regular review of risks, as these can change over time (e.g. with increasing age). Although electronic and digital resources are increasingly available (including smart phones, portable computers, iPads) in the health setting, the ability to calculate a score easily and simply in the midst of a busy practice is paramount. The need for a meaningful, individualized risk assessment must be balanced against the need for usability by clinicians. This aspect has been specifically explored for one of the tools described in this review, where clinicians’ opinions have been gauged regarding the overall usefulness and applicability of the CARAT to clinical practice. Whilst the CARAT is web-based, it integrates a number of separate assessments (i.e. stroke risk, bleeding risk, medication safety considerations), and therefore requires more input from clinicians at the time of decision-making. This may potentially affect its usability in some settings, and for this reason such tools might be best incorporated into clinical services that specifically review a person’s pharmacotherapy (e.g. accredited Medication Review services, pharmacy-based medicines checks, such as the MedsCheck program in Australia). There is a need to explore the role of support services provided by suitably trained and accredited health professionals (e.g. nurse practitioners, practice nurses, accredited pharmacists, consultant pharmacists) in using these tools within dedicated services, to help support clinicians in decision-making.

Therefore, more effort is needed to synthesize these separate risk assessments and integrate key medication safety issues, particularly in view of the introduction of new anticoagulants into practice. The introduction of these new drugs (e.g. rivaroxaban, dabigatran, apixaban) has been based on data from clinical trials which have included limited numbers of patients and which have applied strict exclusion criteria (e.g. a severe heart-valve disorder, stroke within 14 days or severe stroke within 6 months before screening, creatinine clearance of less than 30 ml/min, active liver disease) [Connolly et al. 2009; Granger et al. 2011; Patel et al. 2011]. To date, there are no assessment tools available to predict and/or stratify the risk of bleeding with the new anticoagulants. Although there is a perception that these new drugs are significantly safer than traditional antithrombotic options, they are not without risk, and risk versus benefit assessment remains critically important.

Summary

Although separate tools are available to assess stroke risk and bleeding risk independently, they do not estimate the relative risk versus benefit of available treatment options in an individual patient, and seldom consider key medication safety aspects of prescribing treatment. More effort is needed to synthesize these separate risk assessments, integrate key medication safety issues, and incorporate them into daily clinical practice, particularly in view of the introduction of new anticoagulants. Among the many factors contributing to risk, age is an important one, but its definition and categorisation need further clarification and validation.

Footnotes

Funding

This research received no specific grant from any funding agency in the public, commercial, or not-for-profit sectors.

Conflict of interest statement

The authors declare no conflicts of interest in preparing this article.