Abstract

Generic red, green, and blue images can be regarded as data sources of coarse (three bins) local spectra, typical data volumes are 104 to 107 spectra. Image data bases often yield hundreds or thousands of images, yielding data sources of 109 to 1010 spectra. There is usually no calibration, and there often are various nonlinear image transformations involved. However, we argue that sheer numbers make up for such ambiguity. We propose a model of spectral data mining that applies to the sublunar realm, spectra due to the scattering of daylight by objects from the generic terrestrial environment. The model involves colorimetry and ecological physics. Whereas the colorimetry is readily dealt with, one needs to handle the ecological physics with heuristic methods. The results suggest evolutionary causes of the human visual system. We also suggest effective methods to generate red, green, and blue color gamuts for various terrains.

Motivation

We consider the colors of essentially the sublunary sphere of Aristotelian physics (itself derived from Greek astronomy). The sublunar region comprises the four classical elements (earth, air, fire, and water), the part of the cosmos where physics rules, the realm of changing nature. Nowadays, we might say “the natural environment.”

Digital photographs capture spectral information in a format that is closely related to the human visual system. This implies that the red, green, and blue (RGB) channels roughly contain counts of low-energy, medium-energy, and high-energy photons within the narrow visual range (about 1.8 to 3.4 eV). Enormous numbers of photographs from around the globe, each containing millions of spectral samples are readily available over the Internet. It would be a shame if such spectral data could not be mined and put to good use. However, there are numerous hurdles to be taken in order for this to be possible. We consider how to overcome some of them.

In order to motivate our methods, we start with a cursory look at the modest data source shown in Figure 1. The data volume is about Pebbles image.

These correlations must be due to the width of the autocorrelation function of the radiant power spectra of the natural (sublunar) environment. We consider this in some detail in this article. The RGB color channel correlations have immediate consequences that are important. Here we illustrate some of these, continuing our discussion of the pebbles image.

The eigenvectors are very close to the normalized versions of {1, 1, 1}, The blue vectors are

The fact that “opponent channels” serve to decorrelate color-related signals, such as the RGB, has been known for a long time. However, this insight came from analysis of the color matching functions (Buchsbaum & Gottschalk, 1983), that is to say, the structure of the human visual system. Here we have a quite different perspective; the correlations between the RGB channels are correlations between subregions of the radiant power spectra of the sublunar realm. We do not consider the human color system, but rather the ecological optics, that is, environmental physics. This does not address the vision of any specific species. Of course, we will come back to human color vision in this article, but only by way of a detour: No doubt, human color vision evolutionary adapted to the environmental physics.

Two facts are important here. First, a default prior yields very different results. Second, as we will show later, just about all photographs deriving from the sublunar domain have essentially the same structure as that of the arbitrarily picked pebbles example (Figure 1). Why is that? This appears to be a key question from an evolutionary perspective.

The first fact results immediately from elementary probability calculus. Suppose the RGB channels are mutually independent and uniformly distributed on the interval

The second fact is less easy to understand. It evidently involves the ecological physics of the sublunar realm. Accounting for this observation is a hard problem that can only be approached in a rather roundabout and approximate manner. It is dependent on the meaning of “ecological,” which not only involves the physics of the environment but also the structure of the human visual system.

In this article, we propose a model of spectral data mining that applies to the sublunar realm, involving spectra that are mainly due to the scattering of daylight by objects from the generic terrestrial environment. The model necessarily involves both colorimetry and ecological physics. The colorimetry is readily dealt with using standard tools. Because of the huge variety and complexity of the sublunar, the ecological physics has to be approached through heuristic, approximate methods of great generality.

The results yield handles on the evolutionary causes of the structure of the human visual system.

The methods described here also yield effective methods to generate RGB color gamuts for various terrains, something that might find a variety of applications.

Psychophysical, Physiological, and Physical Backgrounds

Although we consider these backgrounds separately, they are evidently closely connected, because humans have been shaped by evolution to match their generic Umwelts. 3 Because we are not considering visual awareness, but only discriminability, the visual part is readily dealt with using well known and standardized colorimetry. The physical part is far more involved.

Psychophysical and Physiological Background

The human observer samples a linear projection of the radiant power spectra available at the eyes. The complement of the projection’s null-space is three dimensional for the generic human observer. The null-space of the generic projection is well known, it was established empirically in the 19th century by Maxwell and Helmholtz (Koenderink, 2010a). 4 Nowadays, a projection matrix is available on the Internet. There is no natural basis for “color space,” that is the complement of the null-space, nor is there a natural metric.

We consider a highly simplified model of the sublunar realm in which the radiant spectra are spectrally selectively attenuated versions of the daylight spectrum. This implements “object colors.” For simplicity, we use a standard daylight spectrum available for download on the Internet (www.cie.co.at/index.php/LEFTMENUE/DOWNLOADS; see Figure 3).

At left, the CIE illuminant D65 (average daylight). The colors show the spectral bins for the cut-loci 483 nm and 565 nm. At right, the color matching functions of the CIE 1964 supplementary standard colorimetric observer. The tables may be downloaded from the CIE site, the cut-loci can immediately be computed from them.

The colors of such attenuated daylight spectra fill a finite region in color space. Because the daylight spectrum defines an infinitely dimensional cuboid in the space of spectra, this region is a convex, centrally symmetric volume in color space. 5 Its structure has been described by Schrödinger (1920). Colors on the boundary of this “color solid” are proper “parts of daylight” in the sense that their spectra are characteristic functions of connected spectral ranges or complements thereof.

This can be used to find the nature of spectral sampling by the human visual system. Split the spectrum into three parts by way of two cuts. Place the cuts thus that the resulting RGB space claims the largest possible volume fraction of the full Schrödinger color solid. This is a well-defined optimization problem because volume ratios are invariant against arbitrary colorimetric transformations. One finds (numerically, using the CIE color matching functions shown in Figure 3 right) that there is a unique solution, and the cuts should be at wavelengths of 482.65 nm and 565.43 nm (Figure 3 left). This yields a unique RGB basis for color space. The convex hull of the basis vectors is the parallelepided of largest volume that can be inscribed in the color solid, making it the optimum RGB basis (Figure 4 right). The corresponding color matching functions (Figure 4 left) are predominantly nonnegative and are mutually only weakly correlated.

6

At left, the color matching functions for the parts of daylight RGB colors. At right two (mutually symmetric) halves of the surface of the Schrödinger color solid. The skeleton cube is the parallelepided spanned by the red, green, and blue parts of daylight. This is a straight calculation from the CIE tables. The RGB cube snugly fits the color solid, in practice the overwhelming majority of object colors lies in the cube. This is the theoretically optimal representation of RGB colors.

Phenomenologically, the resulting parts look red, green and blue to generic observers, whereas unions of two parts look yellow, turquoise, and purple and the union of all three parts looks white. 7 Thus, one has a true RGB representation, exactly what display manufacturers aim for. If a display deviates significantly from this optimum, it is unlikely to attract customers. The reason is simply that the physiology dictates it.

Of course, there is no necessity for display manufacturers to produce “parts of daylight” as such. For display purposes, they are already in good shape when they get the colors—not necessarily the spectra—right. Thus, one might even use (quasi-)monochromatic sources. In practice, the spectra will often derive from the electronic structure of rare earth elements, from various organic molecules and so forth and often be rather rough. Nevertheless, the gamuts of current display units approximate that of the parts of daylight.

The same does not apply to the sensors. Ideal sensors would implement the human projection (Figure 4 left). The parts of daylight would be a good choice that is approximately physically possible because the sensor sensitivities should be nonnegative throughout most of the spectrum. Of course, such an ideal cannot be achieved. In practice, one makes do with coarse approximations. This typically involves a mosaic of absorption filters in front of the CCD or CMOS photosensitive chip. Fortunately, this tends to work out fine because almost all spectra of interest are not highly articulated. This is a topic to be discussed in the next section.

This suggests that human physiology effectively implements hyperspectral imaging with three bins per pixel, the bins being

This spectral description in terms of three bins is a natural RGB system, to which the camera and display industries have to comply—of course, approximately and by various heuristics. In practice, one notices that displays have largely converged, whereas there is quite a bit of variation among sensor sensitivities. That is why the “color rendering” of cameras tends to be debated in websites reviewing the latest consumer cameras. However, to the first approximation, all cameras are very similar, or they would not attract any customers at all.

This is essentially all the colorimetry needed in this article. Note that we do not refer to qualities of visual awareness, nor to just noticeable differences and so forth.

Physical Background

The physics is rather more involved

In order to avoid unfortunate confusion, it is necessary to distinguish between the spectrum of radiative power (henceforth called RP spectrum) and the spectrum of the articulation of the RP spectrum (henceforth called SA spectrum). The SA spectrum is the Fourier transform of the envelope of the RP spectrum. It can be quantified in terms of cycles per octave of the RP spectrum (Koenderink, 2010a). Both the amplitude and phase of the SA spectrum are relevant.

From the perspective of physics, the visual range subtends only a narrow window of the electromagnetic radiant power spectrum (about 380–700 nm as mentioned above). This is highly relevant from an ecological perspective, for the physical causes of spectral articulations change categorically over the electromagnetic spectrum (Feynman, Leighton, & Sands, 1963–1965). Molecular rotation bands occur in the infrared spectrum, while effects of electronic transitions in atoms occur in the ultraviolet spectrum. Articulation in the visual range is largely due to processes involving chemical binding energies. Since the set of physical causes is the same over the visual range and the width of the range is only an octave, the range will be statistically uniform. For spectral articulation, the important processes may be taken as translationally (along the wavelength axis!) invariant. This implies that a spectral analysis (the SA spectrum) makes sense. The articulation can have a variety of causes, there appears to be no particular absolute dimension. Thus, the default assumption would be scale-invariant (or self-similar) spectral statistics (Chapeau–Blondeau, Chauveau, Rousseau, & Richard, 2009)

It is hard to put this to an empirical test. Estimates of the

Perhaps surprisingly, these simple notions are already sufficient to draw some important consequences. Given that the visual range is narrow and its structure translation invariant, one expects the covariance matrix of the RGB color channels to have a structure roughly like

For simplicity, we consider the case

The first eigenvalue strongly dominates. It carries

This rough analysis is interesting in view of the significant literature on principal component analyses of collections of empirically determined spectral reflectance factors (Fairman & Brill, 2004; Tzeng & Berns, 2005). 10 Resulting principal components are invariably similar to the eigenvectors derived above (further illustrated below), there is essentially no valid reason to go through the trouble of measuring them and there is little reason to expect differences for various collections of samples. Indeed, there are not. The minor differences reported are probably due to the necessarily (very) limited size of the samples, which is perhaps an additional reason to prefer a fixed, formal basis.

The reason for the prominence of the two (instead of three

11

) opponent-like eigenvectors is that they implement the first- and second-order derivatives of the

This analysis, indeed, accounts for the major traits of the empirical data is illustrated by the simulations presented in Appendix A.

The physics of “object colors”

Object colors are due to radiant spectra that largely result from the scattering of radiation—here to be taken as average daylight say—by solids. Generic examples are colored papers, fabrics, human skin, soil, and rocks, … There are various processes that may play a role.

An important process is the radiative transport in layered turbid media. A well-known, approximate model is the Kubelka–Munk theory of turbid layers (Kubelka & Munk, 1931). 12 It is an approximate treatment of the radiative transport in layered turbid media that is very successful in applications and widely used in the paint, paper, and so forth industry. We introduce it here as a heuristic aid.

The key expression of the Kubelka–Munk analysis is

The function

From a global perspective, the structure of the Kubelka–Munk result is that the nonlinear part of the theory is packaged in the left side of Equation (4), whereas the right side of this equation describes fundamental physical causes—responsible for the spectral articulation—which are dominated by linear processes. We use these observations as a heuristic.

In ecological optics, one really does not have explicit theories; rather, a possibly large number of mutually different processes is likely to play some role. There is a need to capture this in a general, overall way. Here, one may take a lead from the formal structure of the Kubelka–Munk equation (though not necessarily the explicit Kubelka–Munk theory itself). That is what will be attempted here.

The scattering and absorption cross-sections are nonnegative physical quantities for which there exists no preferred absolute scale. Thus their noninformative Jeffreys’ prior distribution (Jaynes, 1968; Jeffreys, 1939, 1946) is hyperbolic, that is uniform on the logarithmic scale. Moreover, the quantities K and S are mutually uncorrelated. Thus, the parameter ξ (the ratio K/S) also has the hyperbolic prior.

The physical parameters combine multiplicatively, rather than additively, so a logarithmic representation is a natural one for the statistics.

A convenient way to capture this is to define a transformation Ω from the full real line

Note that the cases

In practice, one transforms observations on the unit interval to the “physical domain” (the full real line), does some calculations, and transforms back. It is an instance of the so-called homomorphic filtering (Oppenheim, Schafer, & Stockham, 1968), where the observations and calculations take place in distinct, appropriate domains. In our case, we collect data in the observation domain and study its statistics in the physical domain; in other applications, one generates artificial data in the physical domain and studies it in the observation domain. Examples follow below. It is a way to avoid nonlinear unpleasantness cheaply.

The physics of the imaging process

In the case of imaging, one may use a formally very similar phenomenological model (Barrett & Myers, 2003).

13

Here, the radiances in the scene are mapped on the unit interval for each of the color channels. When log radiance is mapped with the function Ω, the parameters are usually termed “exposure” (the location) and “contrast” (the width). Such a mapping is usually followed with a “gamma transformation” (Poynton, 2003), for example,

Although perhaps surprising at first blush, it makes intuitive sense that RGB photographs should retain the signature of the articulation of the radiative power spectral envelope, at least in some coarse fashion. If it was not the case, the images would not be acceptable to generic viewers. A formal calibration is not required, but typically one should be able to judge the distinction between red and green image details from the relative magnitudes of the RGB channels.

Of course, there are a variety of other factors that might put the value of potential “data” in jeopardy. The transformations considered above also handle the spatial nonuniformity, such as the focal plane illumination fall-off of generic cameras. The major remaining source of worry is probably transverse chromatic aberration. Fortunately, it is not too prominent (at least after correction by the in-camera firmware) in most contemporary camera models. It is unlikely to have an important effect on the statistics anyway, since it occurs at linear features, whereas the bulk statistics derives from areas.

A Phenomenological Ansatz

In the present application to the colors of the sublunar, the data are the color channels of images obtained by some familiar process (CCD or CMOS camera using RGB Bayer pattern say) and distorted for visual display (the Internet say). There are no radiometric calibrations. It is a very roundabout and most likely distorting way to observe physical parameters in the scene. Only by considering relations between relations one can expect to zoom in to relevant structure, absolute values cannot be expected to be informative.

Suppose the “true” radiometric signals in some specific case were {r, g, b}. Let the display distortion apply different magnifications

More generally, monotonic transformations due to a variety of physical factors are unlikely to have much effect. This is perhaps intuitively reasonable given the fact that at least rank order correlations are not sensitive to such factors at all.

The physics may be statistically modeled by a normal distribution on the logarithmic scale, characterized by location and width, for some physical parameter ϱ (say) in analogy to the ξ parameter of the Kubelka–Munk theory. A highly schematic model of the generalized physics might be a sigmoid function Ω, mapping the Example of histograms in the observation domain due to normal distributions of various means and variances in the physical domain. Note that these are far from normal in the observation domain.

Let the Data Speak

Even a medium-sized image

14

contains many pixels, for instance a 512 × 512 image contains more than a quarter million pixels (

Typical images today range from about 32 × 32 (“icon”, 1 kp) to 4096 × 4097 (consumer digital camera, 16Mp). For a typical field of view of

As an example of single image statistics, we proceed with the image of pebbles (Figure 1). It is a medium-sized image, it measures 2736 × 1824 pixels (thus about 5 Mp). The image is JPEG compressed, thus contains numerous artifacts on the local spatial scale. The overall mean RGB pixel value is {50, 46, 45}, 15 thus somewhat skewed towards the red, but approximately a median gray, as expected. 16 We already reported the covariance of the raw {r, g, b} values.

As a first operation, the RGB channels are transformed to

It has a very similar structure as found for the raw values (Equation (1)). What has changed are the distributions. The raw {r, g, b} values have histograms that may vary a lot, whereas the transformed values are close to being normally distributed. The transformation

The various covariance matrices thus have pretty much the form expected from first principles. Thus, already from a single image, the major aspects of the sublunar color gamut are apparent. Note that the scene contains mainly diffusely scattering solids, no sources or metallic reflectors and so forth.

For this image

An important gain of this transformation is that the

Of course, this is just a very small sample. Because a small sample, it is perhaps in danger of being atypical. For larger databases, the idiosyncratic nature of singular images tends to be drowned in the crowd.

More extensive statistics is available from a variety of databases in the public domain. The landscapes database from Torralba and Oliva at MIT (Torralba & Oliva, 2002) is an example (Figure 6). It is an interesting case because it also allows a distinction between what is intended as “sublunar” here and what might be termed “aerial,” or “atmospheric.” The database contains 410 “open country” images in total. All are 256 × 256 pixels, 8 bit per RGB channel. The majority has a strip of sky on top and a strip of foreground at bottom (see Figure 7 left). In the analysis, the “top” was defined as the upper 64 rows of the image pixel array and the “bottom” as the lower 64 rows of the image pixel array. Although obviously not exact, this certainly serves to split the data in a group that is predominantly sky, or atmospheric and a group that is predominantly “sublunar” in the intended sense of this article. This reduces the volume of the top and bottom sets to about 6.7 Mp samples each.

A mosaic composed of a subsample of the “open landscape” set of the MIT database. Local mean (left) and local samples (right) for the open landscape database.

Indeed, simply averaging over all images in the database yields a “generic landscape” image that is brownish below and bluish on top. Many of the images include blue sky (Strutt, 1899). It is the kind of priming, which a landscape painter might use in preparation of a painting. The human visual system is also tuned to this type of color banding (Koenderink, van Doorn, Albertazzi, & Wagemans, 2015).

Of course, the averaging removes all local variety. The nature and extent of this variety is retained in sampled images (see Figure 7 right). Each instance of such a sampled image is different, because pixel values are randomly sampled over the whole database, the only invariant being location in the pixel plane.

The effect of the air–light (Koschmieder, 1924) is visible in the average image, both in the ground plane and in the sky. The colors of the distant ground plane and the low sky become very similar at the horizon (Middleton, 1952). Apparently, such facts of ecological physics are quite robust in the sense that they survive noncalibration and likely maltreatment of image processing. Large data speak so loudly that these problems are overcome in the statistics.

The average RGB levels of the top part is {50, 63, 73}, that of the bottom part is {39, 40, 28}. Thus, the bottom part indeed looks brownish on the average, the top part bluish. This is also evident from the RGB histograms (Figure 8). Note that the histograms are far from normal, as could hardly be expected otherwise.

Histograms for the open landscape database. Top row for the observation domain and bottom row for the physical domain (black: Λ, blue: Θ, and red: Ξ). The left column relates to the lower (earth) part and the right column to the upper (aerial) part of the images.

A transformation to physical space makes the histograms, although somewhat skew, appear much more normal. Of course, the precise form depends somewhat on the choice of the sigmoid transfer function. The

The differences between the sky and earth parts of the open landscape images are well captured by the means and standard deviations of the

Such parcellated structure as in the open country database is quite typical for focused databases. As an example, the global mean of the Leeds butterfly database (762 images after removal of the images of pinned insects from museum collections) clearly reveals a “generic butterfly” (Figure 9). Such material is evidently unsuited to the present purpose. The same goes for images that depict various mutually very different items. An example is the parrots image (Figure 10). Not surprisingly, the The Leeds butterflies database. At left some samples, at right the overall mean. Parrots image with its histograms in the physical domain (colors as in Figure 8).

Here, we show some examples aimed at various types of terrain, some based on fairly large, representative images, other on databases focused on particular topics. For more information on the databases, see Appendix B.

An image like the desert soil image (Figure 11) is obviously quite homogeneous. It is a fairly large image (3264 × 2448 pixels), yielding a data volume of 8 Mp. The structure is entirely standard, with Desert soil image with its histograms in the physical domain (colors as in Figure 8).

Databases tend to be less overall uniform, though this need not be much of a problem if the fraction of “outliers” is small. As an example, consider the forest database (Figures 12 and 13). Here, two distinct types of nonuniformity occur.

Samples from the forest data base. It is very inhomogeneous. Local overall mean and local samples from the forest database.

First, there are outliers such as autumn foliage. Since these are true outliers, they are not problematic due to sheer numbers.

Second, there is a systematic trend for blue sky intruding on the top part. Here, large numbers do not help as can be seen from the global average. As a result the

There are evidently detectable differences in the available databases, although the overall structure is quite invariant. This can be judged in the larger databases by sampling random subsets. One finds that the statistical estimates for samples of say a hundred images (that is still good for millions of pixels) are very stable and well determined. Since any sample from one of the databases yields pretty much the same results, the databases have a unique signature, despite their global similarity. This suggests that the description might have some merit as a descriptor of the “gist” (Oliva & Torralba, 2006)—in colorimetric respects—of a database.

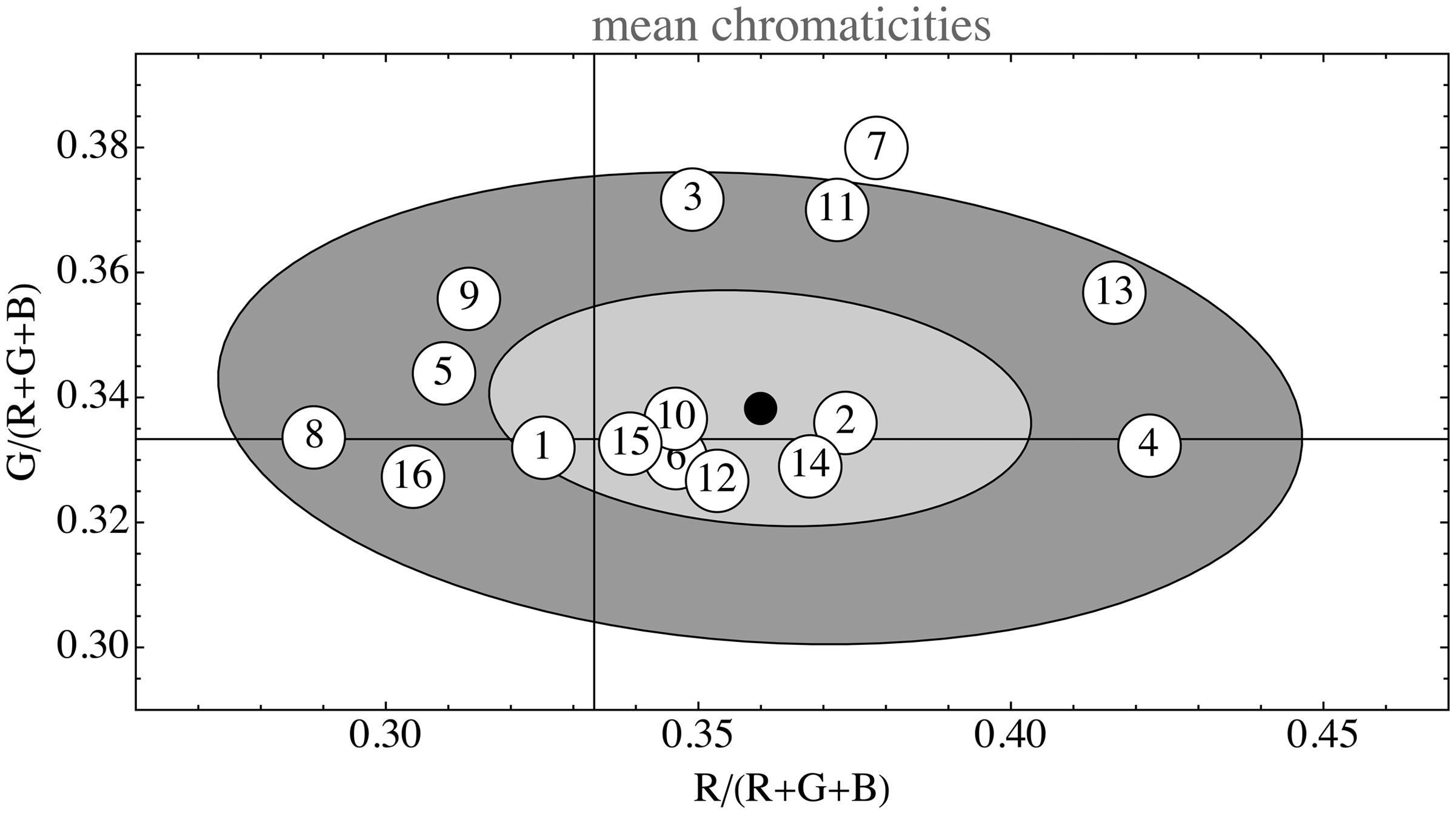

As to be expected, the images one encounters are almost invariably normalized so as to be overall medium gray with maximum contrast. The overall RGB means scatter all about the achromatic point in a chromaticity diagram (Figure 14). A measure of the monochrome contrast is the standard deviation in Λ. Empirically it varies over the range 0.65–1.38 (quartiles The overall RGB mean. The horizontal and vertical guidelines denote the one-third values, thus their intersection marks the point R = G = B. The ellipses show the one and two standard deviations boundaries. The indices refer to the list of data sources (see Appendix B). In total, this figure is based on

Meaningful measures are necessarily modulo Λ. For the examples analyzed in this article, the characteristic number

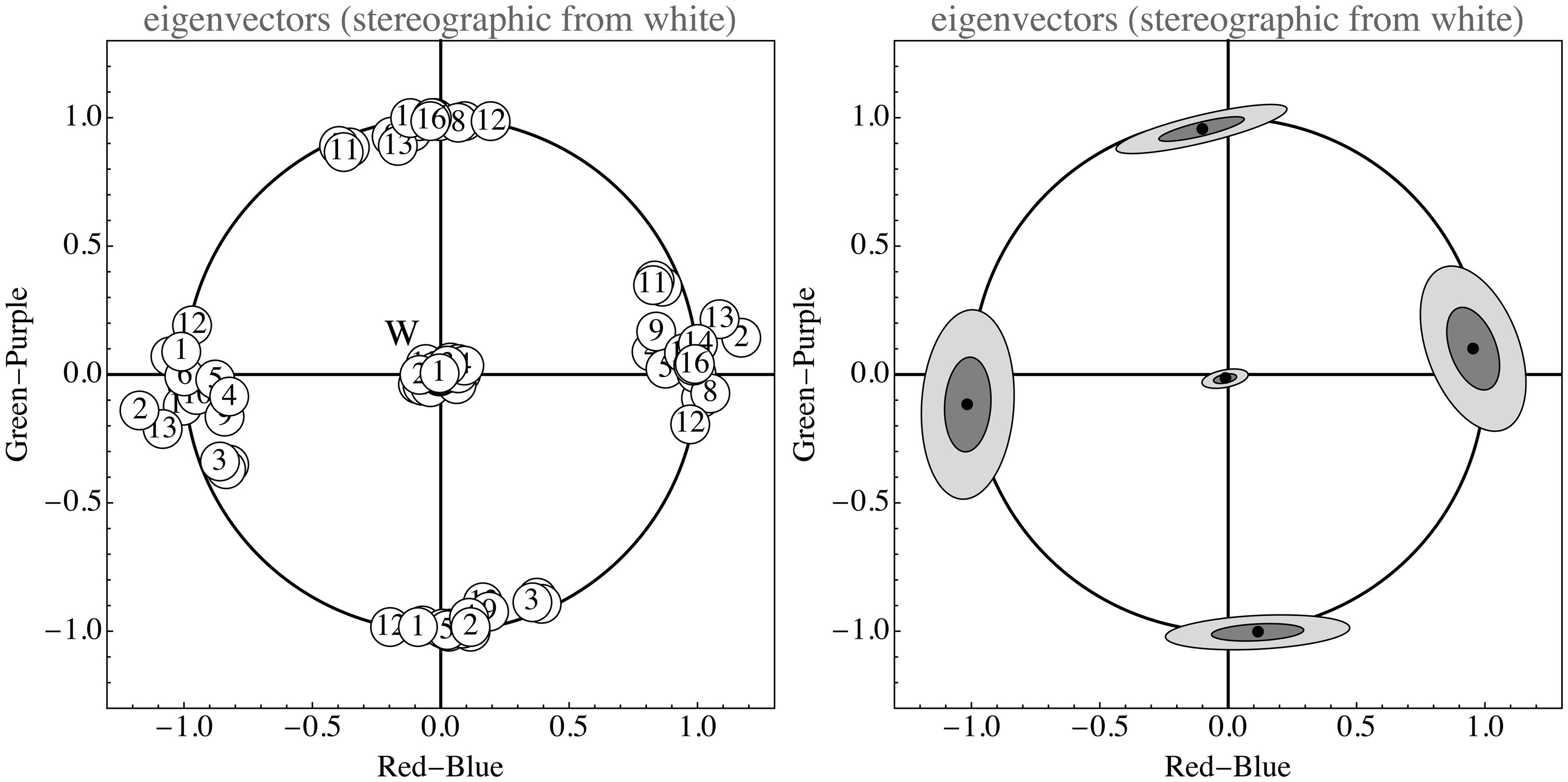

As can be seen in Figure 15, the opponent channel frame indeed fits almost universally. In this figure, the eigendirections of the Opponent color frame. These are stereographic projections of the sphere of eigendirections from the point

For the data in Figures 14 and 15 (see Appendix B), we used a set of 5 large single images and 11 databases, some very large. The collection is very heterogeneous, for instance, the landscapes were not segmented into foreground and sky, the flowers and butterfly databases were used as is and so forth. It is interesting to see how the structure of all these sets is rather similar although very different from the apparently obvious default assumption (mutually independent, uniformly distributed RGB channels).

For large samples, the pixel RGB data are largely captured by four parameters, describing the level variability of the spectral articulation as described by Θ and Ξ. For smaller samples, one encounters deviations from normality in the distributions of Θ and Ξ, sometimes finding bimodality, more typically heavy tails instead of normality. The Θ and Ξ distributions capture the spectral articulation, which will naturally vary from sample to sample when the sample size is small.

The standard deviation in Θ varied over the range 0.21–0.76 (quartiles

The standard deviation in Ξ varied over the range 0.05–0.29 (quartiles

There is a high correlation ( Correlation plot of the natural logarithms of the variances of the parameters Θ and Ξ for the same databases as mined in the previous two figure. The regression line has slope close to unity, indicating a linear dependence. The indices refer to the list of data sources (see Appendix B). In total, this figure is based on

Although perhaps understood in retrospect (such a dependence is also predicted by Equation (3)), this is evidently a remarkable finding. Most of the variance is in the red–blue, rather than the green–purple. This is due to the autocorrelation length of the articulation spectrum. This general structure is easily reproduced through very simple statistical models that capture the major facts of the ecological optics (see Appendix A).

Algorithmic Generation of Sublunar Color Gamuts

An obvious method to obtain random instances of a color gamut defined by some database is to simply randomly sample from the database. No generic algorithm needed! However, this involves sampling randomly from hundreds, perhaps thousands of images and randomly sampling pixels from these.

This may well be a viable method if the data source is a single, large image. However, in most cases, an algorithmic synthesis is the only practical way to proceed. It may well be the preferred way too, since it enables the possibility to automatically skip the unavoidable effects of saturation and subthreshold samples. 17

Since the structure of the sublunar color gamut is well determined and quite simple, it is easy to construct a random generator that will yield as many samples as desired for most purposes. All that is needed is to generate artificial

It is perhaps most natural to generate the values in the physical domain. Then there are six free parameters, namely, the location and widths of the physical values of the three channels. Thus, the algorithm becomes two tiered. In the first step, one generates random deviates

This may yield apparently very different RGB histograms. Starting values for the parameters may be obtained from the analyses of examples.

In most cases, this will almost perfectly simulate samples from the actual image or database (Figure 17 left for the pebbles image). Exceptions are cases of very inhomogeneous data sources (Figure 17 right for the parrots image). However, even in these cases, the results may well be acceptable for many purposes.

The large square is filled with simulated color samples, whereas the central square inset is filled with actual database samples. The inset has been outlined at right, because in this case (the pebbles image) the simulated gamut cannot be discriminated from the true one. The case of the parrots image (left) is expected to be about “worst case” and indeed, the inset square can be discriminated even without the outline.

Note that the functions Ω,

Automatic digital cameras are designed to set

Thus, the most informative data is in the three parameters

In applications, one would estimate the parameters from a fiducial set of images, like done in the previous section. It is even a reasonable proposition to estimate parameters from a single, large image. A simple application might be to find a generator for typical terrain colors for use in military camouflage. All that is needed is to provide representative images. In Figure 18, three instances are shown. All conform closely to the assumptions (prairie image Photographs of the prairie, the Arizona desert and the black moor with insets filled with artificially generated samples based on the statistical analysis of the images.

In cases, an “alien” effect is aimed at (like in SF movies), parameters can be assigned more freely, or indeed almost arbitrarily. A simple example is to set —almost all colors are far away from the achromatic axes; —there is an overdose of saturated greens and purples. Two random gamuts, obtained with different parameter settings. At left a gamut that might well belong to the sublunar realm and at right a clearly “alien” gamut. At left, 10 random spectra from the model. The parameter τ taken equal to the bin width. The

Thus, the algorithm offers a very wide range of readily parameterized color gamuts, which renders it useful for vision research.

The algorithm is sufficiently simple that an interactive developing environment is not hard to implement, allowing a designer to arrive at desirable

Discussion

We discuss three major topics, the ecological optics of the sublunar realm, the consequences of the generic structure of the

Ecological Optics of the Sublunar

Sublunar color gamuts have a simple structure that is invariant over mutually very different domains. This is the case because they all derive from a few generic properties of ecological physics. The main facts of relevance are the narrowness of the visual window and the extent of the

Taking account of the mapping of essentially linear physical interaction domains to the observation domain greatly simplifies the descriptions. The six parameters

The constant

They will merely make the environment appear a little lighter or darker and will most likely contribute a trend to normality in all channels. Finally, outliers on smallish spatial scales are generally due to flowers, butterflies, some minerals, and on a slightly broader scale human artifacts like paints and so forth. Such outliers are unlikely to be of much consequence, due to their relative scarcity.

Depending on one’s aims, it may be of interest to refine the statistics. Obvious targets are the deviations from normality of the

Our results are in accordance with Attewell and Baddeley (2007) who measured full spectral reflectance functions in the field. However, these authors remain in the reflectance domain and do not consider spectral correlations.

Full (high-resolution) spectral imaging (Ruderman, Cronin, & Chiao, 1998) also yields results close to these found here. Their estimation of the precise opponent directions is similar to ours. Apparently true hyperspectral imaging (a major chore) does not yield much beyond mere RGB crowd sourcing. This is only to be expected.

Articulations of the SA Spectrum and the Human Visual Sense

As we have shown, the opponent directions as phenomenologically identified by Hering (1920), turn out to derive from ecological physics. Their dominant appearance in the ecological optics is due to the nature of the spectral articulations. The structure of the

Thus, Hering’s opponent colors, identified from a phenomenological analysis, may well have resulted from an evolutional drive toward the informationally desirable decorrelation of sensor channels.

In view of the empirical value of Ψ, it appears a good design objective to limit the biological spectral resolution to a mere two or three degrees of freedom, as indeed resulted from evolutionary pressure. Because the correlation length of the

Thus, both trichromacy and opponency appear as adaptations to the ecological optics of the sublunar realm.

That opponent channels serve to effectively decorrelate the spectrally related optics nerve activity was already suggested by Buchsbaum and Gottschalk (1983). However, these authors effectively find the principal components of the color matching functions, not the spectral covariance. Thus, they implicitly treat the spectrum as white noise and the correlation structure as due to the mutual overlap of the color matching functions. This is categorically different from our perspective. However, from a biological perspective, the color matching functions are evolution’s answer to the spectral correlation, so the similarity of results is perhaps not a miracle, though certainly far from trivial.

Technology arrives at similar insights by a process of successive improvements driven by practical constraints. That the RGB channels tend to be highly correlated was already used in the 1953 (second) NTSC standard for analog TV. The luminance–chrominance encoding was already invented in 1938 by Georges Valensi.

18

The FCC version of the NTSC standard uses an intensity signal

Applications in Computer Graphics and Image Processing

Random gamut generators are likely to find applications in computer graphics, where it is often desirable (for instance in synthesizing various landscapes) to generate large numbers of instances of colors belonging to a restricted setting in an intuitively parameterizable way. Of course, such reflectance factors can be combined with various spectral illuminants to transform the gamut, say from a noon to a later afternoon setting.

Such color generators may also find application in interior design, textiles design, and so forth. They yield color gamuts that can be made to perfectly fit any well-defined environment in a simple, principled manner.

Although this exercise in capturing the “color gamuts of the sublunar” is possibly useful, there remain—of course—numerous loose ends. Some are due to the extreme generalizations that had to be made. As a consequence, numerous important effects of ecological optics were fully ignored. Perhaps most blatantly, no account was taken of the effects of geometry, obviously of major importance to the irradiation of the scattering surfaces and thus to the radiance scattered to the camera or eye. Such issues become relevant in applications of machine vision and image processing. Examples include image segmentation (Comaniciu & Meer, 1997) and recognition on the basis of color gamuts (Gevers & Smeulders, 1999). Here, more intricate statistical analysis, as mentioned above, may well turn out to be useful.

Conclusions

So what are the gamuts of the sublunar like? In view of the correlations shown in Figure 16 and perhaps surprisingly, a rather specific answer is possible. First of all, they are quite gray, touches of hues being special—thus biologically important. The variations are dominated by monochrome contrast. The major chromatic variations are in the range from orange to greenish–blue, or—as painters have it—“warm” to “cool.” Minor variations are in the range green to dark purple, what painters sometimes denote as “moist” to “dry” (Benson, 2000).

The big picture is evidently dominantly GRAY contrast with some red–blue and even fewer green–purple variations. This is largely due to basic physics (especially clear in Figure 21 of Appendix B) and constraints of human physiology which—by way of evolution—most likely have been shaped by the ecological structure itself.

Left: A thousand random RGB samples from the model. The parameter τ taken equal to the bin width. Right: A thousand random RGB samples from the model in the case of zero shift and large scaling. The spectra are approximately degenerated to random telegraph waves. Note that the random RGB colors accumulate on six of the edges of the cube, the other edges remaining unpopulated. These colors are Goethe’s edge colors (G. Kantenfarben).

Footnotes

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: The work was supported by the DFG Collaborative Research Center SFB TRR 135 headed by Karl Gegenfurtner (Justus-Liebig Universität Giessen, Germany) and by the program by the Flemish Government (METH/14/02), awarded to Johan Wagemans. Jan Koenderink was supported by the Alexander von Humboldt Foundation.

Notes

Author Biographies

Appendix A

Appendix B

References

Supplementary Material

Please find the following supplemental material available below.

For Open Access articles published under a Creative Commons License, all supplemental material carries the same license as the article it is associated with.

For non-Open Access articles published, all supplemental material carries a non-exclusive license, and permission requests for re-use of supplemental material or any part of supplemental material shall be sent directly to the copyright owner as specified in the copyright notice associated with the article.