Abstract

Texts on visual perception typically begin with the following premise: Vision is an ill-posed problem, and perception is underdetermined by the available information. If this were really the case, however, it is hard to see how vision could ever get off the ground. James Gibson’s signal contribution was his hypothesis that

Keywords

Introduction: Perception as an Ill-Posed Problem

Pick up almost any text on visual perception and you will find the foundational premise that vision is an ill-posed problem. This line of reasoning should be familiar to most readers of this journal. The modern version runs something like this: The problem of visual perception is an

The trouble with accepting the premise as the starting point for vision science is that it dooms the enterprise before we even get started. If the input for vision were as fundamentally ambiguous as claimed, it is hard to see how a visual system could ever get off the ground. The premise turns the problem of perception into the problem of prior knowledge: how did the perceptual system acquire, in some extrasensory manner, the knowledge that is not only a prerequisite for seeing but also a prerequisite for vision to evolve? As Palmer (1999, p. 23) observed, were it not for the fact that our visual systems somehow manage to solve the problem, it would be tempting to conclude that three-dimensional perception is simply impossible.

Gibson’s Information Hypothesis

James Gibson did not believe this is the way our visual systems solve the problem. As he put it 40 years ago, “Knowledge of the world cannot be explained by supposing that knowledge of the world already exists” (Gibson, 1979, p. 253). The standard line of reasoning is patently circular. Even though it might be logically correct, it is the wrong way to frame the problem of perception: The function of vision is not to solve the inverse problem and reconstruct a veridical description of the physical world (Warren, 2012).

Gibson’s fundamental contribution to the field was his project of

At the core of Gibson’s theory is his concept of information, refined over the course of three decades. He reserved the term for

Patterns of stimulation are

Information Is Where You Find It

I stole my title from an article by David Dusenbery (1996), who wrote the founding text of the field of sensory ecology (Dusenbery, 1992). In his 1996 paper, he describes how root-knot nematodes exploit thermotaxis to converge on the depth of the root layer, in spite of circadian fluctuations in soil temperature. His take-away: All organisms extract useful information in their environment, sometimes from surprisingly complex stimulus patterns. (p. 121)

Electrolocation in Weakly Electric Fish

One energy array that has been hijacked as a medium of information is the electric field. Weakly electric fish, which evolved independently in Africa and South America, emit electric organ discharges (EOD) not to stun their prey but to sense their surroundings via

Sketch of electrolocation in the weakly electric fish,

An object within a mormyrid’s field casts an electric “shadow” on its skin, modulating the voltage amplitude with a Mexican-hat profile along the fish’s body (Figure 1B). Objects that are more conductive than water (larvae, plants, and metal) concentrate the current flow (Figure 1A), increasing the amplitude of the profile, whereas resistive objects (rock, clay, dead wood, and plastic) reduce its density, forming an inverted Mexican hat. The location of the shadow’s peak amplitude (max or min) on the skin specifies the bearing direction of the object. So far, so good. The trouble is that the diameter of the shadow increases with both object distance and object size. To make matters worse, the shadow’s peak amplitude decreases with object distance, increases with object size, and varies with its material composition. Electrolocation appears to be—you guessed it—just another ill-posed problem (Lewicki et al., 2014).

But, the Gibsonian persists,

The authors noticed an interesting exception, however: The slope/amplitude ratio of a perfect metal sphere is shallower than that of other objects, indicating that the sphere is farther away than it actually is. Thus, a metal sphere at 3 cm is a metamer of a metal or plastic cube at 4.5 cm. And indeed, when a metal sphere was compared with metal or plastic cubes of varying sizes, the fishometric distance function shifted by the predicted 1.5 cm. Fortunately for

Building on this distance invariant, higher order information for other object properties can also be derived (von der Emde, 2006). Take object size. The diameter of the electric shadow increases with the size of an object, controlling for its distance. It follows that object size (

As mentioned earlier, important families of objects can be identified by their electrical properties. Different materials vary in their

Finally, mormyrids also possess a surprising ability to recognize three-dimensional shape by electrolocation, despite variation in object size, pose, and material (von der Emde, 2004; von der Emde et al., 2010; von der Emde & Fetz, 2007). The results suggest that shape recognition may be based on the configuration of object parts and might even exploit temporal deformations of the field in an electric version of shape-from-motion.

In sum, a host of object properties are uniquely specified by higher order ratios of four variables: the peak amplitude, maximum slope, diameter of the electric shadow, and the distortion of the EOD waveform. These variables are informative by virtue of the laws of electrodynamics in an aquatic niche, including the resistance and capacitance of meaningful classes of objects. Upon investigation, electrolocation turns out to be an ecologically well-posed problem.
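A toy model shows how a ratio of image variables can cancel a nuisance factor. This sketch is hypothetical and does not use von der Emde's measured electric images. It assumes that the shadow is a difference-of-Gaussians “Mexican hat.” Its amplitude, including its sign for conductive versus resistive objects, carries object size and material, while its spread grows with distance. The text notes that shadow diameter also grows with object size, so the model only illustrates why a slope/amplitude ratio can be blind to amplitude. It does not reproduce the fish's actual distance function. The function names and the surround weight of 0.3 are assumptions.

```python
import math

def mexican_hat_profile(x, amplitude, width):
    """Toy electric image at skin position x (difference of Gaussians).

    Hypothetical model: `amplitude` (sign and size) stands in for object size
    and material; `width` (the profile's spread) stands in for object distance.
    """
    center = math.exp(-(x / width) ** 2)
    surround = 0.3 * math.exp(-(x / (2.0 * width)) ** 2)
    return amplitude * (center - surround)

def slope_amplitude_ratio(amplitude, width, half_range=20.0, step=0.01):
    """Maximum absolute slope divided by maximum absolute amplitude of the profile."""
    n = int(round(2 * half_range / step)) + 1
    xs = [-half_range + i * step for i in range(n)]
    ys = [mexican_hat_profile(x, amplitude, width) for x in xs]
    peak = max(abs(y) for y in ys)
    max_slope = max(abs(ys[i + 1] - ys[i]) / step for i in range(n - 1))
    return max_slope / peak

# Same spread, very different amplitudes (e.g., conductive vs. resistive object):
print(slope_amplitude_ratio(1.0, 2.0), slope_amplitude_ratio(-3.0, 2.0))
# A larger spread (a more distant object, in this toy model) gives a shallower ratio:
print(slope_amplitude_ratio(1.0, 4.0))
```

In this model the profile is amplitude times a fixed shape. The amplitude therefore divides out of the ratio, which then depends only on the spread.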

The Narwal’s Tusk

What is the function of the narwal’s tusk? The question has been a matter of scientific speculation for over 500 years, with hypotheses ranging from a weapon or a spear (despite the problem of consuming impaled prey), to a rudder, a spade, or an ice pick (Nweeia et al., 2009). More plausibly, it might be a secondary sex characteristic that plays a role in sexual selection or social dominance, akin to the stag’s rack. But recent research indicates that it’s a sense organ!

The male narwal’s tusk is a single erupted canine tooth, which forms a sinistral helix 2 to 3 m in length. A protective outer layer or

The narwal’s tusk contains dentin tubules that sense osmotic gradients (modified from Nweeia et al., 2014, with permission).

Now consider the narwal’s ecological niche. The narwal is an arctic whale that hunts halibut in complete darkness using click echolocation, deep beneath the winter pack ice. It can dive to depths of 1,500 m for up to 25 minutes, reaching pressures greater than 150 atmospheres (Laidre et al., 2003). But at its wintering grounds, ice covers 90% or more of the water surface (Heide-Jørgensen & Dietz, 1995). Because the narwal is a mammal that must surface regularly to breathe, there is strong selective pressure to avoid getting trapped under rapidly forming and shifting sea ice.

When surface water freezes, the salinity of the water below the ice increases (National Snow and Ice Data Center, 2020). Thus, a narwal swimming up to surface ice encounters a salinity gradient in space (along the tusk) and time (as it moves up the gradient). A higher concentration of sodium and chloride ions in the seawater generates an outward osmotic flow in the dentin tubules, stimulating the odontoblasts (Figure 2, bottom); conversely, a lower concentration generates an inward osmotic flow (Nweeia et al., 2014).

To investigate this hypothesis, Nweeia et al. (2014) alternately injected salt and fresh water into a tube surrounding the tusks of six male narwals while recording an electrocardiogram. A higher salinity evoked a significant 15% increase in heart rate. This result is consistent with the hypothesis that salinity differentials provide effective information for an imminent threat.

Within the narwal’s arctic niche, salinity gradients thus specify a very relevant property: the penetrability of the surface. The laws of chemistry, together with the niche’s regularities, grant salinity the status of information for the

In sum, information is where you find it—in ecological niches. Research inspired by Gibson’s information hypothesis has found that this is also true for vision. Let us press on.

Optic Flow: Steering Through Gaps

A goshawk flying through its dense woodland habitat deftly avoids trees and brush, threading its way through narrow gaps, all at breakneck speed. Video from a hawk head-cam during such maneuvers is astounding (BBC, 2009, min 1:43). Humans do this too, of course, albeit at lower speeds, any time we walk through a cluttered room or down a busy sidewalk. What information is used to guide locomotion, avoid obstacles, and steer through apertures or gaps? Gibson’s proposed solution was

Gibson discovered the optic flow pattern during World War II, when he was leading research on pilot testing and training for the U.S. Army Air Force (Gibson, 1947).

By mounting a camera in the nose of an airplane during glide landings and projecting the images on a screen, he and his colleagues traced out the radial pattern of velocity vectors that came to represent the optic flow field (Figure 3A). In particular, they found that the focus of expansion in the velocity field corresponded to the current direction of travel or

Optic flow represented as a velocity field. A: Radial pattern of outflow during a landing glide, from the pilot’s viewpoint. The focus of expansion corresponds to the airplane’s current heading direction. B: Velocity field from a third-person viewpoint, projected onto a sphere about the observer.
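When the observer only translates, locating the focus of expansion in a velocity field like Figure 3 is a small least-squares problem. This sketch assumes a pinhole eye with focal length 1, no eye or body rotation, and noise-free vectors. The function names and the synthetic scene are hypothetical. Each flow vector defines a line through its image point, and the estimate is the point closest to all such lines.

```python
def translational_flow(points, depths, t):
    """Image velocities (u, v) for pure observer translation t = (tx, ty, tz).

    Pinhole eye with focal length 1; (x, y) are image coordinates, z is depth.
    """
    tx, ty, tz = t
    return [((x * tz - tx) / z, (y * tz - ty) / z)
            for (x, y), z in zip(points, depths)]

def estimate_foe(points, flow):
    """Least-squares focus of expansion: the point all flow lines radiate from.

    A vector (u, v) at (x, y) is collinear with (x - fx, y - fy), so
    v * fx - u * fy = x * v - y * u. Stack one such row per vector and
    solve the 2x2 normal equations by Cramer's rule.
    """
    a11 = a12 = a22 = b1 = b2 = 0.0
    for (x, y), (u, v) in zip(points, flow):
        r1, r2, rhs = v, -u, x * v - y * u
        a11 += r1 * r1
        a12 += r1 * r2
        a22 += r2 * r2
        b1 += r1 * rhs
        b2 += r2 * rhs
    det = a11 * a22 - a12 * a12
    return ((a22 * b1 - a12 * b2) / det, (a11 * b2 - a12 * b1) / det)

# Hypothetical scene: five image points at different depths, off-axis heading.
pts = [(-0.5, -0.5), (0.5, -0.5), (-0.5, 0.5), (0.5, 0.5), (0.0, 0.3)]
zs = [4.0, 8.0, 5.0, 10.0, 6.0]
foe = estimate_foe(pts, translational_flow(pts, zs, (0.2, -0.1, 1.0)))
print(foe)  # close to (0.2, -0.1), i.e., (tx/tz, ty/tz)
```

With noise or rotational flow, the lines no longer meet at a single point, and the residual of this fit grows.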

Gibson (1950, 1966) promptly criticized the velocity field as only a partial description of optic flow, for it failed to capture local deformations, texture accretion/deletion, and temporal derivatives such as acceleration. He defined optic flow more broadly in 1966:

What does this have to do with steering through gaps? One of Gibson’s most influential proposals is that optic flow is used to control locomotion. He initially suggested that steering is “a matter of

Srinivasan noticed that honeybees enter the hive by flying through the center of the entrance hole, leading him to suggest that they fly through gaps by balancing the rate of optic flow in the left and right eye. This is close to Gibson’s (1958, 1979) formula of symmetrically magnifying the local contour of the opening, but only in the horizontal dimension. Srini and his colleagues (Kirchner & Srinivasan, 1989; Srinivasan et al., 1991) tested the hypothesis by flying bees down a vertically striped corridor while manipulating the speed of the stripes on one wall. Just as predicted, the bees shifted away from the wall with faster motion to the balance point in the corridor where the left and right flow rates were equalized.
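The balance point in the corridor experiments follows from equating the two lateral flow rates. This minimal sketch rests on three assumptions:

- Forward speed is constant.
- Flow is sampled perpendicular to each wall.
- Stripe speed is measured along the flight direction and is negative when the stripes move against the bee.

The proportional steering rule in `simulate_centering` is a hypothetical controller for illustration. It is not the model used by Srinivasan and colleagues.

```python
def balance_position(corridor_width, flight_speed, left_wall_speed=0.0, right_wall_speed=0.0):
    """Distance from the left wall at which left and right flow rates are equal.

    A wall at perpendicular distance d whose stripes move at speed s in the
    flight direction produces an angular flow rate of (flight_speed - s) / d
    at 90 deg eccentricity. Assumes flight_speed exceeds both wall speeds.
    """
    rel_left = flight_speed - left_wall_speed
    rel_right = flight_speed - right_wall_speed
    return corridor_width * rel_left / (rel_left + rel_right)

def simulate_centering(corridor_width, flight_speed, left_wall_speed=0.0,
                       right_wall_speed=0.0, gain=0.01, steps=2000, x0=0.1):
    """Hypothetical proportional controller: drift away from the side with more flow."""
    x = x0
    for _ in range(steps):
        flow_left = (flight_speed - left_wall_speed) / x
        flow_right = (flight_speed - right_wall_speed) / (corridor_width - x)
        x += gain * (flow_left - flow_right)
    return x

# Stationary walls: the bee centers. Left stripes moving against the bee: it shifts right.
print(balance_position(1.0, 1.0), balance_position(1.0, 1.0, left_wall_speed=-1.0))
print(simulate_centering(1.0, 1.0, left_wall_speed=-1.0))
```

The controller settles at the same balance point the closed-form expression predicts. Holding only one wall's flow rate constant instead would give a wall-following rule.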

When Andrew Duchon and I (Duchon & Warren, 2002) asked people to walk down a virtual hallway on a treadmill and similarly manipulated the speed of motion on one wall, humans behaved just like honeybees, shifting to the predicted balance point in the hallway. We implemented the balance strategy in a mobile robot (Duchon & Warren, 1994; Duchon et al., 1998) and found that it makes an excellent obstacle-avoidance strategy: The robot veers away from obstacles because they generate a higher rate of optic flow in one hemifield. This is reminiscent of Gibson’s (1958) formula for steering around obstacles by using the skewed magnification of the obstacle’s contour.

Reality turns out to be more complex, of course. Subsequent work has revealed that honeybees can also follow one wall in a corridor, by holding its optical flow rate constant (Serres et al., 2008); the lateral positions of the bee and its goal determine whether the bee adopts a balancing or wall-following strategy. Because humans walk on the ground, we equalize not only the flow rates of the left and right walls in a hallway but also the splay angles of the left and right baseboards (Duchon & Warren, 2002) and the edges of a path (Beall & Loomis, 1996).

While these findings tell us how bees and humans travel down a corridor, they do not yet answer the question of steering through gaps. There are a number of alternative hypotheses (Warren, 1998). First, there is Gibson’s (1958, 1979) global flow hypothesis: shift the focus of outflow (or more generally, the heading specified by optic flow) onto the gap. Second, there is Gibson’s (1958) local expansion hypothesis: symmetrically magnify the contours of the gap (akin to balancing the left and right flow rates). Third, cancel the lateral motion of the gap, originally described by Llewellyn (1971, p. 246) as “target drift”. Fourth, steering might not be based on optic flow at all, but on positional information (Rushton et al., 1998): move in the egocentric direction of the gap, or center the gap at the midline and move forward. Such a strategy may be needed to walk toward a distant light in the dark, but optic flow might be useful under illumination.

We tested these hypotheses by using virtual reality to dissociate the optic flow pattern from the actual direction of walking (Warren et al., 2001). Participants viewed a virtual environment in a mobile head-mounted display, while we displaced the focus of expansion by

Walking to a gap in virtual reality, with displaced optic flow.

With only a single target in the dark, that is precisely what happened: Participants walked on a curved path to the target, as predicted by the positional hypothesis (Figure 5A). This also demonstrates that they did not cancel target drift, which predicts a straight path. However, as more textured surfaces were added in the display, the amount of optic flow increased, and the paths increasingly straightened out, as predicted by the flow hypothesis (Figure 5B to D). Paths were straightest when a forest of poles was present (Figure 5D), consistent with the use of differential motion: The differential motion field also forms a radial pattern, with the point of zero parallax in the heading direction.
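A minimal kinematic sketch reproduces the contrasting path predictions. It assumes, hypothetically, that a walker using positional information keeps its physical heading at a constant angle (the visual displacement) from the target's true bearing. This traces out a curved, spiral-like path. A zero offset yields the straight path predicted by the flow hypothesis. The 10° offset, 8 m target distance, and step size are illustrative values, not the experiment's parameters.

```python
import math

def walk_to_target(target, offset_deg, step=0.05, max_steps=10000, tol=0.1):
    """Step toward `target` while heading `offset_deg` away from its true bearing.

    Hypothetical model: offset 0 stands for heading straight at the target
    (the straight path of the flow hypothesis); a constant nonzero offset stands
    for following a displaced visual direction (the positional hypothesis).
    Returns the path as a list of (x, y) points in metres.
    """
    x, y = 0.0, 0.0
    path = [(x, y)]
    offset = math.radians(offset_deg)
    for _ in range(max_steps):
        dx, dy = target[0] - x, target[1] - y
        if math.hypot(dx, dy) < tol:
            break
        heading = math.atan2(dy, dx) + offset
        x += step * math.cos(heading)
        y += step * math.sin(heading)
        path.append((x, y))
    return path

def path_length(path):
    """Total distance walked along a polyline."""
    return sum(math.dist(p, q) for p, q in zip(path, path[1:]))

straight = walk_to_target((0.0, 8.0), 0.0)
curved = walk_to_target((0.0, 8.0), 10.0)
print(path_length(straight), path_length(curved))
print(max(abs(px) for px, _ in curved))  # peak sideways deviation of the curved path
```

Both walkers reach the target. Only the constant-offset walker bows sideways and travels farther.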

Virtual environment (left) and mean path (right, magenta ±

The results indicate that humans rely on both optic flow and positional information to steer to a goal or a gap, with the former dominating as more flow is added to the display. This is a control strategy that is robust to variation in viewing conditions. Naturally, the debate has continued, with some results favoring the positional hypothesis (J. M. Harris & Bonas, 2002; Rogers & Allison, 1999; Saunders & Durgin, 2011) and others the optic flow hypothesis (Bruggeman et al., 2007; M. G. Harris & Carre, 2001; Turano et al., 2005; Wood et al., 2000). Recent research has confirmed that the relative contribution of optic flow and positional variables depends on the available flow (Li & Niehorster, 2014; Saunders, 2014).

Gibson’s Affordance Hypothesis: Passable Gaps

Steering through a gap is not sufficient for successful behavior, however. To avoid bodily harm, the hawk, the human, and the bee must also be able to see whether the gap is large enough to fit through, that is, whether it is

To characterize an affordance, Gibson (1979, p. 127) said that environmental properties must be “measured

Applying this way of thinking to the humble gap, passability may be characterized by a dimensionless π-number,

To test the prediction that affordances are scale-invariant, the late Suzanne Whang and I (Warren & Whang, 1987, Exp. 1) asked large and small adults to “walk naturally” through gaps (apertures) of different widths. As the aperture got smaller, at some point participants began to rotate their shoulders and pass through sideways. This behavioral transition occurred at a narrower gap for small people and a wider gap for large people (Figure 6A), but when shoulder rotation was replotted as a function of the ratio of aperture width to shoulder width

Mean maximum shoulder rotation when walking through an aperture as a function of (A) aperture width and (B) the ratio of aperture width to shoulder width, for small and large participants (from Warren & Whang, 1987, with permission).
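The body-scaling argument amounts to a simple computation. Suppose the transition occurs at a fixed value of the dimensionless ratio A/S. Then the absolute aperture at which people start to turn scales with shoulder width. In this sketch the critical ratio is a free parameter. The value 1.3 and the shoulder widths are illustrative, and the function names are hypothetical.

```python
def pi_number(aperture_width, shoulder_width):
    """Dimensionless ratio of aperture width to shoulder width (A/S)."""
    return aperture_width / shoulder_width

def rotates_shoulders(aperture_width, shoulder_width, critical_ratio):
    """Predict shoulder rotation when A/S falls below a critical ratio (a free parameter)."""
    return pi_number(aperture_width, shoulder_width) < critical_ratio

def critical_aperture(shoulder_width, critical_ratio):
    """Absolute aperture width at which the transition is predicted for a given body."""
    return critical_ratio * shoulder_width

CRITICAL = 1.3  # illustrative value only; fit the actual critical ratio to data
for label, shoulders in [("small", 0.40), ("large", 0.49)]:  # hypothetical widths (m)
    print(label, critical_aperture(shoulders, CRITICAL),
          rotates_shoulders(0.6, shoulders, CRITICAL))
```

The same 0.6 m aperture is predicted to be walked through frontally by the smaller person but sideways by the larger one. In ratio units, both transitions fall at the same point.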

This brings us to what is perhaps Gibson’s (1979) most notorious claim—that affordances not only exist, but can be

Body-Scaled and Action-Scaled Information

Gibson (1979, p. 127) reasoned that, according to the Information Hypothesis, many environmental properties such as the layout and composition of surfaces are optically specified. If a behaviorally relevant complex of surface properties constitutes an affordance, then “to perceive them is to perceive what they afford.” Affordances are not merely combinations of neutral physical properties, however, for when considered in relation to an animal, the complex has “unity,” “value,” and “meaning” for behavior. He offered an example: A surface that is horizontal, flat, extended, rigid, and low, relative to the animal’s body size, weight, and leg length, might be specified by a higher order combination of optical variables. This “compound invariant” (p. 141) would thus specify the affordance of a walkable surface.

To test empirically whether humans can perceive passable gaps, Suzanne and I asked large and small participants to judge whether they could “walk straight through” gaps of different widths without turning their shoulders (Warren & Whang, 1987, Exp. 2). In the static monocular condition, the gap was viewed through a reduction screen at a distance of 5 m; in the moving binocular condition, it was viewed while walking from 7 m to 5 m. The results were the same in both conditions: The 50% threshold gap size was wider for large people than small people (Figure 7A), but when plotted as a function of the body-scaled ratio

Mean percentage of “impassable” judgments with static monocular viewing as a function of (A) aperture width and (B) the ratio of aperture width to shoulder width, for small and large participants (from Warren & Whang, 1987, with permission).

Such evidence indicates that affordances can be judged successfully. It might be objected that, while the environment may be perceived, affordances are surely inferred based on prior knowledge of one’s body plan and motor abilities. In contrast, Gibson claimed that affordances are perceived

Pursuing the example of the gap, what is the body-scaled information for passability? Somehow an optical variable that incorporates a “length” scale for body size must be found. In terrestrial animals, this is given by standing eye height. Sedgwick (1986, 2021; see also Gibson, 1979) showed that the height (

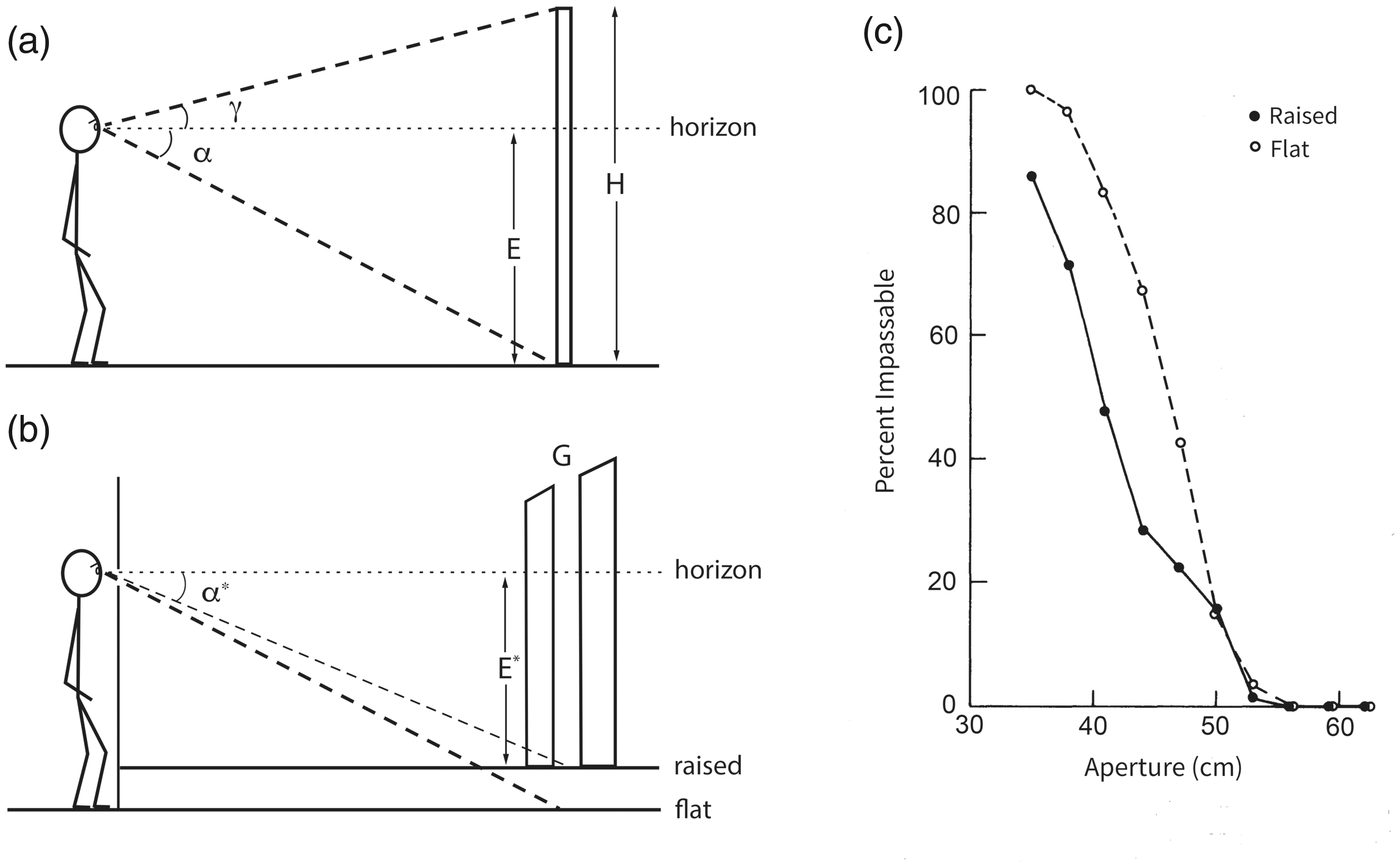

Eyeheight-scaled information for gap width. A: Definition of variables for the horizon ratio (Equation 3). B: Raising a false floor reduces the declination angle (

For a walking observer, eye height is also specified by the height of the focus of expansion on surrounding surfaces (Lee, 1980; Wu et al., 2005). Thus, consistent with our results, aperture width is specified as a ratio of eye height by static and dynamic information. Because shoulder width is about one-quarter of standing eye height, the perceptual boundary (

To test this body-scaled information, we manipulated the horizon ratio by raising a false floor behind the reduction screen (Figure 8B), unbeknownst to the observer (Warren & Whang, 1987, Exp. 3). This served to reduce the declination angle (

The horizon ratio thus provides effective body-scaled information for passable gaps. If one manipulates the visual information—in this case the effective eye height—the perception of other eye height-scaled affordances should also shift. But if one manipulates the action system—such as changing body width by wearing a backpack—the information may need to be recalibrated to the new action capabilities (Franchak, 2017; Mark, 1987; Mark et al., 1990; Pan et al., 2014; van Andel et al., 2017).
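The geometry of the horizon ratio and the false-floor manipulation can be checked numerically. This sketch assumes a pinhole eye with a horizontal line of sight and a gap whose edges rest on the visible floor. It uses a standard projective form, which may differ in notation from the article's Equation 3. Raising the floor under the gap shrinks the image distance from the gap's base to the horizon. The same physical gap then specifies more eye heights, so it would appear wider relative to the body. The eye height, gap size, viewing distance, and 0.25 m floor rise are hypothetical.

```python
def project_ground_gap(gap_width, distance, eye_height, focal_length=1.0):
    """Pinhole projection (horizontal line of sight) of a frontal gap resting on the floor.

    Returns (image width of the gap, image distance from the gap's base up to the horizon).
    """
    image_width = focal_length * gap_width / distance
    base_to_horizon = focal_length * eye_height / distance
    return image_width, base_to_horizon

def gap_in_eye_heights(image_width, base_to_horizon):
    """Horizon ratio: gap width in units of eye height (distance and focal length cancel)."""
    return image_width / base_to_horizon

EYE_HEIGHT = 1.6               # hypothetical standing eye height (m)
SHOULDER = EYE_HEIGHT / 4.0    # shoulder width ~ one-quarter of eye height, as in the text
GAP, DIST = 0.6, 5.0           # hypothetical gap width and viewing distance (m)

ratio = gap_in_eye_heights(*project_ground_gap(GAP, DIST, EYE_HEIGHT))
# Hypothetical false floor raised 0.25 m: the gap's base sits closer to the horizon.
ratio_raised = gap_in_eye_heights(*project_ground_gap(GAP, DIST, EYE_HEIGHT - 0.25))

# An observer who scales by standing eye height reads these as shoulder-width multiples:
print(ratio * EYE_HEIGHT / SHOULDER, ratio_raised * EYE_HEIGHT / SHOULDER)
```

In this sketch, the raised floor makes the same 0.6 m gap specify about 1.78 shoulder widths instead of 1.5.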

Note that eye height-scaled information only works in terrestrial niches where animals walk and obstacles rest on the ground plane. The horizon ratio is informative about gap size by virtue of the laws of optics and gravity. Other body-scaled information must be available in aerial niches, where goshawks, budgerigars, and bumblebees successfully navigate through narrow gaps (Ravi et al., 2019; Schiffner et al., 2014).

Bumblebee Affordances

Not long ago I received a call from Sridhar Ravi, an aerospace engineer at the University of New South Wales, who was flying large and small bumblebees through gaps of various widths. He said that his bees were behaving just like our humans: As gap width (

Top-down view of a bumblebee flying through a narrow gap. Note lateral “peering” movements in front of gap, and yaw angle up to 90° within gap (from Ravi et al., 2020a, with permission).

Importantly, the onset of yaw occurs at wider gaps for larger bees and narrower gaps for smaller bees (Figure 10A). Not only that, but when yaw angle is replotted as a function of

Yaw angle when flying through a gap. A: Yaw angle as a function of gap width for small, medium, and large bumblebees. B: Same data plotted as a function of the ratio of gap width to wingspan, with sigmoidal fit (

So what’s the body-scaled information? Because bees are aloft, eye height-scaled variables provide no information about size. And with the notable exception of the praying mantis (Nityananda et al., 2016), invertebrates do not possess stereopsis. Sridhar found a clue in their “peering” behavior: As a bee approaches a gap smaller than ∼2 wingspans, it starts to hover in front of the gap and oscillate from side to side, keeping the edges in its field of view (see Figure 9). As the gap narrows from ∼2 to <1 wingspans, the mean number of passes increases from 2 to 10, and the mean peering time grows from <1 second to 4 seconds (Ravi et al., 2020a). This strongly suggests that the bees are scanning the edges of narrower gaps to determine their width from optic flow.

The puzzle is how a “length” scale gets into the optic flow during free flight (Gibson described the same puzzle for pilots in 1947). A reference flight speed would help. For example, if it can be assumed that the speed of approach to a gap is always the same, gap width is specified by the visual angle of the gap and its rate of expansion (Schiffner et al., 2014). Unfortunately, bumblebees do not have a constant approach speed—but they might perceive gap width based on the lateral velocity of their peering movements.
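The role of a reference speed can be made explicit. Consider a gap of width W approached head-on along its midline at speed v. The visual angle is θ = 2·atan(W/2d), and its rate of expansion is dθ/dt = Wv/(d² + W²/4). Eliminating the unknown distance d gives W = 4v·sin²(θ/2)/(dθ/dt), which reduces to vθ²/(dθ/dt) for small angles. The function names are hypothetical, and this is not necessarily the formulation of Schiffner et al. The sketch recovers W when the assumed speed is correct. It also shows that the estimate scales in proportion to any error in that speed.

```python
import math

def visual_angle(width, distance):
    """Visual angle of a gap viewed head-on along its midline."""
    return 2.0 * math.atan(width / (2.0 * distance))

def expansion_rate(width, distance, approach_speed):
    """d(theta)/dt while closing on the gap at approach_speed."""
    return width * approach_speed / (distance ** 2 + width ** 2 / 4.0)

def width_from_expansion(theta, theta_dot, assumed_speed):
    """Gap width from visual angle and expansion rate, given an assumed approach speed."""
    return 4.0 * assumed_speed * math.sin(theta / 2.0) ** 2 / theta_dot

# Hypothetical approach: 3 cm gap, 10 cm away, flying at 0.3 m/s.
theta = visual_angle(0.03, 0.10)
theta_dot = expansion_rate(0.03, 0.10, 0.3)
print(width_from_expansion(theta, theta_dot, 0.3))   # correct speed assumed
print(width_from_expansion(theta, theta_dot, 0.6))   # speed overestimated twofold
```

An animal whose approach speed varies would misjudge gap width in direct proportion to its speed error.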

A bumblebee produces lateral movements by rolling its body about the longitudinal axis while holding its head vertical, locked to the visual surround. It is safe to assume that the body roll angle relative to the head is specified by neck proprioception from mechanosensory hair fields, as in other insects (Preuss & Hengstenberg, 1992). Remarkably, for any hovering body from bees to helicopters, lateral acceleration (

Rearranging the basic flow equation (Equation 1), the distance from the bee to the left and right edges of the gap (

Optical information for gap width (see Equations 6 and 7; from Ravi et al., 2020b, with permission).
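Peering could put a length scale into the flow through a chain of steps:

1. Roll angle gives lateral acceleration, a = g·tan θ. This holds in hover when the vertical component of thrust balances weight.
2. Integrating acceleration gives lateral velocity.
3. Velocity and flow give the distance to each edge, using ω = v·sin β / r, where β is the angle between the motion direction and the edge.
4. The two distances give gap width by the law of cosines.

This is a simplified reconstruction for illustration, not Ravi et al.'s (2020b) Equations 6 and 7. It ignores drag, sensor noise, and any difference between body roll relative to the head and roll relative to gravity. The numbers and function names are hypothetical.

```python
import math

G = 9.81  # gravitational acceleration (m/s^2)

def lateral_acceleration(roll_rad):
    """Hover with thrust tilted by the roll angle and its vertical part balancing weight.

    T cos(roll) = m g and T sin(roll) = m a give a = g * tan(roll).
    """
    return G * math.tan(roll_rad)

def lateral_velocity(roll_samples, dt, v0=0.0):
    """Euler-integrate a = g * tan(roll) over evenly spaced roll samples."""
    v = v0
    for roll in roll_samples:
        v += lateral_acceleration(roll) * dt
    return v

def edge_distance(speed, bearing_rad, angular_velocity):
    """Distance to a stationary edge from omega = v * sin(beta) / r.

    beta is the angle between the direction of motion and the line of sight.
    """
    return speed * math.sin(bearing_rad) / angular_velocity

def gap_width(r_left, r_right, separation_rad):
    """Law of cosines: distance between two edges seen `separation_rad` apart."""
    return math.sqrt(r_left ** 2 + r_right ** 2
                     - 2.0 * r_left * r_right * math.cos(separation_rad))

# Hypothetical peering episode: body rolled 10 deg for 0.1 s (1 ms steps).
v = lateral_velocity([math.radians(10.0)] * 100, 0.001)

# Hypothetical 3 cm gap, 10 cm ahead, bee centred and moving sideways (+x).
edges = [(-0.015, 0.10), (0.015, 0.10)]
bearings = [math.atan2(ey, ex) for ex, ey in edges]      # angle from the +x motion direction
true_ranges = [math.hypot(ex, ey) for ex, ey in edges]
omegas = [v * math.sin(b) / r for b, r in zip(bearings, true_ranges)]  # simulated flow

ranges = [edge_distance(v, b, w) for b, w in zip(bearings, omegas)]
width = gap_width(ranges[0], ranges[1], abs(bearings[0] - bearings[1]))
print(v, width)
```

The flow here is simulated from the same geometry. The example therefore checks only that the chain of relations is self-consistent, not that bees compute it this way.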

After a little trigonometry (see Ravi et al., 2020b), we find that gap width (

It seems likely that this information is calibrated to a bumblebee’s wingspan through specific experience of flying through gaps. For example, at each instar, locusts recalibrate the information for gap sizes that their new bodies can step over, based on action-specific experience with walking across gaps (Ben-Nun et al., 2013). Similarly, humans calibrate the visual information for gap widths they can “squeeze” through, based on action-specific experience with squeezing (Franchak et al., 2010). When bumblebees fly through small gaps (<2 wingspans), their antennae, head, or legs frequently make contact with the edges of the gap, and wing collisions start to occur with narrower gaps (<1.5 wingspans). This might be a feature, not a bug: Mechanical contact provides feedback about gap passability that could be used to calibrate the optic flow (Equation 7). Thus, by virtue of the laws of optics and aerodynamics, and the bumblebee’s flight motor, wingspan-scaled optic flow is informative about the affordance of passable gaps.

In sum, birds do it, bees do it, even humans do it: perceive affordances based on body-scaled information, consistent with the affordance hypothesis.

Conclusion

As Gibson (1979) foresaw 40 years ago, if we begin with cases of successful perceiving and acting, we often find informational variables that specify environmental properties and guide effective actions within the nomic constraints of an animal’s niche. Information is where you find it. The case studies I have reviewed here serve as existence proofs that information exists in wildly different energy arrays and is uniquely specific to behaviorally relevant properties for creatures great and small. Starting with the presumption that perception is an ill-posed problem leads us to abandon the search, sending vision science down the rabbit hole of prior knowledge. Gibson’s hypothesis that vision is ecologically well-posed holds out hope for a vision science grounded in natural law.

Sidebar: Ecological Constraints or Prior Knowledge?

In one sense, perhaps, ecological constraints might be formulated as Bayesian priors. But in another sense, they are different animals: ecological constraints are facts of nature to which visual systems can adapt, whereas priors are internally represented

In their introduction to

As implied by the book’s title, however, the approach is commonly interpreted as a process model of visual perception. If perception is Bayesian inference, then the visual system combines probabilistic cues with internally represented knowledge (true beliefs) to make abductive inferences about the scene (that is, inferences from the image to beliefs about its best explanation). As Helmholtz understood, this prior knowledge must be of two kinds: knowledge about the external environment (priors) and knowledge about image formation (likelihoods; but see the caution in Feldman, 2013). No sooner do Knill et al. (1996) invoke intentional concepts like knowledge, belief, and inference, though, than they back away from them, saying that this sort of prior knowledge may be no more than low-level filters or connection weights in a neural network. Palmer (1999, p. 83) executes a similar tactical retreat by saying that premises and assumptions may be merely patterns of neural connections, and “inference-like” processes are “somewhat metaphorical.” Indeed, the strong claims that synaptic weights in visual cortex represent knowledge or beliefs about the world, and that neural networks make inferences, do not stand up to scrutiny (Orlandi, 2013, 2016). They are metaphorical. But metaphors guide the questions we ask, the experiments we do, and the theories we build.

Rather than internally representing external constraints, I would suggest we leave them in the environment where they belong. This would enable us to understand the visual system as

Footnotes

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by NIH R01 EY029745, NSF BCS-1849446.