Abstract

In times of what has been coined “post-truth politics,” people are regularly confronted with political actors who intentionally spread false or misleading information. The present article examines (a) to what extent partisans’ judgments of such behaviors as cases of lying are affected by whether the deceiving agent shares their partisanship (actual bias) and (b) to what extent partisans expect the lie judgments of others to be affected by a bias of this kind (perceived bias). In two preregistered experiments (N = 1,040), we find partisans’ lie judgments to be only weakly affected by the partisanship ascribed to political deceivers, regardless of whether deceivers explicitly communicate or merely insinuate political falsehoods. At the same time, partisans expect their political opponents’ lie judgments to be strongly affected by the deceiving agents’ partisanship. Surprisingly, misperceptions of bias were also present in people’s predictions of bias within their own political camp.

In recent times, the spread of misinformation has become a major threat to democratic societies: It can prevent fact-based debates, lead to public opinion being manipulated, and foster polarization and conflict among political groups (e.g., Cook et al., 2017; Loomba et al., 2021; Zimmermann & Kohring, 2020). One form of misinformation that has so far received surprisingly little scholarly attention is the dissemination of false information in statements made by political representatives. Although people are regularly confronted with political actors who omit information, twist the truth, and sometimes even make outright false statements to promote their political goals and agendas, little is known about how misinformation of this kind is perceived and responded to (for the few studies on this topic that we are aware of, see Clementson, 2018; De keersmaecker & Roets, 2019; Galak & Critcher, 2023; Nyhan & Reifler, 2010; Woon, 2017). In the present article, we aim to address this gap by taking a closer look at people’s judgments of one of the crucial concepts involved in misinformation of this kind: whether political actors who intentionally spread false or misleading information can be considered to have lied.

Partisan Bias in Judgments of Lying

Misinformation Spread by Own Versus Opposed Party Members

To successfully counter misinformation in the political domain, political falsehoods need to be identified and called out for what they are (e.g., Zhang & Ghorbani, 2020). However, research on partisan biases suggests that people are often unable to evaluate information in the political domain accurately: Identical policies, outcomes, and behaviors are regularly assessed more favorably when associated with people’s own rather than an opposed political party (for an overview, see Ditto et al., 2019), presumably because people are motivated to reach conclusions that align with their political allegiances and beliefs (e.g., Ditto et al., 2019; Taber & Lodge, 2006; but see Tappin et al., 2020, on the potential role of a purely cognitive mechanism in which information is evaluated differently depending on its consistency with people’s prior beliefs).

In the present article, we examine whether partisans’ judgments of whether political representatives who intentionally spread false or misleading information have lied are similarly affected by whether the deception is associated with their own or an opposed political party. Because both people’s political allegiances and their prior beliefs are likely challenged when representatives from their own party engage in the immoral behavior of spreading misinformation (and supported when political opponents do so), a bias in people’s lie judgments for such cases seems plausible. At the same time, such a bias would likely pose a serious threat to healthy democratic discourse: While overly strict judgments of out-party members might foster out-group animus, overly lenient judgments of in-party members might prevent political actors from facing adequate consequences within their own political camp (e.g., Bullock et al., 2015; Woon, 2017).

Misinformation Communicated by Means of Explicit Lies Versus Deceptive Implicatures

To communicate false information, political representatives and everyday speakers alike can use different strategies. In addition to explicitly stating something they believe to be false in order to deceive others, speakers can also communicate false information more indirectly by means of deceptive implicatures (Grice, 1989). In the latter case, speakers use utterances whose communicated content differs from their explicitly expressed content, thereby tricking their hearer(s) into a false belief while saying something that is literally true. Consider the following example: When Bill Clinton was asked in an interview whether he had a sexual relationship with Monica Lewinsky, he answered, “There is not a sexual relationship [with this woman].” Given the reporter’s question, Clinton clearly implicated that there never had been a sexual relationship with Lewinsky. Nevertheless, his statement was literally true, as the relationship had already ended when the interview took place. Deceptive implicatures are of particular interest to the present project for two reasons. First, compared with standard lies, they are much more ambiguous (i.e., when evaluating a deceptive implicature, one can focus either on its literally true content or on its implicated false content). As biases are often particularly pronounced in ambiguous situations (e.g., Ditto & Koleva, 2011; Dunning et al., 1989; Kopko et al., 2011), this feature might render people’s judgments about deceptive implicatures particularly prone to partisan bias. Second, given their regular use in political discourse, deceptive implicatures provide an ecologically valid context for investigating partisan bias. In our first experiment, we therefore examine to what extent people show a political bias in their lie judgments for both standard lies and deceptive implicatures.

Perceived Partisan Bias in Judgments of Lying

Recent research suggests that partisans’ perceptions of how other partisans think or feel can be just as consequential as those partisans’ actual thoughts and feelings. Partisans regularly overestimate how polarized, biased, and hostile toward their own group political opponents are, and these misperceptions are associated with dislike, hostility, and an unwillingness to cooperate with the out-group (e.g., Blatz & Mercier, 2018; Kennedy & Pronin, 2008; Moore-Berg et al., 2020; Parker et al., 2021; Pasek et al., 2021). Misperceptions of bias, in particular, have been argued to arise because people are naive realists: They think that they perceive the world as it objectively is, and conclude that people who do not share their views of the world must therefore either be ill informed or, when this can be ruled out, unable to see the world objectively (i.e., biased; e.g., Pronin, 2007; Ross & Ward, 1996).

Given the regular occurrence of misperceptions of bias and polarization and their unique contribution to partisan conflict, our second experiment examines to what extent partisans perceive their opponents’ judgments of whether a political representative has lied to be biased by the deceiving representative’s partisanship. On the naive realism view, we would expect partisans to perceive their opponents (i.e., people who do not share their views of the world) as strongly biased in their lie judgments. Perceiving one’s opponents as biased in this way, in turn, might contribute to the growing divide between opposed political camps. After all, cooperation and rational discussion will likely seem less fruitful the more the other side is perceived as swayed by non-normative considerations (Pronin et al., 2004).

Experiment 1

Overview

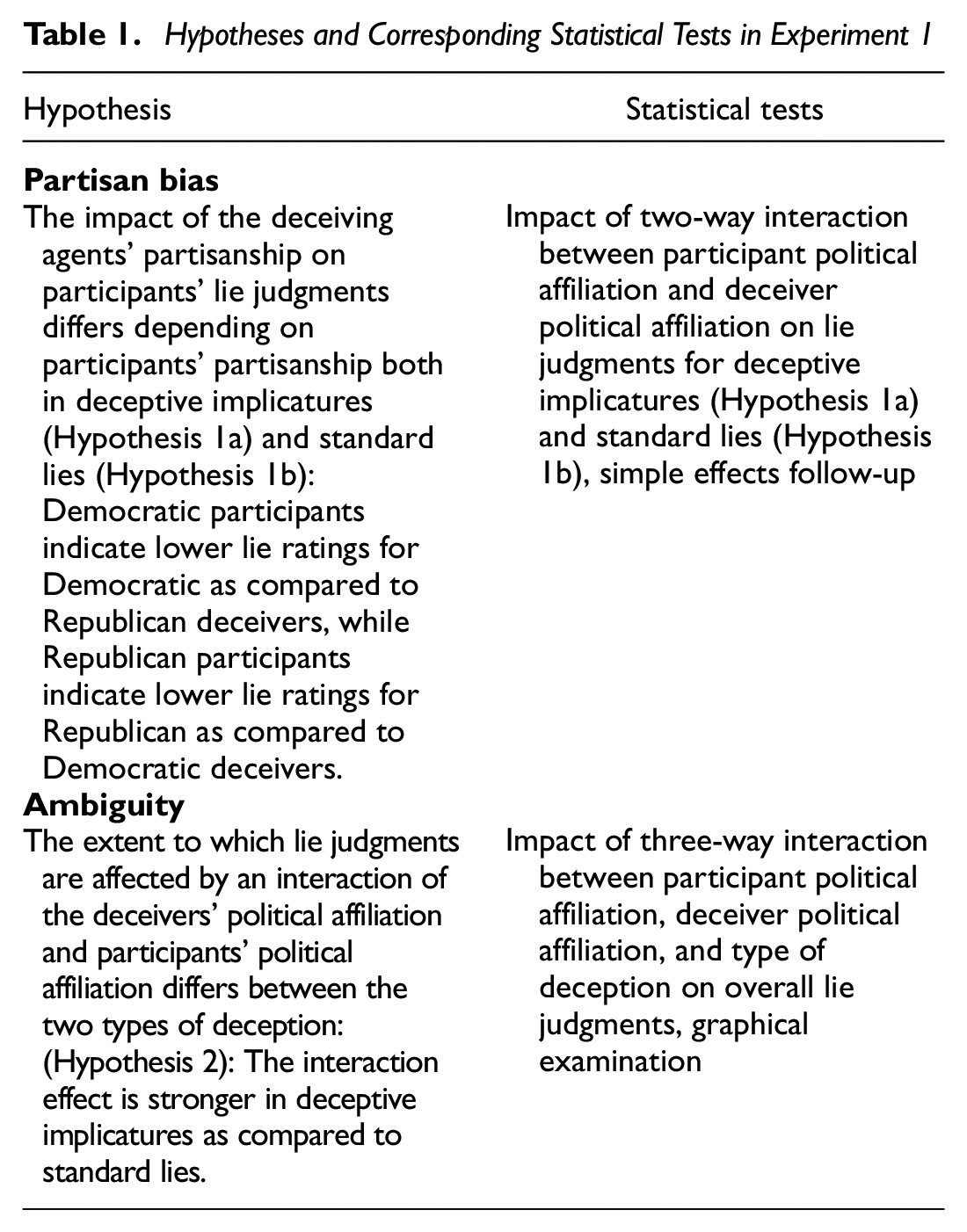

In Experiment 1, we examine whether partisans’ lie judgments are prone to a partisan bias based on the deceiving agent’s partisanship. Building on the literature outlined earlier, we expected partisans’ lie judgments to be more favorable (i.e., lower) for own than for opposed party deceivers, particularly for deceptive implicatures (for a detailed overview of our hypotheses and statistical tests, see Table 1). Experiment 1 was preregistered on the Open Science Framework (OSF): https://osf.io/zecps. Data and analyses are available at https://osf.io/eq3sa/.

Hypotheses and Corresponding Statistical Tests in Experiment 1

Method

Participants

A total of 433 participants were recruited via Prolific Academic (Palan & Schitter, 2018). To be eligible, participants had to be U.S. citizens, native English speakers, at least 18 years old, and self-identified Democrats or Republicans. Participants who indicated an unclear political affiliation (n=13) or failed an attention check (n=16) were excluded, as were participants recruited in excess of the preregistered sample size of N=400 1 (n=4). The final sample included 200 self-identified Democrats (age: M=34.78, SD=11.25; gender: 90 female, 109 male, 1 undisclosed) and 200 self-identified Republicans (age: M=39.91, SD=12.44; gender: 95 female, 105 male). 2 Participants were compensated with £0.82 (£7/h).

Design, Procedure, and Materials

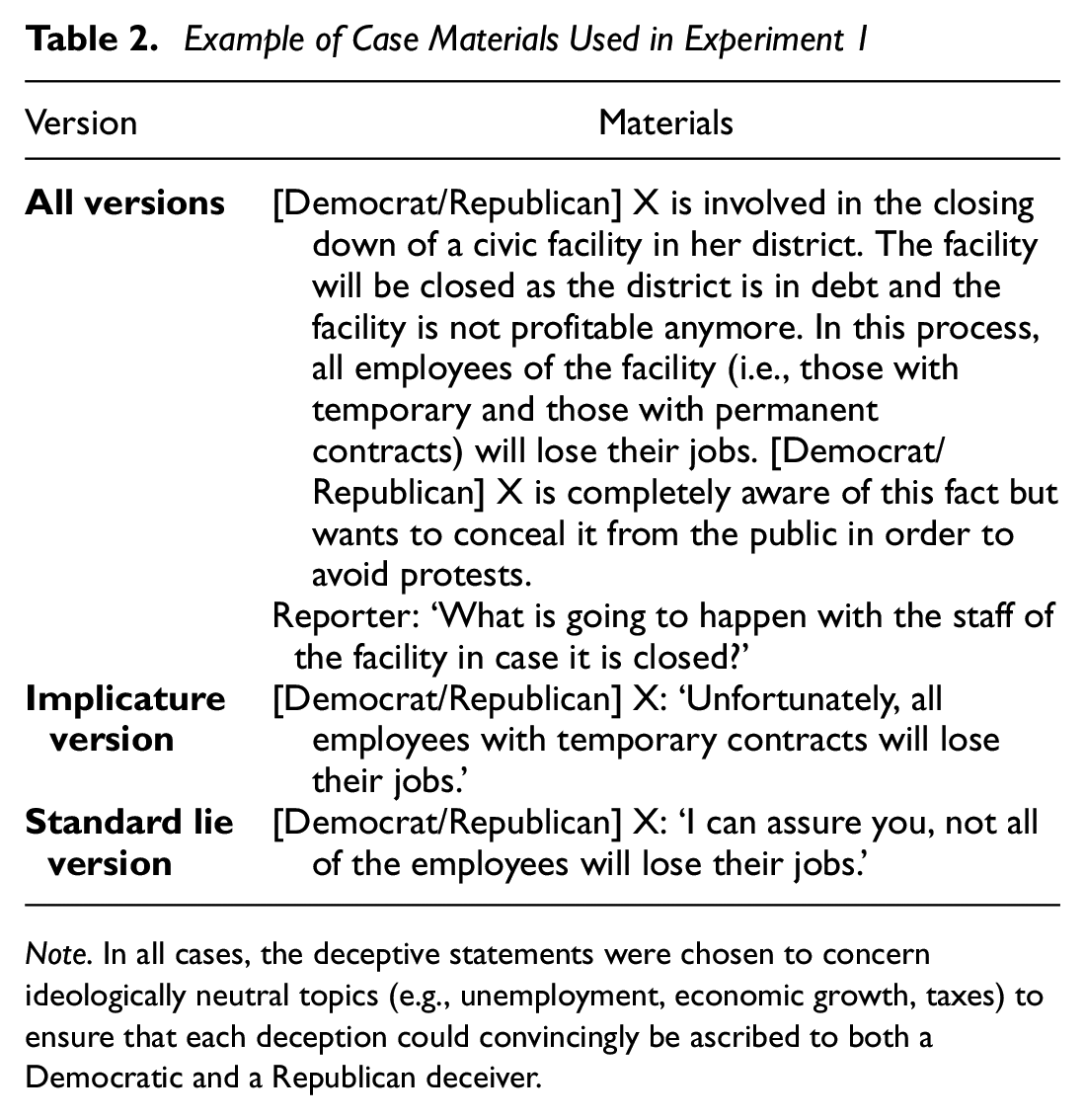

Experiment 1 followed a 2 (deceiver political affiliation: Democratic vs. Republican, between-subjects) × 2 (deception type: deceptive implicature vs. standard lie, between-subjects) × 6 (deception content, within-subjects) mixed factorial design. Participants were presented with six vignettes of a fictional political representative (either described as Democratic or Republican) communicating a false claim (either by means of a deceptive implicature or a standard lie) in an interview. Allocation to conditions and the order of cases were randomized. Table 2 shows a case example; all remaining cases were structurally equivalent and can be accessed in the preregistration files.

Example of Case Materials Used in Experiment 1

Note. In all cases, the deceptive statements were chosen to concern ideologically neutral topics (e.g., unemployment, economic growth, taxes) to ensure that each deception could convincingly be ascribed to both a Democratic and a Republican deceiver.
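The random allocation described above can be sketched as follows. This is a minimal illustration under stated assumptions, not the authors’ survey implementation: the helper `assign_participant` is hypothetical, and it assumes simple (unbalanced) randomization to the four between-subjects cells, whereas the survey platform actually used may have balanced cell sizes.

```python
import random

# Between-subjects factors of Experiment 1 (levels as named in the text).
DECEIVER_AFFILIATIONS = ("Democratic", "Republican")
DECEPTION_TYPES = ("deceptive implicature", "standard lie")
N_CASES = 6  # six deception contents, presented within subjects


def assign_participant(rng: random.Random) -> dict:
    """Hypothetical helper: draw one participant's between-subjects cell
    (simple randomization, an assumption) and a random order of the six cases."""
    case_order = list(range(1, N_CASES + 1))
    rng.shuffle(case_order)  # case order randomized per participant
    return {
        "deceiver_affiliation": rng.choice(DECEIVER_AFFILIATIONS),
        "deception_type": rng.choice(DECEPTION_TYPES),
        "case_order": case_order,
    }


if __name__ == "__main__":
    rng = random.Random(42)  # fixed seed only to make the illustration reproducible
    print(assign_participant(rng))
```

Each participant sees all six contents under a single deceiver affiliation and deception type, which is what makes those two factors between-subjects and deception content a within-subjects factor.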

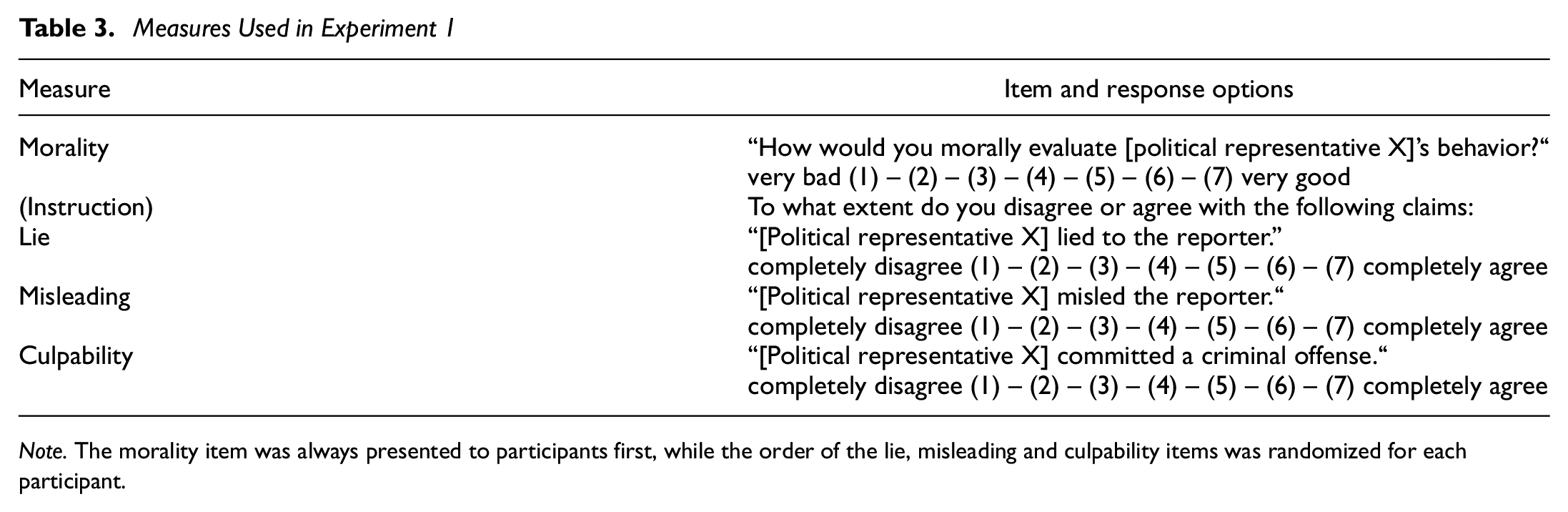

After reading each case, participants answered the test questions shown in Table 3. In addition to the focal question of whether each political representative had lied, participants answered questions about morality, misleadingness, and culpability. They also completed two attention checks and a set of demographic questions (age, gender, political affiliation). The morality question was included to allow participants to express blame before answering the lie question (see Gal & Rucker, 2011), while the misleadingness question was added to allow participants to distinguish between lying and merely misleading. Finally, the culpability question was included to provide participants with an item to disagree with (see also Reins & Wiegmann, 2021); otherwise, participants might have felt less inclined to agree with both the lying and the misleadingness items (even though agreeing with both would be a sensible response for many of the cases tested).

Measures Used in Experiment 1

Note. The morality item was always presented first, while the order of the lie, misleadingness, and culpability items was randomized for each participant.

Results

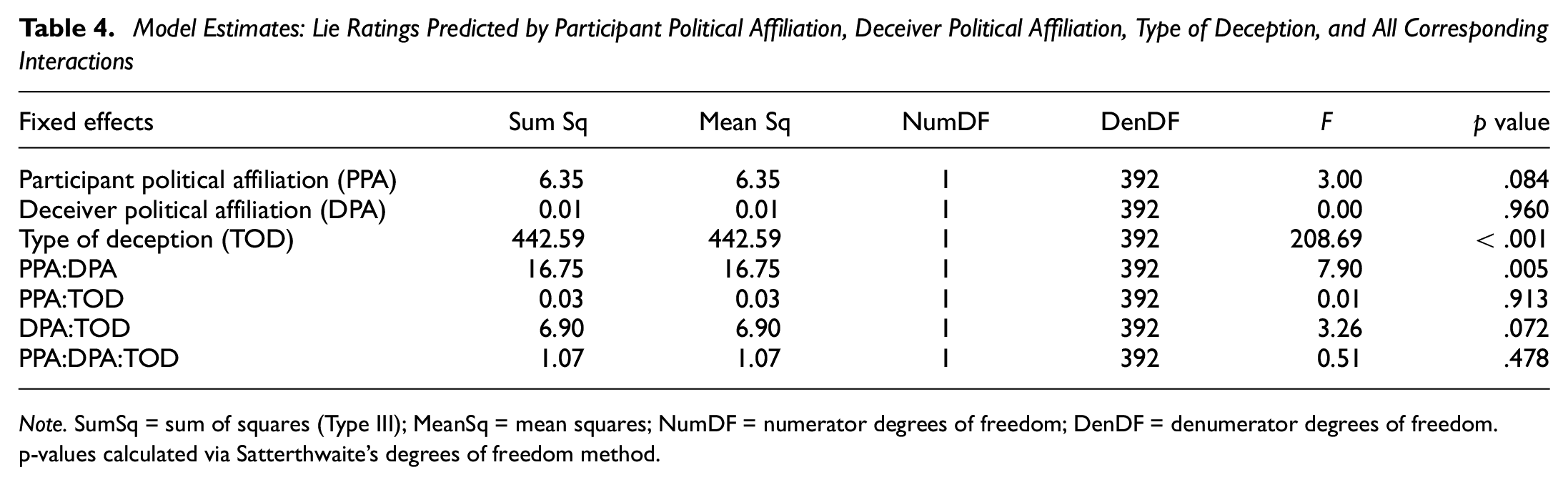

A linear mixed-effects model was used to predict participants’ lie ratings from participant political affiliation, deceiver political affiliation, deception type, and all resulting interactions. Participants and the deception contents were included as random factors (intercepts only). 3 Significant results were obtained only for the main effect of deception type and the interaction between participant and deceiver political affiliation. Table 4 summarizes the model’s estimates.

Model Estimates: Lie Ratings Predicted by Participant Political Affiliation, Deceiver Political Affiliation, Type of Deception, and All Corresponding Interactions

Note. SumSq = sum of squares (Type III); MeanSq = mean squares; NumDF = numerator degrees of freedom; DenDF = denominator degrees of freedom. p-values were calculated using Satterthwaite’s approximation for degrees of freedom.
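Written out as an equation, the model described above can be sketched as follows. The notation is ours and assumes effect-coded (e.g., ±1) predictors, which this section does not specify: $y_{pc}$ is participant $p$’s lie rating for deception content $c$; $P_p$, $D_p$, and $T_p$ code participant political affiliation, deceiver political affiliation, and deception type, all of which vary between participants; and $u_p$ and $v_c$ are the random intercepts for participants and contents.

$$
y_{pc} = \beta_0 + \beta_1 P_p + \beta_2 D_p + \beta_3 T_p + \beta_4 P_p D_p + \beta_5 P_p T_p + \beta_6 D_p T_p + \beta_7 P_p D_p T_p + u_p + v_c + \varepsilon_{pc}
$$

with $u_p \sim \mathcal{N}(0, \sigma_u^2)$, $v_c \sim \mathcal{N}(0, \sigma_v^2)$, and $\varepsilon_{pc} \sim \mathcal{N}(0, \sigma^2)$. The participant × deceiver interaction reported as significant corresponds to $\beta_4$, and the three-way interaction to $\beta_7$. In the formula syntax of the R package lme4, a model of this form would be written `lie ~ P * D * T + (1 | participant) + (1 | content)`, where the variable names are placeholders.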

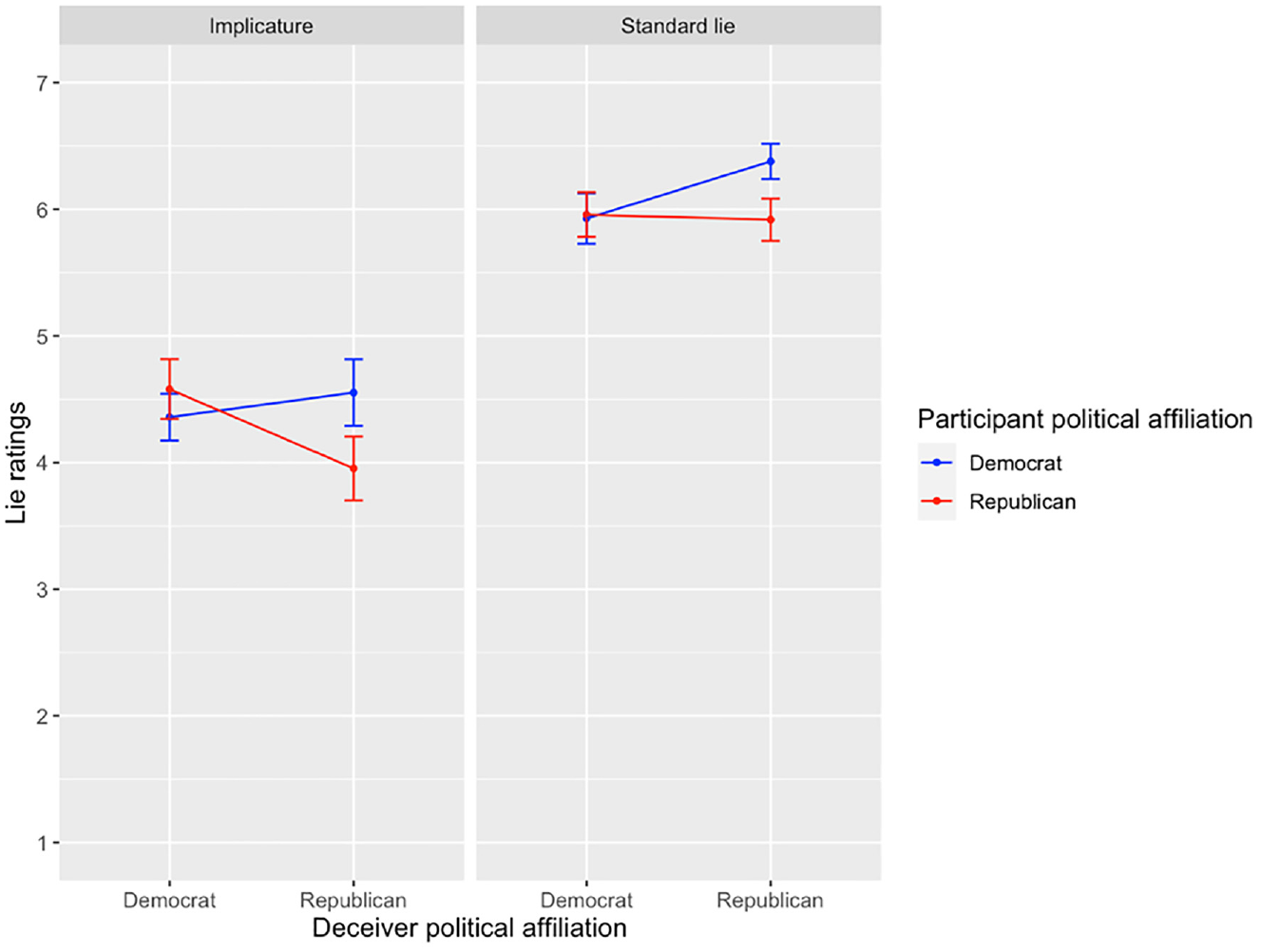

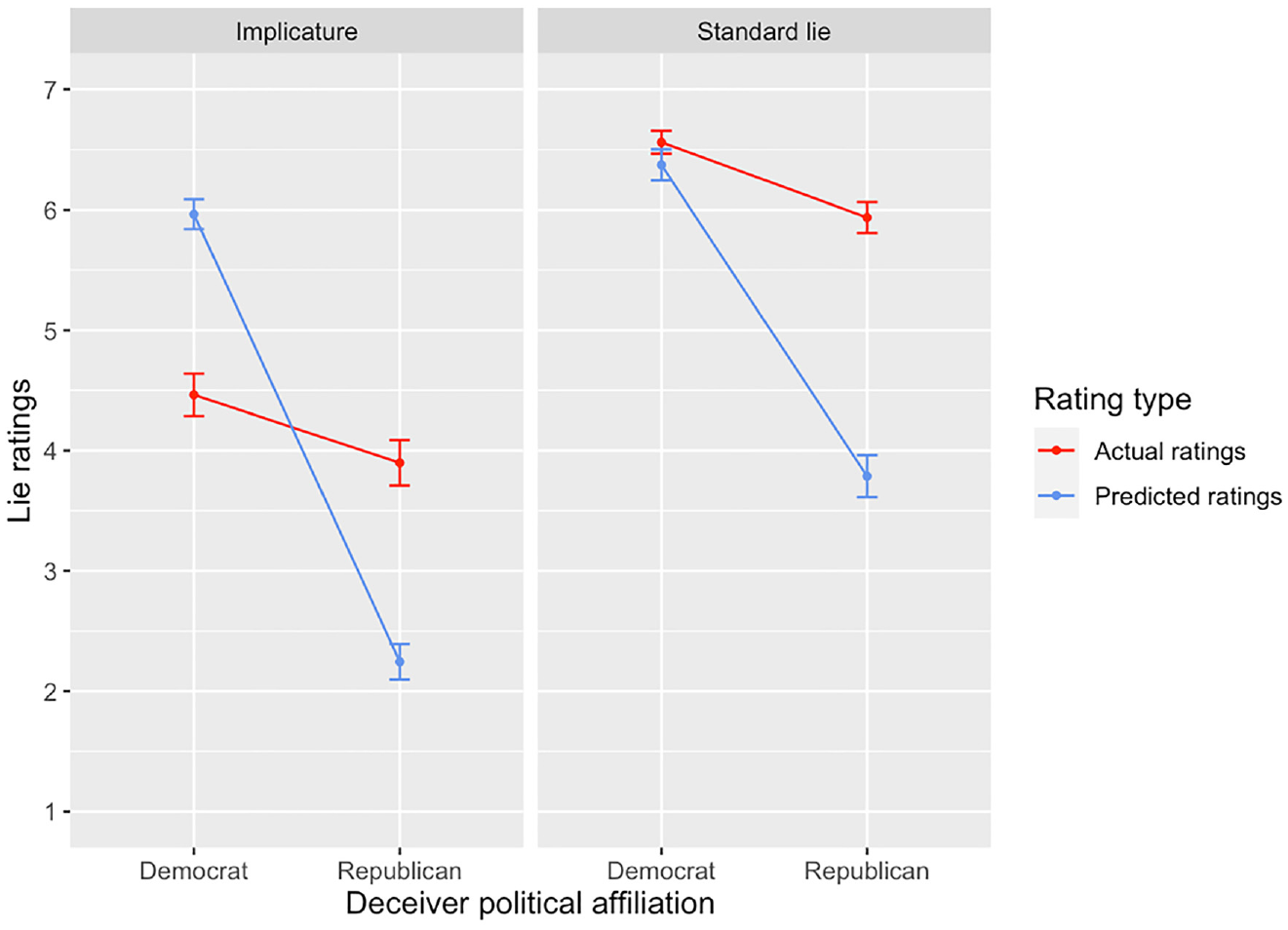

The significant main effect of deception type reflects that participants overall gave lower lie ratings for deceptive implicatures than for standard lies. The significant two-way interaction between participant and deceiver political affiliation shows that participants’ lie ratings indeed differed depending on whether the deceiving agent shared their political affiliation. Follow-up planned contrasts for each deception type showed a significant two-way interaction between participant and deceiver political affiliation for deceptive implicatures, t(392)=−2.48, p=.014, but not for standard lies, t(392)=−1.49, p=.137. However, the bias patterns did not differ significantly between deceptive implicatures and standard lies (as indicated by the non-significant three-way interaction between participant political affiliation, deceiver political affiliation, and deception type). A graphical examination of the findings (see Figure 1) 4 confirmed that, for deceptive implicatures, the significant interaction followed the expected pattern: Both Democratic and Republican participants gave lower lie ratings for deceptive statements uttered by members of their own party than by members of the opposed party. For standard lies, the expected pattern was (descriptively) present in Democratic participants’ ratings, while Republican participants gave similar lie ratings to deceivers from both parties. Additional simple effects analyses revealed that all differences between participants’ ratings for own and opposed party deceivers amounted to small effects only.

Effect of Deceiver Political Affiliation on Democratic and Republican Participants’ Lie Judgments for Implicatures and Standard Lies
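To make the logic of the participant × deceiver interaction concrete, the sketch below computes it as a difference of differences from four cell means. It is an illustration only: the authors’ planned contrasts were estimated from the mixed model, which also supplies the standard errors behind the reported t values, and the function name, dictionary layout, and example means are hypothetical. The sign of such a contrast depends on how it is coded.

```python
def partisan_bias_contrast(means: dict) -> float:
    """Participant x deceiver interaction as a difference of differences.

    `means` maps (participant_affiliation, deceiver_affiliation) to a mean lie
    rating. Each group's bias is its rating for the opposed-party deceiver minus
    its rating for the own-party deceiver. Writing cells as participant/deceiver
    (e.g., DR = Democratic participants, Republican deceiver), the sum of the
    two biases equals -[(DD - DR) - (RD - RR)], i.e., the double difference
    with its sign flipped.
    """
    dem_bias = means[("Democrat", "Republican")] - means[("Democrat", "Democrat")]
    rep_bias = means[("Republican", "Democrat")] - means[("Republican", "Republican")]
    return dem_bias + rep_bias


# Hypothetical cell means on a 7-point scale (illustrative, not the study's data).
example = {
    ("Democrat", "Democrat"): 5.0,
    ("Democrat", "Republican"): 5.5,
    ("Republican", "Democrat"): 5.25,
    ("Republican", "Republican"): 5.0,
}
print(partisan_bias_contrast(example))  # 0.5 + 0.25 = 0.75
```

A positive value here means that, summed over both participant groups, opposed-party deceivers received higher lie ratings than own-party deceivers; a value of zero means no partisan bias in the cell means.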

Experiment 2

Overview

In Experiment 2, we examine to what extent partisans expect other partisans’ lie judgments to depend on whether they share the deceiving agent’s partisanship. Participants were again presented with the materials from Experiment 1, but this time had to indicate not only what they thought about each case but also how they thought other partisans would respond to it. Based on the literature outlined earlier, we expected partisans to perceive their political opponents as much more biased than they actually are (for a detailed overview of our hypotheses and tests, see Table 5). In exploratory analyses, we also examined to what extent partisans perceive members of their own party as biased. Experiment 2 was again preregistered on the OSF: https://osf.io/gh3s8. Data and analyses are available at https://osf.io/t7jm6/.

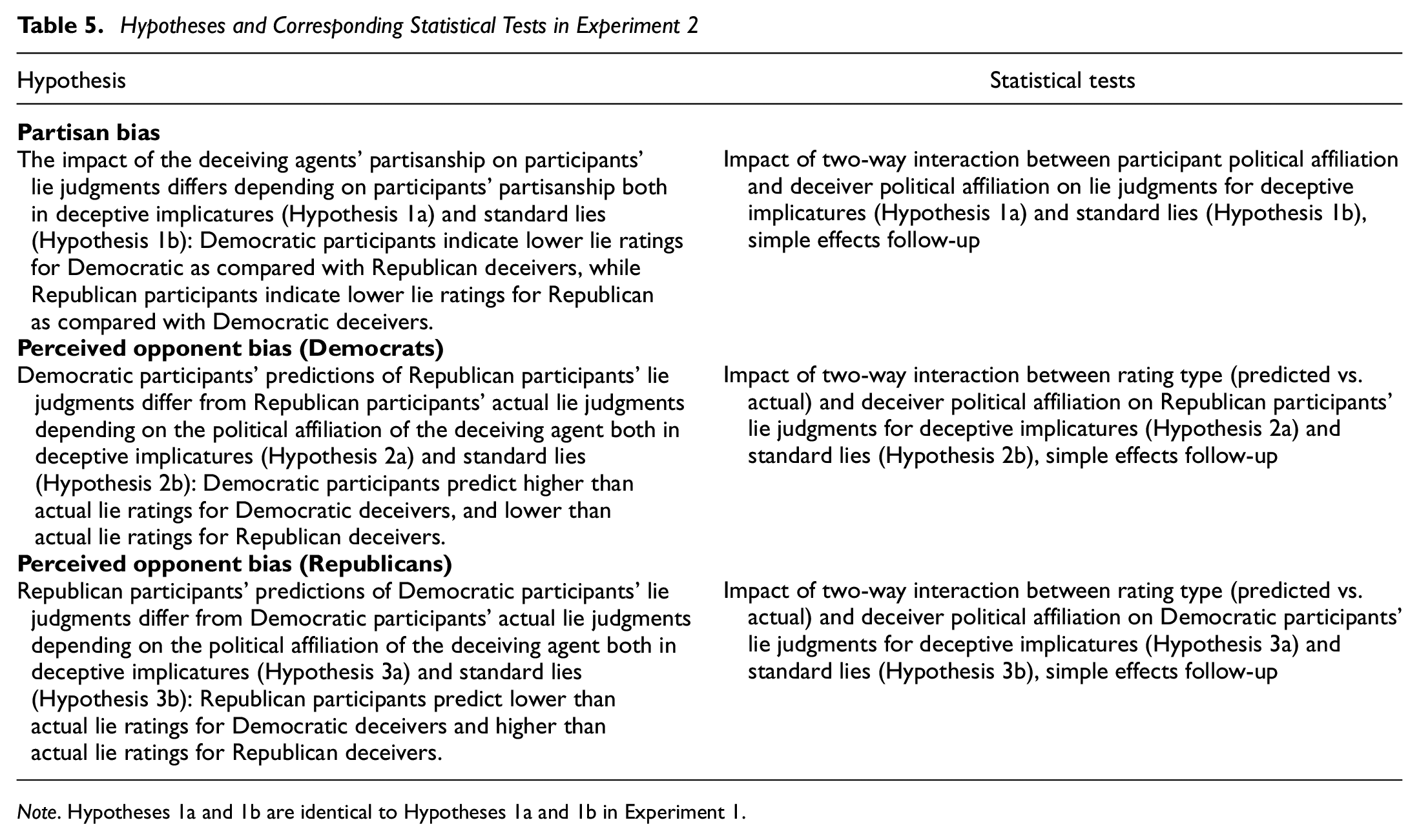

Hypotheses and Corresponding Statistical Tests in Experiment 2

Note. Hypotheses 1a and 1b are identical to Hypotheses 1a and 1b in Experiment 1.

Method

Participants

For Experiment 2, a total of 767 participants were recruited on Prolific Academic, applying the same inclusion criteria as in Experiment 1 (see Sect. Experiment 1: Method). Participants who indicated an unclear political affiliation (n=21), failed an attention (n=22) or manipulation (n=70) check, or experienced technical problems (n=3) were excluded, as were participants recruited in excess of the preregistered sample size of N=640 5 (n=11). The final sample consisted of 320 self-identified Democrats (age: M=37.90, SD=14.19; gender: 194 female, 124 male, 2 undisclosed) and 320 self-identified Republicans (age: M=42.87, SD=14.57; gender: 169 female, 151 male). 6 Participants were compensated with £1.87 (£7.5/h).
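The participant flow in both experiments can be checked with simple arithmetic. The sketch below assumes, as the reported totals imply, that each excluded participant was counted in exactly one exclusion category; the helper names are hypothetical. It also derives the study duration implied by each payment and hourly rate, which is an inference from the stated rates rather than a reported completion time.

```python
def final_sample(recruited: int, exclusions: dict) -> int:
    """Remaining sample after subtracting mutually exclusive exclusion counts."""
    return recruited - sum(exclusions.values())


def implied_minutes(payment_gbp: float, hourly_rate_gbp: float) -> float:
    """Duration (in minutes) implied by a payment and an hourly rate."""
    return 60 * payment_gbp / hourly_rate_gbp


exp1_n = final_sample(433, {"unclear affiliation": 13, "attention check": 16,
                            "beyond preregistered N": 4})
exp2_n = final_sample(767, {"unclear affiliation": 21, "attention check": 22,
                            "manipulation check": 70, "technical problems": 3,
                            "beyond preregistered N": 11})

print(exp1_n, exp2_n, exp1_n + exp2_n)       # 400 640 1040
print(round(implied_minutes(0.82, 7.0), 1))  # 7.0
print(round(implied_minutes(1.87, 7.5), 1))  # 15.0
```

The totals reproduce the final samples of N=400 and N=640 and the combined N = 1,040 reported in the abstract; the implied durations are roughly 7 and 15 minutes.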

Design, Procedure, and Materials

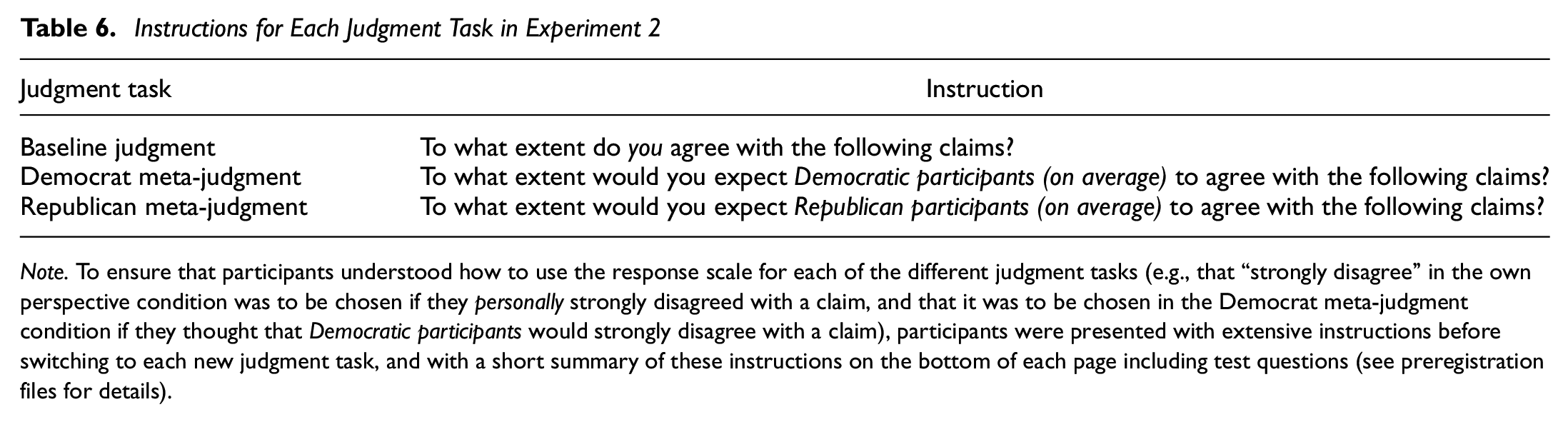

Experiment 2 extends Experiment 1 by presenting participants with three judgment tasks instead of one, introducing judgment task as a new within-subjects factor. Participants received the same cases and questions as before (except for the morality question, which was dropped to keep Experiment 2 from becoming overly long and tedious), but now had to indicate not only how they themselves would answer each test question but also how they thought Democratic and Republican participants, respectively, would respond. Thus, Experiment 2 followed a 2 (deceiver political affiliation: Democratic vs. Republican, between-subjects) × 2 (deception type: deceptive implicature vs. standard lie, between-subjects) × 6 (deception content, within-subjects) × 3 (judgment task: baseline judgment vs. Democrat meta-judgment vs. Republican meta-judgment, within-subjects) mixed factorial design. To assess all three judgments, participants were presented with the full set of cases three times in succession, each time with a different instruction specifying which judgment they were to give (the order of cases, judgment tasks, and test questions was randomized). For all items, response options ranged from “strongly disagree” (1) to “strongly agree” (7). Table 6 shows the instructions for each type of judgment. After each judgment task, a manipulation check asked participants from which perspective they had answered the previous questions. Participants also completed two attention checks and a set of demographic questions (age, gender, and political affiliation).

Instructions for Each Judgment Task in Experiment 2

Note. To ensure that participants understood how to use the response scale in each judgment task (e.g., that in the own perspective condition, “strongly disagree” was to be chosen if they personally strongly disagreed with a claim, whereas in the Democrat meta-judgment condition, it was to be chosen if they thought that Democratic participants would strongly disagree with it), participants received extensive instructions before switching to each new judgment task, as well as a short summary of these instructions at the bottom of each page containing test questions (see preregistration files for details).
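The three-pass structure of Experiment 2 can be sketched as a per-participant trial list. This is a hypothetical illustration, not the authors’ survey code: the function name is ours, and we assume, following the Experiment 1 note, that the order of the three test questions was drawn once per participant, since this section does not specify at which level it was randomized.

```python
import random

JUDGMENT_TASKS = ("baseline", "Democrat meta-judgment", "Republican meta-judgment")
QUESTIONS = ("lie", "misleading", "culpability")  # morality item dropped in Exp. 2


def build_trial_sequence(rng: random.Random, n_cases: int = 6) -> list:
    """Hypothetical sketch: one participant's ordered (task, case, questions) trials.

    Task order and case order within each pass are shuffled; the question order
    is drawn once per participant (an assumption). A manipulation check would
    follow each pass but is not modeled here.
    """
    tasks = list(JUDGMENT_TASKS)
    rng.shuffle(tasks)
    questions = list(QUESTIONS)
    rng.shuffle(questions)
    trials = []
    for task in tasks:  # one full pass through the cases per judgment task
        cases = list(range(1, n_cases + 1))
        rng.shuffle(cases)
        for case in cases:
            trials.append((task, case, tuple(questions)))
    return trials


if __name__ == "__main__":
    for trial in build_trial_sequence(random.Random(7))[:3]:
        print(trial)
```

Under this sketch, each participant rates all six cases three times (18 case presentations), once from each perspective, with every pass forming a contiguous block.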

Results

A linear mixed-effects model was used to predict participants’ lie ratings from participant political affiliation, deceiver political affiliation, deception type, judgment type, and all possible interactions of these factors while again including participants and the different contents as random factors (intercepts only). 7 The model estimates are summarized in Supplemental Appendix S2. The model was followed up with planned contrasts to assess our predictions. 8

Confirmatory Analyses

Actual Bias

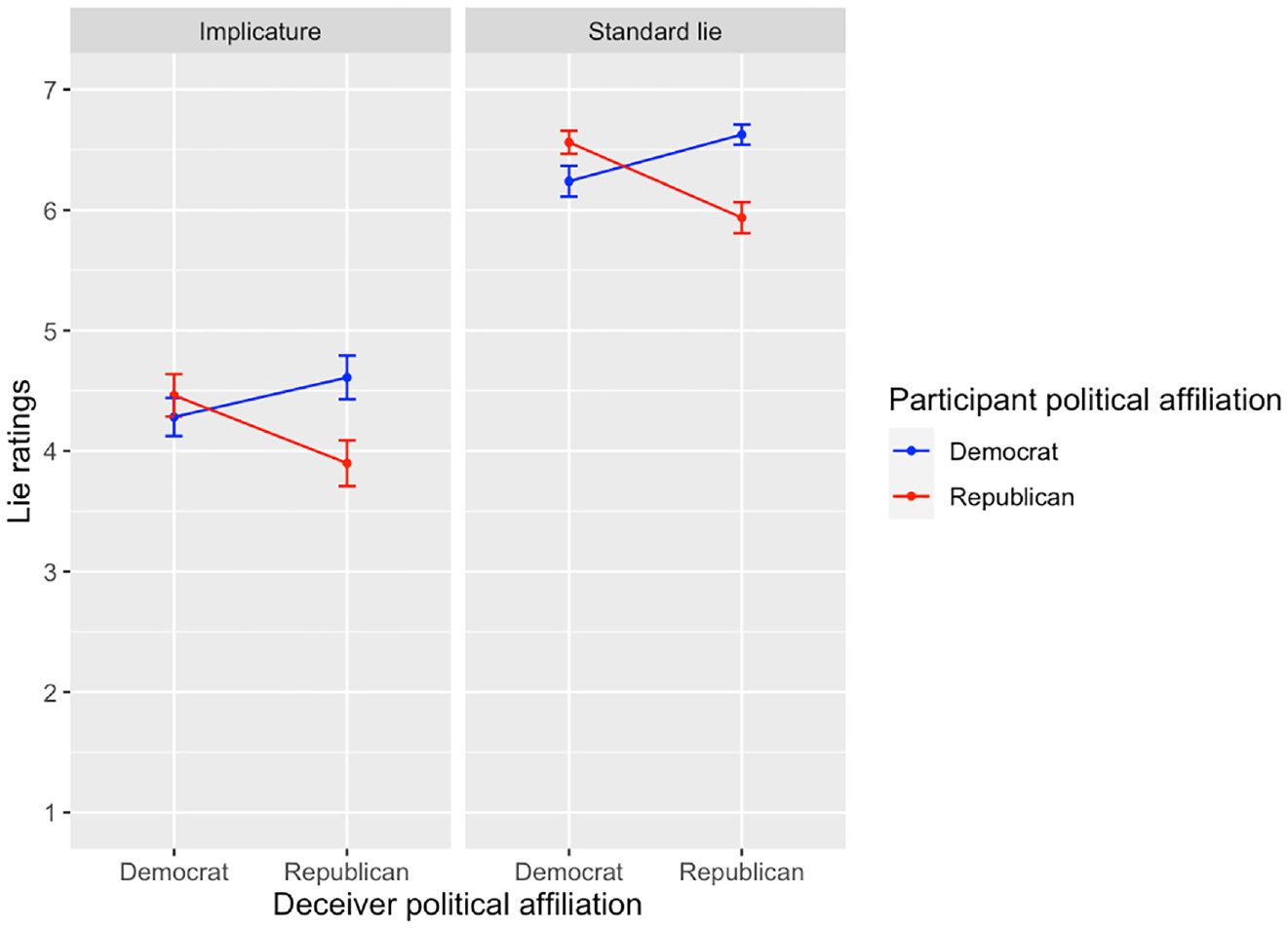

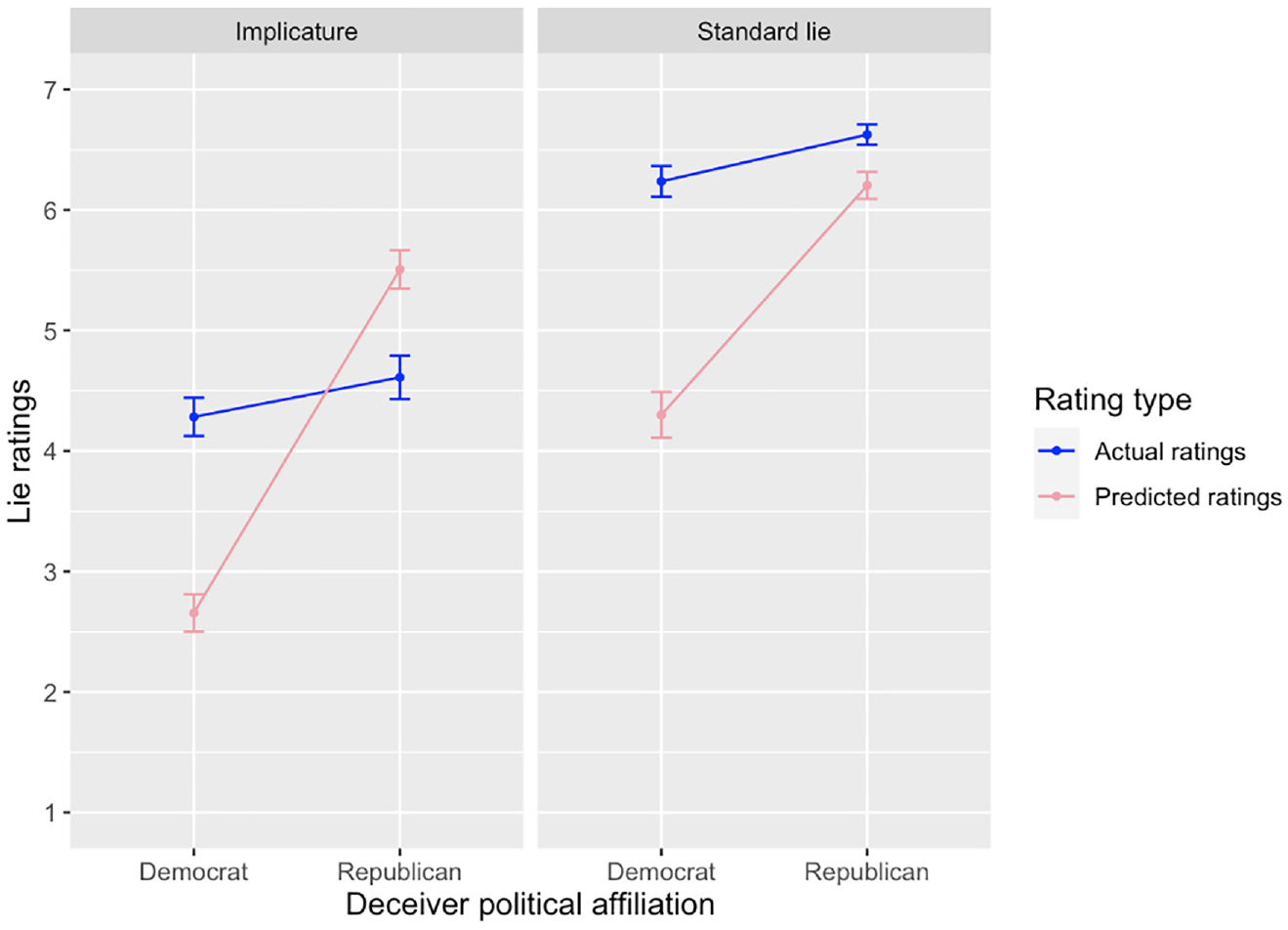

To examine whether the main findings from Experiment 1 replicated, we calculated two separate contrasts, one for deceptive implicatures and one for standard lies, testing the interaction between participant and deceiver political affiliation in participants’ own (baseline) judgments. The analysis revealed a significant interaction between participant and deceiver political affiliation for both deceptive implicatures (z=−3.91, p=.0001) and standard lies (z=−4.35, p<.0001). 9 A graphical examination of the findings (see Figure 2) confirmed that both interactions reflected the expected pattern: Participants gave higher lie ratings for deceptive statements uttered by political opponents than for deceptive statements uttered by own party members. Again, all differences between participants’ judgments for own and opposed party deceivers were small.

Effect of Deceiver Political Affiliation on Democratic and Republican Participants’ Lie Judgments for Implicatures and Standard Lies

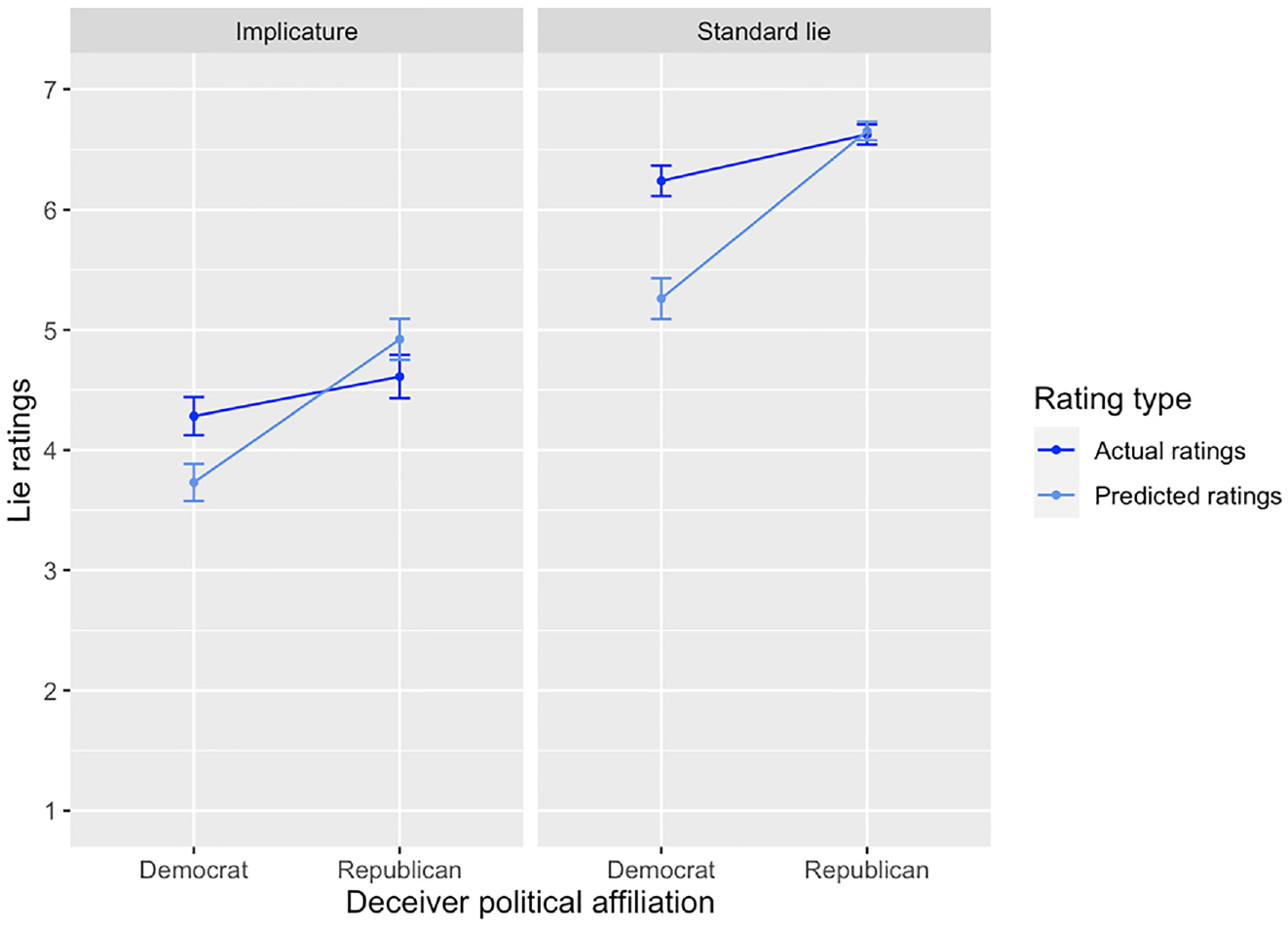

Democrat Estimates of Republican Bias

To examine whether Democratic participants believe Republican participants to be more biased in their lie judgments than reality warrants, we calculated two separate interaction contrasts for deceptive implicatures and standard lies comparing Democratic participants’ estimates of Republican participants’ lie judgments with Republican participants’ actual lie judgments. The analysis revealed a significant interaction between rating type (estimated vs. actual) and deceiver political affiliation both in deceptive implicatures (z=13.83, p<.0001) and in standard lies (z=8.43, p<.0001). A graphical examination of the findings (see Figure 3) shows that the interaction for deceptive implicatures was caused by the expected result pattern: Democratic participants overestimated Republican participants’ lie ratings for Democratic deceivers and underestimated their lie ratings for Republican deceivers. For standard lies, the result pattern deviated from our predictions: While Democratic participants indeed underestimated Republican participants’ lie ratings for Republican deceivers, they did not overestimate their lie ratings for Democratic deceivers. The difference between Republican participants’ ratings for Democratic and Republican deceivers as predicted by Democratic participants (i.e., the perceived bias) amounted to large effects for both deceptive implicatures (d=2.41 [2.25, 2.57]) and standard lies (d=1.51 [1.36, 1.66]).

Effect of Deceiver Political Affiliation on Democratic Participants’ Predictions of Republican Participants’ Lie Judgments and Republican Participants’ Actual Lie Judgments for Implicatures and Standard Lies
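The rating type × deceiver affiliation contrast used in these analyses compares a perceived bias with an actual bias. The sketch below expresses that comparison on cell means. The reported z tests were computed from the fitted mixed model, so this is an illustration of the logic only; the function name and example values are hypothetical.

```python
def misperceived_bias(estimated: dict, actual: dict) -> float:
    """Perceived bias minus actual bias for one target group of partisans.

    Both dicts map deceiver affiliation ("Democratic" or "Republican") to a mean
    lie rating for the target group: `estimated` holds another group's predictions
    of those ratings, `actual` the target group's own ratings. Bias is taken as
    rating(Democratic deceiver) - rating(Republican deceiver).
    """
    perceived_bias = estimated["Democratic"] - estimated["Republican"]
    actual_bias = actual["Democratic"] - actual["Republican"]
    return perceived_bias - actual_bias


# Hypothetical means (not the study's data): Democrats' predictions of
# Republicans' ratings vs. Republicans' actual ratings.
predicted = {"Democratic": 6.5, "Republican": 3.5}
observed = {"Democratic": 6.0, "Republican": 5.5}
print(misperceived_bias(predicted, observed))  # (6.5 - 3.5) - (6.0 - 5.5) = 2.5
```

A value of zero would indicate an accurately perceived bias. In the hypothetical example, both the overestimated rating for Democratic deceivers and the underestimated rating for Republican deceivers push the value upward, qualitatively mirroring the implicature pattern described above.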

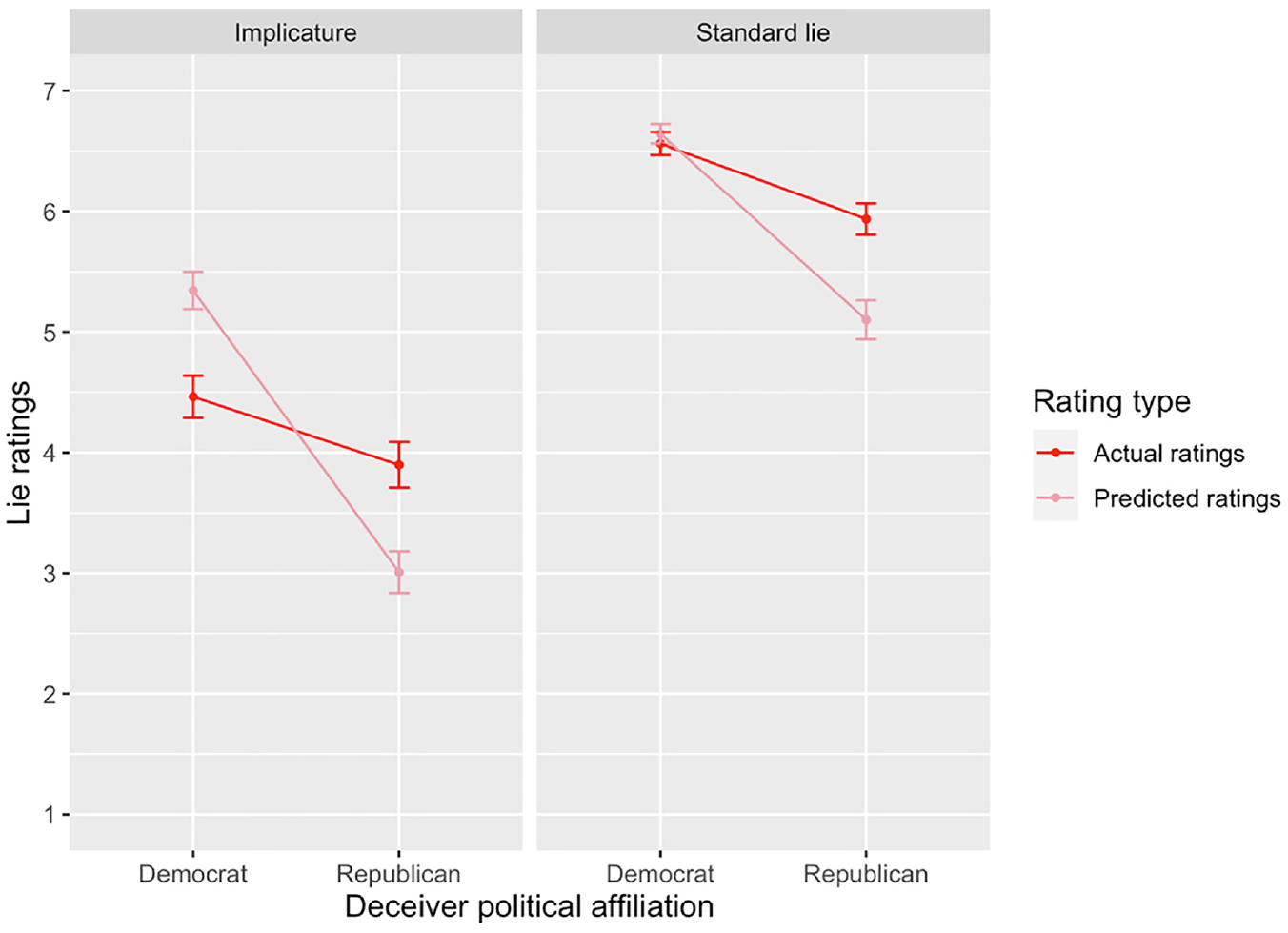

Republican Estimates of Democrat Bias

To examine whether Republican participants believe Democratic participants to be more biased in their lie judgments than reality warrants, we calculated two separate interaction contrasts for deceptive implicatures and standard lies comparing Republican participants’ estimates of Democratic participants’ lie judgments with Democratic participants’ actual lie judgments. The analysis revealed a significant interaction between rating type (estimated vs. actual) and deceiver political affiliation both in deceptive implicatures (z=−11.06, p<.0001) and standard lies (z=−6.52, p<.0001). A graphical examination of the findings (see Figure 4) shows that the interaction for deceptive implicatures was caused by the expected result pattern: Republican participants underestimated Democratic participants’ lie ratings for Democratic deceivers and overestimated their lie ratings for Republican deceivers. For standard lies, the result pattern again deviated from our predictions: While Republican participants indeed underestimated Democratic participants’ lie ratings for Democratic deceivers, they did not overestimate their lie ratings for Republican deceivers. The difference between Democratic participants’ ratings for Democratic and Republican deceivers as predicted by Republican participants (i.e., the perceived bias) amounted to large effects for both deceptive implicatures (d=−1.64 [−1.79, −1.49]) and standard lies (d=−1.14 [−1.28, −1.00]).

Effect of Deceiver Political Affiliation on Republican Participants’ Predictions of Democratic Participants’ Lie Judgments and Democratic Participants’ Actual Lie Judgments for Implicatures and Standard Lies
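The effect sizes in these sections are standardized differences between ratings for Democratic and Republican deceivers. One common definition, Cohen’s d with a pooled standard deviation, is sketched below. This section does not state how d or its confidence intervals were computed (e.g., on per-participant mean ratings or from the model’s residual variance), so the sketch assumes one score per participant and need not reproduce the reported values. The reported signs appear consistent with subtracting ratings for Republican deceivers from ratings for Democratic deceivers.

```python
import math
from statistics import mean, stdev


def cohens_d(group_a: list, group_b: list) -> float:
    """Cohen's d: (mean_a - mean_b) divided by the pooled standard deviation."""
    n_a, n_b = len(group_a), len(group_b)
    pooled_var = ((n_a - 1) * stdev(group_a) ** 2
                  + (n_b - 1) * stdev(group_b) ** 2) / (n_a + n_b - 2)
    return (mean(group_a) - mean(group_b)) / math.sqrt(pooled_var)


# Hypothetical per-participant scores (illustrative only):
# ratings for Democratic deceivers (a) vs. Republican deceivers (b).
a = [5, 6, 7]
b = [3, 4, 5]
print(cohens_d(a, b))  # 2.0
```

Under Cohen’s conventional benchmarks, absolute values of about 0.2, 0.5, and 0.8 mark small, medium, and large effects, which matches the labels used in the text (e.g., d=−0.64 as medium and d=1.01 as large).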

Exploratory Analyses

Democrat Estimates of Democrat Bias

In exploratory analyses, we also tested whether Democratic and Republican participants believe members of their own party to be more (or less) biased than reality warrants. To examine the actual vs. perceived in-group bias of Democratic participants, we calculated two separate interaction contrasts for deceptive implicatures and standard lies comparing Democratic participants’ estimates of Democratic participants’ lie judgments with Democratic participants’ actual lie judgments. The analysis revealed a significant interaction between rating type (estimated vs. actual) and deceiver political affiliation both in deceptive implicatures (z=6.81, p<.0001) and standard lies (z=7.57, p<.0001). A graphical examination of the findings (see Figure 5) shows that the interaction for deceptive implicatures was caused by an underestimation of in-group partisans’ ratings for Democratic deceivers and an overestimation of in-group partisans’ ratings for Republican deceivers. The interaction for standard lies, in contrast, was caused only by an underestimation of in-group partisans’ ratings for Democratic deceivers, while in-group partisans’ ratings for Republican deceivers were predicted accurately. The difference between Democratic participants’ ratings for Democratic and Republican deceivers as predicted by Democratic participants (i.e., the perceived bias) amounted to a medium effect for deceptive implicatures (d=−0.64 [−0.77, −0.52]) and a large effect for standard lies (d=−1.03 [−1.17, −0.89]).

Effect of Deceiver Political Affiliation on Democratic Participants’ Predictions of Democratic Participants’ Lie Judgments and Democratic Participants’ Actual Lie Judgments for Implicatures and Standard Lies

Republican Estimates of Republican Bias

To examine the actual versus perceived in-group bias of Republican participants, we calculated two separate interaction contrasts for deceptive implicatures and standard lies comparing Republican participants’ estimates of Republican participants’ lie judgments with Republican participants’ actual lie judgments. The analysis revealed a significant interaction between rating type (estimated vs. actual) and deceiver political affiliation both in deceptive implicatures (z=−13.60, p<.0001) and standard lies (z=−7.10, p<.0001). A graphical examination of the findings (see Figure 6) shows that the interaction for deceptive implicatures was caused by an overestimation of in-group partisans’ ratings for Democratic deceivers and an underestimation of in-group partisans’ ratings for Republican deceivers. The interaction for standard lies, on the other hand, was caused only by an underestimation of in-group partisans’ ratings for Republican deceivers, while in-group partisans’ ratings for Democratic deceivers were predicted accurately. The difference between Republican participants’ ratings for Democratic and Republican deceivers as predicted by Republican participants (i.e., the perceived bias) amounted to large effects for both deceptive implicatures (d=1.28 [1.14, 1.42]) and standard lies (d=1.01 [0.88, 1.14]).

Effect of Deceiver Political Affiliation on Republican Participants’ Predictions of Republican Participants’ Lie Judgments and Republican Participants’ Actual Lie Judgments for Implicatures and Standard Lies

General Discussion

Actual Bias in Partisans’ Judgments of Lying

Previous research on partisan biases suggests that people often evaluate information more favorably when it supports rather than challenges their political allegiances and beliefs (see Sect. Partisan Bias in Judgments of Lying: Misinformation Spread by Own Versus Opposed Party Members). In the present article, we examined whether this bias also affects partisans’ judgments of whether political representatives have lied. Our findings suggest that it does: Both Democratic and Republican participants were more inclined to judge political representatives who communicated false or misleading information as having lied when the representatives were described as members of an opposed rather than their own political party. However, the bias observed in the present experiments was comparatively small. Although partisans’ lie judgments were significantly lower for own party deceivers, partisans still tended to agree that members of both parties who spread false or misleading information had lied. Surprisingly, we also did not find the extent of the bias to differ between standard lies and deceptive implicatures. Although participants were aware of the ambiguity involved in deceptive implicatures (as suggested by their overall lower lie judgments for deceptive implicatures), they did not exploit this ambiguity to adjust their lie judgments in a politically favorable direction. This contrasts with previous research showing that (partisan) biases are often most pronounced in ambiguous situations (see Sect. Partisan Bias in Judgments of Lying: Misinformation Communicated by Means of Explicit Lies Versus Deceptive Implicatures). Taken together, our findings suggest that people are not always blinded by their partisanship, even when their judgments could easily be adapted to align with their political allegiances.

Perceived Biases in Partisans’ Judgments of Lying

While partisans showed only a slight political bias in their lie judgments, a different picture emerges when it comes to how partisans perceive this bias. In line with previous research showing that partisans’ perceptions of what “the other side” thinks are often overly negative (see Sect. Perceived Partisan Bias in Judgments of Lying), our findings suggest that partisans overestimate how biased political opponents’ lie judgments are. Both Democratic and Republican participants expected their opponents’ lie judgments to be much more favorable (i.e., lower) than they actually were for representatives from the opponents’ party, and much less favorable (i.e., higher) than they actually were for representatives from participants’ own party. The only exception to this pattern concerned partisans’ estimates of how opponents would judge standard lies uttered by members of participants’ own party; here, the estimates of both groups were rather accurate. This is plausibly explained by a ceiling effect: Partisans’ actual lie ratings for standard lies uttered by deceivers from the opposed political camp were already at the ceiling, leaving little room on the given scale for participants to predict even higher ratings. Taken together, our findings suggest that partisans believe their opponents’ lie judgments to follow a highly biased pattern. Political opponents are expected to see the same deceptive implicatures as cases of lying only when uttered by a member of an opposed party, and to agree only reluctantly that members of their own party have lied, even when a false claim has been stated explicitly (when, in fact, partisans’ lie judgments differ only slightly for opposed vs. own party deceivers). As perceiving others as biased has been shown to be related to a preference for competitive over cooperative actions (Kennedy & Pronin, 2008), it seems plausible that perceiving one’s opponents’ lie judgments as biased in this way might foster partisan conflict.
Nevertheless, there is reason for optimism: Unlike actual biases, misperceptions of bias can potentially be mitigated by relatively simple informational interventions (e.g., by informing partisans that their opponents are, in fact, not that biased; see Lees & Cikara, 2020; Mernyk et al., 2022; Ruggeri et al., 2021).

Unlike most previous work, Experiment 2 also examined partisans’ perceptions of bias within their own political camp. Interestingly, we found a result pattern highly similar to partisans’ perceptions of their political opponents: For deceptive implicatures, both Democratic and Republican participants overestimated their own party members’ judgments for opposed party deceivers and underestimated their own party members’ judgments for own party deceivers. For standard lies, both groups underestimated their own party members’ judgments for own party deceivers but correctly estimated their judgments for opposed party deceivers. Although the overestimation of bias was smaller for in-group than for out-group members, the misperceptions were still large enough for partisans to predict that own party members would see a deceptive implicature as a case of lying only when uttered by a political opponent. How do these findings fit with previous research on perceptions of bias? While the naive realism view predicts that misperceptions of bias should be particularly pronounced in the context of disagreement (see Sect. Perceived Partisan Bias in Judgments of Lying), research on the so-called bias blind spot suggests that people also have a general tendency to see others as more biased than themselves (presumably because of self-enhancing motives and an overvaluation of introspective relative to behavioral evidence when judging one’s own vs. other people’s susceptibility to bias; for an overview, see Pronin, 2007). Our findings on in-group perceptions of bias might thus reflect a general tendency to see others (or other partisans) as biased, while our findings for out-group members are consistent with the idea that bias perceptions are heightened in the context of disagreement.

Limitations and Avenues for Future Research

In the present research, partisans’ lie judgments were examined in the context of deceptions about ideologically neutral topics (e.g., economy, taxes, unemployment). While the neutrality of the topics ensured that each statement could convincingly be ascribed to speakers from both political camps (and that people’s lie judgments would not be confounded by their prior beliefs¹⁰), it is important to keep in mind that different results might have emerged had deceptions about ideologically central topics (e.g., abortion, immigration, or climate change) been examined. Also, the present research employed generic party labels instead of the names of real political actors. Generic labels were used because real-world statements made by existing political actors cannot be matched for content, which renders causal inferences regarding the role of the deceiver’s partisanship impermissible.¹¹ Falsely ascribing fictional deceptions to existing political actors, on the other hand, is morally questionable and raises further problems if participants know that a statement was not, in fact, made by the speaker in question. Despite the advantages of our design choices, it should be noted that different results might have emerged had deceptive utterances by well-known elites to whom partisans feel more deeply attached (e.g., Donald Trump or Joe Biden) been examined. Future studies are needed to examine such cases in more detail.¹²

One might worry that, because the present work focused on neutral topics and generic party labels, our findings might lack ecological validity or overall relevance. Let us conclude by addressing this worry. First, as mentioned earlier, the focus on neutral and controlled cases allowed for inferences about the causal role that a deceiver’s partisanship plays in people’s lie judgments, thereby contributing to a partisan bias literature that regularly relies on controlled designs. Second, the present findings are no less ecologically valid than investigations into deceptions by famous political figures or about ideologically laden topics. This is because statements by actors like Trump and Biden about topics such as immigration or climate change are by no means the only deceptions people are confronted with in the political domain. Especially at the level of state and local governments, the vignettes employed in our experiments might even be more realistic. Finally, and perhaps most importantly, the present findings uncovered an important feature of people’s bias perception that might have been overlooked had only high-profile cases such as those discussed above been examined. Specifically, the present work shows that even the slightest party cues (which, in fact, only mildly affect people’s lie judgments) can trigger perceptions of the other side as strongly biased. This finding provides new insight into partisans’ misperceptions of bias that may prove helpful in identifying effective ways to counter them.

Supplemental Material

sj-docx-1-spp-10.1177_19485506231220702 – Supplemental material for Actual and Perceived Partisan Bias in Judgments of Political Misinformation as Lies

Supplemental material, sj-docx-1-spp-10.1177_19485506231220702 for Actual and Perceived Partisan Bias in Judgments of Political Misinformation as Lies by Louisa M. Reins and Alex Wiegmann in Social Psychological and Personality Science

sj-docx-2-spp-10.1177_19485506231220702 – Supplemental material for Actual and Perceived Partisan Bias in Judgments of Political Misinformation as Lies

sj-docx-3-spp-10.1177_19485506231220702 – Supplemental material for Actual and Perceived Partisan Bias in Judgments of Political Misinformation as Lies

sj-docx-4-spp-10.1177_19485506231220702 – Supplemental material for Actual and Perceived Partisan Bias in Judgments of Political Misinformation as Lies

Footnotes

Handling Editor: Federico Christopher

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This research was supported by a grant from the Deutsche Forschungsgemeinschaft for the Emmy Noether Independent Junior Research Group “Experimental Philosophy and the Method of Cases: Theoretical Foundations, Responses, and Alternatives (EXTRA),” project number 391304769.

Ethical Approval

Ethical approval was not required (either by local law or by the involved institutions) for the low-risk studies included in this work.

Informed Consent

Participants gave their informed consent for inclusion prior to participation in the reported studies. All materials, data, and code have been made available (see links in main text).

Supplemental Material

Supplemental material for this article is available online.

Notes

References
