Abstract

Recent years have seen a surge in research on why people fall for misinformation and what can be done about it. Drawing on a framework that conceptualizes truth judgments of true and false information as a signal-detection problem, the current article identifies three inaccurate assumptions in the public and scientific discourse about misinformation: (1) People are bad at discerning true from false information, (2) partisan bias is not a driving force in judgments of misinformation, and (3) gullibility to false information is the main factor underlying inaccurate beliefs. Counter to these assumptions, we argue that (1) people are quite good at discerning true from false information, (2) partisan bias in responses to true and false information is pervasive and strong, and (3) skepticism against belief-incongruent true information is much more pronounced than gullibility to belief-congruent false information. These conclusions have significant implications for person-centered misinformation interventions to tackle inaccurate beliefs.

Although psychologists have studied effects of misinformation for decades (for a review, see Lewandowsky et al., 2012), recent years have seen a surge in research on why people fall for misinformation and what can be done about it (for reviews, see Ecker et al., 2022; Pennycook & Rand, 2021b; Van der Linden, 2022). Three assumptions in the public and scientific discourse related to this work are that (1) people are bad at discerning true from false information, (2) partisan bias is not a driving force in judgments of misinformation, and (3) gullibility to false information is the main factor underlying inaccurate beliefs. Drawing on a framework that conceptualizes truth judgments of true and false information as a signal-detection problem (Batailler et al., 2022), we argue that the three assumptions are inconsistent with the available evidence, which shows that (a) people are quite good at discerning true from false information, (b) partisan bias in responses to true and false information is pervasive and strong, and (c) skepticism against true information that is incongruent with one’s beliefs is much more pronounced than gullibility to false information that is congruent with one’s beliefs. In the current article, we address each of these points and their implications for person-centered misinformation interventions to tackle inaccurate beliefs.

Judging True and False Information

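A helpful framework to illustrate the key points of our analysis is signal detection theory (SDT; Green & Swets, 1966). Although SDT was originally developed for research on visual perception, its core concepts can be applied to any decision problem involving binary categorical judgments of two stimulus classes (e.g., judgments of true and false information as either true or false). According to SDT, a correct judgment of true information as true can be described as a hit, an incorrect judgment of false information as true as a false alarm, an incorrect judgment of true information as false as a miss, and a correct judgment of false information as false as a correct rejection. Within this framework, judgments of true and false information are shaped by two distinct factors.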

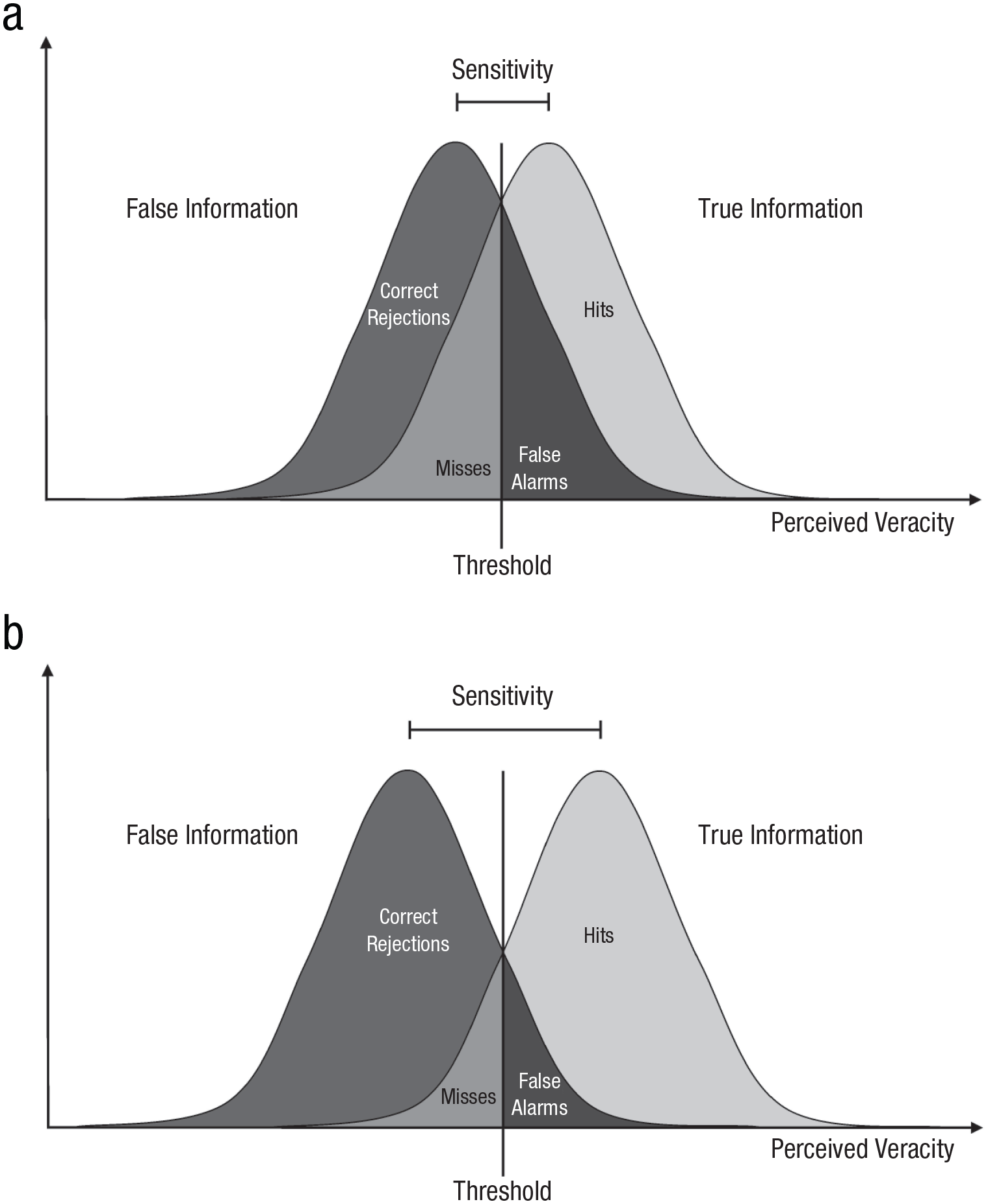

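The first factor, labeled sensitivity, refers to the distance between the distributions of judgments about true and false information along the dimension of perceived veracity, that is, how well a person discriminates between true and false information (see Fig. 1).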

Fig. 1. Graphical depiction of sensitivity within signal detection theory, reflecting the distance between distributions of judgments about true and false information along the dimension of perceived veracity. Distributions that are closer together along the perceived-veracity dimension have a lower sensitivity, indicating that participants’ ability to correctly discriminate between true and false information is relatively low (upper panel). Distributions that are further apart along the perceived-veracity dimension have a higher sensitivity, indicating that participants’ ability to correctly discriminate between true and false information is relatively high (lower panel).

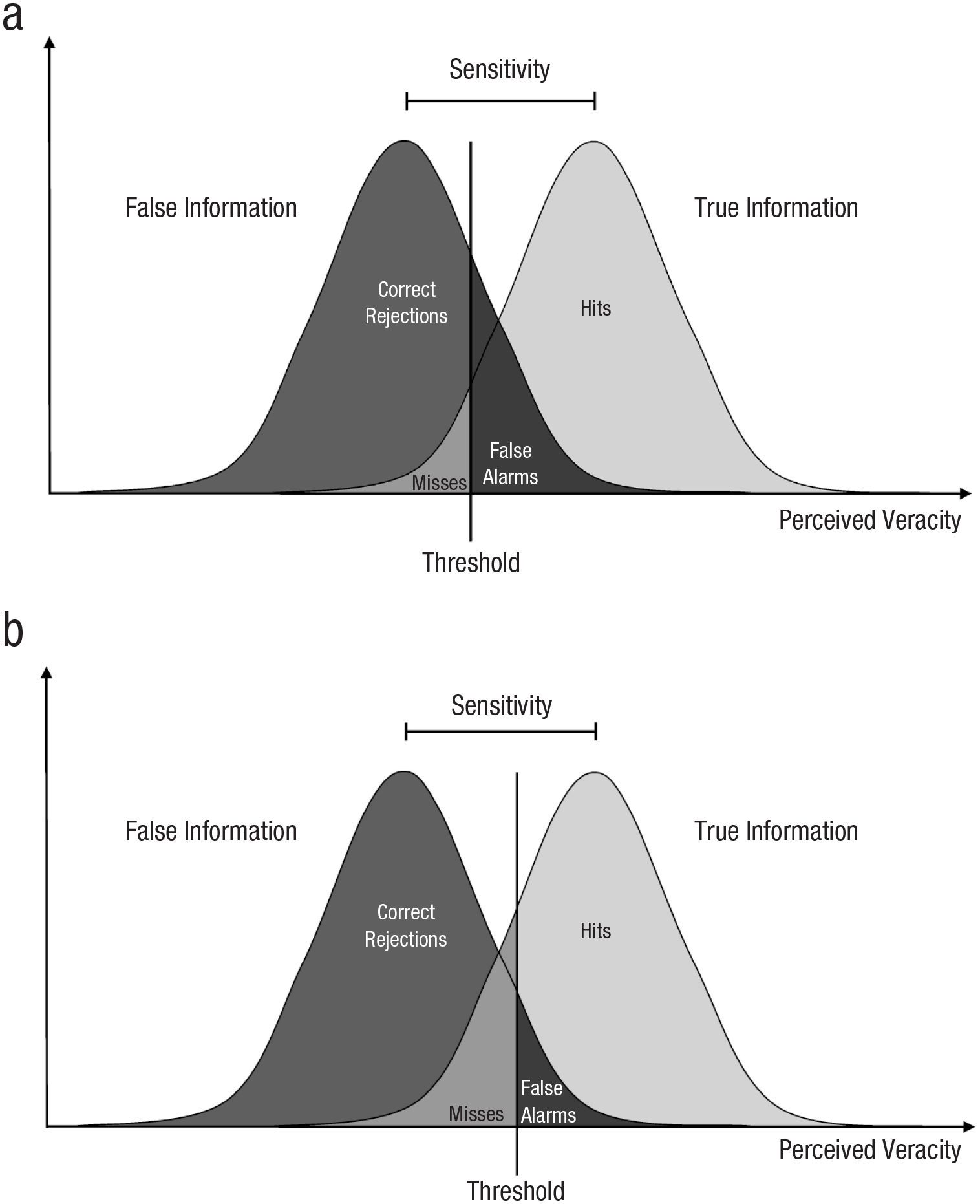

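The second factor, labeled threshold, refers to the degree of veracity a person must perceive before judging information as true (see Fig. 2).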

Fig. 2. Graphical depiction of threshold within signal detection theory, reflecting the point along the dimension of perceived veracity at which a participant switches from one judgment to the other. When judging information as true (vs. false), threshold indicates the degree of veracity the participant must perceive before judging information as true. Any stimulus with greater perceived veracity than that value will be judged as true, whereas any stimulus with lower perceived veracity than that value will be judged as false. A low threshold would indicate that a participant is generally more likely to judge information as true (upper panel), whereas a high threshold would indicate that a participant is generally less likely to judge information as true (lower panel).

An important aspect of the distinction between sensitivity and threshold is that the two factors are independent. For example, a higher threshold is not necessarily associated with higher sensitivity, because a higher threshold leads to greater accuracy in judgments of false information (i.e., more false information is correctly judged as false) and, at the same time, greater inaccuracy in judgments of true information (i.e., more true information is incorrectly judged as false). Conversely, a lower threshold is not necessarily associated with lower sensitivity, because a lower threshold leads to greater inaccuracy in judgments of false information (i.e., more false information is incorrectly judged as true) and, at the same time, greater accuracy in judgments of true information (i.e., more true information is correctly judged as true). Thus, overall accuracy in distinguishing between true and false information (i.e., sensitivity) often remains unaffected by higher or lower thresholds, in that greater accuracy in judgments of false information is compensated by greater inaccuracy in judgments of true information, or vice versa (see Fig. 2). As we explain next, these considerations are important when assessing the validity of the three assumptions that (1) people are bad at discerning true from false information, (2) partisan bias is not a driving force in responses to misinformation, and (3) gullibility to false information is the main factor underlying inaccurate beliefs.
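The independence of sensitivity and threshold can be illustrated with a minimal sketch. It assumes the common equal-variance Gaussian version of SDT, in which sensitivity is indexed by d′ and threshold by the criterion c; the function names `rates_from_model` and `sdt_indices` and the numeric values are hypothetical choices made for illustration, not taken from the article.

```python
from statistics import NormalDist

STD_NORMAL = NormalDist()  # standard normal distribution (mean 0, SD 1)


def rates_from_model(d_prime: float, criterion: float) -> tuple[float, float]:
    """Return (hit rate, false-alarm rate) under an equal-variance Gaussian model.

    Assumption: perceived veracity of false items is centered at -d'/2 and of
    true items at +d'/2; an item is judged true if it exceeds `criterion`.
    """
    hit_rate = 1 - STD_NORMAL.cdf(criterion - d_prime / 2)
    false_alarm_rate = 1 - STD_NORMAL.cdf(criterion + d_prime / 2)
    return hit_rate, false_alarm_rate


def sdt_indices(hit_rate: float, false_alarm_rate: float) -> tuple[float, float]:
    """Recover (d', c) from the hit rate and the false-alarm rate."""
    z_hit = STD_NORMAL.inv_cdf(hit_rate)
    z_fa = STD_NORMAL.inv_cdf(false_alarm_rate)
    return z_hit - z_fa, -(z_hit + z_fa) / 2


# Hold sensitivity fixed and move only the threshold (hypothetical values).
for criterion in (-0.5, 0.0, 0.5):
    hits, false_alarms = rates_from_model(d_prime=1.5, criterion=criterion)
    d_prime, c = sdt_indices(hits, false_alarms)
    print(f"c={c:+.2f}  true judged true={hits:.2f}  "
          f"false judged true={false_alarms:.2f}  d'={d_prime:.2f}")
```

In this sketch, raising the criterion lowers both the proportion of true items judged true and the proportion of false items judged true, while the recovered d′ stays the same.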

Myth 1: People Are Bad at Discerning True From False Information

A central idea in the psychological literature is that person-centered misinformation interventions require approaches that effectively increase people’s ability to discern true from false information (Guay et al., 2023). That is, interventions should increase sensitivity. A tacit assumption underlying this quest is that people are not very good at discerning true from false information (i.e., people show low sensitivity). However, many experts on misinformation disagree with this assumption (Altay, Berriche, Heuer, et al., 2023), and a closer look at the available evidence suggests that it is incorrect (for a meta-analysis, see Pfänder & Altay, in press). For example, in a review of data from more than 15,000 participants, Pennycook and Rand (2021a) report an average difference of 2.9 standard deviations in judgments of real versus fake news as true, suggesting very high sensitivity in truth judgments of real and fake news. To provide a frame of reference, differences of that size are comparable to the differences between Democrats and Republicans on self-report measures of liberal versus conservative political ideology (Gawronski et al., 2023). These findings suggest that people are surprisingly good at discerning true from false information.
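One way to get a feel for a difference of 2.9 standard deviations is to convert it into the probability that a randomly chosen true item is rated as more veridical than a randomly chosen false item. The sketch below assumes two normal distributions with equal variance, which is an assumption made here for illustration and not something the reviewed data are claimed to satisfy; the function name `probability_of_superiority` is likewise hypothetical.

```python
from math import sqrt
from statistics import NormalDist


def probability_of_superiority(standardized_difference: float) -> float:
    """P(random true item rated above random false item).

    Assumption: both rating distributions are normal with equal variance, so
    the difference of two independent draws has SD sqrt(2).
    """
    return NormalDist().cdf(standardized_difference / sqrt(2))


p = probability_of_superiority(2.9)
print(f"Probability of superiority for a 2.9 SD difference: {p:.3f}")
```

Under these assumptions the probability comes out at roughly .98, compared with .50 for an observer who cannot tell true from false information at all.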

An important qualification of this conclusion is that it is specific to judgments of truth and does not generalize to sharing decisions. For the latter, sensitivity is typically close to chance level, in that people are as likely to share false information as they are to share true information (Gawronski et al., 2023). The discrepancy between truth judgments and sharing decisions suggests that although people can distinguish between true and false information with a high degree of accuracy when they are directly asked to judge the truth of information, they do not seem to utilize this skill in decisions to share information, possibly because they do not think much about truth when sharing information online. In line with this idea, nudging people to think about truth when making sharing decisions has been found to be effective in improving the quality of shared information (Pennycook et al., 2020). Nevertheless, the finding that people are able to distinguish between true and false information with a high degree of accuracy contradicts the idea that people are not very good at telling the difference between true and false information.

Myth 2: Partisan Bias Is Not a Driving Force in Judgments of Misinformation

Although people are quite good at discerning true from false information, they are not perfect. People still make errors when judging the veracity of true and false information. The available evidence suggests that these errors are highly systematic, in that (a) people are much more likely to mistakenly judge false information as true when it is congruent with their political views than when it is incongruent and (b) people are much more likely to mistakenly judge true information as false when it is incongruent with their political views than when it is congruent. More generally, people have different thresholds for belief-congruent and belief-incongruent information, in that they tend to (a) accept belief-congruent information as true regardless of whether it is true or false and (b) reject belief-incongruent information as false regardless of whether it is true or false (Batailler et al., 2022; Gawronski et al., 2023; Nahon et al., 2024). Such partisan-bias effects in thresholds have been found to be pervasive and strong among both left-leaning and right-leaning participants, with effect sizes that exceed the conventional benchmark for a large effect.

Although many experts believe that partisan bias is a major factor underlying susceptibility to misinformation (Altay, Berriche, Heuer, et al., 2023), there is an influential narrative in the misinformation literature claiming that partisan bias is not a driving force in responses to misinformation (e.g., Pennycook & Rand, 2019, 2021b). How can these conflicting views be reconciled? The answer to this question is that the dismissal of partisan bias is based on a questionable conceptualization of partisan bias in terms of sensitivity rather than threshold. For example, Pennycook and Rand (2021b) argued that partisan bias should lead to lower truth discernment for belief-congruent compared with belief-incongruent information. Yet, if anything, the empirical evidence suggests the opposite, which led them to dismiss partisan bias as a driving force in responses to misinformation. However, within an SDT framework, partisan bias is reflected in differential thresholds rather than differential sensitivity—and when conceptualized in terms of differential thresholds, partisan-bias effects are large and pervasive.

To illustrate why a conceptualization of partisan bias in terms of differential thresholds is more appropriate, consider a hypothetical case where Participant A judges 70% of true information as true and 30% of false information as true. Further imagine that Participant A shows this pattern for information that is congruent with their political views as well as for information that is incongruent with their political views. Now imagine a Participant B who judges 90% of true information as true and 50% of false information as true when the information is congruent with their political views. Imagine further that Participant B judges 50% of true information as true and 10% of false information as true when the information is incongruent with their political views. In a conceptualization of partisan bias in terms of differential sensitivity (e.g., Pennycook & Rand, 2021b), neither Participant A nor Participant B shows partisan bias, because each participant has the same sensitivity for belief-congruent and belief-incongruent information. For both types of information, each participant shows a difference of 40 percentage points in the acceptance of true versus false information.

Yet, despite the identical sensitivities for belief-congruent and belief-incongruent information, Participant B clearly evaluates information in a manner biased toward their political views, in that the participant is much more likely to judge information as true when it is congruent than when it is incongruent with their political views (see Stanovich et al., 2013). Within SDT, this tendency is reflected in different thresholds, in that Participant B shows a lower threshold for belief-congruent compared with belief-incongruent information (Batailler et al., 2022). Because a conceptualization of partisan bias in terms of sensitivity misses this important difference, such a conceptualization can lead to the mistaken conclusion that partisan bias is not a driving force in responses to misinformation (e.g., Pennycook & Rand, 2021b). Yet, when partisan bias is conceptualized in terms of differential thresholds, partisan bias is pervasive and strong (Gawronski et al., 2023), even in studies that have been claimed to show its irrelevance (see Batailler et al., 2022; Gawronski, 2021). Although the mechanisms underlying partisan bias in judgments of true and false information are still unclear (see Ditto et al., 2025; Tappin et al., 2020), these considerations suggest that (a) the proclaimed irrelevance of partisan bias is based on a questionable conceptualization in terms of differential sensitivities and (b) partisan bias in responses to misinformation is pervasive and strong when it is conceptualized in terms of differential thresholds.
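The hypothetical Participants A and B can be worked through numerically. The sketch below uses the acceptance rates given in the example and computes three quantities: the raw difference in acceptance of true versus false information, and the equal-variance Gaussian indices d′ (sensitivity) and c (threshold). The choice of d′ and c as indices and the helper name `summarize` are assumptions made for illustration.

```python
from statistics import NormalDist

z = NormalDist().inv_cdf  # inverse of the standard normal CDF

# (proportion of true items judged true, proportion of false items judged true)
participants = {
    "A": {"congruent": (0.70, 0.30), "incongruent": (0.70, 0.30)},
    "B": {"congruent": (0.90, 0.50), "incongruent": (0.50, 0.10)},
}


def summarize(hit_rate: float, false_alarm_rate: float) -> dict[str, float]:
    """Raw acceptance difference plus equal-variance SDT indices."""
    return {
        "difference": hit_rate - false_alarm_rate,
        "d_prime": z(hit_rate) - z(false_alarm_rate),
        "criterion": -(z(hit_rate) + z(false_alarm_rate)) / 2,
    }


results = {
    name: {condition: summarize(*rates) for condition, rates in conditions.items()}
    for name, conditions in participants.items()
}

for name, conditions in results.items():
    for condition, stats in conditions.items():
        print(f"Participant {name}, {condition:<11}: "
              f"difference={stats['difference']:.2f}  "
              f"d'={stats['d_prime']:.2f}  c={stats['criterion']:+.2f}")
```

Under these assumptions, Participant A has a criterion of about zero for both types of information. Participant B has the same d′ (about 1.28) for both types of information but a criterion of about −0.64 for belief-congruent and +0.64 for belief-incongruent information, which is the threshold difference that a sensitivity-based definition of partisan bias misses.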

Myth 3: Gullibility to False Information Is the Main Factor Underlying Inaccurate Beliefs

A conceptualization of partisan bias in terms of differential thresholds includes two components: (a) a tendency to judge belief-congruent information as true regardless of whether it is true or false and (b) a tendency to judge belief-incongruent information as false regardless of whether it is true or false (Batailler et al., 2022). The first component involves gullibility to belief-congruent false information due to a low threshold for belief-congruent information (sometimes called confirmation bias; see Edwards & Smith, 1996); the second component involves skepticism against belief-incongruent true information due to a high threshold for belief-incongruent information (sometimes called disconfirmation bias; see Edwards & Smith, 1996). In our work, we consistently found that effect sizes for the second component are much larger than effect sizes for the first component (Gawronski et al., 2023; Nahon et al., 2024). A forthcoming meta-analysis with data from 193,282 participants from 40 countries across six continents found the same asymmetry (Pfänder & Altay, in press).

The identified asymmetry is important because inaccurate beliefs can be rooted in both gullibility to false information and skepticism against true information. Yet, traditional misinformation interventions primarily aim at reducing gullibility to false information, and reducing skepticism against true information likely requires different types of interventions (Altay, Berriche, & Acerbi, 2023)—especially when this skepticism is selectively directed at belief-incongruent information without being applied to belief-congruent information (see Ditto & Lopez, 1992; Taber & Lodge, 2006). An example illustrating the significance of this issue involves the effects of gamified inoculation interventions, which expose game players to weak examples of misinformation and forewarn them about the ways in which they might be misled (for a review, see Van der Linden, 2024). Although gamified inoculation interventions have been found to reduce incorrect judgments of false information as true, a reanalysis of the available data using SDT suggests that this effect is driven by increased thresholds, not increased sensitivity (Modirrousta-Galian & Higham, 2023). That is, the interventions made participants more likely to judge both false and true information as false, but the interventions did not improve participants’ overall accuracy in discerning true from false information. Importantly, if enhanced skepticism from a misinformation intervention is selectively directed at belief-incongruent information without being directed at belief-congruent information, the intervention could even have detrimental effects by exacerbating a major source of inaccurate beliefs (i.e., the dismissal of belief-incongruent true information as false). 
The broader points are that (a) skepticism against belief-incongruent true information is much stronger than gullibility to belief-congruent false information and (b) reducing skepticism against true information likely requires different types of interventions than reducing gullibility to false information (Altay, Berriche, & Acerbi, 2023), especially when skepticism is primarily directed against belief-incongruent information.

Conclusion

Decisions based on inaccurate beliefs can have detrimental effects not only for the individual but also for society. The COVID-19 pandemic provides an illustrative example of this issue (Van Bavel et al., 2024). Yet, effective interventions to tackle inaccurate beliefs also require an accurate understanding of why people hold inaccurate beliefs. The current analysis identified three inaccurate assumptions in the public and scientific discourse about misinformation. Our analysis suggests that (1) counter to the idea that people are bad at discerning true from false information, people are quite good at discerning true from false information; (2) counter to the claim that partisan bias is not a driving force in judgments of misinformation, partisan bias in truth judgments of true and false information is pervasive and strong; and (3) counter to the idea that gullibility to false information is the main factor underlying inaccurate beliefs, skepticism against belief-incongruent true information is much more pronounced than gullibility to belief-congruent false information. Although extant interventions may be helpful in tackling belief in false information, different kinds of interventions are likely needed to address the hitherto neglected problem that people readily dismiss true information as false when it conflicts with their views.

Recommended Reading

Batailler, C., Brannon, S. M., Teas, P. E., & Gawronski, B. (2022). (See References). An introduction to the use of signal detection theory for research on the psychology of misinformation, including reanalyses of existing data to illustrate the value of signal detection theory.

Ditto, P. H., Celniker, J. B., Spitz Siddiqi, S., Güngör, M., & Relihan, D. P. (2025). (See References). A review of research on partisan bias in political judgment, including a detailed analysis of debates about the processes underlying partisan bias.

Ecker, U. K. H., Lewandowsky, S., Cook, J., Schmid, P., Fazio, L. K., Brashier, N., Kendeou, P., Vraga, E. K., & Amazeen, M. A. (2022). (See References). A review of research on why people fall for misinformation and why effects of misinformation are often difficult to correct.

Pfänder, J., & Altay, S. (in press). (See References). A quantitative synthesis of studies that investigated judgments of misinformation, comprising data from 193,282 participants from 40 countries across six continents.