Abstract

Coordinated influence campaigns on social media have become an increasingly important tool for political and economic elites to sway public opinion in their favor. As the study of this phenomenon has so far largely focused on traces of such campaigns on social media itself, we know relatively little about the people and networks implementing them. Furthermore, existing literature offers limited analytical handles to delineate and analyze different forms of influence operations. To address these challenges, we employ interviews with fifty-two members of the “cyber troops” implementing such operations in Indonesia. On the basis of this material, we propose that three key features—being secretly funded, highly coordinated, and involving mostly anonymous accounts—distinguish cyber troops from other types of domestic influence operations. In Indonesia cyber troops involve transient, project-based collaborations among freelancing individuals, not coincidentally mirroring the character of the country’s election campaigns. This rapidly growing industry is cementing the already oligarchic character of Indonesia’s democracy.

Introduction

Over the last decade, concerns have grown about the increasing use of social media to manipulate public opinion (e.g., Bennett and Livingston 2018; Bergh 2020; Bradshaw 2020; Bradshaw et al. 2021; Cantini et al. 2022; Freelon and Wells 2020). While influence operations in cyberspace have long been associated with foreign information warfare and interference, increasingly they are used in domestic political affairs as well. The body of evidence supporting the prevalence of “domestic influence operations” through social media is expanding. Martin et al. (2023) have identified such operations in twenty-five countries, indicating a growing trend. Additionally, a comprehensive study by Bradshaw et al. (2021) found instances of public opinion manipulation through social media in eighty-two countries worldwide.

This article addresses two challenges in the study of this phenomenon, one analytical and one empirical. First, employing broad concepts such as “computational propaganda” (Bradshaw et al. 2021), the existing literature offers limited analytical handles to delineate and analyze different forms of influence operations occurring in different political conditions. In this article, we develop a definition of “cyber troops,” involving three crucial features—being secretly funded, highly coordinated, and involving mostly anonymous accounts—to better distinguish this phenomenon from other types of influence operations. Based on in-depth research of cyber troops in Indonesia, we argue that cyber troops represent a particular type of influence operation which, in this case, evolved from specific political conditions and practices in Indonesia, but which is not unique to the Indonesian case. Second, as most studies are based either on content analysis of media reports (Bradshaw et al. 2021; Martin et al. 2023; Masduki 2022) or on quantitative analyses of Twitter data, often using social network analysis (Golovchenko et al. 2020; Keller and Klinger 2019; Linvill and Warren 2020; Sastramidjaja and Wijayanto 2022; Uyheng et al. 2021), we have acquired a fairly good understanding of how these manipulative networks operate online. However, the actors and networks implementing such public opinion manipulation through social media have received much less attention (notable exceptions include Ong and Cabañes 2018; Zhdanova and Orlova 2019). Given the considerable and growing impact that cyber troops have on public debate and democracy, there is an urgent need to better understand these operations: how are cyber troops organized and funded, how are their campaigns devised, and what motivates these paid social media operators—the “cybertroopers”—to do this kind of work?

This article addresses these questions using interviews with fifty-two individuals who have been involved in such secretly funded, coordinated social media campaigns to manipulate public opinion in Indonesia. The likely reason for the relative absence of this kind of research is that such individuals are difficult to contact and reluctant to disclose details about their work. We addressed this challenge by employing five purposefully selected field researchers; they all had connections with cybertroopers or had previously worked as cybertroopers themselves, which enabled them to contact informants and inspire trust. Over the period of one year (between January and December 2021), they succeeded in interviewing twenty-three “cybertroopers” (fake account operators), twenty-two influencers, two content creators, and five coordinators. We will further elaborate our methodology below.

Based on this unique interview material, this article contributes to the literature on influence operations in two ways. First, we offer a rare insight from “behind the scenes” into the secretive influence operations, illuminating the organization, infrastructure, and workflow. Second, we use this material to develop a categorization of different actors within influence operations, which we then use to distinguish cyber troops from other types of influence operations. We find that, in Indonesia, a sizable industry of cyber troops has emerged, engaging in highly coordinated social media campaigns on behalf of political and economic elites. We observe that these cyber troops consist of versatile networks set up temporarily for particular campaigns, mirroring, not coincidentally, the organization of election campaigns in Indonesia. We conclude that cyber troops are cementing the already oligarchic character of Indonesia’s democracy (Hadiz and Robison 2004; Winters 2011), as they are used to bend public opinion toward the interests of the wealthy elites employing them.

The article proceeds as follows: In the following section, we review available conceptual approaches before developing our definition of the particular type of influence operation executed by cyber troops. After outlining our methodology, we discuss how these networks are organized in Indonesia and analyze the character of their collaboration. We subsequently analyze how cyber troops grew out of the networks organizing election campaigns and discuss their financing. We conclude by highlighting the ways in which cyber troops affect the nature of Indonesia’s democracy.

Cyber Troops and Influence Operations: Some Analytical Distinctions

Being a relatively new topic facing considerable methodological challenges, the burgeoning literature on public opinion manipulation through social media struggles to define and distinguish different types of online influence operations. Broadly speaking, this field first defined itself in terms of mis- or disinformation being spread online. In an influential study of the Oxford Internet Institute, for example, Woolley and Howard (2017: 4) define “computational propaganda” in terms of distributing “misleading information over social media networks (. . .) to manipulate public opinion.” In this way, a wide range of publications on “networked disinformation” (Ong and Cabañes 2018), “digital disinformation” (Cabañes 2020), or “disinformation campaign production” (Ong and Tapsell 2022) take the nature of the disseminated information as the defining characteristic of such campaigns (see also Freelon and Wells 2020). The drawback of this approach is that such campaigns only partly revolve around spreading disinformation. These campaigns also focus on amplifying particular views and opinions, harassing opposing voices, and sometimes simply disseminating particular “real” news that suits a particular agenda (e.g., Gaw et al. 2023: 16–20). Spreading disinformation is just one aspect of campaigns to manipulate public opinion.

Addressing this limitation, the term “influence operations” is gaining ground as an alternative (e.g., Alizadeh et al. 2020; Barrie and Siegel 2021; Bergh 2020; Bradshaw 2020; Gaw et al. 2023), referring more broadly to coordinated campaigns to influence public opinion. The conceptual challenge of this definition is that it could potentially incorporate an overly wide range of different actors, as digital marketers and social media activists also aim at influencing and sometimes manipulating public opinion (e.g., Sinpeng 2021). Similarly, both highly coordinated click-farms and the activism of ideologically driven trolls might fall under this definition. How might such very different forms of influence operations be distinguished so as to arrive at categories that could both facilitate analysis and resonate empirically?

The majority of studies do not provide clear definitions (e.g., Han 2015; Masduki 2022; Ong and Tapsell 2022). Other studies focus solely on specific actors in particular settings, such as “China’s 50 cent army,” limiting a concept’s applicability in other contexts (King et al. 2017). Bradshaw et al. (2021: 1) do define cyber troops, in terms of “government or political party actors tasked with manipulating public opinion online.” This definition, however, omits the element of inauthenticity that others do associate with cyber troops, while it includes the everyday ways in which government institutions use social media accounts to inform the public about their policies. Online trolls, finally, are sometimes narrowly defined as paid fake account operators (Linvill and Warren 2020), but sometimes more broadly understood in terms of their “deceptive, destructive, or disruptive” behavior online (Buckels et al. 2014). In short, definitions in the surveyed literature do not yet adequately delineate different types of influence operations. Building on this literature and our research, we propose to address this conceptual challenge by departing from a categorization of the various actors who can participate in influence operations. As noted, many of the abovementioned studies are based on either content analysis of media reports (e.g., Bradshaw et al. 2021) or quantitative analyses of Twitter data (e.g., Linvill and Warren 2020), which might explain both the preference for definitions based on characteristics that are observable online and the inability to identify the types of actors involved in these operations. This is where qualitative, interview-based studies can make a relevant contribution, as they allow for defining and distinguishing influence operations in terms of the varied networks and actors engaged in such operations.

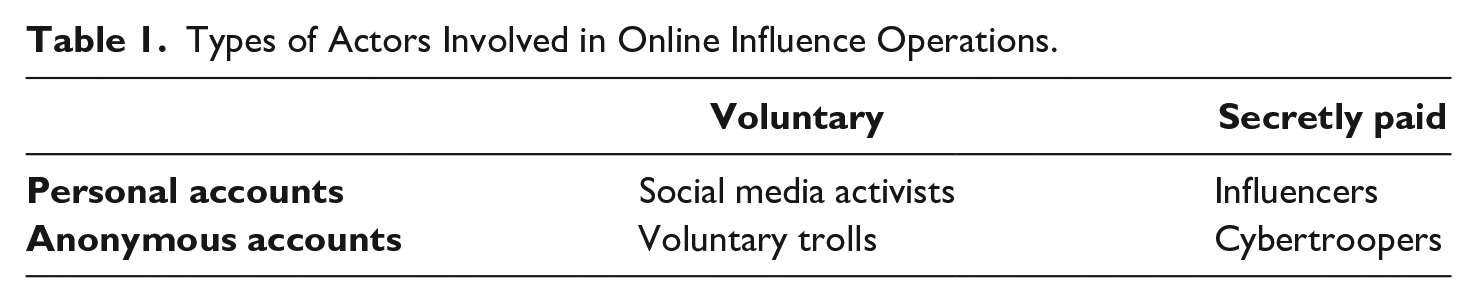

We propose that influence operations can be distinguished by paying attention to the different kinds of actors involved in them. Taking inspiration from our fieldwork, we propose to distinguish the different actors in terms of two key comparative dimensions: whether they are (secretly) paid, and whether they use anonymous accounts. Table 1 summarizes how these dimensions serve to distinguish four different types of actors, without claiming that these exhaust all possible participating actors.

Table 1. Types of Actors Involved in Online Influence Operations.

Our first comparative dimension is whether actors are secretly paid for disseminating content online, either in the form of a monthly salary (for the duration of longer-running campaigns) or a fee per campaign, tweet, or account. This financial aspect distinguishes cybertroopers—that is, paid anonymous social media account operators—and certain influencers from social media activists and online voluntary trolls whose efforts to influence public opinion are not directly motivated by material gain—such as the group of volunteers referring to itself as “Trump’s Online War Machine,” which operates anonymously while flooding social media with content that lionizes the former president (Beisinger 2023). The paid aspect is important to distinguish cybertroopers from such voluntary efforts as funded influence operations tend to promote particular interests of the powerful and the wealthy, which voluntary troll armies might not do. A critical aspect to further highlight is the secrecy surrounding this financial compensation: The efficacy and credibility of the content disseminated by cybertroopers rely on the misconception of the public that they are genuine individuals—or, for influencers, that they express genuine, personal opinions—thus deliberately leaving their audience unaware of their remuneration (see also Martin et al. 2023: 2). Accordingly, some studies describe their functioning as “online astroturfing” (e.g., Chan 2022; Hameleers 2023; Keller and Klinger 2019). A second comparative dimension is whether participating actors use anonymous or their own personal accounts, distinguishing cybertroopers and voluntary trolls from social media activists and influencers who usually use their real identity (Riedl et al. 2023). Cybertroopers, then, are those being secretly paid for disseminating specific political messages using social media accounts that either involve a fake identity or lack a personal name.
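The two comparative dimensions can be made concrete as a simple two-by-two lookup. The Python sketch below is purely illustrative: the class and function names (`Actor`, `ActorType`, `classify`) are hypothetical, and the cell labels follow the four actor types named in the text rather than any coding scheme used in the research itself. As noted above, the four types are not claimed to exhaust all possible participating actors.

```python
from dataclasses import dataclass
from enum import Enum


class ActorType(Enum):
    # Labels follow the four actor types named in the text.
    CYBERTROOPER = "cybertrooper"
    PAID_INFLUENCER = "paid influencer"
    VOLUNTARY_TROLL = "voluntary troll"
    ACTIVIST = "social media activist"


@dataclass(frozen=True)
class Actor:
    secretly_paid: bool      # first dimension: undisclosed remuneration
    anonymous_account: bool  # second dimension: fake or nameless account


def classify(actor: Actor) -> ActorType:
    """Map the two dimensions onto one of the four actor types."""
    if actor.secretly_paid:
        if actor.anonymous_account:
            return ActorType.CYBERTROOPER
        return ActorType.PAID_INFLUENCER
    if actor.anonymous_account:
        return ActorType.VOLUNTARY_TROLL
    return ActorType.ACTIVIST


# Example: a secretly paid operator of a nameless account.
print(classify(Actor(secretly_paid=True, anonymous_account=True)).value)
```

Treating both dimensions as booleans is a simplification; in practice, as the text stresses, influencers only "usually" use their real identity, so real cases may sit between cells.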

This categorization of actors is helpful to define cyber troops and to distinguish this phenomenon from other types of influence operations. We distinguish cybertroopers (a specific actor) from cyber troops (a network), which we define as networks of secretly paid actors using mostly anonymous social media accounts to engage in coordinated campaigns to manipulate public opinion. Before empirically substantiating and illustrating this definition in subsequent sections, we will briefly highlight three key elements. First, a key characteristic is that the actors involved in cyber troops are secretly paid, a feature which distinguishes cyber troops from both volunteer activism and digital marketing. Second, cyber troops mostly use anonymous or fake accounts, which further distinguishes them from digital marketing and social media activism. The bulk of the distribution of content occurs through social media accounts that either involve a fake identity or lack a personal name. We stress, however, the word “mostly” in our definition: as discussed below, occasionally cyber troops do actively collaborate with, and pay, influencers operating under their own name.

A third distinguishing feature of cyber troops is the concerted coordination of online campaigns to influence public opinion through organized networks. This online coordination goes beyond the kind of collaboration often characterizing social media activism, such as actively sharing and endorsing each other’s posts and hashtags. The coordination involves a division of tasks in well-organized teams, coordinated timing of postings, and concerted dissemination of shared, strategically selected content, typically with the aim of making a particular narrative a trending topic or drowning out opposing views. This coordinated division of tasks also involves interaction between cybertroopers and influencers—as cybertroopers disseminate and amplify (paid) posts of influencers.

Having thus proposed an approach to distinguish different influence operations and to define and delineate the phenomenon of cyber troops, we will discuss the functioning of cyber troops in Indonesia to substantiate and illustrate these distinctions. We start by clarifying how we gathered our material on this difficult-to-study phenomenon.

Methodology

To address the challenge of contacting and interviewing members of secretive cyber troops that are difficult to approach and reluctant to share information, we relied on local researchers who had either worked as cybertroopers themselves or had good connections within these networks. The subsequent selection of informants proceeded as follows: First, we employed a social media analysis tool from Drone Emprit Academic 1 to crawl tweets related to five key public debates taking place in 2019 and 2020: the 2019 presidential election, Indonesia’s COVID-19 policy, the chairmanship of Partai Demokrat, and the adoption of two controversial laws, one concerning Indonesia’s Corruption Eradication Commission (KPK) and the other a wide-ranging pro-business law (the Omnibus Law on Job Creation). We subsequently analyzed accounts with high-volume posts to manually identify suspicious accounts based on three criteria: participation in sudden dissemination of a particular narrative (i.e., hashtags that quickly achieve high volume), frequent sharing of content similar to other accounts, and a lack of meaningful interaction with other accounts. Having thus identified possible cybertrooper accounts, we asked our field researchers whether they knew any of these accounts. This led to the identification of about 80 percent of the cybertroopers we ended up interviewing. The rest of the informants—including influencers and other actors involved in cyber troops—could be contacted from our researchers’ own networks and through referrals from previous interviews (“snowballing”). This selection process enabled us to successfully interview fifty-two individuals involved in cyber troops.
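The three screening criteria were applied manually in this study. For readers who wish to see how such criteria might be operationalized as an automated pre-screen, the following Python sketch offers one possible reading. Everything in it is an assumption made for illustration: the `Tweet` fields, the use of replies as a proxy for "meaningful interaction," exact text matching as a proxy for "similar content," and all thresholds. It does not describe the Drone Emprit Academic tool or the procedure actually followed.

```python
from collections import defaultdict
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass(frozen=True)
class Tweet:
    account: str
    text: str
    hashtag: str
    posted_at: datetime
    is_reply: bool  # assumed proxy for meaningful interaction


def burst_hashtags(tweets, window=timedelta(hours=1), min_volume=3):
    """Hashtags reaching min_volume tweets within `window` of first use."""
    times_by_tag = defaultdict(list)
    for t in tweets:
        times_by_tag[t.hashtag].append(t.posted_at)
    bursts = set()
    for tag, times in times_by_tag.items():
        first = min(times)
        if sum(1 for ts in times if ts - first <= window) >= min_volume:
            bursts.add(tag)
    return bursts


def candidates(tweets, min_shared=2, max_reply_share=0.1):
    """Accounts meeting all three criteria (illustrative thresholds)."""
    bursts = burst_hashtags(tweets)
    accounts_by_text = defaultdict(set)
    posts_by_account = defaultdict(list)
    for t in tweets:
        accounts_by_text[t.text].add(t.account)
        posts_by_account[t.account].append(t)
    flagged = []
    for account, posts in posts_by_account.items():
        # Criterion 1: took part in a sudden, high-volume hashtag.
        in_burst = any(p.hashtag in bursts for p in posts)
        # Criterion 2: repeatedly posted text also posted by other accounts.
        shared = sum(1 for p in posts if len(accounts_by_text[p.text]) > 1)
        # Criterion 3: almost never replies to other accounts.
        reply_share = sum(p.is_reply for p in posts) / len(posts)
        if in_burst and shared >= min_shared and reply_share <= max_reply_share:
            flagged.append(account)
    return sorted(flagged)


t0 = datetime(2020, 10, 5, 9, 0)
sample = [
    Tweet("a", "msg1", "#x", t0, False),
    Tweet("b", "msg1", "#x", t0 + timedelta(minutes=5), False),
    Tweet("a", "msg2", "#x", t0 + timedelta(minutes=10), False),
    Tweet("b", "msg2", "#x", t0 + timedelta(minutes=15), False),
    Tweet("d", "my own view", "#y", t0, True),
    Tweet("d", "another thought", "#y", t0 + timedelta(hours=3), False),
]
print(candidates(sample))
```

Any such automated screen would only produce candidates for further checking; in the procedure described above, the decisive step was manual review followed by confirmation through the field researchers' own networks.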

While we feel that such reliance on the personal networks of field researchers is unavoidable given the sensitivity and secrecy surrounding this topic, the drawback of this approach is that this might have skewed the selection of informants in some way; forty-four of our informants, for example, were based in Jakarta. Therefore, we cannot claim to have interviewed a representative sample of cybertroopers. Nonetheless, our interviews, all conducted in Bahasa Indonesia, garnered significant insights into the infrastructure and methods of cyber troops that are likely to be applicable to cyber troop operations generally.

To facilitate the research process, we prepared an extensive interview guide, a fieldwork guidance document, and a report template. The research procedures were discussed during a two-day training in January 2021, which included extensive interview training. Subsequently, the work was divided among the five researchers, each focusing on one of the abovementioned five public debates, by interviewing cybertroopers whom we found to be active in these debates. During the interviewing phase, the researchers had regular meetings with one of the authors (Wijayanto) to discuss the progress and potential challenges. After completing the interviews, the researchers wrote interview reports with partial transcriptions and five final reports on the involvement of cyber troops in the five public debates. These reports 2 constitute the material on which this article is based—with interview quotes translated into English. See the Supplemental Information file for a more detailed description of our methodology.

Organization of Cyber Troops

From our interviews a picture emerges of highly versatile ad-hoc networks, in which members of cyber troops typically work together for a limited period for the purpose of a particular campaign, and do so on a freelancing basis. Despite this limited institutionalization, these networks are marked by extensive coordination and a clear division of labor. We find that the implementation of cyber troop operations involves not just cybertroopers but also individuals performing other roles, the most important being coordinators, content creators, and influencers. We will discuss these different actors within cyber troops in turn.

Cybertroopers, in Indonesia often referred to as “buzzers,” are the lowest, most numerous tier within influence operations. Their work primarily consists of posting content that they receive from their coordinator, and retweeting and commenting on the posts of others, including influencers who have been enlisted for a particular campaign. Some cybertroopers also engage in attacking or “trolling” targeted individuals, with the aim of silencing or drowning out opposing views. Additionally, they need to monitor social media for posts that are relevant to the case they are working on, in order to determine or adjust their strategies. One cybertrooper described working on a team supporting the 2019 election campaign of incumbent president Joko Widodo (popularly known as Jokowi) as follows:

I got up early in the morning at dawn. We were the first team. We had a picket system (. . .) divided into two shifts of twelve hours. When I had duty at night, I was drowsy in the morning, because I stayed up all night looking at my laptop [following] what was happening with the president. So it’s just like working as a presidential bodyguard in the real world. Only this one is for the cybersphere. We have to keep looking at the mentions on social media, what issues are raised, because in this way we can guard the president, by continuously [monitoring and responding to issues on] social media. (Interview cybertrooper, March 16, 2021)

To execute their work, a single cybertrooper generally manages between ten and three hundred accounts, according to our informants. Obtaining these accounts requires a telephone number, which is why they not only operate multiple phones but also need a great number of SIM cards: “So we make Twitter accounts, like this: from one Gmail account you can get five [Twitter accounts] and from one [telephone] number you can get 15 Gmail accounts” (interview cybertrooper, February 21, 2021). After making all these accounts, the interviewed cybertroopers spoke of “breeding” a number of core accounts: on those accounts, they regularly post attractive content on popular topics such as soccer or movie stars in order to attract followers. One cybertrooper explained: “(. . .) me and some of my friends have some accounts that are special, that become the champion [accounts], meaning that I take care of this account [well] so that it comes big [so that] many people know this account, and are loyal [to the account]” (interview cybertrooper, January 30, 2021). Such relatively patient work of building a following is important to be able to attract clients for their services. A cybertrooper having numerous accounts with a large following can generally request more money for their services. The practice of first “breeding” accounts and subsequently employing them for influence operations is thus an important aspect of the “career” of cybertroopers. This practice is sometimes noticeable on Twitter: some accounts post news about celebrities for months, before suddenly switching to regularly posting about political topics.

Cybertroopers tend to connect their numerous accounts in a semiautomated manner. Our informants mentioned (using militarized language) having a “leading (or General’s) account” and “troop (or soldiers) accounts.” The leading account is used to post particular content, after which the troop accounts are used to retweet these posts. One cybertrooper explained how they create semiautomated “troop accounts” using Tweetdeck, which facilitates timed posts and retweets, thus creating semiautomated bots with the goal of amplifying certain posts, hashtags, or topics. Another cybertrooper described (and boasted about) this strategy as follows:

[To push a] hashtag, first you need to have a lot of users [i.e. accounts, which are] spread out, in Sumatra, Java, Bali etc. Second, [you need] speed in posting. If in one hour we cannot catapult a hashtag to the world, it will not emerge [as a trending topic]. (. . .) Ultimately, even if the volume [of tweets] is small, but the speed is good, it can go straight to the top [of Twitter’s trending topics list]. (. . .) My [record] speed was 20 minutes [to make a hashtag] trending nationally. Just like that. In a few hours it could be trending worldwide [smiles]. That is my speed. And that matters, right? If a hashtag is trending in the world, it is read by the world. If it is trending in Indonesia, all Indonesians will read it, right? Because that is how Twitter works. (Interview cybertrooper, March 25, 2021.)

Cybertroopers often receive the content for such posts from content creators: individuals charged with creating particular memes, hashtags, pictures, and texts that carry the message(s) of the influence operation. Content creators do so following instructions from the coordinators, but they also seem to have considerable freedom in devising content that is attractive and visually appealing. A common tactic that we observed, for example, is using photographs of prominent politicians or other public figures, combined with a quote that promotes the influence operation’s message but that might not be from the featured politician or that is unrelated to the image. Our interviews show that content creators think strategically about how to craft effective posts, including thinking about narrative resonance with the audience (see also Cabañes 2020):

The key to going viral is timing and content. [It is important to] animate [menjiwai, literally: “put spirit into”] people’s emotions. I am crowned the king of hashtags. I make up these hashtags on my own, [according to] my principle. The hashtag must have a philosophical value [and be tailored to] the target, [and to the type of messages that] can be accepted by society. If the hashtag does not match [with society] I will not use it. But if the hashtag is appropriate, I will make it big. (Interview content creator, February 21, 2021.)

The work of cybertroopers and content creators is directed by a third type of actor: coordinators. These are usually senior cybertroopers who organize and direct the influence operation by deciding on the narrative of the campaign, the content of posts and the usage of hashtags, and by coordinating the work of cybertroopers. A coordinator distributes the memes or texts made by the content creators to the cybertroopers and coordinates the timing of the posts. One interviewed coordinator discussed this task as follows:

For coordination, timing is very important. The coordinator will indicate a time for posting something. The buzzers [cybertroopers] have to follow this. It is very important that this posting is done simultaneously. It should not happen that someone starts early or too late, because that could jeopardize their capacity to raise this hashtag to become a trending topic. (. . .) [An] influencer [whose posts the cybertroopers are tasked to amplify; see below] can also advise on the right hour to raise a hashtag. (Interview coordinator, June 18, 2021)

The coordinator is generally the person who brings together a group of cybertroopers and content creators for the purpose of working on a particular campaign. Moreover, the coordinator is the person maintaining contact with the client. While our interview material is limited here, it seems cyber troop campaigns are generally set up after a client approaches such coordinators with an assignment and provides the required funds to execute the campaign. During the campaign, the coordinator and the client can be in regular contact to discuss strategic matters such as the selection of narratives that cybertroopers promote.

While some cybertroopers, content creators and coordinators may collaborate over extended periods and across various campaigns, they usually do so without a formal institutional connection, coming together solely for the execution of specific campaigns (a characteristic of cyber troops in Indonesia elaborated below). Furthermore, as the subsequent comment from a coordinator highlights, the networks of collaborating individuals often stem from the coordinator’s personal and professional connections:

I usually get people from WhatsApp groups of volunteers [of election campaigns] or WhatsApp groups of hobby communities. But I also look at who is active on social media, like bloggers. A small part I attract from my circle of friends, people who are newbies with social media. For those [people] we give training first. (Interview coordinator, March 17, 2021.)

A fourth type of actor involved in cyber troop campaigns is the influencer. Influencers are individuals who—contrary to cybertroopers—operate on social media under their own name. They have some fame as celebrities, society figures, or simply online personalities, and consequently a relatively large number of followers. Our interviews yielded indications that, because of their large audience on social media, coordinators of cyber troops often attempt to involve influencers in their campaign. They would, for example, offer influencers money in exchange for posting a viewpoint that agrees with the narrative being promoted. 3 As cybertroopers subsequently get to work to amplify messages from influencers by retweeting, commenting, or quoting their posts, collaboration with an influencer can be highly effective. Influencers are sometimes actively courted to join a campaign because of their profile and large number of followers. One female influencer, for example, told us that she was called in by a minister of state, whose son she had been friends with since childhood, to (furtively) support Joko Widodo’s 2019 election campaign, as her profile as an Islamic female “celebgram” (Instagram celebrity), wearing a headscarf, “was very good to improve Jokowi’s image, who was being accused of not being Islamic”; she further disclosed that “now, for this purpose, sometimes I’m flown on a private plane just to campaign for him” (interview influencer, July 14, 2021).

Cyber Troops as Products of Election Campaigns

This brief overview of the organization and collaboration of cyber troops in Indonesia sketches a modus operandi that seems to differ considerably from similar influence operations elsewhere. In other contexts, such influence operations are organized primarily by advertising and PR agencies (as in the Philippines; Ong and Cabañes 2018), government agencies (as in Russia; Howard 2020: 38–44), or secretive companies employing click-farms (as in Colombia and Brazil; Grohmann et al. 2022). In Indonesia, these operations tend not to involve organizations or companies but rather rely on temporary, project-based connections between individuals. While we cannot exclude the possibility that PR agencies sometimes also engage in such activities, in our interviews, we only encountered accounts of ad-hoc associations between cybertroopers, influencers, content creators, and coordinators.

This raises the question why Indonesia’s cyber troops take such a freewheeling, noninstitutionalized form. While the relative newness of the phenomenon in Indonesia might play a role, our interview material concerning the career trajectories of cybertroopers suggests that the networks have largely grown out of election campaigns, and that the similarly freewheeling character of election campaigns in Indonesia has consequently shaped their character. Whereas accounts of career paths of cybertroopers in the Philippines suggest that people often rolled into this work after landing a job in a PR agency (Ong and Cabañes 2018), the cybertroopers we interviewed remarkably often took up this work after becoming a supporter of a particular election campaign.

One cybertrooper, for example, recalled how he started in 2012 (during the Jakarta gubernatorial election) as a volunteer in an election campaign team, still doing conventional campaigning such as “going door to door and then [offering] things to people”—but as he noticed that the opposing candidate used social media, “I started to understand that social media could be used for political campaigning and branding of candidates”; hence, they also began using social media in their campaign (interview cybertrooper, May 25, 2021). Another cybertrooper recalled that he initially, in 2018, was recruited through friends to become a campaign volunteer for Ma’ruf Amin (the incumbent Vice President under Joko Widodo); once Ma’ruf Amin was announced as vice-presidential candidate in the 2019 election, “we made a [campaign] team, initially only of offline volunteers, but as we were targeting the youth”—since young voters might need most persuasion to cast their vote for the elderly cleric Ma’ruf Amin—“we agreed to make a team of cyber volunteers” (interview cybertrooper, May 28, 2021). Soon, social media were filled with attractive memes promoting Ma’ruf Amin as a “cool guy” to vote for. As social media were thus increasingly used as a vital part of Indonesia’s election campaign industry—to spread both positive and negative propaganda on election candidates—many of the initial volunteers became part of cyber troops operating underground (Tapsell 2021).

Our interviews indicate that the employment of cyber troops for promoting (or criticizing) policies grew out of this increasing prominence of social media during election campaigns. It is likely that political elites, having seen the usefulness of social media campaigns during elections, decided to maintain their social media teams and task them with influencing public opinion about issues beyond elections. For those undergoing this transition from campaign volunteer to cybertrooper, it could be a challenging process. One cybertrooper, for example, recalled feeling conflicted when he, after supporting Jokowi in the 2019 elections, was assigned to promote the bill to reform (and weaken) Indonesia’s Corruption Eradication Commission (KPK):

[Working to support president Jokowi] means that (. . .) if people try to (. . .) hit [i.e. criticize] Jokowi we must make an effort to reframe [the issue] so that Jokowi does not get hurt. This means I’m having doubts, should I support [Jokowi’s policy on] the KPK (. . .) because there are fears that this revision [by Jokowi’s government] can damage the KPK’s independence. Yet I am in my position [i.e. in a team supporting Jokowi]. So I have to defend [Jokowi] and think about how not to hit my own friends [who criticize the revision] while keeping Mr. Jokowi safe. (Interview cybertrooper, March 17, 2021.)

To convey the relevance of election campaigns as the “birthplace” of cyber troops, it is useful to briefly discuss the character of campaign organization in Indonesia. Due to a combination of a candidate-centered electoral system and political parties with weak mobilizational ability, candidates participating in parliamentary or executive elections generally do not rely on political parties for the organization of election campaigns (Aspinall and Berenschot 2019). They tend to set up their own campaign organization, in Indonesia referred to as tim sukses. These “success teams” are temporary and versatile campaign networks, often consisting of a candidate’s friends, contacts, and personal supporters. Successful (and well-endowed) candidates can mobilize a large army of volunteers, who are assigned a range of tasks, from mobilizing voters at the local level to staffing social media teams. The reliance on an influx of volunteers makes these social media teams accessible to anybody with some computer skills and some free time. Generally, these volunteers are motivated by a mixture of genuine enthusiasm for a candidate and pragmatic hopes of landing a job or a government contract after their candidate’s victory.

Hence, there is considerable similarity between the character of campaign organization and cyber troops: both are temporary networks set up for a particular purpose, and both draw on previous connections and friendships while regularly bringing in new recruits. It seems likely that this similarity is not a coincidence: if indeed cyber troops have grown out of the campaign organizations, they have likely copied the same freewheeling organizational style, while their clients, mostly political elites, likely expect and want cyber troops to operate like their campaign organization. The cyber troops have, however, by now largely parted ways with the tim sukses. While the election campaign teams disband after elections, cyber troops are now active year-round, serving a wider range of clients beyond politicians.

Who Funds Cyber Troops?

In Indonesia, public opinion manipulation is turning into a sizable industry providing a livelihood to a growing number of people; according to our informants, “thousands” of cybertroopers are active in Jakarta alone. As mentioned, some of the cybertroopers were motivated by political preferences and, in particular, sympathy for political candidates. But most of our interviewees were candid about doing this work mainly for the income it generated. This new industry seems to offer relatively attractive pay, although this pay varies considerably depending on the abovementioned roles within cyber troops.

Most cybertroopers are paid per account that they employ for a particular campaign. For example, one cybertrooper reported receiving “between 50 thousand to 100 thousand [rupiah] per account. That is multiplied by 35 [accounts], so about a million and a half [per month, about 95 USD]” (interview cybertrooper, January 2, 2021). Others reported getting up to 250 thousand rupiah (17 USD) per account. Some do get paid per month. One cybertrooper reportedly received 2.5 million rupiah per month (about 160 USD), but this involved considerable supervision: “I have to tweet at least 50 times per day [or else] I could be kicked out of the group” (interview cybertrooper, February 16, 2021). One content creator said he makes four million rupiah per month. Coordinators earn more. One of them explained that he was paid per account that he could bring to the campaign, including the ones the cybertroopers under him managed. Another coordinator mentioned receiving a total of 190 million rupiah (about 12,000 USD) for supporting a politician during a four-month election project. This coordinator hired six people with this money. His own daily activities mainly consisted of ensuring that each team member posted enough content: “Fifteen on Twitter, five on Facebook. Every day, you know, for at least one month.” He felt that otherwise he would get complaints from the campaign’s funder: “Those upstairs will say, why is there no post? They usually check.” To keep the client happy, he regularly sent updates to “those upstairs” (interview coordinator, April 23, 2021).

Larger earnings are made by influencers who agree to support an influence operation with a few social media posts. While influencers generally keep such deals secret, we managed to interview a journalist influencer who agreed to support a presidential candidate with a few positive posts in exchange for twenty million rupiah (about 1,260 USD). Because influencers can support several campaigns, they can make large sums of money from participating in cyber troop campaigns. And there might be other rewards: we interviewed two influencers who were appointed as commissioners at state enterprises as a reward for supporting a former governor’s election campaign. The “celebgram” quoted above was also offered a government position, which she refused.

While the earnings of cybertroopers in particular seem relatively modest, these amounts do add up to considerable sums of money. Who, then, provides this money; who are the clients for cyber troop campaigns? Most of our informants did not know this for certain and could only report from hearsay. Some of the interviewed coordinators who actually did meet with clients were reluctant to disclose their names. Therefore, the conclusions we can derive from our interviews remain somewhat speculative, yet such hearsay is the only material available to explore this important topic.

Keeping this disclaimer in mind, we feel we can conclude that the clients of cyber troops are remarkably diverse—particularly when compared to accounts of fully government-run operations in more authoritarian countries like China (Han 2015). Our interviewees mentioned four different types of clients. First, some cyber troop activity does seem to be funded by Indonesia’s government or at least by people close to government ministers. One informant, who worked on a campaign to promote the Omnibus Law for Job Creation, said that he was paid by someone close to the Coordinating Minister of Economic Affairs. Another informant, working on a campaign promoting Indonesia’s COVID-19 policies, said that he was paid by people from the circle of the minister in charge of the government’s pandemic response.

Individual politicians constitute a second group of clients. Indonesia’s candidate-centered electoral system requires politicians to pay considerable attention to their public profile. Cyber troops are useful tools for this purpose. Several informants told us that they had been hired by politicians to raise their online profile and post positive messages about them. For example, one interviewed cybertrooper currently worked to raise the online profile of a politician being considered for a cabinet position; this politician felt that an active Twitter account with many followers would help. This cybertrooper received three million rupiah (about 188 USD) a month to comment on, and retweet, posts from this politician’s Twitter account (interview cybertrooper, April 18, 2021).

Third, cyber troops are also employed by political parties. For example, we found indications that cyber troops were involved in a campaign to get President Widodo’s chief of staff Moeldoko elected as chair of the Democrat Party, the largest opposition party (Wijayanto 2021). An interviewed cybertrooper suggested that the funding for this campaign originated from a politician within the ruling party, PDI-P, of which Jokowi was a member. It should be emphasized that this political usage of cyber troops is not restricted to ruling politicians or political parties. In contrast to, for example, Thailand, where this activity seems to emanate mainly from the state (Sombatpoonsiri 2018), opposition politicians in Indonesia also utilize cyber troops. Our research revealed that, during the 2019 election, cyber troops were enlisted to advocate for Prabowo, a presidential candidate from the opposition. These cyber troops consistently generated narratives to counter the attacks orchestrated by cyber troops supporting the incumbent President Jokowi, who sought re-election for a second term. One such example was the online campaign involving the hashtag #2019gantipresiden (2019changethepresident).

A fourth group of funders are economic elites. In Indonesia economic and political elites are closely connected, as wealthy entrepreneurs either enter politics themselves or opt to fund political activities to curry favor with ruling elites. In that light, we encountered indications that business actors pick up some of the bills of the online campaigns of politicians. For example, the abovementioned cybertrooper supporting Vice-President Ma’aruf Amin suggested that “many business people approached kyai Ma’aruf at that time (. . .) through his children” (interview cybertrooper, May 28, 2021).

Thus, our interview material suggests that both Indonesia’s political and economic elites actively employ cyber troops. They have become an important tool for elites to influence public debate in their favor, thereby further cementing the already highly oligarchic nature of Indonesia’s democracy.

Conclusion

Online influence operations have become an increasingly useful tool for Indonesia’s elites to manipulate public opinion to their advantage. In this article, we proposed the term “cyber troops” to describe influence operations to manipulate public opinion through social media in which networks of participants (1) receive covert compensation, (2) predominantly employ anonymous accounts, and (3) engage in extensive coordination to amplify their impact. While cyber troops are often linked with governmental usage, such as the centralized, institutionalized “troll factories” in Russia or China (Golovchenko et al. 2020; Han 2015), or outsourced to PR firms by political entities (as in the Philippines; Ong and Cabañes 2018), our study, based on fifty-two interviews, illustrates that Indonesia’s cyber troops take a different form: they are best understood as transient, project-based collaborations among freelancing individuals. The origins of this adaptable, ad-hoc structure can be traced to Indonesia’s election campaigns, which have a similarly freewheeling character.

Although the Indonesian cyber troops thus often collaborate for brief durations focused on specific campaigns, our interviews uncover substantial coordination and collaboration among the participants. We find that the influence operations implemented by cyber troops involve collaboration between individuals having different roles, as a coordinator (hired by the client) assembles a team of cybertroopers (anonymous account operators), influencers, and content creators, all working in tandem to maximize the impact for the sponsoring client. In addition, in contrast to state-led cyber troops in authoritarian settings such as Russia and China, the client in hybrid or flawed democracies such as Indonesia can be a government actor but need not be. A diverse array of political candidates, parties, and economic elites can establish their own cyber troops, contingent upon their financial resources. As a result, the cyber troops not only detrimentally affect democracy by subverting public discourse but also exacerbate existing inequalities, as those wielding economic influence can enhance their political leverage by swaying public sentiment in their favor.

In these aspects (limited institutionalization and more pluralistic sources of funding), cyber troops in Indonesia might differ from otherwise similar influence operations elsewhere. We consider it likely that the decentralized, fragmented nature of Indonesian cyber troops is a more common form of social media-based manipulation in hybrid regimes than in more closed autocracies, where it is the exclusive domain of the state. We thus conclude that cyber troops cannot and should not be studied solely as a top-down state propaganda instrument. There is a need for more comparative examinations of the different forms that influence operations may take in various countries and across different types of regimes. Given the considerable and growing impact that cyber troops are having on public debate around the world, more studies are needed to understand how their character and impact relate to prevailing economic and political conditions in different countries.

Supplemental Material

Supplemental material, sj-docx-1-hij-10.1177_19401612241297832 for The Infrastructure of Domestic Influence Operations: Cyber Troops and Public Opinion Manipulation Through Social Media in Indonesia by Wijayanto, Ward Berenschot, Yatun Sastramidjaja and Kris Ruijgrok in The International Journal of Press/Politics

Footnotes

Acknowledgements

We would like to convey our gratitude to the fieldworkers from Social Economic Institute of Research, Education and Information (LP3ES) who were involved in this project: Albanick Maizar, Ali Nur Alizen, Maarif Setiadi Fajar, and Pradipa Rasidi.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This study was funded by the Royal Dutch Academy of Sciences (KNAW).

Ethical Approval and Informed Consent Statements

This study received approval from the beoordelingscommissie Onderzoeksfonds of the Royal Netherlands Academy of Arts and Sciences (KNAW) (approval BDO/1809) on July 14, 2022. Respondents gave oral consent before the interview.

Data Availability Statement

Due to the highly sensitive nature of our interview data, we are unable to make our interview transcripts publicly available.
