Abstract

Despite increasing academic attention, several questions about news literacy messages (NLM) remain unanswered. First, it remains unclear how differences in the framing of NLMs may influence their effectiveness. Second, we still know little about how NLMs work and, in particular, whether people actually adopt the recommendations they are given. To answer these questions, this study conducts an experiment in the Netherlands and Belgium, where we manipulate the frames of the NLM, comparing interventions using a “fake news” frame with those using a “reliable news” frame or a mix of the two. We also manipulate elements in the false news article that participants have to evaluate afterwards. Our findings show that all three types of NLMs are effective in making people resilient to misinformation, without resulting in a general decrease in trust in accurate news (a spill-over effect). In particular, NLMs with a “mixed” frame are effective, as they make people critical toward false news articles and also result in a higher perceived accuracy of accurate news articles. Additionally, the findings provide suggestive evidence that NLMs work, at least partly, because participants adopt some of the recommendations being given.

Recent years have seen a strong surge of information that is considered false and that is spread either intentionally (disinformation) or unintentionally (misinformation) (Vraga and Bode 2020). From a democratic viewpoint, the fast-paced dissemination of false information is highly troublesome. It can affect (mis)beliefs, and ultimately opinions, and may make citizens question basic facts needed for informed political decisions (Hameleers 2022). Given these potential detrimental effects, it is not surprising that scholars started investigating ways to prevent people from believing misinformation. For a long time, solutions focused primarily on correcting misinformation a posteriori, for instance via fact-checks (Porter and Wood 2021, Walter et al. 2020). Recently, however, scholars have also started looking at a priori strategies: solutions aiming to make citizens resilient against misinformation by empowering them beforehand. A prominent example of this approach is news literacy messages (NLM): messages providing readers with recommendations on how to distinguish misinformation from quality news (Tully et al. 2020). Compared to fact-checking, less research has been conducted on the effectiveness of these NLMs. Nevertheless, a growing number of studies demonstrate that NLMs can be effective tools in making individuals resilient to false information (Clayton et al. 2020, Guess et al. 2020, Hameleers 2022). Yet, several questions about the effectiveness of NLMs remain open.

First, it remains unclear how differences in the content and framing of NLMs influence their effectiveness and affect the extent to which people become resilient to misinformation without becoming skeptical toward accurate news. Though previous studies have looked at the modality of the message (Domgaard and Park 2021), not many studies have examined how the content of these messages influences their effectiveness. Focusing on content is important, because NLMs could potentially have a spill-over effect. Recent discussions question whether NLMs always have a positive effect and suggest they may induce a general skepticism toward information. 1 There is indeed some evidence that in certain circumstances, rather than making people critical toward false information only, these messages may reduce accuracy perceptions of all news, including accurate news (Guess et al. 2020; Van der Meer et al. 2023). In this case, NLMs would be as problematic as the original problem of misinformation, or even worse, given that a large majority of information consumed by media users is not misinformation (Acerbi et al. 2022). We argue that the content, and specifically the way NLMs are framed, could potentially determine to what extent a spill-over effect occurs.

A second gap in our knowledge on NLMs is that we have little insight into how they work. As mentioned, it is possible that these tools are effective because they make people skeptical toward all news stories, in which case the fight against misinformation would come at the cost of decreasing trust in reliable information. Ideally, however, NLMs would be effective because they increase people’s news literacy and make people apply the recommendations given, but we do not know to what extent that is the case.

This study delves into the two questions raised above using a survey experiment conducted in the Netherlands and Belgium. The aim is twofold. First, we want to examine to what extent the content of NLMs, and particularly the way they are framed, influences their success in countering disinformation without resulting in a spill-over effect. We compare two frames: NLMs that stress how to identify false information and use what we label a “fake news” frame, versus NLMs that give recommendations about how to recognize reliable news and use a “reliable news” frame. Second, by manipulating markers in a false news article, we aim to get insight into whether people also adopt the recommendations they are given (such as paying attention to sources and language) and whether these factors weigh more heavily in their accuracy evaluation of a news article after being exposed to an NLM.

The Effectiveness of News Literacy Messages

Previous research showed that news literacy is one of the most important predictors for determining whether individuals are able to separate false information from accurate news (Chan 2024). In general, news literacy refers to the skills and knowledge individuals need to navigate the news environment in a conscious and critical way (Tully et al. 2020). Individuals with high news literacy understand the role news plays in society and have the ability to find, recognize, and critically evaluate news (Jones-Jang et al. 2021). This latter skill is particularly important in the context of disinformation, as it indicates that citizens are more critical about the information they receive and should be better able to discern false from truthful news (Tully et al. 2020).

NLMs are designed to increase people’s news literacy skills. In a general form, they inform people on how news content is produced, how news may impact consumers, and provide tools allowing users to exercise some control over these processes (Vraga et al. 2021). Applied specifically to disinformation, they warn individuals about false information—often coining the term “fake news” (Vraga et al. 2022a)—or at least on a general level create awareness that some news is more accurate than other (Hameleers 2022). In most instances, NLMs also provide their audience concrete recommendations to assess the accuracy of information. Examples of such recommendations are to check the source of the article, to validate sources used in the article to justify claims, and to look at language (i.e., grammar and spelling or emotional language). Considering that these factors are often associated with false information (Damstra et al. 2021), media literacy interventions thus aim to show how misinformation may be distinguished from quality news or accurate information based on observable characteristics. In this experimental study, we follow a similar approach to media literacy as used in extant literature (e.g., Guess et al. 2020). We specifically design and expose participants to NLMs informing citizens about the fact that some news is more accurate than others and providing them with recommendations to assess a news story’s accuracy. These messages are also in line with many real-life examples of NLMs, such as Facebook’s “tips to spot false news,” one of the largest news literacy initiatives so far, spread to millions of users in 2017 (Guess et al. 2020).

Existing research provides evidence that NLMs can be effective tools to counter disinformation. Clayton et al. (2020), for instance, show that after being exposed to a general warning about “fake news” and recommendations to recognize it, Americans were more likely to rate false headlines as less accurate. Similar results were found in other contexts, such as the Netherlands (Hameleers 2022), Korea (Hwang et al. 2021), and India (Guess et al. 2020).

A key explanation often brought forward for why NLMs could work comes from inoculation theory. In its purest form, inoculation refers to the process where people are exposed to a weak and refuted form of a (false) message to induce a form of resistance to more persuasive versions of that same misinformation (Cook et al. 2017). More recently, it has been argued that for successful inoculation such prebunking messages do not need to be on the specific topic per se, but can also take the shape of more general warnings and recommendations against mis- and disinformation (Vraga et al. 2022b). Thus, exposing people to a NLM improves people’s “latent ability to spot misinformation techniques as opposed to just individual instances of misinformation” and thereby helps to build “immunity against the underlying tactics of misinformation” (Roozenbeek et al. 2020: 2).

Altogether, based on inoculation theory, and the overall positive findings from existing research, we expect that NLMs help people to recognize misinformation. This results in our first, baseline, hypothesis:

Framing Effects of News Literacy Messages

Inoculation and an increase of news literacy skills is one explanation for why NLMs may be effective. Yet, there is an alternative, more pessimistic, explanation for why they may work. Rather than resulting in more skeptical attitudes specifically toward false information, they may induce general skepticism toward all information (Van der Meer et al. 2023). In that case, NLMs would not only result in people perceiving false news stories as less accurate but also reliable and factual news stories. In other words, there may be a spill-over effect where NLMs result in people becoming suspicious of news overall (Clayton et al. 2020). To better understand this effect, we can use Truth-Default theory (Levine 2019). This theory states that people, by default, are more likely to perceive incoming communication as truthful. However, it is possible to make people more skeptical toward information and less truth biased (Moore and Hancock 2022). Experimental research has shown that one way to do so is by priming people to become suspicious (Kim and Levine 2011, McCornack and Levine 1990). Doing so will make people more likely to perceive all incoming information as false. Given that NLMs warn people to be wary of false information, they could have such a priming effect, making people more suspicious of information in general.

Though the potential spill-over effect of NLMs has received limited academic attention, there is preliminary evidence for it. Clayton et al. (2020), for instance, find that after exposure to NLMs respondents are more likely to perceive both true and false articles as less accurate. Similar results have been found by Vraga et al. (2022c), Van der Meer et al. (2023), and Guess et al. (2020), though the latter study indicates that the decrease in the perceived accuracy of false news articles is stronger than its decrease for true articles, suggesting that there may still be an overall positive net effect.

One factor potentially driving the spill-over effect of NLMs is that many of them adopt the term “fake news,” as in prominent examples such as Facebook’s “tips to spot false news” or the News Literacy Project (Vraga et al. 2022a). This use of a “fake news” frame over a frame that stresses the features that make news stories reliable (the “reliable news” frame) could further reinforce a spill-over effect. In a slightly different context, Van Duyn and Collier (2019) demonstrated, for instance, that when individuals are exposed to discourse about “fake news” from elite actors, their trust in the media decreases and they identify real news as less accurate. They show that even simple exposure to discourses about “fake news” may prime people to think about false information when reading a news story and become generally suspicious. Similarly, NLMs using a “fake news” frame, by emphasizing the dangers of “fake news,” may prime respondents and activate a general mode of suspicion, making people leave their truth-default mode. A recent study by Tandoc and Seet (2022) shows that people generally link the term “fake news” to perceptions of falsity and concerns. Moreover, we know from previous research on deception detection accuracy that suspicion raises people’s abilities to detect lies and inaccurate information, while decreasing the accuracy perception of truthful information (Kim and Levine 2011).

Admittedly, when using the term frame in this study, we follow a more narrow frame-element approach by particularly zooming in on the effects of reasoning devices that promote a certain element of information by making deception salient. In line with a frame-element or reasoning devices approach (Matthes and Kohring 2008), using a term such as “fake news” highlights a certain problem definition that deception is prevalent. It may also suggest that news is morally false, and that there was an intention to deceive beyond the lack of facticity. In that sense, using “fake news” instead of more neutral interpretations of misinformation, may trigger general suspicion about the deliberate spread of false information.

In sum, we expect that, on the one hand, using a “fake news” frame over a “reliable news” frame in NLMs may be more effective to increase people’s deception detection accuracy, as it could prime suspicion and take people out of their truth-default mode (Levine 2019). However, at the same time, by raising general skepticism to information, the fake news frame may come at the expense of a decrease in the perceived accuracy of true news stories, the spill-over effect.

Of course, the two frames do not need to be mutually exclusive. NLMs can combine the two approaches. They may caution against “fake” news while also stressing that there is “reliable” news, and in the recommendations provide tips both for how to identify “fake” and “reliable” news. It is possible that such a “mixed” frame may offer benefits of both perspectives. On the one hand, by raising awareness about “fake news” they may make people more critical about information. However, by also emphasizing the existence of reliable news, they may mitigate excessive levels of skepticism. Since we have less strong theoretical expectations about this mixed frame, but rather want to test if the mix of the two results in more effective NLMs reducing the accuracy perceptions toward false news articles without spill-over effect, we formulate a RQ.

Adopting Recommendations

With our experiment, we aim not only to examine the effectiveness of media literacy interventions but also explore whether we can find suggestive evidence that individuals use the recommendations given when evaluating a news story. We focus specifically on two such recommendations. First, the tip to check whether the news story uses reliable sources to support claims. Second, the tip to check for language and style errors. These recommendations are relatively straightforward compared to other recommendations, for example, whether an article contains disproportionate emotional language, or whether it uses real pictures, which have been regarded as content features that enhance the likelihood of false information (Damstra et al. 2021). The recommendations we provide should be fairly easy for people to apply when judging information. While we do not presuppose that these content features are a direct indicator of misinformation, we point out that they may increase the likelihood of deception, therefore requiring a critical perspective. Since we do not study the effect of all recommendations, we do not know what exactly determines recognizing false news stories. Still, we believe our design allows us to explore whether emphasizing easy to identify and more descriptive markers of misinformation activates suspicion and helps people to deviate from the truth default based on the recognition of these specific aspects. As such, although our approach is not comprehensive, we regard it as an exploration of how priming suspicion through an intervention motivates people to more carefully assess elements of an article that may indicate suspicion.

Ultimately, if NLMs work by drawing on people’s media literacy skills and by providing them tools to evaluate a news story, then we should see that people are more likely to identify disinformation, and perceive news articles as less accurate, when they contain clear markers of being false—such as the article not using reliable sources or containing language errors—and that this is particularly the case for those exposed to NLMs warning for such markers.

Methods

We conducted an online survey experiment in two countries; the Netherlands and Flanders, the Dutch-speaking region of Belgium. Both countries are relatively similar in their multiparty consensual political system and democratic-corporatist media system, where public-service broadcasting still plays an important role (Newman et al. 2023). Given the overall similarity between the two countries—at least in their media and political system—we do not expect strong country differences in our findings. Additionally, both countries are characterized by relatively high levels of media trust and low levels of polarization, which should make them less vulnerable to misinformation (Humprecht et al. 2022). We opted for a two-country study to examine the robustness of the findings. As there are no language differences, we can use the same stimuli across settings. This high level of consistency across experiments enhances the robustness of the conclusions, enabling us to assess whether NLMs have similar effects in two relatively comparable contexts.

Sample

The experiment was fielded between March 29 and April 13, 2023, via the online panel of Dynata. Because we use an online panel and there might be some participants speeding through the survey without answering seriously, two data quality checks were made. Speeders—those completing the survey within 40 percent of the median time—were removed from the dataset, as were straight-liners. 2 After removing straight-liners and speeders, we are left with 1,797 participants in the Netherlands, and 1,819 participants in Belgium.
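The two data quality checks described above can be sketched in a few lines. This is only an illustration under stated assumptions: the record layout (`id`, `duration`, `grid`) is hypothetical, "within 40 percent of the median time" is read as a duration below 0.4 times the median, and a straight-liner is assumed to be someone giving the same answer to every item of a question grid.

```python
from statistics import median

def remove_low_quality(respondents, speed_fraction=0.4):
    """Drop speeders and straight-liners from a list of respondent dicts.

    Each respondent is assumed to look like
    {"id": 1, "duration": 412.0, "grid": [3, 5, 4, 2]} (hypothetical layout).
    """
    # Assumed reading of the speeder rule: duration below 40% of the median.
    cutoff = speed_fraction * median(r["duration"] for r in respondents)
    kept = []
    for r in respondents:
        is_speeder = r["duration"] < cutoff
        # Assumed definition: identical answers on every item of a grid.
        is_straight_liner = len(set(r["grid"])) == 1
        if not (is_speeder or is_straight_liner):
            kept.append(r)
    return kept

# Toy data, not taken from the study.
sample = [
    {"id": 1, "duration": 600, "grid": [3, 5, 4, 2]},
    {"id": 2, "duration": 100, "grid": [1, 4, 6, 2]},  # speeder
    {"id": 3, "duration": 550, "grid": [4, 4, 4, 4]},  # straight-liner
    {"id": 4, "duration": 500, "grid": [2, 6, 5, 3]},
]
clean = remove_low_quality(sample)
print([r["id"] for r in clean])
```

In this toy example the median duration is 525, so the cutoff is 210 and only respondents 1 and 4 remain.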

To improve the sample’s representativeness, soft quotas were used on gender, age, and education. Of all respondents 51.7 percent were female, and 43.6 percent were higher educated. The average age was 48.1 years (SD = 16.7). Supplemental Appendix A provides full descriptives. There are no notable differences between the countries in their sample distribution. The experiment was preregistered via aspredicted.com and ethical permission was granted by the Ethics Committee for the Social Sciences and Humanities of the University of Antwerp. 3

Design and Independent Variables

In the experiment, respondents were first exposed to a NLM. Subsequently, they were asked to read a real and a false news article created by the authors. For both articles we measured respondents’ accuracy perceptions of them afterward. To test the hypotheses, we manipulated both the NLM to which they were exposed and the false article they had to read.

Regarding the NLM, respondents could be exposed to one out of three versions, or be assigned to a control condition without exposure to a NLM. All three versions of the NLM first created awareness among respondents that some news may be more reliable than others. Subsequently, they provided five recommendations on how to assess the quality of news articles: (1) to look at the goal of the news article, (2) to check the sources used in the article, (3) to look at the date, (4) to look at the language, and (5) to use a Google reverse image search to check pictures. The NLMs were based on existing national and international examples to increase their external validity, such as Facebook’s “tips to spot false news” and NLMs from the Flemish organization Mediawijs. 4

The three versions differed in their framing. The first version used a “fake news” frame. It warned the reader for false information, using the label “fake news,” and the five features were formulated in such a way that they were recommendations to identify when a news article is “fake.” By repeating the term “fake news” in each feature, we indicate how they are part of the same overall frame. Furthermore, our first tip is rather broad and stresses the underlying purpose of “fake news” to mislead people.

The second version used a “reliable news” frame. Here, the message only said that not all news is reliable, rather than explicitly warning for “fake news.” The five recommendations were formulated in such a way that they provided tips on how to recognize that a news article is reliable. The reliable news frame stresses that news wants to inform us. Finally, the third version used a mix of the two frames. This mixed frame warned for fake news but simultaneously stressed that there is reliable news as well. Regarding the recommendations we made a mix of tips on how to identify “fake” news (source and image), how to identify “reliable” news (date and writing style), or that do both (purpose). The three versions of the NLMs, in Dutch and English, can be found in the Supplemental Appendix B.

After being exposed to the NLM, respondents read two news articles, one true, and one false created by the authors. The order was randomized and respondents first had to answer the questions about the specific article before moving on. The articles were of similar length (between 107 and 120 words) and dealt with the general topic of food safety.

The true article was taken from the news website of the Dutch public broadcaster and was about the increase of pesticides across Europe. The article was selected for three reasons. First, the topic of pesticides is relatively nonpoliticized in both countries. This avoids that prior political dispositions distort our findings. Second, because the article was about Europe it could be used for both countries. Third, it was relatively clear that the news article was accurate. It was neutrally written, justified its claims with sources, and contained no language or style errors.

For the false article, we created an article about genetically modified food (GMOs). This topic was selected because there is scientific consensus that GMOs are safe (National Academies of Sciences, Engineering, and Medicine 2016), but the public is not always aware of this (Pew Research Center 2015). It has also been used in previous experiments on mis- and disinformation (Tully et al. 2020). Moreover, similar to pesticides, it is a topic that is relatively nonpoliticized and nonsalient in both countries. The article claimed that GMOs are bad for our health and can cause cancer. Moreover, to make it possible for respondents to identify it as inaccurate, it was written in a less neutral style, for instance attacking the food industry for deliberately using GMOs.

To test hypotheses 4 and 5, we also manipulated two dimensions of the false news article. The first dimension is whether or not it referred to a reliable source. The article either claimed that GMOs cause cancer without referring to any source, or referred to the respected national public research institute (Sciensano in Belgium and the National Institute for Public Health and Environment (RIVM) in the Netherlands). The second dimension is whether it contained language and style errors. The article was either written correctly or contained several errors in spelling, grammar, and style (Supplemental Appendix C). The combination of these two dimensions results in four versions of the false article. Manipulating these two dimensions of the false article provides us with suggestive evidence of the extent to which people base their accuracy perceptions of a (false) news article on the inclusion or exclusion of sources and language errors. More importantly, it enables us to test whether these two factors play a stronger role in the accuracy perception of a news article when participants were exposed to a NLM recommending that they use these two factors to determine a news article’s accuracy.

Ideally, we would have manipulated dimensions of the false article relating to all five recommendations, but because of constraints on statistical power we had to make choices. We opted to manipulate the source and language errors for several reasons. First, these two elements appear in most real-life examples of NLMs and have been identified as common features of disinformation (Damstra et al. 2021). Second, compared to other tips, such as checking for emotional language or using a Google reverse image search, these recommendations are fairly easy for people to comprehend and apply when judging articles. Finally, from a practical point of view, these elements are easy to manipulate while keeping the rest of the article equivalent.

Altogether, we have a between-subject experimental design with 4 (NLM: none(control); “fake news” frame; “reliable news” frame; “mixed” frame) × 4 (false article: no language errors and reliable source; no language errors and no reliable source; language errors and reliable source; language errors and no reliable source) conditions, to which respondents were assigned randomly. Supplemental Appendix D provides an overview of the experimental conditions.
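The 4 × 4 between-subject design can be enumerated as follows. This is a minimal sketch: the condition labels come from the text, but the function name `assign`, the seed, and the use of simple (unbalanced) random assignment are assumptions made for illustration, since the text does not describe how the survey software randomized.

```python
import random
from itertools import product

NLM_FRAMES = ["control", "fake news", "reliable news", "mixed"]
ARTICLE_VERSIONS = [
    ("no language errors", "reliable source"),
    ("no language errors", "no reliable source"),
    ("language errors", "reliable source"),
    ("language errors", "no reliable source"),
]

# Every combination of NLM frame and false-article version: 4 x 4 cells.
CONDITIONS = list(product(NLM_FRAMES, ARTICLE_VERSIONS))

def assign(respondent_ids, seed=0):
    """Assign each respondent to one cell uniformly at random (assumed scheme)."""
    rng = random.Random(seed)
    return {rid: rng.choice(CONDITIONS) for rid in respondent_ids}

assignment = assign(range(1, 9))
print(len(CONDITIONS))  # number of experimental cells
```

With roughly 3,600 participants spread over 16 cells, each cell holds on the order of 225 respondents, which helps explain why the design could not also vary the remaining three recommendations.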

Dependent Variables

We use two dependent variables. First, to examine whether NLMs are effective, and whether there are differences based on the frame, we measured participants’ perceived accuracy of the false news article. To measure this variable, we used three items on a seven-point scale running from fully disagree to fully agree: (1) the news article is truthful, (2) the news article is accurate, (3) the news article is deceiving. Note that item 3 was reverse coded. The items were combined into one perceived accuracy scale (alpha = 0.81, M = 3.61, SD = 1.25) by taking their average.
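As a rough illustration of how such a scale is built, the sketch below reverse codes the third item, averages the three items, and computes Cronbach's alpha. The function names are hypothetical, and the alpha formula is the standard textbook one rather than anything specified in the text.

```python
from statistics import mean, variance

SCALE_MAX = 7  # seven-point scale, 1 = fully disagree, 7 = fully agree

def reverse_code(score, scale_max=SCALE_MAX):
    """Flip an item so that higher values mean higher perceived accuracy."""
    return scale_max + 1 - score

def perceived_accuracy(truthful, accurate, deceiving):
    """Average the three items after reverse coding the 'deceiving' item."""
    return mean([truthful, accurate, reverse_code(deceiving)])

def cronbach_alpha(items):
    """items: one list of scores per item (already recoded), equal lengths."""
    k = len(items)
    totals = [sum(scores) for scores in zip(*items)]
    item_variance = sum(variance(scores) for scores in items)
    return k / (k - 1) * (1 - item_variance / variance(totals))

# A respondent answering 5 (truthful), 6 (accurate), 2 (deceiving)
# gets (5 + 6 + 6) / 3 on the combined scale.
score = perceived_accuracy(5, 6, 2)
```

Alpha approaches 1 when the items move together across respondents, which is the sense in which the reported values of 0.81 and 0.74 indicate a reliable scale.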

Second, to investigate the spill-over effect and test hypothesis 3, we measured participants’ perceived accuracy of the true news article, using the same three items as for the false news article. Again, these (recoded) items were combined into a single reliable scale (alpha = 0.74, M = 4.57, SD = 1.03).

Manipulation Checks

We included several manipulation checks. First, we checked whether participants in the experimental groups recognized the frame in the NLM. Participants had to indicate whether they thought that the NLM “gave tips on how to identify fake news” and whether the NLM “gave tips on how to identify reliable news,” both measured with a binary agree/disagree answer option. Independent sample t-tests show that participants exposed to the “fake news” frame were significantly more likely to identify the message as giving recommendations on how to identify “fake news” (Mdiff = 0.18, t(1820) = −9.30, p < .001, 95% CI [0.14, 0.21]), whereas participants exposed to the “reliable news” frame were significantly more likely to identify the message as giving recommendations on how to identify “reliable news” (Mdiff = 0.07, t(1820) = 4.38, p < .001, 95% CI [0.04, 0.11]). Second, we asked whether participants thought that the news article on GMOs “referred to a reliable and specific organization as source” and whether this article “contained language and grammar errors,” again measured with a binary agree/disagree answer option. We found that the manipulation of the false article succeeded. Of the participants in the condition containing no source, 61 percent identified that there was no reliable source, compared to 36 percent in the condition with a source (Mdiff = 0.25, t(3014) = 4.38, p < .001, 95% CI [0.22, 0.28]). Similarly, 49 percent of those in a condition containing language errors identified that there were language errors, compared to 29.7 percent in the condition without errors (Mdiff = 0.19, t(2123) = 4.38, p < .001, 95% CI [0.15, 0.23]).
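Because the manipulation checks are binary, each Mdiff is simply a difference between two proportions. The sketch below shows that arithmetic with a normal-approximation (Wald) interval, which is a swapped-in simplification and not the independent samples t-test reported in the text. The percentages 61 and 36 come from the text; the group sizes of 1,000 are hypothetical placeholders.

```python
from math import sqrt

def proportion_difference(yes_a, n_a, yes_b, n_b, z=1.96):
    """Difference between two proportions with an approximate 95% interval."""
    p_a, p_b = yes_a / n_a, yes_b / n_b
    diff = p_a - p_b
    # Standard error of a difference between two independent proportions.
    se = sqrt(p_a * (1 - p_a) / n_a + p_b * (1 - p_b) / n_b)
    return diff, (diff - z * se, diff + z * se)

# 61% vs. 36% noticing the missing source; n = 1000 per group is assumed.
diff, (low, high) = proportion_difference(610, 1000, 360, 1000)
print(round(diff, 2))
```

The width of the interval depends on the assumed group sizes, so only the point difference of 0.25 should be compared with the reported result.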

Analyses

For the analyses, we opted for linear regressions over ANOVA because this allows us to control for the order in which the articles were presented and to add an interaction between exposure to a NLM and the two dimensions of the false news article. We present models that pool participants from the two countries and include a dummy indicating whether the participant is from the Netherlands or Belgium.
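To make the role of the interaction term concrete, the sketch below computes what it captures in the simplest possible case: a model with only an NLM dummy, a no-source dummy, and their product, where the interaction coefficient equals a difference in differences of cell means. The dummy coding, the function name, and the toy data are all assumptions for illustration; the reported models additionally control for article order, country, and the other manipulation, so their coefficients would not be obtained this way.

```python
from statistics import mean

def interaction_from_cells(rows):
    """rows: (nlm, no_source, accuracy) tuples with 0/1 dummies (assumed coding)."""
    def cell(nlm, no_source):
        return mean(y for n, s, y in rows if n == nlm and s == no_source)

    # Effect of a missing source within each group.
    slope_control = cell(0, 1) - cell(0, 0)
    slope_nlm = cell(1, 1) - cell(1, 0)
    # In a saturated 2 x 2 model, this difference is the interaction coefficient.
    return slope_control, slope_nlm, slope_nlm - slope_control

# Toy data, not taken from the study.
rows = [
    (0, 0, 4.0), (0, 0, 3.6), (0, 1, 3.8), (0, 1, 3.8),
    (1, 0, 3.8), (1, 0, 3.6), (1, 1, 3.4), (1, 1, 3.5),
]
slope_control, slope_nlm, interaction = interaction_from_cells(rows)
```

A negative interaction here means that a missing source lowers perceived accuracy more among those exposed to an NLM than in the control group.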

Results

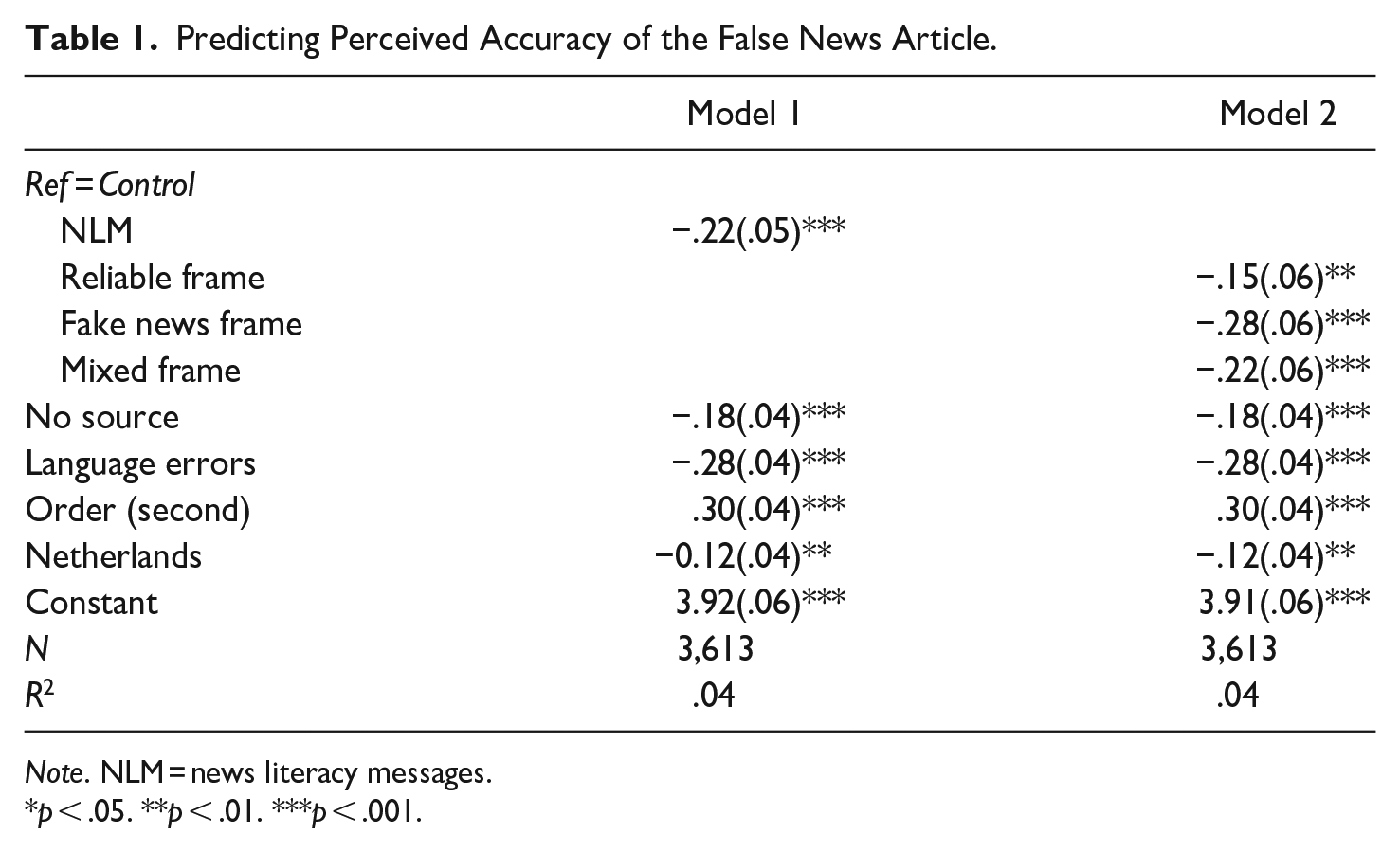

In Model 1 (Table 1), we first examine the general effect of NLMs and whether they improve people’s likelihood of identifying false news articles. The model shows that this is the case. Compared to the control group, participants exposed to one of the NLMs perceived the false article as less accurate (b = −0.22, 95% CI [−0.31, −0.12], p < .001). Though the effect size is moderate, it nevertheless shows that even exposure to a single NLM already lowers people’s accuracy perceptions of false news stories. This finding provides support for the first hypothesis that NLMs in general can be effective in countering mis- and disinformation.

Table 1. Predicting Perceived Accuracy of the False News Article.

Note. NLM = news literacy messages.

*p < .05. **p < .01. ***p < .001.

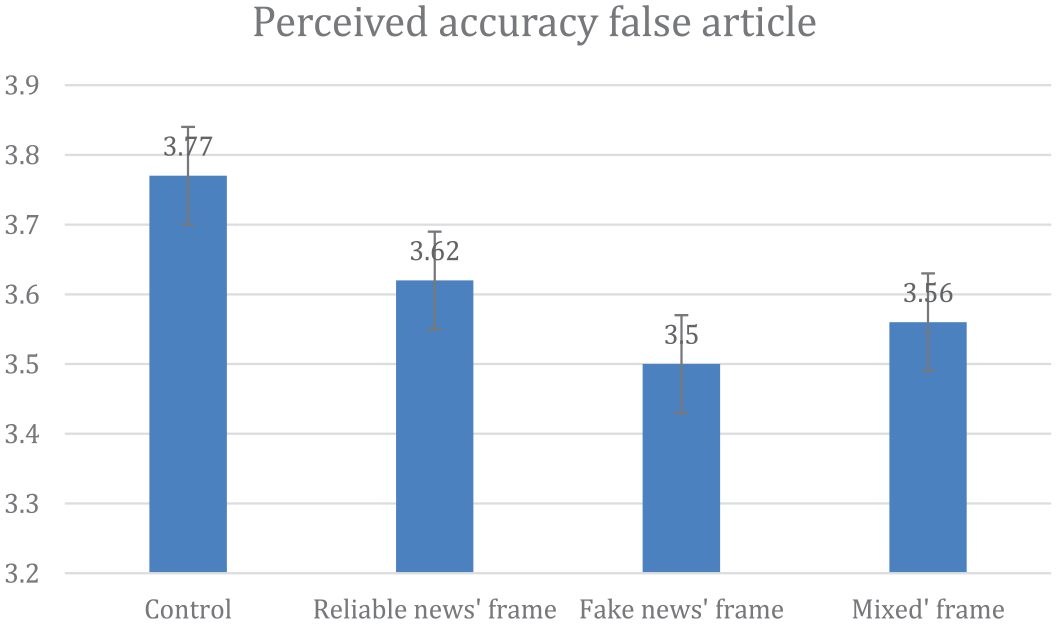

The next question is whether there are differences depending on the frame used. This is what we test in Model 2. Model 2 first of all shows that all three types of NLMs are effective in reducing people’s accuracy perception of the false article. At the same time, the effect sizes, as well as the margins (Figure 1), suggest that the NLM with the “fake news” frame is the most successful in lowering accuracy perceptions of the false article (b = −0.28, 95% CI [−0.39, −0.17], p < .001) and the NLM with the “reliable news” frame the least successful (b = −0.15, 95% CI [−0.27, −0.04], p < .001). This is confirmed when we perform a post-hoc F-test to compare whether the effects of the “fake news” frame and the “reliable news” frame are significantly different. This test shows that those participants exposed to the “fake news” frame perceive the false article to be significantly less accurate than those exposed to the “reliable news” frame (F(1, 3605) = 4.69, p = .030). Though it is only a moderate difference, it supports hypothesis 2 that NLMs using a “fake news” frame are somewhat more effective, at least compared to the “reliable news” frame. At the same time, no significant difference is found between the “fake news” frame and the “mixed” frame (F(1, 3605) = 1.21, p = .272), suggesting these work equally well.

Figure 1. Predicted margins on perceived accuracy (false article), controlling for order and multiple comparisons.

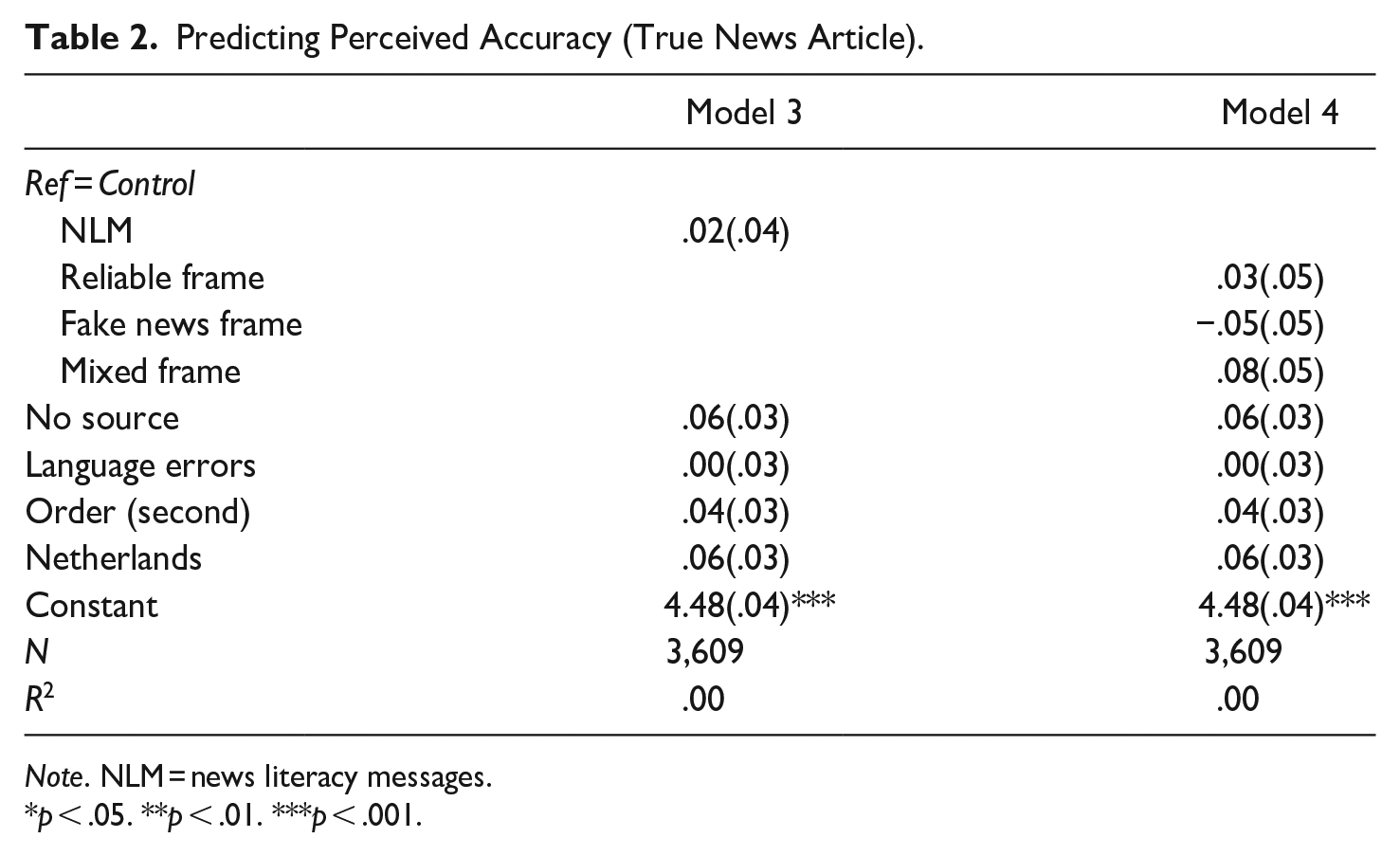

While Model 2 shows that NLMs employing a “fake news” frame reduce accuracy perceptions of the false article the most, we hypothesized that this may come at the cost of accuracy perceptions of the true article. This is what we set out to test in Table 2. Model 3 first examines whether there is a general spill-over effect of NLMs. We find no evidence that exposure to any of the NLMs affects participants’ accuracy perception of the true article, either positively or negatively (b = 0.02, 95% CI [−0.06, 0.10], p = .60).

Table 2. Predicting Perceived Accuracy (True News Article).

Note. NLM = news literacy messages.

*p < .05. **p < .01. ***p < .001.

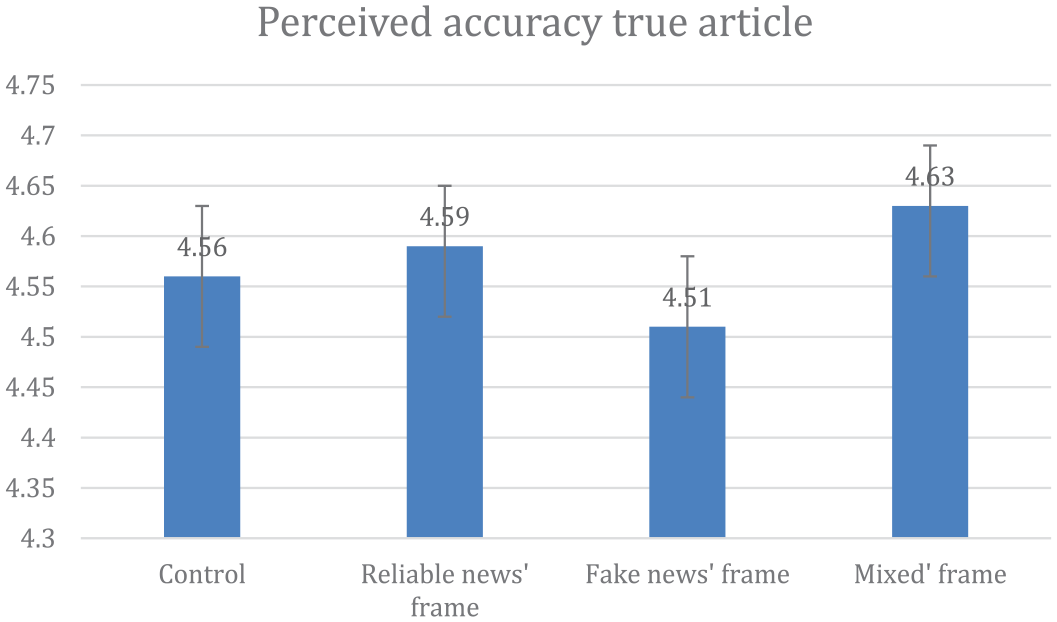

Next, in Model 4 (Table 2), we compare the three types of NLMs, with the margins plotted in Figure 2. Again, we find no clear evidence of spill-over effects. The model gives some indication that there may be a spill-over effect of the “fake news” frame, but this effect is not statistically significant (b = −0.05, 95% CI [−0.14, 0.05], p = .325). We should also note that whereas the coefficient of the “fake news” frame is negative, the “reliable news” frame, although not significant against the control group, has a positive coefficient. If we compare the difference between the “fake news” frame and the “mixed” frame directly using a post-hoc F-test, we actually find a significant difference between the effects of these two frames on the accuracy perception of the two articles (F(1, 3601) = 6.61, p = .010). This suggests that the “mixed” frame may have the advantage over the “fake news” frame that it actually (slightly) raises participants’ ability to detect true articles.

Figure 2. Predicted margins on perceived accuracy (true article), controlling for order and multiple comparisons.

Next, we examine whether markers of inaccurate news stories, such as the lack of reliable sources or language errors, have a stronger influence on accuracy perceptions after people read the NLM. Model 1 (Table 1) demonstrates that, irrespective of exposure to an NLM, articles are seen as less accurate when they contain language errors or lack credible sources. Participants reading versions of the false article without sources perceived it as significantly less accurate than those reading a version containing a source (b = −0.18, 95% CI [−0.26, −0.10], p < .001). Language and style errors were even more important markers; participants reading the version of the news article containing language and style errors gave significantly lower accuracy evaluations (b = −0.28, 95% CI [−0.36, −0.20], p < .001).
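To make the reported intervals easier to read, the sketch below backs out an approximate standard error and z statistic from a coefficient and its 95% confidence interval. It assumes a symmetric, normal-based interval (half-width = 1.96 × SE), which the text does not state explicitly. The helper `se_and_z_from_ci` is a hypothetical name used for illustration only.

```python
def se_and_z_from_ci(b: float, lower: float, upper: float, crit: float = 1.96):
    """Return (standard error, z) implied by a symmetric 95% CI around b.

    Assumes the interval was built as b +/- crit * SE.
    """
    if lower > upper:
        raise ValueError("lower bound must not exceed upper bound")
    se = (upper - lower) / (2 * crit)
    return se, b / se


# Marker effects reported for Model 1 (false article).
reported = [
    ("no source", -0.18, -0.26, -0.10),
    ("language errors", -0.28, -0.36, -0.20),
]
for name, b, lower, upper in reported:
    se, z = se_and_z_from_ci(b, lower, upper)
    print(f"{name}: SE ~ {se:.3f}, z ~ {z:.2f}")
```

Both implied z values are far larger in magnitude than 3.29, the two-sided critical value for p = .001, which is consistent with the reported p < .001.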

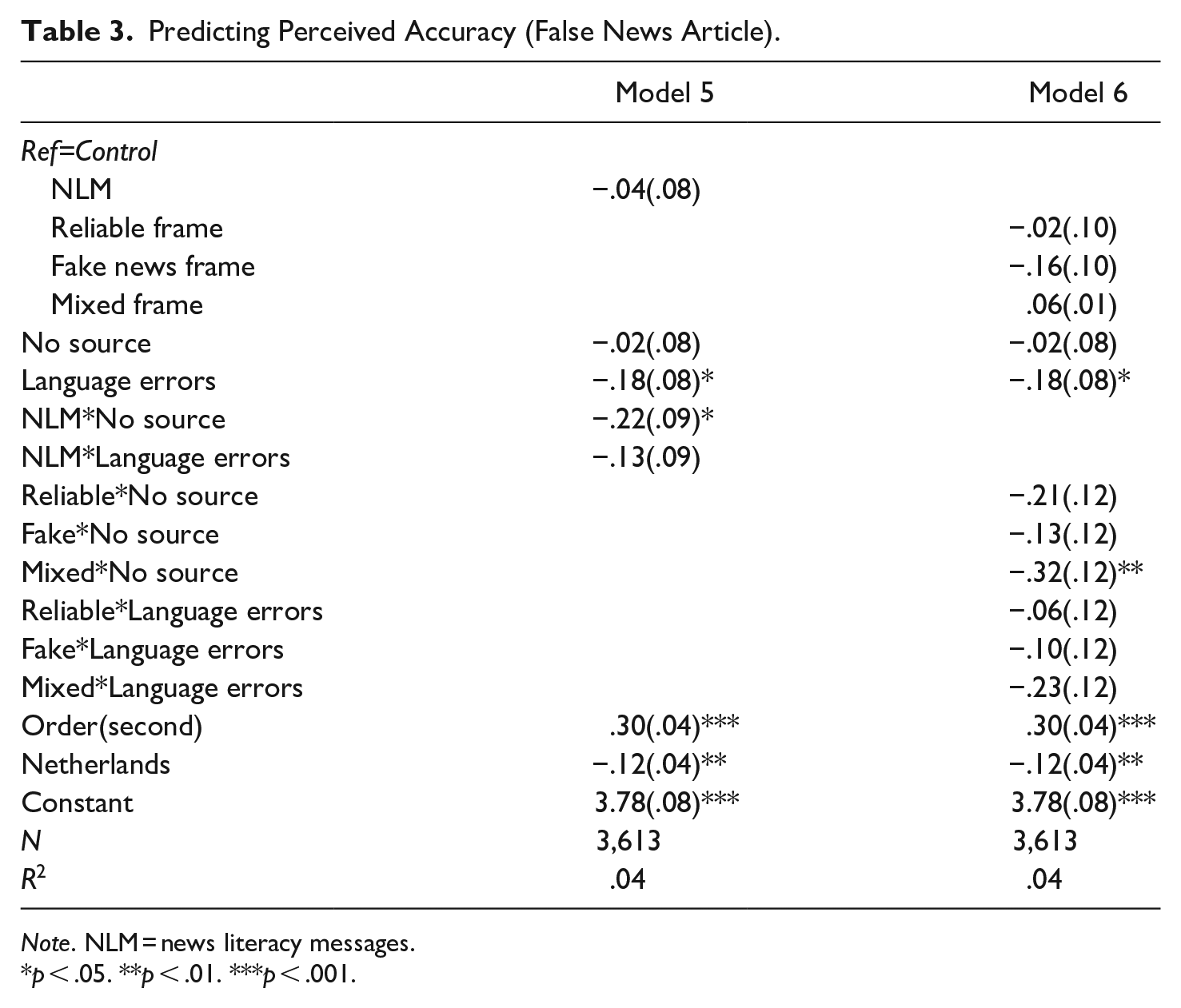

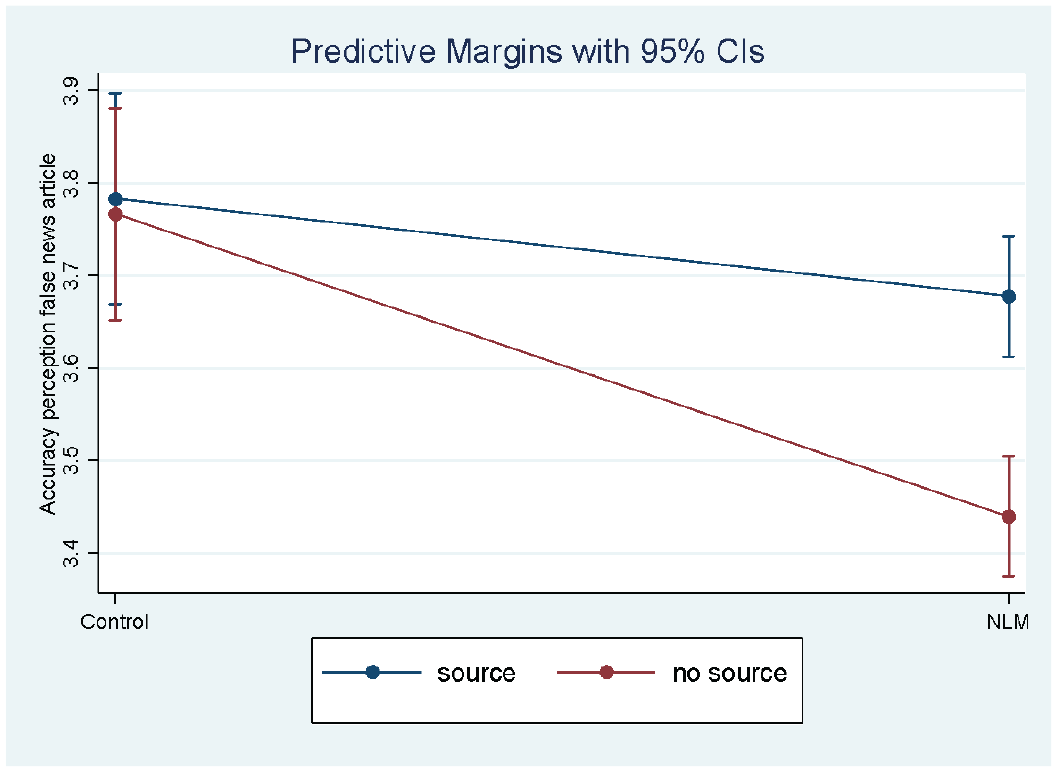

The question is whether these two elements played a stronger role for participants exposed to the NLM. We tested this in Model 5 (Table 3) by interacting the markers with exposure to the NLM. The model does not support hypothesis 4. There is no significant interaction between exposure to an NLM and the presence of language errors on the article’s accuracy perception (b = −0.13, 95% CI [−0.31, 0.06], p = .174). For participants in both the control group and the experimental groups, language errors were an equally important marker. A potential explanation for this lack of effect is that participants already used language as an important criterion for judging accuracy, irrespective of exposure to the NLM.

Table 3. Predicting Perceived Accuracy (False News Article).

Note. NLM = news literacy messages.

*p < .05. **p < .01. ***p < .001.

The story is different when looking at sources. The significant interaction (b = −0.22, 95% CI [−0.41, −0.04], p = .020), plotted in Figure 3, supports hypothesis 5 and indicates that the presence or absence of sources plays a stronger role in the accuracy perception of the news article for participants exposed to an NLM. For the control group, the presence or absence of a source did not matter for accuracy perceptions of the false article (b = −0.02, 95% CI [−0.18, 0.15], p = .842), whereas for those exposed to an NLM it did (b = −0.24, 95% CI [−0.33, −0.15], p = .020); those exposed to the NLM gave the false article an accuracy score of 3.7 when it contained a reliable source and 3.45 when it did not. This finding suggests that NLMs may (partly) work because participants adopt the recommendations provided, at least when it comes to the recommendation about checking sources. Furthermore, the interaction in Figure 3 shows that exposure to an NLM resulted in lower accuracy perceptions of the false article for the no-source group, but not for those who read a false article with a source. This hints that NLMs may not work in all instances.
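The conditional effects quoted above follow from the structure of an interaction model: the effect of a missing source in the control group is the main-effect coefficient, and the effect among NLM-exposed participants adds the interaction term to it. The sketch below assumes dummy coding (NLM = 1 if exposed, no source = 1 if the article lacked a source), which the text implies but does not spell out. `conditional_effect` is an illustrative helper, not the authors' code.

```python
def conditional_effect(main_effect: float, interaction: float, moderator: int) -> float:
    """Effect of the focal dummy at a given level (0 or 1) of the moderator dummy."""
    if moderator not in (0, 1):
        raise ValueError("moderator must be 0 or 1 under dummy coding")
    return main_effect + interaction * moderator


NO_SOURCE_MAIN = -0.02   # reported effect of a missing source in the control group
NLM_X_NO_SOURCE = -0.22  # reported interaction coefficient

control = conditional_effect(NO_SOURCE_MAIN, NLM_X_NO_SOURCE, moderator=0)
exposed = conditional_effect(NO_SOURCE_MAIN, NLM_X_NO_SOURCE, moderator=1)
print(f"control group: {control:.2f}, NLM-exposed: {exposed:.2f}")
```

The value for exposed participants reproduces the reported −0.24 and is close to the gap between the predicted accuracy scores of 3.7 and 3.45.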

Figure 3. Interaction plot between NLM exposure and presence of sources.

Finally, in Model 6, we interacted the two markers with the different frames of the NLMs. Generally, we found no significant differences between the frames in this regard. One exception, though, is that the absence or presence of reliable sources seemed to matter for accuracy perceptions particularly in the mixed frame condition.

As a robustness check, we reran the models per country (Supplemental Appendix E). Although most of the key findings hold across the two countries, there are two notable exceptions. First, the finding that “fake news” frames are more effective than “reliable news” frames is more pronounced in Belgium. The results in the Netherlands give an indication that the “fake news” frame is more effective, because unlike the “reliable news” frame (b = −0.16, 95% CI [−0.33, 0.00], p = .055), the “fake news” frame has a significant effect on the accuracy perception of the false article (b = −0.23, 95% CI [−0.39, −0.06], p = .008). However, the direct difference between the two effects does not reach statistical significance (F(1, 1788) = 0.57, p = .452). Second, unlike in Belgium, the finding that participants exposed to an NLM are more likely to use the presence or absence of a source to evaluate the accuracy of an article does not hold in the Netherlands. While going in the expected direction, the interaction between news literacy exposure and source is not significant (b = −0.12, 95% CI [−0.39, 0.15], p = .399).

Discussion

This study set out to advance the steadily increasing body of knowledge on whether NLMs are effective tools in making people resilient to mis- and disinformation. In particular, we investigated whether the framing of NLMs influences their effectiveness and affects the extent to which people become better at detecting misinformation without resulting in a spill-over effect. We compared NLMs using a “fake news” frame with NLMs stressing how to recognize “reliable news” and with those that contain a mix. Additionally, we set out to examine to what extent people adopt the recommendations being given when evaluating a news article’s accuracy.

First, our findings show that NLMs can be effective. After being exposed to an NLM, people perceive a false news story as less accurate. This corroborates previous research demonstrating that NLMs can be useful prebunking tools in the battle against misinformation (Guess et al. 2020; Hameleers 2022). However, our experiment does point toward some differences between these messages with regard to how they are framed. Though all NLMs result in lower accuracy perceptions of a false article, our findings suggest that messages using a “fake news” frame or a mixed approach are slightly more effective in making people resilient to misinformation than messages using a “reliable news” frame.

Second, though concerns have been raised that NLMs may make people not only skeptical toward false information but also toward accurate information, we do not immediately find such spill-over effects. While exposure to the NLM lowered the accuracy perception of a false article, it did not lower the accuracy perception of an accurate article. This even holds for NLMs using a “fake news” frame, for which we expected the spill-over effect to be most likely to occur. However, we should mention that, at least compared to NLMs with a “mixed” frame, messages with the “fake news” frame do not increase accuracy perceptions of the true article either. Additionally, we find that, while not significant, the effect of the “fake news” frame on perceived accuracy of a true article runs in the expected negative direction. Although we can only speculate and future research is necessary, this could hint that a spill-over effect could occur after repeated exposure to the “fake news” frame. Therefore, while our findings give tentative room for optimism, more research is necessary with regard to repeated exposure to the “fake news” frame.

If we then compare the three types of framing of NLMs directly with one another, our experiment suggests that messages with a “mixed” frame are particularly promising. Like messages using a “fake news” frame, they are the most effective in making people critical toward false news articles, but at the same time, compared to the “fake news” frame, they also result in a higher perceived accuracy of accurate news articles.

We also aimed to gain insight into whether people adopt the recommendations they were given. Our findings provide suggestive evidence that this is the case. When it comes to checking sources, we find that for people who are exposed to an NLM, the absence of reliable sources in the news article had a stronger effect on the accuracy perceptions of that article. We did not find this for language errors, which may be because people already use these as an important criterion in their accuracy evaluation, irrespective of exposure to an NLM.

A limitation of this study is that, although we used two cases that are relatively similar with regard to their political and media systems, we found some country differences that we cannot fully explain. Most notably, the effectiveness of the “fake news” frame in lowering accuracy perceptions of the false article is more pronounced in Belgium, and Belgians were more likely to adopt the recommendations of the NLMs. We can only speculate about the reasons behind these differences. One explanation for why the “fake news” frame is (slightly) more effective in Belgium could be that people there are more worried about misinformation. Though in comparative perspective these worries are generally low in both countries, they are more pronounced in Belgium than in the Netherlands, with, respectively, 46 percent and 30 percent of the population indicating that they are “worried about what is ‘real’ or ‘fake’ on the internet” (Newman et al. 2021). As for why Belgians are more likely than the Dutch to use the recommendations of the NLMs, a potential explanation could be that initial levels of digital literacy are higher in the Netherlands, particularly in the area of dealing with information. This could mean that Dutch participants are more likely to check sources in the first place, even without exposure to an NLM. More comparative research is necessary, however, to test whether these factors indeed explain the differences.

Another key limitation lies in the design of this experiment, since we only manipulated two of the five elements of the NLMs in the false news article that participants had to evaluate. Consequently, while we demonstrate that the NLMs as a coherent whole had an effect, we cannot fully identify the underlying mechanisms of how they work or pinpoint which elements of these messages make a difference. The finding that the absence of reliable sources had a stronger effect on accuracy perceptions of a false news article after exposure to an NLM provides tentative evidence that people may follow this recommendation, but since we did not manipulate all elements, we cannot fully exclude that something else in the news literacy message triggered this stronger influence of the source on news accuracy perception. In the ideal experimental set-up, all salient aspects of the news literacy framing treatments would be manipulated in the false news article via an additive approach, to better tease out which mechanisms in the news literacy frames make a difference.

A related shortcoming is that the recommendation to focus on language and grammar errors dates back to the early days of online disinformation and may become less applicable over time as the writing quality of disinformation articles improves. Future research should expand on this study by focusing on other recommendations, such as checking images and the use of emotional language. This is especially relevant because these recommendations may be more difficult for individuals to apply than the ones we tested.

Our study has two other limitations as well. First, we focused on a single topic, the genetic modification of food. While an advantage of this topic is that it is neither politicized nor salient in either of the two contexts, we do not know whether the findings hold for other issues. It could be that NLMs are less effective in prebunking misinformation on more salient topics, for which politically motivated reasoning may be stronger. Second, we followed a narrower frame-element approach, zooming in on effects of reasoning devices related to the use of the term “fake news.” Future studies could investigate whether a stronger or more elaborate “fake news” frame might yield different results and could result in a spill-over effect.

Finally, we should nuance our optimistic findings somewhat. While we show that NLMs work if people are exposed to them, the question remains how these messages can actually reach the general audience, as people may ignore them. Additionally, while NLMs are effective in reducing accuracy perceptions of false articles containing clear markers, such as the ones present in the recommendations (no sources, language errors, etc.), they do not empower people against false articles that lack such clear markers. While research shows that these markers are often present in false articles (Damstra et al. 2021), they do not need to be. Especially if the “quality” of false articles improves over time, NLMs may become less effective.

Despite these nuances, our study provides reasons for optimism about the use of NLMs as a prebunking strategy. It shows that these messages can be (somewhat) effective, with only a limited risk of making people more skeptical toward all news stories. In particular, messages using a “mixed” frame, which not only warn about “fake news” but also give tips on how to recognize “reliable news,” seem to be effective, resulting in people better discerning between false and accurate news articles. This suggests that, while definitely not the sole solution, news literacy interventions should be part of our toolbox in the fight against mis- and disinformation.

Supplemental Material

sj-docx-1-hij-10.1177_19401612241279534 – Supplemental material for Combating Disinformation With News Literacy Interventions: An Experimental Study on the Framing Effects of News Literacy Messages

Supplemental material, sj-docx-1-hij-10.1177_19401612241279534 for Combating Disinformation With News Literacy Interventions: An Experimental Study on the Framing Effects of News Literacy Messages by Patrick F. A. van Erkel, Peter van Aelst, Joren Van Nieuwenborgh, Claes H. de Vreese, Michael Hameleers and David N. Hopmann in The International Journal of Press/Politics

Footnotes

Acknowledgements

We would like to thank Jana Thys for her assistance in the project.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: The study was funded by the project THREATPIE: The Threats and Potentials of a Changing Political Information Environment, financially supported by the NORFACE Joint Research Programme on Democratic Governance in a Turbulent Age and co-funded by FWO, DFF, ANR, DFG, National Science Centre, Poland, NWO, AEI, ESRC, and the European Commission through Horizon 2020 under grant agreement No 822166.

Ethical Approval and Informed Consent Statement

This study received ethical approval from the Ethics Committee for the Social Sciences and Humanities of the University of Antwerp (approval SHW_2023_107_1) on March 24, 2023. Respondents gave written consent before starting the survey.

Data Availability Statement

Supplemental Material

Supplemental material for this article is available online.

