Abstract

This article presents results from a national online survey (N = 620) on the unintended effects of research ethics board (REB) processes on research, teaching, and learning in the Social Sciences and Humanities across Canada. Focusing on student responses (n = 93), key findings reveal how REB proceduralism, inconsistent risk assessments, and ethics creep disproportionately burden graduate students and restrict innovative, culturally responsive research. Students indicate that REB processes prioritize institutional liability over meaningful ethical engagement, often resulting in delays, emotional strain, and reduced academic motivation. Using DiMaggio and Powell’s framework of institutional isomorphism, the authors identify a paradox: while ethics processes are intended to be standardized, their implementation varies significantly across institutions. The authors recommend reducing barriers for low-risk studies, streamlining multi-institutional reviews, and enhancing REB transparency and cultural responsiveness, and they offer student-informed strategies for reforming ethics review to better support early-career researchers.

Introduction

Released in 1998, the Tri-Council Policy Statement: Ethical Conduct for Research Involving Humans (TCPS) originated as a collaborative effort by the Canadian Institutes of Health Research (CIHR), the Natural Sciences and Engineering Research Council (NSERC), and the Social Sciences and Humanities Research Council (SSHRC) to promote a unified standard for conducting ethical research. The policy aims to promote ethical conduct in research involving human participants, ensure respect for human dignity and the personal safety of participants, and guide researchers, institutions, and institutional research ethics boards across disciplines. While research ethics boards (hereafter REBs) have an important role in safeguarding ethical standards in research, their expanding scope, procedural complexity, and institutional dynamics disproportionately burden graduate students and hinder innovative, socially engaged primary data collection. Many graduate students, past and present, fear the impact of research ethics review (RER) processes on their future careers, reputations, and performance as they try to stay afloat in a publish-or-perish environment (van den Scott 2016). This article illuminates these issues and offers practical solutions to improve the experience of graduate student researchers facing these bureaucratic challenges.

To explore REB experiences within the Social Sciences and Humanities in Canada, we conducted a national online survey targeting postsecondary faculty and students. The Qualtrics survey was distributed via scholarly associations affiliated with the Federation of Humanities and Social Sciences (FHSS), direct email outreach to 4,625 faculty, and a social media campaign. We obtained ethics approval from relevant institutions, and 620 individuals across 58 institutions ultimately participated. This article draws on the approximately 15 percent of respondents who identified as students. We analyzed responses using descriptive statistics and open-ended, line-by-line coding to identify emergent themes from qualitative data, following an inductive, grounded approach (Kennedy and Thornberg 2018).

In analyzing student encounters with REBs, it is vital to distinguish three aspects: the formal rules and policies that govern REB review, the actual interactions between students and committee members, and students’ perceptions or interpretations of the process. Although these aspects differ, each holds sociological importance. Our survey captured both experiences and perceptions, which inform our understanding of formal REB rules and processes as well as students’ interactions with committees. As Macdonald (2002) compellingly explains, law is not just about formalities on paper but is created and influenced through its implementation in practice and through how it is perceived in everyday life. Therefore, even when students’ perceptions differ from official rules, these perceptions influence how they approach research, experience their education and research training, and understand their role within academia.

We use DiMaggio and Powell’s (1983) framework of institutional isomorphism to understand students’ experiences with REBs in Canadian universities. This framework helps explain why organizations tend to become more similar over time, not solely due to efficiency, but because of coercive, mimetic, and normative pressures. We reveal a paradox of isomorphism in our analysis: while institutions and organizations like REBs often align around shared ethical guidelines and policies, their interpretations and applications diverge, creating “red tape” that is difficult to navigate within a supposedly standardized ethical landscape. Ethics creep, redundant reviews, delays, and inconsistent risk assessments show how institutional norms both unify and fragment in practice. Our analysis indicates that graduate students are particularly affected by this process, especially when involved in multi-institutional research projects, where this inconsistency adds a substantial burden to their research and academic pathways. Consequently, this impacts their passion and motivation for conducting meaningful research.

Several key findings emerge from our respondents’ experiences with REBs. Head (2020) notes that RER procedures are bureaucratic and restrictive, with a strong focus on compliance. This is consistent with our respondents’ comments, which suggest that RER processes serve the needs of the institution and focus on protecting the researcher rather than the research project and its participants. The bureaucratic nature of review is also reflected in the tediousness of the process, which leaves many graduate students exhausted and disappointed. As a result, respondents believed that the process interfered with their graduate research more than it helped.

Our graduate student respondents also noticed how REB expectations influenced their coursework, with faculty often avoiding data collection in assignments and teaching. Respondents also perceived a mismatch between reviewers’ knowledge and expertise and the specific fields of the applications they reviewed, which can shape the feedback students receive and cause delays. REB scrutiny, intensified by bureaucratic hurdles and expectations, can also result in inconsistent communication, prolonged timelines, and diminished passion for research.

Participants extensively discussed the importance of cultural responsiveness and sensitivity, pointing out a disconnect between REB expectations and practices that student researchers viewed as essential when working with vulnerable populations and participants from diverse cultural backgrounds. This disconnect was especially evident in the role of letters of information (LOI) and consent procedures. Overall, while respondents recognized the vital role of REBs in ensuring that research is conducted ethically and safely, they were concerned that REBs may hinder academic inquiry and the growth of emerging scholars through burdensome procedures, regulatory overreach, and overly cautious risk assessments that often focus on highly unlikely vulnerabilities.

Our findings inform a series of recommendations aimed at addressing these issues—specifically, reducing barriers for low-risk research, eliminating redundant REB reviews for multi-institutional studies, and curbing the gatekeeping role that REBs have come to play—and provide suggestions from our student respondents on making REB processes more approachable and supportive.

Ethics Creep

Since the introduction of the TCPS, all postsecondary institutions that receive funding from Canada’s research councils have been required to establish REBs to review proposals for research involving human subjects. While REB guidelines are widely followed, there is ongoing debate about the benefits and burdens of REBs at postsecondary institutions. One prominent set of critiques holds that REBs have grown beyond their original intent. Haggerty (2004), drawing on his experience as an REB reviewer, was prominent in identifying and unpacking the phenomenon now often referred to as “ethics creep.” Ethics creep refers to a dual trend: ethics regulation extends its reach to encompass new fields, areas of research, and institutions, while simultaneously becoming more stringent within its existing scope (Haggerty 2004; Robson and Maier 2018). Haggerty (2004) expressed concern that broad interpretations of potential harm can lead REB members to require researchers to account for an expanding list of hypothetical risks. This tendency is amplified because board members are often skilled at imagining unlikely but possible adverse scenarios, which can translate into increasingly burdensome regulatory expectations.

Taylor, Taylor-Neu, and Butterwick (2020) further question whether minimizing risk should be the main goal of ethics review. They argue that “risk” is often defined so broadly that REBs become conservative gatekeepers of research. This risk aversion tends to preserve the current state of society and its institutions, which can be especially problematic for research aimed at driving social change, as such research frequently involves some degree of risk.

Other critiques address the prioritization of formal process over ethical substance. Hemmings (2006) describes this as an overemphasis on process, a pattern that others have noted extends to the pedagogy of research ethics (Dixon and Quirke 2018). The expansion of consent form requirements and the expectation that researchers implement an ever-growing number of procedural safeguards, for example, reflect a proceduralism and “red tape” that can undermine genuine ethical engagement with participants. Grayson and Myles (2005) reported that bureaucratically worded consent forms mandated by REBs reduced participant response rates and potentially skewed sample representativeness. In a study of formal research ethics review and its impact on qualitative research practices, van den Hoonaard (2001) found that many graduate students have difficulty developing natural participant-researcher bonds when required to adhere to strict ethical protocols. For instance, one graduate student conducting fieldwork in Nunavut, who had approval from her department and the Nunavut Council on research, was required to use multiple levels of consent forms. When it was time to sign the consent forms, the participant refused. The formalities felt obtrusive, and the student wondered whether the outcome would have been different had she not felt the need to strictly follow the formal protocol (van den Hoonaard 2001).

While the concept of ethics creep has been influential in critiquing the expanding scope of research ethics oversight, it has also been problematized within the literature. Guta, Nixon, and Wilson (2013) note that ethics creep is part of a broader shift in university governance, characterized by increasing managerialism and alignment with neoliberal policy frameworks, which reconfigure the ethics review process as a mechanism of surveillance and normalization. They suggest that ethics review functions as a disciplinary practice, shaping researcher behavior through processes that both constrain and produce knowledge. While some critics argue that ethics review imposes dominant epistemological assumptions and fosters confrontational relationships between researchers and REBs, Guta et al. (2013) warn against viewing this solely as repressive, calling for a more nuanced understanding that considers how REBs operate at the intersection of regulatory demands, institutional constraints, and shifting ethical norms. Importantly, they acknowledge that some expansions in ethics oversight, such as those involving research with Indigenous communities, may reflect ethical efforts to confront historical injustices rather than mere bureaucratic overreach. Still, such oversight may itself be seen as culturally insensitive when it conflicts with the principles of Indigenous research (Bell and Kothiyal 2018).

While many social science and humanities researchers face the procedural burdens of REBs, these burdens often fall most heavily on new researchers (notably graduate students and emerging scholars), especially those with limited institutional power or familiarity with the ethics review process. According to van den Scott (2016), REBs socialize graduate students into thinking about ethical research within the framework created by the REB, fostering a docile acceptance of the governing ethics body and its permanence. Navigating these complex and evolving requirements can consume significant time and energy, diverting attention from the research itself. Without the benefit of mentorship or institutional knowledge, these researchers may struggle to meet expectations, potentially leading to delays in their research and program, increased stress, and even abandonment of projects, particularly those involving innovative or socially challenging topics.

Methodological Constraints and Risk Aversion

Cantin (2020) adds to the research on methodological constraints by examining how ethics protocols, though meant to safeguard participants and ensure accountability, have evolved into bureaucratic exercises marked by excessive documentation and revisions. Cantin (2020) characterizes this process as producing meaningless administrative labor that feels disconnected from the actual ethical dimensions of research. The bureaucratic pressures arising from REB protocols can cause graduate researchers to turn inward; instead of critically engaging with their participants, they focus on their own career paths, publication prospects, and how the REB process is vital to achieving their goals (van den Scott 2016).

The procedural focus of REBs often overrides methodological flexibility, leading to feedback that is more clerical or methodologically prescriptive than ethical. This is especially problematic for qualitative researchers, whose work often evolves during fieldwork. Cantin (2020) notes that research protocols are treated as static documents, yet the iterative nature of qualitative work resists such fixity. When ethical review is grounded in the principles and epistemology of deductive research, it erodes and impedes the foundational trust and purpose of qualitative research (van den Hoonaard 2001). Feedback from REBs is frequently perceived by researchers as “nitpicky” or epistemologically misaligned, with reviewers sometimes critiquing methodology without sufficient disciplinary understanding (Cantin 2020). Likewise, socio-legal scholars note that a focus on managing institutional risk rather than merely protecting research participants reflects a broader culture of risk aversion within universities, which can impede the academic community’s role in promoting socially engaged scholarship (Bernhard and Young 2009; Palys and Lowman 2010).

Board members themselves acknowledge that, while administrative ethics forms might sometimes encourage critical thinking, they also limit it, making the forms feel more like a mentally taxing chore than a vital part of the thinking process. Graduate students are especially vulnerable in this setting, often lacking the institutional power or experience to resist methodological suggestions or unnecessary revisions. Consequently, their research can be delayed, altered, or even derailed, not because of ethical risks but because of procedural demands that prioritize predictability over flexibility.

Institutional Redundancy and Delays

Although there is a growing body of literature documenting the procedural burdens of multi-institutional RER, Canadian scholarship on this issue remains limited. One notable exception is Stephenson et al. (2020), who examined how 69 Canadian REBs responded to externally approved research protocols. Although the research activities were similar at every site (surveying and interviewing professors), each institution required distinct procedures, ranging from streamlined acceptance at universities in harmonized provinces¹ to burdensome full application requirements, which created a significant administrative burden. Notably, at universities requiring full applications, the time to approval was relatively short, but completing the ethics applications took three research assistants five months for 26 submissions. The authors concluded that such inefficiencies likely serve as a substantial disincentive to pan-Canadian education research (Stephenson et al. 2020).

While studies like Stephenson et al. (2020) illustrate the broader systemic issues of redundancy and inconsistency, there is a distinct lack of literature on how the burdens posed by REBs affect graduate students in Canada. This gap is particularly concerning given the time-sensitive nature of graduate research, which is often constrained by funding deadlines, degree completion timelines, and institutional requirements that mandate a faculty principal investigator. Delays caused by multiple ethics processes can jeopardize research feasibility, especially for multisite projects, and can disproportionately impact students with limited institutional power or mentorship.

Institutional Isomorphism

The steady accretion of requirements associated with ethics creep falls under the wider framework of institutional isomorphism. Developed as part of neo-institutional theory by DiMaggio and Powell (1983), it is used to explain why organizations within a given field tend to become more similar over time, not necessarily due to efficiency, but because of coercive, mimetic, and normative pressures.

Institutional isomorphism originates from initial definitions by Hawley (1968, as cited in DiMaggio and Powell 1983), who explained isomorphism as a constraining process that compels one organization to resemble other organizations facing the same environmental conditions. Subsequently, Hannan and Freeman (1977, as cited in DiMaggio and Powell 1983) explained that isomorphism can occur because nonoptimal forms are selected out of a population of organizations or because organizational decision-makers learn appropriate responses to situations and modify their behaviors accordingly. DiMaggio and Powell (1983) argue that institutional isomorphism accounts for the observation that organizations are becoming more homogeneous and tend to serve the interests of elites. It also foregrounds the irrationality and frustration of power, as well as the lack of innovation that is often seen in organizational life (DiMaggio and Powell 1983).

DiMaggio and Powell (1983) discuss the concept of organizational fields, which refers to organizations that constitute a recognized area of institutional life. Within these fields, institutional isomorphism unfolds through shared pressures and interactions. Upon establishment and in the initial stages of their life cycle, organizational fields are diverse in their approach and form. Once well-established, organizational fields exhibit an obvious push toward homogenization, and typically include several key components (DiMaggio and Powell 1983); in the case of REBs, they consist of:

Key suppliers, which are organizations or entities that provide resources REBs need to function, including universities, research funders (e.g., NSERC, SSHRC, CIHR), and trainers who provide mandatory research training.

Resource and product consumers, who rely on the outputs or services of REBs, including researchers, participants (as indirect consumers), university administrators, and funding agencies.

Regulatory agencies that govern, standardize, or oversee ethical review practices, including the TCPS, privacy commissions, and institutional governance bodies.

Other organizations that produce similar services or products, like other institutional REBs from hospitals, school boards, independent organizational REBs, and international ethics review boards.

This article examines how universities, while formally aligned under the TCPS, vary in how they implement REB processes. While the concept of isomorphism is difficult to apply to a centrally prescribed system such as this, we highlight a paradox of isomorphism: REBs unite around shared ethical guidelines and policies, yet TCPS guidelines and standards are intended to be interpreted and applied locally, so their interpretations and applications diverge, creating “red tape” that is difficult to navigate in a presumably standardized ethical landscape. Nevertheless, the net outcome of this process is isomorphic, because the dominant tendency uniting all REBs is an accretion of requirements. Ethics creep, redundant reviews, delays, and inconsistent risk assessments illustrate how institutional norms both align and fragment in practice. This is especially true for graduate students working on multi-institutional research projects, for whom this inconsistency adds a significant burden to their research and program pathways, which in turn diminishes their passion and drive for conducting impactful research.

Given this interpretation, REBs can also be understood within the framework of “boundary objects” as described by Star (2010). While all REBs operate under the TCPS-2 framework, their interpretation and application vary across institutions, shaped by local priorities, member experience, and educational roles. The TCPS and similar guidelines can be characterized as boundary objects with considerable interpretive flexibility (Pinch and Bijker 1987), allowing researchers from diverse disciplinary and institutional backgrounds to engage with them according to their own practices and local contexts. Some REBs provide flexible, pedagogical oversight for graduate students, while others expect adherence comparable to faculty protocols. This variability reflects the material and organizational structure of REBs working to meet the information and coordination needs of multiple stakeholders.

REBs also operate at different levels of scale (Star 2010), from student-led projects to multisite faculty studies, enabling coordination without requiring full consensus on procedural details. Yet this interpretive flexibility can clash with the TCPS’s original intentions, particularly when local adaptations diverge from prescribed norms. Critics have highlighted the resulting lack of standardization, which complicates multisite research, and concerns over ethics creep, in which oversight extends beyond intended risks and imposes procedural burdens (Haggerty 2004). Viewed as boundary objects, REBs simultaneously enable cross-institutional coordination and local flexibility while producing uneven outcomes and tensions across disciplinary and institutional contexts.

By examining methodological constraints and risk aversion, institutional redundancy and delays, and related issues from the perspective of graduate researchers, this article contributes new empirical insights into how these features of Canadian research ethics review affect graduate students. In doing so, it highlights the need for more responsive and equitable ethics review systems that consider the structural and institutional vulnerabilities of emerging scholars.

Methodology

Data for this research were collected through an online survey disseminated primarily to postsecondary faculty and students in the Social Sciences and Humanities across Canada. We received ethics approval from the REBs at both Wilfrid Laurier University (#8793) and McMaster University (#7035). Our survey had a total of 28 questions, including student- and faculty-specific questions.

Between late February and early March 2024, we reached out via email to 82 scholarly associations that are members of the FHSS, requesting that they circulate our study opportunity to their members. The email included a brief explanation of the study, an attached LOI, and a sample message they could share with their membership. Only 15 FHSS associations agreed to circulate the survey. Through this initial process, a colleague shared a list of publicly available email addresses for 4,625 faculty members in Economics, Sociology, Political Science, and Anthropology. After an REB protocol amendment, we emailed these faculty members directly (a single time, with no incentives) with an invitation to participate in our survey. We also ran a social media campaign on LinkedIn and X/Twitter. We closed the survey on April 30, 2024. In total, 620 unique individuals reporting 58 different institutional affiliations responded to the survey. This article focuses on the student responses, which account for approximately 15 percent (n = 93) of survey respondents. To protect participant anonymity, demographic information is not reported. Although some participants voluntarily provided their academic or research discipline, this was not required for survey completion. Where participants shared their discipline, it is included in the findings only when relevant and appropriate.

For the closed-ended questions, we report descriptive statistics for student responses. For the qualitative data, we employed an inductive, open-ended, line-by-line coding approach to analyze responses to open-ended survey questions. This method involves breaking down the raw text into smaller units of meaning without relying on pre-established categories or themes, allowing novel concepts to emerge directly from the data (Kennedy and Thornberg 2018). Coding was conducted using a combination of Microsoft Word, Excel, and printed copies of the survey responses. Responses were first organized by question or thematic similarity within a Word document, using a line-numbering system to facilitate reference during coding. We then engaged in close reading, highlighting segments of text, writing margin notes, and recording analytical memos in a notebook. Each response was read multiple times to identify recurring patterns and to ensure that we captured all notable themes. This iterative process allowed us to develop a grounded understanding of participants’ perspectives. It is important to note that not all survey questions were mandatory; in particular, participants were not required to respond to the qualitative, open-ended items. Consequently, the quotes presented in the findings section reflect the perspectives of those participants who chose to answer the follow-up questions, including responses to the prompt, “Do you have any other comments or accounts of REB interactions you wish to share?”

Future research could benefit from a dedicated qualitative study exploring faculty and student experiences with REBs, which would allow for a more in-depth analysis of the patterns identified in this study.

Findings

Our findings reveal a complex interplay between the need for ethical oversight and the practical realities faced by graduate students. We provide a nuanced understanding of how current REB practices hinder rather than help graduate students’ research endeavors, alongside recommendations for more equitable and effective ethics review processes.

Co-opting Knowledge and Expertise

Critics argue that ethics committees often lack the specific knowledge and expertise needed to review research in certain contexts (Head 2020). Our graduate student respondents highlighted that most REB members did not seem to be experts in the areas of the applications they reviewed and lacked understanding of qualitative methods. For instance, several noted that their REB applications were evaluated by academics from medical, biology, or science research fields, which may lead to reviews influenced by personal biases linked to their expertise in clinical, quantitative disciplines. One graduate student shared that their low-risk qualitative study on disability was subjected to questions typical of medical and science research, automatically implying a higher risk level: “[my interviews] are incredibly low risk and I felt that some of the questions being asked in the REB process were not applicable or not appropriate for the research I’m actually doing.” Another student reported that their REB, following a quantitative, medical model, made the approval process “very time-consuming and [the REB] placed far more requirements on my research than was needed given the type of [ethnographic] research I was doing.” This focus on particular research methods and disciplines, along with methodological bias, can lead to extensive “back and forth” between reviewers and researchers. In some cases, our graduate student participants found reviewer contact to be inconsistent in response times, ineffective in providing feedback, and often accusatory regarding issues with their applications.

How REB Expectations Shape University Courses

Of the 77 students who responded to the question, 52 percent had been told by a faculty member that the instructor’s course assignments were designed to avoid data collection because of concerns about the effort needed to complete the REB process and/or possible delays. By comparison, 71 percent of faculty respondents (n = 441) said they avoided designing student assignments that involve any form of data collection because of concern that the university REB process would make these assignments too burdensome. The higher rate among faculty may suggest that not all faculty members tell their students that they avoid data collection in course assignments; whether to share this information with students is a personal choice. It may also suggest that the faculty who answered the question hold particular negative feelings toward the REB system, shaped by their own experiences and those of colleagues.

Playing the Game: REB Scrutiny in Shaping Graduate Research Practices

One participant, who conducts research in the arts-based and speculative space, explained the research ethics space in academia as one where you must “play the game” for approval, particularly when the research must be translated into something “digestible” for the review committee:

[. . .] even while, of course, you have a desire to maintain high ethical standards, it’s not always easy to spell that out in a project that does not follow a scientific approach with pre-determined outcomes or methodologies. It makes it difficult to do innovative work because you have to fit it into a preconceived box.

Most graduate students seeking ethics approval for their research projects are expected to “play the game,” which can be challenging. Moreover, graduate students conduct research under specific timelines tied to both funding and degree requirements. Under the regulations and perceived scrutiny of REB processes, many graduate students experience timeline extensions, which carry professional and economic costs; in many cases, research funding expires during this process, leaving students feeling discouraged, stressed, and more inclined to change their projects for ease of ethics review.

We also found evidence that students believed in the conceptual importance of REBs for protecting research participants, but that in practice the REB process seemed more concerned with protecting the university from liability than with minimizing harm to potential respondents:

I appreciate the need for ethical review of research, particularly as universities do not exactly have an excellent reputation historically for ethical research conduct. However, the ethical review process feels more as a review to protect the university’s reputation than it is to protect anyone participating in the research.

Avoiding primary data collection and analysis due to REB concerns

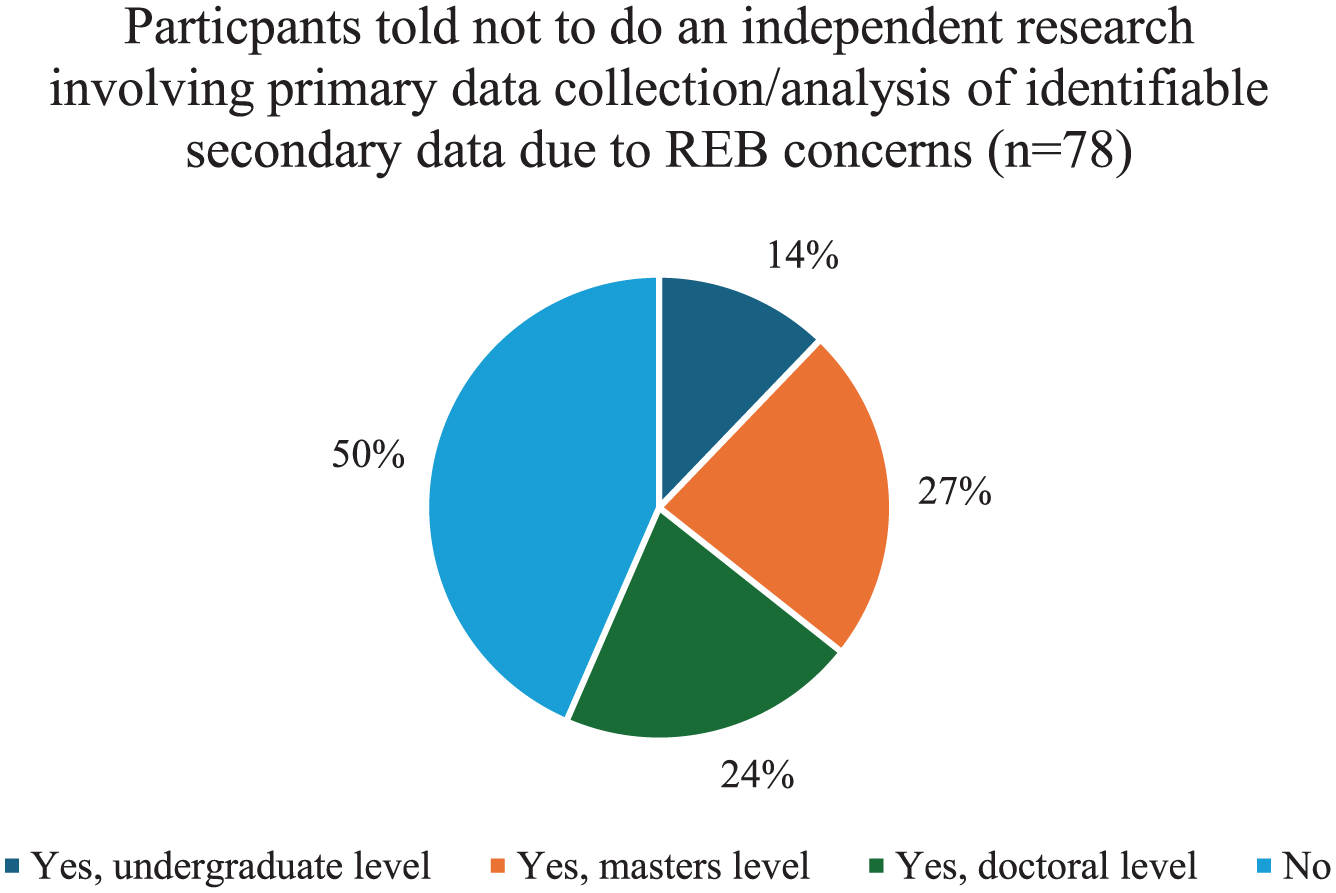

Due to timeline and funding pressures, many graduate students avoid conducting primary and secondary identifiable data collection to stay on track and avoid undue scrutiny from the REB. As shown in Figure 1, when asked if our respondents have ever been told not to do an independent research project that involves collecting primary data or analysis of identifiable secondary data because of concerns about the REB process, 50 percent said no, and 50 percent said yes, of which 14 percent were at the undergraduate level, 27 percent at the master’s level, and 24 percent at the doctoral level. There was also some overlap where several respondents noted they were told to avoid research projects that involve data collection/primary/identifiable secondary data analysis in all three levels of schooling.

Student participants advised to avoid primary data collection or analysis of identifiable secondary data.

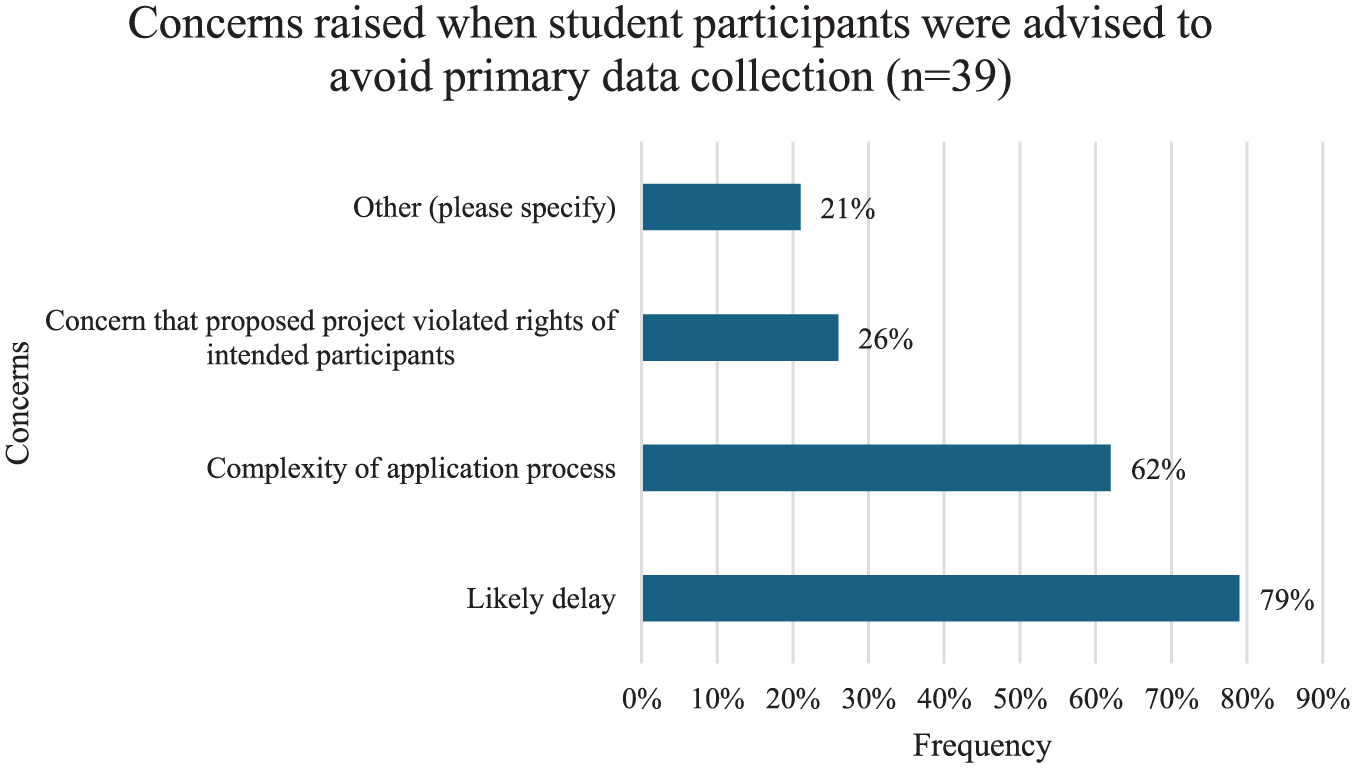

Figure 2. Concerns raised when student participants were advised to avoid primary data collection.

Respondents who received this advice reported a variety of concerns about the REB process. Sixty-two percent cited the complexity of the application process, and 79 percent expected that their projects would be delayed. For example, one doctoral student explained that the time and effort of an REB application would outweigh its value for her PhD:

The REB application process would require more time/effort than would be worth for my PhD was one major reason my supervisor suggested I use an existing dataset instead of conducting my own survey and/or interviews.

Over a quarter of students reported concerns that the proposed project might violate the rights of intended participants. For example, a doctoral student in criminology explained the reasoning behind the advice to avoid primary data collection: “[There was] concern that the population was too precarious and vulnerable to have their voices heard (crime research).” Perceived challenges like this, and the advice that follows from them, are often informed by personal and observed experiences. REBs often adopt broadly risk-averse norms, behaviors, and policies, operating under the assumption that research involving marginalized and/or at-risk groups is inherently risky. This can lead to the exclusion of vulnerable populations from research, not necessarily because of actual harm, but because they are viewed as too “at-risk” to participate safely.

Just over 20 percent of respondents raised other concerns, including the following:

I was told that using secondary data was easier because doing so avoided the REB process altogether. [There was concern] that [my project] simply would not go through [ethics approval] because snowball sampling is not permitted at my institution, even though that contradicts TCPS-2. [There was a] lack of expertise at REB to evaluate the application.

These experiences reinforce the perception that REBs often take a rigid, risk-averse approach. Choosing secondary data to avoid ethics review, bans on snowball sampling despite national guidelines, and a lack of REB expertise to evaluate certain applications all point to REB systems focused on checking boxes, which deters many researchers. Indeed, responses on whether participants had ever been deterred from research because of actual or perceived requirements and/or workload associated with the REB process were nearly evenly split: 49 percent had never been deterred, while 51 percent had.

The emotional and professional toll of REB red tape

Although our open-ended responses revealed conflicting perspectives on the effectiveness of REBs, respondents were overwhelmingly left discouraged after lengthy back-and-forth communications with their institutional REBs. One respondent, for example, explained that their experience was time-consuming and full of contradictory recommendations:

The process I did (twice) was overkill for the kind of research I was doing. It was very time consuming, and they placed far more requirements on my research than was needed given the type of research I was doing. They seemed to follow a medical model, but my research is ethnographic. Also, the process was frankly crazy, with endless comments pouring in, many contradictory recommendations, different recommendations for the second phase of research that should have been the same as for the first phase and so on.

In this case, the graduate student conducting ethnographic research believed their REB followed a biomedical-style review process, resulting in unnecessary obstacles, conflicting feedback, and excessive back-and-forth. This illustrates the expansion of oversight beyond the REB’s original purpose (ethics creep) and embodies the paradox of isomorphism: while this REB adopted a dominant model of research and ethical review and presumably follows TCPS guidelines, its interpretation and application of those guidelines diverge from their intent, leading to contradictory reviews, unnecessary delays, and inconsistent risk assessments.

These contradictory recommendations, multiple review processes, and bureaucratic expectations often lead to miscommunication. Several respondents felt that the “blame” for miscommunication fell on the researcher(s), who were then scrutinized for a perceived lack of effort:

I read a paragraph explaining that I was doing participant information to a group and had it signed. I kept it in my files. One year later I was informed that I was supposed to submit the signed sheet to my REB (I was under the assumption that, as with my interviews, I was fine so long as I kept a dated and signed permission form from the participants). I had to have a meeting with my supervisor, where I was told my year of research couldn’t be used. We both begged. It should have been a non-issue, as I had permission from all participants, and it was miscommunication on the part of the REB that was the error. I was treated by the REB representative like an evildoing fool. [My experience] made me really dislike the control the REB has over earnest dignified research, as they had the ability to dismiss not only the work of the researcher, on a technicality, but also dismiss the voices of the participants who otherwise had no problem whatsoever and wanted to be heard, and were willing to attest to this on my behalf (and their own). The power dynamic between my REB and researchers is horribly off-balance.

The experiences of these participants illustrate the emotional and professional toll that rigid REB procedures can impose on graduate student researchers, particularly when miscommunications result in the invalidation of otherwise ethically sound data. Their experiences also reveal a power imbalance in which the REB’s authority overrides both researcher intent and participant autonomy, effectively silencing the voices that research ethics frameworks are meant to protect. This signals the need for more dialogical, context-sensitive ethics practices and processes that value procedural flexibility alongside participant protection. It also reflects a broader tension between regulatory oversight and research feasibility, particularly for early-career scholars navigating institutional structures and bureaucratic procedures.

Cultural Sensitivity and Responsiveness

The lack of cultural responsiveness and sensitivity within REBs was a recurring theme in our survey results. We asked, “Have you designed research to avoid looking at identity factors as variables (e.g. demographic characteristics like sex, gender, or race) because you suspect it will lead to additional REB scrutiny or delay?” Of those who responded (n = 294), just over half (54 percent) said they had avoided including identity factors in their research to reduce ethics board scrutiny. An even larger share (70 percent) reported avoiding questions about vulnerable populations. These results underscore how concerns about oversight can shape, and restrict, the research that scholars undertake. While these statistics reflect all respondents (including faculty and research staff), student respondents emphasized a disconnect between REB expectations and the practices student researchers believed were critical for conducting research with both vulnerable populations and participants from other cultures. One PhD student reflected on the contradictions between REB expectations regarding data collection with human participants and their work with people in a rural international location²:

I did my PhD fieldwork in rural [international location] because I [have personal ties to the rural area]. REB’s expectations about human interaction were not compatible with the local culture at all. I was lucky enough to have REB accept verbal consent on some occasions, but asking for written consent created a lot of suspicion and mistrust. In fact, this coupled with COVID measures caused me to lose a lot of important contacts as well as time and money since the PhD got prolonged.

REBs in Canada are largely structured around Western frameworks of knowledge, ethics, and consent, which limits their applicability and sensitivity in diverse research contexts. For example, one respondent described how their REB seemed uninformed about, or unreflective of, cultural norms in other countries regarding consent and information letters:

People I interviewed laughed at me when I shared the information consent forms I was required to give them (almost 4 pages of words to people with time constraints). This negatively affected the rapport I built with my participants and on one occasion put me at risk personally. I worked on a qualitative research project with rural populations in South America, and being required to include and inform them that I would report child abuse was insane and put me in danger. The sentence regarding child abuse is broad enough that can be interpreted by participants as a foreigner imposition on indigenous or peasant traditional practices in a way that justified discrimination against them (as clearly known in the Canadian—Indigenous history). [. . .] Because of this insensitive sentence that the REB requires, the community I was conducting research with lost significant trust in me. This is because it made them believe that I would share their secrets with authorities, despite me reassuring that their data was safe with me.

Instead of improving researcher and participant safety, the “safeguarding” practices enforced by the REB had the opposite effect in this case, exposing the researcher to potential physical and relational harm. Both quotes reveal a troubling contradiction: the very protocols meant to prevent harm can, in some situations, cause harm. This is not a minor implementation flaw but a structural problem rooted in an REB’s broad assumptions about ethics and risk, which often overlook the social and cultural nuances of fieldwork in non-Western contexts. The graduate student researcher was required to provide a consent form of almost four pages, which participants found unfamiliar and unnecessary, undermining trust, damaging rapport, and raising suspicion. Furthermore, the mandated language about child abuse reporting signaled to the community that the researcher might serve as an agent of surveillance or colonial discipline, echoing long-standing histories of foreign interference. This highlights a key tension: the REB, in trying to exercise ethical authority from afar, often worsens existing power imbalances on the ground. Researchers are caught between institutional compliance and respect for local norms and sensitivities, and must choose between safety and protocol. When they attempt to strike a safe balance independently, they risk backlash from the REB. As this case shows, REB protocols can inadvertently make researchers more vulnerable under the guise of protection.

Discussion

Our research suggests that issues related to ethics burden are prevalent in Canadian postsecondary institutions and are likely having a significant impact not only on the amount and types of research undertaken in the social sciences and humanities, and on the extent to which we understand equity and the issues facing vulnerable populations, but also on how graduate students navigate research-focused degrees and develop the essential skills for conducting and disseminating impactful research. In particular, graduate students (as emerging scholars and researchers) may experience significant procedural burdens when navigating REB processes. These burdens can arise from their relative inexperience with institutional protocols, the need to balance expectations from their REB and their department, the availability of resources, and varying levels of mentorship or support. While this study does not systematically quantify differences across graduate programs and degree stages, our findings align with prior literature indicating that emerging researchers often face unique challenges in completing ethical review requirements efficiently (van den Hoonaard 2001; van den Scott 2016).

A separate manuscript on the impact of ethics creep on teaching and learning in the Social Sciences and Humanities focuses on the faculty perspective and shows that the hassle and delay associated with ethics review restrict students’ opportunities to gain hands-on experience with research techniques, including interviewing, surveying, and secondary data analysis. Our student respondents echo this finding: many feel discouraged and blamed for miscommunications and perceived wrongdoing, which ultimately affects their attitudes toward, and interest in, collecting data in the future.

This perspective invites a shift away from viewing REBs solely as bureaucratic obstacles and toward understanding them as sites shaped by broader institutional, political, and historical forces. Rather than rejecting ethics oversight altogether, researchers might engage more critically and constructively with REBs, advocating for review processes that recognize diverse epistemologies and ethical frameworks, particularly those grounded in community-based, participatory, or decolonial approaches. For graduate students and emerging scholars, this means that mentorship and institutional support must focus not only on navigating procedural requirements but also on fostering dialogue about the values and power relations embedded in ethics governance. Acknowledging the tensions raised by ethics creep, while also considering its potential to surface neglected ethical responsibilities, may help move the conversation beyond critique and toward reform.

Addressing Cultural Responsiveness and Sensitivity in REBs

Bell and Kothiyal (2018) note that a lack of cultural sensitivity within research ethics runs counter to Indigenous research principles, which work to acknowledge and redress power relations that result in the marginalization of non-Western forms of knowledge. Many respondents emphasized the need to broaden REB frameworks and practices to incorporate Indigenous ways of knowing and community-centered values. Such an approach would not only strengthen ethical research practices with Indigenous (First Nations, Métis, and Inuit) communities but also enhance the relevance and responsiveness of ethics review processes in international and cross-cultural settings. Our respondents believe that decolonizing the REB process involves reimagining ethics review to prioritize community needs, recognize collective forms of knowledge, and create space for culturally grounded methodologies that extend beyond Western frameworks. Indeed, Bell and Kothiyal (2018) emphasize that decolonizing methodologies (Smith 2022) are an effective way to encourage reflexive investigation of deep-rooted assumptions, ideologies, and conceptions that strongly influence research practices. Decolonizing approaches to research ethics start from the belief that research is done with humans rather than on them (Bell and Kothiyal 2018; Czymoniewicz-Klippel, Brijnath, and Crockett 2010), building on an ethics of care and values of integrity, reciprocity, and responsibility for personal conduct. A decolonized approach to research ethics also rests on understanding the local cultural context and building trust with research participants before discussing and negotiating informed consent (Bell and Kothiyal 2018; Czymoniewicz-Klippel et al. 2010).

Returning to the Paradox of Isomorphism

The framework of institutional isomorphism (DiMaggio and Powell 1983) helps explain how university REBs have developed to this point and can also help identify the best pathway forward. Analyzing the current state of REBs across Canadian universities as a paradox of isomorphism, our results show how REBs can work counterproductively in protecting research and researchers: they are nonstandardized in practice and extend far beyond the bounds of what they are meant to achieve. While REBs are governed by shared ethical guidelines and policy (TCPS), their interpretations and applications can diverge, leaving researchers to navigate a presumably standardized but uneven ethical landscape. Although our findings and subsequent recommendations suggest that more consistency is needed across institutions, every university is situated in a unique geographical, cultural, and environmental context and ethical landscape, and each REB receives applications spanning a diverse range of research topics, areas, and methods. Thus, while a certain level of standardization in following TCPS guidelines would be impactful, we recognize that practices and approaches that work at one institution may need to operate differently at another. This variability also echoes the way REBs function as “boundary objects” (Star 2010), flexible enough to be interpreted differently across local institutional settings while still coordinating researchers under the broader TCPS framework. Reframing REBs in this way helps explain why they simultaneously enable shared ethical oversight and generate uneven procedural experiences for researchers and graduate students, particularly when local interpretations stretch or diverge from the policy’s original intent. The recommendations below offer ideas that could inform this debate for the Tri-Council and associated institutions.

Recommendations

In a separate article (Gallagher-Mackay, Robson, and Pulchny, under review) that discusses our findings, we provide recommendations for the Tri-Council to reduce the volume and complexity of reviews, which are equally applicable within the context of this article. First, we suggest the Tri-Council could adopt a “common rule”–style approach that expands exemptions for minimal-risk survey, interview, and observational research with adults. Second, all REBs under Tri-Council authority should be required to provide a clear, standardized exemption-determination process on their main webpage. Third, the Tri-Council should use its spending power to ensure institutional acceptance of reviews conducted by other TCPS-2–compliant REBs. Finally, REBs should limit gatekeeping activities that extend beyond their core mandate of protecting research participants.

In addition to these recommendations, our student respondents offered practical suggestions for improving institutional REB processes, even though the survey did not ask for them. Respondents volunteered these suggestions in open-ended responses to questions about their experiences with REBs and in their responses to “Do you have any other comments or accounts of REB interactions you wish to share?” Some institutions already adopt these measures, but they are not universal across Canada:

REB workshops for students (undergraduate honors students and graduate students) who are interested in pursuing data collection for research projects.

Instructional videos on institutional REB websites to help new and returning researchers navigate the application process.

Useful links on institutional REB websites, such as application checklists, FAQs on common application mistakes, and sample ethics applications for different kinds of research.

Expedited approval processes for minimal- to low-risk studies for graduate students who are on strict funding and research timelines. These applications could be reviewed by departmental committees under established research ethics guidelines to speed up the process.

Limitations and Conclusion

This survey was based on a convenience sample collected at a single time point. Because no comprehensive directory of social science and humanities researchers (and their contact details) exists, we relied on publicly available information. It is unclear to what extent the survey was forwarded beyond our initial contacts and shared with graduate students. It is, of course, likely that respondents who have had lengthy and unpleasant interactions with their REBs are overrepresented in our sample.

Our survey-based design provided limited opportunities for respondents to offer contextual detail, resulting in a lack of qualitative depth in some areas. Although an open-ended item invited additional comments about REB interactions and yielded rich responses, several were too incomplete or unclear to quote without risking misinterpretation. As a result, respondent quotations appear selectively, reflecting the quality and clarity of the data provided. In addition, our data did not provide sufficient insight into the relationship between students and their mentors or supervisors, despite this being a crucial dynamic in shaping early research training. Because respondents rarely commented on these interactions, we were unable to analyze how mentorship practices influence students’ perceptions of, or navigation through, REB processes. We acknowledge that this relationship is especially important during the formative years in which emerging scholars develop their skills and professional identities, and that mentors play a vital role in their education, success, and even their interactions with REBs. This remains an important gap that future research, particularly qualitative interviews with a smaller sample of participants, could explore in greater depth.

Nonetheless, the findings reveal some troubling trends. In several cases, REBs appear to be impeding academic inquiry, and the development of emerging scholars who are passionate about critical primary research, through burdensome processes, regulatory overreach, and overly cautious risk assessments that assume highly improbable vulnerabilities. We offer a series of recommendations aimed at addressing these issues (specifically, reducing barriers for low-risk research, eliminating redundant REB reviews for multi-institutional studies, and curbing the gatekeeping role that REBs have come to play) and share suggestions from our student respondents for making REB processes more approachable and supportive.

Footnotes

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.