Abstract

Background:

Diet interventions often have poor adherence due to burdensome food logging. Approaches using photographs assessed by artificial intelligence (AI) may make food logging easier, if they are adequately accurate.

Method:

We used OpenAI’s GPT-4o model with one-shot prompts and no fine-tuning to assess energy, fat, protein, carbohydrate, fiber, and salt through photographs of 22 meals, comparing assessments to weighed food records for each meal and to assessments of dieticians.

Results:

The model had poor performance overall. For fiber, though, the model achieved an intraclass correlation coefficient of 0.71 (95% CI, 0.67-0.74), well above the dietician performance of 0.57.

Conclusions:

The simplest use of current AI via one-shot prompting and no fine-tuning accurately assesses fiber content in meals but is inaccurate for other nutritional parameters.

Introduction

Type 2 diabetes (T2D) affects hundreds of millions of patients worldwide, causing serious cardiovascular and microvascular complications, including blindness, amputation, and death. 1 Changes in diet are a key part of treatment recommendations. 2 Although dietary changes have proven clinical benefit, 3 patients struggle to change their diets. A class of interventions relies on feedback to patients about their food choices, so that they can modify their dietary behavior based on data. Assessing diet throughout the day is challenging. Logging food, such as from within a smartphone application, is notoriously difficult, with generally low usage rates. 4 For example, our DialBetesPlus intervention 5 had high usage rates for measuring exercise (68.5%-81.6%) but low usage rates for diet recording (37.2%-54.0%).

Anecdotally, our patients report that they often take pictures of meals and then record the meal later. Researchers have experimented with assessing meals from photographs.6-9 For instance, we conducted trials where the photographs were reviewed by licensed dieticians who provided nutritional assessments and feedback. 10 This feedback was not real time, with a delay of 1 or 2 days, and patients generally did not find the feedback to be useful.

Researchers have experimented with using machine learning (ML) to assess the nutritional content of meals. For example, we used custom models to make an ML assessment of “healthiness” trained on the opinions of licensed dieticians.11,12 By avoiding a human in the loop, these approaches offer near-real-time feedback at very low cost. Until recently, results were not especially good. The recent development of Transformer-based large language models (LLMs) 13 has changed this landscape. Even if current LLMs cannot provide the accuracy needed to support a medical intervention in diet, it seems likely that more advanced LLMs will soon provide this accuracy. One company, Healthify, trained (via fine-tuning) an earlier version of OpenAI’s generative pre-trained Transformer (GPT) to give nutritional feedback based on photographs of meals, albeit in a health/wellness context rather than a medical one. 14 Fine-tuning takes time and money, and the work put into a particular LLM is likely to be outdated by the next release of a far more advanced LLM; multi-shot prompting worsens user engagement due to increased latency. In this study, our objective was to assess the accuracy of current-generation LLMs without fine-tuning and using simple one-shot prompting.

Methods

We used a database of Japanese meals. 15 The meals were prepared with weighing of all ingredients, forming a weighed food record (WFR) with very high accuracy assessment of the nutritional content. We treat these nutritional assessments as ground truth. Meals were also photographed (Figure 1). We provided these photographs to an LLM to get nutritional estimates.

Figure 1. Example meal photograph.

Estimating nutrition from just a photograph is challenging, though it can provide useful levels of accuracy. In our earlier work, 15 we inserted these photographs into the workflow of dieticians who were assessing the nutritional content of the meals of patients with diabetes using our DialBetics (DB) app, and so obtained the dieticians' nutritional assessments of the same meals. This performance of expert humans provides a good benchmark for comparisons.

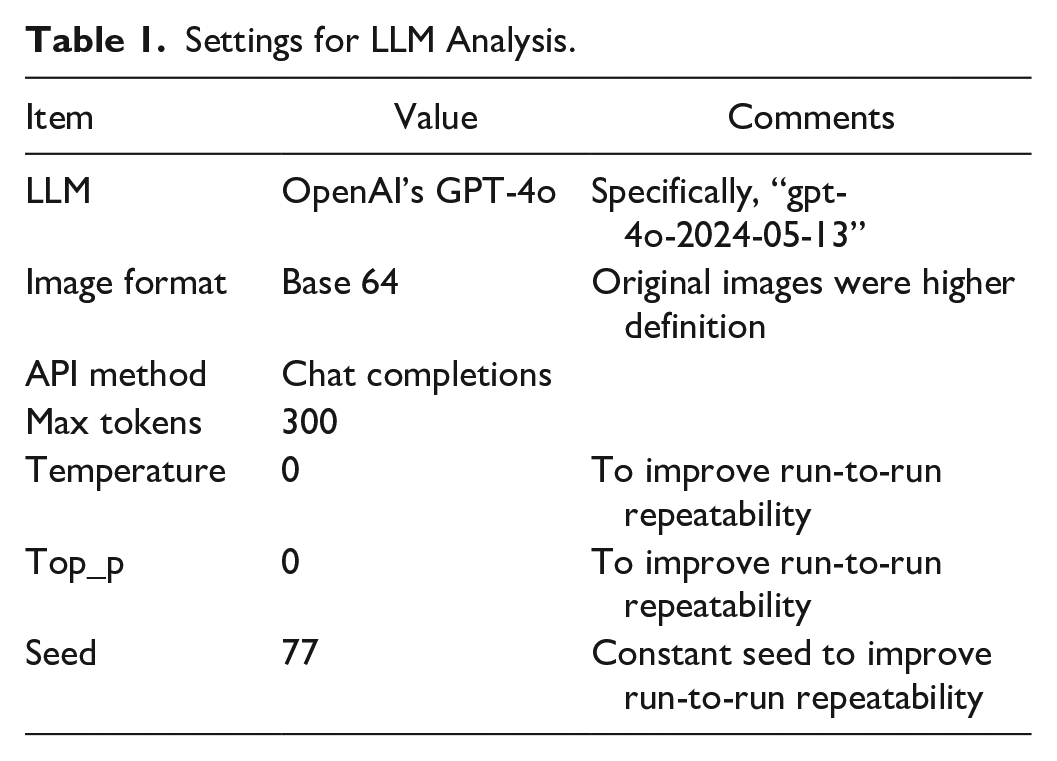

In this study, we used OpenAI’s “gpt-4o-2024-05-13” model, without fine-tuning, via application programming interface (API) 16 as a representative leading LLM. This model was released while this work was underway, so it was state-of-the-art at that time. We used image-processing oriented settings (Table 1) with one-shot prompts 17 to task the LLM to generate a JavaScript Object Notation (JSON) object capturing the requested nutritional estimate. We used separate prompts and separate LLM engagements for each of the 6 nutrients of interest (energy, fat, protein, carbohydrates, dietary fiber, and salt). Because LLMs show significant run-to-run variability, for each nutrient we ran each set of 22 meals with 11 repeated trials, and we report the mean intraclass correlation coefficient (ICC) comparing the LLM’s estimate with ground truth from the WFR. All ICCs are two-way mixed effects, absolute agreement, single rater.18,19 We also assessed the mean absolute error (MAE) and root mean squared error (RMSE). All model software was custom Python code (Python 3.12). All statistical analysis was done in R (R 4.2.1).
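The per-nutrient request and JSON reply described above might be sketched as follows. This is a minimal illustration and not the study's code: the prompt wording, the JSON key, and the helper names `build_request` and `parse_estimate` are hypothetical, the settings of Table 1 are not reproduced, and no network call is made. The message layout (one text part plus one base64 `image_url` part) follows the general shape of OpenAI's chat-style image input.

```python
import base64
import json

MODEL = "gpt-4o-2024-05-13"  # model name as stated in the text


def build_request(image_bytes, nutrient, unit):
    """Assemble a chat-style payload: one text part and one image part.

    The prompt wording below is hypothetical, not the study's prompt.
    """
    image_b64 = base64.b64encode(image_bytes).decode("ascii")
    prompt = (
        f"Estimate the {nutrient} content of the meal in this photograph. "
        f'Reply only with a JSON object of the form {{"{nutrient}": <number in {unit}>}}.'
    )
    return {
        "model": MODEL,
        "messages": [
            {
                "role": "user",
                "content": [
                    {"type": "text", "text": prompt},
                    {
                        "type": "image_url",
                        "image_url": {"url": f"data:image/jpeg;base64,{image_b64}"},
                    },
                ],
            }
        ],
    }


def parse_estimate(reply_text, nutrient):
    """Extract the numeric estimate from the model's JSON reply.

    Raises ValueError or KeyError if the reply is not the expected JSON object.
    """
    return float(json.loads(reply_text)[nutrient])
```

Under these assumptions, one payload would be built per meal photograph and per nutrient, and the whole set of 22 meals would be repeated for each of the 11 trials, with `parse_estimate` applied to each reply.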

Table 1. Settings for LLM Analysis.

Results

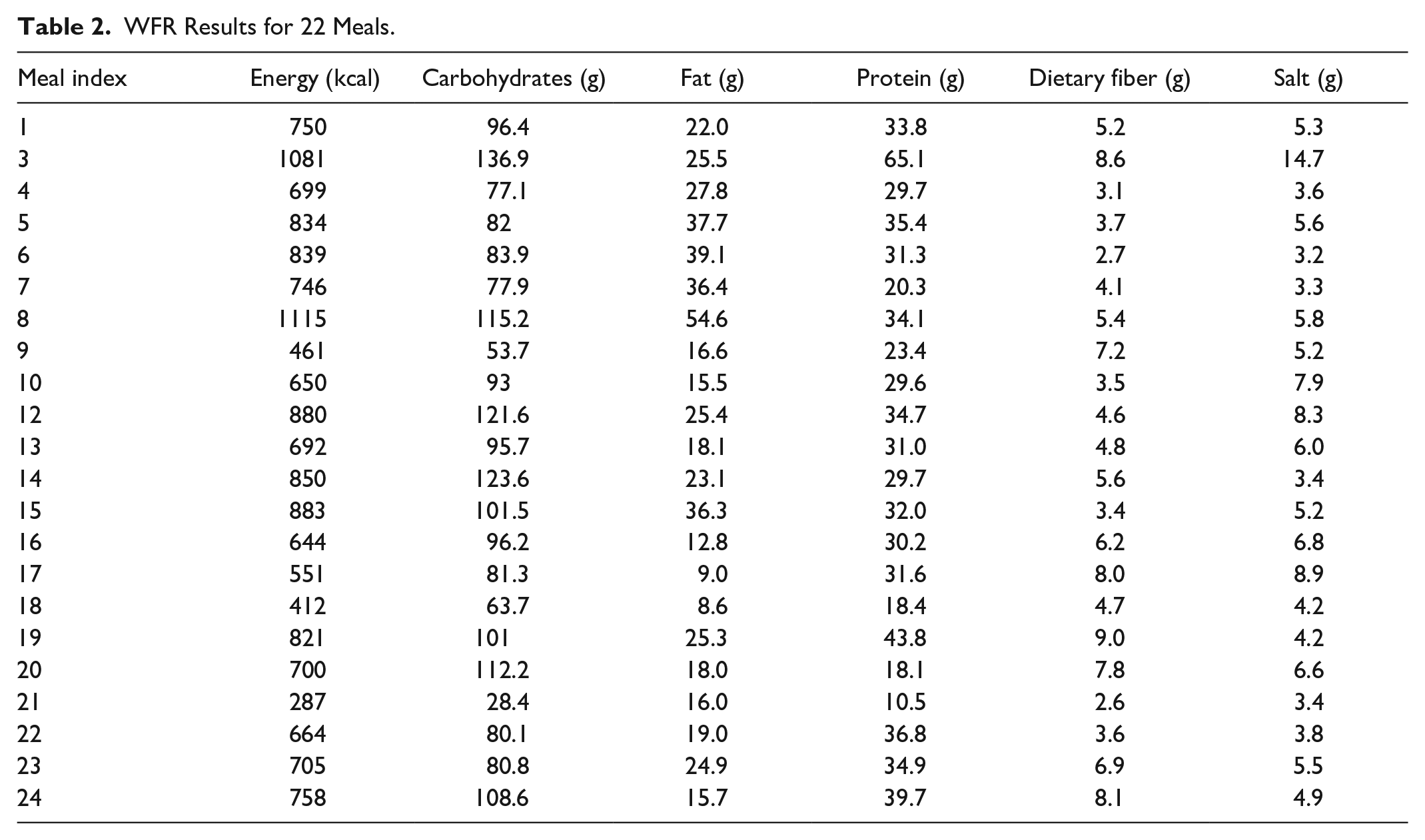

We analyzed the original database 15 and identified 22 meals based on 56 dishes that had complete data. The WFR for these meals (Table 2) shows a diverse range of nutrient values, with energy ranging from 287 to 1115 kcal and dietary fiber ranging from 2.6 to 9.0 g.

Table 2. WFR Results for 22 Meals.

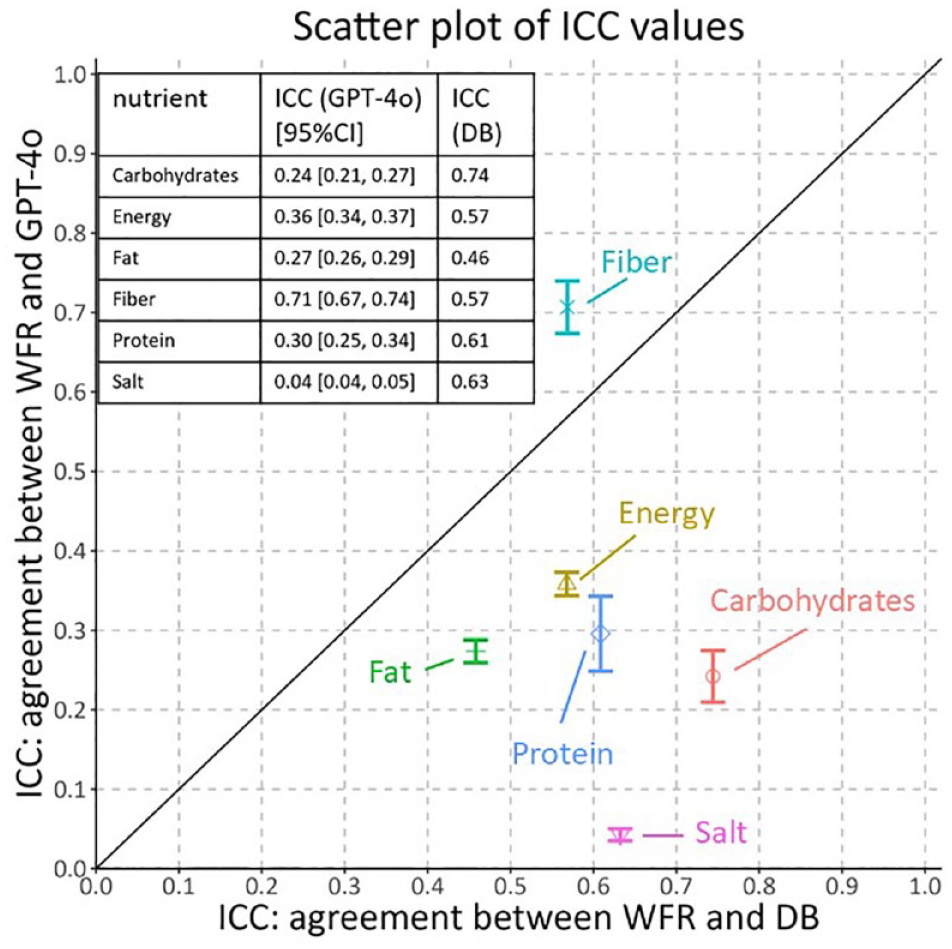

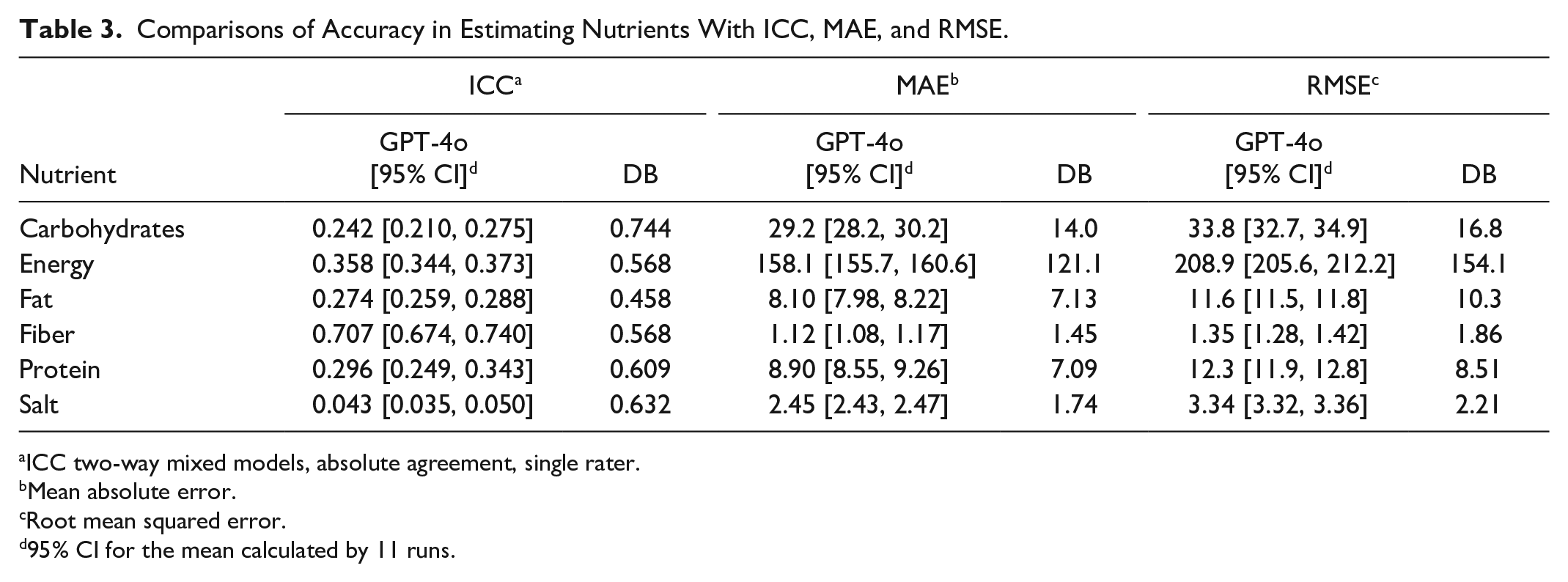

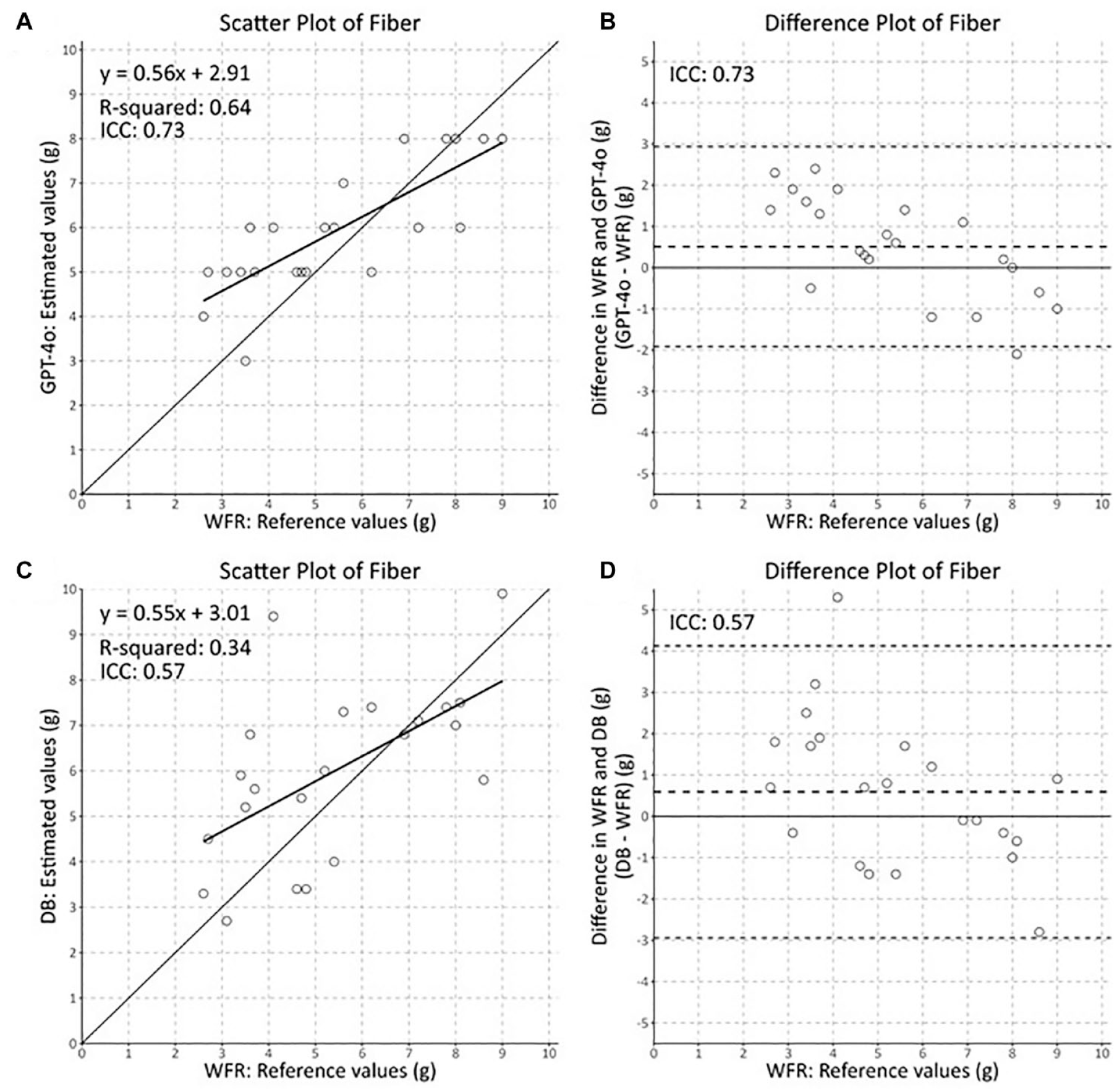

The ICC values for the DB dietician results and LLM results (Figure 2) show that, while the dieticians generally do quite well relative to the WFR, the LLM had mixed and generally poor results. Results were quite poor for 5 of the 6 parameters (energy, fat, protein, carbohydrates, and salt), with ICCs of 0.36 and lower, far below the ICCs of the DB dieticians’ estimates. For fiber, though, the ICC of 0.71 was markedly superior to the DB dieticians’ ICC of 0.57. The results have similar trends for MAE and RMSE (Table 3), with fiber the only nutrient with smaller errors than achieved by the DB dieticians. The individual fiber estimates were generally reasonable, with characteristics similar to those of the dietician estimates (Figure 3). Latency was low, averaging 5.4 seconds per photograph. Costs were moderately low, averaging around $0.01 per photograph. Our attempts to use the “gpt-4o-mini” LLM led to universally poor performance for this task (results not shown). Similarly, our attempts to use the updated “gpt-4o-2024-08-06” LLM produced ICCs that were markedly inferior to those from using the “gpt-4o-2024-05-13” model (results not shown).
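The agreement metrics compared above can be illustrated with a short sketch. It is not the study's analysis, which was done in R. It assumes the standard two-way, absolute-agreement, single-rater form, ICC(A,1) = (MSR - MSE) / (MSR + (k - 1)MSE + (k/n)(MSC - MSE)), with n meals and k = 2 "raters" (the estimate and the WFR). It also assumes a Student t interval for the mean over 11 runs, which the text does not specify. All function names are hypothetical.

```python
import math
from statistics import mean, stdev

# Two-sided 95% Student t critical value for 10 degrees of freedom.
# Valid only for 11 runs; the t-based interval itself is an assumption.
T_CRIT_DF10 = 2.228


def icc_a1(ratings):
    """ICC, two-way model, absolute agreement, single rater.

    ratings: one row per meal, one column per rater,
    for example [estimate, weighed food record value].
    """
    n, k = len(ratings), len(ratings[0])
    grand = mean(v for row in ratings for v in row)
    row_means = [mean(row) for row in ratings]
    col_means = [mean(row[j] for row in ratings) for j in range(k)]
    ss_rows = k * sum((m - grand) ** 2 for m in row_means)
    ss_cols = n * sum((m - grand) ** 2 for m in col_means)
    ss_total = sum((v - grand) ** 2 for row in ratings for v in row)
    msr = ss_rows / (n - 1)                                   # between meals
    msc = ss_cols / (k - 1)                                   # between raters
    mse = (ss_total - ss_rows - ss_cols) / ((n - 1) * (k - 1))  # residual
    return (msr - mse) / (msr + (k - 1) * mse + (k / n) * (msc - mse))


def mae(est, truth):
    """Mean absolute error between estimates and reference values."""
    return mean(abs(e - t) for e, t in zip(est, truth))


def rmse(est, truth):
    """Root mean squared error between estimates and reference values."""
    return math.sqrt(mean((e - t) ** 2 for e, t in zip(est, truth)))


def mean_ci(values, t_crit=T_CRIT_DF10):
    """Mean of the values with a t-based 95% interval (lower, upper)."""
    m = mean(values)
    half = t_crit * stdev(values) / math.sqrt(len(values))
    return m, m - half, m + half


def summarize_runs(runs, truth):
    """ICC of each run against the reference, then mean and CI across runs."""
    iccs = [icc_a1([[e, t] for e, t in zip(run, truth)]) for run in runs]
    return mean_ci(iccs)
```

Because this form measures absolute agreement, a constant offset between estimates and reference values lowers the ICC even when the two are perfectly correlated, which is why it is a stricter criterion than a simple correlation.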

Figure 2. LLM ICCs and DB dietician ICCs for 6 parameters.

Table 3. Comparisons of Accuracy in Estimating Nutrients With ICC, MAE, and RMSE.

ICC: two-way mixed model, absolute agreement, single rater.

MAE: mean absolute error.

RMSE: root mean squared error.

95% CI: for the mean, calculated from 11 runs.

Figure 3. Scatter plot of DB dietician and LLM fiber estimates versus WFR.

Discussion

Performance was sensitive to minor changes in prompt wording. Adding instructions that laid out assessment steps modeled on the methods used by dieticians frequently worsened performance. We saw worse performance using a newer version of GPT-4o, suggesting that performance on this task is unusually sensitive to details of the specific LLM implementation; further study is needed to understand the reasons for and impact of this sensitivity. Performance for fiber started out well with our first try at a prompt, with ICCs around 0.55, and incremental changes via trial and error were able to improve the performance. For other parameters, we were not able to make prompt changes that led to acceptable performance. Better prompts could have provided better performance, but we were unable to find such prompts. For most of the nutrients, the current generation of LLM using this simple one-shot prompt engineering without fine-tuning does not provide adequate accuracy for most applications. For fiber, though, the level of accuracy is high enough that it may be acceptable for many applications. Performance for all nutrients would likely be much better if multi-shot prompting or fine-tuning were employed.

Predicting salt levels from a photograph is difficult. There is no way to see the salt, and assessments have to be made based on typical content for meals of this type. It also makes some sense that predicting fat and carbohydrates (and thus energy level) is difficult, as some ingredients (such as oil or sugar) are largely invisible. We expected to achieve better estimates of protein, as many sources of protein would be visible in photographs, but we did not achieve good results for protein. Although an assessment from a photograph should never be expected to match that achieved from weighed food records, dieticians are able to make assessments with usable levels of accuracy, and we should expect a stock commercial AI, without fine-tuning or multi-shot prompting, to eventually be able to do so as well.

It is interesting that the LLM’s fiber estimates were more accurate than the dieticians’ estimates. Perhaps there is less of an impact from hidden ingredients, with the LLM able to see things like legumes or vegetables in the photograph. Perhaps the task benefits from specificity, as most ingredients do not contribute much fiber, so the model can focus on the high contributors.

The fiber results are very encouraging. Eating an adequate amount of fiber is a key part of diabetes treatment recommendations, 2 but few patients meet guidelines. For example, in Japan, one cross-sectional study showed an average intake below 13 g among patients with diabetes, 20 far below a recommended value of 35 g per day. 21 Helping patients measure fiber levels with some degree of accuracy opens up interesting possibilities for interventions to increase fiber consumption among patients with diabetes, and we think that the level of accuracy that we have achieved will be useful in such interventions.

This study was a quick look, and it has significant limitations. The number of meals, 22, was low. There is some risk that prompt iteration led to overfitting, though the simple nature of the prompts makes this unlikely. All photographs were taken under good conditions, and actual patient photographs may be harder to evaluate and lead to worse results. All meals were typical Japanese home-cooked meals, so they are not reflective of a full range of cuisines and do not account for snacks, restaurant food, or packaged meals. Variation in meal preparation methods could influence results significantly. Simple one-shot prompts were used, with no fine-tuning; more involved methods may produce better results, including better accommodating variation in meal preparation methods. There is a need for follow-up work.

Conclusions

Using simple prompts with a current-generation LLM produces a useful level of accuracy in assessing dietary fiber, with accuracy better than previously achieved by dieticians. This measurement technique enables intervention approaches that might yield clinically significant improvements in glycemic control for patients with diabetes.

Performance for other nutritional parameters was poor with the studied approach. The field of LLMs is advancing rapidly, and we expect that the next generation will provide better, and likely acceptable, performance for our one-shot, non-fine-tuned approach for more than just fiber. Combined with applications that support a healthy diet for people with T2D, the assessment of dietary fiber using commercial artificial intelligence might lead to better interventions.

Footnotes

Acknowledgements

We thank Daniel Lane for his support in manuscript editing and scientific discussions. We made no use of generative AI in the development of this paper.

Abbreviations

AI, artificial intelligence; API, application programming interface; CI, confidence interval; DB, DialBetics; GPT, generative pre-trained Transformer; ICC, intraclass correlation coefficient; JSON, JavaScript Object Notation; LLM, large language model; ML, machine learning; T2D, type 2 diabetes; WFR, weighed food record.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.