Screening for diabetic retinopathy (DR) allows for timely detection and treatment of vision-threatening disease, 1 but adherence to DR screening exams remains low. Point-of-care fundus photography with remote interpretation has been employed to improve access and adherence to screening. 2 However, in resource-poor settings there are very few eye care providers to review images and accurately identify referable DR. Previous literature has found that non-physicians can be trained to detect DR in adult fundus photos. 3 Artificial intelligence (AI) algorithms can also be used to detect DR in digital images, but they have not been cleared by the Food and Drug Administration (FDA) for use in pediatric patients.

In our study, two image graders completed an online “Diabetic Retinopathy Grading” course (link here) and one hour of training with retina specialists by videoconference. The two graders were a master’s graduate and an undergraduate student, neither of whom had prior experience in ophthalmology. Our objective was to assess the performance of these graders in evaluating digital fundus photos from pediatric patients with diabetes; to our knowledge, this is the first reported study of its kind. We measured their sensitivity and specificity in detecting retinopathy from fundus photos against a reference standard and compared their performance with that of retina specialists.

Retinal images from 90 patients were randomly chosen from a set of 308 patients enrolled in a previously published prospective study conducted at the Johns Hopkins Children’s Center. 4 Both graders independently reviewed the images from these 90 patients and assigned each patient a DR grade according to the International Clinical Diabetic Retinopathy classification. 5 If the two eyes had different grades, the higher grade was taken as the patient's DR grade. The reference standard determination is described in our prior publication. 4 Sensitivity, specificity, and agreement were determined against this reference standard.
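The patient-level rule described above (take the more severe of the two eye grades) can be sketched in a few lines of Python. This is an illustrative sketch only, not the study's analysis code: the names `DRGrade` and `patient_grade` are hypothetical, and the integer ordering simply follows the five severity levels of the International Clinical Diabetic Retinopathy scale. Handling of ungradable eyes is not covered here.

```python
from enum import IntEnum


class DRGrade(IntEnum):
    """Severity levels of the International Clinical DR scale, in increasing order."""
    NONE = 0           # no apparent retinopathy
    MILD = 1           # mild nonproliferative DR
    MODERATE = 2       # moderate nonproliferative DR
    SEVERE = 3         # severe nonproliferative DR
    PROLIFERATIVE = 4  # proliferative DR


def patient_grade(right_eye: DRGrade, left_eye: DRGrade) -> DRGrade:
    """Return the patient-level grade: the more severe of the two eye grades."""
    return max(right_eye, left_eye)


# Hypothetical example: one eye graded mild, the other with no DR.
example = patient_grade(DRGrade.MILD, DRGrade.NONE)
```

Using an ordered enumeration keeps the "worse eye" rule a plain `max` and avoids comparing grade names as strings.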

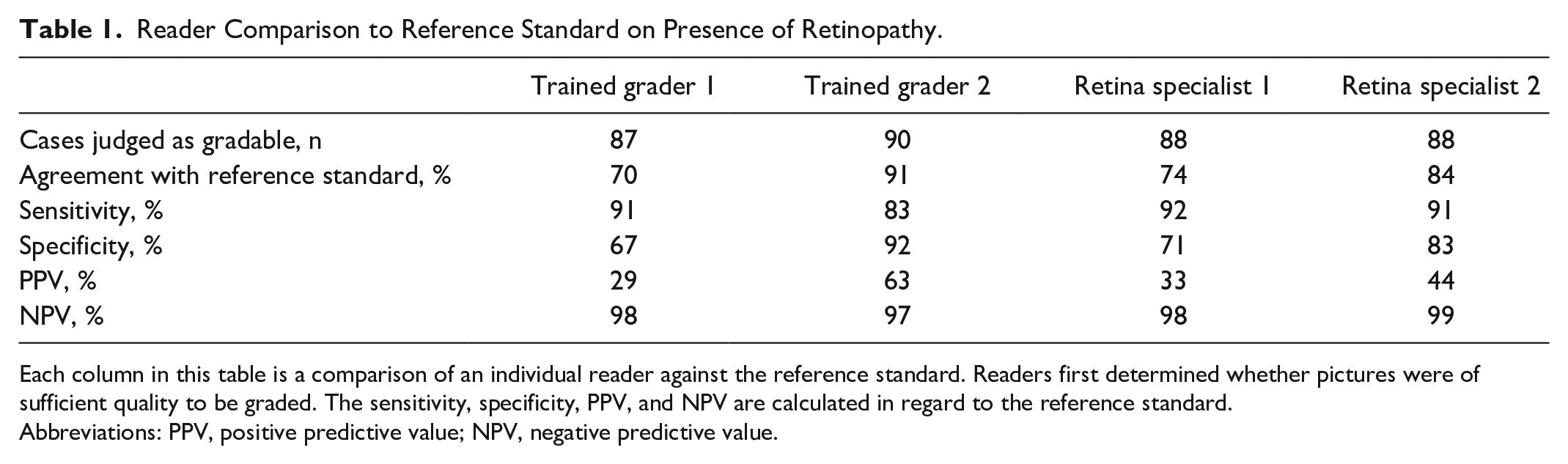

The average age of our patients was 13.8 years (range = 7-19 years); 52% were non-Hispanic white, 38% were non-Hispanic black, and 52% were female. Most patients had type 1 diabetes (T1D; 76%), and their average hemoglobin A1c was 9.1% (range = 5.6%-15%). Per the reference standard, 87% of participants had no DR, 5% had mild DR, and 8% had moderate DR. The performance of the graders and retina specialists in comparison with the reference standard is shown in Table 1.

Table 1. Reader Comparison to Reference Standard on Presence of Retinopathy.

Each column in this table compares an individual reader against the reference standard. Readers first determined whether images were of sufficient quality to be graded. Sensitivity, specificity, PPV, and NPV are calculated with respect to the reference standard.

Abbreviations: PPV, positive predictive value; NPV, negative predictive value.
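For readers less familiar with these metrics, the following Python sketch shows how sensitivity, specificity, PPV, and NPV can be computed for one reader against the reference standard once each gradable patient is labeled as DR present or absent. It is a minimal illustration, not the authors' analysis code: the function name `screening_metrics` and the five-patient example labels are made up for demonstration and are not study data.

```python
import math


def screening_metrics(reference, reader):
    """Return sensitivity, specificity, PPV, and NPV for one reader.

    Both arguments are equal-length sequences of booleans
    (True = retinopathy present), one entry per gradable patient.
    """
    if len(reference) != len(reader):
        raise ValueError("reference and reader must have the same length")

    pairs = list(zip(reference, reader))
    tp = sum(1 for ref, rd in pairs if ref and rd)          # true positives
    fn = sum(1 for ref, rd in pairs if ref and not rd)      # false negatives
    fp = sum(1 for ref, rd in pairs if not ref and rd)      # false positives
    tn = sum(1 for ref, rd in pairs if not ref and not rd)  # true negatives

    def ratio(num, den):
        # Return NaN when a metric is undefined (empty denominator).
        return num / den if den else math.nan

    return {
        "sensitivity": ratio(tp, tp + fn),
        "specificity": ratio(tn, tn + fp),
        "ppv": ratio(tp, tp + fp),
        "npv": ratio(tn, tn + fn),
    }


# Made-up labels for five hypothetical patients (not study data).
reference = [True, True, False, False, False]
reader = [True, False, False, False, True]
metrics = screening_metrics(reference, reader)
```

Sensitivity and specificity are properties of the reader, whereas PPV and NPV also depend on how common DR is in the screened population, so predictive values from one cohort may not carry over to a population with a different prevalence.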

Our analysis demonstrates that, after a standardized training program, graders with no prior experience in ophthalmology achieved high sensitivity and NPV, comparable to those of retina specialists, for detecting DR in fundus photos of pediatric patients. The generalizability of these findings should be further evaluated in a larger group of graders. As the prevalence of youth-onset type 2 diabetes 6 and DR increases, policy planners will need to develop local solutions for DR screening in children. In areas with low resources or limited availability of retina specialists, trained graders can be used to triage images for further review. In the future, as AI algorithms become FDA-approved for children, AI-based screening may be an additional viable option.

Footnotes

Abbreviations

DR, diabetic retinopathy; AI, artificial intelligence; FDA, Food and Drug Administration; PPV, positive predictive value; NPV, negative predictive value.

Author Contributions

Conceptualization: PZ, TYL, RMW, and RC. Methodology: TYL, RMW, and RC. Investigation: PZ, LE, MB, TYL, RMW, and RC. Data curation: LP. Analysis: PZ and LP. Visualization: PZ, RMW, and RC. Writing and editing: PZ, LE, MB, TYL, RMW, and RC. Supervision: RMW and RC. Funding acquisition: RMW and RC.

Declaration of Conflicting Interests

The author(s) declared the following potential conflicts of interest with respect to the research, authorship, and/or publication of this article: RMW receives research support from Dexcom, not relevant to this study. No other disclosures to report.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported in part by an Unrestricted Grant from Research to Prevent Blindness to the Department of Ophthalmology and Visual Sciences at the University of Wisconsin-Madison. Support also comes from the Johns Hopkins Children’s Center Innovation Award to RMW and RC. RC is funded by the National Eye Institute K23 grant (5K23EY030911-03). The funders had no role in the design and conduct of the study; collection, management, analysis, and interpretation of the data; preparation, review, or approval of the manuscript; or the decision to submit the manuscript for publication.

Ethical Approval

This study was approved by the Johns Hopkins University School of Medicine institutional review board and has therefore been performed in accordance with the ethical standards laid down in the 1964 Declaration of Helsinki and its later amendments.

Informed Consent

Written informed consent was obtained from the caregiver for each participant.